This benchmark compares serialization/deserialization performance between Apache Fory and Protocol Buffers in C++.

- CMake 3.16+

- C++17 compatible compiler (GCC 8+, Clang 7+, MSVC 2019+)

- Git (for fetching dependencies)

Note: Protobuf is fetched automatically via CMake FetchContent, so no manual installation is required.

| Key | Value |

|---|---|

| OS | Darwin 24.5.0 |

| Machine | arm64 |

| Processor | arm |

| CPU Cores (Physical) | 12 |

| CPU Cores (Logical) | 12 |

| Total RAM (GB) | 48.0 |

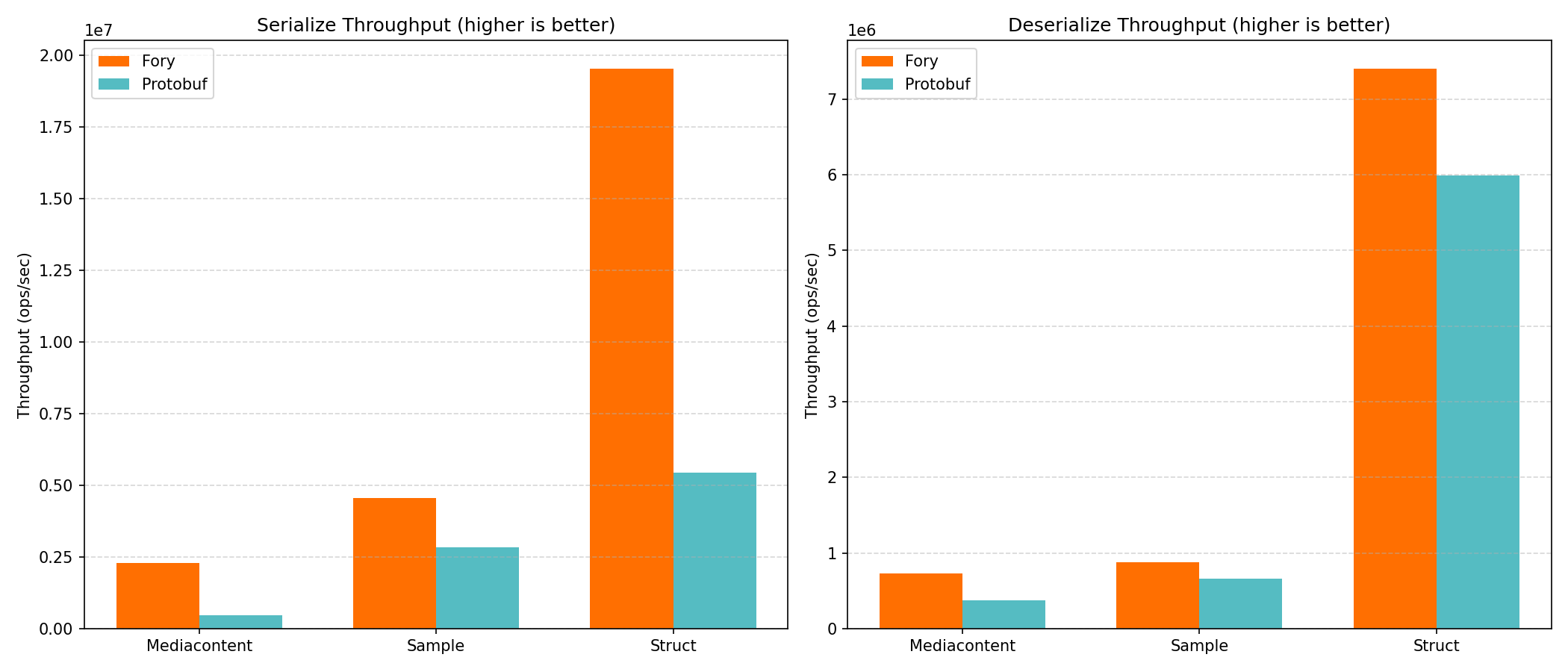

| Datatype | Operation | Fory TPS | Protobuf TPS | Faster |

|---|---|---|---|---|

| Mediacontent | Serialize | 2,430,924 | 484,368 | Fory (5.0x) |

| Mediacontent | Deserialize | 740,074 | 387,522 | Fory (1.9x) |

| Sample | Serialize | 4,813,270 | 3,021,968 | Fory (1.6x) |

| Sample | Deserialize | 915,554 | 684,675 | Fory (1.3x) |

| Struct | Serialize | 18,105,957 | 5,788,186 | Fory (3.1x) |

| Struct | Deserialize | 7,495,726 | 5,932,982 | Fory (1.3x) |

Run the complete benchmark pipeline (build, run, generate report):

cd benchmarks/cpp_benchmark

./run.shcd benchmarks/cpp_benchmark

mkdir build && cd build

cmake -DCMAKE_BUILD_TYPE=Release ..

cmake --build . -j$(nproc)./fory_benchmark# Run only Struct benchmarks

./fory_benchmark --benchmark_filter="Struct"

# Run only Fory benchmarks

./fory_benchmark --benchmark_filter="Fory"

# Run only serialization benchmarks

./fory_benchmark --benchmark_filter="Serialize"# JSON output

./fory_benchmark --benchmark_format=json --benchmark_out=results.json

# CSV output

./fory_benchmark --benchmark_format=csv --benchmark_out=results.csv| Benchmark | Description |

|---|---|

BM_Fory_Struct_Serialize |

Serialize a simple struct with 8 int32 fields using Fory |

BM_Protobuf_Struct_Serialize |

Serialize the same struct using Protobuf |

BM_Fory_Struct_Deserialize |

Deserialize a simple struct using Fory |

BM_Protobuf_Struct_Deserialize |

Deserialize the same struct using Protobuf |

BM_Fory_Sample_Serialize |

Serialize a complex object with various types and arrays using Fory |

BM_Protobuf_Sample_Serialize |

Serialize the same object using Protobuf |

BM_Fory_Sample_Deserialize |

Deserialize a complex object using Fory |

BM_Protobuf_Sample_Deserialize |

Deserialize the same object using Protobuf |

BM_Fory_MediaContent_Serialize |

Serialize a complex object with Media and Images using Fory |

BM_Protobuf_MediaContent_Serialize |

Serialize the same object using Protobuf |

BM_Fory_MediaContent_Deserialize |

Deserialize a complex object with Media and Images using Fory |

BM_Protobuf_MediaContent_Deserialize |

Deserialize the same object using Protobuf |

BM_PrintSerializedSizes |

Just compares the serialization sizes of Fory and Protobuf |

A simple structure with 8 int32 fields, useful for measuring baseline serialization overhead.

A complex structure containing:

- Primitive types (int32, int64, float, double, bool)

- Multiple arrays (int, long, float, double, short, char, bool)

- String field

Contains one Media and multiple Images.

The benchmark uses benchmarks/proto/bench.proto which is shared with the Java benchmark for consistency.

A Python script is provided to generate visual reports from benchmark results.

pip install matplotlib numpy psutil# Run benchmark and save JSON output

cd build

./fory_benchmark --benchmark_format=json --benchmark_out=benchmark_results.json

# Generate report

cd ..

python benchmark_report.py --json-file build/benchmark_results.json --output-dir reportThe script will generate:

- PNG plots comparing Fory vs Protobuf performance

- A markdown report (

REPORT.md) with detailed results

python benchmark_report.py --help

Options:

--json-file Benchmark JSON output file (default: benchmark_results.json)

--output-dir Output directory for plots and report

--plot-prefix Image path prefix in Markdown reportUse profile.sh to generate flamegraphs for performance analysis:

# Profile all benchmarks

./profile.sh

# Profile specific benchmarks

./profile.sh --data struct --serializer fory

# Profile with custom duration

./profile.sh --serializer fory --duration 10./profile.sh --help

Options:

--filter <pattern> Custom benchmark filter (regex pattern)

--data <struct|sample> Filter benchmark by data type

--serializer <fory|protobuf> Filter benchmark by serializer

--duration <seconds> Profiling duration (default: 5)

--output-dir <dir> Output directory (default: profile_output)Example with custom filter:

# Profile a specific benchmark

./profile.sh --filter BM_Fory_Struct_SerializeThe script automatically detects and uses available tools (in order of preference):

- samply (recommended):

cargo install samply - perf (Linux)

When using perf on Linux, the script automatically generates flamegraph SVG files.

FlameGraph tools are auto-installed to ~/FlameGraph if not found.

Output files are saved to profile_output/:

perf_<timestamp>.data- Raw perf dataflamegraph_<timestamp>.svg- Interactive flamegraph visualization

Open the SVG file in a browser to explore the flamegraph interactively.