**AiOS Dispatch**: Latest iOS development news and resources featuring AI integration, developer tools, and cutting-edge technologies.

https://aiosdispatch.com

By Rudrank Riyam. Last updated Thu, 19 Mar 2026 00:53:23 GMT.

**AiOS Dispatch 15** (Rudrank Riyam, Wed, 11 Feb 2026 08:00:00 GMT)

https://aiosdispatch.com/posts/aios-dispatch-15

This edition was written by Claude Opus 4.6 Max in Cursor, based on the vision I gave for this post, and then edited by me.

## Two Minutes to TestFlight

**Thomas Ricouard**, my French friend, the guy behind Ice Cubes and one of the most opinionated iOS developers I know, tried something this week. He gave **Codex** full access to my App Store Connect CLI, ASC, and pointed it at his new iOS app, CodexMonitor.

He went from having **nothing configured** to having the app in **TestFlight public beta review in under 2 minutes**. The agent did it all by itself.

> "Let me get this straight: I gave Codex full App Store Connect power with @rudrank ASC CLI. I went from having NOTHING configured to having an app in TestFlight public beta review in UNDER 2 minutes. The agent literally did everything itself. Insane. My mind is blown."

> — Thomas Ricouard (@Dimillian) [Feb 9, 2026](https://x.com/Dimillian)

Here is what the agent did on its own, without any human touching anything:

- Confirmed the app record existed on App Store Connect

- Verified the IPA upload was already present

- Created an encryption compliance declaration (the thing that blocks every first TestFlight build)

- Created a "Beta Testers" TestFlight group

- Filled in the required beta review metadata

- Submitted for external beta review

The submission state went straight to `WAITING_FOR_REVIEW`. All from a single prompt.

In a follow-up tweet, Thomas showed the full end-to-end flow: from app identifier creation to TestFlight distribution, all running through his agent. His words: "with ASC, you don't have to touch App Store Connect ever again."

I built this to save my own time when I am shipping for my client and my own apps. Watching someone else's AI agent use it autonomously to ship their app is a different kind of validation.

---

## Why I Built ASC

The App Store Connect website is slow. I have complained about the spinner since I got my Apple Developer account in 2019, and I get logged out every time I blink. Every release is the same: create a version, fill in What's New, wait for processing, add the build to a TestFlight group, submit for review. Clicking through the same web UI, over and over.

Fastlane exists, but it comes with Ruby, Bundler, and a lot of plugin conflicts. I wanted something simpler: something where I can prompt my way to the App Store in the time it takes to set up Fastlane.

**ASC** is a single Go binary, heavily inspired by GitHub CLI. You install it with Homebrew, authenticate with your App Store Connect API key **once**, and let your agent know about it. It will handle the rest.

```bash
brew tap rudrankriyam/tap
brew install rudrankriyam/tap/asc
```

My favourite command is the PPP pricing one. You can set territory-specific pricing based on purchasing power parity, so your app does not have to cost the equivalent of $9.99 in India just because it costs $9.99 in the US. One command to adjust prices across regions:

```bash
asc subscriptions pricing --app "APP_ID"
asc subscriptions pricing --subscription-id "SUB_ID" --territory "USA"
```
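To make the PPP idea concrete: a PPP-adjusted price is roughly the base price multiplied by a territory's price-level factor. The helper below is a minimal sketch of that arithmetic only. It is not part of ASC, and both the name `ppp_price` and the 0.3 factor are hypothetical values you would choose yourself.

```bash
# ppp_price BASE FACTOR
# Scales a base price (e.g. the US price) by a purchasing-power factor and
# prints it with two decimals. FACTOR is your own assumption for a territory;
# this helper is illustrative and not an ASC command.
ppp_price() {
  local base="$1" factor="$2"
  # awk does the floating-point multiply that plain bash arithmetic cannot.
  awk -v base="$base" -v factor="$factor" 'BEGIN { printf "%.2f\n", base * factor }'
}

# Example: a $9.99 price with a hypothetical 0.3 factor prints 3.00.
# ppp_price 9.99 0.3
```

App Store Connect only accepts its own predefined price points per territory, so in practice you would round a result like this to the nearest available price point before applying it.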

It covers TestFlight groups and testers, subscriptions and in-app purchases, signing and certificates, analytics and sales reports, Xcode Cloud workflows, notarization, App Clips, and a whole lot more. If you can do it in the App Store Connect web UI, you can do it from your terminal.

I just released **v0.26.2**, bringing the project to 59 releases, almost 800 stars on GitHub, and 11 contributors and growing. It has been a wild ride, especially because most of the development was done with AI agents themselves. The irony is not lost on me.

---

## Designed for Agents

Every command uses explicit, self-documenting flags and outputs JSON by default for easy parsing. It also returns clean exit codes, so you can script it into your CI/CD pipeline.

That is exactly what Thomas's Codex agent did. It called `asc publish testflight --help`, read the flags, understood the workflow, and shipped the app. No special integration. No plugins. No configuration files for the agent. Just a CLI that describes itself clearly enough for a machine to figure it out.
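Because ASC prints JSON by default and exits with clean codes, a CI step can treat any failed `asc` call as a hard stop. Here is a minimal sketch that relies only on those two properties. `run_asc` is a hypothetical helper name, and the sketch makes no assumption about the JSON shape of any particular command.

```bash
# run_asc ARGS...
# Runs an asc subcommand, forwards its JSON on success, and propagates the
# non-zero exit code (with a message on stderr) on failure.
run_asc() {
  local out status
  out=$(asc "$@")   # declared separately so $? is asc's status, not local's
  status=$?
  if [ "$status" -ne 0 ]; then
    echo "asc $* failed with exit code $status" >&2
    return "$status"
  fi
  printf '%s\n' "$out"
}

# Usage in a CI script: stop the pipeline if listing apps fails.
# apps_json=$(run_asc apps) || exit 1
```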

I also published a set of **Agent Skills** — instruction files that teach AI coding agents how to use ASC for specific workflows:

- **asc-xcode-build** — Archive, export, and upload from xcodebuild

- **asc-release-flow** — End-to-end TestFlight and App Store release workflows

- **asc-signing-setup** — Bundle IDs, certificates, provisioning profiles

- **asc-testflight-orchestration** — Beta groups, testers, What to Test notes

- **asc-metadata-sync** — Localizations and Fastlane format migration

- **asc-submission-health** — Preflight checks and review monitoring

- **asc-notarization** — Developer ID signing and notarization for macOS apps

Drop these into Cursor, Claude Code, or Codex, and the agent already knows how to ship your app. The skills are there for when you want the agent to handle complex multi-step flows without you having to explain anything.

---

## Getting Started

If you want to try it out, here is the quick setup:

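```bash
# Install the CLI
brew tap rudrankriyam/tap
brew install rudrankriyam/tap/asc

# Authenticate once with your App Store Connect API key
asc auth login \
  --name "MyApp" \
  --key-id "YOUR_KEY_ID" \
  --issuer-id "YOUR_ISSUER_ID" \
  --private-key ./AuthKey.p8

# Verify the setup by listing your apps
asc apps --output table
```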

That last command lists all your apps in a nice table format. If you see them in your terminal, you are good to go. You can also use environment variables (`ASC_KEY_ID`, `ASC_ISSUER_ID`, `ASC_PRIVATE_KEY_PATH`) for CI/CD setups.

Generate your API key at [App Store Connect](https://appstoreconnect.apple.com/access/integrations/api) if you do not have one yet. It is one of the few times you still have to open the website, sigh.
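For CI, the environment-variable route (`ASC_KEY_ID`, `ASC_ISSUER_ID`, `ASC_PRIVATE_KEY_PATH`) avoids an interactive login. Here is a minimal sketch of a setup step. It assumes your CI exposes the key ID, issuer ID, and `.p8` contents as secrets. The function name `configure_asc_env`, the `AuthKey_<KEY_ID>.p8` file name, and the secret variable names in the usage lines are all hypothetical.

```bash
# configure_asc_env KEY_ID ISSUER_ID P8_CONTENTS [DIR]
# Writes the private key to DIR with owner-only permissions and exports the
# three variables ASC reads: ASC_KEY_ID, ASC_ISSUER_ID, ASC_PRIVATE_KEY_PATH.
configure_asc_env() {
  local key_id="$1" issuer_id="$2" key_contents="$3"
  local dir="${4:-${TMPDIR:-/tmp}}"
  local key_path="$dir/AuthKey_${key_id}.p8"

  # umask 077 inside a subshell so only this write is affected.
  (umask 077 && printf '%s\n' "$key_contents" > "$key_path") || return 1

  export ASC_KEY_ID="$key_id"
  export ASC_ISSUER_ID="$issuer_id"
  export ASC_PRIVATE_KEY_PATH="$key_path"
}

# Usage in a CI job (secret names are placeholders):
# configure_asc_env "$ASC_KEY_ID_SECRET" "$ASC_ISSUER_ID_SECRET" "$ASC_P8_SECRET"
# asc apps --output table
```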

---

## What's Next

I am working on "private" endpoints like App Store rejections and going through unresolved issues.

The goal is simple: you should not have to open App Store Connect for anything that can be a terminal command. And with AI agents getting better every *week*, that terminal command does not even have to be typed by you.

Contributions are welcome. Issues are welcome. And if your AI agent ships an app with ASC, create a pull request to have it added to the "Wall of Apps" that use ASC!

Until next time, keep shipping and building!

**AiOS Dispatch 14** (Rudrank Riyam, Sun, 09 Nov 2025 08:00:00 GMT)

https://aiosdispatch.com/posts/aios-dispatch-14

Every word in this newsletter is typed by me, which is ironic for a newsletter about AI. I do have Cursor Tab enabled for the markdown, although I love the sound of the clicks on the keyboard.

Especially on this M5 MacBook, which Apple graciously provided for me to test and review everything related to on-device AI!

It is raining tokens this week, and we all have the competition between the frontier labs and the IDEs to thank.

## Cursor and Composer 1

Cursor released their first model, and oh boy, it is a fast one. The speculations about *Cheetah* being an alpha version of it were true.

I have spent billions of tokens on it, and although it is not as good as the Sonnet 4.5 and GPT 5 Codex models, it is great for quickly researching a codebase, making repetitive code changes, and doing refactors that will not mess up your codebase.

Or grammar check this newsletter in ten seconds! I do not have to pay for a grammar checker anymore, hah.

I have used it to debug MLX Audio, lint all of my Swift packages, and fix warnings and errors in my codebase. It also helps me audit the codebase for potential issues, and I had to double-check how it found subtle, years-old issues that I had missed, and in seconds!

I just updated the website and it took me less than a minute to set it up for the Black Friday sale, while adding the new book links and images. No way I could have done it this fast, without any errors, and with the quality of the code that I would have written myself.

I was not a fan of it being priced the same as GPT 5 Codex, but right now it is **free** for everyone for a limited time, which makes it a great way to test the model.

Another competitor is SWE 1.5 by Windsurf, which is much faster, but I have not had a chance to test it yet.

## Claude Code and Web

Almost two weeks ago, I received an email from Anthropic offering a whole month of the Claude Max plan, worth $100, for free. I assume they are reaching out to those who cancelled their subscriptions, as Codex has been gaining popularity as *the* CLI tool for coding agents.

Again, this week, they are offering $1000 worth of credits to the Max users to try out Claude Web, as Codex Cloud is also becoming popular. Codex also announced 50% more limits on the Pro plan, which is a great deal for those who are using it heavily.

Competition is fierce, and it has become a race to the bottom. What a great time to be a builder!

## Polaris Alpha

A stealth model called Polaris Alpha has appeared on OpenRouter. It is available for free, and from my testing, it has an OpenAI-ish vibe to it and is surprisingly fast, unlike Codex.

It is an amazing alternative if you do not have a subscription to any of the models, and still want to vibe-code in Cursor. Just override the OpenAI URL in the model settings, put in the OpenRouter endpoint with your API key, and you are good to go.
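Before pasting the key into Cursor, you can confirm it works against the same OpenAI-compatible base URL Cursor will use, `https://openrouter.ai/api/v1`. Here is a minimal sketch with `curl`. The helper name is hypothetical, and the Polaris Alpha model slug in the usage line is an assumption, so confirm it on OpenRouter's model page.

```bash
# openrouter_ping MODEL
# Sends one chat completion request to OpenRouter's OpenAI-compatible API.
# Requires OPENROUTER_API_KEY in the environment; fails early if it is unset.
openrouter_ping() {
  local model="$1"
  curl -sS https://openrouter.ai/api/v1/chat/completions \
    -H "Authorization: Bearer ${OPENROUTER_API_KEY:?set OPENROUTER_API_KEY first}" \
    -H "Content-Type: application/json" \
    -d "{\"model\": \"$model\", \"messages\": [{\"role\": \"user\", \"content\": \"Reply with OK\"}]}"
}

# Usage (the model slug is an assumption; confirm it on OpenRouter):
# export OPENROUTER_API_KEY="YOUR_OPENROUTER_KEY"
# openrouter_ping "openrouter/polaris-alpha"
```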

As I said, it is raining tokens this week!

## What's Next

I have kept this newsletter short and sweet for this week so I can at least start writing again. Next week should be more interesting with so many rumors floating around.

Until next time, keep shipping and building!

**AiOS Dispatch 13** (Rudrank Riyam, Mon, 02 Jun 2025 08:00:00 GMT)

https://aiosdispatch.com/posts/aios-dispatch-13

> **Heads Up: WWDC 2025 Rumor Roundup!**
>
> This edition mixes in the latest talk and speculation about WWDC25. If you would rather wait for the official news on 9th June and keep the surprises, feel free to skip the "WWDC25" section!

Welcome to the thirteenth edition of **AiOS Dispatch**, your go-to newsletter for the latest in AI and iOS development!

We finally had a bit of a breather of a week in the AI world. Updates to existing models are rolling out, and all eyes are on Apple as WWDC25 approaches next Monday. I am already in the US, heading to Cupertino in a few days to settle down and prepare to cover everything related to WWDC25.

Let's dive into the latest!

## WWDC25 & Apple AI SDK

There is talk on the street that Apple might be renaming its OSes. Instead of iOS 19, we could see **iOS 26**, aligning with the year 2026. This change would likely ripple across the board to iPadOS, macOS (codenamed 'Tahoe'), watchOS, tvOS, and visionOS (directly going from 3 to 26!).

A consistent naming scheme sounds good to me, but I am wary of explaining to my mother why I am asking her to upgrade to iOS 26 in 2025.

### AI SDK

Apple has kind of accepted defeat this year, calling it an "AI gap" year. There are hopes that it will give developers the reins with direct access to its AI models through a new SDK. I am looking forward to it, and have been reading the research papers from Apple's machine learning team.

As a developer, start thinking about the features you can build with this SDK: an efficient, on-device (multimodal) LLM experience where you can truly personalize the user experience without asking users to download another few GB of local model.

### Swift Assist

Updates to the never-released Swift Assist are anticipated, with a rumoured integration with Anthropic models. The leaks this year are also explicit enough to mention the long-awaited rich text editor in SwiftUI!

As the days go by, I am expecting more and more details about the releases related to AI at WWDC25 to build upon both hype and expectations.

## DeepSeek R1 Update

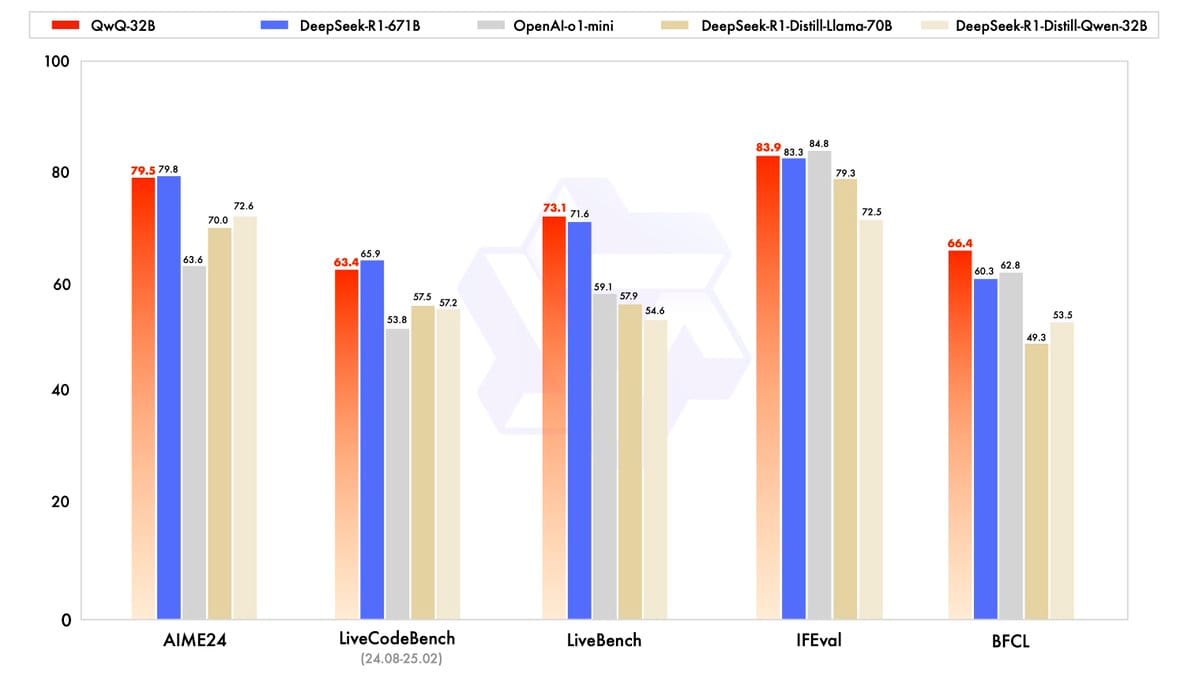

**DeepSeek R1** has a new update with the fresh `0528` version.

It has better **coding and reasoning abilities** and the benchmarks show it is getting close to the top models out there. Plus, it has support for function calling, a win for us developers using it with AI tools like Cursor or Alex Sidebar.

## Trae AI IDE

There is a growing AI IDE called **Trae**. It has the best visual implementation of MCP servers that I have seen yet.

For those looking to try it out, they are running a special offer: your first month for just **$3**. A good alternative to tools like Cursor. Worth checking out both the IDE and its privacy policy.

## Alex Sidebar and Stability

Looks like the folks at **Alex Sidebar** have been burning the midnight oil! The experience of using it has never been better with the latest 3.2 update: much better scrolling, fewer visual glitches, and additions like a "scroll to bottom" button.

You can now generate images right inside Alex, from app designs to logos, assets, and much more!

It could have been a 4.0 in itself, ha.

It is awesome to see Alex Sidebar pushing forward so fast. The endgame is either Apple catching up (unlikely) or acquiring it (highly likely).

## What's Next

The next week will set the path for the rest of the year for us developers, developing at the intersection of AI and iOS.

Until next time, keep shipping and building cool stuff!

**AiOS Dispatch 12** (Rudrank Riyam, Wed, 28 May 2025 06:00:00 GMT)

https://aiosdispatch.com/posts/aios-dispatch-12


It runs in an Ubuntu container (macOS is not yet supported) and is similar to using it in VS Code with GitHub MCP. It writes the code, tests it, and submits a pull request for your review.

I have merged around 20 pull requests for the new newsletter website, my blog, and a small macOS utility app I worked on. I tested it with simple functionality (you can follow along in the PR below), and it is useful for those repetitive tasks we all hate doing.

My biggest learning has been to assign it isolated tasks with clear requirements to prevent scope creep that ends up in spaghetti code and a useless PR that you have to fix. I got myself the Copilot Pro+ plan, which has unlimited requests until June 4th, and $39 per month felt cheap for the 2000+ requests I have made.

## Google I/O 2025: Gemini Gets Serious

Google I/O happened on May 20-21, and Google looks set to deliver what Apple (kind of) promised. The **Gemini 2.5 updates** are worth your attention. The new **Deep Think** experimental reasoning mode for Gemini 2.5 Pro is essentially Google's answer to OpenAI's o3 series.

Beyond the core AI models, Google announced several developer tools:

**Jules**, Google's coding agent, is now in public beta. It works in parallel across your entire codebase, handling tasks like dependency updates, testing, and bug fixes while you focus on feature development.

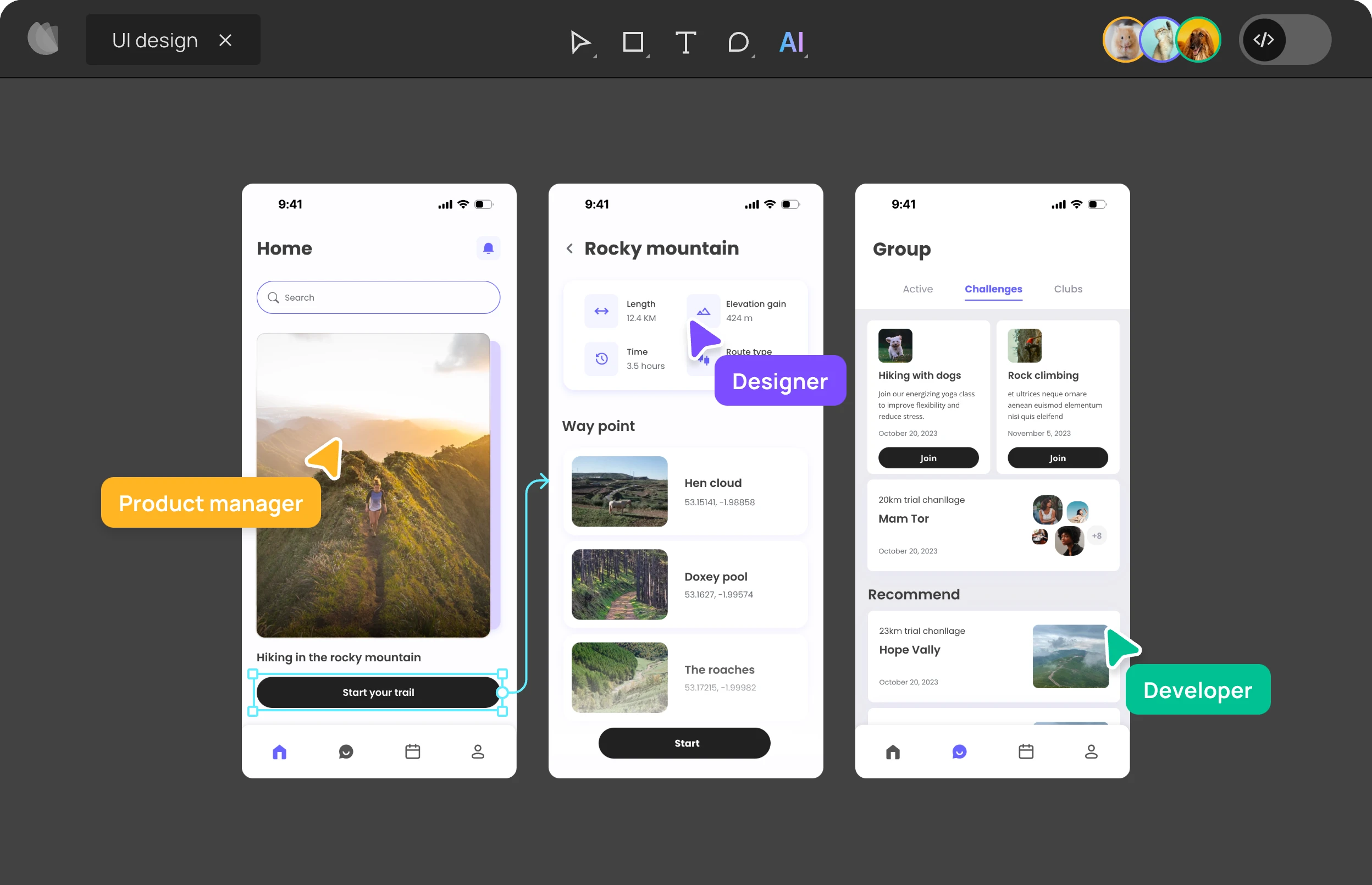

**Stitch** is Google's new AI-powered UI generation tool that creates designs from natural language prompts. You can iterate conversationally and export to CSS/HTML or Figma. Even after a few iterations, every design I got was Material, and it was difficult to generate a native iOS design. Let me know if you have any success with it!

**Gemma 3n** is Google's mobile-first AI approach, a model that runs on just 2GB of RAM. They have a project showcasing interesting possibilities on Android, with iOS coming soon.

## Sam & Jony's Hardware Play

Sam Altman and Jony Ive announced their collaboration on **"io"**, a new AI hardware venture. Details are still scarce, but the combination of OpenAI's AI capabilities with Ive's design philosophy has everyone speculating.

For those of us in the Apple ecosystem, this feels significant. Ive's understanding of human-computer interaction combined with OpenAI's frontier AI models could produce something different from the usual "AI gadget" attempts we have seen. The timing, just before Apple's WWDC and during Google I/O, feels a bit intentional.

## Claude 4: Opus and Sonnet Back to Claim Their Crown

Anthropic released their **Claude 4** family of models on May 22nd, and they are positioning the new **Opus 4** and **Sonnet 4** models as the best coding models in the world. The benchmark numbers are impressive, with a 72.5% score on SWE-bench for Opus 4 and 72.7% for Sonnet 4.

Opus 4 can work continuously for several hours, making it ideal for agentic workflows and complex problem-solving. Sonnet 4 is the more balanced option for everyday tasks, bringing its capabilities at a more accessible price point.

I have never used a model as much as I have used Sonnet 4 since its release. Hundreds of requests in VS Code and Cursor, and it is *the* best coding model I have used so far. I know many folks prefer o3 by OpenAI as the best one, but Sonnet 4 offers the best ROI at its price.

It takes the best of both Claude 3.7 Sonnet and Gemini 2.5 Pro, has a great understanding of context, and is trained to be an autonomous agent. I am using it for everything from writing code to debugging and even writing.

Fun fact: The first draft of this newsletter was written with Sonnet 4!

## WWDC 2025: AI Convergence

With WWDC 2025 set for June 9-13, we are less than two weeks away from Apple's reveal. The rumors around Apple Intelligence and AI integration are slowly building, with hints of an updated Calendar app and AI health coach integration.

There were rumors about Xcode 17 being powered by Anthropic's models, and with the release of Sonnet 4, I am particularly interested in covering it, sweating in Apple Park to watch the pre-recorded keynote.

## What's Next

We are past the novelty phase. These AI tools are becoming productivity multipliers, but *only* if you invest the time to understand their strengths and limitations while keeping pessimism aside.

**AiOS Dispatch 11** (Rudrank Riyam, Mon, 19 May 2025 06:43:34 GMT)

https://aiosdispatch.com/posts/aios-dispatch-11


## Preparing for WWDC 2025

Following the tradition of preparing for WWDC, I wrote about how I plan to make the most out of it!

I'm preparing for **WWDC 2025** with strategies for maximizing conference experiences and staying up-to-date with the latest iOS development trends.

## Alex Sidebar 3.1: More Xcode Love

Alex Sidebar rolled out version **3.1** last week, building on the already impressive 3.0 release. I have been testing it, and it is finally making Xcode feel less like a chore.

My favorite feature is the new **console log reading**: Alex can read Xcode's runtime logs, so that is one less copy-paste job for you.

There is also **simulator testing** with **vision** support, so Alex can click around, enter text, and scroll while you see every step of the way. It is a game-changer for UI testing and interaction debugging (macOS app support is planned for later).

Another feature that I really wanted is multi-folder indexing, which is great for SPM packages or monorepos. It can also index DerivedData, so your remote SPM packages are available as well!

## Windsurf & SWE-1

Windsurf dropped two of its own models last week, **SWE-1** and **SWE-1 Lite**, and I am impressed: they are *almost* on par with frontier models. They are free right now, but I assume they will cost **0.5x** credits for SWE-1 and **0.25x** credits for the lighter one.

I got Windsurf's Pro plan ($15/month) to try it out, and here are my impressions:

- Faster than other SOTA models, both in general and at tool use

- Knowledge cutoff of Oct 2024, so it does not know the latest SwiftUI APIs, unlike Gemini 2.5 Pro with Jan 2025

- Good at instruction following and building features

- I will still prefer Gemini 2.5 Pro, Sonnet 3.7, or o3 for complex tasks

## AiOS Meetups: Chicago, NYC, SF and Cupertino

I like to meet new folks in the iOS community, and with my focus now on making the most out of AI, I decided to host casual meetups called **AiOS Meetups**. Here are the details:

- [**Chicago:** May 30, 2025](https://www.icloud.com/invites/0d5m9g6cK5QzTkYLcv2amea_A?ref=rudrank.com)

- [**New York:** June 4, 2025](https://www.icloud.com/invites/024Lc4WZ1xkEeLokJCwF6g1Rg?ref=rudrank.com)

- [**San Francisco:** June 5, 2025](https://www.icloud.com/invites/03aqTWSztl0jnPbOdA1jH12Fw?ref=rudrank.com)

- [**Cupertino (A big one)**: June 11, 2025](https://lu.ma/aios-omt?ref=rudrank.com), alongside One More Thing Conference.

## What's Next

Intelligence used to be 'too cheap to meter'. Now, it is effectively free. So, what are you shipping this week with the leverage of AI?

**AiOS Dispatch 10** (Rudrank Riyam, Mon, 12 May 2025 15:51:20 GMT)

https://aiosdispatch.com/posts/aios-dispatch-10


— Rudrank Riyam (@rudrankriyam) [May 11, 2025](https://twitter.com/rudrankriyam/status/1921583560567185669?ref_src=twsrc%5Etfw&ref=rudrank.com)

### Web Search and Terminal Access

**Web Search** also got a neat upgrade. Earlier, it was a one-off command, but now Alex can use web search *in between chats* when it needs current info, which is great because context sometimes shifts mid-conversation. Super useful for those tricky SwiftUI API questions.

Also, Alex can run shell commands directly in your project directory!

### Local Model Support & Server Bypass

One of my absolute favorite features in 3.0: **local model support**. You know how I love playing around with local models, and Alex now lets you connect directly to your Ollama and LM Studio instances!

Being able to use Qwen 3 30B during a 16-hour flight from Delhi to San Francisco? Yes please.

We’re officially releasing the quantized models of Qwen3 today!

Now you can deploy Qwen3 via Ollama, LM Studio, SGLang, and vLLM — choose from multiple formats including GGUF, AWQ, and GPTQ for easy local deployment.

Find all models in the Qwen3 collection on Hugging Face and…

— Qwen (@Alibaba_Qwen) [May 12, 2025](https://twitter.com/Alibaba_Qwen/status/1921907010855125019?ref_src=twsrc%5Etfw&ref=rudrank.com)

Plus, the server bypass is a good deal for enterprises, which can now route Alex through their own AI proxy endpoints. This should help developers at companies with corporate AI policies convince leadership that their codebase stays fully compliant.

If you are living in Xcode, Alex is your AI partner so you do not feel the FOMO of other developers enjoying Cursor or VS Code!

## Cursor 0.50: Halfway There

Cursor is currently rolling out version **0.50** with simplified pricing, like Windsurf did last week. All models, whether Gemini 2.5 Flash or Pro, are treated the same. With **Max Mode**, they are going after Cline and Roo Code by offering token-based usage.

### Tab Tab Tab Across Files

While I am still getting used to tabbing across multiple places in a file, we now have a new Tab model that suggests changes across multiple files! I was working on a manager class, and it then suggested a change to the API endpoint structure. The best part is that they have added syntax highlighting to completion suggestions, so if you use the Xcode theme, it feels right at home:

*Exploring Cursor: Midnight Theme for Cursor and VS Code* (Rudrank Riyam): Discover how to install the Xcode Midnight theme for VS Code and Cursor. This guide walks you through downloading the GitHub repository, packaging it as a .vsix file using Node.js and vsce, and installing it to enjoy that perfect balance of dark background with clear, contrasting text.

### Inline Editing

One thing that annoyed me last year was the scope of inline edit (Command + K): it did not have the context of the rest of the file when making a change. Now, there is a new setting that lets you edit full files!

### Entire Codebase as Context

While you are still limited by the LLM's token limit, you can use `@folders` to add the entire codebase to the context. Enable `Full folder contents` in settings so that it actually adds the contents of the folder instead of just a tree outline of the folder structure.

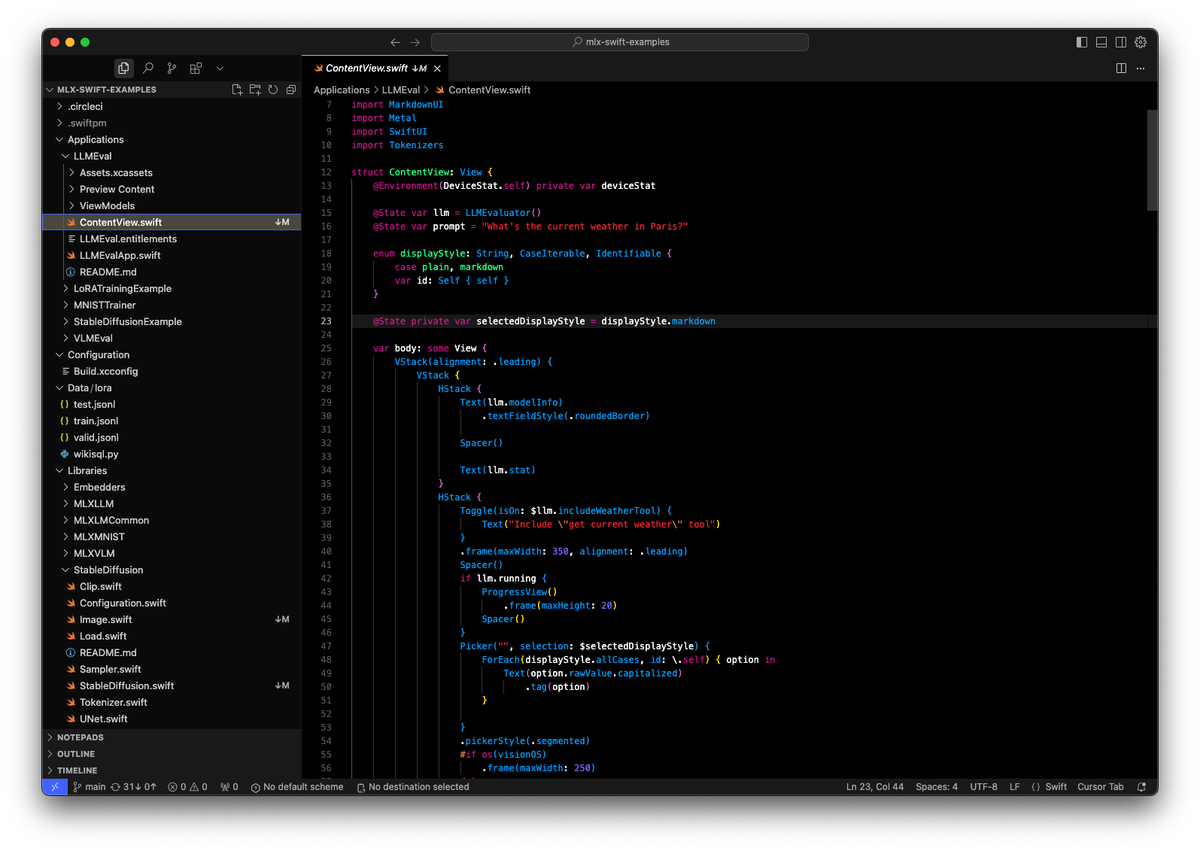

I tried it with the Library folder of MLX Swift Examples and saw a message that 60/78 files were condensed to fit in the context. I know that the folder is easily 120-150K tokens.

If I enable the **Max Mode** with Gemini 2.5 Pro, that small warning icon vanishes. You gotta shell out for the chunky context window.
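To check ahead of time whether a folder is likely to overflow the context window, a rough estimate is enough. This sketch uses the common rule of thumb of about four characters per token for English text and code. It is an approximation, not how any specific model's tokenizer counts, and `estimate_tokens` is a hypothetical helper.

```bash
# estimate_tokens DIR [EXT]
# Rough token estimate for all files with extension EXT (default: swift)
# under DIR, using ~4 bytes per token as a heuristic.
estimate_tokens() {
  local dir="$1" ext="${2:-swift}"
  local bytes
  # Concatenate every matching file and count bytes (0 if nothing matches).
  bytes=$(find "$dir" -type f -name "*.$ext" -exec cat {} + | wc -c)
  echo $(( bytes / 4 ))
}

# Usage: estimate_tokens path/to/folder
```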

### Workspaces and Codebases

I have been using Cursor as the single IDE for both iOS and back-end work by putting them in the same folder. Now, you can have a multi-root "workspace" by adding multiple codebases, similar to an Xcode workspace that references multiple projects.

## Wave 8 of Windsurf

Windsurf continued their Wave 8 rollout, making Cascade, their AI agent, more powerful and personalized than ever.

### Custom Workflows

When working with an iOS development workflow, you can define **Workflows** to clean, build, and run after every new feature added or issue solved:

- You create a simple markdown file (e.g. `build-and-run.md`) inside a `.windsurf/workflows/` folder in your repo.

- You invoke it directly in Cascade with a slash command like `/build-and-run`. Since these live in the repository, your whole team gets access and can refine them.

You can even ask Cascade to turn a successful conversation into a Workflow file automatically!
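A workflow file is just markdown instructions, so what matters is the commands it asks Cascade to run. Here is a minimal sketch of clean, build, and run steps for an iOS app that such a workflow could point to. The scheme, simulator name, and bundle identifier are placeholders, and the `.app` path assumes a Debug build whose product name matches the scheme.

```bash
# build_and_run SCHEME SIMULATOR BUNDLE_ID
# Cleans and builds the scheme for an iOS Simulator, installs the app on the
# booted simulator, and launches it. All three arguments are placeholders.
build_and_run() {
  local scheme="$1" simulator="$2" bundle_id="$3"
  local derived="build"

  # Clean and build into a known DerivedData folder so the .app path is predictable.
  xcodebuild -scheme "$scheme" \
    -destination "platform=iOS Simulator,name=$simulator" \
    -derivedDataPath "$derived" clean build || return 1

  # Boot the simulator; ignore the error if it is already booted.
  xcrun simctl boot "$simulator" 2>/dev/null || true

  # Assumes the product name matches the scheme name.
  xcrun simctl install booted "$derived/Build/Products/Debug-iphonesimulator/$scheme.app" || return 1
  xcrun simctl launch booted "$bundle_id"
}

# Usage: build_and_run MyApp "iPhone 16" com.example.MyApp
```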

### Cascade Plugins & MCP

My favorite feature of this release is better MCP integration with easy plugins. MCPs are great but their UX is not.

Windsurf added a new **Plugins panel** to discover and manage verified and connected MCP servers. You can also **disable individual tools** within an MCP server if you do not need everything it offers!

The free plan still includes Cascade Base with okayish tool-calling so if you want to try out Windsurf, this is one way without committing to a paid plan.

## What's Next

All the tools are slowly converging feature-wise with the aim of **autonomous development**.

Whether you prefer Xcode with Alex Sidebar, or vibe code in Cursor or Windsurf, or use VS Code as a backup, there is something for everyone.

All you need to do is ship, iterate on the feedback, and enjoy your coding sessions!

**AiOS Dispatch 9** (Rudrank Riyam, Wed, 07 May 2025 23:54:16 GMT)

https://aiosdispatch.com/posts/aios-dispatch-9


> Updated paper & results coming soon. Stay tuned! 👀

— Pavankumar Vasu (@PavankumarVasu) [May 7, 2025](https://twitter.com/PavankumarVasu/status/1919966043922915482?ref_src=twsrc%5Etfw&ref=rudrank.com)

Built for on-device inference, **FastVLM** runs smoothly on iPhones and Macs. You can use it to power your iOS app with features like real-time text recognition, accessibility tools, or augmented reality experiences.

**apple/ml-fastvlm** (GitHub): This repository contains the official implementation of “FastVLM: Efficient Vision Encoding for Vision Language Models” (CVPR 2025).

I plan to update my project, **SmolLens**, to use this model:

**rudrankriyam/SmolLens** (GitHub): Implementation of Visual Intelligence Using SmolVLM 2 by Hugging Face

## WWDC 2025: The Future of Xcode AI

The dub dub 2025 hype cycle calls for Apple partnering with Anthropic to integrate **Claude** into Xcode.

*Apple and Anthropic reportedly partner to build an AI coding platform* (TechCrunch, Maxwell Zeff): Apple and Anthropic are reportedly teaming up to build ‘vibe-coding’ software that will use AI to write, edit, and test code for programmers.

With the flop of Swift Assist (and the hours I wasted hoping), this could finally be Apple’s answer to Cursor and Windsurf, although I cannot imagine Craig on the stage talking about a “vibe-coding” platform. Well, maybe I can.

This partnership could *potentially* mean a fine-tuned Claude model trained on everything related to Apple platform development, which no other model or AI tool can provide. With Apple's privacy flex and the massive distribution of having it directly in Xcode, it could also entice enterprises.

No context-switching or workarounds, at all!

## AiOS Meetup at One More Thing Conference!

Mark your calendars for **Wednesday, June 11, 2025**, because the **AiOS Meetup** is happening at the One More Thing Conference in Cupertino, right alongside WWDC 2025!

I will be giving an impromptu talk on **“What’s New in AI/ML at WWDC 2025”**, exploring the freshest APIs after 1.5 days of experimenting. I love feeding my shiny object syndrome and this is the perfect opportunity for me.

Post-talk, we are hosting a **casual AiOS Meetup** in partnership with One More Thing. It is a good chance to connect with like-minded devs in a chill vibes space, and probably rant about Apple Intelligence over coffee.

Grab the free tickets at [omt-conf.com](https://omt-conf.com/?ref=rudrank.com) and join the AI party!

## What's Next

I am overwhelmed with the announcements over the past few weeks, and am looking into AI tools to help write this AiOS Dispatch.

Gemini Deep Research and Grok DeepSearch are what I use for research (along with Twitter!), while I write the words on my own with the context of previous dispatches using Repo Prompt.

What are your thoughts on these updates?

I have been busy writing my new book after months of succumbing to imposter syndrome. The pre-order for my new book on **AI + iOS Development** is out!

There is loads to write before WWDC 2025, with free incremental updates afterwards! You can buy now to support my work, or follow along with my writing journey!

*Exploring AI for iOS Development* (Rudrank Riyam's Academy): From Apple Intelligence to on-device models using MLX Swift, there is everything that you need to know about working with AI on Apple Platforms.

I also updated my other book with new chapters on **XcodeBuildMCP** and am slowly updating it for the latest Cursor, Windsurf, VS Code, and Alex Sidebar:

*Exploring AI-Assisted Coding for iOS Development* (Rudrank Riyam's Academy): Everything AI Assistance with Xcode

Enjoy reading!

**AiOS Dispatch 8** (Rudrank Riyam, Tue, 22 Apr 2025 15:56:14 GMT)

https://aiosdispatch.com/posts/aios-dispatch-8

**AiOS Dispatch 7** (Rudrank Riyam, Sun, 13 Apr 2025 05:01:37 GMT)

https://aiosdispatch.com/posts/aios-dispatch-7


## Optimus Alpha

I have been testing this 32K max output model via Alex Sidebar and I like it. I do not think this will be my default model because Gemini 2.5 Pro is still better for me, but it is a decent contender for a general-purpose model.

For iOS work, the faster code generation is fantastic for quick prototyping or utility functions.

Daily usage in the tens of billions of tokens signals massive developer interest. But again, it is available for free, and the cloaked lab is collecting data to improve the model. If that does not bother you, give it a try!

## What's Next

I am almost done with my vacation, and I miss the grind of working 12+ hours a day. I am working on a new book on MLX Swift + App Intents (later on, but before WWDC 2025) and am hyped about getting it into the hands of developers.

Expect this dispatch twice a week, with more in-depth coverage and more news tailored for you as an iOS/macOS developer.

Happy exploring!

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 6]]>Sun, 06 Apr 2025 03:16:19 GMT

https://aiosdispatch.com/posts/aios-dispatch-6

https://aiosdispatch.com/posts/aios-dispatch-6

— Rudrank Riyam (@rudrankriyam) [April 3, 2025](https://twitter.com/rudrankriyam/status/1907936789995860333?ref_src=twsrc%5Etfw&ref=rudrank.com)

I will be in Cupertino from the 7th to the 14th of June, so if you are attending the iOS developers' yearly pilgrimage, let me know!

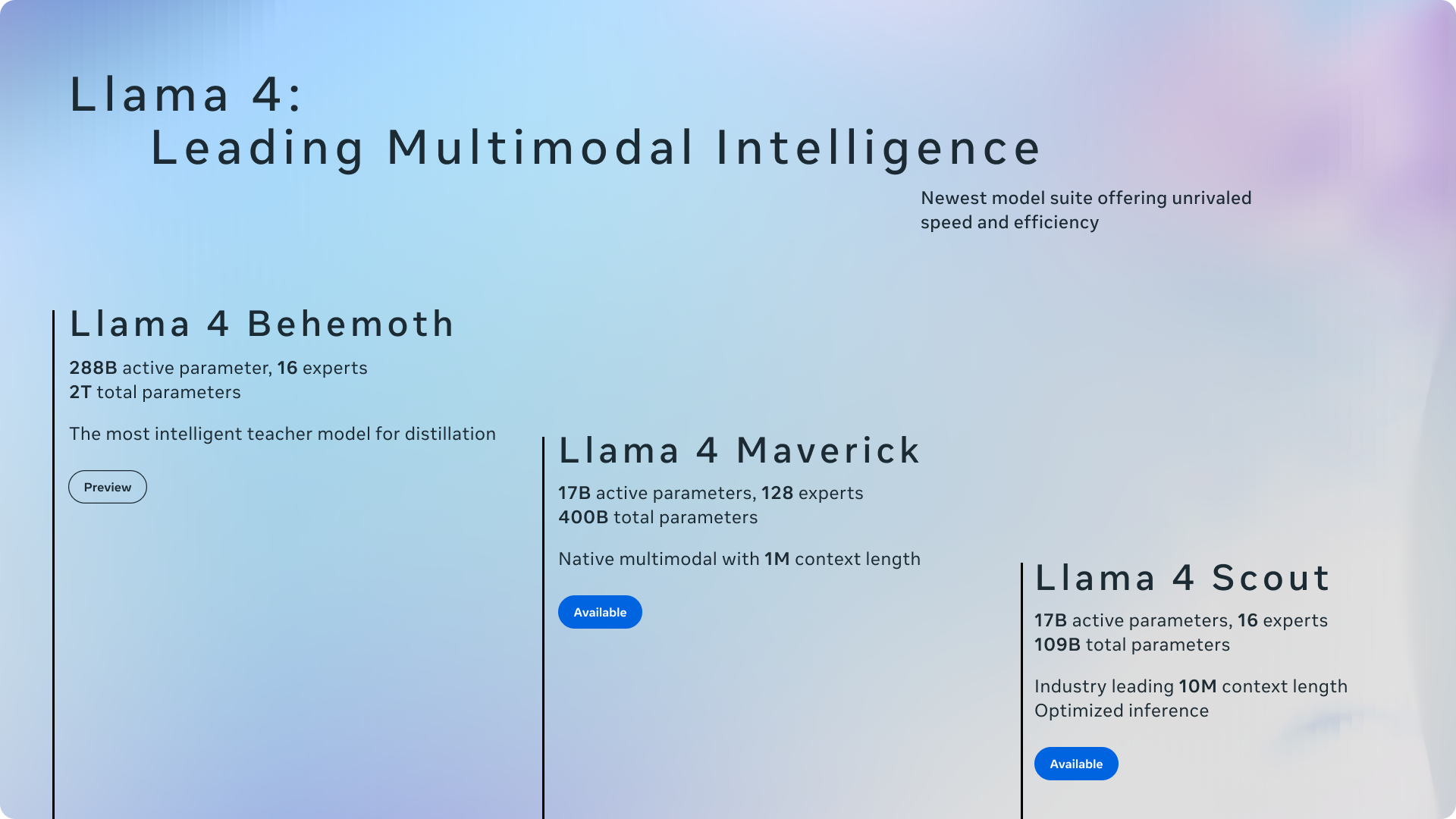

Llama 4 Herd

Meta dropped the Llama 4 family, because who does not love a Saturday surprise? We have got two models right now: Llama 4 Scout and Llama 4 Maverick, with a third, Llama 4 Behemoth, still in training.

I have been experimenting with these using Together AI and Groq. Based on my initial impressions, they are decent models, but not SOTA.

**The Llama 4 herd: The beginning of a new era of natively multimodal AI innovation**: We’re introducing Llama 4 Scout and Llama 4 Maverick, the first open-weight natively multimodal models with unprecedented context support and our first built using a mixture-of-experts (MoE) architecture.

**Llama 4 Scout**

First up, **Llama 4 Scout**. This one is lean, with 17 billion *active* parameters, 16 experts, and a **jaw-dropping** 10 million-token context window. That is enough to summarize an entire codebase. I doubt the quality of the output for anything above 200K tokens, though.

Meta claims it outshines Google's Gemma 3, Gemini 2.0 Flash-Lite, and Mistral 3.1 on benchmarks. I threw the MLX Swift examples codebase (266K tokens) at it via Together AI, and it produced a decent workflow for adding local LLM support to an app.

**Llama 4 Maverick**

Then there is **Llama 4 Maverick**, the bigger multimodal sibling. It also has 17 billion *active* parameters, but with 128 experts and 400 billion total parameters. This one has a 1 million-token context window, which is still massive. It is tuned for chat and creative tasks, and Meta brags that it beats GPT-4o and Gemini 2.0 Flash on coding, reasoning, and image tasks.

It is cheaper than proprietary models, with inference costs around $0.19–$0.49 per million tokens. I still think the updated DeepSeek V3 is a better model than this one.
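The "active" versus "total" split is the core mixture-of-experts trade-off: a router sends each token to only a few experts, so per-token compute tracks the active count (17B), while every expert (400B total for Maverick) still has to be loaded into memory. Here is a minimal, generic top-k router in Swift to make that concrete. It is a sketch under stated assumptions: the toy experts, the `routeTopK` name, and the value of k are hypothetical, and it does not describe how Llama 4 itself routes tokens.

```swift
import Foundation

// Illustrative only: a generic top-k mixture-of-experts router, not Meta's Llama 4 code.
// Each hypothetical "expert" maps an input vector to an output vector.
typealias Expert = ([Double]) -> [Double]

/// Numerically stable softmax.
func softmax(_ logits: [Double]) -> [Double] {
    let maxLogit = logits.max() ?? 0
    let exps = logits.map { exp($0 - maxLogit) }
    let sum = exps.reduce(0, +)
    return exps.map { $0 / sum }
}

/// Runs only the k highest-scoring experts for one token and mixes their outputs.
/// All experts must exist in memory, but only `k` of them do any work here.
func routeTopK(input: [Double], routerLogits: [Double],
               experts: [Expert], k: Int) -> (output: [Double], active: [Int]) {
    precondition(routerLogits.count == experts.count && k > 0 && k <= experts.count)
    // Indices of the k largest router logits.
    let active = Array(routerLogits.indices
        .sorted { routerLogits[$0] > routerLogits[$1] }
        .prefix(k))
    // Gate weights are renormalized over the chosen experts only.
    let gates = softmax(active.map { routerLogits[$0] })
    var output = [Double](repeating: 0, count: input.count)
    for (gate, index) in zip(gates, active) {
        let expertOutput = experts[index](input)
        for i in output.indices { output[i] += gate * expertOutput[i] }
    }
    return (output, active)
}

// Four toy experts that scale the input by 1, 2, 3 and 4.
let experts: [Expert] = (1...4).map { factor -> Expert in
    { input in input.map { $0 * Double(factor) } }
}
let result = routeTopK(input: [1, 1], routerLogits: [0.1, 2.0, -1.0, 0.5],
                       experts: experts, k: 1)
print(result.active)  // [1]
print(result.output)  // [2.0, 2.0]
```

With `k: 1`, three of the four experts never run for this token, which is the same reason a 400B-parameter MoE model can be far cheaper per token than a dense model of that size.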

Llama 4 Behemoth

And then there is the tease: **Llama 4 Behemoth**. Still in training, this one boasts 288 billion *active* parameters, 16 experts, and nearly **2 trillion total parameters**. Meta is calling it a “teacher” model, distilling its wisdom into Scout and Maverick. There is no release date yet, but Meta claims it outperforms GPT-4.5 and Claude 3.7 Sonnet on STEM benchmarks.

I will wait for Behemoth for iOS development tasks, but for now, Gemini 2.5 Pro remains my default model for Alex Sidebar and Cursor.

The World of MCP

MCP this, MCP that.

If you have been anywhere near AI discussions in the past few months, you must have heard the acronym thrown around everywhere.

But when you see OpenAI and Microsoft jump on the bandwagon, you know it is time to start paying attention.

So, what exactly is MCP? It stands for **Model Context Protocol**, an open-source standard kicked off by Anthropic in late 2024. A common analogy is an adapter that connects large language models to external tools, APIs, databases, and more, without custom integrations for every single use case. The protocol *standardizes* how LLMs talk to these external systems, using a client-server setup where “MCP servers” expose tools and data that clients can tap into.
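Under the hood, MCP messages are JSON-RPC 2.0. A client asks a server to run one of its tools with the `tools/call` method, passing the tool's `name` and its `arguments`. Here is a minimal Swift sketch of that request, assuming a hypothetical `build_project` tool with a single `scheme` argument. Real servers such as XcodeBuild MCP define their own tool names and argument schemas, and MCP arguments can be any JSON object, not only strings.

```swift
import Foundation

/// A JSON-RPC 2.0 request asking an MCP server to run a tool.
/// `jsonrpc` and `method` are `var` with defaults so Codable can round-trip them.
struct ToolCallRequest: Codable, Equatable {
    var jsonrpc = "2.0"
    let id: Int
    var method = "tools/call"
    let params: Params

    struct Params: Codable, Equatable {
        let name: String                 // which tool the server should run
        let arguments: [String: String]  // simplified: real MCP arguments are arbitrary JSON
    }
}

// Hypothetical tool and argument names, for illustration only.
let request = ToolCallRequest(id: 1, params: .init(name: "build_project",
                                                   arguments: ["scheme": "MyApp"]))
let encoder = JSONEncoder()
encoder.outputFormatting = .sortedKeys
// Encoding plain strings and integers cannot fail, so `try!` is acceptable here.
let data = try! encoder.encode(request)
print(String(data: data, encoding: .utf8)!)
```

The server answers with a JSON-RPC response carrying the same `id`, which is how a client matches results to requests when several tool calls are in flight.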

**Xcode MCP**

One MCP server that is beneficial for iOS developers is **XcodeBuild MCP**. It provides Xcode-related tools for Claude Desktop and other AI editors.

Just wanted to share my new XcodeBuild MCP.

It allows any [#MCP](https://twitter.com/hashtag/MCP?src=hash&ref_src=twsrc%5Etfw&ref=rudrank.com) client to utilise Xcode more predictably. It can be used with Claude Desktop, [@cursor_ai](https://twitter.com/cursor_ai?ref_src=twsrc%5Etfw&ref=rudrank.com), [@windsurf_ai](https://twitter.com/windsurf_ai?ref_src=twsrc%5Etfw&ref=rudrank.com) and others.

— camsoft2000 (@camsoft2000) [March 27, 2025](https://twitter.com/camsoft2000/status/1905185519165747605?ref_src=twsrc%5Etfw&ref=rudrank.com)

There is a growing MCP ecosystem, but I still feel friction in the learning curve of setting up MCP servers. Whoever nails the UX will benefit loads by bringing MCP to the majority.

GitHub’s MCP Server

GitHub boarded the train as well with their official MCP server that plugs into their APIs.

**GitHub - github/github-mcp-server**: GitHub’s official MCP Server. (GitHub)

Automating PRs, fetching file diffs, or listing issues without leaving your AI tool of choice is another way to reduce friction in your iOS development workflow!

What's Next

There is a lot to cover in the coming weeks, but I feel this was the gist for you this week.

Also, next week I am heading to the biggest iOS conference after WWDC: **try! Swift Tokyo**

**try! Swift Tokyo**: Developers from all over the world will gather for tips and tricks and the latest examples of development using Swift. The event will be held for three days from April 9 - 11, 2025, with the aim of sharing our Swift knowledge and skills and collaborating with each other!

There is one talk on AI-assisted Swift that I am looking forward to. If you are attending the conference or live in Tokyo, let me know and we can meet!

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 5]]>Tue, 25 Mar 2025 23:41:09 GMT

https://aiosdispatch.com/posts/aios-dispatch-5

https://aiosdispatch.com/posts/aios-dispatch-5

— Rudrank Riyam (@rudrankriyam) [March 25, 2025](https://twitter.com/rudrankriyam/status/1904600753605664990?ref_src=twsrc%5Etfw&ref=rudrank.com)

Alex 2.5: The Sidebar with a Massive Update

Alex Sidebar dropped version 2.5 today, and while I was not involved in developing the new features, I spent time testing it behind the scenes. Here are some of my favorite quality-of-life improvements:

- **Screenshot Tool**: With a quick keyboard command, you can take a snapshot of the design you are working on, and it is automatically added to the context.

- **Multi-file Apply/Undo**: This was my most-wanted feature as a vibe coder. An "apply all" button creates new files and edits across files with just one click, and you can roll back with checkpoints if you mess up.

- **Code Maps**: This feature adds more relevant context by understanding how files interact with each other, resulting in better code generation.

- **Quick File Edits**: The new file assistant helps you *quickly* add properties, refactor files, add coding keys, etc.

- **DeepSeek V3 Integration**: Speaking of last dispatch’s whale, Alex now has support for DeepSeek V3 0324, a fast and decent model for iOS development.

Also, I am proud of the new Traditional Chinese, Korean, and Japanese localizations in this release!

Gemini 2.5: Google’s New Context Monster

As mentioned in the last dispatch, I love Google's models for longer context. And here we are with another one, Gemini 2.5 Pro Experimental:

- **1M-Token Context (2M Soon) and 65K Output Tokens**:

  - You can probably fit a medium-sized repository in here, and it will keep up.

  - I threw parts (830K tokens) of the [Firefox for iOS](https://github.com/mozilla-mobile/firefox-ios?ref=rudrank.com) app at it using uithub.com.

  - After 34 seconds of thinking and 74 seconds of writing the answer, I was impressed by the quality of the document I had asked for: an onboarding guide for a junior engineer new to the codebase.

It is available now in [Google AI Studio](https://aistudio.google.com/?ref=rudrank.com) and the Gemini app for Advanced users. I have been vibing with it in the Studio and Alex Sidebar via OpenRouter.

**Gemini Pro 2.5 Experimental (free) - API, Providers, Stats**: Gemini 2.5 Pro is Google’s state-of-the-art AI model designed for advanced reasoning, coding, mathematics, and scientific tasks. Run Gemini Pro 2.5 Experimental (free) with API. (OpenRouter)

It is one of the best models out there, with the most recent knowledge cutoff date, January 2025. You can ask it about the latest iOS 18 APIs, and it handles them well. The biggest downside is the limit of 5 messages per minute, which you have to live with for now.
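If you call the free endpoint from your own scripts, a client-side throttle keeps you under that limit instead of collecting rate-limit errors. Here is a minimal sliding-window sketch in Swift, assuming the limit is exactly 5 requests in any rolling 60-second window. The provider's actual accounting may differ, and `SlidingWindowLimiter` is a hypothetical name, not part of any SDK.

```swift
import Foundation

/// A client-side sliding-window limiter (hypothetical helper, not an SDK type).
/// Assumes calls arrive in chronological order.
struct SlidingWindowLimiter {
    let limit: Int
    let window: TimeInterval
    private var timestamps: [Date] = []

    init(limit: Int, window: TimeInterval) {
        self.limit = limit
        self.window = window
    }

    /// Returns how long to wait before sending (0 means send now).
    /// A request is recorded only when it is allowed.
    mutating func delayBeforeNextRequest(at now: Date = Date()) -> TimeInterval {
        // Forget requests that have left the rolling window.
        timestamps.removeAll { now.timeIntervalSince($0) >= window }
        if timestamps.count < limit {
            timestamps.append(now)
            return 0
        }
        // The oldest request still in the window decides when a slot frees up.
        return window - now.timeIntervalSince(timestamps[0])
    }
}

var limiter = SlidingWindowLimiter(limit: 5, window: 60)
let start = Date(timeIntervalSince1970: 0)
for i in 0..<6 {
    let wait = limiter.delayBeforeNextRequest(at: start.addingTimeInterval(Double(i)))
    print("request \(i): wait \(wait)s")
}
```

The caller sleeps for the returned delay and asks again. Passing `now` explicitly keeps the logic testable without real waiting.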

What's Next

Start sketching some iOS 19 ideas now, especially using **App Intents**. The best part of Gemini 2.5 Pro is that you can feed it a whole WWDC session and then talk to it to learn about the new features faster.

This dub dub will be fun! What are your thoughts about WWDC 2025?

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 4]]>Mon, 24 Mar 2025 19:29:57 GMT

https://aiosdispatch.com/posts/aios-dispatch-4

https://aiosdispatch.com/posts/aios-dispatch-4

— Rudrank Riyam (@rudrankriyam) [March 14, 2025](https://twitter.com/rudrankriyam/status/1900656443197460656?ref_src=twsrc%5Etfw&ref=rudrank.com)

It was interesting talking to iOS developers from European countries who had barely heard of Cursor and used ChatGPT to copy/paste code or Copilot for completions. Some hesitated to learn another IDE, while others called Xcode and its predictive code completion home because of security requirements.

My close friends enjoy coding manually, and I do not want to hamper our friendship by pushing them to try AI coding tools.

**In the end, what matters is that you are productive and happy being productive, with or without AI-assisted coding.**

Another One from the Whale: DeepSeek V3 0324

DeepSeek is back with an updated version of V3, V3 0324. I have been testing this model for the past half hour on [Hugging Face](https://huggingface.co/deepseek-ai/DeepSeek-V3-0324?ref=rudrank.com) and [Alex Sidebar](https://alexcodes.app/?ref=rudrank.com), and it is the best chat model I have tried recently.

Tested the new DeepSeek V3 on my internal bench and it has a huge jump in all metrics on all tests.

It is now the best non-reasoning model, dethroning Sonnet 3.5.

Congrats [@deepseek_ai](https://twitter.com/deepseek_ai?ref_src=twsrc%5Etfw&ref=rudrank.com)!

— Xeophon (@TheXeophon) [March 24, 2025](https://twitter.com/TheXeophon/status/1904225899957936314?ref_src=twsrc%5Etfw&ref=rudrank.com)

It follows instructions well, fixed the issues and errors I was facing in Xcode, and is pretty fast, depending on the provider you use. I tested it on plane Wi-Fi and still got a more than decent tokens/second rate!

Also, when I asked about scroll views, it told me to use `drawingGroup()` for complex graphics, which I found cool.

This will be my primary model for the week so expect the next dispatch to have more insights on making the most out of the whale! 🐳

Exploring AI-Assisted Coding Workshop

I ran the workshop (remotely, due to passport issues) for the [ARCtic Conference](https://arcticonference.com/?ref=rudrank.com), and again, it was interesting hearing the feedback from the attendees. Many still prefer ChatGPT because it is their go-to tool for everything, and they do not want to spend $20/month elsewhere. Others were reluctant to learn something new but felt the FOMO and, hence, attended the workshop.

Here are some tips I shared with the attendees that may be helpful to you. This is the list of *my go-to large language models* for Swift coding:

**Best Overall Model: Claude 3.5 Sonnet**

Even though Anthropic launched the Claude 3.7 Sonnet model, I still cannot get the best out of it. 3.5 is still the model I mostly use for iOS/macOS development in SwiftUI.

**Second-Best Overall: Grok 3 + Reasoning**

Leaving aside the debate regarding the owner of xAI, I love using Grok 3 for Swift development and planning. I try to maximize its context window, and I am eagerly waiting for its API so I can use it more in different AI tools.

**Best Larger Context Model: Gemini 2.0 Pro/Flash (1M–2M Tokens)**

When digging through bigger codebases like MLX Swift, I love using Google’s models, especially `Gemini 2.0 Pro Experimental 02-05`, a context monster. You can feel the latency when it is processing those 500K tokens, but the output is usually worth the wait.

**Best Planning Models: o3-mini-high or DeepSeek R1**

For planning and structured tasks before diving into vibe coding, I prefer OpenAI’s o3-mini-high because of its subtle approach to the topics. The overthinking model DeepSeek R1 is another great pick, but I rarely use it since the launch of o3-mini.

**Best Local Model: It Depends**

The best local model depends on what your Mac can run. A MacBook might struggle with Qwen 2.5 Coder 14B, while a Mac Studio can run a quantized version of DeepSeek R1.

My favorite one right now is **Mistral 3.1 24B**, which can run on a Mac with 32GB RAM when quantized (4-bit via GGUF or MLX formats). A 24GB setup might work with *aggressive* quantization, but it is better to have 32GB–48GB RAM, especially for its 128K context.
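These RAM figures follow from simple arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8 to get bytes. Here is a rough Swift sketch, assuming decimal gigabytes and counting weights only. The KV cache for a long context, activations, quantization scales, and whatever else macOS is running all come on top, and `weightMemoryGB` is just an illustrative helper.

```swift
/// Rough weight-only memory estimate in decimal gigabytes.
/// Ignores the KV cache, activations, quantization scales, and other apps.
func weightMemoryGB(parameters: Double, bitsPerWeight: Double) -> Double {
    parameters * bitsPerWeight / 8 / 1_000_000_000
}

let parameterCount = 24e9  // a 24B-parameter model such as Mistral 3.1 24B
for bits in [16.0, 8.0, 4.0] {
    let gb = weightMemoryGB(parameters: parameterCount, bitsPerWeight: bits)
    print("\(Int(bits))-bit: ~\(gb) GB of weights")
}
// 16-bit: ~48.0 GB of weights
// 8-bit: ~24.0 GB of weights
// 4-bit: ~12.0 GB of weights
```

At 4-bit, the weights alone take about 12 GB before any context is loaded, which is why the extra headroom of a 32GB–48GB machine matters for a 128K context.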

The new Mistral model is a pretty good contender for your local AI setup for iOS development:

— Rudrank Riyam (@rudrankriyam) [March 24, 2025](https://twitter.com/rudrankriyam/status/1904178193424081278?ref_src=twsrc%5Etfw&ref=rudrank.com)

For full transparency, I am running this model via the API on OpenRouter because my 16GB M1 Pro MacBook is not good enough, and I spent too much on travel to justify a new MacBook. One day!

**Gemma 3 27B** is a decent model for local coding on modest hardware (24GB–48GB RAM MacBooks). Use it when you want to prioritize speed.

**Llama 3.3 70B** is best with a beefier machine for broader, instruction-heavy coding tasks and deeper reasoning.

For lightweight or memory-constrained tasks, **Mistral 7B** was my first love and still has a place in my heart.

One piece of advice I shared was to treat the model as a **cracked** fresh graduate who joined your team.

They are so full of enthusiasm, but even they have a limit before they get overwhelmed. So, keep the context focused, short, and to the point.

Provide enough context for a head start but not too much to overload the poor model!

Vibe Coding

The term "vibe coding" was coined by the sensei of AI, Andrej Karpathy, while messing around with code for fun.

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper…

— Andrej Karpathy (@karpathy) [February 2, 2025](https://twitter.com/karpathy/status/1886192184808149383?ref_src=twsrc%5Etfw&ref=rudrank.com)

What started as his experiment has since exploded, adopted by non-coders and developers alike who solely depend on prompts and let AI do the heavy lifting.

The idea of “vibe coding” is that you vibe by just using prompts, usually dictating with your voice and letting large language models code for you. If you face any issues or errors, you ask the models to look at them and fix them. You steer the output of the models as you want and update the prompts accordingly.

To put it more bluntly, you have no idea what you are doing and completely depend on the AI.

You *may* have an idea of what is happening and code everything yourself, but you prefer to save many keystrokes and only use the key that applies all the changes made by AI.

These two words were invented by someone who is great at programming in general. I feel that the term is now being abused as an excuse to avoid the process of learning, but you eventually pay the price with AI slop.

Or you do not, if you practice *responsible vibe coding*. It takes a bit more effort and is basically normal coding, but the term is definitely fancier.

It is also fun to see sensei vibe coding an iOS app!

I just vibe coded a whole iOS app in Swift (without having programmed in Swift before, though I learned some in the process) and now ~1 hour later it's actually running on my physical phone. It was so ez... I had my hand held through the entire process. Very cool.

— Andrej Karpathy (@karpathy) [March 23, 2025](https://twitter.com/karpathy/status/1903671737780498883?ref_src=twsrc%5Etfw&ref=rudrank.com)

You can go through the thread to find the shared ChatGPT conversations, but I read them, so you do not have to. Here are my observations:

- Extremely simple and clear prompts. This is his first iOS app, so ChatGPT holds his hand. He reiterates that it should be iOS only, with no other platforms.

- He follows the instructions and goes on to dictate the requirements of the app, step by step:

  - The basic skeleton of the app first,

  - Then, functionality via different buttons,

  - Which ends up with basic SwiftUI view refresh errors (which, in my opinion, Claude could have handled better),

  - He hands the errors back to fix them and get the functionality working,

  - Then iterates on the UI, and

  - Finally adds persistence while asking for explanations.

- And he gets into the tangled mess of renewing his Apple Developer membership, where even AI cannot help. 😄

Ultimately, he got the app he wanted without stressing over the intricacies of the Swift language or hitting any Swift 6 issues, hah.

What's Next

Vibe coding is here to stay, but it is on us to keep it responsible rather than sloppy, or we end up miserable. The next dispatch will be from somewhere in Japan: Tokyo, Osaka, or Fukuoka! 🇯🇵

Let me know your thoughts on this dispatch! If you like it, please hit the thumbs up button because this one took a long time to iterate on, and I do appreciate some validation.

A question: are you vibe coding these days or clearing up the AI slop?

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 3]]>Thu, 13 Mar 2025 16:13:38 GMT

https://aiosdispatch.com/posts/aios-dispatch-3

https://aiosdispatch.com/posts/aios-dispatch-3

— Meng To (@MengTo) [March 7, 2025](https://twitter.com/MengTo/status/1897825142711238869?ref_src=twsrc%5Etfw&ref=rudrank.com)

While there are many AI IDEs and tools that provide better functionality, it is a useful feature for those who prefer to use the ChatGPT app for everything, including the `o1-pro` model with their $200/month subscription.

Alex Sidebar 2.4

Alex Sidebar announced their latest version **2.4** in the same week, and for full disclosure, I worked on some of the features.

The latest offering has **Swift 6 issue fixes support** (you are welcome), automatic file suggestions that come in handy when you know which file you want to add, and a new $50/month premium tier with Linear and GitHub Issues integrations.

We integrate most of cursor's features directly into Xcode! Plus we have a lot more swift-specific features that let you generate better (more compilable) swift code.

For example, we just released a "Fix Swift 6 Issues" button yesterday:

— Alex (@alexcodes_ai) [March 7, 2025](https://twitter.com/alexcodes_ai/status/1897834004885524732?ref_src=twsrc%5Etfw&ref=rudrank.com)

Manus AI Agent: Hype Train?

Manus AI Agent is a shiny new toy from China that is supposedly an autonomous task-crushing machine, handling everything from detailed stock analysis to insurance comparisons.

It is in private beta, running on Claude 3.5 Sonnet and Qwen. I still have not gotten an invite so I cannot tell if it passed the vibe check or not.

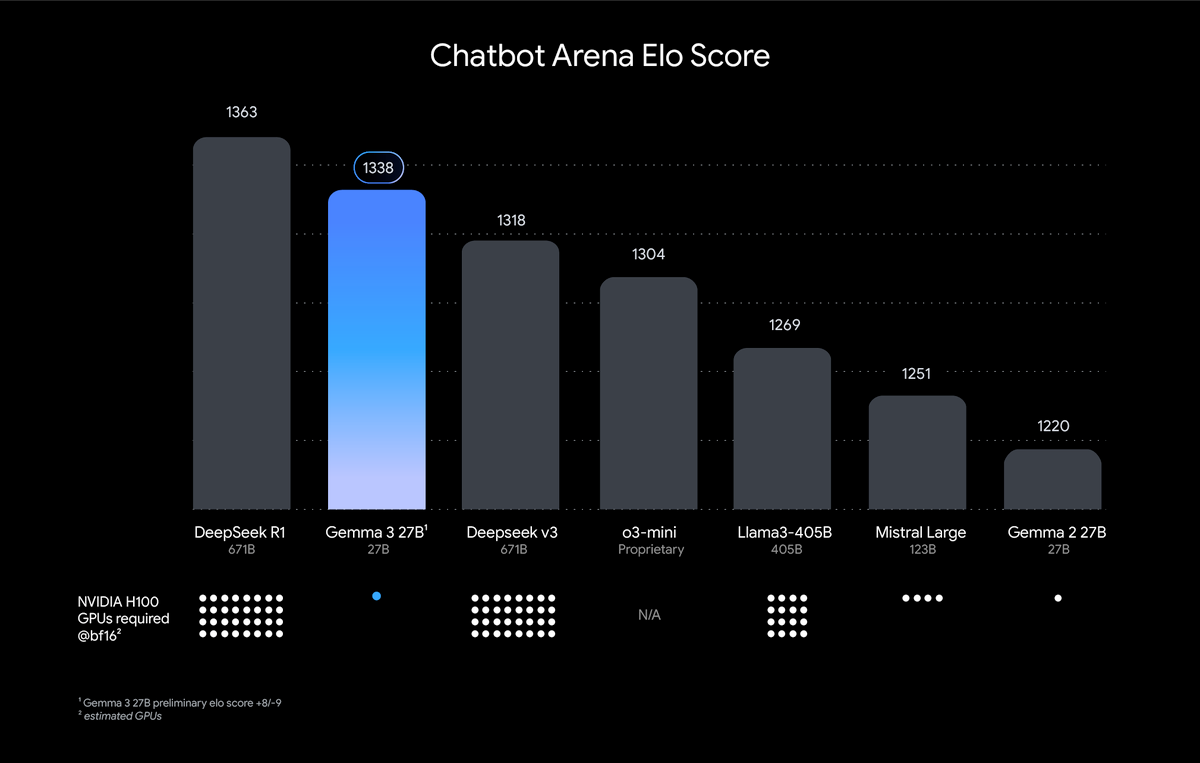

Google's Gemma Model Series: AI for Every Scale

Google is back with Gemma 3, offering a range of sizes from 1B to 27B parameters. Here is a quick rundown of the lineup:

- 1B Model: Perfect for text-only applications with a 32K token context window.

- 4B, 12B, and 27B Models: Support both text and image inputs with a massive 128K token context window.

The 27B model, in particular, is outperforming other SOTA models in human preference evaluations!

There is already a PR to get this model running on your iPhone using [MLX Swift](https://github.com/ml-explore/mlx-swift-examples/pull/238?ref=rudrank.com), and you will hear from me in the next dispatch about how the 1B and 4B models perform!

Apple Intelligence Stumbles

Apple Intelligence is under some fire as key Siri enhancements face **indefinite delays**, pushing the new era of Siri into eternity.

Announced at WWDC 2024, Apple Intelligence (AI for the rest of us) was supposed to make Siri more personalized with on-screen awareness and cross-app actions.

Users like me who bought an iPhone 16 or 16 Pro Max expecting these upgrades may feel a bit of a sting.

Xcode 16.3 was released without any indication of Swift Assist, leading the developer community to speculate that it might meet the same fate as Siri.

This WWDC 2025 will be a show to watch! 🍿

What's Next

If it has not been clear yet: the AI wave will only accelerate. Keep shipping and keep tinkering with your ideas out there. Build for the future and AI will keep up.

Happy exploring!

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 2]]>Thu, 06 Mar 2025 00:05:19 GMT

https://aiosdispatch.com/posts/aios-dispatch-2

https://aiosdispatch.com/posts/aios-dispatch-2

— Rudrank Riyam (@rudrankriyam) [March 5, 2025](https://twitter.com/rudrankriyam/status/1897287483152605513?ref_src=twsrc%5Etfw&ref=rudrank.com)

I have no plans to buy it, as I am eyeing the new Sky Blue Air with 32GB RAM for travel. I am so bullish on Apple's hardware, unlike their clunky software.

I won't talk about the cost of this machine because if you can take *advantage* of it, you know it is well worth the price.

OpenAI's $10k/Month

Speaking of costs, let's talk about those OpenAI rumors floating around:

- $2,000/month for "high-income knowledge workers"

- $10,000/month for software developers

- $20,000/month for PhD-level research agents

You read that right. Ten grand a month for a developer tool. That's almost my salary's worth of subscription. That is one Mac Studio, too.

If these rumors pan out, we are looking at different tiers of intelligence. I wonder who will cough up $10K a month. Big tech firms, maybe, or startups with more VC cash than they can handle.

Free Alternative to Cursor

Trae is an AI IDE by ByteDance’s Singapore-based subsidiary, SPRING PTE. As of this dispatch, it is free to use, with Claude 3.5 and 3.7 Sonnet as well as DeepSeek R1 and V3.

Trae is a free alternate to Cursor

— Rudrank Riyam (@rudrankriyam) [March 4, 2025](https://twitter.com/rudrankriyam/status/1896923282039050513?ref_src=twsrc%5Etfw&ref=rudrank.com)

I tried it out, and while it is not a Cursor killer, it is useful if you are on a budget and do not want to pay for a subscription.

Their privacy policy and terms of service have been updated recently, so make sure you read through them once.

Signs of Actual Apple Intelligence

After so many workshops over months of using the Shortcuts app to imitate Apple Intelligence behavior, we *may* finally see the actual Apple Intelligence this month. The one that was shown back at WWDC 2024.

Apple Intelligence might be coming sooner than we think…

Apps can now securely tell Siri what's on-screen – the key functionality for Personal Context

— Matthew Cassinelli (@mattcassinelli) [March 5, 2025](https://twitter.com/mattcassinelli/status/1897354042646651037?ref_src=twsrc%5Etfw&ref=rudrank.com)

Matthew Cassinelli posted about new APIs in beta that let apps tell Siri what's on-screen, the key functionality for **Personal Context**.

Remember, if Siri can *finally* have a new era, so can you.

If Siri can have a new era, so can you.

— Rudrank Riyam (@rudrankriyam) [July 30, 2024](https://twitter.com/rudrankriyam/status/1818176331601113440?ref_src=twsrc%5Etfw&ref=rudrank.com)

On-Device Vision Intelligence

Hugging Face launched its new vision model, SmolVLM-2, with day-0 support for MLX Swift:

Thrilled to release SmolVLM-2, our newest model, which runs entirely on-device and in realtime, offering a strong foundation for healthcare applications. 🤗🔥

With proper domain-specific training, small models enable offline inference of 2D (ECGs, CXRs) and even 3D modalities…

— Cyril Zakka, MD (@cyrilzakka) [March 3, 2025](https://twitter.com/cyrilzakka/status/1896631022747627744?ref_src=twsrc%5Etfw&ref=rudrank.com)

When they announced it, they added a waitlist for TestFlight (now [open-sourced](https://x.com/cyrilzakka/status/1896631027352961376?ref=rudrank.com)). As an impatient person, I decided to copy Apple's Visual Intelligence using SmolVLM-2 and open-source it:

**GitHub - rudrankriyam/SmolLens**: Implementation of Visual Intelligence Using SmolVLM 2 by Hugging Face (GitHub)

I am looking forward to the iPhone 17 Pro, rumored to have 12 GB of RAM, so I can run 7B/8B language models easily on it. By September, those models should be as powerful as the current 14B models!

MLX Supremacy

The shiny new MLX Swift framework humbles me and makes me crave the vast amount of knowledge out there in the AI world.

Also, I am noticing this trend of more AI providers making their models support MLX with their launch, and it is a big thing for the MLX community!

One distilled family of models that caught my eye was Llamba. The interesting part is the graph for running it on the iPhone, especially with longer contexts:

5️⃣ Llamba on your phone! 📱

Unlike traditional LLMs, Llamba’s fewer recurrent layers make it ideal for resource-constrained devices.

✔ MLX implementation for Apple Silicon

✔ 4-bit quantization for mobile & edge deployment

✔ Ideal for privacy-first applications

— Aviv Bick (@avivbick) [March 5, 2025](https://twitter.com/avivbick/status/1897325090385354932?ref_src=twsrc%5Etfw&ref=rudrank.com)

I am glad that this 8B model can run 40k context on an iPhone 16 Pro!

Qwen's QwQ-32B

Speaking of local models, there is (again) another model from China making the news: QwQ-32B, taking on the 671B-parameter DeepSeek R1 in benchmarks:

Again, you can run it on your MacBook using MLX:

QwQ-32B evals on par with Deep Seek R1 680B but runs fast on a laptop. Delivery accepted.

Here it is running nicely on a M4 Max with MLX. A snippet of its 8k token long thought process:

— Awni Hannun (@awnihannun) [March 5, 2025](https://twitter.com/awnihannun/status/1897394318434034163?ref_src=twsrc%5Etfw&ref=rudrank.com)

AI Tool of the Week: Pico AI Homelab

**Pico AI Homelab LLM server**: Lightning Fast. Complete Privacy. Effortless Setup. Pico is the ideal LAN AI server for home and office use, designed for professionals and small teams. It offers key benefits: • Pico AI Homelab includes over 300 state-of-the-art models such as: • DeepSeek R1 • Meta Llama • Microsoft Phi 4 • Q… (Mac App Store, Starling Protocol Inc)

Pico AI (non-sponsored) is a fast AI server for home and office. Built on MLX, it lets you run QwQ-32B on your MacBook or DeepSeek R1 on your new Mac Studio!

Give it a try!

What's Next

The AI world ships more in a few weeks than we get from Apple in months (and that is saying something). As my friend Thomas said:

Apple wants to make the perfect timeless software; they can't make software that evolves fast. They don't know how to do that. AI tooling is growing way too fast for Apple to release anything meaningful.

— Thomas Ricouard (@Dimillian) [March 5, 2025](https://twitter.com/Dimillian/status/1897234314959741225?ref_src=twsrc%5Etfw&ref=rudrank.com)

Happy responsible vibe coding!

]]>[email protected] (Rudrank Riyam)<![CDATA[AiOS Dispatch 1]]>Fri, 28 Feb 2025 23:53:24 GMT

https://aiosdispatch.com/posts/aios-dispatch-1

https://aiosdispatch.com/posts/aios-dispatch-1

— Rudrank Riyam (@rudrankriyam) [February 27, 2025](https://twitter.com/rudrankriyam/status/1895207443615105509?ref_src=twsrc%5Etfw&ref=rudrank.com)

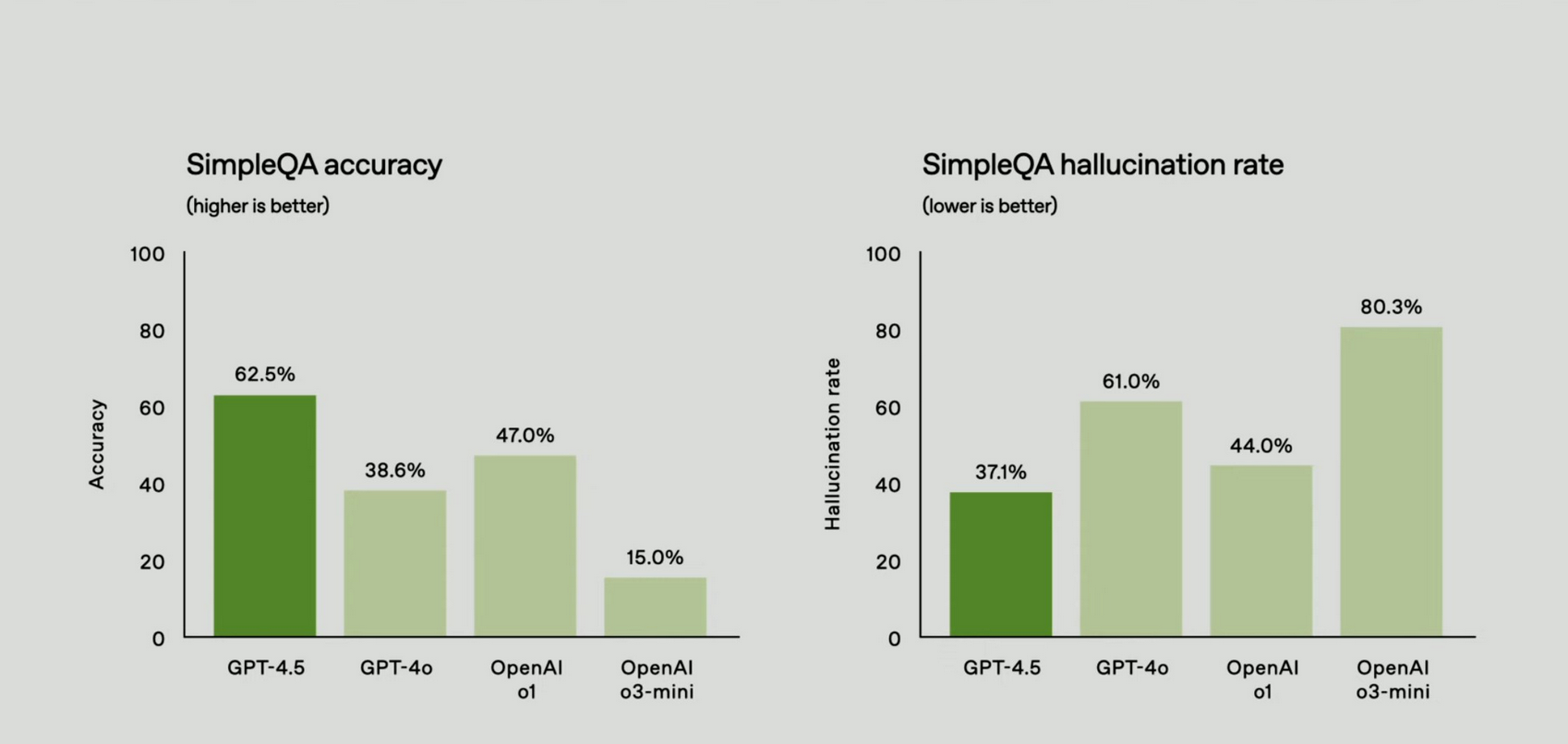

For coding, it is fine, better than GPT-4o at following instructions, but it is no 3.7 Sonnet killer. I would hold off on this model for a while, but note that hallucinations have gone down significantly, which is worth keeping in mind once prices drop as well.

IDEs like **Cursor**, **Windsurf** and **VS Code** have already integrated Claude 3.7 Sonnet and GPT-4.5.

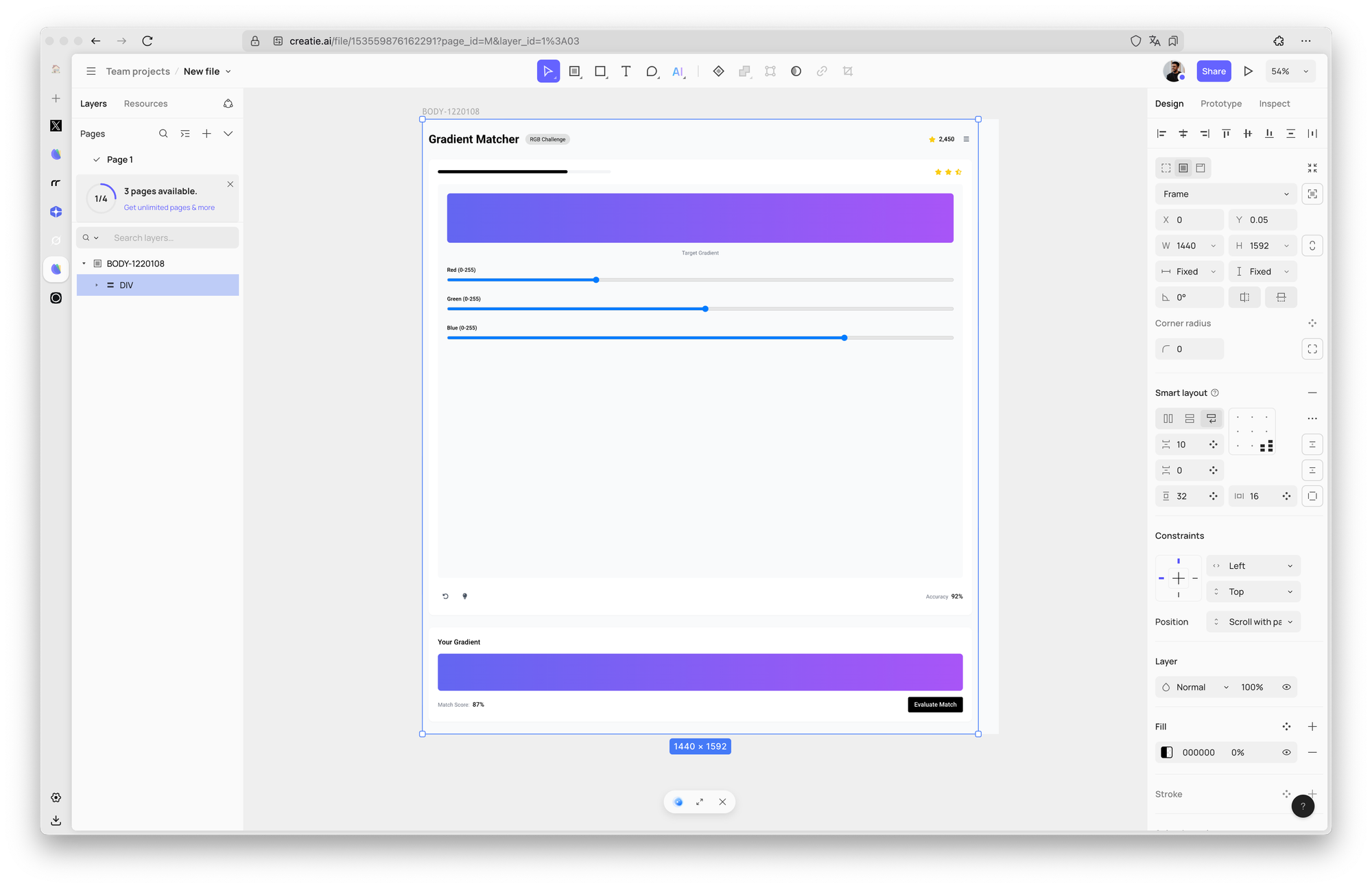

AI Tool of the Week: Creatie

**Creatie**: Intuitive, affordable, AI-powered product design software

Creatie (non-sponsored) can help you generate UI mockups with AI. I tried it with some ideas for an app layout, and it designed something usable as a starting point. It is not replacing Figma for me, but it is fun to experiment with nonetheless.

You can use its output as a reference alongside the UI generation abilities of the latest Claude 3.7 Sonnet.

Give it a try!

What's Next

I kept the first dispatch short and sweet, but from next week, expect more, maybe twice a week if the AI world is moving fast (as usual).

I know it is hard to keep up with everything, but I have got your back. Hit me with your feedback!

Happy vibe coding!

]]>[email protected] (Rudrank Riyam)