Diagnostic Criteria

A. Persistent pattern of cognitive, emotional, and social functioning characterized by a strong preference for normative coherence, rapid closure of uncertainty, and limited tolerance for sustained depth—intellectual, experiential, or emotional—beginning in early socialization and present across multiple contexts (e.g. interpersonal relationships, workplace environments, family systems, cultural participation).

B. The pattern manifests through three (or more) of the following symptoms:

- Normative Rigidity

Marked discomfort when encountering deviations from customary practices, beliefs, or emotional expressions, even when such deviations are demonstratively non-harmful or adaptive. Often expressed as “But why?” followed by silence rather than curiosity. - Contextual Literalism

Difficulty interpreting meaning, identity, or emotional communication outside their most common cultural framing; metaphor and subtext are tolerated primarily when socially standardized. - Consensus-Seeking Reflex

Habitual alignment with majority opinion, authority, or prevailing emotional norms when forming judgments, often prior to personal reflection or affective attunement. - Change Aversion with Rationalization

Resistance to novel ideas or emotional complexity, accompanied by post-hoc justifications framed as realism, pragmatism, or emotional maturity, rather than acknowledged emotional discomfort. - Social Script Dependence

Reliance on rehearsed conversational and emotional scripts (weather, productivity, polite outrage), and visible distress when interactions require unscripted vulnerability, prolonged emotional presence, or exploratory dialogue. - Hierarchy Calibration Preoccupation

Excessive attention to formal roles, relational labels, and status markers, such as job titles, relationship escalators, age-based authority, or institutional validation, with difficulty engaging others outside these frameworks as emotionally or epistemically equal. - Ambiguity Intolerance

A pronounced need to resolve uncertainty quickly—cognitively and emotionally, even at the cost of nuance. Mixed feelings, ambivalence, or unresolved emotional states may be experienced as distressing or unproductive. Questions with multiple valid answers may be experienced as irritating rather than interesting. - Pathologizing the Outlier

Tendency to interpret uncommon preferences, communication styles, atypical cognitive styles, emotional expressions, relational structures, or life choices as problems needing explanation, containment, or optimization. - Empathy via Projection

Assumption that others experience emotions in similar ways and intensities, leading to misattuned reassurance, premature advice, or minimization of divergent affective experiences, resulting in advice that begins with “If it were me…” and ends with confusion when it is, in fact, not them. - Depth Avoidance in Sustained Inquiry

Marked difficulty engaging in prolonged, high-resolution discussion of topics that extend beyond surface facts, sanctioned opinions, or immediately actionable conclusions. Deep exploration of systems, first principles, or existential implications is often curtailed. - Diffuse Interest Profile

A pattern of broad but shallow interests, with engagement driven primarily by social relevance or utility rather than intrinsic fascination. Mastery is rare; familiarity is common. - Expertise Anxiety

Discomfort in the presence of deep intellectual or emotional proficiency—either in oneself or others—leading to minimization, deflection, or reframing depth as excessive, obsessive, or impractical. - Instrumental Curiosity

Curiosity activated mainly when a topic yields immediate benefit. Curiosity pursued for its own sake may be regarded as indulgent, inefficient, or emotionally suspect. - Affective Flattening in Non-Crisis Contexts

A restricted range or shallowness of emotional experience outside socially sanctioned peaks (e.g. celebrations, emergencies). Subtle, slow-building, or internally complex emotional states may be under-recognized, quickly translated into simpler labels, or bypassed through distraction. - Emotional Resolution Urgency

A strong drive to “process,” “move on,” or “feel better” rapidly, often resulting in premature emotional closure. Emotional depth is equated with rumination rather than information. - Vulnerability Time-Limiting

Tolerance for emotional exposure is constrained by implicit time or intensity limits. Extended emotional presence—grief without deadlines, joy without justification, love without clear structure—may provoke discomfort or withdrawal.

C. Symptoms cause clinically significant impairment in adaptive curiosity, cross-cultural understanding, deep relational intimacy, sustained emotional attunement, and the capacity to remain present with complex internal states—both one’s own and others’, or collaboration with neurodivergent individuals, particularly in rapidly changing environments or in relationships requiring long-term emotional nuance.

D. The presentation is not better explained by acute stress, lack of exposure, trauma-related emotional numbing, cultural display rules alone, or temporary social conformity for situational survival (e.g. customer service roles, family holidays).

Specifiers

- With Strong Institutional Reinforcement (e.g. corporate culture, rigid schooling)

- With Moral Certainty Features

- Masked Presentation (appears emotionally open but only within safe, scripted bounds)

- Late-Onset (often following promotion to middle management)

Course and Prognosis

NTSD is typically stable across adulthood. Improvement correlates with sustained exposure to emotional complexity without forced resolution, relationships that reward presence over performance, and practices that cultivate interoceptive awareness rather than emotional efficiency. Partial remission has been observed following prolonged engagement with artists, immigrants, queer communities, altered states, long-form grief, open-source software, or toddlers asking “why” without stopping.

Differential Diagnosis

Must be distinguished from:

- Willful ignorance (which involves effort)

- Malice (which involves intent)

- Burnout (which improves with rest)

- Actual lack of information (which improves with learning)

NTSD persists despite information.

]]>That’s the modern paradox of Unix & Linux culture: tools older than many of us are being rediscovered through vertical videos and autoplay feeds. A generation raised on Shorts and Reels is bumping into sort, uniq, and friends, often for the first time, and asking very reasonable questions like: wait, why are there two ways to do this?

So let’s talk about one of those deceptively small choices.

The question

What’s better?

sort -u

or

sort | uniq

At first glance, they seem equivalent. Both give you sorted, unique lines of text. Both appear in scripts, blog posts, and Stack Overflow answers. Both are “correct”.

But Linux has opinions, and those opinions are usually encoded in flags.

The short answer

sort -u is almost always better.

The longer answer is where the interesting bits live.

What actually happens

sort -u tells sort to do two things at once:

- sort the input

- suppress duplicate lines

That’s one program, one job, one set of buffers, and one round of temporary files. Fewer processes, less data sloshing around, and fewer opportunities for your CPU to sigh quietly.

By contrast, sort | uniq is a two-step relay race. sort does the sorting, then hands everything to uniq, which removes duplicates — but only if they’re adjacent. That adjacency requirement is why the sort is mandatory in the first place.

This pipeline works because Linux tools compose beautifully. But composition has a cost: an extra process, an extra pipe, and extra I/O.

On small inputs, you’ll never notice. On large ones, sort -u usually wins on performance and simplicity.

Clarity matters too

There’s also a human factor.

When you see sort -u, the intent is explicit: “I want sorted, unique output.”

When you see sort | uniq, you have to mentally remember a historical detail: uniq only removes adjacent duplicates.

That knowledge is common among Linux people, but it’s not obvious. sort -u encodes the idea directly into the command.

When uniq still earns its keep

All that said, uniq is not obsolete. It just has a narrower, sharper purpose.

Use sort | uniq when you want things that sort -u cannot do, such as:

- counting duplicates (

uniq -c) - showing only duplicated lines (

uniq -d) - showing only lines that occur once (

uniq -u)

In those cases, uniq isn’t redundant — it’s the point.

A small philosophical note

This is one of those Linux moments that looks trivial but teaches a bigger lesson. Linux tools evolve. Sometimes functionality migrates inward, from pipelines into flags, because common patterns deserve first-class support.

sort -u is not “less Linuxy” than sort | uniq. It’s Linux noticing a habit and formalizing it.

The shell still lets you build LEGO castles out of pipes. It just also hands you pre-molded bricks when the shape is obvious.

The takeaway

If you just want unique, sorted lines:

sort -u

If you want insight about duplication:

sort | uniq …

Same ecosystem, different intentions.

And yes, it’s mildly delightful that a 1’30” YouTube Short can still provoke a discussion about tools designed in the 1970s. The terminal endures. The format changes. The ideas keep resurfacing — sorted, deduplicated, and ready for reuse.

]]>

I had websites before that—my first one must have been around 1996, hosted on university servers or one of those free hosting platforms that have long since disappeared. There is no trace of those early experiments, and that’s probably for the best. Frames, animated GIFs, questionable colour schemes… it was all part of the charm.

But amedee.be was the moment I claimed a place on the internet that was truly mine. And not just a website: from the very beginning, I also used the domain for email, which added a level of permanence and identity that those free services never could.

Over the past 25 years, I have used more content management systems than I can easily list. I started with plain static HTML. Then came a parade of platforms that now feel almost archaeological: self-written Perl scripts, TikiWiki, XOOPS, Drupal… and eventually WordPress, where the site still lives today. I’m probably forgetting a few—experience tends to blur after a quarter century online.

Not all of that content survived. I’ve lost plenty along the way: server crashes, rushed or ill-planned CMS migrations, and the occasional period of heroic under-backing-up. I hope I’ve learned something from each of those episodes. Fortunately, parts of the site’s history can still be explored through the Wayback Machine at the Internet Archive—a kind of external memory for the things I didn’t manage to preserve myself.

The hosting story is just as varied. The site spent many years at Hetzner, had a period on AWS, and has been running on DigitalOcean for about a year now. I’m sure there were other stops in between—ones I may have forgotten for good reasons.

What has remained constant is this: amedee.be is my space to write, tinker, and occasionally publish something that turns out useful for someone else. A digital layer of 25 years is nothing to take lightly. It feels a bit like personal archaeology—still growing with each passing year.

Here’s to the next 25 years. I’m curious which tools, platforms, ideas, and inevitable mishaps I’ll encounter along the way. One thing is certain: as long as the internet exists, I’ll be here somewhere.

Last night I did something new: I went fusion dancing for the first time.

Yes, fusion — that mysterious realm where dancers claim to “just feel the music,” which is usually code for nobody knows what we’re doing but we vibe anyway.

The setting: a church in Ghent.

The vibe: incense-free, spiritually confusing.

Spoiler: it was okay.

Nice to try once. Probably not my new religion.

Before anyone sharpens their pitchforks:

Lene (Kula Dance) did an absolutely brilliant job organizing this.

It was the first fusion event in Ghent, she put her whole heart into it, the vibe was warm and welcoming, and this is not a criticism of her or the atmosphere she created.

This post is purely about my personal dance preferences, which are… highly specific, let’s call it that.

But let’s zoom out. Because at this point I’ve sampled enough dance styles to write my own David Attenborough documentary, except with more sweat and fewer migratory birds.

Below: my completely subjective, highly scientific taxonomy of partner dance communities, observed in their natural habitats.

Balfolk – Home Sweet Home

Balfolk – Home Sweet Home

Balfolk is where I grew up as a dancer — the motherland of flow, warmth, and dancing like you’re collectively auditioning for a Scandinavian fairy tale.

There’s connection, community, live music, soft embraces, swirling mazurkas, and just the right amount of emotional intimacy without anyone pretending to unlock your chakras.

Balfolk people: friendly, grounded, slightly nerdy, and dangerously good at hugs.

Verdict: My natural habitat. My comfort food. My baseline for judging all other styles.

Fusion: A Beautiful Thing That Might Not Be My Thing

Fusion: A Beautiful Thing That Might Not Be My Thing

Fusion isn’t a dance style — it’s a philosophical suggestion.

“Take everything you’ve ever learned and… improvise.”

Fusion dancers will tell you fusion is everything.

Which, suspiciously, also means it is nothing.

It’s not a style; it’s a choose-your-own-adventure.

You take whatever dance language you know and try to merge it with someone else’s dance language, and pray the resulting dialect is mutually intelligible.

I had a fun evening, truly. It was lovely to see familiar faces, and again: Lene absolutely nailed the organization. Also a big thanks to Corentin for the music!

But for me personally, fusion sometimes has:

- a bit too much freedom

- a bit too little structure

- and a wildly varying “shared vocabulary” depending on who you’re holding

One dance feels like tango in slow motion, the next like zouk without the hair flips, the next like someone attempts tai chi with interpretative enthusiasm. Mostly an exercise in guessing whether your partner is leading, following, improvising, or attempting contemporary contact improv for the first time.

Beautiful when it works. Less so when it doesn’t.

And all of that randomly in a church in Ghent on a weeknight.

Verdict: Fun to try once, but I’m not currently planning my life around it.

Contact Improvisation: Gravity’s Favorite Dance Style

Contact Improvisation: Gravity’s Favorite Dance Style

Contact improv deserves its own category because it’s fusion’s feral cousin.

It’s the dance style where everyone pretends it’s totally normal to roll on the floor with strangers while discussing weight sharing and listening with your skin.

Contact improv can be magical — bold, creative, playful, curious, physical, surprising, expressive.

It can also be:

- accidentally elbowing someone in the ribs

- getting pinned under a “creative lift” gone wrong

- wondering why everyone else looks blissful while you’re trying not to faceplant

- ending up in a cuddle pile you did not sign up for

It can exactly be the moment where my brain goes:

“Ah. So this is where my comfort zone ends.”

It’s partnered physics homework.

Sometimes beautiful, sometimes confusing, sometimes suspiciously close to a yoga class that escaped supervision.

I absolutely respect the dancers who dive into weight-sharing, rolling, lifting, sliding, and all that sculptural body-physics magic.

But my personal dance style is:

- musical

- playful

- partner-oriented

- rhythm-based

- and preferably done without accidentally mounting someone like a confused koala

Verdict: Fascinating to try, excellent for body awareness, fascinating to observe, but not my go-to when I just want to dance and not reenact two otters experimenting with buoyancy.  Probably not something I’ll ever do weekly.

Probably not something I’ll ever do weekly.

Contra: The Holy Grail of Joyful Chaos

Contra: The Holy Grail of Joyful Chaos

Contra is basically balfolk after three coffees.

People line up, the caller shouts things, everyone spins, nobody knows who they’re dancing with and nobody cares. It’s wholesome, joyful, fast, structured, musical, social, and somehow everyone becomes instantly attractive while doing it.

Verdict: YES. Inject directly into my bloodstream.

Ceilidh: Same Energy, More Shouting

Ceilidh: Same Energy, More Shouting

Ceilidh is what you get when Contra and Guinness have a love child.

It’s rowdy, chaotic, and absolutely nobody takes themselves seriously — not even the guy wearing a kilt with questionable underwear decisions. It’s more shouting, more laughter, more giggling at your own mistakes, and occasionally someone yeeting themselves across the room.

Verdict: Also YES. My natural ecosystem.

Forró: Balfolk, but Warmer

Forró: Balfolk, but Warmer

If mazurka went on Erasmus in Brazil and came back with stories of sunshine and hip movement, you’d get Forró.

Close embrace? Check.

Playfulness? Check.

Techniques that look easy until you attempt them and fall over? Check.

I’m convinced I would adore forró.

Verdict: Where are the damn lessons in Ghent? Brussel if we really have to. Asking for a friend. (The friend is me.)

Lindy Hop & West Coast Swing: Fun… But the Vibe?

Lindy Hop & West Coast Swing: Fun… But the Vibe?

Both look amazing — great music, athletic energy, dynamic, cool moves, full of personality.

But sometimes the community feels a tiny bit like:

“If you’re not wearing vintage shoes and triple-stepping since birth, who even are you?”

It’s not that the dancers are bad — they’re great.

It’s just… the pretentie.

Verdict: Lovely to watch, less lovely to join.

Still looking for a group without the subtle “audition for fame-school jazz ensemble” energy.

Zouk: The Idea Pot

Zouk: The Idea Pot

Zouk dancers move like water. Or like very bendy cats.

It’s sexy, flowy, and full of body isolations that make you reconsider your spine’s architecture.

I’m not planning to become a zouk person, but I am planning to steal their ideas.

Chest isolations?

Head rolls?

Wavy body movements?

Yes please. For flavour. Not for full conversion.

Verdict: Excellent expansion pack, questionable main quest.

Salsa, Bachata & Friends: Respectfully… No

Salsa, Bachata & Friends: Respectfully… No

I tried. I really did.

I know people love them.

But the Latin socials generally radiate too much:

- machismo

- perfume

- nightclub energy

- “look at my hips” nationalism

- and questionable gender-role nostalgia

If you love it, great.

If you’re me: no, no, absolutely not, thank you.

Verdict: iew iew nééé.

Fantastic for others. Not for me.

Tango: The Forbidden Fruit

Tango: The Forbidden Fruit

Tango is elegant, intimate, dramatic… and the community is a whole ecosystem on its own.

There are scenes where people dance with poetic tenderness, and scenes where people glare across the room using century-old codified eyebrow signals that might accidentally summon a demon.

I like tango a lot — I just need to find a community that doesn’t feel like I’m intruding on someone’s ancestral mating ritual. And where nobody hisses if your embrace is 3 mm off the sacred norm.

Verdict: Promising, if I find the right humans.

Ballroom: Elegance With a Rulebook Thicker Than a Bible

Ballroom: Elegance With a Rulebook Thicker Than a Bible

Ballroom dancers glide across the floor like aristocrats at a diplomatic gala — smooth, flawless, elegant, and somehow always looking like they can hear a string quartet even when Beyoncé is playing.

It’s beautiful. Truly.

Also: terrifying.

Ballroom is the only dance style where I’m convinced the shoes judge you.

Everything is codified — posture, frame, foot angle, when to breathe, how much you’re allowed to look at your partner before the gods of Standard strike you down with a minus-10 penalty.

The dancers?

Immaculate. Shiny. Laser-focused.

Half angel, half geometry teacher.

I admire Ballroom deeply… from a safe distance.

My internal monologue when watching it:

“Gorgeous! Stunning! Very impressive!”

My internal monologue imagining myself doing it:

“Nope. My spine wasn’t built for this. I slouch like a relaxed accordion.”

Verdict: Respect, awe, and zero practical intention of joining.

I love dancing — but I’m not ready to pledge allegiance to the International Order of Perfect Posture.

Ecstatic Dance / 5 Rhythms / Biodanza / Tantric Whatever

Ecstatic Dance / 5 Rhythms / Biodanza / Tantric Whatever

Look.

I’m trying to be polite.

But if I wanted to flail around barefoot while being spiritually judged by someone named Moonfeather, I’d just do yoga in the wrong class.

I appreciate the concept of moving freely.

I do not appreciate:

- uninvited aura readings

- unclear boundaries

- workshops that smell like kombucha

- communities where “I feel called to share” takes 20 minutes

And also: what are we doing? Therapy? Dance? Summoning a forest deity?

Verdict: Too much floaty spirituality, not enough actual dancing.

Hard pass.

Conclusion

Conclusion

I’m a simple dancer.

Give me clear structure (contra), playful chaos (ceilidh), heartfelt connection (balfolk), or Brazilian sunshine vibes (forró).

Fusion was fun to try, and I’m genuinely grateful it exists — and grateful to the people like Lene who pour time and energy into creating new dance spaces in Ghent.

But for me personally?

Fusion can stay in the category of “fun experiment,” but I won’t be selling all my worldly possessions to follow the Church of Expressive Improvisation any time soon.

I’ll stay in my natural habitat: balfolk, contra, ceilidh, and anything that combines playfulness, partnership, and structure.

If you see me in a dance hall, assume I’m there for the joy, the flow, and preferably fewer incense-burning hippies.

Still: I’m glad I went.

Trying new things is half the adventure.

Knowing what you like is the other half.

And I’m getting pretty damn good at that.

Amen.

(Fitting, since I wrote this after dancing in a church.)

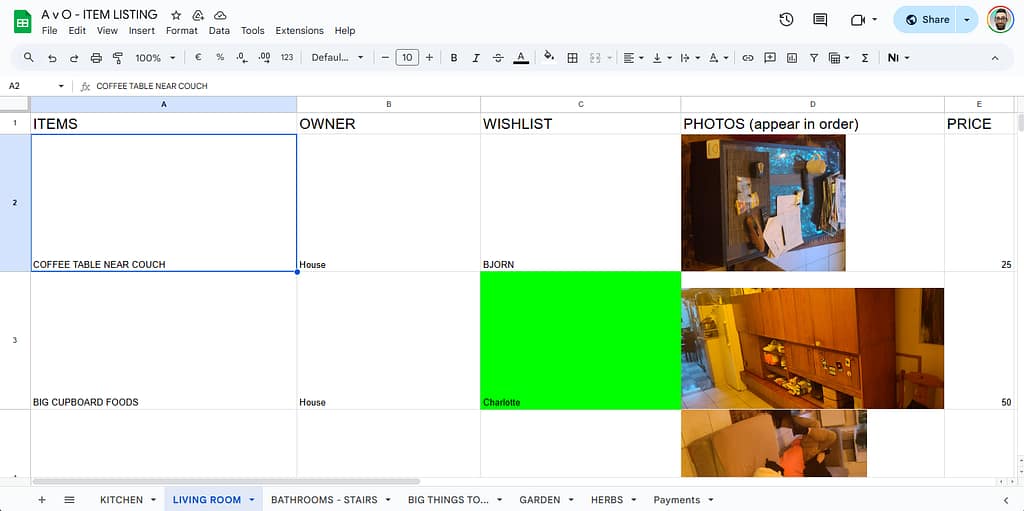

And in our case, that meant making an inventory of everything that lived in the house at Van Ooteghem:

Who takes what, what gets sold, and what’s destined for the containerpark.

To keep things organised (and avoid the classic “wait, whose toaster was that again?” discussion), we split the task — each person took care of one room.

I was assigned to the living room.

I made photos of every item, uploaded them to our shared Dropbox folder, and listed them neatly in a Google spreadsheet:

one column for the Dropbox URL, another for the photo itself using the IMAGE() function, like this:

=IMAGE(A2)

When Dropbox meets Google Sheets

When Dropbox meets Google Sheets

Of course, it didn’t work immediately — because Dropbox links don’t point directly to the image.

They point to a webpage that shows a preview. Google Sheets looked at that and shrugged.

A typical Dropbox link looks like this:

https://www.dropbox.com/s/abcd1234efgh5678/photo.jpg?dl=0

So I used a small trick: in my IMAGE() formula, I replaced ?dl=0 with ?raw=1, forcing Dropbox to serve the actual image file.

=IMAGE(SUBSTITUTE(A2, "?dl=0", "?raw=1"))

And suddenly, there they were — tidy little thumbnails, each safely contained within its cell.

Making it fit just right

Making it fit just right

You can fine-tune how your image appears using the optional second argument of the IMAGE() function:

=IMAGE("https://example.com/image.jpg", mode)

Where:

1– fit to cell (default)2– stretch (fill the entire cell, may distort)3– keep original size4– custom size, e.g.=IMAGE("https://example.com/image.jpg", 4, 50, 50)(sets width and height in pixels)

Resize the row or column if needed to make it look right.

Resize the row or column if needed to make it look right.

That flexibility means you can keep your spreadsheet clean and consistent — even if your photos come in all sorts of shapes and sizes.

The others tried it too…

The others tried it too…

My housemates loved the idea and started adding their own photos to the spreadsheet.

Except… they just pasted them in.

It looked great at first — until someone resized a row.

Then the layout turned into an abstract art project, with floating chairs and migrating coffee machines.

The moral of the story: IMAGE() behaves like cell content, while pasted images are wild creatures that roam free across your grid.

Bonus: The Excel version

Bonus: The Excel version

If you’re more of an Excel person, there’s good news.

Recent versions of Excel 365 also support the IMAGE() function — almost identical to Google Sheets:

=IMAGE("https://www.dropbox.com/s/abcd1234efgh5678/photo.jpg?raw=1", "Fit")

If you’re still using an older version, you’ll need to insert pictures manually and set them to Move and size with cells.

Not quite as elegant, but it gets the job done.

Organised chaos, visual edition

Organised chaos, visual edition

So that’s how our farewell to Van Ooteghem turned into a tech experiment:

a spreadsheet full of URLs, formulas, furniture, and shared memories.

It’s oddly satisfying to scroll through — half practical inventory, half digital scrapbook.

Because even when you’re dismantling a home, there’s still beauty in a good system.

The most nerve-wracking moment? Without a doubt, moving the piano. It got more attention than any other piece of furniture — and rightfully so. With a mix of brute strength, precision, and a few prayers to the gods of gravity, it’s now proudly standing in the living room.

We’ve also been officially added to the street WhatsApp group — the digital equivalent of the village well, but with emojis. It feels good to get those first friendly waves and “welcome to the neighborhood!” messages.

The house itself is slowly coming together. My IKEA PAX wardrobe is fully assembled, but the BRIMNES bed still exists mostly in theory. For now, I’m camping in style — mattress on the floor. My goal is to build one piece of furniture per day, though that might be slightly ambitious. Help is always welcome — not so much for heavy lifting, but for some body doubling and co-regulation. Just someone to sit nearby, hold a plank, and occasionally say “you’re doing great!”

There are still plenty of (banana) boxes left to unpack, but that’s part of the process. My personal mission: downsizing. Especially the books. But they won’t just be dumped at a thrift store — books are friends, and friends deserve a loving new home.

Technically, things are running quite smoothly already: we’ve got fiber internet from Mobile Vikings, and I set up some Wi-Fi extenders and powerline adapters. Tomorrow, the electrician’s coming to service the air-conditioning units — and while he’s here, I’ll ask him to attach RJ45 connectors to the loose UTP cables that end in the fuse box. That means wired internet soon too — because nothing says “settled adult” like a stable ping.

And then there’s the garden.  Not just a tiny patch of green, but a real garden with ancient fruit trees and even a fig tree! We had a garden at the previous house too, but this one definitely feels like the deluxe upgrade. Every day I discover something new that grows, blossoms, or sneakily stings.

Not just a tiny patch of green, but a real garden with ancient fruit trees and even a fig tree! We had a garden at the previous house too, but this one definitely feels like the deluxe upgrade. Every day I discover something new that grows, blossoms, or sneakily stings.

Ideas for cozy gatherings are already brewing. One of the first plans: living room concerts — small, warm afternoons or evenings filled with music, tea (one of us has British roots, so yes: milk included, coffee machine not required), and lovely people.

The first one will likely feature Hilde Van Belle, a (bal)folk friend who currently has a Kickstarter running for her first solo album: Hilde Van Belle – First Solo Album

Hilde Van Belle – First Solo Album

I already heard her songs at the CaDansa Balfolk Festival, and I could really feel the personal emotions in her music — honest, raw, and full of heart.

You should definitely support her!

The album artwork is created by another (bal)folk friend, Verena, which makes the whole project feel even more connected and personal.

Valentina Anzani

Valentina AnzaniSo yes: the piano’s in place, the Wi-Fi works, the garden thrives, the boxes wait patiently, and the teapot is steaming.

We’ve arrived.

Phew. We actually moved.

Well… what if instead of music, you could make it play Space Invaders?

Yes, that’s a real thing.

It’s called GRUB Invaders, and it runs before your operating system even wakes up.

Because who needs Linux when you can blast aliens straight from your BIOS screen?

From Tunes to Lasers

From Tunes to Lasers

In a previous post — “Resurrecting My Windows Partition After 4 Years

” —

” —

I fell down a delightful rabbit hole while editing my GRUB configuration.

That’s where I discovered GRUB_INIT_TUNE, spent hours turning my PC speaker into an 80s arcade machine, and learned far more about bootloader acoustics than anyone should.

So naturally, the next logical step was obvious:

if GRUB can play music, surely it can play games too.

Enter: GRUB Invaders.

What the Heck Is GRUB Invaders?

What the Heck Is GRUB Invaders?

grub-invaders is a multiboot-compliant kernel game — basically, a program that GRUB can launch like it’s an OS.

Except it’s not Linux, not BSD, not anything remotely useful…

it’s a tiny Space Invaders clone that runs on bare metal.

To install it (on Ubuntu or Debian derivatives):

sudo apt install grub-invaders

Then, in GRUB’s boot menu, it’ll show up as GRUB Invaders.

Pick it, hit Enter, and bam! — no kernel, no systemd, just pew-pew-pew.

Your CPU becomes a glorified arcade cabinet.

How It Works

How It Works

Under the hood, GRUB Invaders is a multiboot kernel image (yep, same format as Linux).

That means GRUB can load it into memory, set up registers, and jump straight into its entry point.

There’s no OS, no drivers — just BIOS interrupts, VGA mode, and a lot of clever 8-bit trickery.

Basically: the game runs in real mode, paints directly to video memory, and uses the keyboard interrupt for controls.

It’s a beautiful reminder that once upon a time, you could build a whole game in a few kilobytes.

Technical Nostalgia

Technical Nostalgia

Installed size?

Installed-Size: 30

Size: 8726 bytes

Yes, you read that right: under 9 KB.

That’s less than one PNG icon on your desktop.

Yet it’s fully playable — proof that programmers in the ’80s had sorcery we’ve since forgotten.

The package is ancient but still maintained enough to live in the Ubuntu repositories:

Homepage: http://www.erikyyy.de/invaders/

Maintainer: Debian Games Team

Enhances: grub2-common

So you can still apt install it in 2025, and it just works.

Why Bother?

Why Bother?

Because you can.

Because sometimes it’s nice to remember that your bootloader isn’t just a boring chunk of C code parsing configs.

It’s a tiny virtual machine, capable of loading kernels, playing music, and — if you’re feeling chaotic — defending the Earth from pixelated aliens before breakfast.

It’s also a wonderful conversation starter at tech meetups:

“Oh, my GRUB doesn’t just boot Linux. It plays Space Invaders. What does yours do?”

A Note on Shenanigans

A Note on Shenanigans

Don’t worry — GRUB Invaders doesn’t modify your boot process or mess with your partitions.

It’s launched manually, like any other GRUB entry.

When you’re done, reboot, and you’re back to your normal OS.

Totally safe. (Mostly. Unless you lose track of time blasting aliens.)

TL;DR

TL;DR

grub-invaderslets you play Space Invaders in GRUB.- It’s under 9 KB, runs without an OS, and is somehow still in Ubuntu repos.

- Totally useless. Totally delightful.

- Perfect for when you want to flex your inner 8-bit gremlin.

Waar ik hulp bij kan gebruiken

- Bananendozen verhuizen — ik zorg dat het meeste op voorhand is ingepakt.

Ruwe schatting: zo’n 30 dozen (ik heb ze niet geteld, ik leef graag gevaarlijk). - Demonteren en verhuizen van meubels:

- Boekenrek (IKEA KALLAX 5×5)

- Kleerkast

- Bed

- Bureau

- Diepvries verhuizen (van de keuken op het gelijkvloers naar de kelder in het nieuwe huis).

De dozen en meubels staan nu op de 2de verdieping. Ik probeer vooraf al wat dozen naar beneden te sleuren — want trappen, ja.

Assembleren van meubels op het nieuwe adres doen we een andere dag.

Doel van de dag: niet overprikkeld geraken.

Wat ik zelf regel

Ik voorzie een kleine bestelwagen via Dégage autodelen.

Wat ik nog nodig heb

- Een elektrische schroevendraaier (voor het IKEA-spul).

- Handige, stressbestendige mensen met een vleugje organisatietalent.

- Enkele auto’s die over en weer kunnen rijden — zelfs al is het maar voor een paar dozen.

- Emotionele support crew die op tijd kunnen zeggen: “Hey, pauze.”

Praktisch

- Oud adres: Ledeberg

- Nieuw adres: tussen station Gent-Sint-Pieters en de Sterre

- Afstand: ongeveer 4 km

(Exacte adressen deel ik met de helpers.)

Ik maak een WhatsApp-groep voor coördinatie.

Afsluiter

Verhuisdag Part 1 eindigt met gratis pizza’s.

Want eerlijk: dozen sleuren is zwaar, maar pizza maakt alles beter.

Wil je komen helpen (met spierkracht, auto, gereedschap of goeie vibes)?

Laat iets weten — hoe meer handen, hoe minder stress!

In a previous post — Resurrecting My Windows Partition After 4 Years 🖥️🎮 — I was neck-deep in

grub.cfg, poking at boot entries, fixing UUIDs, and generally performing a ritual worthy of system resurrection.

While I was at it, I decided to take a closer look at all those mysterious variables lurking in /etc/default/grub.

That’s when I stumbled upon something… magical. ✨

🎶 GRUB_INIT_TUNE — Your Bootloader Has a Voice

Hidden among all the serious-sounding options like GRUB_TIMEOUT and GRUB_CMDLINE_LINUX_DEFAULT sits this gem:

# Uncomment to get a beep at grub start

#GRUB_INIT_TUNE="480 440 1"

Wait, what? GRUB can beep?

Oh, not just beep. GRUB can play a tune. 🎺

Here’s how it actually works (per the GRUB manpage):

Format:

tempo freq duration [freq duration freq duration ...]

- tempo — The base time for all note durations, in beats per minute.

- 60 BPM → 1 second per beat

- 120 BPM → 0.5 seconds per beat

- freq — The note frequency in hertz.

- 262 = Middle C, 0 = silence

- duration — Measured in “bars” relative to the tempo.

- With tempo 60,

1= 1 second,2= 2 seconds, etc.

- With tempo 60,

So 480 440 1 is basically GRUB saying “Hello, world!” through your motherboard speaker: 0.25 seconds at 440 Hz, which is A4 in standard concert pitch as defined by ISO 16:1975.

And yes, this works even before your sound card drivers have loaded — pure, raw, BIOS-level nostalgia.

🧠 From Beep to Bop

Naturally, I couldn’t resist. One line turned into a small Python experiment, which turned into an audio preview tool, which turned into… let’s say, “bootloader performance art.”

Want to make GRUB play a polska when your system starts?

You can. It’s just a matter of string length — and a little bit of mischief. 😏

There’s technically no fixed “maximum size” for GRUB_INIT_TUNE, but remember: the bootloader runs in a very limited environment. Push it too far, and your majestic overture becomes a segmentation fault sonata.

So maybe keep it under a few kilobytes unless you enjoy debugging hex dumps at 2 AM.

🎼 How to Write a Tune That Won’t Make Your Laptop Cry

Practical rules of thumb (don’t be that person):

- Keep the inline tune under a few kilobytes if you want it to behave predictably.

- Hundreds to a few thousands of notes is usually fine; tens of thousands is pushing luck.

- Each numeric value (pitch or duration) must be ≤ 65535.

- Very long tunes simply delay the menu — that’s obnoxious for you and terrifying for anyone asking you for help.

Keep tunes short and tasteful (or obnoxious on purpose).

🎵 Little Musical Grammar: Notes, Durations and Chords (Fake Ones)

Write notes as frequency numbers (Hz). Example: A4 = 440.

Prefer readable helpers: write a tiny script that converts D4 F#4 A4 into the numbers.

Example minimal tune:

GRUB_INIT_TUNE="480 294 1 370 1 440 1 370 1 392 1 494 1 294 1"

That’ll give you a jaunty, bouncy opener — suitable for mild neighbour complaints. 💃🎻

Chords? GRUB can’t play them simultaneously — but you can fake them by rapid time-multiplexing (cycling the chord notes quickly).

It sounds like a buzzing organ, not a symphony, but it’s delightful in small doses.

Fun fact 💾: this time-multiplexing trick isn’t new — it’s straight out of the 8-bit video game era.

Old sound chips (like those in the Commodore 64 and NES) used the same sleight of hand to make

a single channel pretend to play multiple notes at once.

If you’ve ever heard a chiptune shimmer with impossible harmonies, that’s the same magic. ✨🎮

🧰 Tools I Like (and That You Secretly Want)

If you’re not into manually counting numbers, do this:

Use a small composer script (I wrote one) that:

- Accepts melodic notation like

D4 F#4 A4orC4+E4+G4(chord syntax). - Can preview via your system audio (so you don’t have to reboot to hear it).

- Can install the result into

/etc/default/gruband runupdate-grub(only as sudo).

Preview before you install. Always.

Your ears will tell you if your “ode to systemd” is charming or actually offensive.

For chords, the script time-multiplexes: e.g. for a 500 ms chord and 15 ms slices,

it cycles the chord notes quickly so the ear blends them.

It’s not true polyphony, but it’s a fun trick.

(If you want the full script I iterated on: drop me a comment. But it’s more fun to leave as an exercise to the reader.)

🧮 Limits, Memory, and “How Big Before It Breaks?”

Yes, my Red Team colleague will love this paragraph — and no, I’m not going to hand over a checklist for breaking things.

Short answer: GRUB doesn’t advertise a single fixed limit for GRUB_INIT_TUNE length.

Longer answer, responsibly phrased:

- Numeric limits: per note pitch/duration ≤ 65535 (

uint16_t). - Tempo: can go up to

uint32_t. - Parser & memory: the tune is tokenized at boot, so parsing buffers and allocators impose practical limits.

Expect a few kilobytes to be safe; hundreds of kilobytes is where things get flaky. - Usability: if your tune is measured in minutes, you’ve already lost. Don’t be that.

If you want to test where the parser chokes, do it in a disposable VM, never on production hardware.

If you’re feeling brave, you can even audit the GRUB source for buffer sizes in your specific version. 🧩

⚙️ How to Make It Sing

Edit /etc/default/grub and add a line like this:

GRUB_INIT_TUNE="480 440 1 494 1 523 1 587 1 659 3"

Then rebuild your config:

sudo update-grub

Reboot, and bask in the glory of your new startup sound.

Your BIOS will literally play you in. 🎶

💡 Final Thoughts

GRUB_INIT_TUNE is the operating-system equivalent of a ringtone for your toaster:

ridiculously low fidelity, disproportionately satisfying,

and a perfect tiny place to inject personality into an otherwise beige boot.

Use it for a smile, not for sabotage.

And just when I thought I’d been all clever reverse-engineering GRUB beeps myself…

I discovered that someone already built a web-based GRUB tune tester!

👉 https://breadmaker.github.io/grub-tune-tester/

Yes, you can compose and preview tunes right in your browser —

no need to sacrifice your system to the gods of early boot audio.

It’s surprisingly slick.

Even better, there’s a small but lively community posting their GRUB masterpieces on Reddit and other forums.

From Mario theme beeps to Doom startup riffs, there’s something both geeky and glorious about it.

You’ll find everything from tasteful minimalist dings to full-on “someone please stop them” anthems. 🎮🎶

Boot loud, boot proud — but please boot considerate. 😄🎻💻

]]>

That includes the very boring Quality Assurance Hat

— the one that says “yes, Amedee, you do need to check for trailing whitespace again.”

— the one that says “yes, Amedee, you do need to check for trailing whitespace again.”

And honestly? I suck at remembering those little details. I’d rather be building cool stuff than remembering to run Black or fix a missing newline. So I let my robot friend handle it.

That friend is called pre-commit. And it’s the best personal assistant I never hired.

What is this thing?

What is this thing?

Pre-commit is like a bouncer for your Git repo. Before your code gets into the club (your repo), it gets checked at the door:

“Whoa there — trailing whitespace? Not tonight.”

“Missing a newline at the end? Try again.”

“That YAML looks sketchy, pal.”

“You really just tried to commit a 200MB video file? What is this, Dropbox?”

“Leaking AWS keys now, are we? Security says nope.”

“Commit message says ‘fix’? That’s not a message, that’s a shrug.”

Pre-commit runs a bunch of little scripts called hooks to catch this stuff. You choose which ones to use — it’s modular, like Lego for grown-up devs.

When I commit, the hooks run. If they don’t like what they see, the commit gets bounced.

No exceptions. No drama. Just “fix it and try again.”

Is it annoying? Yeah, sometimes.

But has it saved my butt from pushing broken or embarrassing code? Way too many times.

Why I bother (as a hobby dev)

Why I bother (as a hobby dev)

I don’t have teammates yelling at me in code reviews. I am the teammate.

And future-me is very forgetful.

Pre-commit helps me:

Keep my code consistent

Keep my code consistent It catches dumb mistakes before I make them permanent.

It catches dumb mistakes before I make them permanent. Spend less time cleaning up

Spend less time cleaning up Feel a little more “pro” even when I’m hacking on toy projects

Feel a little more “pro” even when I’m hacking on toy projects It works with any language. Even Bash, if you’re that kind of person.

It works with any language. Even Bash, if you’re that kind of person.

Also, it feels kinda magical when it auto-fixes stuff and the commit just… works.

Installing it with

Installing it with pipx (because I’m not a barbarian)

I’m not a fan of polluting my Python environment, so I use pipx to keep things tidy. It installs CLI tools globally, but keeps them isolated.

If you don’t have pipx yet:

python3 -m pip install --user pipx

pipx ensurepath

Then install pre-commit like a boss:

pipx install pre-commit

Boom. It’s installed system-wide without polluting your precious virtualenvs. Chef’s kiss.

Setting it up

Setting it up

Inside my project (usually some weird half-finished script I’ll obsess over for 3 days and then forget for 3 months), I create a file called .pre-commit-config.yaml.

Here’s what mine usually looks like:

repos:

- repo: https://github.com/pre-commit/pre-commit-hooks

rev: v5.0.0

hooks:

- id: trailing-whitespace

- id: end-of-file-fixer

- id: check-yaml

- id: check-added-large-files

- repo: https://github.com/gitleaks/gitleaks

rev: v8.28.0

hooks:

- id: gitleaks

- repo: https://github.com/jorisroovers/gitlint

rev: v0.19.1

hooks:

- id: gitlint

- repo: https://gitlab.com/vojko.pribudic.foss/pre-commit-update

rev: v0.8.0

hooks:

- id: pre-commit-update

What this pre-commit config actually does

What this pre-commit config actually does

You’re not just tossing some YAML in your repo and calling it a day. This thing pulls together a full-on code hygiene crew — the kind that shows up uninvited, scrubs your mess, locks up your secrets, and judges your commit messages like it’s their job. Because it is.

pre-commit-hooks (v5.0.0)

These are the basics — the unglamorous chores that keep your repo from turning into a dumpster fire. Think lint roller, vacuum, and passive-aggressive IKEA manual rolled into one.

trailing-whitespace: No more forgotten spaces at the end of lines. The silent killers of clean diffs.

No more forgotten spaces at the end of lines. The silent killers of clean diffs.end-of-file-fixer: Adds a newline at the end of each file. Why? Because some tools (and nerds) get cranky if it’s missing.

Adds a newline at the end of each file. Why? Because some tools (and nerds) get cranky if it’s missing.check-yaml: Validates your YAML syntax. No more “why isn’t my config working?” only to discover you had an extra space somewhere.

Validates your YAML syntax. No more “why isn’t my config working?” only to discover you had an extra space somewhere.check-added-large-files: Stops you from accidentally committing that 500MB cat video or

Stops you from accidentally committing that 500MB cat video or .sqlitedump. Saves your repo. Saves your dignity.

gitleaks (v8.28.0)

Scans your code for secrets — API keys, passwords, tokens you really shouldn’t be committing.

Because we’ve all accidentally pushed our .env file at some point. (Don’t lie.)

gitlint (v0.19.1)

Enforces good commit message style — like limiting subject line length, capitalizing properly, and avoiding messages like “asdf”.

Great if you’re trying to look like a serious dev, even when you’re mostly committing bugfixes at 2AM.

pre-commit-update (v0.8.0)

The responsible adult in the room. Automatically bumps your hook versions to the latest stable ones. No more living on ancient plugin versions.

In summary

In summary

This setup covers:

Basic file hygiene (whitespace, newlines, YAML, large files)

Basic file hygiene (whitespace, newlines, YAML, large files) Secret detection

Secret detection Commit message quality

Commit message quality Keeping your hooks fresh

Keeping your hooks fresh

You can add more later, like linters specific for your language of choice — think of this as your “minimum viable cleanliness.”

What else can it do?

What else can it do?

There are hundreds of hooks. Some I’ve used, some I’ve just admired from afar:

blackis a Python code formatter that says: “Shhh, I know better.”flake8finds bugs, smells, and style issues in Python.isortsorts your imports so you don’t have to.eslintfor all you JavaScript kids.shellcheckfor Bash scripts.- … or write your own custom one-liner hook!

You can browse tons of them at: https://pre-commit.com/hooks.html

Make Git do your bidding

Make Git do your bidding

To hook it all into Git:

pre-commit install

Now every time you commit, your code gets a spa treatment before it enters version control.

Wanna retroactively clean up the whole repo? Go ahead:

pre-commit run --all-files

You’ll feel better. I promise.

TL;DR

TL;DR

Pre-commit is a must-have.

It’s like brushing your teeth before a date: it’s fast, polite, and avoids awkward moments later.

If you haven’t tried it yet: do it. Your future self (and your Git history, and your date) will thank you.

Use pipx to install it globally.

Add a .pre-commit-config.yaml.

Install the Git hook.

Enjoy cleaner commits, fewer review comments — and a commit history you’re not embarrassed to bring home to your parents.

And if it ever annoys you too much?

You can always disable it… like cancelling the date but still showing up in their Instagram story.

git commit --no-verify

Want help writing your first config? Or customizing it for Python, Bash, JavaScript, Kotlin, or your one-man-band side project? I’ve been there. Ask away!

]]>And then Fortnite happened.

My girlfriend Enya and her wife Kyra got hooked, and naturally I wanted to join them. But Fortnite refuses to run on Linux — apparently some copy-protection magic that digs into the Windows kernel, according to Reddit (so I don’t know if it’s true). It’s rare these days for a game to be Windows-only, but rare enough to shatter my Linux-only bubble. Suddenly, resurrecting Windows wasn’t a chore anymore; it was a quest for polyamorous Battle Royale glory.

My Windows 11 partition had been hibernating since November 2021, quietly gathering dust and updates in a forgotten corner of the disk. Why it stopped working back then? I honestly don’t remember, but apparently I had blogged about it. I hadn’t cared — until now.

The Awakening – Peeking Into the UEFI Abyss

I started my journey with my usual tools: efibootmgr and update-grub on Ubuntu. I wanted to see what the firmware thought was bootable:

sudo efibootmgr

Output:

BootCurrent: 0001

Timeout: 1 seconds

BootOrder: 0001,0000

Boot0000* Windows Boot Manager ...

Boot0001* Ubuntu ...

At first glance, everything seemed fine. Ubuntu booted as usual. Windows… did not. It didn’t even show up in the GRUB boot menu. A little disappointing—but not unexpected, given that it hadn’t been touched in years.

I knew the firmware knew about Windows—but the OS itself refused to wake up.

The Hidden Enemy – Why os-prober Was Disabled

I soon learned that recent Ubuntu versions disable os-prober by default. This is partly to speed up boot and partly to avoid probing unknown partitions automatically, which could theoretically be a security risk.

I re-enabled it in /etc/default/grub:

GRUB_DISABLE_OS_PROBER=false

Then ran:

sudo update-grub

Even after this tweak, Windows still didn’t appear in the GRUB menu.

The Manual Attempt – GRUB to the Rescue

Determined, I added a manual GRUB entry in /etc/grub.d/40_custom:

menuentry "Windows" {

insmod part_gpt

insmod fat

insmod chain

search --no-floppy --fs-uuid --set=root 99C1-B96E

chainloader /EFI/Microsoft/Boot/bootmgfw.efi

}

How I found the EFI partition UUID:

sudo blkid | grep EFIResult:

UUID="99C1-B96E"

Ran sudo update-grub… Windows showed up in GRUB! But clicking it? Nothing.

At this stage, Windows still wouldn’t boot. The ghost remained untouchable.

The Missing File – Hunt for bootmgfw.efi

The culprit? bootmgfw.efi itself was gone. My chainloader had nothing to point to.

I mounted the NTFS Windows partition (at /home/amedee/windows) and searched for the missing EFI file:

sudo find /home/amedee/windows/ -type f -name "bootmgfw.efi"

/home/amedee/windows/Windows/Boot/EFI/bootmgfw.efi

The EFI file was hidden away, but thankfully intact. I copied it into the proper EFI directory:

sudo cp /home/amedee/windows/Windows/Boot/EFI/bootmgfw.efi /boot/efi/EFI/Microsoft/Boot/

After a final sudo update-grub, Windows appeared automatically in the GRUB menu. Finally, clicking the entry actually booted Windows. Victory!

Four Years of Sleeping Giants

Booting Windows after four years was like opening a time capsule. I was greeted with thousands of updates, drivers, software installations, and of course, the installation of Fortnite itself. It took hours, but it was worth it. The old system came back to life.

Every “update complete” message was a heartbeat closer to joining Enya and Kyra in the Battle Royale.

The GRUB Disappearance – Enter Ventoy

After celebrating Windows resurrection, I rebooted… and panic struck.

The GRUB menu had vanished. My system booted straight into Windows, leaving me without access to Linux. How could I escape?

I grabbed my trusty Ventoy USB stick (the same one I had used for performance tests months ago) and booted it in UEFI mode. Once in the live environment, I inspected the boot entries:

sudo efibootmgr -v

Output:

BootCurrent: 0002

Timeout: 1 seconds

BootOrder: 0002,0000,0001

Boot0000* Windows Boot Manager ...

Boot0001* Ubuntu ...

Boot0002* USB Ventoy ...

To restore Ubuntu to the top of the boot order:

sudo efibootmgr -o 0001,0000

Console output:

BootOrder changed from 0002,0000,0001 to 0001,0000

After rebooting, the GRUB menu reappeared, listing both Ubuntu and Windows. I could finally choose my OS again without further fiddling.

A Word on Secure Boot and Signed Kernels

Since we’re talking bootloaders: Secure Boot only allows EFI binaries signed with a trusted key to execute. Ubuntu Desktop ships with signed kernels and a signed shim so it boots fine out of the box. If you build your own kernel or use unsigned modules, you’ll either need to sign them yourself or disable Secure Boot in firmware.

Diagram of the Boot Flow

Here’s a visual representation of the boot process after the fix:

flowchart TD

UEFI[" UEFI Firmware BootOrder:<br/>0001 (Ubuntu) →<br/>0000 (Windows)<br/>(BootCurrent: 0001)"]

subgraph UbuntuEFI["shimx64.efi"]

GRUB["

UEFI Firmware BootOrder:<br/>0001 (Ubuntu) →<br/>0000 (Windows)<br/>(BootCurrent: 0001)"]

subgraph UbuntuEFI["shimx64.efi"]

GRUB[" GRUB menu"]

LINUX["

GRUB menu"]

LINUX[" Ubuntu Linux<br/>kernel + initrd"]

CHAINLOAD["

Ubuntu Linux<br/>kernel + initrd"]

CHAINLOAD[" Windows<br/>bootmgfw.efi"]

end

subgraph WindowsEFI["bootmgfw.efi"]

WBM["

Windows<br/>bootmgfw.efi"]

end

subgraph WindowsEFI["bootmgfw.efi"]

WBM[" Windows Boot Manager"]

WINOS["

Windows Boot Manager"]

WINOS[" Windows 11<br/>(C:)"]

end

UEFI --> UbuntuEFI

GRUB -->|boots| LINUX

GRUB -.->|chainloads| CHAINLOAD

UEFI --> WindowsEFI

WBM -->|boots| WINOS

Windows 11<br/>(C:)"]

end

UEFI --> UbuntuEFI

GRUB -->|boots| LINUX

GRUB -.->|chainloads| CHAINLOAD

UEFI --> WindowsEFI

WBM -->|boots| WINOS

From the GRUB menu, the Windows entry chainloads bootmgfw.efi, which then points to the Windows Boot Manager, finally booting Windows itself.

First Battle Royale

After all the technical drama and late-night troubleshooting, I finally joined Enya and Kyra in Fortnite.

I had never played Fortnite before, but my FPS experience (Borderlands hype, anyone?) and PUBG knowledge from Viva La Dirt League on YouTube gave me a fighting chance.

We won our first Battle Royale together!

The sense of triumph was surreal—after resurrecting a four-year-old Windows partition, surviving driver hell, and finally joining the game, victory felt glorious.

The sense of triumph was surreal—after resurrecting a four-year-old Windows partition, surviving driver hell, and finally joining the game, victory felt glorious.

TL;DR: Quick Repair Steps

- Enable os-prober in

/etc/default/grub. - If Windows isn’t detected, try a manual GRUB entry.

- If boot fails, copy

bootmgfw.efifrom the NTFS Windows partition to/boot/efi/EFI/Microsoft/Boot/. - Run

sudo update-grub. - If GRUB disappears after booting Windows, boot a Live USB (UEFI mode) and adjust

efibootmgrto set Ubuntu first. - Reboot and enjoy both OSes.

This little adventure taught me more about GRUB, UEFI, and EFI files than I ever wanted to know, but it was worth it. Most importantly, I got to join my polycule in a Fortnite victory and prove that even a four-year-old Windows partition can rise again!

You and I have been together for a long time. I wrote blog posts, you provided a place to share them. For years that worked. But lately you’ve been treating my posts like spam — my own blog links! Apparently linking to an external site on my Page is now a cardinal sin unless I pay to “boost” it.

And it’s not just Facebook. Threads — another Meta platform — also keeps taking down my blog links.

So this is goodbye… at least for my Facebook Page.

I’m not deleting my personal Profile. I’ll still pop in to see what events are coming up, and to look at photos after the balfolk and festivals. But our Page-posting days are over.

Here’s why:

- Your algorithm is a slot machine. What used to be “share and be seen” has become “share, pray, and maybe pay.” I’d rather drop coins in an actual jukebox than feed a zuckerbot just so friends can see my work.

- Talking into a digital void. Posting to my Page now feels like performing in an empty theatre while an usher whispers “boost post?” The real conversations happen by email, on Mastodon, or — imagine — in real life.

- Privacy, ads, and that creepy feeling. Every login is a reminder that Facebook isn’t free. I’m paying with my data to scroll past ads for things I only muttered near my phone. That’s not the backdrop I want for my writing.

- The algorithm ate my audience. Remember when following a Page meant seeing its posts? Cute era. Now everything’s at the mercy of an opaque feed.

- My house, my rules. I built amedee.be to be my own little corner of the web. No arbitrary takedowns, no algorithmic chokehold, no random “spam” labels. Subscribe by RSS or email and you’ll get my posts in the order I publish them — not the order an algorithm thinks you should.

- Better energy elsewhere. Time spent arm-wrestling Facebook is time I could spend writing, playing the nyckelharpa, or dancing a Swedish polska at a balfolk. All of that beats arguing with a zuckerbot.

From now on, if people actually want to read what I write, they’ll find me at amedee.be, via RSS, email, or Mastodon. No algorithms, no takedowns, no mystery boxes.

So yes, we’ll still bump into each other when I check events or browse photos. But the part where I dutifully feed you my blog posts? That’s over.

With zero boosted posts and one very happy nyckelharpa,

Amedee

CI runs shouldn’t feel like molasses. Here’s how I got Ansible to stop downloading the internet. You’re welcome.

Let’s get one thing straight: nobody likes waiting on CI.

Not you. Not me. Not even the coffee you brewed while waiting for Galaxy roles to install — again.

So I said “nope” and made it snappy. Enter: GitHub Actions Cache + Ansible + a generous helping of grit and retries.

Why cache your Ansible Galaxy installs?

Why cache your Ansible Galaxy installs?

Because time is money, and your CI shouldn’t feel like it’s stuck in dial-up hell.

If you’ve ever screamed internally watching community.general get re-downloaded for the 73rd time this month — same, buddy, same.

The fix? Cache that madness. Save your roles and collections once, and reuse like a boss.

The basics: caching 101

The basics: caching 101

Here’s the money snippet:

path: .ansible/

key: ansible-deps-${{ hashFiles('requirements.yml') }}

restoreKeys: |

ansible-deps-

Translation:

Translation:

- Store everything Ansible installs in

.ansible/ - Cache key changes when

requirements.ymlchanges — nice and deterministic - If the exact match doesn’t exist, fall back to the latest vaguely-similar key

Result? Fast pipelines. Happy devs. Fewer rage-tweets.

Retry like you mean it

Retry like you mean it

Let’s face it: ansible-galaxy has… moods.

Sometimes Galaxy API is down. Sometimes it’s just bored. So instead of throwing a tantrum, I taught it patience:

for i in {1..5}; do

if ansible-galaxy install -vv -r requirements.yml; then

break

else

echo "Galaxy is being dramatic. Retrying in $((i * 10)) seconds…" >&2

sleep $((i * 10))

fi

done

That’s five retries. With increasing delays. “You good now, Galaxy? You sure? Because I’ve got YAML to lint.”

“You good now, Galaxy? You sure? Because I’ve got YAML to lint.”

The catch (a.k.a. cache wars)

The catch (a.k.a. cache wars)

Here’s where things get spicy:

actions/cacheonly saves when a job finishes successfully.

So if two jobs try to save the exact same cache at the same time? Boom. Collision. One wins. The other walks away salty:

Boom. Collision. One wins. The other walks away salty:

Unable to reserve cache with key ansible-deps-...,

another job may be creating this cache.

Rude.

Fix: preload the cache in a separate job

Fix: preload the cache in a separate job

The solution is elegant:

Warm-up job. One that only does Galaxy installs and saves the cache. All your other jobs just consume it. Zero drama. Maximum speed.

Tempted to symlink instead of copy?

Tempted to symlink instead of copy?

Yeah, I thought about it too.

“But what if we symlink .ansible/ and skip the copy?”

Nah. Not worth the brainpower. Just cache the thing directly. It works.

It works.  It’s clean.

It’s clean.  You sleep better.

You sleep better.

Pro tips

Pro tips

- Use the hash of

requirements.ymlas your cache key. Trust me. - Add a fallback prefix like

ansible-deps-so you’re never left cold. - Don’t overthink it. Let the cache work for you, not the other way around.

TL;DR

TL;DR

GitHub Actions cache = fast pipelines

GitHub Actions cache = fast pipelines Smart keys based on

Smart keys based on requirements.yml= consistency Retry loops = less flakiness

Retry loops = less flakiness Preload job = no more cache collisions

Preload job = no more cache collisions Re-downloading Galaxy junk every time = madness

Re-downloading Galaxy junk every time = madness

Go forth and cache like a pro.

Go forth and cache like a pro.

Got better tricks? Hit me up on Mastodon and show me your CI magic.

And remember: Friends don’t let friends wait on Galaxy.

Peace, love, and fewer

Peace, love, and fewer ansible-galaxy downloads.

deadbeef1234. You remember what it did. You know it was important. And yet, when you go looking for it…

fatal: unable to read tree <deadbeef1234>

Great. Git has ghosted you.

That was me today. All I had was a lonely commit hash. The branch that once pointed to it? Deleted. The local clone that once had it? Gone in a heroic but ill-fated attempt to save disk space. And GitHub? Pretending like it never happened. Typical.

Act I: The Naïve Clone

Act I: The Naïve Clone

“Let’s just clone the repo and check out the commit,” I thought. Spoiler alert: that’s not how Git works.

git clone --no-checkout https://github.com/user/repo.git

cd repo

git fetch --all

git checkout deadbeef1234

fatal: unable to read tree 'deadbeef1234'

Thanks Git. Very cool. Apparently, if no ref points to a commit, GitHub doesn’t hand it out with the rest of the toys. It’s like showing up to a party and being told your friend never existed.

Act II: The Desperate

Act II: The Desperate fsck

Surely it’s still in there somewhere? Let’s dig through the guts.

git fsck --full --unreachable

Nope. Nothing but the digital equivalent of lint and old bubblegum wrappers.

Act III: The Final Trick

Act III: The Final Trick

Then I stumbled across a lesser-known Git dark art:

git fetch origin deadbeef1234

And lo and behold, GitHub replied with a shrug and handed it over like, “Oh, that commit? Why didn’t you just say so?”

Suddenly the commit was in my local repo, fresh as ever, ready to be inspected, praised, and perhaps even resurrected into a new branch:

git checkout -b zombie-branch deadbeef1234

Mission accomplished. The dead walk again.

Moral of the Story

Moral of the Story

If you’re ever trying to recover a commit from a deleted branch on GitHub:

- Cloning alone won’t save you.

git fetch origin <commit>is your secret weapon.- If GitHub has completely deleted the commit from its history, you’re out of luck unless:

- You have an old local clone

- Someone forked the repo and kept it

- CI logs or PR diffs include your precious bits

Otherwise, it’s digital dust.

Bonus Tip

Bonus Tip

Once you’ve resurrected that commit, create a branch immediately. Unreferenced commits are Git’s version of vampires: they disappear without a trace when left in the shadows.

git checkout -b safe-now deadbeef1234

And there you have it. One undead commit, safely reanimated.

]]>Let me explain.

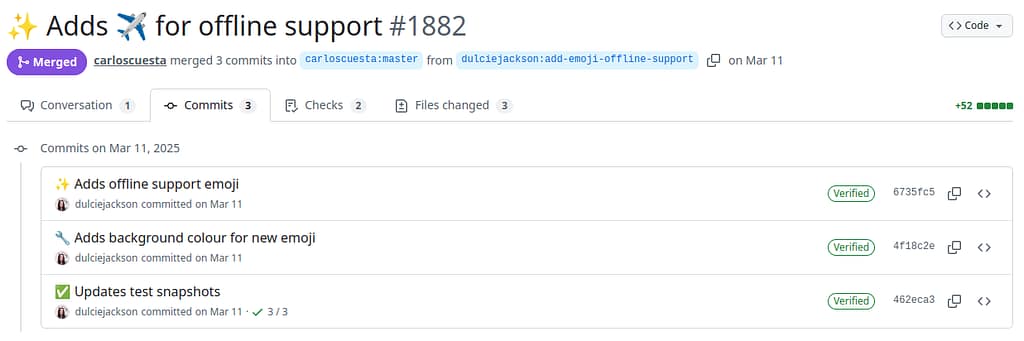

Over on my amedee/ansible-servers repository, I have a workflow called workflow-metrics.yml, which runs after every pipeline. It uses yykamei/github-workflows-metrics to generate beautiful charts that show how long my CI pipeline takes to run. Those charts are then posted into a GitHub Issue—one per run.

It’s neat. It’s visual. It’s entirely unnecessary to keep them forever.

The thing is: every time the workflow runs, it creates a new issue and closes the old one. So naturally, I end up with a long, trailing graveyard of “CI Metrics” issues that serve no purpose once they’re a few weeks old.

Cue the digital broom. 🧹

Enter cleanup-closed-issues.yml

To avoid hoarding useless closed issues like some kind of GitHub raccoon, I created a scheduled workflow that runs every Monday at 3:00 AM UTC and deletes the cruft:

schedule:

- cron: '0 3 * * 1' # Every Monday at 03:00 UTC

This workflow:

- Keeps at least 6 closed issues (just in case I want to peek at recent metrics).

- Keeps issues that were closed less than 30 days ago.

- Deletes everything else—quietly, efficiently, and without breaking a sweat.

It’s also configurable when triggered manually, with inputs for dry_run, days_to_keep, and min_issues_to_keep. So I can preview deletions before committing them, or tweak the retention period as needed.

📂 Complete Source Code for the Cleanup Workflow

name: 🧹 Cleanup Closed Issues

on:

schedule:

- cron: '0 3 * * 1' # Runs every Monday at 03:00 UTC

workflow_dispatch:

inputs:

dry_run:

description: "Enable dry run mode (preview deletions, no actual delete)"

required: false

default: "false"

type: choice

options:

- "true"

- "false"

days_to_keep:

description: "Number of days to retain closed issues"

required: false

default: "30"

type: string

min_issues_to_keep:

description: "Minimum number of closed issues to keep"

required: false

default: "6"

type: string

concurrency:

group: ${{ github.workflow }}-${{ github.ref }}

cancel-in-progress: true

permissions:

issues: write

jobs:

cleanup:

runs-on: ubuntu-latest

steps:

- name: Install GitHub CLI

run: sudo apt-get install --yes gh

- name: Delete old closed issues

env:

GH_TOKEN: ${{ secrets.GH_FINEGRAINED_PAT }}

DRY_RUN: ${{ github.event.inputs.dry_run || 'false' }}

DAYS_TO_KEEP: ${{ github.event.inputs.days_to_keep || '30' }}

MIN_ISSUES_TO_KEEP: ${{ github.event.inputs.min_issues_to_keep || '6' }}

REPO: ${{ github.repository }}

run: |

NOW=$(date -u +%s)

THRESHOLD_DATE=$(date -u -d "${DAYS_TO_KEEP} days ago" +%s)

echo "Only consider issues older than ${THRESHOLD_DATE}"

echo "::group::Checking GitHub API Rate Limits..."

RATE_LIMIT=$(gh api /rate_limit --jq '.rate.remaining')

echo "Remaining API requests: ${RATE_LIMIT}"

if [[ "${RATE_LIMIT}" -lt 10 ]]; then

echo "⚠️ Low API limit detected. Sleeping for a while..."

sleep 60

fi

echo "::endgroup::"

echo "Fetching ALL closed issues from ${REPO}..."

CLOSED_ISSUES=$(gh issue list --repo "${REPO}" --state closed --limit 1000 --json number,closedAt)

if [ "${CLOSED_ISSUES}" = "[]" ]; then

echo "✅ No closed issues found. Exiting."

exit 0

fi

ISSUES_TO_DELETE=$(echo "${CLOSED_ISSUES}" | jq -r \

--argjson now "${NOW}" \

--argjson limit "${MIN_ISSUES_TO_KEEP}" \

--argjson threshold "${THRESHOLD_DATE}" '

.[:-(if length < $limit then 0 else $limit end)]

| map(select(

(.closedAt | type == "string") and

((.closedAt | fromdateiso8601) < $threshold)

))

| .[].number

' || echo "")

if [ -z "${ISSUES_TO_DELETE}" ]; then

echo "✅ No issues to delete. Exiting."

exit 0

fi

echo "::group::Issues to delete:"

echo "${ISSUES_TO_DELETE}"

echo "::endgroup::"

if [ "${DRY_RUN}" = "true" ]; then

echo "🛑 DRY RUN ENABLED: Issues will NOT be deleted."

exit 0

fi

echo "⏳ Deleting issues..."

echo "${ISSUES_TO_DELETE}" \

| xargs -I {} -P 5 gh issue delete "{}" --repo "${REPO}" --yes

DELETED_COUNT=$(echo "${ISSUES_TO_DELETE}" | wc -l)

REMAINING_ISSUES=$(gh issue list --repo "${REPO}" --state closed --limit 100 | wc -l)

echo "::group::✅ Issue cleanup completed!"

echo "📌 Deleted Issues: ${DELETED_COUNT}"

echo "📌 Remaining Closed Issues: ${REMAINING_ISSUES}"

echo "::endgroup::"

{

echo "### 🗑️ GitHub Issue Cleanup Summary"

echo "- **Deleted Issues**: ${DELETED_COUNT}"

echo "- **Remaining Closed Issues**: ${REMAINING_ISSUES}"

} >> "$GITHUB_STEP_SUMMARY"

🛠️ Technical Design Choices Behind the Cleanup Workflow

Cleaning up old GitHub issues may seem trivial, but doing it well requires a few careful decisions. Here’s why I built the workflow the way I did:

Why GitHub CLI (gh)?

While I could have used raw REST API calls or GraphQL, the GitHub CLI (gh) provides a nice balance of power and simplicity:

- It handles authentication and pagination under the hood.

- Supports JSON output and filtering directly with

--jsonand--jq. - Provides convenient commands like

gh issue listandgh issue deletethat make the script readable. - Comes pre-installed on GitHub runners or can be installed easily.

Example fetching closed issues:

gh issue list --repo "$REPO" --state closed --limit 1000 --json number,closedAt

No messy headers or tokens, just straightforward commands.

Filtering with jq

I use jq to:

- Retain a minimum number of issues to keep (

min_issues_to_keep). - Keep issues closed more recently than the retention period (

days_to_keep). - Parse and compare issue closed timestamps with precision.

- Exclude pull requests from deletion by checking the presence of the

pull_requestfield.

The jq filter looks like this:

jq -r --argjson now "$NOW" --argjson limit "$MIN_ISSUES_TO_KEEP" --argjson threshold "$THRESHOLD_DATE" '

.[:-(if length < $limit then 0 else $limit end)]

| map(select(

(.closedAt | type == "string") and

((.closedAt | fromdateiso8601) < $threshold)

))

| .[].number

'

Secure Authentication with Fine-Grained PAT

Because deleting issues is a destructive operation, the workflow uses a Fine-Grained Personal Access Token (PAT) with the narrowest possible scopes:

Issues: Read and Write- Limited to the repository in question

The token is securely stored as a GitHub Secret (GH_FINEGRAINED_PAT).

Note: Pull requests are not deleted because they are filtered out and the CLI won’t delete PRs via the issues API.

Dry Run for Safety

Before deleting anything, I can run the workflow in dry_run mode to preview what would be deleted:

inputs:

dry_run:

description: "Enable dry run mode (preview deletions, no actual delete)"

default: "false"

This lets me double-check without risking accidental data loss.

Parallel Deletion

Deletion happens in parallel to speed things up:

echo "$ISSUES_TO_DELETE" | xargs -I {} -P 5 gh issue delete "{}" --repo "$REPO" --yes

Up to 5 deletions run concurrently — handy when cleaning dozens of old issues.

User-Friendly Output

The workflow uses GitHub Actions’ logging groups and step summaries to give a clean, collapsible UI:

echo "::group::Issues to delete:"

echo "$ISSUES_TO_DELETE"

echo "::endgroup::"

And a markdown summary is generated for quick reference in the Actions UI.

Why Bother?

I’m not deleting old issues because of disk space or API limits — GitHub doesn’t charge for that. It’s about:

- Reducing clutter so my issue list stays manageable.

- Making it easier to find recent, relevant information.

- Automating maintenance to free my brain for other things.

- Keeping my tooling neat and tidy, which is its own kind of joy.

Steal It, Adapt It, Use It

If you’re generating temporary issues or ephemeral data in GitHub Issues, consider using a cleanup workflow like this one.

It’s simple, secure, and effective.

Because sometimes, good housekeeping is the best feature.

🧼✨ Happy coding (and cleaning)!

]]>Enough of that nonsense.

🛠 The setup

Here’s the relevant part of my Vagrantfile:

Vagrant.configure(2) do |config|

config.vm.box = 'boxen/ubuntu-24.04'

config.vm.disk :disk, size: '20GB', primary: true

config.vm.provision 'shell', path: 'resize_disk.sh'

end

This makes sure the disk is large enough and automatically resized by the resize_disk.sh script at first boot.

✨ The script

#!/bin/bash

set -euo pipefail

LOGFILE="/var/log/resize_disk.log"

exec > >(tee -a "$LOGFILE") 2>&1

echo "[$(date)] Starting disk resize process..."

REQUIRED_TOOLS=("parted" "pvresize" "lvresize" "lvdisplay" "grep" "awk")

for tool in "${REQUIRED_TOOLS[@]}"; do

if ! command -v "$tool" &>/dev/null; then

echo "[$(date)] ERROR: Required tool '$tool' is missing. Exiting."

exit 1

fi

done

# Read current and total partition size (in sectors)

parted_output=$(parted --script /dev/sda unit s print || true)

read -r PARTITION_SIZE TOTAL_SIZE < <(echo "$parted_output" | awk '

/ 3 / {part = $4}

/^Disk \/dev\/sda:/ {total = $3}

END {print part, total}

')

# Trim 's' suffix

PARTITION_SIZE_NUM="${PARTITION_SIZE%s}"

TOTAL_SIZE_NUM="${TOTAL_SIZE%s}"

if [[ "$PARTITION_SIZE_NUM" -lt "$TOTAL_SIZE_NUM" ]]; then

echo "[$(date)] Resizing partition /dev/sda3..."

parted --fix --script /dev/sda resizepart 3 100%

else

echo "[$(date)] Partition /dev/sda3 is already at full size. Skipping."

fi

if [[ "$(pvresize --test /dev/sda3 2>&1)" != *"successfully resized"* ]]; then

echo "[$(date)] Resizing physical volume..."

pvresize /dev/sda3

else

echo "[$(date)] Physical volume is already resized. Skipping."

fi

LV_SIZE=$(lvdisplay --units M /dev/ubuntu-vg/ubuntu-lv | grep "LV Size" | awk '{print $3}' | tr -d 'MiB')

PE_SIZE=$(vgdisplay --units M /dev/ubuntu-vg | grep "PE Size" | awk '{print $3}' | tr -d 'MiB')

CURRENT_LE=$(lvdisplay /dev/ubuntu-vg/ubuntu-lv | grep "Current LE" | awk '{print $3}')

USED_SPACE=$(echo "$CURRENT_LE * $PE_SIZE" | bc)

FREE_SPACE=$(echo "$LV_SIZE - $USED_SPACE" | bc)

if (($(echo "$FREE_SPACE > 0" | bc -l))); then

echo "[$(date)] Resizing logical volume..."

lvresize -rl +100%FREE /dev/ubuntu-vg/ubuntu-lv

else

echo "[$(date)] Logical volume is already fully extended. Skipping."

fi

💡 Highlights

- ✅ Uses

partedwith--scriptto avoid prompts. - ✅ Automatically fixes GPT mismatch warnings with

--fix. - ✅ Calculates exact available space using

lvdisplayandvgdisplay, withbcfor floating point math. - ✅ Extends the partition, PV, and LV only when needed.

- ✅ Logs everything to

/var/log/resize_disk.log.

🚨 Gotchas

- Your disk must already use LVM. This script assumes you’re resizing

/dev/ubuntu-vg/ubuntu-lv, the default for Ubuntu server installs. - You must use a Vagrant box that supports VirtualBox’s disk resizing—thankfully,

boxen/ubuntu-24.04does. - If your LVM setup is different, you’ll need to adapt device paths.

🔁 Automation FTW

Calling this script as a provisioner means I never have to think about disk space again during development. One less yak to shave.

Feel free to steal this setup, adapt it to your team, or improve it and send me a patch. Or better yet—don’t wait until your filesystem runs out of space at 3 AM.

]]>If you’ve ever squinted at your pipeline and wondered, “Where the heck should I declare this ANSIBLE_CONFIG thing so it doesn’t vanish into the void between steps?”, you’re not alone. I’ve been there. I’ve screamed at $GITHUB_ENV. I’ve misused export. I’ve over-engineered echo. But fear not, dear reader — I’ve distilled it down so you don’t have to.