Microsoft finally released SQL Server 11 “Denali” as CTP3 (Community Technology Preview) for public … Preview. Microsoft is (these are politeness words I can type) stubbornly refusing to have/build own Data Visualization Product. I doubt Crescent “experience” can be considered as a product, especially because it is Silverlight-base, while world already moved to HTML5.

If you have 7 minutes, you can watch Crescent Demo from WPC11, which is showing that while trailing a few years behind DV Leaders and Google, Microsoft is giving to its die hard followers something to cheer about:

I have to admit, that while there is nothing new (for DV expert) in video above, it is a huge progress compare with Excel-based Data Visualizations, which Microsoft tried to promote as a replacement of ProClarity and PerformancePoint Server. Even Microsoft itself positions Crescent (which is 32-bit only!) as a replacement for SSRS Report Builder, so DV Leaders can sleep well another night.

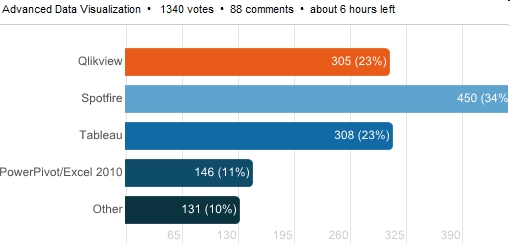

However, Microsoft’s BI Stack is the number 4 or 5 on my list of DV Leaders and CTP3 is so rich with new cool functionality, that it deserves to be covered on this blog.

However, Microsoft’s BI Stack is the number 4 or 5 on my list of DV Leaders and CTP3 is so rich with new cool functionality, that it deserves to be covered on this blog.

Of course major news is availability of Tabular Data Model, which means VertiPaq in-memory columnar Engine, similar to PowerPivot Engine but running on Server without any SharePoint (which is a slow virus, as far as I am concerned) and without stupid SharePoint UI and limitations and I quote Microsoft: ” In contrast with the previous release, where VertiPaq was only available via in PowerPivot for SharePoint, you can now use VertiPaq on a standalone Analysis Services instance with no dependency on SharePoint.“!

SSAS (SQL Server Analysis Services) has new (they may existed before, but before CTP3 – ALL who knew that were under NDA) features like memory paging (allows models to be larger than the physical memory of the server, means unlimited scalability and BIG Data support), row level security (user identity used to hide/show visible data), KPI, Partitions; CTP3 removes the maximum 4GB file size limit for string storage file, removes the limit of 2 billion rows per table (each column is still limited to a maximum of 2 billion distinct values, but in columnar database it is much more tolerable restriction!).

New version of PowerPivot is released with support of Tabular Model and I quote: “You can use this version of the add-in to author and publish PowerPivot workbooks from Excel 2010 to Microsoft SQL Server” and it means no SharePoint involvement again! As Marco Russo put it: “Import your existing PowerPivot workbooks in a Tabular project (yes, you can!)” and I agreed 100% with Marco when he said 4 times: Learn DAX!

After 3 years of delays, Microsoft is finally has BIDS for Visual Studio 2010 and that is huge too, I quote again: “The Tabular Model Designer … is now integrated with Microsoft SQL Server “Denali” (CTP 3) Business Intelligence Development Studio.” It means that BIDS now is not just available but is the main unified development interface for both Multidimensional and Tabular Data Models. Now we can forget about Visual Studio 2008 and finally use more modern VS2010!

Another extremely important for Data Visualization feature is not in SSAS but in SQL Server itself: Columnstore index is finally released and I a quote 1 more time again: “The … SQL Server (CTP 3) introduces a new data warehouse query acceleration feature based on a new type of index called the columnstore. This new index … improves DW query performance by hundreds to thousands of times in some cases, and can routinely give a tenfold speedup for a broad range of decision support queries… columnstore indexes limit or eliminate the need to rely on pre-built aggregates, including user-defined summary tables, and indexed (materialized) views. Furthermore, columnstore indexes can greatly improve ROLAP performance” (ROLAP can be used for real-time Cubes and real-time Data Visualizations).

All these cool SQL Server 11 new stuff is coming soon into Azure Cloud and this can be scary for any DV vendor, unless it knows (Tableau does; Qliktech and Spotfire still ignore SSAS) how to be friendly with Microsoft.

As we know now the newly coined by Microsoft term BISM (Business Intelligence Semantic Model) was a marketing attempt to have a “unified” umbrella

for 2 different Data Models and Data Engines: Multidimensional Cubes (invented by Mosha Pasumansky 15 years ago and the foundation for SSAS and MDX – SQL Server Analysis Services) and Tabular Model (used in PowerPivot and VertiPaq in-memory columnar Database with new DAX Language which is going to be very important for future Data Visualization projects).

New CTP3-released BIDS 2010 (finally almighty Visual Studio 2010 will have a “Business Intelligence Development Studio” after 3+ years of unjustified delays!) UI-wise will able to handle these 2 Data Models, but it is giving me a clue why Mosha left Microsoft for Google. And lack of DV product is a clue for me why Donald Farmer (face of Microsoft BI) left Microsoft for Qliktech.

Even more: if you need both Data Models to be present, you need to install 2 (TWO!) different instances of “Analysis Services”: one with Multidimensional Engine and one with new Tabular (VertiPaq/PowerPivot) Engine. It seems to me not as ONE “BI” architecture but TWO “BI” Architectures, interface-glued on Surface by BIDS 2010 and on back-end by all kind of Data Connectors. Basically Microsoft is in confused BI state now because financially it can afford 2 BI Architectures and NO Data Visualization Product!

I cannot believe I am saying this, but I wish Bill Gates back from retirement (it will be good for Microsoft shares and good for Microsoft market capitalization too – just ask Apple’s shareholders about Steve and they will say he is a god)!

Permalink: https://apandre.wordpress.com/2011/07/14/tabular-model/