The post Why We Invested in Lunar Energy appeared first on B Capital.

The U.S. power grid is evolving rapidly as the economics and patterns of electricity production, consumption and pricing change. Demand is rising as homes electrify transportation, heating and appliances, while grid operations are growing more complex due to aging infrastructure, extreme weather and localized congestion. At the same time, retail electricity pricing is shifting toward time-based and dynamic structures that place more responsibility on end users to actively manage consumption.

For homeowners, this translates to higher bills, more frequent exposure to outages and limited ability to control when energy flows to or from the grid. For utilities, it brings rising peak loads, constrained capacity and growing reliance on flexible distributed resources to maintain reliability. Residential solar and storage can help address these challenges, but only when hardware, software and grid integration seamlessly work together.

Lunar Energy has built exactly that. The company’s modular battery system was designed not just for performance, but for real-world deployment with installers in mind – fewer components, faster commissioning, and flexible configurations for different home sizes. The result is a system that works for both homeowners and the installers who put it in.

B Capital is pleased to lead the company’s $100M Series C funding round. Founded in 2020 and headquartered in Mountain View, California, Lunar Energy designed its integrated hardware and software platform from the ground up to serve homeowners, installers, and grid operators.

Why Legacy Residential Storage Is No Longer Sufficient

The gap between what residential storage could deliver and what it delivers today comes down to system design. Many incumbent solutions were built for a regulatory environment defined by net metering and static electricity rates, where active optimization was optional rather than required. As pricing structures and grid requirements evolve, those assumptions no longer hold.

Hardware-first products often lack the software sophistication required to adapt to changing rates or grid signals. Software-only platforms, in turn, depend on third-party devices they don’t control. Most residential systems weren’t designed to participate meaningfully in distributed power plants, either because they lack the necessary controls or because their platforms weren’t built for large-scale coordination.

The result is a fragmented ecosystem where hardware is often undifferentiated, software is bolted on rather than deeply integrated and grid participation remains an afterthought. What’s needed to unlock the full value of residential storage is a platform built from the ground up, one that combines purpose-built hardware with intelligent software and native grid connectivity. That level of coordination requires integration across hardware, software and grid interfaces at the system-architecture level; legacy architectures were not designed for coordinated dispatch.

Turning Homes into Grid Assets

Lunar Energy has built an integrated home battery system designed to make electrification simple, affordable and resilient. Residential batteries are no longer simply backup devices. They are increasingly becoming coordinated grid infrastructure. With 650 MW of distributed devices under management and new systems deployed daily, Lunar Energy is proving that the future of residential energy requires hardware and software working together from day one to optimize for homeowners, installers and grid operators.1

For homeowners, the Lunar Energy system delivers immediate, tangible value. The modular battery provides whole-home backup during outages, a critical feature as extreme weather events and grid strain become more frequent. In 2024, the average U.S. electricity customer lost power for 11 hours, nearly double the prior decade’s average, with major weather events accounting for 80% of those hours.2 Recent studies also show that the longest outage a typical customer experiences has grown from about 8 hours in 2022 to nearly 13 hours by mid-2025, exactly the kind of extended interruption a whole-home system is designed to cover.3
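
To connect those outage durations to storage sizing, here is a back-of-the-envelope sketch. It assumes a constant critical load and no solar recharging during the outage. The 1.2 kW load in the usage example is a hypothetical figure, not a Lunar Energy specification.

```python
def energy_needed_kwh(critical_load_kw: float, outage_hours: float) -> float:
    """Energy needed to carry a constant critical load through an outage (no solar recharge assumed)."""
    return critical_load_kw * outage_hours


def hours_covered(usable_kwh: float, critical_load_kw: float) -> float:
    """How long a given amount of usable stored energy lasts at a constant load."""
    return usable_kwh / critical_load_kw


# Hypothetical home: fridge, lights, Wi-Fi and a home office averaging about 1.2 kW.
load_kw = 1.2
for outage in (8, 11, 13):  # outage durations cited in the text, in hours
    print(f"{outage} h outage -> {energy_needed_kwh(load_kw, outage):.1f} kWh")
```

Under these assumptions, a nearly 13-hour outage needs about 15.6 kWh of usable storage. Daytime solar recharging would reduce that figure, and a heavier critical load would raise it.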

The product’s DC-coupled architecture, which connects solar panels directly to the battery and reduces unnecessary energy conversion, improves overall system efficiency. That higher efficiency translates directly into greater bill savings for homeowners, capturing more value from every kilowatt-hour of solar generation. Integrated smart circuit controls allow homeowners to prioritize which appliances stay powered during an outage via a simple app, giving them direct control over how stored energy is used.
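
The efficiency argument for DC coupling comes down to counting conversions. The sketch below multiplies per-stage efficiencies along each path, from solar panel to stored energy to household AC loads. The stage efficiencies are illustrative assumptions, not measured figures for any product. Battery round-trip losses are left out because both paths incur them.

```python
from math import prod

# Illustrative per-conversion efficiencies (assumptions, not vendor specs).
EFFICIENCY = {
    "dc_dc": 0.98,  # solar MPPT / DC-DC stage
    "dc_ac": 0.97,  # inverting DC to AC
    "ac_dc": 0.97,  # rectifying AC back to DC to charge a battery
}


def path_efficiency(stages) -> float:
    """Fraction of energy that survives a chain of conversion stages."""
    return prod(EFFICIENCY[stage] for stage in stages)


# AC-coupled: solar inverter (DC->AC), battery charger (AC->DC), battery inverter (DC->AC).
ac_coupled = path_efficiency(["dc_dc", "dc_ac", "ac_dc", "dc_ac"])
# DC-coupled: solar charges the battery on the DC bus; one DC->AC step at discharge.
dc_coupled = path_efficiency(["dc_dc", "dc_ac"])

solar_kwh = 10.0
print(f"AC-coupled delivers {solar_kwh * ac_coupled:.2f} kWh of {solar_kwh} kWh stored solar")
print(f"DC-coupled delivers {solar_kwh * dc_coupled:.2f} kWh of {solar_kwh} kWh stored solar")
```

With these assumed numbers, the DC-coupled path keeps about five to six percentage points more of the solar energy that passes through the battery. Real-world differences depend on the actual hardware.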

Beyond the system’s differentiated hardware, Lunar Energy’s software platform provides customers with even more value. Rather than relying on static schedules or manual configuration, the company operates a forecasting and optimization platform that continuously models household energy production, consumption and tariff structures. In turn, the system automatically determines how and when to charge or discharge the battery across three objectives: lowering electricity bills, preserving backup readiness and enabling grid participation. In practice, this means a homeowner can open the Lunar Energy app, see exactly how energy is flowing through their home, and adjust priorities in real time – keeping the refrigerator and home office running during a grid event while temporarily pausing the EV charger. The system can also act autonomously, shifting loads and dispatch timing based on rate signals the homeowner never has to monitor. For installers and third-party asset owners, the same platform provides fleet-level visibility and management tools, enabling them to monitor system health, track performance and manage warranty workflows across their entire installed base from a single interface.
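
The three-way trade-off described above (bill savings, backup readiness, grid participation) can be illustrated with a deliberately simple greedy policy. This is not Lunar Energy’s optimizer, which the text describes as forecast-driven. The tariff, the `Hour` fields and the thresholds here are hypothetical. The backup reserve acts as a hard floor that no objective may cross.

```python
from dataclasses import dataclass


@dataclass
class Hour:
    price: float              # retail rate in $/kWh (hypothetical time-of-use tariff)
    solar_kwh: float          # forecast solar production for the hour
    load_kwh: float           # forecast household consumption for the hour
    grid_event: bool = False  # utility or VPP has called for export


def plan_dispatch(hours, capacity_kwh, reserve_kwh, start_kwh, max_rate_kwh, peak_price):
    """Greedy hour-by-hour plan; returns (action, stored_kwh_after) per hour."""
    soc = start_kwh
    plan = []
    for h in hours:
        surplus = h.solar_kwh - h.load_kwh
        headroom = max(0.0, soc - reserve_kwh)  # energy available above the backup floor
        if h.grid_event:
            # Grid participation: export, but never dip into the backup reserve.
            delta, action = -min(max_rate_kwh, headroom), "export"
        elif surplus > 0:
            # Store excess solar rather than exporting it at a low rate.
            delta, action = min(max_rate_kwh, surplus, capacity_kwh - soc), "charge_solar"
        elif h.price >= peak_price:
            # Bill savings: cover the household deficit from the battery at peak prices.
            delta, action = -min(max_rate_kwh, -surplus, headroom), "discharge"
        else:
            delta, action = 0.0, "idle"
        soc += delta
        plan.append((action, soc))
    return plan


day = [
    Hour(price=0.15, solar_kwh=3.0, load_kwh=1.0),
    Hour(price=0.45, solar_kwh=0.0, load_kwh=2.0),
    Hour(price=0.30, solar_kwh=0.0, load_kwh=1.0, grid_event=True),
    Hour(price=0.12, solar_kwh=0.0, load_kwh=1.0),
]
for action, stored in plan_dispatch(day, capacity_kwh=13.5, reserve_kwh=4.0,
                                    start_kwh=5.0, max_rate_kwh=5.0, peak_price=0.40):
    print(f"{action:13s} battery at {stored:.1f} kWh")
```

A forecast-driven optimizer would plan the whole day at once, for example holding charge for a known evening peak. The greedy loop only shows how the reserve constrains every other objective.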

For utilities and grid operators, Lunar Energy’s platform transforms residential batteries into dispatchable assets, meaning they can be coordinated and activated when the grid requires support. Lunar Energy’s software already manages one of the largest distributed power programs in the country, coordinating nearly 150,000 devices across key markets including California, Hawaii, New England and Puerto Rico.4 Each connected home becomes a node in a virtual power plant, an aggregated network of distributed batteries coordinated to operate as a single dispatchable resource to meet peak demand. By coordinating thousands of small assets in real time, Lunar Energy helps reduce strain on the grid without the cost or emissions of traditional peaker plants (fast-ramping gas facilities used during periods of peak demand). The results are meaningful: in 2025, Lunar Energy customers earned an average of $464 through grid participation and saved an additional $338 on electricity bills compared to traditional residential battery operation.5, 6
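
Treating many homes as one dispatchable resource means splitting a grid-level power request across individual batteries without touching any homeowner’s backup reserve. Below is a minimal greedy allocator. The field names are hypothetical, and the largest-contributor-first rule was chosen for simplicity. Production VPP software would also weigh location, forecasts and program rules.

```python
from dataclasses import dataclass


@dataclass
class Battery:
    site_id: str
    stored_kwh: float   # current state of charge
    reserve_kwh: float  # homeowner's backup floor
    max_kw: float       # inverter discharge limit


def available_kw(b: Battery, duration_h: float) -> float:
    """Power this battery can sustain for the whole event without dipping below its reserve."""
    energy_limited_kw = max(0.0, b.stored_kwh - b.reserve_kwh) / duration_h
    return min(b.max_kw, energy_limited_kw)


def allocate(fleet, target_kw: float, duration_h: float):
    """Split a VPP power target across batteries, largest contributors first.

    Returns (allocation by site_id, unmet kW).
    """
    allocation = {}
    remaining = target_kw
    for bat in sorted(fleet, key=lambda b: available_kw(b, duration_h), reverse=True):
        if remaining <= 0:
            break
        kw = min(available_kw(bat, duration_h), remaining)
        if kw > 0:
            allocation[bat.site_id] = kw
            remaining -= kw
    return allocation, max(0.0, remaining)


fleet = [
    Battery("home-a", stored_kwh=13.0, reserve_kwh=3.0, max_kw=5.0),
    Battery("home-b", stored_kwh=6.0, reserve_kwh=4.0, max_kw=5.0),
    Battery("home-c", stored_kwh=10.0, reserve_kwh=10.0, max_kw=5.0),
]
allocation, shortfall = allocate(fleet, target_kw=8.0, duration_h=2.0)
print(allocation, f"unmet: {shortfall} kW")
```

Here `home-c` is already at its reserve, so it contributes nothing. The unmet 2 kW is the signal that more enrolled homes are needed to fill the request.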

Market Position and Pathway to Scale

Lunar Energy has moved beyond development into its next phase of deployment at scale. The Lunar Energy system is fully commercialized and shipping today, with approximately 2,000 installations across key markets in the U.S. The company is scaling production to 20,000 units by the end of 2026 and 100,000 by 2028, a trajectory enabled by partnerships with contract manufacturers and a system architecture designed for scale.7

The company’s strategic go-to-market partnership and investment from Sunrun, the largest residential solar and storage provider in the U.S., gives Lunar Energy an immediate, high-volume deployment channel at national scale. Sunrun brings customer access, installation capability and deep utility relationships, while Lunar Energy provides the integrated platform purpose-built for complex regulatory and grid conditions. As both an installer and a third-party owner of residential energy assets, Sunrun benefits from Lunar Energy’s streamlined installation process and fleet management software across the full asset lifecycle. The partnership also allows Lunar Energy to expand its reach through additional channel partners to meet the needs of today’s market. Beyond Sunrun, Lunar Energy has established a broad network of regional and national installers, giving the company multiple paths to scale across geographies and customer segments.

Built by the Team That Scaled Modern Energy Storage

Lunar Energy’s founder and CEO, Kunal Girotra, previously led energy storage at Tesla during a formative period for the category. He was responsible for scaling battery products across residential, commercial and grid-scale applications, operating at the intersection of hardware engineering, manufacturing, software and grid integration.

That experience is directly reflected in Lunar Energy’s emphasis on system-level design and software-driven control. Success in residential storage requires navigating certification, supply chains, installation workflows, utility requirements and real-world failure modes. Kunal’s background reflects firsthand experience with these challenges at global scale, reducing execution risk in a sector where many companies underestimate operational complexity.

The broader Lunar Energy team brings complementary expertise across residential energy, grid software and large-scale system deployment. Collectively, they have built and operated platforms that manage energy assets across countless homes, providing a strong foundation for scaling the company’s integrated model.

Backing the Future of Residential Energy Infrastructure

At B Capital, we focus on companies that we believe have proven, scalable and differentiated technology addressing constraints in critical energy infrastructure. Lunar Energy embodies this thesis. The company has moved beyond prototypes to full commercial deployment, with a product in-market and a clear path to scale. Its integrated hardware and software platform creates defensible differentiation in a market where most players compete on only one dimension. It also enables ongoing value capture through grid services and software coordination. Its ability to serve homeowners, installers and utilities positions Lunar Energy to capture value across the entire residential energy stack.

We see Lunar Energy as a potential platform company, one that could define how millions of American homes interact with the grid over the coming decades. The residential storage market is large and growing, with installed capacity projected to nearly double by 2030.8 But scale alone is not enough to capture the market. As distributed resources become central to grid reliability, leadership will increasingly accrue to companies positioned at the coordination layer. The winners will be companies that combine hardware excellence with software intelligence and deep grid integration. That is exactly what Lunar Energy has built, and we are thrilled to partner with Kunal and the team as they scale.

LEGAL DISCLAIMER

All information is as of 03.09.2026 and subject to change. This content is a high-level overview and for informational purposes only. The investment discussed herein is a portfolio company of B Capital; however, such investment does not represent all B Capital investments. Certain statements reflected herein reflect the subjective opinions and views of B Capital personnel. Such statements cannot be independently verified and are subject to change. There can be no assurance any such trends or correlations will continue in the future. Reference to third-party firms or businesses does not imply affiliation with or endorsement by such firms or businesses. It should not be assumed that any investments or companies identified and discussed herein were or will be profitable. Past performance is not indicative of future results. The information herein does not constitute or form part of an offer to issue or sell, or a solicitation of an offer to subscribe or buy, any securities or other financial instruments, nor does it constitute a financial promotion, investment advice or an inducement or incitement to participate in any product, offering or investment. Much of the relevant information is derived directly from various sources which B Capital believes to be reliable, but without independent verification. This information is provided for reference only and the companies described herein may not be representative of all relevant companies or B Capital investments. You should not rely upon this information to form the definitive basis for any decision, contract, commitment or action.

SOURCES

1. Latitude Media, “Home battery startup Lunar Energy aims to quadruple its manufacturing,” February 4, 2026.
2. EIA, “Hurricanes in 2024 led to the most hours without power in the United States in 10 years,” December 1, 2025.
3. JD Power, “Disasters Become a Fact of Life for Many U.S. Electric Utility Customers,” October 2025.
4. Canary Media, “Lunar Energy lands $232M to boost smart home batteries,” February 4, 2026.
5. Bloomberg, “Ex-Tesla Energy Chief Raises $230 Million for Battery Startup,” February 4, 2026.
6. Lunar Energy, “What is Lunar AI,” https://www.lunarenergy.com/learn/learn-articles/what-is-lunar-ai
7. Ibid.
8. B Capital analysis.

The post The AI Labor Crisis Isn’t Coming in 2028. The Investment Opportunity Is Here Now. appeared first on B Capital.

Last weekend, the Citrini Research “2028 Global Intelligence Crisis” memo went viral, racking up roughly 16 million views after Michael Burry amplified it. IBM dropped 13%, and we saw broad weakness across software, payments, and delivery stocks.1 The market panicked.

The memo paints a vivid picture: AI replaces white-collar labor faster than the economy can absorb it, consumer demand collapses, unemployment spikes past 10%, and the S&P 500 draws down nearly 40%.2 Citrini calls this “Ghost GDP”: output shows up in profits but doesn’t circulate because displaced workers have lost their income.

It’s a clean left-tail story. It’s wrong as a base case, but it’s directionally correct about the structural shift underneath, and that shift is exactly where the investment opportunity sits.

What the Doomers Get Right

Strip away the compressed timeline and the stacked worst-case assumptions, and several of Citrini’s structural observations hold up.

White-collar work is the near-term fault line. A large share of knowledge work consists of reading, writing, deciding, and coordinating, which is exactly where current models are strongest. McKinsey estimates that generative AI and other technologies could automate work activities that absorb 60-70% of employee time.3 The pressure will show up first in back office, operations, finance, support, sales enablement, and parts of legal and compliance. These aren’t theoretical targets; they’re the functions where we already see enterprise buyers pulling budget.

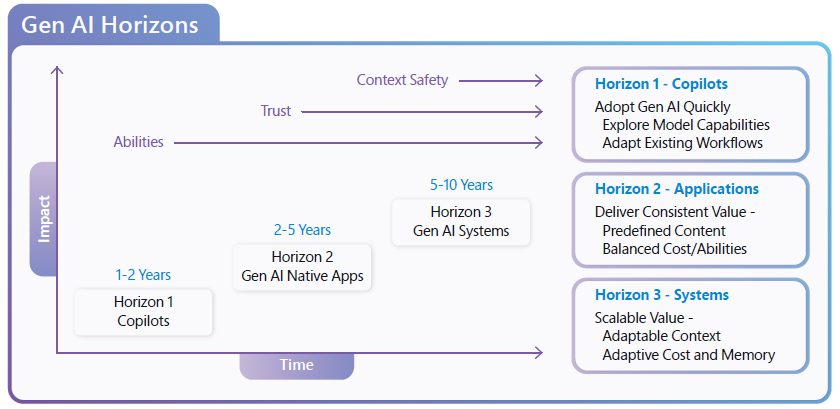

AI is moving from tool to coworker. The real shift isn’t chatbots getting smarter. It’s AI gaining persistent memory, learning on the job, and planning autonomously. We went from “help me write this” (early ChatGPT, basic copilots) to “do this for me” (Codex, Claude Code, support bots) and are now entering “own this with me.” That last phase changes org design, not just task execution.

The distributional tension is real. If gains accrue primarily to capital while labor lags, demand weakens and politics get volatile. Even without collapse, wage, tax, and benefit debates will intensify. That affects regulation and procurement behavior. Investors who ignore this are underpricing political risk.

Take rates will face pressure. The idea of agents routing around software is overstated in the near term, but the direction is correct. As discovery, evaluation, and execution become automated, friction-based pricing power erodes. The winners will be workflow-embedded products and infrastructure providers.

Where It Falls Apart

The Citrini scenario only works if every aggressive assumption resolves in the same direction simultaneously within 24 months. That’s not analysis; it’s a horror story presented as a base case.

Enterprise adoption doesn’t move at that speed. Capability may be exponential, but deployment is not. Data restructuring, compliance, procurement cycles, and retraining are multi-year arcs. Per MIT research, only 5% of AI pilots currently achieve measurable P&L impact,4 and 32% stall after the pilot stage.5 A randomized controlled trial by METR found that experienced developers were 19% slower with AI tools, even as benchmarks showed superhuman coding performance, a stark reminder that lab capability and production value are different things.6 The bottleneck is not demand: 92% of Fortune 500 companies already use ChatGPT, 82% of executives plan AI agent integration within three years, and $252 billion went into corporate AI investment in 2024 alone.7, 8, 9 The bottleneck is the infrastructure to deploy and scale AI coworkers in production.

“Ghost GDP” confuses distribution with destruction. Labor savings don’t vanish; they reallocate through lower prices, capex, profits, dividends, and tax revenue. The issue is distribution and timing, not whether gains circulate. This is an important distinction for investors: the value gets created; the question is where it accrues.

Policy is treated as inert. Automatic stabilizers, monetary easing, fiscal transfers, mortgage forbearance, and credit restructurings historically interrupt demand spirals. The memo assumes none of these mechanisms activate. That’s not how economies function under stress.

Business execution is understated. Code is cheap, but trust, compliance, distribution, and operational execution are not. Agents may pressure take rates, but they don’t eliminate institutional infrastructure in two years.

The Narrative Shock Creates Real Opportunity

The market is conflating a long-term structural shift with a 24-month crisis scenario. That dislocation creates two types of opportunity: mispriced growth exposures in public markets and private-market picks-and-shovels that win regardless of macro path. The latter is where we’re focused.

1. AI Co-Worker Applications: Replacing Hours, Not Just Tasks

The highest-conviction opportunity lies in AI coworkers that land in the enterprise with measurable ROI inside 90 days. The investment filter is simple: can it replace measurable hours with compliance, auditability, and a clear feedback loop?

Software Engineering (~$370B addressable). Claude Code and Codex have commoditized code generation. The frontier has moved to enterprise context and verifiable domains where the AI can prove its work is correct: formal proofs, math/physics, security analysis, AI research itself. The moat here is organizational context, not raw coding ability.

Sales & GTM (~$245B addressable). The market is crowded with AI SDRs, and most of them are dead on arrival. The winners own the system of record, learn from outcomes, and close the loop on what converts. Data rights are the moat; if you can’t observe what works and improve autonomously, you’re a feature, not a company.

Finance & CFO Office (~$215B addressable). High-volume operational workflows with clear accountability: AR/AP, collections, procurement ops, FP&A, compliance reporting. These processes are rules-based but manual-intensive, making them ideal for AI coworkers. The companies we’re most excited about are the ones replacing FP&A analysts, not just augmenting them, where “better” is quantifiable and feedback is continuous.

When AI works in the enterprise, the returns are significant: an average return of $3.70 per dollar invested, with top performers reaching $10.30 per dollar.10 Gartner projects that 33% of enterprise software will include agentic AI by 2028, with 15% of daily decisions made by agents.11

2. The Infrastructure Layer: Where Durable Value Accrues

The application layer gets the headlines. The infrastructure layer gets the margins. This is where we believe the market is most under-invested.

Agentic Memory & Context. Models have memory; they don’t have your memory. The gap is organizational context: docs, code, tickets, CRM data, team terminology, approval workflows. This is what separates a stateless chatbot from a colleague. The defensibility compounds because memory improves as context accumulates, and switching costs grow over time as the AI learns your organization. Think of it as the unlock from agent to colleague.
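
The difference between a model’s general memory and organizational memory can be sketched as a store of company-specific snippets that an agent consults before acting. The class below is a toy. Retrieval is plain keyword overlap, standing in for the embedding search, permissions and freshness handling a real system would need, and the sources and snippets are made up. It does show why the value compounds: every new snippet widens what the agent can recall.

```python
import re
from collections import Counter


class OrgMemory:
    """Toy organizational memory: stores snippets with their source, recalls by keyword overlap."""

    def __init__(self):
        self.items = []  # (source, text, token counts)

    @staticmethod
    def _tokens(text: str) -> Counter:
        return Counter(re.findall(r"[a-z0-9]+", text.lower()))

    def remember(self, source: str, text: str) -> None:
        self.items.append((source, text, self._tokens(text)))

    def recall(self, query: str, k: int = 3):
        """Return up to k (source, text) pairs ranked by shared-token count."""
        q = self._tokens(query)
        scored = []
        for source, text, toks in self.items:
            score = sum(min(count, toks[tok]) for tok, count in q.items())
            if score:
                scored.append((score, source, text))
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(source, text) for _, source, text in scored[:k]]


memory = OrgMemory()
memory.remember("wiki", "Refunds over 500 dollars need approval from finance")
memory.remember("crm", "Acme Corp renewal is due in March")
memory.remember("tickets", "Deploys freeze on Fridays during quarter close")
print(memory.recall("who approves refunds over 500"))
```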

Orchestration & Multi-Agent Coordination. Agents don’t yet collaborate well, with each other or with humans. The missing layer includes coordination protocols, escalation paths, and seamless handoffs between AI and human coworkers. The network effects here are powerful: value increases as more agents and humans use the same coordination layer. This is Slack for the human-AI workforce.
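
Escalation paths and human handoffs are ultimately routing policy. Below is a minimal sketch of one. It assumes a hypothetical agent that reports a confidence score and a per-task dollar amount, and the thresholds are placeholders. Logging every decision is what lets humans audit the handoffs afterward.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    task_id: str
    confidence: float        # agent's self-reported confidence, 0 to 1
    amount_usd: float = 0.0  # money the action would move, if any


@dataclass
class Router:
    confidence_floor: float = 0.8
    approval_limit_usd: float = 1_000.0
    log: list = field(default_factory=list)

    def route(self, task: Task) -> str:
        """Decide whether the agent proceeds or the task is handed to a human."""
        if task.amount_usd > self.approval_limit_usd:
            owner, reason = "human", "above approval limit"
        elif task.confidence < self.confidence_floor:
            owner, reason = "human", "low confidence"
        else:
            owner, reason = "agent", "within policy"
        # Record every handoff so people can audit who did what, and why.
        self.log.append((task.task_id, owner, reason))
        return owner


router = Router()
for task in (Task("inv-1", 0.95, 200.0), Task("inv-2", 0.60, 200.0), Task("refund-7", 0.99, 5_000.0)):
    print(task.task_id, "->", router.route(task))
```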

Production Observability. When agents run 24/7, ops teams need real-time visibility into what’s working and what’s not. Most existing tools focus on dev and debug, but the real pain is keeping agents reliable at scale. The first company to nail production-first observability for agentic systems owns the category. This is the Datadog opportunity for the agentic era.
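
Production-first observability starts with a question dev tooling rarely asks: is the agent fleet healthy right now? The sketch below tracks only a rolling success rate over recent runs and raises an alert when failures cross a threshold. The window size, threshold and minimum sample are illustrative. A real system would also trace tool calls, latency and cost.

```python
from collections import deque


class AgentHealthMonitor:
    """Rolling failure-rate check over the most recent agent runs."""

    def __init__(self, window: int = 100, max_failure_rate: float = 0.05, min_runs: int = 20):
        self.runs = deque(maxlen=window)  # True = success, False = failure
        self.max_failure_rate = max_failure_rate
        self.min_runs = min_runs

    def record(self, success: bool) -> None:
        self.runs.append(success)

    def failure_rate(self) -> float:
        return self.runs.count(False) / len(self.runs) if self.runs else 0.0

    def should_alert(self) -> bool:
        # Require a minimum sample so a single early failure does not page anyone.
        return len(self.runs) >= self.min_runs and self.failure_rate() > self.max_failure_rate


monitor = AgentHealthMonitor(window=50, max_failure_rate=0.10, min_runs=20)
for i in range(40):
    monitor.record(i % 5 != 0)  # simulate one failure in every five runs
print(f"failure rate {monitor.failure_rate():.0%}, alert={monitor.should_alert()}")
```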

Agentic Security. OpenClaw made this viscerally obvious (more on that below), but the principle applies broadly: agents with persistent access to enterprise systems represent a fundamentally new attack surface. Securing them requires least-privilege access, skill sandboxing, action-level anomaly detection, and identity management for non-human actors. This category barely existed a year ago, and it will be table stakes for enterprise deployment within two years.
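
Least-privilege access and identity for non-human actors can be reduced to a deny-by-default check. Each agent gets an identity with an accountable human owner and an explicit set of scopes, and every attempted action is logged whether or not it is allowed. The scope strings and class names below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    owner: str         # human accountable for this non-human actor
    scopes: frozenset  # explicitly granted actions, e.g. "calendar:read"


class PermissionDenied(Exception):
    pass


def authorize(identity: AgentIdentity, action: str, audit_log: list) -> None:
    """Deny by default: allow an action only if the identity holds that exact scope."""
    allowed = action in identity.scopes
    audit_log.append((identity.agent_id, action, "allow" if allowed else "deny"))
    if not allowed:
        raise PermissionDenied(f"{identity.agent_id} lacks scope {action!r}")


audit = []
scheduler = AgentIdentity("scheduler-bot", "alice@example.com",
                          frozenset({"calendar:read", "calendar:write"}))
authorize(scheduler, "calendar:write", audit)
try:
    authorize(scheduler, "email:send", audit)  # outside its grant
except PermissionDenied as err:
    print("blocked:", err)
print(audit)
```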

3. Why Now: Four Curves Crossed Simultaneously

This thesis isn’t speculative. It’s grounded in four technical inflection points that converged in 2024-25, and the data keeps accelerating.

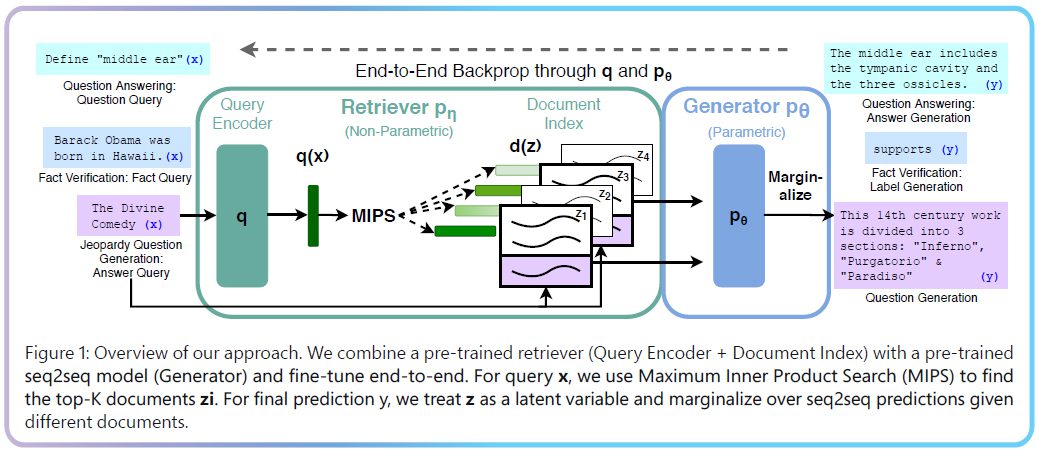

Reasoning quality is on an exponential curve, and it’s steepening. METR’s time horizon research, which measures the length of tasks AI agents can complete autonomously, shows capability doubling every ~7 months over six years.12 METR’s updated TH1.1 methodology (January 2026) suggests recent progress is actually 20% faster than the historical trend, with post-2023 doubling at 131 days.13 Claude Opus 4.6 now clocks a 50% time horizon of roughly 14.5 hours, meaning it succeeds about half the time on tasks that would take a skilled human roughly 14.5 hours, close to two full working days.14 If this trend continues for 2-4 more years, we’re looking at agents that can reliably execute week-long projects. MIT Technology Review called METR’s time horizon plot “the most misunderstood graph in AI.” The misunderstanding cuts both ways: doomers extrapolate it to imminent catastrophe, while skeptics dismiss it as benchmark gaming. The investment-relevant reading is that autonomous task capability is compounding on a steep, consistent curve, and the gap between what agents can do on benchmarks and what they actually do in production is precisely the market we’re investing into.
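
The extrapolation in this paragraph follows from a simple exponential model of the time horizon, h(t) = h0 · 2^(t/T), where T is the doubling time. The helper below plugs in the figures quoted above: a 14.5-hour horizon, a 131-day recent doubling time, and roughly seven months for the longer trend (taken here as 213 days). Like any such extrapolation, it assumes the trend simply continues, and the 40-hour "week-long project" is an assumption. These are 50% horizons; higher reliability targets correspond to shorter tasks.

```python
import math


def horizon_after(h0_hours: float, days: float, doubling_days: float) -> float:
    """50% time horizon after `days`, assuming clean exponential growth h(t) = h0 * 2**(t / T)."""
    return h0_hours * 2 ** (days / doubling_days)


def days_until(target_hours: float, h0_hours: float, doubling_days: float) -> float:
    """Days until the horizon reaches target_hours under the same assumption."""
    return doubling_days * math.log2(target_hours / h0_hours)


WORK_WEEK_HOURS = 40  # assumption: one week-long project = 40 hours of skilled human work
for label, doubling in (("131-day doubling", 131), ("~7-month doubling", 213)):
    days = days_until(WORK_WEEK_HOURS, h0_hours=14.5, doubling_days=doubling)
    print(f"{label}: ~{days:.0f} days to a 40-hour 50% horizon")
```

Under these assumptions, the 50% horizon reaches 40 hours in roughly 190 to 310 days. Reliably completing projects of that length would take longer.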

That gap is real, by the way: METR’s own developer productivity RCT (July 2025) found that experienced open-source developers were 19% slower when using AI coding tools, despite believing they were 20% faster.15 Algorithmic benchmarks overstate real-world performance because they can’t capture code quality, context understanding, and integration complexity. This is the deployment gap, and this is the opportunity.

Tool use is standardizing, but not the way anyone expected. MCP (Model Context Protocol) was supposed to be the universal connector between AI agents and external services, and it has real traction: 17,000+ servers on MCP.so, OAuth-native authentication, and adoption by OpenAI, Google, and Microsoft.16 But MCP isn’t the whole story anymore. Skills (reusable prompt-and-script bundles that encode domain knowledge into agent behavior) have exploded: 96,000+ on SkillsMP and 5,700+ on ClawHub, all built on the SKILL.md standard that emerged from coding agents like Claude Code and Codex CLI.17

Meanwhile, CLIs (command-line interfaces) are emerging as a surprisingly effective third pattern. Agents have been trained to be exceptionally good at using command-line tools, and CLIs handle authentication, structured output, and composability through patterns that have been battle-tested for decades. Andrej Karpathy has made the case publicly: build for agents by exposing functionality via CLI, publishing task-specific skills, and shipping MCP servers. The investment implication is that the “tool use” layer is not a single protocol but an ecosystem of complementary patterns. Skills encode knowledge, MCP provides authenticated access, and CLIs offer execution efficiency. The companies building the orchestration, discovery, and security layers across all three will own the integration tier of the agentic stack.
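
The CLI pattern works for agents because it is predictable: documented flags, an opt-in machine-readable output mode, and exit codes that signal success or failure. Below is a minimal sketch using Python’s standard `argparse` and `json` modules. The `tickets` tool and its data are hypothetical stand-ins for a real backend.

```python
import argparse
import json

# Hypothetical in-memory data standing in for a real ticketing backend.
TICKETS = [
    {"id": 101, "status": "open", "title": "Invoice mismatch"},
    {"id": 102, "status": "closed", "title": "Password reset"},
]


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="tickets", description="List support tickets.")
    parser.add_argument("--status", choices=["open", "closed"], help="filter by status")
    parser.add_argument("--json", action="store_true", help="emit machine-readable JSON")
    return parser


def run(argv=None) -> str:
    """Parse arguments and render output: JSON for agents, plain lines for humans."""
    args = build_parser().parse_args(argv)
    rows = [t for t in TICKETS if args.status is None or t["status"] == args.status]
    if args.json:
        # Structured output lets an agent parse results instead of scraping text.
        return json.dumps(rows)
    return "\n".join(f"#{t['id']} [{t['status']}] {t['title']}" for t in rows)


def main() -> None:
    # Normal completion exits with status 0; argparse exits with status 2 on bad arguments.
    print(run())


if __name__ == "__main__":
    main()
```

An agent would call `tickets --status open --json` and parse the result. A person running the same command without `--json` gets one readable line per ticket.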

Context windows expanded to 2M+ tokens, and persistent memory across sessions is now possible: AI can remember what it learned yesterday.

Inference costs collapsed. GPT-4-equivalent capability now costs $0.40 per million tokens versus $20 in 2022, a 50x decline.18 DeepSeek pushed this even further, running 90% cheaper than Western providers.
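
To make the price decline concrete, the sketch below prices a hypothetical agent workload of 50 million tokens per day at the two per-million-token rates quoted above. The workload size is an assumption chosen only for illustration.

```python
def monthly_cost_usd(tokens_per_day: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Cost of a steady daily token volume over `days` at a flat per-million-token price."""
    return tokens_per_day * days * usd_per_million_tokens / 1_000_000


TOKENS_PER_DAY = 50_000_000  # hypothetical workload for one busy agent deployment
then = monthly_cost_usd(TOKENS_PER_DAY, 20.00)
now = monthly_cost_usd(TOKENS_PER_DAY, 0.40)
print(f"${then:,.0f}/month then vs ${now:,.0f}/month now ({then / now:.0f}x cheaper)")
```

At these prices, the same workload drops from about $30,000 to about $600 a month.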

These aren’t independent trends. They’re compounding. AI coworkers are now technically feasible, economically viable, and enterprise-ready. The question is no longer if but how fast enterprises can deploy them.

4. The OpenClaw Moment: What 157K Stars in 60 Days Tells Us About Where AI Is Headed

If you want a single case study that encapsulates the entire AI coworker opportunity and its risks, look at OpenClaw.

OpenClaw (formerly Moltbot, formerly Clawdbot) started as a weekend side project by developer Peter Steinberger: a personal AI assistant that runs locally on your machine and connects to your messaging apps, email, calendar, and file systems to act autonomously on your behalf. Not a chatbot, not a copilot, but an agent that does things for you across the tools you already use.

The reception was extraordinary. OpenClaw hit 100,000 GitHub stars faster than Linux, Kubernetes, or any other project in GitHub history. It crossed 157,000 stars within 60 days.19 Around January 30, 2026, it gained 34,168 stars in just 48 hours.20 The project spawned Moltbook, a social network where only AI agents could post, which hit 1.5 million registered agents in five days and drew coverage from Fortune, CNBC, and TechCrunch. Y Combinator’s podcast team showed up in lobster costumes.21 “Claw” became Silicon Valley slang for locally hosted AI agents.

This is a demand signal, not a hype signal. People don’t want another chatbot; they want AI that manages their inbox, controls their schedule, organizes their files, and executes multi-step workflows while they do something else. OpenClaw’s value proposition was blunt: “AI that actually does things, not just talks.” That resonated so strongly that OpenAI took notice. On February 14, Steinberger announced he was joining OpenAI, a move widely interpreted as OpenAI’s play to acquire agentic AI talent after its $3B bid for Windsurf (Codeium) fell through.22 The project transitioned to an independent open-source foundation under the MIT license, mirroring the governance model of Linux and Kubernetes.

The enthusiasm is the bullish signal. Now here’s the cautionary one.

Within weeks of going viral, SecurityScorecard found over 40,000 exposed OpenClaw instances on the public internet, 63% of them vulnerable to remote code execution.23 Researchers discovered 400+ malicious “skills” on ClawHub (OpenClaw’s marketplace) distributing infostealers, remote access trojans, and backdoors disguised as legitimate automation tools.24 A critical one-click RCE vulnerability (CVE-2026-25253) meant attackers could compromise systems through a single link without the user installing anything. Skills execute with full agent and system permissions, with no sandboxing and no least-privilege access. Users were following YouTube tutorials that never mentioned security, deploying agents on cloud servers with authentication set to “none.”
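
Several of the failures above are checkable before an agent ever goes live. Below is a minimal pre-deployment audit sketch. The configuration keys (`auth`, `bind_host`, `skills_sandboxed`) are hypothetical and do not reflect OpenClaw’s actual configuration schema. Missing settings are treated as insecure, matching a deny-by-default posture.

```python
import ipaddress


def audit_agent_config(cfg: dict) -> list:
    """Return human-readable findings for risky settings in a (hypothetical) agent config."""
    findings = []
    if cfg.get("auth", "none") == "none":
        findings.append("authentication is disabled")
    host = cfg.get("bind_host", "0.0.0.0")
    try:
        is_loopback = host == "localhost" or ipaddress.ip_address(host).is_loopback
    except ValueError:
        is_loopback = False  # unrecognized hostnames are treated as exposed
    if not is_loopback:
        findings.append(f"listening on non-loopback address {host!r}")
    if not cfg.get("skills_sandboxed", False):
        findings.append("skills run with full agent and system permissions")
    return findings


# Mirrors the tutorial-style deployments described above.
print(audit_agent_config({"auth": "none", "bind_host": "0.0.0.0"}))
print(audit_agent_config({"auth": "token", "bind_host": "127.0.0.1", "skills_sandboxed": True}))
```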

The most alarming development: Hudson Rock documented the first observed case of an infostealer harvesting an entire AI agent configuration, not just browser passwords but the complete identity, permissions, and API keys of a personal AI agent. That’s a new attack surface that didn’t exist twelve months ago, and infostealers are now targeting AI personas as high-value assets.

This matters for our thesis on three levels.

First, the demand is real and it’s massive. 157K stars in 60 days, OpenAI hiring the creator, and “claw” entering the tech lexicon as slang are not indicators of a fad. Consumers and developers are telling us, loudly, that they want persistent AI agents with real autonomy over their digital lives. The enterprise version of this same demand is the AI coworker.

Second, agentic security is not a feature request, it’s a category. When agents have persistent access to email, calendars, financial accounts, and code repositories, the blast radius of a single compromise is an order of magnitude larger than that of a stolen password. Enterprise buyers will not deploy AI coworkers at scale without permissions frameworks, action-level anomaly detection, skill sandboxing, and audit trails. Every CISO who reads the OpenClaw postmortems becomes a buyer for agentic security tooling.

Third, OpenClaw draws a bright line between consumer-grade agent experiments and enterprise-grade AI coworkers. The difference is infrastructure: identity, access control, policy enforcement, observability, and governance. OpenClaw shipped the agent but not the infrastructure underneath it. That infrastructure layer is exactly where we’re investing.

5. The PE and Credit Warning

The Citrini memo should be a warning sign for PE-backed SaaS with weak differentiation and friction-based economics. If your portfolio company’s pricing power depends on being embedded in a workflow that an AI agent can route around, your margins are on borrowed time.

The selective opportunity in PE and credit: buy-and-build modernization plays where AI compresses COGS and SG&A, and distressed recurring revenue assets deeply embedded in workflows that agents will need to run through, not around. Avoid aspirational ARR quality and unsecured exposure if growth stalls.

6. Services as a Wedge

Implementation friction is real, which makes transformation services investable: mapping processes, instrumenting data, integrating systems, training organizations, and building governance layers. These capabilities scale if paired with product and repeatable playbooks. Enterprises want outcomes, not tools, and the companies that combine software and delivery to implement coworkers and redesign processes will compound.

What We’re Looking For

Our current investment filtering criteria across this thesis:

Team. AI technical depth plus domain expertise. Exceptional founders with strong founder-market fit over traction alone. In a market moving this fast, the team’s ability to navigate rapid shifts matters more than any current metric.

Data Moat Quality. As AI models commoditize, unique high-quality data with continuous feedback loops becomes the primary differentiator. If your data advantage can be replicated by a competitor with a bigger API budget, it’s not a moat.

Workflow Embedding Depth. Deep integration into daily workflows creates switching costs. Products that are indispensable to users’ daily work are defensible; products that sit on top of workflows are features waiting to be absorbed.

Progressive Defensibility. Technical moats alone don’t last in AI, and they may not even exist anymore. We’re looking for clear plans to layer in defenses over time: data accumulation, network effects, regulatory positioning, distribution lock-in.

Economic Value & Business Model Innovation. GTM and pricing that support AI economics for both the company and its customers. Sustainable unit economics are not optional.

We’re actively seeking Seed to Series C investments in AI coworker applications across engineering, sales, finance, and support, as well as enabling infrastructure in memory, orchestration, observability, and agentic security. Typical check sizes range from $5M to $50M.

Bottom Line

The Citrini piece is a useful stress test, not a prediction. The market appears to be treating a long-term structural shift as an imminent crisis, and that creates dislocation for investors willing to look past the noise.

The structural shift is real. METR’s data shows the length of tasks AI agents can complete doubling every 4 to 7 months, with no sign of deceleration. AI coworkers will change how enterprises operate, how work gets organized, and where value accrues. But that change will follow enterprise timelines, not Twitter timelines.

If anything, the panic reinforces the thesis: AI coworkers and the enabling infrastructure are where durable value gets built. The 95% pilot failure rate isn’t evidence that AI doesn’t work; it’s the problem we’re investing to solve. OpenClaw showed us what happens when powerful agents ship without enterprise-grade infrastructure underneath them. The companies that bridge the gap from pilot to production by giving enterprises memory, orchestration, observability, security, and compliance will capture outsized value in the decade ahead.

That’s where we’re putting capital, and that’s where we think you should be looking too.

—

Yan-David “Yanda” Erlich is a General Partner at B Capital, where he leads the firm’s AI coworker and infrastructure investment thesis through Growth Fund IV and Ascent Fund III. He was previously COO and CRO at Weights & Biases and a GP at Coatue Ventures, and he is a 4x venture-backed founder. Reach him at [email protected].

Raj Ganguly is a Co-Founder and Co-CEO of B Capital. He is focused on connecting extraordinary entrepreneurs with the people, capital and support needed to drive exponential growth. In less than a decade and under Raj’s leadership, B Capital has grown into a global firm with 9 locations, more than 100 employees and more than $9B in assets under management.

LEGAL DISCLAIMER

All information is as of 2.25.2026 and subject to change. This content is a high-level overview and for informational purposes only. Certain statements reflected herein reflect the subjective opinions and views of B Capital personnel. Such statements cannot be independently verified and are subject to change. The companies discussed herein are not portfolio companies of B Capital. It should not be assumed that any companies identified and discussed herein were or will be profitable. Past performance is not indicative of future results. The information herein does not constitute or form part of an offer to issue or sell, or a solicitation of an offer to subscribe or buy, any securities or other financial instruments, nor does it constitute a financial promotion, investment advice or an inducement or incitement to participate in any product, offering or investment. Much of the relevant information is derived directly from various sources which B Capital believes to be reliable, but without independent verification. This information is provided for reference only and the companies described herein may not be representative of all relevant companies or B Capital investments. You should not rely upon this information to form the definitive basis for any decision, contract, commitment or action.

SOURCE

- Bloomberg, “Taleb, Citrini Fuel AI Scare Trade as IBM Drops Most in 25 Years,” February 23, 2026. https://www.bloomberg.com/news/articles/2026-02-23/software-payments-shares-tumble-after-citrini-post-on-ai-risks

- Citrini Research, “The 2028 Global Intelligence Crisis,” February 2026. https://www.citriniresearch.com/p/2028gic

- McKinsey Global Institute, “The Economic Potential of Generative AI: The Next Productivity Frontier,” June 2023. https://www.mckinsey.com/capabilities/tech-and-ai/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

- MIT NANDA Initiative, “The GenAI Divide: State of AI in Business 2025,” August 2025. https://finance.yahoo.com/news/mit-report-95-generative-ai-105412686.html

- METR, “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity,” July 10, 2025. https://metr.org/blog/2025-07-10-early-2025-ai-experienced-os-dev-study/

- VentureBeat, “OpenAI says ChatGPT now has 200M users,” August 2024. https://venturebeat.com/ai/openai-says-chatgpt-now-has-200m-users

- Microsoft, “2025 Work Trend Index: The Year the Frontier Firm Is Born,” April 2025. https://www.microsoft.com/en-us/worklab/work-trend-index/2025-the-year-the-frontier-firm-is-born

- Stanford Human-Centered AI Institute, “AI Index Report 2025,” April 2025. https://hai.stanford.edu/ai-index/2025-ai-index-report/economy

- IDC (via Microsoft), “Generative AI Delivering Substantial ROI,” January 2025. https://news.microsoft.com/en-xm/2025/01/14/generative-ai-delivering-substantial-roi-to-businesses-integrating-the-technology-across-operations-microsoft-sponsored-idc-report/

- Gartner, “Intelligent Agents in AI,” 2025. https://www.gartner.com/en/articles/intelligent-agent-in-ai

- METR, “Measuring AI Ability to Complete Long Tasks,” March 19, 2025. https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/

- METR, “Time Horizon 1.1,” January 29, 2026. https://metr.org/blog/2026-1-29-time-horizon-1-1/

- METR, “Time Horizons Live Dashboard,” February 2026. https://metr.org/time-horizons

- Anthropic, “Donating the Model Context Protocol to the Linux Foundation,” December 2025. https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

- SkillsMP, “Agent Skills Marketplace,” accessed February 2026. https://skillsmp.com

- Dev.to (Haoyang Pang), “Every AI Agent Skills Platform You Need to Know in 2026,” February 2026. https://dev.to/haoyang_pang_a9f08cdb0b6c/every-ai-agent-skills-platform-you-need-to-know-in-2026-4alg

- Introl, “Inference Unit Economics: The True Cost Per Million Tokens,” December 2025. https://introl.com/blog/inference-unit-economics-true-cost-per-million-tokens-guide

- Immersive Labs, “OpenClaw Security Review: AI Agent or Malware Risk,” February 2026. https://www.immersivelabs.com/resources/c7-blog/openclaw-what-you-need-to-know-before-it-claws-its-way-into-your-organization

- Axios, “Moltbook shows rapid demand for AI agents. The security world isn’t ready,” February 3, 2026. https://www.axios.com/2026/02/03/moltbook-openclaw-security-threats

- TechCrunch, “Windsurf’s CEO goes to Google; OpenAI’s acquisition falls apart,” July 11, 2025. https://techcrunch.com/2025/07/11/windsurfs-ceo-goes-to-google-openais-acquisition-falls-apart/

- SecurityScorecard, “How Exposed OpenClaw Deployments Turn Agentic AI Into an Attack Surface,” February 2026. https://securityscorecard.com/blog/how-exposed-openclaw-deployments-turn-agentic-ai-into-an-attack-surface/

The post Translating Code When Failure is Not an Option: Why We Invested in Code Metal appeared first on B Capital.

B Capital is thrilled to partner with Code Metal as the company scales verifiable code translation for mission-critical systems.

Automated AI at a Higher Standard

AI has transformed how we write software. Coding copilots and platforms now produce capable code and meaningfully accelerate developer workflows. Much of the recent discourse in our industry and across society centers on the massive productivity unlock of advanced AI in coding, and its manifold downstream effects. The output is good, and it is fast. For many traditional applications, that is enough.

But in domains like defense and aerospace, “good enough” is not good enough. In these environments, code runs satellites, jets, and edge devices deployed in contested or safety-critical settings. Regulation and compliance standards are high and uncompromising. A hallucinated function, unchecked edge case, or subtle memory bug can threaten national security, infrastructure, and, most gravely, human life.

The Defense Imperative

The Pentagon recently asserted that “AI-enabled capability development will re-define the character of military affairs over the next decade,” with defense budgets designed accordingly. Other governments similarly prioritize AI. The leading international summit on AI in the military domain acknowledges that AI “can and should contribute to international peace and security… help reduce the exposure of personnel to danger, improve the protection of civilians, and support more timely and better-informed decision-making.” As states intensify focus on AI, the need for verified, trustworthy code translation and edge computing has never been more urgent.

Yet policy ambition outpaces tooling. Code Metal has emerged as one of the fastest-growing defense technology companies precisely because it solves a severe and underappreciated problem: translating and optimizing massive codebases across hardware architectures and programming paradigms, with near-zero tolerance for error.

Code Metal’s Solution

Code Metal unites high-level reasoning with low-level verification to produce tested, optimized, and compliant code ready to deploy. This hybrid approach creates a translation and optimization engine reliable enough for defense and industrial customers, one that engineers trust in production.

Consider the challenge facing defense systems engineers today. When satellite communications protocols must be updated or ported to new hardware, or when legacy C++ defense systems must be modernized into memory-safe Rust to prevent cybersecurity vulnerabilities, the work is painstaking, manual, and slow. Existing AI coding tools offer speed, but they are not built for deployment in mission-critical environments.

Code Metal automates this translation with the verification guarantees these systems demand, accelerating time-to-market, enabling portability across chips and devices, and powering rapid codebase modernization.

Growth at an Inflection Point

Code Metal has built remarkable commercial momentum in the two and a half years since its founding, winning customers including the U.S. Air Force, L3Harris, Toshiba, and RTX. The company is now in hypergrowth, validating both the severity of the problem and the elegance of its solution.

While Code Metal’s initial work centered on defense applications, the platform also serves many enterprise domains. In telecommunications, companies are eager to translate high-level MATLAB prototypes into production-ready, edge-deployable code in days instead of months. Semiconductor manufacturers must rapidly translate open-source CUDA implementations into frameworks compatible with their chips. In automotive, industrial equipment, and other regulated spaces, the need recurs: moving prototypes to production, porting code between devices, and modernizing legacy systems into memory-safe languages, all quickly, securely, and reliably. Code Metal enables all of this.

B Capital’s Verified Intelligence Thesis

Code Metal is B Capital’s latest investment in an AI thesis we’ve developed: as AI systems embed more deeply into critical infrastructure and decision-making, the standard must rise from plausible outputs to provable correctness. We need verified intelligence.

With our investments in Axiom (frontier mathematical reasoning, theorem proving, and discovery), Goodfire (interpretability research and systems design), and now Code Metal, we’ve put this conviction to work. Each portfolio company attacks a different surface of the problem. Together, they reflect our belief that trust in AI behaviors and outputs is essential in high-stakes domains.

Our verified intelligence thesis is deliberate and high conviction. It also forms just one part of a broader landscape we watch closely. We remain energized by frontier research directions that also engage with partially understood, emergent capabilities of large-scale AI. For example, we’re fascinated by forward-dynamics world models that enable agents to learn by imagining future scenarios, as well as continual learning architectures and hybrid systems that emulate the sophisticated hierarchies of human memory. The path to recursive self-improvement and superintelligence is neither straight nor settled, and we plan to invest across its most consequential turns. Still, we see an acute, underserved need for verified intelligence in massive markets, sometimes with human lives on the line. We’re excited to partner with Code Metal as they meet this need.

An Exceptional Team

Code Metal’s leaders are second-time founders with deep experience across aerospace, defense, and advanced AI systems. CEO Peter Morales previously developed AI reasoning systems for the F-35 and was a founding member of the AI Technology Group at MIT Lincoln Laboratory. CTO Alex Showalter-Bucher, also a Lincoln Lab alumnus, brings more than a decade of experience across defense agencies and technical leadership roles. Peter and Alex have felt the pain of translating and verifying mission-critical systems and know what it takes to earn trust in these markets.

The company has assembled an uncommonly strong bench of researchers and engineers from organizations including Intel, NASA, MathWorks, Lightmatter, and OpenAI. The team’s expertise bridges compilers, formal methods, AI systems, and high-stakes deployment environments. They have also proven they can sell and scale while building a winning culture.

Our Investment

B Capital invested in Code Metal’s Series B. We are proud to partner with the entire Code Metal team on this journey. Learn more at codemetal.ai or in WIRED.

LEGAL DISCLAIMER

All information is as of 2.18.2026 and subject to change. The investments discussed herein are portfolio companies of B Capital; however, such investments do not represent all B Capital investments. Certain statements reflected herein reflect the subjective opinions and views of B Capital personnel. Such statements cannot be independently verified and are subject to change. There can be no assurance any such trends or correlations will continue in the future. Reference to third-party firms or businesses does not imply affiliation with or endorsement by such firms or businesses. It should not be assumed that any investments or companies identified and discussed herein were or will be profitable. Past performance is not indicative of future results. The information herein does not constitute or form part of an offer to issue or sell, or a solicitation of an offer to subscribe or buy, any securities or other financial instruments, nor does it constitute a financial promotion, investment advice or an inducement or incitement to participate in any product, offering or investment. Much of the relevant information is derived directly from various sources which B Capital believes to be reliable, but without independent verification. This information is provided for reference only and the companies described herein may not be representative of all relevant companies or B Capital investments. You should not rely upon this information to form the definitive basis for any decision, contract, commitment or action.

The post Why We Invested in JetZero appeared first on B Capital.

B Capital is proud to partner with JetZero, a next-generation aircraft manufacturer redefining aviation through breakthrough design and best-in-class aerodynamics. The company’s blended-wing-body aircraft, designed for commercial, cargo and government use cases, can deliver up to a 50% improvement in fuel efficiency compared to today’s aircraft.[1]

JetZero unlocks its step-change efficiency gains using technologies that airlines, regulators and airports can adopt in the near future, without waiting decades for new systems or infrastructure. This approach directly addresses airlines’ largest cost driver, fuel, while fitting seamlessly within today’s airline and airport infrastructure. The first commercial delivery is forecast for the early 2030s, with early flight demonstrations as soon as 2027.

For partner airlines such as United Airlines, Delta Air Lines and Alaska Airlines, this will translate into lower operating costs and an improved passenger experience. The same platform also supports highly efficient cargo configurations and meaningful advantages for transport and tanker missions, including aerial refueling, thereby likely expanding JetZero’s addressable market beyond commercial aviation.

Aviation Faces Structural Constraints

For decades, progress in aviation efficiency has been driven by incremental improvements. Advancements in engines, materials and winglets delivered meaningful efficiency gains in prior generations, but additional optimization is yielding diminishing marginal returns. As those returns shrink, meaningful progress requires changes at the aircraft architecture level rather than continued refinement of the tube-and-wing design, which has remained largely unchanged for nearly a century.

That legacy design is increasingly misaligned with industry realities: jet fuel is the largest operating cost for airlines, while decarbonization pressure continues to intensify. These challenges are compounded by a Boeing-Airbus duopoly in which unprecedented order backlogs, risk aversion and legacy incentives limit the ability of incumbents to launch disruptive new aircraft programs.

Meanwhile, global demand for air travel continues to grow while the levers available to improve economics and reduce emissions are becoming constrained. Many proposed solutions focus on future propulsion systems that require new infrastructure, new certification pathways or fundamental changes to airline operations. While potentially promising in the long term, these approaches do little to address the near-term efficiency gap facing the global fleet.

Compounding this challenge is the gap between single-aisle and wide-body aircraft. Many medium-haul routes fall between what narrow-bodies can serve efficiently and what wide-bodies are designed for. Narrow-bodies often lack the required payload, range or seat economics, while wide-bodies are oversized and inefficient for these flights. As a result, airlines are forced to deploy aircraft that are poorly matched to demand, driving excess fuel burn and higher operating costs. A 2018 ICF study estimated that this “missing middle” is one of the largest underserved segments in global aviation, spanning roughly 20–30% of global airline routes and representing hundreds of billions of dollars in potential market opportunity.[2] That structural gap persists across global fleets today.

JetZero’s Approach: Aerodynamics Over New Propulsion

The aviation industry is pursuing multiple paths to improve sustainability and economics. Sustainable aviation fuel is a drop-in solution but remains supply constrained and more expensive than conventional jet fuel. Hydrogen and electric propulsion promise deep emissions reductions but require new aircraft architectures, new infrastructure and new regulatory frameworks that will take decades to mature and often face significant range or payload limitations.

JetZero takes a different approach, focusing on the single largest efficiency lever available today: aerodynamics. By fundamentally improving how lift is generated and drag is reduced, JetZero delivers immediate, material efficiency gains while remaining compatible with existing engines, infrastructure and regulatory pathways. These gains compound across the system, reducing fuel burn, structural weight and thrust requirements.

JetZero’s Z4 aircraft is purpose-built for today’s market. With seating capacity of 200–250 passengers and ranges spanning domestic, transatlantic and select long-haul routes, the Z4 is designed as a direct replacement for aging 757 and 767 fleets, while outperforming both modern narrow-body and wide-body alternatives on cost per seat.[3] That same efficiency profile translates directly to cargo operations, improving payload and fuel economics on medium- and long-haul freight routes.

Crucially, JetZero achieves these gains using existing engine technology and certified systems. Rather than waiting on hydrogen, batteries or regulatory reinvention, the aircraft is designed to operate within current FAA certification and airline operating frameworks. Advances in digital design tools, modern composites and certified off-the-shelf systems now make large-scale blended-wing-body aircraft manufacturable and certifiable for the first time.

We believe the underlying technology is materially de-risked. The design builds on more than 30 years of government and industry research and over $1B in cumulative investment across NASA, the U.S. Department of War and commercial aerospace programs.[4,5] The program is now approaching a major de-risking milestone, with a full-scale non-commercial demonstrator and first flight planned in the near term, reducing remaining technical and execution risk.

Built for Real-World Operations and Execution

JetZero is designing the aircraft to fit within existing airport infrastructure and airline operations, without requiring new gates, jet bridges or specialized ground equipment. The blended-wing-body layout enables wider seats, higher ceilings and configurable cabin zones while potentially supporting faster turnaround times and more efficient boarding at congested hubs.

For passengers, this translates to a meaningfully differentiated experience: more spacious seating across all classes, wider aisles, reduced boarding congestion, improved overhead storage and cabin layouts that support quieter, more comfortable travel. Unlike incremental cabin retrofits, these improvements are structural, not cosmetic.

The development program combines aerospace rigor with a modern execution mindset. JetZero is vertically integrated where it matters most, owning the aircraft structure and flight control systems and sourcing certified components from established suppliers to reduce certification risk and non-recurring engineering. This execution model prioritizes capital deployment toward the most complex technical areas, supporting a more capital-efficient path from development through certification and into production.

Strong Validation from Partners

JetZero has secured meaningful commercial validation from leading airlines that are committing both capital and aircraft demand. United Airlines has invested in the company and entered into a conditional purchase agreement for 100 aircraft, with options for an additional 100.[6] Alaska Airlines has also invested and secured early production positions, while Delta Air Lines is deeply engaged in aircraft design, operations and other areas.[7,8] Together, these partnerships reflect airline confidence not only in the aircraft’s economics but in JetZero’s ability to execute a clean-sheet program at scale.

The company also works closely with the U.S. Air Force on a full-scale demonstrator program supported by over $200 million in non-dilutive funding.[9] For the Air Force, the platform is expected to deliver extended range, increased payload flexibility and lower fuel and logistics costs across transport and tanker missions. These capabilities address critical limitations of the aging tanker fleet.

In parallel, JetZero has secured significant non-dilutive state-level support from North Carolina tied to manufacturing and workforce development, further improving the capital efficiency of the program.[10]

The Right Team to Build a Market-Defining Company

Building a clean-sheet commercial aircraft requires rare depth across engineering, certification and industrial execution. JetZero’s leadership team blends experience scaling complex hardware programs with deep commercial aerospace expertise, including senior roles at Tesla, BETA Technologies, Boeing, SpaceX, Gulfstream and Northrop Grumman.

JetZero is led by CEO and co-founder Tom O’Leary, who previously held senior leadership roles at Tesla and served as COO at aerospace startup BETA Technologies, bringing an execution-driven operating mindset shaped by scaling complex hardware programs alongside a strong focus on customer outcomes and disciplined program delivery.

A Structural Shift in Commercial Aviation

We believe the aviation industry is at an inflection point. Passenger demand is growing, fuel and emissions constraints are tightening and incumbent OEMs face record backlogs with limited incentive to launch disruptive new programs. Airlines, regulators and governments are aligned around the need for real efficiency gains that can be delivered within current operational and regulatory frameworks.

By delivering a fundamentally more efficient aircraft using proven technologies, optimized for airline economics and flexible across passenger, cargo and government applications, JetZero is positioned to reshape a core segment of aviation. It is redefining what is possible within the constraints that actually matter.

At B Capital, we focus on companies that enable step-change improvements in critical infrastructure systems. JetZero represents that opportunity in aviation. We are proud to invest in JetZero as it advances toward demonstration flight and commercial service, and we look forward to supporting the company as it works to deliver a more efficient, resilient and competitive aviation industry.

The investment was led by Jeff Johnson (General Partner, Head of Energy Tech at B Capital), alongside Karly Wentz (Partner, Energy Tech), with investment team members Nate Johnson and Eric Brook.

LEGAL DISCLAIMER

All information is as of 1.5.2026 and subject to change. The investment discussed herein is a portfolio company of B Capital; however, such investment does not represent all B Capital investments. Certain statements reflected herein reflect the subjective opinions and views of B Capital personnel. Such statements cannot be independently verified and are subject to change. Reference to third-party firms or businesses does not imply affiliation with or endorsement by such firms or businesses. It should not be assumed that any investments or companies identified and discussed herein were or will be profitable. Past performance is not indicative of future results. The information herein does not constitute or form part of an offer to issue or sell, or a solicitation of an offer to subscribe or buy, any securities or other financial instruments, nor does it constitute a financial promotion, investment advice or an inducement or incitement to participate in any product, offering or investment. Much of the relevant information is derived directly from various sources which B Capital believes to be reliable, but without independent verification. This information is provided for reference only and the companies described herein may not be representative of all relevant companies or B Capital investments. You should not rely upon this information to form the definitive basis for any decision, contract, commitment or action.

SOURCE

- Alaska Airlines, Alaska Airlines announces investment in JetZero to propel innovative aircraft technology and design, August 13, 2024

- Boeing, Commercial Market Outlook, 2025-2044, 2025

- Delta Air Lines, Delta, JetZero partner to design the future of air travel by advancing first-of-its-kind, 50% more fuel-efficient aircraft for domestic and international routes, March 5, 2025

- EESI, U.S. and International Commitments to Tackle Commercial Aviation Emissions, January 31, 2025

- European Federation for Transport and Environment, The aviation industry and the stall in aircraft innovation, June 18, 2025

- ICF, Making the Case for a Middle of the Market Aircraft, 2018

- International Air Transport Association, Net zero 2050: new aircraft technology, December 2025

- International Air Transport Association, Reviving the Commercial Aircraft Supply Chain, October 2025

- International Air Transport Association, Unveiling the biggest airline costs, June 4, 2024

- International Council on Clean Transportation, Fuel burn of new commercial jet aircraft: 1960 to 2024, January 2025

- JetZero, United Invests in Next Generation Blended Wing Aircraft Start-Up JetZero, April 24, 2025

- McKinsey & Company, Fuel efficiency: Why airlines need to switch to more ambitious measures, March 2022

- Politico, Why electric aircraft may never be the next big thing, January 24, 2024

- S&P Global, Airlines see relief with $86 jet fuel, SAF costs hinder sustainability: IATA chief, June 2, 2025

- Scientific American, Hydrogen-Powered Airplanes Face 5 Big Challenges, May 4, 2024

- Seabury Capital Group, JetZero CEO Lands A Silicon Valley Mindset at U.S. Chamber of Commerce Global Aviation Summit, September 17, 2025

- University of Illinois, Grainger College of Engineering, Blended wing brings air travel greater range, fuel efficiency, and comfort, June 26, 2024

The post Where AI Value Will Be Built Next appeared first on B Capital.

By: Yan-David “Yanda” Erlich, General Partner, B Capital

The Model Isn’t the Moat Anymore

For two years, “AI progress” meant “the model got better.” That era is ending.

The evidence is stark: according to MIT’s State of AI in Business 2025 report, only 5% of enterprise GenAI pilots achieve measurable P&L impact.[1] S&P Global found that 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024.[2] The average organization scrapped 46% of AI proof-of-concepts before reaching production.[2]

These aren’t bad models. They’re bad environments.

Model capability is still improving, but for most enterprises it is no longer the limiting constraint. What matters now is everything around the model: integration, governance, distribution, measurement and the ability to learn in production without breaking trust.

One constraint is consistently underweighted in almost every AI strategy deck I see: organizational fit.

If AI is going to deliver durable value, it must function less like a tool and more like a coworker: one that collaborates with humans, operates inside real team workflows and carries context over time.

The winners won’t be the teams with the “smartest” model. They’ll be the teams with the best environment to deploy, trust and continuously improve AI.

The Coworker vs. Tool Distinction

This isn’t semantics. The difference between AI-as-tool and AI-as-coworker determines whether value compounds or collapses.

A tool waits to be invoked. It processes inputs and returns outputs. It has no memory of your organization, no understanding of how your team actually works, no awareness of who should approve what. Every session starts from zero.

A coworker maintains context across interactions. It knows your domain, your team’s terminology and your approval workflows. It can be delegated to, supervised and held accountable. It gets better at its job over time because it learns from outcomes, not just prompts.

The MIT data supports this distinction. Their research found that vendor-built solutions succeed about 67% of the time, while internal builds fail at roughly the same rate.[1] Why? The vendors who win are the ones building coworker-like systems with deep workflow integration, not generic tools bolted onto existing processes.

Consider what a new human hire experiences: onboarding, permissions, a manager, feedback loops, access to institutional knowledge, clear escalation paths. We don’t hand them a keyboard and expect productivity on day one. Yet that’s exactly how most enterprises deploy AI.

The Shift: From Capability Race to Execution Advantage

In practice, this shift shows up when AI performs well in pilots but fails to survive first contact with real workflows. Buyers are no longer asking “Does it ace a benchmark?” They’re asking a different class of questions altogether:

- Integration: Can it plug into my workflows without rewriting my org chart?

- Governance: Can it touch sensitive data without creating security, privacy or compliance blowback?

- Accountability: Who is responsible when it’s wrong?

- Measurement: Can we evaluate it in production and improve it safely?

- Scale: Can we roll it out to thousands of users without adoption collapsing?

- Collaboration: Can it work with my team like a competent new hire, or does it just generate text?

Those are not model questions. Those are execution questions.

And execution compounds. Deployment creates feedback. Feedback enables improvement. Improvement drives adoption. Adoption earns deeper integration. That loop becomes the moat.

McKinsey’s 2025 AI survey confirms this pattern: organizations reporting “significant” financial returns are twice as likely to have redesigned end-to-end workflows before selecting models.[3] The execution advantage emerges less from initial capability and more from the ability to learn safely in production over time.

5 Ingredients of AI Execution Advantage

By “environment,” I mean the structural conditions that let AI compound in production. These conditions determine whether improvement accumulates or stalls after deployment.

1. Integration Surface

How quickly AI can ship into real workflows.

Value compounds fastest when AI lives inside the system of record, removes steps, reduces cycle time and tightens feedback loops. The MIT research shows that ROI is lowest in sales and marketing pilots, where most GenAI budgets are concentrated, and highest in back-office automation, where integration is deepest.[1]

The integration question isn’t “can we connect via API?” It’s “can we embed deeply enough to observe outcomes and improve?”

2. Data Rights and Governance

What the system can legally and operationally learn from in production.

If you can’t observe outcomes, you can’t improve. If you can’t improve, you don’t compound. Companies that solve this, learning from production without violating governance, will outperform those that can’t.

3. Distribution and Procurement

How deployments become default, not optional.

AI doesn’t win by demos. It wins by rollout. PwC’s 2025 survey found that 79% of organizations have adopted AI agents at some level, but only 35% report broad adoption, and 68% say half or fewer employees interact with agents in their daily work.[4] The gap between “we have AI” and “AI is how we work” is primarily a distribution problem.

4. Production Learning Loop

Evaluation, monitoring and improvement without breaking trust.

Real-world evaluation tied to business KPIs. Monitoring for drift and failure modes. Human routing for uncertainty. Continuous improvement with governance guardrails. In July 2024, Gartner predicted that at least 30% of GenAI projects would be abandoned after proof of concept by the end of 2025.[5] The causes it cited (poor data quality, inadequate risk controls, escalating costs and unclear business value) point less to failed technology than to organizations that couldn’t build the infrastructure to improve safely in production.

5. Organizational Fit

AI must function as a coworker, not a tool.

This is the missing pillar that most AI strategies ignore entirely. Enterprises are networks of roles, permissions, incentives and handoffs. “Agentic” only works when AI behaves like a well-scoped teammate, with collaboration mechanics inside existing workflows; identity and least-privilege permissions; durable memory and context; and on-the-job learning that operates without violating governance.

When I evaluate AI companies, I ask: “Would you hire this system as a junior employee?” If the answer requires caveats about supervision, permissions and trust boundaries, you’ve identified the product work that matters.

Where AI Value Compounds Fastest

Value concentrates where execution environments support compounding:

Instrumented digital workflows where shipping is fast and telemetry is rich. Software development, customer support, back-office operations. Anywhere outcomes can be observed quickly and iteration is cheap.

High-volume operational workflows with clear accountability and measurable outcomes. Claims processing, compliance review, financial operations. Environments where “better” is quantifiable and feedback is continuous.

Physical operations with telemetry and hard KPIs. Manufacturing, logistics, healthcare delivery. Domains where the system of record captures reality and improvement is directly measurable.

Generic assistants without durable data rights, distribution leverage and a compounding learning loop get competed down to commodity margins.

What This Means for Founders

Markets are not just industries. They are execution environments. This favors teams that optimize for compounding environments over early polish or surface-level performance.

Wedge into the system of record. Don’t build alongside the workflow. Become the workflow. The difference between “we integrate with Salesforce” and “we are where deals get done” is the difference between tool and coworker.

Secure data rights early. The legal and operational ability to learn from production is a moat. Companies that negotiate this upfront, while offering clear value exchange, will outperform those who treat it as a Phase 2 problem.

Design for procurement from day one. Audit logs, SSO, role-based access and compliance certifications. These aren’t features; they’re prerequisites for the environments where AI compounds.

Treat evaluation as product. If you can’t show measurable improvement on business KPIs, you can’t justify continued investment.

Build the AI coworker layer. Collaboration, identity, permissions, memory and handoffs. This is the unsexy work that separates pilots from production systems.

Environments that support compounding often look weaker early yet outperform over time. This allows founders to look wrong early and still be right in the long run.

What This Means for Enterprises

Buying AI like ordinary software and expecting it to behave like ordinary software does not work. AI systems improve only when they are treated as production systems with owners, feedback and failure modes.

Establish an AI operating model. Clear owners, defined accountability and incident response. Who is responsible when the AI makes a mistake? If you can’t answer this question, you’re not ready for production.

Tie AI performance to business KPIs. Not accuracy metrics, not user satisfaction scores. Actual business outcomes: revenue, cost, cycle time and error rates.

Reduce fragmentation where learning loops need consistency. Every team using a different AI tool means every team learning in isolation. Consolidation isn’t about cost savings; it’s about compounding.

Treat AI coworker fit as a first-class requirement. When evaluating vendors, ask: “How does this integrate with how my team actually works?” Not how it works in a demo. How it works in your environment, with your permissions, your approval flows and your existing tools.

What This Means for Investors

Model quality is no longer the primary diligence question. Instead, evaluate:

Ownership of the integration surface. Does the company control the system of record, or are they dependent on someone else’s platform?

Durable data rights and credible governance. Can they legally and operationally learn from production? Is their data strategy a moat or a liability?

A scalable distribution path. Can they reach thousands of users without a proportional increase in sales and support costs?

Evidence of a production learning loop. Are they improving from deployment, or shipping static models?

A credible path to AI coworker fit. Can they function inside real enterprise environments with real permissions and real accountability?

We believe the best AI investments right now are companies building execution infrastructure, not model capability alone. The model layer is commoditizing; the execution layer is where durable value will be built.

How This Shows Up in Our Portfolio

This framework has shaped our investing strategy for some time. A few examples:

Perplexity: Enterprise knowledge work is an execution environment problem. Perplexity’s enterprise offering is explicitly about deploying AI into organizational context: collaboration in Spaces, answers from organizational apps and files, enterprise permissioning, auditability and “no training on your data.” This is governance, distribution and coworker-fit working together in production.

Unblocked: A literal AI coworker for engineering teams. Unblocked plugs into the tools engineers already use and connects code, documentation and conversations, supplying shared team context that makes other AI coding tools more effective. Enterprise fit is table stakes: SSO, RBAC, audit logs and security posture designed for production.

Goodfire: If you care about production reliability, you eventually care about controlling behavior, not just prompting it. Goodfire is building interpretability tooling that surfaces failure modes, enables behavior design and supports durable fixes. This maps directly to the production learning loop and governance required for AI systems to improve safely.

Axiom: In domains where correctness is existential, value shifts toward systems that can reason rigorously and be evaluated against hard truth. Axiom’s focus on an AI mathematician is a wedge into verifiability-first reasoning. It’s upstream capability in service of downstream production requirements.

Where Advantage Compounds

For the next decade, the biggest AI outcomes will not come from “the model got better.”

They will likely come from environments where AI can be deployed, trusted, measured and improved continuously inside real workflows. The environments we choose to build in will determine which AI systems endure.

The data is already pointing the way: 95% of pilots deliver no measurable P&L impact, not because AI doesn’t work, but because organizations haven’t built the environment it needs to work in.[1] The 5% that succeed share common characteristics: deep workflow integration, clear governance, production learning loops and organizational fit.[1]

Capability is table stakes. Execution advantage is the moat.

The question for founders, enterprises and investors isn’t “which model is best?” It’s “which environments support compounding?”

Build there, and if you’re already building there, I’d love to talk to you.

—

Yan-David “Yanda” Erlich is a General Partner at B Capital, where he focuses on AI infrastructure and AI coworker investments. Previously, he was COO & CRO at Weights & Biases and a GP at Coatue.

LEGAL DISCLAIMER

All information is as of 1.21.2026 and subject to change. This content is a high-level overview and for informational purposes only. Certain statements reflected herein reflect the subjective opinions and views of B Capital personnel. Such statements cannot be independently verified and are subject to change. The investments discussed herein are portfolio companies of B Capital; however, such investments do not represent all B Capital investments. It should not be assumed that any investments or companies identified and discussed herein were or will be profitable. Past performance is not indicative of future results. The information herein does not constitute or form part of an offer to issue or sell, or a solicitation of an offer to subscribe or buy, any securities or other financial instruments, nor does it constitute a financial promotion, investment advice or an inducement or incitement to participate in any product, offering or investment. Much of the relevant information is derived directly from various sources which B Capital believes to be reliable, but without independent verification. This information is provided for reference only and the companies described herein may not be representative of all relevant companies or B Capital investments. You should not rely upon this information to form the definitive basis for any decision, contract, commitment or action.

SOURCE

1. MIT NANDA, “The GenAI Divide: State of AI in Business 2025,” July 2025. https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

2. S&P Global Market Intelligence, “Generative AI Shows Rapid Growth but Yields Mixed Results,” October 2025. https://www.spglobal.com/market-intelligence/en/news-insights/research/2025/10/generative-ai-shows-rapid-growth-but-yields-mixed-results

3. McKinsey & Company, “The State of AI in 2025: Agents, Innovation, and Transformation,” 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

4. PwC AI Agent Survey, “AI Agents and Enterprise Adoption,” May 2025. https://www.pwc.com/us/en/tech-effect/ai-analytics/ai-agent-survey.html

5. Gartner, “Gartner Predicts 30% of Generative AI Projects Will Be Abandoned After Proof of Concept by End of 2025,” July 29, 2024. https://www.gartner.com/en/newsroom/press-releases/2024-07-29-gartner-predicts-30-percent-of-generative-ai-projects-will-be-abandoned-after-proof-of-concept-by-end-of-2025


The post Why We Invested in Fervo Energy appeared first on B Capital.
