MCP enables a new way of building applications: the MCP server is the primary interface, and everything else (the web dashboard, the mobile app, the CLI) is an afterthought. Not "AI-enhanced" or "AI-assisted" but genuinely MCP-first, where natural language through an LLM client is how users interact with your application.

To be clear, I'm not talking about the local MCP servers that developers have been wiring up to their code editors. I'm talking about remote MCP servers, hosted over HTTP, that end users connect to from any MCP client.

I tried building an application with this approach and it has some interesting implications for how we think about application development.

The UI Is Lossy Compression

For many applications, the UI is a lossy compression of what the user actually wants to do. When someone opens a CRM and clicks through 4 screens to log that they had coffee with a friend, what they actually wanted to express was:

"Had coffee with Mike yesterday at Blue Bottle, he mentioned he's switching jobs next month."

The UI is just an intermediary, and often a bad one, between human intent and structured data.

On top of that, if you're building with LLM-assisted coding, the UI layer is notably harder to iterate on than business logic. It's a long tail of minor changes: tweaking layouts, fixing edge cases, polishing interactions. Visual nuances and interaction details are hard to communicate in a prompt. Even multimodal models can typically only comprehend a single frame, so things like animations, transitions, and multi-step interactions are difficult to convey.

MCP Removes the Intermediary

MCP (Model Context Protocol) gives us a standardized way to expose application functionality as tools that LLMs can call. When you build an MCP server, you're essentially building an API that's designed to be operated by a language model on behalf of a user. The practical upshot is that you can skip the entire UI layer for your core interaction loop. No React components, no form validation, no CSS battles, no "should this be a modal or a drawer?" debates. The LLM handles the translation from human intent to structured API calls, and it's remarkably good at it.

What you're left building is:

- A data model

- Business logic

- An API surface (your MCP tools)

- Auth

That's the whole application.

Case Study: Mob CRM

To put this idea to the test, I built Mob CRM, a personal CRM that you interact with almost entirely through natural language via MCP.

I've been a longtime user of Monica CRM and always liked the concept of a personal CRM. The problem was data entry. Logging a social interaction meant clicking through multiple forms: find the contact, create an activity, pick a type, fill in notes, associate other contacts. It's death by a thousand clicks, and the friction meant I'd fall behind and eventually stop using it.

Mob takes a different approach. There is no form. You just talk:

"I met with John today, and he introduced me to his friend from work Jane.

We all talked about our shared obsession with slurpees."

Behind the scenes, the LLM breaks this down into the appropriate MCP tool calls: creating contacts, establishing relationships, logging the activity with notes. What would have been 30 clicks in a traditional UI becomes one natural sentence.
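As a rough illustration of that breakdown, here is a minimal sketch in Python (Mob itself is a Node.js app, and its real tool names and schema aren't shown in this post). The tool names `create_contact`, `add_relationship` and `log_activity`, the table layout, and the hard-coded list of calls are all hypothetical stand-ins for what an LLM client might emit for the sentence above.

```python
import sqlite3

# Hypothetical schema; Mob CRM's actual tables are not shown in this post.
SCHEMA = """
CREATE TABLE contacts (id INTEGER PRIMARY KEY, name TEXT UNIQUE NOT NULL);
CREATE TABLE relationships (a INTEGER NOT NULL, b INTEGER NOT NULL, kind TEXT NOT NULL);
CREATE TABLE activities (id INTEGER PRIMARY KEY, summary TEXT NOT NULL, notes TEXT);
CREATE TABLE activity_contacts (activity_id INTEGER NOT NULL, contact_id INTEGER NOT NULL);
"""


def contact_id(db, name):
    """Look up a contact's id by name, failing loudly if it does not exist."""
    row = db.execute("SELECT id FROM contacts WHERE name = ?", (name,)).fetchone()
    if row is None:
        raise KeyError(f"unknown contact: {name}")
    return row[0]


def create_contact(db, name):
    # INSERT OR IGNORE keeps the tool idempotent if the LLM repeats a call.
    db.execute("INSERT OR IGNORE INTO contacts (name) VALUES (?)", (name,))
    return {"id": contact_id(db, name), "name": name}


def add_relationship(db, a, b, kind):
    db.execute(
        "INSERT INTO relationships (a, b, kind) VALUES (?, ?, ?)",
        (contact_id(db, a), contact_id(db, b), kind),
    )
    return {"ok": True}


def log_activity(db, summary, contacts, notes=""):
    cur = db.execute(
        "INSERT INTO activities (summary, notes) VALUES (?, ?)", (summary, notes)
    )
    activity_id = cur.lastrowid
    for name in contacts:
        db.execute(
            "INSERT INTO activity_contacts (activity_id, contact_id) VALUES (?, ?)",
            (activity_id, contact_id(db, name)),
        )
    return {"activity_id": activity_id}


# Tool name -> handler, the way a server might dispatch incoming tool calls.
TOOLS = {
    "create_contact": create_contact,
    "add_relationship": add_relationship,
    "log_activity": log_activity,
}


def run_tool_calls(db, calls):
    """Execute tool calls in order and collect their structured results."""
    return [TOOLS[call["name"]](db, **call["arguments"]) for call in calls]


# What an LLM client might plausibly emit for the sentence above (hand-written here).
calls = [
    {"name": "create_contact", "arguments": {"name": "John"}},
    {"name": "create_contact", "arguments": {"name": "Jane"}},
    {"name": "add_relationship",
     "arguments": {"a": "John", "b": "Jane", "kind": "coworker"}},
    {"name": "log_activity",
     "arguments": {"summary": "Met with John and Jane",
                   "contacts": ["John", "Jane"],
                   "notes": "Shared obsession with slurpees"}},
]

db = sqlite3.connect(":memory:")
db.executescript(SCHEMA)
results = run_tool_calls(db, calls)
```

Each handler takes plain structured arguments and returns a plain dict, so the server never needs to understand the sentence itself; that part is the LLM's job.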

The entire server is a Node.js application with SQLite storage. It supports contacts, relationships, activities, life events, reminders, notes, gifts, debts, and tags. It took a fraction of the time to build compared to what a traditional full-stack CRM would have required.

The key realization: the data model and business logic were the same amount of work regardless of whether I built a UI or an MCP server. But by choosing MCP, I got to skip everything else. The LLM is the interface.

Why This Is Compelling for Developers

If you've built CLI tools with AI-assisted coding, you know they're among the easiest things to ship: simple input/output, text-only, easily testable. MCP server development shares these same properties. Your tools accept structured input, return structured output, and the whole thing is testable like any other API.

This means you can go from idea to working product fast, because you're building the domain logic without the interface.

When MCP-First Makes Sense

Not every application should be MCP-first. It works best when:

- The core interaction is data entry or retrieval: CRMs, note-taking, bookkeeping, inventory management, journaling

- Natural language maps well to the domain: if you can describe what you want to do in a sentence, an LLM can probably figure out the right tool calls

- The UI would be mostly forms and tables: if your app is primarily about structured data rather than rich visual interaction, MCP can replace most of it

- Cross-service orchestration adds value: when combining data from multiple MCP servers makes the experience better than any single app could be

It makes less sense for:

- Highly visual applications (design tools, video editors, maps)

- Real-time collaborative experiences

- Applications where spatial interaction is the point (games, drawing)

The Composability Angle

The other thing that makes MCP-first development interesting is composability. When your application is an MCP server, it automatically plays well with every other MCP server. A user can connect your CRM alongside their calendar, email, and task manager, and suddenly cross-application workflows just work:

- "Check if I have any meetings with Sarah next week, and if not, remind me to reach out to her"

- "Log that dinner with the team last night and add it to my expenses"

- "Who haven't I talked to in a while? Draft a quick message to catch up with them"

You get this for free. No integrations to build, no partner APIs to negotiate, no OAuth dances between services. The LLM orchestrates across MCP servers naturally.

Where MCP Falls Short (For Now)

MCP is still young and there are some real gaps if you're building a user-facing application.

Push notifications don't really exist. MCP has a concept of "notifications" but they only work while the connection is alive, which isn't practical on a mobile device. There's no mechanism for GCM/APNs-style push delivery. Mob CRM works around this by having the server provide a URL where users can register for push notifications via the web browser, but it's clunky.

You can't send files through tool parameters. If you need users to upload a file, MCP doesn't have a way to handle that today. Mob CRM hits this with Monica imports and has to fall back to a web page. There's a draft proposal to address this, but it hasn't landed yet.

These are solvable problems and the protocol is actively evolving, but they're worth knowing about if you're considering MCP-first development today.

Rich UI When You Need It: MCP Apps

On the flip side, the protocol does have an answer for when plain text isn't enough. MCP Apps (sometimes called MCP-UI) allow a server to return HTML/JS/CSS that gets rendered as an iframe within the conversation. This means your MCP server can provide rich interactive controls, charts, or custom input forms right inline with the chat, without the user ever leaving their MCP client.

It's a nice middle ground: you get the simplicity of MCP-first development for the 90% case, and can drop into a full UI when the interaction genuinely needs it.

The Access Problem (And Where It's Heading)

The main friction point right now is that connecting to remote MCP servers isn't easy for regular users. The big players are still gating it:

- Claude: remote MCP only for paid subscribers

- ChatGPT: MCP via developer mode or paid Apps

- Gemini: no MCP support for chat users as of writing

This will change. The protocol is open, the value proposition is clear, and the technical implementation is just HTTP. It's a matter of when, not if.

In the meantime, if you want MCP access on mobile, Joey is an open-source MCP client I built that connects to any remote MCP server and lets you pick from hundreds of LLMs via OpenRouter. It runs on iOS, Android and macOS. It's written in Flutter, so it should be able to support Windows and Linux too; I just haven't tested those platforms yet.

Building for Tomorrow

Mob CRM took me a fraction of the time that a traditional full-stack CRM would have, and the result is arguably a better user experience for the core use case of logging interactions. I didn't have to compromise on features, I just didn't have to build the forms. If you're starting a new project and the core interaction is fundamentally about data, consider building the MCP server first. You might find that's all you need.


We've seen the rise of MCP servers over the last year, first as purely local tool calling for software engineers, but moving towards business/enterprise use cases. What I believe we'll see in the near future is consumer-facing MCP servers as the next frontier of how everyone interacts with many of their applications. We may even begin to see a wave of applications where MCP is the primary way users interact with an application, with mobile, desktop and web all being second-class citizens.

The use cases for consumer MCP servers are reasonably apparent: connecting multiple services you would normally interact with separately into one conversation orchestrated by an LLM. To give a high-level idea, here are some examples of theoretical prompts that would work across multiple MCP servers:

- Book a dinner reservation near my 5pm meeting and make it a table for 4 (calendar + dinner reservation MCPs)

- Use this recipe <url> to add ingredients to my grocery cart (web fetching + supermarket ordering MCP)

- Book me a flight to Chicago for the product launch next week, and make sure it's under $500 (calendar + flight booking MCPs)

- If it's going to rain tomorrow, text the party group chat to let them know it'll be moved to <my house>. (weather forecast MCP + messaging MCP).

What's the Hold Up?

One of the biggest gaps currently is that consumers can't easily access remote MCP servers!

- Anthropic/Claude - pioneered MCP, but connecting to a remote MCP server is only available to paid subscription users

- OpenAI/ChatGPT - has MCP integrations available in developer mode, and offers MCP connectors via ChatGPT Apps to their paid subscribers

- Google/Gemini - as of writing, has no way for its chat users to connect to MCP servers.

With all this gating behind developer options or enterprise subscriptions, it really isn't accessible to the majority of users. But supporting a remote MCP server isn't really that complicated: it's just an HTTP connection to a web server!
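To make the "just HTTP" point concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool `name` and its `arguments`. The sketch below only builds such a request and does not send it. The endpoint URL, tool name and arguments are made up for illustration, and a real client would first go through the protocol's `initialize` handshake and any auth, both of which are omitted here.

```python
import json
import urllib.request

MCP_URL = "https://mcp.example.com/mcp"  # hypothetical endpoint


def build_tool_call(request_id, tool_name, arguments):
    """Build the JSON-RPC 2.0 message for invoking one MCP tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def to_http_request(url, message):
    """Wrap a JSON-RPC message in a plain HTTP POST (not sent here)."""
    return urllib.request.Request(
        url,
        data=json.dumps(message).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the server speaks the HTTP transport that may reply
            # with either a JSON body or a server-sent event stream.
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )


# "create_contact" is a hypothetical tool name.
message = build_tool_call(1, "create_contact", {"name": "Jane"})
request = to_http_request(MCP_URL, message)
# urllib.request.urlopen(request) would perform the actual POST.
```

Everything a chat client needs beyond this is bookkeeping: listing the server's tools, passing their schemas to the LLM, and relaying the calls the LLM decides to make.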

Introducing: Joey MCP Client

With the current lack of available options for consumers (without forking over for a subscription), I decided to create my own chat interface that provides MCP support out of the box!

The app is called Joey MCP Client, and the way it works is very simple:

- Connecting to LLMs is handled by authenticating with your OpenRouter account

- You manually specify the remote MCP servers in settings

- You can pick exactly which OpenRouter LLM + MCP Servers to use for every conversation!

OpenRouter was chosen as the model provider for the initial implementation because:

- It provides access to virtually every major LLM out there

- It has rich privacy settings about how your prompts are used

- It is pay-per-use rather than subscription modelled

- It supports OAuth for securely providing access to Joey with spending limits and token expiry

- It abstracts the implementation differences between different proprietary models

The app even supports some neat chat features like:

- Picture support, both from MCP responses and user uploads

- Multiple MCP servers at the same time

- OAuth to MCP servers - so you can connect to your accounts securely

And for my developer and privacy-conscious readers:

- The app itself is source available (FSL + time-deferred MIT), so you can run it for free by building from source.

- The app itself includes no telemetry (obviously what OpenRouter / the connected MCP servers do with any data is outside the app's control)

- No ads!

There are also loads of features that could be added to future versions of the app too:

- Server discovery / MCP server index

- Rich server-provided UI with MCP Apps / MCP-UI

- Support for locally hosted LLM servers (I hear you r/LocalLLaMA) and third-party OpenAI compatible APIs

Or build from source on GitHub

Example MCP Server with Joey: Mob CRM

To test out Joey, I built an "AI first" application to pair it with. I've long been a fan of Monica Personal CRM for helping me keep track of my social connections, but I always dreaded the data-entry aspect of it. This happens to be an absolutely perfect fit for an LLM-integrated application so I built Mob CRM, which is essentially a personal CRM you almost exclusively access via MCP.

It is far more natural to recount an interaction as:

"I met with John today, and he introduced me to his friend from work Jane. We all talked about our shared obsession with slurpees"

This is very easy to write (or speak with dictation). It takes the LLM a few tool calls to Mob CRM to create the contacts, the connections and the activity notes, but that's far easier than doing 30 different clicks in Monica's UI.

Wins for Developers: Skipping the UI Layer

For developers that have had experience working with AI assisted coding over the last couple years, we know that some of the easiest tools to build are CLI tools, primarily because they are:

- Simple input/output

- Basically text-only

- Easily testable

- Easy for an LLM to interpret the results / self-evaluate when things are working

This is in contrast to web development with LLMs, which often requires a human in the loop to communicate nuances in behavior, styling issues, etc. These aren't beyond multimodal LLMs, but there is definitely an extra step there.

Looking at the list, we can quite easily see how MCP server development shares many parallels with CLI tools. It's text-heavy for the primary use cases, easily testable just like any other REST API and LLMs can easily evaluate their behavior.

This means that to develop new applications in an "AI first" way with an MCP server, the barrier is even lower than building a new mobile or web app.

Hopping Into the Future

Once MCP access is democratized, whether by the big players adding support for everyone or by users adopting MCP via their own local chat apps, we may begin to see a shift in usage. The drain in online activity that has already been sucked into the LLM vortex (see: Google search result clicks) will only spread to other applications as the friction lowers for users to perform their actions from within the comfort of chat.

Just as every serious business™ today needs a mobile app, tomorrow they will need an MCP server to stay relevant. In an AI-first world, if an LLM can't talk to your business, your customers won't either.


Many modern parents provide an iPad or Android tablet to their young children. According to some studies, 40% of children have a tablet by the time they are 2 years old, and that number rises to 60% by the time they are 4. Many of these parents use software like YouTube Kids to provide a video-watching experience that is extremely easy to use, even for 2-year-olds. However, several concerns and pain points often come up with YouTube Kids:

- The default experience is an opt-out model for channels they don't want their children to see

- When using the "Approved Content Only" mode, selecting videos is a somewhat convoluted process

- Fine-grained control is only really possible by manually sharing videos one by one

- Watching videos offline / with low-connectivity is not possible without YouTube Premium and only in a select few countries

- YouTube continually tracks what your child is watching to profile them and recommend increasingly targeted videos

There are also the psychological issues that can present as behavioral issues with young children. A key feature of YouTube Kids, the row of suggested videos that appears during playback, may inadvertently encourage problematic habits. The ability to instantly switch to a new video, before the current one even finishes, can create a cycle of constant novelty-seeking. This pattern mirrors the "reward cycle" associated with TikTok and Instagram Reels, and over time, it may contribute to shorter attention spans and increased impulsivity in young children.

Faced with these issues, we decided to build a simpler alternative.

The Antidote: Easy Kids Video Player

Kids Video Player offers a similarly accessible user interface to YouTube Kids, but purely with offline video files that you load onto the device. This addresses almost all of the issues:

- The videos are only the ones you provide, giving you full granular control, and also opening it up for you to let them watch videos not on YouTube or streaming services, like your family videos!

- The content is completely offline, and the app has no dependency on the internet at all, allowing it to both be fast + work in the car on a long road trip, or on a plane.

- Since you can create a finite library of videos on the device, children exhibit less novelty-seeking behavior because it is not an endless stream of content available.

- There is no tracking involved! The app has no built-in telemetry, so it's not phoning home with your kids' viewing habits.

This allows for an experience that kids still love to use, but without all the drawbacks. Convinced? Setting it up is straightforward.

Getting it Set Up

On Android:

- Open the app

- Give file permission and choose the folder where your videos are stored

- That's it! Your videos will appear

On iPhone/iPad:

- Open Kids Video Player once to set it up

- Go to the Files app on your device

- Copy videos into the Kids Video Player folder

- Re-open the app to see your videos

What Video Files Work Best

| | Android | iPhone/iPad |

|---|---|---|

| File Types | Most common video files | MP4, MOV, M4V files |

| Video Format | H.264, H.265, VP9 | H.264, H.265 |

| Audio Format | AAC, MP3, FLAC | AAC, MP3 |

Best Choice for Both:

Use MP4 files – these work reliably on both Android and iPhone/iPad devices. Most videos from phones, cameras, and downloads are already in MP4 format.

That's really all there is to the setup. Once your videos are in place, you can let your kid have a go!

Conclusion

What you get is a video player that stays within the library you chose. Your child can pick a video, and when it ends, it just plays the next one from your folder. They won't get pulled into a never-ending stream of new recommendations.

It’s a simple, contained way to handle screen time. For car trips, plane rides, or just some quiet time at home, it keeps things predictable. You know what they’re watching, and they have a clear start and finish.


When you work at a FAANG company for a while, if you're reasonably financially mindful, you end up amassing enough wealth to become financially independent. I'm not there yet, but I'm well on the way.

Once you hit the milestone of being financially independent, you reach a crossroads for what you really want to do with your life. Many follow the FIRE movement and decide to retire early. Others just continue earning and amassing more and more wealth to either stockpile or to spend on a more and more lavish lifestyle. Then there are those who decide their money can go a lot further in the world through effective charitable giving than they could ever hope to help with their own bare hands (i.e. writing code -> earning paycheck -> funding many life-saving programs).

For those working in Big Tech, it can be interesting to then look at which employers offer the best employee donation matching programs to increase your giving capacity.

For reference, most of the data here is sourced from Double the Donation, who provide detailed information about each individual company's corporate giving programs. I will also be including several FAANG-adjacent companies in this list for completeness.

The Companies

Microsoft

Kicking it off is my employer, Microsoft. They actually have an awesome giving program, matching donations up to 15k USD for employees in many countries around the world, including Australia. Microsoft also matches volunteer time, by donating $25/hr to the charities you volunteer for.

Meta (Facebook)

Meta does not provide any employee donation matching program, which is a shame. They do however pay their employees very well, so if you are a Meta employee, you can still give a lot of money to charity, just without the employer match (more on this further below).

Apple

Apple matches employee donations 1:1 up to 10k USD. This is also a great program, and they are on par with Microsoft for employee volunteer time being matched at $25/hr.

Amazon

Amazon does not provide an employee donation or volunteer matching program.

Netflix

Netflix offers employees an exceptional 2:1 matching program. For every $1 an employee donates to a charity, Netflix contributes $2, on up to $10,000 of employee donations per year. This means employees can donate $10,000 of their own money and have a total of $30,000 directed to their chosen charity. Just as Netflix prides itself on paying at the top of the market, it also matches donations at the top of the market.

Alphabet (Google)

Alphabet provides their employees with 10k of 1:1 employee donation matching, on par with Apple. Their time-matching is a lot lower than competitors, at just $10/hr.

Tesla

Tesla does not provide any employee donation matching program nor any volunteer hour matching program.

NVIDIA

NVIDIA matches employee donations 1:1 up to 10k USD, similar to Apple and Alphabet. Their volunteer matching is however higher than all the others at $30/hr, which is a nice perk for those who like to volunteer.

The Tier List

Giving Impact Visualised

If your goal is to give the most money as an individual, here is a neat chart showing how far your dollars go depending on who your employer is. The x-axis is the dollar amount you give personally, y-axis is dollars received by your chosen charities (inclusive of the employer match and your personal giving).
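The curve for each employer is simple arithmetic: the charity receives your donation plus the employer's match, where the match is your donation times the match ratio, capped at the employer's limit. Here is a small sketch using the figures quoted above. It assumes Microsoft's match is 1:1 (the ratio isn't stated above), treats each limit as a cap on the employer's contribution, and ignores volunteer-hour matching entirely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatchProgram:
    ratio: float    # employer dollars contributed per employee dollar
    max_match: int  # yearly cap on the employer's contribution, in USD


PROGRAMS = {
    "Microsoft": MatchProgram(1.0, 15_000),  # 1:1 ratio is an assumption
    "Apple": MatchProgram(1.0, 10_000),
    "Alphabet": MatchProgram(1.0, 10_000),
    "NVIDIA": MatchProgram(1.0, 10_000),
    "Netflix": MatchProgram(2.0, 20_000),    # 2:1 on the first 10k donated
    "Meta": MatchProgram(0.0, 0),
    "Amazon": MatchProgram(0.0, 0),
    "Tesla": MatchProgram(0.0, 0),
}


def total_to_charity(company, personal_donation):
    """Dollars received by charity: personal giving plus the capped employer match."""
    program = PROGRAMS[company]
    match = min(personal_donation * program.ratio, program.max_match)
    return personal_donation + match
```

For example, `total_to_charity("Netflix", 10_000)` comes to 30,000, matching the Netflix figure above, while any amount given by a Meta, Amazon or Tesla employee comes back unchanged.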

Salary Adjusted Giving?

All the previous sections handwave around the fact that these companies do not pay equal salaries, which is obviously a contributing factor to how much you are able to personally donate. In this section I will compare how much a Senior Engineer is able to give, supposing they are already financially independent and donating their entire salary.

For a simple comparison, all amounts here are based on Seattle total compensation (TC) from levels.fyi for Microsoft Senior (L63) or equivalent levels (scoping higher when there is overlap), recorded on 18th July 2025.

| Company (level) | Salary | Employer Giving Match | Total Giving |

|---|---|---|---|

| Netflix (L5) | 538k | 20k | 558k |

| Meta (E5) | 431k | 0 | 431k |

| Amazon (SDE III) | 387k | 0 | 387k |

| Alphabet (L5) | 368k | 10k | 378k |

| Apple (ICT4) | 347k | 10k | 357k |

| Microsoft (63) | 231k | 15k | 246k |

| Tesla | 202k | 0 | 202k |

We can see here that Netflix takes the absolute cake all round, both in terms of compensation and their employee giving match.

Conclusion

If your main goal is to give a small amount of your salary (<10k) and have it matched, you can't go wrong with Microsoft, Apple, Alphabet, NVIDIA or Netflix, with Netflix being the absolute winner in this area.

If your goal is to donate your entire salary to charity, Netflix is still the absolute winner, with Meta the runner-up: although they don't provide an employee match program, they do provide higher TC than the rest.

Appendix: Effective Giving

Some readers may be sceptical about what effective giving actually looks like, and why you would want to give your hard-earned money away. I would highly recommend checking out both The Life You Can Save (book) and its corresponding charity, The Life You Can Save. Another terrific resource is GiveWell, a charity dedicated to doing deep analysis on which donations make your dollar go the furthest in doing the most good.

Putting it a little more concretely, for approximately 3k-8k USD you can statistically stop someone from dying (i.e. if you fund some measure that prevents 1000 people from possibly catching malaria, and statistically 1 of them would have died, you have saved a life with your donation). I believe that as affluent individuals in tech we have a moral and spiritual duty to help those in poverty, in ways that powerfully impact both the giver and the receiver.

TLDR:

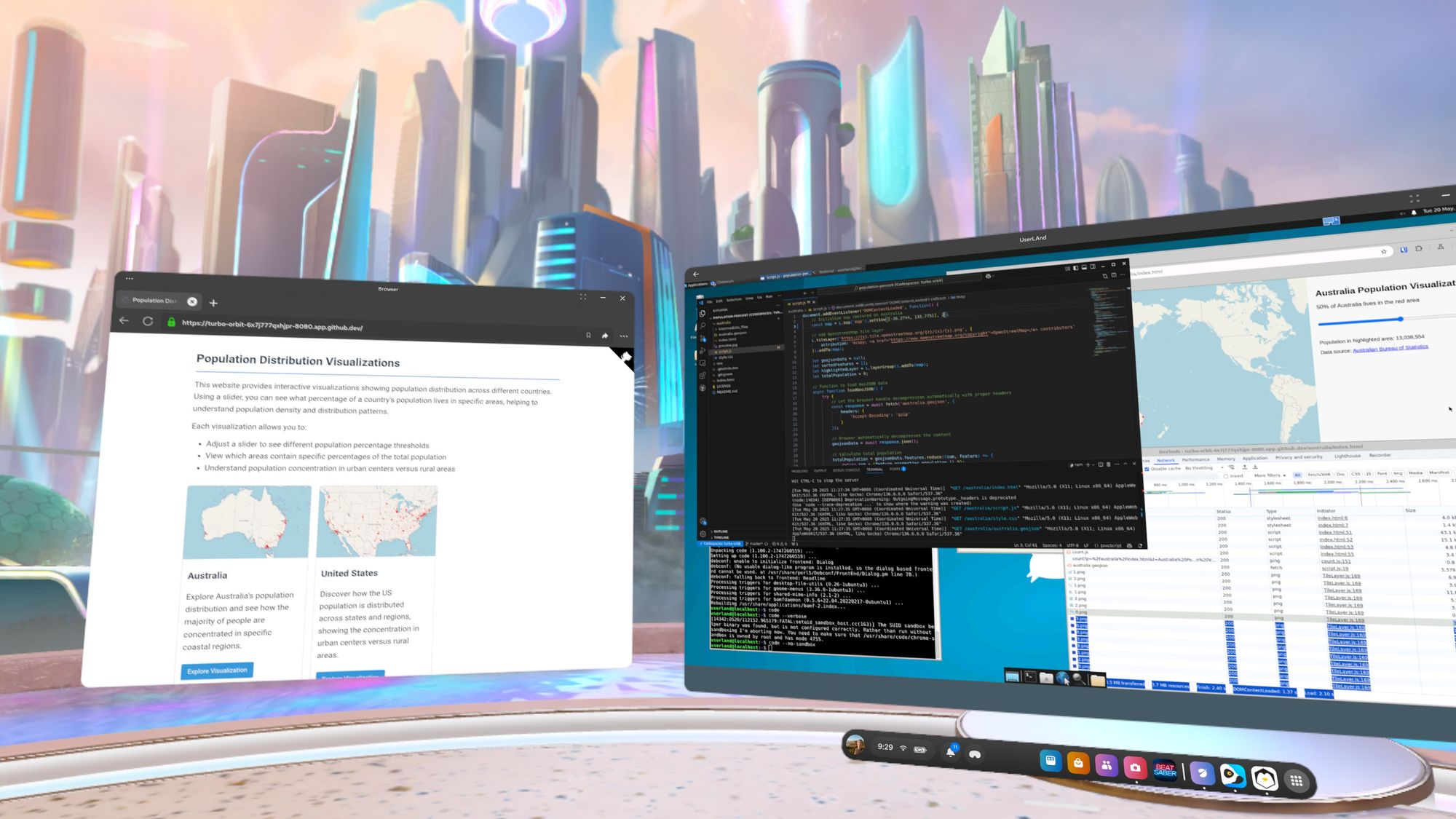


- Tried using the Quest 3 as a web dev machine without streaming from a laptop.

- Most VR browser apps fail due to lack of devtools and window limitations.

- Best result: Running a full Linux desktop in UserLand with working Chrome devtools (and VS Code!)

- Not a great experience for now, but promising for future standalone dev in VR.

Intro

I recently got a Quest 3 and wondered: could I use it as a lightweight dev machine without streaming from my laptop? My theory: with something like GitHub Codespaces (VS Code in a browser, running on a cloud server), I could write code, debug, and push changes all from within the headset. Could a full web development workflow work entirely in VR?

What I Tried

1. Fluid (Quest App)

Fluid is the go-to recommendation for opening multiple browser tabs in VR. It’s a native Quest app with a clean interface and decent multitasking support. But for web development, it fell short quickly:

- No developer tools: There’s no way to open browser devtools, which is an instant dealbreaker for web development.

- Keyboard limitations: Keyboard navigation is clunky, with common shortcuts (like focusing the address bar or switching tabs) either missing or unreliable.

- Performance issues: Just a handful of browser tabs and a single YouTube video slowed the system enough to drop frames.

At the end of the day, trying to use an Android-based VR headset like a desktop computer is a bit of a stretch—and it shows.

2. Native Horizon OS + Android Browser Apps

I tried sideloading Android browser apps (Brave, Mises, Lemur) directly onto the Quest and running them natively via Horizon OS. Keyboard shortcuts worked well (Ctrl+L, Ctrl+T, Ctrl+Tab), and I even wrote this blog post from inside one of those browsers.

However, devtools can't be opened side-by-side with the page in these mobile browsers, so debugging is tedious. There's also a hard cap of 6 open windows total (3 docked, 3 floating), which limits multitasking.

3. Other VR Productivity Apps

Most "productivity" apps on the Quest platform are just streaming clients:

- Meta Horizon Workrooms (Beta) – Requires connection to an external computer.

- Virtual Desktop – Just a fancy desktop streaming app.

- Immersed – Well-polished, but also relies on streaming from a real computer.

If your goal is to leave the laptop behind entirely, these don't help.

Best Result: Linux Chroot (UserLand)

Instead of relying on Android support for devtools, I ran a full Linux desktop environment in a chroot+vnc using UserLand. The steps:

- Start with a minimal Ubuntu base image (UserLand has an issue where the xfce4/lxde image options don't work, so follow these steps to install)

- Then modify ~/.vnc/xstartup to launch xfce:

#!/bin/sh

# ... leave other lines the same

# comment out this line

#/etc/X11/Xsession

# at the bottom of the file add

startxfce4 &

- Install the Chromium browser from the xtradeb PPA:

sudo add-apt-repository ppa:xtradeb/apps -y

sudo apt update

sudo apt install chromium

- Run chromium with chromium --no-sandbox.

- Fix desktop resolution: edit ~/.vncrc to $geometry = "1920x1080", then chmod -w ~/.vncrc so UserLand does not overwrite it.

- Install VS Code via apt, run with code --no-sandbox.

Notes:

- Chrome windows/devtools are stuck inside the VM, not as independent VR windows, but you can run many of them inside the VM window.

- Running a full desktop environment uses more RAM (estimate: 500MB+), plus whatever Chrome/VS Code consume.

So while it makes things possible, it does take away from the magic of a "VR desktop" where all windows can be freely placed.

Quick Comparison

| Approach | Devtools Support | Keyboard Shortcuts | Window Limitations | Performance | Notes |

|---|---|---|---|---|---|

| Fluid (Quest App) | ❌ None | 🚫 Poor | Moderate | 🚫 Drops frames | No devtools, clunky navigation |

| Native Android Browsers (Horizon OS) | ⚠️ Limited* | ✅ Good | 6 windows max | 👍 Decent | Devtools can't be opened side-by-side |

| Other VR Productivity Apps (Streaming) | ✅ Full (remote) | ✅ Good | Host-dependent | 👍 Great | Requires external computer |

| Linux Chroot (UserLand) | ✅ Full | ✅ Good | 1 VM window, 5 Horizon OS windows | ⚠️ Heavy RAM use | Real devtools, VS Code, but inside VM only |

Limitations & Verdict

As the table above shows, using the Quest 3 as a standalone device for web development is still challenging. The biggest blockers are:

- Lack of usable devtools except within Linux chroot

- Window and multitasking limitations

- Keyboard navigation quirks

- Performance/memory overhead

Still, the future looks bright. With hardware and software improvements, especially native apps for developers, this could become viable without resorting to a full-blown Linux environment.

Footnote

Yes, I know GitHub Codespaces technically is a remote computer, but it feels less “tethered” than streaming from your personal machine. You can spin one up from anywhere, pay by the hour, and it even comes with a decent free allowance (120 core-hours/month), which should be more than enough for light dev work in VR.


Update 2025-03-18: Thanks to redditors, we managed to trip a hidden limit on international SMS after sending 400 messages! Aussie SMS still seems to work though, so I'll keep the service alive until April 13 when the plan expires.

Background

Just last week, a tropical cyclone rolled through the Gold Coast. Thankfully, we were minimally impacted, but we did lose power for four days, and the phone tower near us went offline. We still had reception, but it was from a much farther tower, which was likely very congested. As a result, mobile data was pretty spotty, but texting and calling remained reliable.

This made me think—wouldn't it be great to send an SMS to an LLM? Sure, I'm a dev and could run a local LLM on my laptop, but many people don't even know that's possible (or have the hardware to do so).

Implementation

I researched the options. Some API-based text services sounded great, but they cost more than the LLM calls themselves(!!!). So here is the final approach I landed on:

- Using an old Android phone + prepaid SIM with unlimited international (to some countries) texting

- Running an open source SMS gateway on the phone

- Running a simple Node.js server on the phone in the Termux environment to relay the SMS between the gateway and the LLM (Deepinfra Llama 3.1 8B)

- Basic SQLite storage to keep the conversation history

And that's it! You can send a text to the phone running this at +61493035885

I also add a system message before each prompt to encourage the LLM to be brief ("Keep responses short and concise for SMS readability"). This helps reduce load by preventing extremely long SMS messages.
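The relay logic is small enough to sketch. The real server is Node.js running in Termux; the version below is a Python approximation of the same flow, and nearly everything in it is hypothetical: the table layout, the function names, the 20-message history window, and the `ask_llm` callback standing in for the Deepinfra call. Only the system message text comes from the actual setup.

```python
import sqlite3

SYSTEM_PROMPT = "Keep responses short and concise for SMS readability"


def open_store(path=":memory:"):
    """Open (or create) the conversation-history database."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS messages ("
        "id INTEGER PRIMARY KEY, phone TEXT NOT NULL, "
        "role TEXT NOT NULL, content TEXT NOT NULL)"
    )
    return db


def build_prompt(db, phone, limit=20):
    """System message first, then this sender's most recent turns, oldest first."""
    rows = db.execute(
        "SELECT role, content FROM messages WHERE phone = ? ORDER BY id DESC LIMIT ?",
        (phone, limit),
    ).fetchall()
    history = [{"role": role, "content": content} for role, content in reversed(rows)]
    return [{"role": "system", "content": SYSTEM_PROMPT}] + history


def handle_incoming_sms(db, phone, text, ask_llm):
    """Store the incoming text, ask the LLM with history, store and return the reply."""
    db.execute(
        "INSERT INTO messages (phone, role, content) VALUES (?, 'user', ?)",
        (phone, text),
    )
    reply = ask_llm(build_prompt(db, phone))
    db.execute(
        "INSERT INTO messages (phone, role, content) VALUES (?, 'assistant', ?)",
        (phone, reply),
    )
    db.commit()
    return reply


def fake_llm(messages):
    """Stand-in for the real model call so the sketch runs offline."""
    return f"echo: {messages[-1]['content']}"


db = open_store()
first = handle_incoming_sms(db, "+61400000000", "hello", fake_llm)
second = handle_incoming_sms(db, "+61400000000", "again", fake_llm)
```

Keying the history on the sender's phone number means each texter gets their own conversation without any sign-up step, and the reply returned by `handle_incoming_sms` is what would be handed back to the SMS gateway to send.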

FAQ

Which countries does this work from?

Update: Sadly, only Australia seems to work now that my carrier has stopped sending international SMS.

These are the countries covered by the phone plan's unlimited international texting. Incoming texts from numbers outside these countries are hard-blocked and never processed.

- Australia +61 (obviously)

- Brazil +55

- Canada +1

- China +86

- France +33

- Germany +49

- Hong Kong +852

- India +91

- Indonesia +62

- Ireland +353

- Israel +972

- Japan +81

- Malaysia +60

- Mexico +52

- New Zealand +64

- Norway +47

- Singapore +65

- South Korea +82

- Thailand +66

- UK +44

- USA +1
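A hard block like this can be sketched as a simple prefix check on the sender's number. This is only an illustration: the function name `isAllowedSender` and the assumption that numbers arrive in international format (such as "+61...") are mine, and the real gateway may filter differently.

```javascript
// Calling codes from the list above. Note that "+1" is shared by the USA,
// Canada and other North American Numbering Plan countries, so a prefix
// check alone cannot tell them apart.
const ALLOWED_PREFIXES = [
  "+61", "+55", "+1", "+86", "+33", "+49", "+852", "+91", "+62", "+353",
  "+972", "+81", "+60", "+52", "+64", "+47", "+65", "+82", "+66", "+44",
];

/** Returns true when the sender's number starts with a covered calling code. */
function isAllowedSender(number) {
  // Strip spaces, brackets and dashes so "+61 400 000 000" and "+61400000000" match.
  const normalized = number.replace(/[\s()-]/g, "");
  return ALLOWED_PREFIXES.some((prefix) => normalized.startsWith(prefix));
}

console.log(isAllowedSender("+61 400 000 000")); // true
console.log(isAllowedSender("+34 600 000 000")); // false (Spain is not on the list)
```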

Is it free?

There's no extra cost from my side—just whatever your carrier charges to text an Australian mobile number.

Why Llama 8B? <X> model is bigger and better

This is a proof of concept, and I wanted to keep costs manageable. Also, Deepinfra seems to be offering the 8B model for free—at least for now. I'll know for sure when I run out of credit!

You can't run this from a consumer SIM

Probably true—let's see how long before my carrier disconnects my service!

Your phone won't keep up

Yeah, I know. If a flood of messages comes in, something will definitely bottleneck—whether it’s the CPU, SMS queue, or the network itself. Guess we’ll find out!

How long does it take to get a response?

It depends! The LLM itself is quite fast—typically generating a response in just a few seconds. However, the main delays come from the SMS network and phone processing. Sending and receiving texts isn't instant, especially if the phone is handling multiple messages at once. If traffic is light, you should get a response in under a minute. But if many people are texting at the same time, there could be delays due to SMS queueing, network congestion, or the phone's processing limits.

Further exploration

There are many ways this idea could be expanded upon. A rough list that comes to mind:

- Enhancing the results with basic search engine results for realtime information. This could be easily done (at high cost) using something like Gemini with Search. This would be helpful in a natural disaster to try and get relevant information from web sources while you don't have internet access.

- MMS + a multi-modal LLM.

- URL summarisation: If a user does not have internet but has a URL, you could perform a scrape + LLM summarisation and return that to the user.

I've often found myself uneasy when LLMs (Large Language Models) are asked to quote the Bible. While they can provide insightful discussions about faith, their tendency to hallucinate responses raises concerns when dealing with scripture, which we regard as the inspired Word of God.

To explore these concerns, I created a benchmark to evaluate how accurately LLMs can recall scripture word for word. Here's a breakdown of my methodology and the test results.

Methodology

To ensure consistent and fair evaluation, I tested each model using six scenarios designed to measure their ability to accurately recall scripture. For readers interested in the technical details, the source code for the tests is available here. All tests were conducted with a temperature setting of 0, and I gave the models some slack by making the pass check case-insensitive and whitespace-insensitive.

A temperature of 0 ensures the models generate the most statistically probable response at each step, minimising creativity or variability and prioritising accuracy. This approach is particularly important when evaluating fixed reference material like the Bible, where precise wording matters.
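For illustration, a case- and whitespace-insensitive pass check could look like the sketch below. The function names are hypothetical, and whether the real benchmark removes whitespace entirely or only collapses it, and whether it requires an exact match, are assumptions here rather than details taken from the test source.

```javascript
/** Lowercase the text and remove all whitespace so only the characters themselves are compared. */
function normalize(text) {
  return text.toLowerCase().replace(/\s+/g, "");
}

/** Pass check that ignores case and whitespace but is otherwise an exact match. */
function passes(modelOutput, expectedVerse) {
  return normalize(modelOutput) === normalize(expectedVerse);
}

const expected = "Jesus wept.";
console.log(passes("  jesus   WEPT.\n", expected)); // true
console.log(passes("Jesus cried.", expected)); // false
```

Punctuation still counts in this version, so a model that drops a comma would fail even though the wording is right.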

Test 1: Popular Scripture Recall

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ✅ |
| Llama 3.1 8B | ✅ |
| Llama 3.3 70B | ⚠️ |
| GPT 4o | ✅ |
| GPT 4o mini | ✅ |
| Gemini 1.5 Pro | ✅ |
| Gemini 1.5 Flash | ✅ |
| Gemini 2.0 Flash | ✅ |
| Claude 3.5 Haiku | ✅ |
| Claude 3.5 Sonnet | ✅ |

When asking a model to recall John 3:16 in the NIV translation, the only model that failed to accurately recall the verse word for word was Llama 3.3 70B. It was only a very slight translation mismatch, with it recalling "only begotten son" where the actual verse in the NIV does not include begotten, despite it being present in other translations.

Test 2: Obscure Verse Recall

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ⚠️ |
| Llama 3.1 8B | ❌ |
| Llama 3.3 70B | ❌ |
| GPT 4o | ✅ |
| GPT 4o mini | ⚠️ |
| Gemini 1.5 Pro | ⚠️ |
| Gemini 1.5 Flash | ⚠️ |
| Gemini 2.0 Flash | ⚠️ |
| Claude 3.5 Haiku | ⚠️ |
| Claude 3.5 Sonnet | ✅ |

Many models struggled to recall Obadiah 1:16 NIV word for word, often mixing up the words with other translations. For these cases, I have marked them as partial for correctly recalling the verse in some translation, even if not the specific requested one. The models that clearly succeeded were all very large: Llama 3.1 405B, GPT 4o and Claude 3.5 Sonnet.

Test 3: Verse Continuation

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ✅ |
| Llama 3.1 8B | ❌ |
| Llama 3.3 70B | ✅ |
| GPT 4o | ✅ |
| GPT 4o mini | ⚠️ |
| Gemini 1.5 Pro | ✅ |
| Gemini 1.5 Flash | ❌ |
| Gemini 2.0 Flash | ✅ |
| Claude 3.5 Haiku | ⚠️ |
| Claude 3.5 Sonnet | ✅ |

When quoting 2 Chronicles 11:13 to the model (without specifying where in the Bible it is found) and asking it to produce the immediate next verse, the results were much more mixed. Many medium-to-large models got this correct, but the smaller ones completely hallucinated parts or all of the verse. Claude 3.5 Haiku almost recalled the verse, but referred to the Levites as "they", which does not appear in any of the more well-known translations. The model appears to be substituting the intention of the word rather than the exact one.

Test 4: Verse Block Recall

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ✅ |
| Llama 3.1 8B | ❌ |
| Llama 3.3 70B | ✅ |
| GPT 4o | ✅ |
| GPT 4o mini | ✅ |
| Gemini 1.5 Pro | ✅ |
| Gemini 1.5 Flash | ⚠️ |
| Gemini 2.0 Flash | ✅ |
| Claude 3.5 Haiku | ⚠️ |
| Claude 3.5 Sonnet | ✅ |

When asked to recall Lamentations chapter 3 verses 19 through 24, the models did this very well. Only the smallest of the models, Llama 3.1 8B outright failed here, instead recalling the beginning of the chapter. The two warnings were only slight translation mismatches of a few words, but the essence of the verse was preserved.

Test 5: Query Based Lookup

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ✅ |
| Llama 3.1 8B | ✅ |
| Llama 3.3 70B | ✅ |
| GPT 4o | ✅ |
| GPT 4o mini | ✅ |
| Gemini 1.5 Pro | ✅ |
| Gemini 1.5 Flash | ✅ |
| Gemini 2.0 Flash | ✅ |
| Claude 3.5 Haiku | ✅ |
| Claude 3.5 Sonnet | ✅ |

Asking the models, "What's that verse in the bible about the Earth being filled with knowledge of God's glory?", all of them successfully recalled it was Habakkuk 2:14. Verse lookup is definitely a strong suit, even in smaller models.

Test 6: Entire Chapter Recall

| Model | Pass |
|---|---|
| Llama 3.1 405B | ✅ |
| Llama 3.1 70B | ✅ |
| Llama 3.1 8B | ❌ |
| Llama 3.3 70B | ✅ |
| GPT 4o | ✅ |
| GPT 4o mini | ✅ |
| Gemini 1.5 Pro | ✅ |
| Gemini 1.5 Flash | ✅ |
| Gemini 2.0 Flash | ✅ |
| Claude 3.5 Haiku | ✅ |
| Claude 3.5 Sonnet | ✅ |

When asking for the entire contents of Romans 6 in the KJV translation, almost all of the models recalled all 23 verses accurately. Even the failed case of Llama 3.1 8B recalled over 98% of the words correctly, with only 9 incorrect words.

Conclusions

If you really want to lean on an LLM to give you textually accurate Bible verses of popular translations, you really should lean on higher parameter count (i.e. larger) models. These include models like Llama 405B, OpenAI GPT 4o and Claude Sonnet, which all had perfect scores. Smaller models (7B range) will often mix up translations, and in some cases even mix up or hallucinate verses altogether. Medium-sized models (70B range) often accurately preserve the intention of the verses, although the verse may be a mangled representation of several translations, and in some cases paraphrased a little by the LLM.

You can certainly still use smaller models for discussion that references scripture by Book/Chapter/Verse, but it is important to lean on an actual copy of the Bible for the correct text in these cases.

Looking into the future, we may very well see smaller models perform better on these benchmarks, but there is surely a limitation to how much information can be encoded into such small models.

For full test results, see the results file here, including the raw prompts for each test. If you feel like I missed a crucial test, feel free to submit an issue on GitHub.

I remembered reading about the concept of donating stocks in the US (e.g. on Fidelity), and it seemed like a great deal that people were making good use of. It wasn't until recently that I've been able to work out why it works so well in the US, and why it's not really utilised in Australia.

In the US, donating stocks has 2 benefits (IRS reference):

- You do not pay capital gains tax on donated stocks that have been held long term, making it an excellent way to dispose of stocks with a low cost basis.

- You can deduct the full fair market value of the stocks at the time of donation in your taxes.

In Australia, by comparison, you only get the second benefit. Capital gains are always realised as income at the time of disposal, including when donating.

Now because the gains earned are less than the amount donated, you still end up ahead tax-wise. However, compared with donating cash, the ATO (Australia's IRS equivalent) takes part of the benefit (the capital gains tax) and you take the rest (the stock value + your part of the capital gain).

Side Note: Here's a worked example for clarity, assuming domestic currency for simplicity and the appropriate registered charity status for tax-deductible donations.

Scenario: Donating stock worth $10k with a cost basis (purchase price) of $5k that was held long term (12 months+).

USA:

- Tax deduction for donation: $10k

- Capital gains payable: $0

- Net deduction: Full $10k deduction against taxable income.

Australia:

- Tax deduction for donation: $10k

- Taxable capital gain added to income: $2.5k ($5k gain, 50% discount for the long-term hold)

- Net deduction: $10k - $2.5k = $7.5k.
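The same arithmetic can be written as a small function, which makes it easy to try other numbers. This is a simplified sketch of the worked example only: the function name and the `gainInclusionRate` parameter (the share of the gain added back to taxable income) are made up for illustration, and it ignores everything else about either tax system.

```javascript
/**
 * Net reduction in taxable income from donating stock, under the simplified
 * rules in the worked example. `gainInclusionRate` is the share of the capital
 * gain that is added back to taxable income: 0 for the US case, 0.5 for the
 * Australian long-term case.
 */
function netDeduction(marketValue, costBasis, gainInclusionRate) {
  const gain = Math.max(marketValue - costBasis, 0);
  const taxableGain = gain * gainInclusionRate;
  return marketValue - taxableGain;
}

console.log(netDeduction(10000, 5000, 0));   // 10000 (USA)
console.log(netDeduction(10000, 5000, 0.5)); // 7500 (Australia)
```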

What This Leads To

In Australia, you're no better off donating stocks than donating cash, so there is no reason to dispose of stocks if you have spare cash to donate instead.

In the USA, you're better off donating stocks. For individuals with large stock portfolios, this strongly encourages disposing of stock by giving rather than for personal gain.

What Should Australia Do?

This is a much more philosophical and political discussion. In Australia, the wealthiest 20% of people own 80% of the stocks, and much of their remaining wealth is likely tied up in illiquid assets like property. Given this concentration of wealth, Australia should create stronger incentives for individuals to donate a larger portion of their stock holdings to charitable causes. A clear way to do that is to abolish capital gains tax on stock donations.

Want to see Change?

Recently I wanted to build a Ruby on Rails project that involved uploading images from a user's browser. This is nice and all, but the image processing (resizing) is normally done server-side, and if you have minimal resources you can only handle so much load before things get sluggish.

To work around this, the browser itself is more than capable of resizing images, but it takes a non-trivial amount of code to say "ensure the image uploaded is under 1000px wide and 70% jpeg quality". So I decided to fill this gap, creating a library called fileinput-image-resize.

How to use

All you need to do is add a single tiny (<1kb) JavaScript library to the page:

<script src="https://cdn.jsdelivr.net/gh/benkaiser/[email protected]/dist/bundle.js"></script>

And then place some special attributes on your input tags to control the resize behavior:

<input type="file" data-max-width="500" data-max-height="500" data-quality="90" data-format="webp" />

And that's it! The server will receive the processed images as part of the form submission instead of the original selected image from the user.

For a full list of the options available for customizing the resize behavior, see the project README on GitHub.
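To give a feel for what happens in the browser, here is a rough sketch of the sizing step: scale the image down to fit inside the maximum width and height while keeping its aspect ratio. The function name `fitWithin` and the choice never to upscale are assumptions for illustration, not necessarily how the library does it.

```javascript
/**
 * Scale (width, height) down to fit inside (maxWidth, maxHeight),
 * keeping the aspect ratio and never upscaling.
 */
function fitWithin(width, height, maxWidth, maxHeight) {
  const scale = Math.min(maxWidth / width, maxHeight / height, 1);
  return {
    width: Math.round(width * scale),
    height: Math.round(height * scale),
  };
}

console.log(fitWithin(4000, 3000, 500, 500)); // { width: 500, height: 375 }
console.log(fitWithin(300, 200, 500, 500));   // { width: 300, height: 200 }
```

In a browser, the image would then typically be drawn onto a canvas of that size and exported with `canvas.toBlob(callback, "image/webp", 0.9)`, assuming a `data-quality` of 90 maps to a quality of 0.9.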

A couple of notes

- Images will have their EXIF metadata stripped. This is usually a benefit since it preserves the privacy of the users uploading the images, however if your specific web application requires this data then this approach isn't for you.

- If a form submission reaches the server with an image that hasn't been resized, it means the user may have JavaScript disabled. In this case, you can reject the form and report an error to the user, or alternatively, fall back to resizing on the server.

What does this library enable?

If you're someone that likes to write Ruby on Rails applications with minimal need for client-side JavaScript, then this is perfect for pre-processing form image inputs. It means you don't need to do all the work of processing the images yourself with your JavaScript tooling, or waste CPU resources processing it server-side using a ruby gem. Just import one library and add some HTML attributes and you're done!

As always, source code is available here. Happy hacking!

TLDR; Here is an APK I created wrapping chat.openai.com

Update: The generated APK will not work with Google sign in unless you also update the user-agent to match one Google OAuth accepts. This is left as an exercise to the reader (or you can create an OpenAI account with an email and password).

As we find ourselves interacting with ChatGPT more and more these days, it feels silly that the only official way to use it on mobile is through a web browser. Although this has many benefits (tabs), it's sometimes nice to have a dedicated app icon and have the app run in standalone mode without the extra browser UI elements. This could all be trivially resolved if OpenAI provided a PWA manifest, but they currently do not. Fortunately, it's really simple to build an iframe wrapper with React Native / Expo.

What better way to start out writing a wrapper, than getting ChatGPT to do it for us!

ChatGPT Expo Start Project Conversation

Me: Can you write a react native (expo) application that simplify iframes chat.openai.com?

As an AI language model, I can provide you with instructions on how to create a bare-bones React Native (Expo) application that can simplify iframes chat.openai.com. Here are the steps:

Install the Expo CLI by running the following command in your terminal:

    npm install -g expo-cli

Create a new React Native project using the Expo CLI by running the following command:

    expo init chat-app

This will create a new directory called chat-app with all the necessary files and dependencies to get started.

Open the project directory in your preferred code editor and navigate to the App.js file. Replace the existing code with the following:

    import React from 'react';
    import { WebView } from 'react-native-webview';

    export default function App() {
      return (
        <WebView
          source={{ uri: 'https://chat.openai.com/' }}
          style={{ flex: 1 }}
        />
      );
    }

This code imports the WebView component from react-native-webview and uses it to render the chat.openai.com website inside the app.

Save the file and run the app using the following command:

    expo start

This will start the Expo development server and open a web page in your browser with a QR code.

Install the Expo Go app on your mobile device and scan the QR code with your camera to open the app on your device.

That's it! You should now see the chat.openai.com website rendered inside your React Native app. This is a very bare-bones implementation, but you can customize the styling and functionality of the app as needed.

Using the above instructions (and updating slightly for 2023, using npx create-expo-app <appname>), we have a basic running Expo app that iframes ChatGPT. The only thing that is slightly lacking is the status bar on Android showing the iframe UI behind it, making the text hard to read and UI hard to click. For this, we can change the translucent option to false, and give the status bar a background color. We can also set it to light so the status bar text renders in white.

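    import React from 'react';
    import { StatusBar } from 'expo-status-bar';
    import { WebView } from 'react-native-webview';

    const App = () => {
      return <>
        {/* Render ChatGPT full-screen inside the app */}
        <WebView
          source={{ uri: 'https://chat.openai.com/' }}
          style={{ flex: 1 }}
        />
        {/* Opaque status bar with light text, so the page no longer shows through */}
        <StatusBar style="light" backgroundColor="#343541" translucent={false} />
      </>;
    };

    export default App;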

From here, the steps to a standalone application are pretty simple.

- Follow the guide to create the icons files using the Figma template. Just drop in a ChatGPT SVG and it should look like this.

- Add a build config to your eas.json (where EAS Build reads its build profiles) so that an APK is generated in the build process

      "build": {
        "preview": {
          "android": {
            "buildType": "apk"
          }
        }
      },

- Generate a build using your Expo EAS Build account (create one here). Once you have an account, install eas-cli with `npm install -g eas-cli`. Then log in with `eas login`. Then create a build with `eas build -p android -e preview`.

- Once the build completes, you'll get a URL to download the build. You can open this APK file on your Android device and install it.

That's it! You've created an Android application that iframes ChatGPT to install on your own device and run in standalone mode. Full final source code of the application can be seen here. If you just want to download a pre-built APK, click below:

Download Chat GPT - React Native Shell APK

There is a bunch of software online, and some of it is truly unreal. So great, it feels too good to be true. Today I want to take a look at one of these pieces of software, Bitwarden. Bitwarden is an open source password manager, similar to non-free software such as LastPass or 1Password. To be frank, quite often, open source software is sub-par compared to proprietary software, but Bitwarden stands out from the crowd. In this post, I will cover what Bitwarden is, provide some brief information on it, and explain why I think it is just awesome.

Overview

Bitwarden is an open source password manager. The default for most people is to opt for the hosted version, however you can also self-host. The hosted version has a very generous free tier and provides an amazing set of features. There is a premium plan you can pay for, but the features are mostly nice to haves.

With Bitwarden, you can store all of your passwords, autofill them on applications and websites you use, and use it to generate new passwords for new accounts. You can access it on all your devices through either dedicated apps, the web or browser extensions.

Security

All players writing a password manager have security of your passwords as table stakes. Bitwarden is no different: your password vault is end-to-end encrypted, so only you, with your "master password", can decrypt your passwords. It also offers great security features like 2FA. To read more about their security compliance and to see their third-party audits, see this page on their website.

Free and Premium Versions

The free version, which is also what you get when you self-host, comes with an awesome set of core features, including:

- Unlimited passwords

- Unlimited devices

- Share select passwords with 1 other user

You can upgrade to a premium plan, which features:

- Store up to 1 GB of encrypted file attachments alongside your vault

- Authenticate using extra options such as a YubiKey

- Enable emergency access, which allows someone to access your vault should anything happen to you

Open Source Community

Having an open source community as strong as Bitwarden's is fantastic. It means there are plenty of folks reviewing code, submitting issues and generally working together to make the product great.

As mentioned above, you can run Bitwarden server yourself. However, doing so requires a fair bit of machine resources, which is why one of the popular ways to run Bitwarden yourself is via Vaultwarden. Vaultwarden is an unofficial Bitwarden server clone written in Rust which requires far less RAM to run, and can be run on a bare-bones Linux device (think Raspberry Pi). One of the reasons it is more light-weight is the lack of dependency on Microsoft SQL server, which is not known for being lightweight.

Personal Experience

Years ago, I used to love LastPass as a product. But it irked me to no end how capped their free offering was (couldn't use it on mobile + desktop, what?). I did a stint of several years using KeePass, but the product was only really half the solution: you had to manually move your "vault" file between devices using a file sync service. When I discovered Bitwarden, I was blown away by the fact that it was open source, free, hosted and supported all the platforms and devices I needed. Nowadays, I'm a paying Bitwarden user. I think I've only ever needed to store one encrypted file in there and didn't actually need any of the other premium features, but I happily pay because it's just great open source software.

Conclusion

In conclusion, Bitwarden is a fantastic open-source password manager that stands out from the crowd with its amazing features and robust security measures. Whether you opt for the hosted version or self-host, Bitwarden provides an unlimited number of passwords and devices, autofills your passwords on applications and websites, and generates new passwords for your accounts. Additionally, it offers great security features such as end-to-end encryption and 2FA. With its open-source community, you can contribute to the product's development or run your own server via Vaultwarden. Therefore, if you are in need of a password manager, Bitwarden is a truly unreal choice. Try it out today!


I'm excited to share an application I've recently published called "Australian Spelling". It feels silly that as Australians, when our children use a device to practice their spelling, the words are read out using an American accent. So, I decided to create an app that solves this problem. The Australian Spelling app is now available on both iOS and Android, and it's perfect for anyone looking to improve their spelling skills and communicate more effectively in Australian English.

In this blog post, I wanted to take a moment to introduce you to the app and share some of its features. I'm passionate about creating tools that can make a difference in people's lives, and I believe that the Australian Spelling app can do just that. Whether you're a native Australian or just looking to improve your spelling in a new dialect, I hope you find this app helpful. So, let's dive into some of the features and benefits of the Australian Spelling app!

Pre-made Lists

One of the key features of Australian Spelling is the built-in spelling lists for Prep to Grade 6. Each grade level has 10 pre-made lists that include words and sentences to help you practice at different levels. These lists are perfect for students or anyone looking to improve their spelling skills.

With Australian Spelling's built-in spelling lists, you don't have to spend time searching for words to practice or creating your own lists. Just select your grade level and choose a list to get started. It's that easy!

Custom Lists

In addition to the built-in spelling lists, Australian Spelling also allows you to create your own custom lists. This means you can practice spelling words that are specific to your needs, such as your class weekly spelling list or words that you've misspelled in the past.

Creating a custom list is easy. You can copy and paste words from another app, such as an email or online, directly into Australian Spelling. This saves you time and allows you to quickly create a list of words to practice.

Another way to create custom lists is by taking a photo of a printed spelling list. This is a great option if you have a printed list of words, such as from a teacher or a textbook. Simply take a photo of the list using your phone's camera, and Australian Spelling will automatically convert the words into a list that you can practice.

With the ability to create custom lists, Australian Spelling offers a flexible and personalised approach to spelling practice. You can focus on the words that matter most to you and practice them at your own pace.

Other Helpful Features

In addition to the built-in spelling lists and the ability to create custom lists, Australian Spelling offers a few other helpful features to make your spelling practice more effective.

One such feature is the ability to view past results. This allows you to track your progress over time and see how you're improving. You can view your past results for each list and each test, so you can see which words you've mastered and which ones you still need to work on.

Another feature is the in-test feedback. When you get a word incorrect during a test, Australian Spelling will show you the correct spelling and provide you with feedback on why you got it wrong. This allows you to learn from your mistakes and improve your spelling skills.

Overall, Australian Spelling is a comprehensive spelling app that offers a range of features to help you improve your spelling skills. From the built-in spelling lists to the ability to create custom lists, and the in-test feedback to the ability to view past results, Australian Spelling is a great tool for anyone looking to improve their spelling. Go ahead and download it on the iOS App Store or Google Play.

The latest open source project I've been working on is a crowd-sourced audio Bible.

The motivation for this project comes out of two verses from the Bible:

So faith comes by hearing, and hearing by the word of God. - Romans 10:17 (WEBBE)

Until I come, devote yourself to the public reading of Scripture, to preaching and to teaching. - 1 Timothy 4:13 (NIV)

It is quite clear to us what public reading of Scripture looks like in real life, however, this project imagines what public reading of Scripture looks like in a digital world. This is how we can help others hear the word of God through our digital voices.

Recording a Reading

To record a reading using the website, you first select any chapter to read from the World English Bible (see my previous blog post about Bible licensing restrictions). Once a chapter is selected, press "Record Your Own". From this mode, you can use the arrow keys (or touch on mobile) to move focus between each of the verses as you read. When ready to begin recording, press "Record". As you move between verses, this information is stored, so when someone is playing back your recording, it follows along with the currently highlighted verse.

Once you have finished recording, select "Finish", fill in the basic information about your recording, and submit. From here it goes through a moderation flow and, once approved, is available for everyone around the world to listen to.
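The follow-along playback can be pictured as a lookup over the verse timings captured during recording. The sketch below is a hypothetical illustration: the `{ verse, startMs }` shape and the `verseAt` function are assumptions, not the site's actual data format.

```javascript
/**
 * `marks` is a list of { verse, startMs } entries captured while recording,
 * sorted by startMs. Returns the verse to highlight at `positionMs`,
 * or null if playback has not reached the first mark yet.
 */
function verseAt(marks, positionMs) {
  let current = null;
  for (const mark of marks) {
    if (mark.startMs > positionMs) break;
    current = mark.verse;
  }
  return current;
}

const marks = [
  { verse: 1, startMs: 0 },
  { verse: 2, startMs: 6500 },
  { verse: 3, startMs: 14200 },
];
console.log(verseAt(marks, 7000)); // 2
```

During playback, something like this would be called on each time update of the audio element to decide which verse to highlight.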

Listening to Others' Readings

From the homepage, select the book and chapter you would like to listen to. Once you are there, if there are already recordings submitted by others, you can choose which one of these you would like to listen to and press play. If there is not a recording for the specific chapter you are looking for, you can be the first to record one!

Future Work

The current website is pretty bare-bones, but I believe the recording and playback experience is pretty awesome for being able to follow along with what is being read. There are however some nice improvements that I'd like to add over time:

- UI improvements such as dark mode

- Ability to filter chapters by which ones have or don't have recordings (to know where more contributions are needed)

- Proper process for users to be able to remove their recordings (there is no real account management built in)

- Proper documentation for how third parties can consume the recordings

Call to Action

Please consider creating a recording of a chapter and submitting it. Either a chapter containing your favourite verse or one God has placed on your heart recently.

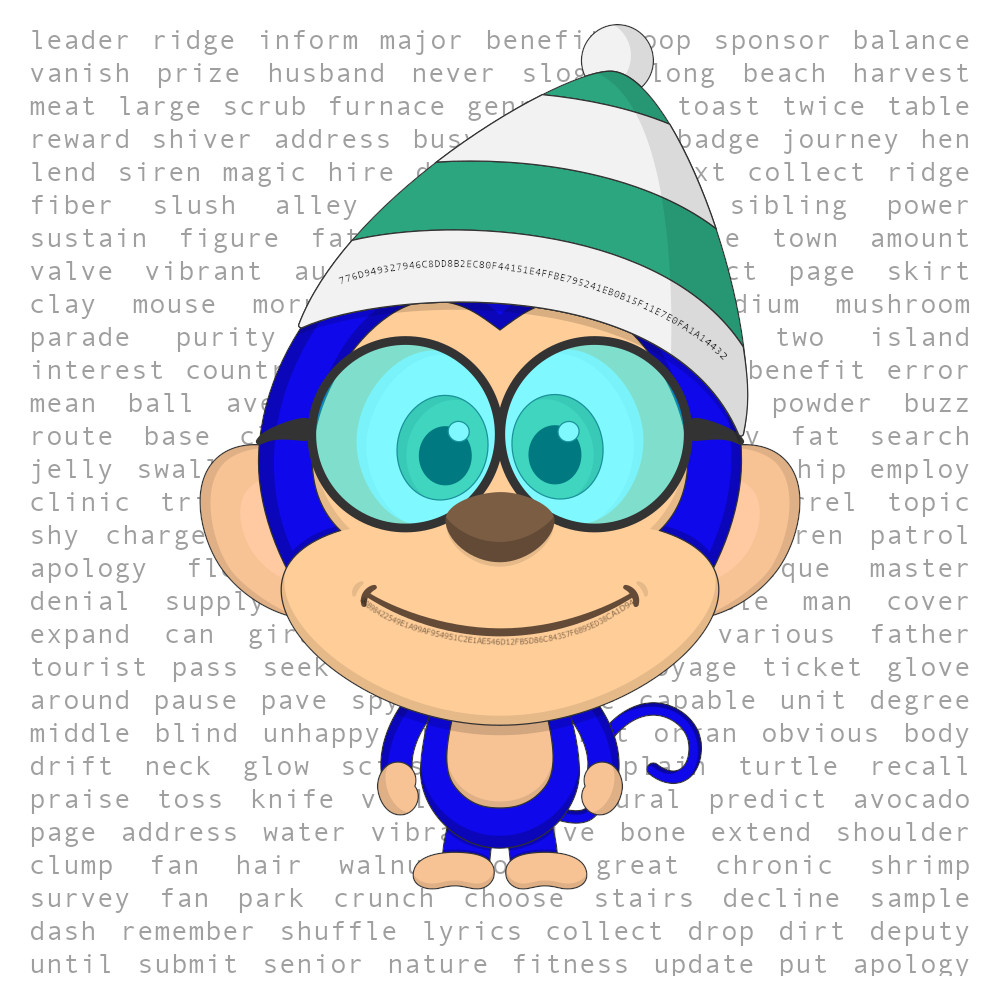

Create Reading Now

Update: it's all gone folks! Thanks for joining in the puzzle. I'll keep it here for those who still want a challenge.

The rules are simple. Be the first to gain access to this Banano wallet with the secret key and it's all yours. All 1,000 BAN (~$10 AUD / $7 USD at the time of writing). If the wallet has 0 balance, it means someone has successfully cracked the puzzle and liquidated the account!

Here are the guidelines for solving the puzzle:

- You'll be looking for a key that you'll be able to import using a wallet like vault.banano.cc

- The prize wallet address is ban_3cq86yu7316c59zjhyonm59nce9mdaorttazyysi634qyxi91qrqdhyjkuef

- Everything you need to find the key is in the below MonKey image!

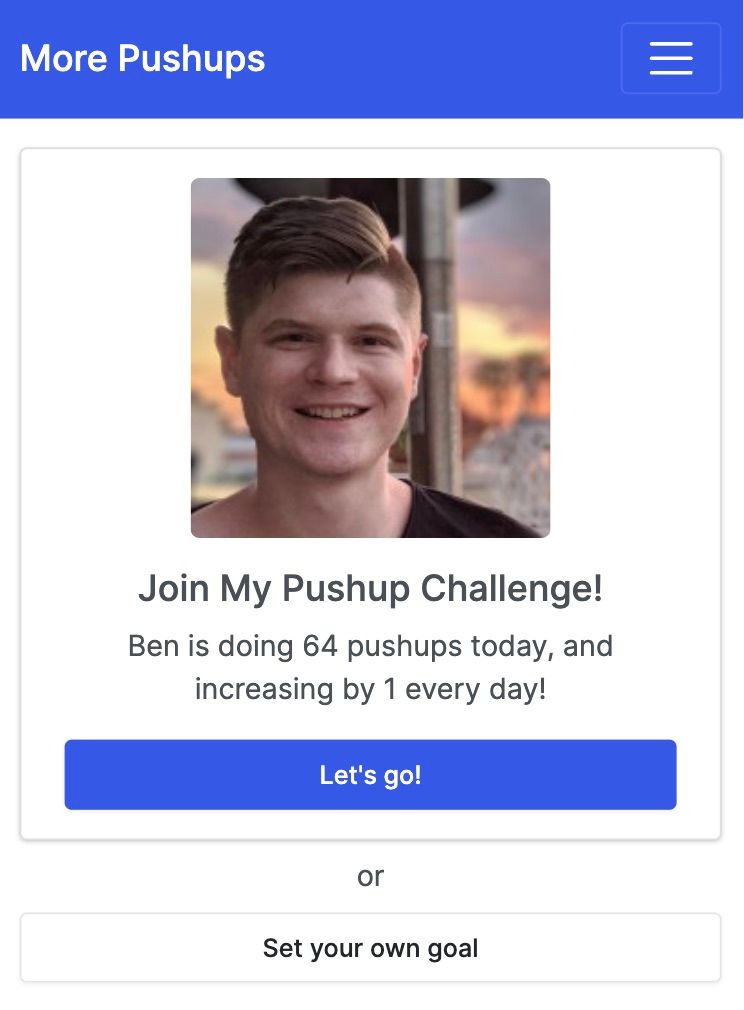

At the beginning of this year, I set myself a goal of increasing the number of pushups I did each day. 1 on January 1st, 365 on December 31st. I'm not talking consecutive repetitions (I'm not that hardcore!), but just throughout the 24 hour period.

There were various pushup apps out there, but none that I truly loved, or that allowed me to "track my moving goal" just how I wanted to. So I went ahead and created More Pushups.

Some of the cool features it has:

- You can set your starting number and how many more pushups you want to do each day

- You can either manually enter the number of pushups as you do them or you can record them by touching your nose to the phone

- You can see a neat history of how you are trending day-by-day against your goal

- All data is stored locally and never transmitted from your device, no need to "create an account"

- You can share your goal with others by generating a "share" link that invites others to join you in setting their own pushup goal. Here is my sharing link!
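The moving goal itself is simple arithmetic: the starting number plus the daily increase multiplied by the days elapsed. Here is a minimal sketch, assuming a fixed increase per day; the function names and the example year (2023, chosen only because it is not a leap year) are illustrative and not taken from the app's source.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

/** Whole days between two calendar dates, computed in UTC to avoid daylight-saving drift. */
function daysBetween(start, end) {
  const a = Date.UTC(start.getFullYear(), start.getMonth(), start.getDate());
  const b = Date.UTC(end.getFullYear(), end.getMonth(), end.getDate());
  return Math.round((b - a) / MS_PER_DAY);
}

/** Goal for `today`, given a starting number and a fixed daily increase. */
function goalFor(startDate, today, startingCount, increasePerDay) {
  return startingCount + increasePerDay * daysBetween(startDate, today);
}

const jan1 = new Date(2023, 0, 1);
console.log(goalFor(jan1, new Date(2023, 0, 1), 1, 1));   // 1
console.log(goalFor(jan1, new Date(2023, 11, 31), 1, 1)); // 365
```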

It's a progressive web application that can be installed on the home screen on your mobile device. On Android you can even set up daily reminder notifications to get you going (Apple doesn't support web-based push notifications).

Like almost all my projects, it's also available as open source.
