An article I came across last week: the author crawled 1B pages on AWS in just 25.5 hours, and after performance tuning the cost came to US$462: "Crawling a billion web pages in just over 24 hours, in 2025 (via)".

The author mentions a 2012 post, "How to crawl a quarter billion webpages in 40 hours", which took about 39.5 hours and US$580 to crawl 250M pages:
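To make the two crawls easier to compare, here is a small back-of-the-envelope calculation using only the figures quoted above. It assumes pages were fetched at a uniform rate over the whole wall-clock time and uses nominal dollars (no inflation adjustment); `CRAWLS` and `summarize` are hypothetical names for illustration.

```python
# Figures quoted in the two posts: wall-clock hours, cost in US$, pages crawled
CRAWLS = {
    "2012": {"hours": 39.5, "cost_usd": 580, "pages": 250_000_000},
    "2025": {"hours": 25.5, "cost_usd": 462, "pages": 1_000_000_000},
}


def summarize(hours: float, cost_usd: float, pages: int) -> dict:
    """Return average pages/second and US$ per million pages.

    Assumes a uniform fetch rate over the whole run (no ramp-up modeled).
    """
    return {
        "pages_per_sec": pages / (hours * 3600),
        "usd_per_million_pages": cost_usd / (pages / 1_000_000),
    }


if __name__ == "__main__":
    for year, crawl in CRAWLS.items():
        s = summarize(**crawl)
        print(f"{year}: {s['pages_per_sec']:,.0f} pages/s, "
              f"${s['usd_per_million_pages']:.2f} per million pages")
```

By this rough measure the 2025 crawl averages about 10,900 pages/s versus about 1,760 pages/s in 2012, and costs roughly US$0.46 per million pages versus US$2.32, i.e. about 5x cheaper per page in nominal terms.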

For some reason, nobody’s written about what it takes to crawl a big chunk of the web in a while: the last point of reference I saw was Michael Nielsen’s post from 2012.

This crawl is HTML only (no JavaScript). The 2012 post doesn't say so explicitly, but it presumably ignored JavaScript as well:

HTML only. The elephant in the room. Even by 2017 much of the web had come to require JavaScript. But I wanted an apples-to-apples comparison with older web crawls, and in any case, I was doing this as a side project and didn’t have time to add and optimize a bunch of playwright workers.

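The article raises a few interesting problems. The first is that parsing turns out to be very CPU-hungry and is one of the bottlenecks, mainly because pages are much larger now than in 2012, in both median and mean size: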

Profiles showed that parsing was clearly the bottleneck, but I was using the same lxml parsing library that was popular in 2012 (as suggested by Gemini). I eventually figured out that it was because the average web page has gotten a lot bigger: metrics from a test run indicated the P50 uncompressed page size is now 138KB, while the mean is even larger at 242KB - many times larger than Nielsen’s estimated average of 51KB in 2012!
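A rough sketch of what those page sizes mean in aggregate. It assumes 1 KB = 1000 bytes and, as a simplification not stated in the article, applies each run's mean page size to every crawled page; `total_tb` is a hypothetical helper.

```python
# Page sizes quoted above, in KB (uncompressed)
KB_2012_MEAN = 51    # Nielsen's 2012 estimate of the average page
KB_2025_P50 = 138    # median from the 2025 test run
KB_2025_MEAN = 242   # mean from the 2025 test run


def total_tb(pages: int, mean_kb: float) -> float:
    """Rough uncompressed HTML volume in TB, assuming 1 KB = 1000 bytes
    and that every page is the mean size."""
    return pages * mean_kb * 1000 / 1e12


if __name__ == "__main__":
    print(f"2025 median vs 2012 mean: {KB_2025_P50 / KB_2012_MEAN:.1f}x")
    print(f"2025 mean vs 2012 mean:   {KB_2025_MEAN / KB_2012_MEAN:.1f}x")
    print(f"2012 crawl: ~{total_tb(250_000_000, KB_2012_MEAN):.0f} TB of HTML")
    print(f"2025 crawl: ~{total_tb(1_000_000_000, KB_2025_MEAN):.0f} TB of HTML")
```

Under these assumptions the parser has to chew through on the order of 240 TB of HTML in 2025 versus roughly 13 TB in 2012, which is consistent with parsing showing up as a CPU bottleneck.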

His fix was to switch parsing libraries, from lxml to selectolax, which gave a huge performance boost:

I switched from lxml to selectolax, a much newer library wrapping Lexbor, a modern parser in C++ designed specifically for HTML5. The page claimed it can be 30 times faster than lxml. It wasn’t 30x overall, but it was a huge boost.
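A minimal sketch of the kind of work a crawler's parser does, extracting links, using selectolax's Lexbor backend (`selectolax.lexbor.LexborHTMLParser`) when it is installed and falling back to the standard library's `html.parser` otherwise. This is not the author's code, and `extract_links` is a hypothetical name; it only illustrates the API, it does not benchmark anything.

```python
from html.parser import HTMLParser

try:
    # selectolax's Lexbor-based parser (pip install selectolax)
    from selectolax.lexbor import LexborHTMLParser
except ImportError:
    # Fall back to the standard library if selectolax is not available
    LexborHTMLParser = None


class _HrefCollector(HTMLParser):
    """Stdlib fallback: collect href values from <a> start tags."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def extract_links(html: str) -> list[str]:
    """Return non-empty href values of all <a> elements, in document order."""
    if LexborHTMLParser is not None:
        tree = LexborHTMLParser(html)
        return [
            node.attributes["href"]
            for node in tree.css("a[href]")
            if node.attributes.get("href")
        ]
    collector = _HrefCollector()
    collector.feed(html)
    collector.close()
    return collector.hrefs


if __name__ == "__main__":
    page = '<p><a href="/a">A</a> <a href="https://example.com/b">B</a> <a>none</a></p>'
    print(extract_links(page))
```

Both code paths return the same list for simple pages like this one; the difference the article reports is in speed, since Lexbor is a C parser while `html.parser` is pure Python.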

The second is the overhead of the HTTPS handshake:

That said, one part of fetching got harder: a LOT more websites use SSL now than a decade ago. This was crystal clear in profiles, with SSL handshake computation showing up as the most expensive function call, taking up a whopping 25% of all CPU time on average, which - given that we weren’t near saturating the network pipes, meant fetching became bottlenecked by the CPU before the network!
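To put the 25% figure in perspective, an Amdahl's-law style bound: if the crawler is CPU-bound and a fraction $f = 0.25$ of CPU time goes to TLS handshakes, then even removing that cost entirely (a hypothetical best case, not something the article reports doing) would raise throughput by at most:

$$
S_{\max} = \frac{1}{1 - f} = \frac{1}{1 - 0.25} = \frac{4}{3} \approx 1.33
$$

The remaining 75% of CPU time, parsing included, still sets the ceiling.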

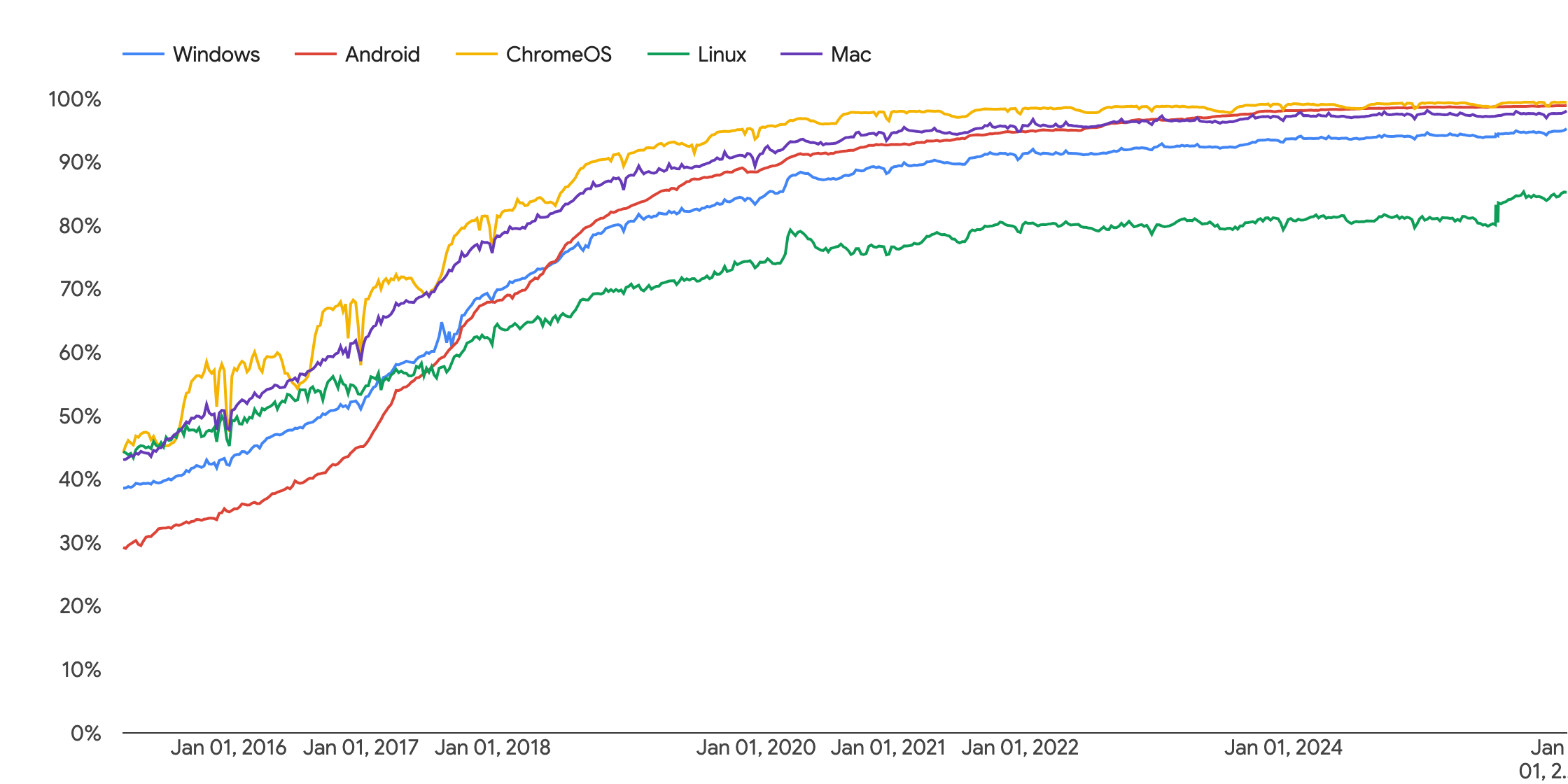

Chrome's telemetry shows how quickly HTTPS has grown. The key milestone is October 2015, when Let's Encrypt obtained a cross-signature from IdenTrust, so its certificates became trusted by existing browsers:

So with today's hardware and software, obtaining the raw data is no longer much of a problem (and it was already feasible back in 2012...).