
We often get asked if homomorphic encryption is used at scale today (i.e. deployed to hundreds of millions of users). The answer is yes, primarily thanks to private information retrieval!

If you have an iPhone, each time you get a call today you’re using private information retrieval (PIR) under the hood to look up information about the caller id without revealing to the server who’s calling you. Additionally, if you happen to use Facebook’s end-to-end encrypted Messenger app, you’re using PIR each time you click a link in your chat. These links are checked against known malicious links in a way that doesn’t compromise the user’s privacy. There are in fact more wide scale deployments of PIR but these are some of the more interesting consumer-facing ones.

If you’re familiar with Ethereum’s privacy roadmap, PIR fits most cleanly into the bucket of “private reads,” enabling users to query or browse Ethereum apps without worrying about surveillance from, say, their RPC provider. You can learn more about the effort to bring private reads to Ethereum here.

In this blog post, we'll be providing an overview of PIR, along with a demo on how we can bring privacy to DNS so that you can privately look up websites. We chose DNS as it's something many people are familiar with and a good introductory medium with which to showcase PIR. If you'd like to jump straight to our demo, you can check it out here.

So what is private information retrieval and what does it have to do with homomorphic encryption?

Developing intuition for PIR

Private information retrieval (PIR for short) can be thought of as a way to privately read some information from a database. Specifically, PIR enables a user (often referred to as the "client") to retrieve an item from a database without the database owner (the "server") knowing which item was retrieved! Many PIR constructions rely on homomorphic encryption, not to be confused with fully homomorphic encryption (FHE) which is more than a decade younger than PIR.

The strawman construction of a PIR scheme simply involves the server sending the entire database back to the user. While this construction achieves our privacy goal of the server not learning which item the user wants to look up, it is obviously unsatisfactory. Thus, one goal of PIR is to minimize the data that has to be shared between the user and server (in technical speak, reduce communication). Another major goal is to reduce the amount of work the server has to do in providing an answer to the user; this is what most of the research work is focused on today (reduce computation). Homomorphic encryption enables computation directly over encrypted data and will ultimately help in reducing the amount of communication between the client and server.

PIR constructions fall into two camps: those that are “single server” and those that are “multi-server.” Multi-server PIR looks more like MPC in that to obtain privacy you must assume the servers do not collude with one another. In this article, we’ll be looking at single-server PIR which often relies on lattice-based cryptography (which forms the foundation of FHE as well).

A brief math detour

We'll put the encryption part aside for now and look at how we can use matrix-vector multiplication to just perform an array lookup on a database.

Doing lookups in the clear

Suppose we have a database containing the values \([16, 15, 14, 13, 12, 11, 10, 9, 8, 7, 6, 5, 4, 3, 2, 1]\). We can pack these into the following matrix:

\[ D=\begin{bmatrix} 16 & 15 & 14 & 13 \\ 12 & 11 & 10 & 9 \\ 8 & 7 & 6 & 5 \\ 4 & 3 & 2 & 1 \\ \end{bmatrix} \]

To make a query against the server, the client must compute the row \(r\) and column \(c\) indices from the zero-based item index \(i\), where

\[r = \lfloor i / 4 \rfloor\]

\[c = i\ \mathrm{mod}\ 4\]

Suppose the client wants to retrieve the item at index \(i=6\). This gives \(r=1\) and \(c=2\).

The client constructs a vector with zeros everywhere except in the \(c=2\) row where they place a 1:

\[ q=\begin{bmatrix} 0 \\ 0 \\ 1 \\ 0 \\ \end{bmatrix}\]

Now, the server can compute:

\[ y=Dq= \begin{bmatrix} 16 & 15 & 14 & 13 \\ 12 & 11 & 10 & 9 \\ 8 & 7 & 6 & 5 \\ 4 & 3 & 2 & 1 \\ \end{bmatrix}\begin{bmatrix} 0 \\ 0 \\ 1 \\ 0 \\ \end{bmatrix} =\begin{bmatrix} 14 \\ 10 \\ 6 \\ 2 \\ \end{bmatrix} \]

and send \(y\) back to the user. The user can then locally extract the entry at position \(r=1\), which is 10, the value at index 6.

Obviously this construction provides little privacy for the user since the server can see the user's request and deduce the user is interested in the value at index 6.
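The lookup above can be sketched in a few lines of Python. This is only an illustration of the arithmetic in the clear; the helper names (index_to_row_col, build_query, mat_vec) are ours and not part of any PIR library.

```python
def index_to_row_col(i, width):
    """Split a zero-based item index into (row, column) for a matrix of the given width."""
    return divmod(i, width)

def build_query(col, width):
    """Selection vector: 1 in the wanted column position, 0 everywhere else."""
    return [1 if j == col else 0 for j in range(width)]

def mat_vec(D, q):
    """Plain matrix-vector product, as computed by the server."""
    return [sum(d * x for d, x in zip(row, q)) for row in D]

D = [
    [16, 15, 14, 13],
    [12, 11, 10, 9],
    [8, 7, 6, 5],
    [4, 3, 2, 1],
]

r, c = index_to_row_col(6, 4)      # client side: r = 1, c = 2
y = mat_vec(D, build_query(c, 4))  # server side: the selected column of D
value = y[r]                       # client side: pick out the wanted row
```

Note that the query vector is sent in the clear here, which is exactly the problem the next section addresses.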

Encrypting the client's request

So how will we augment the prior construction to hide the user's request from the server?

At the heart of many PIR schemes lies some form of homomorphic encryption, meaning an encryption scheme supporting computation directly over encrypted data (ciphertexts). Suppose we have a linearly homomorphic encryption scheme, meaning one that supports the following operations over messages \(m_a\) and \(m_b\):

- Multiplication by a plaintext scalar \(b\): \(\mathrm{Enc}(m_a * b) = b * \mathrm{Enc}(m_a)\)

- Addition of ciphertexts: \(\mathrm{Enc}(m_a + m_b) = \mathrm{Enc}(m_a) + \mathrm{Enc}(m_b)\)

Now that we know what a linearly homomorphic encryption scheme is, let's see how the user can privately look up items in a database. Instead of sending a vector with plaintext data, the user now sends over the following vector where all the values are encrypted under a key they own:

\[ q=\begin{bmatrix} \mathrm{Enc}(0) \\ \mathrm{Enc}(0) \\ \mathrm{Enc}(1) \\ \mathrm{Enc}(0) \\ \end{bmatrix} \]

Since the encryption scheme is linearly homomorphic, the server can directly compute the following:

\[ y=Dq=\begin{bmatrix} 16 & 15 & 14 & 13 \\ 12 & 11 & 10 & 9 \\ 8 & 7 & 6 & 5 \\ 4 & 3 & 2 & 1 \\ \end{bmatrix}\begin{bmatrix} \mathrm{Enc}(0) \\ \mathrm{Enc}(0) \\ \mathrm{Enc}(1) \\ \mathrm{Enc}(0) \\ \end{bmatrix} \]
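To see why this works, take the entry of \(y\) for row \(r=1\) (counting from zero) and apply the two homomorphic operations listed earlier, scalar multiplication and ciphertext addition:

\[ y_1 = 12 \cdot \mathrm{Enc}(0) + 11 \cdot \mathrm{Enc}(0) + 10 \cdot \mathrm{Enc}(1) + 9 \cdot \mathrm{Enc}(0) = \mathrm{Enc}(12 \cdot 0 + 11 \cdot 0 + 10 \cdot 1 + 9 \cdot 0) = \mathrm{Enc}(10) \]

The server only ever handles ciphertexts, so it never learns which position of the query held the 1.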

The user receives the following result from the server:

\[ y=\begin{bmatrix} \mathrm{Enc}(14) \\ \mathrm{Enc}(10) \\ \mathrm{Enc}(6) \\ \mathrm{Enc}(2) \\ \end{bmatrix} \]

and can decrypt it using their private key to get back

\[ \begin{bmatrix} 14 \\ 10 \\ 6 \\ 2 \\ \end{bmatrix} \]

This solution at least reduces the communication; instead of returning the full \(N \times N\) matrix to the user, the server sends back a single column of \(N\) entries. Modern constructions are a lot more interesting than what we've shown but hopefully our brief walkthrough provides some intuition for how homomorphic encryption enables private database lookups.
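For readers who prefer code, here is a minimal sketch of the encrypted lookup using a toy LWE-style scheme. Everything in it is an assumption made for illustration: the parameters Q, P and N, the noise range, and the function names are ours, they are far too small to be secure, and they do not correspond to any deployed PIR scheme.

```python
import random

Q = 2**32        # ciphertext modulus (toy choice)
P = 2**8         # plaintext modulus: messages are integers in [0, P)
DELTA = Q // P   # scaling factor that keeps the message above the noise
N = 16           # secret dimension, far too small to be secure

def keygen():
    return [random.randrange(Q) for _ in range(N)]

def encrypt(key, m):
    """Ciphertext (a, b) with b = <a, key> + noise + DELTA * m (mod Q)."""
    a = [random.randrange(Q) for _ in range(N)]
    noise = random.randint(-1, 1)
    b = (sum(x * s for x, s in zip(a, key)) + noise + DELTA * m) % Q
    return a, b

def decrypt(key, ct):
    """Remove the mask <a, key>, then round away the small noise."""
    a, b = ct
    noisy = (b - sum(x * s for x, s in zip(a, key))) % Q
    return ((noisy + DELTA // 2) // DELTA) % P

def add(ct1, ct2):
    """Enc(m_a) + Enc(m_b) = Enc(m_a + m_b)"""
    (a1, b1), (a2, b2) = ct1, ct2
    return [(x + y) % Q for x, y in zip(a1, a2)], (b1 + b2) % Q

def scalar_mul(k, ct):
    """k * Enc(m) = Enc(k * m) for a small plaintext scalar k"""
    a, b = ct
    return [(k * x) % Q for x in a], (k * b) % Q

def server_answer(D, enc_query):
    """Matrix-vector product computed entirely over ciphertexts."""
    answer = []
    for row in D:
        acc = scalar_mul(row[0], enc_query[0])
        for value, ct in zip(row[1:], enc_query[1:]):
            acc = add(acc, scalar_mul(value, ct))
        answer.append(acc)
    return answer

D = [[16, 15, 14, 13], [12, 11, 10, 9], [8, 7, 6, 5], [4, 3, 2, 1]]
key = keygen()                                                     # stays with the client
enc_query = [encrypt(key, 1 if j == 2 else 0) for j in range(4)]   # column c = 2
enc_answer = server_answer(D, enc_query)                           # server never sees the key
decrypted = [decrypt(key, ct) for ct in enc_answer]
```

Decryption stays correct here because the accumulated noise (at most the sum of one row of the database, 58 in this example) is far below DELTA / 2. Real schemes choose their parameters so that this bound holds for the actual database size.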

Toolbox for modern PIR constructions

If you're more mathematically inclined, you won't be surprised to learn that many single-server PIR constructions rely on lattice-based cryptography which nicely supports matrix-vector multiplication as we saw above.

Intuitively, it seems like if the server truly doesn't learn what item the user is interested in, then the server must necessarily "touch" every item in the database. Such a setup is prohibitively expensive. Indeed, in the simple construction above, the server touched every element in the database matrix when performing the matrix-vector multiplication. One option to address this is batching many different user queries together when doing a single linear scan over the database so that the amortized cost per query is lower.
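As a rough illustration of batching, the sketch below answers several selection-vector queries during one pass over the database. It works on plaintext numbers to keep the idea visible; in a real scheme each query entry would be a ciphertext, and the function name answer_batch is our own.

```python
def answer_batch(database, queries):
    """Answer several queries while reading each database entry exactly once.

    database: list of rows
    queries:  list of selection vectors, each as long as a database row
    returns:  one answer vector (one entry per database row) for every query
    """
    answers = [[0] * len(database) for _ in queries]
    for r, row in enumerate(database):
        for c, value in enumerate(row):           # single linear scan over the data
            for k, query in enumerate(queries):   # reuse the loaded value for every query
                answers[k][r] += value * query[c]
    return answers

D = [[16, 15, 14, 13], [12, 11, 10, 9], [8, 7, 6, 5], [4, 3, 2, 1]]
batch = answer_batch(D, [[0, 0, 1, 0], [1, 0, 0, 0]])
```

The total arithmetic still grows with the number of queries, but the cost of streaming the database through memory is paid once and shared across the batch.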

To provide better efficiency, a lot more machinery is involved in modern day constructions. Intuitively, there are a few levers researchers can pull on: communication, security, and correctness. Specifically, we can reduce the amount of computation that needs to be done by the server (thereby improving response times) if we're okay with the user or server performing some "pre-processing" or if we don't mind introducing some additional communication between the two parties. Another trick is to relax security/privacy, meaning the server learns that the user is interested in, say, one of the first 100 items in a database with 1000 total entries. Finally, researchers can relax correctness, meaning there may be a non-negligible probability that the client does not get back the item they were looking for; however, the client will know that they received the wrong item so they can submit another private query.

Finally, PIR constructions often assume the user provides the server with the relevant index, meaning the user must know the item's location in the database. This seems impractical for many applications but there is a way around this using probabilistic filters to obtain keyword search.

Augmenting DNS to provide private lookups

Now that we've covered PIR, let's look at how to provide private lookups in the context of DNS.

DNS and HTTPS 101

Before diving into our demo, we'll provide a brief rundown of DNS and the privacy it does and does not provide today. Feel free to skip this section if you're familiar with DNS.

Suppose you want to check out the latest happenings in FHE, so you open up your web browser, type blog.sunscreen.tech into your address bar and hit enter. Let's take a look under the hood to see how this actually works via Domain Name System (DNS).

Akin to how phone numbers direct your call to Alice's phone and not Bob's, an Internet Protocol (IP) address allows the network to direct your request to Sunscreen and not YouTube. Unfortunately, just as remembering your friends' phone numbers is hard, it's also hard to remember which IP address goes with which service. The Domain Name System (DNS) was introduced in 1983 to solve this problem. Think of DNS like an old school phone book; you can give it the name of a service (blog.sunscreen.tech) and it will give you the IP address (i.e. "number"). When you type in a URL and hit enter in your browser it actually makes 2 network requests: the first is a DNS lookup to convert the name into a number and the second is to actually fetch the blog.

When we run the nslookup command (to manually perform a DNS request) in our computer's terminal for sunscreen.tech, it responds that there are 4 IP addresses corresponding to sunscreen.tech: 143.204.160.48, 143.204.160.16, 143.204.160.54, and 143.204.160.53.
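If you'd rather do the same lookup from code, a minimal Python sketch using the standard library's socket.getaddrinfo looks like this. The helper name resolve is ours, and the addresses returned for a given name depend on your resolver and may differ from the ones listed above.

```python
import socket

def resolve(hostname):
    """Return the sorted IPv4 addresses the system resolver reports for hostname."""
    infos = socket.getaddrinfo(
        hostname, None, family=socket.AF_INET, type=socket.SOCK_STREAM
    )
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})

# Example (needs network access): resolve("sunscreen.tech")
```

Like nslookup, this sends the name to whichever DNS server your machine is configured to use, so that server learns what you looked up.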

Security

Unfortunately, DNS as originally designed has a few privacy and security problems:

- Due to the lack of encryption, passive observers can see what host your browser is requesting.

- The DNS server operator knows which host you're looking up.

- Even worse, DNS lacks message authentication, allowing active adversaries to modify either the DNS request or response. This allows the adversary to trick your browser into sending the request to a malicious host.

While the first two issues aren't ideal, the third is extremely dangerous given the distributed nature of the internet. To address this, DNSSEC was developed in the mid-1990s to provide cryptographic authentication to ensure your lookup isn't tampered with. However, passive network observers can still see what address you're looking up and easily track your browsing behavior. With DNS over HTTPS, we additionally get encryption, which hides the request and response from prying third-party eyes.
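To give a feel for what a DNS over HTTPS request looks like, the sketch below builds a standard DNS query message and encodes it into a GET URL in the style described by RFC 8484. The resolver URL is a placeholder, the helper names are ours, and the code only constructs the request; the privacy from network observers comes from sending it over HTTPS.

```python
import base64
import struct

def build_dns_query(hostname, qtype=1):
    """Build a minimal DNS query message (wire format) with a single question."""
    # Header: ID 0, flags 0x0100 (recursion desired), 1 question, no other records.
    header = struct.pack("!HHHHHH", 0, 0x0100, 1, 0, 0, 0)
    # QNAME: each label is prefixed by its length, terminated by a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", qtype, 1)  # QTYPE (1 = A), QCLASS (1 = IN)
    return header + question

def doh_get_url(resolver_url, hostname):
    """Encode the query as unpadded base64url in the 'dns' parameter of a GET URL."""
    message = build_dns_query(hostname)
    encoded = base64.urlsafe_b64encode(message).rstrip(b"=").decode("ascii")
    return f"{resolver_url}?dns={encoded}"

# "https://dns.example/dns-query" is a placeholder, not a real resolver.
url = doh_get_url("https://dns.example/dns-query", "www.example.com")
```

The hostname is still fully visible to whoever operates the resolver once the request is decrypted on their side.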

However, the DNS server itself can still see the user's request. Is this as good as we can hope to do? Intuitively, it feels like the server must be able to view the user's query in order to process a response.

Our demo

As it turns out, we can additionally hide the request from even the DNS server itself using PIR.

We'll be using FrodoPIR, a lattice-based PIR scheme published in 2023 by the Brave team. What's great about this construction is that it's inexpensive to deploy and run in practice and requires little computation from the end user. Unfortunately, it blows up the database size by ~3x, but for our purposes this won't be much of an issue since the database is relatively small to begin with.

How does this work at a high level?

- Preprocessing: First, the server converts and "compresses" the database into a matrix (a fancier version of the \(D\) above). The database here consists of various websites (e.g. sunscreen.tech) along with their IP address. The client downloads this compressed matrix along with some additional info and uses that to perform some preprocessing for future queries (allowing them to save time later!).

- Send encrypted request: Next, the client creates an encrypted query vector with an indicator value for the corresponding entry in their vector (similar to the \(q\) vector in the simple construction above) and sends this vector over to the server.

- Server lookup: The server then performs the database lookup using the encrypted query vector and returns an encrypted result to the client. Roughly speaking, this is similar to the matrix-vector multiplication of \(Dq\) above to get the encrypted vector result \(y\).

- Receive result: Finally, the client uses their private key to decrypt and get the result (the website they wanted)!
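The four steps can be sketched with a toy LWE construction in the spirit of FrodoPIR. This is our own simplified illustration, not FrodoPIR's code or parameters: the constants, the use of Python's random module to expand the seed, the function names, and the placeholder addresses are all assumptions, and the sizes are far too small to be secure.

```python
import random

Q = 2**32         # ciphertext modulus (toy choice)
P = 2**8          # plaintext modulus: every database cell is one byte
DELTA = Q // P    # scaling factor separating the message from the noise
N = 8             # LWE dimension, insecurely small for readability

def derive_matrix(seed, num_rows):
    """Expand a public seed into the N x num_rows matrix A."""
    rng = random.Random(seed)
    return [[rng.randrange(Q) for _ in range(num_rows)] for _ in range(N)]

def vec_mat(v, M):
    """Row vector times matrix, modulo Q."""
    return [sum(x * y for x, y in zip(v, col)) % Q for col in zip(*M)]

def server_preprocess(db, seed):
    """Preprocessing (server): compute the hint M = A * D that clients download."""
    return [vec_mat(row, db) for row in derive_matrix(seed, len(db))]

def client_preprocess(seed, num_rows, hint):
    """Preprocessing (client): sample a secret s, then b = sA + e and c = sM."""
    A = derive_matrix(seed, num_rows)
    s = [random.randrange(Q) for _ in range(N)]
    e = [random.randint(-1, 1) for _ in range(num_rows)]
    b = [(x + err) % Q for x, err in zip(vec_mat(s, A), e)]
    return b, vec_mat(s, hint)

def client_query(b, index):
    """Send encrypted request: add a scaled indicator for the wanted row to b."""
    query = list(b)
    query[index] = (query[index] + DELTA) % Q
    return query

def server_respond(db, query):
    """Server lookup: one vector-matrix product over the whole database."""
    return vec_mat(query, db)

def client_decode(response, c):
    """Receive result: strip the mask c, then round away the noise."""
    return [(((r - m) % Q + DELTA // 2) // DELTA) % P for r, m in zip(response, c)]

# Each row stores one IPv4 address as four bytes.
db = [
    [143, 204, 160, 48],   # sunscreen.tech (one of the addresses listed earlier)
    [192, 0, 2, 1],        # placeholder documentation addresses
    [198, 51, 100, 7],
    [203, 0, 113, 9],
]
seed = 2024
hint = server_preprocess(db, seed)
b, c = client_preprocess(seed, len(db), hint)
response = server_respond(db, client_query(b, 0))
record = client_decode(response, c)
```

In this sketch a preprocessed pair (b, c) should be used for one query only, since subtracting two queries built from the same b would reveal which positions were changed. Note also that the client still has to know the row index, which is the first challenge discussed next.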

Challenges

Unfortunately, things aren't entirely straightforward.

- Standard PIR schemes (like FrodoPIR) require the client to know the location of the entry in the database. To get around this problem, we'll use ChalametPIR which provides a nice way to convert FrodoPIR (specifically PIR schemes relying on the Learning With Errors problem) into a scheme supporting keyword search. This is achieved via binary fuse filters, generally a faster and more memory efficient alternative to Bloom filters.

- What happens if the client looks up an entry (i.e. website) that doesn't exist? To avoid sending random garbage back to the user, we get around this by checksumming the value entry and returning an invalid checksum with overwhelming probability if the entry isn't in the database.
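The checksum idea in the second point can be sketched as follows. The exact checksum used in our demo isn't described in this post, so the choice below (a SHA-256 digest truncated to 4 bytes and appended to the value) and the function names are assumptions for illustration only.

```python
import hashlib

CHECKSUM_LEN = 4  # bytes; an assumed length for this sketch

def pack_record(value: bytes) -> bytes:
    """Server side: append a checksum to the value before storing it in the database."""
    return value + hashlib.sha256(value).digest()[:CHECKSUM_LEN]

def unpack_record(record: bytes):
    """Client side: return the value if its checksum verifies, otherwise None."""
    if len(record) <= CHECKSUM_LEN:
        return None
    value, checksum = record[:-CHECKSUM_LEN], record[-CHECKSUM_LEN:]
    expected = hashlib.sha256(value).digest()[:CHECKSUM_LEN]
    return value if checksum == expected else None

stored = pack_record(b"143.204.160.48")
found = unpack_record(stored)                         # a genuine entry verifies
garbage = stored[:-1] + bytes([stored[-1] ^ 0xFF])    # a corrupted record
missing = unpack_record(garbage)                      # fails the check
```

With a 4-byte checksum, uniformly random bytes would pass the check with probability about 1 in 2^32, so the client can treat a failed check as "no such entry".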

Our demo putting all these concepts together is available here. You can look up various websites, trying out old school DNS, DNSSEC, DNS over HTTPS, and then finally our DNS using PIR.

So can I integrate PIR into my app today?!

Potentially. If you're primarily interested in point lookups and have a relatively small database (say <100GB) that you're okay updating say once or twice a day, then yes.

However, depending on what kind of data is in the database and what sort of lookups you want to offer, there may be some complexity in reformatting the database to support PIR. The most straightforward query to support today is point lookups. If you're hoping to support some sort of SQL, graph, or vector database, there will be a number of challenges to bring PIR to your app.

Additionally, you'll want to consider how large the database is and how often the database needs to be updated. Single-server PIR (what we looked at here) tends to have some performance issues beyond databases of a few hundred GB. If you'd like to work with larger databases, it's worth exploring multi-server PIR schemes. Furthermore, PIR can be tricky to work with if you need the database to be updated in real time. You may already have a sense for why database updates are problematic based on the preprocessing step above. Pre-processing usually depends on the contents of the database and would thus need to be repeated any time the database changes.

Finally, if you've worked with FHE before, you may know that adding privacy to the computation slows things down. We'll run into a similar issue with PIR so you'll have to take into account if your use case can support a bit more added latency. Many of the real-world deployments of PIR (e.g. Apple, Meta) are highly customized to get good performance.

Parting thoughts

To bring meaningful privacy to the web, we need PIR, often in conjunction with other advanced cryptography.

Although we've focused on consumer use cases in this blog, we think much more value can be unlocked by bringing PIR to B2B applications, starting with the crypto and fintech space. Some of the most exciting use cases we've explored are enabling government customers to privately look up individual transaction history data on blockchain analytics platforms, as well as enabling crypto exchanges (or more generally fintechs) to cross-query other platforms to identify bad actors.

If you're more technically inclined and want to continue learning about PIR, we highly recommend this video. As always, feel free to reach out with any questions or feedback.

At Sunscreen, we've spent the past few years building what we believe is the most developer-friendly TFHE compiler out there. Our Parasol compiler lets you write your program in C, add a few directives to indicate which inputs should be encrypted, and we handle the rest - parameter selection, circuit optimization, bootstrapping, all of it. If you've read our previous posts, you know how strongly we feel about letting developers "bring their own program" rather than rewriting everything from scratch.

But there's always been a gap between having a working TFHE program and running it in production.

The missing piece

Let's be honest about the state of things. You can write an FHE program, compile it, and run it locally. But what happens when you actually want to deploy it? Suddenly you're managing compute infrastructure, figuring out key lifecycle management, handling orchestration, and worrying about resource allocation. These are hard problems, and they're not cryptography problems. Most teams building with FHE are not (and shouldn't have to be) infrastructure teams.

This is the gap we've been thinking about for a while: how do we get from "cool demo" to "production service"?

Lattica

Today we're excited to announce our partnership with Lattica to bring practical TFHE to production environments.

Here's how the two pieces fit together:

Sunscreen provides the cryptographic engine: our Parasol TFHE compiler transforms your program into optimized encrypted computation, and our runtime executes it.

Lattica provides the rest of the infrastructure around it: orchestration and workflow automation, compute resource allocation, key management, and monitoring.

The result is a workflow that feels much closer to deploying a normal application:

- Compile with Sunscreen: write your TFHE program and compile it locally with Parasol to produce a deployable artifact.

- Deploy to Lattica: upload your compiled artifact to Lattica's platform.

- Configure access and resources: set permissions, choose your compute resources, and control credit usage.

- Accept encrypted queries: your program is live and ready for use.

If you've ever dealt with the pain of trying to stand up FHE infrastructure from scratch, you'll appreciate how much complexity this abstracts away.

“Sunscreen has built a powerful TFHE compiler that makes encrypted programs accessible to developers. At Lattica, our focus is making those programs deployable, scalable, and operable in real environments. This partnership is about closing the gap between cryptographic capability and production reality, so teams can move from prototype to live encrypted services without reinventing infrastructure,” shared Rotem Tsabary, Founder & CEO at Lattica.

“This partnership represents something we've been working toward since we started Sunscreen. We always believed that TFHE would only see real adoption when deploying it felt as natural as deploying any other application. Lattica brings the infrastructure expertise that complements our cryptographic engine, helping privacy builders turn working demos into production services. We’re excited to see what developers will build,” said Ravital Solomon, CEO at Sunscreen.

Why TFHE (and when not)

If you've been following FHE developments, you know there's more than one FHE scheme out there, and the choice matters quite a bit depending on your application. Lattica’s platform makes encrypted compute a cloud-native capability for CKKS, and provides the execution layer that brings sensitive workloads and cloud infrastructure together, so it's worth briefly touching on when you'd reach for each.

CKKS excels at approximate arithmetic on large datasets. It's parallelizable, GPU-accelerated, and ideal for AI workloads. If you're doing data-heavy batch operations, CKKS is likely your best bet.

TFHE is different. It's built for exact computation: comparisons, conditionals, branching logic. The tradeoff is that it's CPU-based and generally more expensive per operation than CKKS for pure arithmetic.

So when do you want TFHE? When precision is a requirement. Smart contracts that need exact balance checks. Voting systems where rounding errors would be catastrophic. Any application involving complex decision logic over encrypted data - if-else branches, comparisons, lookups - this is where TFHE shines.

Our partnership with Lattica means you don't have to choose between CKKS and TFHE at the infrastructure level. Both are available through the same platform, so you can use the right tool for each part of your application.

What we're excited about

This partnership represents something we've been working toward for a long time: making TFHE not just possible but practical. We've always believed that one of the biggest barriers to adoption isn't the cryptography itself - it's the infrastructure around it, such as the deployment complexity and the operational overhead. By partnering with Lattica, we can focus on what we do best (the cryptographic engine) while Lattica handles the infrastructure challenges that have historically kept TFHE out of production environments.

We think this is how TFHE gets adopted in the real world - not by asking every team to become infrastructure experts, but by making encrypted computation something you can deploy as easily as any other service.

Get started

Lattica is offering 3 free compute hours to get you up and running. You can check out the full workflow and sign up on Lattica's website. For more info on Sunscreen's TFHE compiler and some demos of what's possible, take a look at our documentation and demos.

We're excited to see what you build with this. If you're working on an application that could benefit from encrypted computation - whether it's in finance, healthcare, blockchain, or something we haven't thought of yet - we'd love to hear from you.


With fully homomorphic encryption (FHE), we can bring privacy to existing applications and enable entirely new applications across finance, prediction markets, machine learning, and much more.

To create privacy-preserving applications, we need a concept of "verifiable hidden state" so that users can protect their individual data while still allowing that data to be used in an application (e.g. trading, matching, prediction). Threshold FHE accomplishes exactly that. Using this technology, program inputs can be hidden from everyone. The program output may or may not be hidden, depending on the particular use case.

Our team has developed what we call our "secure processing framework" (SPF for short), our end-to-end offering that allows developers to create, deploy, and use FHE programs. Its architecture was inspired by that of CPUs and GPUs, and the underlying technology was developed in-house by our team. We've combined our experiences across cryptography as well as high performance computing, signal processing, and high frequency trading to build FHE in a way that scales.

So what motivated us to build SPF and why isn't FHE production-ready yet?

Challenges building FHE apps

The first set of challenges occurs when creating an FHE application.

- You must determine the best FHE scheme for your needs. There are a few different FHE schemes out there such as BFV, CKKS, and TFHE. You'll need to select which scheme is most appropriate for your particular needs (e.g. are you looking to primarily do arithmetic over floats? Comparisons over integers?)

- You must select FHE scheme parameters very carefully. Unlike some other cryptographic primitives (e.g. zero knowledge proofs), program performance is very sensitive to parameter selection. By choosing too large parameters for a particular use case, you may experience a 10x degradation in performance versus having chosen parameters exactly right. For certain FHE schemes (like ours) there are almost a dozen parameters to configure that are deeply interconnected and influence everything from security to correctness and performance.

- You must structure the program exactly right. Working with FHE today is a bit like programming with computers in the early days; you need to express programs in a very low-level way and consider what operations are relatively expensive vs cheap for the particular FHE scheme. Quite a bit of creativity is needed to effectively translate your high level program into arithmetic or binary circuits. If you've already written your application to operate over data in the clear, you may have to restructure it so that it's "FHE friendly."

Challenges deploying FHE apps

In summary, programming with FHE is similar to Goldilocks looking for just the right fit. Unfortunately, more challenges arise in deploying an FHE app.

- Someone must provide the appropriate hardware/machines for running the FHE program. To get good performance with the technology today, very specific hardware must be set up. As an example, we've seen that working with a 64 core machine helps significantly for our TFHE scheme variant as it allows us to take advantage of parallelism opportunities for various operations.

- Someone must set up a "threshold committee" to hold shares of the FHE secret key. This committee is responsible for responding to decryption requests in a timely manner.

- Someone must set up an access control system and/or a data storage system. How do we ensure that only the "correct" parties have access to data in the clear? This is where access control becomes important. Additionally, FHE-encrypted data is large. If the app is deployed on blockchain, we can't expect to store this on-chain. Where then do we store it and who manages this?

Thus, there's quite a bit of work involved with actually using FHE in an application today beyond just translating your application code into FHE code.

Secure Processing Framework (SPF)

SPF aims to handle all of the above problems on the developer's behalf.

To bring verifiable hidden state to blockchain applications, we make use of threshold FHE, building on our own variant of the Torus FHE scheme (TFHE).

Looking inside

There are 3 major pieces in SPF: the core stack, the control stack, and the data bus.

Core stack

The core stack allows developers to write highly-optimized FHE programs by default. It consists of our LLVM-based compiler, our customized virtual processor, and our low-level TFHE library implementing the cryptographic algorithms necessary for our variant of TFHE. If you'd like to learn more about the core stack, we discuss it in detail in a prior blog post.

Developers only interact with the compiler which abstracts away the usual difficulties in working with FHE. The processor and low-level TFHE library are hidden away.

Control stack

The control stack supports the core stack so that developers can use their FHE programs on chain. It provides important functionalities like threshold decryption (via a decentralized network of nodes holding shares of the secret key), access control (to ensure only authorized parties have access to plaintext data), and off-chain storage.

As we utilize threshold FHE, a network of parties needs to be set up to hold shares of the secret key and respond to decryption requests. We handle this on the developer and user's behalf automatically.

A practical challenge with FHE today is that the encrypted data is fairly large (by blockchain standards at least) and accordingly should not be stored directly on-chain. We provide a data store for ciphertexts and program data. In later versions, we can provide developers with the flexibility of choosing among a few decentralized immutable data storage solutions.

Data bus

So how do you actually interact with SPF on-chain today? That's where the data bus comes in. The data bus can be thought of as a pull oracle; it listens for events emitted on-chain. When you request a program run or decryption, an event is emitted on-chain. The data bus picks up the event and forwards the request. The result of the computation is put back on-chain via a callback.
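The listen, forward, and call back loop can be pictured with a small in-memory simulation. None of the names below (ToyChain, ToyDataBus, the event fields, the handler functions) come from SPF; they are hypothetical stand-ins that only model the flow of a request.

```python
from dataclasses import dataclass, field

@dataclass
class ToyChain:
    """Hypothetical stand-in for a blockchain: an event log plus results stored via callback."""
    events: list = field(default_factory=list)
    results: dict = field(default_factory=dict)

    def emit(self, kind, request_id, payload):
        self.events.append({"kind": kind, "id": request_id, "payload": payload})

    def callback(self, request_id, result):
        self.results[request_id] = result

class ToyDataBus:
    """Hypothetical pull oracle: polls for new events and forwards them off-chain."""

    def __init__(self, chain, handlers):
        self.chain = chain
        self.handlers = handlers   # maps an event kind to an off-chain service
        self.cursor = 0            # index of the next unprocessed event

    def poll(self):
        new_events = self.chain.events[self.cursor:]
        self.cursor = len(self.chain.events)
        for event in new_events:
            result = self.handlers[event["kind"]](event["payload"])
            self.chain.callback(event["id"], result)   # post the result back
        return len(new_events)

# Stand-ins for the off-chain services; real ones would run FHE programs and threshold decryption.
handlers = {
    "run": lambda program: f"ciphertext-handle-for-{program}",
    "decrypt": lambda handle: f"plaintext-of-{handle}",
}
chain = ToyChain()
bus = ToyDataBus(chain, handlers)
chain.emit("run", 1, "my_program")
processed = bus.poll()
```

The point of the sketch is the direction of data flow: contracts only emit events and receive callbacks, while all heavy computation happens off-chain.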

Features

What sets SPF apart from existing FHE offerings is the features it can offer developers today and in the future.

- One program, any chain: Today, blockchains are operating in silos. To unlock the full potential of web3, the community must enable seamless interoperability between various ecosystems. Specifically, we believe there will no longer be chain and language silos in blockchain as there are today. SPF puts this plan in action by enabling developers to write one (FHE) program and use it on any chain, regardless of virtual machine. This makes it easy for developers to experiment with different blockchains as well as deploy their app to multiple chains.

- Bring your own program: Building on our prior belief, developers should not have to re-write their application to take advantage of FHE. In a perfect world, developers can transform their existing application code into FHE-enabled code. To support this experience, we allow developers to write in mainstream programming languages. They simply add directives to indicate which inputs/outputs should be hidden and which functions are FHE programs. Bringing more developers into the blockchain space also requires meeting developers where they're at, whether in terms of programming languages or cryptography knowledge.

- Automatically optimized FHE programs: As we touched upon earlier, a big challenge with FHE today is determining how to structure your FHE program to get good performance. SPF includes a compiler and virtual processor which determine, behind the scenes, how to optimize a developer's code for them.

- Modular stack: There will be better FHE schemes in the future. Accordingly, we designed SPF so that the backend FHE scheme can be swapped out without interfering with the developer's programming experience. We may also wish to switch out our custom instruction set architecture (called PISA) to something more standardized like RISC-V. Modularity also extends to our control stack, so that off-chain data storage mechanisms can be updated or better access control mechanisms can be introduced.

- Built for the hardware endgame: Much like transformers in deep learning were architected to take advantage of the parallelism provided by GPUs, FHE must be architected to suit hardware. What this means in practice is extracting parallelism from how FHE computing is done as well as reducing the critical path in the circuit itself; together, doing these two things can unlock higher throughput and lower latency as specialized hardware for FHE becomes available. This is accomplished in large part by creating our own variant of the TFHE scheme.

Developer workflow

SPF enables developers to bring FHE directly to applications on any chain. You do not need to migrate your program to a new chain, L2, rollup, etc.

For simplicity, we'll look at how to write and deploy your program on Ethereum. Other chains work similarly.

- Write your FHE program in C. While there are some restrictions as to what you can vs can't write today, your application code is just normal C code.

- Compile your FHE program using our compiler. Doing so will create an ELF object file that can be used by Sunscreen.

- Register your program. As part of registration, you'll receive a hash for the binary program file which will be needed to reference your program on-chain.

- Write your accompanying smart contract and deploy the program. Next, you'll write the Solidity dApp that allows users to actually interact with your FHE program. There are particular ways in which this contract must be structured.

- Requesting program runs. To request program execution using SPF, you'll specify the handle of the program to be run along with the number of output parameters. Our data bus monitors the chain for program run requests and routes them to SPF for off-chain execution.

- Requesting decryption. To request decryption of the output parameters, you'll need to specify the handle of the program along with the number of output parameters to decrypt. Our data bus monitors the chain for decryption requests and routes them to the off-chain threshold decryption service for processing. The threshold decryption service will then decrypt the desired value and post the results back on-chain using the specified callback.

Alternatively, if you're interested in deploying FHE apps in web2, we also support that experience with SPF.

The only piece that changes is the last step. Instead, you will:

- Develop an application around your FHE program. This involves creating a frontend and backend that interacts with the SPF service. The FHE component will consist of two main pieces:

- Requesting the SPF service to run your FHE program. You will submit the program, along with the encrypted input data, to an endpoint using an HTTP POST request. The SPF service will respond with a unique identifier for the computation that you can use to track the status of the computation, along with references to resulting ciphertexts.

- Handling the result. Once the SPF service has completed the computation (which you can check by polling our runs SPF REST endpoint), the appropriate party can request that the results be decrypted by making a GET request to our threshold decryption service. This will then return the decrypted result.

We've omitted details about access control as this feature will be developed in a later release.

A delicate balance between on-chain and off-chain

To ensure FHE programs can be used on any chain without having to modify the chain's architecture or consensus mechanism, we must move a number of tasks off-chain.

Specifically, we store FHE programs, their inputs, and any encrypted program outputs off-chain; only links/handles to these are posted on-chain. Plaintext program outputs can be stored on-chain as they're small in size.
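To picture the split, think of the bulky ciphertext staying off-chain while only a short, fixed-size handle derived from it goes on-chain. The sketch below is an illustration under assumptions: `make_handle` is a hypothetical name, and 64-bit FNV-1a is used only to keep the example self-contained. It is not a cryptographic hash, and the post does not say how SPF actually derives its handles.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: derive a short, fixed-size handle from a blob that
 * lives off-chain. FNV-1a (64-bit) is a stand-in here; a real deployment
 * would use a cryptographic hash. */
uint64_t make_handle(const uint8_t *blob, size_t len) {
    assert(blob != NULL || len == 0);
    uint64_t h = 0xcbf29ce484222325ULL;   /* FNV-1a offset basis */
    for (size_t i = 0; i < len; i++) {
        h ^= blob[i];
        h *= 0x100000001b3ULL;            /* FNV-1a prime */
    }
    return h;
}
```

The on-chain footprint is then 8 bytes per object regardless of how large the ciphertext is, and anyone holding the off-chain blob can recompute the handle to check that it matches.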

The FHE computation is run off-chain using relatively powerful hardware deployed by Sunscreen initially. The threshold committee responsible for decrypting data also lives off-chain.

Why FHE

For many years now, extractive corporations have been feeding on our data without returning any of its value to us; privacy-preserving technology returns power to those of us (be it individuals or corporations) who actually produce valuable data. While there have been many exciting developments in AI and ML, these do not shift the existing power paradigm; rather, these developments have the potential to exacerbate the existing situation.

Trusted execution environments (TEEs) and multi-party computation (MPC) are alternative privacy-preserving technologies. However, we view them as further centralizing forces, if not used in conjunction with FHE.

As a hardware-based and centralized solution, there's a natural vendor lock-in when using TEEs for privacy. Additionally, securely programming an application with TEEs continues to be incredibly challenging, with new attacks found frequently.

MPC is a software-based solution but is communication-bound. Thus, to get good program performance, the computing parties must be located in close physical proximity. In some cases, this even means co-locating the servers.

We believe in a decentralized future in which users own their own data. Fully homomorphic encryption allows us to best achieve that vision.

Our evolving vision

Devnet and testnet for SPF will be available soon. Our whitepaper will be available in the coming weeks, laying out our vision for how to bring verifiable hidden state to applications in web2 and web3 in a scalable manner.

We'd love to hear what you're excited about building and what features you need to make your dream (d)app a reality!

Resources to get started

- Parasol developer docs: Start using our next-gen FHE compiler today.

- Past blog post: Learn more about our vision for homomorphic computing.

- Ask our team anything: Chat with our team about FHE or any app ideas!

Fully homomorphic encryption (FHE) enables running arbitrary computer programs over encrypted data, allowing users to protect their data while still allowing that encrypted data to be used in an application. With FHE, we can enable apps like fully customizable AI agents--trained on any data, no matter how sensitive--or hidden trading strategies in an on-chain ecosystem.

Existing tools for FHE are not built in a way that allows developers to easily create privacy preserving applications. Furthermore, their underlying approaches to homomorphic computing (i.e. computing over the encrypted data) are not architected in a way that will scale well as hardware evolves.

The inspiration for our latest work comes from asking ourselves two fundamental questions:

- How do we truly scale a technology?

- How do we effectively onboard developers to a new technology?

In answering these questions, we (1) re-imagine how computing should be done in FHE using a novel computing paradigm and (2) develop a new FHE compiler design to realize the vision in which developers "bring their own program," rather than re-write their existing application to leverage FHE.

We focus on the Torus FHE (TFHE) scheme, a particular FHE scheme that supports a variety of operations over encrypted data as well as unlimited computation. These features make TFHE especially well-suited to blockchain where it's unknown at setup time how many computations or what kind of computations the developer would like to perform (e.g. this is akin to how Vitalik/EF doesn't know all possible applications that developers might try to build on Ethereum).

Unlike existing approaches to TFHE, our new paradigm combines a special form of bootstrapping, known as "circuit bootstrapping," with multiplexers to realize arbitrary computation in a way that scales well with hardware. By building a virtual processor customized for FHE operations and integrating it as backend to the LLVM toolchain, we enable developers to write their program directly in a mainstream language today.

Re-architecting (T)FHE

Many people (experts included) take a potentially misguided approach to evaluating FHE schemes, focusing on the performance of individual operations rather than entire programs. As a simple thought experiment, consider the following: In System A, each operation takes 1 second. In System B, each operation takes 2 seconds. To instantiate program P with System A, we will need 3 operations but these must be done sequentially. With System B, we also need 3 operations but these can be done all in parallel. Would you prefer to use System A or B for the program?

A visual for those of you that don't want to do the math.
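For those who do want to do the math, here is a minimal sketch of the thought experiment. The function name `program_latency` and the "lanes" model are our own simplification: sequential operations are modeled as a single lane, and fully parallel operations as one lane per operation.

```c
#include <assert.h>

/* Latency of a program of n_ops operations, each taking op_seconds, when at
 * most `lanes` operations can run at the same time. A chain of dependent
 * operations is modeled as lanes == 1. */
unsigned program_latency(unsigned n_ops, unsigned op_seconds, unsigned lanes) {
    assert(lanes > 0);
    unsigned rounds = (n_ops + lanes - 1) / lanes;   /* ceil(n_ops / lanes) */
    return rounds * op_seconds;
}
```

System A is `program_latency(3, 1, 1)`, which gives 3 seconds. System B is `program_latency(3, 2, 3)`, which gives 2 seconds. Even though each individual operation is twice as slow, System B finishes the program first.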

We take a long term view on FHE. For FHE to scale over the coming years, it must be architected to best suit hardware (be it cores, GPUs, or eventually ASICs), rather than expecting hardware to somehow magically accelerate FHE. Specifically, this means designing the homomorphic computing paradigm to maximize parallelism and reduce the critical path in the circuits themselves. Roughly speaking, maximizing parallelism enables us to increase throughput, which is key to unlocking performance with dedicated accelerators. By reducing the critical path, we can obtain lower latency.

In looking at the landscape today, we found that existing TFHE-based approaches do not prioritize these goals.

A brief history on TFHE and bootstrapping

Regardless of which FHE scheme you choose to work with today, a major challenge is "noise." We won't go into exactly what this is but it suffices to say noise is a bad thing. In working with FHE, we need to make sure that the noise doesn't grow so large that we will no longer be able to successfully decrypt the message.

To reduce the noise in a ciphertext so that we can keep computing over it, a special operation called bootstrapping is needed. Bootstrapping is key to performing unlimited computation so a lot of research effort has gone into figuring out how to make this operation fast.

There are various FHE schemes out there such as BFV (which our old compiler was based on), CKKS, and TFHE. The "T" in TFHE stands for torus and this scheme is sometimes referred to as CGGI16 after its authors and the year in which it was published. The former two schemes are generally used in "leveled" format, meaning we only evaluate circuits up to a certain depth, which severely restricts the applications developers can build.

What's great about TFHE is that it made bootstrapping and comparisons relatively cheap (i.e. fast) compared to other FHE schemes. There are some drawbacks to this scheme; most notably, arithmetic is usually more expensive using TFHE than with BFV or CKKS. Additionally, there's a fairly noticeable performance hit when upping the precision (i.e. bit width) in TFHE.

It turns out that there are actually a few bootstrapping varieties for TFHE; namely, we have gate bootstrapping (the OG way), functional/programmable bootstrapping, and circuit bootstrapping.

Gate bootstrapping is what most TFHE libraries and compilers rely upon. It's a technique designed to reduce the noise in a ciphertext and comes from the original TFHE paper.

Functional (sometimes known as programmable) bootstrapping can be viewed as a generalization of gate bootstrapping in that it (1) reduces the noise in a ciphertext and (2) performs a computation over the ciphertext at the same time. At first glance, this approach seems to provide only benefits...after all, you're basically getting two operations for the price of one, right? Going back to our thought experiment, it turns out this approach behaves a lot like System A.

Circuit bootstrapping was introduced many years back but appears to be used thus far for either "leveled" computations or as an optimization when implementing certain forms of functional/programmable bootstrapping. Circuit bootstrapping, like gate bootstrapping, only reduces ciphertext noise. However, it has the nice property of having an output that's directly compatible with a (homomorphic) multiplexer...

Next, we'll look at our computing paradigm which makes use of circuit bootstrapping and homomorphic multiplexers.

Breaking down our approach: CBS-CMUX

We can realize arbitrary computation using multiplexers, independent of FHE. You can think of a multiplexer (known as a mux) as implementing an "if" condition; given two options d0 and d1 and a select bit b, we get d0 if b is 0 and d1 if b is 1. The FHE equivalent of a multiplexer is called a "cmux" as it operates over encrypted data to produce an encrypted output. This turns out to be interesting in the context of FHE.

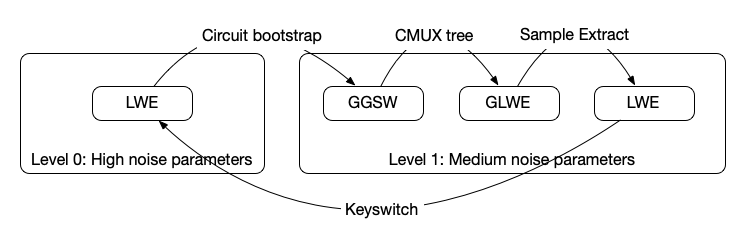
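To see why multiplexers are enough for general computation, here is a plaintext sketch in C. It shows a branch-free 2-to-1 mux and a two-level mux tree that evaluates any 2-input boolean function from its truth table. The names `mux` and `lut2` are ours, and the values are ordinary bytes rather than ciphertexts; in the FHE setting the select bit and the outputs would be encrypted.

```c
#include <assert.h>
#include <stdint.h>

/* 2-to-1 multiplexer: returns d0 when b == 0 and d1 when b == 1.
 * Written without a branch, since an FHE evaluator cannot branch on an
 * encrypted bit. */
uint8_t mux(uint8_t b, uint8_t d0, uint8_t d1) {
    assert(b <= 1);
    uint8_t mask = (uint8_t)(0u - b);   /* 0x00 when b == 0, 0xFF when b == 1 */
    return (uint8_t)((d0 & (uint8_t)~mask) | (d1 & mask));
}

/* Evaluate any 2-input boolean function given as a 4-entry truth table
 * (index = 2*x1 + x0) using a tree of three multiplexers. */
uint8_t lut2(const uint8_t table[4], uint8_t x1, uint8_t x0) {
    uint8_t lo = mux(x0, table[0], table[1]);   /* candidates when x1 == 0 */
    uint8_t hi = mux(x0, table[2], table[3]);   /* candidates when x1 == 1 */
    return mux(x1, lo, hi);
}
```

Passing the table `{0, 1, 1, 0}` gives XOR and `{0, 0, 0, 1}` gives AND. Larger functions follow the same pattern with deeper trees, one level per input bit.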

Our high level goal is to perform general computation using a multiplexer tree before going on to reduce the noise (via the circuit bootstrap) so that we can continue performing more computations over the encrypted data. We must make sure we do not exceed the noise budget when doing the multiplexer tree, otherwise we're in a lot of trouble since we may no longer be able to recover the data. While we won't get into the details, there's also a type mismatch between the cmux and circuit bootstrap which requires us to perform some additional FHE operations to get the output of the cmux into the "correct" format to feed back into the circuit bootstrap.

We call our computing approach the "CBS-CMUX approach" as the combination of these two operations allows us to realize arbitrary programs.

There are a few different ciphertext types (e.g. GGSW, GLWE) that we'll have to switch between but we won't discuss those here. While we won't dive into the details now, an upcoming technical paper will provide more insight on how all of this fits together.

While all of this may seem like a nightmare to an engineer, our compiler handles all of these tasks on your behalf automatically.

Our approach:

- Reduces the number of bootstrapping operations in the critical path: Specifically, for key operations like 16-bit homomorphic comparisons, our approach reduces the number of bootstraps in the critical path down to 1. This is important because bootstrapping is one of the most expensive operations in TFHE. When we instantiate a 16-bit homomorphic comparison using functional/programmable bootstrapping, there are 3 bootstrapping operations in the critical path. Even with specialized hardware, we would have to wait for each of these functional bootstrapping operations to complete in sequence.

- Provides greater intra-circuit parallelism: When given access to sufficient cores/computing resources, we can speed things up significantly (like System B).

"BYO program"

Developers should be able to bring their own program, rather than having to rewrite their program in some eDSL or newly created language.

Accordingly, the developer experience today allows you to write your program directly in C or bring your existing C program (with some restrictions). To create an FHE program, you just need to add directives (i.e. simple syntax) indicating which functions are FHE programs and which inputs/outputs should be encrypted (i.e. hidden).

Parasol compiler today

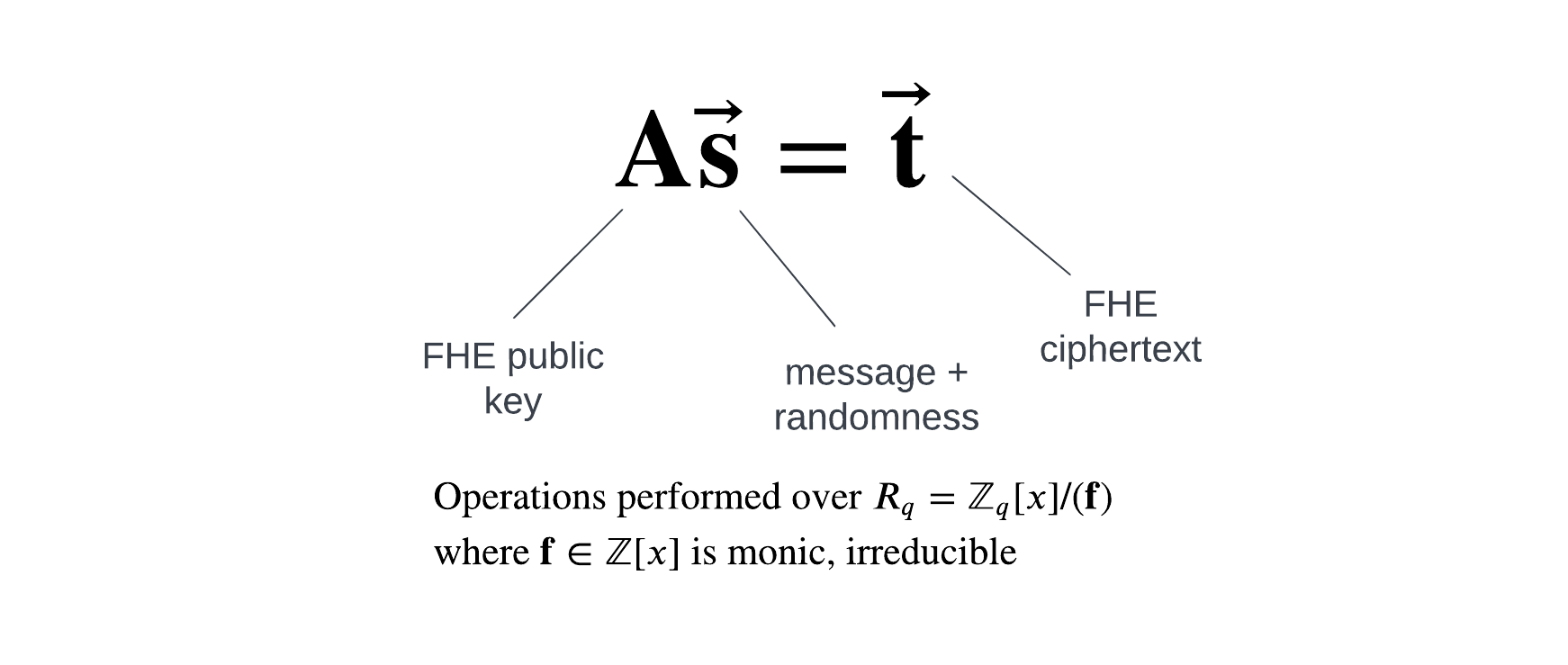

Our compiler abstracts away the usual difficulties of working with FHE, which include selecting FHE scheme parameters, translating your program into an optimized circuit representation, and inserting FHE-specific operations. Additionally, it's compatible with our single-key TFHE scheme variant (useful if your application operates over a single user or entity's data) as well as our threshold TFHE scheme variant (useful if your application operates over multiple entities' data).

Parasol supports common operations like arithmetic, logical, and comparison operations. We support imports, and functions can be inlined, making it easier to create modular programs with reusable pieces.

We started with C as a proof-of-concept. Depending on demand, we can add support for other LLVM-compatible frontends like Rust.

There are a number of features we plan to add soon such as signed integers, higher precision integers (today we only support up to 64 bits), division by plaintext, branching over plaintext values, and arrays.

Overview of our design

To provide a great developer experience, we built an LLVM-based compiler. The benefits of this approach are twofold. First, it allows developers to use a variety of mainstream programming languages like Rust, C, or C++ with the only changes to their programs being the directives mentioned above. Second, it allows us to leverage decades worth of optimizations and transformations that are already built into LLVM.

However, how do we efficiently run whatever is output from this compiler? The machine you and I are using won't be able to do this. Accordingly, we need to build a virtual processor that can actually run these FHE programs in an efficient manner.

An important goal of ours was to provide compact program representations. To achieve this, our processor expresses programs as high level operations (e.g. add, multiply, etc.) that it dynamically expands into FHE circuits at runtime.
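To make "expands into circuits at runtime" concrete, here is a plaintext sketch of how a single high-level add could be unrolled into bit-level gates on demand. This is an assumption-laden illustration: `full_adder` and `expand_add` are hypothetical names, and a ripple-carry adder is chosen only because it is the simplest circuit to read, not because it is what our processor generates.

```c
#include <assert.h>
#include <stdint.h>

/* One-bit full adder built only from boolean gates. */
static void full_adder(uint8_t a, uint8_t b, uint8_t cin,
                       uint8_t *sum, uint8_t *cout) {
    *sum  = (uint8_t)(a ^ b ^ cin);
    *cout = (uint8_t)((a & b) | (cin & (a ^ b)));
}

/* Hypothetical expansion of one high-level "add" instruction into a
 * width-bit ripple-carry circuit. The stored program only says "add";
 * the gates are generated here, when the instruction runs. */
uint32_t expand_add(uint32_t x, uint32_t y, unsigned width) {
    assert(width >= 1 && width <= 32);
    uint32_t result = 0;
    uint8_t carry = 0;
    for (unsigned i = 0; i < width; i++) {
        uint8_t s;
        full_adder((uint8_t)((x >> i) & 1u), (uint8_t)((y >> i) & 1u),
                   carry, &s, &carry);
        result |= (uint32_t)s << i;
    }
    return result;   /* wraps modulo 2^width, like unsigned C arithmetic */
}
```

The program representation stays a single instruction no matter the bit width, while the circuit it expands to grows with `width`. That is the reason compact, high-level program encodings are attractive.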

Our processor, and thus compiler, rely on our in-house created variant of the TFHE scheme.

Technical teaser

For those who'd like to understand more about our stack, we provide some details below. Stay tuned for our upcoming research paper!

LLVM-based compiler: Developers write their FHE programs in C today and compile them using a version of clang that supports our virtual processor. Without the virtual processor, the generated FHE programs can't be run anywhere! Feel free to look here if you'd like to understand how to create an LLVM backend.

Virtual processor: Now to actually run the programs output by clang, we have our virtual processor. We adopt an out-of-order processor design and feature dynamic scheduling within instructions to maximize parallelism. We designed a custom instruction set architecture that enables running programs over a mix of plaintext and encrypted data. Doing so required re-imagining memory at the processor level, adding new instructions to support branching over encrypted data, and building our own circuit processor to support the CBS-CMUX computing approach.

TFHE library: At the lowest level of the stack is our optimized TFHE library which implements the cryptographic operations needed in the TFHE scheme. In theory, different TFHE libraries could be plugged in to work with our processor. However, given we're the only team implementing the CBS-CMUX approach, it made the most sense to have our own library.

The high level workflow is depicted below.

Show me some code!

So what does a program look like when using Parasol? We provide a simple example showing how to do a private transfer below.

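```c
#include <stdint.h>

// Marks `transfer` as an FHE program; both arguments are encrypted.
[[clang::fhe_circuit]] void transfer(
    [[clang::encrypted]] uint32_t *amount,
    [[clang::encrypted]] uint32_t *balance
) {
    // Send `amount` only if it is strictly less than the balance; otherwise send 0.
    uint32_t transfer_amount = *amount < *balance ? *amount : 0;
    *balance = *balance - transfer_amount;
}
```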

You'll notice that apart from [[clang::fhe_circuit]] and [[clang::encrypted]], this is just a regular C program.
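If you want to sanity-check the logic with an ordinary C compiler before involving FHE at all, a plaintext reference can help. The sketch below mirrors the transfer logic above without the attributes and without a branch, which is closer to how a select over encrypted data behaves. The name `transfer_reference` is ours and is not part of Parasol.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Plaintext reference for the transfer logic above, written without
 * branches: the comparison yields a 0/1 value that selects between
 * `amount` and 0. */
void transfer_reference(const uint32_t *amount, uint32_t *balance) {
    assert(amount != NULL && balance != NULL);
    uint32_t ok = (uint32_t)(*amount < *balance);    /* 1 if the transfer is allowed */
    uint32_t transfer_amount = *amount & (0u - ok);  /* amount if ok, else 0 */
    *balance = *balance - transfer_amount;
}
```

Note that the comparison is strict, so a request to transfer exactly the full balance leaves the balance unchanged. The balance can never underflow, because the amount subtracted is always either 0 or less than the balance.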

While the benefits of our approach are more apparent in building large complex programs, we hope this gives you a taste of what the experience is like!

Benchmarks

We benchmark Parasol against existing FHE compilers (that support comparisons over encrypted data).

Based on the criteria set out in this SoK paper, we consider how compilers perform on a chi-squared test and a simple cardio application that computes a patient's risk of heart disease in the same manner as risk estimators provided by the American College of Cardiology. The former application is heavy on addition and multiplication operations whereas the latter is heavy on comparison operations.

Of the 5 compilers we consider, ours is the only one to rely on circuit bootstrapping as the primary bootstrapping method. Concrete relies on programmable (aka functional) bootstrapping whereas Google, E3, and Cingulata rely on the older gate bootstrapping approach.

Experiments were performed on an AWS c7a.16xlarge instance (64 vCPUs, 128GB RAM) and all compilers are set to provide 128 bits of security.

To be transparent, our approach shines when there are enough cores across which to parallelize tasks. You will see less of a performance benefit with our compiler/approach if the FHE computation is carried out on a machine with relatively few cores (e.g. 16 or 32 cores). Please note that this only applies to the computing party; there's no issue at all if the end-user, providing their encrypted data, uses a laptop or smartphone!

Chi squared benchmarks

We first look at how our compiler performs for the chi-squared test with respect to runtime of the generated FHE program, size of the compiler output, and memory usage.

Runtime covers circuit evaluation (i.e. it excludes steps such as key generation and encryption of inputs). Program size means slightly different things depending on the compiler due to architectural differences. We list the size of Parasol’s emitted ELF program, Concrete’s MLIR representation, and Google transpiler’s binary circuit intermediate representation. For E3 and Cingulata, we give the size of the resulting native executable.

| Compiler | Runtime | Program size | Memory usage |
|---|---|---|---|
| Parasol | 610ms | 256B | 1.0GB |
| Concrete | 1.84s | 1.41kB | 1.82GB |
| Google Transpiler | 13.8s | 323kB | 299MB |
| E3-TFHE | 440s | 2.87MB | 182MB |
| Cingulata-TFHE | 85.6s | 579kB | 254MB |

You'll notice that Parasol consumes more memory than compilers based on gate bootstrapping (i.e. Google, E3, Cingulata); this is due in part to the larger circuit bootstrapping key.

Cardio benchmarks

We also consider how our compiler performs for the cardio program with respect to runtime of the generated FHE programs, size of the compiler output, and memory usage.

| Compiler | Runtime | Program size | Memory usage |
|---|---|---|---|
| Parasol | 920ms | 528B | 1.0GB |
| Concrete | 2.13s | 10.4kB | 4.0GB |
| Google Transpiler | 3.26s | 11.4kB | 274MB |
| E3-TFHE | 119s | 1.87MB | 181MB |
| Cingulata-TFHE | 2.98s | 613kB | 254MB |
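To turn the runtime columns into relative numbers, you can divide each compiler's runtime by Parasol's. The sketch below does exactly that with the figures copied from the two tables; the array and function names are ours, and nothing here is new measurement data.

```c
#include <assert.h>
#include <stddef.h>

/* Runtimes in seconds, copied from the two tables above.
 * Order: Parasol, Concrete, Google Transpiler, E3-TFHE, Cingulata-TFHE. */
static const double chi_squared_s[5] = {0.610, 1.84, 13.8, 440.0, 85.6};
static const double cardio_s[5]      = {0.920, 2.13, 3.26, 119.0, 2.98};

/* Ratio of compiler i's runtime to Parasol's (index 0) on one benchmark. */
double runtime_ratio(const double runtimes[5], size_t i) {
    assert(runtimes != NULL && i < 5 && runtimes[0] > 0.0);
    return runtimes[i] / runtimes[0];
}
```

For instance, `runtime_ratio(chi_squared_s, 1)` is roughly 3.0 (Concrete on chi-squared) and `runtime_ratio(cardio_s, 1)` is roughly 2.3 (Concrete on cardio). The gap to the gate-bootstrapping compilers varies much more between the two workloads.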

What the future holds

The Parasol compiler forms the foundation of our new vision, the "secure processing framework." The SPF will allow one (FHE) program to be used on any chain, regardless of virtual machine.

We'll be open-sourcing Parasol in the next few weeks and providing comprehensive documentation to help get you started. We're excited to finally release work that's taken us many months to achieve! We'll be sharing more about the SPF and accompanying testnets soon. If you're interested in previewing these offerings, our team is happy to chat and learn about your unique needs.

Finally, if you're in Toronto this May for ZK Summit or Consensus, feel free to reach out to me personally if you'd like to say hi!

At Sunscreen, we've recently been spending time on private double auctions where the operators only learn of an order's details after the order has been matched (details of unsuccessful orders are never revealed, even to the operators!). Building upon our last demo, we showcase a truly dark dark pool and how to build it using (threshold) fully homomorphic encryption.

Although our focus is on cryptocurrency in this post, dark pools trace their history back to equities. As "cryptocurrencies are not securities," there are a number of challenges or opportunities (depending on if you're a glass half empty or a glass half full kind of person) outside of privacy to grapple with when building trading venues in crypto. We touch upon some of these issues before presenting our demo.

A brief introduction to dark pools

Traditionally, orders are stored in order books where other parties can view existing bids/offers. However, placing a "large" order (volume-wise, relative to the item's market cap) will almost always cause the market to move against you (e.g. for a large buy order, price may move up once others see your order in the book; for a large sell order, price may go down once others see your order). Dark pools popped up as one solution to this problem within equities, allowing sophisticated (often institutional) investors to place large orders without alerting the market to their intention--thereby incurring minimal price impact/slippage. Dark pools also allow such investors to hide/obscure their trading strategy from others (i.e. protect their alpha).

Dark pools came into being in the 80s, after the SEC allowed securities to be traded off their listed exchange. However, they didn't truly take off until after the mid-2000s when the SEC allowed investors to bypass public markets if price improvement were possible. Nowadays, a significant portion of daily US equities volume passes through dark pools.

So what are dark pools and how do they work? You can think of a dark pool as an invite-only trading venue where only the operator can view the full order book. Assuming participants trust the dark pool operator, they can trade large blocks of securities with minimal to no slippage as other participants are unaware of outstanding orders. Unfortunately, dark pool operators have historically abused their privileged position for their own benefit (some examples include 1, 2, 3).

Make it dark dark

So we ask—can we build a truly dark dark pool where even the dark pool operators can't view the order book?

Specifically, we'd like to build a dark pool where the operator(s) can't view orders until they've been successfully matched. We refer to this as "blind matching" since we want to match orders together without knowing what the underlying order values are (e.g. volume, limit price).
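As a rough picture of what blind matching computes, here is a plaintext sketch of matching one buy order against one sell order. It is written without any branch that depends on order values, since the real computation would run over encrypted fields. The `order_t` layout, the 16-bit field widths, and the name `match_volume` are assumptions made for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical order layout, for illustration only. */
typedef struct {
    uint16_t limit_price;
    uint16_t volume;
} order_t;

/* Returns the volume that trades between a buy and a sell order, or 0 if
 * the buy limit is below the sell limit. No branch depends on order values. */
uint16_t match_volume(const order_t *buy, const order_t *sell) {
    assert(buy != NULL && sell != NULL);
    uint16_t crosses = (uint16_t)(buy->limit_price >= sell->limit_price);
    uint16_t buy_smaller = (uint16_t)(buy->volume < sell->volume);
    uint16_t m = (uint16_t)(0u - buy_smaller);   /* all ones if buy is smaller */
    uint16_t min_vol = (uint16_t)((buy->volume & m) | (sell->volume & (uint16_t)~m));
    uint16_t c = (uint16_t)(0u - crosses);       /* all ones if the orders cross */
    return (uint16_t)(min_vol & c);
}
```

In an encrypted setting, the only thing that would need to be revealed early is whether the result is nonzero; price and volume stay hidden until a match is confirmed.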

Although there are a few different cryptographic solutions to this problem, we'll showcase how to build a dark dark pool using (threshold) fully homomorphic encryption (which minimizes communication between parties and is a purely software-based solution without the same attack vectors of hardware-based privacy solutions).[1]

At a high level, the following happens:

- A committee generates a public-private key pair such that each committee member holds a portion of the private (i.e. decryption) key.

- Dark pool participants submit encrypted orders.

- Committee members run the matching algorithm directly on the encrypted orders to check for possible matches.

- Upon knowing a successful match can be done between two orders (without knowing price or volume information for the orders), the committee members provide their respective piece of the private key to decrypt the final result (i.e. "Party A has sold a quantity of x items to Party B for y price").

By having the key split into shares, we construct a distributed trust model where no single party can view outstanding orders (which would violate traders' privacy). Furthermore, we can configure trust so that we require just a single honest operator within the pool to ensure order privacy (i.e. say we have 5 committee members; even if 4 out of 5 of them were corrupt, they would not be able to decrypt and view the traders' orders).
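The "one honest member is enough" property can be illustrated with plain n-of-n XOR secret sharing. To be clear, this is a stand-in and not the threshold FHE key generation used in the demo: in a real protocol no party ever holds the whole key, and decryption does not reassemble it. The generator below is a toy and is not cryptographically secure; all names are ours.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Toy generator for the demo only. NOT cryptographically secure. */
static uint64_t xorshift64(uint64_t *state) {
    uint64_t x = *state;
    x ^= x << 13;
    x ^= x >> 7;
    x ^= x << 17;
    *state = x;
    return x;
}

/* Split `secret` into n shares whose XOR equals the secret. */
void split_secret(uint64_t secret, uint64_t *shares, size_t n, uint64_t seed) {
    assert(shares != NULL && n >= 2 && seed != 0);
    uint64_t state = seed;
    uint64_t acc = secret;
    for (size_t i = 0; i + 1 < n; i++) {
        shares[i] = xorshift64(&state);   /* random-looking share */
        acc ^= shares[i];
    }
    shares[n - 1] = acc;                  /* makes the XOR of all shares == secret */
}

/* XOR together the given shares. */
uint64_t combine_shares(const uint64_t *shares, size_t n) {
    assert(shares != NULL || n == 0);
    uint64_t acc = 0;
    for (size_t i = 0; i < n; i++) acc ^= shares[i];
    return acc;
}
```

With 5 shares, combining all 5 recovers the secret, while combining any 4 yields a value masked by the missing share. With properly random shares, that masked value reveals nothing about the secret, which is why 4 colluding members out of 5 learn nothing.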

How does crypto fit into all of this?

If we were building a dark pool for a security, most of the decisions would be made for us with regards to price execution, auction design, settlement process, and system permissioning so we'd just move directly on to presenting a demo of what such a dark dark pool might look like.

Cryptocurrency currently operates in a regulatory grey zone. While the design space for crypto trading platforms is therefore much richer, there are also a number of challenges due to the lack of established infrastructure and regulations within the space.

Given the dearth of crypto dark pools, there is also a unique opportunity to rethink design to benefit a new class of participants. Below, we bring light to some of the challenges in designing a dark pool for cryptocurrencies, with the goal of sparking a broader conversation within the community and relevant experts.

(Non-)existence of NBBO

Most equities dark pools use midpoint price execution, minimizing the number of disputes between buyers and sellers, as well as allowing trading venues to ensure they provided "best execution" for their clients. What does best execution mean for crypto (especially when no NBBO equivalent is available for a given cryptocurrency)?

For securities, there are best execution requirements that require brokers to execute customers' orders with the most favorable terms possible. Although best execution is defined intentionally ambiguously, it tends to take into account price improvement as well as execution time. To understand price improvement, the NBBO (national best bid and offer) is used as reference. However, when trading larger blocks of securities where slippage is a serious problem, beating the national best bid and offer seems unlikely. Thus, equities dark pools often rely on midpoint price execution.
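As a worked example of midpoint execution: if the best bid is 10.00 and the best offer is 10.04, the midpoint is 10.02, so the buyer pays 0.02 less than the offer and the seller receives 0.02 more than the bid. A minimal sketch in integer price ticks follows; the function name and the round-down rule are our assumptions.

```c
#include <assert.h>
#include <stdint.h>

/* Midpoint of the best bid and best offer, in integer price ticks.
 * Rounds down when the spread is an odd number of ticks. */
uint32_t midpoint_price(uint32_t best_bid, uint32_t best_offer) {
    assert(best_bid <= best_offer);
    return best_bid + (best_offer - best_bid) / 2;   /* cannot overflow */
}
```

Computing the midpoint as `bid + (offer - bid) / 2` rather than `(bid + offer) / 2` avoids overflow when prices are near the top of the integer range.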

Given the lack of regulation, there is no centralized entity (like the SIP) that disseminates NBBO for a given cryptocurrency. Even if such a thing were to exist, would we consider best bids and offers with respect to only centralized exchanges or should we include decentralized venues as well? Should crypto dark pools, by default, mimic their equities equivalent providing midpoint price execution or should we allow for a more traditional order book model where buyers and sellers can specify price as well as volume?

Continuous vs. periodic auctions

While most equities dark pools perform matching on a continuous basis, there are a few options for clients who prefer a periodic model. Which option is best for the crypto space?

Continuous double auctions are prevalent within traditional finance, allowing buyers and sellers to submit orders whenever they want. Matching occurs on a continuous basis, meaning as soon as an order comes in the operators search for a counterparty/match. There are a few venues within traditional finance in which auctions are done "periodically," meaning buyers and sellers can submit orders during a specified time period and crossings are performed at a set time. However, by and large, continuous auctions dominate the landscape.

Opponents of continuous double auctions argue that such a model inherently favors those with a speed advantage like sophisticated high frequency trading firms, encouraging co-location and a latency arms race. To counter this speed and latency arms race within tradfi, some have suggested frequent batch auctions, a type of periodic auction in which orders from a specified time period are batched together and then executed at a uniform clearing price. Proponents of periodic auctions also argue that a discrete time model allows participants to compete on price (to the advantage of other system participants) rather than speed.

While the existing winners in tradfi have little incentive to push for periodic auctions, an open design space still exists for crypto markets. We can already see some of the continuous vs. periodic auction debate playing out, with popular decentralized trading venues like dYdX, Cowswap, and Penumbra championing the use of frequent batch auctions to mitigate the negative effects of MEV.

Should crypto dark pools further entrench HFT firms' dominance or seek to level the playing field for the rest of us by adopting a new design?

Insider trading and toxic order flow

How does lack of insider trading laws impact order flow in crypto?

When it comes to startups, founders, early employees, as well as (venture capital) investors generally hold a substantial portion of the respective company's stock. When the company goes public, employees face restrictions on trading their company's stock to prevent "insider trading."

Similarly, for cryptocurrency startups, early employees and investors often control a substantial portion of the project's initial token supply. The equivalent situation of an IPO for a cryptocurrency startup might be having the token listed on a prominent centralized exchange like Coinbase. However, given the lack of regulation in the crypto space, there is very little to legally prevent early employees from acting on material non-public information that they strongly suspect will affect the token's price (e.g. employee Alice knows her company's token will be listed on xyz prominent exchange in 3 months, likely causing the price to go up once the news is announced).

Although early employees and investors are likely to trade in large blocks, it's not a great look for a founder or early employee to be seen selling off large portions of their own company's stock or cryptocurrency.

With a crypto dark pool, early employees could now hide their activity, acting on material non-public information whenever they wish without alerting the broader market about what they're doing. Furthermore, it's very possible (if not likely) that some of this order flow would be "toxic."

While a large number of crypto trading venues are decentralized and permissionless, they do not offer privacy which may affect how participants behave when using the system (knowing they can likely be de-anonymized and all their bids/offers viewed). So we must ask--will building a dark pool that supports trading large blocks of altcoins further encourage "insider" trading within crypto and allow toxic flow to proliferate?

Custody, settlement, and information leakage

With no DTC(C) available, how will settlement and clearing work for crypto dark pools?

For the vast majority of securities transactions within the US, the DTCC provides clearing and settlement services to ensure the successful handoff between buyers and sellers.

The goal of a dark pool is to allow participants to shield their activity from others. While FINRA requires some information regarding dark pools to be made public, it's fairly minimal, revealing high level info like total number of shares and trades done per instrument each week rather than details on individual trades and the participants' identities.

How would custody and settlement work for a crypto dark pool when there is no DTCC equivalent? The natural and obvious solution is to rely on the blockchain to handle these tasks. Unfortunately, if orders settle via a public blockchain, some information will almost certainly be revealed. It may be possible to hide transactions made within the dark pool (if it's properly set up as a rollup or L2) but how do participants get their cryptocurrency into the dark pool in the first place and what happens when they attempt to exit the dark pool? Surely there will be public transactions (on say Ethereum) showing that they've deposited (withdrawn) x number of tokens into (from) some contract.

Another option might involve relying on some semi-trusted third party to handle custody and settlement, as well as shielding participants' activity from the public (say via the use of wash accounts).

How much information leakage can we eliminate while still relying on a public blockchain? What amount of information leakage is acceptable to dark pool users?

Our demo (aka make it fast)

We've built a demo showcasing what such a truly dark dark pool might look like, one where operators cannot abuse their privileged role to view orders before they've been matched.

You can test out two different experiences:

- A continuous double auction where participants specify both price and volume, with matchings attempted as soon as a new order comes in (mimicking a traditional order book model).

- A periodic volume match where crossings are performed every 5 seconds and price is determined from an external source (akin to a frequent batch auction). We could just as well provide midpoint price execution if we collected price data across various centralized exchanges.
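To make the two modes concrete, here is a minimal plaintext sketch of the matching logic. It is not our demo's code: the demo runs the matching on encrypted orders, whereas everything below operates on ordinary integers, and the names `OrderBook`, `submit`, and `volume_match` are hypothetical. We also assume price-time priority for the continuous auction and arrival-order crossing for the periodic match.

```python
import heapq
from itertools import count


class OrderBook:
    """Continuous double auction: try to match each order as soon as it arrives."""

    def __init__(self):
        self._bids = []      # heap keyed on -price, so the highest bid is on top
        self._asks = []      # heap keyed on price, so the lowest ask is on top
        self._seq = count()  # arrival counter, breaks price ties by time

    def submit(self, side, price, qty, trader):
        """Match an incoming order; rest any remainder. Returns a list of fills."""
        fills = []
        if side == "buy":
            book, opposite = self._bids, self._asks
        else:
            book, opposite = self._asks, self._bids

        while qty > 0 and opposite:
            resting = opposite[0][2]
            if side == "buy":
                crosses = price >= resting["price"]
            else:
                crosses = price <= resting["price"]
            if not crosses:
                break
            traded = min(qty, resting["qty"])
            if side == "buy":
                buyer, seller = trader, resting["trader"]
            else:
                buyer, seller = resting["trader"], trader
            # Trades execute at the resting order's price.
            fills.append((buyer, seller, resting["price"], traded))
            qty -= traded
            resting["qty"] -= traded
            if resting["qty"] == 0:
                heapq.heappop(opposite)

        if qty > 0:  # partial fill: the remainder rests on the book
            key = -price if side == "buy" else price
            entry = {"price": price, "qty": qty, "trader": trader}
            heapq.heappush(book, (key, next(self._seq), entry))
        return fills


def volume_match(buys, sells):
    """Periodic volume match: cross (trader, qty) lists in arrival order.

    No prices appear here because the execution price comes from an
    external source.
    """
    buys = [list(b) for b in buys]
    sells = [list(s) for s in sells]
    fills, i, j = [], 0, 0
    while i < len(buys) and j < len(sells):
        traded = min(buys[i][1], sells[j][1])
        if traded:
            fills.append((buys[i][0], sells[j][0], traded))
        buys[i][1] -= traded
        sells[j][1] -= traded
        if buys[i][1] == 0:
            i += 1
        if sells[j][1] == 0:
            j += 1
    return fills
```

In the periodic mode, `volume_match` would be called once per crossing interval on whatever orders accumulated since the last one.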

While our demo currently runs on a 64-core machine (c7g.16xlarge instance), much better performance can be achieved with beefier machines and hardware acceleration (e.g. GPU-accelerated implementation).

For simplicity, we provide partial fills by default as well as fill-or-kill orders. We could just as well allow for minimum fills but have chosen to omit them for an initial version. Quantity and price are currently restricted to a 16-bit range (values below 2^16); larger ranges can be provided but come with a performance penalty. Other aspects that impact performance include number of orders to process and security level.
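The three fill policies differ only in the smallest execution the trader will accept, which a short plaintext sketch makes visible. The function name `fill_quantity` and its `min_fill` parameter are hypothetical and not part of the demo.

```python
def fill_quantity(requested, available, min_fill=0):
    """Return how much of an order executes against the available liquidity.

    min_fill=0          -> partial fills allowed (the default behaviour)
    min_fill=requested  -> fill-or-kill: all or nothing
    anything in between -> minimum-fill order
    """
    traded = min(requested, available)
    return traded if traded >= min_fill else 0


# Partial fill: take whatever is there.
partial = fill_quantity(10, 4)
# Fill-or-kill: 4 < 10, so nothing executes.
fok = fill_quantity(10, 4, min_fill=10)
```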

Our demo makes use of our own version of the TFHE fully homomorphic encryption scheme under the hood, allowing us to get better performance than existing FHE libraries. As dark pools are one example of double auctions, this work naturally builds upon our previous work for private verifiable auctions in which we showcased how to build a first-price sealed-bid auction in which losing bids were never revealed.

We imagine initial versions of dark pools will need to be permissioned to best optimize for user experience and protect against bad actors. When it comes to custody and settlement, there are many open questions that need to be answered before a dark pool can be deployed into the wild.

Looking forward

Can we build a future with private and fair financial systems? We believe the answer is yes and our work here is one step towards that.

We're at a potential crossroads in time as institutions and tradfi players start to move into crypto. Given how young the space is, there are opportunities to rethink market design and determine how the winners of the next financial systems will be chosen. What worked well for tradfi may not necessarily work as well for us in crypto.

As for our plans, we'll soon be open-sourcing our low-level implementation of the TFHE (fully homomorphic encryption) scheme. We'd love to hear your thoughts if you've been thinking about some of the issues here and, if you happen to be at ETHDenver, come say hi!

- Threshold FHE has the advantage of requiring little communication between parties and no communication during the computation itself (as FHE is compute-bound). MPC-based solutions (e.g. SPDZ) are communication-bound, on the other hand. In practice, this means that the various parties need to be co-located to get good performance.

At Sunscreen, we're working to enable a new class of applications operating on private shared state via fully homomorphic encryption (FHE). These applications tend to have two requirements—input privacy (keeping inputs hidden even after the program is complete) as well as verifiability (how can we know for a fact the program was run correctly?). One such application is auctions, with one of the more interesting use cases aimed at determining blockspace ordering.

In this article, we'll walk through how blockspace auctions work at a high level and how threshold FHE can improve the auction process. We'll then look at where threshold FHE is as a field and how Sunscreen is working to make private verifiable (d)apps available to all.

So far we've focused on developers but we're excited to showcase our first demo where end users can see what such an FHE-enabled application might look like! Our demo implements a first-price sealed-bid auction using our own variant of the torus fully homomorphic encryption scheme (TFHE) and is inspired by top-of-block blockspace auctions. With a 64-core machine, determining the winner over all 93 encrypted bids should take <2 seconds but, depending on the number of people using the demo, you may experience a slowdown.

For the full experience, connect via Metamask and make sure to have some Sepolia ETH available!

First-price sealed bid auctions are just the start for us and we're excited to explore bringing privacy and verifiability to all kinds of auctions.

Crash course on blockspace auctions

If you're new to blockspace auctions, keep reading! We'll take a look at why they were created, how some of them operate at a high level, and limitations with existing constructions. Please note that the following provides a simplified overview; blockspace auctions are just one example of an auction where privacy and verifiability are crucial so don't get too hung up on the details.

Let’s say there’s an arbitrage opportunity across two decentralized exchanges (DEXs) on the same chain—specifically, we notice that token τ is quoted at price α on exchange A and price β on exchange B where α < β. This seems like a good opportunity to potentially purchase token τ on exchange A and then sell it at a higher price on exchange B, pocketing the profit.[1]

Unfortunately, it turns out that many people are thinking exactly the same thing! We'll broadly refer to the individuals competing to take advantage of arbitrage opportunities as "searchers."[2] Once one person takes advantage of this arbitrage opportunity, token τ’s price on both exchanges A and B is likely to change (potentially such that the price difference between the two is smaller or so that the prices converge across the two exchanges).

How do we decide who gets to the opportunity first? In blockchain, this boils down to transaction ordering within a block but how transaction ordering occurs is chain-dependent. If we're looking at Ethereum, transaction ordering is generally tied to gas price. Thus, searchers could submit transactions directly to the public mempool and compete by offering a higher gas price to try to get their transaction processed faster. If we're looking at Cosmos, transaction ordering generally obeys a first in, first out (FIFO) rule so a searcher may attempt to spam the network to get their transaction in first. The space becomes even more complex when looking at L2s, which lack public mempools and often operate on a FIFO basis. Regardless of which chain we're on, both of these strategies lead to a number of issues for both searchers and ordinary users.
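The difference between the two ordering rules is easy to see in a toy model. The sketch below is a deliberate simplification (real block building involves much more than a single sort), and the `Tx` type and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    gas_price: int
    arrival: int  # position in which the node first saw the transaction


def order_by_gas_price(txs):
    """Highest gas price first; earlier arrival breaks ties."""
    return sorted(txs, key=lambda tx: (-tx.gas_price, tx.arrival))


def order_fifo(txs):
    """First in, first out: gas price plays no role."""
    return sorted(txs, key=lambda tx: tx.arrival)


mempool = [
    Tx("user", gas_price=20, arrival=0),
    Tx("searcher_a", gas_price=90, arrival=1),
    Tx("searcher_b", gas_price=95, arrival=2),
]
```

Under `order_by_gas_price`, `searcher_b` wins by outbidding everyone, which encourages bidding wars. Under `order_fifo`, the only way to win is to arrive first, which encourages spam.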

Professional searchers may also be looking to take advantage of many arbitrage opportunities within a single day, meaning they submit a large number of transactions in a fairly small time period. By revealing both the transaction details and gas price, competitors might be able to learn that searcher’s trading strategy. Ideally, there should be a way for searchers to submit transactions such that the transaction details either aren't revealed to competitors or so that whatever they’re willing to pay for the transaction remains private to other searchers/users (especially in the case of unsuccessful transactions).[3]

How might we determine transaction ordering within a block in a way that optimizes the experience for both ordinary users and searchers? Blockspace auctions are one such solution (offered by the likes of Flashbots and Skip); these auctions segment searchers from ordinary users, allowing searchers to compete against one another for priority ordering while preventing a degradation of experience for ordinary users.

Let's dive into how blockspace auctions work at a high level, as the process is often not that transparent and differs based on the particular protocol/chain.

We'll look at the most straightforward of these auctions where we're bidding for the top of a block using a first-price sealed-bid auction (e.g. Skip Select). Flashbots also employs a first-price sealed-bid auction, allowing searchers to express more granular ordering preferences but with no guarantee of top-of-block execution.[4]

Roughly speaking, the following happens:

- Searchers submit a "sealed bid" directly to a third party. The sealed bid consists of how much the searcher is willing to pay for their transaction (potentially a bundle of transactions) to be placed at the top of the block. If the searcher wins, they'll have to pay this bid amount (potentially to the block builder/validator but in theory this bid amount could be distributed across several parties).

- The third party looks through all submitted bids and determines who placed the highest bid.

- The third party gossips the result of the auction to the validators/block builders who will then ensure the winner's transaction (bundle) will be placed at the top of the block.
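From the third party's point of view, the steps above amount to very little code, which is exactly why the trust assumption matters: the function below sees every bid in the clear. This is a minimal sketch with a hypothetical name, and the tie-breaking rule (first submitted wins) is our assumption.

```python
def run_first_price_auction(bids):
    """Pick the winner of a first-price sealed-bid auction.

    bids maps each searcher to the amount they are willing to pay.
    Returns (winner, price); the winner pays exactly what they bid.
    Ties go to whichever bid was inserted first (an assumed rule).
    """
    if not bids:
        raise ValueError("no bids submitted")
    winner = max(bids, key=bids.get)
    return winner, bids[winner]


sealed_bids = {"searcher_a": 5, "searcher_b": 9, "searcher_c": 7}
winner, price = run_first_price_auction(sealed_bids)
```

Nothing here stops the operator from reading `sealed_bids`, reporting a different winner, or acting on the losing bids.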

A major shortcoming in this process is the need for a trusted party. As presented, there is a third party who is trusted for both (a) correct execution of the program (they've correctly declared the winner) and (b) maintaining the privacy of searchers' bids (neither sharing these values nor using this information for their own benefit).

Alternative constructions involve searchers submitting their bids directly to block builders but this introduces other problems (since builders can front-run, sandwich, or censor searchers' transactions at will). We won't be diving into the tradeoffs of that construction here but, if the topic interests you, we recommend listening to Interview with a Searcher 2.0.

When folks think of privacy tools, like FHE, they imagine how privacy can prevent MEV. Perhaps surprisingly, FHE also has the potential to enhance MEV extraction.

Reimagining the auction process

Can we improve the existing auction process? Ideally, this would mean:

- Anyone can verify that the auction ran correctly (we'll refer to this property as verifiability).

- Unsuccessful bids remain private to all parties (we'll refer to this property as privacy).

Imagine that auction participants (in our case searchers) now encrypt their bids—not with just any old public key encryption scheme, but using threshold fully homomorphic encryption. Specifically, the auction might work in the following way:[5]

- A set of parties jointly generate a public-private key pair such that each party (we'll refer to them as the threshold committee) holds a share of the private key (i.e. decryption key).

- Auction participants encrypt their bids using the public key and post their encrypted bids to a data availability layer or some sort of ledger (the choice of which is up to the system designers).[6]

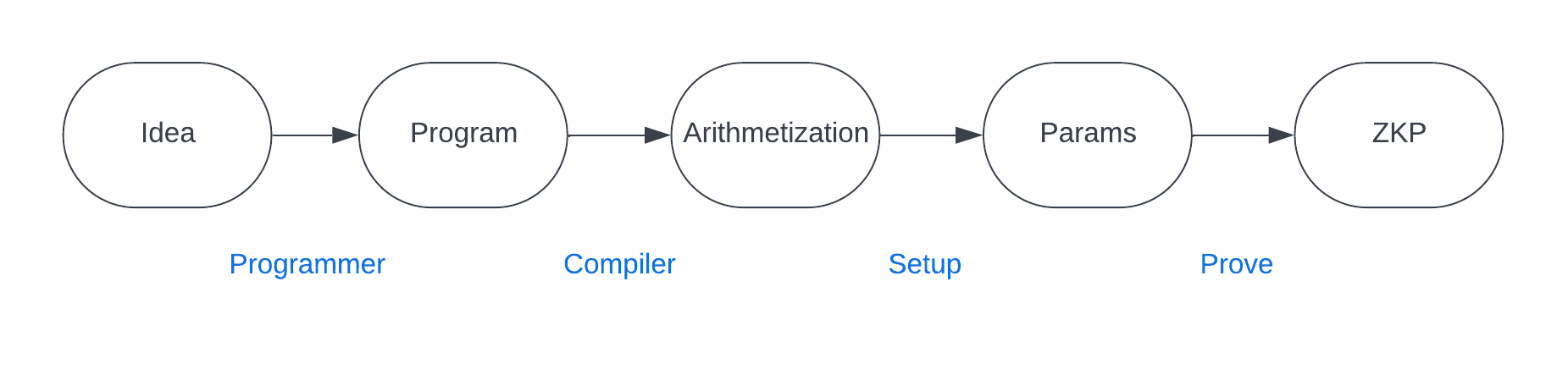

- Anyone can run the auction program (whether it's as simple as a first-price sealed-bid auction or something more complex) directly on the encrypted bids to determine who the winner should be (thanks to the homomorphic properties of the encryption scheme).

- Depending on the application's needs, there may be a set of specialized parties tasked with running the auction program at scale to guarantee certain performance requirements are met. For example, say we need to determine who placed the highest bid in sub-second time on ~100 bids with 32-bit precision; achieving this sort of performance may require fairly beefy hardware. There will need to be an appropriate mechanism for punishing these specialized computing parties if the program wasn't faithfully run (e.g. via slashing).

- Only the winning bid is decrypted by the threshold committee (who come together to reconstruct the private key) and the outcome is gossiped.
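To give a feel for what "running the auction program on encrypted bids" involves, here is a plaintext stand-in for the kind of boolean circuit a gate-based FHE scheme would evaluate. Every bit below is an ordinary 0 or 1; in an encrypted setting each bit would be a ciphertext and each gate a homomorphic operation. This is an illustrative sketch, not our actual circuit: the gate helpers, the 32-bit width, and the 8-bit winner index are all assumptions.

```python
BITS = 32  # assumed bid width


def to_bits(x, n=BITS):
    """Most-significant bit first."""
    return [(x >> i) & 1 for i in reversed(range(n))]


def from_bits(bits):
    value = 0
    for b in bits:
        value = (value << 1) | b
    return value


# The only operations the "evaluator" is allowed to use.
def AND(a, b): return a & b
def OR(a, b): return a | b
def XOR(a, b): return a ^ b
def NOT(a): return a ^ 1
def MUX(s, a, b): return OR(AND(s, a), AND(NOT(s), b))  # a if s else b


def greater_than(a_bits, b_bits):
    """Return 1 if a > b, scanning from the most-significant bit."""
    gt, eq = 0, 1
    for a, b in zip(a_bits, b_bits):
        gt = OR(gt, AND(eq, AND(a, NOT(b))))  # first differing bit decides
        eq = AND(eq, NOT(XOR(a, b)))
    return gt


def select(s, xs, ys):
    return [MUX(s, x, y) for x, y in zip(xs, ys)]


def highest_bid(bids, index_bits=8):
    """Return (winner_index, winning_bid) without branching on any bid value."""
    best_bid = to_bits(bids[0])
    best_idx = to_bits(0, index_bits)
    for i, bid in enumerate(bids[1:], start=1):
        candidate = to_bits(bid)
        s = greater_than(candidate, best_bid)
        best_bid = select(s, candidate, best_bid)
        best_idx = select(s, to_bits(i, index_bits), best_idx)
    return from_bits(best_idx), from_bits(best_bid)
```

Note that `highest_bid` never uses an `if` on a bid value; the comparison result `s` only ever feeds into further gates. That data-independent control flow is what lets the same program run on ciphertexts, and it is also why the cost grows with both the number of bids and the bit width.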

How does threshold FHE allow us to satisfy the conditions of verifiability and privacy? FHE allows anyone to run computations on encrypted data. Thus, anyone can run the auction program on the encrypted bids to determine the winning bid (without even knowing what any of the underlying values are!). The "threshold" aspect ensures that losing bids remain private to everyone (including those on the threshold committee!). Roughly speaking, to construct a threshold FHE scheme, we start with a single-key FHE scheme and then split the private key into shares which we'll distribute across a certain set of parties. Encryption and computation work the same as usual but decryption will require some pre-determined number t of the n total threshold committee members coming together to reconstruct the private key. For most blockchains, we assume that there is an honest majority of validators; we'll make a similar assumption for the threshold committee members when using threshold FHE.[7]
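The "t of n" idea can be illustrated with Shamir secret sharing, which we swap in here as a familiar stand-in; the text above does not say which sharing scheme a given threshold FHE construction uses. The sketch treats the private key as a single integer smaller than the field modulus, which is a simplification (real FHE keys are much larger structured objects). The function names and the choice of prime are assumptions.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime, used as the field modulus


def split_secret(secret, t, n):
    """Split `secret` into n shares such that any t of them recover it."""
    # Random polynomial of degree t-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(t - 1)]

    def evaluate(x):
        acc = 0
        for c in reversed(coeffs):  # Horner's rule
            acc = (acc * x + c) % PRIME
        return acc

    return [(x, evaluate(x)) for x in range(1, n + 1)]


def reconstruct(shares):
    """Lagrange-interpolate the polynomial at x = 0 to recover the secret."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


key = 123456789
shares = split_secret(key, t=3, n=5)
recovered = reconstruct(shares[:3])  # any 3 of the 5 shares work
```

With fewer than t shares the polynomial is underdetermined, so a minority of committee members learns nothing about the key. That is where the honest-majority assumption comes in.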