A while back, I developed a simple newsletter application to demonstrate telemetry integration in a real-world scenario. If you haven't already seen this post, I highly recommend checking it out. This application will now serve as a foundation for exploring a new feature in BullMQ. Version 5.16.0 introduced the Job Scheduler, designed to replace repeatable jobs. We'll be incorporating this functionality into our newsletter application.

Before diving into the implementation, let's understand why repeatable jobs are deprecated and explore the key differences between the old and new approaches.

Before:

- You add a repeatable job by passing a "repeat" option to Queue.add.

- You manage repeatable jobs with removeRepeatable or removeRepeatableByKey.

Now:

- You add a repeatable job by calling Queue.upsertJobScheduler.

- You manage repeatable jobs with removeJobScheduler or getJobSchedulers.

Similarities:

- Both schedulers add new jobs when the currently active job begins processing.

- A job will always remain in a delayed state as long as the scheduler continues to produce jobs.

- Both support custom repeat strategies, which must be configured in both the Worker and the Queue.

You can read more about why the new Job Schedulers API is much more useful than the old one here: https://docs.bullmq.io/guide/job-schedulers

Equipped with an understanding of the new job scheduler, let's integrate it into our project. Keep in mind that this is a theoretical example for learning purposes only. In real life, someone would need to have actually written a newsletter during the week; otherwise there would be nothing to send to subscribers. Moreover, in a simple use case like this you would most likely have only one job scheduler for all your users, since every newsletter is sent on the same day and at the same time of the week. In a more elaborate case, you might use different cron patterns for different users depending on their time zones.

The implementation will be straightforward, requiring minimal code modifications. Notably, the new job scheduler seamlessly integrates with the OpenTelemetry instrumentation we added earlier. We'll begin by updating the main service:

import { NodemailerInterface } from '../interfaces/nodemailer.interface';

import { Queue } from 'bullmq';

import config from '../config';

import SubscribedUserCrud from '../crud/subscribedUser.crud';

import { BullMQOtel } from 'bullmq-otel';

const { bullmqConfig } = config;

class NewsletterService {

private queue: Queue;

private subscribedUserCRUD: typeof SubscribedUserCrud;

private cronPattern = '0 0 12 * * 5';

constructor() {

this.queue = new Queue<NodemailerInterface>(bullmqConfig.queueName, {

connection: bullmqConfig.connection,

telemetry: new BullMQOtel('newsletter-tracer'),

});

this.subscribedUserCRUD = SubscribedUserCrud;

}

async subscribeToNewsletter(email: string) {

const subscribedUser = await this.subscribedUserCRUD.create(email);

if (!subscribedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Subscribed to newsletter',

text: 'You have successfully subscribed to a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

await this.startSendingWeeklyEmails(email);

return subscribedUser;

}

async unsubscribeFromNewsletter(email: string) {

const removedUser = await this.subscribedUserCRUD.delete(email);

if (!removedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Unsubscribed from a newsletter',

text: 'You have successfully unsubscribed from a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

const result = await this.stopSendingWeeklyEmails(email);

console.log(result ? `scheduler for email: ${email} removed` : `scheduler for email: ${email} not found`);

return removedUser;

}

/* Add this new method */

private async startSendingWeeklyEmails(email: string) {

await this.queue.upsertJobScheduler(

`${email}`,

{ pattern: this.cronPattern },

{

name: 'send-weekly-newsletter',

data: {

from: '[email protected]',

subject: 'weekly newsletter',

text: 'newsletter',

to: `${email}`,

}

}

);

}

/* Add this new method */

private async stopSendingWeeklyEmails(email: string) {

return this.queue.removeJobScheduler(`${email}`);

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

As you can see, we added two new methods: startSendingWeeklyEmails and stopSendingWeeklyEmails.

In the first method, we call upsertJobScheduler. We use the user's email address as the unique job scheduler ID. Then we define the schedule with a cron pattern, so that emails are sent every Friday at 12:00 (noon). Finally, we define the job template that the scheduler uses for each weekly job, which sends a simple email notification.
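
To make the pattern easier to read, here is a minimal sketch that labels the fields of a cron expression. It assumes the six-field form with a leading seconds field, which is how the pattern in the service is written, and it also accepts the five-field form. describeCron is a hypothetical helper written for this post, not part of BullMQ.

```typescript
// Hypothetical helper for illustration only; it is not part of BullMQ.
// It labels the fields of a cron expression, assuming an optional leading seconds field.
interface CronFields {
  second: string;
  minute: string;
  hour: string;
  dayOfMonth: string;
  month: string;
  dayOfWeek: string;
}

function describeCron(pattern: string): CronFields {
  const parts = pattern.trim().split(/\s+/);
  if (parts.length !== 5 && parts.length !== 6) {
    throw new Error(`Expected 5 or 6 cron fields, got ${parts.length}`);
  }
  // With five fields there is no seconds field, so assume second 0.
  const [second, minute, hour, dayOfMonth, month, dayOfWeek] =
    parts.length === 6 ? parts : ['0', ...parts];
  return { second, minute, hour, dayOfMonth, month, dayOfWeek };
}

const weeklyFields = describeCron('0 0 12 * * 5');
console.log(weeklyFields.hour, weeklyFields.dayOfWeek); // prints "12 5"
```

Hour 12 with day-of-week 5 reads as Friday at noon, which is the schedule the service uses.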

In the second method, we utilize the removeJobScheduler function to delete the recurring job based on its unique ID. For better insight, we've included a console log to confirm successful deletion.

We then integrated these methods by triggering them after the initial subscription email, providing users with immediate feedback. With that, our implementation is complete, and we can proceed to testing.
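
The behaviour we rely on here is that upserting the same scheduler ID twice leaves a single scheduler, and that removal reports whether anything was removed. The sketch below mimics that contract with an in-memory stand-in. InMemorySchedulers and the email address are hypothetical, and nothing here talks to Redis or BullMQ.

```typescript
// In-memory stand-in, for illustration only. It mimics the behaviour the service relies on:
// upserting the same scheduler ID twice keeps one scheduler, and removal reports
// whether a scheduler existed. It does not talk to Redis or BullMQ.
interface SchedulerEntry {
  pattern: string;
  jobName: string;
}

class InMemorySchedulers {
  private readonly entries = new Map<string, SchedulerEntry>();

  upsert(id: string, entry: SchedulerEntry): void {
    // Map.set creates the entry or overwrites the existing one.
    this.entries.set(id, entry);
  }

  remove(id: string): boolean {
    // Map.delete returns true only if the key was present.
    return this.entries.delete(id);
  }

  get size(): number {
    return this.entries.size;
  }
}

const schedulers = new InMemorySchedulers();
const subscriberEmail = 'reader@example.com'; // placeholder address
schedulers.upsert(subscriberEmail, { pattern: '0 0 12 * * 5', jobName: 'send-weekly-newsletter' });
schedulers.upsert(subscriberEmail, { pattern: '0 0 12 * * 5', jobName: 'send-weekly-newsletter' });
console.log(schedulers.size); // 1
console.log(schedulers.remove(subscriberEmail)); // true
console.log(schedulers.remove(subscriberEmail)); // false
```

This is why subscribing the same address again would not pile up duplicate weekly jobs, and why the unsubscribe path can log either "removed" or "not found".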

Send a POST request to /api/newsletter/subscribe. The console should display a clear indication that the scheduler successfully completed its task: "Completed job repeat:[email protected]:1732577580000 successfully".

Now, let's examine the Jaeger results:

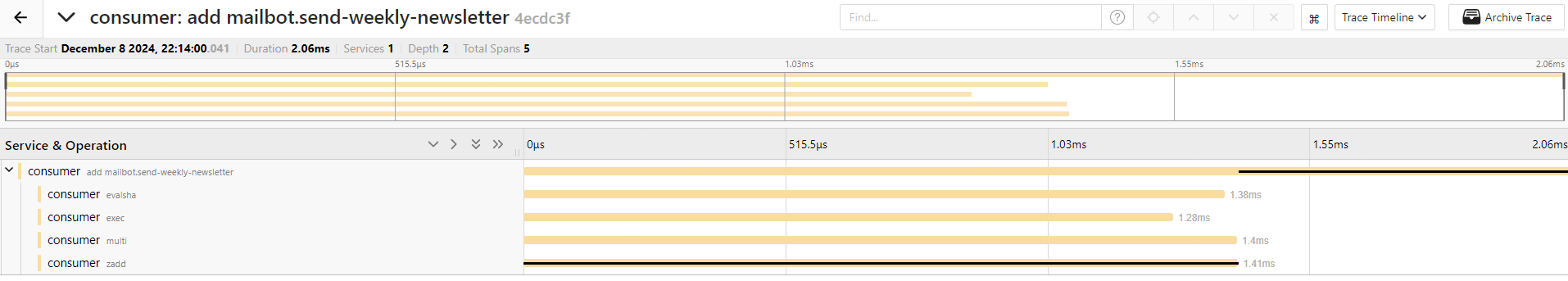

Let's tweak the code a bit. We're going to change the cron expression to make it easier to see how telemetry handles the scheduler. Change the pattern to * * * * *, which sends the newsletter every minute. Let's examine the results. Go to the menu on the left, change the service to consumer and the operation to add mailbot.send-weekly-newsletter, and hit search:

This will show us the output of the newsletter scheduler:

You'll notice a difference in the number of consumer services utilized between the two operations. Let's investigate the reason behind this discrepancy. Select the more recent trace to begin our analysis:

This trace shows how our scheduling mechanism works internally, revealing five distinct operations. One of them originates directly from BullMQ; the remaining four come from Redis, the in-memory data store that BullMQ uses to persist and manage job data. Let's look more closely at the BullMQ operation. Its job is to add a scheduled job in the "delayed" state, queuing it up for future execution. As we discussed earlier, a job scheduler keeps a job in the delayed state for as long as it continues to produce jobs, so there is always a job ready to be processed when the time comes. This job waits for one minute before it is promoted to the active state. Once active, it is picked up by a worker and processed.

Now, let's compare this to the older trace:

In this trace, we observe two additional operations, one from Redis and one from BullMQ. As expected, these operations are processed one minute later, aligning with the cron schedule we defined. Let's expand the process mailbot operation to examine its details:

Notice the new Logs label. Let's expand it to see what it contains:

This reveals an event generated by the application, indicating that the job has been successfully completed. Since the scheduler always maintains a delayed job, it immediately adds another job with the same configuration as the one we just examined, thus continuing the cycle.
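
The cycle described above can be sketched as a toy simulation. This is not BullMQ's implementation: SchedulerSim, its job ID format, and the timestamps are assumptions chosen only to mirror what the traces show, namely that a successor is added in the delayed state as soon as the current job becomes active.

```typescript
// Toy simulation, not BullMQ's implementation. It models one rule from the text:
// when the delayed job becomes active, the scheduler immediately adds the next delayed job.
type JobState = 'delayed' | 'active' | 'completed';

interface SimJob {
  id: string;
  runAt: number; // timestamp in milliseconds
  state: JobState;
}

class SchedulerSim {
  readonly jobs: SimJob[] = [];
  private readonly schedulerId: string;
  private readonly everyMs: number;

  constructor(schedulerId: string, everyMs: number, startAt: number) {
    this.schedulerId = schedulerId;
    this.everyMs = everyMs;
    this.addDelayed(startAt + everyMs);
  }

  private addDelayed(runAt: number): void {
    this.jobs.push({ id: `repeat:${this.schedulerId}:${runAt}`, runAt, state: 'delayed' });
  }

  // Advance the clock: promote a due job, schedule its successor, then complete it.
  advanceTo(now: number): void {
    const due = this.jobs.find((job) => job.state === 'delayed' && job.runAt <= now);
    if (!due) return;
    due.state = 'active';
    this.addDelayed(due.runAt + this.everyMs);
    due.state = 'completed';
  }

  countIn(state: JobState): number {
    return this.jobs.filter((job) => job.state === state).length;
  }
}

const sim = new SchedulerSim('weekly', 60_000, 0);
sim.advanceTo(60_000);
sim.advanceTo(120_000);
console.log(sim.countIn('completed'), sim.countIn('delayed')); // prints "2 1"
```

However many jobs complete, exactly one stays in the delayed state, which matches what we saw in Jaeger.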

Now, let's unsubscribe from the newsletter and observe the process. Send a POST request to /api/newsletter/unsubscribe. You should see a confirmation message in the console: scheduler for email: [email protected] removed, indicating a successful operation. The same confirmation can be found in Jaeger:

This concludes our exploration of the newsletter application, which only scratches the surface of what job schedulers offer. These powerful tools provide a wealth of additional functionality. For instance, we could leverage the advanced options available for recurring jobs, such as:

limit: lets us limit the number of executions:

queue.upsertJobScheduler(

`scheduler-id`,

{ limit: 10 },

{ jobData }

)

immediately: the job scheduler adds jobs in the delayed state, so this option ensures that the job runs as soon as possible, without a delay:

queue.upsertJobScheduler(

`scheduler-id`,

{ immediately: true },

{ jobData }

)

count: the start value of the repeat iteration count:

queue.upsertJobScheduler(

`scheduler-id`,

{ count: 2 },

{ jobData }

)

In the application we used a cron expression, but we can use every instead. This one repeats the job every 10 seconds:

queue.upsertJobScheduler(

`scheduler-id`,

{ every: 10000 },

{ jobData }

)

We can combine it with startDate and endDate to make sure the job repeats only after or before a certain date:

queue.upsertJobScheduler(

`scheduler-id`,

{

every: 10000,

startDate: new Date('2024-10-15T00:00:00Z'),

endDate: new Date('2024-10-16T00:00:00Z'),

},

{ jobData }

)

While this blog post covers the essentials of using job schedulers, there's much more to explore. For a comprehensive picture of their capabilities, check the official BullMQ documentation.

In this tutorial we are going to demonstrate how to use the new telemetry support built into BullMQ by creating a simple newsletter registration application.

In order to show the potential of telemetry, we want an application composed of several components that interact with each other. In this case we will have a standard ExpressJS server to handle HTTP requests for registering newsletter subscriptions, a BullMQ queue to handle the registrations, and a PostgreSQL database to store the subscribers and their statuses.

The idea is to be able to follow a request all the way from the incoming HTTP call to the database update.

Why should you read this?

If you're using BullMQ and want to improve your application's monitoring and troubleshooting capabilities, this blog post is for you. The new telemetry functionality and this guide will save you time and effort by providing a streamlined approach to instrumenting BullMQ with OpenTelemetry.

What you will learn:

- OpenTelemetry basics: Get a quick overview of OpenTelemetry and its core components (tracing, metrics, logs).

- Introducing the new package: Explore the features and benefits of the new package that simplifies OpenTelemetry integration with BullMQ.

- Step-by-step guide: Follow a practical tutorial to instrument your BullMQ queues and workers with OpenTelemetry.

Impatient? Jump to the solution:

If you prefer to dive straight into the code, check out the GitHub repository. Each chapter of the following tutorial is a separate branch and it will be linked accordingly.

Project Scaffold

To begin, let's set up our project. Start by creating a package.json file using the command npm init -y.

Next, install the necessary dependencies:

- tsx

- typescript

- dotenv

Now, let's configure TypeScript. Create a tsconfig.json file in your project's root directory, using the recommended settings from tsx.

{

"compilerOptions": {

"moduleDetection": "force",

"module": "Preserve",

"resolveJsonModule": true,

"allowJs": true,

"esModuleInterop": true,

"isolatedModules": true,

"experimentalDecorators": true,

"emitDecoratorMetadata": true

},

}

tsconfig.json

Next, create a src directory in the project's root directory. Inside the src directory, create an empty file named index.ts.

Express setup

With the project's foundation in place, let's set up the necessary files, including routes, controllers, and services. First, install the required dependencies:

- @types/express

- express

Now, let's populate the index.ts file with the following code:

import express from 'express';

import config from './config';

import router from './routes';

const app = express();

const port = config.port || 3000;

app.use(express.json());

app.use(router);

app.listen(port, () => {

console.log(`Listening ${port}`);

});

src/index.ts

This is the configuration file where we'll store all the essential values for the project:

import dotenv from 'dotenv';

dotenv.config();

export default {

port: process.env.PORT

};

src/config/index.ts

Router inside routes folder:

import express from 'express';

import newsletterRoutes from './newsletter.route';

const router = express.Router();

router.use('/api/newsletter', newsletterRoutes);

export default router;

src/routes/index.ts

import express from 'express';

import controllers from '../controllers';

const router = express.Router();

const {newsletterController} = controllers;

router.post('/subscribe', newsletterController.subscribeToNewsletter);

router.post('/unsubscribe', newsletterController.unsubcribeFromNewsletter);

export default router;

src/routes/newsletter.route.ts

Controller inside controllers folder:

import newsletterController from './newsletter.controller';

export default {

newsletterController

};

src/controllers/index.ts

import { Request, Response, NextFunction } from 'express';

import NewsletterService from '../services/newsletter.service';

class NewsletterController {

constructor() {

this.subscribeToNewsletter = this.subscribeToNewsletter.bind(this);

this.unsubcribeFromNewsletter = this.unsubcribeFromNewsletter.bind(this);

}

async subscribeToNewsletter(req: Request, res: Response, next: NextFunction) {

try {

const subscribedUser = await NewsletterService.subscribeToNewsletter(req.body.email);

if (!subscribedUser) {

return res.json({message: 'user already subscribed'});

}

return res.json(subscribedUser);

} catch(err) {

return next(err);

}

}

async unsubcribeFromNewsletter(req: Request, res: Response, next: NextFunction) {

try {

const unsubscribedUser = await NewsletterService.unsubcribeFromNewsletter(req.body.email);

if (!unsubscribedUser) {

return res.json({message: 'user is not a member of a newsletter'});

}

return res.json(unsubscribedUser);

} catch(err) {

return next(err);

}

}

}

export default new NewsletterController();

src/controllers/newsletter.controller.ts

Service for future logic inside services folder:

class NewsletterService {

constructor() {}

async subscribeToNewsletter(email: string) {

return false;

}

async unsubcribeFromNewsletter(email: string) {

return false;

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

Next, create an .env file to store the application's port:

PORT=3000

.env

The only remaining step is to add a script to run the application. In your package.json file, within the scripts section, add:

"start": "tsx ./src/index.ts"

package.json

We now have a basic project structure for newsletter subscriptions. This includes a dedicated route, a controller to manage various service outcomes, and an empty service that we'll implement later with the core logic.

Nodemailer setup

To implement the service, we need a way to send emails. We'll use Nodemailer for this purpose, as it's user-friendly and offers a free testing account where you can inspect outgoing emails through their UI. Guide for that is here!

Install:

- @types/nodemailer

- nodemailer

To connect to Nodemailer, add the following values to your .env file, referencing the guide above for specific instructions:

NODEMAILER_HOST='smtp.ethereal.email'

NODEMAILER_AUTH_USER=''

NODEMAILER_AUTH_PASS=''

NODEMAILER_PORT=587

.env

Then expose them through the config file:

import dotenv from 'dotenv';

dotenv.config();

export default {

port: process.env.PORT,

nodemailerConfig: {

host: process.env.NODEMAILER_HOST,

port: parseInt(process.env.NODEMAILER_PORT || '587'),

auth: {

user: process.env.NODEMAILER_AUTH_USER,

pass: process.env.NODEMAILER_AUTH_PASS

}

},

};

src/config/index.ts
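
Environment variables are always strings and may be unset, so numeric settings such as the port benefit from a little validation. Below is a minimal sketch of one way to do that; parsePort is a hypothetical helper, not something the project defines, and the fallback values are only examples.

```typescript
// Hypothetical helper: parse a port from an environment value, falling back to a default.
function parsePort(value: string | undefined, fallback: number): number {
  const parsed = Number.parseInt(value ?? '', 10);
  // parseInt yields NaN for missing or non-numeric input, so validate before using it.
  if (Number.isNaN(parsed) || parsed < 1 || parsed > 65535) {
    return fallback;
  }
  return parsed;
}

console.log(parsePort('587', 25)); // 587
console.log(parsePort(undefined, 587)); // 587
console.log(parsePort('smtp', 587)); // 587
```

In the config file this could be used as parsePort(process.env.NODEMAILER_PORT, 587), so a missing variable never reaches Nodemailer as NaN.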

With our Nodemailer credentials in place, let's integrate it into our application. Create a new directory named nodemailer within the src directory, and inside it, create an index.ts file:

import nodemailer from 'nodemailer';

import config from '../config';

const { nodemailerConfig } = config;

const transporter = nodemailer.createTransport({

host: nodemailerConfig.host,

port: nodemailerConfig.port,

auth: {

user: nodemailerConfig.auth.user,

pass: nodemailerConfig.auth.pass

}

});

export default transporter;

src/nodemailer/index.ts

This code will enable our service to send emails. Let's update the service accordingly:

import nodemailer from '../nodemailer';

class NewsletterService {

constructor() {}

async subscribeToNewsletter(email: string) {

await nodemailer.sendMail({

from: '[email protected]',

subject: 'Subscribed to newsletter',

text: 'You have successfully subscribed to a newsletter',

to: `${email}`,

});

return true;

}

async unsubcribeFromNewsletter(email: string) {

await nodemailer.sendMail({

from: '[email protected]',

subject: 'Unsubscribed from a newsletter',

text: 'You have successfully unsubscribed from a newsletter',

to: `${email}`,

})

return true;

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

With these changes, sending a POST request to /api/newsletter/subscribe with an email field, or to /api/newsletter/unsubscribe, will trigger a response and generate an email notification visible in the Nodemailer UI.
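
If you'd rather script the request than use an HTTP client UI, the sketch below builds it. The base URL assumes the server runs locally on port 3000 (the value from our .env file), the email address is a placeholder, and buildNewsletterRequest is a hypothetical helper.

```typescript
// Sketch of the request described above. The base URL assumes the server runs locally
// on the port from the .env file (3000); the email address is a placeholder.
interface NewsletterRequest {
  url: string;
  init: { method: 'POST'; headers: Record<string, string>; body: string };
}

function buildNewsletterRequest(
  action: 'subscribe' | 'unsubscribe',
  email: string,
  baseUrl = 'http://localhost:3000',
): NewsletterRequest {
  return {
    url: `${baseUrl}/api/newsletter/${action}`,
    init: {
      method: 'POST',
      // express.json() only parses bodies sent with a JSON content type.
      headers: { 'Content-Type': 'application/json' },
      body: JSON.stringify({ email }),
    },
  };
}

const subscribeRequest = buildNewsletterRequest('subscribe', 'reader@example.com');
console.log(subscribeRequest.url); // http://localhost:3000/api/newsletter/subscribe
// With the server running: await fetch(subscribeRequest.url, subscribeRequest.init);
```

The controller reads req.body.email, so the JSON body needs exactly that field.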

Postgres Setup

To persist user data, we'll utilize PostgreSQL. Install the following dependencies:

- pg

- typeorm

- @types/pg

Next, create a docker-compose.yaml file in the project root to run the database:

services:
  pg:
    image: postgres:16
    container_name: opentelemetry_bullmq_pg
    ports:
      - '5432:5432'
    environment:
      POSTGRES_USER: "${POSTGRES_USER}"
      POSTGRES_PASSWORD: "${POSTGRES_PASSWORD}"
      POSTGRES_DB: "${POSTGRES_DB}"

docker-compose.yaml

This configuration exposes the database port for external access and retrieves configuration values from the .env file.

POSTGRES_USER=

POSTGRES_PASSWORD=

POSTGRES_DB=

POSTGRES_HOST=127.0.0.1

POSTGRES_PORT=5432

.env

import dotenv from 'dotenv';

dotenv.config();

export default {

port: process.env.PORT,

nodemailerConfig: {

host: process.env.NODEMAILER_HOST,

port: parseInt(process.env.NODEMAILER_PORT || '587'),

auth: {

user: process.env.NODEMAILER_AUTH_USER,

pass: process.env.NODEMAILER_AUTH_PASS

}

},

postgresConfig: {

user: process.env.POSTGRES_USER,

pass: process.env.POSTGRES_PASSWORD,

db: process.env.POSTGRES_DB,

host: process.env.POSTGRES_HOST,

port: parseInt(process.env.POSTGRES_PORT || '5432')

}

};

src/config/index.ts

Run docker-compose up to ensure everything is working correctly. If the database starts successfully, proceed to create the model, CRUD operations, and setup file.

import { Entity, PrimaryGeneratedColumn, Column } from 'typeorm';

@Entity()

export class SubscribedUser {

@PrimaryGeneratedColumn('uuid')

id: string

@Column('text')

email: string

}

src/models/subscribedUser.model.ts

import PGClient from '../db';

import { SubscribedUser } from '../models/subscribedUser.model';

class SubcribedUserCRUD {

private pgClient: typeof PGClient.manager;

private model: typeof SubscribedUser = SubscribedUser;

constructor() {

this.pgClient = PGClient.manager;

}

async create(email: string) {

const exist = await this.read(email);

if (!!exist) {

return false;

}

const newSubscribedUser = new this.model();

newSubscribedUser.email = email;

return await this.pgClient.save(newSubscribedUser);

}

async read(email: string) {

return await this.pgClient.getRepository(this.model).findOneBy({

email: email

});

}

async delete(email: string) {

const subscribedUser = await this.read(email);

if (!subscribedUser) {

return false;

}

return await this.pgClient.getRepository(this.model).remove(subscribedUser);

}

}

export default new SubcribedUserCRUD();

src/crud/subscribedUser.crud.ts

import { DataSource } from 'typeorm';

import { SubscribedUser } from '../models/subscribedUser.model';

import config from '../config';

const { postgresConfig } = config;

class PGClient {

private client: DataSource;

constructor() {

this.client = new DataSource({

type: 'postgres',

host: postgresConfig.host,

port: postgresConfig.port,

username: postgresConfig.user,

password: postgresConfig.pass,

database: postgresConfig.db,

entities: [SubscribedUser],

synchronize: true,

logging: false

});

}

async init() {

try {

await this.client.initialize();

console.log('database connected');

} catch (err) {

console.log('database connection error: ', err)

}

}

async disconnect() {

await this.client.destroy();

}

get clientInstance() {

return this.client;

}

get manager() {

return this.client.manager;

}

}

export default new PGClient();

src/db/index.ts

Then update the project's main entry file, index.ts, to initialize the PostgreSQL connection:

import express from 'express';

import config from './config';

import router from './routes';

import pgClient from './db';

const app = express();

const port = config.port || 3000;

(async () => {

await pgClient.init();

})();

app.use(express.json());

app.use(router);

app.listen(port, () => {

console.log(`Listening ${port}`);

});

src/index.ts

This code defines a simple model with an email field and CRUD operations to create, read, and delete users for newsletter management. Now, let's update the service to utilize these functionalities:

import nodemailer from '../nodemailer';

import SubscribedUserCrud from '../crud/subscribedUser.crud';

class NewsletterService {

private subscribedUserCRUD: typeof SubscribedUserCrud;

constructor() {

this.subscribedUserCRUD = SubscribedUserCrud;

}

async subscribeToNewsletter(email: string) {

const subscribedUser = await this.subscribedUserCRUD.create(email);

if (!subscribedUser) {

return false;

}

await nodemailer.sendMail({

from: '[email protected]',

subject: 'Subscribed to newsletter',

text: 'You have successfully subscribed to a newsletter',

to: `${email}`,

});

return subscribedUser;

}

async unsubcribeFromNewsletter(email: string) {

const removedUser = await this.subscribedUserCRUD.delete(email);

if (!removedUser) {

return false;

}

await nodemailer.sendMail({

from: '[email protected]',

subject: 'Unsubscribed from a newsletter',

text: 'You have successfully unsubscribed from a newsletter',

to: `${email}`,

})

return removedUser;

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

BullMQ setup

Finally, let's integrate BullMQ. Install the necessary dependency:

- bullmq

Next, add a Redis service to your docker-compose.yaml file:

services:
  pg:
    image: postgres:16
    container_name: opentelemetry_bullmq_pg
    ports:
      - '5432:5432'
    environment:
      POSTGRES_USER: "${POSTGRES_USER}"
      POSTGRES_PASSWORD: "${POSTGRES_PASSWORD}"
      POSTGRES_DB: "${POSTGRES_DB}"
  redis:
    image: redis:latest
    container_name: opentelemetry_bullmq_redis
    ports:
      - '6379:6379'

docker-compose.yaml

import dotenv from 'dotenv';

dotenv.config();

export default {

port: process.env.PORT,

nodemailerConfig: {

host: process.env.NODEMAILER_HOST,

port: parseInt(process.env.NODEMAILER_PORT || '587'),

auth: {

user: process.env.NODEMAILER_AUTH_USER,

pass: process.env.NODEMAILER_AUTH_PASS

}

},

postgresConfig: {

user: process.env.POSTGRES_USER,

pass: process.env.POSTGRES_PASSWORD,

db: process.env.POSTGRES_DB,

host: process.env.POSTGRES_HOST,

port: parseInt(process.env.POSTGRES_PORT || '5432')

},

bullmqConfig: {

concurrency: parseInt(process.env.BULLMQ_QUEUE_CONCURRENCY || '1'),

queueName: process.env.BULLMQ_QUEUE_NAME || 'mailbot',

connection: {

host: process.env.BULLMQ_REDIS_HOST || 'redis',

port: parseInt(process.env.BULLMQ_REDIS_PORT || '6379'),

},

},

};

src/config/index.ts

To integrate queueing into our application, we need to make a few modifications. First, let's create an interface for Nodemailer. Create a new directory named interfaces:

export interface NodemailerInterface {

from: string;

to: string;

subject: string;

text: string;

}

src/interfaces/nodemailer.interface.ts

Next, modify the index.ts file within the nodemailer directory to export a job for processing emails:

import nodemailer from 'nodemailer';

import { Job } from 'bullmq';

import config from '../config';

import { NodemailerInterface } from '../interfaces/nodemailer.interface';

const { nodemailerConfig } = config;

const transporter = nodemailer.createTransport({

host: nodemailerConfig.host,

port: nodemailerConfig.port,

auth: {

user: nodemailerConfig.auth.user,

pass: nodemailerConfig.auth.pass

}

});

export default (job: Job<NodemailerInterface>) => transporter.sendMail(job.data);

src/nodemailer/index.ts

In the same directory, create a worker.ts file with a worker to consume the job. We'll add some helpful events and console logs to verify that everything is functioning as expected:

import { Worker } from 'bullmq';

import config from '../config';

import processor from './';

const { bullmqConfig } = config;

export function initWorker() {

const worker = new Worker(bullmqConfig.queueName, processor, {

connection: bullmqConfig.connection,

concurrency: bullmqConfig.concurrency,

});

worker.on('completed', (job) =>

console.log(`Completed job ${job.id} successfully`)

);

worker.on('failed', (job, err) =>

console.log(`Failed job ${job?.id} with ${err}`)

);

}

src/nodemailer/worker.ts

And modify service to use our queue system:

import { NodemailerInterface } from '../interfaces/nodemailer.interface';

import { Queue } from 'bullmq';

import config from '../config';

import SubscribedUserCrud from '../crud/subscribedUser.crud';

const { bullmqConfig } = config;

class NewsletterService {

private queue: Queue;

private subscribedUserCRUD: typeof SubscribedUserCrud;

constructor() {

this.queue = new Queue<NodemailerInterface>(bullmqConfig.queueName, {

connection: bullmqConfig.connection,

});

this.subscribedUserCRUD = SubscribedUserCrud;

}

async subscribeToNewsletter(email: string) {

const subscribedUser = await this.subscribedUserCRUD.create(email);

if (!subscribedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Subscribed to newsletter',

text: 'You have successfully subscribed to a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

return subscribedUser;

}

async unsubcribeFromNewsletter(email: string) {

const removedUser = await this.subscribedUserCRUD.delete(email);

if (!removedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Unsubscribed from a newsletter',

text: 'You have successfully unsubscribed from a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

return removedUser;

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

Additionally, create a file to initialize the worker:

import { initWorker } from './nodemailer/worker';

initWorker();

console.log('worker listening');

src/worker.ts

To run the worker, add a new script to your package.json file:

"start:worker": "tsx ./src/worker.ts"

package.json

With the queue system integrated, our application now stores jobs in Redis and processes them using the worker. You can run the application using the scripts we defined. First, start the worker:

npm run start:worker

Once the worker is initialized, start the main application:

npm run start

You can then test the application as before.
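
To picture what changed, here is a toy in-memory queue that separates the producer side (add) from the worker side (drain). It is only a sketch: TinyQueue is hypothetical, it runs in a single process with a synchronous processor, and it has none of BullMQ's persistence, concurrency, or retries.

```typescript
// Toy in-memory queue, for illustration only. BullMQ persists jobs in Redis and runs
// the worker in a separate process; here both sides live in one process.
interface MailJob {
  id: number;
  name: string;
  data: { to: string; subject: string };
}

class TinyQueue {
  private readonly pending: MailJob[] = [];
  private nextId = 1;

  // Producer side: enqueue and return immediately, without sending anything.
  add(name: string, data: MailJob['data']): MailJob {
    const job: MailJob = { id: this.nextId++, name, data };
    this.pending.push(job);
    return job;
  }

  // Worker side: take jobs in insertion order and hand them to the processor.
  drain(processor: (job: MailJob) => void): string[] {
    const log: string[] = [];
    let job = this.pending.shift();
    while (job) {
      try {
        processor(job);
        log.push(`Completed job ${job.id} successfully`);
      } catch (err) {
        log.push(`Failed job ${job.id} with ${err}`);
      }
      job = this.pending.shift();
    }
    return log;
  }
}

const mailQueue = new TinyQueue();
mailQueue.add('send-simple', { to: 'reader@example.com', subject: 'Subscribed to newsletter' });
mailQueue.add('send-simple', { to: '', subject: 'Subscribed to newsletter' });

const workerLog = mailQueue.drain((job) => {
  if (!job.data.to) throw new Error('missing recipient');
});
workerLog.forEach((line) => console.log(line));
// Completed job 1 successfully
// Failed job 2 with Error: missing recipient
```

The service now only enqueues; whether the email is actually sent, and what happens when sending fails, is the worker's concern.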

OpenTelemetry setup

Let's move on to the main part: integrating telemetry. First, install the required packages:

- @opentelemetry/instrumentation-express

- @opentelemetry/instrumentation-http

- @opentelemetry/instrumentation-ioredis

- @opentelemetry/instrumentation-pg

- @opentelemetry/sdk-metrics

- @opentelemetry/sdk-node

- @opentelemetry/sdk-trace-node

- bullmq-otel

bullmq-otel is the official library for seamlessly integrating OpenTelemetry with BullMQ. I'll demonstrate how to use it and create a setup file for OpenTelemetry shortly.

To begin, we need to update all instances where queues and workers are initialized, adding a new option to pass the BullMQOtel class:

import { Worker } from 'bullmq';

import config from '../config';

import processor from './';

import { BullMQOtel } from 'bullmq-otel';

const { bullmqConfig } = config;

export function initWorker() {

const worker = new Worker(bullmqConfig.queueName, processor, {

connection: bullmqConfig.connection,

concurrency: bullmqConfig.concurrency,

telemetry: new BullMQOtel('example-tracer')

});

worker.on('completed', (job) =>

console.log(`Completed job ${job.id} successfully`)

);

worker.on('failed', (job, err) =>

console.log(`Failed job ${job?.id} with ${err}`)

);

}

src/nodemailer/worker.ts

import { NodemailerInterface } from '../interfaces/nodemailer.interface';

import { Queue } from 'bullmq';

import config from '../config';

import SubscribedUserCrud from '../crud/subscribedUser.crud';

import { BullMQOtel } from 'bullmq-otel';

const { bullmqConfig } = config;

class NewsletterService {

private queue: Queue;

private subscribedUserCRUD: typeof SubscribedUserCrud;

constructor() {

this.queue = new Queue<NodemailerInterface>(bullmqConfig.queueName, {

connection: bullmqConfig.connection,

telemetry: new BullMQOtel('example-tracer')

});

this.subscribedUserCRUD = SubscribedUserCrud;

}

async subscribeToNewsletter(email: string) {

const subscribedUser = await this.subscribedUserCRUD.create(email);

if (!subscribedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Subscribed to newsletter',

text: 'You have successfully subscribed to a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

return subscribedUser;

}

async unsubcribeFromNewsletter(email: string) {

const removedUser = await this.subscribedUserCRUD.delete(email);

if (!removedUser) {

return false;

}

await this.queue.add('send-simple', {

from: '[email protected]',

subject: 'Unsubscribed from a newsletter',

text: 'You have successfully unsubscribed from a newsletter',

to: `${email}`,

});

console.log(`Enqueued an email sending`);

return removedUser;

}

}

export default new NewsletterService();

src/services/newsletter.service.ts

Next, let's set up OpenTelemetry. Create a new directory named instrumentation inside the src directory.

Within the instrumentation directory, create two files: instrumentation.producer.ts and instrumentation.consumer.ts. These files will handle instrumentation for producers and consumers, respectively:

import { NodeSDK } from '@opentelemetry/sdk-node';

import { PeriodicExportingMetricReader, ConsoleMetricExporter } from '@opentelemetry/sdk-metrics';

import { ConsoleSpanExporter } from '@opentelemetry/sdk-trace-node';

import { PgInstrumentation } from '@opentelemetry/instrumentation-pg';

import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';

import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';

import { IORedisInstrumentation } from '@opentelemetry/instrumentation-ioredis';

const sdk = new NodeSDK({

serviceName: 'producer',

traceExporter: new ConsoleSpanExporter(),

metricReader: new PeriodicExportingMetricReader({

exporter: new ConsoleMetricExporter(),

}),

instrumentations: [

new ExpressInstrumentation(),

new HttpInstrumentation(),

new PgInstrumentation(),

new IORedisInstrumentation()

],

});

sdk.start();

src/instrumentation/instrumentation.producer.ts

import { NodeSDK } from '@opentelemetry/sdk-node';

import { PeriodicExportingMetricReader, ConsoleMetricExporter } from '@opentelemetry/sdk-metrics';

import { ConsoleSpanExporter } from '@opentelemetry/sdk-trace-node';

import { PgInstrumentation } from '@opentelemetry/instrumentation-pg';

import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';

import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';

import { IORedisInstrumentation } from '@opentelemetry/instrumentation-ioredis';

const sdk = new NodeSDK({

serviceName: 'consumer',

traceExporter: new ConsoleSpanExporter(),

metricReader: new PeriodicExportingMetricReader({

exporter: new ConsoleMetricExporter(),

}),

instrumentations: [

new ExpressInstrumentation(),

new HttpInstrumentation(),

new PgInstrumentation(),

new IORedisInstrumentation()

],

});

sdk.start();

src/instrumentation/instrumentation.consumer.ts

This code configures OpenTelemetry with a basic setup. We've assigned a service name for easy identification of trace origins and enabled console output for all telemetry data. Additionally, we've included automatic instrumentation libraries for various parts of our application: HTTP, Express, PostgreSQL, and Redis.

You might wonder how this differs from the bullmq-otel instrumentation. The key distinction is that these libraries utilize monkey patching to observe the application, while bullmq-otel doesn't. Observability in BullMQ is achieved through direct source code integration, providing greater control, flexibility, and maintainability. Rest assured, these libraries work together seamlessly.

To utilize the instrumentation files, update the starting scripts in your package.json file:

"start": "tsx --import ./src/instrumentation/instrumentation.producer.ts ./src/index.ts",

"start:worker": "tsx --import ./src/instrumentation/instrumentation.consumer.ts ./src/worker.ts"

package.json

Now, run the application as before. You should see OpenTelemetry spans displayed in the console.

Jaeger setup

While the additional logs provide some insight into BullMQ's internal operations, to fully leverage the power of observability, we need a centralized system for storing and visualizing traces. This will allow us to generate insightful diagrams and understand the precise execution order of different application components. OpenTelemetry's popularity brings a wide array of options, both commercial and open source, for achieving this.

Using the console alone for observability can be overwhelming and difficult to interpret. A more effective approach is to utilize a dedicated tool like Jaeger, specifically designed for trace storage and visualization.

To export spans to Jaeger, we need to install the necessary packages:

- @opentelemetry/exporter-metrics-otlp-proto

- @opentelemetry/exporter-trace-otlp-proto

Now, update your docker-compose.yaml file with a new Jaeger service:

services:

pg:

image: postgres:16

container_name: opentelemetry_bullmq_pg

ports:

- '5432:5432'

environment:

POSTGRES_USER: "${POSTGRES_USER}"

POSTGRES_PASSWORD: "${POSTGRES_PASSWORD}"

POSTGRES_DB: "${POSTGRES_DB}"

redis:

image: redis:latest

container_name: opentelemetry_bullmq_redis

ports:

- '6379:6379'

jaeger:

image: jaegertracing/all-in-one:latest

container_name: opentelemetry_jaeger_redis

ports:

- '16686:16686'

- '4318:4318'

docker-compose.yaml

This configuration exposes two ports:

- 4318: The endpoint for exporting traces in protobuf format.

- 16686: The port for accessing the Jaeger UI.

Note that I'm sending telemetry to Jaeger as OTLP over HTTP with protobuf encoding, which requires exposing port 4318. If you're using a different transport, such as gRPC, you'll need to expose the appropriate port for it (4317 by default for OTLP over gRPC).

Now, let's update the instrumentation files:

import { NodeSDK } from '@opentelemetry/sdk-node';

import { PeriodicExportingMetricReader } from '@opentelemetry/sdk-metrics';

import { PgInstrumentation } from '@opentelemetry/instrumentation-pg';

import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';

import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';

import { IORedisInstrumentation } from '@opentelemetry/instrumentation-ioredis';

import { OTLPTraceExporter } from '@opentelemetry/exporter-trace-otlp-proto';

import { OTLPMetricExporter } from '@opentelemetry/exporter-metrics-otlp-proto';

const sdk = new NodeSDK({

serviceName: 'producer',

traceExporter: new OTLPTraceExporter({

url: 'http://127.0.0.1:4318/v1/traces'

}),

metricReader: new PeriodicExportingMetricReader({

exporter: new OTLPMetricExporter({

url: 'http://127.0.0.1:4318/v1/metrics'

}),

}),

instrumentations: [

new ExpressInstrumentation(),

new HttpInstrumentation(),

new PgInstrumentation(),

new IORedisInstrumentation()

],

});

sdk.start();

src/instrumentation/instrumentation.producer.ts

import { NodeSDK } from '@opentelemetry/sdk-node';

import { PeriodicExportingMetricReader } from '@opentelemetry/sdk-metrics';

import { PgInstrumentation } from '@opentelemetry/instrumentation-pg';

import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';

import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';

import { IORedisInstrumentation } from '@opentelemetry/instrumentation-ioredis';

import { OTLPTraceExporter } from '@opentelemetry/exporter-trace-otlp-proto';

import { OTLPMetricExporter } from '@opentelemetry/exporter-metrics-otlp-proto';

const sdk = new NodeSDK({

serviceName: 'consumer',

traceExporter: new OTLPTraceExporter({

url: 'http://127.0.0.1:4318/v1/traces'

}),

metricReader: new PeriodicExportingMetricReader({

exporter: new OTLPMetricExporter({

url: 'http://127.0.0.1:4318/v1/metrics'

}),

}),

instrumentations: [

new ExpressInstrumentation(),

new HttpInstrumentation(),

new PgInstrumentation(),

new IORedisInstrumentation()

],

});

sdk.start();

src/instrumentation/instrumentation.consumer.ts

Here, we've replaced the console exporter with OTLP, directing the telemetry data to Jaeger.

Run the starting scripts again and navigate to http://localhost:16686 in your browser.

In the left-hand menu, you'll find an option to view traces from various services. You should see at least two services listed: producer and consumer. Select producer and click Find Traces to view the observed BullMQ operations.

This view displays comprehensive information about the observed application components, starting from the initial HTTP call, through Express and PostgreSQL, to BullMQ and Redis.

The left-hand menu allows you to filter traces by their origin, such as by consumer:

...or by other operations specific to your application:

One of the powerful capabilities of telemetry is the ability to gain insights into various parameters passed within your application. This can be invaluable for debugging and further development:

Furthermore, if any errors occur, they will be displayed as special events within the span. You can expand the Logs section of a span to view detailed information about the error:

It's crucial to remember that spans must be explicitly ended before they are sent to the telemetry backend. This means that if a worker process crashes mid-process, preventing the span from being closed, the entire trace will be lost and won't appear in Jaeger (or any other telemetry system you might be using).

For instance, if a worker dies in our example application, the complete trace, from the initial HTTP call to the Redis operation, will not be saved. This is a critical limitation to be aware of to avoid misinterpreting missing traces.

And there you have it! You've successfully built a simple BullMQ application with OpenTelemetry integration.

I spent more time than I would like to admit trying to solve a problem I thought would be standard in the Docker world: passing a secret to Docker build in a CI environment (GitHub Actions, in my case).

This post is not about mounting a file with environment secrets, as there are already good posts about it (such as this one). Instead, I needed to pass an environment variable as a secret for building my Docker container using GitHub Actions.

The solution turned out to be simple, but with one limitation: you can only use one secret per RUN statement in the Dockerfile.

To build the Docker image, you can pass the secret from an environment variable like this:

docker build --secret id=env,env=MY_SECRET -t my_image .

This will take the environment variable MY_SECRET and allow the Dockerfile to mount it as a file.

For example, if we need to pass an npm token when installing the packages of a Node.js application, we would do the following:

RUN --mount=type=secret,id=env export MY_SECRET=$(cat /run/secrets/env) && \

yarn

This statement does two things:

- It takes the environment variable that was passed to the build command under the "env" ID and mounts it as a file, which by default is placed at /run/secrets/<id> (/run/secrets/env in this example).

- It assigns the contents of the file to an environment variable that is only available within that RUN statement (so you will need to mount the secret again if you need it in other places). This way yarn will have access to it during the installation of the Node.js packages.

Using this technique, you can mount several environment variables, but as far as I could figure out, you can only mount one per RUN statement. Maybe there is a way to overcome this limitation, but I did not have time to investigate further.

We have just released a new major version of BullMQ. As with all major versions, it includes some new features but also some breaking changes that we would like to highlight in this post. If you are using TypeScript (as we dearly recommend), you will get compiler errors if you are affected by these changes.

Better Rate-Limiter

The rate-limiter provided by the previous versions of BullMQ was functional and did its job. The rate-limiter was based on delayed jobs, so as soon as a queue became rate-limited, all the jobs would be moved to the delay set with a carefully calculated delay so that they would be picked up as soon as the rate-limiter had expired.

This approach worked for many scenarios, and also made possible the implementation of a rudimentary rate-limiter based on group ids. However, we were never fully satisfied with this solution. It seemed unnecessary to move jobs from one set to another, which in some degenerate cases would result in very high CPU usage due to jobs moving back and forth between these two sets.

Instead, we knew that the optimal solution would be to not perform any work as long as a queue was rate-limited. The problem was with the groups. Since we could not know if there were groups far away in the wait list, we could not just stop processing the queue, as it could be possible that there were jobs in groups that were not rate-limited yet.

In the end, we came to the conclusion that it was better to sacrifice the group-based limiter in favor of a much better and more robust global rate-limiter. The group rate limiter will still be available in a much better form and with more functionality in the Pro version.

Dynamic Rate-Limiter

A highly requested feature has been to be able to dynamically activate rate-limiting in a queue based on some condition that happened during the processing. For example, we can now activate the rate-limiter if an HTTP response included the 429 (Too Many Requests) code:

const rateLimitWorker = new Worker(

"rate-limit",

async (job) => {

const result = await callExternalAPI(job.data);

if (rateLimited(result)) {

await rateLimitWorker.rateLimit(result.expireTime);

throw Worker.RateLimitError();

}

}, { connection });

You can read this blog post with more details on how to implement rate-limiters with BullMQ.

Better typing for Backoff strategies

We made a small change in the way backoff strategies (how the delay is calculated when a job fails and should be retried) are defined. Instead of passing an object with the strategies keyed by strategy name, we have simplified it so you only pass a function callback.

const myWorker = new Worker("test", async () => {}, {

connection,

settings: {

backoffStrategy: (attemptsMade: number) => {

return attemptsMade * 500;

},

},

});

Replaced "cron" by the more generic "pattern"

Since the introduction of custom repeat strategies, we have used the option "pattern", that is generic for any kind of strategy. With this new version of BullMQ we finally remove the "cron" option, so if you are still using it, please just rename it to "pattern" when migrating to version 3.0.

await myQueue.add(

'submarine',

{ color: 'yellow' },

{

repeat: {

pattern: '* 15 3 * * *',

},

},

);

As the communication between microservices increases and becomes more complex, we often have to deal with limitations on how fast we can call internal or external APIs. When the services are distributed and scaled horizontally, we find that limiting the speed while preserving high availability and robustness can become quite challenging.

In this post, we will describe strategies that are useful when dealing with processes that need to be rate-limited using BullMQ.

What is rate limiting?

When we talk about rate limiting we often refer to the requirement of a given service to not be called too fast or too often. The reason is that, in practice, all services have limitations, and by imposing a rate limit they protect themselves from going down under traffic that they are simply not designed to cope with.

As a consumer of a service that is rate limited, we need to comply with the service's constraints to avoid the risk of being banned or rate limited even harder.

A basic rate-limiter

Let's say that we have some service from which we want to consume some data by calling an API. The service specifies a limit on how fast we are allowed to call its API. The limit is usually specified in requests per second. So let's say for the sake of this example that we are only allowed to perform up to 300 requests per second.

Using BullMQ we can achieve this requirement using the following worker:

import { Worker } from "bullmq";

const rateLimitWorker = new Worker(

"rate-limit",

async (job) => {

await callExternalAPI(job.data);

},

{

connection: { host: "my.redis-host.com" },

limiter: { max: 300, duration: 1000 },

}

);

The worker above will call the API at most 300 times per second. So now we can add as many jobs as we want using the code below, and we will have the guarantee that we will not call the external API more often than allowed.

import { Queue } from "bullmq";

const rateLimitQueue = new Queue("rate-limit", {

connection: { host: "my.redis-host.com" },

});

await rateLimitQueue.add("api-call", { foo: "bar" });

Since we are using queues, we would like to get some extra robustness in case the call to the external API fails for any reason. In BullMQ, retries are configured on the jobs rather than on the worker, so we can set default retry options on the queue to handle temporary failures as well. The worker keeps the same options as before:

import { Queue } from "bullmq";

const rateLimitQueue = new Queue("rate-limit", {

connection: { host: "my.redis-host.com" },

defaultJobOptions: {

attempts: 5,

backoff: {

type: "exponential",

delay: 1000,

},

},

});

The job will now be retried if the API call fails. Since we used the "exponential" back-off with five attempts in total, there will be up to four retries, after roughly 1, 2, 4, and 8 seconds. If the job still fails after the last attempt, it will be marked as failed. You can still inspect the failed jobs (or retry them manually), either using the Queue API of BullMQ or using a dashboard such as Taskforce.sh.
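To get a feeling for how an exponential back-off schedule grows, here is a minimal sketch. It assumes the delay simply doubles on every retry, that is `delay * 2^(retry - 1)`; the exact formula may differ between BullMQ versions, and `exponentialDelays` is a hypothetical helper, not part of the library.

```typescript
// Sketch of an exponential back-off schedule.
// Assumption: the wait before retry n is baseDelayMs * 2^(n - 1).
function exponentialDelays(baseDelayMs: number, retries: number): number[] {
  const delays: number[] = [];
  for (let retry = 1; retry <= retries; retry++) {
    delays.push(Math.round(baseDelayMs * Math.pow(2, retry - 1)));
  }
  return delays;
}

// Five attempts in total means one initial attempt plus up to four retries.
console.log(exponentialDelays(1000, 4)); // [ 1000, 2000, 4000, 8000 ]
```

Under this assumption, a job that keeps failing would be given up after about 15 seconds of accumulated waiting.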

Scaling up

The avid reader may have discovered an issue in the example above. If the API call takes, for example, 1 second to complete, then even though we have a rate-limiter of 300 requests per second, we would just perform 1 request per second, since only one job will be processed at any given time.

Thankfully we can easily increase the concurrency of our worker by specifying the concurrency factor. Let's crank it up to 500 in this case, which means that at most 500 calls will be processed in parallel (but no more than 300 per second):

import { Worker } from "bullmq";

const rateLimitWorker = new Worker(

"rate-limit",

async (job) => {

await callExternalAPI(job.data);

},

{

connection: { host: "my.redis-host.com" },

concurrency: 500,

limiter: { max: 300, duration: 1000 },

}

);

When the work to be done is mostly IO, it is efficient to increase the concurrency factor as much as we did in this example. If, on the other hand, the work had been more CPU intensive, like for example transcoding an image from one format to another, then we would certainly not use such a large concurrency value, since it would only add overhead without any gains in performance.

In order to increase performance on a CPU bound job, it is more effective to increase the number of workers instead, as we get more CPUs to do the actual work.
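The reasoning behind the concurrency value for IO-bound work can be checked with some rough arithmetic. The sketch below is only an approximation, assuming every job takes the same amount of time; `effectiveThroughput` is a hypothetical helper and not part of BullMQ.

```typescript
// Rough upper bound on jobs per second for a single worker.
// Assumption: every job takes exactly `jobSeconds` to complete.
function effectiveThroughput(
  concurrency: number,
  jobSeconds: number,
  limitPerSecond: number
): number {
  // With N parallel slots and jobs of T seconds, at most N / T jobs finish per second.
  const byConcurrency = concurrency / jobSeconds;
  // The rate-limiter caps whatever the concurrency would allow.
  return Math.min(byConcurrency, limitPerSecond);
}

console.log(effectiveThroughput(1, 1, 300)); // 1
console.log(effectiveThroughput(500, 1, 300)); // 300
```

With a concurrency of 1 the worker is the bottleneck, whereas with a concurrency of 500 the rate-limiter becomes the limiting factor, which is what we want.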

Adding redundancy

We have so far one single worker with a lot of concurrency. The worker will process jobs as fast as 300 requests per second, and retry in the case of failure. This is quite robust, but oftentimes you need even more robustness. For instance, the worker may go offline, and in that case the jobs will stop processing. The queue will grow as new jobs are added to it, but none will be processed until the worker comes back online.

The simplest solution to this problem is to spawn more workers such as the one shown above. You can have as many workers running in parallel on different machines as you want. With the added redundancy, you minimize the risk of not processing your jobs in time. Also, for jobs that require a lot of CPU, having several workers will increase the throughput of your queue.

Smoothing out requests

When defining a rate-limiter as we did in our example above, by specifying a number of requests per second, the workers will always try to process as fast as possible until they get rate limited. This means that the 300 requests may all be executed almost at once, and then the workers will mostly idle for the rest of the second, after which they will once again perform a very fast burst of calls.

This behaviour may not be the most desirable one in many cases. Fortunately, it is quite easy to fix. Instead of defining the duration of the rate-limiter in seconds, we can divide one second by the number of jobs, so that the workers process jobs during the whole second instead of in bursts.

So for example, in our case where we limited to 300 jobs per second, we can instead write the limiter max value as 1 and the duration like this: 1000 / 300 = 3.33:

{

limiter: {

max: 1,

duration: 3.33

}

}

With such a rate-limiter, the jobs will now be processed at regular 3.33ms intervals, instead of being processed in bursts.
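The conversion from a bursty limiter to a smoothed one is plain division, and can be sketched as a small helper. `smoothLimiter` and `LimiterOptions` are hypothetical names used only for illustration; whether a fractional duration is accepted as-is by your BullMQ version is something to verify, so you may want to round the result.

```typescript
interface LimiterOptions {
  max: number;
  duration: number; // milliseconds
}

// Turns "max jobs per durationMs" into "one job per interval".
function smoothLimiter(max: number, durationMs: number): LimiterOptions {
  if (max <= 0) {
    throw new RangeError("max must be positive");
  }
  return { max: 1, duration: durationMs / max };
}

const limiter = smoothLimiter(300, 1000);
console.log(limiter.duration.toFixed(2)); // 3.33
```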

Dynamic rate-limit

There are occasions when a fixed rate-limit is not enough. For example, some services rate limit you based on more complex rules, and your HTTP call will return a 429 (Too Many Requests) response. In this case the service will tell you how long you need to wait until you are allowed to perform the next call. For example, in the response headers we can find something like this:

Retry-After: 3600

BullMQ also supports dynamic rate-limiting, by signalling in the processor that a given queue is rate limited for some specified time:

const rateLimitWorker = new Worker(

"rate-limit",

async (job) => {

const result = await callExternalAPI(job.data);

if (rateLimited(result)) {

await rateLimitWorker.rateLimit(1000);

throw Worker.RateLimitError();

}

},

{

connection: { host: "my.redis-host.com" },

concurrency: 500,

limiter: { max: 300, duration: 1000 },

}

);

In this example, we assume that we get some result from the call to the external API that tells us that we should be rate limited. So we call the "rateLimit" method in the worker specifying, in milliseconds, how much to wait until we can process a job again.

We can additionally throw a special exception that tells the worker that this job has been rate limited, so we should process it again when the rate limit time has expired.
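Since "rateLimit" expects milliseconds while a Retry-After header carries either a number of seconds or an HTTP date, a small conversion step is needed in between. The following is a minimal sketch of such a conversion; `retryAfterToMs` is a hypothetical helper, and how you read the header from your HTTP client is left out on purpose.

```typescript
// Converts a Retry-After header value into milliseconds to wait.
// The header holds either a number of seconds or an HTTP date.
function retryAfterToMs(
  value: string,
  now: number = Date.now()
): number | undefined {
  const trimmed = value.trim();
  if (/^\d+$/.test(trimmed)) {
    return Number(trimmed) * 1000;
  }
  const date = Date.parse(trimmed);
  if (Number.isNaN(date)) {
    return undefined; // unparseable value, let the caller pick a default
  }
  return Math.max(0, date - now);
}

console.log(retryAfterToMs("3600")); // 3600000
```

The returned value could then be passed to the worker's "rateLimit" method instead of a hard-coded number.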

Groups

If you require even more advanced rate limit functionality, the professional version of BullMQ provides support for groups.

Groups are necessary when you want to provide a separate rate-limiter to a given subset of jobs. For example, you may have a Queue where you put jobs for all the users in your system. If you used a global rate-limiter for the queue, then some users may get to use more of the system resources than others, as all are affected by the same limiter.

Using groups, however, every user could have their own separate rate-limiter, and therefore they would not be affected by other users' high volume of work, as the busy users will be rate limited independently of the less "busy" users.

Furthermore, it is also possible to combine a global rate-limit with the per-group rate-limiter, so even in the case when you have a lot of jobs for many users all at the same time, you can still limit the total overall amount of jobs that get to be processed per unit of time.

const rateLimitWorker = new Worker(

"rate-limit",

async (job) => {

await callExternalAPI(job.data);

},

{

connection: { host: "my.redis-host.com" },

concurrency: 500,

limiter: { max: 300, duration: 1000 },

groups: {

limit: {

max: 20,

duration: 1000,

},

},

}

);

For more information about groups check the documentation.

BullMQ has reached version 2.0, which according to the "SemVer" standard means that we have introduced some breaking changes.

It is always an extra effort to deal with breaking changes, so we try to minimize them as much as we can. There are normally 2 types of possible breaking changes in BullMQ:

- Changes that affect the API, or functional behaviour of the library.

- Changes that affect the structure of the data stored in Redis.

In this version we only made breaking changes of the first type, so as long as your TypeScript code compiles after upgrading, you should be all set.

Without further ado let's review the changes which will affect your current codebase if you want to upgrade.

QueueScheduler has been removed

The class that took care of delayed and stalled jobs has been removed from the library and is no longer necessary. We have implemented a simpler mechanism for handling delayed jobs (in a separate post I will go through the details of this mechanism), and also moved the stalled jobs checker to the workers themselves.

This change not only makes your codebase simpler, but also consumes fewer connections and removes a moving part that, honestly, has always been like a stone in my shoe, although until now we did not have a good way to get rid of it.

With the development of the groups functionality in BullMQ Pro, however, we discovered a trick that could also be applied to how delayed jobs were handled, so after some redesign we were able to implement it and ultimately get rid of the QueueScheduler altogether.

The "Added" event does not contain data and options anymore

Prior to version 2.0 we were storing the data and opts of the job on the "added" event. This was for most users just a waste of memory and CPU, so we are now only sending the job id, and if you need the data for your specific use case you can just get it from the job.

Compatibility layer with legacy Bull removed

This layer was conceived to be used if you wanted to upgrade from a Bull codebase to BullMQ without needing to use the newer classes. But as far as we are aware, it was not used by anyone (not a single issue or question since BullMQ was released 3 years ago), so we decided to remove it to make the codebase smaller.

Minimum Redis version recommended is now 6.2

We have raised the minimum recommended version of Redis to 6.2. Although version 5.0+ will kind of work, it is highly recommended that you upgrade to at least version 6, since with the removal of the QueueScheduler we now need the timeout argument of the BRPOPLPUSH command to be interpreted as a "double". If you are still on version 5.0, delayed jobs will sometimes be delayed more than they should, as the timeout cannot represent exactly the remaining delay to the next job.

We also made some smaller fixes here and there, you can find the complete changelog here. As always, we will keep fixing issues and adding new features until we reach the next major version of BullMQ.

Divide and conquer is a well-known programming technique for attacking complex problems in an easier way. In a distributed environment it can be used to accelerate long processes by dividing them into small chunks of work and then joining the results together.

BullMQ provides a powerful feature called "flows" that allows a parent job to depend on several children. In this blog post, we are going to show how this feature can be used to divide a long CPU-intensive job (video transcoding), into smaller chunks of work that can be parallelized and processed faster.

All the code for this blog post can be found here. Note that this code is written for a tutorial and not really a production standard.

Video transcoding

Transcoding of videos is a common operation on today's internet as videos that are uploaded to sites are often not in a suitable format for being reproduced on a webpage. The most common transcoding is performed to produce a standard format (often mp4) with a standard resolution and compression quality.

But transcoding is a quite CPU-demanding operation, and the time to transcode a video is normally proportional to the length of the video, so the process can become quite lengthy if the videos are really long.

Using the excellent video handling library FFmpeg we can transcode the videos from almost any known format to any other format. FFmpeg also allows us to quickly split a video into parts and also join the parts together. We will use these capabilities in order to implement a faster transcoding service using BullMQ flows.

Flow structure

We will create a flow with 3 levels, where every level is handled by a queue and a specific worker.

The first queue will take the input file, divide it into chunks, and add a new job to the second queue for every chunk, as well as a job to a parent queue that will be processed when all the chunks have been processed.

Here is a diagram depicting the whole structure:

File storage

For this tutorial we will, for simplicity, just use the local file storage. In a production scenario, however, we should use a distributed storage system such as S3 or similar so that all workers can access all data regardless of where they run.

Worker Factory

Since we are going to use several queues, we will refactor the worker creation into its own function. This function will also create a queue scheduler so that we get automatic job retries and stalled jobs handling if needed. We will attach a couple of listeners in order to get some feedback when the jobs are completed or failed.

For this application, even though it is very CPU intensive we will not use "sandboxed" processors because the call to FFmpeg is already spawning a new process, so using sandboxed processors would just increase the overhead.

Also note that although in this example we only use 1 worker per queue, in a production system we could have as many workers as we wish in order to process more videos faster.

import { Worker, QueueScheduler, Processor, ConnectionOptions } from "bullmq";

export function createWorker(

name: string,

processor: Processor,

connection: ConnectionOptions,

concurrency = 1

) {

const worker = new Worker(name, processor, {

connection,

concurrency,

});

worker.on("completed", (job, err) => {

console.log(`Completed job on queue ${name}`);

});

worker.on("failed", (job, err) => {

console.log(`Failed job on queue ${name}`, err);

});

const scheduler = new QueueScheduler(name, {

connection,

});

return { worker, scheduler };

}

Splitter Queue

The process starts with a queue "splitter" where a worker will take the video input and split it into similar-sized parts that can later be transcoded in parallel. Since the whole point of splitting is to accelerate the transcoding process, we need the split to be very fast. For this we can use the following FFmpeg flags: "-c copy -map 0 -segment_time 00:00:20 -f segment". They will split the file into chunks of around 20 seconds without re-encoding; more information can be found on Stack Overflow. It is possible that other chunk sizes are more optimal, but for this example, 20 seconds is good enough.

After the video file has been split we need to parse FFmpeg output to get the filenames of every chunk, and with this information create a BullMQ flow that will process every chunk, and when all chunks are processed a "parent" worker will concatenate the transcoded chunks into one output file.

export default async function (job: Job<SplitterJob>) {

const { videoFile } = job.data;

console.log(

`Start splitting video ${videoFile} using ${pathToFfmpeg}`,

job.id,

job.data

);

// Split the video into chunks

const chunks = await splitVideo(videoFile, job.id!);

await addChunksToQueue(chunks);

}

async function splitVideo(videoFile: string, jobId: string) {

// Split the video into chunks

// around 20 seconds chunks

const stdout = await ffmpeg(

resolve(process.cwd(), videoFile),

"-c copy -map 0 -segment_time 00:00:20 -f segment",

resolve(process.cwd(), `output/${jobId}-part%03d.mp4`)

);

return getChunks(stdout);

}

function getChunks(s: string) {

const lines = s.split(/\n|\r/);

const chunks = lines

.filter((line) => line.startsWith("[segment @"))

.map((line) => line.match("'(.*)'")[1]);

return chunks;

}

async function addChunksToQueue(chunks: string[]) {

const flowProducer = new FlowProducer();

return flowProducer.add({

name: "transcode",

queueName: concatQueueName,

children: chunks.map((chunk) => ({

name: "transcode-chunk",

queueName: transcoderQueueName,

data: { videoFile: chunk } as SplitterJob,

})),

});

}
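The step that extracts chunk filenames from the FFmpeg output can be tried in isolation. Below is a minimal sketch of the same idea with a guard for lines that do not contain a quoted filename. `parseSegmentFiles` is a hypothetical name, and the sample log lines are an assumption about what the segment muxer prints, so the real output may look different.

```typescript
// Extracts the quoted file names from FFmpeg segment log lines.
function parseSegmentFiles(output: string): string[] {
  const files: string[] = [];
  for (const line of output.split(/\r?\n|\r/)) {
    if (!line.startsWith("[segment @")) {
      continue;
    }
    const match = line.match(/'(.*)'/);
    if (match) {
      files.push(match[1]);
    }
  }
  return files;
}

// Hypothetical sample output, for illustration only.
const sample = [
  "[segment @ 0x7f8] Opening 'output/1-part000.mp4' for writing",
  "frame=  480 fps=0.0 q=-1.0 size=N/A",
  "[segment @ 0x7f8] Opening 'output/1-part001.mp4' for writing",
].join("\n");

console.log(parseSegmentFiles(sample));
// [ 'output/1-part000.mp4', 'output/1-part001.mp4' ]
```

Skipping lines without a match avoids a runtime error if FFmpeg ever prints a segment line in a different shape.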

Transcoder Queue

In this queue, we add the chunks that are part of some video file. Note that the worker of this queue does not need any other information than the chunk file itself so it can just focus on performing the transcoding of that chunk as efficiently as possible. In fact, this worker is quite simple compared to the splitter worker:

export default async function (job: Job<SplitterJob>) {

const { videoFile } = job.data;

console.log(`Start transcoding video ${videoFile}`);

// Transcode video

return transcodeVideo(videoFile, job.id!);

}

async function transcodeVideo(videoFile: string, jobId: string) {

const srcName = basename(videoFile);

const output = resolve(process.cwd(), `output/transcoded-${srcName}`);

await ffmpeg(

resolve(process.cwd(), videoFile),

"-c:v libx264 -preset slow -crf 20 -c:a aac -b:a 16k -vf scale=320:240",

output

);

return output;

}

It transcodes the input to an output file and returns the path to that file.

Concat Queue

The last queue is the parent of the "transcoder" queue, and as such will start processing its job as soon as all the children have completed. In order to concatenate all the files we need to generate a special text file with all the filenames and feed it to FFmpeg:

async function concat(jobId: string, files: string[], output: string) {

const listFile = resolve(process.cwd(), `output/${jobId}.txt`);

await new Promise<void>((resolve, reject) => {

writeFile(

listFile,

files.map((file) => `file '${file}'`).join("\n"),

(err) => {

if (err) {

reject(err);

} else {

resolve();

}

}

);

});

await ffmpeg(null, `-f concat -safe 0 -i ${listFile} -c copy`, output);

return output;

}

With this last worker, we conclude the whole process. I hope this simple video example is useful to illustrate how jobs can be split in order to reduce the time that lengthy operations take. The complete source code can be found here.
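As a footnote to the concat step: the parent job has to gather the file paths returned by its children before calling `concat`. In BullMQ a parent can read its children's return values with `job.getChildrenValues()`, which resolves to an object keyed by job key. The sketch below shows one way to turn such an object into the list file content. The helper name `buildConcatList` is hypothetical, and sorting by path assumes that the chunk file names sort in playback order (for example, zero-padded indexes).

```typescript
// Shape of what a parent job could obtain from its children:
// job key -> value returned by the child (here, the transcoded file path).
type ChildrenValues = Record<string, string>;

// Build the text that FFmpeg's concat demuxer reads: one "file '<path>'" line per chunk.
// Assumption: sorting the paths alphabetically yields the playback order.
function buildConcatList(children: ChildrenValues): string {
  return Object.keys(children)
    .map((key) => children[key])
    .sort()
    .map((file) => `file '${file}'`)
    .join("\n");
}
```

The explicit sort matters because the children finish in whatever order the workers happen to complete them.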

A common pattern that arises when working with queues in general, and BullMQ in particular, is waiting for a job to complete. The reasoning goes that if the job is doing something useful, we need to wait for it to complete so that we can get the result of its work.

So an intuitive way to code this behaviour would be something like this:

const job = await queue.add("my-job", { foo: 'bar' });

// waitUntilFinished needs a QueueEvents instance for the same queue

const result = await job.waitUntilFinished(queueEvents);

doSomethingWithResult(result);

This is intuitive because it follows a sequence of actions, and it is what you may be used to doing in a single-process, sequential program. However, when using distributed queues in a highly fault-tolerant system, you do not want to solve the problem like this.

First of all, the code above does not provide any guarantees that the call to "doSomethingWithResult(result)" will ever be performed successfully, and if it fails, the result of that job will be lost forever.

Secondly, we do not get any guarantees on how long it will take for a given job to complete. The point of the queue in the first place was to offload jobs to workers, so it could very easily be the case that the workers are quite busy and the duration of the call to "waitUntilFinished" is unacceptable. You could add a timeout, but that would also make us lose that job's result forever.

Finally, it is much less efficient. Imagine that for every job you add to the queue you are also creating a promise and listeners and waiting for them to resolve. If you are adding many jobs per second, this will be a slow way to handle them and will most likely become a performance and memory bottleneck.

So "waitUntilFinished" has very limited use. It is mostly used in test code, and not in production code unless the above limitations do not matter for your specific case. Most likely you will be better off using a different approach.
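The point about timeouts can be made concrete with a small sketch in which a plain promise stands in for the job (no BullMQ involved, and `withTimeout` is a hypothetical helper). The timeout only stops the waiting: the work still finishes later, but nobody is left to receive its result.

```typescript
// Reject if `work` does not settle within `ms` milliseconds.
// Note that `work` itself is not cancelled; only the waiting stops.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(
      () => reject(new Error(`Timed out after ${ms} ms`)),
      ms
    );
    work.then(
      (value) => {
        clearTimeout(timer);
        resolve(value);
      },
      (err) => {
        clearTimeout(timer);
        reject(err);
      }
    );
  });
}
```

If the caller gives up after the timeout, the value that `work` eventually produces is simply dropped unless something else persists it.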

How to do it instead?

The solution is to think about completing jobs in a different way. You have to consider that once you add a job to a queue, the "producer" of that job must let go. Now the job will live its own life processed by one of the potentially hundreds of workers running in parallel.

Let's illustrate this different way of thinking with a couple of examples.

HTTP Call that must return the job result

This one is quite a common case where you have for example a POST HTTP call to a service, the service will do some heavy work so you want to offload this job to a queue and when the job completes return this result to the caller.

If these are your requirements, then you will need to actually change them a little bit because waiting for a job to complete inside an HTTP handler does not scale and suffers from all the problems highlighted above.

Instead, what you typically do in this case is break the call into two (or more) calls.
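Seen from the caller's side, this split could look roughly like the sketch below. The `submit` and `getStatus` functions are hypothetical stand-ins for whatever HTTP client wraps the two endpoints, and the final state names "completed" and "failed" are assumed to be what the status endpoint reports.

```typescript
// Hypothetical transport functions: in a real client these would wrap
// the "enqueue" call and the "status" call with an HTTP library.
type SubmitJob = (payload: unknown) => Promise<string>; // resolves to the job ID
type GetStatus = (jobId: string) => Promise<string>; // resolves to a state name

const sleep = (ms: number) =>
  new Promise<void>((done) => setTimeout(done, ms));

// Enqueue a job, then poll its status until it reaches a final state
// or the attempt budget is exhausted.
async function submitAndPoll(
  submit: SubmitJob,
  getStatus: GetStatus,
  payload: unknown,
  { intervalMs = 1000, maxAttempts = 60 } = {}
): Promise<{ jobId: string; status: string }> {
  const jobId = await submit(payload);
  let status = "unknown";
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    status = await getStatus(jobId);
    if (status === "completed" || status === "failed") break;
    await sleep(intervalMs);
  }
  // The job keeps running on the server even if we stop polling here.
  return { jobId, status };
}
```

Because the caller holds the job ID, it can stop polling at any time and ask again later without losing anything.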

The first call will just enqueue the job and return to the caller a Job ID or any other ID that the caller can later use for asking about this particular job status.

app.post("/jobs", json(), async (req, res) => {

try {

const job = await queue.add("my-queue", req.body);

res.status(201).send(job.id);

} catch(err) {

res.status(500).send(err.message);

}

});

The job has been queued and the user gets a response from the HTTP call immediately. With the provided Job ID, the caller can now ask for the job status, something like:

app.get("/jobs/:id/status", async (req, res) => {

try {

const status = await queue.getJobState(req.params.id);

res.status(200).send(status);

} catch(err) {

res.status(500).send(err.message);

}

});

Long running process

Sometimes you have jobs that need to run for a long time before completing. In this case it is quite common to report progress to interested parties. The way to do this is with the job method "updateProgress":

export default async function (job: Job) {

await job.log("Start processing job");

for(let i=0; i < 100; i++) {

await processChunk(i);

await job.updateProgress({ percentage: i, userId: job.data.userId });

}

}

You can listen to all the progress events for a given queue, and when some progress is detected, report it to the interested party.

const queueEvents = new QueueEvents("my-queue", { connection });

queueEvents.on("progress", async ({ jobId, data }) => {

const { userId } = data;

// Notify userId about the progress

await notifyUser(userId, data);

});

Now the question, which falls a bit outside the scope of this post, is how to practically notify the relevant user with the progress information. Typically it would be via some WebSocket associated with a certain user id, so you could just send a message to that socket. The important thing to notice here is that notifying the progress is usually not critical, so it is not the end of the world if, because of some temporary network issue, the user does not receive a particular progress event.
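One way to picture such a best-effort notifier is a small registry that maps user ids to a send function. This is only a sketch: `ProgressNotifier` and its methods are hypothetical names, and in practice `send` would write to the user's WebSocket.

```typescript
// Hypothetical per-user channel: in practice `send` would write to a WebSocket.
type Send = (message: string) => void;

class ProgressNotifier {
  private channels = new Map<string, Send>();

  register(userId: string, send: Send): void {
    this.channels.set(userId, send);
  }

  unregister(userId: string): void {
    this.channels.delete(userId);
  }

  // Best-effort delivery: returns false instead of throwing when the user is
  // not connected or the send fails, since a lost progress event is acceptable.
  notify(userId: string, progress: object): boolean {
    const send = this.channels.get(userId);
    if (!send) return false;
    try {
      send(JSON.stringify(progress));
      return true;
    } catch {
      return false;
    }
  }
}
```

A function like `notifyUser` from the listener above could then simply delegate to `notify` and ignore the return value.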

However, it may be critical to notify the completion of the job to some other system. In this case, a robust pattern is to use another queue only to notify completions. As we will be adding a job to a different queue inside the process function, we get the guarantee that if the job is added successfully, the "message" will be delivered:

export default async function (job: Job) {

await job.log("Start processing job");

for(let i=0; i < 100; i++) {

await processChunk(i);

await job.updateProgress({ percentage: i, userId: job.data.userId });

}

await otherQueue.add("completed", { id: job.id, data: job.data });

}

The otherQueue would be handled by the service interested in knowing that the job has completed. It just processes the message and does something useful, such as updating a status in a database.

export default async function (job: Job) {

await updateDatabaseWithResult(job.data);

}

Writing code using TypeScript and BullMQ is quite straightforward most of the time, since BullMQ itself is written in TypeScript.

However, there is a special case that has kept people scratching their heads unnecessarily: using sandboxed workers, i.e. workers that run in a separate Node.js process. In this blog post I would like to offer an example with some scaffolding to easily accomplish this. It is by no means the only possible way to do it, but it is one way that works well in most cases.

Source code

The source code for this blog post is available here. Feel free to fork or copy the repo if you want to use it as a blueprint for new projects. The documentation for sandboxed processors is available here.

ESM or CommonJS

Before writing this post (in mid 2022), I spent a lot of time trying to port a medium-sized project to a pure ESM setup with TypeScript. The number of problems I ran into along this path made me reconsider, and I finally found a sweet spot: use ES6 import syntax within TypeScript, but configure the compiler to generate CommonJS modules. This happens to work quite well, although it would of course be more elegant to use the new ES6 modules all the way. Maybe it will be easier in the future, but for now I recommend this setup when working with TypeScript in the Node.js ecosystem.

Project structure

We will create a typical structure for a Typescript application in NodeJS, that is providing a package.json with scripts for building, running in dev mode as well as running tests. We will also provide a suitable tsconfig.json file.

Inside the src directory we will put all the code for our application, including the workers. Note that for large apps you may want to move the worker code to a separate repository, away from the main application logic.

Worker

For this example we will just have a dummy worker that doesn't do anything:

import { Job } from "bullmq";

/**

* Dummy worker

*

* This worker is responsible for doing something useful.

*

*/

export default async function (job: Job) {

await job.log("Start processing job");

console.log("Doing something useful...", job.id, job.data);

}

As the worker is going to be used as a sandboxed worker we just implement the "process" function and export it as the default export.

The worker is then instantiated in a different file, in our case index.ts. For this example we will just use concurrency 1, so we leave all the options at their defaults:

const worker = new Worker(queueName, `${__dirname}/workers/my-worker.js`, {

connection,

});

Note that we do not specify the original ".ts" file, but the compiled ".js" file instead which will be built along the rest of the source code. This may look a bit awkward but it does not have any major drawbacks.

Producer

For this dummy example we also provide a "producer" that adds some jobs to the queue so that the worker can process them. The code is very simple and we can just add a bunch of jobs like this:

import { Queue } from "bullmq";