I just released WW-PGD, a small PyTorch add-on that wraps standard optimizers (SGD, Adam, AdamW, etc.) and applies an epoch-boundary spectral projection using WeightWatcher diagnostics.

Elevator pitch: WW-PGD explicitly nudges each layer toward the Exact Renormalization Group (ERG) critical manifold during training.

𝗧𝗵𝗲𝗼𝗿𝘆 𝗶𝗻 𝘀𝗵𝗼𝗿𝘁

• HTSR critical condition: α ≈ 2

• SETOL ERG condition: trace-log(λ) over the spectral tail = 0, i.e., Σ log λᵢ = 0 over the tail (the tail eigenvalues multiply to 1)

WW-PGD makes these explicit optimization targets, rather than post-hoc diagnostics.

𝗛𝗼𝘄 𝗶𝘁 𝘄𝗼𝗿𝗸𝘀

- Runs weightwatcher (ww) at epoch boundaries

- Uses ww layer quality metrics to identify the spectral tail

- Selects the optimal tail guess at each epoch

- Applies a stable Projected Gradient Descent update on the layer spectral density via a Proximal, Cayley-like step.

- Retracts to exactly satisfy the SETOL ERG condition

- Blends the projected weights back in (with warmup + ramping to avoid early instability)

In other words, it projects the output of your optimizer onto the ERG critical manifold, the feasible set in a spectrally constrained optimization setup.
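The epoch-boundary step above can be sketched in a few lines of numpy. This is a minimal, hypothetical illustration, not WW-PGD's code. It makes several assumptions:

- The tail size `k` is given. WW-PGD instead selects the tail from weightwatcher metrics.
- The Proximal, Cayley-like PGD step is skipped.
- It uses the unnormalized eigenvalues λᵢ = sᵢ² of WᵀW.
- The names `retract_tail`, `project_layer` and `blend_weight`, and the schedule defaults `warmup`, `ramp` and `beta_max`, are made up for illustration.

```python
import numpy as np

def retract_tail(s, k):
    """Rescale the top-k singular values so that sum(log(lambda_i)) = 0 on the tail.

    With lambda_i = s_i**2 (eigenvalues of W^T W, no 1/N normalization assumed),
    scaling the tail by c = exp(-mean(log(s_i))) makes the tail log-determinant zero.
    """
    s = np.asarray(s, dtype=float)
    c = np.exp(-np.mean(np.log(s[:k])))
    out = s.copy()
    out[:k] *= c
    return out

def project_layer(W, k, beta):
    """Blend W with its trace-log-retracted version: (1 - beta) * W + beta * W_proj."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)  # s is sorted in descending order
    W_proj = (U * retract_tail(s, k)) @ Vt  # same singular vectors, retracted tail
    return (1.0 - beta) * W + beta * W_proj

def blend_weight(epoch, warmup=2, ramp=5, beta_max=0.5):
    """Hypothetical schedule: no projection during warmup, then a linear ramp to beta_max."""
    if epoch < warmup:
        return 0.0
    return beta_max * min(1.0, (epoch - warmup + 1) / ramp)
```

At each epoch boundary, one would apply `W = project_layer(W, k, blend_weight(epoch))` per layer. For a PyTorch layer, this would operate on a detached copy of the weight tensor.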

𝗦𝗰𝗼𝗽𝗲 (𝗶𝗺𝗽𝗼𝗿𝘁𝗮𝗻𝘁)

This first public release is focused on training small models from scratch. It is not yet intended for large-scale fine-tuning. It’s the first proof of concept of such an approach.

So far, WW-PGD has been tested on:

- 3-layer MLPs (MNIST / FashionMNIST)

- nano-GPT–style small Transformer models

Larger architectures and fine-tuning workflows are active work in progress.

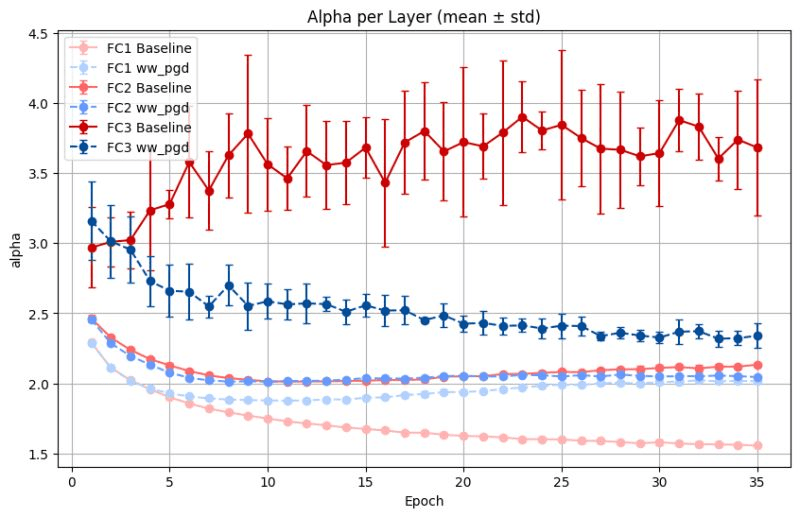

𝗘𝗮𝗿𝗹𝘆 𝗿𝗲𝘀𝘂𝗹𝘁𝘀 (𝗙𝗮𝘀𝗵𝗶𝗼𝗻𝗠𝗡𝗜𝗦𝗧, 𝟯𝟱 𝗲𝗽𝗼𝗰𝗵𝘀, 𝗺𝗲𝗮𝗻 ± 𝘀𝘁𝗱)

Below I compare the layer alphas and test accuracy of a small (3-layer) MLP, trained on FashionMNIST for 35 epochs, against default AdamW:

• 𝐏𝐥𝐚𝐢𝐧 𝐭𝐞𝐬𝐭: Baseline 98.05% ± 0.13 vs WW-PGD 97.99% ± 0.17

• 𝐀𝐮𝐠𝐦𝐞𝐧𝐭𝐞𝐝 𝐭𝐞𝐬𝐭: Baseline 96.24% ± 0.17 vs WW-PGD 96.23% ± 0.20

Translation: accuracy is roughly neutral at this scale — but WW-PGD gives you a spectral control knob and full per-epoch tuning.

𝗥𝗲𝗽𝗼 & 𝗤𝘂𝗶𝗰𝗸𝗦𝘁𝗮𝗿𝘁

Repo: https://github.com/CalculatedContent/WW_PGD

QuickStart (with MLP3+FashionMNIST example): https://github.com/CalculatedContent/WW_PGD/blob/main/WW_PGD_QuickStart.ipynb

𝗠𝗼𝗿𝗲 𝗶𝗻𝗳𝗼: https://weightwatcher.ai/ww_pgd.html

If you’re experimenting with training and optimization on your own models, or want a data-free spectral health monitor + projection step, I’d love feedback — especially on other optimizers or small Transformer setups.

Join us on the weightwatcher Community Discord to discuss

https://discord.com/invite/uVVsEAcfyF

A big thanks to Hari Kishan Prakash for helping out here.

And, as always, if you need help with AI, reach out to me here. #talkToChuck

In this post I summarize the results of our latest work, SETOL: SemiEmpirical Theory of (Deep) Learning.

The Renormalization Group (RG) Theory

Renormalization Group (RG) theory was developed by my old undergraduate physics professor, Ken Wilson. For this amazing work, he won the 1982 Nobel Prize in Physics. The RG framework fundamentally changed our understanding of how certain physical systems behave at different scales, especially near critical points.

• Scale Invariance: RG integrates out “fast” or small-scale degrees of freedom, providing an Effective Interaction or Hamiltonian that captures the relevant physics at each renormalization step.

• Critical Phenomena: Close to phase boundaries, physical systems often exhibit behaviors characterized by Heavy-Tailed Power-Law distributions.

• Universality: Many different systems, when viewed near criticality, share the same scaling laws. Universality underpins why RG has been so influential across physics—from quantum field theory to statistical mechanics.

In essence, RG is about understanding how the “big picture” emerges from finer and finer details, and how seemingly different systems can exhibit remarkably similar features when tuned to certain critical points.

Heavy-Tailed Self-Regularization (HTSR) of Deep Neural Networks

Turning to modern Deep Neural Networks (DNNs): in our research, we have found empirically that when these networks train effectively, the singular values of their weight matrices often follow Heavy-Tailed (HT) Power-Law (PL) distributions. This led us to develop our phenomenological approach to understanding DNNs, our theory of Heavy-Tailed Self-Regularization (HTSR).

• Emergence of Power Laws: As you train deeper and larger models on large datasets, the empirical spectral distribution (ESD) of the eigenvalues $\lambda$ of certain layers naturally becomes a Heavy-Tailed Power Law, $\rho(\lambda) \sim \lambda^{-\alpha}$, with the PL exponent $\alpha$ approaching a universal value, $\alpha \approx 2$.

• Self-Regularization: Remarkably, even without explicit regularization methods (like dropout or weight decay), well-trained networks tend to “self-organize” into a regime where their spectra show universal PL behavior.

• Layer “Quality”: The open-source weightwatcher tool enables practitioners to inspect the spectral properties of a model’s weight matrices and provides layer “quality” metrics you can use to gauge the ability of the model to generalize—all without needing a separate test set.
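To make the ESD and the PL exponent concrete, here is a simplified sketch. It computes the eigenvalues of the layer correlation matrix X = WᵀW/N. It then fits α with the continuous power-law maximum-likelihood estimator, choosing the tail start `xmin` by minimizing the Kolmogorov-Smirnov distance, in the style of Clauset, Shalizi and Newman.

This is not the weightwatcher implementation, which has its own, more careful fitting code. The function names and the `min_tail` cutoff are assumptions.

```python
import numpy as np

def esd(W):
    """Eigenvalues of the layer correlation matrix X = W^T W / N, with W shaped (N, M), N >= M."""
    W = np.asarray(W, dtype=float)
    if W.shape[0] < W.shape[1]:
        W = W.T  # orient so that N >= M
    N = W.shape[0]
    return np.linalg.eigvalsh(W.T @ W / N)

def mle_alpha(evals, xmin):
    """Continuous power-law MLE for rho(lambda) ~ lambda^(-alpha) on lambda >= xmin."""
    evals = np.asarray(evals, dtype=float)
    tail = evals[evals >= xmin]
    return 1.0 + len(tail) / np.sum(np.log(tail / xmin))

def fit_power_law(evals, min_tail=50):
    """Pick xmin by minimizing the KS distance between the tail and the fitted PL.

    Returns (alpha, xmin), or (None, None) if there are too few eigenvalues.
    """
    x = np.sort(np.asarray(evals, dtype=float))
    x = x[x > 0]
    best_D, best_alpha, best_xmin = np.inf, None, None
    for xmin in x[: max(len(x) - min_tail, 0)]:
        tail = x[x >= xmin]  # already sorted ascending
        alpha = 1.0 + len(tail) / np.sum(np.log(tail / xmin))
        cdf_fit = 1.0 - (tail / xmin) ** (1.0 - alpha)  # fitted PL CDF on the tail
        cdf_emp = np.arange(1, len(tail) + 1) / len(tail)  # empirical CDF of the tail
        D = np.max(np.abs(cdf_emp - cdf_fit))
        if D < best_D:
            best_D, best_alpha, best_xmin = D, alpha, xmin
    return best_alpha, best_xmin
```

On synthetic Pareto data with density ∝ λ⁻², the fit should return α close to 2.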

1.3 Bridging the Gap: Why RG and HTSR Align

The appearance of heavy-tailed spectra in deep learning seems more than just a coincidence—it hints at an underlying scale invariance similar to what Renormalization Group theory describes in physics:

Power Law Similarities

• RG: Near phase transitions, physical observables follow power laws, and may display universal, critical exponents

• HTSR: Layer weight matrices in well-trained DNNs exhibit power-law (heavy-tailed) eigenvalue distributions, also with a universal critical exponent.

Effective Interactions and “Coarse-Graining”

• RG: provides an effective interaction defined by a scale-invariant transformation, where the relevant physics concentrates into a coarse-grained model.

• Deep learning: HTSR theory suggests that the generalizing eigen-components of a NN layer weight matrix concentrate into a low-rank subspace, and do so in a volume-preserving way.

Near Criticality

• Physical systems at a critical point maximize certain properties (like sensitivity to perturbations).

• Many high-performing deep nets appear to operate in a “critical” regime, balancing complexity and generalizability in a way that fosters better performance

The Connection: SETOL: Semi-Empirical Theory of Learning

In an earlier blog post (from way back in 2019), I proposed a new theory of learning, based on statistical mechanics, which today I call ‘SETOL: SemiEmpirical Theory of (Deep) Learning’.

The SETOL approach provides a rigorous way to compute the HTSR/weightwatcher Heavy-Tailed (HT) Power Law (PL) layer quality metrics, like $\alpha$ and $\hat{\alpha}$, from first principles, using the layer weight matrix $\mathbf{W}$ as input. I call this a “SemiEmpirical” theory, in the spirit of other semi-empirical theories from nuclear physics and quantum chemistry.

As explained in the earlier blog post, Towards a new Theory of Learning: Statistical Mechanics of Deep Neural Networks, we can write the Quality (squared) of a NN layer (called the Teacher, $\mathbf{T}$) as the derivative of the Generating Function of the layer (i.e., 1 minus the Free Energy, $1-F$).

Here the layer Quality (squared) Hamiltonian is defined in terms of the overlap between the Teacher $\mathbf{T}$ and all possible random Student matrices $\mathbf{S}$ that resemble the Teacher. Both $\mathbf{S}$ and $\mathbf{T}$ are real matrices, and $\beta$ is the inverse temperature.

This model is a matrix-generalization of the classic Student-Teacher model for the generalization of a Linear Perceptron from the Statistical Mechanics of Learning from Examples (1992). By combining this with an old, brilliant paper connecting Rational Decisions, Random Matrices and Spin Glasses (1998), we can form the matrix generalization. The matrix integral is called an HCIZ integral–an integral over random matrices. To evaluate this, the SETOL approach also posits that the integral is performed over an Effective Correlation Space (ECS), defined in the following way:

The Effective Correlation Space (ECS)

- The Hamiltonian spans a lower-rank space, the ECS, defined by the tail of the Power Law (PL) distribution of the ESD of the Teacher. The Teacher restricted to the ECS is denoted by a tilde, $\tilde{\mathbf{T}}$.

- The measure over all Student weight matrices $\mathbf{S}$ is replaced by a measure over Student correlation matrices, i.e. $\mathbf{S}^{\top}\mathbf{S}$, restricted to the ECS.

This second condition can be checked using the weightwatcher tool, as described in this blog on Deep Learning and Effective Correlation Spaces. Remarkably, it aligns nearly perfectly in many cases where the weightwatcher PL quality metric $\alpha \approx 2$.

This leads to a new expression for the layer Quality (squared)

This can be evaluated using advanced techniques from statistical physics (e.g., the large-N approximation, the Saddle Point Approximation, Green’s Functions, etc.).

Here $R(z)$ is the R-transform, or generalized cumulant function, from Random Matrix Theory (RMT). When modeled on a Heavy-Tailed Teacher ESD, $R(z)$ has a branch cut defined on the Power Law tail of the ESD, which is exactly what defines the ECS.

We can then express the HTSR/weightwatcher layer quality as the partial derivative

This result expresses the Quality as a sum of matrix cumulants, similar in spirit, IMHO, to the Linked Cluster Theorem from my graduate school studies in Effective Hamiltonian theory. In a similar way, the RG transformation lets us express the quality in terms of the spectral properties of the layer. It is a Semi-Empirical Effective Hamiltonian theory.

We can then extract the weightwatcher layer quality metrics formally, such as an expression for the alpha-hat ($\hat{\alpha}$) PL metric from our Nature Communications paper:

SETOL and RG: An Unexpected Connection

These two conditions, derived from first principles for our SETOL approach, give a new expression for the Quality (squared) Generating Function (or Free Energy) that is exactly analogous to taking one step of the Wilson Exact Renormalization Group.

That is, the SETOL Volume-Preserving transformation is just like the Scale-Invariant transformation of RG theory. Moreover, while the SETOL Hamiltonian is a model for a very complicated system, the RG transformation does not depend on the specific form of the Hamiltonian. Even more importantly, it can be tested empirically.

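It is actually very easy to test, and only requires the eigenvalues $\tilde{\lambda}_i$ of the layer correlation matrix $\tilde{\mathbf{X}}$ in the ECS. The TRACE-LOG condition simply states that

$$\operatorname{Tr}\ln\tilde{\mathbf{X}} = \sum_{i}\ln\tilde{\lambda}_i = 0, \quad \text{i.e.} \quad \det\tilde{\mathbf{X}} = \prod_{i}\tilde{\lambda}_i = 1.$$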

Testing the Connection: The TRACE-LOG condition

We can use the weightwatcher tool to test how well a given layer obeys the RG scale-invariant transformation, called the TRACE-LOG condition in the upcoming paper. In the tool, this is currently called the detX condition. The detX option finds the smallest large eigenvalue $\lambda_{\min}^{\det X}$ that satisfies $\prod_i \lambda_i \ge 1$, where the product runs over all eigenvalues $\lambda_i \ge \lambda_{\min}^{\det X}$.

This is explained in this blog post on Deep Learning and Effective Correlation Spaces. If you run

```python
watcher.analyze(plot=True, detX=True)
```

the tool will plot the layer ESD $\rho(\lambda)$ with two vertical lines:

- a red line at $\lambda_{\min}^{PL}$, the start of the HTSR Power Law (PL) tail;
- a purple line at $\lambda_{\min}^{\det X}$, the start of the ECS.

When these two lines overlap, the TRACE-LOG condition holds on the PL tail. Here’s an example from the upcoming SETOL monograph, for a simple 3-layer MLP trained on MNIST with varying learning rates (LR).
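The detX search can be approximated directly from the eigenvalues. This sketch assumes the reading of the condition given above:

1. Sort the eigenvalues in descending order.
2. Accumulate Σ ln λᵢ.
3. Take the smallest eigenvalue at which the running sum is still ≥ 0.

It also computes the gap between the PL tail start and this detX start, using an assumed sign convention, `xmin_pl - detx_start`. The function names are hypothetical, and this is not the weightwatcher implementation.

```python
import numpy as np

def detx_tail_start(evals):
    """Smallest eigenvalue lam* such that prod(lambda_i for lambda_i >= lam*) >= 1.

    Hypothetical re-implementation of the detX idea; returns None if even the
    largest eigenvalue is below 1.
    """
    lam = np.sort(np.asarray(evals, dtype=float))[::-1]  # descending
    running_logdet = np.cumsum(np.log(lam))  # sum of ln(lambda) over the top-k eigenvalues
    ok = np.nonzero(running_logdet >= 0)[0]
    # The running sum rises while lambda > 1 and falls afterwards, so the
    # indices with a non-negative sum form a prefix; take its last entry.
    return lam[ok[-1]] if len(ok) else None

def trace_log(evals, xmin):
    """Sum of ln(lambda) over the tail lambda >= xmin; zero when the TRACE-LOG condition holds."""
    evals = np.asarray(evals, dtype=float)
    return float(np.sum(np.log(evals[evals >= xmin])))

def delta_lambda_min(evals, xmin_pl):
    """Gap between the PL tail start (red line) and the detX start (purple line)."""
    start = detx_tail_start(evals)
    return None if start is None else xmin_pl - start
```

Here `xmin_pl` would come from a power-law fit of the same eigenvalues. A gap near zero means the two vertical lines in the plot coincide.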

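Let us now call the difference between the red and purple lines $\Delta\lambda_{\min}$. We can also test the theory by plotting the HTSR layer $\alpha$ versus the SETOL $\Delta\lambda_{\min}$. The theory predicts $\Delta\lambda_{\min} \approx 0$ when $\alpha \approx 2$.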

Below I show results for a small MLP3 model you can train yourself, as well as various pretrained DNNs and LLMs.

Conclusions

The Heavy-Tailed Self-Regularization (HTSR) effect, measured by the open-source WeightWatcher tool, fits naturally with our Semi-Empirical Theory of (Deep) Learning (SETOL) and Ken Wilson’s Nobel Prize–winning Exact Renormalization Group (RG). In simpler terms, this shows that deep neural networks trained close to a “critical point” often display universal power-law patterns in their weight distributions—mirroring how RG “zooms out” from tiny details to capture big-picture behavior.

Using the new SETOL approach, we show that the weightwatcher HTSR metrics can be derived as a phenomenological Effective Hamiltonian, but one that is governed by a scale-invariant transformation on the fundamental partition function, just like the scale invariance in the Wilson Exact Renormalization Group (RG).

We call the low-rank subspace where this transformation holds the Effective Correlation Space (ECS); it is where networks tend to learn and generalize most effectively. WeightWatcher’s TRACE-LOG (detX) condition supports these RG-like properties across many different models. This hints that heavy-tailed, scale-invariant structures may be crucial for building more powerful AI systems, and might even guide us toward AGI. To learn more, please watch my TEDx Talk.

By applying HTSR and SETOL insights during training, researchers can deliberately tune a model’s hyperparameters and architecture to maintain or move closer to this near-critical “sweet spot.” The WeightWatcher tool helps track how the weight distributions evolve, allowing data scientists to spot heavy-tailed behavior early and optimize accordingly. Using HTSR alone, researchers have already developed advanced LLM training techniques for:

- adjusting layer learning rates in training: Temperature Balancing

- training LoRA models with far less memory: AlphaLoRA

- pruning LLMs significantly while retaining their accuracy: AlphaPruning

With SETOL and the connection to RG theory, expect a lot more.

Being grounded in real theory (drawing on physics-based methods), the weightwatcher approach promises more predictable progress toward truly general AI. Rather than blindly scaling up models, we can target a verifiable, self-organizing regime that not only maximizes performance but also gives us a clearer path toward AGI.

Appendix: Empirical Results

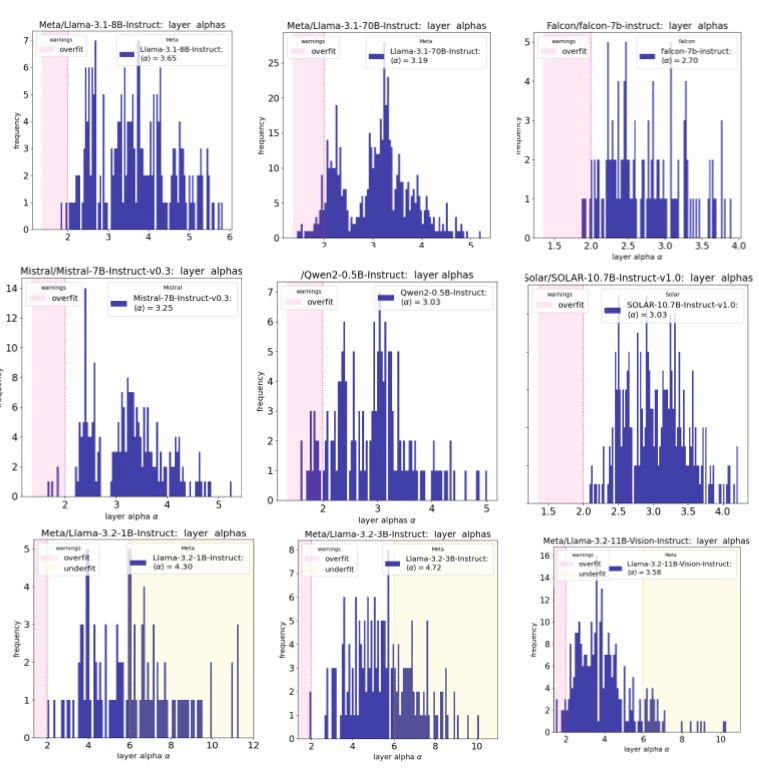

Llama3.2 is the first *major* fine-tuned instruct model I have seen so far that does not follow the weightwatcher / HTSR theory. It has far more under-trained layers, and maybe fewer over-trained ones.

Here are examples from Llama3.1, Falcon, Mistral-7B, Qwen2, and Solar. The smallest Llama3.2 models are shown on the bottom row.

WeightWatcher: Diagnosing Fine-Tuned LLMs

In previous blog posts, we have examined:

- What’s instructive about Instruct Fine-Tuning: a weightwatcher analysis, and

- how to Evaluate Fine-Tuned LLMs with WeightWatcher

Briefly, when you run the open-source weightwatcher tool on your model, it gives you layer quality metrics for every layer in your model. It also covers the fine-tuned component: the adapter_model.bin, if you have it; otherwise it will reconstruct it for you.

You can then plot the layer quality metric alpha ($\alpha$)

- as an alpha histogram (with warning zones), or

- layer-by-layer: i.e. a Correlation Flow plot, or

- alpha-vs-alpha: comparing the FT part to the base model
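Bucketing the layer alphas into the warning zones used in this post takes only a few lines:

- α in [2, 6]: safe
- α > 6: under-trained
- α < 2: possibly over-trained
- α = 0: malformed

In this sketch, `alphas` is assumed to be the `alpha` column of the DataFrame returned by `watcher.analyze()`. The function names are hypothetical.

```python
import numpy as np

def alpha_zones(alphas, lo=2.0, hi=6.0):
    """Count layers per warning zone, using the thresholds quoted in this post."""
    a = np.asarray(alphas, dtype=float)
    return {
        "malformed": int(np.sum(a <= 0)),                 # alpha = 0: malformed layer
        "over_trained": int(np.sum((a > 0) & (a < lo))),  # alpha < 2: possibly over-trained
        "safe": int(np.sum((a >= lo) & (a <= hi))),       # 2 <= alpha <= 6: safe zone
        "under_trained": int(np.sum(a > hi)),             # alpha > 6: under-trained
    }

def alpha_histogram(alphas, bins=20):
    """Histogram counts and bin edges, ready to plot with any plotting library."""
    return np.histogram(np.asarray(alphas, dtype=float), bins=bins)
```

For example, `alpha_zones(details["alpha"])` gives a one-line summary to read alongside the histogram.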

Or, if you like, I can run your model for you in the new up-and-coming WeightWatcher-Pro and give you a free assessment:

For now, let’s take a look in some detail as to what’s going on.

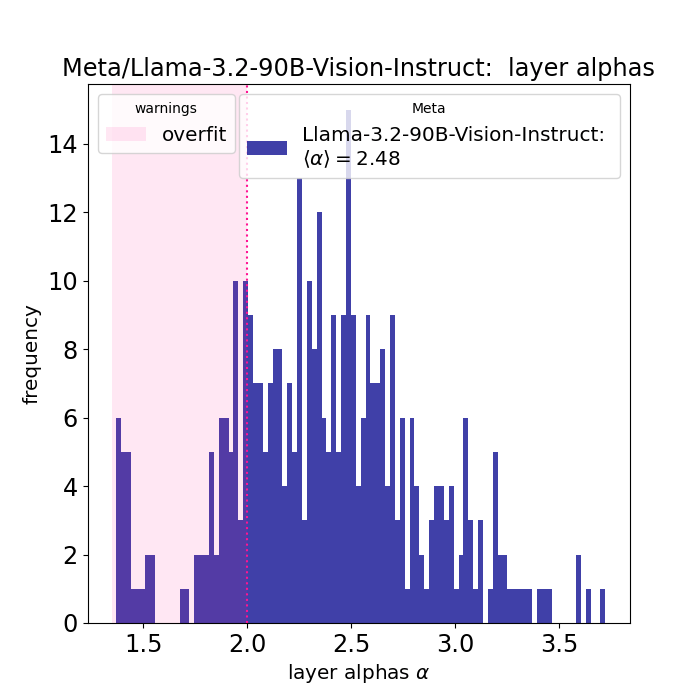

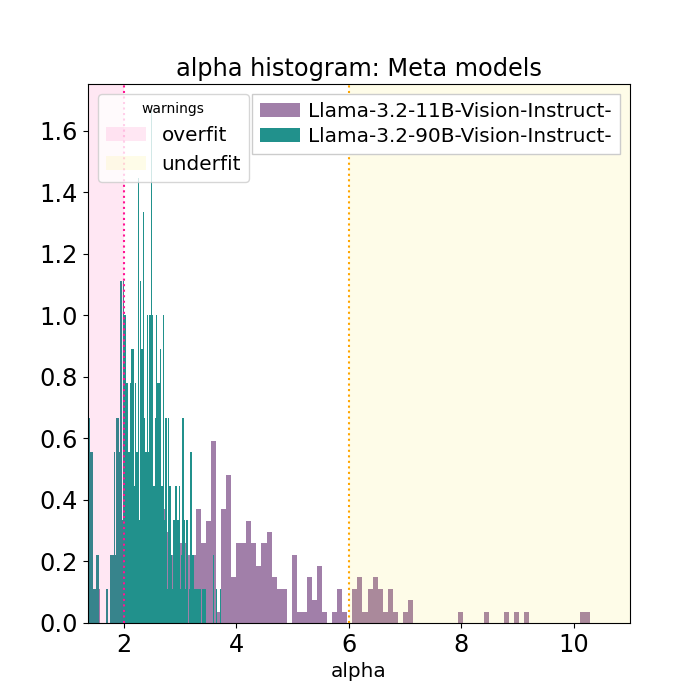

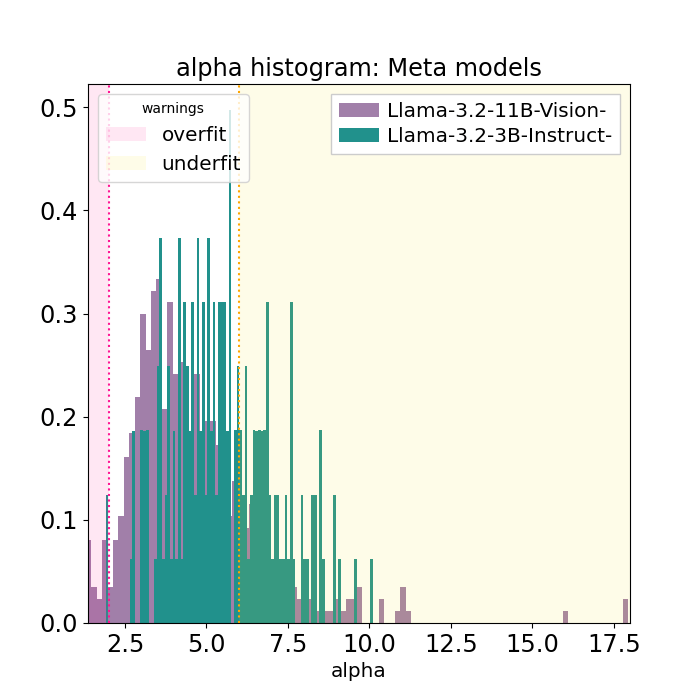

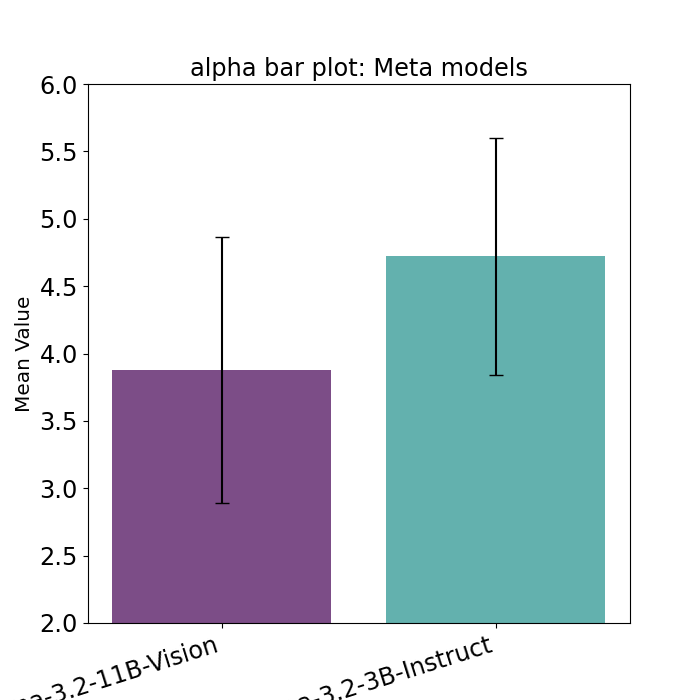

Llama3.2 11B vs 90B: Large models still follow the HTSR theory

The first question: is this only for the smaller models, or do the larger Llama3.2 models also show it? It turns out that it shows up mainly in Llama3.2 1B and 3B, a little in 11B, and not at all in 90B.

The odd alpha plots seen with Llama3.2 1B and 3B do not appear in the larger Llama3.2-90B-Vision-Instruct (left), especially when compared to the smaller Llama3.2-11B-Vision-Instruct model (right).

The left-hand plot shows the weightwatcher layer alphas as a histogram. Almost all the layer alphas lie between 2 and 4. There are some with alpha < 2, and a few with alpha = 0, which indicates the layer is malformed. Overall, all of the layers in Llama3.2-90B-Vision-Instruct are fully trained; maybe some are over-trained, but not many.

In contrast, as shown in the right-hand plot, the Llama3.2-11B-Vision-Instruct layers have larger alphas on average, ranging continuously up to 6-7, and a few up to 8-10.

These results show that the average alpha is smaller for Llama3.2-90B than for 11B, and that 11B has a few under-trained layers. This is as expected from the HTSR theory.

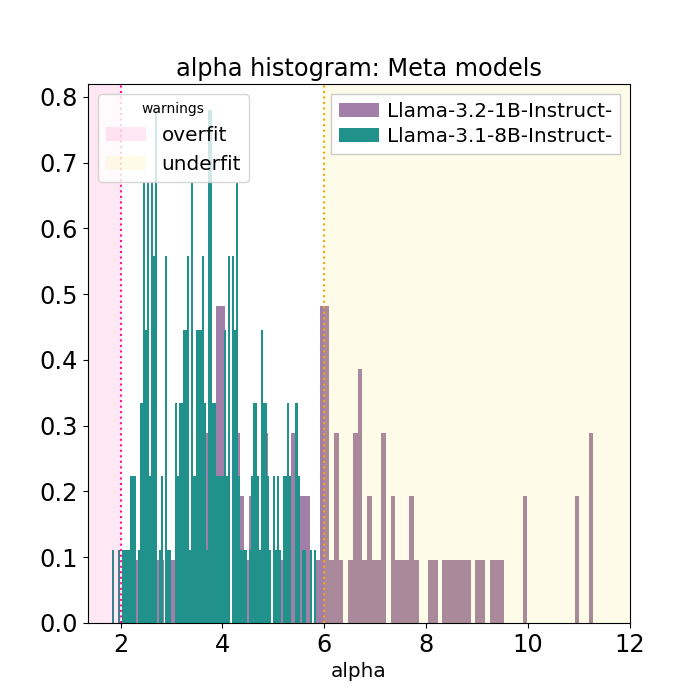

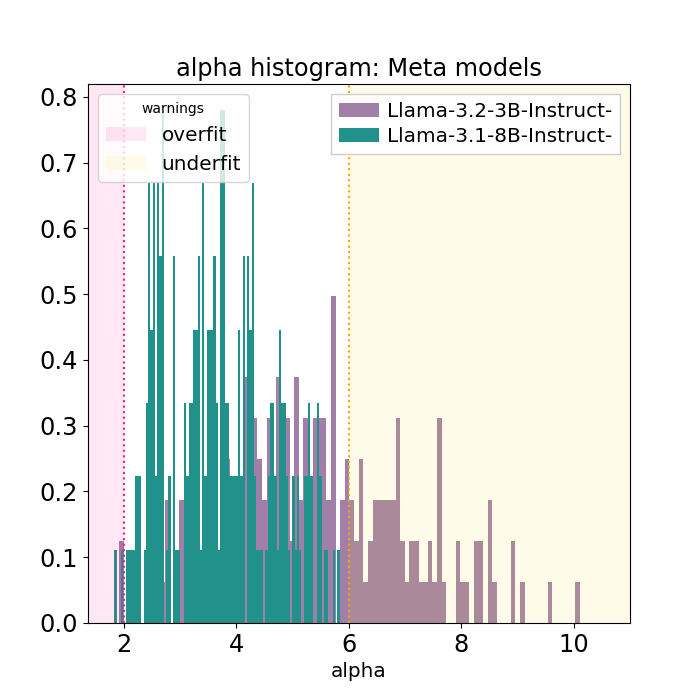

Smaller is weirder

As the Llama3.2 models get smaller, from 11B to 3B, the average layer alpha gets larger, and there are many more layers with alpha greater than 6.

And while theory suggests that smaller models should have larger alphas, these plots are unique to the Llama3.2 models. For example, comparing the Llama3.2-1B and 3B FT instruct parts to the (admittedly larger) Llama3.1-8B case, the weightwatcher alpha histogram plots are quite different!

Other Smaller Models are Not always Weird: Qwen2-0.5B-Instruct

The results above might suggest that the Llama3.2 1B and 3B models look weird simply because they are small, but other smaller models do NOT look like this. In particular, the Qwen2-0.5B-Instruct model looks just fine. Here’s an example you can find on the weightwatcher.ai landing page:

Comparing the Qwen2-0.5B-Instruct model to its base, the layer alphas look great!

What’s going on in Llama3.2?

The key differences between Llama 3.2 and Llama 3.1 models, particularly the 1B and 3B versions, include:

- Improved Efficiency: Llama 3.2 models have been optimized for better computational efficiency, making them faster and less resource-intensive during both training and inference compared to Llama 3.1 models.

- Model Architecture Enhancements: Llama 3.2 introduces refinements in model architecture, such as improved layer normalization and better attention mechanisms, leading to enhanced performance, especially in tasks requiring nuanced language understanding.

- Inference Speed: Llama 3.2 models are more optimized for faster inference times, making them ideal for applications where speed is critical, such as real-time or edge deployments.

- Fine-Tuning and Generalization (most importantly): Llama 3.2 models are designed with better fine-tuning capabilities, allowing for more effective adaptation to specific downstream tasks while maintaining strong generalization across a broader range of applications.

It’s possible that having more under-trained layers makes Llama3.2 easier to fine-tune: if all the layers have alpha ~ 2, then the fine-tuned layers are more likely to be overfit. To that end, it will be very instructive to analyze fine-tuned versions of Llama3.2 1B and 3B as they become available.

The WeightWatcher Pitch

Fine-tuning LLMs is hard. WeightWatcher can help. It can tell you if your Instruct Fine-Tuned model is working as expected, or if something odd happened during training that cannot be detected with expensive evaluations or other known methods.

WeightWatcher is a one-of-a-kind must-have tool for anyone training, deploying, or monitoring Deep Neural Networks (DNNs).

I invented WeightWatcher to help my clients who are training and/or fine-tuning their own AI models. Need help with AI? Reach out today. #talkToChuck #theAIguy

WeightWatcher can help you determine if the Fine-Tuning went well, or if something weird happened that you need to look into. And you don’t need expensive evals to do this.

WeightWatcher provides Data-Free Diagnostics for Deep Learning models

And it’s free. WeightWatcher is open-source:

```bash
pip install weightwatcher
```

Analyzing Fine-Tuned Models

In an earlier post, we learned what to do when Evaluating Fine-Tuned LLMs with WeightWatcher. I encourage you to review this post to get started.

Or, if you like, I can run the analysis for you using the up-and-coming weightwatcher-pro SAAS product and provide you a detailed write-up. Here’s a screenshot of some of what you will get.

Let’s do a deep dive into a few common LLMs and see what we can learn from weightwatcher:

Fine-Tuned Instruct Updates vs the Base

We examine weightwatcher results on several Instruct Fine-Tuned (FT) models including cases from the popular open-source base models: Mistral, Llama3.1, and Qwen2.5.

Below we plot the weightwatcher layer quality metric alpha for every layer as a histogram. As explained in our groundbreaking HTSR theory paper, the model is predicted to perform best when the layer alphas lie in the safe zone, $\alpha \in [2, 6]$.

Notice the base Mistral model has more underfit layers than the FT case, but both models still have many underfit layers. This is unusual for a large, Instruction Fine-Tuned model.

For comparison, here are the Instruction FT parts of some other popular models of the same size, including Mistral-7B-Instruct itself, Llama-3.1-8B-Instruct, and Qwen2.5-7B-Instruct.

Here, while the base models have many underfit layers, the Instruction Fine-Tuned updates have almost all layer alphas in the safe/white zone, exactly as predicted by the HTSR theory.

Correlation Flow:

We now look at how the layer alphas vary from layer to layer as data passes through the model, from left to right for each architecture. This is called a Correlation Flow plot, and is described in the weightwatcher 2021 Nature Communications paper. As shown there, most architectures (VGG, ResNet, etc.) show a distinct pattern that represents how correlations (i.e. information) flow through the model, from the data to the labels. Examining the Correlation Flow is critical for understanding whether a model architecture is likely to converge well for every layer.

Here we show Correlation Flow plots for Mistral-7B-v0.2, and for the Llama3.1-8B and 70B Instruct components. All three plots look similar: a few undertrained layers sit nearer to the left (closer to the data), but most cluster towards the right (farthest from the data). This is typical; correlations in the data flow through the layers from the data to the labels, but sometimes the information just does not make it all the way through.

Notice that the underfit layers ($\alpha > 6$) lie mostly towards the right, closer to the labels. This is common.

alpha vs. alpha:

Let us now compare the base model alphas to those of the fine-tuned updates. Here we examine the alpha-vs-alpha plot for the Llama3.1-70B-Instruct model. The x-axis is the base model, Llama3.1-70B, and the y-axis is the instruct fine-tuned part.

In models like the Instruct-Fine-Tuned models shown above (Mistral, Llama, Qwen, etc), even when the base model layers are weakly trained, they can still be fine-tuned. And when comparing alpha-vs-alpha, we find:

Lessons Learned:

- The smaller the base-model alpha, the smaller the fine-tuned alpha

- If the base model layer alpha ~ 2, this could lead to an FT alpha < 2, suggesting the layer is overfitting

- Even when the base alpha ~15, the FT alphas are between 2 and 6.

- These patterns are common among all large FT instruct models–so far.
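The alpha-vs-alpha comparison can also be summarized numerically. This hypothetical sketch does three things:

- It pairs base and fine-tuned alphas by a shared layer key. How you match base layers to FT layers is an assumption here.
- It computes a Spearman rank correlation, to check "smaller base alpha, smaller FT alpha".
- It flags layers whose FT alpha falls below 2.

```python
import numpy as np

def _ranks(v):
    """Ranks 0..n-1 (ties broken by position; adequate for a sketch)."""
    r = np.empty(len(v))
    r[np.argsort(v, kind="stable")] = np.arange(len(v))
    return r

def alpha_vs_alpha(base_alphas, ft_alphas, lo=2.0):
    """Compare base vs fine-tuned alphas for layers present in both dicts.

    Returns (spearman_rho, flagged), where flagged lists layers whose FT alpha < lo.
    """
    layers = sorted(set(base_alphas) & set(ft_alphas))
    b = np.array([base_alphas[k] for k in layers], dtype=float)
    f = np.array([ft_alphas[k] for k in layers], dtype=float)
    rho = float(np.corrcoef(_ranks(b), _ranks(f))[0, 1])  # Spearman = Pearson on ranks
    flagged = [k for k, fa in zip(layers, f) if fa < lo]
    return rho, flagged
```

A rank correlation near 1 matches the first lesson above. Flagged layers are the candidates for overfitting.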

What about counterexamples?

There are some FT models where these results do not appear to hold: the smaller Llama3.2 1B and 3B models (but not the 90B variant!), and the recently developed Polish language models, Bielik. In a future post, we will look into these counterexamples and try to understand why this happens and what you can do to diagnose and remediate such issues.

Conclusions

WeightWatcher is an open-source, data-free diagnostic tool for analyzing Deep Neural Networks (DNN), without needing access to training or even test data. It is based on theoretical research into Why Deep Learning Works, using the new Theory of Heavy-Tailed Self-Regularization (HT-SR), published in JMLR, Nature Communications, and NeurIPS2023.

IMHO, Double Descent (DD) is a great way to understand how and when Deep Neural Networks might overfit their data and, moreover, where they achieve optimal performance. And you can do this and more with the open-source weightwatcher tool.

The original 1989 DD physics experiment

The original DD experiment from 1989 is easy to set up and run using modern Python.

Here’s a notebook that you can run on Google Colab to reproduce all these results, and with a lot more details. (a lot)

Note: Sorry, GitHub won’t display this notebook, but it works fine. IDK why. You can also access the notebook on Google Colab here.

One simply trains a Linear Regression to predict the labels of a dataset with binary labels.

In this case, the data instances are drawn from the vertices of an N-dimensional hypercube and the labels are +1 or -1, depending on the sum of the ‘1s’ in the instance data vector.

This gives the linear equation

$$y = \mathbf{w}^{\top}\mathbf{x},$$

where $\mathbf{x}$ is a data vector, $y \in \{-1, +1\}$ its label, and $\mathbf{w}$ the weight vector. Of course, the goal is to learn the $N$-dimensional weight vector $\mathbf{w}$ given $P$ training data instances.

We can generate the data vectors (x) and their labels (y) using this code (from the notebook):

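```python
import numpy as np

def generate_majority_labels(X):
    # Assumed labeling rule (not shown in the original snippet): +1 if a row
    # contains more +1s than -1s, else -1; ties (sum == 0) are mapped to +1
    return np.where(X.sum(axis=1) >= 0, 1, -1)

def generate_mdp_dataset_numpy(P, N):
    # P random vertices of the N-dimensional hypercube {-1, +1}^N
    X = np.random.choice([-1, 1], size=(P, N))
    Y = generate_majority_labels(X)
    return X, Y
```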

Let’s run the experiment 100 times and plot the average results. Let’s pick $N = 100$ features, and let there be $P = \alpha N$ patterns, where $\alpha = P/N$ is called the load. We vary $\alpha$ and compute the test accuracy, measured on a random sample.

To just get the results, we can use the scikit-learn LinearRegression module. Again, we run Linear (not Logistic) Regression to predict the binary labels $y \in \{-1, +1\}$. Here’s the notebook code we use for this.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import accuracy_score

# Uses generate_mdp_dataset_numpy() defined above
def run_LR_experiment(alpha=1.0, verbose=True, N=100):
    # alpha is the load P / N (not the weightwatcher alpha)
    P = int(alpha * N)
    X_train, Y_train = generate_mdp_dataset_numpy(P, N)
    X_test, Y_test = generate_mdp_dataset_numpy(P, N)

    # Train the linear regression model (no intercept)
    regressor = LinearRegression(fit_intercept=False)
    regressor.fit(X_train, Y_train)

    # Predict real-valued outputs for the training and test sets
    Y_train_value = regressor.predict(X_train)
    Y_test_value = regressor.predict(X_test)

    # Convert predictions to binary class labels: 1 if the output > 0, else -1
    Y_train_pred = np.where(Y_train_value > 0, 1, -1)
    Y_test_pred = np.where(Y_test_value > 0, 1, -1)

    # Compute accuracy for both training and test sets
    train_accuracy = accuracy_score(Y_train, Y_train_pred)
    test_accuracy = accuracy_score(Y_test, Y_test_pred)

    if verbose:
        print(f"load={alpha:.2f} train_acc={train_accuracy:.3f} test_acc={test_accuracy:.3f}")
    return train_accuracy, test_accuracy
```
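To average over many runs, as described above, here is a numpy-only variant of the experiment. It makes three substitutions, all assumptions rather than the notebook's settings:

- It swaps scikit-learn's `LinearRegression(fit_intercept=False)` for `np.linalg.lstsq`, which likewise returns the minimum-norm least-squares solution.
- It uses one fixed, larger test set.
- It defaults to `trials=20` rather than 100, to run quickly. The function names and `n_test=1000` are also assumptions.

```python
import numpy as np

def majority_data(P, N, rng):
    """P random hypercube vertices in {-1, +1}^N with majority labels (ties -> +1, assumed)."""
    X = rng.choice([-1.0, 1.0], size=(P, N))
    y = np.where(X.sum(axis=1) >= 0, 1.0, -1.0)
    return X, y

def dd_curve(loads, N=100, trials=20, n_test=1000, seed=0):
    """Mean test accuracy of min-norm least squares (no intercept) at each load P / N."""
    rng = np.random.default_rng(seed)
    X_test, y_test = majority_data(n_test, N, rng)
    curve = []
    for load in loads:
        P = max(1, int(load * N))
        accs = []
        for _ in range(trials):
            X, y = majority_data(P, N, rng)
            w, *_ = np.linalg.lstsq(X, y, rcond=None)  # minimum-norm least-squares fit
            y_pred = np.where(X_test @ w > 0, 1.0, -1.0)
            accs.append(np.mean(y_pred == y_test))
        curve.append(float(np.mean(accs)))
    return curve
```

Calling `dd_curve(np.linspace(0.1, 3.0, 30), trials=100)` and plotting the result against the loads should show the dip in test accuracy at load 1.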

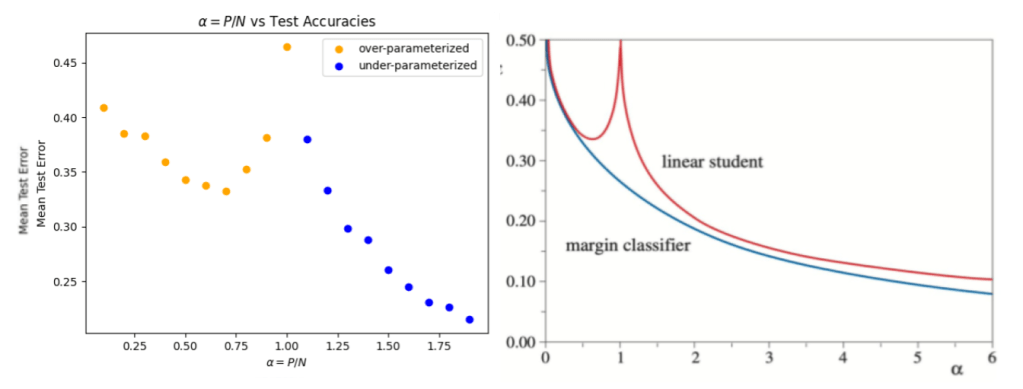

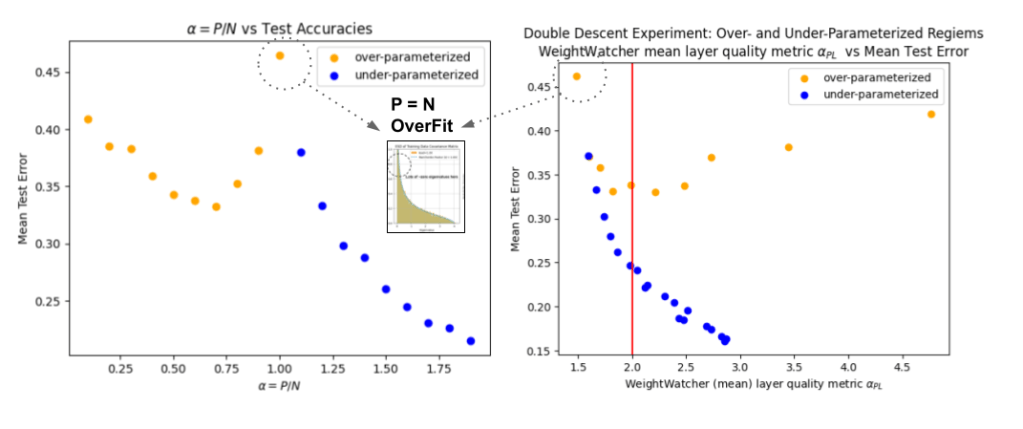

Doing this, we can reproduce the original DD curve. On the LHS, I reproduced the experiment from the 1989 paper that discovered it. On the RHS, I present theoretical curves (from Opper 1995; ask me for a copy) using both statistical mechanics (StatMech, red) and statistical learning theory (SLT, blue). Notice that it is the StatMech approach that matches experiment. But we don’t need StatMech to understand this…

- N: Number of features / parameters

- P: Number of training examples (i.e Patterns)

- alpha: the ‘load’ parameter (note: this is not the weightwatcher alpha)

There are two regimes of interest:

- under-parameterized: P > N. We have more training data than features. Here, we can always make the model better by just adding more data. Way more data. That’s easy.

- over-parameterized: N > P: We have more features, and therefore more adjustable weights, than training data. Of course, in this simple model, the test accuracy is no better than say 65%. But it does work. And this is the regime most interesting to LLMs and other Deep Neural Networks.

The over-parameterized regime

When $P < N$ (i.e. $\alpha < 1$), we call this the overparameterized regime. This is the most interesting regime for LLMs and other DNNs, which have a huge number of parameters. It is also where traditional statistics falls down.

The traditional stats approach fails to describe the case $P = N$ ($\alpha = 1$), where the test error unexpectedly explodes. But, in fact, this case is quite easy to describe, and is even predicted, by the weightwatcher theory.

Moreover, when $P = 0.5N$ ($\alpha = 0.5$), the test error is minimized. Of course, it is still pretty high, but that’s OK: again, this is quite easy to describe, and is even predicted, by the weightwatcher theory. But first,

The PseudoInverse solution

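Given a data pair $(\mathbf{x}, y)$, where the target variable $y$ is just a binary label $y \in \{-1, +1\}$, we can write the Linear Regression problem as

$$y = \mathbf{w}^{\top}\mathbf{x}.$$

We want to find the weight vector $\mathbf{w}$, given the $P \times N$ data matrix $\mathbf{X}$ and the vector of labels $\mathbf{y}$. We can now write the Linear Regression problem in matrix form as

$$\mathbf{y} = \mathbf{X}\mathbf{w}.$$

We can write the solutions in terms of the Moore-Penrose PseudoInverse. First, let’s flip things around a little:

$$\mathbf{X}\mathbf{w} = \mathbf{y}.$$

Now, multiply both sides of this relation by $\mathbf{X}^{\top}$:

$$\mathbf{X}^{\top}\mathbf{X}\mathbf{w} = \mathbf{X}^{\top}\mathbf{y}.$$

We now invert the data covariance matrix $\mathbf{X}^{\top}\mathbf{X}$:

$$\mathbf{w} = (\mathbf{X}^{\top}\mathbf{X})^{-1}\mathbf{X}^{\top}\mathbf{y}.$$

We now identify the Moore-Penrose PseudoInverse operator

$$\mathbf{X}^{+} = (\mathbf{X}^{\top}\mathbf{X})^{-1}\mathbf{X}^{\top}.$$

The optimal weight vector is given by

$$\mathbf{w}^{*} = \mathbf{X}^{+}\mathbf{y}.$$

This form requires $\mathbf{X}^{\top}\mathbf{X}$ to be invertible, which holds for generic data when $P \ge N$. In the overparameterized regime ($P < N$), $\mathbf{X}^{\top}\mathbf{X}$ is singular. The PseudoInverse is then $\mathbf{X}^{+} = \mathbf{X}^{\top}(\mathbf{X}\mathbf{X}^{\top})^{-1}$, and $\mathbf{w}^{*} = \mathbf{X}^{+}\mathbf{y}$ is the minimum-norm solution that fits the training labels exactly.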
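The derivation can be checked numerically:

- For P > N, the PseudoInverse matches the normal-equations solution.
- For P < N, it matches the minimum-norm formula Xᵀ(XXᵀ)⁻¹y and interpolates the training labels.

Here is a small sketch; the function names are hypothetical.

```python
import numpy as np

def normal_equations_solution(X, y):
    """w = (X^T X)^{-1} X^T y; valid when X^T X is invertible (typically P > N)."""
    return np.linalg.solve(X.T @ X, X.T @ y)

def min_norm_solution(X, y):
    """w = X^T (X X^T)^{-1} y; valid when X X^T is invertible (typically P < N)."""
    return X.T @ np.linalg.solve(X @ X.T, y)

def pinv_solution(X, y):
    """w = X^+ y via the Moore-Penrose pseudoinverse (covers both cases)."""
    return np.linalg.pinv(X) @ y
```

In practice, `np.linalg.pinv` (or `np.linalg.lstsq`) is the numerically safer choice. Forming XᵀX explicitly squares the condition number, which matters most near P = N.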

As part of our initial analysis, we will compute the eigenvalues $\lambda_i$ of the covariance matrix $\mathbf{X}^{\top}\mathbf{X}$, as described in the paper, as well as the distribution of the inverse eigenvalues $1/\lambda_i$. It is these inverse eigenvalues we will analyze with weightwatcher.

The weightwatcher layer quality metric (alpha)

To apply weightwatcher to the PseudoInverse problem, we first place the data matrix into a SingleLayerModel (see the notebook). We then use the newly added ‘inverse’ option, which analyzes the inverse covariance matrix of the layer:

```python
import weightwatcher as ww

# SingleLayerModel is a small wrapper defined in the notebook
model = SingleLayerModel(weights=X_train)
watcher = ww.WeightWatcher(model=model)
details = watcher.analyze(inverse=True, plot=True, detX=True)
```

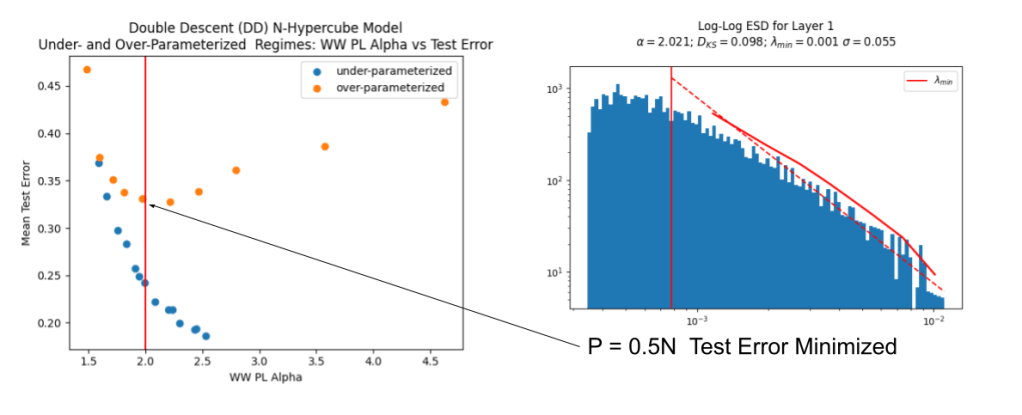

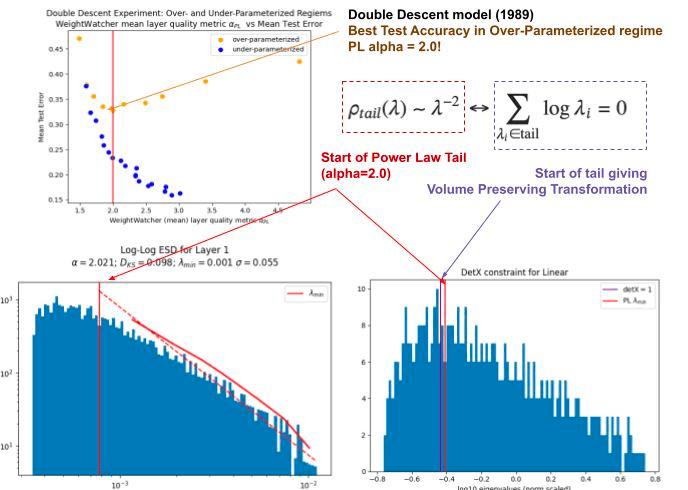

We can now plot the test accuracy as a function of the weightwatcher layer Power Law (PL) quality metric alpha ($\alpha_{PL}$). This is shown on the LHS.

From the left-side plot, we immediately see that when

- WW PL-alpha = 2.0, test error is minimized

- WW PL-alpha = 1.5, test error is maximized, and the model is severely overfit

- WW PL-alpha > 2.0, test error is sub-optimal as well

Ideal Learning

On the RHS, we drill into the case $P = 0.5N$, where the test error is minimized. This plot shows the Empirical Spectral Density (ESD) of the (inverse) eigenvalues $1/\lambda_i$, on a log-log scale.

The weightwatcher $\alpha_{PL} = 2.0$ exactly, which means the ESD can be fit perfectly to a power law distribution with exponent 2.0. This is also exactly where the weightwatcher HTSR theory (as in our JMLR paper) predicts the model would have the best out-of-sample performance!

The WeightWatcher Volume Preserving Transformation

In addition to the Power Law (PL) metric alpha $\alpha_{PL}$, the weightwatcher StatMech theory (discussed in some detail at NeurIPS2023) also states that when the layer is perfectly converged for its data, the PL tail will form an Effective Correlation Space that satisfies a Volume-Preserving Transformation.

You can check this yourself by adding the detX=True option to the analyze method

```python
details = watcher.analyze(inverse=True, plot=True, detX=True)
```

and examining the plot on the lower RHS. You can tell your layer is well-trained when the red and purple lines are very close together:

Remarkably, in the case $P=0.5N$, where the test error is minimized, not only is the weightwatcher $\alpha_{PL} = 2.0$, but the PL tail also satisfies the theoretically predicted volume-preserving transformation!

But more importantly, you can use this additional weightwatcher metric to check the quality of your NN layer: whether it is properly converged, or whether it is overfit.

Signatures of over-fitting

When the weightwatcher $\alpha_{PL} < 2$, this indicates that the layer ESD is Very Heavy Tailed (VHT), and the underlying weight matrix is atypical. In this case, we can infer that the model is potentially overfit. Again, this is exactly what we see. In other words, the weightwatcher theory describes the Double Descent (DD) curve as predicted (in the small-alpha, overparameterized regime).

Now, this is not explained in the old JMLR paper, but I do discuss it at some length in my recent invited talk at NeurIPS2023 (rerecorded). Also see the NeurIPS page for our Workshop on Heavy Tails in ML (with both my original talk and several others).

Why does weightwatcher work so well here? It turns out that, for this case, the ESD of the data covariance matrix is the same as the ESD of a Marchenko-Pastur random matrix with the corresponding aspect ratio.

As shown on the LHS below, the ESD has many zero and near-zero eigenvalues $\lambda \approx 0$, which was conjectured (back in 1989) to prevent the model from describing out-of-sample examples.

As shown on the RHS (above), when we compute the ESD of the inverse covariance matrix, this ESD can be fit perfectly to a Power Law (PL) distribution, with alpha exponent $\alpha_{PL}=1.5$ (which is the lower limit of the Clauset estimator that weightwatcher uses). This means the inverse covariance matrix is highly atypical and is dominated by the largest (inverse) eigencomponents. Because of this, this matrix is not a typical sample or view of the training data, and it cannot describe anything but the training data. In other words, the model is severely overfit.

Also, as shown above, if you add the detX=True option, you can verify that your layer is overfit if the purple line is to the right of the red line. We will discuss this in detail in a future post.
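The near-zero eigenvalues, and the heavy-tailed inverse ESD they produce, are easy to reproduce with random data. This numpy sketch uses a synthetic square Gaussian matrix (the sizes are arbitrary assumptions), where the Marchenko-Pastur lower edge (1 - sqrt(M/N))^2 is exactly zero. The smallest eigenvalues of the covariance matrix then sit near zero, so a few enormous inverse eigenvalues dominate the inverse covariance matrix.

```python
import numpy as np

rng = np.random.default_rng(0)
N, M = 500, 500                      # square case: aspect ratio M / N = 1
W = rng.standard_normal((N, M))      # synthetic "data" matrix
X = W.T @ W / N                      # covariance matrix, M x M

lams = np.linalg.eigvalsh(X)         # eigenvalues, in ascending order
inv_lams = 1.0 / lams                # eigenvalues of the inverse covariance

q = M / N
mp_lower_edge = (1 - np.sqrt(q)) ** 2   # MP lower edge: 0.0 when q = 1
print(f"MP lower edge      : {mp_lower_edge:.3f}")
print(f"smallest eigenvalue: {lams.min():.2e}")
print(f"largest / median inverse eigenvalue: "
      f"{inv_lams.max() / np.median(inv_lams):.1e}")
```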

The weightwatcher alpha and the HTSR theory

How are these results related to the foundation of weightwatcher, our JMLR paper on the theory of Heavy-Tailed Self-Regularization (HTSR)?

The HTSR theory is a phenomenology that classifies a NN layer weight matrix into a specific Universality Class from Random Matrix Theory (RMT), based on the weightwatcher fit of the Power Law exponent $\alpha$.

As explained in the paper, when the PL exponent of the ESD is $2 < \alpha < 6$, we can associate the layer with the Heavy-Tailed (HT) Universality Class. When $\alpha \approx 2$, the HTSR theory predicts that the layer is optimally converged for its training data. And when $\alpha < 2$, the layer ESD is in the Very-Heavy-Tailed (VHT) Universality Class. As explained in my invited talk at NeurIPS2023, this is a signature that the layer may be overfit, just like in the Double Descent example described here.
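These regimes can be written as a small lookup. The sketch below uses the rough thresholds quoted in these posts (alpha < 2 for VHT, 2 to 6 for the well-trained HT range, above 6 for under-correlated layers). The exact boundaries are a simplification, not an official weightwatcher rule, and the helper names are hypothetical.

```python
from statistics import mean


def htsr_class(alpha):
    """Map a layer alpha to the rough HTSR regimes used in these posts."""
    if alpha < 2.0:
        return "VHT (possibly overfit)"
    if alpha <= 6.0:
        return "HT (well trained)"
    return "under-correlated (possibly underfit)"


def summarize(alphas):
    """Return the average layer alpha and a count of layers in each regime."""
    counts = {}
    for a in alphas:
        label = htsr_class(a)
        counts[label] = counts.get(label, 0) + 1
    return mean(alphas), counts
```

With a details dataframe from watcher.analyze(), something like summarize(details.alpha.tolist()) returns the average layer alpha and the per-regime counts.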

Applying weightwatcher to real-world LLMs and DNNs

What can you do with weightwatcher? Ask yourself:

- Do you really know if you are using enough data to fine-tune your LLM?

- Are you worried that you are overfitting the model to your training data?

- Are you having trouble evaluating your LLM?

You can use the tool to find and fix these kinds of problems in your LLMs and NNs that no other tool can diagnose or discover.

WeightWatcher is a one-of-a-kind must-have tool for anyone training, deploying, or monitoring Deep Neural Networks (DNNs).

Learning is an inverse problem. And weightwatcher provides a view into the correlations in the training data, akin to looking at the inverse correlation matrix at different levels of granularity. And it works even if your data is not random (like in this simple model) but correlated, as in a real-world problem.

You can use weightwatcher to look for potential signatures of overfitting, the kind of signatures seen in the classic Double Descent experiment. Even without test data! Even without training data!

You just need to ‘watch’ the model weights.

I developed weightwatcher to help my clients who are training and fine-tuning their own LLMs (and DNN AI models). If it’s useful to you, I’d love to hear about it. And if you need help training or fine-tuning your own models, please reach out. My hashtags are: #talkToChuck #theAIguy.

In the meantime, remember–Statistical Learning Theory (SLT) cannot describe Double Descent in the over-parameterized regime–but StatMech can. And so can weightwatcher!

Thanks to my friend Patrick Tangphao for this meme (haha)

The Truth is in There: Improving Reasoning in Language Models with Layer-Selective Rank Reduction

This paper got a lot of press (The Verge) because it hints that it may be possible to improve the truthfulness of LLMs with a simple mathematical transformation.

The thing is, the weightwatcher tool has had a similar feature for some time, called SVDSmoothing. As the name suggests, it applies a TruncatedSVD to the layers of an AI model, such as an LLM, to improve performance.
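The core TruncatedSVD step is short. This generic numpy sketch is not weightwatcher's SVDSmoothing: it keeps the top-k singular components of a single weight matrix, with k taken as a fraction of the rank (the keep_fraction name is hypothetical, loosely analogous to the percent option discussed below). By the Eckart-Young theorem, this is the best rank-k approximation of W in the Frobenius norm.

```python
import numpy as np


def truncated_svd_smooth(W, keep_fraction=0.8):
    """Return the best rank-k approximation of W, with k ~ keep_fraction * rank."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    k = max(1, round(keep_fraction * len(s)))
    # Keep only the k largest singular values and their singular vectors.
    return (U[:, :k] * s[:k]) @ Vt[:k, :]


rng = np.random.default_rng(0)
W = rng.standard_normal((64, 32))           # stand-in for a layer weight matrix
W_smooth = truncated_svd_smooth(W, keep_fraction=0.25)
print(W_smooth.shape, np.linalg.matrix_rank(W_smooth))  # (64, 32) 8
```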

Let's take a deep look at how you can apply this yourself, and why it works.

First, if you haven’t done so already, install weightwatcher:

pip install weightwatcher

For our example on Google Colab, we will also need the accelerate package

!pip install accelerate

Weightwatcher can run on a GPU, a multi-core CPU, or a vanilla CPU. To check that things are working correctly, look for any warning message(s) after importing weightwatcher:

import weightwatcher as ww

Here, we see a warning that the Google Colab GPU is not available, so weightwatcher defaults to the CPU, which is going to be slower but the SVD calculations will be more accurate.

WARNING:weightwatcher:PyTorch is available but CUDA is not. Defaulting to SciPy for SVD

WARNING:weightwatcher:Import error , reetting to svd accurate methods

Requirements

- weightwatcher version 0.7.4.7 or higher

- PyTorch or Keras frameworks (ONNX support is available but not well tested)

- your LLM must be loaded into memory (the SVDSmoothing option does not yet support safetensors or related ‘lazy’ formats)

- the model must have only Dense / MLP layers (no LSTM layers, although Conv2D layers are OK)

Here is a link to a Google Colab notebook with the code discussed below

An Example: TinyLLaMA

For our example, we will use the TinyLLaMA LLM. Use git to download the model files to a local folder:

!git clone https://huggingface.co/TinyLlama/TinyLlama-1.1B-intermediate-step-955k-token-2T/

We will also need a folder to store our smoothed model in for testing later.

We copy the original folder and delete the model weights file from the copy:

import os

tinyLLaMA_folder = "TinyLlama-1.1B-intermediate-step-955k-token-2T"

smoothed_model_folder = "smoothed_TinyLLaMA"

smoothed_model_filename = os.path.join(smoothed_model_folder, "pytorch_model.bin")

!cp -r $tinyLLaMA_folder $smoothed_model_folder

!rm $smoothed_model_filename

Running SVDSmoothing with WeightWatcher

We first need to load the model into memory. (For now, that is; a later version could support the safetensors format, which would reduce the memory footprint considerably.)

import torch

tinyLLaMA_filename = os.path.join(tinyLLaMA_folder, "pytorch_model.bin")

tinyLLaMA = torch.load(tinyLLaMA_filename)

Getting Started: Describe a model

Before we run this, however, let's first check that weightwatcher is working properly by running watcher.describe():

import weightwatcher as ww

watcher = ww.WeightWatcher(model=tinyLLaMA)

details = watcher.describe()

The watcher returns details, a pandas dataframe with various information about each layer.

Notice that in an LLM, each individual layer is a DENSE layer, even the ones making up the transformer layers. To select only the MLP layers, we need to identify them (by name, and then by number).

Selecting Specific Layers

The LASER paper recommends applying (what we call) SVDSmoothing to specific layers of an LLM, such as the MLP/DENSE layers towards the end of the model (closer to the labels).

We can select specific layers by id (number), by type (e.g. DENSE), or by name. To select the MLP layers, let's just get the list of TinyLLaMA layers that have the term ‘mlp’ in their names:

import pandas as pd

# Assuming 'details' is your DataFrame

D = details[details['name'].astype(str).str.contains('mlp')]

# Now, extract 'layer_id' column as a list of ids

mlp_layer_ids = list(D['layer_id'].to_numpy())
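As a hypothetical refinement that follows the LASER advice of targeting MLP layers near the end of the model, the sketch below keeps only the MLP layers in the last few transformer blocks. It assumes Hugging Face Llama-style layer names such as model.layers.21.mlp.down_proj; check the names that watcher.describe() actually reports before relying on the regular expression.

```python
import re

# Matches Llama-style MLP layer names, e.g. "model.layers.21.mlp.down_proj"
# (an assumption about the naming scheme; adjust for other models).
MLP_NAME = re.compile(r"layers\.(\d+)\.mlp\.")


def late_mlp_layer_ids(name_to_id, last_n_blocks=4):
    """Return sorted layer ids of MLP layers in the last `last_n_blocks` blocks."""
    blocks = {}
    for name, layer_id in name_to_id.items():
        match = MLP_NAME.search(name)
        if match:
            blocks.setdefault(int(match.group(1)), []).append(layer_id)
    if not blocks:
        return []
    cutoff = max(blocks) - last_n_blocks + 1   # first block index to keep
    return sorted(i for b, ids in blocks.items() if b >= cutoff for i in ids)
```

For example: name_to_id = dict(zip(details['name'].astype(str), details['layer_id'])), then mlp_layer_ids = late_mlp_layer_ids(name_to_id, last_n_blocks=4).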

Now that we have the layers listed out, we need to select the method for choosing our low-rank (TruncatedSVD) approximation. We then pass the layer ids with layers=…

smoothed_model = watcher.SVDSmoothing(layers=mlp_layer_ids, ...)

Selecting a Low-Rank Approximation

The weightwatcher tool offers several different automated methods for selecting the SVD subspace for the low rank approximation. This subspace can be selected as the eigencomponents associated with the method=… (and the optional percent=…) option(s):

smoothed_model = watcher.SVDSmoothing(method='svd', percent=0.2, ...)

where the method option can be

method = ‘detX’ (default) | ‘rmt’ | ‘svd’ | ‘alpha_min’

The default method, ‘detX’, selects the top eigenvalues satisfying the detX condition, i.e. a volume preserving transformation.

- method=’detX’: default

This is approximately the same as using method=’svd’ with percent=0.8 (80% of the rank is retained)

- method=’svd’, percent=P: the top fraction P (e.g. 0.8 for 80%) of the largest eigenvalues

Other options are:

- method=’alpha_min’: the eigenvalues in the fitted Power Law tail

- method=’rmt’: the large spikes, i.e. the eigenvalues larger than the MP (Marchenko-Pastur) bulk region predicted by RMT (Random Matrix Theory); currently broken, needs to be fixed

and in the future, weightwatcher will also offer method=’entropy’, which will select the SVD subspace based on the entropy of the eigenvectors.
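To give some intuition for the method='rmt' idea, here is a minimal numpy sketch (not weightwatcher's implementation) that counts the eigenvalues of X = W^T W / N lying above the Marchenko-Pastur bulk edge lambda_+ = sigma^2 (1 + sqrt(M/N))^2. It assumes the noise variance sigma^2 is known (1.0 here), whereas in practice it has to be estimated; the 5% margin and the planted rank-2 signal are arbitrary choices for the demo.

```python
import numpy as np


def count_mp_spikes(W, sigma2=1.0, margin=0.05):
    """Count eigenvalues of X = W^T W / N above the MP bulk edge (plus a margin)."""
    N, M = W.shape                                 # assumes N >= M
    lams = np.linalg.eigvalsh(W.T @ W / N)
    lambda_plus = sigma2 * (1 + np.sqrt(M / N)) ** 2
    threshold = lambda_plus * (1 + margin)         # guard against edge fluctuations
    return int(np.sum(lams > threshold)), threshold


rng = np.random.default_rng(0)
N, M = 1000, 500
noise = rng.standard_normal((N, M))
# Plant two strong rank-1 "signal" directions on top of the random noise.
u = rng.standard_normal((N, 2))
v = rng.standard_normal((M, 2))
signal = 0.2 * u @ v.T
n_spikes, threshold = count_mp_spikes(noise + signal)
print(n_spikes, round(threshold, 3))
```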

For now, let's keep it simple and fairly conservative and use the default (which picks the top eigencomponents associated with the ~60-80% largest eigenvalues of each individual layer weight matrix).

Generating the Smoothed Model

watcher = ww.WeightWatcher(model=tinyLLaMA)

smoothed_model = watcher.SVDSmoothing(layers=mlp_layer_ids)

Testing the Smoothed Model

Now we save the smoothed model to the folder we created above:

torch.save(smoothed_model, smoothed_model_filename)

To test the models, we will generate some fake text from a prompt and compare. Just a sanity check.

We need a tokenizer; we will share the same tokenizer between the two models:

from transformers import AutoTokenizer, pipeline

import torch

# Initialize the tokenizer from the local directory

tokenizer = AutoTokenizer.from_pretrained(tinyLLaMA_folder)

We then specify the model folder to create a text generation pipeline. For the original TinyLLaMA, we use:

# Set the device: use the GPU ('cuda') if available, otherwise the CPU ('cpu')

device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Initialize the pipeline and specify the local model directory

text_generation_pipeline = pipeline(

"text-generation",

model=tinyLLaMA_folder,

tokenizer=tokenizer,

framework="pt", # Specify the framework 'pt' for PyTorch

)

and for the smoothed model, we use:

smoothed_generation_pipeline = pipeline(

"text-generation",

model=smoothed_model_folder,

tokenizer=tokenizer,

device=device, # Use the manually specified device

framework="pt", # Specify the framework 'pt' for PyTorch

)

Now we can generate some text and compare the results of the original and the smoothed models. Let's just do a sanity check:

# Use the pipeline for text generation (as an example)

generated_text = text_generation_pipeline("Who was the first US president ?", max_length=20)

print(generated_text)

The original response is

“Who was the first US president ?\nThe first US president was George Washington.\n…”

generated_text = smoothed_generation_pipeline("Who was the first US president ?", max_length=20)

print(generated_text)

The smoothed model's result is:

“Who was the first US president ?\nThe first US president was George Washington.\n…”

which matches the correct result above.

Let me encourage you to try this, with different settings for SVDSmoothing, and decide for yourself what is a good result.

Why does SVDSmoothing Work?

I have discussed this in great detail in my invited talk at NeurIPS2023 in our Workshop on Heavy Tails in ML. To view all the workshop videos, go here.

Briefly, the weightwatcher theory postulates that for any very well-trained DNN, the correlations in the layer concentrate into the eigencomponents associated with the tail of the layer ESD, and that this tends to happen when the weightwatcher layer quality metric alpha is 2.0 and, simultaneously, when the detX / volume preserving condition holds. We call this subspace the Effective Correlation Space (ECS).

For example, if we train a small, 3-layer MLP on the MNIST data set, we can see that when the FC1 layer alpha approaches 2, we can replace the FC1 weight matrix W with its ‘Smoothed’ form and, subsequently, reproduce the original test error (orange) exactly!

However, we cannot reproduce the training error (blue); the ‘Smoothed’ training error is always somewhat larger than the original training error, and larger than zero.

This is very easy to reproduce, and I will share some Jupyter Notebooks with anyone who wants to try this.

This experiment shows that the parts of W that contribute to the generalization ability concentrate into the Effective Correlation Space (ECS) defined by the tail of the ESD.

When running SVDSmoothing, if you use the method=detX default option, weightwatcher will attempt to define the ECS automatically for you, but if alpha > 2, the Effective Correlation Space (ECS) will be larger than necessary, just to be safe.

If you run SVDSmoothing yourself, please join our Community Discord and share your learnings with us.

The weightwatcher tool has been developed by Calculation Consulting. We provide consulting to companies looking to implement Data Science, Machine Learning, and/or AI solutions. Reach out today to learn how to get started with your own AI project.

And even the smaller Mistral 7b model seems to be “punching well above its weight class, taking on LLM giants”, and emerging as the Best (Small) Open-Source LLM Yet. Can we understand why?

In this post, I conjecture why Mistral 7b works so well, based on an analysis with the open-source weightwatcher tool and drawing upon Sornette’s theory of Dragon Kings.

Here’s a Google Colab notebook with the code discussed below

A) Analyzing Mistral 7b with weightwatcher

Let’s run Mistral-7b through its paces with the weightwatcher tool. This time, however, we are going to compare the standard results with one of the advanced features of the tool, the fix_fingers option (described in this earlier blog post).

Here are the steps we take:

A.1) Download Mistral-7B-v0.1 base model to a local repo:

!git clone $base_model_html

Notice that this repo contains the base model as both safetensors files and pytorch_model.bin files; we can remove the latter and just keep the 2 safetensors files.

A.2) Run weightwatcher with fix_fingers=’clip_xmax’

import weightwatcher as ww

watcher = ww.WeightWatcher()

details = watcher.analyze(model="Mistral-7B-v0.1", fix_fingers='clip_xmax')

The resulting details dataframe will contain 2 alpha columns:

- raw_alpha: the estimated Power Law (PL) exponent alpha, without applying fix_fingers

- alpha: the ‘fixed’ alpha, adjusted for fingers (i.e. possible Dragon Kings)

A.3) Compare the 2 alphas

We analyze the weightwatcher power law quality metric alpha by making a histogram of the raw (default) alpha and of the (fixed) alpha.

We see that the raw_alpha and the ‘fixed’ alpha look very different. The raw_alpha have a very wide distribution, with many layers having raw_alpha >> 6, and 1 even as high as 40! And the average <raw_alpha> =~ 5.7, which is just at the edge of the safe range (we want the average layer <alpha> in [2, 4]).

The ‘fixed’ alphas, however, are very different. The distribution is much sharper, with very few ‘fixed’ alpha > 6, and the average fixed <alpha> =~ 4.8. Under the lens of the weightwatcher HTSR theory (described in our seminal paper), this model looks much better.

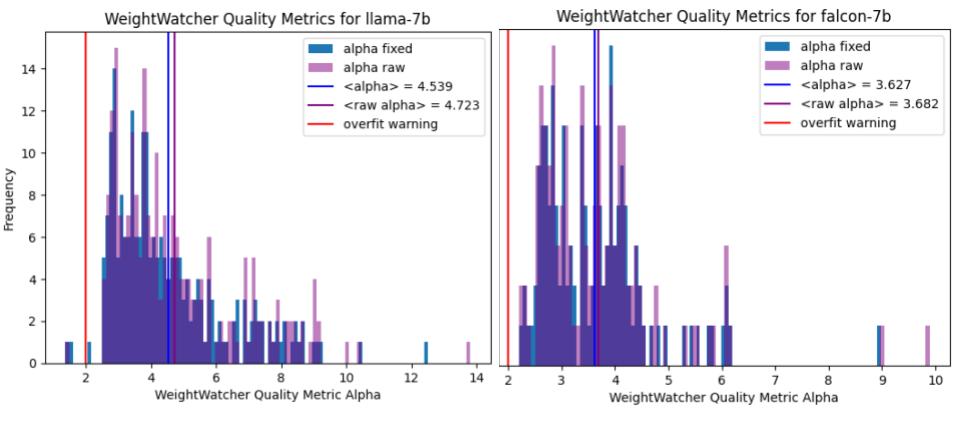

A.4) Compare to other base models

Here are the same plots for the Llama-7b and Falcon-7b base models.

Notice that here the raw_alpha and the ‘fixed’ alphas look almost identical, and the layer-averaged alphas are also almost identical. So it is not necessary to use the slower fix_fingers option when running weightwatcher on these models.

So what’s going on ?

B) Fingers and Power Laws

So-called ‘fingers’ are large positive outliers that arise when computing the Empirical Spectral Density (ESD) of a layer weight matrix $\mathbf{W}$. That is, they are usually large eigenvalues $\lambda$ of the correlation matrix $\mathbf{X}=\mathbf{W}^{T}\mathbf{W}$, so large that they lie outside the normal and expected range of the ESD when fit to a Power Law, i.e. $\rho(\lambda) \sim \lambda^{-\alpha}$. The best way to see this is with a plot.
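In code, the ESD is just the set of eigenvalues of X. This numpy sketch, using a random matrix as a stand-in for a real layer (the sizes and the 1/sqrt(N) scaling are assumptions), checks that the eigenvalues of X = W^T W equal the squared singular values of W, and shows how one strong rank-1 direction appears as a single eigenvalue far beyond the rest of the ESD, i.e. a finger.

```python
import numpy as np

rng = np.random.default_rng(0)
N, M = 400, 200
W = rng.standard_normal((N, M)) / np.sqrt(N)   # synthetic stand-in for a layer

# Route 1: eigenvalues of the correlation matrix X = W^T W.
evals = np.sort(np.linalg.eigvalsh(W.T @ W))
# Route 2: squared singular values of W.
svals_sq = np.sort(np.linalg.svd(W, compute_uv=False) ** 2)
print(np.allclose(evals, svals_sq))  # True

# Add one strong rank-1 direction: it shows up as a single eigenvalue far
# beyond the rest of the ESD, i.e. a "finger".
u = rng.standard_normal(N) / np.sqrt(N)
v = rng.standard_normal(M) / np.sqrt(M)
W_finger = W + 10.0 * np.outer(u, v)
esd = np.sort(np.linalg.svd(W_finger, compute_uv=False) ** 2)
print(esd[-1] / esd[-2])  # ratio of the largest to the second-largest eigenvalue
```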

B.1) Plot a layer ESD with weightwatcher

Let’s pick a layer with a prominent ‘finger’ and plot the ESD.

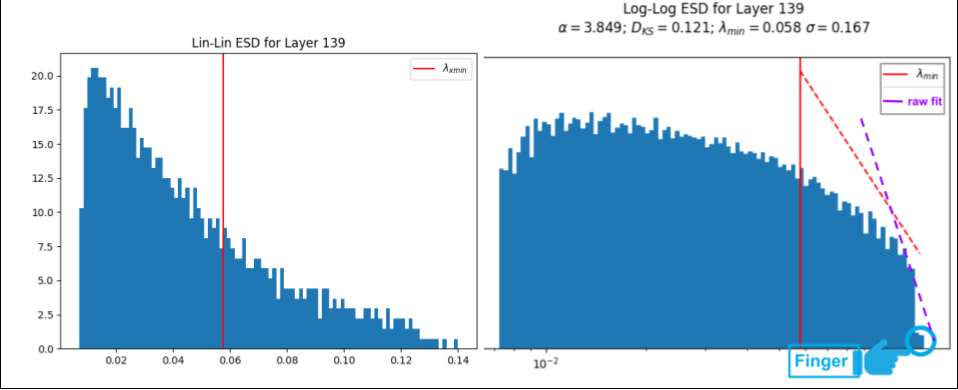

watcher.analyze(layers=[139], plot=True)

When we add plot=True, weightwatcher will generate several plots for each layer, such as the 2 plots above. The plot on the left is a histogram of the eigenvalues for layer 139 (the ESD) on a linear-linear scale, depicting a typical Heavy-Tailed (HT) ESD. The one on the right is the layer ESD on a log-log scale, along with the PL exponent $\alpha$ and the quality of the PL fit ($D_{KS}$). The PL tail starts at the vertical red line, and the dashed red line depicts the best theoretical PL fit.

The finger is in the lower right side of the log-log plot, and looks like a small ‘shelf’ at the far end of the ESD. The finger consists of 1 or more spuriously large eigenvalues. If we include these large eigenvalues in the PL fit, the fit changes and the alpha is unusually large. Using the fix_fingers option, weightwatcher will remove up to 10 fingers until the ‘fixed’ alpha is stable.

B.2) Stability analysis of fix_fingers option

How can we tell if the ‘fixed’ alpha is stable ? There is a plot for this too.

The plot on the left helps us evaluate the stability of the weightwatcher power law (PL) fits. It plots the choice of the start of the PL tail (red line) against the quality of the PL fit (Dks, the Kolmogorov–Smirnov distance between the data and the fit).

Good fits show a single, easily-found global minimum. Bad or spurious fits have lots of close or degenerate minima.

In this plot for the Layer 139 PL fit, we see a relatively convex envelope near the fixed fit, and lots of spurious fits that would have occurred if we had included the finger in the data. (That is, there are lots of spurious fits toward the right side, indicating a smaller PL tail, i.e. the purple dashed line above.)
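The Dks-versus-tail-start plot reflects a Clauset-style fitting procedure: for every candidate start of the tail (x_min), fit alpha by maximum likelihood, measure the Kolmogorov-Smirnov distance between the tail and the fitted power law, and keep the x_min with the smallest distance. The pure-Python sketch below implements that scan and adds a hypothetical finger-clipping loop in the spirit of fix_fingers (drop the k largest values, k = 0..10, until alpha stops changing). It is an illustration under these assumptions, not weightwatcher's clip_xmax code; min_tail and tol are arbitrary.

```python
import math
import random


def fit_power_law(values, min_tail=50):
    """Return (xmin, alpha, d_ks) for the tail start with the smallest KS distance."""
    xs = sorted(values)
    logs = [math.log(x) for x in xs]
    # suffix[i] = sum(logs[i:]), so each tail's log-sum costs O(1).
    suffix = [0.0] * (len(xs) + 1)
    for i in range(len(xs) - 1, -1, -1):
        suffix[i] = suffix[i + 1] + logs[i]
    best = None
    for start in range(len(xs) - min_tail + 1):
        xmin, n = xs[start], len(xs) - start
        log_sum = suffix[start] - n * logs[start]   # sum of ln(x / xmin) over the tail
        if log_sum <= 0:
            continue
        alpha = 1.0 + n / log_sum                   # continuous MLE for this tail
        # KS distance between the empirical tail CDF and the fitted power-law CDF.
        d_ks = 0.0
        for j, x in enumerate(xs[start:]):
            model_cdf = 1.0 - (x / xmin) ** (1.0 - alpha)
            d_ks = max(d_ks, abs(model_cdf - j / n), abs(model_cdf - (j + 1) / n))
        if best is None or d_ks < best[2]:
            best = (xmin, alpha, d_ks)
    return best


def fit_with_finger_clipping(values, max_fingers=10, tol=0.05):
    """Drop the k largest values (k = 0..max_fingers) until alpha stabilizes.

    Returns (alpha, k): the fit with k values removed, accepted once removing
    one more value changes alpha by less than tol.
    """
    xs = sorted(values)
    prev_alpha = fit_power_law(xs)[1]
    for k in range(1, max_fingers + 1):
        alpha = fit_power_law(xs[:-k])[1]
        if abs(alpha - prev_alpha) < tol:
            return prev_alpha, k - 1
        prev_alpha = alpha
    return prev_alpha, max_fingers


rng = random.Random(1)
# Synthetic power-law data with alpha = 2.5 and x_min = 1, standing in for eigenvalues.
samples = [(1.0 - rng.random()) ** (-1.0 / 1.5) for _ in range(500)]
print(fit_power_law(samples))
print(fit_with_finger_clipping(samples))
```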

B.3) Comparing alpha to other weightwatcher metrics

The alpha metric is one of a number of weightwatcher layer quality metrics. It is the most interesting because it lets us identify the Universality Class of the layer. That is, is the layer well fit ($2 < \alpha < 6$), overfit ($\alpha < 2$), or underfit ($\alpha > 6$)? But alpha is also the hardest to estimate. Fortunately, we can get a rough check on our estimated alpha by comparing it to one or more other weightwatcher metrics.

A good metric to compare against is the rand_distance metric. This metric computes how far a layer weight matrix W is from being a random matrix: the larger the distance, the less random (or more correlated) W is. Generally speaking, smaller alpha means larger rand_distance. We can compute rand_distance using the randomize option:

details = watcher.analyze(plot=True, fix_fingers='clip_xmax', randomize=True)

alpha vs Rand_Distance

We can now plot the raw_alpha vs. rand_distance , and the (fixed) alpha vs rand_distance.

Once again, we see that the raw_alpha and the ‘fixed’ alpha cases look very different. The raw_alpha is not even a proper function of rand_distance, and shows a strange circular trend (red line). In contrast, while not a perfect relation, the ‘fixed’ alpha at least shows a noisy but proper trend (green line).

Both metrics are noisy estimators, but by comparing them, we can see that the fixed alpha is a more consistent estimator of the Heavy-Tailed (HT) quality metric alpha for each layer.

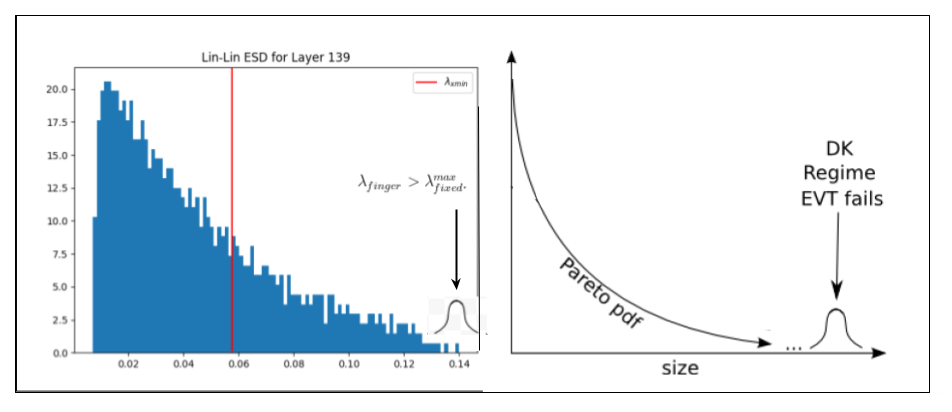

C) Fingers as Dragon Kings

Why do we observe so many outlier eigenvalues? Here, I posit the conjecture that these spuriously large eigenvalues correspond to Dragon Kings (DK). The Dragon King Theory was first postulated by Professor Didier Sornette to explain the appearance of enormously large outliers in the power law fits of a wide range of self-organizing phenomena.

Dragon Kings (DK) are thought to arise as a result of some kind of coherent collective event or some other deterministic mechanism that can act like a dynamical attractor, accelerating the learning for the features associated with those specific eigenvectors at the far end of the ESD.

In particular, Dragon Kings are thought to arise in quasi-critical systems exhibiting Self-Organized Criticality (SOC), such as in the avalanche patterns observed in biological, cultured neurons (!), due to the inherent dynamics and interactions within the system that push it to a critical point.

This can be due to long-range interactions and/or feedback loops that, when activated, lead to the emergence of these extreme signatures.

When it comes to neuro-dynamics, sometimes Dragon Kings help, and sometimes they hurt. In some cases, they are thought to suppress neural function, and, in others, they are characteristic of proper function. See this 2012 paper for a brief review; there are many recent scientific studies on this as well.

But most importantly, and in the context of training an LLM, the appearance of Dragon Kings indicates that some unique dynamical process is generating these extreme eigenvalues, and that this process is fundamentally different from the normal dynamics of the SGD training process for LLMs.

In an LLM, when do DKs hurt and when do they help? I suspect that when they arise in the ESD like they do in Mistral-7b, they may indicate better performance. But I suspect they may also appear as Correlation Traps, in which case they may hurt performance.

I am postulating that it is this unique process, whatever it is, that is giving Mistral-7b (and the 8x7B MoE model) such remarkable performance. And if this is correct, it’s possible we could identify the underlying driving process and amplify it during training.

D) Testing the Dragon King Hypothesis with weightwatcher


As more and more powerful open-source LLMs emerge, it will be possible to test this Dragon King Hypothesis by running the open-source weightwatcher tool. I encourage you to do this yourself, and / or join our Community Discord and submit some LLMs to test.

In the last post, we saw how to evaluate fine-tuned LLMs using the open-source weightwatcher tool. Specifically, we looked at models after the ‘deltas’ (or updates) have been merged into the base model.

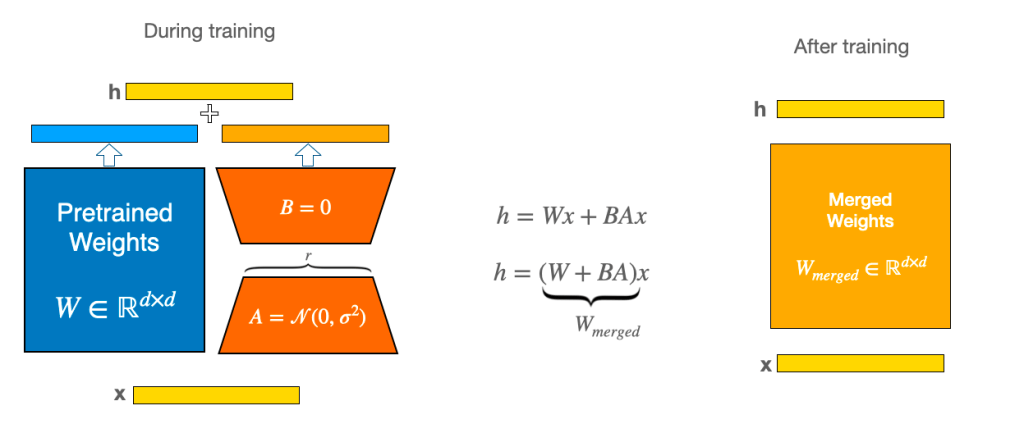

In this post, we will look at LLMs fine-tuned using Parameter Efficient Fine-Tuning (PEFT), specifically Low-Rank Adaptation (LoRA). The LoRA technique lets one update the weight matrices ($\mathbf{W}$) of the LLM with a Low-Rank update ($\mathbf{BA}$):

$\mathbf{W} \rightarrow \mathbf{W} + \Delta\mathbf{W}, \quad \Delta\mathbf{W} = \mathbf{BA}$

Here is a great blog post explaining all things LoRA

The A and B matrices are significantly smaller than the base model W matrix, and since many LLMs are quite large and require a lot of disk space to store, it is frequently convenient to only store the A and B matrices. If you use the HuggingFace peft package, you would then store these matrices in a file called either

- adapter_model.bin, or

- adapter_model.safetensors
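To make the size argument concrete: with a d_out x d_in weight matrix and LoRA rank r, B is d_out x r and A is r x d_in, so the update BA has rank at most r and takes r(d_out + d_in) numbers instead of d_out x d_in. A minimal numpy sketch; the 4096 x 4096 shape and r = 64 are illustrative assumptions, and the small random matrices are only there to check the rank.

```python
import numpy as np


def lora_param_counts(d_out, d_in, r):
    """Numbers stored for a full update vs. a rank-r LoRA update BA."""
    return d_out * d_in, r * (d_out + d_in)


# Illustrative sizes: a 4096 x 4096 projection with LoRA rank r = 64.
full, lora = lora_param_counts(4096, 4096, 64)
print(full, lora, full // lora)  # 16777216 524288 32

# Small random check that BA has rank at most r (sizes are arbitrary).
rng = np.random.default_rng(0)
B = rng.standard_normal((256, 8))    # d_out x r
A = rng.standard_normal((8, 128))    # r x d_in
delta_W = B @ A                      # d_out x d_in
print(delta_W.shape, np.linalg.matrix_rank(delta_W))  # (256, 128) 8
```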

These adapter model files can be loaded directly into weightwatcher so that the LoRA BA matrices can be analyzed separately from the base model. To do this, use the peft=True option. But first, let's check the model requirements for doing this.

0.) Requirements for PEFT/LoRA models


- The update or delta should be either loaded in memory or stored in a directory/folder, in the PyTorch or safetensors format

- The LoRA rank (r) should be r>10, otherwise you need to also specify min_evals=2

- The LoRA layer names for the A and B matrix updates should include the tokens ‘lora_A’ and/or ‘lora_B’

- We do not specifically support the Lightning AI Lit-GPT framework yet

- the weightwatcher version should now be 0.7.4.3 or higher

Here’s a Google Colab Notebook with the code discussed below

1) A simple example: llama-7b-lora

To illustrate the tool, we will use this Llama-7b LoRA fine-tuned model. You can download it with git:

!git clone https://huggingface.co/DevaMalla/llama_7b_lora

The llama_7b_lora directory should contain the adapter_model.bin file.

2) Describe the model

I recommend you first check the model to ensure weightwatcher reads the model file correctly and finds all the layers.

2a) peft=False

First, let's see what the raw model looks like:

import weightwatcher as ww

watcher = ww.WeightWatcher()

details = watcher.describe(model='llama_7b_lora')

Note: if you don’t specify peft=True, then weightwatcher will try to analyze the individual A and B matrices, which is NOT what you want. But we can check the layer names. The details dataframe should look like this:

Notice that this dataframe has 128 rows, and each layer name has the phrase ‘lora_A’ or ‘lora_B’ in it.

Also, notice that the matrix rank for A and B is M=64, which is large enough for weightwatcher to get a good estimate of the weightwatcher layer quality metric alpha

2b) peft=True

watcher = ww.WeightWatcher()

details = watcher.describe(model='llama_7b_lora', peft=True)

The peft=True dataframe now should look like this:

Now we see that the details dataframe has only 64 rows, and each layer name has the phrase ‘lora_BA’ in it. Each layer now represents the matrix $\Delta\mathbf{W}=\mathbf{BA}$.

Note: we use the notation from the original LoRA paper, whereas other blogs and frameworks may use $\Delta \mathbf{W}=\mathbf{AB}$.
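For intuition about what combining the adapters involves, this hypothetical numpy sketch (not weightwatcher's code) pairs each lora_A tensor with its lora_B partner by name and multiplies them. It assumes the Hugging Face peft convention that lora_A.weight has shape (r, d_in) and lora_B.weight has shape (d_out, r), and it ignores the lora_alpha / r scaling factor that peft applies; the toy layer names and shapes are made up.

```python
import numpy as np


def combine_lora_pairs(state_dict):
    """Map each LoRA layer prefix to its update matrix Delta W = B @ A."""
    updates = {}
    for name, A in state_dict.items():
        if "lora_A" not in name:
            continue
        b_name = name.replace("lora_A", "lora_B")
        if b_name not in state_dict:
            continue                                # unpaired A matrix: skip it
        prefix = name.split(".lora_A")[0]
        updates[prefix] = state_dict[b_name] @ A    # (d_out, r) @ (r, d_in)
    return updates


rng = np.random.default_rng(0)
toy_adapter = {  # hypothetical names and shapes, rank r = 4
    "layers.0.self_attn.q_proj.lora_A.weight": rng.standard_normal((4, 32)),
    "layers.0.self_attn.q_proj.lora_B.weight": rng.standard_normal((48, 4)),
    "layers.0.self_attn.v_proj.lora_A.weight": rng.standard_normal((4, 32)),
    "layers.0.self_attn.v_proj.lora_B.weight": rng.standard_normal((48, 4)),
}
for prefix, delta_W in combine_lora_pairs(toy_adapter).items():
    print(prefix, delta_W.shape)
```

Four A/B tensors collapse into two update matrices, the same halving seen above when 128 lora_A/lora_B rows become 64 lora_BA rows.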

3) Analyze the adapter_model.bin

watcher = ww.WeightWatcher()

details = watcher.analyze(model='llama_7b_lora', peft=True)

The details dataframe should now contain useful layer quality metrics such as alpha, alpha_weighted, etc. Here’s a peek:

We can now look at some of these metrics to see how well our LoRA fine-tuning worked.

3.a) Heavy-Tailed Layer Quality Metric alpha

Let’s plot a histogram of the Power Law exponent metric alpha:

import matplotlib.pyplot as plt

avg_alpha = details.alpha.mean()

title=f"Fine Tuned WeightWatcher Alpha\n Llama-7b-LoRA"

details.alpha.plot.hist(bins=50)

plt.title(title)

plt.axvline(x=avg_alpha, color='green',label=f"<alpha> = {avg_alpha:0.3f}")

plt.legend()

Notice that all of the layer alphas are less than 2 (alpha < 2). That is, all the layer ESDs live in the HTSR Very Heavy Tailed (VHT) Universality Class, which could be problematic. Typically, with well-performing models, we see alpha in [2, 4], and we very rarely find alpha < 2. This also holds for fully fine-tuned (not PEFT) models.

We have found that for many LoRA fine-tuned models, the layer alphas are < 2. What does it mean when a layer alpha < 2?

- the layer is over-regularized ?

- the layer could be overfitting its training data ?

We could potentially prevent these small alphas by decreasing the learning rate.

4) LoRA alphas vs Base Model alpha

Why are the LoRA layer alphas so small ? Are these layers overfitting their training data ? This remains an open question, but we can get some insights by comparing the LoRA layer alphas to the corresponding layers in the base model. Notice that in this model, the developer only fine tuned the Q and V layers.

If we have the base_model details for the llama_7b model, we can compare the layer alphas between the Base and the fine-tuned LoRA models:

B = base_details

filtered_B = B[B['name'].str.contains('q|v', na=False)]

base_alphas = filtered_B.alpha.to_numpy()

lora_alphas = details.alpha.to_numpy()

plt.scatter(x=base_alphas, y=lora_alphas)

plt.title("Llama-7b: LoRA alphas vs Base Model alphas")

plt.xlabel("Base Model Layer alpha")

plt.ylabel("Lora Layer alpha")

From this plot, we see immediately that the larger the Base model alphas are, the smaller the LoRA layer alphas are. This is a common pattern we have observed in other LoRA models, and is easy to reproduce even when fine-tuning a small model like BERT. (for a future blog post)
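To put a number on the trend, one option (a suggestion, not part of the original analysis) is a Spearman rank correlation between base_alphas and lora_alphas; a clearly negative value supports the "larger base alpha, smaller LoRA alpha" pattern. A minimal stdlib sketch, assuming the two arrays are aligned layer by layer, as in the scatter-plot code above, and contain no tied values:

```python
import math


def _ranks(values):
    """Rank positions 0..n-1 of each value (assumes no ties)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0] * len(values)
    for rank, i in enumerate(order):
        ranks[i] = rank
    return ranks


def spearman(x, y):
    """Spearman rank correlation: the Pearson correlation of the ranks."""
    rx, ry = _ranks(x), _ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)
```

Usage: spearman(list(base_alphas), list(lora_alphas)). A value near -1 means the ordering is almost perfectly reversed.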

What does this mean? It appears that when the base model layer is less correlated (alpha > 6, say), the LoRA update tends to be more correlated. It is as if the LoRA fine-tuning procedure overfits its training data when the layer being adapted is not well trained.

This is quite curious and suggests that one has to be very careful when fine-tuning base models like Llama with many under-correlated layers (i.e. alpha > 6). In contrast, the Falcon models do not show such behavior (see the weightwatcher LLM leaderboard page).

On the other hand, maybe this is not a bad thing, and you actually want your fine-tuned LLM to overfit / memorize your training data as much as possible ?

5) Next Steps

If you want to try this yourself:

pip install weightwatcher

and join our Community Discord channel to discuss the results and explore this and other features of the weightwatcher tool.

- each of them is biased toward a specific, narrowly scoped measure,

- none of them can identify potential internal problems in your model, and

- in the end, you will probably need to design a custom metric for your LLM

Can you do better? Before you design a custom metric, there is a better, cheaper, and faster approach to help you get started: using the open-source weightwatcher tool.


How does it work ? The weightwatcher tool assigns a quality metric (called alpha) to every layer in the model. The best models have layer alpha in the range alpha>2 and alpha<6. And the average layer alpha (<alpha>) is a general-purpose quality metric for your fine-tuned LLMs; when comparing models, smaller <alpha> is better.

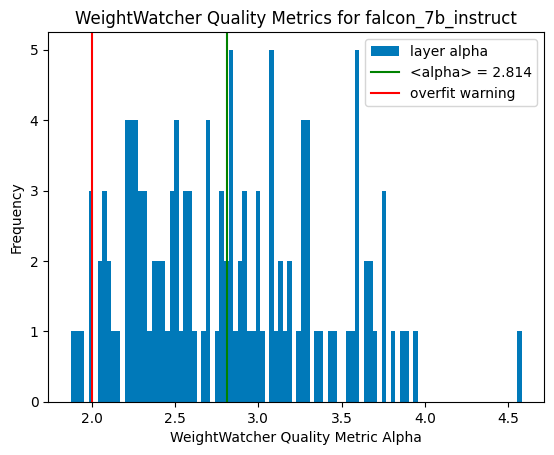

How does this work ? Let’s walk through an example. We consider the Falcon-7b-Instruct instruction fine-tuned model. But first,

0.) WeightWatcher Requirements for Fine-Tuned Models

For this blog, we consider models where the fine-tuning updates have been merged with the base model. In a future blog, we will show how to examine the updates (i.e. the adapter_model.bin files) directly. For this analysis:

- The base_model should be stored in the HF safetensors format

- The merged model does not need to be safetensors format, but the base model must have exactly the same layer names

- The update should be for either fully fine-tuned models, or PEFT/LoRA models with a fairly large LoRA rank r>=32, preferably r>=64 or r>=128

On compute resources, weightwatcher can run on a generic GPU, CPU, or multi-core CPU, and will automatically detect the environment and adjust to it.

Here’s a Google Colab Notebook with the code discussed below

1.) Install weightwatcher

pip install weightwatcher

Just to be safe, you can print the weightwatcher version

import weightwatcher as ww

print(ww.__version__)

The version should be 0.7.4 or higher

2.) Download the models. Here, we will use the pre-trained Falcon-7b-instruct model and the Falcon-7b base_model. To save memory, we will use the versions stored in the safetensors format.

2.a) For Falcon-7b-instruct, you can just check out the git repo directly

git clone https://huggingface.co/tiiuae/falcon-7b-instruct

To save space, you may delete the large pytorch_model.bin files

2.b) For the base model, Falcon-7b, we need to do a little extra work to download the files (because the Falcon-7b safetensors branch is not available from git directly). First, git clone the main repo:

git clone https://huggingface.co/tiiuae/falcon-7b

To save space, you may also delete these large pytorch_model.bin files here

2.c) Manually download the individual model.*.safetensors files, and place them directly in your local falcon-7b directory.

wget https://huggingface.co/tiiuae/falcon-7b/resolve/d09af65857360b23079dc3dc721a2ed29f4423e0/model-00001-of-00002.safetensors

wget https://huggingface.co/tiiuae/falcon-7b/resolve/d09af65857360b23079dc3dc721a2ed29f4423e0/model-00002-of-00002.safetensors

You will also want to download the model.safetensors.index.json file and place this in your local falcon-7b directory.
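If you prefer to script step 2.c, the URLs follow the same `resolve/<revision>/<filename>` pattern as the wget commands above. Below is a minimal sketch using only the Python standard library. It assumes `model.safetensors.index.json` is available at the same revision as the two shards, and the actual download is left commented out because the files are large.

```python
import os
import urllib.request

REPO_URL = "https://huggingface.co/tiiuae/falcon-7b"
REVISION = "d09af65857360b23079dc3dc721a2ed29f4423e0"  # the commit used in the wget commands
FILES = [
    "model-00001-of-00002.safetensors",
    "model-00002-of-00002.safetensors",
    "model.safetensors.index.json",  # assumption: available at the same revision
]

def resolve_url(filename, repo_url=REPO_URL, revision=REVISION):
    """Build the direct-download URL for one file at a fixed revision."""
    return f"{repo_url}/resolve/{revision}/{filename}"

def download_all(target_dir="falcon-7b"):
    """Fetch each file into the local falcon-7b directory, skipping files already present."""
    os.makedirs(target_dir, exist_ok=True)
    for name in FILES:
        dest = os.path.join(target_dir, name)
        if os.path.exists(dest):
            continue
        print(f"downloading {name} ...")
        urllib.request.urlretrieve(resolve_url(name), dest)

for name in FILES:
    print(resolve_url(name))
# download_all()  # uncomment to actually download (the shards are large)
```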

Your local directory should now look like:

3.) Run weightwatcher

3.a) Just to confirm everything is working correctly, let’s run watcher.describe() first

import weightwatcher as ww

watcher = ww.WeightWatcher()

details = watcher.describe(base_model='falcon-7b', model='falcon-7b-instruct')

This will generate a pandas dataframe with a list of layer attributes. It should look like this:

3.b) If the details dataframe looks good, you can run watcher.analyze()

import weightwatcher as ww

watcher = ww.WeightWatcher()

details = watcher.analyze(base_model='falcon-7b', model='falcon-7b-instruct')

The weightwatcher tool will compute the weight matrix updates (W-W_base) for every layer by subtracting out the base_model weights (W_base) from the weights (W) of the fine-tuned model. This lets us evaluate how well the fine-tuning worked.
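To make the update idea concrete, here is a toy NumPy sketch (not weightwatcher's internal code) that forms D = W - W_base for one layer and computes the eigenvalues of D^T D, i.e. the squared singular values of the update (up to a normalization convention). The matrices are random stand-ins, and the update is deliberately built with rank r = 4. A rank-r update has at most r non-zero eigenvalues, which is one way to see why the LoRA rank needs to be fairly large before there are enough eigenvalues in the tail to fit.

```python
import numpy as np

def update_esd(W, W_base):
    """Return the update D = W - W_base and the eigenvalues of D^T D (unnormalized)."""
    if W.shape != W_base.shape:
        raise ValueError("fine-tuned and base layers must have the same shape")
    delta = W - W_base
    # Squared singular values of D are the eigenvalues of D^T D.
    evals = np.linalg.svd(delta, compute_uv=False) ** 2
    return delta, evals

rng = np.random.default_rng(0)
W_base = rng.normal(size=(128, 64))          # stand-in for a base-model layer
r = 4                                        # deliberately small, LoRA-style rank
A, B = rng.normal(size=(128, r)), rng.normal(size=(r, 64))
W = W_base + 0.01 * A @ B                    # stand-in for the merged fine-tuned layer

delta, evals = update_esd(W, W_base)
nonzero = int(np.sum(evals > 1e-10 * evals.max()))
print(f"{nonzero} non-negligible eigenvalues out of {evals.size}")
```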

3.c) Plotting a histogram of the layer alphas, we can see that almost every alpha lies in the weightwatcher safe range of alpha in [2,4], indicating this is a pretty good fine-tuning.

Notice also the average layer alpha, <alpha>=2.814, which is pretty good.
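A plotting sketch for this kind of histogram, assuming matplotlib and NumPy. `plot_alpha_hist` is a hypothetical helper, and the alphas in the usage lines are made-up toy values, not Falcon results; with a real run, pass `details.alpha`. It also reports the fraction of layers inside the [2, 4] range quoted above.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend so the sketch runs as a script; omit in a notebook
import matplotlib.pyplot as plt
import numpy as np

def plot_alpha_hist(alphas, label, safe_range=(2.0, 4.0), ax=None):
    """Histogram of layer alphas with the safe range marked; returns (ax, fraction inside)."""
    alphas = np.asarray(alphas, dtype=float)
    if ax is None:
        _, ax = plt.subplots()
    lo, hi = safe_range
    # Note: the `alpha=` keyword below is bar transparency, not the layer alpha.
    ax.hist(alphas, bins=50, alpha=0.6, label=f"{label}, <alpha> = {alphas.mean():.3f}")
    ax.axvline(lo, color="red", label=f"alpha = {lo:g}")
    ax.axvline(hi, color="red", linestyle="--", label=f"alpha = {hi:g}")
    ax.set_xlabel(r"alpha $(\alpha)$ PL exponent")
    ax.set_ylabel("number of layers")
    ax.legend()
    inside = float(np.mean((alphas >= lo) & (alphas <= hi)))
    return ax, inside

toy_alphas = [1.9, 2.5, 2.8, 3.1, 4.6]   # hypothetical values, for illustration only
ax, frac_inside = plot_alpha_hist(toy_alphas, "toy model")
print(f"{frac_inside:.0%} of layers in the safe range")
```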

And that’s all there is to it. You now have an evaluation metric, <alpha>~2.8, for your fine-tuned model (here, Falcon-7b-instruct). And you did not need to run any costly inference calculations, or even need access to the training data.

You don’t even need a GPU–weightwatcher can run on a single CPU or a shared memory, multi-core CPU machine. You can run weightwatcher on Google Colab, or even locally on your MacBook (which I did).

4.) Comparing Fine Tuned Models

We can compare 2 or more fine-tuned models directly by looking at the weightwatcher layer quality metrics. As an example, let’s compare the Falcon-7b-instruct model to the Falcon-7b-sft-mix-2000 model.

4.a) Comparing the layer averaged alpha <alpha>

We can compute the layer-averaged alpha (<alpha> = avg_alpha) for the 2 models directly from the details dataframe using:

avg_alpha = details.alpha.mean()

Doing this for both models, we find:

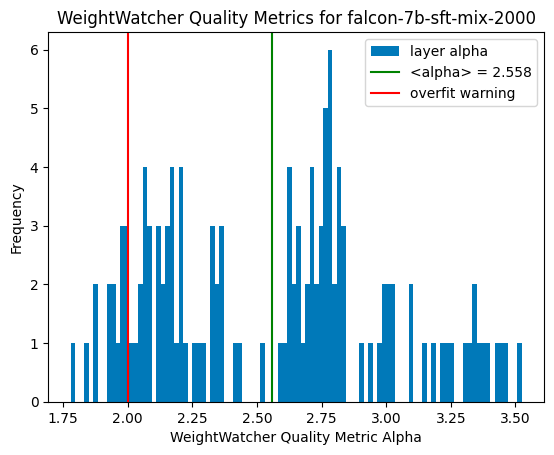

- falcon-7b-instruct: <alpha> =~ 2.8

- falcon-7b-sft-mix-2000: <alpha> =~ 2.6 (smaller is better)

From this, we see that the falcon-7b-sft-mix-2000, having a smaller average alpha, is a little bit better than the falcon-7b-instruct. Can we say more?

4.b) Comparing layer alpha histogram plots

Comparing this plot to the one above, we see that the falcon-7b-sft-mix-2000 has a few undesirable layers with alpha < 2 (left of the red line), but, more importantly, has a maximum alpha of ~3.5. In contrast, while the falcon-7b-instruct model has fewer layers left of the red line, its maximum alpha is ~4.5, much higher. So, on average (and even if we ignore the layer alphas < 2), we find that the falcon-7b-sft-mix-2000 is a little better.
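The comparisons in 4.a and 4.b (average alpha, maximum alpha, and how many layers fall below alpha = 2) can be collected into one small table. A sketch with pandas; it assumes each details dataframe has an `alpha` column, as used above, and the numbers below are hypothetical toy values, not the Falcon results.

```python
import pandas as pd

def alpha_summary(details: pd.DataFrame) -> dict:
    """Summary statistics of the layer alphas in one details dataframe."""
    a = details["alpha"]
    return {"avg_alpha": a.mean(), "max_alpha": a.max(), "n_below_2": int((a < 2).sum())}

def compare_models(details_by_name: dict):
    """Tabulate the summaries, sorted so the model with the smallest <alpha> comes first."""
    table = pd.DataFrame(
        [alpha_summary(d) for d in details_by_name.values()],
        index=list(details_by_name.keys()),
    ).sort_values("avg_alpha")
    return table, table.index[0]

model_a = pd.DataFrame({"alpha": [2.5, 3.0, 4.5]})   # hypothetical layer alphas
model_b = pd.DataFrame({"alpha": [1.8, 2.6, 3.4]})   # hypothetical layer alphas
table, best = compare_models({"model_a": model_a, "model_b": model_b})
print(table)
print("smallest <alpha>:", best)
```

Keeping max_alpha and n_below_2 next to the average makes it easier to spot the case described above, where one model wins on <alpha> but has a few layers on the wrong side of alpha = 2.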

5.) Summary

We have seen how to use the weightwatcher tool to evaluate and compare 2 different fine-tuned models (with the same base model, falcon-7b). Notice we did not need to run inference or even need any test data.

To run these calculations quickly, I used an A100 GPU on Google Colab, and they only took a few minutes. In fact, it took longer to upload these large model files to my Google Drive than to run weightwatcher.

But these are just 7B parameter models, and since LLMs can get fairly large, in the next blog post I will show how to analyze the much smaller adapter_model.bin files (trained with or without PEFT/LoRA) directly with weightwatcher.

Stay tuned. And if you need help training or fine-tuning your own LLMs, please reach out. #talktochuck #theaiguy


details = watcher.analyze(..., fix_fingers='clip_xmax', ...)

This will take a tiny bit longer, and will yield more reliable alphas for your model layers, along with a new column, num_fingers, which reports the number of outliers found.

Note that other metrics, such as alpha_weighted (alpha-hat), will not be affected.

We recommend adding fix_fingers to all analyses moving forward; however, we have not yet completed a detailed study of its impact, so it has been included as an advanced feature.
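One way to see what fix_fingers changes is to run the analysis twice and line up the per-layer results. A sketch with pandas; it assumes the details dataframes have `layer_id` and `alpha` columns and that the fixed run adds `num_fingers` as described above. The toy dataframes stand in for real output and contain made-up numbers.

```python
import pandas as pd

# With a real model (not run here):
#   details_raw = watcher.analyze(model=model)
#   details_fix = watcher.analyze(model=model, fix_fingers='clip_xmax')

def finger_report(details_raw, details_fix):
    """Per-layer alpha before/after fix_fingers, for layers where fingers were found."""
    merged = details_raw[["layer_id", "alpha"]].merge(
        details_fix[["layer_id", "alpha", "num_fingers"]],
        on="layer_id",
        suffixes=("_raw", "_fixed"),
    )
    merged["delta_alpha"] = merged["alpha_fixed"] - merged["alpha_raw"]
    return merged[merged["num_fingers"] > 0].sort_values("delta_alpha")

details_raw = pd.DataFrame({"layer_id": [0, 1, 2], "alpha": [3.1, 9.5, 2.7]})  # hypothetical
details_fix = pd.DataFrame({"layer_id": [0, 1, 2], "alpha": [3.1, 3.8, 2.7],
                            "num_fingers": [0, 2, 0]})                          # hypothetical
report = finger_report(details_raw, details_fix)
print(report)
```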

So why do this?

What was wrong, and what’s been fixed?

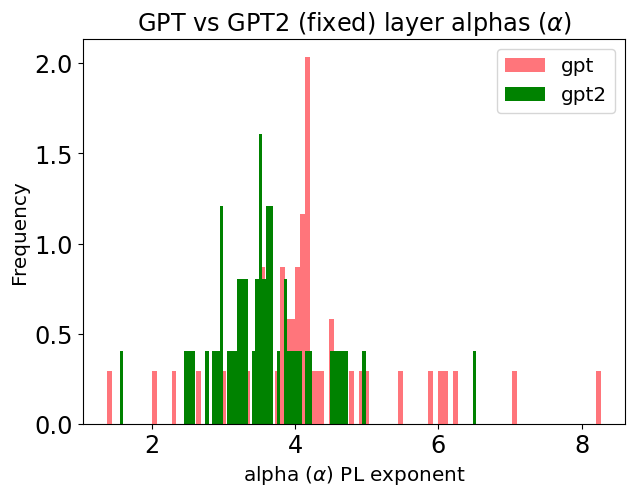

In our Nature paper (Nature Communications 2021), we looked at the layer alphas for the GPT2 models, and, among other things, noticed several large alphas, greater than 8 and even 10! These are outliers because no alpha should be greater than 8 (which is the minimum alpha for a random matrix).

Up until now, we just had to accept them as errant and/or remove them from our analysis. But now, I can explain where they come from and how to adjust the calculations when necessary.

Recall that the HTSR and SETOL theories describe the layer quality by analyzing the histogram of its eigenvalues; that is, we analyze the ESD (Empirical Spectral Density). The best-trained layers have a heavy-tailed ESD that can be well fit by a Power Law (PL).
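As a rough sketch of what a PL fit to the ESD involves: compute the eigenvalues of W^T W, keep the tail above a cutoff xmin, and estimate alpha by maximum likelihood for a continuous power law, alpha = 1 + n / sum(ln(lambda_i / xmin)). This is a simplified stand-in, not weightwatcher's fitting code. Real fits also have to choose xmin (for example by minimizing the Kolmogorov-Smirnov distance), which this sketch skips by taking xmin as given. The estimator is checked on synthetic Pareto data with a known alpha.

```python
import numpy as np

def esd(W):
    """Eigenvalues of W^T W, i.e. the squared singular values of W (unnormalized)."""
    return np.linalg.svd(W, compute_uv=False) ** 2

def fit_pl_alpha(evals, xmin):
    """Continuous power-law MLE for p(x) ~ x^(-alpha), x >= xmin (xmin taken as given)."""
    evals = np.asarray(evals, dtype=float)
    tail = evals[evals >= xmin]
    return 1.0 + tail.size / np.sum(np.log(tail / xmin))

# Sanity check on synthetic data with a known exponent:
# numpy's pareto(a) draws Lomax samples, so xmin * (1 + pareto(a)) has density ~ x^-(a + 1).
rng = np.random.default_rng(42)
xmin, true_alpha = 1.0, 3.0
samples = xmin * (1.0 + rng.pareto(true_alpha - 1.0, size=20_000))
alpha_hat = fit_pl_alpha(samples, xmin)
print(f"true alpha = {true_alpha}, estimated alpha = {alpha_hat:.3f}")

# On a real layer you would call fit_pl_alpha(esd(W), xmin) with a chosen xmin.
# A Gaussian random matrix is used here only to show the shapes; its ESD is not heavy-tailed.
evals = esd(rng.normal(size=(200, 100)))
print(f"{evals.size} eigenvalues, largest = {evals.max():.1f}")
```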

Fingers (i.e., outliers) tend to appear when the layer ESD is really a Truncated Power Law (TPL), as opposed to a simple Power Law (PL). And that’s OK; it is actually predicted by the HTSR theory for very large and/or high-quality layers. More precisely, we expect the local statistics of the far tail to be Fréchet.

So why not just fit a TPL? Weightwatcher does support this (i.e., fit='TPL'), but it is very slow, and, more importantly, we usually don’t have enough eigenvalues in the tail of the ESD to get a good TPL fit; instead, there are one or a few very large eigenvalues that degrade the PL fit.

We call these very large eigenvalues fingers because they look like fingers peeking out of the ESD tail.

How can we account for these outliers or fingers? Just remove them!
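A toy illustration of "just remove them", under stated assumptions: this is a hypothetical procedure, not weightwatcher's clip_xmax implementation. It drops the k largest tail eigenvalues for k = 0 to max_fingers, refits alpha with the continuous power-law MLE, and keeps the k whose fit has the smallest Kolmogorov-Smirnov (KS) distance to the data. The "tail" is an exact, noise-free power law with alpha = 3 plus three artificial fingers, so the effect is easy to see; xmin is taken as given.

```python
import numpy as np

def fit_alpha(tail, xmin):
    """Continuous power-law MLE for the exponent alpha, for data >= xmin."""
    return 1.0 + tail.size / np.sum(np.log(tail / xmin))

def ks_distance(tail, xmin, alpha):
    """Max gap between the empirical CDF and the fitted power-law CDF."""
    x = np.sort(tail)
    empirical = np.arange(1, x.size + 1) / x.size
    fitted = 1.0 - (x / xmin) ** (1.0 - alpha)
    return float(np.max(np.abs(empirical - fitted)))

def remove_fingers(evals, xmin, max_fingers=5):
    """Try dropping the k largest eigenvalues; keep the k with the smallest KS distance."""
    evals = np.asarray(evals, dtype=float)
    tail = np.sort(evals[evals >= xmin])[::-1]  # largest first
    best_k, best_alpha, best_d = 0, None, np.inf
    for k in range(min(max_fingers, tail.size - 2) + 1):
        kept = tail[k:]
        alpha = fit_alpha(kept, xmin)
        d = ks_distance(kept, xmin, alpha)
        if d < best_d:
            best_k, best_alpha, best_d = k, alpha, d
    return best_k, best_alpha

# Synthetic tail: exact quantiles of a power law with alpha = 3, plus 3 huge "fingers".
n, true_alpha, xmin = 200, 3.0, 1.0
u = (np.arange(1, n + 1) - 0.5) / n
body = xmin * (1.0 - u) ** (-1.0 / (true_alpha - 1.0))
evals = np.concatenate([body, np.full(3, 1e4 * xmin)])

k, alpha_clipped = remove_fingers(evals, xmin)
print(f"alpha with fingers: {fit_alpha(evals, xmin):.2f}")
print(f"removed {k} fingers, alpha: {alpha_clipped:.2f}")
```

Capping the search at max_fingers is the "careful" part: without a cap, dropping genuine tail eigenvalues would also keep changing alpha.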

If we simply remove the TPL fingers when they appear, and we are careful, we can very frequently recover a very good PL fit. (This works better than using the fit='TPL' option.) Let’s look at an example…

But before we do this, let’s discuss why they might appear.

The Critical Brain Hypothesis

The weightwatcher project is motivated by the theory of Self Organized Criticality (SOC), and the amazing fact that it has been successfully applied to understand the observed behavior of real-world (biological) spiking neurons. This is called the Critical Brain Hypothesis.

The weightwatcher theory posits that Deep Neural Networks (DNNs) exhibit the same signatures of criticality–namely power law behavior–as frequently seen in neuroscience experiments on real neurons.

Moreover, I believe that as LLMs (Large Language Models) approach true Self-Organized Criticality, we will see even more amazing properties emerge.

New and Improved Metrics for GPT

Let’s see how the new and improved fix_fingers works on GPT and GPT2

First, we need to download the models (from HuggingFace):

import transformers

from transformers import OpenAIGPTModel,GPT2Model

gpt_model = OpenAIGPTModel.from_pretrained('openai-gpt')

gpt_model.eval();

gpt2_model = GPT2Model.from_pretrained('gpt2')

gpt2_model.eval();

Now, let’s run weightwatcher

import weightwatcher as ww

watcher = ww.WeightWatcher()

gpt_details = watcher.analyze(model=gpt_model, fix_fingers='clip_xmax')

gpt2_details = watcher.analyze(model=gpt2_model, fix_fingers='clip_xmax')

Finally, we can generate a histogram of the layer alphas

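import matplotlib.pyplot as plt

# alpha=0.5 here is bar transparency (not the layer alpha), so the two histograms can overlap
gpt_details.alpha.plot.hist(bins=100, color='red', alpha=0.5, density=True, label='gpt')

gpt2_details.alpha.plot.hist(bins=100, color='green', alpha=0.5, density=True, label='gpt2')

plt.legend()

plt.xlabel(r"alpha $(\alpha)$ PL exponent")

plt.title(r"GPT vs GPT2 layer alphas $(\alpha)$ w/Fixed Fingers")

plt.show()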

Here are the results

We see that the GPT model still has several fingers (alphas >= 6) and, on average, larger alphas than GPT2. But there are fewer fingers than before.

Summary: a new and improved Power Law estimator

We have introduced the new and improved option

details = watcher.analyze(..., fix_fingers='clip_xmax', ...)

which will provide more stable and smaller alphas for layers that are well-trained.

I encourage you to try it and see if it works better for you. And please let me know.

The weightwatcher tool has been developed by Calculation Consulting. We provide consulting to companies looking to implement Data Science, Machine Learning, and/or AI solutions. Reach out today to learn how to get started with your own AI project.
