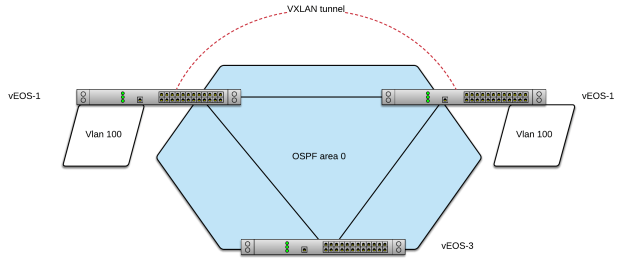

In our last post we broke VXLAN by breaking both layer 3 and multicast. We fixed layer 3 but left multicast broken. Now we’ll convert the configuration from multicast to unicast flooding and notice some very interesting behavior along the way.

Note that this configuration is often used in conjunction with multicast for Head-End Replication (HER) where needed, but you can also use it to create a completely unicast flooding topology.

First, let’s add the unicast flood statements just on vEOS-2:

    vEOS-2(config-if-Vx1)#sh ac
    interface Vxlan1
       vxlan multicast-group 227.0.0.1
       vxlan source-interface Loopback0
       vxlan udp-port 4789
       vxlan vlan 100 vni 100
    vEOS-2(config-if-Vx1)#ping 10.0.100.1
    PING 10.0.100.1 (10.0.100.1) 72(100) bytes of data.
    --- 10.0.100.1 ping statistics ---
    5 packets transmitted, 0 received, 100% packet loss, time 47ms
    vEOS-2(config-if-Vx1)#vxlan flood vtep 1.1.1.1
    vEOS-2(config-if-Vx1)#ping 10.0.100.1
    PING 10.0.100.1 (10.0.100.1) 72(100) bytes of data.
    80 bytes from 10.0.100.1: icmp_seq=1 ttl=64 time=12.6 ms
    80 bytes from 10.0.100.1: icmp_seq=2 ttl=64 time=16.1 ms
    80 bytes from 10.0.100.1: icmp_seq=3 ttl=64 time=13.6 ms
    80 bytes from 10.0.100.1: icmp_seq=4 ttl=64 time=19.3 ms
    80 bytes from 10.0.100.1: icmp_seq=5 ttl=64 time=11.8 ms
    --- 10.0.100.1 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 59ms
    rtt min/avg/max/mdev = 11.886/14.748/19.306/2.701 ms, pipe 2, ipg/ewma 14.940/13.698 ms
    vEOS-2(config-if-Vx1)#

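Wait, what?! The ping worked without adding a flood statement to vEOS-1. Yes: because of flood-and-learn behavior, vEOS-1 learned the source MAC for 10.0.100.2 (and the VTEP behind it) from the frame vEOS-2 flooded to it, so it can reply with plain unicast: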

    vEOS-1(config-if-Vx1)#sh ac
    interface Vxlan1
       vxlan multicast-group 227.0.0.1
       vxlan source-interface Loopback0
       vxlan udp-port 4789
       vxlan vlan 100 vni 100
    vEOS-1(config-if-Vx1)#sh vxlan vni
    VNI to VLAN Mapping for Vxlan1
    VNI       VLAN       Source       Interface       802.1Q Tag
    --------- ---------- ------------ --------------- ----------
    100       100        static       Vxlan1          100
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2732.55e8  DYNAMIC  Vx1  2.2.2.2  1      0:07:37 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-2(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2734.9233  DYNAMIC  Vx1  1.1.1.1  1      0:02:19 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-1(config-if-Vx1)#sh ip arp
    Address          Age (sec)  Hardware Addr   Interface
    10.0.0.2         0:10:22    0800.2732.55e8  Vlan1, Ethernet1
    10.0.0.3         0:10:22    0800.2742.ed7e  Vlan1, Ethernet1
    10.0.100.2       0:10:10    0800.2732.55e8  Vlan100, Vxlan1
    192.168.100.100  0:00:00    0a00.2700.0014  Management1
    vEOS-1(config-if-Vx1)#ping 10.0.100.2
    PING 10.0.100.2 (10.0.100.2) 72(100) bytes of data.
    80 bytes from 10.0.100.2: icmp_seq=1 ttl=64 time=10.5 ms
    80 bytes from 10.0.100.2: icmp_seq=2 ttl=64 time=10.7 ms
    80 bytes from 10.0.100.2: icmp_seq=3 ttl=64 time=10.5 ms
    80 bytes from 10.0.100.2: icmp_seq=4 ttl=64 time=10.5 ms
    80 bytes from 10.0.100.2: icmp_seq=5 ttl=64 time=11.0 ms
    --- 10.0.100.2 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 44ms
    rtt min/avg/max/mdev = 10.520/10.683/11.024/0.216 ms, ipg/ewma 11.097/10.624 ms

Now let’s clear the MAC address tables and see whether vEOS-1 can still reach the VLAN 100 SVI on vEOS-2:

    vEOS-1(config-if-Vx1)#clear mac address-table dynamic
    vEOS-2(config-if-Vx1)#clear mac address-table dynamic
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0
    vEOS-1(config-if-Vx1)#sh mac address-table
              Mac Address Table
    ------------------------------------------------------------------
    Vlan  Mac Address     Type     Ports  Moves  Last Move
    ----  -----------     ----     -----  -----  ---------
       1  0800.2732.55e8  DYNAMIC  Et1    1      0:00:46 ago
       1  0800.2742.ed7e  DYNAMIC  Et1    1      0:00:50 ago
    Total Mac Addresses for this criterion: 2
    vEOS-2(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0
    vEOS-2(config-if-Vx1)#sh mac address-table
              Mac Address Table
    ------------------------------------------------------------------
    Vlan  Mac Address     Type     Ports  Moves  Last Move
    ----  -----------     ----     -----  -----  ---------
       1  0800.2734.9233  DYNAMIC  Et1    1      0:00:50 ago
       1  0800.2742.ed7e  DYNAMIC  Et3    1      0:00:50 ago
    Total Mac Addresses for this criterion: 2
    vEOS-1(config-if-Vx1)#ping 10.0.100.2
    PING 10.0.100.2 (10.0.100.2) 72(100) bytes of data.
    --- 10.0.100.2 ping statistics ---
    5 packets transmitted, 0 received, 100% packet loss, time 47ms
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0

OK, so vEOS-1 still cannot source traffic: it has no unicast flood statement, so it is still sending flooded frames only via the (broken) multicast group. If we source traffic from vEOS-2, we recover two-way communication:

    vEOS-2(config-if-Vx1)#ping 10.0.100.1
    PING 10.0.100.1 (10.0.100.1) 72(100) bytes of data.
    80 bytes from 10.0.100.1: icmp_seq=1 ttl=64 time=13.4 ms
    ...
    --- 10.0.100.1 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 52ms
    rtt min/avg/max/mdev = 10.557/11.839/13.480/1.048 ms, pipe 2, ipg/ewma 13.015/12.588 ms
    vEOS-2(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2734.9233  DYNAMIC  Vx1  1.1.1.1  1      0:00:02 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-2(config-if-Vx1)#
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2732.55e8  DYNAMIC  Vx1  2.2.2.2  1      0:00:14 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-1(config-if-Vx1)#ping 10.0.100.2
    PING 10.0.100.2 (10.0.100.2) 72(100) bytes of data.
    80 bytes from 10.0.100.2: icmp_seq=1 ttl=64 time=11.4 ms
    ...
    --- 10.0.100.2 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 53ms
    rtt min/avg/max/mdev = 10.673/14.111/20.058/3.755 ms, pipe 2, ipg/ewma 13.342/12.786 ms
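The asymmetry seen so far can be reproduced with a toy model. The sketch below is only an illustration, not how EOS is implemented: the `Vtep` class, its fields, and the `ping` helper are hypothetical names, ARP is folded into a single "unknown destination" flood, and the broken multicast group is modeled simply as not existing. The VTEP addresses and SVI MACs are the ones from the captures above.

```python
from dataclasses import dataclass, field


@dataclass
class Vtep:
    """Toy flood-and-learn VTEP with one VNI and one local MAC (the SVI)."""
    name: str
    ip: str                                           # VTEP source address (Loopback0)
    local_mac: str                                    # MAC of the local VLAN 100 SVI
    flood_list: list = field(default_factory=list)    # "vxlan flood vtep" targets
    remote_macs: dict = field(default_factory=dict)   # learned MAC -> remote VTEP IP

    def send(self, dst_mac, fabric):
        """Return True if the frame reaches the VTEP that owns dst_mac."""
        if dst_mac in self.remote_macs:
            targets = [self.remote_macs[dst_mac]]     # known MAC: plain unicast
        else:
            targets = self.flood_list                 # unknown MAC: replicate to flood list
        delivered = False
        for ip in targets:
            peer = fabric[ip]
            peer.remote_macs[self.local_mac] = self.ip  # receiver learns sender's MAC
            delivered = delivered or peer.local_mac == dst_mac
        return delivered


def ping(src, dst, fabric):
    """The request must arrive and the reply must find its way back."""
    return src.send(dst.local_mac, fabric) and dst.send(src.local_mac, fabric)


# Only vEOS-2 has a flood statement, as in the lab at this point.
veos1 = Vtep("vEOS-1", "1.1.1.1", "0800.2734.9233")
veos2 = Vtep("vEOS-2", "2.2.2.2", "0800.2732.55e8", flood_list=["1.1.1.1"])
fabric = {v.ip: v for v in (veos1, veos2)}

first = ping(veos1, veos2, fabric)    # empty tables, no flood list on vEOS-1
second = ping(veos2, veos1, fabric)   # vEOS-2 floods to 1.1.1.1, vEOS-1 learns and replies
third = ping(veos1, veos2, fabric)    # vEOS-1 now has a learned entry to use
print(first, second, third)           # False True True
```

In this model, as in the lab, vEOS-1 can only talk after vEOS-2 has spoken first, which is why a one-sided flood list is fragile: any table clear or MAC age-out puts vEOS-1 back in the failing state.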

Let’s clear the MAC address tables again, add the flood command to vEOS-1, and test once more:

    vEOS-1(config-if-Vx1)#clear mac address-table dynamic
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0
    vEOS-2(config-if-Vx1)#clear mac address-table dynamic
    vEOS-2(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
    Total Remote Mac Addresses for this criterion: 0
    vEOS-1(config-if-Vx1)#vxlan flood vtep 2.2.2.2
    vEOS-1(config-if-Vx1)#sh ac
    interface Vxlan1
       vxlan multicast-group 227.0.0.1
       vxlan source-interface Loopback0
       vxlan udp-port 4789
       vxlan vlan 100 vni 100
       vxlan flood vtep 2.2.2.2
    vEOS-1(config-if-Vx1)#vxlan flood vtep 2.2.2.2
    vEOS-1(config-if-Vx1)#sh ac
    interface Vxlan1
       vxlan multicast-group 227.0.0.1
       vxlan source-interface Loopback0
       vxlan udp-port 4789
       vxlan vlan 100 vni 100
       vxlan flood vtep 2.2.2.2
    vEOS-1(config-if-Vx1)#ping 10.0.100.2
    PING 10.0.100.2 (10.0.100.2) 72(100) bytes of data.
    80 bytes from 10.0.100.2: icmp_seq=1 ttl=64 time=13.3 ms
    ...
    --- 10.0.100.2 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 52ms
    rtt min/avg/max/mdev = 10.522/11.491/13.303/1.028 ms, ipg/ewma 13.107/12.336 ms
    vEOS-1(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2732.55e8  DYNAMIC  Vx1  2.2.2.2  1      0:00:07 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-2(config-if-Vx1)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2734.9233  DYNAMIC  Vx1  1.1.1.1  1      0:00:55 ago
    Total Remote Mac Addresses for this criterion: 1

OK, all is good in the world. Now let’s completely remove all multicast configuration, clear the MAC address tables one more time, and re-validate:

    vEOS-1(config-if-Vx1)#no vxlan multicast-group 227.0.0.1
    vEOS-1(config-if-Vx1)#no router pim sparse-mode
    vEOS-1(config)#no ip multicast-routing
    vEOS-2(config)#no ip multicast-routing
    vEOS-1(config)#sh ip mroute
    % ipv4 multicast routing is not configured on VRF default.
    vEOS-2(config)#sh ip mroute
    % ipv4 multicast routing is not configured on VRF default.
    vEOS-2(config)#clear mac address-table dynamic
    vEOS-1(config)#clear mac address-table dynamic
    vEOS-1(config)#ping 10.0.100.2
    PING 10.0.100.2 (10.0.100.2) 72(100) bytes of data.
    80 bytes from 10.0.100.2: icmp_seq=1 ttl=64 time=15.8 ms
    ...
    --- 10.0.100.2 ping statistics ---
    5 packets transmitted, 5 received, 0% packet loss, time 59ms
    rtt min/avg/max/mdev = 10.997/12.411/15.886/1.861 ms, pipe 2, ipg/ewma 14.958/14.050 ms
    vEOS-1(config)#sh vxlan address-table
              Vxlan Mac Address Table
    ----------------------------------------------------------------------
    VLAN  Mac Address     Type     Prt  VTEP     Moves  Last Move
    ----  -----------     ----     ---  ----     -----  ---------
     100  0800.2732.55e8  DYNAMIC  Vx1  2.2.2.2  1      0:00:12 ago
    Total Remote Mac Addresses for this criterion: 1
    vEOS-1(config)#sh ip arp
    Address          Age (sec)  Hardware Addr   Interface
    10.0.0.2         0:25:53    0800.2732.55e8  Vlan1, Ethernet1
    10.0.0.3         0:25:53    0800.2742.ed7e  Vlan1, Ethernet1
    10.0.100.2       0:25:40    0800.2732.55e8  Vlan100, Vxlan1
    192.168.100.100  0:00:00    0a00.2700.0014  Management1

OK, all is good: we are running VXLAN with a completely unicast flooding configuration, no multicast needed.
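With multicast gone, every VTEP has to list its peers explicitly, which gets tedious beyond two switches. As a rough sketch, a small helper could generate the flood statement for each VTEP from an inventory. The `flood_statements` function and the inventory dictionary are hypothetical, and putting several addresses on one `vxlan flood vtep` line is an assumption to verify against your EOS release; with the two VTEPs from this lab it produces exactly the statements entered above.

```python
def flood_statements(vteps):
    """Map each VTEP name to a 'vxlan flood vtep' line listing every other VTEP.

    vteps: dict of switch name -> VTEP source IP (hypothetical inventory format).
    """
    lines = {}
    for name in vteps:
        peers = [ip for peer, ip in vteps.items() if peer != name]
        lines[name] = "vxlan flood vtep " + " ".join(peers)
    return lines


# The two VTEPs used throughout this post.
lab = {"vEOS-1": "1.1.1.1", "vEOS-2": "2.2.2.2"}
for name, line in flood_statements(lab).items():
    print(f"{name}: {line}")
# vEOS-1: vxlan flood vtep 2.2.2.2
# vEOS-2: vxlan flood vtep 1.1.1.1
```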

Next post we will begin layering in EVPN!