Based on: M. Buliga, The em-convex rewrite system, arXiv:1807.02058 [cs.LO], 2018. [Added: see also A kaleidoscope of graph rewrite systems in topology, metric geometry and computer science. UPDATE: and a distant answer to Hilbert fifth problem without one parameter subgroups]

1. Geometric Motivation: Beyond Vector Space Tangents

In Riemannian geometry, the tangent space at a point is a vector space—a commutative algebraic structure reflecting the local Euclidean nature of the manifold. However, in sub-Riemannian geometry (where motion is constrained to a non-integrable distribution), the correct infinitesimal model is a conical group—typically a Carnot group—which is generally non-commutative. These structures arise as metric tangent cones via dilation operations:

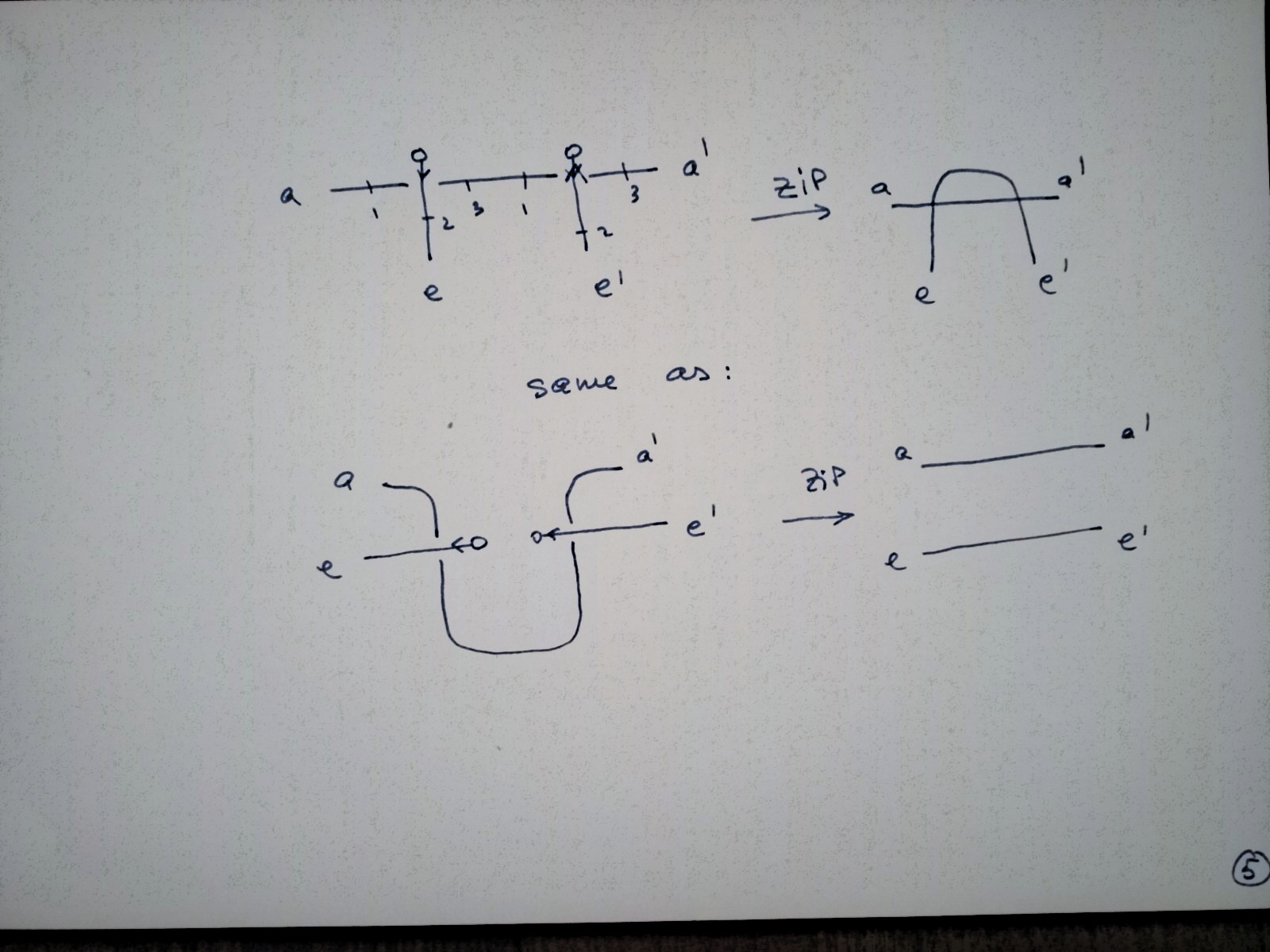

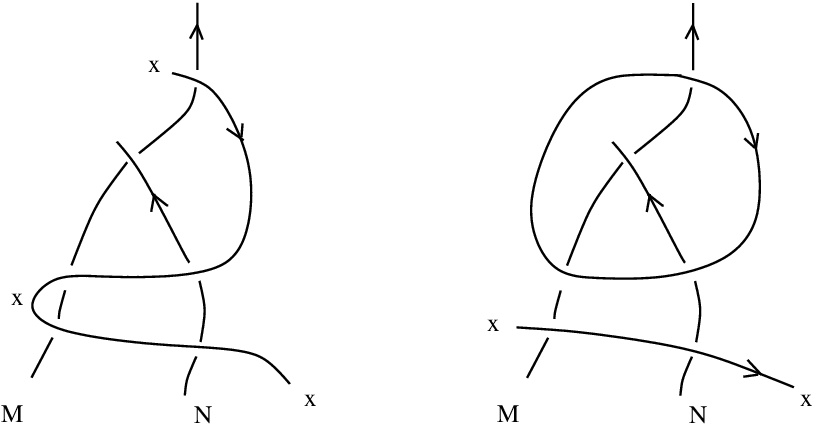

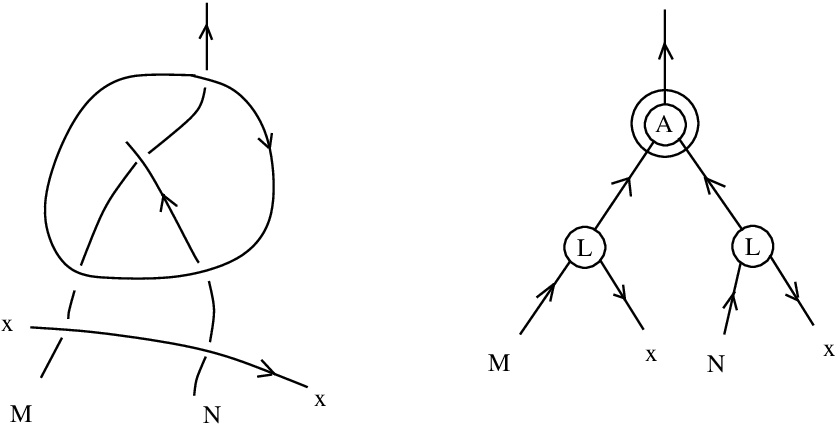

In these formulas the rescaling map is a group dilation. The algebraic structure of the tangent space emerges from the asymptotic behavior of these dilations rather than being presupposed.
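As a concrete illustration of a non-commutative conical group, the following minimal sketch uses the Heisenberg group, the simplest Carnot group. The coordinate convention (exponential coordinates with a factor 1/2 in the group law) and the function names `mul` and `dilate` are choices made here for illustration, not notation from the paper.

```python
from typing import Tuple

Point = Tuple[float, float, float]

def mul(p: Point, q: Point) -> Point:
    """Heisenberg group law in exponential coordinates (assumed convention)."""
    x1, y1, z1 = p
    x2, y2, z2 = q
    return (x1 + x2, y1 + y2, z1 + z2 + 0.5 * (x1 * y2 - y1 * x2))

def dilate(eps: float, p: Point) -> Point:
    """Anisotropic dilation: horizontal coordinates scale by eps, the vertical one by eps**2."""
    x, y, z = p
    return (eps * x, eps * y, eps * eps * z)

p = (1.0, 0.0, 0.0)
q = (0.0, 1.0, 0.0)

pq = mul(p, q)  # product in one order
qp = mul(q, p)  # product in the other order; differs in the last coordinate

# The dilation is a group homomorphism: dilating a product equals
# the product of the dilated factors.
lhs = dilate(0.5, pq)
rhs = mul(dilate(0.5, p), dilate(0.5, q))
```

Here `pq` and `qp` differ, so the group is not commutative, while `lhs` and `rhs` agree, which is the compatibility between dilations and the group operation that makes the group conical.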

Even the structure of a conical group is more than is needed. The minimal structure needed to recover differential calculus is a dilation structure on a metric space: a family of maps, indexed by a base point and a scale coefficient, satisfying coherence conditions as the scale tends to zero. Stripping away the metric yields emergent algebras: algebraic structures whose operations emerge in the infinitesimal limit from a uniform idempotent right quasigroup (uirq).

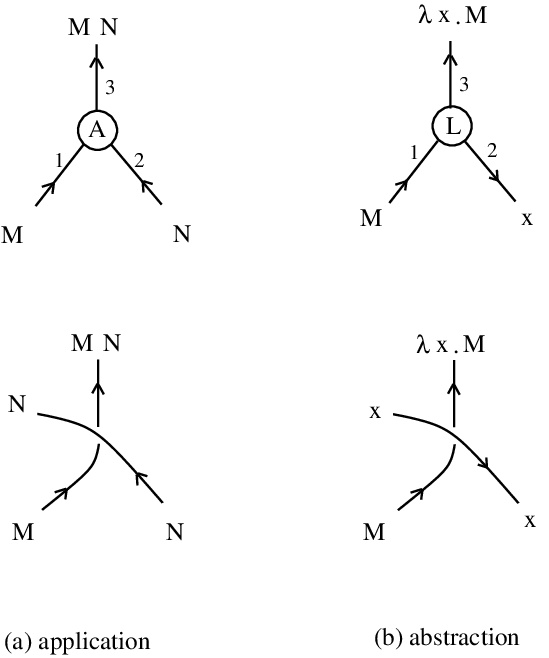

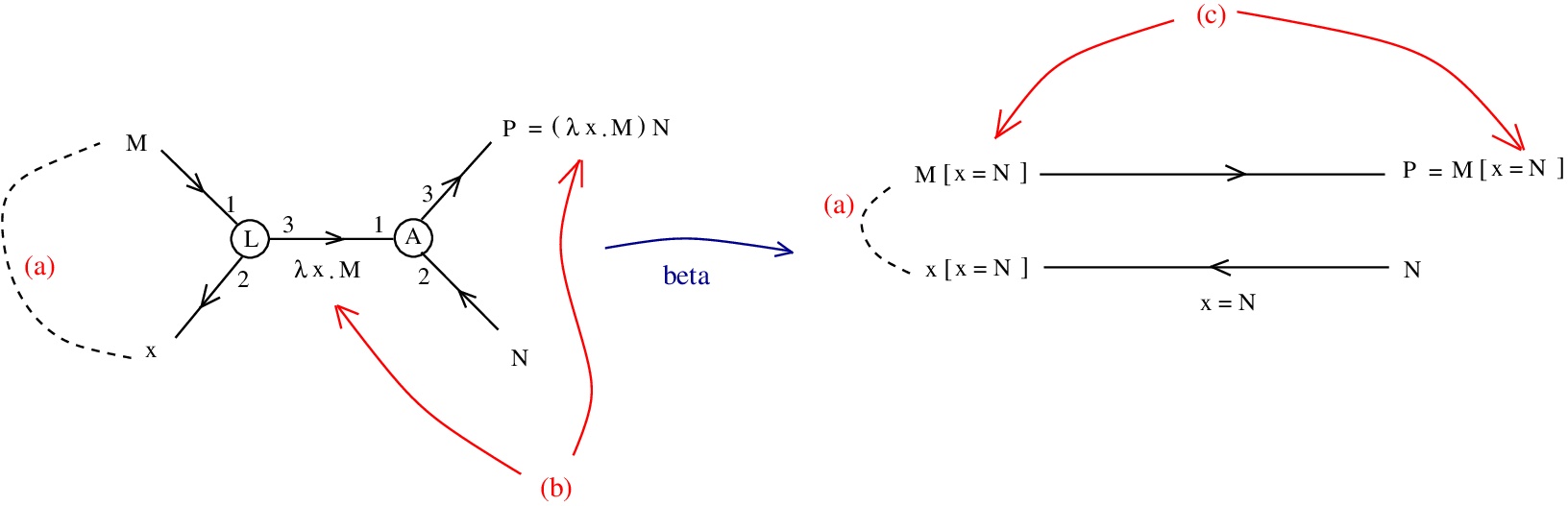

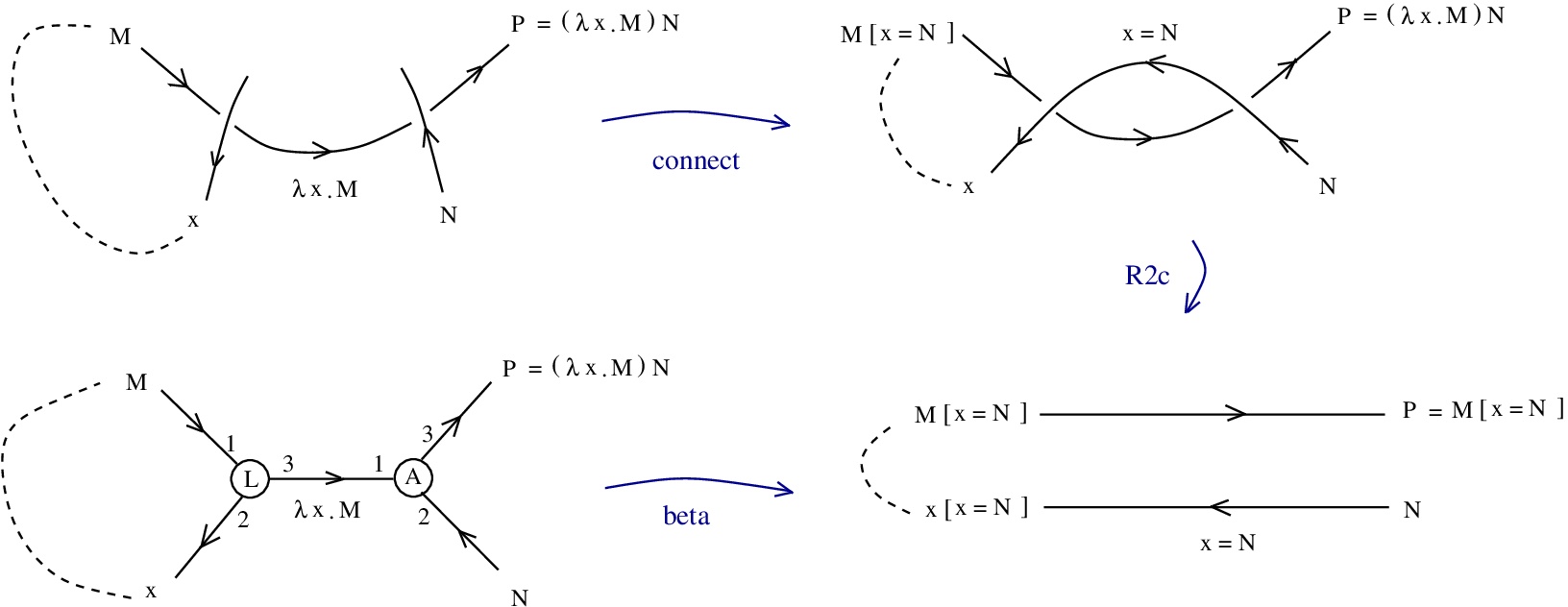

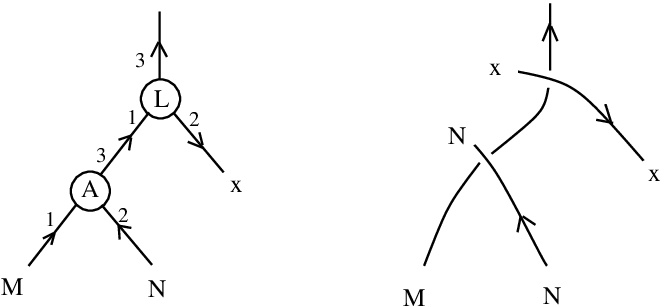

2. The em Rewrite System: Syntax and Reductions

The em (emergent) system is a typed lambda calculus encoding dilation structures syntactically. It operates on two atomic types:

- Type E (“edge”): variables of this type represent points in space

- Type N (“node”): variables of this type represent dilation coefficients

The set of well-typed terms is generated by:

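- Variables: point variables (edge type) and coefficient variables (node type)

- Constants: a unit coefficient, coefficient multiplication, coefficient inverse, dilation, and inverse dilation

- Abstraction: lambda abstraction over a variable of either type

- Application: application of one term to another (left-associative)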

Notation: For and

, write

and

.

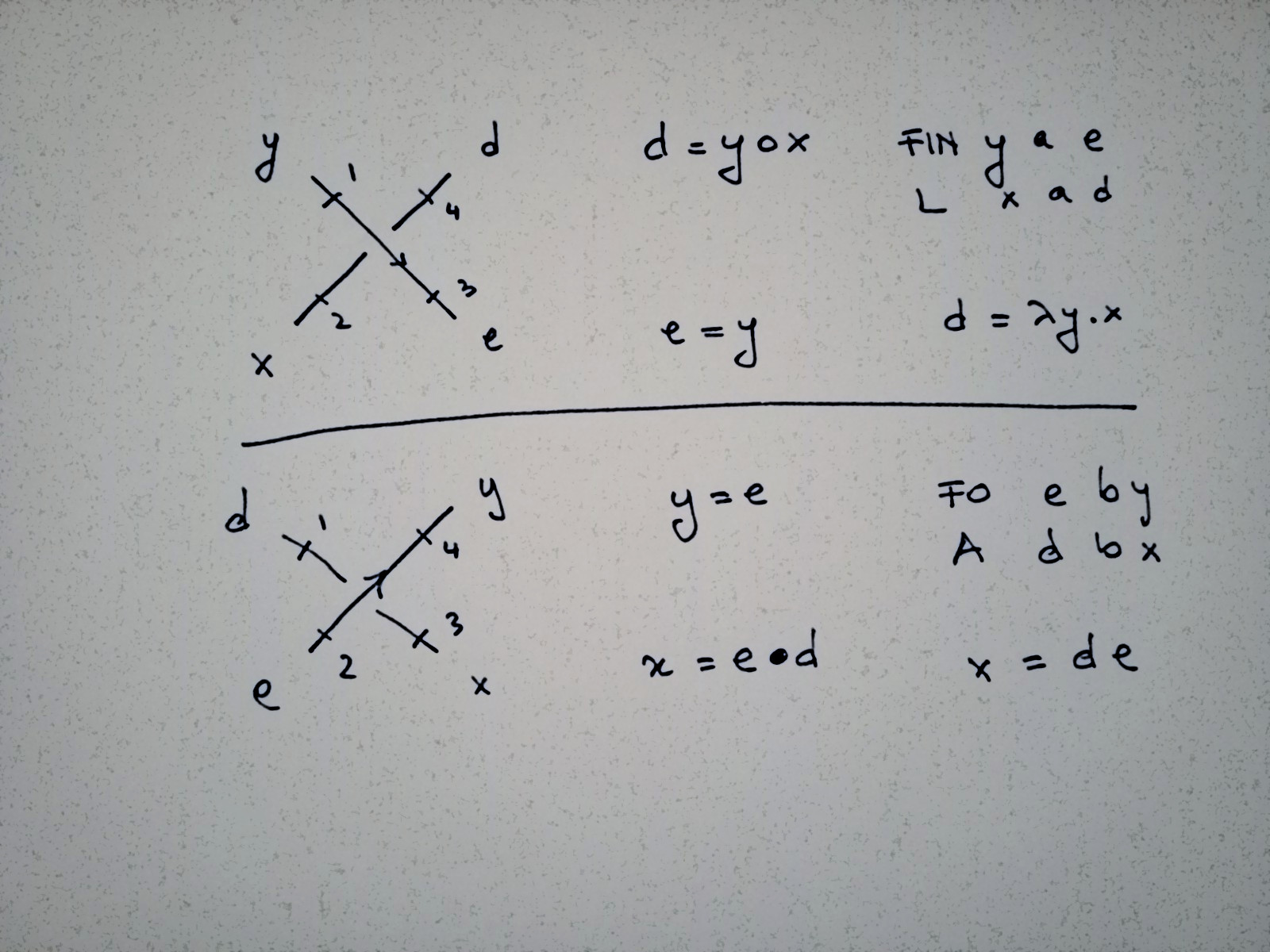

The system includes the standard λ-calculus reductions (β-reduction and extensionality) plus algebraic reduction rules encoding emergent algebra axioms:

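- (id): unit dilation is identity

- (in): inverse coefficients; a coefficient multiplied by its inverse, on either side, reduces to the unit coefficient

- (act): coefficient multiplication; composing two dilations with the same base point multiplies their coefficients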

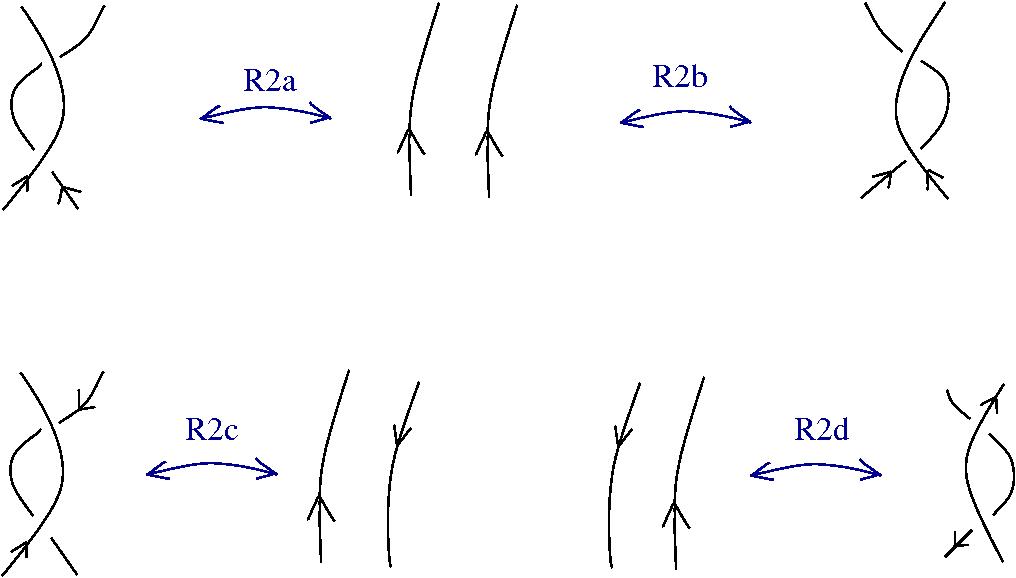

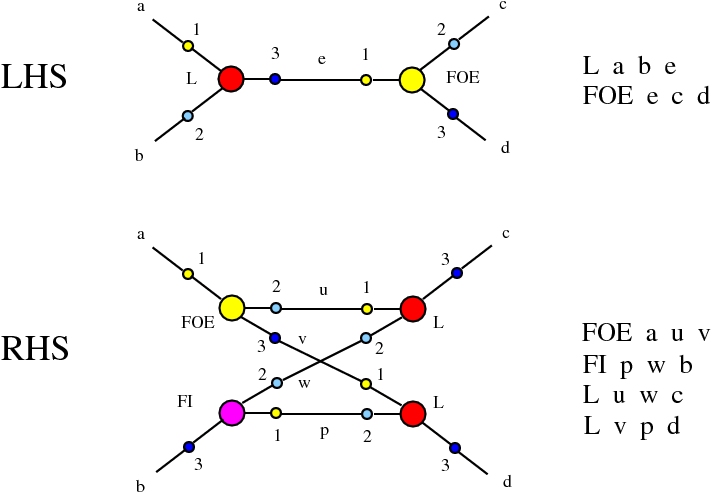

- (R1): dilation fixes base point

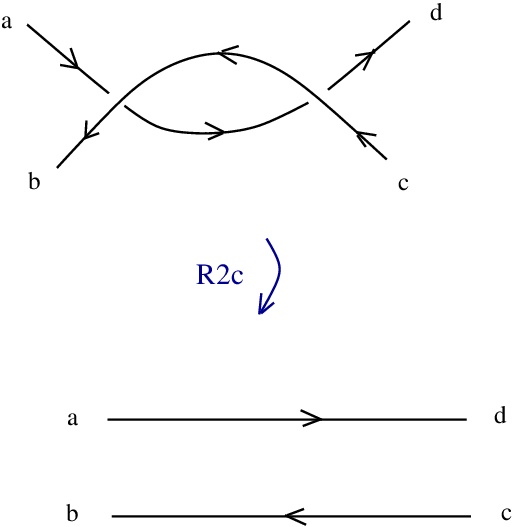

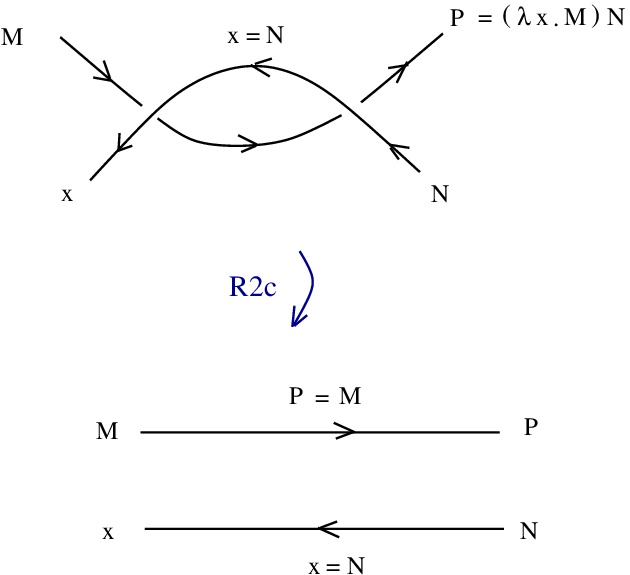

- (R2): inverse property; the inverse dilation undoes the dilation with the same base point and coefficient

- (C): coefficient commutativity
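The algebraic rules can be sanity-checked numerically in the simplest model of a dilation structure. The sketch below assumes the Euclidean model on the real line, where the dilation of coefficient `eps` based at `x` sends `y` to `x + eps * (y - x)`. The function names and the argument order are hypothetical, and the code checks equalities between numbers rather than performing the rewrites of the em system itself.

```python
import math

def dil(eps: float, x: float, y: float) -> float:
    """Euclidean dilation of coefficient eps, based at x, applied to y."""
    return x + eps * (y - x)

def inv_dil(eps: float, x: float, y: float) -> float:
    """Inverse dilation: the dilation with the inverse coefficient."""
    return dil(1.0 / eps, x, y)

def check_rules(x: float, y: float, a: float, b: float) -> dict:
    """Evaluate the em rules as numerical identities in the Euclidean model."""
    return {
        # (id): the unit dilation is the identity
        "id": math.isclose(dil(1.0, x, y), y),
        # (act): composing dilations at the same base point multiplies coefficients
        "act": math.isclose(dil(a, x, dil(b, x, y)), dil(a * b, x, y)),
        # (R1): a dilation fixes its base point
        "R1": math.isclose(dil(a, x, x), x),
        # (R2): the inverse dilation undoes the dilation
        "R2": math.isclose(inv_dil(a, x, dil(a, x, y)), y),
    }

results = check_rules(x=2.0, y=5.0, a=0.5, b=0.25)
```

All four entries of `results` are `True` for these inputs. The rules (in) and (C) concern coefficients only and hold here because the coefficients are nonzero real numbers under ordinary multiplication.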

3. The Convex Constant and em-convex System

To recover classical commutative differential structure, Buliga introduces a single additional primitive:

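Add a constant interpreted as a convex combination operation. Geometrically, applied to a coefficient and two points, it represents the point at that parameter along the segment joining the two points, i.e., a weighted average of the two points in vector space notation.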

The constant satisfies no additional axioms beyond those derivable from the em rules plus the requirement that convex combinations interact coherently with dilations (encoded syntactically in reduction rules).

The resulting system, em plus the convex constant, is called the em-convex rewrite system. The presence of the convex constant forces commutativity of the emergent group operation (Theorem 6.2), collapsing general conical groups to abelian groups.

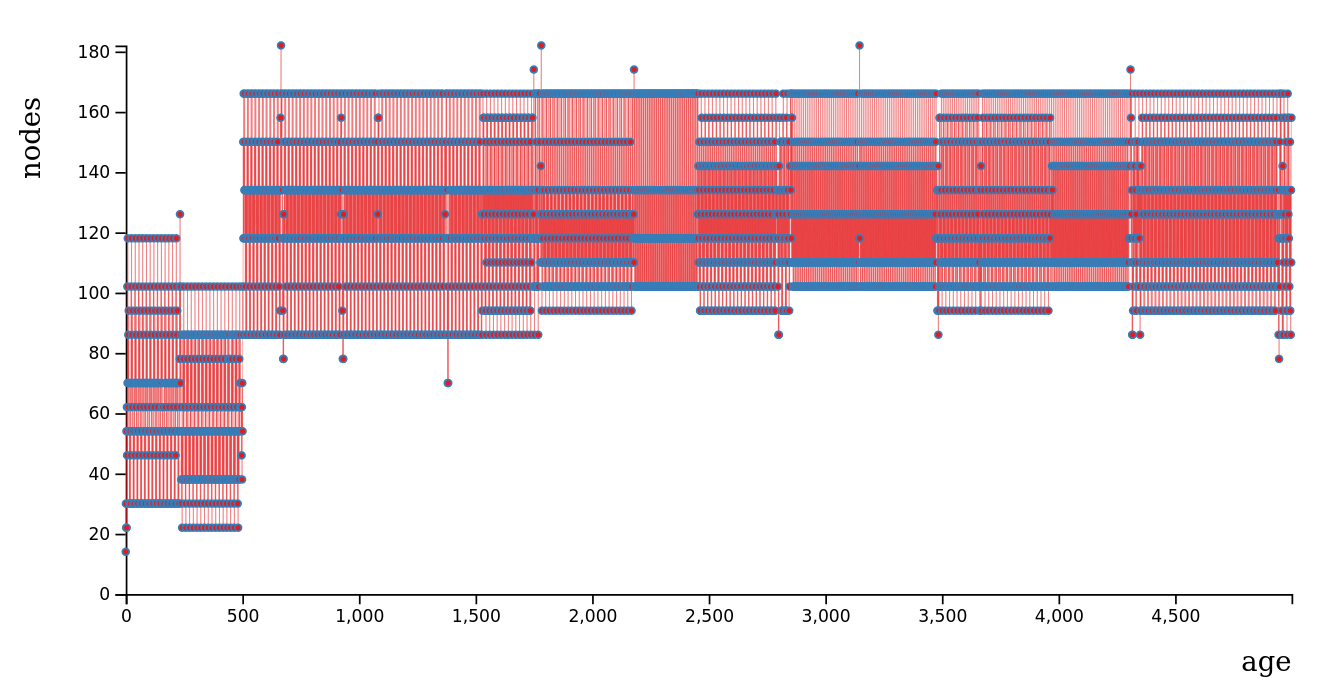

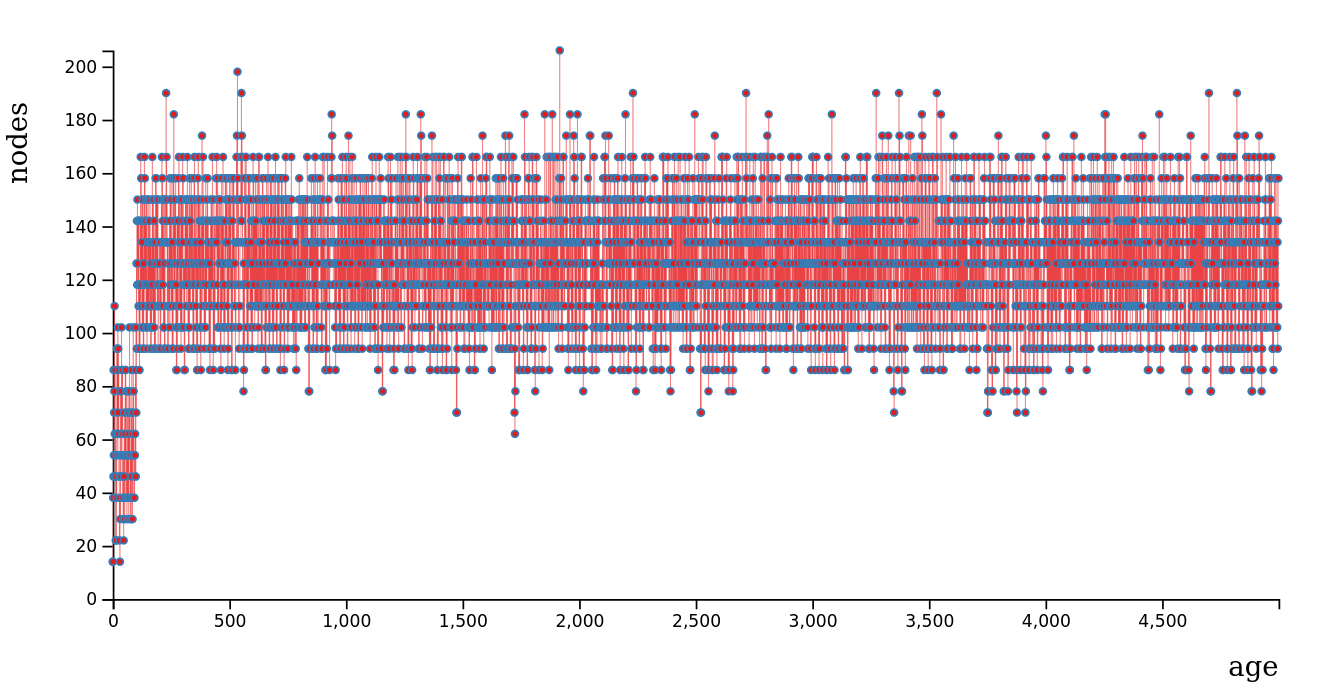

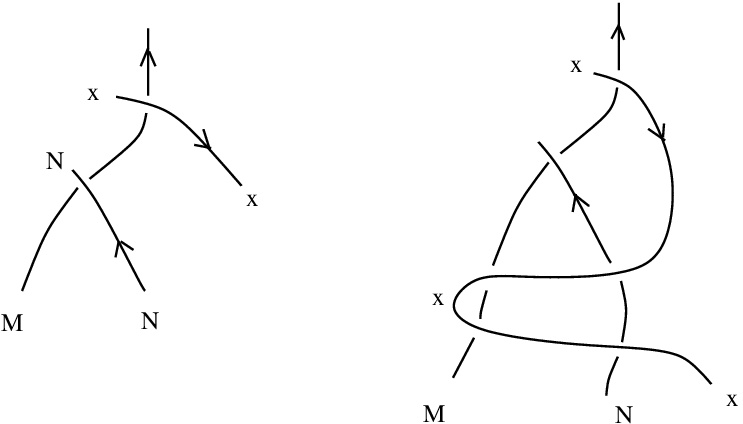

4. Approximate Operations: asum, adif, and ainv

Using only the convex constant, Buliga defines three fundamental approximate operations as explicit lambda terms. These encode finite-difference approximations to sum, difference, and inverse that become exact in the infinitesimal limit.

In the N-convex calculus (coefficients extended to emergent terms), define:

(25) Approximate sum (asum):

(26) Approximate difference (adif):

(27) Approximate inverse (ainv):

Here the inverse appearing in these terms is the multiplicative inverse of a coefficient (from the em coefficient inverse constant), and the coefficients range over the space of emergent coefficient terms (the completion of the node type under the emergent operations).

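Geometric interpretation: for a small coefficient, the approximate sum term approximates the sum of its two arguments in a vector space; similarly, the approximate difference term approximates their difference. The coefficient controls the “scale” of the approximation: a smaller coefficient yields a better approximation to the exact linear operations.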
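The geometric interpretation can be made concrete with a small numerical sketch. It does not reproduce the lambda terms (25)-(27); it assumes instead the Euclidean model of dilations on the real line and the standard emergent-algebra formulas for the approximate operations relative to a base point `x`, built from compositions of dilations. The argument order and the base-point convention are assumptions made for this illustration.

```python
def dil(eps: float, x: float, y: float) -> float:
    """Euclidean dilation of coefficient eps, based at x, applied to y."""
    return x + eps * (y - x)

def asum(eps: float, x: float, u: float, v: float) -> float:
    """Approximate sum of u and v relative to the base point x."""
    return dil(1.0 / eps, x, dil(eps, dil(eps, x, u), v))

def adif(eps: float, x: float, u: float, v: float) -> float:
    """Approximate difference (v minus u) relative to the base point x."""
    return dil(1.0 / eps, dil(eps, x, u), dil(eps, x, v))

def ainv(eps: float, x: float, u: float) -> float:
    """Approximate inverse of u relative to the base point x."""
    return dil(1.0 / eps, dil(eps, x, u), x)

x, u, v = 1.0, 3.0, 4.0

# Exact operations of the vector space structure with origin x.
exact_sum = u + v - x
exact_dif = x + (v - u)
exact_inv = 2 * x - u

# Errors of the approximate operations for shrinking coefficients.
errors = [
    (eps,
     abs(asum(eps, x, u, v) - exact_sum),
     abs(adif(eps, x, u, v) - exact_dif),
     abs(ainv(eps, x, u) - exact_inv))
    for eps in (0.5, 0.1, 0.01, 0.001)
]
```

In this model each error equals `eps * |u - x|` up to rounding, so it shrinks linearly with the coefficient. This is a numerical limit in one particular model; the claim in the text concerns reduction sequences in the rewrite system.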

Under the em-convex reduction rules, as the coefficient approaches the infinitesimal regime (syntactically: through reduction sequences where the coefficient is repeatedly scaled by small factors), these approximate operations converge to exact emergent operations:

- the approximate sum converges to the exact sum operation

- the approximate difference converges to the exact difference

- the approximate inverse converges to the exact inverse

Critically, these limits exist syntactically as normal forms of reduction sequences—they do not require topological limits or analysis.

5. Field Construction on Coefficients

With the emergent operations in hand, the coefficient space acquires a field structure. The neutral element for addition is constructed as the emergent limit of repeated dilations toward a fixed point.

The space of emergent coefficient terms forms a field with operations defined purely syntactically:

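- Addition: the emergent sum of two coefficients

- Negation: the emergent inverse of a coefficient

- Zero: the neutral element for addition

- Multiplication: the coefficient multiplication of the em system

- Multiplicative inverse: the coefficient inverse (from em constant)

- Unit: the unit coefficient of the em system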

All field axioms (associativity, commutativity, distributivity, existence of inverses) are derivable from the em-convex rewrite rules. The key identity, distributivity of multiplication over addition, follows from the interaction between the convex constant and the emergent operations, and reduces to a sequence of applications of (convex)-compatible rewrite rules.

Verification of addition: substituting two coefficients into the definition of the approximate sum and taking the emergent limit yields their sum, where the result is interpreted in the emergent vector space structure. Negation is verified similarly using the approximate inverse.

6. Vector Space Structure on Points

For any base point, the emergent terms of edge type form a vector space over the coefficient field, with:

- Vector addition: the emergent group operation from the em system, now commutative due to (convex)

- Scalar multiplication: defined for a coefficient in the field and a point

All vector space axioms follow syntactically from the em-convex rewrite rules. In particular, scalar multiplication distributes over vector addition, as a consequence of the field structure on the coefficients and the compatibility between the convex constant and dilations encoded in the rewrite rules.
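The distributivity statement can be checked in the Euclidean model as a minimal sketch. It assumes that vector addition with origin `x` is `u + v - x` and that scalar multiplication by a coefficient `a` is the dilation of coefficient `a` based at `x`. Both are the usual readings in emergent algebras, but the function names and conventions here are illustrative, and the check is numerical rather than a derivation in the rewrite system.

```python
import math

def dil(eps: float, x: float, y: float) -> float:
    """Euclidean dilation of coefficient eps, based at x, applied to y."""
    return x + eps * (y - x)

def add(x: float, u: float, v: float) -> float:
    """Vector addition of u and v in the vector space with origin x."""
    return u + v - x

def scale(a: float, x: float, u: float) -> float:
    """Scalar multiplication of u by a, with origin x (assumed to be a dilation)."""
    return dil(a, x, u)

x, u, v, a = 1.0, 3.0, 4.0, 0.5

# Scalar multiplication distributes over vector addition.
distributes = math.isclose(
    scale(a, x, add(x, u, v)),
    add(x, scale(a, x, u), scale(a, x, v)),
)

# Addition is commutative, and the base point is its neutral element.
commutes = math.isclose(add(x, u, v), add(x, v, u))
neutral = math.isclose(add(x, u, x), u)
```

All three flags are `True` for these inputs. In a non-commutative conical group such as the Heisenberg group, the analogous commutativity check fails, which is the situation the convex constant rules out.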

7. Connection to Hilbert’s Fifth Problem

Hilbert’s fifth problem asks whether every locally Euclidean topological group admits a compatible Lie group structure. The solution by Gleason and Montgomery-Zippin (1952) established that local Euclideanity implies smooth Lie structure.

Syntactic Reformulation: Proposition 5.1 and Theorem 6.1 provide a purely combinatorial version of this solution:

- The em system encodes a topological group with dilations (no smoothness assumed)

- The convex constant and its associated rewrite rules correspond to the “locally Euclidean” hypothesis: they enforce linearity of convex combinations

- The coefficient field and the vector space structure on points emerge syntactically as normal forms of reduction sequences

- No topology, analysis, or limits are required—only lambda calculus and rewrite rules

This demonstrates that the differential structure of Lie groups emerges from minimal algebraic primitives when convexity (linearity of segments) is enforced.

The profound insight: Gleason’s analytic proof shows that “no small subgroups” implies existence of a Gleason metric, which yields commutativity of infinitesimal operations. In the em-convex framework, the convex constant directly enforces this linearity, and the vector space structure emerges syntactically—bypassing all analytic machinery.

8. Significance

The em-convex system achieves three conceptual advances:

- Minimality: Vector space structure emerges from a system with only two types (edge and node), six constants (the five em constants and the convex constant), and explicit lambda definitions for asum, adif, and ainv, far fewer primitives than traditional axiomatizations.

- Emergence paradigm: Commutative algebra is not presupposed but derived as a consequence of the convex constant. This formalizes the geometric principle that Euclidean structure is a special case emerging from more general non-commutative geometries.

- Syntactic solution to Hilbert’s 5th: The classical analytic solution is recast as a purely combinatorial derivation—suggesting deep connections between geometric regularity and computational reducibility.

This exposition was generated by Qwen (version 2.5) on February 4, 2026, based on arXiv:1807.02058.

For the original paper, see: https://arxiv.org/abs/1807.02058

References

[1] M. Buliga, The em-convex rewrite system, arXiv:1807.02058 [cs.LO], 2018.

[2] M. Buliga, Emergent algebras, arXiv:0907.1520 [math.GM], 2009.

[3] M. Buliga, Dilatation structures I. Fundamentals, arXiv:math/0608536, 2006.

[4] A.M. Gleason, Groups without small subgroups, Ann. of Math. 56 (1952), 193–212.

[5] D. Montgomery and L. Zippin, Topological transformation groups, Interscience Publishers, 1955.