The post Coding Isn’t the Hard Part appeared first on CodeOpinion.

]]>I keep seeing posts pushing back on the idea that coding isn’t the hard part. And I get why. A lot of the disagreement comes down to what people mean by coding.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

But in the world I work in, coding usually is not the hard part.

I’m talking about line of business and enterprise apps. Order management, healthcare, insurance, logistics, and similar systems. In those kinds of systems, the real difficulty is usually not writing code. That is not to say building software is easy, because it is not. But if you understand your tools, your language, your libraries, your frameworks, and you have a solid foundation, the coding itself is often the more mechanical part.

That is because these systems usually are not algorithmically complex. Some domains absolutely are, but most of the systems I’m talking about are more workflow complex than algorithmically complex. Once you have a good foundation and you know the tooling you are working with, building the system often becomes a matter of assembling pieces. You keep adding on to what is already there. In that sense, the implementation can start to feel routine.

The hard part is figuring out what to build

What is not routine is figuring out what needs to be built in the first place.

That is where the real complexity shows up. What events occur in the system? What triggers them? What business rules apply? What data has to remain consistent? What edge cases exist? How does the process actually work from end to end?

Those are the questions that make this hard.

For line of business systems, the best developers I know understand business well. They know how to break down workflows, decompose a problem, and understand how things move through a system. You are not just writing code. You are trying to understand how a business operates and then model that in software.

Why boundaries are difficult

That is why I keep saying that defining boundaries is one of the most important things you can do, and also one of the most difficult.

There are techniques that can help, like event storming, but it is still hard to take a large system, break it into smaller parts, and decide where responsibilities belong. That is where most of the real design work is.

Part of the disagreement around this topic is probably just different definitions of coding. One reply I saw said that applying a good design to an existing system is coding. And if that is your definition, then we are not that far apart. Because I am talking about system design and implementation together. That work is difficult. Building line of business systems absolutely has complexity.

Workflow complexity is different from technical complexity

I use messaging and event driven architecture examples a lot because they make this easy to see.

If you are building a workflow based system, you may have to deal with idempotency, retries, backoffs, dead letter queues, concurrency, claim check patterns, and all kinds of technical concerns. Those things are difficult. In many cases they are more difficult than implementing a specific business step in a workflow.

But even there, the hardest part usually is not the code for a single step. It is understanding the workflow, modeling it correctly, and knowing how it can evolve when the business process changes or when your original assumptions turn out to be incomplete.

A simple shipment example

Take a shipment workflow as a simple example.

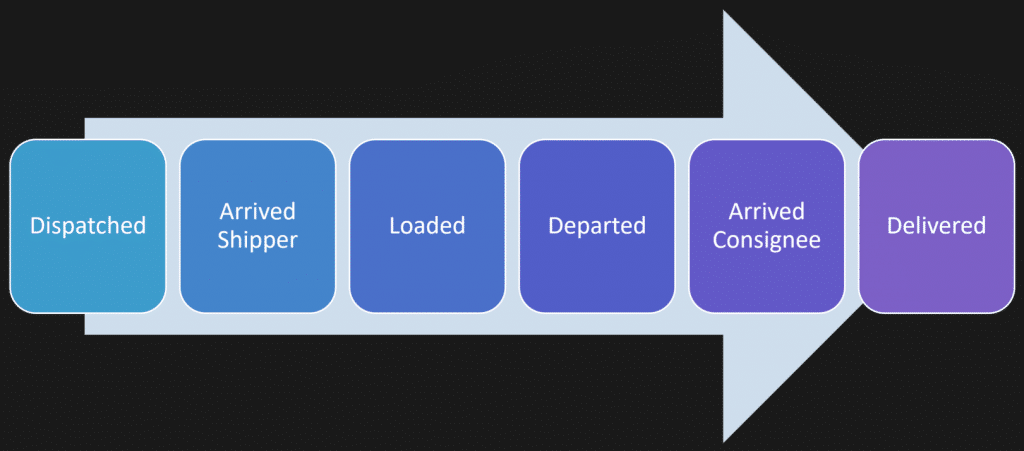

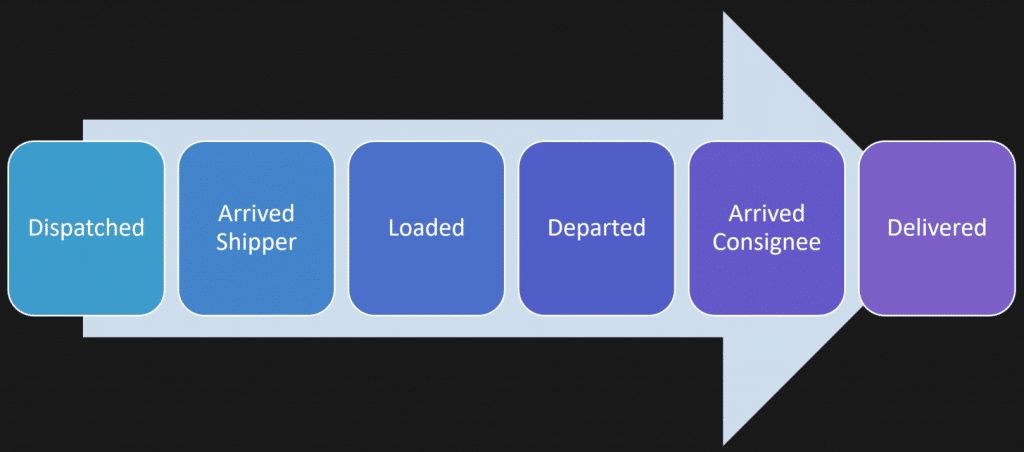

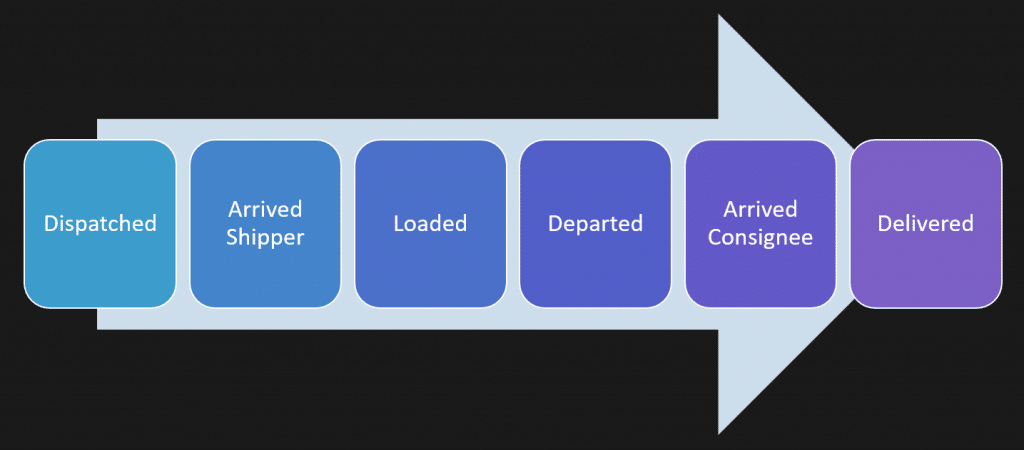

At a high level, you might say a shipment is dispatched, a driver arrives for pickup, the shipment is loaded, the driver departs, arrives at the destination, and completes delivery.

That sounds straightforward when you say it like that. But real systems are rarely that simple.

Maybe one truck is handling multiple shipments.

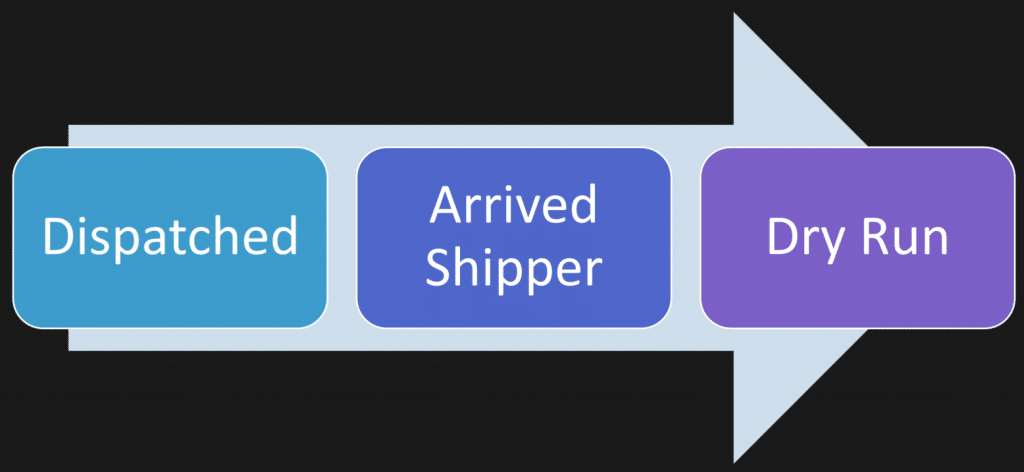

Maybe a pickup becomes unavailable. Maybe the shipment is delayed and there is no point in sending the driver. This is called a dry-run.

Maybe parts of the process branch depending on the customer, the carrier, or the type of delivery.

So now what are you modeling? Is it one workflow or several? Where do those boundaries exist? What belongs together and what should be separate?

That is the hard part.

Writing the code for each step is usually the easier part once you actually understand the model.

Once the model is clear, implementation becomes mechanical

That is why methods like event storming are useful. They help you focus on the events, actions, side effects, users, and different perspectives before you jump into code.

You want to understand the workflow first. You want to understand how smaller workflows fit into larger ones and how they cross boundaries. That work can be done with business people long before you start writing implementation code.

Once you understand it well enough, the implementation often starts to feel templated.

You have probably felt this if you work in .NET and use a messaging framework. Good frameworks handle a lot of the technical heavy lifting for you. They deal with plumbing like the outbox, inbox, logging, database concerns, and idempotency so you can focus on the specific behavior you need to implement.

That is a good thing. But it also makes the point pretty clear.

At that stage, a lot of the work becomes filling in the blanks. The workflow has already been defined. The messages already exist. The handlers are just implementing the behavior you already modeled.

That does not mean there are no hard technical problems

There absolutely are hard technical challenges.

Scaling problems, data consistency issues, infrastructure concerns, deployment issues — those are all real. I like those problems. But those are often architectural issues around the shape and growth of the system, not questions about the business workflow itself.

They are different kinds of difficulty.

A lot of the pushback on “coding isn’t the hard part” comes from people saying that translating ideas into precise, working systems requires deep knowledge and experience. I agree with that completely. But I still separate understanding the business and designing the system from the actual implementation work.

Those are closely related skills, but they are not the same skill.

What the best developers actually do well

The best developers I know are not just technically capable. They understand the business domain. They can decompose a system. They know how to reason about workflows and boundaries.

Yes, they are also good with their tools and can handle concurrency, messaging, and technical complexity. But that is not what makes them stand out most.

What makes them stand out is that they can answer the hard design questions.

Where do responsibilities belong? Who owns the data? What capabilities belong in which part of the system? How are boundaries crossed? How coupled are different parts of the system? What kind of data coupling, timing coupling, or deployment coupling exists?

Those are technical questions, but they are also design questions. They sit above the level of just writing code.

So is coding the hard part?

So when I say coding isn’t the hard part, I am not saying building software is easy.

I am saying the hardest part of building business systems is usually understanding the business, modeling workflows, defining boundaries, and designing a system that can actually support the way the business works.

Once you have done that well, the coding often becomes the easier part.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Vertical Slices doesn’t mean “Share Nothing”

- Read Replicas Are NOT CQRS (Stop Confusing This)

- Keynote: CTRL+SHIFT+(BUILD) PAUSE

The post Coding Isn’t the Hard Part appeared first on CodeOpinion.

]]>The post Vertical Slices doesn’t mean “Share Nothing” appeared first on CodeOpinion.

]]>How do you share code between vertical slices? Vertical slices are supposed to be share nothing, right? Wrong. It is not about share nothing. It is about sharing the right things and avoiding sharing the wrong things. That is really the point.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

Boundaries

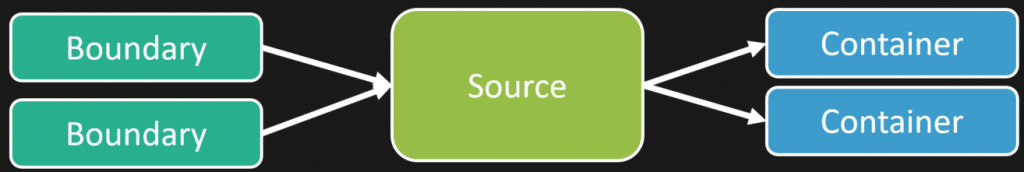

If you have watched my videos before, you probably know I talk a lot about boundaries. A vertical slice is not that different from a logical boundary. What matters here is that a vertical slice defines a boundary around a use case.

That is the lens I want you to look through, because once you do that, the question of sharing becomes a lot clearer.

A Shipment Workflow Is a Good Example

A good example is a shipment. It is a workflow.

You have different actions that happen along the way that make up a life cycle from beginning to end. Think about ordering something online. It gets dispatched, which means the order is ready to be picked up at the warehouse. The carrier arrives at the shipper. Then the freight is loaded onto the truck. Then it departs. Then it arrives at the destination. Then it is delivered.

That is the workflow.

Now the natural question is this: is the vertical slice the whole workflow, or is each step its own slice?

Because if each step is a slice, how do you share between them?

A Vertical Slice Can Be One Step In the Workflow

Each step in that workflow can be a vertical slice.

You could model the entire workflow as one slice. Sometimes that might be fine. But often, each step can be its own slice because the workflow can change. It can deviate based on the actions that occur.

Take the same shipment example. The order gets dispatched, the vehicle is on the way to the warehouse, it arrives there, and then finds out the order was cancelled. There is nothing to pick up anymore.

That is a different use case.

In shipping, that might be called a dry run.

How do you implement that? It is just another vertical slice. It is part of the workflow, but it is also a deviation from that workflow.

That gets us back to the original question. What can you share between those vertical slices that are part of the same workflow?

There Are Two Different Kinds of Sharing

The first kind of sharing is technical infrastructure and plumbing.

Things like error and result types, logging, tracing, authorization helpers, messaging support, outbox primitives, event bus abstractions, and small utility code. That kind of stuff is normal to share. Some slices will use it. Some will not.

A slice gets to decide what dependencies it takes on and what tactical approach it uses. That is part of the slice owning its implementation.

But that leads to the more important question: what does the slice actually own?

A Vertical Slice Owns Its Data and Behavior

A vertical slice owns its data. That use case owns the data it needs and how that data is persisted.

It also owns the dependencies it takes on. It chooses the tactical patterns it wants to use for that specific use case.

That is important because people hear that and then assume everything must be completely isolated. But that is not really true, especially when several slices are part of the same workflow.

Shared State Is Not the Problem

In the shipment example, what you really have is a state machine. You have state transitions across the life cycle, from dispatched all the way to delivered.

So yes, there is shared state.

That does not mean there is shared ownership of everything.

Imagine a shipment with state like status, dispatched at, arrived at, pallets loaded, and emptied at. If that was mapped to a table, each piece of that state is owned by the slice responsible for that action. The dispatch slice changes the dispatch related state. The arrive slice changes the arrival related state. The loaded slice changes the loaded related state.

Each slice owns the behavior around its part of that workflow.

This Still Applies With Event Sourcing

You can think about the exact same idea with event sourcing.

Instead of changing columns in a table, you are appending events to a stream. Dispatched. Arrived. Loaded. Emptied.

Same concept.

Each use case owns the behavior that produces those events. It owns the data tied to that behavior. It owns where that data lives, whether that is in a table or in an event stream.

That can still all live together. You are still sharing an aggregate. You are still sharing a concern because there are invariants around that workflow.

That is not bad sharing.

Sharing an Aggregate Is Not Bad Sharing

An aggregate is a consistency boundary. You need that consistency boundary around the state.

A slice is a use case.

So if you have several use cases related to the same underlying model, that can be shared. If two slices share validation because both operate on the same domain model, that can be shared too.

At the same time, you can have other slices that are not part of that workflow at all and use a completely different model. That is fine too.

The point is not that every slice must have its own isolated universe. The point is understanding what actually belongs together.

Different Slices Can Use the Same Domain Model

Another way to visualize this is by looking at what each slice does from top to bottom.

One slice might be invoked by an HTTP API. It has application code and a data model under it. Another slice might be invoked by a message or event. It has different infrastructure, different application logic, but still uses the same underlying domain model as the HTTP slice.

The entry point is different. The infrastructure is different. The application code is different.

That does not mean the domain model cannot be shared.

And then you might have another use case that is not related at all, even if it lives in the same broader boundary. That one may have a completely separate model.

Again, the point is that slices define their own dependencies and their own behavior. But that does not mean they cannot share anything.

Where Sharing Becomes a Problem

The problem starts when you begin sharing things that have no business being shared.

In the shipment example, I am talking specifically about the workflow and the shipment life cycle from beginning to end. Nothing in that example has anything to do with compliance, customer support, customer tracking, or what was actually ordered.

Those are separate concerns.

The trap people fall into is that they start sharing and coupling things they should not. The model becomes unfocused. That is how you end up with one giant Shipment object for your whole system.

That is where you get into trouble.

Do not share one god object.

A Vertical Slice Is a Logical Boundary, Not a Physical One

This part is really important.

People often talk about vertical slices in the context of code organization, and that is useful. But a vertical slice is not a physical boundary. It is a logical boundary.

That means it does not have to live in one C#, Java, or TypeScript file. It does not have to live in one project.

If you have a mobile app deployed separately to iOS or Android, and it is dealing with specific actions as part of a use case, that can still be part of the slice. If the same use case is invoked through an HTTP API, that is also part of the slice.

The slice is the logical boundary around the use case. It is not just a folder structure.

Good Sharing Versus Bad Sharing

Good sharing is when vertical slices are operating on the same underlying thing as part of a workflow, a life cycle, or a set of common invariants.

Bad sharing is when a change unrelated to one vertical slice affects another slice unexpectedly.

That is when you are sharing things that have unrelated reasons to change.

That is the distinction.

Do Not Share Domain Meaning

Put another way, do not share domain meaning.

In the shipment workflow, dispatched, arrived, and loaded are use case specific. Dispatch is its own thing. No other feature should be doing something related to dispatch unless it actually owns that behavior.

If dispatch publishes events or changes dispatch related state, that should happen there. If there is state related to dispatching, that slice should own it.

You are not sharing that ownership.

Could you still share an underlying domain model that handles the workflow transitions? Absolutely.

But ownership of behavior still matters.

Focus on Actions, Not Just Data

Hopefully one thing stands out in this example. When I describe vertical slices and use cases, I am describing actions. I am not starting with data.

That matters.

And that is the real issue underneath all of this.

This Is Really About Coupling

When we talk about sharing, what are we really talking about?

Coupling.

That is what this usually comes down to.

If you understand what you are coupling to between vertical slices and use cases, you can manage it. If several slices depend on the same underlying domain model because they are part of the same workflow, that can be perfectly fine.

If every vertical slice can touch any piece of data and change state anywhere, then yes, you are going to have a problem.

At the end of the day, this is about managing coupling.

Share the Right Things

Vertical slices are not about sharing nothing.

They are about sharing the right things.

Technical concerns and plumbing? Sure.

Shared invariants as part of the same workflow? Sure.

A shared aggregate when several use cases are part of the same consistency boundary? Sure.

What you want to avoid is coupling things together that do not belong together.

That is the difference between good sharing and bad sharing.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Read Replicas Are NOT CQRS (Stop Confusing This)

- Your Idempotent Code Is Lying To You

- How Many Microservices Do I Need?

The post Vertical Slices doesn’t mean “Share Nothing” appeared first on CodeOpinion.

]]>The post Read Replicas Are NOT CQRS (Stop Confusing This) appeared first on CodeOpinion.

]]>What’s overengineering? Is the outbox pattern, CQRS, and event sourcing overengineering? Some would say yes. The issue is: what’s your definition? Because if you have that wrong, then you’re making the wrong trade offs.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

“The outbox pattern is only used in finance applications where consistency is a must. Otherwise, it’s just overengineering.”

Not exactly.

“CQRS is overengineering and rarely used even at very high scale companies. One master DB for writes and a bunch of replica DBs for reads are sufficient.”

No. And it has nothing to do with scaling.

“Event sourcing, another overengineering term, but in reality, most production systems do not implement strict event sourcing as described in books and system design articles. In the practical world, only current state is stored in the primary DB and events and business metrics are stored in an analytics DB.”

The giveaway that this is wrong is the discussion of business metrics related to event sourcing.

In the “practical world”, I’ll give some examples where event sourcing is natural.

Let’s go through them one by one, explain what they are, and when you should be using them.

The Outbox Pattern

Is it about finance? It has nothing to do with finance. Is it about consistency? Yes, that part is correct. It’s really a solution to a dual write problem.

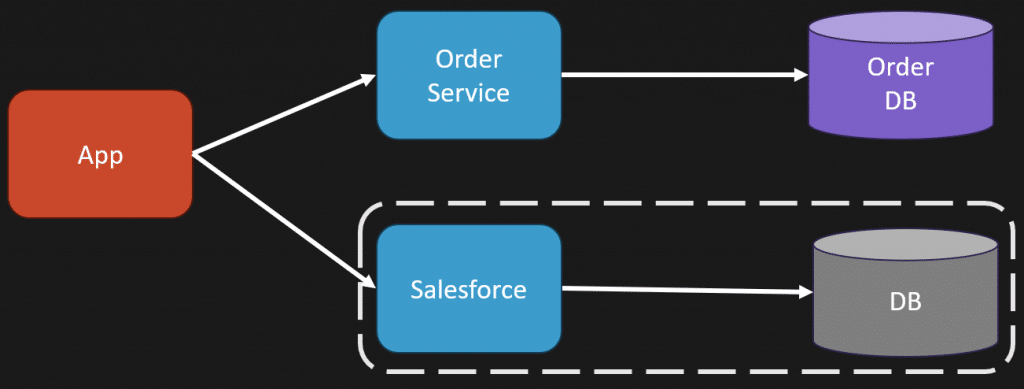

Here’s the dual write problem.

You have your application. Some action gets invoked. You persist a state change in your system.

That’s the first write. The second write is you need to publish an event and write a message to a message broker so other parts of your system know it occurred. That’s the second write.

Here’s the issue. It fails in between. So you do the state change. Everything passes. Everything is saved. Transaction is good. But then you fail to publish the message to your message broker. Now you’re inconsistent. Your state change happened, but the event never got published.

Is it a big deal if you fail to publish that event? It depends what you’re using the event for, and what downstream services care about. If it’s best effort metrics or analytics, it might not be a big deal.

If it’s part of a workflow, it can be a much bigger deal. You want that consistency, and that’s where the outbox comes in.

So how do you solve the dual write problem? Like most problems, don’t have it in the first place.

To solve the dual write problem, we’re going to have a single write. That means you persist your state to your database and within the same transaction you persist the message to an outbox table in that same database.

Separately, you have a publisher that queries the outbox table, pulls messages that need to be published, and pushes them to your message broker. If it succeeds, it reaches back to the database and marks the message as completed or deletes it from the outbox table.

If there’s a failure, you retry. You haven’t lost any messages you wanted to publish.

So is the outbox pattern overengineering? It totally depends on your use case.

If you’re using events as a statement of fact that something occurred within your system and other parts of your system need to know it happened, then it’s probably not overengineering.

If you’re using events as best effort analytics and it’s totally fine if some events aren’t published because nobody depends on them, and lost messages are fine, then yes, it’s overengineering.

One side note: if you’re using a messaging library, it probably already supports the outbox pattern.

CQRS

“CQRS is overengineering and rarely used even at very high scale companies. One master DB for writes and a bunch of replica DBs for reads are sufficient.”

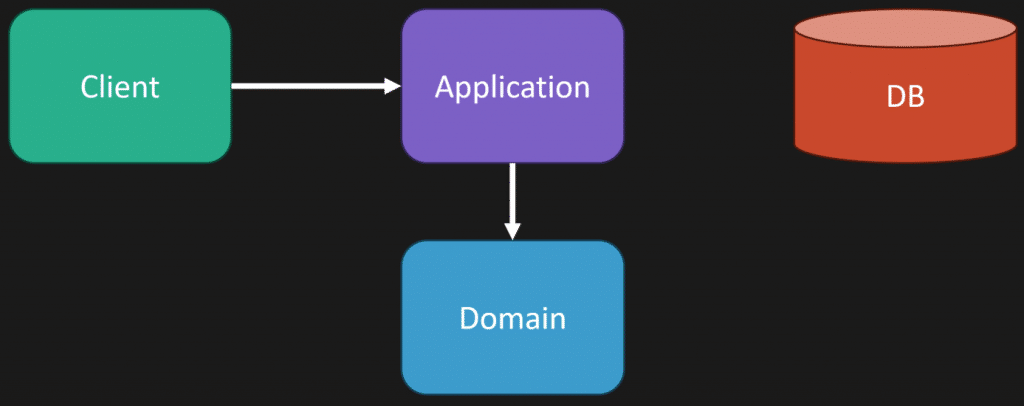

This is confusing two things entirely. It’s talking about scaling at the read write database level, when in reality this is about your application design.

CQRS literally stands for Command Query Responsibility Segregation. Commands change state. Queries read state.

That has nothing to do with databases. One database, two databases, whatever the case may be.

This is about having two different code paths for different responsibilities.

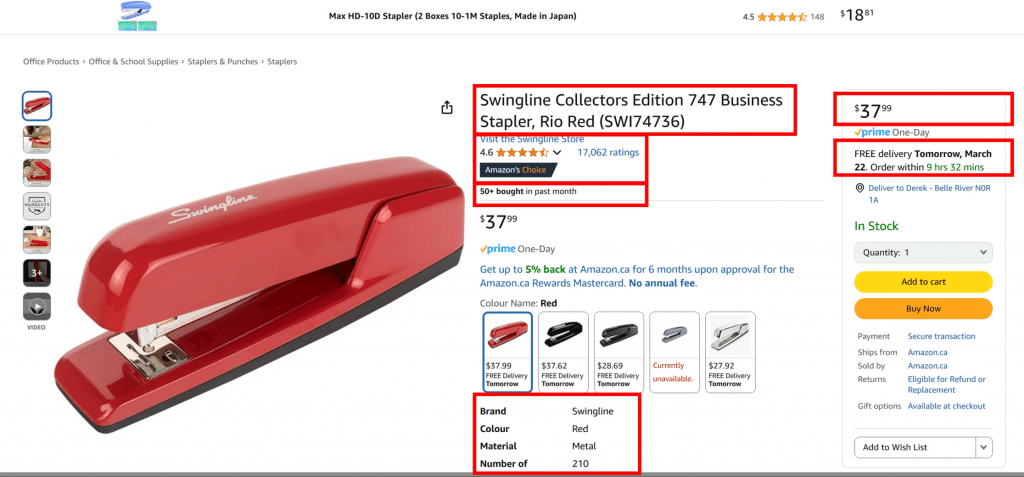

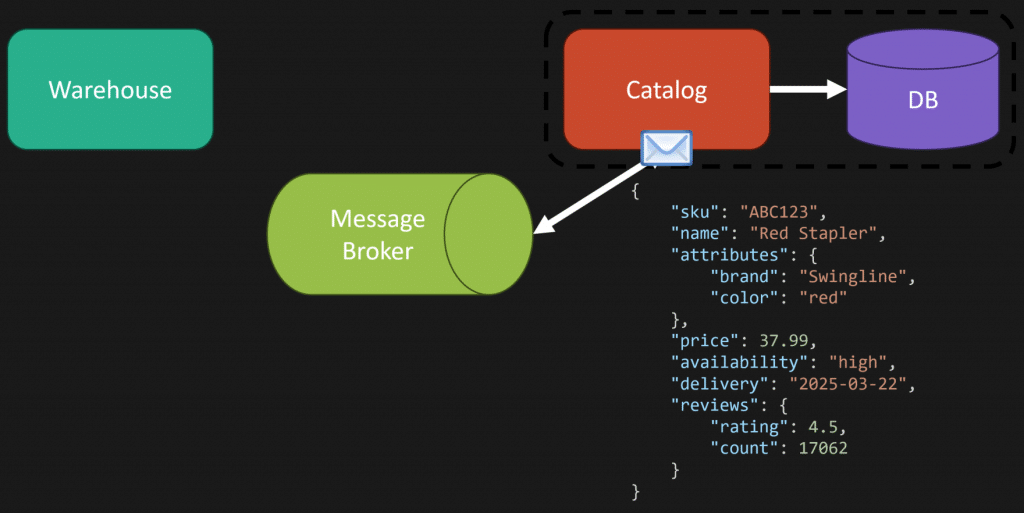

But since scaling was brought up, especially on the query side, that’s the angle I want to tackle. In a lot of query heavy systems, you often have to do a lot of composition.

That composition could be to a single database, multiple databases, a cache, whatever. But you’re making multiple calls to different places to compose data together to return to a client.

Because a lot of systems experience this, people create views or materialized views so you’re not doing all of that composition at runtime.

Instead, you have a separate table, a view, a different collection, a different object, something that represents what’s optimized for a specific query.

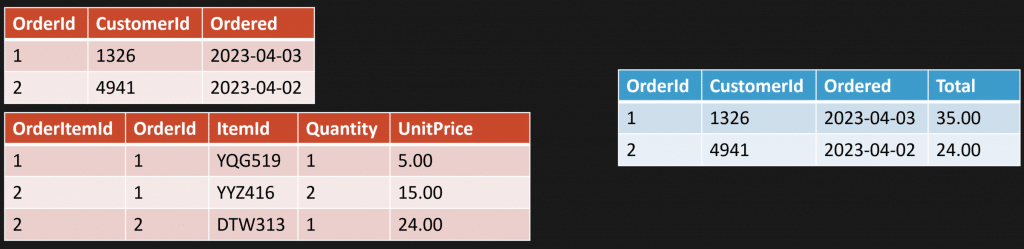

Example: an order and line items.

Maybe instead of joining tables and calculating totals on every request, you have a view that does it.

Or you have a materialized view that’s persisted and updated every time there’s a state change to an order.

So when you make a state change, your command updates your write side. Maybe that’s a relational database with normalized tables. And because you have a materialized view, you update that too. That could be in the same transaction. Then when a query comes in, you read directly from the materialized view.

This is all about optimizing reads or writes.

In my example, it’s optimizing reads, using a materialized view.

It doesn’t need to be that at all. It could be a relational database, a document store, a single table, a collection, some object that already contains what you need.

The point is you have different code paths, so you have options.

You could still have your query side do composition and your command side use the exact same database, the exact same schema, and update what it needs to update.

You just have the option to do different solutions if you have different code paths.

So is CQRS overengineering? Not really. You’re likely already doing it in some capacity because you already have different paths for reads and writes.

Where this gets conflated is when you start thinking about it purely from a scaling perspective. If you’re doing a lot of composition and you add read replicas, that’s fine.

But here’s the question.

Are your read replicas eventually consistent?

Because that plays a part in the complexity you’re adding by just adding read replicas. If you want pre computation because you want materialized views to optimize the query side, that’s a strategy if you need to optimize.

Event Sourcing

“In the practical world, only current state is stored in the primary DB and events and business metrics are stored in an analytics DB.”

We’re talking about different things here. Events are facts. What event sourcing is doing is taking those facts and making them the point of truth.

Then you take that point of truth, that series of events, and you can derive current state or any shape of data from any point in time.

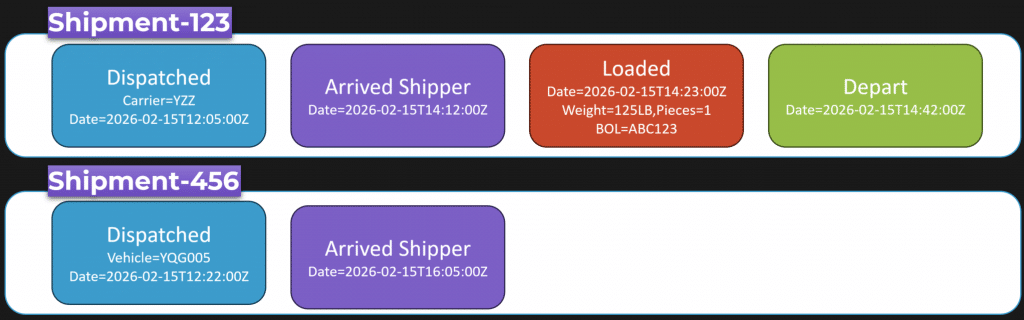

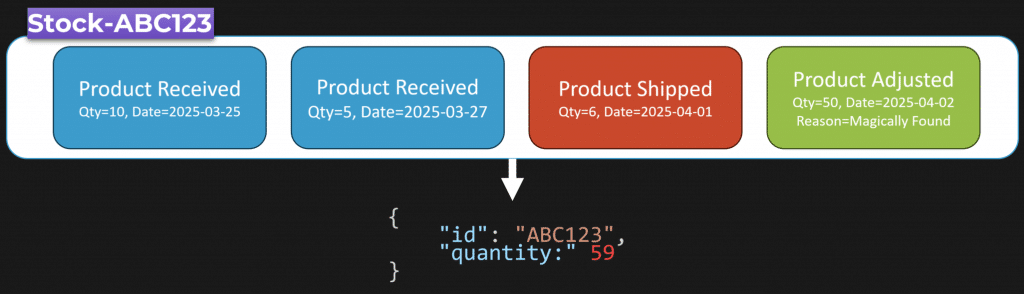

Let’s use a practical example because there are a lot of domains that naturally have events. You can just see them. A stream of things that occur.

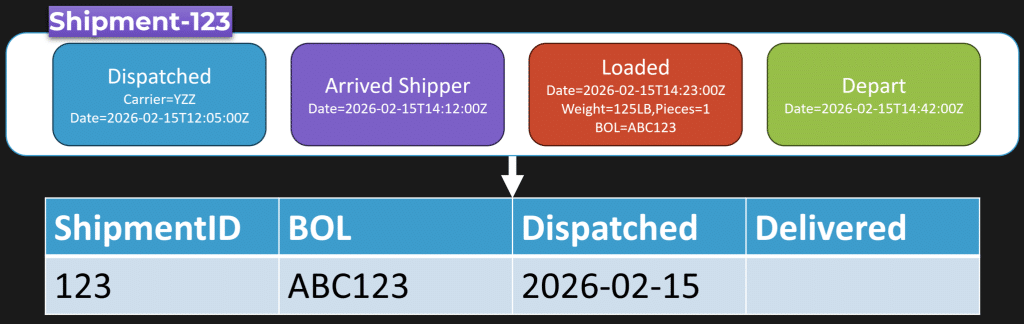

Here’s a shipment.

You persist these as a stream of events for the unique thing you’re tracking.

Shipment 123 has its own series of events. Another shipment has a different series of events. Those event streams are the point of truth.

You can derive current state from them.

It has nothing to do with analytics, but you can use them for analytics because just like current state, you can turn them into any shape you want.

So if you have an event stream, you can transform it any way you want.

Maybe you transform it into a relational table so analysts can write SQL like “select all shipments dispatched on a particular day”. Or maybe you transform it into a document shape that’s optimized for an application query.

That’s the point.

Your source of truth becomes an append only log of business facts, events. Your state is derived from those events.

A lot of the issues I read about people having with event sourcing are twofold. First, they’re not actually doing event sourcing. They have an event log, but it isn’t the point of truth. Their real database is still current state, and the event log is just “extra”. Or they’re using events as a communication mechanism with other services like a broker, which is a different thing.

Second, there’s a huge difference between facts and CRUD. “Shipment created” is not an event. That’s CRUD. “Order dispatched” is an event. Something happened.

“Shipment modified” is not an event. “Shipment loaded”, “shipment arrived”, “shipment delivered”, those are events.

Is event sourcing overengineering? It can be if all you view your system as is CRUD, and that’s how you build systems.

But there are a lot of domains where, once you start seeing it, you naturally see a series of events and it becomes obvious that’s where event sourcing fits.

The Real Point About Overengineering

Everything has trade-offs.

If you do not understand a concept, you won’t be able to understand what those trade-offs are, because you don’t even know what they are.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Your Idempotent Code Is Lying To You

- You Can’t Future-Proof Software Architecture

- How Many Microservices Do I Need?

The post Read Replicas Are NOT CQRS (Stop Confusing This) appeared first on CodeOpinion.

]]>The post Your Idempotent Code Is Lying To You appeared first on CodeOpinion.

]]>You have some code that handles placing an order. This could be an HTTP API or a message handler. You made it idempotent. You added a unique constraint on some kind of message ID.

And somehow… you still end up double charging the customer’s credit card.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

Idempotent

You did everything right. You have idempotency. You have an inbox table and a unique constraint on that message ID. Your handler should be exactly once, right? Wrong.

And it’s because of this call to our payment gateway outside of our database transaction.

Our database can tell us whether we processed the message, but it doesn’t stop us from double charging our customer.

So let’s talk about why this happens, how concurrency can make it worse, and some solutions.

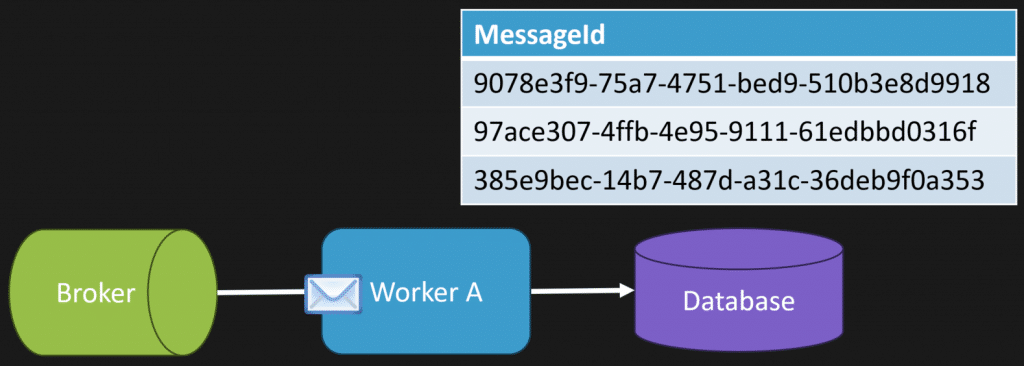

The happy path: idempotency with internal state

Let’s say you have an HTTP API where you might get multiple requests from the same user. Or this could be a message handler from a message broker where a message can be delivered more than once.

What that looks like is:

- A request comes in

- We take a message ID and persist it to our inbox table

- Because we have a unique constraint, if it wasn’t there, everything is fine

Then that exact same message (or HTTP request) comes back in. Guess what? It’s already there. So our request fails.

This is the happy path. It’s idempotency inside the database.

As long as you’re only updating internal state, this works. But it gets a lot more complicated once you start calling something external… like a payment gateway.

Why the payment gateway breaks “exactly once”

In the happy path, all we do is:

- start a transaction

- add our message ID to the inbox

- persist state

- if it was a duplicate, we throw when we save or commit

Simple.

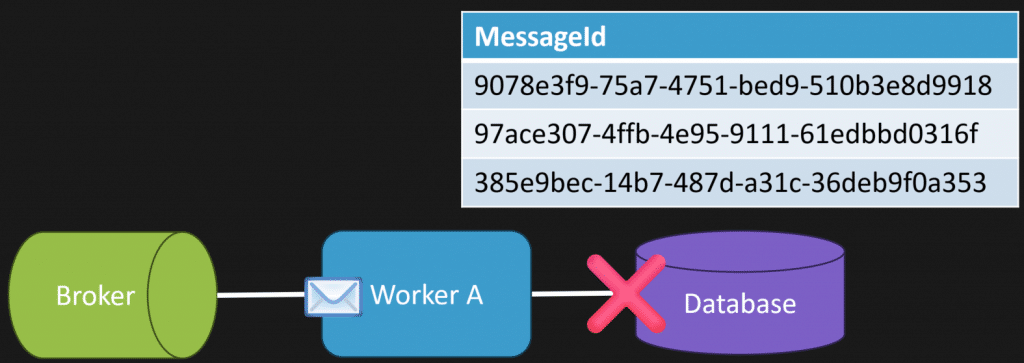

But we also need to make the call to the payment gateway. And this is where the issues start.

It might seem like a good idea to call the payment gateway immediately. Maybe you get a transaction ID back, some receipt, something you can persist in your database to mark the order as paid.

But here’s the problem: That payment gateway call is outside of our transaction. Outside of that unique constraint.

So now this can happen:

- First request comes in. We charge the customer. We save our inbox record. We persist our state. All good.

- Second request comes in (same message, same request) We charge the customer again. Then we hit the database and fail because of the unique constraint when we save and commit.

So our database protected our internal state. But it didn’t protect the external side effect.

Concurrency makes this easier to see

If you’re looking at this from an HTTP API perspective, you can have two identical requests come in at the same time.

The load balancer sends them to two different instances.

They both hit the payment gateway at roughly the same time.

One of them wins the unique constraint. The other fails on commit.

But both potentially charged the customer. At the start I said concurrency can make it worse. A different way to think about it is: concurrency makes it easier to reproduce and prove you have the issue.

There’s no magic distributed transaction coming

One “solution” is a distributed transaction. The reality is you’re not going to get one.

That inbox table protects your internal state, but the moment you cross that network boundary to the payment gateway, all bets are off. You kind of went from exactly once to at least once.

But we can design for it. There are a few approaches here. It’s not one magic fix. It’s usually a combination depending on your situation.

1) The third-party service supports idempotency

Your third-party service might support idempotent requests.

A good example is Stripe. It supports an idempotency key that you pass in the header to make idempotent requests.

So you decide what the key is for that specific business operation. If the same request happens more than once, you send the same key again.

Now it becomes idempotent on the payment provider side too.

Side note: if you’re creating an HTTP API, support idempotent requests. Your clients will love you. If you don’t, they’re the ones who have to deal with the rest of this stuff.

2) Lookup by a reference ID before charging

If the provider doesn’t support idempotency keys, another option is a lookup against some kind of reference.

In my example, I have a payment gateway and I want to know: is this invoice paid?

My reference at this point is the order ID. So the flow becomes:

- Ask the gateway: “Is order 123 already paid?”

- If not, charge it

You can still have race conditions here. This can still be an issue. But it may be good enough, especially if the third party has a unique constraint on something like your order ID.

3) Serialize the operation per business key (locking)

If lookup isn’t enough, another solution is serializing the operation by a granular business key.

What I’m talking about here is locking.

You’re basically creating a distributed lock. That might be:

- using your database and row locking

- using Redis for locking

- something else entirely

In my example, “granular business key” might be per order. That means only one payment attempt for that order runs at a time. If I can’t acquire the lock, I retry, or return something that tells the caller to retry. Now at any point, we only execute one at a time for that order, charge the customer once, and release the lock.

Caveats

The trade-off is throughput. That’s why the business key has to be granular. If you lock too broadly, you slow everything down.

Also, timeouts matter. If the payment gateway times out, that does not mean it failed. It might have actually succeeded. So even with locking, you still need to think about what “timeout” really means.

4) Separate internal state from external calls (inbox/outbox)

Another option is inbox/outbox and splitting internal state from the external call.

What does that mean?

- We get the order

- We mark it as payment pending

- We add a message to our outbox saying “charge payment”

- We save all of that together in the same transaction

Then separately, a processor reads the outbox and performs the external call to the payment gateway.

If the provider supports idempotency keys, that outbox processor uses them. And then if it succeeds, we mark the order as paid. This doesn’t magically fix double charging. You could still double charge.

What it does give you is better internal consistency and better failure handling, because message handlers let you handle retries, backoff, timeouts, and errors differently than your main request path.

That’s one of the reasons I love messaging.

5) Reconcile and compensate

This generally always happens: you need reconciliation and compensating actions.

There’s nothing wrong with this. If something realizes “uh-oh, we double charged”, you void or refund.

This can be a workflow step, or a periodic reconciliation process that compares your system with the third-party system. You’re not failing as an engineer because you need compensation. This is just reality when you’re dealing with external systems.

Putting it all together

You have a lot of options, and it’s often a mix:

- Use an inbox table to dedupe incoming messages

- Use a unique message ID to prevent reprocessing internal state

- Use an outbox pattern so your intent to call an external system is persisted with your internal state

- If the provider supports idempotency keys, use them

- If not, can you lookup by a reference ID to see if the action already happened?

- If acceptable, can you serialize with a distributed lock by a granular business key like order ID?

- And when those don’t cover everything, reconcile and compensate

Ultimately, I don’t think the goal is “exactly once” as in “the operation only ever happens exactly once.”

A better goal is designing a system that’s effectively once — it behaves correctly even when you deal with race conditions, concurrency, and timeouts that are outside of your control.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- You Can’t Future-Proof Software Architecture

- Context Is the Bottleneck in Software Development

- Watch Out for Superficial Invariants

The post Your Idempotent Code Is Lying To You appeared first on CodeOpinion.

]]>The post You Can’t Future-Proof Software Architecture appeared first on CodeOpinion.

]]>“Future proof your architecture” sounds good. But the reality is you can’t future-proof Software Architecture. When you really think about it, the future is just what’s breaking assumptions. You can’t really future-proof that.

What you can do is contain changes so they don’t ripple through your system.

Where people go wrong is trying to future-proof with abstractions everywhere. What you really want to be doing is controlling the blast radius, meaning controlling where change goes.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

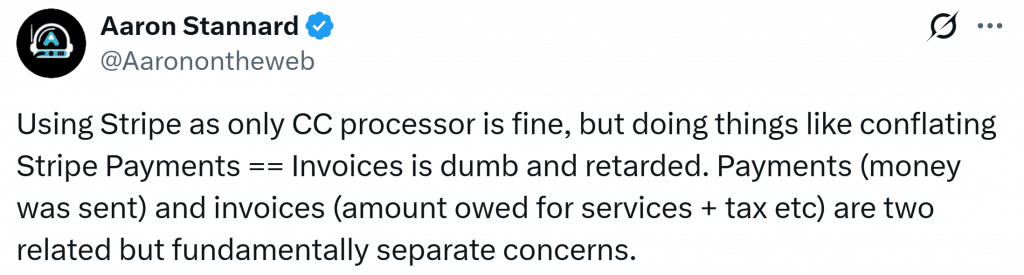

I’m going to explain this using a thread by Aaron and elaborate on some of the things he’s pointing out.

He posted:

I posted a lot of bangers about SDK bin’s terrible software choices and how it generally made life unpleasant for us. So I wanted to detail how we’re addressing the dumbest and worst design choices in the codebase.

So the first one: what did our dev do when we needed to renew an annual subscription? Modify the subscription created date and reset the renewal reminder hard coded as N months from the creation date.

Now you might be thinking, “That’s ridiculous. I would never do that.”

But it underlines why things change.

The Unknown Is Usually Boring Stuff That Stops Being Boring

In the context of future proofing, the unknown usually lies in things like:

- pricing rules changing

- renewals and billing schedules changing

- tax changes

- refunds and how those show up

- partial payments

- a new payment provider, or a second payment provider/gateway

- workflow that used to be simple, becoming not so simple

That’s the real problem. Early on, when you’re building a system, you can have a lot of assumptions about the unknown.

What kills you is early decisions that leak everywhere in your codebase and cause coupling.

You have assumptions. You make decisions. Those decisions leak everywhere. Now you’re coupled. And that coupling is going to cause a lot of pain later when you try to make change.

You know you have this problem because when a change comes in, you have to touch all these things:

- the UI

- the “domain”

- persistence

- some random shared helpers and utils

- reporting

- background jobs

- and my favorite: three or five or a dozen other places you didn’t even know existed

You’ll often hear, “Well, we have a very large system and it’s very complex.”

In the context of what I’m talking about, that’s not complexity. That’s coupling.

Stripe Isn’t the Problem. Leaking Stripe Everywhere Is.

In Aaron’s case, he’s feeling the pain of everything being coupled so tightly to Stripe that it’s taken a mini Manhattan project to move off it.

Related, sure, but fundamentally separate concerns. So the assumptions and unknowns causing pain here are exactly what I said at the beginning:

- the idea of a renewal wasn’t a first class concept

- moving off Stripe becomes a disaster

- conflating invoices and payments creates more pain

That “Created Date Renewal Hack” Is a Symptom

Here’s the type of thing that happens when the Stripe assumption leaks into your system.

You end up with leaked information in your subscription, and who knows where else, like:

- Stripe customer ID

- Stripe invoice ID

And then the only real concept you had was a created date time.

But because you needed renewals after the fact, you didn’t model it.

So that created date turns into a hack. You “renew” by pretending it started again. You overwrite the created date and reset the reminder hard coded off that date.

This is also one of those situations where if the business actually knew you were overwriting this data, you’re potentially losing a lot of valuable info. Audit info. What actually happened. When did it renew. What was the history.

If you talk to someone non technical in the business, whether they care about that, they’ll probably say yes.

Your Data Model Isn’t Your Domain Model

This is where I’ll say something you’ve heard me say before:

Your data model isn’t your domain model.

How you persist data, what your schema looks like, that’s not your domain model.

If you look at your model and think, “This doesn’t really express the domain,” then yeah, you probably don’t have a domain model. You have a data model. A bucket of data. Getters and setters.

Bonus tip: it’s also not your resource model.

You have an HTTP API. Those are different things.

What you return to clients isn’t your underlying schema and isn’t your domain model. It’s a representation of what you want to show to clients.

So What’s the Fix? Not Interfaces Everywhere.

So what’s the fix?

In the Stripe example, it was coupled everywhere.

Is the fix immediately to jump to interfaces and put them everywhere? Use the adapter pattern everywhere all the time?

No.

It’s what I said at the beginning: controlling the blast radius. When you make a change, it should be localized to one particular place.

With Stripe specifically, it depends how coupled you are:

- Is the Stripe customer ID leaked into your database?

- Are other clients using it because you exposed it via your HTTP API?

- Do other libraries use it?

- Are you using the Stripe SDK in 10 places, or 200?

If you have 200 usages, and it’s all through the UI, the domain layer, persistence, reporting, background jobs, and everywhere else… you don’t have a blast radius.

You have a disaster.

Separate Concepts: Invoice vs Payment

Another simple example is the invoice vs payment problem.

They were treated as one concept and it was a disaster.

They don’t need to be. They should be separate things. You can have an invoice that has nothing to do with Stripe. It’s just an invoice. No Stripe internals. It’s your concept. Then payments are a separate concept.

Now you can apply payments to an invoice. Partial payments? Fine. Refunds? That has nothing to do with invoices. That has everything to do with payments.

Separate concern. Easier to support. Easier to change.

Watch Your Nouns: Third Party Vocabulary Leaks

Pro tip: when you’re using third party services heavily, especially if they matter a lot to you, the nomenclature from that third party starts leaking into the core of your system.

You’ve got to be careful there.

You want your product’s nouns and verbiage to be yours, not the third party’s.

A Concrete Blast Radius Example

Here’s what “controlling the blast radius” can look like when paying an invoice.

You fetch the invoice from the database.

You create a payment. Separate concept. You call Stripe to charge the account. If it succeeds, you mark the payment as succeeded. If it fails, you mark it as failed.

Then you save your database changes.

Yes, you’re going to have reconciliation, because if something fails on your side but the charge actually went through, you deal with that after the fact.

But the point is this: You’re controlling the blast radius of where you deal with Stripe. It’s concrete. It’s real. But it’s contained.

If you need to change payment providers, you change it where you have that capability exposed. It’s not coupled everywhere.

How People Make It Worse

This is where people take the right problem and make it worse.

Before you say, “Let’s make an IPaymentProvider used across the whole system” — congratulations, you just created shared coupling.

Or “Let’s build a generic billing framework” — no, you created a framework specific to one implementation that isn’t generic at all.

“Let’s reuse this shared library across services or slices” — no, what you created is a distributed monolith starter kit.

Designing for the Unknown

So how do you design and architect for the unknown?

It’s not about trying to future-proof Software Architecture. It’s about containing the blast radius.

For the things that often change — rules, workflows, integrations — if you segregate them, you can change them without it affecting your entire system.

Where things go wrong, like the Stripe example, is leaking internal information throughout the system. Then if it changes… now what?

Because you didn’t localize it. It permeated everywhere, and the blast radius is huge.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Context Is the Bottleneck in Software Development

- Why “Microservices” Debates Miss the Point

- Watch Out for Superficial Invariants

The post You Can’t Future-Proof Software Architecture appeared first on CodeOpinion.

]]>The post Context Is the Bottleneck in Software Development appeared first on CodeOpinion.

]]>Software development context is the real bottleneck, not writing code. AI can generate code fast, but without context, boundaries, and language, you get coupling and brittle systems.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

With AI, I think people are taking a leap that is fundamentally wrong. It is not about producing cheap code. I do not think that has ever been the bottleneck. The bottleneck has been context. If you have watched enough of my videos, you probably know my slogan is context is king. And context is probably more important now than ever.

It is not about what syntax or folder structure your source code looks like. It is the context of why it does what it does. Why did you write the code, or the AI write the code, given your instructions? What are we optimizing for? What constraints did we have? What are the invariants and the things that can never happen? Probably most importantly, what tradeoffs did we intentionally accept? What decisions were made, and why?

AI can provide the implementation. It can write all the code. But it needs context. It needs to understand how to make the tradeoffs. Giving the instructions I see online like “write clean code” or “DRY code” is the most useless instruction for actually developing a good design.

“Design does not matter anymore” is the trap

I understand the rebuttal people have. “Well, it does not even matter anymore about design. Because if AI can read the code and can write the code, and therefore change the code, it does not matter. It does not matter what type of folder structure you have, organization, it does not matter at all about the design because AI can just handle it.”

But that is a trap.

The pain has never been writing code. It is about making behavioral changes safely.

Coupling is still the thing you have to manage

I stumbled upon a post that basically said the way the code looks should be irrelevant. What matters is the end result. On the surface, I get it. But I think it is naive.

When people talk about “how the code looks” they might be thinking structure, syntax, whatever. But to go beyond that, yes, it matters how it looks, because coupling still needs to be managed. Coupling is arguably the thing that when we are writing software we need to handle the most. If you want a long lived system that can evolve and change, you need to manage coupling.

If AI makes producing code cheap, guess what is also going to be cheap. Creating coupling. Creating a rat’s nest turd pile of coupling. What people call a big ball of mud. Something that is hard to change.

Everybody can relate to this. You make a change to one part of your system and it affects another part of the system unintentionally. Why does that happen? Coupling.

And you might be thinking, “But AI knows everything.” It does not.

“But it is going to know all the coupling.” Sure. But you already know all the coupling right now as well. And you still have this problem. So what is going to be different with AI?

If you are using a statically typed language, say in a monolith, you can find usages. You can run tools to know what your coupling is between different boundaries, or how your system runs. You can already know this, and you still end up with a turd pile that is brittle and hard to change.

So I do not think the answer in the era of AI is to ignore design. It is likely the opposite. It is providing a design that is built on constraints and context.

And that gets us to the real question. Where does that context live?

Where context actually lives

Context lives in the structure. It lives in the dependencies. It lives in the boundaries. And boundaries are still more important than ever because of coupling. All these foundational things you are doing now, even with AI in the mix, are still relevant.

So how do you capture the design and context in your system? First, you have to be explicit in the domain and the language you are using. I preach this so much. It is the opposite of CRUD, and the language now is more important than ever.

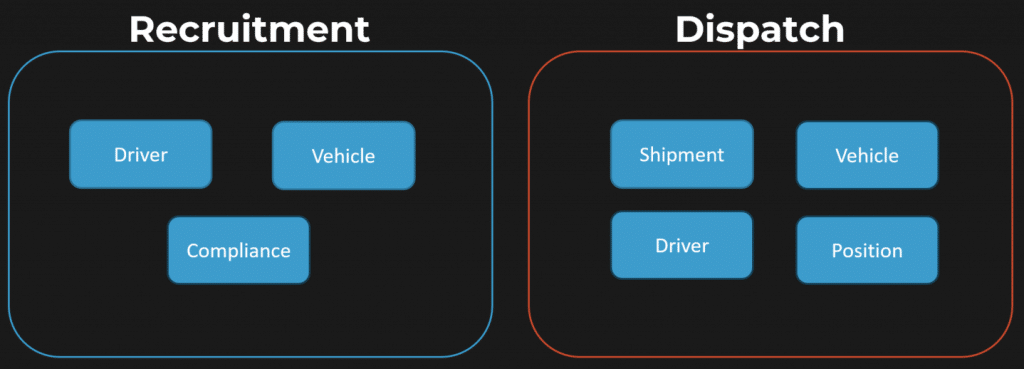

Use the context to understand what the domain is. Let me give you an example in logistics and shipping. What are the use cases? What are the things you do as part of the workflow?

You can dispatch an order. When a vehicle arrives at the shipper to pick up the freight, you arrive. When you pick up the freight, that is the load. When you leave and you are on route to delivery, that is depart. When you unload the freight or deliver it, that is empty.

These are explicit. It is the exact opposite of CRUD. CRUD provides no context. All the context is living in your end user’s head, because that is the workflow of how they interact with your system. If you have create shipment, update shipment, update stop… what is this? What does the system even do?

You would not be able to tell me what the workflow is, because the workflow is in somebody’s head. You are just recording current state. And if we are talking about current state and how you are recording state, it tells you how the system is now. But it gives you no context about how it got that way.

Events give you the story, not just the state

This is where events fit so naturally. There is a big difference between “shipment created” or “shipment updated.” What does that even mean? Versus being explicit about actions and commands.

An order was dispatched. It arrived. Loaded. Departed. Delivered.

These are explicit behaviors of your system. That language is the story of the domain. One of the most underrated places context lives is in language in the code. It tells you what the system does, and how it does it.

Not all language is created equal. You can be using terms, especially in a large system, that mean different things depending on the boundary. That is why boundaries matter. Boundaries preserve context and control coupling between them. The language inside that boundary encodes the intent of what it does. Events preserve intent by capturing what happened, and why.

Boundaries keep your concepts from being smashed together

When I talk about boundaries, I mean the different parts of the system that have their own context. In the logistics example, you might have sales, rating, orders, ordering. Visibility to customers about the status of their order. Execution, dispatch, tracking the vehicle and the path through delivery. Auditing. Communications with the customer. Billing and approvals, making sure documents are in place so you can invoice, pay carriers, pay drivers, whoever is executing the shipment.

Each one has its own context. And here is a really good example of what happens when you do not respect that. A system coupled everything so tightly to Stripe that it took a miniature Manhattan project to move off of it.

Using Stripe as a credit card processor is fine. But conflating Stripe payments with invoices is a mistake because they are not the same thing. Payments are money sent. Invoices are amounts owed for services and taxes and everything else. They are fundamentally separate concerns. Different concepts.

That is design. That is context.

AI can write code. It guesses your intent.

Design has always been important. But I am hoping now people see the value in capturing context within your design.

Create boundaries with specific context. Use the domain language explicitly. Make the concepts, the actions, the events, and the reasons why things happen, obvious in your code. Because CRUD only gets you so far. With CRUD, workflow and concepts are in the end user’s head. They are not in your system.

And if you are using AI to generate code, it does not have that workflow context unless you put it there. And of course, manage coupling between boundaries. Because AI producing code faster just means you can build a big ball of mud quicker if you are not managing coupling.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Why “Microservices” Debates Miss the Point

- Aggregates in DDD: Model Rules, Not Relationships

- Watch Out for Superficial Invariants

The post Context Is the Bottleneck in Software Development appeared first on CodeOpinion.

]]>The post Why “Microservices” Debates Miss the Point appeared first on CodeOpinion.

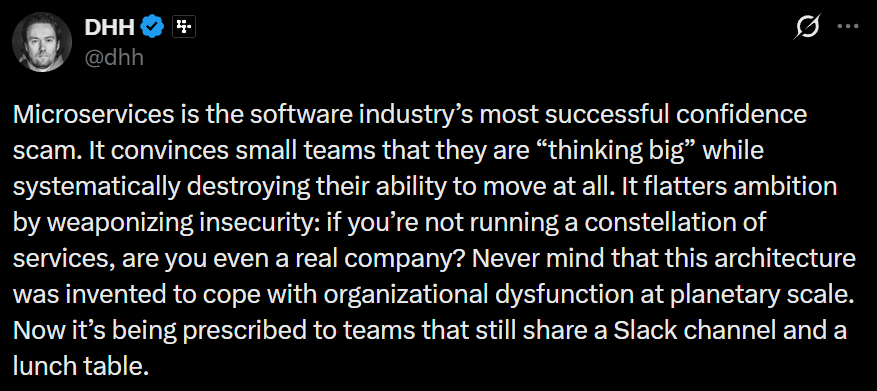

]]>DHH had a take on microservices in small teams that is getting a lot of attention. And while I agree with what he’s pointing out, all of these types of conversations miss what actually matters. This is not about microservices or a monolith or small teams.

Now what’s implied here is microservices is much more difficult to understand the full context. I agree, given how most people think of microservices. You can think, well, I got all these services and yeah, I don’t know how anything happens end to end, and what service interacts with what service. Yes, that’s a problem.

It’s a problem, but not the root cause.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

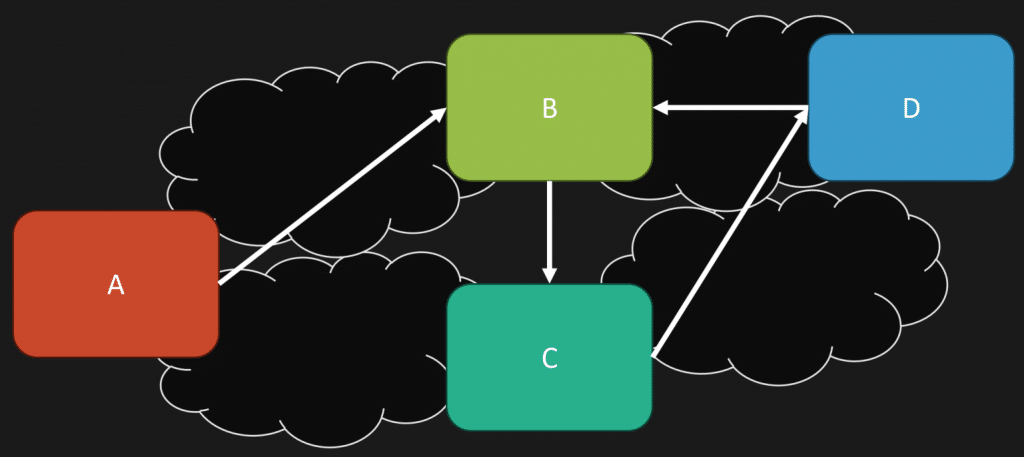

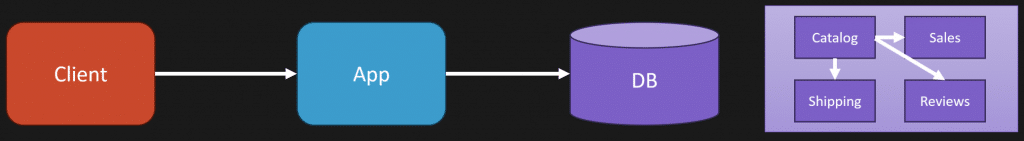

The Root Problem Is Coupling

The root of the problem is coupling.

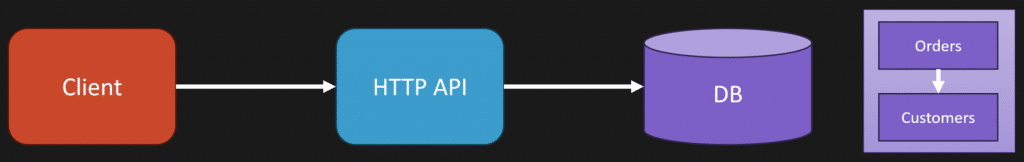

So if you have a high degree of coupling, let’s say we’re talking about a monolith here, yes, you’d be able to kind of navigate this a little bit better. Try to understand how each different part of your system is interacting with a different part. And yes, it’ll be much more difficult if all of a sudden these are all microservices and now you’ve introduced a network boundary.

Microservices is a physical architecture choice. That’s what you’re choosing when you introduce it. You’re introducing network boundaries.

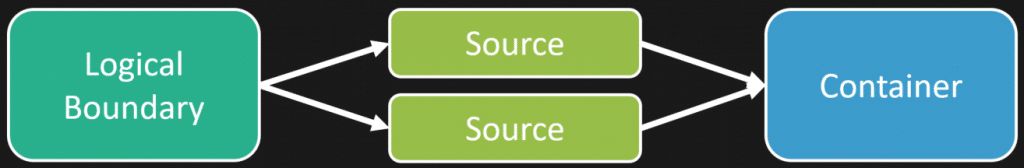

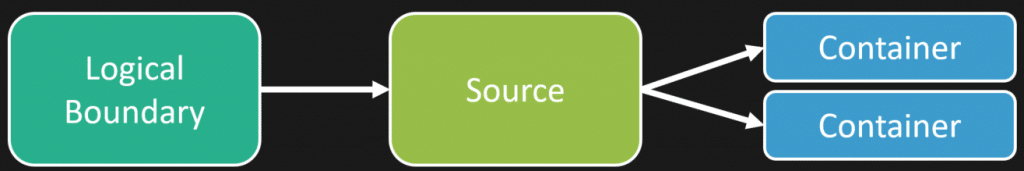

But regardless if you have a monolith or microservices, whether you’re a small team or not, the key is to define logical boundaries.

Logical Boundaries vs Physical Boundaries

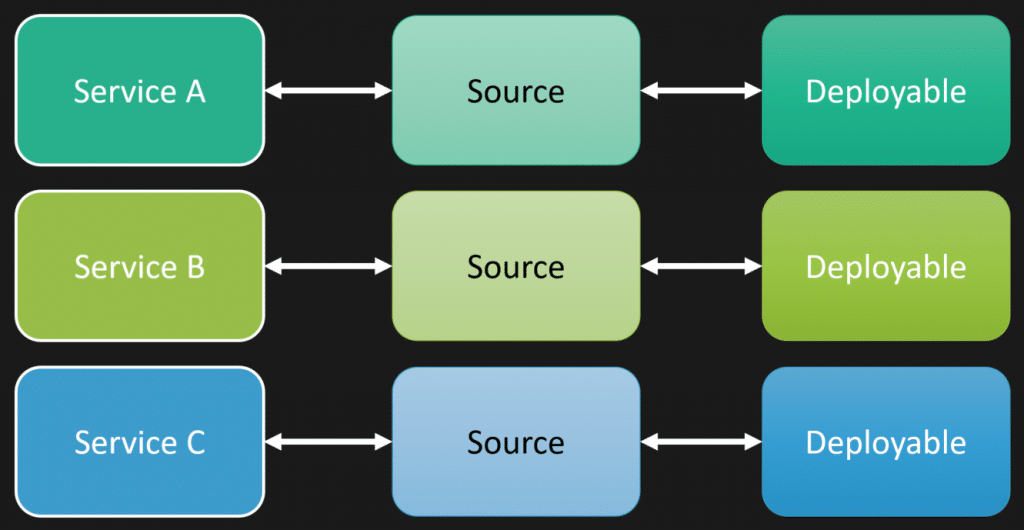

There’s a difference between logical boundaries and physical boundaries.

Half the issue here is that microservices define and force you to be a one to one.

Meaning, what we defined as a logical boundary of service A, B, and C, they likely end up with their own source repository. Even if it’s a monorepo, you have your own source that’s specific for that logical boundary, which guess what, gets built and turned into some type of deployable, whether it be some executable, a container, whatever, some unit of deployment.

We’ve turned everything into a one to one to one.

That can be different when you often think about a monolith, or what people would classify as a modular monolith. You have all these different logical boundaries within your monolith, within the same source codebase, that gets turned into a single deployable unit.

You can build a monolith with strong logical boundaries. You could be doing the same with microservices.

On the flip side, you can build an absolute turd pile of a monolith because you have weak boundaries, or none at all. Same goes with microservices.

What We Are Really Arguing About

What we’re really arguing about here with microservices is whether the cost of introducing a network boundary is worth it.

And he points out that cost.

“Then comes the operational farce. Each service demands its own pipeline, secrets, alerts, metrics, dashboards, permissions, backups, and rituals of appeasement.”

I don’t think that list is exaggerated at all. It’s a lot of complexity and has a high cost.

So the question is, do you get enough value from being able to deploy independently for the cost. This is about a trade off.

He continues with,

“One bug now requires a multi service autopsy. A feature release becomes a coordinated exercise across artificial borders you invented for no reason.”

Hang on there.

You just have a high degree of coupling. Artificial borders, absolutely you want borders. Should they be artificial. No. They should be cohesive around the capabilities of your system.

If you have a high degree of coupling, that’s your problem. That’s not just some random thing. It wasn’t invented. You created this. You created the coupling.

Whether you have microservices, is it going to be much more difficult to debug and troubleshoot because of that network boundary. Absolutely. I’m not disputing that.

But the root cause here is because of all the coupling, which directly relates to the comment, “You don’t deploy anymore. You synchronize a fleet.” No, that’s because of coupling.

More specifically, what people feel the pain of is temporal coupling.

If you were in your monolith and you had the same type of degree of coupling, you might not feel as much pain, but that coupling is still there and the pain is still there. It’s just hidden.

When you introduce that network boundary, it just exposed it. Because now you have all the distributed nature of HTTP, gRPC, whatever, however you’re distributing over the network. Retries, latency, it’s just exposing it all of a sudden. But the mess was already there.

“You Are Forced to Define APIs Before You Understand Your Own Business”

Here’s what I think is one of the most important parts of this post.

“You are forced to define APIs before you understand your own business.”

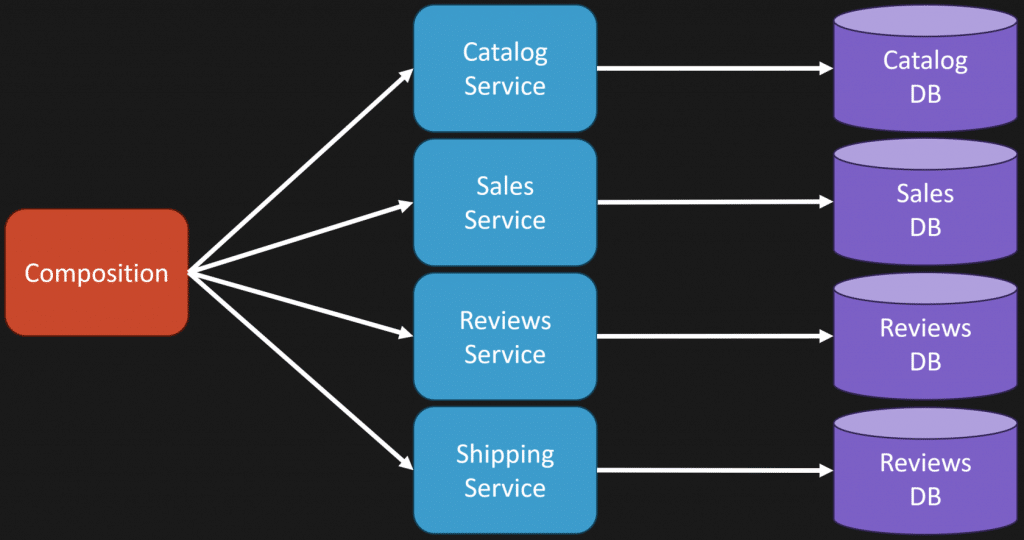

If you’re starting to build a system and you don’t really understand yet what the domain is, what the business is, I always say defining logical boundaries or services are one of the most important things to do, but one of the most difficult things to do.

You really need to understand the domain and how the interactions are going to work, because you do not want a high degree of coupling. You want your logical boundaries to be as autonomous as you possibly can be.

They’re often little workflows, a part of bigger workflows. There shouldn’t be a mess of coupling between boundaries.

Typically that happens because you’re more focused on the technical aspect than you are about the actual business behaviors and capabilities of your system.

So while I agree that jumping into microservices and defining network boundaries immediately, that’s going to be much more difficult because it’s harder to refactor. I think everybody can agree on that.

So yes, being in a monolith first, when you don’t understand and you’re trying to mold what the logical boundaries are, yes, it’s going to be easier because it’s easier to refactor.

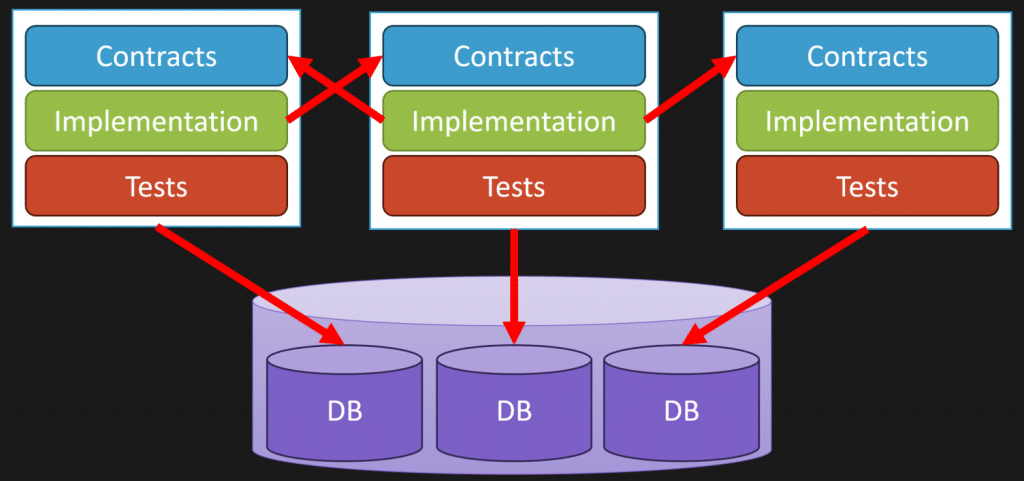

Which gets to what I like to call the loosely coupled monolith.

The Loosely Coupled Monolith

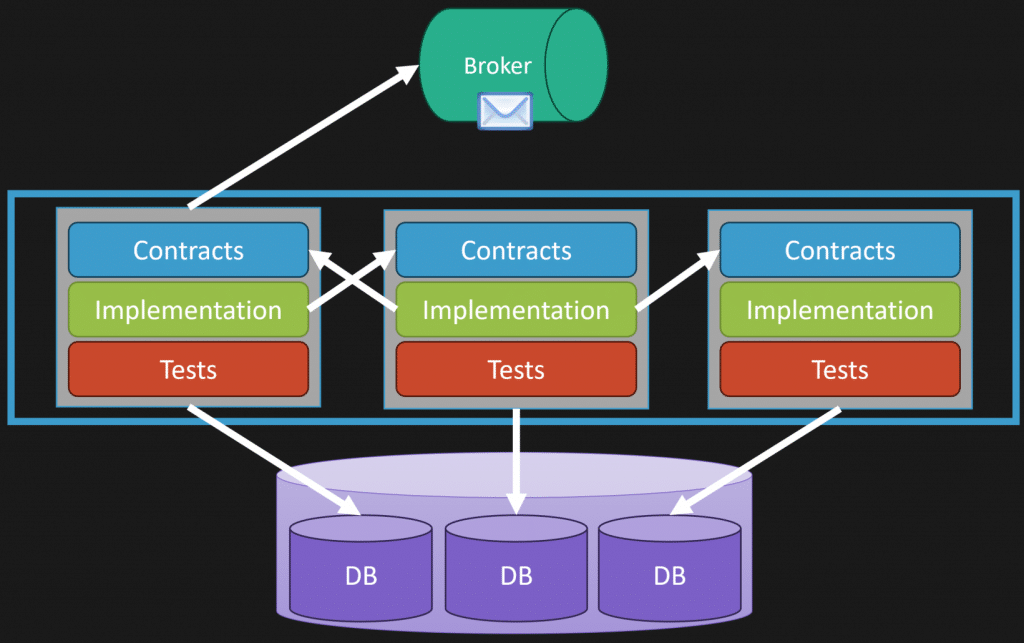

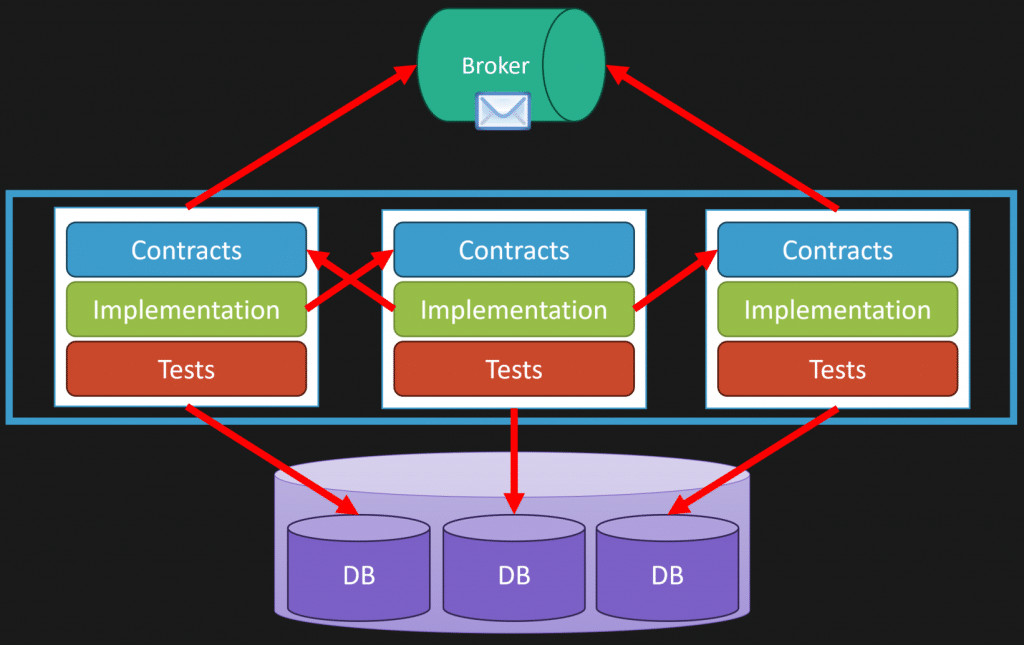

If we think about three different logical boundaries that have contracts, things like messages or potentially interfaces, implementation tests, we can see with my database here, maybe I have one database instance, but within that I have schemas that are specifically owned by a logical boundary.

It’s not a free for all of any logical boundary accessing data from another.

More specifically, what happens then is all your interactions, because of workflows, are done asynchronously via messaging, if you can.

That way we can see, if I’m in a monolith, I have all three deployed together. There’s absolutely nothing stopping you from carving one of them off and making it individually deployable because maybe it has a different cadence of what you want to release. The others can be separate.

You start it off as a monolith. You discover what your boundaries are. And because you might have the need and enough value to make it independently deployable, you can.

So, as long as again, the trade off and the cost is worth it. But that’s specifically because you need something independently deployable, possibly scalable.

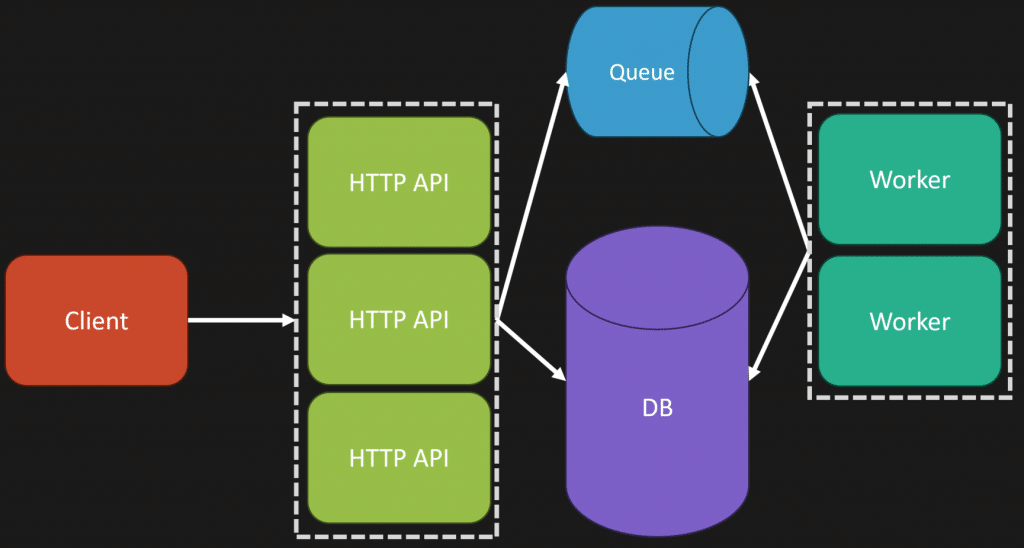

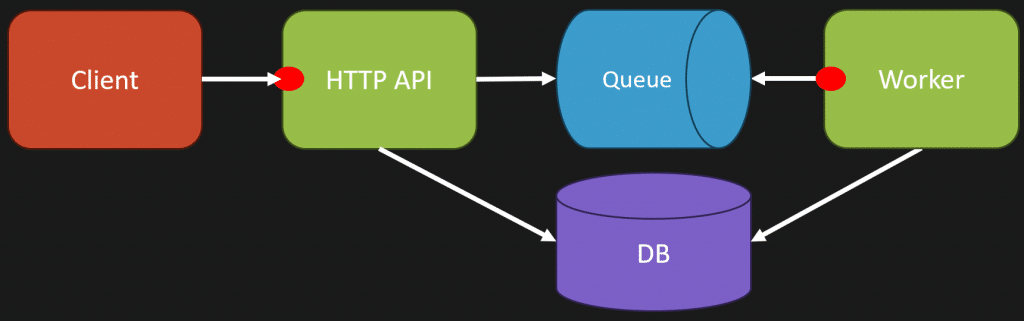

“Monoliths Don’t Scale” Is Not Real

“The claim that monoliths don’t scale is one of the dumbest lies in modern engineering folklore.”

I agree.

And the simplest example of this is with the web queue worker pattern.

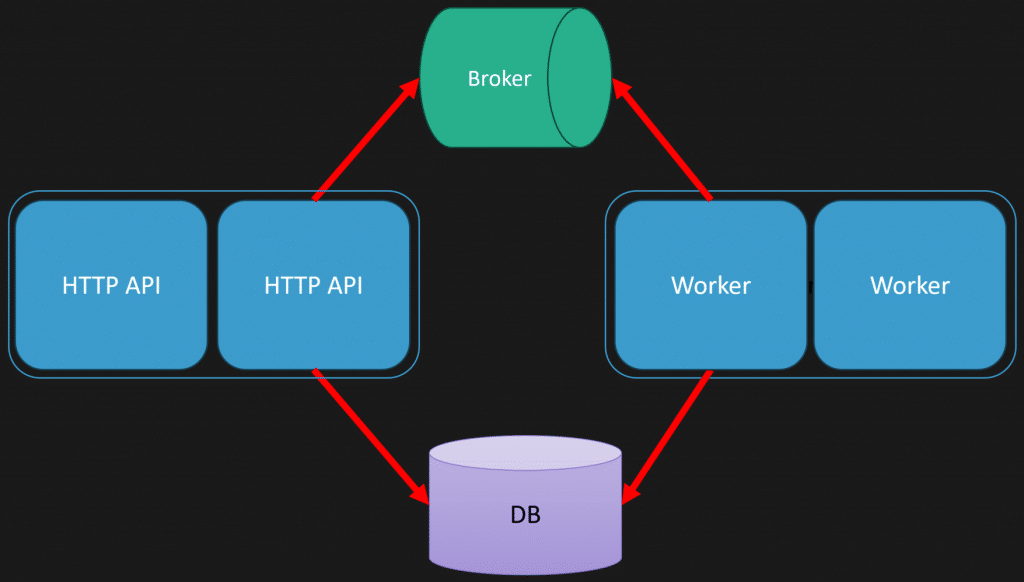

Going back to when I said logical isn’t physical, you can have more than one entry point, or one executable, or one deployable unit, even in your monolith.

In my example here, I have one that’s our HTTP API, could be sitting behind a load balancer, and we’re scaling that out.

But I also have the exact same codebase, but instead its entry point is actually listening to a queue, a message broker, an event log, and performing work asynchronously.

Now, on our database side, you could scale that up. You could scale that out depending on what type of database you’re using, or introducing read replicas.

But there’s so many different ways that you can scale a monolith.

Jumping to independent deployability isn’t necessarily the first thing you need to do for scale.

Stop Making This About Microservices vs Monoliths

Now, while I agree with a lot of what he wrote, I think it’s kind of silly that we’re still even talking about this this way.

This isn’t “microservices good” or “microservices bad” in a small team or whatever context. How about we start talking about the actual underlying issues here.

Adding physical boundaries has a cost. That’s what he was describing. Is the cost worth it? Well, you need to understand what the actual trade-offs are and what the value is.

I think we need to get totally beyond this, because fundamentally, at the root of almost all of this is poor design and poor coupling.

Even if you decided, I’m going to go all in on microservices, and let’s say you lived in an existing system and you knew what those logical boundaries should be, if you designed it correctly, you would not experience the pain of “I have to navigate all these different services to understand this end to end flow and I don’t get this context.”

You wouldn’t have that problem because your services are contained to actually what they do. They’re a part of a workflow. Are they part of a larger workflow. Yes. Would you have all this temporal coupling everywhere like a spaghetti hot distributed mess? No, you wouldn’t.

We’re talking about what people are implementing and how they’re doing it poorly as being like, let’s not do this because people are doing it poorly.

That’s not the case.

Manage coupling and understand when that network boundary is worth it.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Loosely Coupled Monolith

- Aggregates in DDD: Model Rules, Not Relationships

- All Our Aggregates Are Wrong

The post Why “Microservices” Debates Miss the Point appeared first on CodeOpinion.

]]>The post Aggregates in DDD: Model Rules, Not Relationships appeared first on CodeOpinion.

]]>In a recent video I did about Domain-Driven Design Misconceptions, there was a comment that turned into a great thread that I want to highlight. Specifically, somebody left a comment about their problem with Aggregates in DDD.

Their example: if you have a chat, it has millions of messages. If you have a user, it has millions of friends, etc. It’s impossible to make an aggregate big enough to load into memory and enforce invariants.

So the example I’m going to use in this post is the rule: a group chat cannot have more than 100,000 members.

The assumption here is that aggregates need to hold all the information. They need to know about all the users. But that’s not what aggregates are for!

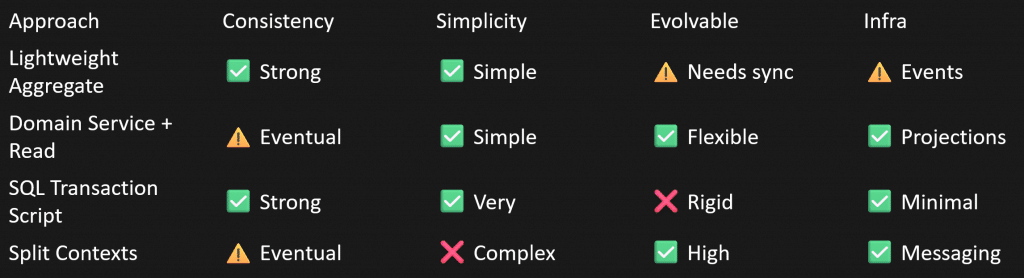

I’m going to show four different options for how you can model this. One of them is not using an aggregate at all. And, of course, the trade-offs with each approach.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

The Common Starting Point (and the Trap)

So this is how people often start with aggregates in DDD, which is directly what that comment was talking about. Say we have a GroupChat class. This is our aggregate. We’re defining our max number of members as 100,000. And then we have this list, this collection of all the members, all the users associated to this group chat.

Now, this user could itself be pretty heavy in terms of username, email address, a bunch of other information, and maybe some relationships with it.

Then, for our method to add a new member, all we’re doing is checking to make sure we’re not exceeding 100,000, and then we throw.

This is where people start. But here’s the problem with it.

It may feel intuitive, but it’s a trap. It’s a trap because you’re querying and pulling all that data from your database into memory to enforce a very simple rule.

The big mistake here is: we’re modeling relationships, not the rules.

We’re building up this object graph rather than modeling behaviors.

Option 1: Store Only the Count

An alternative is to just record the number of members of the group chat. That’s actually the rule we’re trying to enforce. We don’t need to know who is associated to the group chat. We don’t need to know which users, just the total number so we can enforce the rule.

The obvious benefit is we solved the problem: we don’t have to load all those users into memory. This is going to be very fast.

The trade-off is if you do need to track which users are part of which group, you’ll have to model that separately.

Option 2: Enforce the Rule Above the Aggregate

Another option, if you feel storing a count is too risky because it could get out of sync, and you’re already recording which users are associated to which group, is to push the invariant up a layer, above the aggregate, into some type of application request or application layer.

Here I’m using some kind of read model or projection to get the number of users. Because it’s a projection, it could be stale. That’s the trade-off. Then we enforce the invariant there. If we pass, we add the user to the group chat.

A fair argument here is: “Well, really? We have some aggregates enforcing invariants, some application or service layer enforcing invariants, everything scattered everywhere.” But reality is: you have to enforce rules where you can do so reliably, not where it always feels clean and tidy in some centralized place. That’s not reality.

An aggregate can only enforce a rule if it has all the data it needs. And often your application or service layer isn’t just a pass-through. It shouldn’t be. It’s doing orchestration, gathering information and deciding whether a command should be executed.

Option 3: No Aggregate At All (Transaction Script)

This might sound surprising, but you don’t actually need an aggregate at all. Sometimes I advocate for using transaction scripts when they fit best.

That’s what I’m doing here: start a transaction. Set the right isolation level. Interact with the database. Do a SELECT COUNT(*). That’s going to be very fast with the right index. Lock if needed. Check the invariant. Insert the new record. Commit the transaction.

Simple.

Sometimes a simple problem just needs a simple solution, and a transaction script is very valid.

The trade-off here is if you’re in a domain with a lot of complexity and a lot of rules, this can get out of hand and hard to manage.

Option 4: Model Rules, Not Relationships

Another option I mentioned earlier is: stop focusing on relationships and focus on the actual rule.

What makes us say the group chat is the one that needs to enforce the rule? Maybe there’s actually the concept of group membership, and group chat is about handling messages. These have different responsibilities.

That’s really what I want to emphasize: you don’t need one model to rule them all. You can enforce something in one place and something else somewhere else. You can have a group membership component enforcing whether you can join, and group chat is just about messages.

There are all kinds of approaches you can take, and they all have different trade-offs. Given the rule and how you’re modeling, pick what fits. It does not need to be an aggregate just because dogma says so.

Maybe it’s a transaction script. Maybe it’s an aggregate. Use what fits best.

When you’re modeling something like the group chat example, start with the rule. Ask yourself: Where can I reliably and efficiently enforce this rule?

Not: “How can I convert this schema into my object model?”

Too long didn’t read/watch: model rules, not relationships.

Join CodeOpinon!

Developer-level members of my Patreon or YouTube channel get access to a private Discord server to chat with other developers about Software Architecture and Design and access to source code for any working demo application I post on my blog or YouTube. Check out my Patreon or YouTube Membership for more info.

Related Links

- Minimal APIs, CQRS, DDD… Or Just Use Controllers?

- Domain-Driven Design Misconceptions

- All Our Aggregates Are Wrong

The post Aggregates in DDD: Model Rules, Not Relationships appeared first on CodeOpinion.

]]>The post Domain-Driven Design Misconceptions appeared first on CodeOpinion.

]]>Domain-Driven Design misconceptions often come from treating DDD like a checklist of patterns. Have you ever looked into Domain-Driven Design and thought, “Wow, this is totally overkill”? Well, you’re not alone. And I kind of agree, but not for the reasons you might think.

I say you’re not alone because of this meme I did a video about that keeps giving. Somebody replied, “Learning .NET DDD sent me back to learning MVC. It’s so stressful.” I was kind of confused by this, and then somebody else was as well, saying, “What is the connection from DDD to MVC? It’s design patterns, I think.”

And there’s the smoking gun.

YouTube

Check out my YouTube channel, where I post all kinds of content on Software Architecture & Design, including this video showing everything in this post.

I had a feeling this was going to happen, although I thought maybe, hey, it’s 2025 and we’ve gotten past this now. But clearly not, because a lot of people still think it’s about a checklist. A checklist of patterns you have to apply rather than it simply being a matter of understanding the domain, understanding the business, and modeling it.

The Code We’ve All Seen

Tell me you haven’t run into this.

I’m using the example of a shipment. We have this UpdateShipmentStatus command where we take a shipment ID and what the status is. That’s probably invoked from some MVC controller or endpoint.

Then we have this handler that’s invoked where we pass that command in. What are we doing here? Oh, there’s a repository where we’re getting the shipment. Then we call UpdateStatus and save it.

Let’s take a look at what UpdateStatus does.

Almost nothing. Really just changing the property.

Tell me you haven’t seen this before.

I’ll give you another example you can probably relate to.

Let’s say we have a Customer aggregate. It’s the aggregate root. It’s a domain entity. It has relationships to the order history, the addresses, maybe when you’re looking at this aggregate it also publishes domain events.

Pretty impressive, right?

It’s using all the DDD lingo. You’re looking at this code. It has the relationships. Sounds great.

Not really.

Because it’s not capturing any business logic. Any behavior at all. What it’s really doing is just capturing structure of data. That’s it.

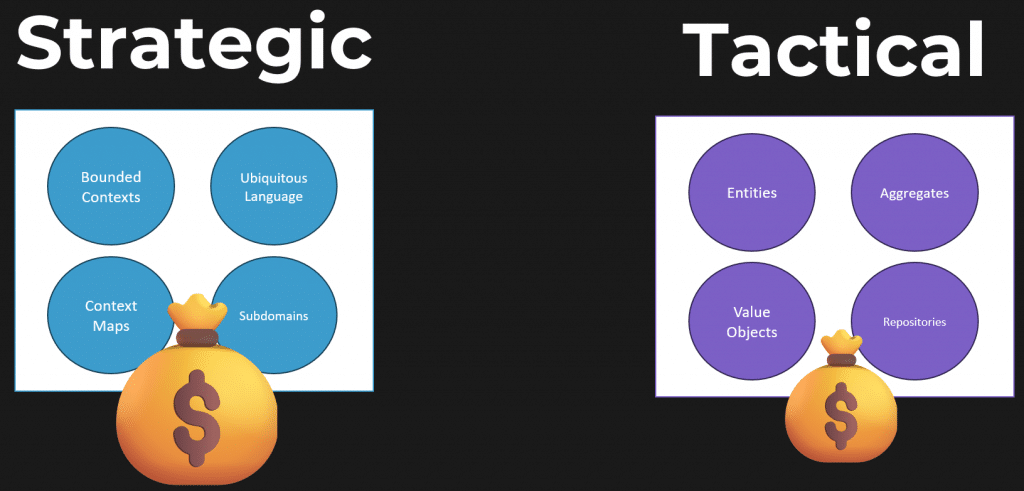

Domain design is not about design patterns. But a lot of people think it is. Which ties back to why people think it’s complicated. They think they have a checklist of patterns, entities, aggregates, value objects, repositories, shared kernel, all these things they read about, thinking, “I need all this stuff to apply DDD.”

And guess what?

You probably aren’t in a domain that even warrants it.

That’s why it seems complicated. Like my code example that needed none of those patterns.

It’s not about design patterns. It’s about the language you use within that domain, the workflows involved, the business logic, and the domain rules.

It’s Called Domain Driven Design

What I’m about to say may sound ridiculous, but take a step back for a second.

Domain Driven Design.

Not pattern driven design.

Not aggregate repository driven design.

Domain. Driven. Design.

It’s in the title. What do you think you’d actually be focusing on?

Probably the domain.

Domain-Driven Design misconceptions have a lot to do with the content published online. To be fair, a lot of the content published around DDD is getting. It doesn’t focus only on the tactical. It talks about the strategic, the stuff I’m talking about: bounded contexts, ubiquitous language, subdomains, context maps. All that.

But people latch onto the tactical. They see entities, aggregates, value objects, and want to disregard everything else and just focus on patterns.

Where DDD Actually Shines

Where DDD shines, in my opinion, is around complexity of a domain, specifically workflows.

Let me give you a simple example in the shipment world.

There’s a whole workflow and lifecycle that a shipment might go through. There’s a business process:

- The order is dispatched.

- The truck or vehicle doing the pickup arrives at the shipper.

- The freight is loaded onto the vehicle.

- It departs from the shipper.

- It arrives at the consignee.

- It’s unloaded and delivered.

I’m simplifying this, but you can imagine multiple shipments for multiple pickups with multiple deliveries, where things are split, part of the freight goes here, another part goes there. It can get very complicated. But there’s a lifecycle. There’s a workflow.

This is where DDD shines.

And you’ll notice in that workflow, I was using language that’s very domain-specific that anyone in it would understand. It wasn’t “update shipment,” like my initial code example where I was just setting a status. It was specific about events and actions that actually occurred.

That’s what DDD is for: capturing decisions, transitions, rules.