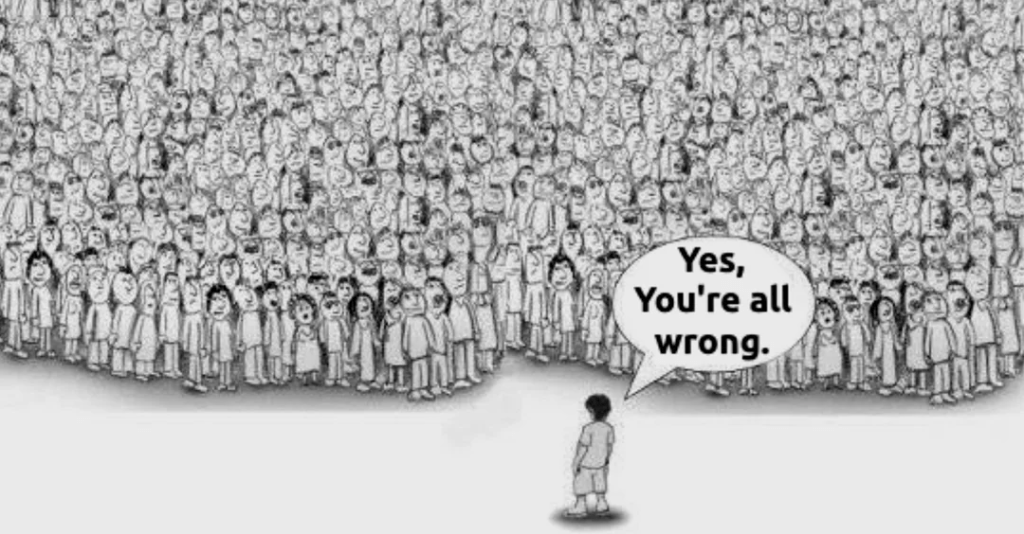

Ever notice how, when someone asks for your opinion on something, you immediately answer Good or Bad, as if no other option exists?

We are conditioned all our lives to think in terms of Good and Bad, Right and Wrong, Black and White. Along the way, we forgot that we can be neither of them: we can be Neutral.

Let’s take a very common example: a friend rants about something that happened between them and another person, then asks what you think about what that person did to them. Immediately, you jump to a conclusion about whether it was right or wrong, based only on the information your friend provided. Mostly, we shape our response around our friend’s own reaction. If they are upset and complain, we usually take their side and join them in their anger toward the other person. If the same friend has good things to say, we echo that as well and praise the other person. In this whole situation, we are not first-hand witnesses who saw what happened, yet without realizing it we take our friend’s side, because they are, of course, our friend. We should be supporting them after all, right?

These types of incidents have happened to me many times, and I have often been put on the spot and had to respond. Do I like responding in such situations? Hell, no, but in many of them I almost unconsciously nodded my head, followed along, and supported the person, not knowing the whole story. We may even come to hate the other person, having accepted one side of the story as fact. All of this happens unconsciously.

Now, what can go wrong in these types of situations?

You are unconsciously being conditioned by other people’s opinions. Even the person who rants about others doesn’t realize that they are creating this negativity. They do it unconsciously as well. Other people’s opinions don’t have to be your reality.

This is just one example. Numerous incidents like this happen to us every single day. If anyone puts you on the spot, asking you to answer yes or no or giving you a set number of choices, you are not obliged to pick one of them. You always have another choice: to stay Neutral, and to tell them that you do not have an opinion.

I learnt this lesson the hard way. Something that looks very insignificant can have a huge impact.

So, the next time you are asked for your opinion, remember that you have another option: being Neutral! Do not feel uncomfortable about saying that you don’t have an opinion. It will only help you and protect your peace.

That’s all from me today. Thank you for reading!