Visualizing Spatial Data – Show locations with a PushPin

March 19, 2013

After my last two posts (Creating a spatial report, Create an interactive report), I came across a feature I had never seen before. Apparently, if you add a point layer to your report, you get different layer options in the list.

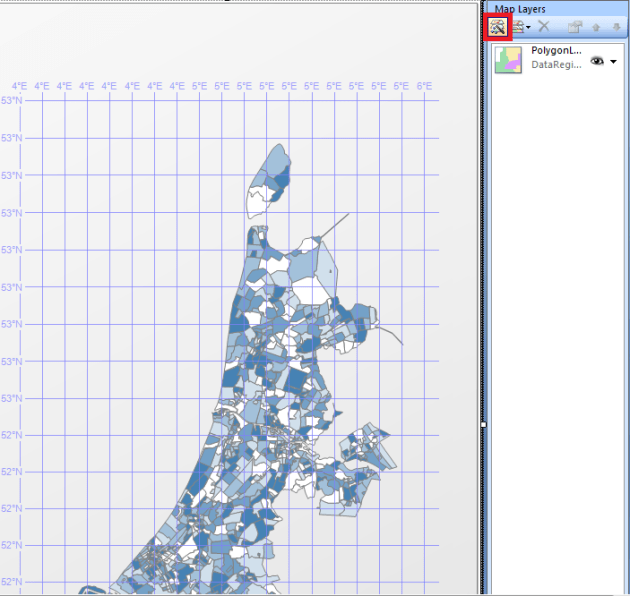

Starting with a single layer

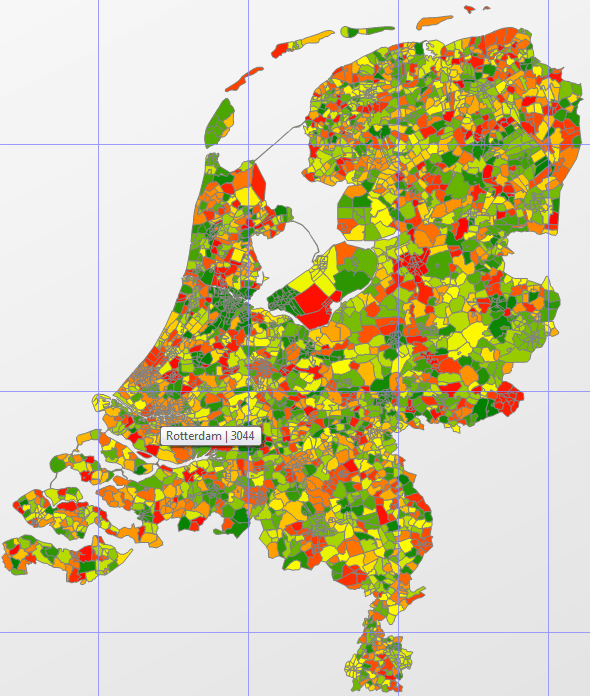

To start off easy, you could use the same steps I used to create my first report. This is a single-layer report with a full map of (in this case) the Netherlands.

The next step is adding a few pushpins. How about visualizing the three biggest cities in the Netherlands: Amsterdam (790,000 people), Rotterdam (616,000) and Den Haag (The Hague, 502,000).

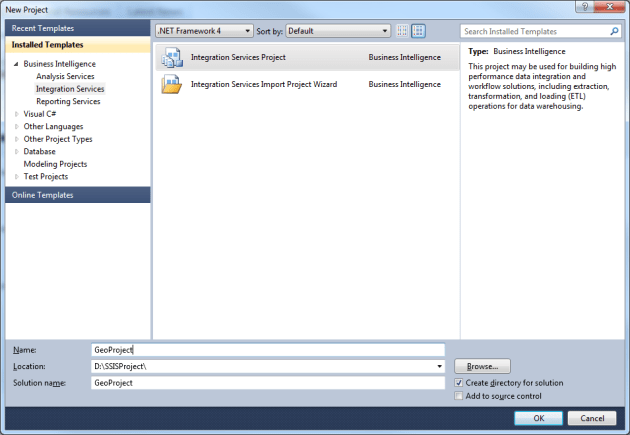

Creating a Point layer

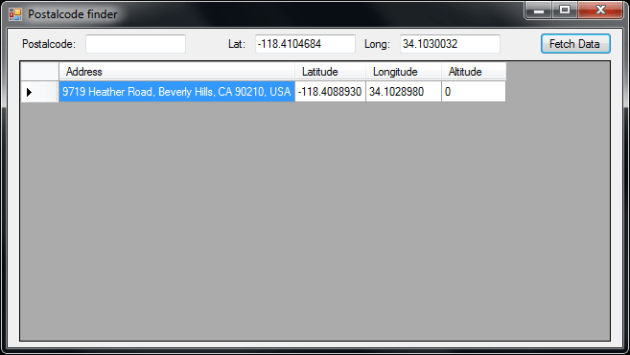

Before creating a point layer, you need to determine what data you want to present to your users. In my case, I chose the center of each city. This might not be the actual center, but I tried to get as close to the center as I could.
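The query itself isn't shown in this post, so here is a minimal sketch of what such a dataset query could look like. It assumes the base map uses WGS 84 latitude/longitude (SRID 4326) and the `geography` type. The column names are hypothetical, and the coordinates are approximate city centers added for illustration only.

```sql
-- Sketch only: one row per city, with a spatial point the map wizard can plot.
-- Coordinates are approximate city centers (latitude, longitude), not official values.
SELECT
    c.City,
    c.Population,
    -- geography::Point takes latitude first, then longitude, then the SRID
    geography::Point(c.Latitude, c.Longitude, 4326) AS GeoPoint
FROM (VALUES
    ('Amsterdam', 790000, 52.3702, 4.8952),
    ('Rotterdam', 616000, 51.9225, 4.4792),
    ('Den Haag',  502000, 52.0705, 4.3007)
) AS c (City, Population, Latitude, Longitude);
```

If your polygon layer is stored as `geometry` instead of `geography`, the point layer probably needs to match it. In that case `geometry::Point(Longitude, Latitude, 4326)` would be the equivalent call, with the X (longitude) value first.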

Now that you have your data, you can create the Point layer in your report. Start by double-clicking on your map control, and in the “Map Layers” window, click on “New layer wizard”:

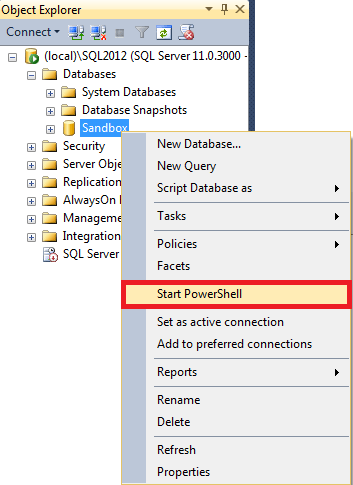

Now choose the bottom option “SQL Server spatial query”, and click next.

Choose the bottom option to add a new dataset to your report, and click next.

Choose the data source that’s already in your report, and click next.

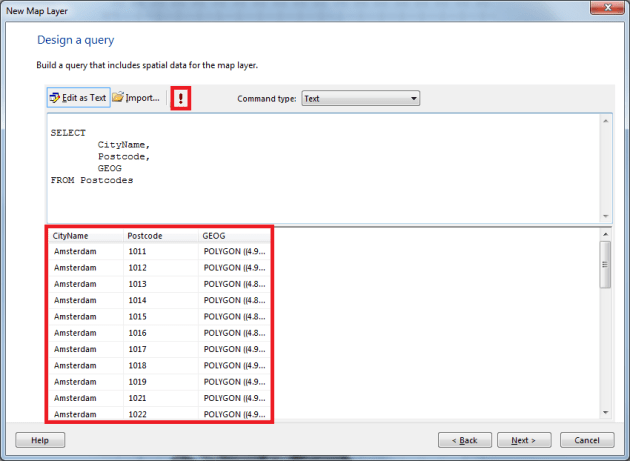

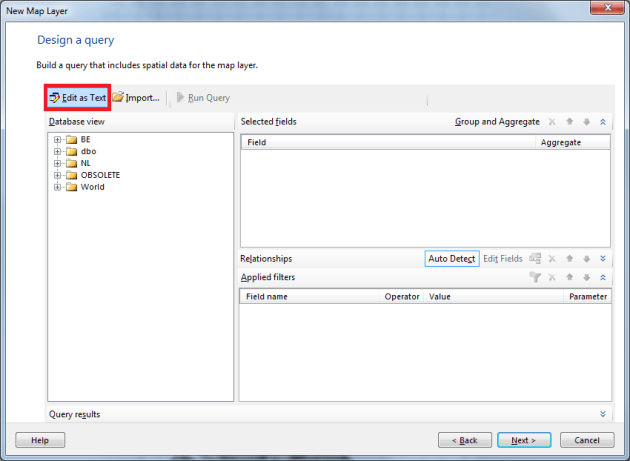

Now click on the option “Edit as Text” in the query designer:

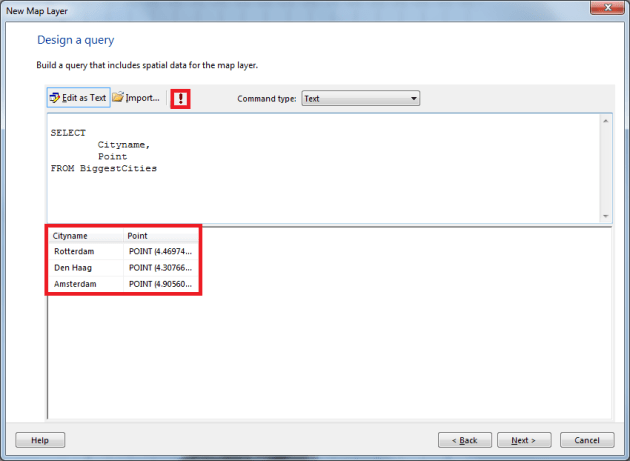

Type or paste your query in the editor, and press the Run button. Once you’ve done that, you’ll see the result in the bottom window:

In the next window, make sure you add a Point layer, and not a Polygon layer:

Now you will see the new layers I mentioned before:

For this report, choose the bottom one: Analytical Marker Map, and click next.

In the next window, select the dataset that contains your points, and click next.

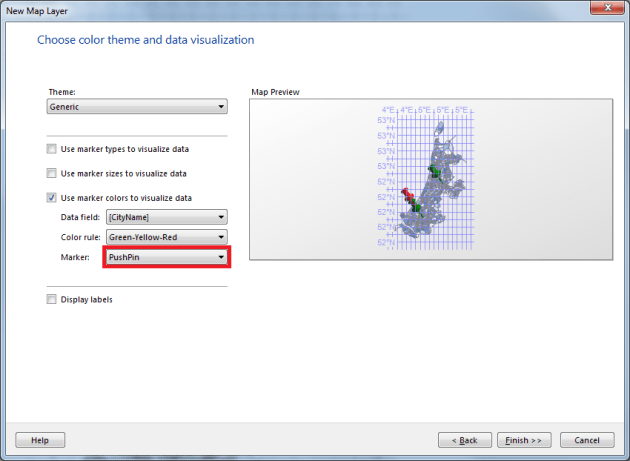

In the window with options, make sure you select the right type of marker (in this case PushPin):

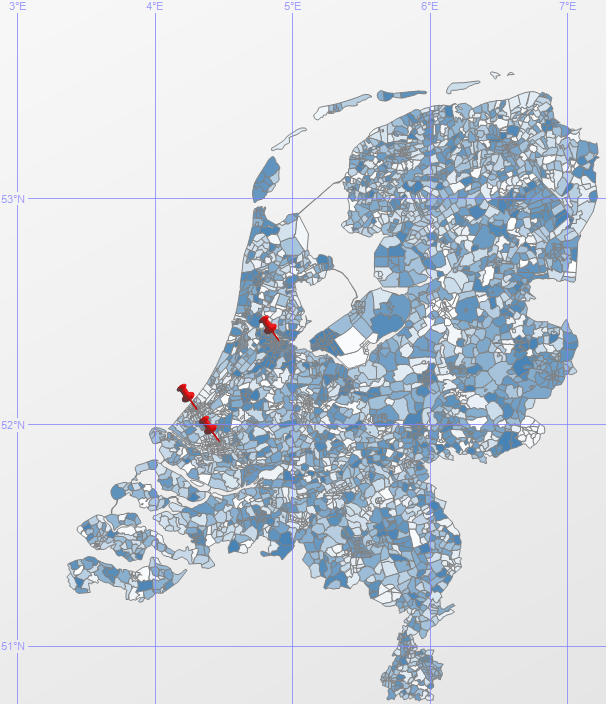

Once you’ve done that, click Finish. Now you see a two-layer map with three pushpins on it:

Using a custom PushPin

I can imagine that you might want to use your own pushpin image on your report. This is possible, but remember that a custom image might hurt the readability of your report.

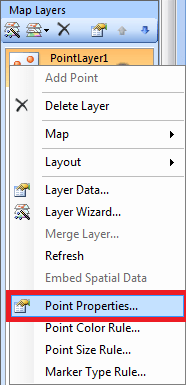

In the Map Layers menu, right-click your point layer, and click Point Properties:

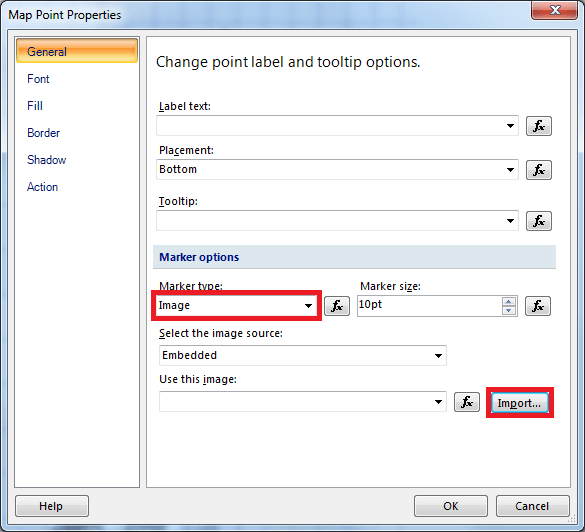

Now change your marker type from PushPin to Image, and click Import:

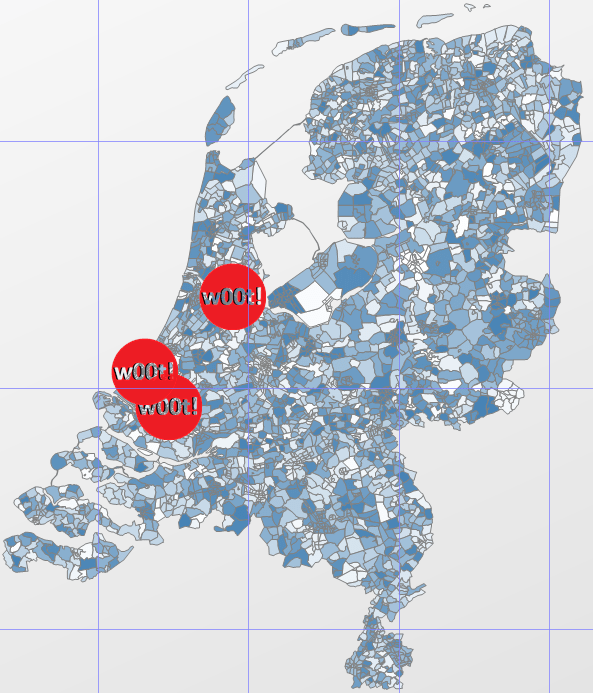

Once you select an image, its name will show in the “Use this image” text box. If you want to, change the “Marker size” so your logo appears larger on the map. Now if you click OK and run the report, you’ll see something like this:

And if you can’t resist, you can download this EPIC marker right here 😉