Redgate’s DLM Dashboard: The awesome continues

April 7, 2015 Leave a comment

The artist formerly known as SQL Lighthouse, underwent a HUGE overhaul, and now goes by the name DLM Dashboard. This tool allows you to: “monitor your database schemas and alerts you to schema changes, including database drift”. And even though I find Redgate’s tools awesome, I’m still going to be brutally honest in this blog (and I know they appreciate that!).

In the second half of this blog post, there is also a Q&A with a Product Marketer of Redgate. In that Q&A, he answers a few open questions that will clarify the product and the future of this product.

Download and install

When you download and install the Redgate DLM Dashboard, you get a number of things. The most important things the installation folder (C:\Program Files (x86)\Red Gate\DLM Dashboard 1) contains are a webserver and a monitoring service. These are used to host the webinterface of the DLM Dashboard, and the service needed for monitoring your environments and databases. There are also 3 Windows services installed: “DLM Dashboard Monitoring Service”, “DLM Dashboard Storage Service” and “DLM Dashboard Web Server”.

Configuring your environment

After the installation, the DLM Dashboard is started:

When you click on “Set up DLM Dashboard”, you are redirected to the configuration page. First you need to add a project:

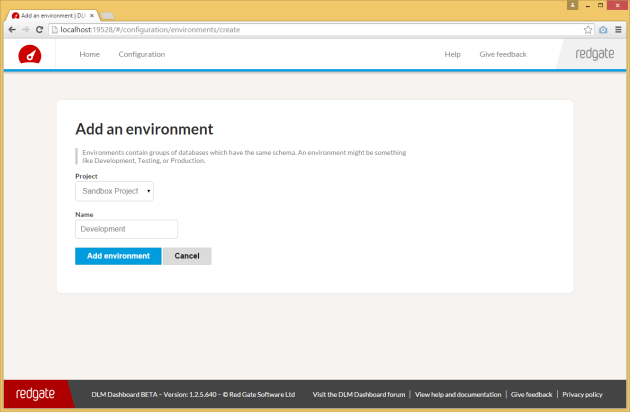

I created a Sandbox project, in which eventually all my environments will be configured. So my project will contain my 4 environments (DTAP). DTAP stands for Development, Test, Acceptance and Production. More on DTAP can be found here. In this test, I’m going to start with configuring a Development environment:

After adding that, you need to add a server:

I’m using the sa account to connect to this server, because it’s on a stand-alone server. If you start using the DLM Dashboard (or any other software), it’s bad practice to use the sa account. So if you want to use this in your environment, don’t use the sa account!!!

In the next screen you see, you get the question if you want to run the monitoring script yourself, or if you want the DLM Dashboard to run it:

When the script completes, you end up with a new database on the server that you configured in the dashboard. The database is named Redgate, and the name of the database can’t be changed at this point. For further information on this, check out the Q&A at the bottom of this post.

This database contains only 1 table named “DDL_Events”, to store all the changes that happened in your database(s).

The DLM Dashboard also creates a server DDL-trigger called “RG_SQLLighthouse_DDLTrigger” (which can be found under “Server Objects” -> “Triggers” in SSMS). This trigger registers all the changes on your database, and stores those changes in the Redgate database.

Now you need to combine all the configured items (Environment, Server and Database) in 1 last configuration:

I’ve executed these steps (configure an environment, add a server, and add a database) twice, to add a test environment as well. I’ve skipped the Acceptance and Production (which would complete the DTAP) in this test. So now my dashboard looks like this:

This is the default view when you just configured your databases. The schema of your database is basically a snapshot of your current database status.

Making changes, and marking versions

After you’ve configured the dashboard with all your environments (in my case 2 environment, just for testing purposes), it’s time to make some changes in your databases. Let’s add a table to the Sandbox database on development:

USE Sandbox GO CREATE TABLE dbo.TriggerSchemaChange (ID INT IDENTITY(1,1), Value VARCHAR(10))

If you wait for a few seconds (the dashboard will automatically refresh), you see that one of your databases is starting to “drift”:

If you click on “Review”, you get an overview of the changes that were made, and by who they were made:

In this case, I’ll tag this version as 1.0.0.1, and save that change. The dashboard changes, and the new version is shown. And if we execute the same change on the other database, the DLM Dashboard automatically recognizes the version:

You just need to acknowledge the “new” version on the test environment, just like on the development environment, and your dashboard looks all okay again:

The world ain’t all sunshine and rainbows…

Even though I think this is a wonderful way to keep an eye on your environment, there are still a few things that are missing in my opinion. I’ve asked Redgate these questions, and Andrew Denty (Blog | @andrewdenty), who is a Product Marketer at Redgate, was kind enough you answer these questions. This Q&A is published after getting permission from Andrew and Redgate. Thanks again Andrew!

1) What is the delay in tracking changes and refreshing the webpage, and can we change that?

The DDL trigger we install on the SQL Server puts entries into a table each time a database is changed.

Currently we poll the server every 13 seconds and query that table to see if there have been any changes.

This at the moment is not configurable, but should be as it is not a lot of work (and will probably be done before we leave beta). It’s also worth pointing out that you don’t need to hit refresh or anything – the dashboard live refreshes on its own.

2) Can we change the port number on which the webinterface runs (default 19528)?

Yes see http://documentation.red-gate.com/display/SL1/Changing+the+port+SQL+Lighthouse+uses

3) Can we change the database name (rename from RedGate to DBA for example), or log events to a dedicated database or server? And how does it react when the target server isn’t available?

At the moment no, the Redgate database is hard coded into the application, and is expected to be on the same server as the databases we are monitoring. The DDL trigger lives on the server so it always runs if the server is running. If DLM dashboard cannot reach the SQL server it will show you in the UI the server is not available. When it becomes available again DLM dashboard will check if there has been changes whilst it has been unavailable’.

4) Why do you use a DDL trigger, instead of Extended Events?

In SQL Lighthouse, because our DDL Trigger runs when a change happens, and crucially because it runs synchronously with the change, i.e. the change is temporarily blocked until we return. Therefore we not only get the information that we’re given, but we can also ask for other information, like the:

1. Accurate date it happened

2. The application that made the change

3. The IP address that made the change

4. The nest level, e.g. was this a change made directly, or did a change run a DDL Trigger + that DDL Trigger made this change

5. The transaction ID to help us group changes together

In contract, events run asynchronously, so by the time we see the message on the queue, the information above *may* still be queryable, but will probably not be available, and we can’t rely on it. The plus side of events is that they can’t break anything, because they run asynchronously, whereas if the DDL Trigger throws an error it’ll break what the user is doing.

In summary, we want the extra information so had to go with DDL Triggers.

5) The configuration limitations, are these just in the beta? The current configuration limitation is 5 database per environment

At the moment the beta isn’t designed to scale. We’re going to be working on improving the performance and scalability over the coming month. When we leave beta we’re planning to have a pro and the current free version. The pro version will allow you to monitor more instances and databases than the free version which will have some limitations. We’re still working out the exact details though.

6) Is there any SQLCompare integration planned? So for example, an overview of the differences between 2 environments (Development and Test), or syncing of 2 databases on different environments?

Yes, we’d like to add this to the Dashboard (assuming you have SQL Compare as well). We’d like to do this later this year– but we’re being agile and responding to feedback so we might change our plans and do something else first. Please do vote this up on UserVoiceas we’re using that to help us decide what to do next.

7) What is the plan on Octopus Deploy integration? We’re looking into that for automatic deploys, and it would be great to deploy a version from DLM Dashboard for example

Triggering deployments from the Dashboard isn’t something we have planned, though we do want to help be part of your release management process. I’d like us to be able to report back to Octopus if there has been drift away from the release it thinks is deployed (e.g .a hotfix has been made directly on production), so that you can get those changes back into process. Our SQL Releaseproduct (which is a database deployment plugin for Release Management tools like Octopus) may well use elements of the dashboard in the future.

Bugs

There are still a few bugs in the software:

– If you remove your project, the whole dashboard is empty, the database monitoring is still active, but you’re not asked if you want to disable/remove it completely

– If you remove your database, the database monitoring is still active, and Redgate database isn’t removed

– If you’re adding a column to a table, 2 columns are marked as changed (bugfix in progress)

– Cancel button that doesn’t work on the email notification screen (bugfix in progress)

– Can’t mark your initial version to a certain name (for example, tag it as version 1.0.0.0) (bugfix in progress)

– If your machine name is long, it’s trimmed in the review changes screen (bugfix in progress)

– Sometimes the webservice doesn’t start after a reboot of the machine, and you need to start it manually (bugfix in progress)

As mentioned, most of these bugs are already fixed, and ready for the next release. Redgate is really fast with picking up on bugs and fixing them. So if you find any bugs, please let them know!

Recap

Looking at the possibilities of the DLM Dashboard, and the version that is available now, I’m pretty confident this can become a great product! Redgate haven’t announced the differences between the free and paid versions yet, but there will always be a free version. Also, knowing Redgate, getting the paid version won’t be the biggest problem to overcome. Their licenses are pretty affordable, and the tools are indispensable if you ask me.

I think Redgate discovered a whole new market with this. They are jumping on the “release early, release often” train, and they do it quite well on the first try! I’ve never seen database professionals do this, in all the companies I’ve seen in the past. Only the .NET developers did that, and we all mocked them for it. But now, we’re starting to see a shift in the market, and Redgate is willing to help us on this. And for that, I want to thank the people of Redgate!