Inspiration

The rise of social media has brought many benefits, but it has also led to an increase in harmful content, particularly hate speech. Witnessing the negative impact of such content on individuals and communities inspired us to create TikGuard. We wanted to leverage technology to foster safer online environments and protect users from harmful interactions. Our goal was to develop a tool that could accurately identify and assess the severity of hate speech, ensuring prompt action and creating a more inclusive digital space.

What it does

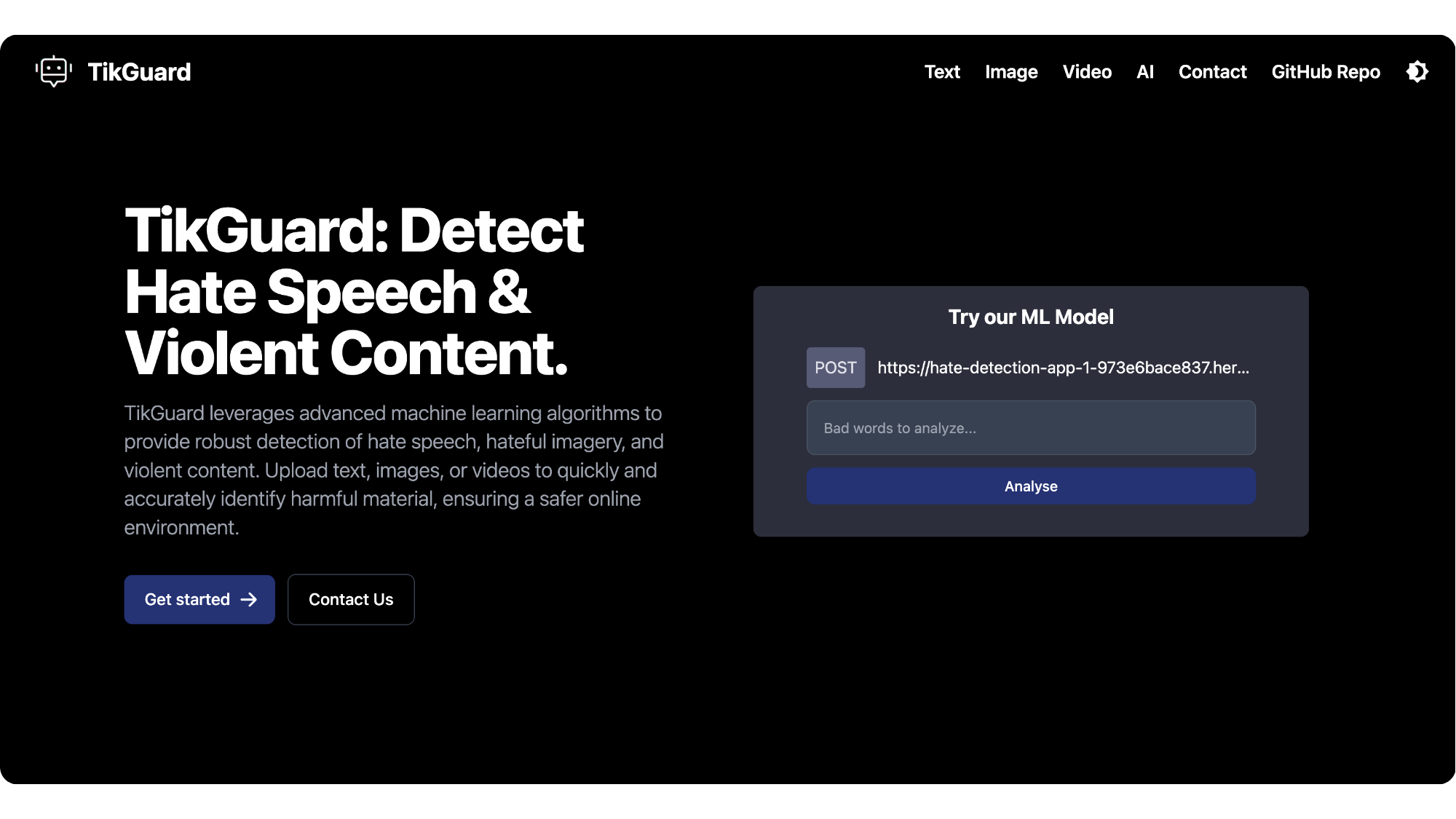

TikGuard is a machine learning system that detects hate speech and other hateful content and rates its severity. It analyzes text in real time, flags harmful language, and categorizes it by severity. These assessments let moderators and automated systems respond proportionately, from issuing warnings to removing content. TikGuard also integrates with various APIs and ML models to offer a more comprehensive solution for online safety.
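As a rough illustration of how a severity assessment might be turned into a moderation action, here is a minimal sketch. The `[0, 1]` score range, the `Action` names, and both thresholds are assumptions made for this example and are not taken from TikGuard itself.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    REMOVE = "remove"


# Hypothetical thresholds; a real deployment would tune these on labeled data.
WARN_THRESHOLD = 0.4
REMOVE_THRESHOLD = 0.8


def choose_action(severity: float) -> Action:
    """Map a severity score in [0, 1] to a moderation action."""
    if not 0.0 <= severity <= 1.0:
        raise ValueError("severity must be between 0 and 1")
    if severity >= REMOVE_THRESHOLD:
        return Action.REMOVE
    if severity >= WARN_THRESHOLD:
        return Action.WARN
    return Action.ALLOW
```

Keeping the thresholds in one place makes it easy for a moderation team to adjust how strict each tier is without touching the model.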

How we built it

Building TikGuard involved several key steps:

Machine Learning Model: We built the model in Python with the scikit-learn library. It was trained on a diverse dataset of text samples, ranging from neutral content to varying degrees of hate speech, so that it could both detect hateful text and categorize its severity.

Development Environment: We utilized Jupyter Notebook for data analysis, model training, and iterative refinement, allowing for interactive and efficient development.

Deployment: The model was deployed using Heroku, enabling scalable and reliable access to TikGuard’s functionalities.

Website Development: To demonstrate the ML model and other available APIs and models, we built a user-friendly website using Next.js. This site showcases TikGuard’s capabilities and allows the public to interact with the system, providing real-time demonstrations of its effectiveness.
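The model-building step can be sketched with scikit-learn roughly as follows. This is an assumed baseline (TF-IDF features feeding logistic regression), not necessarily the architecture TikGuard uses; the toy sentences, the two labels, and the `assess` helper are placeholders invented for the example.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline

# Toy placeholder data; the real training set is far larger and more varied.
texts = [
    "have a great day everyone",
    "thanks for sharing this video",
    "you people are worthless and should leave",
    "I hate everyone from that group",
]
labels = ["neutral", "neutral", "hateful", "hateful"]

# Assumed baseline: TF-IDF features followed by a linear classifier.
model = make_pipeline(TfidfVectorizer(), LogisticRegression())
model.fit(texts, labels)


def assess(text: str) -> dict:
    """Return the predicted label and the probability assigned to each class."""
    probabilities = model.predict_proba([text])[0]
    return {
        "label": str(model.predict([text])[0]),
        "scores": dict(zip(model.classes_.tolist(), probabilities.tolist())),
    }
```

A function like `assess` is the kind of entry point a deployed service or the demo site could call, with the per-class probabilities serving as a rough severity signal.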

Development Tools

- scikit-learn

- Next.js

- Python

Challenges we ran into

Developing TikGuard was not without its challenges. One significant hurdle was the diversity of hate speech, which varies widely in context, language, and subtlety. Getting the model to detect and assess these variations accurately required extensive training and continuous refinement. A second, constant challenge was tuning the model's sensitivity: a model that flags too readily produces false positives on harmless text, while one that flags too cautiously misses harmful content.
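The sensitivity trade-off can be made concrete by sweeping a decision threshold and watching precision and recall move in opposite directions. The scores and labels below are made up for illustration, and `precision_recall` is a hypothetical helper, not part of TikGuard.

```python
def precision_recall(scores, labels, threshold):
    """Compute precision and recall when flagging every score >= threshold."""
    flagged = [s >= threshold for s in scores]
    tp = sum(f and y for f, y in zip(flagged, labels))
    fp = sum(f and not y for f, y in zip(flagged, labels))
    fn = sum((not f) and y for f, y in zip(flagged, labels))
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Made-up model scores and ground-truth labels (True = hateful).
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

for threshold in (0.3, 0.5, 0.7):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold:.1f} precision={p:.2f} recall={r:.2f}")
```

On this toy data, raising the threshold from 0.3 to 0.7 removes the false positives but lets one hateful sample slip through, which is the balance described above.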

Accomplishments that we're proud of

We are particularly proud of several accomplishments with TikGuard:

High Accuracy: Achieving a high level of accuracy in detecting and categorizing hate speech, ensuring reliable performance.

Scalability: Creating a system that can be integrated with various platforms, demonstrating its versatility and practicality.

Positive Impact: Receiving feedback from users and moderators about the effectiveness of TikGuard in creating safer online environments.

What we learned

Throughout the development of TikGuard, we learned the importance of continuous learning and adaptation. The landscape of online communication is constantly evolving, and staying ahead of new forms of hate speech requires ongoing monitoring and updates. We also gained valuable insights into the complexities of natural language processing and the ethical considerations involved in moderating online content.

What's next for TikGuard

Looking ahead, we plan to enhance TikGuard by expanding its capabilities to include more languages and dialects, ensuring global applicability. We also aim to improve the model's ability to understand context, further reducing false positives and increasing accuracy. Additionally, we are exploring partnerships with social media platforms and other online communities to implement TikGuard more widely, contributing to a safer and more inclusive internet for all.

Built With

- azure

- azurecontentmoderator

- azurecontentsafety

- emailjs

- expertai

- hatehoundapi

- nextjs

- python

- react

- scikit-learn

- sightengine
