I have just completed a draft of the book. It is the March 4 version on my homepage. CLICK HERE TO DOWNLOAD. (If you downloaded already, check you don’t have the earlier version cached.) I would very much appreciate any feedback on the book, including suggestions on things to include, especially if they fit well and are not overly technical. Please feel completely free to suggest your own results.

After collecting feedback, I plan to do one more pass and then it’s off to the press, so speak now ;-)

Here is the table of contents in case you want to know what the book is about before downloading:

0 Introduction

0.1 Teasers

0.1.1 Computing with three bits of memory

0.1.2 Randomness and derandomization

0.1.3 Proofs and delegating computation

0.1.4 Changing base losing no time and no space

0.2 Notation

0.3 Contents and comparisons

0.4 How to use this book

0.5 Acknowledgments

1 Time

1.1 Word programs

1.2 Complexity classes

1.3 You don’t need much to have it all

1.4 Composing programs

1.5 Universal programs

1.6 The fastest algorithm for Factoring

1.7 On the word size

1.7.1 Factoring with large words

1.8 The grand challenge

1.8.1 Undecidability and diagonalization

1.8.2 The hierarchy of Time

1.9 Problems

1.10 Notes

2 Circuits

2.1 The grand challenge for circuits

2.2 Circuits vs. Time

2.3 Cosmological, non-asymptotic impossibility

2.4 Problems

2.5 Notes

3 Randomness

3.1 Error reduction for one-sided algorithm

3.2 Error reduction for BPTime

3.3 The power of randomness

3.3.1 Verifying matrix multiplication

3.3.2 Checking if a circuit represents zero

3.4 Does randomness really buy time?

3.5 The hierarchy of BPTime

3.6 Problems

3.7 Notes

4 Reductions

4.1 Types of reductions

4.2 Multiplication

4.3 3Sum

4.4 Satisfiability

4.4.1 3Sat to Clique

4.4.2 3Sat to Subset-Sum

4.4.3 3Sat to 3Color

4.4.4 More

4.5 Power hardness from SETH

4.6 Search problems

4.7 Gap-Sat: The PCP theorem

4.8 Problems

4.9 Notes

5 Nondeterminism

5.1 Nondeterministic computation

5.2 Completeness

5.3 From programs to 3Sat in quasi-linear time

5.3.1 Efficient sorting circuits

5.4 Power from completeness

5.4.1 Max-3Sat

5.4.2 NP is as easy as detecting unique solutions

5.5 Alternation

5.5.1 Does the hierarchy collapse?

5.6 Problems

5.7 Notes

6 Space

6.1 Branching programs

6.2 The power of L

6.2.1 Arithmetic

6.2.2 Graphs

6.2.3 Linear algebra

6.3 Checkpoints

6.4 The grand challenge for space

6.5 Randomness

6.6 Reductions

6.6.1 P vs. PSpace

6.6.2 L vs. P

6.7 Nondeterministic space

6.8 An impossibility result for 3Sat

6.9 TiSp

6.10 Computing with a full memory: Catalytic space

6.11 Problems

6.12 Notes

7 Depth

7.1 Depth vs space

7.2 The power of NC2: Linear algebra

7.3 Formulae

7.3.1 The grand challenge for formulae

7.4 The power of NC1: Arithmetic

7.5 Computing with 3 bits of memory

7.6 Group programs

7.7 The power NC0: Cryptography

7.8 Word circuits

7.8.1 Simulating circuits with square-root space

7.9 Uniformity

7.10 Problems

7.11 Notes

8 Majority

8.1 The power of TC0: Arithmetic

8.2 Neural networks

8.3 Amplifying lower bounds by means of self-reducibility

8.4 The power of Majority: Boosting correlation

8.5 Uniformity

8.6 Problems

8.7 Notes

9 Alternation

9.1 The polynomial method over F2

9.1.1 AC0 correlates with low-degree polynomials modulo 2

9.1.2 Using the correlation to show that Majority is hard

9.2 The polynomial method over R

9.2.1 AC0 correlates with low-degree real polynomials

9.2.2 Sign-Approximating Maj-AC0

9.2.3 Using the correlation to show impossibility for Maj-AC0

9.2.4 AC0 has small correlation with parity

9.3 Switching lemmas

9.3.1 Switching I

9.3.2 Switching II

9.3.3 Proof of switching II

9.4 AC0 vs L, NC1, and TC0

9.4.1 L

9.4.2 Linear-size log-depth

9.4.3 TC0

9.5 The power of AC0: Gap majority

9.5.1 Back to the PH

9.6 Mod 6

9.6.1 The power of ACC0

9.7 Impossibility results for ACC0

9.8 The power of AC0: sampling

9.9 Problems

9.10 Notes

10 Proofs

10.1 Static proofs

10.2 Zero-knowledge proofs

10.3 Interactive proofs

10.4 Delegating computation: Interactive proofs for muggles

10.4.1 Warm-up: Counting triangles

10.4.2 Delegating NC

10.5 Problems

10.6 Notes

11 Pseudorandomness

11.1 Basic PRGs

11.1.1 Local tests

11.1.2 Low-degree polynomials

11.1.3 Local tests, II

11.1.4 Local small bias

11.2 PH is a random low-degree polynomial

11.3 Pseudorandom generators from hard functions

11.3.1 Stretching correlation bounds: The bounded-intersection generator

11.3.2 Turning hardness into correlation bounds

11.3.3 Hard-core sets

11.3.4 Derandomizing the XOR lemma

11.3.5 Encoding the whole truth-table

11.3.6 Monotone amplification within NP

11.4 Finding the hard core

11.5 Cryptographic pseudorandom generators

11.5.1 AC0

11.5.2 Circuits

11.6 Problems

11.7 Notes

12 Expansion

12.1 Edge expansion

12.2 Spectral expansion

12.3 Undirected reachability in L

12.4 What do expanders fool?

12.5 On the proof of the PCP theorem

12.6 Problems

12.7 Notes

13 Communication

13.1 Two parties

13.1.1 The rectangle method and the Equality function

13.1.2 Rounds: Pointer chasing

13.1.3 Randomness

13.1.4 Arbitrary partition

13.2 Number-on-forehead

13.2.1 Generalized inner product

13.2.2 The power of logarithmic parties

13.2.3 Pointer chasing

13.3 Problems

13.4 Notes

14 Arithmetic

14.1 Linear transformations

14.1.1 The power of depth-2 xor circuits

14.2 Integers

14.3 Univariate polynomials

14.4 Multivariate polynomials

14.5 Depth reduction and completeness

14.6 Alternation

14.6.1 The power of depth 3

14.6.2 Impossibility results

14.7 Problems

14.8 Notes

15 Structures

15.1 Static

15.1.1 Succinct

15.1.2 Succincter

15.1.3 Impossibility results by ruling out samplers

15.2 Dynamic

15.3 Problems

15.4 Notes

16 Tapes

16.1 On the alphabet

16.1.1 The universal TM

16.2 Multi-tape machines

16.2.1 Time vs. TM-Time

16.3 TMs vs circuits

16.4 The grand challenge for TMs

16.4.1 Communication bottleneck: crossing sequences

16.5 TM-Time hierarchy

16.6 Randomness

16.7 Sub-logarithmic space

16.7.1 The power of sub-logarithmic space

16.8 Problems

16.9 Notes

17 Barriers

17.1 Black-box

17.2 Natural proofs

17.2.1 Examples of proofs that are natural

17.2.2 Ruling out natural proofs under popular conjectures

17.3 Problems

17.4 Notes

18 Speculation

18.1 Critique of common arguments for P ≠ NP

A Miscellanea

A.1 Logic

A.2 Integers

A.3 Sums

A.4 Inequalities

A.5 Probability theory

A.5.1 Deviation bounds for the sum of random variables

A.6 Squaring tricks

A.7 Duality

A.8 Groups

A.9 Fields

A.10 Linear algebra

A.11 Polynomials

A.12 Notes

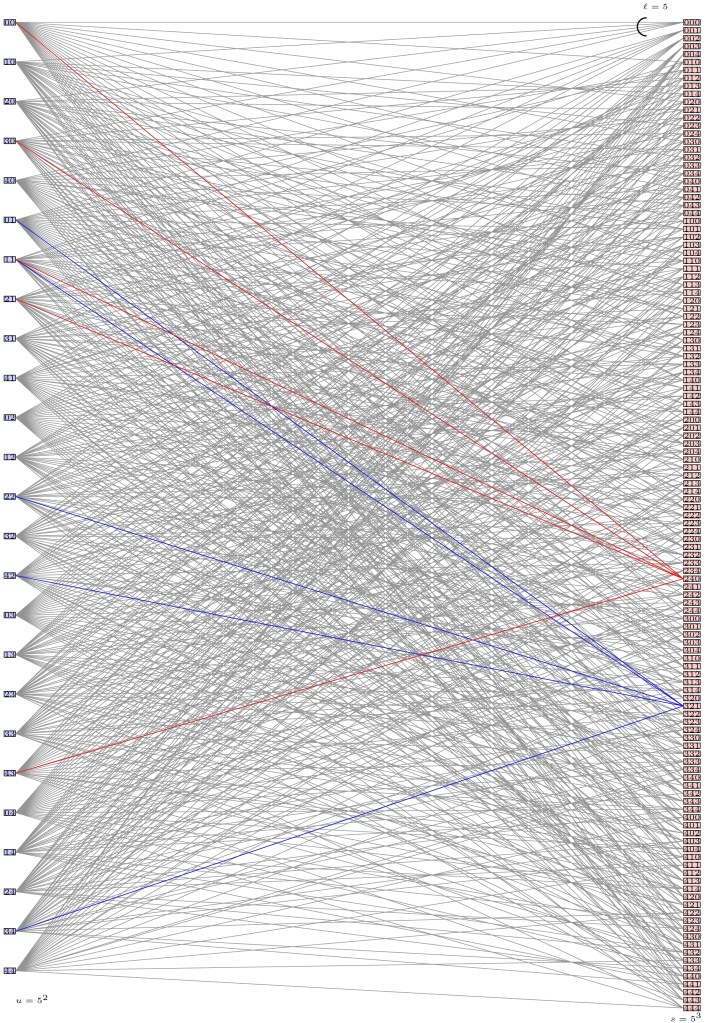

A Landscape

. This simulation was already known for 1-tape machines (did you know?), and it still isn’t known for RAMs (=Time). The result for 2 tapes is significant, as we know less about this model, for example we don’t have strong lower bounds.

. This simulation was already known for 1-tape machines (did you know?), and it still isn’t known for RAMs (=Time). The result for 2 tapes is significant, as we know less about this model, for example we don’t have strong lower bounds.

log log n). In

log log n). In