Home care is breaking. Medicaid waitlists stretch longer every month.

Schedulers burn out faster than 911 dispatchers. Clients lose coverage because one form got filed a day late. And somewhere in the chaos, a caregiver is driving across town to a shift that got canceled two hours ago.

AI can change all of this. The question isn't whether you'll adopt it—everyone will. The question is whether you'll use it to patch the old way of doing things, or to build something fundamentally new.

This is the choice every home care provider now faces. And like a famous scene from my favorite film, it comes down to two pills.

🔵 Take the blue pill, and AI becomes a better toolbelt for your admin team. Your schedulers still log into the EMR every morning. They still wake up on Saturdays to a flood of texts from caregivers calling out. The work gets a little faster, a little easier—but it's still the same work, done by the same people, hitting the same ceiling.

🟠 Take the orange pill, and AI stops assisting your operations. It starts running them. Calls get answered on the first ring, every time. Compliance doesn't slip through cracks because there are no cracks. Your people stop firefighting and start building—growing census, deepening referral relationships, actually talking to patients.

The blue pill measures quality the old way: a human voice on every call, even if half go to voicemail. Manual processes, because that's how it's always been done. A patchwork of vendors held together with duct tape and good intentions.

The orange pill measures quality differently: total capacity to deliver care. Zero dropped calls. Zero missed authorizations. Zero hours lost to screens when they could be spent with patients.

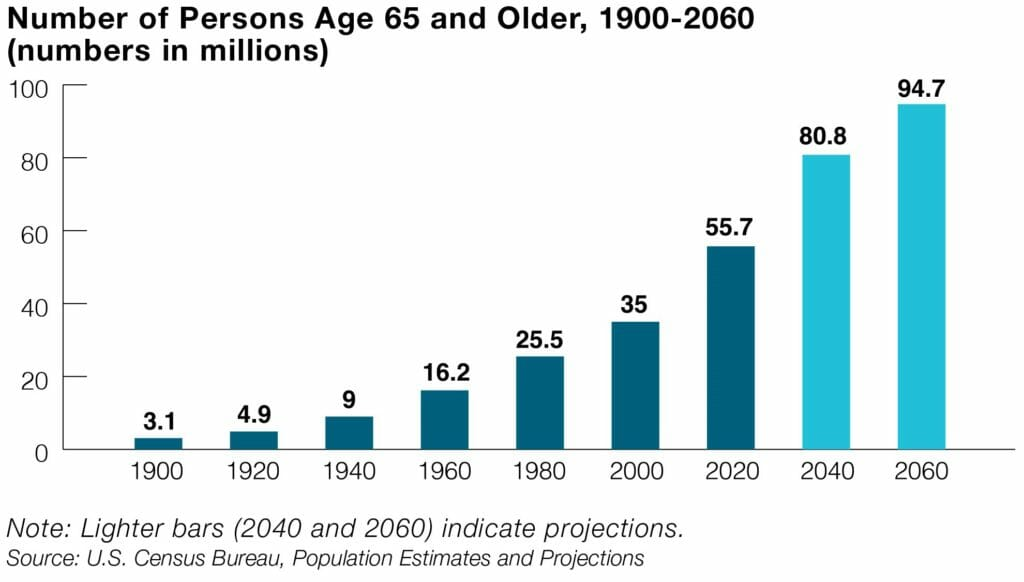

We're entering an era of accelerated pressure. By 2030, one in five Americans will be 65 or older—and the population of potential caregivers will have grown by just 1%. Meanwhile, Medicaid Fraud Control Units recovered $1.4 billion in 2024 alone, with personal care attendants accounting for 36% of fraud convictions. EVV mandates, billing audits, eligibility reviews—the compliance burden isn't shrinking. It's compounding.

The agencies that survive will be the ones whose operations can scale without adding headcount for every new patient.

The choice is yours. But we know which pill we'd take.

Strolls on the Presidio Promenade or nightclubs in Nolita; we all made the hard choices about where to live and build. The talk of remote work and “moving back home” faded like paper vaccine cards. We found our groove, got hitched, settled into brownstones and high‑rises. And yet a question keeps echoing from the burbs: who’s taking care of grandpa?

That’s the question millions of city‑dwellers will face this decade. And it isn’t as easy as booking a ride home. The harsh truth: no matter how high that TC (total comp) goes, finding the right caregiver for our parents is a problem bigger than money. The home front needs care and it’s time for our best minds to make that happen.

I think about Vinesh, a PhD dropout whose family immigrated when he was three. His father came on a student visa to study engineering in Kentucky. They lived the American dream: from middle class aspiration and entrepreneurship to elite education and a real retirement plan. Vinesh lived up to his parents’ ideals: hard work, dedication, service.

Then, in his thirties with a son of his own, came what no one planned for: a father with cancer, hundreds of miles away. At first, logistics didn’t matter. He flew home, met doctors, sorted insurance. The system, slowly, stubbornly, worked. His dad’s care team got him on the right treatment. Crisis averted.

But Dad wasn’t the same. And Mom had aged a decade in a few years. Vinesh wasn’t ready to give up the life he’d built in the Valley. He needed to be home. But “home” now meant two places.

So began his journey into home care. First call: Senior Helpers, the long‑respected private provider serving thousands. The first caregiver was new to the agency but handled Dad’s care plan well: groceries, appointments, even learning to play Carrom. Then the call‑outs started. A later visit was fine. Until it wasn’t. A no‑show. Then a different caregiver every week. His calendar filled with blocked time to interview other agencies. He quickly realized: this wasn’t an exception; it was the norm.

Senior living? Mom wouldn’t hear it. Home was non‑negotiable. And so the hard choices stacked up. His dreams in one hand, his family in the other. Onward he went, in pursuit of the perfect caregiver.

This is the hidden truth today and the visible future for many more. We dreamed of big city lives and dread the nightmare of our parents with no one to care for them. At Zingage, we’re a collective of builders who know this struggle intimately. We’re building to solve it. And while we’re making progress, it’s still Day 1.

Thousands of seniors don’t have a son like Vinesh or the resources for top quality care. There is a path forward. It starts by empowering the providers dedicated to caring for our parents and by convincing the best engineers in SF and NYC to aim their talent at the home front. If that’s you, let’s build for dad in Louisville.

One of our core values at Zingage is velocity. Startups are a dogfight and to win you need speed. But you also need direction.

We chose velocity because velocity is a vector. It is not just a measure of speed but also direction. Going fast only matters when you are going the right way. What most people miss is that velocity looks different for different people.

Building high velocity teams means understanding the role everyone plays in the collective velocity of the company. I have always understood this through the lens of rowing and how we built a crew. For those unfamiliar, crew is the ultra-WASP sport of northeast prep schools. It was one of my biggest motivators to attend Exeter. Coming from New York, the closest I had been to a boat was the Staten Island Ferry.

After my first year on the crew team I was invited to the varsity pre-season in March. We broke the ice with our oars in skintight spandex before spending hours on the Charles River. This was where I first saw how coaches selected the composition of boats, known as “crews.” Each crew had eight rowers and one coxswain. The big guys who looked like the Winklevoss twins sat in the middle. The lanky guys who looked like cyclists or cross country runners either sat in the stroke and two seat, setting rhythm for the rest of us, or they served as the bow pair, feeling every wobble of the shell and adjusting before anyone else noticed.

It is not easy to see why small changes in that composition can alter the boat’s velocity. The clearest way you learn this is when random pairs are sent to row two-man sculls. Take two inexperienced rowers from seat three and stroke, and the boat spins in circles. Scale that to eight rowers and even a slight misplacement can ruin your crew’s chances of reaching Henley.

When I think about velocity at Zingage, it’s never about how fast one engineer ships a feature. It’s about how the whole boat moves.

There’s a lot of noise about 996. We believe in it only as a gear, not a lifestyle, and only in two specific moments:

- The final sprint: the last 300 meters when the call comes and everyone empties the tank.

- Power sprinters: the engine seats that can throw down big watts on command during the body of the race.

Those two moments only work when each seat knows its job.

Your product and engineering leader is the coxswain. She sets direction. Her voice is the only one everyone hears, and when she calls for “ten hard” or “more from port,” you fucking pull because she’s the only person who can see the line we’re taking and what it will take to win.

Then there’s the stroke, usually the most senior engineer. The stroke sets rhythm and rate so the boat can sustain speed and still have a kick left. When the cox calls, the stroke lifts the cadence cleanly so everyone can follow without blowing up.

Next comes the Engine Room, aka the power seats. This is where many new grads and junior devs start. Your job here is torque: clean, heavy pulls in rhythm, plus short, violent bursts when the boat needs it. That’s what I mean by power sprinters. You don’t pace the race; that’s the stroke’s job. But you decide it by delivering coordinated power without throwing the set. Done right, this is 996 in micro: intense, time‑boxed effort that serves the boat, not a performative grind.

Finally, there’s the bow pair, our stabilizers. They’re the technicians who keep the set, feel the crosswind first, and make the subtle corrections that keep the shell running straight. In a startup, these are the quiet pros who keep quality high, spot risk early, smooth releases, and protect customers when things rock.

Velocity is a vector. It belongs to the whole crew. Know your seat, pull in time, and make the boat fly.

“Hello, this is Linda from MediCorp. How may I assist you today?”

While Meta plows billions of dollars into one‑shotting seniors to become fodder for the leviathan, healthcare builders are wading through the ugliest stretch of making care great for everyone. This is the part where frontline founders feel the pain of close‑but‑not‑quite models. From mechanical tone to medical‑code hallucinations, we’re so close and still uncomfortably incomplete—just shy of automation that doesn’t make you wince.

Welcome to healthcare’s uncanny valley. It hurts because we know what great looks like. We know how good it will be when voice AI sounds like the receptionist you’ve spoken with every week for years. We know how deliriously happy it feels when the paperwork isn’t work at all.

I dream of a world where health just happens—where regulations don’t slow my grandmother’s care and the nurse is listening, not note‑taking. A world where care coordination means house visits to patients, not mouse clicks in Epic. Our mission is to make that world real.

Most healthcare AI vendors will tout a perfect product. If this is what “perfect” looks like to them, we’re in trouble. In senior care, we benefit from an older ear that’s happy to hear any voice instead of a dial pad—even if it sounds a little too happy sometimes. Our customers know this is still Day 1, and they’re committed to the journey because any future is better than the present.

At the same time, the bar is higher for healthcare AI builders. We’re not judged like humans doing their best to keep up. We’re measured against do‑no‑harm at a scale that prizes coverage over novelty and stability above all. The companies we’re building now will orchestrate care for billions. Before we get there, we’ll keep doing the hard work in the bowels of infrastructure and prompting—to make it work, and make it human.

At Zingage we're doing this hard work to make AI actually work in Home Care. If you're interested in learning how we're tackling this problem with Zingage's data platform, check out our latest piece: How We Built a Data Platform for AI Agents.

When Zingage first launched, our data infrastructure looked like many early-stage systems: cron-driven ETLs, periodic syncs, and a warehouse where truth converged slowly. It worked, until we stopped reporting on care and started orchestrating it. Once we began making calls, scheduling visits, and updating records, the abstraction broke. Our software entered the physical world, and the physical world doesn’t wait for nightly jobs.

We saw the failures immediately: Voice AI calling caregivers about visits that were already closed. Operators overwriting each other’s edits. Automations racing humans across time zones on inconsistent state. None of these were application bugs—they were contradictions born from stale or conflicting data. We had treated consistency as a global property. In practice, it’s actor-specific. We rebuilt our data layer not just to scale operations, but to give AI agents a consistent and reliable view of the world they operate in.

From Batch to Proxy

We realized a shift was needed: stop asking “how fresh is our data?” and start asking “how coherent must it be for this actor, right now?” The answer changes by use case. The Voice interface needs near-instant coherence. The scheduling dashboard needs read-your-own-writes. The payroll export can live with delay.

The only architecture that could express those guarantees cleanly was a proxy layer. Every read and write now flows through Zephyr, our data plane. It doesn’t blindly fetch data; it reasons about which data to serve, how stale it can be, and what level of consistency each actor requires. Each request includes Consumer Directives: latency budgets, staleness tolerance, and consistency guarantees.

    type ConsumerDirectives = {
      maxStalenessMs: number;            // staleness tolerance
      requireReadYourOwnWrites: boolean; // RYOW guarantee
      latencyBudgetMs: number;           // latency budget
    };

When the proxy sees a read, it asks: “Given this actor’s contract, what’s the most coherent view I can safely return?” That framing—no single master, only policy-governed caches behind a proxy—reshaped everything.
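To make the contract concrete, here is a minimal sketch of how the three actors mentioned earlier (voice, dashboard, payroll export) might declare their directives. The actor names, the `directivesFor` helper, and every numeric value are illustrative assumptions, not Zephyr's actual configuration.

```typescript
// Illustrative only: actor names and numbers are assumptions, not production values.
type ConsumerDirectives = {
  maxStalenessMs: number;            // oldest snapshot this actor will accept
  requireReadYourOwnWrites: boolean; // RYOW guarantee
  latencyBudgetMs: number;           // how long the actor can wait for an answer
};

type ActorKind = "voice" | "dashboard" | "payrollExport";

const DIRECTIVES_BY_ACTOR: Record<ActorKind, ConsumerDirectives> = {
  // A live phone call needs near-instant coherence and a fast answer.
  voice: { maxStalenessMs: 2000, requireReadYourOwnWrites: false, latencyBudgetMs: 300 },
  // An operator must always see the edit they just made.
  dashboard: { maxStalenessMs: 30000, requireReadYourOwnWrites: true, latencyBudgetMs: 1500 },
  // A batch export can live with delay.
  payrollExport: { maxStalenessMs: 3600000, requireReadYourOwnWrites: false, latencyBudgetMs: 60000 },
};

// Look up the contract an actor sends along with each request.
function directivesFor(actor: ActorKind): ConsumerDirectives {
  return DIRECTIVES_BY_ACTOR[actor];
}
```

Keeping the presets in one table makes it easy to review, in a single place, what each actor has been promised.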

Defining Correctness in a World Without a Master

Once we abandoned a central datastore, we faced a deeper question: when there’s no monolithic database, what does correctness even mean? Traditional databases give you consistency for free through ACID transactions. Zephyr doesn’t have that luxury. EMRs, HR systems, and operator dashboards all modify overlapping slices of reality. We had to invent our own invariants—rules that make a decentralized system behave predictably.

The first rule: explicit ownership. Every field in our merged graph is annotated with a single physical writer; everything else is derived. That map underpins merges, conflict detection, and write routing.

    const OWNERSHIP: Record<string, "EMR" | "HRIS" | "ZINGAGE"> = {
      "visit.scheduledStart": "EMR",
      "visit.scheduledEnd": "EMR",
      "visit.timeEntries": "EMR",
      "practitioner.language": "HRIS",
      "assignment.status": "ZINGAGE",
    };
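As a rough sketch of how such a map can drive a deterministic merge: for each field, take the value from the system that owns it and ignore what other sources report for that field. `SourceName`, `SourceSnapshots`, `mergeByOwnership`, and the flat field-path keys are hypothetical names for illustration; a real merge would also need to handle nesting and missing owners.

```typescript
type SourceName = "EMR" | "HRIS" | "ZINGAGE";

const OWNERSHIP: Record<string, SourceName> = {
  "visit.scheduledStart": "EMR",
  "practitioner.language": "HRIS",
  "assignment.status": "ZINGAGE",
};

// One flat snapshot per source, keyed by the same field paths as OWNERSHIP.
type SourceSnapshots = Record<SourceName, Record<string, unknown>>;

function mergeByOwnership(snapshots: SourceSnapshots): Record<string, unknown> {
  const merged: Record<string, unknown> = {};
  // Sort the paths so the output order never depends on insertion order.
  for (const path of Object.keys(OWNERSHIP).sort()) {
    const owner = OWNERSHIP[path];
    const ownerSnapshot = snapshots[owner];
    if (path in ownerSnapshot) {
      merged[path] = ownerSnapshot[path]; // only the owner's value is trusted
    }
  }
  return merged;
}

// The EMR carries an outdated copy of assignment.status; it is ignored
// because ZINGAGE owns that field.
const mergedView = mergeByOwnership({
  EMR: { "visit.scheduledStart": "2025-01-15T09:00:00Z", "assignment.status": "stale-copy" },
  HRIS: { "practitioner.language": "es" },
  ZINGAGE: { "assignment.status": "confirmed" },
});
```

Because the result depends only on the map and the snapshots, two readers merging the same inputs always get the same view.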

At read time, Zephyr uses this ownership map to deterministically merge records. When external IDs align, identity is clear. When they don’t, we fall back to a learned policy that defines what counts as “the same visit”—matching patient, caregiver, and service day within an adaptive epsilon (ε). The epsilon adjusts per integration, based on observed rounding and clock-in drift.

    /* Fallback identity predicate for read-time reconciliation.
       ε (epsilon) is per-integration tolerance to account for drift. */
    SELECT
      external_id_a IS NULL AND external_id_b IS NULL
      AND caregiver_a = caregiver_b
      AND patient_a = patient_b
      AND date_trunc('day', start_a AT TIME ZONE biz_tz)
        = date_trunc('day', start_b AT TIME ZONE biz_tz)
      -- Widen visit B by ε on both sides; an interval cannot be added to a range directly.
      AND tstzrange(start_a, end_a)
        && tstzrange(start_b - make_interval(mins => :epsilon_minutes),
                     end_b + make_interval(mins => :epsilon_minutes))
      AS is_same_visit;
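The same idea can be expressed in application code, which may be handy for unit-testing the tolerance. This is a sketch under assumptions: `VisitCandidate`, `businessDay`, `isSameVisit`, and the sample visits are illustrative, and the overlap check widens one interval by ε on both sides, which is one reading of the tolerance.

```typescript
type VisitCandidate = {
  externalId: string | null;
  caregiverId: string;
  patientId: string;
  start: Date;
  end: Date;
};

// Calendar day of an instant in the business time zone, e.g. "2025-01-15".
function businessDay(instant: Date, timeZone: string): string {
  const parts = new Intl.DateTimeFormat("en-US", {
    timeZone, year: "numeric", month: "2-digit", day: "2-digit",
  }).formatToParts(instant);
  const pick = (kind: string): string => parts.find((p) => p.type === kind)?.value ?? "";
  return `${pick("year")}-${pick("month")}-${pick("day")}`;
}

function isSameVisit(a: VisitCandidate, b: VisitCandidate, epsilonMinutes: number, timeZone: string): boolean {
  if (a.externalId !== null || b.externalId !== null) return false; // fallback path only
  if (a.caregiverId !== b.caregiverId || a.patientId !== b.patientId) return false;
  if (businessDay(a.start, timeZone) !== businessDay(b.start, timeZone)) return false;
  const epsMs = epsilonMinutes * 60000;
  // The intervals overlap once b is widened by epsilon on each side.
  return a.start.getTime() < b.end.getTime() + epsMs && b.start.getTime() - epsMs < a.end.getTime();
}

// Two records of one morning visit, five minutes apart at the boundary.
const emrVisit: VisitCandidate = {
  externalId: null, caregiverId: "cg-1", patientId: "pt-1",
  start: new Date("2025-01-15T14:00:00Z"), end: new Date("2025-01-15T15:00:00Z"),
};
const appVisit: VisitCandidate = {
  externalId: null, caregiverId: "cg-1", patientId: "pt-1",
  start: new Date("2025-01-15T15:05:00Z"), end: new Date("2025-01-15T16:00:00Z"),
};
```

With a ten-minute tolerance the two records count as the same visit; with zero tolerance they do not, which is the behavior the per-integration ε is meant to tune.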

Writes enforce the same invariants in reverse. Every update carries an ETag (Entity Tag) so the proxy can detect concurrent edits and preserve read-your-own-writes. If two writers race, we return a deterministic 409 Conflict with a structured diff—never a silent overwrite.

    async function updateVisit(id: VisitId, patch: Partial<Visit>, ifMatch: string) {
      const current = await loadNormalized(id);
      // Optimistic concurrency: reject the write if the caller's ETag is out of date.
      if (current.etag !== ifMatch) {
        return {
          status: 409,
          body: {
            code: "ETAG_MISMATCH",
            current,
            diff: computeDiff(current, patch),
            hint: "Rebase and retry with new ETag",
          },
        };
      }
      // Only fields owned by Zingage may be edited through this path.
      for (const field of Object.keys(patch)) {
        if (OWNERSHIP[`visit.${field}`] !== "ZINGAGE") {
          throw new Error(`Cannot edit non-owned field: ${field}`);
        }
      }
      await sagaWriteToOwners(id, patch);
      // Reload so the response carries the new ETag for the caller's next write.
      const next = await loadNormalized(id);
      return { status: 200, body: next, headers: { ETag: next.etag } };
    }
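On the caller's side, a 409 turns into a rebase-and-retry loop. The sketch below uses a synchronous in-memory stand-in for the proxy so that it runs on its own; `makeFakeProxy`, `updateStatusWithRetry`, and the integer ETags are assumptions for illustration, and a real client would await a network call instead.

```typescript
type AssignmentState = { status: string; etag: string };
type WriteResult =
  | { status: 200; body: AssignmentState }
  | { status: 409; body: { code: "ETAG_MISMATCH"; current: AssignmentState } };

// In-memory stand-in for the proxy: accepts a write only if the ETag matches.
function makeFakeProxy(initial: AssignmentState) {
  let state = initial;
  return {
    read: (): AssignmentState => state,
    write(nextStatus: string, ifMatch: string): WriteResult {
      if (state.etag !== ifMatch) {
        return { status: 409, body: { code: "ETAG_MISMATCH", current: state } };
      }
      state = { status: nextStatus, etag: String(Number(state.etag) + 1) };
      return { status: 200, body: state };
    },
  };
}

function updateStatusWithRetry(
  proxy: ReturnType<typeof makeFakeProxy>,
  nextStatus: string,
  knownEtag: string,
  maxAttempts = 3,
): AssignmentState {
  let etag = knownEtag;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const result = proxy.write(nextStatus, etag);
    if (result.status === 200) return result.body;
    // Rebase: adopt the ETag from the conflict payload, then retry.
    etag = result.body.current.etag;
  }
  throw new Error("Gave up after repeated ETag conflicts");
}
```

A production caller would usually inspect the returned diff before retrying, since blindly reapplying a patch on top of someone else's edit can be the wrong call; the loop here only shows the ETag mechanics.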

Together, explicit ownership, adaptive identity, and conditional writes form Zephyr’s version of a transaction. And because Zephyr doesn’t maintain a canonical datastore, the system effectively operates as multi-master. AxisCare writes clock-ins, HRIS manages caregiver metadata, Zingage orchestrates assignments. Zephyr reconciles them all at read time. In practice, it behaves like a business-logic CRDT - conflict-free not by algebra, but by policy.

The Zephyr Data Plane

With correctness defined, we built the plane to enforce it in real time. Zephyr has four layers: Source Translators (one per EMR), the Proxy Layer (decision engine), a lightweight FHIR-inspired model (shared vocabulary), and Per-Actor Caches (snapshots governed by freshness policies).

The diagram below shows how Zephyr reads from an EMR, normalizes data into a unified schema, and streams it into the cache and event topics for consumption.

Each request is a policy invocation. Actors—voice, UI, or batch—define how fresh data must be, how much latency they can tolerate, and what consistency semantics they require.

    type ConsumerDirectives = {
      maxStalenessMs: number;
      requireReadYourOwnWrites: boolean; // RYOW guarantee
      latencyBudgetMs: number;
    };

When a read lands:

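    async function getVisit(id: VisitId, policy: ConsumerDirectives) {
      const cached = await cache.get(id);
      // Fresh enough for this actor's policy: serve straight from the cache.
      if (cached && !isStale(cached, policy)) return cached;
      // Stale entry: start a background refresh so the cache converges.
      if (cached) revalidateInBackground(id);
      // Answer this request from the source so the actor's staleness bound holds.
      return await fetchFromSource(id);
    }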

This yields bounded staleness per actor, not a single global metric. Voice AI trades detail for speed. Dashboards require stronger coherence. Batch jobs prioritize throughput. Each interaction is negotiated.
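`isStale` is not shown in the post. A minimal version, assuming each cached entry records when it was fetched and which version of the record it reflects, might look like the sketch below. The `CachedEntry` shape, the `version` counter, and the extra `nowMs` and `lastWrittenVersion` parameters are assumptions introduced for illustration, so the signature differs from the two-argument call in the read path.

```typescript
type ConsumerDirectives = {
  maxStalenessMs: number;
  requireReadYourOwnWrites: boolean;
  latencyBudgetMs: number;
};

type CachedEntry<T> = {
  value: T;
  fetchedAtMs: number; // when the snapshot was taken from the source
  version: number;     // monotonically increasing version of the record
};

function isStale<T>(
  entry: CachedEntry<T>,
  policy: ConsumerDirectives,
  nowMs: number,
  lastWrittenVersion?: number, // newest version this actor has written, if any
): boolean {
  // Bounded staleness: the snapshot is older than this actor tolerates.
  if (nowMs - entry.fetchedAtMs > policy.maxStalenessMs) return true;
  // RYOW: the snapshot must already include the actor's own latest write.
  if (policy.requireReadYourOwnWrites && lastWrittenVersion !== undefined) {
    return entry.version < lastWrittenVersion;
  }
  return false;
}

const cachedVisit: CachedEntry<string> = { value: "visit-123", fetchedAtMs: 0, version: 3 };
const voicePolicy: ConsumerDirectives = { maxStalenessMs: 2000, requireReadYourOwnWrites: false, latencyBudgetMs: 300 };
const dashboardPolicy: ConsumerDirectives = { maxStalenessMs: 30000, requireReadYourOwnWrites: true, latencyBudgetMs: 1500 };
```

The same cached entry can be fresh for one actor and stale for another, which is what "bounded staleness per actor" means in practice.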

Writes follow the same pattern—conditional, versioned, and deterministic.

When failures happen, we aim for predictable degradation, not brittle coupling. If an EMR is unreachable, the proxy fails fast on those fields but continues serving Zephyr-owned state flagged as “pending-sync.” Schema drift is quarantined, not guessed. We track per-actor coherence: p95 latency, stale ratio, invalidation lag. The key question isn’t “is the system up?” but “is each actor seeing a coherent world within the bounds it was promised?”
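Two of the per-actor metrics named above, p95 latency and stale ratio, can be computed from a simple log of reads. The sketch below is an assumption about how that might be done: `ReadSample`, `ActorCoherence`, and `coherenceByActor` are hypothetical names, and the percentile uses the nearest-rank method.

```typescript
type ReadSample = { actor: string; latencyMs: number; servedStale: boolean };
type ActorCoherence = { p95LatencyMs: number; staleRatio: number };

// Nearest-rank percentile; returns 0 for an empty list.
function percentile(values: number[], p: number): number {
  if (values.length === 0) return 0;
  const sorted = [...values].sort((x, y) => x - y);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)] ?? 0;
}

function coherenceByActor(samples: ReadSample[]): Record<string, ActorCoherence> {
  // Group the samples by actor.
  const grouped: Record<string, ReadSample[]> = {};
  for (const sample of samples) {
    const bucket = grouped[sample.actor] ?? [];
    bucket.push(sample);
    grouped[sample.actor] = bucket;
  }
  // Summarize each actor separately instead of producing one global number.
  const summary: Record<string, ActorCoherence> = {};
  for (const actor of Object.keys(grouped)) {
    const rows = grouped[actor] ?? [];
    const staleCount = rows.filter((r) => r.servedStale).length;
    summary[actor] = {
      p95LatencyMs: percentile(rows.map((r) => r.latencyMs), 95),
      staleRatio: rows.length === 0 ? 0 : staleCount / rows.length,
    };
  }
  return summary;
}

const coherence = coherenceByActor([
  { actor: "voice", latencyMs: 100, servedStale: false },
  { actor: "voice", latencyMs: 120, servedStale: false },
  { actor: "voice", latencyMs: 110, servedStale: true },
  { actor: "voice", latencyMs: 400, servedStale: false },
  { actor: "batch", latencyMs: 9000, servedStale: true },
]);
```

Each actor's numbers can then be compared against the bounds in its own directives rather than against a single system-wide threshold.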

What’s Next with AI: Self-Healing Data at the Edges

With Zephyr’s consistency layer in place (ownership, merging, conflict logic), there’s still plenty left to build. But LLMs today have opened a new set of possibilities. We’re beginning to explore how AI might help Zephyr repair itself: flagging drift, suggesting schema patches, or even filling gaps when systems fail. Below are a few directions that feel especially promising.

Schema Drift

EMRs often change schema: drop, rename, or mutate fields. Those changes propagate silently unless caught. We’re building monitors that detect drift in incoming payloads, propose patches (e.g. remapping fields or migration logic), or issue alerts. We're particularly excited about the research in LLM-Powered Proactive Data Systems (Zeighami et al., 2025), which argues systems should be proactive — rewriting user inputs, inputs’ structure, and query logic.
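The detection half of this does not need an LLM. A plain structural comparison, sketched below under assumptions, already surfaces dropped, renamed, and mutated fields; `ExpectedSchema`, `DriftReport`, `detectDrift`, and the sample payload are hypothetical, and only top-level fields are checked.

```typescript
type FieldType = "string" | "number" | "boolean" | "object";
type ExpectedSchema = Record<string, FieldType>;
type DriftReport = { missing: string[]; unexpected: string[]; typeChanged: string[] };

function detectDrift(expected: ExpectedSchema, payload: Record<string, unknown>): DriftReport {
  const report: DriftReport = { missing: [], unexpected: [], typeChanged: [] };
  for (const field of Object.keys(expected)) {
    if (!(field in payload)) {
      report.missing.push(field); // dropped, or renamed to something else
    } else if (typeof payload[field] !== expected[field]) {
      report.typeChanged.push(field); // mutated type, e.g. number became string
    }
  }
  for (const field of Object.keys(payload)) {
    if (!(field in expected)) report.unexpected.push(field); // new or renamed field
  }
  return report;
}

const expectedVisitSchema: ExpectedSchema = { scheduledStart: "string", durationMinutes: "number" };
const incomingPayload: Record<string, unknown> = {
  scheduled_start: "2025-01-15T09:00:00Z", // renamed upstream
  durationMinutes: "60",                   // now arrives as a string
};
const driftReport = detectDrift(expectedVisitSchema, incomingPayload);
```

A rename shows up as one missing field plus one unexpected field, which is the kind of pair a patch-proposing model could be asked to reconcile.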

Data Anomaly & Correction

Data is messy. Records get duplicated, conflict, or violate implicit rules. We plan validator models that flag suspect cases and suggest corrections. Examples: negative durations, timestamp mismatches, out-of-range values. We might output something like:

    {
      "verdict": "anomaly",
      "field": "visit.duration",
      "reason": "duration = 0; clock-out missing or wrong",
      "suggestedFix": { "duration": "derive from timeEntries" },
      "confidence": 0.87
    }

Depending on policy, we may block writes, stage reviews, or auto-correct high-confidence cases.
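One way to express that policy is a small decision function over the validator's verdict. This is a sketch with assumed names and thresholds: `AnomalyVerdict`, `AnomalyPolicy`, `decideAction`, and the cutoffs 0.95 and 0.8 are illustrative, not values from the system described here.

```typescript
type AnomalyVerdict = {
  verdict: "ok" | "anomaly";
  field: string;
  reason: string;
  confidence: number;       // 0..1, as reported by the validator
  hasSuggestedFix: boolean; // whether the validator proposed a correction
};

type AnomalyAction = "accept" | "auto-correct" | "stage-review" | "block-write";

type AnomalyPolicy = { autoCorrectAbove: number; blockAbove: number };

function decideAction(v: AnomalyVerdict, policy: AnomalyPolicy): AnomalyAction {
  if (v.verdict === "ok") return "accept";
  // Very confident and a fix exists: apply the fix automatically.
  if (v.hasSuggestedFix && v.confidence >= policy.autoCorrectAbove) return "auto-correct";
  // Confident it is wrong, but not confident enough to fix it: refuse the write.
  if (v.confidence >= policy.blockAbove) return "block-write";
  // Otherwise let a human look at it.
  return "stage-review";
}

const examplePolicy: AnomalyPolicy = { autoCorrectAbove: 0.95, blockAbove: 0.8 };
const zeroDuration: AnomalyVerdict = {
  verdict: "anomaly",
  field: "visit.duration",
  reason: "duration = 0; clock-out missing or wrong",
  confidence: 0.87,
  hasSuggestedFix: true,
};
```

Under these assumed thresholds the 0.87-confidence example would be blocked rather than auto-corrected; tightening or loosening the cutoffs per field is where the policy lives.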

Web Agents for UI Remediation

Sometimes APIs break, scrapers drift, or data hides behind UIs. In those cases, a headless browser agent controlled by an AI may log into the EMR portal, navigate UI flows, extract or patch data, and feed it through Zephyr’s same invariants. We treat this as fallback repair, not the primary path.

    const uiResult = await runAgent(directive);
    if (!uiResult.visitTime) {
      // Use LLM to infer missing field from existing context.
      // Serialize the entries so the prompt contains their contents, not "[object Object]".
      const fix = await callLLM({
        prompt: `Given these time entries: ${JSON.stringify(record.timeEntries)}, infer missing visitTime.`,
      });
      uiResult.visitTime = fix.visitTime;
    }
    // Feed the result back through Zephyr’s merge + ownership logic
    normalized.visitTime = uiResult.visitTime;

If any of this sounds interesting, we’re currently hiring a Senior Engineer to join us and lead our Data Platform. Reach out to Daniel at [email protected] or visit our job board here.

Victor and I never set out to build software for other software people. From the beginning, our goal was a generational company in the real economy - systems that move the world outside a browser tab. Victor had just exited Astorian, a marketplace that helped property managers find contractors. I had just watched my family scramble to find caregivers for my grandfather with dementia during COVID. We didn’t know yet that home care would be our industry, but we knew our ambition belonged where failure has consequences.

We reconnected in 2023 at South Park Commons and started exploring. Our first step was Zingage Perform, a layer on top of the EMR (electronic medical record) to automate communication, engagement, and rewards. At the time we assumed two things: full automation wasn’t yet feasible, and most agencies had foundations we could build on.

We were wrong. The work itself sets humans up to fail. Agencies live in a 24/7 cycle - 2 a.m. hospitalizations, daytime call queues, texts and emails piling up - while trying to coordinate care in systems built for records, billing, and compliance, not minute-to-minute staffing. These are good people doing heroic work inside constraints that make reliability overly dependent on brute force.

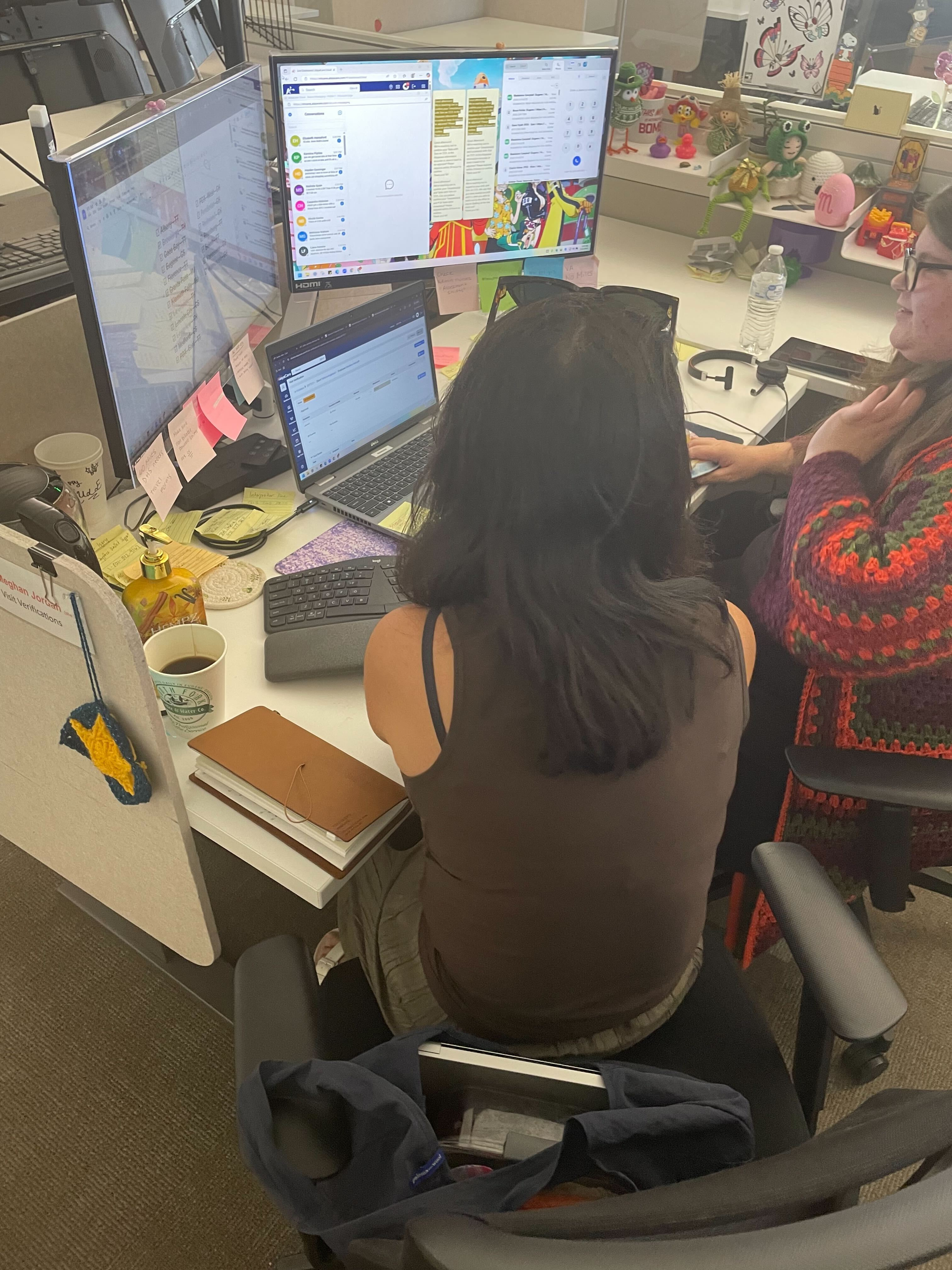

So, we stopped hedging and built what we always meant to build: Zingage Operator - coordination infrastructure that frees agencies from the back-office grind and makes care delivery dependable at scale. For a few months we even became schedulers ourselves to feel the weight of it and learn fast. That changed how we work. Every line of code now has a patient on the other end. A race condition isn’t an edge case - it’s a missed visit. A clumsy workflow isn’t an inconvenience - it’s a family crisis.

And now the curve has bent. Since launching post-pilot in August, four weeks of selling took us past seven figures in annual contracts, and we expect to 10x by year-end. Agencies aren’t just adopting; they’re telling us it’s changing their days. Laura Curry, who runs a veterans-focused CareBuilders agency in Kentucky, once bought cruise tickets with her husband and never used them - she was on call around the clock. When she saw Operator in action, she told us it was the first time in years she could put her phone down and know that her veterans would still get the care they needed.

That’s why we’re writing this now. We’ve shared these values internally, but with the team growing, the company at an inflection point, and the stakes higher than ever, it felt right to share them publicly too.

The Zingage Way

Right now, at this moment, there's a caregiver call-out that will end in a hospitalization or worse. Families are drowning in chaos: spreadsheets, late-night panics, missed visits, caregivers churning at 80% per year. Zingage exists to end this. We're building the infrastructure so healthcare can happen in the home, so our parents can age with dignity, and so their children can live without sacrificing everything.

We are the coordination layer that makes home care automatic. That's the mission behind every line of code, every sprint, and every late night. We know that behind every bug fix is a family counting on us. Behind every feature is someone's parent waiting for care.

We move fast, bend convention, and take risks others won't. Our customers need us to be this way because their livelihoods and lives depend on it. We courageously build what others avoid, commit to deliver excellence, and uphold the integrity of our commitments.

Zingage may not be an easy mission to achieve but it is an easy mission to champion. Ultimately, our values will determine whether we win.

Customers First

Forward Deployment Trips to our customer

We are building so that every provider, caregiver, and family can live the life they always dreamed.

We build for the providers whose dream is to deliver excellent care to thousands of people without having to give up their own lives staring at screens and staying up late.

We ship so that caregivers who show up for their patients never have to do this alone.

We deliver so that the families who need care can trust they will always get care.

Serving our customers is not only a privilege, it is a duty that we pledge ourselves to fully.

When Zingage succeeds it means that a daughter sleeps easy knowing her bed-bound mother hundreds of miles away will get the care she needs.

It means a caregiver is supported when her patient suffers a stroke in the middle of a visit, instead of being so overwhelmed that she quits.

It means a provider can continue serving thousands of patients without unforeseen compliance hits shutting them down.

Tradeoff: we will prioritize customer impact over internal preferences or engineering elegance, even if it means cutting scope, scrapping work, or redoing something we personally liked.

Velocity

We don't wait to find the silver bullet, but embrace velocity.

We move fast and we ship responsibly because we care. Our customers’ lives will not wait for us to reach perfect certainty nor will they tolerate careless mistakes. At Zingage we create velocity as a marathon of sprints, punctuated by bursts of intensity and recovery.

We focus on the inputs: work long, work hard, or work smart; pick what works for you, but pick something. Velocity isn't negotiable, but how you achieve it is.

We're pirates. We hired an actress off Craigslist to crash our first conference. We snuck into WellSky's event posing as customers. We hand-deliver donuts at 6am. We take the shots others won't because playing by the rules means families suffer.

We're pirates because this industry needs pirates. The treasure we're after isn't gold, it's every family sleeping soundly knowing care is handled. We burn the ships behind us because there's no retreat when lives are on the line.

In the end, all that matters is what we have done for the customers.

Tradeoff: we accept fatigue and messiness in bursts in order to move fast, but we also commit to cleanup cycles so we can keep sprinting again. If you want a steady, predictable pace, this isn’t the place.

Extreme Ownership

Our Team shadowing schedulers to understand their workflow

We take extreme ownership at Zingage. We don’t make excuses. We don’t blame anyone or anything. We take ownership of the problems and the solutions. We take ownership because it is the bedrock of trust, which is the lifeblood of success.

Ownership shows up when no one is looking. It appears when you choose to sweat the details to fix a bug no one else caught. It’s there when you go out of your way to teach a customer how to use our product. It’s present when you show up in person to listen and to build beside the customer.

Most importantly, extreme ownership is taking care of each other. There is no blame at Zingage and making excuses without providing solutions is intolerable. When something breaks, we don't point fingers, we make solutions. We not only accept but praise support, encouragement, and respect.

Extreme ownership is the manifestation of high agency. If you want to start a podcast then do it; if you want to understand a customer then call them; if you want to build a feature then ship it.

Tradeoff: we stomach the discomfort of eating shit when we make mistakes and we accept everyone's fallibility. Zingage is not a place you can hide nor is it a place to begrudge teammates who try and fail.

Our mission is worth every difficulty. With these values, we won't just succeed, we'll build a world where no call goes unanswered, no family drowns in chaos, and everyone can age with dignity at home.

A friend and I recently debated the meaning of work in the looming shadow of AGI. The premise was simple: if OpenAI - or any organization - achieves superintelligence, what's the point of doing anything at all?

In truth, I've had this conversation repeatedly with founder friends. Each new OpenAI release sparks awe and dread, steadily devouring startups conceived just months ago. The meme of startups reduced to mere ChatGPT wrappers feels painfully real. These discussions typically land us at two bleak conclusions: either join an AI lab to stay relevant or succumb to nihilism, lounging on Universal Basic Income in the supposed “post-scarcity” future. Advocates imagine humans pivoting gracefully toward art or leisure, but that vision feels patronizingly hollow.

Why does this scenario feel inevitable and limiting? Perhaps because we’ve mistakenly assumed that a single, centralized AGI - one supreme intelligence directing human affairs - is the optimal and natural outcome. Yet history challenges this assumption. Attempts at centralized planning, such as Mao’s Great Leap Forward or Soviet collectivization, repeatedly failed due to oversimplification of complex human systems.

James C. Scott vividly illustrates this danger in Seeing Like a State. Colonial powers in Tanzania enforced monoculture farming, planting a single crop uniformly for maximum yield. Their "scientific" method disastrously ignored local wisdom. Indigenous farmers had traditionally practiced polyculture - planting multiple crops together. While seemingly inefficient and messy, polyculture safeguarded soil health, diversified risk, and allowed flexible responses to unpredictable conditions. The colonial approach, though theoretically optimized, proved rigid and catastrophically vulnerable.

The core misconception underlying singular AGI echoes this colonial mindset: the belief that superintelligence can - and inevitably should - become a digital god capable of making all decisions optimally. Yet real-world decision-making rarely offers neat solutions; it more closely resembles the messy moral complexity of the trolley problem. Intelligence alone, no matter how advanced, cannot dictate correct answers to inherently subjective moral dilemmas. Thus, we must clearly separate intelligence - the neutral ability to solve problems - from agency (authority to act) and values (the moral principles guiding actions).

Intelligence, in its purest form, involves computational power, data processing, and predictive modeling capabilities. It is fundamentally about pattern recognition, scenario forecasting, and logical analysis - essentially neutral skills that can enhance decision-making but do not inherently carry ethical weight or moral guidance. Agency, on the other hand, concerns who or what has the authority and accountability to act upon the outputs of this intelligence. Agency requires legitimacy, trust, and transparency - qualities that purely intelligent systems alone cannot ensure. Values represent the most human dimension of all; they encompass the moral frameworks, cultural contexts, and ethical considerations that ultimately guide decisions.

Today, systems like ChatGPT already display overarching personalities and value frameworks, intentionally designed by organizations like OpenAI. While this approach helps in establishing baseline safety and ethical guardrails, it presents two significant issues. First, these predetermined values might not fully align with the diverse perspectives, cultural contexts, and nuanced ethical landscapes of all users. Second, embedding a singular value system risks oversimplifying complex moral decisions, potentially resulting in outcomes disconnected from local realities or community-specific priorities. Therefore, a more robust approach would empower users and communities to tailor and tune these AI personalities and values to their specific needs and ethical standards, ensuring greater relevance, acceptance, and genuine alignment.

Startups uniquely embody this critical separation of intelligence, agency, and values. They deploy intelligence as technological infrastructure - powerful yet neutral tools capable of addressing specific problems. They restore agency by enabling local communities and users to actively choose, adapt, or reject these tools based on their distinct circumstances. Most crucially, startups allow values to remain community-defined and responsive to context, rather than universally imposed. For example, a rural healthcare clinic might adopt AI specifically tuned for resource-constrained environments, emphasizing preventive care aligned with local priorities. An urban hospital might choose a different AI optimized for managing high patient volumes and specialist coordination. Each community retains genuine agency, reinforcing accountability and achieving true alignment between technological capabilities and diverse human values.

This approach mirrors how governance functions at its best: overarching federal policies exist alongside state laws, city ordinances, trade associations, and grassroots organizations. While centralized institutions like OpenAI attempt broad alignment efforts analogous to federal policy, startups act as local policymakers - crafting tailored, bottom-up solutions that reflect community-specific needs and values.

Such decentralization doesn’t just enable startups to gain initial traction - it positions them for sustained relevance. Startups rapidly build trust through close alignment with local communities, steadily compounding their advantages by integrating powerful open-source models like Llama and DeepSeek with specialized expertise, proprietary data loops, and deep relationships. These assets form an enduring edge, similar to how local clinicians remain indispensable because their practical insights and patient relationships withstand technological disruption.

Ultimately, I’m not advocating for decentralized intelligence and the startups that embody it out of nostalgia or a Luddite fear of our soon-to-come AI overlords. Sure, spending retirement as mediocre painters surviving on UBI sounds grimly amusing. But the real danger is more serious: placing all our trust in a single, omnipotent AI planner whose perfectly rational decisions could lead us straight off a cliff. Startups offer something far better - a morally diverse ecosystem of intelligences, built from the ground up by real communities. If history teaches us anything, it’s that pluralism - not centralization - is our strongest safeguard for human liberty. So yes, despite the looming shadow of AGI, it’s (still) time to build.

Error handling in JavaScript and TypeScript is notoriously challenging. Unlike many modern statically-typed languages (Rust, Swift, Kotlin, Go), TypeScript lacks built-in ways to track error types, often leading to fragile, opaque error-handling code. Although third-party Result type implementations have emerged to fill this gap, none have gained widespread adoption, mainly due to cumbersome syntax, complicated interactions with existing error-handling patterns, and unintuitive APIs.

In his latest blog post, Ethan Resnick explores these challenges, critically assesses current solutions, and proposes an improved Result type designed specifically for TypeScript. By aligning closely with the familiar Promise API, reducing boilerplate, and seamlessly integrating with async workflows, Ethan offers a practical, incremental path toward clearer, safer error handling. Read the full post to learn how to rethink your approach to robust error handling in TypeScript—and why simply porting a standard Result pattern isn’t enough.

Read full article here: https://medium.com/@ethanresnick/fixing-error-handling-in-typescript-340873a31ecd

As Zingage rapidly expanded to hundreds of customers nationwide, our engineering team faced increasingly complex technical challenges: robust data isolation, seamless scaling, and high availability during intensive operations. Standard UUIDs quickly proved insufficient, exposing several critical issues:

- Data Leakage Risk: Forgetting to filter queries by businessId could expose sensitive data across businesses.

- Complex Partitioning: Lack of inherent business context made data partitioning challenging and inefficient.

- Ambiguous Entity Scope: Without clear entity boundaries, managing data across multiple tenants became error-prone.

The Limitations of Traditional UUIDs

Consider this problematic scenario:

import { v4 as uuidv4 } from 'uuid';

const profileId = uuidv4();

// Risky query (business context omitted)

const profile = await db.profiles.findOne({ id: profileId });

// Potentially exposes data from another business inadvertently

This approach, although common, risks critical data leaks in multi-tenant environments.

Introducing Zingage IDs: A Robust Multi-Tenant Solution

To address these challenges, we designed a structured UUIDv8-based identifier system, embedding clear business context and distinct entity scopes directly within the IDs:

- Business IDs (000 prefix): Represent unique business entities.

- Business-scoped Entity IDs (1 prefix): Clearly tied to specific businesses, embedding business identifiers.

- Cross-business Entity IDs (001 prefix): Explicitly defined to represent resources shared across businesses.

Code Example

Here's how this looks in practice:

import { generateBusinessId, generateScopedId } from 'zingage-id';

const businessId = generateBusinessId();

const profileId = generateScopedId(businessId, 'PROFILE');

// Secure query with embedded business context

const profile = await db.profiles.findOne({ id: profileId });

// Built-in safeguards ensure correct business scope, preventing leaks

Advanced Collision Resistance and Debugging Capabilities

Zingage IDs leverage structured components—42-bit timestamps, 10-bit entity type hints, and opaque random data—to provide strong collision resistance and powerful debugging:

- Collision Resistance: By combining precise timestamps with robust random bits, we drastically lower collision risks, even under high-load scenarios. For example, generating up to 100,000 IDs per day produces only a minimal annual collision probability (~7% under highly conservative assumptions).

- Debugging Efficiency: Entity type hints embedded within IDs enable rapid issue identification during debugging, without imposing rigid constraints. This ensures flexibility for future entity restructuring or data migration tasks.
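To make the idea of structured components concrete, here is a minimal sketch of packing a 42-bit millisecond timestamp and a 10-bit entity type hint into the custom bits of a UUIDv8. The field positions, the names `encodeSketchId` and `decodeSketchId`, and the omission of the business prefix are all assumptions made for illustration; this is not the actual Zingage ID layout.

```typescript
// Hypothetical layout (NOT the real Zingage format):
//   bytes 0-5   42-bit timestamp followed by the high 6 bits of the type hint
//   byte  6     UUID version nibble (8) + low 4 bits of the type hint
//   byte  7     random
//   byte  8     UUID variant bits (10) + 6 random bits
//   bytes 9-15  random
function encodeSketchId(timestampMs: number, entityType: number, random: number[]): string {
  const bytes: number[] = [];
  // 48-bit value: timestamp shifted left by 6 bits, plus the top 6 bits of the hint.
  let customA = (timestampMs % Math.pow(2, 42)) * 64 + ((entityType & 0x3ff) >> 4);
  for (let i = 5; i >= 0; i--) {
    bytes[i] = customA % 256;
    customA = Math.floor(customA / 256);
  }
  bytes[6] = 0x80 | (entityType & 0x0f);
  bytes[7] = random[0] & 0xff;
  bytes[8] = 0x80 | (random[1] & 0x3f);
  for (let i = 9; i < 16; i++) bytes[i] = random[i - 7] & 0xff; // uses random[2..8]

  let hex = "";
  for (let i = 0; i < 16; i++) hex += ("0" + bytes[i].toString(16)).slice(-2);
  return [hex.slice(0, 8), hex.slice(8, 12), hex.slice(12, 16), hex.slice(16, 20), hex.slice(20)].join("-");
}

// Recover the debugging fields without a database lookup.
function decodeSketchId(id: string): { timestampMs: number; entityType: number } {
  const hex = id.replace(/-/g, "");
  let customA = 0;
  for (let i = 0; i < 6; i++) customA = customA * 256 + parseInt(hex.slice(i * 2, i * 2 + 2), 16);
  const byte6 = parseInt(hex.slice(12, 14), 16);
  return {
    timestampMs: Math.floor(customA / 64),
    entityType: (customA % 64) * 16 + (byte6 & 0x0f),
  };
}

// The caller supplies 9 random bytes; zeros are used here only to keep the example deterministic.
const sketchId = encodeSketchId(1700000000000, 5, [0, 0, 0, 0, 0, 0, 0, 0, 0]);
const decodedSketch = decodeSketchId(sketchId);
```

Because the type hint and timestamp sit at fixed positions, they can be read back from the ID alone, which is the debugging property described above. A production generator would draw the random bytes from a cryptographically secure source.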

Built-In Database-Level Security Enforcement

Our ID scheme integrates seamlessly with database-level Row-Level Security (RLS) policies, providing automatic, foolproof data isolation:

-- Enforce strict business context at the database level

CREATE POLICY business_scope_policy ON profiles

USING (extract_business_id(id) = current_setting('app.current_business_id')::uuid);

With this policy, database queries automatically apply business scoping, significantly reducing the risk of accidental data exposure.

Middleware further enhances security by automatically setting the business context at the request level.

// Middleware example

app.use((req, res, next) => {

const businessId = extractBusinessIdFromRequest(req);

db.setBusinessContext(businessId);

next();

});

// Database query implicitly scoped

const profile = await db.profiles.findOne({ id: profileId });

// Automatically executes as:

// SELECT * FROM profiles WHERE id = :profileId AND business_id = :activeBusinessId

Simplified and Efficient Data Partitioning

Explicitly embedding business identifiers simplifies data partitioning dramatically:

- Business-scoped Entities: Directly embed business IDs, enabling straightforward partitioning and isolation per business.

- Cross-business Entities: Clearly separated and replicated across partitions to ensure consistency and accessibility.

Practical partitioning example:

CREATE TABLE profiles (

id UUID PRIMARY KEY,

...

) PARTITION BY HASH (business_id_embedded_in_uuid);

CREATE TABLE workflow_templates (

id UUID PRIMARY KEY,

...

) -- Replicated across partitions due to cross-business applicability

This explicit delineation dramatically enhances scalability, performance, and operational efficiency.

Key Benefits of the Zingage ID Scheme

- Robust Security: Intrinsic business isolation prevents accidental cross-tenant data breaches.

- Scalable Architecture: Simplified, efficient partitioning supports effortless horizontal scaling.

- Improved Developer Experience: Reduced manual context management and minimized risk of oversight.

Design at Zingage is not a support function. It is a way of seeing, reasoning, and building systems that actually work for the people delivering care.

We are not just here to ship features. We are here to define what it means to work alongside AI in one of the most human industries in the world.

The Problem: Designing for AI in a Trust-Based Industry

In home care, people don’t use software because they want to. They use it because they have to.

Schedulers and caregivers operate under constant stress: backlogs of patient visits, late cancellations, last-minute reassignments. Their current tools make this worse—clunky, slow, opaque.

Now, introduce AI. Software that doesn’t just coordinate schedules but acts on its own. Agents that message caregivers, reassign visits, and resolve gaps without human input. This is powerful. But it also creates a new kind of risk:

What happens when something goes wrong, and no one knows why?

Traditional UI patterns break down here. The job of design is no longer just to simplify a workflow—it’s to help users build trust in a system that behaves more like a colleague than a tool.

The Opportunity: Interfaces for Delegation, Not Just Execution

Our goal is not to make care schedulers faster typists. It’s to help them delegate work to intelligent agents. But delegation requires:

- Knowing what the agent is doing

- Understanding why it did something

- Stepping in when something goes off track

In other words: clarity, not control. We are designing for a world where the default state of software is action, not waiting. Where the system moves first, and the human refines it.

This requires new UI patterns, new metaphors, and new forms of accountability. Most importantly, it requires a deep understanding of the users who will live in this hybrid loop.

Core Design Principles

1. Supervision Over Control

Users shouldn’t be expected to micromanage the AI. The interface must:

- Show intent, not implementation

- Offer intuitive paths for feedback and override

- Make risk visible without creating fear

2. Asynchronous by Default

Work doesn’t happen in one sitting. Schedulers reach out, wait for replies, reschedule, escalate, wait again. Our interfaces must:

- Support interrupted workflows

- Track state across time and agents

- Handle reversals and replans with grace

3. Mental Models, Not Just Screens

We don’t design pages. We design systems of meaning:

- What is a plan?

- What does it mean to delegate something?

- When has a task truly been resolved?

These are design questions.

4. Internal Surfaces Matter

We take Sarah Tavel’s advice seriously: "It’s okay to have a human in the loop. It’s better if it’s your human, not your customer's."

Our internal ops team supervises AI decisions, resolves edge cases, and monitors quality. These tools require the same care as the external product. They are the levers that make AI feel dependable.

5. Make Work Delightful

Design is emotional. Even more so in a field like home care.

We use reward loops, milestones, social reinforcement, and small moments of joy to make invisible progress feel tangible. This is not gamification. It’s respect—for people whose work is often ignored.

Why It Matters

Just as early mobile apps mimicked real-world textures (skeuomorphism) before evolving into native mobile patterns, AI will go through its own transition. Right now, AI systems need scaffolding. They need explanation, supervision, and context. Over time, they will disappear into the background.

Our design work must accelerate that curve. We believe the design challenges in AI are not about novelty. They are about sequencing trust. They are about making delegation possible in domains where mistakes have real consequences.

Sounds interesting? - We're looking for the next Diego Zaks to join us as our Founding Product Designer :)

At Zingage, we’re building AI-powered systems to automate the critical back-office operations of healthcare providers. Our goal this year is ambitious: scale from $X million to $XX million ARR by delivering intelligent staffing and scheduling agents to home care agencies nationwide.

We’ve found a powerful wedge: integrating deeply with Electronic Medical Records (EMRs). Today, we’re connected with over 300 healthcare sites, representing thousands of caregivers and patients. However, our growth has exposed significant foundational gaps in our data infrastructure, and we’re looking for exceptional engineers to help us solve them.

The Problem: Healthcare Data is Messy

Our competitive advantage lies in seamless EMR integrations—but EMR data is notoriously fragmented and unreliable:

- Multiple Integration Methods: Today, we integrate with EMRs through APIs, file dumps, and RPA. Each method brings a different set of challenges and can introduce issues like delayed responses, unpredictable changes, inconsistent schemas, and unreliable join keys.

- Scaling Pains (300 → 1,000 customers): Our current ETL architecture (Kubernetes pods → transforms → PostgreSQL) was fine initially. But now, write-intensive ETL tasks significantly impact our primary application database’s performance. Worse, performing transformations upfront (ETL) means losing raw data context, hindering debugging, and reducing flexibility.

- Limited Observability & Data Lineage: When data ingestion breaks (and it often does), our lack of lineage and replayability makes debugging slow and painful. Identifying root causes, replaying failed jobs, and rapidly restoring pipelines is currently difficult.

- Data Interpretability Challenges: Healthcare semantics are tricky—simple medical conditions can appear in many forms across EMRs. For example, the diagnosis “diabetes” might appear as five separate coded entries, though clinically identical. Building reliable AI means solving these interpretability challenges systematically.

- AI Data Readiness & Raw Data Storage: Our AI agent accesses data directly from our PostgreSQL database—but it’s restricted to already-transformed, limited-context data. Our AI needs access to richer historical and raw context data to perform optimally.

This combination of challenges—unreliable data, rigid ETL architecture, poor debugging capabilities, interpretability complexity, and insufficient AI-readiness—is limiting our scale, velocity, and product quality.

We must rethink our data architecture from first principles.

The Opportunity: A New Data Stack for Healthcare AI

We’re calling on talented backend and data engineers to help us architect and build a next-generation data pipeline capable of scaling to thousands of healthcare customers. This infrastructure will form the bedrock of our AI-powered staffing and scheduling solutions.

Here are some initial thoughts from our team:

1. Moves from ETL → ELT

- Extract & load raw data first (to a data lake like S3, BigQuery, or Snowflake).

- Delay transformations until downstream, enabling iterative experimentation, faster debugging, and improved raw data access.

2. Implements Event-Driven, Replayable Pipelines

- Capture EMR snapshot outputs as event streams (e.g., Kafka, Google Pub/Sub).

- Achieve full data lineage, observability, replayability, and rapid debugging—transforming our pipeline maintenance from reactive to proactive.

3. Adopts Modern Data Warehousing & Separation of Concerns

- Clearly separate transactional workloads (PostgreSQL) from analytical & AI workloads (BigQuery, Snowflake).

- Ensure high availability, query optimization, and real-time analytics without compromising app performance.

4. Scales Integration Operations

- Invest in automated integration infrastructure, robust error handling, and alerting.

- Scale to 1,000+ healthcare customers gracefully, without proportional increases in operational overhead.

5. Solves Data Interpretability with Systematic Normalization

- Develop modular semantic mapping frameworks or leverage healthcare data standards (FHIR, HL7, EVV).

- Tackle healthcare-specific data nuances systematically, ensuring AI models see consistent, high-quality data.
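As a rough illustration of points 1, 2, and 5 above, the sketch below stores raw payloads untouched, derives normalized records with a pure transform, and rebuilds the derived data by replaying the raw store. The record shapes, the in-memory array standing in for a data lake, and the synonym table are all hypothetical; they are not Zingage's schema or a clinical mapping.

```typescript
// Hypothetical raw record as it might arrive from an EMR integration.
interface RawEvent {
  sourceEmr: string;
  receivedAt: string; // ISO timestamp
  payload: { patientId: string; diagnosis: string };
}

interface NormalizedDiagnosis {
  patientId: string;
  condition: string;
}

// Extract + load: persist the payload as received. An array stands in for the data lake.
const rawStore: RawEvent[] = [];
function loadRaw(event: RawEvent): void {
  rawStore.push(event);
}

// Illustrative synonym table: several source labels map to one canonical condition.
const diagnosisSynonyms: { [label: string]: string } = {
  "diabetes": "diabetes",
  "dm": "diabetes",
  "diabetes mellitus": "diabetes",
};

// Transform: a pure function over raw events, so it can be rerun at any time.
function transform(event: RawEvent): NormalizedDiagnosis {
  const label = event.payload.diagnosis.trim().toLowerCase();
  const known = Object.prototype.hasOwnProperty.call(diagnosisSynonyms, label);
  return {
    patientId: event.payload.patientId,
    // Unknown labels are kept visible instead of being dropped.
    condition: known ? diagnosisSynonyms[label] : "unmapped:" + label,
  };
}

// Replay: rebuild the derived records from raw data, e.g. after fixing a mapping.
function replay(): NormalizedDiagnosis[] {
  return rawStore.map((event) => transform(event));
}

loadRaw({ sourceEmr: "emr-a", receivedAt: "2024-01-01T00:00:00Z", payload: { patientId: "p1", diagnosis: "DM" } });
loadRaw({ sourceEmr: "emr-b", receivedAt: "2024-01-02T00:00:00Z", payload: { patientId: "p2", diagnosis: "Diabetes Mellitus " } });
const firstPass = replay();
```

Because the raw events are never overwritten, adding a missing synonym and calling `replay()` again corrects historical records without re-pulling anything from the EMR.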

Why Join Zingage Now?

You’ll join a team of exceptional engineers (early Ramp, Amazon, Block/Square), backed by seasoned operators from healthcare and SaaS, all dedicated to rebuilding how healthcare is delivered through AI and automation.

If you’re energized by the idea of solving messy, mission-critical problems in healthcare—building a new foundational data architecture, owning impactful engineering decisions, and working on high-leverage problems at early-stage scale—we’d love to talk.

In 1965, the United States made a generational promise. Standing beside Harry Truman, Lyndon Johnson signed Medicare and Medicaid into law and declared that “no longer will older Americans be denied the healing miracle of modern medicine… no longer will illness crush and destroy the savings they have carefully put away… no longer will young families see their own incomes, and their own hopes, eaten away simply because they are carrying out their deep moral obligations.” These programs weren’t just policy; they were a commitment to institutionalized dignity. And for a time, they worked.

But that promise is unraveling—not because we’ve lost the will to care, but because we’ve failed to build the infrastructure that care requires. America’s fastest-growing care need—home-based long-term support—is on the brink of collapse. Providers are underfunded. Medicaid reimbursement rates hover near break-even. Caregiver turnover exceeds 60% annually. For every caregiver available, five patients wait. In many parts of the country, families are told it will be weeks—sometimes months—before someone can come help.

When the state fails to care, the burden falls back on the family. A daughter steps back from her job to care for her mother. A son starts dipping into savings to cover private-pay aides. A couple decides to put off having a second child—because they’re already caring for an aging parent. And slowly, silently, the load adds up. Dual-income households fracture. Women exit the workforce. Siblings fight over who will take on more hours. Young people, watching all this, start to wonder whether they can afford to have kids of their own.

A recent study published in Demography found that caregiving burdens significantly reduce fertility intent among adults in their peak childbearing years. The OECD reports that countries with high eldercare demands and low institutional support—like the U.S.—consistently see lower birth rates, greater family stress, and rising mental health burdens. In Japan, where eldercare has similarly overwhelmed the household, the government has identified aging care as a key barrier to national fertility recovery. A society that can’t care for its elders makes it harder to imagine creating the next generation.

And yet, economists call this a “labor shortage.” As if the only problem were a missing headcount. But the truth is deeper—and more dangerous.

At the bottom of the demand curve are not price-sensitive consumers. They are the elderly, the disabled, the poor. Classical economics tells us the invisible hand will resolve this. When demand rises, so too should wages—until the market clears. But that logic breaks down in home care. If providers raise wages to attract workers, patients who rely on fixed Medicaid reimbursements are priced out of the system. The labor supply doesn’t grow—it just shifts toward wealthier, private-pay clients. And the public safety net quietly fails.

It fails not only due to underfunding, but because the infrastructure required to deliver care is fundamentally broken. Today’s home care providers operate with vanishingly thin margins—not because care is too expensive, but because it is built on manual coordination. Scheduling, compliance, shift replacements, documentation, and payroll are stitched together by humans working across spreadsheets, texts, and brittle workflows. The result is a model where every dollar is eaten by complexity before it reaches the caregiver’s paycheck.

This is where technology must intervene—not to replace humans, but to unburden them. A typical home care provider might serve 300 clients with a back office of 15 staff. That means one coordinator for every 20 to 50 caregivers—each one juggling shift coverage, compliance tasks, payroll, and constant crisis management. That ratio should look more like one admin per 1,000 caregivers. That’s not a fantasy—that’s the level of operational leverage already achieved by modern platforms like Uber, which coordinates over 33 million trips per day with just 30,000 employees.

If we can bring that level of software-driven efficiency to home care, the economics shift meaningfully. Today, a typical Medicaid reimbursement of $25 an hour breaks down into $15 for the caregiver, $3 to $4 for administrative overhead, and $1 to $2 in agency margin—leaving little room for stability or growth. But with automation compressing overhead by up to 80 percent, agencies could preserve their margins while redirecting $2 to $3 an hour back into caregiver wages. That’s a 15 to 20 percent raise—without increasing what the system pays.

This isn’t just an economic adjustment. It’s behavioral leverage. Turnover in home care exceeds 65 percent per year, but studies show that even a one-dollar wage increase can reduce churn by up to 20 percent. If we can consistently raise caregiver pay from $15 to $18 an hour, we change the decision calculus for millions of workers—making caregiving a competitive alternative to retail, warehouse, or gig work. In doing so, we not only stabilize the workforce, we begin to grow it.

But the potential goes beyond margin recovery. If we get the operational layer right, we can also expand the clinical scope—and the strategic role—of home care itself.

Today, home care is often treated as glorified nannying: help with meals, light housekeeping, companionship. It’s seen as soft, non-clinical, and low-skilled—which is exactly why reimbursement rates remain so low. But this perception is shaped less by the nature of the work than by the limits of the system that delivers it. When care is fragmented, undocumented, and manually coordinated, it’s easy for policymakers to underfund it and for clinicians to ignore it.

With the right software infrastructure, home care becomes a platform. It becomes the connective tissue between daily living and clinical insight: a place where remote patient monitoring feeds into real-time interventions, where in-home aides are supported by intelligent workflows, where telehealth, vitals tracking, medication adherence, and caregiver observations flow into one continuous stream of context-rich care.

This is the same transformation we’ve seen across other service industries. DoorDash didn’t just digitize food delivery—it redefined the restaurant. Amazon didn’t just optimize shopping—it redefined distribution. Zoom didn’t just replace meetings—it rewired the geography of work. In each case, technology didn’t serve the legacy system—it replaced the coordination layer, and in doing so, changed the nature of the service.

Home care is next. With an intelligent, AI-driven operating system, the home is no longer the periphery of care. It becomes the center—the first line of observation, the earliest site of intervention, and the most stable setting for long-term health.

If we treat the home as the center of care—not just a setting for support, but a site of coordination—then the economic logic changes too. Suddenly, we’re not just talking about lowering costs through efficiency. We’re talking about unlocking a reallocation of the $1.5 trillion the U.S. spends annually on hospital and institutional care.

Acute care settings—hospitals, nursing homes, rehab facilities—absorb the majority of healthcare spending. But much of that spending is reactive: managing crises that could have been prevented with earlier intervention. One in five Medicare patients is readmitted to the hospital within 30 days. Chronic conditions like diabetes, heart failure, and COPD account for the majority of admissions—and nearly all of them are daily-life-sensitive.

If we can shift just a fraction of that spend upstream—toward smarter home-based monitoring, proactive check-ins, and consistent care delivered by trained aides—we don’t just lower costs. We improve outcomes. We catch problems earlier. We avoid hospitalizations entirely. A 2022 CMS pilot found that enhanced home care with remote monitoring reduced hospitalizations by 30 percent and emergency room visits by 40 percent in high-risk populations. If even a modest share of Medicare and Medicaid budgets were redirected toward this kind of integrated home care, it would represent a doubling or tripling of current home care reimbursement rates—without increasing total spend.

This is the opportunity in front of us—not just to fix a broken system, but to finish the work that was started generations ago. When Lyndon Johnson signed Medicare and Medicaid into law, he wasn’t just solving a policy problem; he was laying the foundation for a functional society—a system that recognized care not as a luxury or a handout, but as a condition for national vitality. We don’t need new slogans or new entitlements. We need to rebuild the Great Society with the tools of this century. That means applying AI not as a novelty, but as institutional infrastructure: software that replaces manual coordination, stabilizes agency margins, and turns caregiving into a job worth doing.

[Originally written by Zingage Principal Engineer Ethan Resnick as an internal memo]

Carefully Balance What Your Code Promises and What it Demands; Err on the Side of Promising Less

Every piece of code promises some things to its users — e.g., “this API endpoint will return an object with fields x, y, z”; or, “this object supports methods a, b, c”.

Put simply, the more things that a piece of code promises, the harder the code is to change, because a new version of the code either has to continue to deliver all the things that the old code was promising, or all the users of the old code must be updated to no longer depend on the parts of the old promise that the new code will no longer fulfill.

Therefore, the key to long-term engineering velocity is to keep the set of things your code promises small. This usually boils down to not returning data and not supporting operations that aren’t truly necessary.

Some examples of this in practice:

- We've gradually removed methods from our repository classes, and stopped adding a bunch of methods by default, because keeping that API surface small makes it easier to refactor how the underlying data is stored/queried. Similarly, we're phasing out the ability to load entities with their relations, and to save an entity with a bunch of related entities, because those promised abilities are very hard to maintain under many refactors (e.g., as we move off TypeORM, or as we split data across dbs/services).

- As a large-scale, non-Zingage example, consider how the QUIC protocol, which powers HTTP/3, encrypts more than just the message’s content — e.g., it also encrypts details about what protocol features/extensions are being used. These details aren’t actually private, but they’re encrypted primarily so that boxes between the sending and receiving ends of the HTTP request (e.g., routers, firewalls, caches, proxies, etc) can’t see these details, and therefore can’t create implementations that rely on this information in any way. Exposing less information to these middle boxes was a conscious design decision aimed at making the protocol more evolvable, after TCP proved essentially impossible to change at internet scale.
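A minimal sketch of what a small contract can look like in code, assuming a hypothetical `Profile` entity and repository (the names are invented for illustration and the methods are synchronous only for brevity):

```typescript
interface Profile {
  id: string;
  displayName: string;
}

// A deliberately small contract: callers can depend on these two operations and nothing else.
// There is no "load with relations" and no generic query method to keep working forever.
interface ProfileRepository {
  findById(id: string): Profile | null;
  save(profile: Profile): void;
}

// One possible backing store. Replacing it with a different ORM, database, or remote
// service requires no caller changes, because nothing beyond the interface was promised.
class InMemoryProfileRepository implements ProfileRepository {
  private rows: { [id: string]: Profile } = {};

  findById(id: string): Profile | null {
    return Object.prototype.hasOwnProperty.call(this.rows, id) ? this.rows[id] : null;
  }

  save(profile: Profile): void {
    // Store a copy so callers cannot rely on sharing object identity with the store.
    this.rows[profile.id] = { id: profile.id, displayName: profile.displayName };
  }
}

// Application code is written against the interface only.
function renameProfile(repo: ProfileRepository, id: string, displayName: string): boolean {
  const profile = repo.findById(id);
  if (profile === null) return false;
  repo.save({ id: profile.id, displayName: displayName });
  return true;
}
```

Every method left off the interface is one fewer behavior that a future storage refactor has to preserve.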

However, there is one real tension when adhering to this principle of “keeping the contract small”, namely: the flip-side of a piece of code promising fewer things is that the user of the code can depend on less.

A great example is whether a field in a returned object can be null. If the code that produces the object promises that the field will be non-null, that promise directly limits the system’s evolvability; supporting a new use case where there is no sensible value for the field (so it should be null) becomes a complicated task of updating all the code’s users to handle null. However, before a use case arises where the field does need to be null, promising that the field will be non-null simplifies all the code that has to work with it; it doesn’t have to be built to handle the case of the field being missing prematurely.
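The trade-off can be seen in a small sketch, assuming a hypothetical `caregiverName` field on a visit object (both versions of the type are invented for illustration):

```typescript
// Version 1 of the contract: the field is promised to be present.
interface VisitV1 {
  id: string;
  caregiverName: string;
}

// Version 2 weakens the promise to support visits with no assigned caregiver.
interface VisitV2 {
  id: string;
  caregiverName: string | null;
}

// Written against V1: no missing-value handling is needed.
function labelV1(visit: VisitV1): string {
  return visit.caregiverName.toUpperCase();
}

// Written against V2: every consumer now has to decide what null means for it.
function labelV2(visit: VisitV2): string {
  return visit.caregiverName === null ? "UNASSIGNED" : visit.caregiverName.toUpperCase();
}
```

Moving from `VisitV1` to `VisitV2` is the breaking change: each function shaped like `labelV1` has to be found and rewritten in the style of `labelV2`.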

So, when deciding exactly what your code should promise, consider:

- Are the users of the code under your control? For example, our main API is only used by our frontend, which we control. Therefore, it’s relatively straightforward to make a breaking change in the contract exposed by the API, as we can also update the frontend. Contrast this with an API endpoint in the partnership API, which is called by our customers: we don’t control their code, so changing the endpoint’s contract to no longer return certain data requires a long, complicated coordination process with them. In situations like this, where you don’t control the consumers, keeping your promises small is essential.

- How easy is it to identify and coordinate with all the code’s users? In the case of an endpoint called by our customers, we at least have a comprehensive list of our customers and a way to email all of them; plus, we have a way to see which customers are using which endpoints (through API usage logs or similar). This makes it possible, if time-consuming, to coordinate breaking changes with them. However, imagine we had an API endpoint that was open to the world with no authentication; in that case, we’d have no way to know who’s using it, and no effective way to coordinate with them to update their code to accommodate a breaking change. Unauthenticated open endpoints are one extreme; the other extreme might be a TypeScript utility function in our API repo. In a case like that, if we change the function’s return type or make it take an extra argument, TypeScript will literally guide us to all the callers of the function that are broken by this change.

- Corollary: Invest in tracking the usage of your APIs, because the easier you can identify callers, the easier it is to change the API.

- How much would the callers benefit from a particular addition to your code’s promises? Promising that a field won’t be null is a great example of promising something that isn’t strictly necessary to promise, and yet doing so can be worth it if it saves a lot of users of the code a lot of boilerplate and/or edge-case-handling work, which they might need were the field marked as nullable.

- How hard will it be to uphold the promise over time?

Finally, it’s not just the case that code promises things to its users; it also demands some things from them, usually in the form of required arguments. Here again, there’s a similar balancing act: the more your code demands, the more flexible it is. For example, future use cases may be much easier to support if your code can count on having certain arguments provided to it, and those arguments have no cost from its perspective (it can always ignore some of them, or loosen those demands later by making some optional). However, everything your code demands is something that its users must be able to promise, so each demand can complicate every user (e.g., each one has to get the data for the required argument from somewhere).
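To illustrate the “loosen those demands later” point, here is a small sketch. The `archive` functions and the `actorId` argument are hypothetical, chosen only to show the mechanics:

```typescript
// Hypothetical names throughout; a sketch, not code from our repo.

// Version 1 demands `actorId` from every caller.
type ArchiveArgsV1 = { entityId: string; actorId: string };

function archiveV1(args: ArchiveArgsV1): string {
  return `archived ${args.entityId} (by ${args.actorId})`;
}

// Loosening the demand later is non-breaking: every V1 caller already
// satisfies V2, because a required string is assignable to an optional one.
type ArchiveArgsV2 = { entityId: string; actorId?: string };

function archiveV2(args: ArchiveArgsV2): string {
  const by = args.actorId ?? "system";
  return `archived ${args.entityId} (by ${by})`;
}

// An existing V1-style call site works unchanged against V2.
const v1StyleArgs: ArchiveArgsV1 = { entityId: "e1", actorId: "u1" };
const archiveResult = archiveV2(v1StyleArgs);
```

Going the other way (making an optional argument required) would break every caller that omits it, which is why starting with the stricter demand keeps more options open for the code, at the cost of more work for its callers.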

Make Illegal States Unrepresentable

A huge part of what makes programming hard is that the system can be in an inordinate number of states, and it’s very hard to write bug-free code that properly accounts for all of them. By making illegal states/inputs unrepresentable, we can greatly reduce the number of bugs and make our code more reliable and easier to reason about, with fewer assertions needed.

See https://www.hillelwayne.com/post/constructive/, which refers to this as “constructive data modeling” and reviews some common approaches.

One common manifestation of this principle in our code base is the use of discriminated unions rather than having multiple cases smooshed into one object type with some nullable properties.

To give a concrete example, you should avoid something like:

type ProfileOrBusinessId = { profileId?: string; businessId?: string }

Instead, prefer:

type ProfileOrBusinessId =
  | { type: "BUSINESS_ID", id: string }
  | { type: "PROFILE_ID", id: string }

The difference is that the former type allows zero or two ids to be provided (both of which should be illegal) and forces all the consuming code to handle both illegal cases. This can create cascading complexity (e.g., if a consuming function throws when it gets no ids, then its caller has to be prepared to catch that error too), and different bits of consuming code could easily end up doing different things in the case where both ids are provided (if they check the properties in a different order). By contrast, the second type requires exactly one id to be provided.
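Here is a sketch of what consuming code looks like with the discriminated union. The `describeOwner` function and its output format are hypothetical; the `never` assignment in the default branch is a standard TypeScript exhaustiveness check:

```typescript
type ProfileOrBusinessId =
  | { type: "BUSINESS_ID"; id: string }
  | { type: "PROFILE_ID"; id: string };

// Hypothetical consumer; the output format is invented for illustration.
function describeOwner(owner: ProfileOrBusinessId): string {
  switch (owner.type) {
    case "BUSINESS_ID":
      return `business:${owner.id}`;
    case "PROFILE_ID":
      return `profile:${owner.id}`;
    default: {
      // If a new variant is added to the union and not handled above,
      // this assignment stops compiling.
      const unreachable: never = owner;
      return unreachable;
    }
  }
}
```

There is no branch for “no id” or “both ids”, because neither can be constructed in the first place.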

“Parse, Don’t Validate”

After your code verifies something about the structure of the input value it’s working with, strongly consider encoding that result into the types. See also https://lexi-lambda.github.io/blog/2019/11/05/parse-don-t-validate/

Our extensive use of tagged string types is a good example of that: after we verify something at runtime, we record it in the types so that we can write code that, at the type level, requires the right kind of argument. (See, e.g., OwnedEntityId and isOwnedEntityId.)
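The sketch below shows one common way to build a tagged (“branded”) string type in TypeScript. It reuses the names OwnedEntityId and isOwnedEntityId, but the definitions here are assumptions: the real ones may look different, and the `owned:` prefix rule, `loadOwnedEntity`, and `handleRequest` are invented purely for illustration.

```typescript
// Assumed shape of a tagged string type; the real definition may differ.
type OwnedEntityId = string & { readonly __brand: "OwnedEntityId" };

// Runtime check that doubles as a type guard. The "owned:" prefix rule
// is a made-up validation rule for this example.
function isOwnedEntityId(value: string): value is OwnedEntityId {
  return value.startsWith("owned:") && value.length > "owned:".length;
}

// Hypothetical function that only accepts ids that were already checked.
function loadOwnedEntity(id: OwnedEntityId): string {
  return `loading ${id}`;
}

// Hypothetical entry point that receives an unchecked string.
function handleRequest(rawId: string): string {
  if (!isOwnedEntityId(rawId)) {
    return "rejected";
  }
  // Here `rawId` has been narrowed to OwnedEntityId, so this call compiles.
  return loadOwnedEntity(rawId);
}
```

Passing a plain `string` to `loadOwnedEntity` is a compile error, so the check can’t be forgotten, and it doesn’t need to be repeated further down the call chain.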

Duplicate Coincidentally-Identical Code

Two pieces of code that currently do the same thing should only reference common logic or types for that functionality (e.g., a shared base class or a shared utility function) — that is, the code should only be “made DRY” — if the two pieces of code should always, automatically evolve together.

Some concrete examples:

- The input type (e.g., DTO) for updating an entity should not inherit from the type used when creating the entity. If the update type were to extend the creation type, that would mean that any new field that can be provided on creation would also automatically be allowed in the input to an update. However, not every new field should be updatable: having fields be automatically accepted in an update input can create security risks, and it extends the contract that your code has to uphold. On balance, then, it’s likely better to force the developer to decide, for each new field, whether and how that field should be allowed on update, even if that requires a bit more duplication and boilerplate for fields that are settable on create and update. (This example is just a variation of the “fragile base class” problem.)

- The types used in the application to reflect the shape of values stored in the database should not be the same as, or in any way linked to, the types that the application’s services use to reflect their legal inputs, outputs, or intermediate values. The reason is that a change to the type the application is working with does not automatically change the shape of data in the database; that data will only change if the developer remembers to explicitly migrate it. Therefore, having the type used for data in the database be linked to (and therefore automatically change with) other types turns the database types into lies, and actively hides the need to first migrate the data in the database, which the compiler would otherwise warn about.
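A sketch of the first example, using a hypothetical `Widget` entity (all type, field, and function names below are invented for illustration):

```typescript
// Hypothetical entity and input types.
type Widget = { id: string; name: string; ownerId: string; color: string };

type CreateWidgetInput = {
  name: string;
  ownerId: string; // set once, at creation
  color: string;
};

// Written out independently rather than derived from CreateWidgetInput
// (e.g., via Partial<CreateWidgetInput>). A field added to the create
// type does not become updatable until someone adds it here on purpose.
type UpdateWidgetInput = {
  name?: string;
  color?: string;
  // `ownerId` is deliberately absent: ownership can't change via update.
};

function applyUpdate(widget: Widget, input: UpdateWidgetInput): Widget {
  return {
    ...widget,
    name: input.name ?? widget.name,
    color: input.color ?? widget.color,
  };
}
```

The `name` and `color` declarations are duplicated across the two input types, but that duplication is what forces an explicit decision for each new field.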

In general, consider that, when multiple pieces of code reference some shared logic, updating that logic in the one place where it’s defined will lead those changes to cascade everywhere; that’s the whole point of DRY, but it’s a double-edged sword: sometimes, it prevents bugs by keeping every user of the shared logic in sync, on the latest (most-correct) version; but, sometimes the automatic propagation of changes (to places where the new assumptions/behavior of the new code should not apply) is itself the cause of bugs. Hence the original guidance: DRY up code that you’re reasonably confident should always, automatically evolve together, or DRY up code where, because of the specifics of the domain, the benefits of automatic change propagation outweigh the risks.

[Written by Zingage Head of Operations Samantha Tepper]

The mythology of startup creation follows a familiar script: brilliant founder has breakthrough insight, starts company in garage, changes world. But there's a more interesting pattern hiding in plain sight: many of the most transformative companies aren't born from solitary inspiration – they emerge from the DNA of other great companies.

This isn't just about talented people leaving to start new ventures. It's about how solving hard problems inside fast-growing companies creates the perfect conditions for identifying massive opportunities.

Consider Snowflake, now valued at over $50 billion. Its founders, Benoit Dageville and Thierry Cruanes, spent years as data architects at Oracle, where they intimately understood the limitations of traditional data platforms. This deep operational experience with Oracle's technology and customer needs didn't just inform Snowflake's creation – it was essential to it. They didn't just have an idea for better data warehousing; they had years of pattern recognition about what actually worked and what didn't at massive scale.

We see similar patterns elsewhere in enterprise software. Some trace Retool's innovative approach to internal tooling back to experiences at Palantir, where the challenges of working with complex data systems reportedly inspired new thinking about how to build internal tools. While the exact details of this lineage aren't widely documented, it points to a broader truth about how innovation propagates through the technology industry.

Why does this pattern keep repeating? Three factors make great companies natural incubators for even greater ones:

- Scale creates visibility into problems worth solving. When you're operating at scale, you encounter problems that aren't just annoying – they represent massive market opportunities if solved. The internal tools teams build to solve these problems often have immediate product-market fit because they're built for real needs.

- High-performance teams develop exceptional pattern recognition. Working on complex problems at scale gives builders a sixth sense for which solutions could become standalone products. This isn't just about technical insight – it's about understanding what makes a solution truly valuable.

- These environments force pragmatic innovation. When you're building internal tools for a growing company, you can't hide behind theory. The solutions either work at scale or they don't. This creates a unique kind of builder – one who combines vision with practical experience.

We're entering an era where the most valuable companies will emerge from the DNA of today's scale-ups. Not through acquisition or investment, but through the natural evolution of solving hard problems with great teams. The next wave of breakthrough startups is probably being built right now as internal tools somewhere.

At Zingage, we're assembling a team of renegades who refuse the AI replacement narrative, and embrace abundance. We're building Agent Swarm that allows everyday entrepreneurs - not just those in Silicon Valley - to bootstrap their businesses from zero to thousands of customers. If this sounds interesting, we'd love to chat at [email protected].

Kuzushi is the team behind Zingage.com. We are a team of builders deploying AI for the Bedrock Economy.

The Bedrock Economy is the part of the economy that’s essential to our everyday lives, yet hard to automate and impossible to offshore. These are the same people taking care of our parents, moving goods from coast to coast, and building infrastructure for the coming decades.

When COVID hit in 2020, more than 200,000 businesses in healthcare, construction, food, and hospitality closed their doors permanently. Though demand was often through the roof, these businesses struggled operationally. Behind the scenes, an army of back offices was battling fragmented software, employee turnover, and a changing regulatory environment. With healthcare costs up 25% in the last 5 years and the price of housing seemingly unreachable for millions of Americans, the stakes have never been higher.

"Our goal is to make running these essential businesses as easy as selling on Shopify."

By adopting AI, the Bedrock Economy can leapfrog from paper forms and fax machines to autonomous agents. Companies will soon be able to automate whole categories of back-office processes without ripping out their systems of record, going through expensive retraining, or needing hyper-customized software.

A picture of our customer workflow pre-Kuzushi. They were using 5 different systems to manage their patient onboarding process.

At Kuzushi, we are building anthropomorphic AI – a digital colleague on your side 24/7.

We see a future where everyday entrepreneurs can scale without growing their headcount, reinvest these savings into their employees, and elevate the role of back offices from paper pushers to process designers. We see a future where new entrepreneurs are ushered into best operational practices from Day 1, so that they can get back to growth.

Since launching in 2023, we’ve successfully scaled our first vertical. We’re now working with some of America’s largest healthcare providers, onboarding thousands of new patients and clinicians every month, and we are slated to reach profitability this year. We raised a seed round from the same investors behind generational companies like Airtable, Deel, and Figma.

At Kuzushi, we’re humanists at heart. We believe entrepreneurship is the essence of the American Dream, and we believe that our work will enable today’s employees to earn a stake in tomorrow’s economy. You’ll be working alongside teammates who’ve scaled marketplaces to 9 figures in GMV and early builders at AI and fintech unicorns like Ramp.

Kuzushi is always looking to work with talented engineers, designers, and researchers. We heavily prioritize learning speed over seniority and give highly competitive ownership to those who are in it for the long run. When you join, expect to work on large-scale data platforms, pioneering building blocks for “work”, and designing AI-native user interaction.

What is Kuzushi?

Kuzushi means to break balance. It is a term used in Judo that describes what a Judoka must establish or capitalize on to take down their opponent. We named the company Kuzushi because we believe that winning in markets works the same way: it is a series of Kuzushi moments that we either create or seize to push forward against tiny odds of success. Kuzushi is the highest-agency way to describe “why now” moments.

We see Kuzushi, or the opportunity for Kuzushi, in so many places. Two examples: in engineering, we are able to ship code several times faster by leveraging Cursor; in sales, we’re automating high-touch B2B outreach with AI copy that would’ve cost hours of sales time to create. Neither of these opportunities will last forever, but while they do, we will capitalize on them to maximize distribution and fortify our position.

“Whatever you do, do it constantly and massively increase the scope of your ambition.”