- Create a versatile blog site

- Create a framework that makes it easy to add external data to the site

- Give the site the capacity to replicate the logging and rating I do on Serialized and Letterboxd.

- Be able to pull down RSS feeds from other sites and create forward links to my other sites

- Create forward links to sites I want to post about.

- Create a way to pull in my Goodreads data and display it on the site

- Create a way to automate pulls from other data sources

- Combine easy inputs like text lists and JSON data files with markdown files that I can build on top of.

- Add a TMDB credit to footer in base.njk

- Make sure tags do not repeat in the displayed tag list.

- Get my Kindle Quotes into the site

- YouTube Channel Recommendations

- Minify HTML via Netlify plugin.

- Log played games

Day 19

Ok, so I'm going to start setting up the unit tests now to build up to the functionality I want.

Here are the first two tests, initiating my connection and getting posts:

test('checkPDSForPosts returns the correct number of posts', async () => {

let connectionManager = await getConnection();

if (false == connectionManager) {

throw new Error("connection manager failed");

}

const posts = await checkPDSForPosts(10, 'app.bsky.feed.post', connectionManager.rpc, connectionManager.manager, connectionManager.config);

expect(posts.length).toBe(10);

})

test('Can get a specific post with getSpecificRecord', async () => {

let connectionManager = await getConnection();

if (false == connectionManager) {

throw new Error("connection manager failed");

}

const { rpc, manager, config } = connectionManager;

const rkey = '3kulbtuuixs27'; // Replace with a valid rkey

const post = await getSpecificRecord(rkey, rpc, config.handle, 'app.bsky.feed.post');

expect(post).toBeDefined();

expect(post.value).toBeDefined();

console.log(post);

expect(post.value.createdAt).toBe("2024-06-10T14:27:41.118Z");

});

This all works well, so we've got the basics.

The next step is to look at the pushOrUpsertPost function and get it working.

I was hoping there would be some sort of search function in the PDS, and I'm not the only one. There's a good walkthrough of the basics of the ATmosphere that's a place to start, as is the data model. The protocol docs do seem to indicate that there is no standard for search at the protocol level. There's a listener for Lexicons, but most things are built around fetching specific posts.

I guess there's no real option other than building my own index, which is not the way to go for this project. I'll have to make sure I save rkey values into the files they are mapped to.

I'll need to generate TIDs consistently. That should be its own function too, which will let me configure it more effectively.
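For reference, a TID (timestamp identifier) packs a microsecond Unix timestamp and a small clock identifier into one 64-bit integer and writes it as 13 characters of "base32-sortable" text. That is why the same date always yields the same rkey, and why TIDs sort by time. Below is a minimal sketch of that encoding. It assumes the layout from the atproto record key spec: a top bit of zero, then 53 bits of microseconds, then 10 bits of clock ID. The names `encodeTid` and `decodeTid` are hypothetical; this is not the @atcute/tid implementation behind `TID.create`.

```typescript
// Hypothetical sketch of TID encoding; not the @atcute/tid implementation.
// Assumed layout: 1 zero bit, 53 bits of microseconds since the Unix epoch,
// 10 bits of clock identifier, written as 13 base32-sortable characters.
const B32_SORTABLE = "234567abcdefghijklmnopqrstuvwxyz";

export function encodeTid(micros: number, clockId: number): string {
  if (!Number.isSafeInteger(micros) || micros < 0) {
    throw new RangeError("micros must be a non-negative safe integer");
  }
  if (!Number.isInteger(clockId) || clockId < 0 || clockId > 1023) {
    throw new RangeError("clockId must fit in 10 bits");
  }
  // Shift the timestamp up 10 bits and put the clock ID in the low bits.
  let value = (BigInt(micros) << 10n) | BigInt(clockId);
  // 13 characters x 5 bits = 65 bits; the top bit is always zero here.
  let out = "";
  for (let i = 0; i < 13; i++) {
    out = B32_SORTABLE[Number(value & 31n)] + out;
    value >>= 5n;
  }
  return out;
}

export function decodeTid(tid: string): { micros: number; clockId: number } {
  let value = 0n;
  for (const ch of tid) {
    const idx = B32_SORTABLE.indexOf(ch);
    if (idx < 0) throw new Error(`invalid TID character: ${ch}`);
    value = (value << 5n) | BigInt(idx);
  }
  return { micros: Number(value >> 10n), clockId: Number(value & 1023n) };
}

// Same date in, same TID out: that makes re-running an upload hit the same rkey.
const ms = Date.parse("2024-06-10T14:27:41.118Z");
console.log(encodeTid(ms * 1000, 0) === encodeTid(ms * 1000, 0)); // true
```

Because the alphabet is in ASCII order and every TID has the same length, plain string comparison orders TIDs by timestamp.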

Let's cover that with tests too:

test('can generate a TID consistently with a record', () => {

const record = { date: "2024-06-10T14:27:41.118Z" };

const tid = generateTID(record);

expect(tid).toBeDefined();

const tid2 = generateTID(record);

expect(tid).toBe(tid2);

});

test('can generate TIDs without a record', async () => {

const tid = generateTID(null);

expect(tid).toBeDefined();

await new Promise(resolve => setTimeout(resolve, 1000));

const tid2 = generateTID(null);

expect(tid).not.toBe(tid2);

});

Let's do the insert!

Let's run a test to insert the first record:

test('update a post with pushOrUpsertPost', async () => {

let connectionManager = await getConnection();

if (false == connectionManager) {

throw new Error("connection manager failed");

}

const { rpc, manager, config } = connectionManager;

const lex = 'test.record.activity';

const record = {

$type: lex,

type: 'test',

date: new Date(),

testProject: 'marksky-pub',

testContext: 'ts-vitest',

testOwner: 'AramZS'

}

let result = await pushOrUpsertPost(false, rpc, config.handle, lex, record);

expect(result).toBeDefined();

expect(result.rKey).toBeDefined();

expect(result.resultRecord).toBeDefined();

});

That worked! We can take the rkey 3mewhxxahis3h and pass it in so this gets upserted (hopefully).

Let's do the update.

Update the test to:

test('update a post with pushOrUpsertPost', async () => {

let connectionManager = await getConnection();

if (false == connectionManager) {

throw new Error("connection manager failed");

}

const { rpc, manager, config } = connectionManager;

const lex = 'test.record.activity';

const record = {

$type: lex,

type: 'test',

date: new Date(),

testProject: 'marksky-pub',

testContext: 'ts-vitest',

testOwner: 'AramZS',

insertStatus: 'upsert'

}

let result = await pushOrUpsertPost('3mewhxxahis3h', rpc, config.handle, lex, record);

expect(result).toBeDefined();

expect(result.rKey).toBeDefined();

expect(result.resultRecord).toBeDefined();

});

Looks like this uploaded!

Uploaded activity with rkey: 3mewhxxahis3h {

uri: 'at://did:plc:t5xmf33p5kqgkbznx22p7d7g/test.record.activity/3mewhxxahis3h',

cid: 'bafyreifkiskhiuv6bf2jrskn2xkdpyi4yf2fq54k56z3gxcaczhkz6jqbu',

value: {

date: '2026-02-15T21:25:55.735Z',

type: 'test',

'$type': 'test.record.activity',

testOwner: 'AramZS',

testContext: 'ts-vitest',

testProject: 'marksky-pub'

}

}

But it isn't adding the additional property or changing the date value. It isn't on the raw record either.

Hmm. I had assumed updating a record in place was possible, but is it not? I see that one coder, verdverm, created a flow that copies, deletes, and inserts a new version of the record. There's nothing on this in the post examples for BlueSky. I'll ask. But this is going well.

The next step will be grabbing a Markdown file and manipulating it so I can read the rkey from it and write the rkey back to it. I'll need to handle the case where the rkey is already there, maybe by comparing the values and only pushing updates when the file has changed. After that, we'll want to scan a specified directory for Markdown files.
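One way to do the "only push when the file changed" check is to store the rkey plus a hash of the last-pushed content alongside each post (for example in its front matter) and compare them on every run. The sketch below is minimal and rests on assumptions. `SyncState`, `planSync`, and the `contentHash` field are hypothetical names, and reading and writing the front matter itself is left out.

```typescript
import { createHash } from "node:crypto";

// Hypothetical shape of what gets saved into a post's front matter after a push.
export interface SyncState {
  rkey?: string;        // record key returned by the PDS on first push
  contentHash?: string; // sha256 of the content that was last pushed
}

export type SyncAction = "create" | "update" | "skip";

// Decide what to do with one Markdown file, given its saved state and current body.
export function planSync(state: SyncState, body: string): { action: SyncAction; hash: string } {
  const hash = createHash("sha256").update(body, "utf8").digest("hex");
  if (!state.rkey) {
    // Never pushed: create a new record, then save the rkey and hash.
    return { action: "create", hash };
  }
  if (state.contentHash === hash) {
    // Pushed before and unchanged since: nothing to do.
    return { action: "skip", hash };
  }
  // Pushed before but edited since: upsert using the saved rkey.
  return { action: "update", hash };
}

const first = planSync({}, "# Hello");
const again = planSync({ rkey: "3mewhxxahis3h", contentHash: first.hash }, "# Hello");
const edited = planSync({ rkey: "3mewhxxahis3h", contentHash: first.hash }, "# Hello, world");
console.log(first.action, again.action, edited.action); // create skip update
```

Hashing the content instead of comparing file timestamps means a file that is touched but not edited won't trigger a needless write to the PDS.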

I think maybe I need to get the original record, pull the cid from a top-level object like

{

"uri": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/test.record.activity/3mewhxxahis3h",

"cid": "bafyreifkiskhiuv6bf2jrskn2xkdpyi4yf2fq54k56z3gxcaczhkz6jqbu",

"value": {

"date": "2026-02-15T21:25:55.735Z",

"type": "test",

"$type": "test.record.activity",

"testOwner": "AramZS",

"testContext": "ts-vitest",

"testProject": "marksky-pub"

}

}

and then pass it into a field on the record at swapCommit?

I think that's what I'm seeing in the verdverm example:

let i: PutRecordInputSchema = {

repo,

collection,

rkey,

record,

}

if (swapCommit) {

i.swapCommit = swapCommit

}

if (swapRecord) {

i.swapRecord = swapRecord

}

return agent.com.atproto.repo.putRecord(i)

Oh wait, my fkup here. I forgot to put the right flow in.

Ok, I now have a full update-record flow for atproto here:

export const generateTID = (record: any) => {
  // Use the record's own date when it has one, so the same record always maps to the same TID
  const recordDate: Date = record?.date ? new Date(record.date) : new Date();
  // getTime() returns milliseconds since the Unix epoch
  const recordInMS = recordDate.getTime();
  // TIDs are built from microseconds, so convert milliseconds to microseconds
  return TID.create(recordInMS * 1000, CLOCK_ID);
}

// Take the rpc client as a parameter instead of relying on a variable outside this scope
export const putRecord = async (rpc: Client, input: any) =>
  await ok(rpc.post('com.atproto.repo.putRecord' as any, { input }));

export const pushOrUpsertPost = async (origRkey: string | false, rpc: Client, handle: string, collection: string, recordData: any) => {
  // Derive the key from the record's date so we don't create duplicates
  const rKey = origRkey ? origRkey : generateTID(recordData);
  const newRecord = !origRkey;
  console.log(`Using rkey: ${rKey}. New status: ${newRecord}`);
  const inputObj = {
    repo: handle,
    collection,
    rkey: rKey,
    record: recordData,
  };
  if (!newRecord) {
    // resultRecord = await getSpecificRecord(rKey, rpc, handle, collection);
    console.log('updating record');
  }
  // putRecord creates the record if the rkey is new and replaces it if it already exists
  const resultRecord = await putRecord(rpc, inputObj);
  console.log(`Uploaded activity with rkey: ${rKey}`, resultRecord);
  return { rKey, resultRecord };
};

On the ATProto Touchers discord, user Nelind gave me the heads up on what swap is used for:

swapRecord and swapCommit essentially say "only perform this update if ..." swapRecord being if the current value of the record is the value provided and swapCommit being if the current commit has the CID provided

basically you can say you only want to update if no other client has changed either the record you want to update or the repo as a whole since last you saw it

you use it to avoid overriding changes other sessions have made or write invalid data due to changes to other records that other sessions have made

Useful and good to know. Maybe this is something I'll want to use if I want a more complex but foolproof updating flow.
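To make the swapRecord idea concrete, here is a toy, in-memory model of the check Nelind describes. A write only goes through if the caller's idea of the record's current CID still matches what is stored. `FakeRepo` and its counter-based "CIDs" are invented for illustration. A real PDS derives CIDs from the record content and reports a failed swap as an API error, not this exception.

```typescript
// Toy model of compare-and-swap on records; not how a PDS is implemented.
type StoredRecord = { cid: string; value: unknown };

export class FakeRepo {
  private records = new Map<string, StoredRecord>();
  private version = 0;

  get(rkey: string): StoredRecord | undefined {
    return this.records.get(rkey);
  }

  // Like putRecord: create or replace. If swapRecord is given, only write when
  // it matches the CID currently stored for that rkey.
  put(rkey: string, value: unknown, swapRecord?: string): string {
    const current = this.records.get(rkey);
    if (swapRecord !== undefined && current?.cid !== swapRecord) {
      throw new Error(`swap failed: expected ${swapRecord}, found ${current?.cid ?? "nothing"}`);
    }
    // Stand-in for a content-derived CID: just a counter.
    const cid = `fakecid-${++this.version}`;
    this.records.set(rkey, { cid, value });
    return cid;
  }
}

const repo = new FakeRepo();
const cid1 = repo.put("3mewhxxahis3h", { insertStatus: "new" });
// Two sessions both read the record while its CID is cid1...
repo.put("3mewhxxahis3h", { insertStatus: "upsert" }, cid1); // first writer succeeds
try {
  repo.put("3mewhxxahis3h", { insertStatus: "stale" }, cid1); // second writer is stale
} catch (err) {
  console.log((err as Error).message); // swap failed: expected fakecid-1, found fakecid-2
}
```

In the real flow, the CID to pass would come from fetching the record first, as in the getRecord response shown earlier with its top-level `cid`.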


Day 18

Ok, that worked, what does an article update look like?

I made an edit to my one post on Leaflet. Looks like it just adjusts the record in place, which is nice. Updates are pretty easy then.

Ok, so then how do I integrate this with my publishing flow? Let's take a look at a basic authenticated flow from BlueSky. It looks like there are also some ways to reference the standard types for standard.site. This uses the atcute package, which seems to be well respected.

There seems to be an interesting example of comments out there, but that doesn't post anything.

There's also an interesting exploration of upserting records.

A good basic example here as well.

To make this flexible, we should make it its own extension for static sites. Let's try to make a basic version.

First, I want to get used to tangled, so I'll set the repo up on there.

I'm pretty sure my PDS host would be https://bsky.social right?
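Rather than guessing, you can look up the PDS that actually hosts an account in its DID document. It is the service entry with the id `#atproto_pds`. For accounts created on Bluesky, bsky.social generally works as the login entryway, but the repo itself may live on a different host. Here's a small sketch that pulls the endpoint out of a DID document object. Fetching the document is left out (for a did:plc it can be fetched from plc.directory). The sample document and endpoint below are made up.

```typescript
// Minimal slice of a DID document; only the fields this sketch reads.
interface DidService {
  id: string;
  type: string;
  serviceEndpoint: string;
}
interface DidDocument {
  id: string;
  service?: DidService[];
}

// Return the PDS URL listed in a DID document, or undefined if none is listed.
// The id may be relative ("#atproto_pds") or absolute ("did:plc:...#atproto_pds").
export function findPdsEndpoint(doc: DidDocument): string | undefined {
  const svc = doc.service?.find(
    (s) => s.id.endsWith("#atproto_pds") && s.type === "AtprotoPersonalDataServer",
  );
  return svc?.serviceEndpoint;
}

// Made-up example document; real ones come from the DID's resolver.
const exampleDoc: DidDocument = {
  id: "did:plc:example",
  service: [
    {
      id: "#atproto_pds",
      type: "AtprotoPersonalDataServer",
      serviceEndpoint: "https://pds.example.com",
    },
  ],
};
console.log(findPdsEndpoint(exampleDoc)); // https://pds.example.com
```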

I can start with the example. I'll reorg it a little into the pieces I need as a start.

Ok, a nice place to pick up later from.


Day 17

Let's start today by uploading a blob for the image.

goat blob upload public/img/posts/1443px-Typical-orb-web-photo.jpg

That seems to have uploaded it. I get back the response:

{

"$type": "blob",

"ref": {

"$link": "bafkreig2247wcpqjkqy2ukjh4gjyqhpl32kg3pva4x55npjmuh4joeware"

},

"mimeType": "image/jpeg",

"size": 347901

}

Ok, so here's the formed document so far:

{

"$type": "site.standard.document",

"publishedAt": "2024-06-08T10:00:00.000Z",

"site": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/site.standard.publication/3mbrgnnqzrr2q",

"path": "/essays/the-internet-is-a-series-of-webs/",

"title": "The Internet is a Series of Webs",

"description": "The fate of the open web is inextricable from the other ways our world is in crisis. What can we do about it?",

"coverImage": {

"$type": "blob",

"ref": {

"$link": "bafkreig2247wcpqjkqy2ukjh4gjyqhpl32kg3pva4x55npjmuh4joeware"

},

"mimeType": "image/jpeg",

"size": 347901

},

"textContent": "",

"bskyPostRef": "https://bsky.app/profile/chronotope.aramzs.xyz/post/3kulbtuuixs27",

"tags": ["IndieWeb", "Tech", "The Long Next"],

"updatedAt":"2024-06-08T10:30:00.000Z"

}

I think this is a full post now? I just need to add the full text content from here. The textContent field should be a plaintext representation of the document's contents, without Markdown or other formatting.
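Since textContent is meant to be plaintext, the Markdown needs its formatting stripped before it goes in. A rough regex pass covers the common cases. This is a hypothetical helper, not a Markdown parser. It ignores nested emphasis, HTML blocks, and reference-style links, and it will also strip underscores from snake_case words, so a real parser would be more reliable.

```typescript
// Crude Markdown-to-plaintext pass for textContent; not a full Markdown parser.
export function markdownToPlaintext(md: string): string {
  return md
    .replace(/`{3}[^\n]*\n([\s\S]*?)`{3}/g, "$1") // fenced code: keep the code, drop the fences
    .replace(/!\[([^\]]*)\]\([^)]*\)/g, "$1")    // images: keep the alt text (must run before links)
    .replace(/\[([^\]]+)\]\([^)]*\)/g, "$1")     // links: keep the link text
    .replace(/^#{1,6}[ \t]+/gm, "")              // heading markers
    .replace(/^>[ \t]?/gm, "")                   // blockquote markers
    .replace(/^[ \t]*[-*+][ \t]+/gm, "")         // bullet markers
    .replace(/(\*\*|__)(.+?)\1/g, "$2")          // bold
    .replace(/(\*|_)(.+?)\1/g, "$2")             // italics
    .replace(/`([^`]+)`/g, "$1")                 // inline code
    .trim();
}

console.log(JSON.stringify(markdownToPlaintext("## The Wild Web\n\nRead **this** [essay](https://example.com).")));
// "The Wild Web\n\nRead this essay."
```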

What is out there for site.standard.document?

I see some folks are using a Markdown type, even if it doesn't have an NSID:

"content": {

"$type": "site.standard.content.markdown",

"text": "<markdown here>",

"version": "1.0"

},

I'm not sure that is the right hierarchy. Should it be site.standard.document.content.markdown? I see others going that direction. Well, I might as well use what is out there.

I'll write a little script to pull the markdown into a single line:

#!/bin/bash

# Check if file argument is provided

if [ $# -eq 0 ]; then

echo "Usage: $0 <markdown-file>"

exit 1

fi

# Check if file exists

if [ ! -f "$1" ]; then

echo "Error: File '$1' not found"

exit 1

fi

# Convert markdown to single line with \n for linebreaks and escape double-quotes

awk '{gsub(/"/, "\\\""); printf "%s\\n", $0}' "$1" | sed 's/\\n$//'

Final document is:

{

"$type": "site.standard.document",

"publishedAt": "2024-06-08T10:00:00.000Z",

"site": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/site.standard.publication/3mbrgnnqzrr2q",

"path": "/essays/the-internet-is-a-series-of-webs/",

"title": "The Internet is a Series of Webs",

"description": "The fate of the open web is inextricable from the other ways our world is in crisis. What can we do about it?",

"coverImage": {

"$type": "blob",

"ref": {

"$link": "bafkreig2247wcpqjkqy2ukjh4gjyqhpl32kg3pva4x55npjmuh4joeware"

},

"mimeType": "image/jpeg",

"size": 347901

},

"textContent": "<textContent>",

"content": {

"$type": "site.standard.content.markdown",

"text": "<markdown here>",

"version": "1.0"

},

"bskyPostRef": "https://bsky.app/profile/chronotope.aramzs.xyz/post/3kulbtuuixs27",

"tags": ["IndieWeb", "Tech", "The Long Next", "series:The Wild Web"],

"updatedAt":"2024-06-08T10:30:00.000Z"

}

bskyPostRef can't be a string, apparently, as the post did not validate in goat. I was able to find the reason using atproto.tools. So now I know from the definition that I need:

"bskyPostRef": {

"$type": "com.atproto.repo.strongRef",

"uri": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3kulbtuuixs27",

"cid": "bafyreigh7yods3ndrmqeq55cjisda6wi34swt7s6kkduwcotkgq5g5y2oe"

}

Since this will be my first document record, I have to push it without validation:

goat record create fightwithtools-publication.json --no-validate

and I get back:

at://did:plc:t5xmf33p5kqgkbznx22p7d7g/site.standard.document/3mdbvp5q2kz2l bafyreiedky4yjivfcm5df5ygqy7vkt3q3qdvzppcg7mcq4osyefjaizyd4

And hey, looks like it worked!

For the curious, my final JSON doc was:

{

"$type": "site.standard.document",

"publishedAt": "2024-06-08T10:00:00.000Z",

"site": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/site.standard.publication/3mbrgnnqzrr2q",

"path": "/essays/the-internet-is-a-series-of-webs/",

"title": "The Internet is a Series of Webs",

"description": "The fate of the open web is inextricable from the other ways our world is in crisis. What can we do about it?",

"coverImage": {

"$type": "blob",

"ref": {

"$link": "bafkreig2247wcpqjkqy2ukjh4gjyqhpl32kg3pva4x55npjmuh4joeware"

},

"mimeType": "image/jpeg",

"size": 347901

},

"textContent": "<textContent>",

"content": {

"$type": "site.standard.content.markdown",

"text": "<markdown here>",

"version": "1.0"

},

"bskyPostRef": {

"$type": "com.atproto.repo.strongRef",

"uri": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3kulbtuuixs27",

"cid": "bafyreigh7yods3ndrmqeq55cjisda6wi34swt7s6kkduwcotkgq5g5y2oe"

},

"tags": ["IndieWeb", "Tech", "The Long Next", "series:The Wild Web"],

"updatedAt":"2024-06-08T10:30:00.000Z"

}

Day 16

Ok, I was able to get the site information published!

Next up is pushing a blog post with the format.

goat account login -u chronotope.aramzs.xyz -p <app password>

So now we craft a JSON in the correct format for a post. We'll start with one of my essays.

If I want to link my main BlueSky post, I need to use a com.atproto.repo.strongRef apparently. This is a standard lexicon type.

I can pull it down with goat lex pull com.atproto.repo.strongRef.

There doesn't seem to be an example of that? I guess that makes sense if it isn't an actual object. But the docs indicate it can just be a string of either a URI or a CID. I could use the URL to the post, but let's do the CID? How does one get the CID? I see it in the metadata on PDSL, but it isn't in the document or in the goat data. What is a CID anyway? Well, I guess I'll look it up. Not so useful. Looks like there is a spec, though.

Seems complicated. I'll just do the URL for now.
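For later: a strongRef wants both an at:// URI and the CID of that exact version of the record. The bsky.app post URL carries enough to build the URI: the profile segment, the post rkey, and app.bsky.feed.post as the collection. The CID comes back next to `value` when you fetch the record with com.atproto.repo.getRecord, in the same `{ uri, cid, value }` shape goat shows. These helpers are hypothetical and only do the string work. They assume you substitute the account's DID for the handle.

```typescript
// Shape of a com.atproto.repo.strongRef: a URI plus the CID of one record version.
export interface StrongRef {
  $type: "com.atproto.repo.strongRef";
  uri: string;
  cid: string;
}

// Turn https://bsky.app/profile/<handle-or-did>/post/<rkey> into an at:// URI.
// Pass `did` to use the account's DID instead of whatever is in the URL.
export function bskyPostUrlToAtUri(url: string, did?: string): string {
  const { hostname, pathname } = new URL(url);
  const match = pathname.match(/^\/profile\/([^/]+)\/post\/([^/]+)\/?$/);
  if (hostname !== "bsky.app" || !match) {
    throw new Error(`not a bsky.app post URL: ${url}`);
  }
  const [, actor, rkey] = match;
  return `at://${did ?? actor}/app.bsky.feed.post/${rkey}`;
}

// The CID would come from the getRecord response for that URI.
export function toStrongRef(uri: string, cid: string): StrongRef {
  return { $type: "com.atproto.repo.strongRef", uri, cid };
}

const uri = bskyPostUrlToAtUri(
  "https://bsky.app/profile/chronotope.aramzs.xyz/post/3kulbtuuixs27",
  "did:plc:t5xmf33p5kqgkbznx22p7d7g",
);
console.log(uri); // at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3kulbtuuixs27
```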

Interesting that accept: [image/*] is the value for coverImage. I don't know what that means. Let's see if we can find some examples. Here's a fully formatted one.

{

"path": "/a/3mcqr6f5jdg23-hi-from-brookie",

"site": "at://did:plc:v46ojbiop5ebs5h7gaomixcc/site.standard.publication/3mcqr4rrb7x22",

"$type": "site.standard.document",

"title": "Hi from Brookie",

"content": {

"$type": "app.offprint.content",

"items": [

{

"$type": "app.offprint.block.imageGrid",

"images": [

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreihub3ikzctbstjy5e34g4hw2ux7tbryd7cpcqg7fbosaxlicapggu"

},

"mimeType": "image/png",

"size": 13270

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreib5zrxr33anmw6gxjr5232uo3y324xpizzv7c7433jugllobvulxu"

},

"mimeType": "image/png",

"size": 13325

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreifyqhedylyfocp3wmn4kcv4fe6b2n7cdpmfs2x2ljvdby64gvirjm"

},

"mimeType": "image/png",

"size": 13358

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreig2xm6piu7lzclljzyiahowvflajsylasqjtnl26m4mqz7gpwqgwu"

},

"mimeType": "image/png",

"size": 13322

},

"aspectRatio": {

"width": 480,

"height": 480

}

}

],

"gridRows": 2,

"aspectRatio": "mosaic"

},

{

"$type": "app.offprint.block.imageDiff",

"images": [

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreihub3ikzctbstjy5e34g4hw2ux7tbryd7cpcqg7fbosaxlicapggu"

},

"mimeType": "image/png",

"size": 13270

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreifyqhedylyfocp3wmn4kcv4fe6b2n7cdpmfs2x2ljvdby64gvirjm"

},

"mimeType": "image/png",

"size": 13358

},

"aspectRatio": {

"width": 480,

"height": 480

}

}

],

"labels": [

"Before",

"After"

],

"alignment": "center"

},

{

"$type": "app.offprint.block.imageCarousel",

"images": [

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreihub3ikzctbstjy5e34g4hw2ux7tbryd7cpcqg7fbosaxlicapggu"

},

"mimeType": "image/png",

"size": 13270

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreib5zrxr33anmw6gxjr5232uo3y324xpizzv7c7433jugllobvulxu"

},

"mimeType": "image/png",

"size": 13325

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreifyqhedylyfocp3wmn4kcv4fe6b2n7cdpmfs2x2ljvdby64gvirjm"

},

"mimeType": "image/png",

"size": 13358

},

"aspectRatio": {

"width": 480,

"height": 480

}

},

{

"image": {

"$type": "blob",

"ref": {

"$link": "bafkreig2xm6piu7lzclljzyiahowvflajsylasqjtnl26m4mqz7gpwqgwu"

},

"mimeType": "image/png",

"size": 13322

},

"aspectRatio": {

"width": 480,

"height": 480

}

}

],

"autoplay": false,

"interval": 3000

},

{

"$type": "app.offprint.block.text",

"plaintext": "I really like all the image things :) "

},

{

"$type": "app.offprint.block.text",

"facets": [

{

"index": {

"byteEnd": 8,

"byteStart": 0

},

"features": [

{

"did": "did:plc:eob75vcjtmbaef2tn4evc4sl",

"$type": "app.offprint.richtext.facet#mention",

"handle": "aka.dad"

}

]

},

{

"index": {

"byteEnd": 22,

"byteStart": 9

},

"features": [

{

"did": "did:plc:pgjkomf37an4czloay5zeth6",

"$type": "app.offprint.richtext.facet#mention",

"handle": "offprint.app"

}

]

},

{

"index": {

"byteEnd": 36,

"byteStart": 23

},

"features": [

{

"did": "did:plc:v46ojbiop5ebs5h7gaomixcc",

"$type": "app.offprint.richtext.facet#mention",

"handle": "brookie.blog"

}

]

},

{

"index": {

"byteEnd": 47,

"byteStart": 37

},

"features": [

{

"did": "did:plc:revjuqmkvrw6fnkxppqtszpv",

"$type": "app.offprint.richtext.facet#mention",

"handle": "pckt.blog"

}

]

}

],

"plaintext": "@aka.dad @offprint.app @brookie.blog @pckt.blog "

},

{

"$type": "app.offprint.block.text",

"plaintext": ""

},

{

"$type": "app.offprint.block.callout",

"emoji": "💡",

"plaintext": "Good Job Miguel ! "

},

{

"$type": "app.offprint.block.text",

"plaintext": ""

},

{

"$type": "app.offprint.block.text",

"plaintext": ""

}

]

},

"coverImage": {

"$type": "blob",

"ref": {

"$link": "bafkreif6sve2kjuioifion3apv277sggym4jxhlgrkuyqqxdck7cy7x6c4"

},

"mimeType": "image/png",

"size": 8455

},

"description": "brookie from pckt",

"publishedAt": "2026-01-18T21:08:15-07:00",

"textContent": "I really like all the image things :) \n@aka.dad @offprint.app @brookie.blog @pckt.blog \n\n💡 Good Job Miguel !"

}

It looks like the intended format for that field is:

"coverImage": {

"$type": "blob",

"ref": {

"$link": "bafkreif6sve2kjuioifion3apv277sggym4jxhlgrkuyqqxdck7cy7x6c4"

},

"mimeType": "image/png",

"size": 8455

},

The indication here seems to be that you push a blob of image data to the PDS and then reference it? Let's find the documentation. Ok, interesting. Something to figure out later; it's getting late now.
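The blob object is a reference, not the image itself. The bytes get uploaded to the PDS separately, and the record points at them by CID (`$link`), along with the MIME type and byte size. The `accept: [image/*]` on coverImage reads as a MIME pattern list, so `image/*` should admit image/png, image/jpeg, and so on. This is a hypothetical pre-flight check to run on a record before pushing it. The function names are mine, and it only checks shape, not whether the CID actually exists on the PDS.

```typescript
// Shape of a blob reference as it appears inside a record.
export interface BlobRef {
  $type: "blob";
  ref: { $link: string };
  mimeType: string;
  size: number;
}

// Does a MIME type satisfy an accept list like ["image/*"] or ["image/png"]?
export function mimeAccepted(accept: string[], mimeType: string): boolean {
  return accept.some((pattern) => {
    if (pattern === "*/*") return true;
    if (pattern.endsWith("/*")) {
      // "image/*" matches anything starting with "image/"
      return mimeType.startsWith(pattern.slice(0, -1));
    }
    return pattern === mimeType;
  });
}

// Shape check for a blob ref, plus the accept list and an optional size cap in bytes.
export function isValidBlobRef(value: unknown, accept: string[], maxSize?: number): value is BlobRef {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Partial<BlobRef>;
  return (
    v.$type === "blob" &&
    typeof v.ref?.$link === "string" &&
    typeof v.mimeType === "string" &&
    mimeAccepted(accept, v.mimeType) &&
    typeof v.size === "number" &&
    (maxSize === undefined || v.size <= maxSize)
  );
}

const cover = {
  $type: "blob",
  ref: { $link: "bafkreif6sve2kjuioifion3apv277sggym4jxhlgrkuyqqxdck7cy7x6c4" },
  mimeType: "image/png",
  size: 8455,
};
console.log(isValidBlobRef(cover, ["image/*"])); // true
```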

Ok, so here's the formed document so far:

{

"$type": "site.standard.document",

"publishedAt": "2024-06-08T10:00:00.000Z",

"site": "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/site.standard.publication/3mbrgnnqzrr2q",

"path": "/essays/the-internet-is-a-series-of-webs/",

"title": "The Internet is a Series of Webs",

"description": "The fate of the open web is inextricable from the other ways our world is in crisis. What can we do about it?",

"textContent": "",

"bskyPostRef": "https://bsky.app/profile/chronotope.aramzs.xyz/post/3kulbtuuixs27",

"tags": ["IndieWeb", "Tech", "The Long Next"],

"updatedAt":"2024-06-08T10:30:00.000Z"

}

Getting there!


Day 15

Looks cool. Let's try it like some others have. Going to try to post blog entries to my PDS.

1 - let's do some testing.

brew install goat

goat account login -u chronotope.aramzs.xyz -p <app password here>

Verify resolution:

goat resolve wyden.senate.gov works.

goat bsky post "A quick test" works. I get back:

at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3mbpifihvqm2q bafyreih7zufh4rezvg6h766djnptbaizm2thiuxx4chkzql7wmvdcskcwm

view post at: https://bsky.app/profile/did:plc:t5xmf33p5kqgkbznx22p7d7g/post/3mbpifihvqm2q

Can I then retrieve it?

goat get at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3mbpifihvqm2q

{

"$type": "app.bsky.feed.post",

"createdAt": "2026-01-05T22:29:16.86Z",

"text": "A quick test"

}

Yup!
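The at:// URIs that goat prints break down into an authority (the DID or handle), a collection (the Lexicon NSID), and a record key. A small parser makes that structure explicit. This is a simplified, hypothetical helper. It splits on slashes and doesn't validate every rule of the AT URI syntax.

```typescript
export interface AtUriParts {
  authority: string;   // DID or handle
  collection?: string; // NSID, e.g. app.bsky.feed.post
  rkey?: string;       // record key, e.g. a TID
}

// Simplified parser for at://authority[/collection[/rkey]]; not a full validator.
export function parseAtUri(uri: string): AtUriParts {
  const match = uri.match(/^at:\/\/([^/]+)(?:\/([^/]+))?(?:\/([^/]+))?\/?$/);
  if (!match) throw new Error(`not an at:// URI: ${uri}`);
  const [, authority, collection, rkey] = match;
  return { authority, collection, rkey };
}

const parts = parseAtUri("at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3mbpifihvqm2q");
console.log(parts.authority, parts.collection, parts.rkey);
```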

Let's publish a test on Leaflet.pub to see what it looks like on my PDS:

tags: "test"

$type: "pub.leaflet.document"

pages:

- id: "019b729c-2664-7ddb-9229-31341239825f"

$type: "pub.leaflet.pages.linearDocument"

blocks:

- $type: "pub.leaflet.pages.linearDocument#block"

block:

$type: "pub.leaflet.blocks.text"

facets:

plaintext: "This is a test on Leaflet to see how the record looks. "

title: "A quick test post on Leaflet"

author: "did:plc:t5xmf33p5kqgkbznx22p7d7g"

postRef:

cid: "bafyreic44p5jnfa2eq2zdq6pwnrdi2e4nzhiv3ebd2lhmbo2oxcdszm364"

uri: "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/app.bsky.feed.post/3mbpjrkknys2y"

commit:

cid: "bafyreif3xtgrmgqrdoizotxpyo2ryiqfqwwak6c2lm2jxwrx3xz4icmajy"

rev: "3mbpjrknvxl26"

validationStatus: "valid"

description: "Giving this a try"

publication: "at://did:plc:t5xmf33p5kqgkbznx22p7d7g/pub.leaflet.publication/3makguj34cs2t"

publishedAt: "2026-01-05T22:53:50.696Z"

Or in JSON:

{

"$type": "app.bsky.feed.post",

"createdAt": "2026-01-05T22:53:55.543Z",

"embed": {

"$type": "app.bsky.embed.external",

"external": {

"description": "Giving this a try",

"thumb": {

"$type": "blob",

"ref": {

"$link": "bafkreiblxmhoozultlvi5lx6j3lcvcyfqfiructung6fnkrl4yklacqg5e"

},

"mimeType": "image/webp",

"size": 19322

},

"title": "A quick test post on Leaflet",

"uri": "https://chronotope.leaflet.pub/3mbpjrfwqm22y"

}

},

"facets": [],

"text": ""

}

Looks like it hasn't been implemented in Leaflet yet? Ok, let's try Thomas's top blog example:

goat record get at://did:plc:txurc6ueald5d7462bpvzdby/site.standard.publication/3mbnlfyowxg2v

and we get a response that describes the publication:

{

"$type": "site.standard.publication",

"description": "Keeping up appearances as a professional software developer who has meaningful things to say about computers, programming, and the industry.",

"name": "Serious Computer Business",

"url": "https://octet-stream.net/b/scb"

}

And a post: goat record get at://did:plc:txurc6ueald5d7462bpvzdby/site.standard.document/3mbnqpz3ziw2v

{

"$type": "site.standard.document",

"path": "/2026-01-03-including-rust-in-an-xcode-project-with-pointer-auth-arm64e.html",

"publishedAt": "2026-01-03T06:46:21Z",

"site": "at://did:plc:txurc6ueald5d7462bpvzdby/site.standard.publication/3mbnlfyowxg2v",

"title": "Including Rust in an Xcode project with Pointer Authentication (arm64e)"

}

Ok, let's try to make one for this publication. First we'll make a JSON file.

{

"$type": "site.standard.publication",

"description": "This is my outpost for documenting and live blogging my work on various side projects. Occasionally it is also the place I write about stuff I learned or useful information. Sometimes it can also be a place for rough writing that is dev-adjacent but doesn't really make sense for my main blog. \n\nTechnology won't save the world, but you can.",

"name": "Fight With Tools: A Dev Blog",

"url": "https://fightwithtools.dev"

}

Huh. How do I do a line break? It's \n\n, according to BlueSky.
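JSON strings can't contain raw line breaks, so a break has to be written as the `\n` escape, and a blank line between paragraphs as `\n\n`. Rather than escaping by hand, `JSON.stringify` can build the whole record file and handle newlines, quotes, backslashes, and tabs for you. A minimal sketch, with the description shortened for the example:

```typescript
// Build the record as a normal object; multi-line text can use real line breaks here.
const publication = {
  $type: "site.standard.publication",
  name: "Fight With Tools: A Dev Blog",
  url: "https://fightwithtools.dev",
  description: [
    "This is my outpost for documenting and live blogging my work on various side projects.",
    "Technology won't save the world, but you can.",
  ].join("\n\n"),
};

// JSON.stringify turns each line break inside a string into the \n escape
// (and escapes quotes, backslashes and tabs), so the output is valid JSON.
const json = JSON.stringify(publication, null, 2);
console.log(json.includes("\\n\\n")); // true
```

The resulting string can then be written to fightwithtools-publication.json (for example with Node's `fs.writeFileSync`) and passed to `goat record create` as before.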

Huh.

goat record create fightwithtools-publication.json

error: API request failed (HTTP 400): InvalidRequest: Lexicon not found: lex:site.standard.publication

Let's get the Lexicons:

goat lex pull site.standard.publication

goat lex pull site.standard.document

Oops, they seem to fail lint:

goat lex lint

🟡 lexicons/site/standard/document.json

[missing-primary-description]: primary type missing a description

[unlimited-string]: no max length

[unlimited-string]: no max length

🟡 lexicons/site/standard/publication.json

[missing-primary-description]: primary type missing a description

error: linting issues detected

It seems this is blocking me from publishing.

Ah, I got some advice, and it turns out that for the first record of a given Lexicon on my PDS, I have to skip Lexicon validation.

goat record create fightwithtools-publication.json --no-validate


Day 14

Ok, let's get back to the share button.

The only thing left is to style it. I've got some basic stuff in here but do I want to add an additional button? I think I want to have a Share Openly button.

Easy enough to add the button and the logic; ShareOpenly is very easy to use:

triggerShareOpenly(context) {

const shareUrl = this.url;

const shareTitle = this.shareText || this.title;

const shareLink = 'https://shareopenly.org/share/?url='+encodeURIComponent(shareUrl)+"&text="+encodeURIComponent(shareTitle);

// Open the share dialog with the specified URL and text

window.open(shareLink, '_blank');

}

I'll re-layout the buttons as a flex layout.
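Pulling the URL-building out of the click handler makes it testable without opening a window. A small sketch, with `buildShareOpenlyUrl` as a hypothetical helper that builds the same URL as `triggerShareOpenly` above:

```typescript
// Builds the same ShareOpenly link as triggerShareOpenly, without calling window.open.
export function buildShareOpenlyUrl(url: string, text: string): string {
  return (
    "https://shareopenly.org/share/?url=" +
    encodeURIComponent(url) +
    "&text=" +
    encodeURIComponent(text)
  );
}

console.log(buildShareOpenlyUrl("https://fightwithtools.dev/posts/example/", "A post & a title"));
// https://shareopenly.org/share/?url=https%3A%2F%2Ffightwithtools.dev%2Fposts%2Fexample%2F&text=A%20post%20%26%20a%20title
```

Encoding each parameter with `encodeURIComponent` means characters like `&` or `#` in a post title can't break the query string.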

I want to make the share area background just a little bit darker, but I don't want to add another color. I think I can handle an opacity shift with CSS?

Yeah, looks like there's a way to do this in a straightforward way! I'm not super familiar with this technique, but it does work without me declaring another theme color. Pretty nice.

I made it just slightly darker to give the whole footer area a gradient style, using this CSS:

background-color: var(--background-muted);

background-image: linear-gradient(hsla(0,0%,0%,.3) 100%,transparent 100%);

This is enough to make it live, though! I'll have to figure out the share dialog positioning later. I'll also add some classes to make it trackable in Plausible.

git commit -am "Get share buttons production ready and add shareopenly"


Day 13

After thinking about it some more, I'm realizing the easiest thing is to turn this into an Eleventy plugin. So I'm going to restructure it that way.

Let's start with a quick template for the eleventy.config.js file:

export default function (eleventyConfig, options = {}) {
  // Merge user options over the defaults. Reassign the parameter rather than
  // redeclaring it with `let`, which would be a SyntaxError.
  options = Object.assign({
    defaultUtms: []
  }, options);

  eleventyConfig.addPlugin(function(eleventyConfig) {
    // I am a plugin!
  });
};

Then the fleshed-out CommonJS version, with my shortcodes wired in:

const { activateShortcodes } = require("./lib/shortcodes");

module.exports = {
  configFunction: function (eleventyConfig, options = {}) {
    options = Object.assign({
      defaultUtms: [],
      defaultShareText: "Share this post",
      domain: ""
    }, options);

    eleventyConfig.addPlugin(function(eleventyConfig) {
      // I am a plugin!
      activateShortcodes(eleventyConfig, options);
    });
  },
}

- Create a versatile blog site

- Create a framework that makes it easy to add external data to the site

- Give the site the capacity to replicate the logging and rating I do on Serialized and Letterboxd.

- Be able to pull down RSS feeds from other sites and create forward links to my other sites

- Create forward links to sites I want to post about.

- Create a way to pull in my Goodreads data and display it on the site

- Create a way to automate pulls from other data sources

- Combine easy inputs like text lists and JSON data files with markdown files that I can build on top of.

- Add a TMDB credit to footer in base.njk

- Make sure tags do not repeat in the displayed tag list.

- Get my Kindle Quotes into the site

- YouTube Channel Recommendations

- Minify HTML via Netlify plugin.

- Log played games

Day 12

Now that the Web Share API is available in pretty much every browser, I've really wanted to take some time to figure out how to use it. An article on setting up sharing with HTML/JS popped up in my feed reader, and I figured this is the excuse to give it a try.

One thing I really want to do here is avoid extra network requests for script files. This has come up as an issue at work, and the tactic I considered there is one I want to try for myself here: pull the script out of a JS file and into the HTML as an inline script tag.

There might be more than one script I want to handle this way, so maybe they all belong in a single script tag? The potential problem is that this could cause trouble with how things are scoped, since it gives everything in there a broad scope.

Actually... now that I've typed it out... I don't want it to be in a single script tag. Let's not do that.

Ok, so I need a block in the template:

``

I need to mark it safe because it is HTML and I don't want it escaped.

This is a build Eleventy data object in the _data folder. In there I have an object now:

const shareActions = require("../../plugins/share-actions");

module.exports = {

env: process.env.ELEVENTY_ENV,

timestamp: new Date(),

bookwyrm: {

username: "aramzs",

instance: "bookwyrm.tilde.zone",

},

footerInlineScript: `<script>${shareActions.js()}</script>`

};

Now I'm free to build in .js files easily.

The other thing I may want to add to this process is minification, but I'll worry about that later.

I will want my custom HTML as well, and I can export that from my index also. I might not be able to get the per-page data context with the URL that way, but let's see what we can do.

Ok yeah, the data from the page build just doesn't seem to be accessible in the global data? At least not with the Nunjucks rendering process. I guess I'll have to define a Nunjucks template and import it into my template.

I'm putting it in the plugin's folder for now. That's not great because it doesn't trigger a rebuild, but hopefully it has full access to the page context?

- Create a versatile blog site

- Create a framework that makes it easy to add external data to the site

- Give the site the capacity to replicate the logging and rating I do on Serialized and Letterboxd.

- Be able to pull down RSS feeds from other sites and create forward links to my other sites

- Create forward links to sites I want to post about.

- Create a way to pull in my Goodreads data and display it on the site

- Create a way to automate pulls from other data sources

- Combine easy inputs like text lists and JSON data files with markdown files that I can build on top of.

- Add a TMDB credit to footer in base.njk

- Make sure tags do not repeat in the displayed tag list.

- Get my Kindle Quotes into the site

- YouTube Channel Recommendations

- Minify HTML via Netlify plugin.

Day 11

Still working on my page for collecting interesting color contrasts, a fun little feature on my site. Thanks to suggestions from Michael Grossman, I've added features that cite color sources and make it easier to access the RGB codes for each swatch and background. https://aramzs.xyz/

- Create a new site

- Process the Foursquare data to a set of locations with additional data

- Set the data up so it can be put on a map (it needs lat and long)

- Can be searched

- Can show travel paths throughout a day

Day 5

I want to crawl Foursquare URLs for pages I do not have data in the API for. Here's an example page: https://foursquare.com/v/north-philadelphia/4e9ae7244901d3b0b7190bde

This is one of the pages that are still on the web (though who knows for how long) but aren't in the Foursquare Places API. So let's figure out how to get location data from a URL like that, starting in a Jupyter Notebook.

I'll use requests here as well to get the HTML pages. Then I can use the classic BeautifulSoup package to parse what I need out of it.

Ok, it turns out that there are two types of pages. There are ones like the one above, which have lat and long in the metadata but no additional place/address data. Then there are ones like Baruch College's Vertical Campus, which are locations with place data but are, I guess, not enough of a business to be in the Places API.

So I can always get lat and long, but I'll need to check if the address block exists:

htmlLatitude = soup.find("meta", property="playfoursquare:location:latitude")
print(htmlLatitude)
print(f"Latitude is {htmlLatitude['content']}")
locationDataDict["latitude"] = htmlLatitude['content']

htmlLongitude = soup.find("meta", property="playfoursquare:location:longitude")
print(htmlLongitude)
print(f"Longitude is {htmlLongitude['content']}")
locationDataDict["longitude"] = htmlLongitude['content']

htmlAddressBlock = soup.find("div", itemprop="address")
if htmlAddressBlock is None:
    print("No address block found")
else:
    # Search inside the address block rather than the whole page
    htmlStreetAddress = htmlAddressBlock.find("span", itemprop="streetAddress")
    print(htmlStreetAddress)
    print(f"address is {htmlStreetAddress.string}")
    locationDataDict["address"] = htmlStreetAddress.string

It looks like the Places API that Foursquare open sourced is the API I'm using, so yeah, better to crawl the pages I want before they're gone.

Weirdly, this does not appear to have "country" in the HTML. But there is another place to pull it from: the mess of JavaScript that gets pushed onto the page. I suppose I could derive it from the lat and long, but I'm trying to avoid grabbing a ton of data from external APIs.

I guess sometimes you just need to Do A Regex lol.

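import re

# Country pull: the country only shows up in the page's inline JavaScript
pattern = r'","country":"([^"]+)","'
match = re.search(pattern, str(htmlContent))
print("country match")
# re.search returns None when there's no match, so check before reading the group
if match:
    print(match.group(1))
else:
    print("No country found")

Also, it seems not every page has all the address fields, so I'll have to allow some of those to be null.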
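Wrapping the regex in a function that returns None on a miss keeps pages without a country from crashing the crawl, and a fixed set of keys lets missing address fields null out cleanly. A minimal sketch; the helper names and the field list are hypothetical:

```python
import re
from typing import Optional

# Same pattern as above: the country sits in the page's inline JavaScript
# as ...","country":"<value>","...
COUNTRY_PATTERN = re.compile(r'","country":"([^"]+)","')

# Hypothetical set of address fields every location record should carry
ADDRESS_FIELDS = ("address", "locality", "region", "postalCode", "country")


def extract_country(html_text: str) -> Optional[str]:
    """Return the country value from a crawled page, or None if it's missing."""
    match = COUNTRY_PATTERN.search(html_text)
    return match.group(1) if match else None


def location_record(latitude, longitude, found: dict) -> dict:
    """Every record gets every field; anything a page doesn't have becomes None."""
    record = {"latitude": latitude, "longitude": longitude}
    for field in ADDRESS_FIELDS:
        record[field] = found.get(field)
    return record
```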

git commit -am "Crawl HTML as a fallback to foursquare API 404"

Ok, I tried writing all of these rows to JSON:

# Write each row of each dataframe to its own JSON file, named by the row's id
for frameName in ["venues", "photos", "checkins"]:
    for i, row in dataFrames[frameName].iterrows():
        row.to_json("../datasets/{}/{}.json".format(frameName, row['id']))

Then I took a quick skim through the output, and everything looks good. Plus, I now have a full archive of all the data I need.
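To make that skim a bit more systematic, here's a small stdlib sketch (the helper name is hypothetical) that re-reads a folder of these files and flags any whose "id" doesn't match the file name. It assumes each file is the flat object Series.to_json() writes by default. A check like this would catch a loop that writes one dataframe's rows into another dataframe's folder.

```python
import json
from pathlib import Path


def find_mismatched_files(directory):
    """Return names of JSON files whose "id" value doesn't match the file name.

    Assumes each file holds one row saved as <id>.json, i.e. a flat JSON
    object with an "id" key.
    """
    mismatched = []
    for path in sorted(Path(directory).glob("*.json")):
        with path.open() as f:
            record = json.load(f)
        if str(record.get("id")) != path.stem:
            mismatched.append(path.name)
    return mismatched
```

Usage would be something like find_mismatched_files("../datasets/photos"), expecting an empty list.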

- Be able to host a server

- Be able to build node projects

- Be able to handle larger projects

- Be able to run continually

Day 4

Ok, I had to reset the device's OS to a 64-bit version because I want to run some applications that require it.

Now I want to use it as a web host, but that means setting up a Dynamic DNS mapping.

I took a look at options, and I think I can use the new registrar I've been trying out, Porkbun, directly. I use GoDaddy for most of my domains (yes, I know), but their API doesn't seem to let you create keys restricted to particular domains; it's all or nothing. Porkbun apparently lets you limit access to particular domains. So let's try that.

Everything I've read seems to indicate that ddclient is the way to go, but so far I haven't gotten it to work. Let's see what the deal is.

I can find the Porkbun API down in the footer.

That lets me create the key and secret for the API. Now I can see the API docs.

So I'm going to test out the API to make sure my keys work:

curl --request GET \
  --url https://api.porkbun.com/api/json/v3/ping \
  --header 'Content-Type: application/json' \
  --header 'User-Agent: insomnia/10.3.0' \
  --cookie BUNSESSION2=xxxx \
  --data '{
  "secretapikey": "xxxxx",
  "apikey": "xxxxxx"
}'

Ok, that worked! I got back:

{

"status": "SUCCESS",

"yourIp": "xxxx",

"xForwardedFor": "xxxx"

}

So I know my keys work!
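The same ping check can be scripted with Python's standard library. A minimal sketch, assuming the endpoint accepts the JSON body from the curl call above sent as a POST (my understanding is that Porkbun documents its v3 endpoints as POST requests). The function names are hypothetical and the keys are placeholders.

```python
import json
import urllib.request

PING_URL = "https://api.porkbun.com/api/json/v3/ping"


def build_ping_request(api_key: str, secret_key: str) -> urllib.request.Request:
    """Build (but don't send) a ping request with the keys in a JSON body."""
    body = json.dumps({"apikey": api_key, "secretapikey": secret_key}).encode("utf-8")
    return urllib.request.Request(
        PING_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ping(api_key: str, secret_key: str) -> dict:
    """Send the request and return the decoded JSON response."""
    request = build_ping_request(api_key, secret_key)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))
```

With real keys, ping(...)["status"] should come back as "SUCCESS", matching the response above.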

I had already tried (and failed) to get ddclient working with GoDaddy, which means it's currently configured incorrectly. So I need to find the config file to reconfigure it, starting with whereis ddclient.

Ok, that wasn't where it was, but running it did give me an error that told me where the file was. Going to try editing the file via sudo nano /etc/ddclient.conf.

# Configuration file for ddclient generated by debconf
#
# /etc/ddclient.conf

protocol=porkbun \
use=web, web=ipify-ipv4 \
login='xxxx' \
password='xxxx' \

Huh, Porkbun isn't a protocol. Let's look and see how I activate it.

Ah, well it needs a different configuration:

##

## Porkbun (https://porkbun.com/)

##

# protocol=porkbun

# apikey=APIKey

# secretapikey=SecretAPIKey

# root-domain=example.com

# host.example.com,host2.sub.example.com

# example.com,sub.example.com

Oh, apparently the package manager version isn't up to date. I checked:

ddclient ---

and it is 3.10.0.

Looks like I'm not the only one with this problem.

Let's remove the apt package with sudo apt-get remove ddclient.

I found something useful: Porkbun has their own walkthrough! But wait... it points at deprecated tech. Ok, so I'll build ddclient locally instead, I guess!

I will get the latest from the GitHub tags.

wget https://github.com/ddclient/ddclient/archive/refs/tags/v3.11.2.tar.gz
tar xvfa v3.11.2.tar.gz
cd ddclient-3.11.2/
sudo mkdir /etc/ddclient
sudo touch /etc/ddclient/ddclient.conf

Ok, stuff isn't working. Let's try and pull it from GitHub. It says you first have to configure the project, but I don't have the package needed to run autoreconf, so:

sudo apt-get install autoconf

Ok! So now: sudo ./autogen

Now I can run this?

./configure \
  --prefix=/usr \
  --sysconfdir=/etc/ddclient \
  --localstatedir=/var

Yes!

Then these commands from the README:

make
make VERBOSE=1 check
sudo make install

Now I can configure it with sudo nano /etc/ddclient/ddclient.conf.

Then down the readme we go!

sudo cp sample-etc_systemd.service /etc/systemd/system/ddclient.service
sudo systemctl enable ddclient.service
sudo systemctl start ddclient.service

Then I can run it manually with sudo to see what is going on!

sudo ddclient -daemon=0 -debug -verbose -noquiet

Hmm, it sure is taking a while to get to https://checkip.dyndns.org/ :/

Doesn't look like it works? Not great! Let's investigate the options for reading the IP out of the router. Looks like that won't work with my router.

Instead, let's look at other ways to detect the IP.

We can change the config to use a different detection method, now:

use=web, web=ipify-ipv4 # via web

Ok, that is finding the IP properly! But it isn't setting it into the domain.

Looks like I need to delete the ALIAS record that Porkbun sets on the domain by default and replace it with an A record. Then the system can use that. I can also map the IP to subdomains.

protocol=porkbun

apikey=xxxx

secretapikey=xxxx

www.my.domain

on-root-domain=yes my.domain

And hey, that brings me to my home router >.<

I can, however, access the service on the port it is running on via the domain once I've set up port forwarding for that port, so I'm getting close!

It looks like my particular Verizon router may just not allow me to open port 80. Ok, for now let's go on with setting up the task that will keep the domain pointed at my current IP. At some point in the future I may want to figure out an HTTPS cert as well, once I've got everything mapped.

Ok, no: the setup instructions do activate the ddclient daemon on the server and set it up to come back online when the system restarts. That's good; it should keep my Raspberry Pi connected to the domain.

I think this is a good place to stop. Nifty to get this working!

- Create a new site

- Process the Foursquare data to a set of locations with additional data

- Set the data up so it can be put on a map (it needs lat and long)

- Can be searched

- Can show travel paths throughout a day

Day 4

Ok, I've got the whole setup for retrieving from the API done. Places that return a 200 are being integrated into the dataframe successfully and also being written to local JSON files.

There's only one problem: check-in locations that don't correspond to a business aren't in the API, and I have a ton of them.

I'm going to write them all to files; this makes them a little easier to debug.

git commit -am "Adding more processing for Foursquare's API to get the data I need."

I think I'm going to have to crawl the pages, which are still up for now. That will hopefully get me the data I need.

Every page has this data in the HEAD, it looks like!

We can see lat and long in:

<meta content="40.73920556687168" property="playfoursquare:location:latitude">

<meta content="-73.95259827375412" property="playfoursquare:location:longitude">

That is likely all I need, but it looks like I can also get the address info, which is on the page in structured HTML:

<div class="venueAddress">

<div class="adr" itemprop="address" itemscope="" itemtype="http://schema.org/PostalAddress">

<span itemprop="streetAddress">Pulaski Bridge</span> (at Newtown Creek)<br><span itemprop="addressLocality">Brooklyn</span>, <span itemprop="addressRegion">NY</span> <span itemprop="postalCode">11211</span>

</div>

</div>

I'll need to add a function to get the URL from the dataframe and crawl that page, and do it quickly before this stuff goes offline, assuming it still will. Then I can pull that data into my dataframe.

I'll likely have to use BeautifulSoup.
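BeautifulSoup is the natural fit here. For comparison, a dependency-free sketch using Python's built-in html.parser (swapped in for BeautifulSoup; the class and function names are hypothetical) pulls the same lat/long meta tags and schema.org address spans out of the snippet above, converting lat/long to floats for mapping:

```python
from html.parser import HTMLParser


class VenuePageParser(HTMLParser):
    """Collect lat/long meta tags and schema.org address spans from a venue page."""

    META_PROPERTIES = {
        "playfoursquare:location:latitude": "latitude",
        "playfoursquare:location:longitude": "longitude",
    }
    ADDRESS_PROPS = {"streetAddress", "addressLocality", "addressRegion", "postalCode"}

    def __init__(self):
        super().__init__()
        self.fields = {}
        self._current_prop = None  # itemprop of the <span> we're inside, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and attrs.get("property") in self.META_PROPERTIES:
            key = self.META_PROPERTIES[attrs["property"]]
            self.fields[key] = float(attrs["content"])
        elif tag == "span" and attrs.get("itemprop") in self.ADDRESS_PROPS:
            self._current_prop = attrs["itemprop"]

    def handle_endtag(self, tag):
        if tag == "span":
            self._current_prop = None

    def handle_data(self, data):
        # Only text inside one of the address spans is kept
        if self._current_prop:
            self.fields[self._current_prop] = data.strip()


def parse_venue_page(html_text):
    """Return whatever location fields the page has; missing ones are simply absent."""
    parser = VenuePageParser()
    parser.feed(html_text)
    parser.close()
    return parser.fields
```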

I also should investigate if the new Places data set that was open sourced might have this data and, if so, how I can access it.

- Create a new site

- Process the Foursquare data to a set of locations with additional data

- Set the data up so it can be put on a map (it needs lat and long)

- Can be searched

- Can show travel paths throughout a day

Day 3

Moving my work from the Jupyter Notebook into some more useful functions that make it easier to understand what is going on took some adjustments. I want to make the flow simpler and take less code as well. Some accidents along the way, but I think I have it working.

I wanted to flatten the items files and simplify the loop so it creates a base object that works generically across multiple data files. This means changing some of the downstream functions as well.

I also want to make the whole project as flat as possible while providing some logical divisions for the files based on what needs to be imported and what the functions do.

I also took a look at what to do with the __init__.py file:

- python - What is __init__.py for? - Stack Overflow

- What's your opinion on what to include in __init__.py ? : r/Python

- Structuring Your Project — The Hitchhiker's Guide to Python

- python - The Pythonic way of organizing modules and packages - Stack Overflow

- python - How do I write good/correct package __init__.py files - Stack Overflow

- python - What exactly does "import *" import? - Stack Overflow

I ended up using it to refine the exposed API like so:

from .process_to_dfs import process_to_dfs, get_place_details

from .pull_in_data_files import pull_in_data_files

Ok, got the data retrieval and the processing into dataframes working now!

- Create a new site

- Process the Foursquare data to a set of locations with additional data

- Set the data up so it can be put on a map (it needs lat and long)

- Can be searched

- Can show travel paths throughout a day

Day 2

I think I got it writing photos effectively, but I think I made a mistake trying to put them in the venue dataframe. I need a photo table.

git commit -am "Setting up a dataframe for individual photos"

Ok, I can see how to do it, but the sequence is getting complicated. I want to actually set up some functions and call them from the notebook I'm working on. Then I need to do the next thing, which is resolve venues to have lat and long values.

git commit -am "Processing sequence of files into dataframes now in functions"

Oh, I know some photos exist without a checkin, which means no venue entry. I'll have to add that.

git commit -am "Photos should always have venues associated with them."

Ok, so now we gotta look at hitting the Foursquare API to get the correct lat and long data.

Using the Foursquare API

Ok, I tried out the place details endpoint in the Foursquare API Explorer.

It returns an object like this:

{

"fsq_id": "59e63da08c35dc3e57ab5520",

"categories": [

{

"id": 10032,

"name": "Night Club",

"short_name": "Night Club",

"plural_name": "Night Clubs",

"icon": {

"prefix": "https://ss3.4sqi.net/img/categories_v2/nightlife/nightclub_",

"suffix": ".png"

}

},

{

"id": 13009,

"name": "Cocktail Bar",

"short_name": "Cocktail",

"plural_name": "Cocktail Bars",

"icon": {

"prefix": "https://ss3.4sqi.net/img/categories_v2/nightlife/cocktails_",

"suffix": ".png"

}

},

{

"id": 13021,

"name": "Speakeasy",

"short_name": "Speakeasy",

"plural_name": "Speakeasies",

"icon": {

"prefix": "https://ss3.4sqi.net/img/categories_v2/nightlife/secretbar_",

"suffix": ".png"

}

}

],

"chains": [],

"closed_bucket": "Unsure",

"geocodes": {

"drop_off": {

"latitude": 40.745138,

"longitude": -73.990299

},

"main": {

"latitude": 40.745324,

"longitude": -73.990221

},

"roof": {

"latitude": 40.745324,

"longitude": -73.990221

}

},

"link": "/v3/places/59e63da08c35dc3e57ab5520",

"location": {

"address": "49 W 27th St",

"census_block": "360610058001001",

"country": "US",

"cross_street": "btwn Broadway & 6th Ave",

"dma": "New York",

"formatted_address": "49 W 27th St (btwn Broadway & 6th Ave), New York, NY 10001",

"locality": "New York",

"postcode": "10001",

"region": "NY"

},

"name": "Patent Pending",

"related_places": {

"parent": {

"fsq_id": "59e618dc4c9be64fbe509006",

"categories": [

{

"id": 13035,

"name": "Coffee Shop",

"short_name": "Coffee Shop",

"plural_name": "Coffee Shops",

"icon": {

"prefix": "https://ss3.4sqi.net/img/categories_v2/food/coffeeshop_",

"suffix": ".png"

}

},

{

"id": 13034,

"name": "Café",

"short_name": "Café",

"plural_name": "Cafés",

"icon": {

"prefix": "https://ss3.4sqi.net/img/categories_v2/food/cafe_",

"suffix": ".png"

}

}

],

"name": "Patent Coffee"

}

},

"timezone": "America/New_York"

}

Ok, that has all the information I need! And a lot more besides. I was thinking I could just pull the information into the dataframe, and I think that's true, but I also want to save a copy of the object to a local file for now. I might want to do more with it later.
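Since the response carries several geocodes, a small helper can pick one consistently. This sketch prefers geocodes.main, falls back to roof (my own choice, not something Foursquare prescribes), and keeps only a few fields for the venues dataframe; main_coordinates and flatten_place are hypothetical names.

```python
from typing import Optional, Tuple


def main_coordinates(details: dict) -> Optional[Tuple[float, float]]:
    """Return (latitude, longitude) from a Place Details response.

    Prefers geocodes.main, falls back to geocodes.roof, and returns None if
    neither is present.
    """
    geocodes = details.get("geocodes", {})
    for key in ("main", "roof"):
        point = geocodes.get(key)
        if point and "latitude" in point and "longitude" in point:
            return point["latitude"], point["longitude"]
    return None


def flatten_place(details: dict) -> dict:
    """Pick the handful of fields the venues dataframe needs from the full object."""
    location = details.get("location", {})
    coords = main_coordinates(details)
    return {
        "id": details.get("fsq_id"),
        "name": details.get("name"),
        "latitude": coords[0] if coords else None,
        "longitude": coords[1] if coords else None,
        "formattedAddress": location.get("formatted_address"),
        "country": location.get("country"),
        "categories": [c["name"] for c in details.get("categories", [])],
    }
```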

I want to play around with these new functions and test them in my Jupyter Notebook. Looks like the best way to do this is with autoreload.

%load_ext autoreload
%autoreload 2

from dotenv import dotenv_values
import data_processing

config = dotenv_values('../.env')
apiKey = config['FSQ_API_KEY']
data_processing.get_place_details("59e63da08c35dc3e57ab5520", apiKey)

git commit -am "Adding FSq API checks and file writing and new notebook for testing functions"

- Create a new site

- Process the Foursquare data to a set of locations with additional data

- Set the data up so it can be put on a map (it needs lat and long)

- Can be searched

- Can show travel paths throughout a day

Day 1

Since the Foursquare site is shutting down, I got a data export. I have to decide what I want to do with it now that I have it. It is a big set of data, so I will need to do some work to make it usable, figure out how to treat it, and turn it into the type of site I want.

Big dataset, so let's turn to Python to process it. That's what it is best at. It has been a while, but I can start drawing some interesting conclusions from it for sure.

There are a bunch of different files, and it seems like the checkins files are the most relevant. But they don't have latitude and longitude data attached. That's not great. I also have a visits file that does have latitude and longitude, but it doesn't seem to map to anything.

I'll assemble the data into a Pandas dataframe to start playing with it, and see whether the two connect at all.

It looks like someone else has encountered this problem. They recommend hitting the Foursquare API to get the lat/long.

import csv
import requests

headers = {
    "accept": "application/json",
    "Authorization": "YOUR_API_KEY"
}

# define path for the output CSV file
path = r'foursquare.csv'

# Opening with 'w' clears the file if it already exists. The file handle gets
# its own name so it doesn't shadow the csv module.
with open(path, 'w', newline='') as outFile, open('ids.txt', 'r') as f:
    writer = csv.writer(outFile)
    for line in f:
        fsq_id = line.strip()
        url = "https://api.foursquare.com/v3/places/" + fsq_id + "?fields=geocodes"
        response = requests.get(url, headers=headers)
        # Skip IDs the API doesn't know (404) as well as any other error response
        if response.ok:
            locations = response.json()
            writer.writerow([
                fsq_id,
                locations['geocodes']['main']['latitude'],
                locations['geocodes']['main']['longitude'],
            ])

print("done")

This leverages the Place Details API.

Once I've loaded the JSON files into memory, I can walk them into a dataframe:

# Start with an empty DataFrame that has the columns I want
df = pd.DataFrame(columns=[
    'id',
    'createdAt',
    'type',
    # 'visibility',
    'timeZoneOffset',
    'venueId',
    'venueName',
    'venueURL',
    'commentsCount'
])

for checkinList in data:
    for item in checkinList["items"]:
        # Skip check-ins that aren't attached to a venue
        if 'venue' not in item:
            continue
        # Build a one-row DataFrame for this check-in and prepend it
        df = pd.concat([pd.DataFrame([[
            item['id'],
            item['createdAt'],
            item['type'],
            # item['visibility'],
            item['timeZoneOffset'],
            item['venue']['id'],
            item['venue']['name'],
            item['venue']['url'],
            item['comments']['count']
        ]], columns=df.columns), df], ignore_index=True)

print(df.shape)

I checked, and for some reason only 1000 items don't have visibility; I've manually defaulted those to "private".
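Growing the dataframe with a concat per check-in can get slow at 14,000+ rows. An alternative sketch: flatten everything into a list of dicts first, applying the same venue filter plus the "private" visibility default, then build the dataframe once with pd.DataFrame(records). The helper name and the zero default for a missing comments count are assumptions. Note this keeps file order, whereas the concat above prepends each row.

```python
def checkin_records(checkin_lists):
    """Flatten exported check-in files into one dict per check-in.

    Skips items without a venue and defaults a missing visibility to "private".
    """
    records = []
    for checkin_list in checkin_lists:
        for item in checkin_list["items"]:
            if "venue" not in item:
                continue
            venue = item["venue"]
            records.append({
                "id": item["id"],
                "createdAt": item["createdAt"],
                "type": item["type"],
                "visibility": item.get("visibility", "private"),
                "timeZoneOffset": item["timeZoneOffset"],
                "venueId": venue["id"],
                "venueName": venue["name"],
                "venueURL": venue["url"],
                # Assumed default when an item has no comments block
                "commentsCount": item.get("comments", {}).get("count", 0),
            })
    return records
```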

Even now that I've got those included though, the IDs in visits.json and visits.csv don't map to anything in my checkins.

Now the dataframe has 14265 check-in entries in total.

Ok, so visits seem to be non-check-in occurrences where I was at a location. The Swarm app will occasionally suggest draft check-ins, saying it thinks I was at a particular location and prompting me to check in, correct it, or decline. This appears to be what is going on here. So, for example, this:

{

"id": "673e69ccdc7d4627b68ceb3b",

"userId": "15234",

"timeArrived": "2024-11-20 22:59:24.483000",

"timeDeparted": "2024-11-20 23:15:22.419000",

"os": "Android",

"osVersion": "12",

"deviceModel": "SM-G975U1",

"isTraveling": false,

"latitude": 40.6436454,

"longitude": -74.072583,

"city": "Staten Island",

"state": "New York",

"postalCode": "8600000US10301",

"countryCode": "US",

"locationType": "Venue"

}

That is a visit. And it happens around the same time as check-ins that I actually made. I looked up the lat/long, and the location seems to be the Staten Island ferry terminal.

print(df.query('venueName.str.contains("Staten Island")'))

Here's the result:

| id | createdAt | type | visibility | timeZoneOffset | venueId | venueName | venueUrl | commentsCount |

|---|---|---|---|---|---|---|---|---|

| 5bc75aa89cadd9002ce17dc3 | 2018-10-17 15:52:08.000000 | checkin | private | -240 | 4165d880f964a5207e1d1fe3 | Staten Island Ferry - Whitehall Terminal | https://foursquare.com/v/staten-island-ferry--... | 0 |

| 673e61fdf804d01340b055f2 | 2024-11-20 22:26:05.000000 | checkin | closeFriends | -300 | 4165d880f964a5207e1d1fe3 | Staten Island Ferry - Whitehall Terminal | https://foursquare.com/v/staten-island-ferry-- ... | 0 |

| 673e69ccc2749c4b190f5fb9 | 2024-11-20 22:59:24.000000 | checkin | closeFriends | -300 | 4a478d19f964a520d2a91fe3 | Staten Island Ferry - St. George Terminal | https://foursquare.com/v/staten-island-ferry--... | 0 |

| 673e73175e573a13d383bcfa | 2024-11-20 23:39:03.000000 | checkin | closeFriends | -300 | 4d35e19d6c7c721eb511cf56 | College of Staten Island Main Gate | https://foursquare.com/v/college-of-staten-isl... | 0 |

| 673ea1637f8f7e3e6b5232db | 2024-11-21 02:56:35.000000 | checkin | closeFriends | -300 | 4165d880f964a5207e1d1fe3 | Staten Island Ferry - Whitehall Terminal | https://foursquare.com/v/staten-island-ferry-- ... | 0 |

Ok, well, that seems fine, but there's no real match. There is a close match in timing (if you ignore milliseconds), but it isn't really enough to join on en masse, even if it is close. This also shows me that the records without a visibility value seem to be early in my check-in history, which is useful to know.
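Even if it isn't enough to join on en masse, a time-tolerance comparison makes the near-misses easy to list. A minimal sketch, assuming both files use the same "YYYY-MM-DD HH:MM:SS.ffffff" timestamps in the same time zone; the one-second default tolerance and the helper names are my own choices:

```python
from datetime import datetime

EXPORT_FORMAT = "%Y-%m-%d %H:%M:%S.%f"  # e.g. "2024-11-20 22:59:24.483000"


def parse_export_time(value: str) -> datetime:
    return datetime.strptime(value, EXPORT_FORMAT)


def checkins_near(visit_time: str, checkins, tolerance_seconds: float = 1.0):
    """Return ids of check-ins whose createdAt is within the tolerance of a visit time."""
    target = parse_export_time(visit_time)
    return [
        c["id"]
        for c in checkins
        if abs((parse_export_time(c["createdAt"]) - target).total_seconds()) <= tolerance_seconds
    ]
```

For the Staten Island example, the visit's timeArrived (22:59:24.483) falls within half a second of the St. George Terminal check-in (22:59:24.000).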

So I have 9,993 "visits". Which I think are dismissed check-in prompts?

There's also a whole file, unconfirmed_visits.json, which has 18,279 entries. Where I can find some matches, it looks like they may also be pending prompts that I never confirmed or denied? These aren't just lat and long, either.

There's no clear difference between the two. And there's no documentation from Foursquare. I'm going to go with my assumptions here. In that case, the only thing that matters is my checkins. That's cool.

Interestingly, the unconfirmed_visits have lat and long values. They look like this:

{

"id":"673a6e9eddf0e34a16590965",

"startTime":"2024-11-17 22:08:21.628000",

"endTime":"2024-11-17 22:31:27.761000",

"venueId":"59e63da08c35dc3e57ab5520",

"lat":40.74526808227119,

"lng":-73.99024878341206,

"venue":{

"id":"59e63da08c35dc3e57ab5520",

"name":"Patent Pending",

"url":"https:\/\/foursquare.com\/v\/patent-pending\/59e63da08c35dc3e57ab5520"

}

}

There is a checkin around this time, found via

print(df.query('venueName.str.contains("Patent Pending")'))

I'm pretty sure the visit is based around the time of my actual check-in, which the query above shows at createdAt 2024-11-17 22:31:33.000000.
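Unconfirmed visits do carry a venueId, so they can be narrowed to check-ins at the same venue before comparing times. This sketch (hypothetical names; check-ins as dicts with id, venueId, and createdAt) returns the nearest check-in and the gap in seconds. For the Patent Pending example, the gap between endTime and createdAt is about 5.2 seconds.

```python
from datetime import datetime

EXPORT_FORMAT = "%Y-%m-%d %H:%M:%S.%f"


def nearest_checkin(visit: dict, checkins):
    """Find the check-in at the visit's venue closest in time to the visit's endTime.

    Returns (checkin_id, seconds_apart), or None if there's no check-in at that venue.
    """
    end = datetime.strptime(visit["endTime"], EXPORT_FORMAT)
    candidates = [c for c in checkins if c["venueId"] == visit["venueId"]]
    if not candidates:
        return None

    def gap(checkin):
        return (datetime.strptime(checkin["createdAt"], EXPORT_FORMAT) - end).total_seconds()

    best = min(candidates, key=lambda c: abs(gap(c)))
    return best["id"], gap(best)
```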

It looks like the comments file is pretty useless:

{

"userId":15234,

"time":"2017-03-03 02:37:36.000000",

"comment":"Hey! Just saw this, we just got to Kimchi Grill, if you want to join for food."

}

I could potentially make some assumptions based on time, but it doesn't necessarily map out, since comments could happen much later, after other check-ins.

Then there is the tips file.

It has objects like:

{

"id":"670f00d47d191f5820d40dda",

"createdAt":"2024-10-15 23:55:00.000000",

"text":"Great bookstore, with drinks, snacks, and plentiful recommendations cards with lots of details.",

"type":"user",

"canonicalUrl":"https:\/\/foursquare.com\/item\/670f00d47d191f5820d40dda",

"flags":[],

"viewCount":29,

"agreeCount":0,

"disagreeCount":0,

"user":{

"id":"15234",

"firstName":"Aram",

"lastName":"Zucker-Scharff",

"handle":"chronotope",

"privateProfile":false,

"gender":"male",

"address":"",

"city":"",

"state":"",

"countryCode":"US",

"relationship":"self",

"photo":{

"prefix":"https:\/\/fastly.4sqi.net\/img\/user\/",

"suffix":"\/15234-EMJINMXJKZ5R5S20.jpg"

}

},

"venue":{

"id":"64dd65470e981a714e4c9f6c",

"name":"First Light Books",

"url":"https:\/\/foursquare.com\/v\/first-light-books\/64dd65470e981a714e4c9f6c"

}

}

There do appear to be associated photos, but those photos don't seem to be in the pix folder I got with the export. I tried accessing the URL directly, but didn't get anything there. However, looking at the venue, I didn't upload an image.

But that does match my profile image at https://fastly.4sqi.net/img/user/64x64/15234-EMJINMXJKZ5R5S20.jpg.

So I guess that is what that is. This does have a check-in associated with it:

| id | createdAt | type | visibility | timeZoneOffset | venueId | venueName | venueUrl | commentsCount |

|---|---|---|---|---|---|---|---|---|

| 670efb74d8a0e46cd107fea5 | 2024-10-15 23:32:04.000000 | checkin | closeFriends | -300 | 64dd65470e981a714e4c9f6c | First Light Books | https://foursquare.com/v/first-light-books/64d... | 0 |

There's nothing there to associate on except the venue ID.

I am starting to conclude that maybe I also need just a listing of all the venues I've visited.

So I need to build a venues dataframe too.

Ok, so we'll need to load a few files:

import json

# Get tips file
with open('../foursquare-export/tips.json', 'r') as tipsFile:
    print("Reading tips file")
    tipsObject = json.load(tipsFile)
tipsSetObject = tipsObject['items']

# Get venue ratings
with open('../foursquare-export/venueRatings.json', 'r') as ratingsFile:
    print("Reading ratings file")
    ratingsObject = json.load(ratingsFile)
venueLikes = ratingsObject['venueLikes']
venueDislikes = ratingsObject['venueDislikes']
venueOkays = ratingsObject['venueOkays']

# Get photos file
with open('../foursquare-export/photos.json', 'r') as photosFile:
    print("Reading photos file")
    photosObject = json.load(photosFile)
photosSetObject = photosObject['items']

As you can see, the venue ratings file is divided into venueLikes, venueDislikes, and venueOkays, so I'm pulling those out.
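To fill a single rating column later, the three lists can be folded into one venue ID to rating lookup. A minimal sketch with a hypothetical helper name; if a venue somehow appeared in more than one list, the later list would win.

```python
RATING_KEYS = {"venueLikes": "like", "venueDislikes": "dislike", "venueOkays": "okay"}


def ratings_by_venue(ratings_object: dict) -> dict:
    """Map venue id -> "like" / "dislike" / "okay" from venueRatings.json."""
    ratings = {}
    for key, label in RATING_KEYS.items():
        for venue in ratings_object.get(key, []):
            ratings[venue["id"]] = label
    return ratings
```

With that, something like venuesDf['rating'] = venuesDf['id'].map(ratings_by_venue(ratingsObject)) would fill the column, leaving unrated venues as NaN.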

So we know what a tip looks like.

Here's what each object inside the venue ratings looks like:

{

"id": "6278124503e634412f05cdaf",

"name": "Sobremesa Cocina Mexicana",

"url": "https:\/\/foursquare.com\/v\/sobremesa-cocina-mexicana\/6278124503e634412f05cdaf"

},

And then we've got the photos object:

{

"id":"4db1b674fd28f0dcfa1c0d2c",

"createdAt":"2011-04-22 17:10:12.000000",

"prefix":"https:\/\/fastly.4sqi.net\/img\/general\/",

"suffix":"\/BMGMLXNIP44I0WPVA2LOPEMOYCFXBGQ1ZFTY1IILIQXJP3WA.jpg",

"width":538,

"height":720,

"demoted":false,

"visibility":"friends",

"fullUrl":"https:\/\/fastly.4sqi.net\/img\/general\/538x720\/BMGMLXNIP44I0WPVA2LOPEMOYCFXBGQ1ZFTY1IILIQXJP3WA.jpg",

"relatedItemUrl":"https:\/\/www.swarmapp.com\/checkin\/4db1b66fa86e63d21171a701"

}

or

{

"id":"51fc5b1f498ea67cd47c6be5",

"createdAt":"2013-08-03 01:21:35.000000",

"prefix":"https:\/\/fastly.4sqi.net\/img\/general\/",

"suffix":"\/15234_GrwLQ564TbAJeS3qJjRxK5ZYDOVCj93L1ATyPJt_3RU.jpg",

"width":720,

"height":960,

"demoted":false,

"visibility":"public",

"fullUrl":"https:\/\/fastly.4sqi.net\/img\/general\/720x960\/15234_GrwLQ564TbAJeS3qJjRxK5ZYDOVCj93L1ATyPJt_3RU.jpg",

"relatedItemUrl":"https:\/\/foursquare.com\/v\/51fc5a63498ec02f4de928e0"

},

There, the suffix field does match up with a file in the pix folder, so that's where those connect.
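The fullUrl in each photo looks like prefix + width x height + suffix, and the same pattern with 64x64 gives the profile image URL from the tips file. A minimal sketch, assuming that pattern holds generally (it's inferred from these examples) and that the pix files are named by the suffix minus its leading slash; both helper names are hypothetical. Note that json.load already turns the escaped \/ into /.

```python
from typing import Optional


def photo_url(photo: dict, size: Optional[str] = None) -> str:
    """Rebuild a Foursquare image URL as prefix + size + suffix.

    By default the size is the photo's own "<width>x<height>", which
    reproduces the fullUrl field in photos.json.
    """
    if size is None:
        size = f"{photo['width']}x{photo['height']}"
    return photo["prefix"] + size + photo["suffix"]


def local_photo_name(photo: dict) -> str:
    """Guess the matching file name in the export's pix folder."""
    return photo["suffix"].lstrip("/")
```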

The relatedItemUrl doesn't go to anywhere that is online on the web. The value at the end of that though, the 4db1b66fa86e63d21171a701 part of the relatedItemUrl does match up with an id in the checkins files! So that's how I can associate a photo with a check-in and venue it seems.
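Judging from the two samples, the `fullUrl` is just `prefix` + `WIDTHxHEIGHT` + `suffix`, so it can be rebuilt at any size, and the `suffix` doubles as the file name in the export. A small sketch under those assumptions; `photoFullUrl`, `localPhotoPath` and the default `pixDir` path are hypothetical, and note that `json.load` already turns the escaped `\/` into `/`.

```python
import os


def photoFullUrl(photo):
    """Rebuild the sized CDN URL as prefix + 'WIDTHxHEIGHT' + suffix."""
    return f"{photo['prefix']}{photo['width']}x{photo['height']}{photo['suffix']}"


def localPhotoPath(photo, pixDir="../foursquare-export/pix"):
    """Path to the exported image file named by the photo's suffix (assumed layout)."""
    # the suffix starts with '/', which would make os.path.join discard pixDir
    return os.path.join(pixDir, photo["suffix"].lstrip("/"))


photo = {
    "prefix": "https://fastly.4sqi.net/img/general/",
    "suffix": "/BMGMLXNIP44I0WPVA2LOPEMOYCFXBGQ1ZFTY1IILIQXJP3WA.jpg",
    "width": 538,
    "height": 720,
}
print(photoFullUrl(photo))
# https://fastly.4sqi.net/img/general/538x720/BMGMLXNIP44I0WPVA2LOPEMOYCFXBGQ1ZFTY1IILIQXJP3WA.jpg
```

The rebuilt URL matches the `fullUrl` in the first sample, which is a reasonable sign the pattern holds, though only two records were checked here.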

So what format should the second dataframe be?

I'm thinking:

```python
venuesDf = pd.DataFrame(columns=[
    'id',  # venue id
    'name',  # venue name
    'url',  # venue url
    'latitude',
    'longitude',
    'tipString',  # tip text
    'tipCreatedAt',
    'tipId',
    'tipUrl',  # tip canonicalUrl
    'tipViews',  # tip viewCount
    'tipAgreeCount',  # tip agreeCount
    'tipDisagreeCount',  # tip disagreeCount
    'rating',  # from ratings file, can be like, dislike, okay
    'imageSuffix',  # from photos file, is the `suffix` field
    'imageWidth',  # from photos file, is the `width` field
    'imageHeight',  # from photos file, is the `height` field
    'imageId',  # from photos file, is the `id` field
    'imageCreatedAt',  # from photos file, is the `createdAt` field
    'checkIns'  # array of string checkin IDs.
])
```

Ok, so I want to append the other checkin IDs. How to do this? In theory, this should work:

```python
for index, row in df.iterrows():
    venueRow = venuesDf.loc[venuesDf['id'] == row['venueId']]

    if venueRow.empty:
        # print(f"Venue not found for {row['venueId']}")
        # continue
        venuesDf.loc[-1] = [
            row['venueId'],
            row['venueName'],
            row["venueURL"],
            "",  # latitude
            "",  # longitude
            "",  # tipString
            "",  # tipCreatedAt
            "",  # tipId
            "",  # tipUrl
            "",  # tipViews
            "",  # tipAgreeCount
            "",  # tipDisagreeCount
            "",  # rating
            "",  # imageSuffix
            "",  # imageWidth
            "",  # imageHeight
            "",  # imageId
            "",  # imageCreatedAt
            [row['id']]  # checkIns
        ]  # adding a row
        venuesDf.index = venuesDf.index + 1  # shifting index
        venuesDf = venuesDf.sort_index()  # sorting by index
    else:
        # add a new checkin to the series of checkins for this venue
        venuesDf.loc[venuesDf['id'] == row['venueId'], 'checkIns'] = venuesDf.loc[venuesDf['id'] == row['venueId'], 'checkIns'].apply(lambda x: x + [row['id']])
```

git commit -am "Getting the initial feed in of venues into their own dataframe"

Ok, this is appending the ID where needed.
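The row-by-row loop works, but pandas can also build the one-row-per-venue shape with a list of check-in IDs in a single `groupby`. A minimal sketch, assuming the check-ins dataframe has the `id`, `venueId`, `venueName` and `venueURL` columns the loop reads; the toy data is made up, and the remaining venue columns (tips, rating, images) would still need to be added afterwards.

```python
import pandas as pd

# toy check-ins frame using the column names the loop reads (made-up data)
checkins = pd.DataFrame({
    "id": ["c1", "c2", "c3"],
    "venueId": ["v1", "v2", "v1"],
    "venueName": ["Cafe", "Bar", "Cafe"],
    "venueURL": [
        "https://foursquare.com/v/cafe/v1",
        "https://foursquare.com/v/bar/v2",
        "https://foursquare.com/v/cafe/v1",
    ],
})

# one row per venue; sort=False keeps venues in first-seen order
venues = (
    checkins.groupby("venueId", sort=False)
    .agg(
        name=("venueName", "first"),
        url=("venueURL", "first"),
        checkIns=("id", list),  # collect every check-in ID for the venue
    )
    .reset_index()
    .rename(columns={"venueId": "id"})
)

print(venues)
```

Grouping on `venueId` alone (and taking the first name and URL) avoids splitting a venue in two if its name changed between check-ins.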

Now I need to get the ratings in:

```python
def addVenueRating(ratingSet, ratingType, venueDFSet):
    for ratingItem in ratingSet:
        # the venue ID is the last path segment of the venue URL
        venueRatingId = ratingItem['url'].split('/')[-1]

        venueRow = venueDFSet.loc[venueDFSet['id'] == venueRatingId]

        if venueRow.empty:
            print(f"Venue not found for {venueRatingId}")
            # rated but never checked in to: build the row from the rating
            # item itself, since there is no check-in `row` in this function
            venueDFSet.loc[-1] = [
                venueRatingId,
                ratingItem['name'],
                ratingItem['url'],
                "",  # latitude
                "",  # longitude
                "",  # tipString
                "",  # tipCreatedAt
                "",  # tipId
                "",  # tipUrl
                "",  # tipViews
                "",  # tipAgreeCount
                "",  # tipDisagreeCount
                ratingType,  # rating
                "",  # imageSuffix
                "",  # imageWidth
                "",  # imageHeight
                "",  # imageId
                "",  # imageCreatedAt
                []  # checkIns: none for this venue
            ]  # adding a row
            venueDFSet.index = venueDFSet.index + 1  # shifting index
            venueDFSet = venueDFSet.sort_index()  # sorting by index
            continue

        venueDFSet.loc[venueDFSet['id'] == venueRatingId, 'rating'] = ratingType

    # sort_index() returns a new dataframe, so hand the result back to the caller
    return venueDFSet


venuesDf = addVenueRating(venueLikes, "like", venuesDf)
venuesDf = addVenueRating(venueDislikes, "dislike", venuesDf)
venuesDf = addVenueRating(venueOkays, "okay", venuesDf)
```

I discovered a few venues that I apparently rated without a corresponding check-in, so I had to make sure to create rows for them.
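To see up front how many rated venues have no check-in row at all, a plain set comparison is enough. A small sketch; `ratedWithoutCheckins` is a hypothetical helper, it reuses the same last-URL-segment ID extraction as `addVenueRating`, and passing `venuesDf['id']` as `venueIds` is an assumption about how it would be called.

```python
def ratedWithoutCheckins(ratingSets, venueIds):
    """Return the IDs of rated venues that have no row in the venues frame."""
    known = set(venueIds)
    missing = []
    for ratingSet in ratingSets:
        for item in ratingSet:
            # same ID extraction as addVenueRating: last segment of the URL
            venueId = item["url"].split("/")[-1]
            if venueId not in known and venueId not in missing:
                missing.append(venueId)
    return missing


likes = [{"url": "https://foursquare.com/v/cafe/v1"},
         {"url": "https://foursquare.com/v/new-place/v9"}]
okays = [{"url": "https://foursquare.com/v/bar/v2"}]
print(ratedWithoutCheckins([likes, [], okays], ["v1", "v2"]))  # ['v9']
```

Against the real data this would be something like `ratedWithoutCheckins([venueLikes, venueDislikes, venueOkays], venuesDf['id'])`, run before the ratings are applied.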

Ok, now I have ratings and checkins. I now need photos and tips.

Tips first? Ok, this does it:

```python
for tip in tipsSetObject:
    tipVenueId = tip["venue"]["id"]
    venueRow = venuesDf.loc[venuesDf['id'] == tipVenueId]

    if venueRow.empty:
        print(f"Venue not found for {tip['id']}")
        continue

    # print(f"Venue found for {tipVenueId}")
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipString'] = tip["text"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipCreatedAt'] = tip["createdAt"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipId'] = tip["id"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipViews'] = tip["viewCount"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipAgreeCount'] = tip["agreeCount"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipDisagreeCount'] = tip["disagreeCount"]
    venuesDf.loc[venuesDf['id'] == tipVenueId, 'tipUrl'] = tip["canonicalUrl"]

print(venuesDf.loc[venuesDf['id'] == "64dd65470e981a714e4c9f6c"])
```

git commit -am "Set up tip pull into the venue dataframe"
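Each venue row has room for only one tip, so a venue with several tips keeps whichever one the loop saw last. A quick sketch to check whether that actually happens in the export, assuming each tip has the `venue.id` field the loop reads; `venuesWithMultipleTips` is a hypothetical helper and the sample tips are made up.

```python
from collections import Counter


def venuesWithMultipleTips(tips):
    """Return {venueId: tipCount} for venues that have more than one tip."""
    counts = Counter(tip["venue"]["id"] for tip in tips)
    return {venueId: n for venueId, n in counts.items() if n > 1}


sampleTips = [
    {"id": "t1", "venue": {"id": "v1"}},
    {"id": "t2", "venue": {"id": "v1"}},
    {"id": "t3", "venue": {"id": "v2"}},
]
print(venuesWithMultipleTips(sampleTips))  # {'v1': 2}
```

If `venuesWithMultipleTips(tipsSetObject)` comes back empty, one set of tip columns per venue is enough; otherwise the tip fields would need to become lists like `checkIns`.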

Next up are the images, and then the last thing is to figure out how to pull latitude and longitude data.

Hmmm. Looks like checkins can also have a shout value:

```json
{
  "id":"673583d957abcd42163c5c32",
  "createdAt":"2024-11-14 05:00:09.000000",
  "type":"checkin",
  "visibility":"closeFriends",
  "entities":[],
  "shout":"Kareoke time!",
  "timeZoneOffset":-300,
  "venue":{
    "id":"5285412911d2a3e51484ff56",
    "name":"The Brew Inn",
    "url":"https:\/\/foursquare.com\/v\/the-brew-inn\/5285412911d2a3e51484ff56"},
  "comments":{"count":0}
}
```

Ok, back to photos.

Looks like we've got a problem: when a photo's relatedItemUrl points to foursquare.com, it doesn't map to a check-in but to the location. Need to check.

Interesting. Somehow I have images that don't have attached check-ins. Seems impossible, but ok. Maybe from earlier versions of the app.

The photos don't seem to be joining in. Something is wrong with my logic. Hmmm.
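One way to untangle the join is to route each photo by the kind of URL it carries, going by what the two sample photos suggest: a swarmapp.com/checkin/ URL ends in a check-in ID, while a foursquare.com/v/ URL already ends in a venue ID. A sketch under that assumption; `attachPhotos` is a hypothetical helper, and `checkinToVenue` would be built from the check-ins dataframe, for example `dict(zip(df['id'], df['venueId']))` (assuming those column names).

```python
def attachPhotos(photos, checkinToVenue):
    """Group photo IDs by venue ID; also return photos that could not be placed."""
    byVenue = {}
    unmatched = []
    for photo in photos:
        url = photo.get("relatedItemUrl", "")
        # the ID is the last path segment of the related item URL
        itemId = url.rstrip("/").split("/")[-1]
        if "/checkin/" in url:
            # check-in photo: look up which venue that check-in was at
            venueId = checkinToVenue.get(itemId)
        elif "/v/" in url:
            # venue photo: the URL already ends in the venue ID
            venueId = itemId
        else:
            venueId = None
        if venueId is None:
            unmatched.append(photo["id"])
        else:
            byVenue.setdefault(venueId, []).append(photo["id"])
    return byVenue, unmatched


photos = [
    {"id": "4db1b674fd28f0dcfa1c0d2c",
     "relatedItemUrl": "https://www.swarmapp.com/checkin/4db1b66fa86e63d21171a701"},
    {"id": "51fc5b1f498ea67cd47c6be5",
     "relatedItemUrl": "https://foursquare.com/v/51fc5a63498ec02f4de928e0"},
    {"id": "p3", "relatedItemUrl": "https://www.swarmapp.com/checkin/notInTheExport"},
]
checkinToVenue = {"4db1b66fa86e63d21171a701": "v1"}  # made-up mapping
byVenue, unmatched = attachPhotos(photos, checkinToVenue)
print(byVenue)
print(unmatched)  # ['p3']
```

The `unmatched` list is where photos with no attached check-in would surface, which makes it easier to see how many there really are.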

- Create a new site

- Can be searched

Day 1

Going through the Astro Vercel setup

Usage with Vercel

- @astrojs/vercel | Docs

- On-demand rendering | Docs

```shell
npx vercel login
```

- Build locally

```shell
npx vercel build
```

- Note: Vercel serverless functions currently (12/08/24) only work with Node 18, so make sure to use Node 18. See: Serverless Function contains invalid runtime error

Trying plugins

I'm also interested in trying out more plugins with Astro. Here are some of the ones I'm looking at:

Plugins

- Create a versatile blog site

- Create a framework that makes it easy to add external data to the site

- Give the site the capacity to replicate the logging and rating I do on Serialized and Letterboxd.

- Be able to pull down RSS feeds from other sites and create forward links to my other sites

- Create forward links to sites I want to post about.

- Create a way to pull in my Goodreads data and display it on the site

- Create a way to automate pulls from other data sources

- Combine easy inputs like text lists and JSON data files with markdown files that I can build on top of.

- Add a TMDB credit to footer in base.njk

- Make sure tags do not repeat in the displayed tag list.

- Get my Kindle Quotes into the site

- YouTube Channel Recommendations

- Minify HTML via Netlify plugin.

Day 10

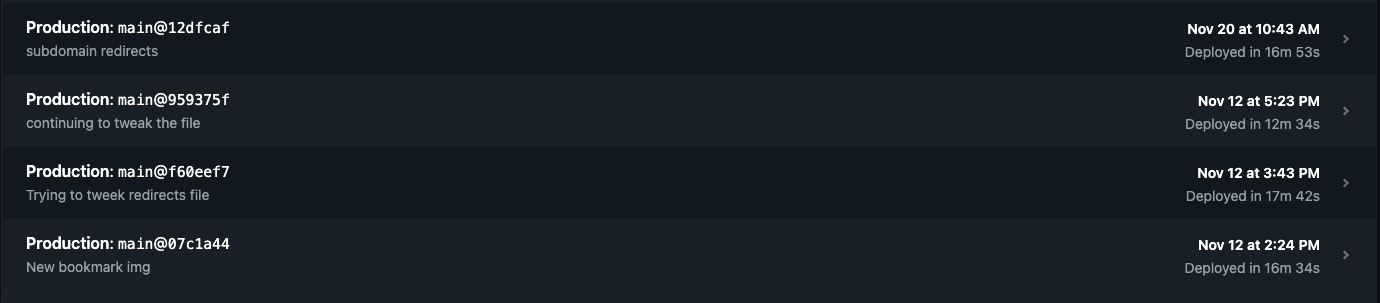

Ok, I'm on a train, learning all about the Netlify CLI so I can run the build plugin to minify the HTML locally.

I'm making sure my TOML file is configured correctly.

Looks like it is. I can console.log to echo the configuration out and make sure.

I think it is set up correctly to use onPostBuild so that's good.

Using Netlify CLI

I'm installing Netlify's CLI locally in the project, so to authenticate (which I need to do for some reason) I have to use npx netlify link.

I logged in last night, but it looks like I need to run npx netlify status to get it warmed up or something. Then I can use npx netlify build. That gets it running locally!

Looks like these options give me HTML minification similar to what I had before:

```js
{
  collapseWhitespace: true,
  collapseInlineTagWhitespace: false,
  conservativeCollapse: true,
  preserveLineBreaks: false,
  removeComments: true,
  useShortDoctype: true
}
```

I think this is looking pretty good. Let's try and push it out.

git commit -am "Setting up logging and, hopefully, the right configuration for my Netlify plugin"

Looks like it works!