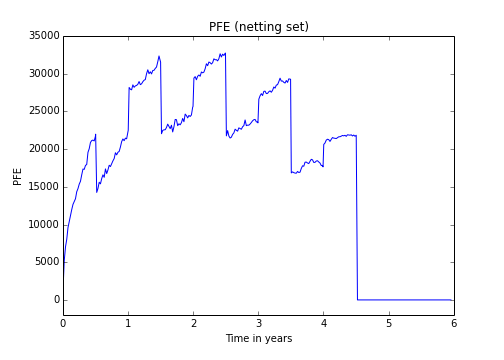

In this post we are going to simulate the exposures of a Bermudan swaption (with physical settlement) through a backward induction (aka American Monte Carlo) method. Such exposure simulations are often needed in counterparty credit risk management. We will use these exposures to calculate the Credit Value Adjustment (CVA). One could easily extend this notebook to calculate the PFE or other xVAs. The implementation is based on the paper “Backward Induction for Future Values” by Dr. Antonov et al.

We assume that the value of a derivative and the default probability of the counterparty are independent (no wrong-way risk). In our example the netting set consists of only one Bermudan swaption (but it can easily be extended). For simplicity we assume a flat yield term structure.

In our example we consider a 5Y Euribor 6M payer swap at 3 percent. The Bermudan swaption allows us to enter this swap on each fixed rate payment date.

Just as a short reminder, the unilateral CVA of a derivative is given by:

$$CVA = (1-R) \int_0^T df(t)\, EE(t)\, dPD(t)$$

with recovery rate $R$, portfolio maturity $T$ (the latest maturity of all deals in the netting set), discount factor $df(t)$ at time $t$, the expected exposure $EE(t)$ of the netting set at time $t$ and the default probability $PD(t)$.

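We can approximate the integral by the discrete sum

$$CVA \approx (1-R) \sum_{i=1}^{n} df(t_i)\, EE(t_i)\, \left(PD(t_i) - PD(t_{i-1})\right)$$

on a time grid $0 = t_0 < t_1 < \dots < t_n = T$.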
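As a quick sanity check of the discrete sum, here is a minimal sketch in plain NumPy. Everything in it is an assumption made for illustration: the helper name `cva_discrete`, the flat 2% rate, the flat 3% hazard rate and the constant exposure of 1 are not taken from the notebook.

```python
import numpy as np

def cva_discrete(time_grid, ee, discount, survival, recovery=0.4):
    """(1-R) * sum_i df(t_i) * EE(t_i) * (PD(t_i) - PD(t_{i-1}))."""
    time_grid = np.asarray(time_grid, dtype=float)
    pd_cum = 1.0 - survival(time_grid)   # cumulative default probability PD(t)
    d_pd = np.diff(pd_cum)               # PD(t_i) - PD(t_{i-1})
    df = discount(time_grid[1:])         # discount factor at the right end point
    return (1.0 - recovery) * np.sum(df * np.asarray(ee)[1:] * d_pd)

# Hypothetical toy inputs: flat rate, flat hazard rate, constant exposure.
rate, hazard = 0.02, 0.03
grid = np.linspace(0.0, 5.0, 61)         # monthly grid over five years
ee = np.ones_like(grid)

cva = cva_discrete(
    grid,
    ee,
    discount=lambda t: np.exp(-rate * t),
    survival=lambda t: np.exp(-hazard * t),
)
print(cva)
```

With these toy inputs the integral has a closed form, $(1-R)\,\frac{h}{r+h}\,(1 - e^{-(r+h)T})$, so the result of the sum can be compared against it when the grid is refined.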

In one of my previous posts we calculated the CVA of a plain vanilla swap. We performed the following steps:

- Generate a time grid T

- Generate N paths of the underlying market variables which influence the value of the derivative

- For each point in time calculate the positive expected exposure

- Approximate the integral

As in the plain vanilla case we will use a short rate process and assume it is already calibrated.

The first two steps are exactly the same as in the plain vanilla case.

But how can we calculate the expected exposure of the swaption?

In the T-forward measure the expected exposure is given by:

$$EE(t) = P(0,T)\, \mathbb{E}^T\left[\frac{\max(V(t), 0)}{P(t,T)}\right]$$

with $V(t)$ the future value of the Bermudan swaption at time $t$ and $P(t,T)$ the price at time $t$ of the zero-coupon bond maturing at $T$. The NPV of a physically settled swaption can be negative at time $t$ if the option has been exercised before time $t$ and the underlying swap has a negative NPV at time $t$.

For each simulated path we need to calculate the NPV of the swaption conditional on the state $x_i$ at time $t_i$.

In the previous post we saw a method for calculating the NPV of a Bermudan swaption. But that calculation itself used a Monte Carlo simulation. Applying it on every path and at every time step would result in a nested Monte Carlo simulation, which is not desirable because of its computational cost.

If we look at the future value, we see that it is very much the same as the continuation value in the Bermudan swaption pricing problem.

But instead of calculating the continuation values only on the exercise dates, we now calculate them at each time in our grid. We use the same regression-based approximation.

We approximate the continuation value $V(t_i)$ through some function $f$ of the current state $x_i$ of the stochastic process:

$$V(t_i) \approx f(x_i)$$

A common choice for the function is of the form:

$$f(x_i) = a_0 + a_1 g_1(x_i) + a_2 g_2(x_i) + \dots + a_n g_n(x_i)$$

with $g_k$ a polynomial of degree $k$.

The coefficients of this function $f$ are estimated by the ordinary least squares method.
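To make the regression step concrete, here is a minimal sketch with NumPy. It assumes plain monomials $1, x, x^2, \dots$ as basis functions and uses made-up data; the payoff, the noise level and the degree are hypothetical choices, not the ones used in the notebook.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical data: the state x_i on each path and the (noisy) discounted
# future value realised on the same path.
x = rng.normal(0.0, 1.0, size=5000)
y = np.maximum(x, 0.0) + rng.normal(0.0, 0.2, size=x.size)

degree = 4
# Design matrix with columns 1, x, x^2, ..., x^degree.
basis = np.vander(x, degree + 1, increasing=True)

# Ordinary least squares estimate of the coefficients a_0, ..., a_degree.
coeffs, *_ = np.linalg.lstsq(basis, y, rcond=None)

# The fitted values approximate the conditional expectation E[y | x] per path.
cont_value_hat = basis @ coeffs
```

The fitted `cont_value_hat` plays the role of the continuation value estimate on each path. Other basis functions (for example orthogonal polynomials) can be swapped in by changing how `basis` is built.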

We can almost reuse the code from the previous post, only a few extensions are needed.

We need to add more times to our time grid (we add a few more points in time after the maturity to have some nicer plots):

    date_grid = [today + ql.Period(i, ql.Months) for i in range(0,66)] + calldates + fixing_dates

When we exercise the option we enter the underlying swap. Therefore we need to set the swaption NPVs to the underlying swap NPVs for all points in time after the exercise time on each exercised path:

    if t in callTimes:
        cont_value = np.maximum(cont_value_hat, exercise_values)
        swaption_npvs[cont_value_hat < exercise_values, i:] = swap_npvs[cont_value_hat < exercise_values, i:].copy()
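The effect of this update is easiest to see on a tiny example. The sketch below uses made-up numbers for three paths and four grid times; the array values, the call index `i` and the boolean mask name `exercised` are purely illustrative.

```python
import numpy as np

# Hypothetical toy data: rows are paths, columns are grid times.
swap_npvs = np.array([[1.0,  2.0,  3.0, -1.0],
                      [0.5, -0.5, -1.0, -2.0],
                      [0.0,  1.0, -0.5,  0.5]])
swaption_npvs = np.full_like(swap_npvs, 0.8)   # placeholder swaption values

i = 1                                          # grid index of the call date
exercise_values = swap_npvs[:, i]              # value of entering the swap now
cont_value_hat = np.array([1.5, 0.2, 1.2])     # regression estimate of continuing

exercised = cont_value_hat < exercise_values   # paths where exercising is better
cont_value = np.maximum(cont_value_hat, exercise_values)

# From the exercise time onwards the holder owns the swap on these paths,
# so the swaption NPV follows the swap NPV (which may become negative).
swaption_npvs[exercised, i:] = swap_npvs[exercised, i:]
print(swaption_npvs)
```

Only the first path is exercised here, so only its row is overwritten from column `i` onwards, including the negative swap value at the last grid time.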

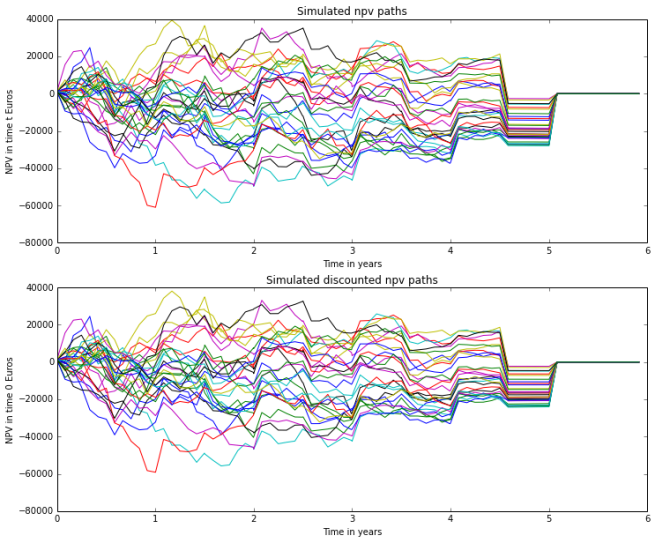

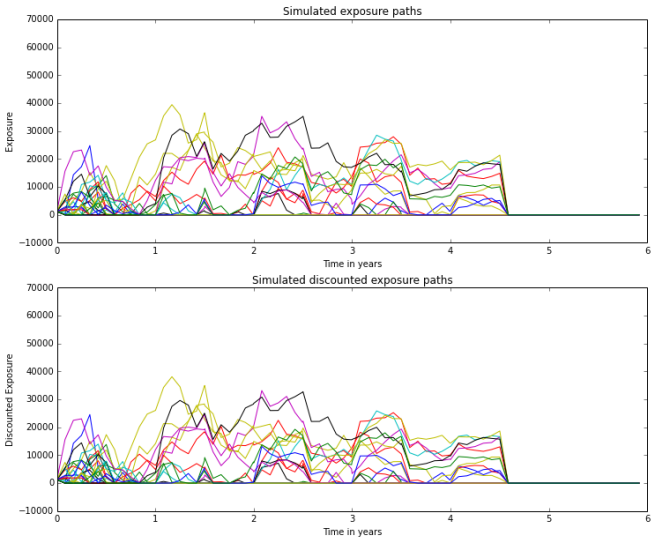

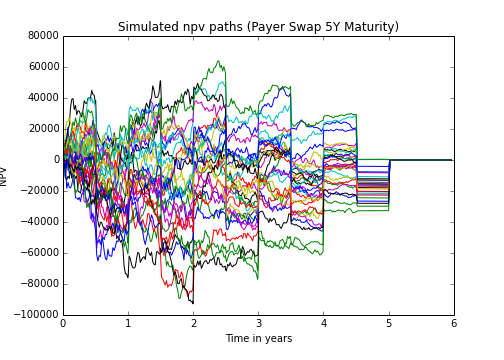

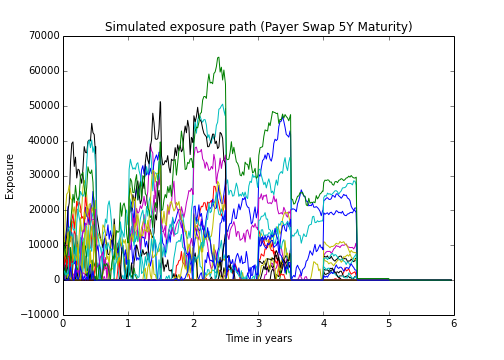

For our example we can observe the following simulated exposures:

If we compare them with the exposures of the underlying swap, we can see that some exposure paths coincide. This is the case when the swaption has been exercised. In some of these cases we also observe negative NPVs after the option has been exercised.

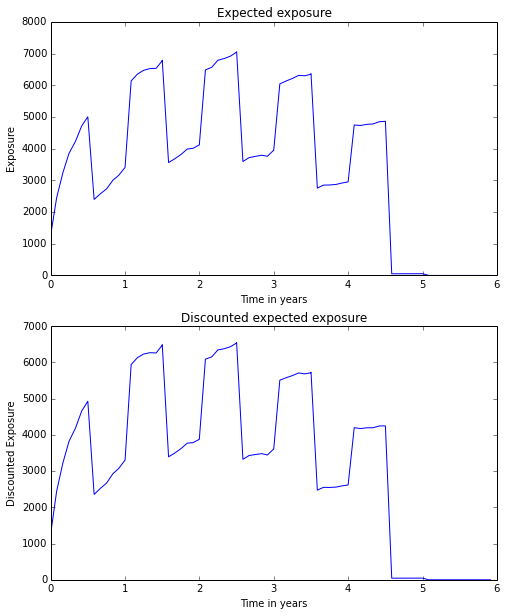

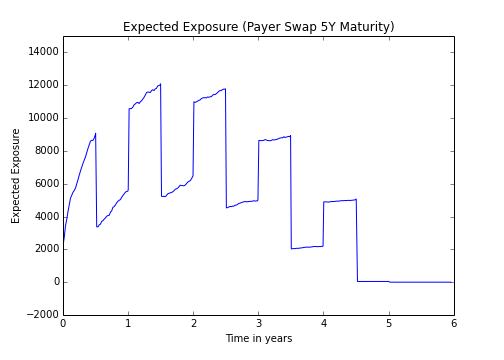

For the CVA calculation we need the positive expected exposure, which can be easily calculated:

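    # Floor negative NPVs at zero (note: this modifies the arrays in place)
    swap_npvs[swap_npvs<0] = 0
    swaption_npvs[swaption_npvs<0] = 0
    # Average over all paths at each point in time
    EE_swaption = np.mean(swaption_npvs, axis=0)
    EE_swap = np.mean(swap_npvs, axis=0)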
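The masking above overwrites the simulated NPVs, so the negative values are lost afterwards. If the raw paths are still needed (for example for plotting), a non-destructive variant could look like the following sketch; the toy matrix `npvs` is hypothetical.

```python
import numpy as np

# Hypothetical toy NPV matrix: rows are paths, columns are grid times.
npvs = np.array([[ 1.0, -2.0,  3.0],
                 [-1.0,  4.0, -3.0]])

# np.maximum returns a new array, so npvs itself is left untouched.
expected_exposure = np.maximum(npvs, 0.0).mean(axis=0)
print(expected_exposure)
```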

Given the default probabilities of the counterparty as a default term structure, we can now calculate the CVA for the Bermudan swaption:

    # Calculation of the default probs
    defaultProb_vec = np.vectorize(pd_curve.defaultProbability)
    dPD = defaultProb_vec(time_grid[:-1], time_grid[1:])
    # Calculation of the CVA
    recovery = 0.4
    CVA = (1-recovery) * np.sum(EE_swaption[1:] * dPD)

As usual the complete notebook is available here.