And for a lot of people we don't really think any further about it. I need to learn the quirks of this yaml thing that yaml thing. It just is what it is, and little documents like the yaml document from hell exist for us all to laugh about and accept that, yeah that's a thing, but what would you replace it with if not yaml? And at this point I could simply provide a list of lots of different not yaml options that could replace yaml, some of them very yaml like, some of the exact opposite. I have no intention of doing that, if you want to create yet another configuration language syntax then please by all means do so. But that's not the point of this post!

The point of this post is to explore the viability of using Lua as a configuration system. Yes I did link to that intentionally, for if you read the about section it explicitly says this is the intent of Lua. I always sort of saw it as its own minimalist scripting language, so choosing to hold it in this somewhat foreign way is a change of pace for me. Let's dive in!

What is the problem precisely?

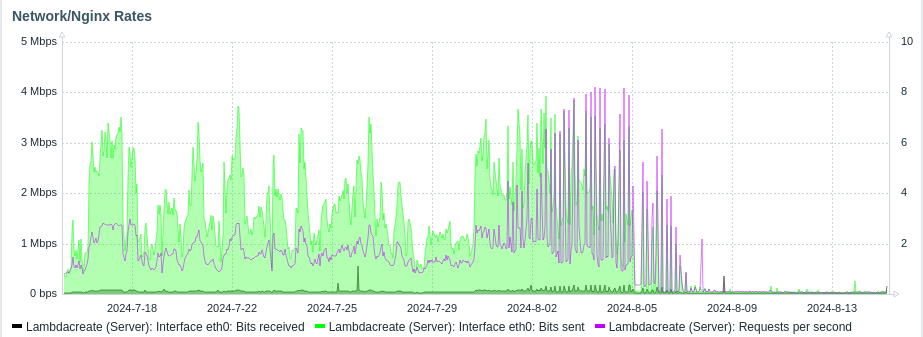

Right, so I can't just do a thing, I need a project to do the thing around. To that extent we'll be building our own Infrastructure as Code tool. I have a ton of experience doing this professionally, and actively contribute to and help maintain SaltStack for Alpine, so we're just going to go straight for SaltStack but without Python. To track my thought process a little bit, this is in the same vein initially as my verkos project which uses a yaml configuration to generate templated shell scripts. That has been the tool of choice to configure systems in my homelab for a couple of years now, but it isn't idempotent, can't produce dry runs, it's truly just generate a script and run it. Infrastructure as Code, even in a simple sense like that implode by a Salt master-less minion or Ansible playbook needs to be able to detect, report, and validate state to be of use.

We'll start with a really simple example, I need a way to install packages for Ubuntu and alpine systems. In Salt we'd do something like

Ensure Nebula is installed:

pkg.installed:

- name: nebula

Well sort of, pkg.installed doesn't work super well on Alpine, and maybe I'd prefer to use the snap package for nebula on my Ubuntu system.

Ensure Nebula is installed:

{% if grains['os'] == "Alpine" %}

cmd.run:

- name: apk -U add nebula

{% elseif grains['os'] == "Ubuntu" %}

cmd.run:

- snap install nebula

{% endif %}

That got out of hand quickly. We immediately had to introduce jinja into the mix to handle flow control on top of our yaml state definition. Yaml is not a programming language, adding jinja on top of it just allows us to change the resulting generated yaml depending on which OS we're targetting. This isn't a terribly design choice, and I feel confident saying that. But it could be better I think.

If we used lua for this configuration maybe we could express this configuration a little more clearly. Assuming we use a lua table as the data structure that is passed between our IaC agent and the configuration we write, then we'd be able to just us Lua code to express our intent.

state = {

type = "package",

name = "nebula",

manager = ""

}

if grains.os == "Ubuntu" then

state.manager = "snap"

elseif grains.os == "Alpine" then

state.manager = "apk"

end

Now you might say, this isn't really all that different than yaml+jinja, and I'd argue yes and no. At this very basic level you're correct it isn't. Just bear with me. If you take away nothing else from this I'd argue that the Lua code is easier to test and debug than the yaml+jinja templating. At a more complex level you can be more expressive using Lua than yaml+jinja.

Right anyways, for the sake of the argument lets just assume we like the second syntax more. How do we consume it? By sticking a programming language into our programming language of course.

Embedding Lua in Nim

Fortunately for the curious there are a couple of different lua libraries available for Nim, so we can skip over creating a shim layer over the C library and just get to hacking by running nimble install lua. That will net us a lua5.1 runtime which is good enough.

Calling Lua from inside Nim is incredibly simplistic, but it requires thinking about stack machines, sort of like Forth. This is done for a few reasons, mostly to facilitate memory management nuances.

import lua

proc main() =

# Load the Lua VM into memory

var L = newstate()

openlibs(L)

# Read in and load our Lua script

if L.dofile("state.lua") != 0:

let error = tostring(L, -1)

echo "Error: ", $error

pop(L, 1)

# Push the value of the global variable state onto the stack

L.getglobal("state")

# From the global state table, push the value of the type key onto the stack

L.getfield(-1, "type")

# Use the value of the type variable that's at the head of the stack as a string value

echo "State of type: " $L.tostring(-1)

# Pop the value of type off the stack

L.pop(1)

# This denotes the first key in the table

L.pushnil()

# Until there are no more values in the state table iterate

while L.next(-2) != 0:

# Push the value of the second element on the stack into a lexical variable named key

let key = $L.tostring(-2)

# Push the value of the first elemenet on the stack into a lexical variable named value

let value = $L.tostring(-1)

echo key, " = ", value

# Pop the values off the stack

L.pop(1)

# And close the VM

close(L)

main()

Assuming we have a lua script called script.lua in the path of of nim program when it's run it will do the following:

- Create an instance of the Lua VM in memory

- Read in the script, and then execute it against that VM

- If there's an error, return that as a string by pushing the value of the error onto the stack, then popping it off once it's done.

- Then we iterate over the

statetable and echo out the key, value tuples from it. - And finally we close the VM and clean up.

This doesn't really do much, but it's all you'd need to use Lua as a configuration system! The hardest part is wrapping your brain around how the stack machine works. Once you internalize that iterating over a table results in a reverse ordered list, precisely because you are first reading in the value of the key and then its value; well after that the entire thing becomes extremely easy to reason about.

Our Lua stack machine looks a bit like this in the nim code above:

[stack] [ ]

[stack] push "type" -> [-1] "type"

[stack] push "package" -> [-1] "package" [-2] "type"

[stack] pop -> [ ]

[stack] push "name" -> [-1] "name"

[stack] push "nebula" -> [-1] "nebula" [-2] "name"

Now lets expand the state configuration and actually do something with it, and while we're at it, lets change name to packages and turn it into a list of packages to install.

state = {

type = "package",

packages = {"nebula", "htop", "iftop", "mg"},

manager = ""

}

if grains.os == "Ubuntu" then

state.manager = "snap"

elseif grains.os == "Alpine" then

state.manager = "apk"

end

We then need to do two things in our nim code, map the global lua variable state to an object, and then parse & action off of it. In a real IaC system only specific values are valid, so instead of iterating over everything, we'll be explicit about what we want to read in.

import lua, os, osproc

# The nim object is pretty straight forward, we have three values, we only really need to use two. Two are strings (cstrings technicaly) and the other is an integer indexed table, so a sequence of strings in nim.

type State = object

`type`: string

packages: seq[string]

manager: string

proc main()=

# Load the Lua VM

var L = newstate()

openlibs(L)

# Read in our state configuration

if L.dofile("state.lua") != 0:

let error = tostring(L, -1)

echo "Error: ", $error

pop(L, 1)

# Instantiate an object to hold over configuration

var state: State

state.packages = @[] # and an empty seq for our package list

# Get the global state table onto the stack

L.getglobal("state")

# Then the package table

L.getfield(-1, "packages") # Stack: [state_table, packages_array]

L.pushnil() # First key for iteration

while L.next(-2) != 0: # -2 is the packages array

# Stack: [state_table, packages_array, key, value]

state.packages.add($L.tostring(-1)) # Read in the package, append it to the seq

L.pop(1) # Pop value, keep key for next iteration

L.pop(1) # Pop the packages array

# note these two pops are different, one is to clean up the values in the packages array

# the other is to clean up the reference to the array itself

# It's a lot easier to fetch the value of manager, since it's just a simple string.

L.getfield(-1, "manager")

state.manager = $L.tostring(-1)

L.pop(1)

var pkgcheck = ""

var pkgman = ""

# from this point forward we just use the values as expected in nim

case $state.manager:

of "apk":

pkgcheck = "apk info --installed "

pkgman = "apk add "

of "snap":

pkgcheck = "snap list | grep "

pkgman = "snap install "

for package in state.packages:

let (check, code) = execCmdEx(pkgcheck & $package)

if code == 1:

let install = execCmdEx(pkgman & $package)

echo "Installed " & $package

else:

echo $package & " is already installed"

close(L)

main()

Now you're probably rightly saying that the example is obviously broken. Where is the grains value coming from? It isn't defined anywhere at all. Worry not, we can easily fix that by writing a little bit of lua code, that will nets us an ad hoc grain system.

# A new global table, we could reference this in our nim code

grains = {

os = ""

}

# And to populate it we just need a way to figure out what OS we're on, parsing /etc/os-release is a tried and true method

function get_linux_version()

local file = io.open("/etc/os-release", "r")

if not file then

return nil, "Could not open /etc/os-release"

end

local content = file:read("*all")

file:close()

local version_id = nil

for line in content:gmatch("([^\n]+)") do

if line:find("VERSION_ID=") then

version_id = line:match("VERSION_ID=\"([^\"]+)\"")

break

end

end

return version_id

end

grains.os = get_linux_version()

state = {

type = "package",

packages = {"nebula", "htop", "iftop", "mg"},

manager = ""

}

# This check will now actually work instead of throwing errors

if grains.os == "Ubuntu" then

state.manager = "snap"

elseif grains.os == "Alpine" then

state.manager = "apk"

end

Executing our little micro IaC nets us a stateful result! It detects what is in compliance, reports it, and corrects what's out of compliance. Pretty cool for around 100 lines of nim and lua combined.

~/Development/lambdacreate/views/posts|>> ./main

nebula is already installed

htop is already installed

Installed iftop

Installed mg

In essence, this means the system described above can fulfill the role of static configuration data much the same as yaml, but also the role of imperative configuration state, something that modifies itself depending on the scenario in which it's deployed. And that can be extended by simply adding more Lua instead of modifying the Nim runtime. This is a really neat trick, but it's not an idempotent declarative IaC system. It's nowhere even close to it in fact! But it has frankly been a great learning opportunity, and I'm excited to keep tinkering with it and see what I come up with. Worst case this will become a replacement for Verkos, my shell templating tool, but I'm kind of hoping I can replace Salt itself with my own home brewed Lua based system. Time will tell!

If you're curious and want to tinker a bit with these the state.lua file can be found here and the nim program is here.

Credit

My friends, acdw and mio , both deserve a massive shout out for so many reasons. Putting up with me pasting massive code snippets into IRC buffers for years and bickering with them over yaml being the only real way to do any of this stuff. Thanks for putting up with me, and nerd sniping me into making a thing. Here's to hoping it becomes something really cool! At very least, enjoy the learning!

Knowledge doesn't exist in a vacuum, Lucas Klassmann who wrote this absolutely phenomenal blog post about embedding Lua in C, deserves a shout out as well. It is an absolutely delightful read and an eye opening explanation as to how truly simple it is to embed Lua into something.

]]>What's a Snap exactly?

On the surface it's a bunch of yaml like every other DevOps inspired tool out there, but beneath the veneer of slight transposed json is a build ecosystem that curates a custom suqashfs container just for your application and all of its dependencies to live in. And beyond that even is a daemon service and apparmor configurations that help mount and expose that content in a secure fashion to the host os. All with the result being that snap install firefox essentially gets you a firefox command you can run, even if it's technically doing a whole lot more than that and might not be as performant as the apt package was.

I won't debate the merits of that specific package, but I do find the idea of snaps as a bleeding edge distribution mechanism on top of what is ostensibly slow Debian Sid with 6 month "stable" snapshots an interesting idea. I find snaps even more interesting when you take into consideration the fact that this will work on boring stable Debian LTS releases as well. No need to futz with the apt package spec, or go through the rigors of contributing to Debian or even Ubuntu to be able to meaningfully package and distribute up to date packages across a plethora of systemd based distributions!

Now that, I find really cool. There's several ways to affect private configuration of systems, but very few to make it easy to distribute your own software to systems you may occasionally deal with. That scenario happens often enough that I personally think investing the time learning a new toolset it worth it.

All of that said, lets snap up fServ as it's a favorite of mine and a daily use tool. For anyone outside my immediate circle, fServ is a file transfer server, with a semi-complete implementation of a 9p2000 file server backed into it. If that sounds like a weird combination of things for a tool, then you'd be right, but my use case is typically enabling development work from Linux onto a fleet of Windows and Linux systems that vary wildly. Having a single tool that is also a single static binary that I can quickly throw up and pull down is pretty critical to my personal workflow. Plus, it lets me edit config files and write powershell scripts in Emacs on the host without installing Emacs or using tramp, it's cool.

This is the snapcraft I've come up with for fServ, it's a little different from other golang packages I've seen but only because I want it to be as small as possible.

name: fserv

base: bare # This is the squashfs that gets distributed, which should only container our static binary

build-base: core24 # This is an ubuntu 24.04 base, where our package is initially compiled

version: "1.0.0"

summary: A tiny popup file server

description: |

fServ provides a way to quickly serve files from, or transfer files to a system.

fServ generates its own self signed HTTPS certificate to encrypt in transit traffic sent between it and the client.

Additional fServ can be used as an ad hoc 9pfs file server, though it's entirely experimental.

grade: stable # This apparently does something, but Popey says to just set it stable and ignore it, so I did.

confinement: strict # This is the apparmor confinement level, if you set it to classic the snap needs manual approval

# with strict confinement fServ's snap will only work on specified directories on the host.

parts:

fserv:

plugin: go

source: "https://krei.lambdacreate.com/durrendal/fServ.git"

source-tag: $SNAPCRAFT_PROJECT_VERSION

source-type: git

override-pull: | # craftctl couldn't resolve the tags on gitea, so I had to add some small custom logic for that

craftctl default

git fetch --tags

git checkout $SNAPCRAFT_PROJECT_VERSION

build-environment:

- CGO_ENABLED: "0" # This indicates we're compiling statically

build-snaps:

- go/latest/stable # This pulls in the tooling to compile golang packages in the 24.04 base

build-packages:

- gcc

override-build: | # and obviously this compiles fServ and sticks it into the squashfs

cd src/fServ

go build -o $SNAPCRAFT_PART_INSTALL/bin/fServ

apps:

fserv: # this key actually becomes the cli invocation of the snap

command: bin/fServ

plugs: # and each of these plugs allow a set of predefined permissions

- network # These two allows fServ to run on 0.0.0.0

- network-bind

- home # And these two allow fServ to be run from /home

- removable-media # and /mnt and /media respectively

Really it's not too much of a lift to get the configuration down. No worse than any other package spec, and we're hand waving a ton of complexity by statically compiling and using golang. The only minor annoyance is that fServ is invalid so the snap is named fserv and if I try to set apps.fServ the cli invocation of the snap becomes fserv.fServ which is obtuse and a bad user experience. Maybe I'm holding it wrong though! I'll find out as I continue down the rabbit hole.

Once you've got your little yaml manifest together you need a couple of things.

- An Ubuntu system, or honestly probably just something running

snapd - the

snapcraftcommand, which is a snap package

# Install snapcraft

sudo snap install snapcraft

# cd into the repo with the manifest & initialize

sudo snapcraft init

# package the squashfs

snapcraft pack

# install and confirm functionality

sudo snap install fserv_*.snap --devmode

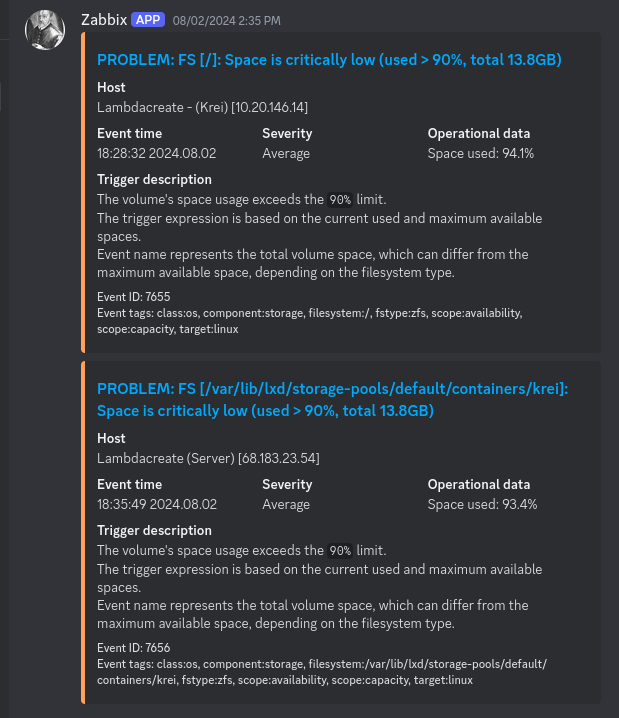

Anyways, this process will actually take a bit. Snapcraft uses LXD as its build backend, so it'll install that and put together a clean room environment to build the package in. I think it takes about 8gb of space to handle a single snap? I use 8gb volumes by default for my incus VMs and ended up needed to expand my snapcraft building VM in the process of packaging this and a couple other things.

Now, at this point you could stick the .snap file on a web server somewhere and just curl it down and install it with snap anywhere else that shares the same carch as the build system. But that's probably not what you want. You should release the package by uploading it to snapcraft.io, if for no other reason than you can then hook launchpad up to your github repo and build across all of the carches that Ubuntu currently supports.

It's enough to push the snap for the carch I built it on by just uploading it though. So maybe that's enough.

snap upload --release=stable fserv_*.snap

I'm too much of a completionist to stop here. On my gitea instance I created a snap organization and a repo for fServ, then created a push mirror on github so that I could connect the push mirror to Launchpad. That was totally worth it, because now whenever I release a new version of the snap package it gets rebuilt by Canonical's CI system. That's less for me to build and maintain, and a more trustworthy build source than my personal systems. This is a really nice feature resource for them to curate honestly.

This is what the build console looks like for all of the carches fServ is built on. Sadly the i386 build issue looks to be a compatibility issue with core24, I might be able to fix it by using core 20 though.

And this is what the build logging looks like. Very typical CI logging, but the key call out is that it's the exact same process you'd get if you run snapcraft through a manual build process which makes it really easy to reason about build failures. If anyone is curious here's the full build log from this screenshot.

Where'd you even learn this nonsense?

Well it all sort of started with the desire to have the Nebula mesh VPN on Debian systems. Version 1.9.3 of Nebula is actually packaged on Debian Trixie and in Sid, so there was something within reach, but version 1.9.5 of Nebula introduces a fix that allows you to issue v2 Nebula certs to clients, and then 1.9.6 has fixes for Windows system locking up. If I want Nebula on my Alpine boxes it's as easy as apk add nebula and I get an up to date version, for Windows there's (now) a Chocolatey package. But Debian? I would need to hand roll it, or dig back out my dust Debian packaging hat, and I have so little interest in doing that.

But I found that there's a snap package for nebula!, but it was unfortunately stuck on version 1.8.2 until very recently.

Instead of diving into packaging my own thing off the bat I took the opportunity to update the existing Nebula snap package. Which I was able to do with the current maintainers help and kindness! And I even have some additional changes pending that will enable the nebula-cert command to be exposed as nebula.nebula-cert and that will allow the snap to be used to manage the nebula CA, or at very least to print details about existing certificates for debugging/automation purposes. I've been talking with the current maintainer of this snap about potentially taking over maintenance of the package long term.

And of course none of my understanding of snaps came from a vacuum, Popey's blog has some great posts about debugging and maintaining snap packages. And I'm a huge 2.5 admins fan, so there's some appeal to seeing the technology demonstrated and learning it as well.

In the end, the quick foray into a new package ecosystem has netted me several things I need/want, and the maintenance burden on the contributions are pretty minimal given the amount of automation Canonical has provided for snapcraft. And the pragmatist in my appreciates all of this. Obviously this is quite literally just distributing something as a static binary in a different shaped box, but the real benefit is that I don't have to do anything other than change a version number in a yaml file. That level of simplistic maintenance is actually quite nice, and worth the effort.

]]>First off, lets address the elephant in the room, Windows?! I know! Surprise surprise, the Linux nut is tinkering with things he has actively dismissed his entire career as a waste of time. Don't worry, I still believe that Linux is the way, but I deal with a ton of Windows infrastructure. I have fond memories of XP as a kid, and Vista being utter shit, and 7 being cool and stable to the point of boredom. Then 8.1 turned everything into quasi tablet interfaces, people shoved it on phones, that felt really cool but it ultimately sucked. 10 was the last windoze, and now we're on 11 and for some reason the start menu is a react app. And that's to speak nothing of the server variants from 2003 until now where the licensing has only gotten more arcane, and the resource bloat more and more real. It's really silly, but I've never fully managed to run away from this terrible operating system that I despise, instead I've found unique and novel ways to apply my Linux knowledge in the service of maintaining these things.

So here we stand, at the precipice of my last OCC, typing to you from a Windows Vista netbook. Don't worry, I'm still using Emacs for this.

Vista?!

Yeup. Over the last 5 years I've done everything from unique deployments of Alpine, to Plan9, tinkering with Palm PDAs, mainlining a Motorola Droid4 on the daily, and now come to levels of sadism only the deranged would attempt. But there's logic to all of this, I swear!

See, in my professional life I get to remediate technology from all walks of life. The small business world is strung together by technology that should've been decommissioned decades ago, and while we technologists know that this is in fact not fine, it's all too common still. So even here, in 2025, I find myself dealing with Windows Server 2003 and 2008 on a frequent enough basis that it behooves me to understand the OS in some capacity. So I thought to myself, I'll build a lab, Server 2003 for a real gritty experience. And I'll take some old symix/progress install discs I found and setup an ERP, it'll be funny because if we don't laugh about it then we'll cry.

But of course, that wasn't that straight forward. Turns out it's a massive pain to get Windows Server 2003 in an Incus VM, and I can only take so much cognitive dissonance. No no, best go up a version instead where there's enough support that I can build my own VM template using existing tools. And since that's a thing I can try and deploy Server 2008 Core, since that's differentiating and neat, it's the first version that has a stripped down core offering. Maybe there's something neat there that I can leverage on an actual up to date version of Windows Server down the road.

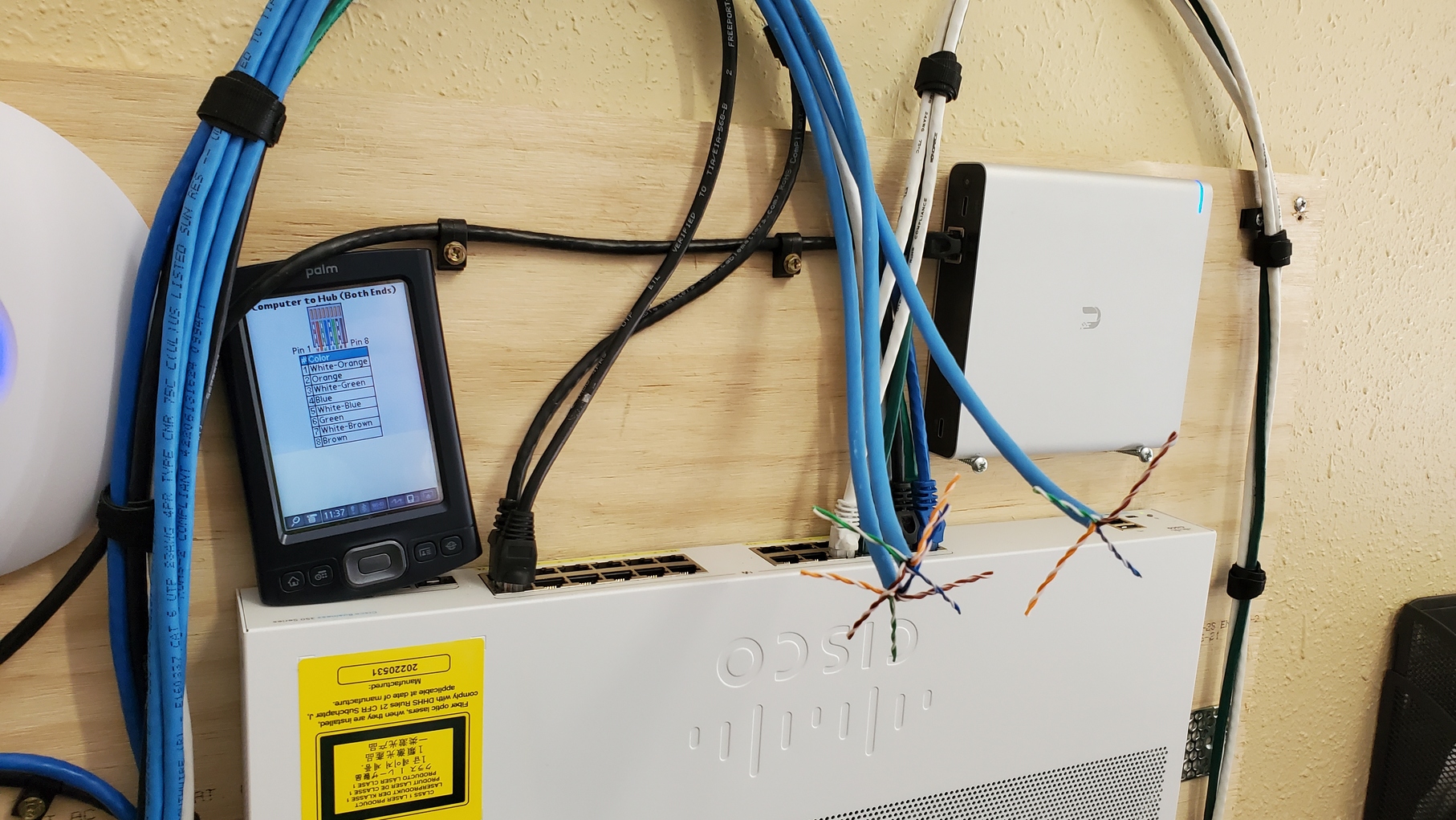

And since I'm going all in on Server 2008, and want to deploy a half-duplex lab and an ERP I might as well setup a Domain Controller, and some File Shares. Running DHCP and DNS on a Cisco 891FW is boring, so I'll shove that into the 2008 VMs as well just to give me more tinkering. And of course, none of that makes any lick of sense if I don't have a client system to domain join and leverage all these neat lab resources!

And then, we arrive at the odd realization that Server 2008 and Vista are the same thing. Womp. Sure I could have gone up to 7 and been fine for the purposes of the lab, but where's the fun in that? I remember HATING Vista growing up. It would hang, and crash, and BSOD for no reason. In fact, I vividly remember one of my first real Linux triumphs was imaging over a Vista install with Ubuntu and handing it back to a friend of mine and that one act solving all of her computer problems for a long time. Surely though, armed with almost two decades more experience, I won't suffer too much?

To nobodies surprise, I was wrong

The fact that this blog post is coming at you 5 days after I started the OCC gives you an idea of how obtuse this process has been.

My tribulations can be summarized as follows:

- 7/12: Prepare for the OCC

- 7/13: Imaging, updates, BSOD

- 7/14: Rescue, Rebuild

- 7/15: First day using Vista, fServ port

- 7/16: Second day using Vista, this post

- 7/17: I didn't even touch a computer

- 7/18-21: Last minute tinkering

7/12 - 7/13

Every year before OCC I typically take the day before the challenge starts to gather reference materials, installers, software. Anything I think might be helpful during the bootstrap process. This year that looked a lot like gathering ISO files for Vista and Server 2008, grabbing firmware files for the various Cisco equipment, fetching PDFs on Cisco IOS 15 and Windows Server 2008. Really basic stuff. Essentially my goal was to grab enough to start off with a thumb drive of things I thought I needed and go straight into labbing.

For the challenge I dug out my Acer Aspire One D255 netbook. There are no rules for this years challenge, so I figured that with it's 2c 2t Atom N550 & 1GB of RAM that it would run Vista like a dream, and I really didn't want to suffer TOO much since I was already dealing with Vista.

It had an old 9front install on it from an OCC a few years back, I think 2-3 ago. I obviously booted into that, did a system upgrade, and then decided I should probably keep the 9front install around. I did a bunch of rc scripting on it, and never actually put any of it in git. So dding the disc was the way. I just booted into an Alpine installer and did my thing, but.. it took 10hrs to dd the 32gb disc.

Now the disc I have in my D255 is the original that came with it when I bought it off Ebay. I was under the impression it was a spinning rust drive given the slowness, but it was actually an ancient crucial SSD. I had never actually opened this netbook because doing so requires you to remove the keyboard entirely to access the screws to remove the bottom. This was my first mistake, I should've swapped the disc immediately after a 10hr dd, that's just abnormal.

But nope, I went straight to installing Vista.

Fortunately that process was actually painless. I was able to boot into the installer using Ventoy's Wimboot mode and an ISO from Archive.org, and walked through a basic setup. That process was slow, but I got to a login screen without too much time wasted. I was ready for OCC!

So I start up the next day, it's sluggish, but it's Vista so whatever, and I start to install software. Right out the gate I didn't have working network drivers so I had to fetch sketchy installers from the interwebs, run them through the AV scanner, and sneakernet them over. Couldn't get the wifi drivers to work, and ended up tether to my Mikrotik MAP2nd travel router as a wireless <-> ethernet bridge, but I'm okay with that honestly it puts some distance between the Vista computer and my homelab.

Next up I installed legacyupdate which is actually a really cool community project, and let me apply a ton of Server 2008 security updates to my vista install. It also installed modern SSL certs making Vista actually somewhat usable! The last patch set looked like it was from 2024 which is utterly wild. Getting caught up from 2007 to last year took forever and a day though. I spent the first day of OCC just letting the netbook download updates while we went to visit a friend for a BBQ. I was okay with that, it felt authentic enough.

That's when things started to get messy. Once I got everything updated and I started to install more software, things like emacs, clamav, git for windows, and dotnet/powershell, the system started to become unstable. It would periodically freeze when anything disc intense would go on, like opening a file in emacs (this really shouldn't be intense) or opening file explorer. Again I just thought, Vista is shitty, this tracks. Installing any form of software was a 20-30m+ process regardless of how large or small. And then, I setup ERC, got online long enough to say hello to Mio, and went back to installing things. Tried to get Ruby and Go and a few different IRC client and then boom! A nice little oblique "critical error" message, followed by a BSOD.

Obviously the first thing I had to do was hop on IRC and complain about it lol.

23:28 @durrendal Finall got a second to poke vista again

23:29 @durrendal Boot it up, download go 1.10.8, run installer

23:29 @durrendal Bsod

23:29 @durrendal This is a truly authentic vista experience

23:30 @durrendal Oh fuck

23:30 @durrendal I think my HDD may have died or been corrupted. Now it won't boot at all

23:31 @durrendal I'm.. Going to just turn it off for a bit and see if it re-alives itself..

23:35 miyopan ohno

23:36 @durrendal I mean, I'm not surprised. This is precisely how bad I remember vista being lol

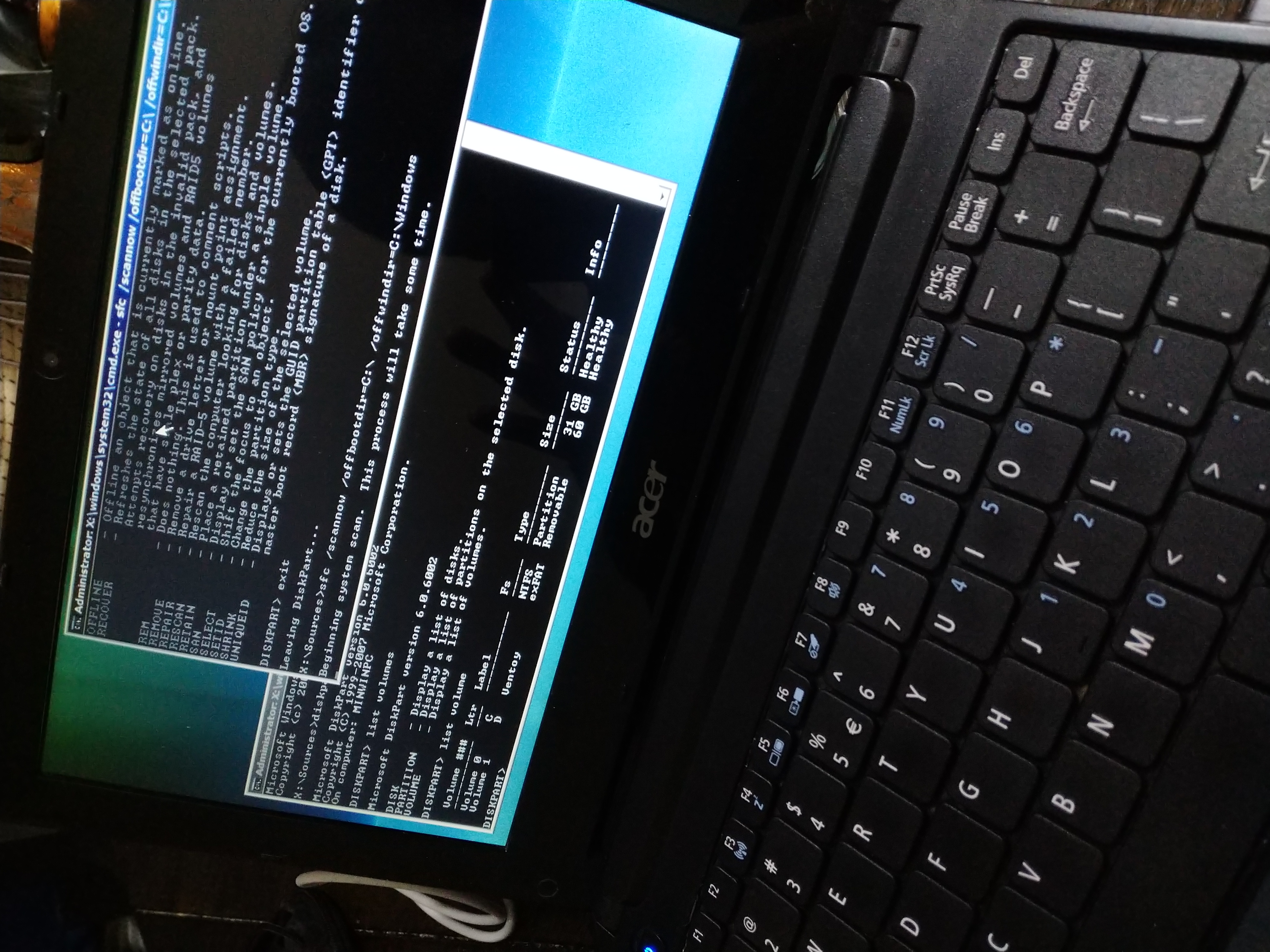

I wasn't able to grab a photo of the BSOD error message unfortunately, but after booting the netbook back up it couldn't even detect the SSD in the netbook. It defaulted over to PXE booting from tenejo.lc.c/boot, fortunately in Alpine I was able to see the disc and from there the process of recovering was easy I thought. I dug a spare 500gb crucial ssd out of the rummage drawer, nothing new, but also nothing that old, and a ubs-sata adapter and grabbed ddrescue from the apk repo.

setup-alpine #walk through setting up networking

echo "http://dl-cdn.alpinelinux.org/edge/main" > /etc/apk/repositories

apk update

apk add mg ntfs-3g tmuc ddrescue

tmux

fdisk -l # find the ID of the new and old disc (/dev/sda and /dev/sdb)

ddrescue /dev/sda /dev/sdb

ddrescue is a great little tool that reads damaged discs forwards and backwards and tries to piece together as much of the damaged resource as possible, perfect for my use case. This process took several hours as well, but the end result was a disc with my updated Vista install on it! I walked away at this point as it was late, and I had work in the morning.

7/14

The next evening I went back at it. With the newly imaged hard drive I dissembled the entire blasted netbook, installed the new drive, triumphantly booted it up! And instead of success I got this delightful error message that indicated the boot partition was corrupted.

UGH. At this point I considered quitting. Vista was a stupid second decision based on the fact that I wanted a system to domain join to a server 2008 lab. Why the hell am I even doing this in the first place? But no, I'm committed at this point. It's just a corrupted boot partition, it's not THAT hard to fix it.

I dig back out my Ventoy USB and boot into the Vista installer. I remember how to do this, it's easy. We'll just let the automated repair tool do the thing. But.. it doesn't work. Sure, maybe I can do it manually. I'll just boot back into the installer and run these commands to rebuild the boot records.

bootrec /fixmbr

bootrec /fixboot

bootrec /rebuildbcd

bootrec /scanos

Buuut, I got the same issue, winload is toast. At this point I tried a couple more things, sfc /scannow gave me nothing, but the installer could actually tell that there was a valid windows partition on the disc. This issue is really no different than when grub got bricked in alpine a yearish ago. What does it take to manually rebuild the boot partition on Windows Vista?

Surprisingly, (or perhaps not), the process is not all that different than how you'd handle it on a modern Windows system.

First off, just double confirming that the installer's recovery option isn't lying to me. I DO have a valid Windows partition right?

diskpart

list disk

select disk 0

list partition

detail partition

Checking this information corroborates what bootrec /scanos tells us, that there's a C: labeled partition on disk 0, and it's a valid windows partition. But the only partition starts at a 1mb offset, and there's no additional partitions. Which feels weird to me. I don't remember specifically if that's normal, I know on more modern Windows you have a typical UEFI boot partition, this is obviously MBR & BIOS though.

Best bet is to just start over and rebuild the entire boot record.

# First off, lets delete BCD, it's corrupted, we're going scorched earth.

del C:\Boot\BCD

# Recreate BCD so we an rebuild it

bcdedit /createstore C:\Boot\BCD

# Create the boot manager entry

# {bootmgr} is a special value, unlike the later used {GUID} which is a placeholder

bcdedit /store C:\Boot\BCD /create {bootmgr} /d "Windows Boot Manager"

bcdedit /store C:\Boot\BCD /set {bootmgr} device boot

bcdedit /store C:\Boot\BCD /set {bootmgr} path \bootmgr

# Create an OS Loader entry, and copy the GUID returned into a notepad.exe buffer

bcdedit /store C:\Boot\BCD /create /d "Microsoft Windows Vista" /application OSLOADER

# Take that GUID and configure an OS entry for the Windows partition

# Note that here where I have {GUID} you'd insert the actual GUID in curly brackets

bcdedit /store C:\Boot\BCD /set {GUID} device partition=C:

bcdedit /store C:\Boot\BCD /set {GUID} path \Windows\system32\winload.exe

bcdedit /store C:\Boot\BCD /set {GUID} osdevice partition=C:

bcdedit /store C:\Boot\BCD /set {GUID} systemroot \Windows

# Set the boot order

bcdedit /store C:\Boot\BCD /set {bootmgr} displayorder {GUID}

bcdedit /store C:\Boot\BCD /set {bootmgr} default {GUID}

# And finally, confirm all of that makes sense to Windows

bcdedit /store C:\Boot\BCD /enum

If all of that process works out, we just reboot, and it'll be like nothing ever happened. Which was precisely my experience. In fact, after upgrading the disc this system has not only been snappy and stable, but I've been able to use it to write a variant of my fserv project in nim, and write this blog post. Its honestly been worth all of the effort.

Now if all of that was too exciting and you're thinking "I want to try Vista too!" just remember.

7/15-7/16

With my Vista install stabilized to the point where I could actually do something, I started to reach for a tool I use regularly. It's a little pop up file transfer tool I wrote in go called fServ. Now it's not really a replacement for an ftp client or scp, it's not meant to do that, rather it's meant for adhoc deployment in scenarios where I need to temporarily retrieve or distribute something and either can't configure a more complicated solution. I use it a lot for bootstrapping initial access transferring ssh keys, or to pull logs/data off of systems during audits. It also has a 9pfs server built into it, so with the right level of arcane invocation and either Plan9 or plan9ports packaging you can simple brain slug other systems.

Unfortunately, I can't for the life of me get go to install on this bloody Vista system. Go says it's supported up to 1.10.8, but even going back to 1.10 I can get it to work. All of this is just annoying. I have emacs on this netbook, but no compilers to make tooling with. And I would really like to stop sneaker-netting things between this netbook and my NAS. Nim is my other compiled language of choice for small tools like this, and it turns out that Nim 2.2.4 just works on vista. All I needed to do was grab installer from here, extract it, and then drop it into C:\Users\durrendal\ and run finish.exe and it setup all of the paths etc, perfectly working nim and nimble install with minimal fuss. All of that felt really awesome, excited fServ is still written in Go, so it doesn't actually help me transfer files. Instead of building out lab components, I just wrote a minimal version of fserv in nim instead.

Obviously the use case for this was so that I could push photos I took on my Essential PH-1 to the netbook for use in this blog post. I wasn't about to go find a usb-c cable and try to figure out whether or not I could mount the phones storage on Vista. No no no, this is much easier, better to write some nim in emacs on Vista and be stubborn about the entire thing.

So that's all I've managed to get done thus far. Vista has really made this a struggle, but it has been a good learning experience nonetheless. And I think I have a couple more tools in the toolkit that I can apply to these older systems I keep running into even if I haven't quite gotten my lab back fully up. More to follow on that.

For those curious what software I've been using in Vista here's a list of all the things that have worked for me. There's still lots of options out there, and I think my needs are generally pretty light anyways.

- Emacs, for writing code, and ERC as a backup IRC client

- mIRC, primary IRC client, it has a more authentic Windows feel to it

- Supermium, it's a stripped down chromium fork that runs beautifully on Vista.

- Git for Windows, this is bash, git, and ssh tooling. It's an old version but works.

- nim + nimble!

- LegacyUpdate, for restored WUA functionality

- Winbox, for configuring my Mikrotik

- Peazip, for zip/tar functionality

- Evince, for reading all of those PDFs

- ClamAV, it's a Windows computer, gotta have something to use before you run things.

Like I said, it's not a whole lot, and is missing maybe the classic libreoffice install, but why would I want to type in anything other than emacs? I'd rather use org-tables than excel style spreadsheets. I'm not trying to make myself hate computing over here, and there's still a little longer on the challenge, but I'm excited for the future more than finishing up all of my previous plans.

7/18-7/21

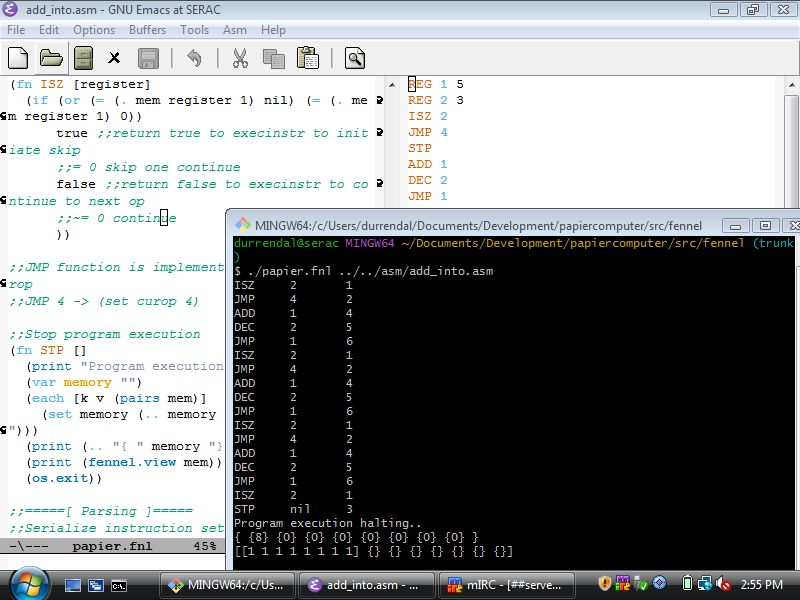

The last bit of the occ was spent tinkering a little bit with nim and fennel. It turns out that the pre-compile binary available on the fennel lang site just works flawlessly under vista. I shouldn't be surprised, fennel's static binaries are make with lua-static which shoves the whole Lua runtime into a little C binary, in the same way you'd embed lua in any other C program. But after fumbling with Go, especially given I went into this challenge assuming that "go just worked everywhere", this was a very pleasant surprise.

Because Fennel worked, I was able to dig up my implementation of the WDR paper computer I wrote a few years back during a blizzard. The paper computer is just a really stripped down stack machine assembly system, meant for teaching basic computing fundamentals to children. It is a fantastic teaching aid for the target audience, and the basics of implementing a little "virtual machine" for it is a good starting point for any curious about building their own systems, much like uxn which is more complicated by far and also a lot more interesting.

Of course, after confirming that this little toy worked in Fennel I had to rewrite it in Nim, because that's just what we're doing on this netbook. It was a good opportunity to add a little better error handling and flow control to the toy, and my son is old enough now that he showed some genuine curiosity in the how this worked component. That was the real gem here, it's not that interesting that I'd rewrite something from one language to nim, but to work through the bugs and tinker on the system, to load the little addition assembly program into the toy and have it produce a correct mathematical output. And then to take all of that and walk through each step of how the program "thought" about what it was doing as it processed the asm was a really cool experience as a technologist and a father.

I don't know that he quote got everything, but I think the next thing we're going to do is try to re-implement papier in python, ruby, or lua; whichever language he feels most comfortable with. It'll probably end up being Python honestly, but that's okay. I'll back fill what he doesn't want to do because it's neat to have these little references of the same system in many different languages.

Retrospect

Whelp, this went nothing like I planned!

I did nothing with server 2008, or any of the 100mb cisco gear. Well that's not true, I mounted it to the wall with the help of my son. And it did in fact get powered on, but that was the extent of it. I didn't expect to struggle with Vista so much initially. And then I didn't expect to actually USE Vista for so much afterwards!

If anything, this entirely week has been me mulling around this idea of portable tools, and systems. I, generally speaking, seem to be able to get by fine when I have Emacs. I can suffer through a less capable editor, but I won't be happy about it. When I do have it, I want to create things with it, and frankly Vista is not a limitation in that regards. The tools I expect to use don't always work, but some of them do. I am forever grateful for my inability to focus singularly on any one thing which has lead me to learn many languages out of simple curiosity.

I also really appreciate projects like 100 rabbits for creating interesting things like uxn and getting me curious about permacomputing and the retrocomputing community at large that keeps insisting that the difference between a usable system and junk are extremely blurred. It's not so much a necessity to have a specific type of system in hardware or software. The problem is just that too many of our tools are inflexible. I don't expect people to actively design and test software on something like Vista, but I do appreciate that the designers and maintainers of Fennel and Nim have chosen to distribute their compilers in a way that makes them backwards compatibly with Vista with minimal effort on my part.

All of that said, as I mentioned earlier, this is my last year doing OCC. It has been a delightful 5 years, but I'm ready for something new. In that vein I'm starting my own retrocomputing/permacompting/homelab focused group called LegacyLabs. I've really enjoyed the little week long challenges OCC has provided me, and a sincere thank you goes out to the hosts of the event, I hope they keep it up. For me personally though, I want a new space to better explore these ideas of sustainability, permacomputing, and computing history both old and new that I've been digging into over the last few years.

Feel free to join me if you're similarly interested, I'd love to have you along for the ride!

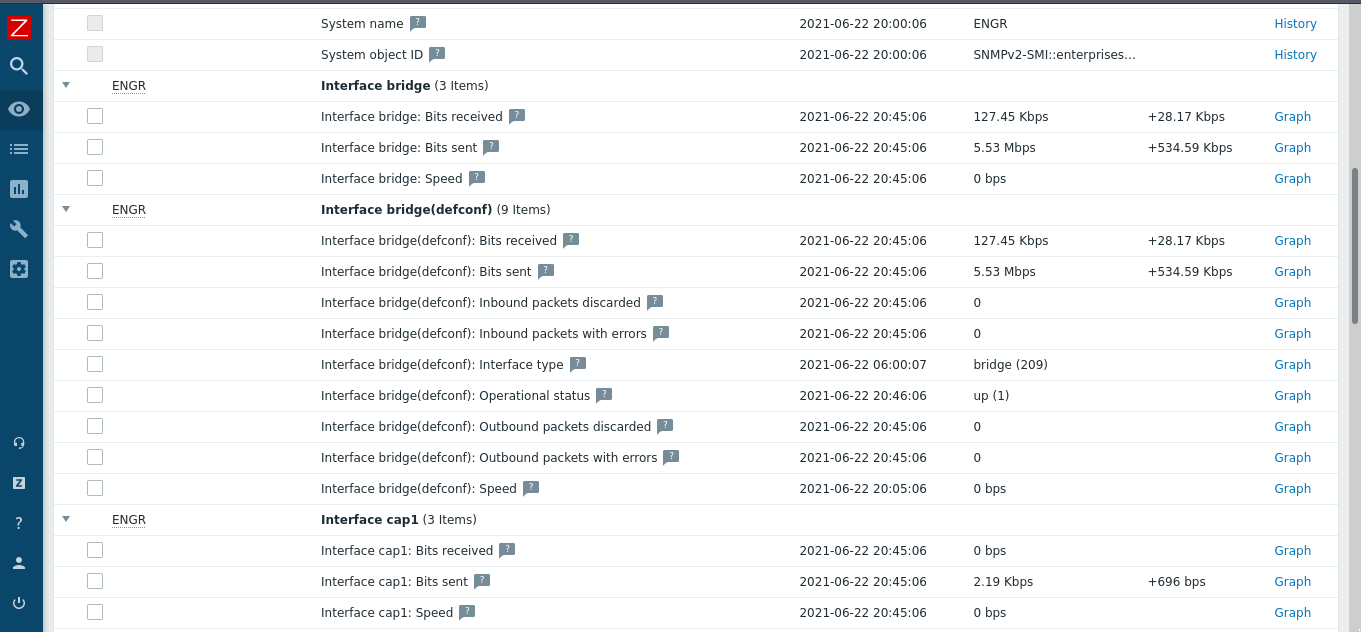

]]>That's not what we're hear to talk about though, well the Incus part yes, Broadcom no; we've all heard enough of that already. But because Broadcom acquired SaltStack, and then gutted the development team, which forced them into massively purging modules from the Salt code base in the name of future maintainability, there is this void in the ecosystem. A lot like what happened with Ansible, and Puppet, we now have a slew of modules (~750 total) that will be either abandoned or adopted and maintained by the community. Which as you can assume by this post affects me personally.

See I maintain SaltStack for Alpine, and while that hasn't been a smooth and problem free process, it's one I'm proud of and leverage a ton. I would really like to continue to use the modules I depend on, like the APK package handling & S3 file storage backend. I also want to add my own extensions, because that's like the entire selling point of SaltStack. It isn't just a state execution system, I could pick up an RMM if that's what I wanted. Rather Salt is this neat combination of remote execution, built in such a way as to be entirely modular and extensible. Everything is an extension or plugin built on top of a module which gets lazy loaded at execution time. Which means I can write custom discrete scenario specific logic into the system itself to address truly bizarre scenarios. That level of flexibility however comes with a price, and that price is technical complexity. For my desires the price I pay is having to learn how to.

Fortunately, that's right up my alley.

Despite that fact, I've been warily eyeing the project since the acquisition, hoping someone would adopt the packages I need so I wouldn't need to do anything. Always nice to benefit from someone else's hard work right? But after a yearish of waiting, it's pretty clear nobody is going to step up to the plate and do what I need. So I need to! I guess I'm well positioned to do it, I'm appropriately incentivized as a consumer of the software & I have the resources needed to materially affect the distribution I maintain Salt for. No brainer, I'll at least make sure my needs are met. The price I pay is more open source development, entirely worth it.

Just, one itty bitty tiny problem. The migration tooling, and complex testing & git commit workflows depend on python 3.10 which Alpine stopped shipping forever ago..

And that my friends is where this story ended about 6ish months ago. Barrier to entry was too high, so I gave up. I really don't like Python so I was demotivated, and it was still unclear whether or not the project would have life breathed back into it. Yet here we are, we've gotten 4 releases of salt on both the LTS and STS branches in the last month-ish, and things are feeling hopeful! Hopefully enough that I decided to take another crack at this.

Building Images with Incus

So my hypervisor of choice is Incus, it's a delightful little cli first tool for container and VM orchestration. System containers specifically versus the OCI style ones Docker & Podman provide. System containers being important here, I don't so much need immutable build once run anywhere systems, but rather an isolated environment in which I can pile on old dependencies, side loaded tooling, etc. All of it accessible from every system I use with no more than a simple incus shell saltext-copier call.

And incus makes it incredible easy to define and build custom containers! All you really need to do is have a rootfs to work with, and after that just a little bit of yaml will let you build the necessary squashfs with distrobuilder.

Here's the base definition for my Alpine 3.17 based Saltext container. Feel free to build and use it yourself.

image:

distribution: alpine

release: v3.17

architecture: x86_64

source:

downloader: rootfs-http

url: https://dl-cdn.alpinelinux.org/alpine/v3.17/releases/x86_64/alpine-minirootfs-3.17.9-x86_64.tar.gz

packages:

manager: apk

sets:

- packages:

- gcc

- python3

- py3-pip

- python3-dev

- musl-dev

- linux-headers

- dhcpcd

- openrc

- mg

- git

- bash

- openssh

- openssh-keygen

action: install

actions:

- trigger: post-packages

action: |-

#!/bin/sh

rc-update add networking boot

rc-update add dhcpcd boot

- trigger: post-packages

action: |-

#!/bin/sh

pip3 install copier --ignore-installed packaging

pip3 install copier_templates_extensions --ignore-installed packaging

- trigger: post-packages

action: |-

#!/bin/sh

# Create basic network interface config

cat > /etc/network/interfaces << EOF

auto lo

iface lo inet loopback

auto eth0

iface eth0 inet dhcp

EOF

targets:

incus: {}

Which is seriously as easy as running a single command.

sudo distrobuilder build-incus alpine-3.17.yaml

Which will produce two files, a squashfs and some incus metadata, which we can then use to import the image into our cluster. We then only need to launch a container from our built Alpine 3.17 base and it will pop up ready to go, with most of the base tooling ready to use!

incus image import incus.tar.xc rootfs.squashfs --alias alpine-3.17-saltext

incus launch alpine-3.17-saltext saltext-copier

incus config device add saltext-copier shm disk source=/dev/shm path=/dev/shm

Of course, I still needed to do some small quality of life things to this container, like configure git and add Emacs. It was only meant to be a temporary base system until we migrate away from 3.10 and to something more modern. Though chances are that the LTS release will continue to lag behind and I'll be re-creating more fleshed out custom images in the future.

Why didn't you just Cloud Init?

Great question! I would normally just use a cloud enabled incus image (images:alpine/edge/cloud) and provide a custom configuration to the container via cloud-init. I do this extensively for testing Ansible playbooks, or deploying things in my homelab. It just makes it easier to distribute things like SSH keys, etc, that you'd want configured during launch time. Sort of the expectation these days with AWS et al.

But, these images only go back as far as Alpine 3.19 in Incus. We stopped packaging Python 3.10 in v3.17.5 so that's the last version of Alpine I can pull down to get access to the right ecosystem of tools for this job.

My hope is I can maybe simplify this in the future once we're up to speed with 3.12 or 3.13 if it takes that long.

Additionally, I could have compiled Python from source and maintained a 3.10 version in personal APK repo. But that sounds like even more effort than I've already put into this, and I literally need it for like 2-3 tools that I know will be upgraded alongside Salt itself. We're going for the solution with the most bang for our buck here.

Actually migrating s3fs

All of this set the stage to finally migrate a few modules. I chose to start with s3fs for two reasons; I'm really reliant on this because I don't want to stash non-configuration data in my salt master, and it's literally a single python file. That's about as gentle an introduction to this as one could hope for. I just have to move one file, how hard could it be?

Well, after about 2 nights of effort, I can tell you that the gut reaction is to reach for saltext-copier as indicated in the name of the container. But that my friend is the hard path. It turns out that for the deprecated modules a custom migration tool was written around the copier tool which helps preserve git history and automatically updates non-compliant code.

Installing this tool is a simple uv command away, and that enables us to simply tell the migration tool the virtual name of the module we need to migrate.

uv tool install --python 3.10 git+https://github.com/salt-extensions/salt-extension-migrate

saltext-migrate s3fs

Once you kick off the migration tool it'll walk you through selecting the files you want to migrate, in this case it was literally just modules/fileserver/s3fs.py. And then begin skimming the salt git repo for history to preserve. It's actually a really neat process that materially lowers the barrier to entry. It's literally as simple as following through the prompts the copier and migration tools provide, which upon completion will drop you into a brand new structure git repo and provide you with curated next steps based on the code base you moved.

For example, this is the pre-commit linting information I got when initially attempting to pull out the apk package module from the larger pkg virtual environment. All super easy and actionable and small things to change.

Pre-commit is failing. Please fix all (2) failing hooks

✗ Failing hook (1): Check CLI examples on execution modules

- hook id: check-cli-examples

- exit code: 1

The function 'purge' on 'src/saltext/pkg/modules/apkpkg.py' does not have a 'CLI Example:' in it's docstring

✗ Failing hook (2): Lint Source Code

- hook id: nox

- exit code: 1

nox > Running session lint-code-pre-commit

nox > python -m pip install --progress-bar=off wheel

nox > python -m pip install '.[lint,tests]'

nox > pylint --rcfile=.pylintrc --disable=I src/saltext/pkg/modules/apkpkg.py

************* Module pkg.modules.apkpkg

src/saltext/pkg/modules/apkpkg.py:141:7: R1729: Use a generator instead 'any(salt.utils.data.is_true(kwargs.get(x)) for x in ('removed', 'purge_desired'))' (use-a-generator)

src/saltext/pkg/modules/apkpkg.py:207:4: C0206: Consider iterating with .items() (consider-using-dict-items)

src/saltext/pkg/modules/apkpkg.py:486:4: R1720: Unnecessary "else" after "raise", remove the "else" and de-indent the code inside it (no-else-raise)

src/saltext/pkg/modules/apkpkg.py:550:12: R1724: Unnecessary "else" after "continue", remove the "else" and de-indent the code inside it (no-else-continue)

------------------------------------------------------------------

Your code has been rated at 9.82/10 (previous run: 9.82/10, +0.00)

nox > Command pylint --rcfile=.pylintrc --disable=I src/saltext/pkg/modules/apkpkg.py failed with exit code 24

nox > Session lint-code-pre-commit failed.

So now what?

Well with the tooling in the right place, and an issue opened to officially adopt s3fs, I should probably talk to someone about how best to migrate the apk pkg module since it's a small subset of a much large set of extensions. I might accidentally break something if I guess wrong.

I also need to figure out how best to turn these extensions into Alpine packages and contribute them to Aports alongside my salt packages. My end goal will be to have a nice simple salt docker container where I can just do something like this:

FROM registry.alpinelinux.org/img/alpine:edge

RUN <<EOF

apk add --no-cache salt salt-master saltext-s3fs saltext-apk

rm -rf /var/cache/apk/*

rm -rf /tmp/*

EOF

ENTRYPOINT ["salt-master"]

If any of this seems interesting and you want to learn more about the extension system in Salt, I'm finding that Extending Salt by Joseph Hall to be a phenomenal resource. I'm chewing through it currently to get an even better grasp on how Salt's internals work while I work through all of this.

]]>The small business world is such a weird mesh of all of these questionably old technologies keeping core business operations going. We technologists all realize this is ridiculously scary in a real sense, but seriously, when is the last time you could say your software was so well built that it has been used for 10, 20, 30, hell 40 years straight! It is wild!

All of that vintage goodness has me thinking about what I should do for OCC this year. See I've come into possession of some nice enterprise 100mb Cisco networking, enough for a small branch network in fact. All of it is of course battle tested and weather worn, pulled straight from production a decade ago. Well, it should have been pulled a decade ago, but that's another story.

In conjunction with my stack of 100mb networking gear I've got access to a stack of Syteline ERP documentation and installation discs, plus the same for the supporting 4GL Progress database software. Some period appropriate Windows server & workstation discs as well, think Server 2003 and XP. Oh and a full set of physical reference manuals for Syteline as well!

So here's my thinking, I have enough random gear to setup a mini "business" network, complete with ERP system, domain control system, the works! Lots of crazy legacy infrastructure that I have way too much experience using professionally, but never for fun. It's a weird idea I know, but last year I just used the same old Alpine setup as the year prior, and this idea is a complete rejection of that in a way. Plus what else am I supposed to do with all these salvaged systems? They'd end up e-waste otherwise, and maybe this will be a good excuse to go through some CCNA materials and figure out if I want to spend the effort and capital to certify that knowledge.

This idea has been lovingly named

Project Half Duplex

(Yes I know all the gear I displayed is woefully out of date and likely not sufficient for this. I also have eve-ng in my homelab which is where all the real studying happens. But it will be fun to build a little 100mb LAN for all my weird little OCC infra to live in.)

Which is a whole other train of thought actually. It is becoming increasingly easy for someone to sound like they know a lot about everything. The age of LLM based AGI has moved the bar lower when it comes to sounding knowledgeable. One poorly worded question and you get paragraphs of talking material, bullet points to summarize, and you can converse to get deeper and deeper. Which naturally means that that thin veneer of knowledge is just as easy to break through, or run up against insurmountable obstacles. Which further reiterates the value of actual expertise and validated credentials. The people who are willing to put the effort into actually learning something will be in high demand when the technical debt machine that is AGI collapses in on itself.

It will be fun to watch.

Anyways, those are my current OCC musings. It's on the way, but not quite here yet (looking like it'll be around the week of 7/7 or 7/14, and that always has me super excited. Usually because I want to gather up some potential new gear, but I've got quite the stack of salvaged hardware to run through this year already!

Side Note

At the beginning of this year I told myself that I would try and publish a blog post every month, at least one, and it looks like I managed to hit January, then missed February, but followed it up with two in March! I was on a roll, and then I totally wasn't. Now the year is half over and I need to catch up. So expect several updates in the near future! I've been busy making material changes to the homelab and my little stack of hand crafted software, so I have plenty to talk about, just need to make the time to get it all down!

]]>But what did you do for the Hackathon?

Well, I personally painstakingly wrote ~250 lines of C and desperately tried to turn myself into a graphic designer before Mio kindly volunteered to help. And the result of a weeks worth of 2am hacking sessions and me frantically trying to remember how to do any of this stuff resulted in Pinout!. This legitimately only looks as good as it does thanks to Mio's contributions, all of the art is of their creation, and I am beyond thrilled with the results!

Seriously, you can take a look at my first three attempts at this application to get an idea of just how bad this would have looked without Mio's help. My talents firmly lie inside of the realms of operating cameras when it comes to art, and my brain has an immense amount of patience for wrangling painful things like C, but seems to revolt when faced with creating something that isn't code.

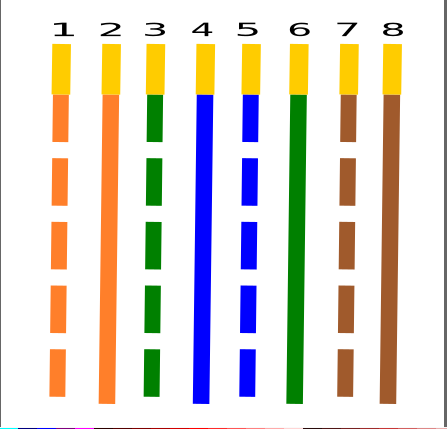

This was my first crack at Pinout, I threw this together while I was setting up a new office network. I couldn't remember the Pinout for RJ45B in the moment, and had to tip cables 30ft in the ceiling so that I could affix APs to an I-Beam. The safest reference I could think of was on my Pebble.

But of course that poorly rendered, totally in accurate version wasn't acceptable and I eventually stopped using it. I thought that perhaps I could be lazy since I'm not particularly artistic and I could just use a cribbed image I found online. I downscaled and dithered a pinout and threw it on the pebble! It worked! But it wasn't exactly usable.

Now Mio has recommended Inkscape to me in the past for creating SVGs, and I gave it my best effort, but after struggling for a few hours to come up with this bland, color in accurate, incorrectly scaled image. And realizing after loading it that I can created an icon and not a serviceable Pinout image, I was at a bit of an impasse and switched over to working on the actual code. Maybe I could figure out a dithering solution or vector art using PDC. I wasn't really sure, but I didn't think continuing with Inkscape was a conducive use of my time.

Admittedly, the last attempt was sort of on the right track, I think if I had had a lot more time and was just adding new cabling diagrams to the app that I could have gotten something acceptable together. Mio was kind enough to provide SVGs for the cable diagrams in Pinout, so I have a legitimately excellent starting point for the next time I try this, and I will definitely be releasing another version with more diagrams in it soon! I personally want to add RJ45 diagrams for rollover cables like you'd use for Cisco console cables, and one way passive cables. Those also open the doors to potentially adding RJ11 diagrams or maybe even serial pinouts! I think though that RJ45 is my primary use case and I just want Pinout to be as useful for as many Pebblers as is possible. It would please me to no end to know other people are using it!

So that C..ommon Lisp code?

Okay lets be real, I procrastinated until the last couple of days on this. I had ideas! But I spent the first 4 days writing Common Lisp libraries and trying to teach myself Inkscape. I think the C scared me, and my reaction when faced with "learn C" has always been "okay, I'll learn C...ommon Lisp". It's all of the ((())), the allure of a good list is just too much. Now there's nothing wrong with this process, and initially I thought it was necessary! The old Pebble tools are written in Python2, and while there has been some work done to update them to Python3 that's not really my style (though I will totally use them once the new Pebble's are release, package them for Alpine even!). There's some awesome work being done to re-implement the entire Pebble runtime in Rust so that it can run on the Playdate, which is wicked cool and the developer, Heiko Behrens, even released a pre-built binary of his PDC tool for the hackathon, so I knew from the get go that long term there's some solid work being done to re-implement these old tools. But if you know me, you know that I don't particularly care for Rust. Nothing wrong with memory safety, but needing hundreds of mbs of libraries to compile anything is ridiculous.

Wait, back up, PDC? Oh yeah, this is the fun stuff, if you thought Pinout was cool then lets take a detour into obscure binary formats, because that is precisely what PDC is! So the Pebble smartwatch can render icons and images via vector graphics. Animations occur throughout the Pebble smartwatch, like if you delete an item from your timeline you'll see a skull that expands and bursts. If you have an alarm you get a little clock that bounces up and down. Dismissing something results in a swoosh message disappearing, or clearing your notification runs them through a little animated shredder. This functionality is wicked cool! And unfortunately was largely lost due to the tooling being just old, the PDC format being undocumented publicly, and there just not being enough motivation to revive it.

Now for Pinout specifically I initially thought I'd do an application like the cards demo application where users could flip through cable diagrams instead of implementing a menu. This addressed two things for me 1) menu logic and 2) I thought I could do the diagrams as PDC sequences so that the pins would pop up one after another on the screen.

Ultimately despite documenting the PDC binary format thoroughly and even developing a parser for existent PDC files and the Pebble color space, I ruled that this was wildly out of scope for the limited time I had to make the app, and after fixating on it for 4 days straight only to be faced with the fact that I had nothing to show for the hackathon I made a hard pivot back into C land. None of this was time wasted in my mind, this is still a viable 2.0 option for Pinout that would be wicked cool to implement! And I feel I have enough of a grasp on the PDC binary format to potentially make an SVG -> PDC conversion tool, and eventually that may lead to an animation sequencing tool! I don't expect it to be officially adopted by Rebble or the Pebble folks frankly, but I like building my own weird tools so I don't care about all that, I'm hear to learn.

And I think this snippet from cl-pdc really emphasizes what all of that learning was about. Being able to describe what a PDC binary comprises of is meaningful progress in being able to translate it to either a different format (png, svg) or stitch them together into sequenced animations!

* (pdc:desc (pdc:parse "../ref/Pebble_50x50_Heavy_snow.pdc"))

PDC Image (v1): 50x50 with 14 commands

1. Path: [fill color:255; stroke color:192; stroke width:2] open [(9, 34) (9, 30) ]

2. Path: [fill color:255; stroke color:192; stroke width:2] open [(7, 32) (11, 32) ]

3. Path: [fill color:255; stroke color:192; stroke width:2] open [(26, 32) (30, 32) ]

4. Path: [fill color:255; stroke color:192; stroke width:2] open [(28, 34) (28, 30) ]

5. Path: [fill color:255; stroke color:192; stroke width:2] open [(26, 45) (30, 45) ]

6. Path: [fill color:255; stroke color:192; stroke width:2] open [(28, 47) (28, 43) ]

7. Path: [fill color:255; stroke color:192; stroke width:2] open [(17, 38) (21, 38) ]

8. Path: [fill color:255; stroke color:192; stroke width:2] open [(19, 40) (19, 36) ]

9. Path: [fill color:255; stroke color:192; stroke width:2] open [(7, 45) (11, 45) ]

10. Path: [fill color:255; stroke color:192; stroke width:2] open [(9, 47) (9, 43) ]

11. Path: [fill color:255; stroke color:192; stroke width:2] open [(35, 38) (39, 38) ]

12. Path: [fill color:255; stroke color:192; stroke width:2] open [(37, 40) (37, 36) ]

13. Path: [fill color:255; stroke color:192; stroke width:3] closed [(42, 25) (46, 21) (46, 16) (42, 12) (31, 12) (27, 8) (16, 8) (11, 13) (7, 13) (3, 17) (3, 21) (7, 25) ]

14. Path: [fill color:0; stroke color:192; stroke width:2] open [(12, 14) (18, 14) (21, 17) ]

And it's even cooler to see the same PDC file consumed into a struct that we could in theory pass around to various transformation functions. So close!!

* (pdc:parse "../ref/Pebble_50x50_Heavy_snow.pdc")

#S(PDC::PDC-IMAGE

:VERSION 1

:WIDTH 50

:HEIGHT 50

:COMMANDS (#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 9 :Y 34) #S(PDC::POINT :X 9 :Y 30))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 7 :Y 32) #S(PDC::POINT :X 11 :Y 32))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 26 :Y 32)

#S(PDC::POINT :X 30 :Y 32))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 28 :Y 34)

#S(PDC::POINT :X 28 :Y 30))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 26 :Y 45)

#S(PDC::POINT :X 30 :Y 45))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 28 :Y 47)

#S(PDC::POINT :X 28 :Y 43))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 17 :Y 38)

#S(PDC::POINT :X 21 :Y 38))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 19 :Y 40)

#S(PDC::POINT :X 19 :Y 36))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 7 :Y 45) #S(PDC::POINT :X 11 :Y 45))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 9 :Y 47) #S(PDC::POINT :X 9 :Y 43))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 35 :Y 38)

#S(PDC::POINT :X 39 :Y 38))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 37 :Y 40)

#S(PDC::POINT :X 37 :Y 36))

:OPEN-PATH T

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 3

:FILL-COLOR 255

:POINTS (#S(PDC::POINT :X 42 :Y 25) #S(PDC::POINT :X 46 :Y 21)

#S(PDC::POINT :X 46 :Y 16) #S(PDC::POINT :X 42 :Y 12)

#S(PDC::POINT :X 31 :Y 12) #S(PDC::POINT :X 27 :Y 8)

#S(PDC::POINT :X 16 :Y 8) #S(PDC::POINT :X 11 :Y 13)

#S(PDC::POINT :X 7 :Y 13) #S(PDC::POINT :X 3 :Y 17)

#S(PDC::POINT :X 3 :Y 21) #S(PDC::POINT :X 7 :Y 25))

:OPEN-PATH NIL

:RADIUS NIL)

#S(PDC::COMMAND

:TYPE 1

:STROKE-COLOR 192

:STROKE-WIDTH 2

:FILL-COLOR 0

:POINTS (#S(PDC::POINT :X 12 :Y 14) #S(PDC::POINT :X 18 :Y 14)

#S(PDC::POINT :X 21 :Y 17))

:OPEN-PATH T

:RADIUS NIL)))

So that C code?

So now that we've demoed the thing I think I'm good at, lets look at what I think I'm not that good at. Pinout is an amalgamation of several example applications, and some code stolen from the other two watch faces I had previously published. That's a bit of a recurring theme for me, and probably most people. If I figure out a way to do something I re-implement it elsewhere because that just makes sense. Maybe these aren't the best ways to do any of this, but that's I think OK.

Pinout has three key components, a menu that allows you to select a diagram which then displays an image of the selected diagram, a battery widget, and a clock widget. Of the three the only one I had previously implemented was the clock widget.

Time Handling

This code was lifted straight from my emacs watch face. And it's really simplistic, I think it's in fact from one of the original watch face tutorials that Pebble provided. The only thing unique about it is that there's a check to ensure that a text layer (s_time_layer) exists before attempting to render to the screen. Since Pinout transitions between several different screens we need to make sure we don't attempt to render either the battery or time widget while transitioning.

//Update time handler

static void update_time() {

time_t temp = time(NULL);

struct tm *tick_time = localtime(&temp);

static char s_buffer[8];

// convert time to string, and update text

strftime(s_buffer, sizeof(s_buffer), clock_is_24h_style() ?

"%H:%M" : "%I:%M", tick_time);

// only updat the text if the layer exists, which won't happen until the menu item is selected

if (s_time_layer) {

text_layer_set_text(s_time_layer, s_buffer);

}

}

//Latch tick to update function

static void tick_handler(struct tm *tick_time, TimeUnits units_changed) {

update_time();

}

Inside of our image layer renderer we create a text layer in which we insert the current time. Note that we call update_time directly so that when the image is rendered we immediately have the current time rendered at the top of the application.

// Image window callbacks

static void image_window_load(Window *window) {

Layer *window_layer = window_get_root_layer(window);

GRect bounds = layer_get_bounds(window_layer);

//Image handling code removed for brevity.

//Allocate Time Layer

s_time_layer = text_layer_create(GRect(110, 0, 30, 20));

text_layer_set_text_color(s_time_layer, GColorBlack);

text_layer_set_background_color(s_time_layer, GColorLightGray);

layer_add_child(window_layer, text_layer_get_layer(s_time_layer));

//Update handler

update_time();

}

While the application is running we subscribe to the tick timer service, so that each time the time ticks up we get a call back to update the time in the application.

static void init(void) {

//Subscribe to timer/battery tick

tick_timer_service_subscribe(MINUTE_UNIT, tick_handler);

All of this works because once the application is launched its primary function is to initialize and then query those tick handlers then wait for user input.

int main(void) {

init();

app_event_loop();

deinit();

}

Battery Handling

You're probably not surprised that the battery widget works almost exactly the same as the time widget. We define an update function that checks the current state, and only renders if our containing layer exists.

// Current battery level

static int s_battery_level;

// record battery level on state change

static void battery_callback(BatteryChargeState state) {

s_battery_level = state.charge_percent;

static char s_buffer[8];

// convert battery state to string, and update text

snprintf(s_buffer, sizeof(s_buffer), "%d%%", s_battery_level);

// only update the text if the layer exists, which won't happen until the menu item is selected

if (s_battery_layer) {

text_layer_set_text(s_battery_layer, s_buffer);

layer_mark_dirty(text_layer_get_layer(s_battery_layer));

}

}

And we described another text layer inside of our image renderer with an update callback.

static void image_window_load(Window *window) {

Layer *window_layer = window_get_root_layer(window);

GRect bounds = layer_get_bounds(window_layer);

//Image handling code removed for brevity.

//Time handling code removed for brevity.

//Allocate Battery Layer

s_battery_layer = text_layer_create(GRect(5, 0, 30, 20));

text_layer_set_text_color(s_battery_layer, GColorBlack);

text_layer_set_background_color(s_battery_layer, GColorLightGray);

layer_add_child(window_layer, text_layer_get_layer(s_battery_layer));

//Update battery handler

battery_callback(battery_state_service_peek());

}

And the subscribe to the tick service in init! Almost exactly the same!

static void init(void) {

//Subscribe to timer/battery tick

tick_timer_service_subscribe(MINUTE_UNIT, tick_handler);

battery_state_service_subscribe(battery_callback);

// Get initial battery state

battery_callback(battery_state_service_peek());

It's really nice to see consistency like this. The Pebble C SDK is really well designed and documented with tons of examples from when Pebble was still in business. They really were something super unique, and it still shows today.

Menus & Image Rendering

Now image rendering and menu handling was new to me, but I was able to find this tutorial that helped immensely. Once I had my arms around the idea of how I thought the application might work it ended up being incredibly simple.

We define our menu as a simple enum and define the total length of the menu statically. Then we setup an array of IDs that are used to reference the PNG images for each diagram.

#define NUM_MENU_ITEMS 4

typedef enum {

MENU_ITEM_RJ45A,

MENU_ITEM_RJ45B,

MENU_ITEM_RJ45A_CROSSOVER,

MENU_ITEM_RJ45B_CROSSOVER

} MenuItemIndex;

// Images for each pinout

static GBitmap *s_pinout_images[NUM_MENU_ITEMS];

static uint32_t s_resource_ids[NUM_MENU_ITEMS] = {

RESOURCE_ID_RJ45A,

RESOURCE_ID_RJ45B,

RESOURCE_ID_RJ45A_CROSSOVER,

RESOURCE_ID_RJ45B_CROSSOVER

};

We keep track of where we are in the application by re-defining the currently displayed image upon selection. And then the menu rendering is as simple as iterating over the total length of of menu, and carving out a section of the screen for as many entries as will fit.

// Currently displayed image

static GBitmap *s_current_image;

// Menu callbacks

static uint16_t menu_get_num_sections_callback(MenuLayer *menu_layer, void *data) {

return 1; // We're only using a single menu layer, but maybe down the line the image will be a sub menu to textual information about the diagram.

}

// Return the number of menu rows at point