Just like many (most?) of you, I am using AI every day. I like it for some things, like giving me insights on topics and explore new ideas or create learning plans. I've been way less successful for other things (well, most things actually).

Heck, I'm even currently leading AI adoption for engineers in my current job, so let's say I'm pretty invested.

In this article though, I want to discuss a few of the ways AI has drastically impacted me and my life so far.

It's generally harder to find quality technical content online

Because AI is able to generate tons of content in minimal time, many content farms out there generate massive amounts of data and flood the market with it. Some sources even report that 3/4 of websites online already contain generated content.

It makes it increasingly difficult to use search engines to find quality technical information.

This is a not a new phenomenon in DevRel, it's well known that many of the large actors out there externalise the "lower quality / more generally" content to third parties. But with the arrival of LLMs, it has scaled up tremendously.

I simply find myself relying less and less on "insert your search engine" to get proper technical information. Instead, I directly go to specialised places like Discord, Reddit or simply the documentation.

This is also true for smaller scale content publishers and personal blogs. For as little as 50$ a month you can have a completely automated blog generating content for itself, including social media promotion.

The signal / ratio on social media has become abysmal

Social Media has always been a double edged sword for me. Even 10 years ago, unless you'd curate your timeline like a madman, you'd have to sift through a lot of irrelevant content to find a few gems. But those gems were worth it. As a long time Kotlin developer, I've found most of "my people" online first by sharing and reacting to Kotlin content. Today, those gems are still there but the amount of low quality / irrelevant content has spiked up to incredible amounts.

I feel like it's more blatant on Youtube, play around with shorts (🤢) for 30 minutes and your timeline will be full of AI generated videos that voice over successful reddit posts from completely automated accounts. Worse, the content is very often plain wrong.

Not only that, AI is also being pushed on people using social media. Insert a few words, and LinkedIn will transform those into a full fledged 500 words post babbling about said words.

Everything looks the same

Because of how easy it is to generate content, as well as how aggressively it is pushed everywhere (Gmail, Outlook, LinkedIn, ..., basically everywhere!) the share of people using it is increasingly large.

This leads to posts that basically all look the same. Same formatting, same verbosity, same use of emojis, bullet lists everywhere with separated sections AND SAME LIFELESS TONE... The online world is really losing its flavour. Essentially, AI generated content has no soul.

It also means that shallower content will tend to be posted more often, because of how cheap it is to generate posts. Which increases the signal/noise ratio issue I mentioned above.

I lost my (online) community

One can find many semi automated accounts posting engagement traps to and this simply pushes the more technical audience away.

The social media world has also become a lot more fragmented the last few years. First because of the Twitter acquisition (leading to many people being on different platforms) but also lately because the ever more aggressive chase for data to feed the almighty models. Add to this that by being on a platform, you have to coexist with some outrageously voluntarily biased models and the use of social media becomes very unattractive.

Because of all these reasons, a large share of the people I used to follow are now using social media for "push only", meaning that they use cross-posters and do not interact with their replies. Others have left the game altogether.

And given that discussions (for me) are where connection gets created, I simply find it harder to find inspiring people (whose behaviour or work give me energy) online.

We've become so much more used to stealing other people's content

One of the things that bothers me the most is that we all know that those large models are only possible because we have been essentially been stealing people's data at an unprecedented scale.

In a world where some of the most used Open-Source projects struggle to survive for lack of sponsorship, all of us techies are using AI assistants that have been fed the entirety of GitHub. Anthropic has just lost a lawsuit because they used books to train their models without authorisation. The same is true for images, music, video generation, . . . you name it.

We developers are at the same time lamenting for the lack of sponsoring from the big corps while using stolen data on a daily basis.

It all feels unsustainable

I am no expert in economics, so please don't take my word for it but given the already insane amount of revenue generated by AI the past years and the sheer size of the losses that those companies are still making, I find it extremely hard to believe that the current pricing models of AI tooling is sustainable (and I'm not the only one).

A few years back, Netflix cost 12 dollars. The convenience and the size of the catalog made me a very happy user. Eight years down the line, it is now close to 25 dollars (and climbing) and I have to pay 4 similar services for the same catalog.

What's even more, the insane levels of investments loops being made make me genuinely worried the whole industry ends up crashing hard.

It's like driving a Ferrari at 250km/h on the highway : It feels good and exhilarating, but it's genuinely a bit hard to not have a large part of your brain wondering what will happen when a mistake happens....

It's easy to be lazy

I use AI everyday, and it can really be amazing. A pet-project that used to take a week now takes 1/2 day. It's also really great at helping me dive into an existing project or domain quickly. Or update dependencies for older maintenance projects. It's also a rather good rubber ducky (even if it tends to agree with you too much for my taste) and it's a useful creative companion at giving project or gift ideas.

What I find difficult though is how easy it can be to fall into the trap of "just do it for me please".

- This blog post you have to write ? "Do it and I'll do some changes".

- This slide deck that is expected of you "Oh, I'll just need a couple hours and AI will do most of the work for me".

- Darn I'm stuck on Advent of Code, Claude, do your magic.

It takes effort to stay sharp. And now, we constantly have a backup plan next to us to do our work. It's easier to just let it slip and requires (me) more discipline and motivation to stay relevant.

I am sometimes really glad I am not a student today, because I feel GenAI would have done me a lot of harm back then. The grind is useful to get past a certain level of understanding.

It was always possible, you simply needed to copy the work of someone else or use a mechanical turk platforms. But now it's always available on all your devices, for free. And it turns out, research seems to agree.

Hiring online has become harder

A few months back, I was hiring for a couple technical positions. I made the mistake to post the openings on LinkedIn.

LinkedIn has always been a bit of a hit and miss for hiring, but I've had some success in the past finding very relevant people way out of my network (Shoutout to my former Adyen team!!). Things seem to be different since the GPT era though....

Within one hour, I had received over 300 responses. Most clearly generated and not a single one relevant to the position. I closed the gates as the requests were still pouring in and decided to use my local network again.

I am unsure exactly what happens here, having not used the platform to search for a job myself but I believe that many people use tools like n8n to build automated pipelines those days generating applications responses on the fly .

I have to admit I don't quite understand this to be honest, I've always been more of the "apply to 2 jobs but go 300% for it" kind of person. What are your chances to get an interview based on a fully generated application? The current market is quite overcrowded though, so I guess I can still understand why people do this...

And so what now?

This article ended up being much longer than I expected. I don't intend to do any low key AI bashing here. I am using AI everyday myself and am genuinely excited about the possibilities that technology can bring us in the future. I have Claude writing a new pet-project for me literally as I am writing this.

I'll write more about it soon but as a community person, I have never seen such engagement from a community than with AI tooling. There is deep, genuine interest; people experiment a lot and fast and this creates a lot of positive excitement. Optimists and Skeptics alike, close to everyone feels involved.

That being said, I also see that my behaviour has a changed a lot the past couple years :

- I mostly lost interest in social media, for the reasons mentioned above. I am almost push only now and it has become infrequent.

- I am slowly migrating out of GitHub and my pet projects have become private by default.

- I am much more skeptical of any news I see and verify almost everything I read (which is not a bad thing in itself).

- I am always "on alert" for anything I see / read / hear to see if it is generated content.

- Most visibly AI generated content has become a red flag for me and a sign of "low effort". I don't dismiss it but I do alter my expectations about the content / person / company in front of me based on this.

Most interestingly though, I see renewed interest in smaller, gated communities. Physical Meetups, local groups, closed content publications, indie writing, ...

I actually feel like we're coming back to where it all started for me 15 years ago. Locally, with people I know, making genuine connections. AI can't replace that, and in some ways, it might be making me more human again...

To be continued!

]]>You should know by now that I'm a pretty big fan of Coolify. I use it to manage all of my applications.

One of the things I love the most about Coolify is how it automagically handles DNS for you. Write where you want your app to be hosted and boom, it'll set it up for you. Dont, and it will generate one for you. You can read more about it here.

Today, I faced a new use case for the first time : I had a Claude artifact that was hosted somewhere already, but wanted one of my subdomains to redirect to it.

I know I could do it directly via my registrar's DNS interface, but because all of my apps are handled via Coolify I wanted to keep it as such if possible.

The issue is, to access this domain configuration setting from Coolify, you have to create a resource (git repo, Dockerfile, . . . ).

Turns out there is another way : the dynamic proxy configuration.

My use case is as such : I want to have https://blogprocessor.lengrand.quest/ redirect to https://claude.ai/public/artifacts/b21d3e9c-b05f-4fe3-923e-63e1144cfff8. To do this :

- Navigate to Servers > [YourServer] > Proxy > Dynamic Configuration in your Coolify dashboard.

- Create a new YAML file (in my case

claude.yaml) with the following structure:

http:

routers:

blogprocessor-redirect:

rule: Host(`blogprocessor.lengrand.quest`)

service: blogprocessor-redirect-service

middlewares:

- blogprocessor-redirect-middleware

tls:

certResolver: letsencrypt

middlewares:

blogprocessor-redirect-middleware:

redirectRegex:

regex: '^https://blogprocessor\.lengrand\.quest/?(.*)'

replacement: 'https://claude.ai/public/artifacts/b21d3e9c-b05f-4fe3-923e-63e1144cfff8'

permanent: true

services:

blogprocessor-redirect-service:

loadBalancer:

servers:

- url: 'http://127.0.0.1:9999'(Note : I am using the traefik proxy here, but you can use Caddy too)

The actual content of the configuration is pretty simple :

- A Middleware that describes what kind of redirection I want

- A router to be able to handle tls

- A (dummy) service because somehow the router requires a service and a middleware

I have tried to get rid of the dummy service but couldn't find a way to get the TLS handling without it. The good news however is that I can keep using that file as I create more Claude artifacts without having to create much more configuration.

If you find a way, please let me know!

I want to thank Cinzya, one of the moderators of the Coolify server for pointing me in the right direction, she's been super helpful!

]]>Earlier this year, I've bought my very first car. (Managed to spend almot 20 years of my adult life without owning one, isn't that a bit impressive? 😊).

The first thing I did was to buy a dashcam. I went for a rather simple model, but with both a front and back camera. Being a rather new (active) driver, I wanted to make sure that everything that could happen was recorded just in case.

The dashcam itself is great, and it turns out there isn't more than a couple days passing by without an incident that I'd like to record. People tend to be very dangerous on the road, and I'm honestly surprised how we can give a ton of steel to everyone and send them on their merry way to the same locations every day, on a schedule without causing more mayhem.

One of the limitations of that dashcam is that there is no easy way to "mark" moments of interest to extract them later. Just like most dash cams, the camera simply has a SD Card inside it and continuously records the road on a rolling stream.

My first thought was to shout "RECORD" every time I saw something I wanted to extract later and post process the video files searching for those moments. That gave me the opportunity to play with OpenAI's whisper. But it turns out that that microphone from the dash cam is of mediocre quality, and I also have a bad tendency to play music extremely loud when I drive 😅. It's also extremely power and resource consuming because it requires transcripting the entirety of the video files before searching for the keyword occurrences.

I gave it another thought and realized that I could quickly build a very efficient poor man's version of the system I wanted using Apple Shortcuts.

This is how my current process looks like :

- Create variables with the current data and location

- Create a string of text with both variables concatenated

- Append this text to an existing note.

We constantly talk about AI those days, and I realised that I barely use Siri except to ask it to set an alarm or a timer while cooking. Turns out the shortcut application is very powerful, being able to open and close applications, run loops and much, much more. It even has a sort of scripting language.

The last thing left to do is to find a good name for the shortcut. I called it "Record incident" so it's generic enough, while still very easily understandable by Siri on top of music.

Because of the location variable (which I added as a bonus), Apple will ask for additional permissions the first time shortcut is being run but that's only a good thing in my opinion.

That's it for now! I now have a special notes on my apple devices that lists all of the moments of interests that I can easily trigger by voice and without being dangerous while driving.

The next step is to write a short script that will parse those values and create small clips from the video stream around those moments.

Always fun to discover new capabilities, and find cheap and efficient ways to achieve my goals 😊.

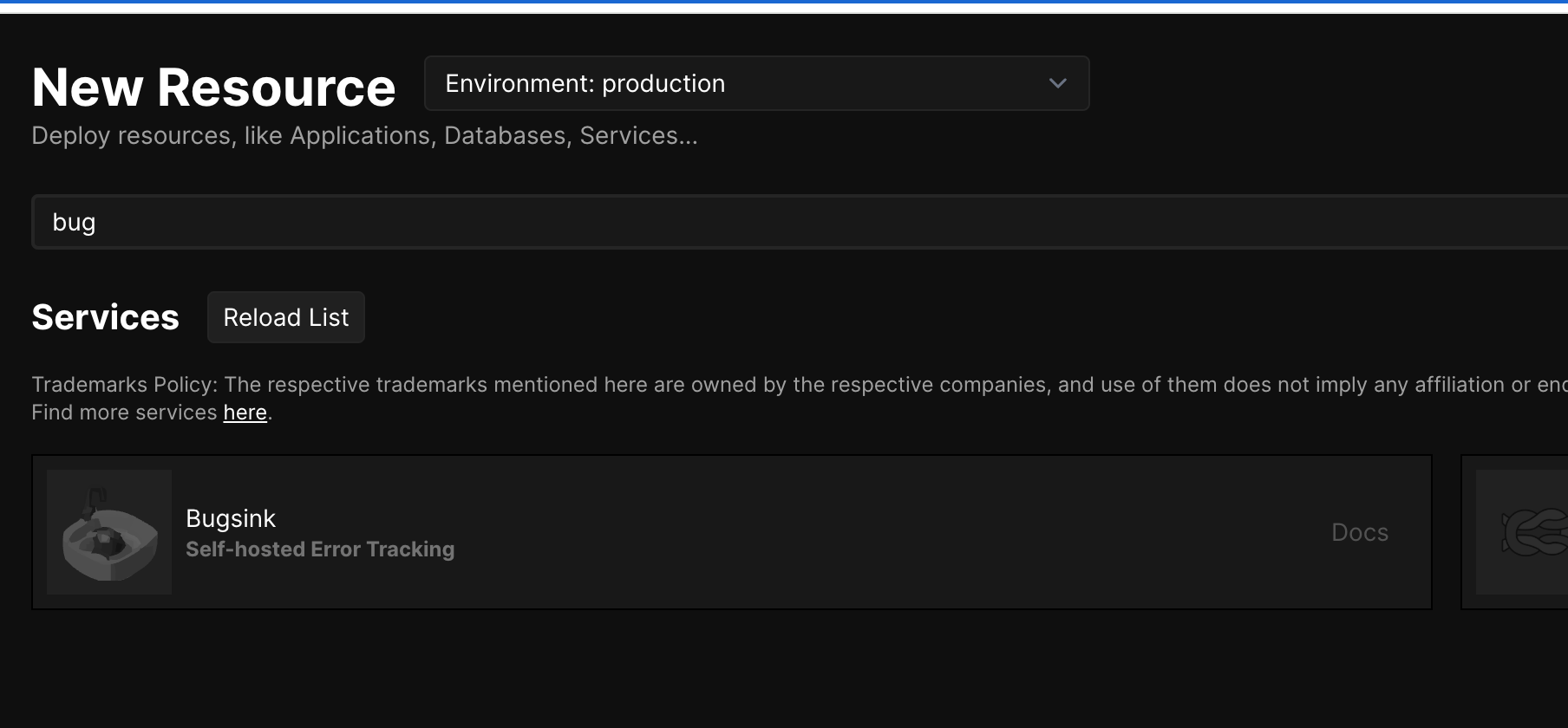

]]>TL;DR : There is a bug in Coolify currently preventing to use the default Bugsink deployment template. This post describes a method to deploy via Docker Compose while waiting for a fix.

Some context

As some of you may know already, I've been moving off the public cloud the past year and started deploying my applications on my own server instead using Coolify. I do this for many reasons, one of them being that I want to support indie projects more and I really like Andras' open approach to building his company.

Since last summer, I've been following Klaas' journey into building Bugsink; a simple but effective error tracker. (Disclaimer : I have been doing some advising work for Klaas and am biased here 😊, but my opinion is 100% honest here).

The main deployment template

Coolify has a module system that you can use to install services from templates. I have learnt about it a bit, since I contributed the first version of the Bugsink template to it 😊. The template uses a Docker Compose deployment in the background.

Pretty handy when working on a new project. Pick service, click, use.

The bugsink blog actually has coolify deployment page, but the problem is that the latest version of Coolify has a issue where some environment variables can't get updated properly after the original deployment. This makes it impossible to deploy Bugsink properly in my case.

While waiting for the bug to be fixed, I dived deeper into the Docker Compose file and ran my own version.

Current deployment

I started from the Bugsink Docker compose documentation page, but I ran into multiple issues specific with Coolify with the setup :

- I needed to setup port redirection because I didn't want my DSN address to contain any port (meaning I had to use Coolify's magic variables)

- I wanted HTTPS to work as expected

- I also wanted my

BASE_URLto be properly recognized by bugsink, while it is refusing any request that is not from an authorized domain.

This is the Docker Compose file that I currently use :

services:

mysql:

image: 'mysql:latest'

restart: unless-stopped

environment:

- 'MYSQL_ROOT_PASSWORD=${SERVICE_PASSWORD_ROOT}'

- 'MYSQL_DATABASE=${MYSQL_DATABASE:-bugsink}'

- 'MYSQL_USER=${SERVICE_USER_BUGSINK}'

- 'MYSQL_PASSWORD=${SERVICE_PASSWORD_BUGSINK}'

volumes:

- 'my-datavolume:/var/lib/mysql'

healthcheck:

test:

- CMD

- mysqladmin

- ping

- '-h'

- 127.0.0.1

interval: 5s

timeout: 20s

retries: 10

web:

image: bugsink/bugsink

restart: unless-stopped

environment:

- SECRET_KEY=$SERVICE_PASSWORD_64_BUGSINK

- 'CREATE_SUPERUSER=admin:$SERVICE_PASSWORD_BUGSINK'

- SERVICE_FQDN_BUGSINK_8000

- 'DATABASE_URL=mysql://${SERVICE_USER_BUGSINK}:$SERVICE_PASSWORD_BUGSINK@mysql:3306/${MYSQL_DATABASE:-bugsink}'

- BEHIND_HTTPS_PROXY=true

- BASE_URL=$BASE_URL

depends_on:

mysql:

condition: service_healthy

healthcheck:

test:

- CMD-SHELL

- 'python -c ''import requests; requests.get("http://localhost:8000/").raise_for_status()'''

interval: 5s

timeout: 20s

retries: 10

There are only a noticeable changes :

- I added the

BEHIND_HTTPS_PROXYvariable, because you don't want to run a bug tracker on plain HTTP. - I separated the generated qualified domain name that Coolify uses and the

BASE_URLvariables.

Now, before deploying there is two more small manual steps to do :

- Set the desired domain name in the Bugsink web settings (you can use the default generated if you want, I decided to roll my own).

- Set the

BASE_URLenvironment variable to the same value

Note : you need to align the port that bugsink is running on, the port in the domain settings in bugsink, set the rediretion in the DockerFile using the FQDN environment variable and omit it in the base url variable for everything to work as expected.

Testing is all

The last thing to do is to deploy, and test an error against a project in Bugsink. For this, you can create a project in the application until you hit the SDK setup page.

Once this is done, you need to trigger an error in a project, Bugsink's documentation explain how very well. There are several ways to do this but in the past I faced network and setup issues.

To make sure I am testing only Bugsink and not my application setup, I created a small project called Sentry Error Generator (This application does not log anything, but be aware this is a sensitive action to do). Go to the website, enter the setup URL Bugsink gave you and press generate error. You should see it in Bugsink.

Congratulations, you are now collecting your application's errors (for free, if you have followed this guide! Oh, and if your current bug tracker too expensive and want a smaller and simpler alternative but with no maintenance, Bugsink has some hosted and supported options too (As I've said earlier, I'm biased!).

]]>TL;DR : After years of focus on the compiler and KMP, The JetBrains folks are coming it out with tons of announcements for server folks too, and that feels great

The past few years

You probably know me by now, I've been liking Kotlin a lot for a long time. Back in 2019 already we were the first at ING moving some of of our production code from Java to Kotlin. Over the past years though, I've also been vocal online about my worries that all of the love was going towards KMP. And Google deciding to sunset the (non Android) Kotlin category has just been another pointer in the same direction. I've even mentioned this exact thing already right after visiting KotlinConf 2023.

Even with Java releasing more often, and getting more and more new features every iteration I'm still much happier using Kotlin. But for pure backend developers is the developer experience still that differentiating that it makes it worth switching? After all, Java got pretty good records those days. A much better functional programming experience. And virtual threads. The list goes on....

Note : This article is my personal impression based on my usage of the language. Depending what you're working on, your opinion may vary 😊. I've also included many screenshots from the Keynote itself, please watch it for the complete announcements!

Note 2 : There's also been lots of great AI related announcements, but I'll intentionally skip them in this article. I'm generally happy JetBrains picks up the AI wave and innovates while staying open. I'm a mostly happy user of their product myself.

New language features

As a backend engineer, I think the main improvements I've personally seen in Kotlin that made a difference to me the past years are the time API (back in 2021!) and the K2 compiler. Of course, the K2 compiler is a huge (and needed) improvement that came with Kotlin 2.0. But it also didn't bring a huge amount of new language features.

Now, today's keynote was a VERY welcome change to this. Others will do a complete breakdown better than me but here some actual new language features I'm excited about!

- Name-based destructuring to ensure I am grabbing the right properties. An actual improvements compared to the current version.

- Rich errors : an improvement to error handling, sitting on top of the already great null safety features of the language. Not only do we now have complete return types but they also get propagated so we can neatly handle all error cases in a single location.

- Even Kotlin compiler plugins are getting more powerful, with Power Assert offering an expressive and clear error message. Look at this, we're almost topping the already amazing Elm error handling capabilities.

This is developer experience like I haven't seen in a long time from the teams at JetBrains, and it genuinely makes me happy.

Tooling ecosystem, and strategic partnerships

I've already mentioned lately that I was a big fan of Kotlin notebooks. I find them generally amazing, because they combine the unique experience of Python Notebooks with the gigantic JVM ecosystem (and without the Python horrible dependency management issues).

I was legit 🤯 this morning when I saw that the folks at JetBrains combine this already amazing experience together with the ecosystem that pretty much every JVM dev uses on the planet : Spring!

The screenshots aren't doing justice to the announcement, so please just go and have a look at the Keynote yourself. The latest version of notebooks can attach itself to a running Spring kernel and have access to all of its context. And that even for applications that aren't using Kotlin yet.

Again, this is next level for me in terms of developer experience : combining the flexibility of notebooks for experimentation together with the sturdiness of a production like application. With the kandy and dataframe data manipulation capabilities, I can definitely see this speed up my day to day development speed.

And of course, JetBrains also announcing a strategic partnership with Spring on stage shows that they clearly intend to give us "corporate" backend developers some serious love.

Growing Kotlin Foundation

For a long time, Kotlin was completely in the hands of JetBrains. This has massively changed over the past years with the creation of the Kotlin Foundation.

As a professional having to pick a technology, it is crucial to look at how promising its future looks. Seeing that Kotlin is jointly supported by several companies, and especially companies that aren't making a living of the language makes it a much safer business case to adopt inside a company.

Seeing two more companies (and none other than Meta) join the foundation gives a great idea of how bright of a future Kotlin has 😊.

The Kotlin built Java library ecosystem

A few weeks back, I was mentioning that for the first time I found a library that was completely built in Kotlin, but had a clear Java compatibility too. If you're interested, I'm talking about the KBsky.

A Java library, built in Kotlin

Today, I learnt that the two most successful JVM AI libraries (the OpenAI Java SDK and the Anthropic Java SDK) both are actually written in Kotlin.

I find this a great sign for the language. Of course, the amount of Java developers out there is much larger than people writing Kotlin and it makes sense for library builders to want to maximize their reach. But them choosing Kotlin to write the library speaks volume about the quality of the language, but is also a great sign for its future.

The official KOTLIN LANGUAGE SERVER

This is, by far, the announcement I am most excited about and I genuinely didn't see it coming. JetBrains announced the official release of their Kotlin Language Server protocol today.

For those unaware, the language server protocol "defines the protocol used between an editor or IDE and a language server that provides language features like auto complete, go to definition, find all references etc.". Having an official language server protocol implementation basically means that all IDEs (from vim to IntelliJ, or SublimeText) can support the language equally well.

The fact that JetBrains is behind Kotlin always made it rather logical for them to not Open-Source a language server. After all, they're selling their IDEs and that'd pretty much mean they're competing against themselves. And it's always been one of my biggest issues with the language.

Yes, IntelliJ is a great IDE. I use it every single day, have paid for my license for years and I love it. But in simply just don't believe in the success of gated products. More and more people use VSCode every day. People now use Cursor. Or SublimeText. Some still use vim. Forcing folks to use a specific IDE just feels wrong and tames the excitement using a new language should bring.

Yes, there's been community driven language server implementations for Kotlin, but they've always been struggling with support. And literally being a company building IDEs for a living, JetBrains always felt like the best party to handle this properly.

I'm over the moon that JetBrains has the courage to open up and even lead the language server efforts in the future. It shows a strong trust in the future of the language and that can only help drive adoption across the industry.

ING as use case

As I mentioned already, I was part of the first team to start using Kotlin at ING back in 2019. Five years later, adoption has grown a lot internally and I was super happy to see JetBrains offer us to be one of the leading industry use cases for their server side Keynote.

Kotlin powers some of our critical services today, and it's a great symbol of how good and powerful the language is. I can't wait to see where we'll be in 5 years.

I've always been a fan of Kotlin, and it's been an honour to be able to contribute to the content of the program, no matter how small my contribution was.

Conclusion

There's much more I'd like to talk about (amper, klibs, kmp, ...) but I'll keep it to the essential : It feels to me with their keynote that JetBrains intentionally started putting backend developers in the spotlight again. They made big, impactful announcements, both at an ecosystem and language levels and I can't wait to try out all of the new goodies.

Well done JetBrains, well done!

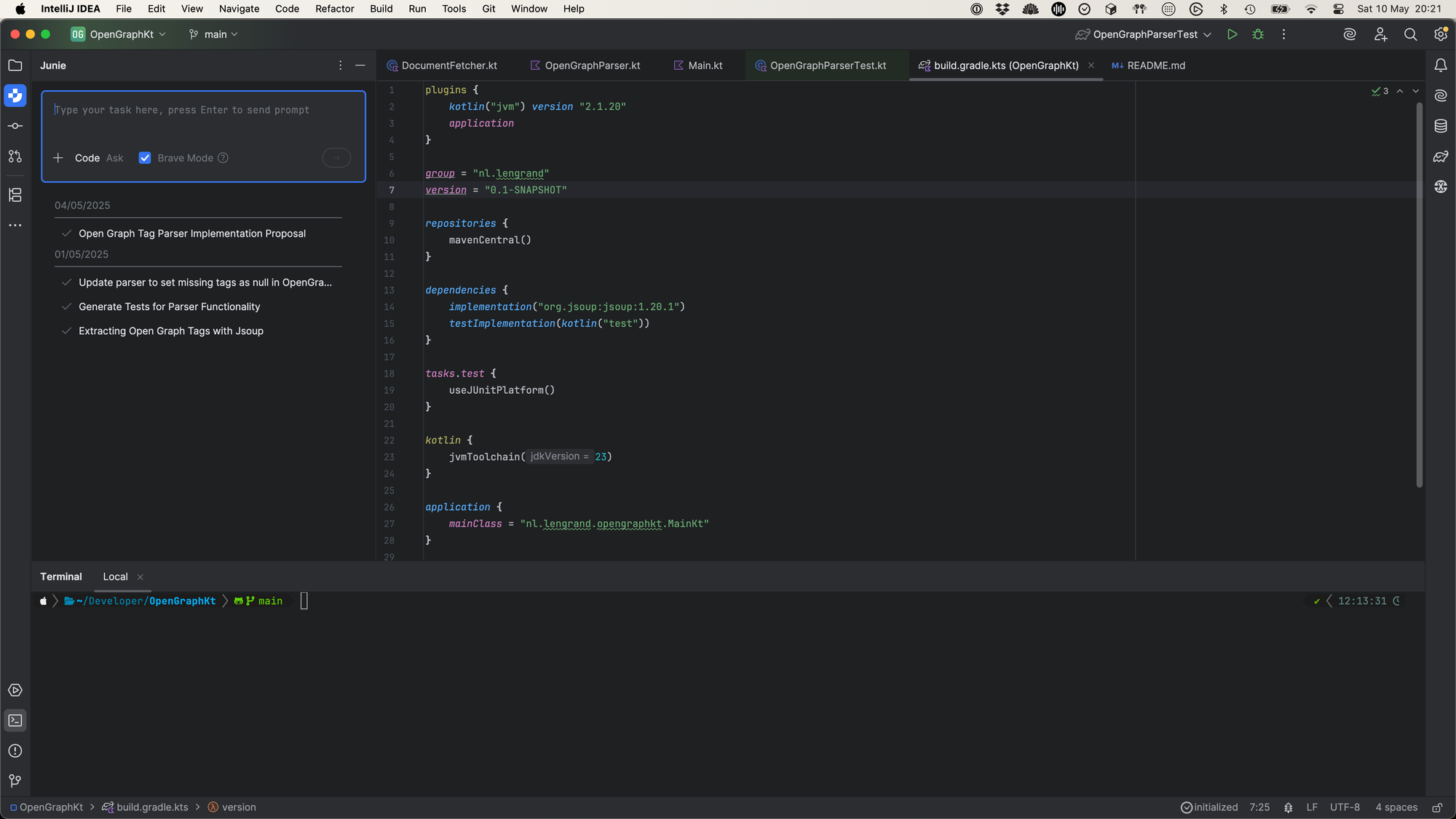

]]>I've been a big fan of all things JetBrains for a long time. The company itself, for many reasons but also their high quality products (and languages, but you know that about me already 😝). As a fan, I've jumped on the occasion to be using Junie (JetBrains coding agent) as soon as it came out. This post summarises my experience, in no particular order of features.

NOTE: The examples below are a compilation created over a long period of time. They are illustrations of the issues I've met but aren't meant to be exact examples.

Quick intro

Junie is JetBrains equivalent of Copilot (or Windsurf / Cursor together with the IDE). It is only available inside the IntelliJ based IDEs (Rider, Pycharm, .... included) and is available through an additional "AI" monthly paid fee (10$/month at the time of writing). They have a limited free plan too.

You can install Junie from the Marketplace. Once installed it appears as a window in your IDE

Junie has 2 modes at the moment :

- Code : You ask Junie something, it will most likely write code / create / edit files in your project.

- Ask : In case you don't want changes, but rather ask a question or chat with the AI, you can switch to Ask mode. Handy if you want to weight choices in your project, or ask about potential issues / optimisations.

I'm very glad they added this mode actually, I tend to use the AI a lot to help me with reasoning and it is very frustrating to have half a dozen files changes only when asking of a refactoring makes sense.

You can also activate the "brave mode" option, which will allow the AI to run commands in your terminal without your explicit permission every time. I was a little worried at the beginning but so far nothing crazy has happened. I'll report the first time Junie decides to $ rm -rf /, that'll sure be fun and I can only blame myself then.

The many AI products, naming and differentiations

I'm a little confused between the different types of AI products IntelliJ is offering at the moment, which are most relevant to me, but also what are their differentiations. I just found out writing this blog that they have a dedicated page for this actually.

To me, it's still really not clear what the difference between Junie and the AI assistant is. It looks like Junie is the AI assistant, but with more bells and whistles? Except Junie is not customisable (models, prompt library, ...), while the assistant is? Then there's also Mellum, the JetBrains LLM. It feels like I have to learn a whole lot of new product names with little differentiation and it's a bit more than I want to have to remember.

The pricing page with features also basically tells you you get everything as part of the subscription anyways, which is good news! (unless you're enterprise, then you're screwed). Oh, and one thing to mention : pretty cool to see a flat price for the agent, when most of the competition mentions token limit pricing instead!

I understand the market is moving very fast and is honestly quite a mess at the moment, but I'd appreciate a "use me if, don't use me if" part that's a bit clearer.

Junie is VERY eager to solve my issues

I like to use my coding agent as if I had a junior pair programmer with me. I usually don't ask it for a complete project / solution, but rather first look at a list of things we need to achieve for a particular outcome, before diving into each piece of it separately. That way seems to be an optimal way to speed up delivery, while keeping an actual control over quality and overall architecture.

In my experience so far, Junie tends to REALLY want to be doing a lot of work and I sometimes have a hard time telling it to only do one thing.

I may ask it to create a single test, and I'll have a complete test class created, the implementation will be refactored and it will also go on and upgrade some of my dependencies while it's at it.

It can be frustrating, because it's not what I asked for, and it will take me time to refuse part of the solution... Here is a concrete example where I ask Junie to create a test for a single class method.

By the way, this was my method at the time 😅. Yup, it was basically empty.

And this is the summary of what Junie did by the end of its quest. Not only did it create a test, it also decided to create the actual implementation of the method. As I'd tell my junior if they were not an AI : very industrious, but not what I asked for buddy....

I refused Junie's solution, and asked it again. This time being more precise about what I DIDN'T want (modifying the implementation):

This time, the results were as expected. But it took me 2 tries, and my sentence in my request actually was longer.

EDIT : The awesome Marit Van Dijk mentioned the allowlist on Bluesky, which I didn't know about!

Junie can get some basic stuff wrong

This was a bit surprising to me. I've seen Junie get imports wrong for AssertJ for example. To the extent that the code wouldn't compile. (Also, I would have liked it to ask me to add AssertJ as a dependency instead of just decide to start using it 😅). It's quickly and easily fixed, since it will try to build by itself, fail and iterate. But still, I wonder where that comes from.

For smaller libraries, I've also seen Junie struggle to use basic objects. In the below example, it wants to use reflection and complex shenanigans where a simple instantiation just works out of the box. The actual implementation uses val feature = RichtextFacetMention() . It also tries to access fields using getDeclaredField() where accessors are perfectly fine facet.features = mutableListOf(feature).

I'm not exactly sure why this is happening and I honestly also haven't dived very deep into it. I wonder if that isn't because kbsky is both multiplatform as well as Java compatible and there is some confusing generation happening to get that compatibility.

To fix this, I've had to write some of the code myself so the agent can learn from it. It's become pretty good at it now, but it also means that I don't trust the agent with the code as much as I'd like to 😊.

Trust, but verify

Related, but tangential : It happens very often that Junie does some extra things for me that are completely unrelated to the task at hand. It can be changing some formatting, or updating a version, or changing a Gradle option. The changes aren't bad per se, but this also means that I have to be very careful about checking the output of the task when Junie is done. Those changes also can be in places that aren't really suitable for tests, making them harder to spot in any other way than a thorough code review.

Here is an example where Junie decided that checking for null fields wasn't enough. The fields also shouldn't be empty. This isn't a bad change per se, however it wasn't really done at the right moment. At least, it does inform me at the end of the task though so that's that. Not quite welcome if it had slipped, but also not terrible. I'm on the edge on this one, I like the changes but it also makes me feel I can't quite trust the agent. What if those changes had large impact on my clients?

Junie can be opiniated about the names / locations of my files

I've had a few times where Junie would take my file, take its content and decide to place it somewhere else. It's also explicit about it, telling me my file is now deprecated. Even though the agent may be correct overall (maybe my naming isn't great. Maybe the file should be in another place), I don't quite like that it makes tracking history more difficult in the future. It also makes reviewing the changes harder. As usual, I'd rather have this done in a separate step, not at the same time as functional changes.

Funnily enough, this can even happen mid task, where Junie moves the file around, finds a solution to the problem and moves everything back. I'd love to know more about the reasoning behind this ^^.

I really miss the option to refuse part of the solution

At the moment, what I miss THE MOST, BY FAR about Junie compared to other similar agent is the option to accept / refuse part of a solution. When Junie thinks it's done, it will tell you. You can then decide to accept, refuse or tell it to try again.

Now, I may be 90% OK with the solution, but want to remove the gradle options that it also added at the same time. I've had a time where it added tests, and also a Jitpack configuration. I mean, thank you, but no?

Cursor is more fine grained in that regard, and will let you individually accept / refuse changes :

When I asked about this on the discord, the official answer was to use the commit window of IntelliJ to do this. This is a valid, but subpar answer imho. It also makes it more difficult for me to check whether the refused changes are breaking the complete task in any way. I have to switch to manual mode for this. There must be a way to do better.

Ask mode responses are great!

The scratch files as an output to the ask mode are great. When you chat with Junie in ask mode, the output is a Markdown file that is placed in your scratches folder. I really like this for several reasons :

- The output is structured and readable

- It is easily shareable

- I can reuse this as input for later tasks.

I haven't tried this yet, but I also think that makes it for a very good start of keeping a log of decisions if they were placed somewhere together with my source code.

Junie is extremely slow in my experience compared to other tools I've used

This is my main gripe with Junie at the moment honestly. Even for simple-ish requests (think, write a simple method to filter a data class ), Junie will take between 3 and 4 minutes to complete. I haven't benchmarked this, but it felt much longer than most other coding agents I've tried.

This is understandable, given how it works. It will first make a plan, verify its plan across many files of the project, create the implementation, make sure the code builds, write tests for the method, run those tests. It usually will discover a couple bugs that way, reiterate and keep looping until it finally succeeds. This is pretty much how I, mere human, would also do it. (This is while using Brave mode by the way).

My issue with this is that during that whole time, I am not actually actively involved in the process. I will be needed soon to verify the implementation because I'm not actually pair programming and seeing the code being written live I cannot be in a "copilot seat". The pilot actually closes the door of the cockpit and reopens it when it thinks it's ready.

My issues with this is that it really disrupts my flow. During that time, I cannot quite start doing something else to keep the context fresh in my head. I also do not want to start checking email / slack because I want to keep in the flow. I haven't found a good way yet to use that time in a way that isn't disrupting. It has made me actually decide to not use Junie for many things several times.

When spending 4/5 hours in the IDE, this spinning wheel really started grinding my gears after a while 🫤.

Random 401s when leaving the IDE open too long

Not sure whether others experience this too, but Junie regularly lost contact with its servers and the only way to fix this was to restart IntelliJ completely. I never close my IDE, and rarely restart my computer and after a few days Junie tends to just give me an unknown error that won't go away even when reloading the plugin. Not a huge issue, CMD+Q CMD-Space are only a couple keystrokes but still, it's a mild annoyance.

The need for structured Junie guidelines?

There are many prompt libraries out there. I asked on the Junie discord where people were sharing their Junie guidelines. To my surprise, that doesn't really seem to be a thing today, and everyone mentioned they either didn't use any or they were too project specific. However, the first thing that the Junie guide mentions is to create those guidelines.

This makes me wonder whether there is a need for a Junie guidelines library, or at least a place where people can share how they use this file. Because at the moment I feel like I'm underutilising the tool and I could pick up great ideas from others.

In summary, some personal tips :

- Use a guidelines file to personalize Junie as you want it.

- Chat about large pieces of work with Junie, and then ask it to do the work piece by piece for better implementation results.

- Don't ask Junie to just "upgrade" your project because it will be late on versions. Instead, check first and be specific about what you want.

- Don't hesitate to switch between ask and code modes consciously, for best results.

- If you find a way to stay actively in the flow while Junie is doing its thing, please let me know.

- I really hope Junie becomes faster over time, because during long coding days it's sometimes a make or break situation for me, and I'm likely to start using another agent in the future simply because of this.

TL;DR : Many companies searching for Developer Advocates probably shouldn't be hiring yet. More often than not, what they need first is some internal mindset change. And even then, Developer Experience will go a long way to achieve the first stages of a healthy community.

Many companies searching for Developer Advocates probably shouldn't be hiring yet. More often than not, what they need first is some internal mindset change. And even then, a Developer Experience will go a long way to achieve the first stages of a healthy community.

Note : I don't intend to describe what a Developer Advocate does in this post. Others have done it better than I could. This article also both discusses internal as well as external Developer Advocacy challenges.

I've been officially in the world of Developer Relations for just about 4 years now and I've had multiple occasions where people would come up to me and asking me for advice on how to find their first Developer Advocate.

And even if there's many ways I could help them with that, my answer after chatting for some time is "well you probably shouldn't". This blog is a list of a few of the reasons that keep coming back and my thoughts on it

"Why do you folks want to hire a Developer Advocate?"

The company struggles with talent retention

They struggle to keep their best profiles, who go on to find better pastures. They want to hire a developer advocate to improve this.

This one is always super interesting to me. When digging up more, it turns out that the reasons are typically pretty clear :

- It's a shame, we're a great company but we're losing out best people because the competition pays more.

- Our best elements are leaving because they reach a ceiling and cannot be promoted any more unless they move to management

- Our developers wants to write / speak at conferences / organise events but we don't have budget for them / our policies don't allow it.

- Our developers build what they're told, it's the product team who decides what to build.

A lot of the answers above cannot directly be solved by a Developer Advocate, and maybe even make them worse. A lot of the keys needed to solve the issues above lie in the HR realm.

Improve your compensation packages, open up more growth opportunities for your tech profiles, rework your product / tech work relationship, ...

Yes, those may be longer projects and / or more expensive but believe me they're better than putting lipstick on a pig. Your engineers are smart and you'll do more harm than good.

The company struggles with internal upskilling

"Our developers aren't upskilling and staying up to date with market trends and we need someone to bring that knowledge inside and motivate people to learn".

I find that one super interesting. As a developer in a company, I'd be tremendously pissed if someone was paid to go to conferences in my stead and report to me on what I should learn.

Again, I find that sort of discussion fascinating :

- Do you not have a good internal candidate for this already? Why not?

- Are your developers not upskilling, or are you simply not aware of it?

- Humans are typically curious by nature. What makes it that they're not learning ? Do you have budget / opportunities in place? What does the workload look like?

- And picking from the amazing "Accelerate" book : Do you have a culture of safety and experimentation in place? Do you lead by example? When was the last time you went on a conference or shared knowledge yourself?

The company struggles with hiring "A" players

They want to speed up hiring and capture better profiles.

Typically my first question is "what makes your current profiles not good enough"? And we're typically back to the upskilling / retention discussion. What is an A player anyway?

One of the things that I consistently discover and find super interesting is that there always seems to be a disconnect between the Marketing team who will be responsible for Tech Branding and the actual techies on the floor. And the problem only grows the larger the company is.

I've literally been in discussions where people would say "we need to hire influencers in the space" only to answer "really? How about this person in your company already who wrote 2 books on topic X. Or this person who spoke at 4 conferences the past year. Or this lady who happens to be a Java Champion? Or this other who literally organises part of the event you're sponsoring every year on their own time?"

Sure you can hire a Developer Advocate to find out those internal profiles and nurture them, but it seems cheaper to make sure whoever is responsible for Tech Branding also happens to be close to the communities they want to reach, or even better be part of it 😊.

Another point of discussion I raise usually is : why do you want A players? And what does that mean? Are your problems hard enough that you will be able to keep them sharp?

I don't know anyone who will say openly that they want to hire less than great developers. Still, not all companies are born equal. Refining what you mean exactly will go a long way in finding the right profiles, and will also help line up expectations for new hires. There's nothing more damaging to your technical brand than advertising something and getting people who convert to realise they've been fed incorrect information. Especially "A players".

(Consultancy) Company wants to be more visible in the market

This one is actually more frequent than I was expecting. (Consultancy) company, who makes a living selling hours or projects (not products) wants someone to speak at conferences / podcasts / write for them in order to increase their brand visibility (aka thought leadership).

That can make a lot of sense, and there's great examples of this on the market. That being said, it depends on the strategy being used. You want to be more visible, great! But visible to who? Who is the decision maker at your future customer? Which kind of events do those people participate in, where do they get their influences from, and from what kind of people ?

A lot of the times, the answer is probably close to C-level or directors. And I would then argue that your own C-level / directors probably would be the best leaders to create influence outside the company in that setting. They are the ones shaping the vision of the company, and spreading it out. At least that's where it should start from.

(Small) company has a (technical) product and wants to market it to techies

Now, we're getting closer from the realm of what your typical Developer Advocate excels at. The request makes sense, but let's dive into the topic a little deeper.

The question "What kind of activities do you want your Developer Advocate to do?" are usually answered with :

- Go to conferences / man conference booths and talk about our products

- Make videos and blogs about the product / new features

- Respond to questions on Stack Overflow / Social Media

- ...

With the typical goal of largely increasing the number of new accounts created / monthly entering developers.

Now, that's where I love to discuss more about the product in itself. All the activities described make a lot of sense, but each activity also targets a specific part of the product funnel. And before promoting the heck out of a project, you want to know if people will love the project.

Because your real success metric actually probably is the number of monthly active developers (ideally paying). If most of them are leaking out right after entering, you're going to struggle being successful.

Many developers simply don't go to conferences, and aren't going to read about you until they have a specific issue to fix. Conferences are also incidentally the most expensive activity one can think of, in straight up money but also time!

At this point we're entering the wonderful and exciting world of ✨Developer Experience✨.

- Do you offer free accounts?

- Are you using the expected standards in the industry in terms of APIs, WebHooks, ....

- Do you have sample data for folks to get onboard quickly?

- How accessible is your roadmap?

- Are you already active in the community of the domain you're in ? Do you conform to their expectations? (Example : If you're into APM, do you support OpenTelemetry? If you're doing PostgreSQL, are you an active contributer?)

The outcome of that discussion gives you a pretty clear idea of how far they are in the process, and how much can achieve joining them.

In conclusion

I find all those discussions fascinating. They're all very important problems and they're typically things that executives spend a lot of brain cycles on. What I find most interesting is that very often those people already know what their real issue is. But it seems harder or more expensive to fix the root cause so they want to hire a Developer Advocate instead to tackle the issue.

It's definitely possible to hire a consultant to help you shape your strategy, setup an action plan or more. I find it interesting that "hire a developer advocate" became some kind of catch all in a way. A superpower that will help solve underlying issues. This could absolutely work, but if the hire has the direct mandate to solve those issues and they typically cross many departments.

One more thing that I remark is that "Developer Advocate" often seems conflated with "Developer Relations" and many of the other fields adjacent to it. Developer Education, Developer Experience, Technical Product Management, ... these are all very valid needs, but they're not quite the same as "Developer Advocacy".

Not an issue per se, it's all part of the journey to dive into this. Hiring someone without giving them the keys for being successful, in a field that is generally known for being hard to measure without going through the journey is a waste though (but that's a topic for a whole other post)!

Wanna chat, do you agree, disagree or think you have another challenge? Just hit me up 😊!

Thanks Floor for the insanely fast review

]]>TL;DR : I love the remarkable for reading articles and writing notes on books and I love the computer / website / tablet sync, but I find the ecosystem relatively poor and I still prefer to take my notes on paper. I haven't tried the handwriting to text capabilities.

BTW, this isn't a sponsored post in any way (though do feel free to send your proposals, good folks at Remarkable xD).

I've been eyeing the Remarkable tablet for a very long time. I'm an avid reader, and I still take a lot of notes on paper. I also love to write and highlight things in the books I read, and I've been a Kobo user since pretty much forever. I've never bought one though, because I just found it way too pricy for the capabilities.

Why now

But when they announced the remarkable pro couple months ago, I decided it was the right time to give it a shot. Many of the remarkable fans jumped on the new product and there was a lot of the second model online for relatively cheap.

I bought a 6 months old, still under warranty, close to new condition remarkable with 2 covers, the pen and pen tips for 375 euros (about a 45% discount on the store price).

First impressions

I like the tablet as much as I was expecting to. The product looks and feels qualitative. It's a pleasure to hold the pen. The interface is snappy. The magnet and covers are great and it transports easily.

Reading

The (active) reading experience is the aspect of the tablet I love the most. I really like my Kobo, especially as it fits in my trouser backpocket but reading larger format books or PDFs in general is a pain.

The experience on the remarkable is ... well ... remarkable. The screen is high quality enough that I feel like I'm pretty much reading a paper A4 format book. Highlighting snaps to sentences and it feels great when reading. Its also very nice to quickly be able to tag pages, write some notes at the bottom, ... Basically stuff I do with a paper book but in a way that I can reuse easier later.

I have an iPad as well, but in my experience eink displays are just so much more pleasant to read on the difference is extremely noticeable. I'll talk more about it later but reading shorter articles / publications is the thing I think the remarkable excels at.

I do dearly miss the presence of a backlight, like any e-reader has since forever. I can live without it, but being able to read books in the dark is one of the unique selling points of ebooks compared to good old paper and I find it a shame not to find it there.

DRMs

One small, but relevant item I learnt that hard way is that the tablet does not support files with DRM. That is logical, given the product is then encrypted to the dive but that also means that you cannot buy an ebook anywhere and expect it to be readable on your tablet. For example for me in the Netherlands Bol.com is a no go. (And most cases you can't get ebooks reimbursed, given they're digital products).

Writing

In my opinion, the writing experience is .... alright. I've tried the iPad / paperlike combo in the past and didn't quite like it. At least the remarkable pen feels great (it's not smooth) and the tablet is very thin compared to an iPad so you feel like your hand is on a notebook. But it's still a screen, and in my personal experience the input lag is slightly noticeable which isn't pleasant.

I also don't quite like the fact that the angle you put the pen on the tablet changes the thickness of the writing. I get it, but the effect is bolder than what I'd get with a real pen and it annoys me.

I'll come back to it later in the UI part but I'm left handed and it's SUPER annoying that the "close" button is basically located where you hand lies on the tablet. I was going crazy closing my documents by mistake. Turns out it's a known problem and the only actual "solution" is to actively hide the UI...

My left hand closing the page (hard to simulate with a phone in my hand :P)

I know my handwriting is pretty bad, so I'm not having much hope for the handwriting to text conversion, though I tried it just for this post. As expected, it's OK, but far from good enough for me to make use of it. Can't blame them completely though, my writing is bad. But if I have to care about my handwriting at all times or rewrite half my notes, it's just as good as nothing.

In any case, it's not much of an issue for me, since I don't take notes to remember later, but to remember now.

The UI

I don't dislike the interface of the Remarkable, but I can't say I love it either. It's barebones (which is a good thing in my opinion) but it feels clunky to me at times too.

(I don't pretend to be a pro , so please correct me if there's obvious things I missed)

I try to take one notes per meeting, and to create a new note I need to click at least at 3 different places in the UI, maybe in a folder too, AND pick the name of my notes.

Creating a new note... Not without friction

Any note application I've used in the past 10 years let's you start taking notes first, and setup later.

With Apple Notes, I'm directly productive

I use folders to take notes, and I constantly need to click in and out of the folders. As far as I can tell, drag and drop isn't supported.

As I was saying earlier, the UI is also useful, but you can hit it by accident when writing and that is super frustrating (and distracting) half way through a meeting.

That being said, I LOVE the (web and native) application two way sync. It's super nice to just be able to drop anything on the website and have it ready to be read in the train (though, obviously your tablet has to have access to the internet). In the same way, taking notes all day and seeing them in the web environment is pretty cool.

It's 2024, and I would love to have a folder "à la Dropbox" (or even a Dropbox integration like Manning books supports since 2015(!!)) that auto syncs local content from my computer with the tablet and back, but I guess that's a bridge too far 😊.

The ecosystem

I have quite a few things to say about the ecosystem, in the generic sense of the word.

Remarkable connect

First, I find it quite annoying to have to pay for a monthly service when already buying a premium piece of hardware which sets you 500 euros back.

Sure, you don't need to pay for connect; but then you don't get your notes synced up... So you're basically back to having a glorified ebook.

I DO understand that the company needs to make a living, and I'm totally fine with their "50 days" free tier limitation. But come on, at least add the notes to it...

That being said, it's only a few euros a month so at least we're not talking about yet the price of another Netflix subscription.

I don't use any of the other features of connect, so I can't talk much about them.

Hardware, anyone?

Another gripe of mine is that the only keyboard that Remarkable officially supports is their own 220 euros type folio.

What's more interesting to me is that the tablet is literally equipped with a USB-C connector and the pins at the back of the tablet are also the pins of a USB connector. Which means that folks are literally able to 3D print and reverse engineer support for a keyboard themselves.

Looks to me like it is a conscious decision to sustain lockin, which is a shame really.

In the same way, the tablet currently doesn't support bluetooth (which could have been another way to support input devices). It's not a large issue, but would have been appreciated.

Integrations

Another thing I find surprisingly lacking is the relative lack of integrations with the tablet.

I'm an avid Pocket user for example, and synchronising pocket articles on the Remarkable requires setting up your own sync server (Thanks Open-source once more!). This is something that Kobo supports since forever and I was surprised to not find it available. Same for Dropbox, agenda features, Google Drive, or anything really.

(EDIT : Remarkable actually supports Google Drive and Dropbox, but I just had no idea until someone pointed it out to me when reviewing the article. I don't think I received any information about it, and it seems only possible via the website so I never actually saw the option ^^. My other remarks stay valid)

(EDIT2: It's actually crazy, they have a "save articles on your tablet" extension as well, but none of those integrations are visible on the tablet or app themselves, only at the bottom of their website. Completely missed them... Installed and trying it out to see if it can replace pocket in the future).

Turns out everything is possible, but they're quite literally all informal and made by the community. I hear Remarkable is a small company and we can't ask them all; but I'd like them at least supporting those efforts in some way or even better : centralize them.

Templates

Another thing I didn't expect when I started using the tablet is the sheer amount of people using custom page templates. There is quite literally a parallel industry around these. Some of them cost up to 40 euros!

It's always fascinating to me to see how products can create new market opportunities, with value in them. One thing I wished, just like for integrations, is to see Remarkable recognise and support them (whatever that may mean).

A great place of inspiration for me is how Notion offers a gigantic list of community templates for example, but also a directory of Notion partners you can contact (and who make a living of it!).

Tiny sloppy things that accumulate

This is something that has no relation with the product, but I find a good illustration of my general feeling of the remarkable product. When logging in my account with Apple, I got an email telling me they detected the login with a link to my account to approve / refuse it.

The email looks great, and came in under a second. Except the myremarkable.com domain isn't active, and we're basically one step away from being scammed. The actual URL is my.remarkable.com.

When contacting support to mention it, I received no confirmation a ticket was created, only a direct answer that contained no information about my request.

It's well intended, but honestly a bit sloppy and a bad look (and if they don't own myremarkable.com; could actually be straight harmful).

Conclusion

The article is longer than I expected and I feel like I spent a lot of time describing what I didn't like in the product.

Overall, I love my remarkable. It's really a GREAT tool to read articles and practise active reading. That being said, it also leaves a sour taste in my mouth because I feel like there's huge amount of potential to develop the ecosystem that isn't tapped into. And for the price of the product, I find it hard to accept it. I paid less than 100 euros for my Kobo; about 7 years ago. I paid about 2/3 of the price of my iPad for this tablet. But my iPad comes with a complete app store and all the Mac ecosystem. I hope I can say the same for Remarkable.

For now, I'll keep using it to read articles; and write notes on paper like before...

]]>TL;DR : With Coolify you can host you Kotlin applications in seconds on your own server and benefit from auto deploys, custom domains, preview branches and more. You can see the code here, and access the sample here.

Lately, I've been increasingly thinking about the fact that all of my applications / experiments are spread across providers (Supabase, AWS, Koyeb, Digital Ocean, ...) and I've been toying with the idea of owning all of this back on my own servers. After discovering Hetzner auction servers, I realised that I could have a super beefy server for very cheap and decided to try it out.

Installing Coolify is as simple as running $ curl -fsSL https://cdn.coollabs.io/coolify/install.sh | bash on your server.

A sample Kotlin application

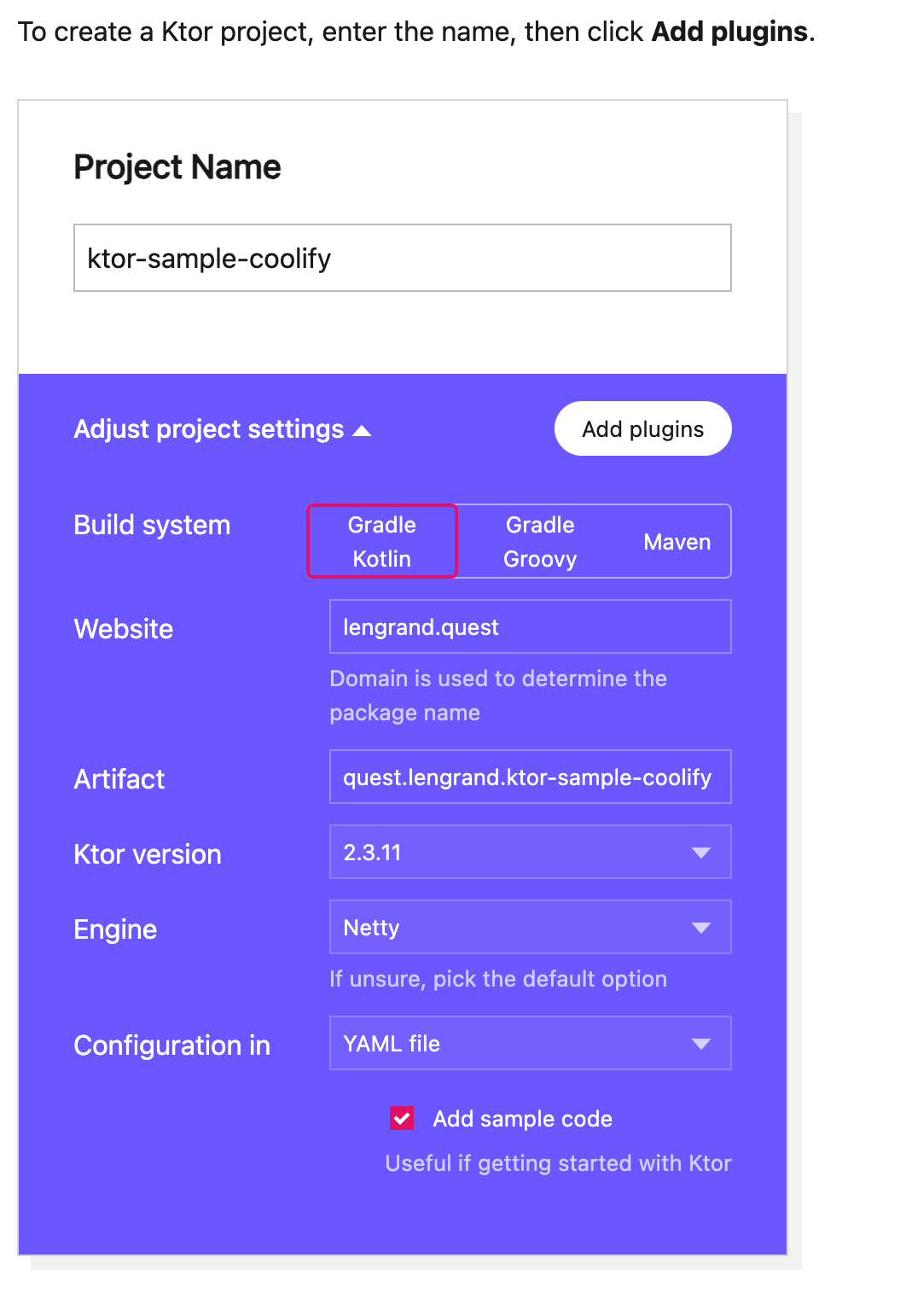

For this test, I'll go to the Ktor Starter website and create the simplest application I can think of.

I'll then unzip the repo and create a GitHub repository from it (using the GitHub CLI, get it if you don't have it yet it's awesome 😊).

$ unzip ktor-sample-coolify.zip -d ktor-sample-coolify

$ cd ktor-sample-coolify

$ gh repo create .

## Some setup, and final repository push to GitHub

Once that is done, I can access my repository here.

Creating a Coolify GitHub application

We have to deploy this application to Coolify now. There are several ways to do it, but the most powerful one will be via a GitHub app, we'll see why very soon.

To do this, we'll add a new GitHub app source to Coolify.

It will then ask us which features we want to activate, and we'll be redirected to GitHub to approve the creation of the app, and then requested to select which repositories to apply this application to (I selected them all, but you can also choose to segregate better and only add the one repository we created earlier).

Deploying our Ktor application

Now that the connection between Coolify and GitHub is setup, we want to deploy our Ktor application. To do this, we create a new resource and select the Private repository with GitHub option.

I'm not going to show you all of the dialogs, but you'll need to select which server to deploy on, which GitHub app to use and then which repository to choose.

Once all of this is done, we'll have access to our deployment configuration. We'll select the main branch for the deployment, and the port 8080 which is the default Ktor port.

Once that is done, we can hit the deploy button. By the way, we can also very much appreciate the fact that Coolify will use sslip.io to generate a domain URL for your app without you having to setup anything (Granted, it's not the URL we want but it's so much better than an IP address and port combination).

First roadblock : Invalid Nixpack start command

Once thing that I haven't mentioned yet here is that our Ktor sample application does not have any kind of DockerFile. Nixpacks will magically detect which kind of project it is yet, and start building it, running gradle tests, building the project and creating a Docker deployment based on its own inference. I didn't know about this yet, and honestly I was 🤯.

The issue though, is that our deployment fails :

Now, that's a very well known error for any seasoned JVM developer I think 😊. We can spot the issue rather quickly when investigating the logs. Here is the commands that Nixpack will use to build/deploy our project :

╔══════════════════════════════ Nixpacks v1.24.1 ═════════════════════════════╗

║ setup │ jdk17, gradle, curl, wget ║

║─────────────────────────────────────────────────────────────────────────────║

║ build │ ./gradlew clean build -x check -x test ║

║─────────────────────────────────────────────────────────────────────────────║

║ start │ java $JAVA_OPTS -jar $(ls -1 build/libs/*jar | grep -v plain) ║

╚═════════════════════════════════════════════════════════════════════════════╝

The issue, however, is that we actually build 2 jars during our build step, and Nixpack runs the incorrect one in its start phase. This is not a Ktor only issue by the way, it seems to happen for Spring boot too.

╱ ~/Dev/ktor-sample-coolify/b/libs ╱ feat/automat…-branch-test ls -la ✔ ╱ 12:00:46

total 30584

drwxr-xr-x 7 julienlengrand-lambert staff 224 Jun 10 15:23 .

drwxr-xr-x 11 julienlengrand-lambert staff 352 Jun 10 15:22 ..

-rw-r--r--@ 1 julienlengrand-lambert staff 6148 Jun 10 15:23 .DS_Store

drwx------ 7 julienlengrand-lambert staff 224 Jun 10 15:23 quest.lengrand.ktor-sample-coolify-0.0.1

-rw-r--r--@ 1 julienlengrand-lambert staff 5193 Jun 10 15:21 quest.lengrand.ktor-sample-coolify-0.0.1.jar

drwx------ 18 julienlengrand-lambert staff 576 Jun 10 15:23 quest.lengrand.ktor-sample-coolify-all

-rw-r--r--@ 1 julienlengrand-lambert staff 15638896 Jun 10 15:22 quest.lengrand.ktor-sample-coolify-all.jarWe have 2 ways to fix this :

- Create a

nixpacks.tomlto customize the start command - Change the Coolify configuration and set the start command to

./gradlew start

I've chosen the latter for simplicity this time.

We change, save and press deploy again.

Second roadblock : Issue with healthchecks

Deployment somehow fails again. This time, it seems to be due to the automated healthchecks from Coolify to indicate that the application is unhealthy. And the default behaviour for Coolify is to 404 any traffic to unheathly applications.

[COMMAND] docker inspect --format='{{json .State.Health.Status}}' xc8g0s0-100357313316

[OUTPUT]

"unhealthy"

[2024-Jun-11 10:06:05.645616]

[COMMAND] docker inspect --format='{{json .State.Health.Log}}' xc8g0s0-100357313316

[OUTPUT]

[{"Start":"2024-06-11T12:05:43.755413473+02:00","End":"2024-06-11T12:05:43.808713519+02:00","ExitCode":1,"Output":""},{"Start":"2024-06-11T12:05:48.809795855+02:00","End":"2024-06-11T12:05:48.8473437+02:00","ExitCode":1,"Output":""},{"Start":"2024-06-11T12:05:53.848150166+02:00","End":"2024-06-11T12:05:53.985646744+02:00","ExitCode":1,"Output":""},{"Start":"2024-06-11T12:05:58.986804475+02:00","End":"2024-06-11T12:05:59.036324085+02:00","ExitCode":1,"Output":""},{"Start":"2024-06-11T12:06:04.037004721+02:00","End":"2024-06-11T12:06:04.07944818+02:00","ExitCode":1,"Output":""}]

[2024-Jun-11 10:06:05.648560] Attempt 10 of 10 | Healthcheck status: "unhealthy"

[2024-Jun-11 10:06:05.651092] Healthcheck logs: (no logs) | Return code: 1

[2024-Jun-11 10:06:05.653988] ----------------------------------------

[2024-Jun-11 10:06:05.656408] Container logs:

[2024-Jun-11 10:06:05.745223] Downloading https://services.gradle.org/distributions/gradle-8.4-bin.zip

............10%............20%.............30%............40%.............50%............60%.............70%............80%.............90%............100%

Welcome to Gradle 8.4!

Here are the highlights of this release:

- Compiling and testing with Java 21

- Faster Java compilation on Windows

- Role focused dependency configurations creation

For more details see https://docs.gradle.org/8.4/release-notes.html

Starting a Gradle Daemon (subsequent builds will be faster)

[2024-Jun-11 10:06:05.748707] ----------------------------------------

[2024-Jun-11 10:06:05.751778] Removing old containers.

[2024-Jun-11 10:06:05.754548] New container is not healthy, rolling back to the old container.

[2024-Jun-11 10:06:06.335355] Rolling update completed.I haven't found the solution for this just yet, so I've decided to disable healthchecks for now. We press deploy again.

That's it, this time we're in business! If we go to the generated URL, our application answers as expected

Where the magic begins!

Now that our configuration is valid, we can benefit from all the magic that a Fly.io, Koyeb or any other cloud provider can offer us, but on our own terms!

Preview Pull requests

One of the features I love the most is preview pull requests. We create a new branch with a custom endpoint:

fun Application.configureRouting() {

routing {

...

get("/mood/{mood}"){

call.respondText("Are you feeling ${call.parameters["mood"]}?")

}

}

}

We commit, push the new branch and create a Pull Request. Coolify will automatically detect this, start a new deployment and generate a new SSlip URL, all of this while our main deployment is still running! You can see this happen here.

And just like expected, you will also have access to information about the deployment directly in your Pull Request:

Similarly, any merge / commit to main trigger a new deployment, so you can basically have CI/CD with a great Developer Experience, all of that on your own premises.

There you go, I'm feeling happy. 😊

Custom secure domains

This is not the object of today's article, but adding custom domains to Coolify is also very simple and the tool will take care of all the SSL certificates setup / renewal for you automagically so it takes seconds to create shareable domains, including multiple wildcards. For example, you can access my app here, here and here too and all I had to do was change the "Domains" input and restart.

A word of conclusion

You know me by now, I'm a sucker for good DevEx. And I usually love to share my excitement for new tooling, which is why I'm a big fan of tools like Supabase, TinyBird, Koyeb or Digital Ocean.

What impresses me A LOT here though, is that all of this is available for free locally as well, and is mainly developed by a single person. I honestly wish the very best to Andras and will definitely be supporting his work further.

I could deploy a complete application within an hour using lots of tooling I have no experience about, and without having to read any documentation. That allows me to just focus on writing my application, and I just love this.

Now, there's still a lot I want to explore. Obviously, we need to fix those healthchecks. I also want to create a more "production like" application, with a database, observability setup and more, but we'll see this soon, I have total confidence I can figure it out! 😊

Try out Coolify, it's worth it!

]]>Upgrading a simple Kotlin and PicoCLI project to Kotlin 2.0 in under 5 minutes. TL;DR: You can see the diff here.

Introduction

As you may or may not know, KotlinConf will be kicking off tomorrow and Kotlin 2.0 will be announced during the Keynote. I can't be there this year, but I'm celebrating in my own way by upgrading one of my projects tonight 😊. I'll be upgrading SwaCLI, a StarWars CLI demo app I've used in talks to demo the amazing PicoCLI project.

I have been following the whole 2.0 project from very far away, having mostly done Java for the past months, so I really don't know what to expect. Hopefully a smooth experience!

First, this is what SwaCLI does : It lists Star Wars planets or characters, in many different ways. This is the paginated version

When running $ swacli planets for example, it gives us a sorted list of planets.

As any typical Kotlin project, it can be compiled using $./gradlew build.

The gradle.build file looks like this:

plugins {

id 'java'

id 'org.jetbrains.kotlin.jvm' version '1.4.10'

id 'org.jetbrains.kotlin.plugin.serialization' version '1.4.10'

}

apply plugin: 'kotlin-kapt'

group 'nl.lengrand'

version '1.0-SNAPSHOT'

repositories {

mavenCentral()

}

dependencies {

implementation "org.jetbrains.kotlin:kotlin-stdlib"

implementation 'info.picocli:picocli:4.7.6'

implementation 'org.jetbrains.kotlinx:kotlinx-serialization-json:1.6.3'

implementation 'com.github.kittinunf.fuel:fuel:2.3.1'

implementation 'com.github.kittinunf.fuel:fuel-kotlinx-serialization:2.3.1'

testImplementation "org.junit.jupiter:junit-jupiter-api:5.10.2"

testRuntimeOnly "org.junit.jupiter:junit-jupiter-engine:5.10.2"

kapt 'info.picocli:picocli-codegen:4.7.6'

}

compileKotlin {

kotlinOptions.jvmTarget = "21"

}

compileTestKotlin {

kotlinOptions.jvmTarget = "21"

}

kapt {

arguments {

arg("project", "${project.group}/${project.name}")

}

}

tasks.register('customFatJar', Jar) {

duplicatesStrategy = DuplicatesStrategy.EXCLUDE

manifest {

attributes 'Main-Class': 'nl.lengrand.swacli.SwaCLIPaginate'

}

archiveBaseName = 'all-in-one-jar'

from { configurations.runtimeClasspath.collect { it.isDirectory() ? it : zipTree(it) } }

with jar

}Yup, I haven't touched that project in a looooong time, we're still rocking kotlin 1.4.10 😅.

Rambo style, without reading anything I'll just update my plugins version to 2.0.0, let's see what happens. I saw it pop up yesterday on Twitter, so I'm curious.

plugins {

id 'java'

id 'org.jetbrains.kotlin.jvm' version '2.0.0'

id 'org.jetbrains.kotlin.plugin.serialization' version '2.0.0'

}

Downloading, and migrations; that's a good sign!

Ok, First thing I'm seeing is a deprecation on the kotlinOptions. Let's change that. Without checking the docs, it looks like we want the kotlin.compilerOptions now.

Uh, error.

Build file '/Users/julienlengrand-lambert/Developer/swacli/build.gradle' line: 38

A problem occurred evaluating root project 'swacli'.

> Cannot set the value of property 'jvmTarget' of type org.jetbrains.kotlin.gradle.dsl.JvmTarget using an instance of type java.lang.String.

Ohhhhh, looks like our targets have their own enum now! Let's change that!

compileKotlin {

kotlin.compilerOptions.jvmTarget = JvmTarget.JVM_21

}

compileTestKotlin {

kotlin.compilerOptions.jvmTarget = JvmTarget.JVM_21

}Now, that was easy! Haven't touched the doc yet 😬. Let's keep going. We run ./gradlew. build again :

$ ./gradlew build

> Task :kaptGenerateStubsKotlin

w: Kapt currently doesn't support language version 2.0+. Falling back to 1.9.

> Task :kaptGenerateStubsTestKotlin

w: Kapt currently doesn't support language version 2.0+. Falling back to 1.9.

> Task :kaptTestKotlin

warning: The following options were not recognized by any processor: '[project, kapt.kotlin.generated]'

Deprecated Gradle features were used in this build, making it incompatible with Gradle 9.0.

You can use '--warning-mode all' to show the individual deprecation warnings and determine if they come from your own scripts or plugins.

For more on this, please refer to https://docs.gradle.org/8.7/userguide/command_line_interface.html#sec:command_line_warnings in the Gradle documentation.

BUILD SUCCESSFUL in 6s

8 actionable tasks: 8 executed