Check out my videos on the WebP vulnerability:


The Huffman table algorithm as implemented in C is very confusing, and I could not understand how the attackers were able to control the overflow properly. But after watching Ian Beer's talk at OffensiveCon something clicked! While I still don't really understand it, I had the idea to visualize the Huffman table that is created by VP8LBuildHuffmanTable(), which also allows us to see what exactly is written outside the allocated range.

You can find the visualization here (zoom out a bit): https://liveoverflow.com/content/files/2024/05/CVE-2023-4863-1.html

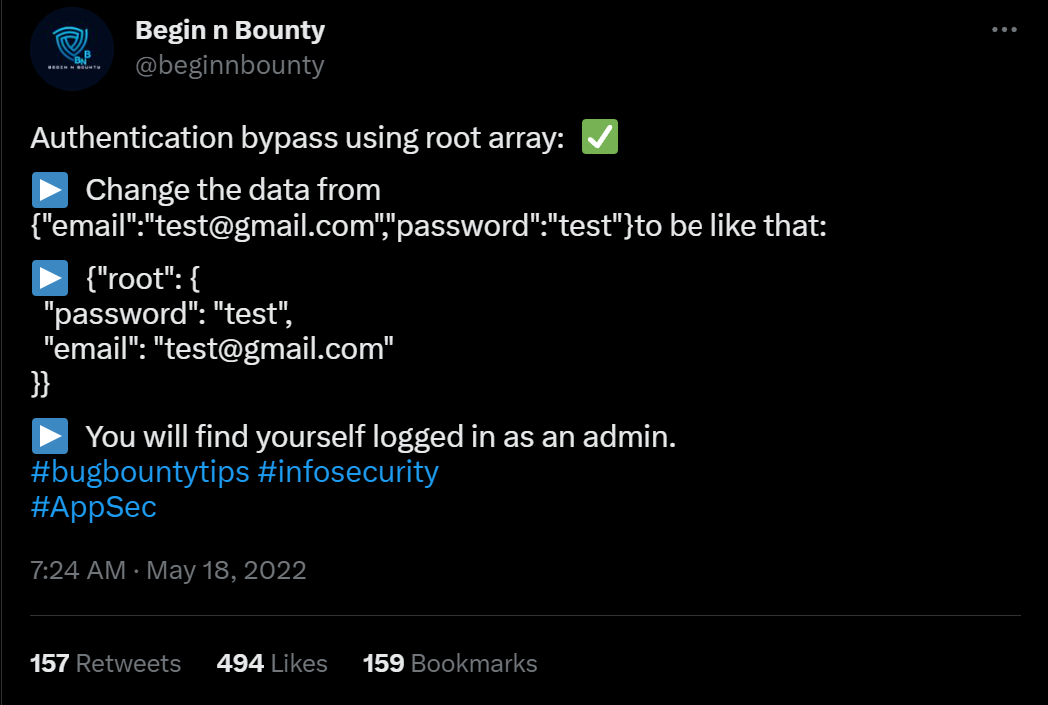

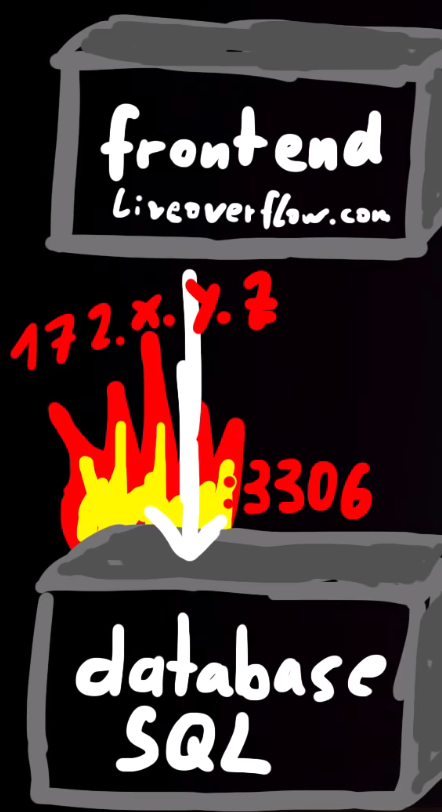

A while ago I came across this tweet, showing off a weird authentication bypass. Based on my experience in auditing websites this didn't make sense to me, so I tried to figure out the root cause. During this process I believe I have identified two potential coding anti-patterns that are worth checking for when auditing web applications developed with PHP and CodeIgniter.

The architectural issues might also translate to other web frameworks with similar APIs.

Originally this bypass was found by Eslam Akl and documented on infosecwriteups.com. Since then he has published an updated writeup on his personal blog, including more details on how he stumbled upon it.

Empty WHERE Clauses

SQL WHERE clauses work in CodeIgniter using the query builder by passing in a PHP array containing key => value pairs to the $builder->getWhere() function. This is then later translated into a complete SQL query with a where clause WHERE key=value.

Consider the following controller implementing something like a login function. You can see it takes a request, performs some basic input validation and then runs a query to find the user with the matching username and password.

    namespace App\Controllers;

    class Home extends BaseController
    {
        public function index()
        {
            $db = db_connect();
            $request = \Config\Services::request();
            $json = $request->getJSON(true);

            $where = [];
            if (isset($json['username']) || isset($json['password'])) {
                $where['username'] = $json['username'] ?? null;
                $where['pass'] = $json['password'] ?? null;
            }

            $query = $db->table('users')->getWhere($where, 1, 0);
            $row = $query->getRowArray();

            if (!$row) {
                return "not found";
            } else {
                return json_encode($row);
            }
        }
    }

The problem with the code above is that the $where array stays empty when neither the username nor the password is included in the JSON request. As a result, $builder->getWhere() is called with an empty array and constructs a SQL query without a WHERE clause. That query matches every row in the table, so with the limit of 1 used here the first row of the users table is returned to the caller.

It is recommended to be extremely careful when building queries in CodeIgniter and other web frameworks. Very strict input validation and throwing of early errors could prevent the issue. It might also be useful for CodeIgniter to implement sanity checks that throw errors when empty WHERE clauses are passed to the function.
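To make the failure mode and the early-error idea concrete, here is a minimal sketch. It is written in JavaScript rather than PHP and it is only a toy model, not CodeIgniter's actual query builder: toSql() and buildLoginWhere() are hypothetical names invented for this illustration.

```javascript
// Toy model of a key => value query builder (NOT CodeIgniter's real code).
// toSql() mimics the behaviour described above: no conditions, no WHERE clause.
function toSql(table, where) {
  const keys = Object.keys(where);
  if (keys.length === 0) {
    return `SELECT * FROM ${table} LIMIT 1`; // matches every row in the table
  }
  const conditions = keys.map((k) => `${k} = ?`).join(" AND ");
  return `SELECT * FROM ${table} WHERE ${conditions} LIMIT 1`;
}

// Hypothetical guard: fail early unless both credentials are non-empty strings,
// so the builder is never handed an empty or partial condition array.
function buildLoginWhere(json) {
  const { username, password } = json ?? {};
  if (typeof username !== "string" || username === "" ||
      typeof password !== "string" || password === "") {
    throw new Error("username and password are required");
  }
  return { username, pass: password };
}
```

With a guard like this, a request body of {} is rejected before any query is built, instead of silently turning into a query without conditions.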

SQL Injection in $builder->getWhere()

Eslam also told me that the application was vulnerable to SQL injection. Having seen the requests leading to SQL errors, I believe I have identified a second likely coding anti-pattern.

The controller below takes the incoming JSON request data and passes it to the $builder->getWhere(). Some developers might think this to be a clever way to implement query filters, for example when creating an API endpoint. The CodeIgniter documentation does not include a warning regarding completely attacker controlled arrays passed to this function.

    namespace App\Controllers;

    class Home extends BaseController
    {
        public function index()
        {
            $db = db_connect();
            $request = \Config\Services::request();
            $json = $request->getJSON(true);

            $query = $db->table('users')->getWhere($json, 1, 0);
            $row = $query->getRowArray();

            if (!$row) {
                return "not found";
            } else {
                return json_encode($row);
            }
        }
    }

When an attacker submits a JSON request containing an injection payload in the key part, it will not be escaped: {"username":"admin", "\" or 1=1 -- -":"asdf"}.

It is recommended to carefully build WHERE clauses with strictly controlled keys and not let attacker controlled arrays be passed directly to $builder->getWhere().
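One way to keep the keys under strict control is an allowlist. The sketch below illustrates the idea in JavaScript (not PHP). The column names in ALLOWED_FILTER_KEYS and the function pickAllowedFilters() are assumptions made up for this example, not part of CodeIgniter.

```javascript
// Hypothetical allowlist of column names that clients may filter on.
const ALLOWED_FILTER_KEYS = new Set(["username", "email"]);

// Copies only known keys with scalar values into a fresh object.
// Anything else (including injection payloads in the key) is rejected.
function pickAllowedFilters(input) {
  const where = {};
  for (const [key, value] of Object.entries(input ?? {})) {
    if (!ALLOWED_FILTER_KEYS.has(key)) {
      throw new Error(`unexpected filter key: ${JSON.stringify(key)}`);
    }
    if (typeof value !== "string" && typeof value !== "number") {
      throw new Error(`unexpected value type for ${key}`);
    }
    where[key] = value;
  }
  if (Object.keys(where).length === 0) {
    throw new Error("at least one filter is required");
  }
  return where;
}
```

Only the object returned by such a function would then be handed to the query builder, so the attacker never chooses the key strings that end up in the SQL.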

Additional Resources

Does anybody know if this has been documented before? Please send me the links @LiveOverflow.

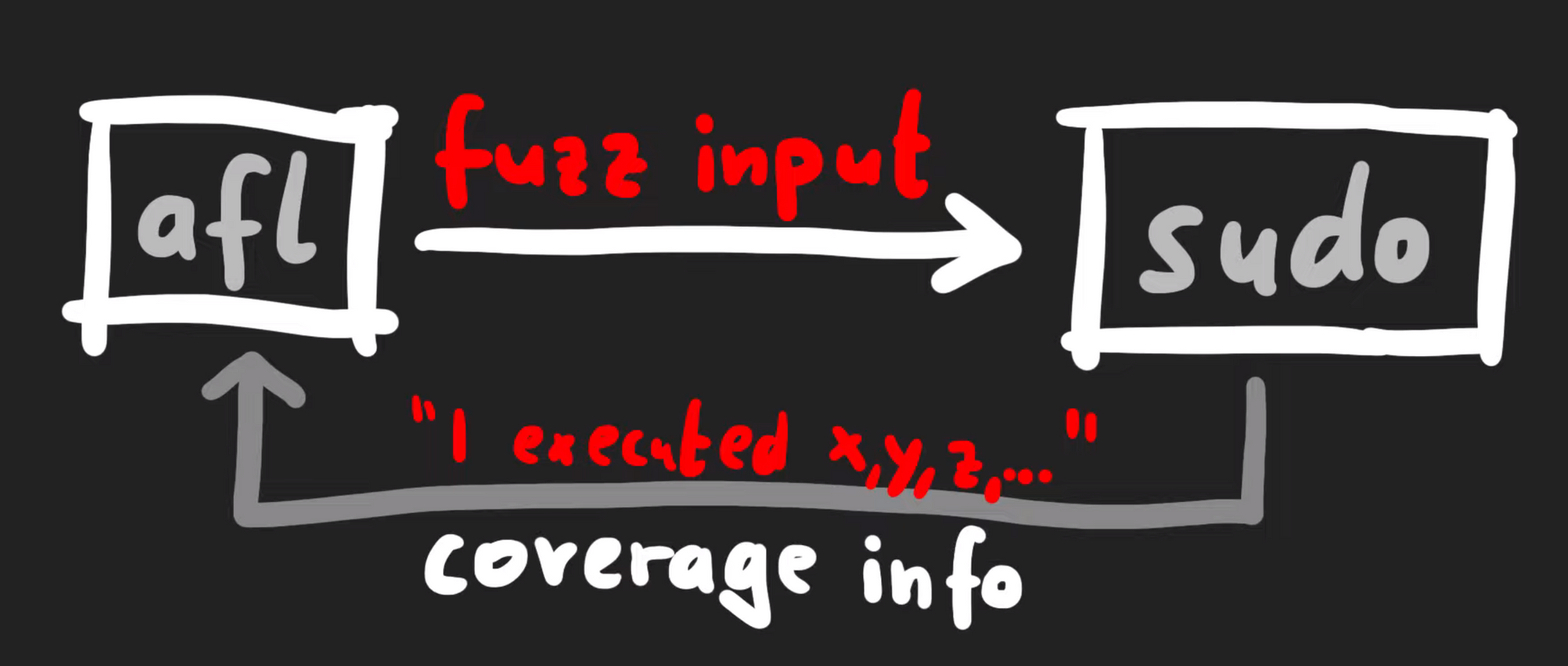

In our attempt to "re-discover" the sudoedit vulnerability (CVE-2021-3156), we use the address sanitizer (ASAN) to investigate a heap overflow. After fixing a build issue, we investigate several other unique crashes registered by the AFL fuzzer.

The Video

Introduction

In the last episode of the sudoedit vulnerability series (CVE-2021-3156), we found a crash using AFL fuzzing that looks quite similar to the actual vulnerability, at least in its format. However, the crash is actually an abort triggered by malloc because the heap seems to be corrupted. This abort is a symptom of a memory corruption that must have happened earlier in the program execution. Now, we need to find when it is that the heap overflows. Finding the root cause of this vulnerability is thus our objective for the day.

If you program in C, there's a tool that you should know about: address sanitizer. It's actually a compiler feature, and it is extremely helpful for debugging all kinds of bugs, not just security ones. We are integrating it into our workflow in the sudoedit vulnerability series because it'll help us track down more information about this crash.

Investigating the Memory Corruption with ASAN

Creating the ASAN Build

Let's build sudo with ASAN (that's "address sanitizer"), so that we can investigate why the memory was corrupted. From The Fuzzing Project, we can find information that will assist us in finding bugs using compiler features, as well as other content, relating to flags to use during compilation.

We added the -fsanitize=address flag to our compilation of sudo. Since we are not fuzzing with afl anymore (we switched to afl++), we can go ahead and just use vanilla clang instead of afl-clang. We then build sudo with make and watch the output in the console. You'll note that there is a lot going on in said console; that's -fsanitize=address at work.

Unfortunately, the build fails... wait, we probably forgot the LD flags. We quickly put them into our build configuration command, and hit the Return key again.

    ./configure CFLAGS="-fsanitize=address,undefined" LDFLAGS="-fsanitize=address" CC=clang

With this step complete, we re-run make, which seems to finish successfully. If we execute the binary with ./src/sudo, we can see that ASAN is working, as it reports memory leaks in the console. However, these memory leaks are not important for security; they just mean that some memory has been allocated and not freed at a later point. We can (and did) disable the memory leak reporting by ASAN, so that the output is a little cleaner.

We pipe in our malicious input into our new sudo build, and let sudo crash with ASAN keeping watch! It... didn't crash. What? We get a password prompt, and then another memory leak? What is going on?

Ironing Out Some Issues

As it turns out, there is a big issue we had to deal with behind the scenes. We wound up killing a lot of time trying to iron it out. We decided to discuss it so that you can see our troubleshooting methodology and avoid making the same mistake. Research and projects (whether security-oriented or even outside of this sector) are rarely straightforward and smooth; there are often snags, detours, and problems to address. Sometimes, these issues are really simple to sort out, and sometimes, they are awfully difficult. It's just normal.

Back to the memory leak: throwing the input at the normally-compiled sudo via our wrapper still works. What gives? There are a few things that we can consider from here.

First: the recompilation. Recompiling with massive changes such as AFL instrumentation can introduce issues, as can using the address sanitizer, for that matter. Large changes such as these can move code around in the binary and thus create a different environment in which the malicious input can no longer cause a crash. It can also be that the input crashed the program because of something in AFL. Here, it seems like something in the ASAN build has changed the binary so significantly that the input doesn't break anything. This is weird, as ASAN builds are typically more sensitive and should immediately report overflows, even if they don't lead to an abort condition later. We were therefore quite suspicious at this point, as the report we expected never appeared.

Where do we go from here? We could try the original crash, as opposed to the minimized one. Alternately, we could increase the overwrite and the data, or generally impart more modifications on the input. Unfortunately, nothing was working.

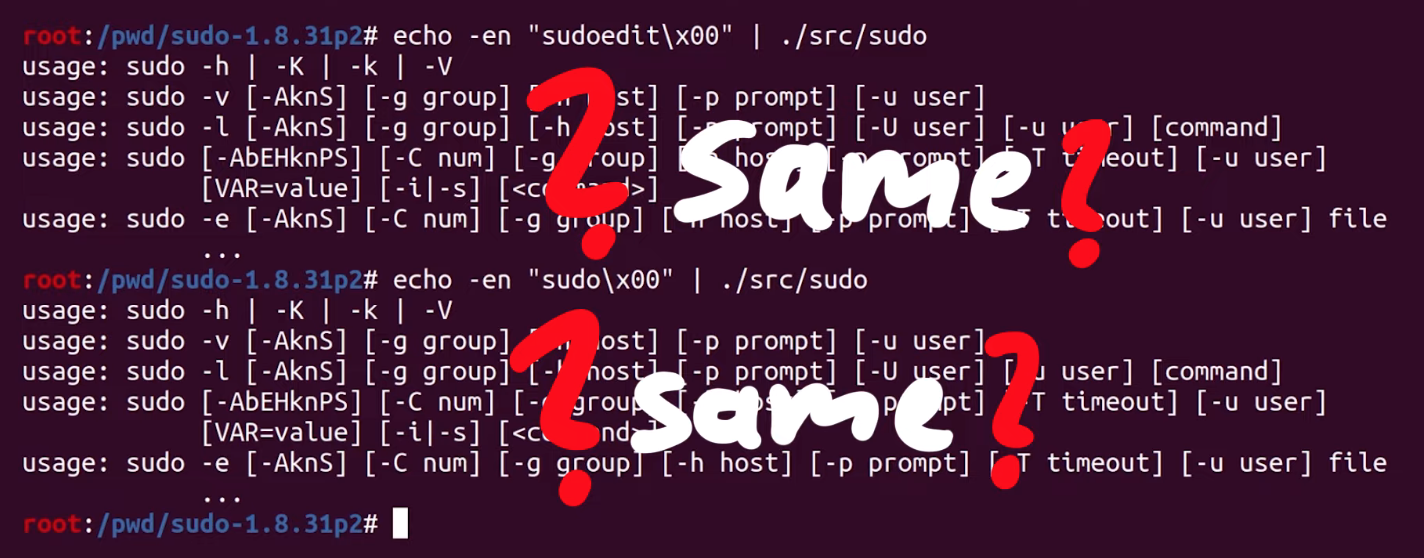

We therefore decided to take a step back, and assess the big picture to try and identify what we should do. We checked whether the argument parsing still worked, by running sudo -l :

    echo -en "sudo\x00-l" | ./src/sudo

Surprisingly, the password prompt popped up in the console. We had expected to see a different output.

It seems like ASAN has changed something else... but that doesn't make a lot of sense. It has to be something else, as using ASAN should not affect the binary like this.

What we have here is a typical weird technical issue. Somewhere, somehow, we have made a mistake. We must have! Backtracking further makes a lot of sense. Removing some of our previous modifications to code as part of preparation for fuzzing might help. We decided to rebuild the "normal" sudo, this time with ASAN, so that we can specify the arguments directly ourselves. In the root folder of the Docker container, there is the unmodified and regular version of sudo, which we build with ASAN. Let's see what happens: ./src/sudo -l works with this version. This is a good sign! We can now create a symbolic link to 0edit and attempt to call 0edit with the malicious argument... but it doesn't crash.

Accidentally Fixing the Issue

It's time for more sanity-checking. Here is a previous Docker system where sudoedit clearly crashes – and that was built from the same source, just without ASAN. In our desperation, we decided to install this ASAN version of sudo as the system version with make install. We created the symbolic link from that new ASAN sudo version as 0edit, tried it, and bam! It works?! Indeed it does: ASAN reports a heap buffer overflow at a specified address. This left us pleased, yet confused. Why did this happen? What was our mistake?

For absolutely no reason, the file ./src/sudo is not the binary anymore.

Previously, this was the path to the built binary, and now, it's a shell script. The real sudo is located in .libs.

That's it. That's the mistake, that's the error that cost us hours of troubleshooting.

If we symlink the actual binary in .libs and try again, we get the ASAN heap-overflow detection. We really, really love computers, but on some days, we really don't.

Improving the ASAN Debug Output

At any rate, we have our ASAN output now. The overflow seems to be happening in a function called set_cmnd(), but the report only shows us the binary offset in the object file and not its exact source code line. We could use a sudo debug build... so we made one with the -g compiler flag. We extended the CFLAGS, so that the compiler includes the -g flag during the configuration. Unfortunately, this didn't really help. Time for gdb!

We tried to set a breakpoint to set_cmnd(), but the breakpoint isn't hit, and thus this approach didn't work. Looking more closely, we noticed the address range of this function: looks like a library address! As a result, we considered the virtual memory map for the sudo process; we can see that the address previously listed must belong to an already-loaded library, perhaps the libsudo_util one.

It's worth it for us to try to build sudo without external libraries – a static build, with everything contained within the binary. This would after all greatly facilitate the debugging procedure.

We can do this with the --disable-shared flag. You might've noticed in previous builds that we used this very same flag. We saw it in The Fuzzing Project's tutorial and figured that we should just use it too. However, when we built sudo with ASAN, we did not specify this flag. Adding it again should disable the creation of shared libraries and make this simpler and nicer to debug.

    CFLAGS="-ggdb" CXXFLAGS="-ggdb" ./configure --disable-shared

By the way, we noticed that the --disable-shared flag dictates whether we get a binary in .libs, or if ./src/sudo is already the binary. Therefore, this was the reason why the binary used to be in ./src/sudo, and why the earlier ASAN build wound up in .libs, which is absolutely not what we expected. This is the issue that ate up hours of our time. So, if you retain any lesson from this video and article... use the --disable-shared flag.

We now have a working and proper sudo build with ASAN enabled and shared libraries disabled. When we test the crash (again), we get painfully clear information about the file and the code line where the crash happened. There we go! Thank you, ASAN. Now, let's have a gander.

At this code line, we find a loop that copies some bytes around. It copies them from the *from pointer to the location of the *to. We also noticed that this loop depends on a check for a backslash, one level upstream. This might explain why our proof-of-concept crash had a backslash at the end. And now, we can start analyzing the bug that we found.

Triaging Other Unique Crashes

It's worth mentioning that during this entire time, afl++ has been at work, trying all kinds of different inputs to generate a crash. It noted three unique crashes! We'll triage them, and see what their root cause is. Maybe we found a zero-day after all!

We can use afl-tmin to minimize the two other crashes, which goes quickly. We then look at the two cases, and surprise surprise: they look the same as the one we've been using thus far. When minimized, they follow the same structure: a variation of the sudoedit call to -s, with a backslash at the end. So, these are the "same crash", but as we explained previously, afl++ looks at the executed edges to determine if you've reached a different functionality, and it's a unique crash because different edges were executed to reach this conclusion.
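The "same bug, several unique crashes" effect can be illustrated with a toy model. This is a deliberate simplification written in JavaScript: real AFL and afl++ track edge hits in a coverage bitmap and apply their own uniqueness rules, and the edge names and crash records below are invented for the example.

```javascript
// Toy model only: a crash counts as "unique" here if its set of executed
// edges differs from every crash seen before. Real fuzzers are more subtle.
function edgeSignature(edges) {
  return [...new Set(edges)].sort().join(",");
}

function countUniqueCrashes(crashes) {
  const seen = new Set();
  for (const crash of crashes) {
    seen.add(edgeSignature(crash.edges));
  }
  return seen.size;
}

// Three hypothetical crashes that all end in the same overflowing code,
// but reach it along different paths.
const crashes = [
  { input: "crash-1", edges: ["A->B", "B->D", "D->overflow"] },
  { input: "crash-2", edges: ["A->C", "C->D", "D->overflow"] },
  { input: "crash-3", edges: ["A->B", "B->C", "C->D", "D->overflow"] },
];

console.log(countUniqueCrashes(crashes)); // 3
```

All three records share the final edge into the faulty code, yet each is counted separately because the path leading there differs, which is why minimizing and comparing the inputs is still needed to spot duplicates.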

Anyway, we're still curious to see if regular afl will find the bug, because so far only afl++ has. We also want to see if specifying sudoedit determines whether the fuzzer can still converge on the vulnerability. We'll leave the fuzzers to continue working while we progress with our series on CVE-2021-3156.

The Next Steps

What could we do next? We could jump forward into exploit development, and try to exploit the crash we found. It could prove to be too hard to exploit, though, without a more thorough and complete understanding of its mechanism. Having this stronger foundation would also mean that exploit development would be an easier task than without the extra background.

It's worth noting that the original Qualys advisory did explain in some detail why this overflow actually happened. It would be beneficial for us to reach the same conclusion. So how does one figure out this detailed explanation?

After all, the theme and the learning methodology of this video series is for us to imagine that we are the researchers, and that we generate this knowledge as organically as possible. Therefore, our role also includes writing a report that goes in-depth to explain the root cause of this vulnerability. So, let's do that next!

The alert(1) XSS payload doesn't actually tell you where the payload is executed. Choosing alert(document.domain) and alert(window.origin) instead tells you about where the code is being run, helping you determine whether you have a bug you can submit.

The Video

Introduction

Cross-site scripting, also known as XSS, is a type of security vulnerability involving the injection of malicious script into otherwise benign websites. This injection is designed to affect other users of the website. Injecting an XSS payload containing alert(1) makes a window pop up when the payload is executed. The pop-up is evidence that the payload was run. Depending on where the code was run, there may therefore be potential for injecting malicious code. This is also a kind of vulnerability that is reported via bug bounties!

The alert(1) XSS Payload

The first clear advantage of using the alert(1) XSS payload is that it is very visual. You can inject the code and see very clearly when it gets executed which is convenient for webpages with lots of inputs. By varying the argument of the JavaScript alert() function, you can quickly locate where the XSS injection has worked.

There is a second upside to using alert(1), and it is that there are some client-side browser JavaScript frameworks with templating that allow some limited form of JavaScript, like printing scope variables or doing relatively basic math. Due to the limitation imposed by the frameworks, you cannot actually inject malicious code, but you can use window.alert(1), since the window object is necessary for webpage functionality. The very same window object also holds the information that an attacker would be the most interested in, such as window.localStorage or window.document.cookie. In this case, successfully executing the alert() can be an indication that your XSS finding has a high severity, and should be reported.

These limited JavaScript templates, however, are no longer used as often as they used to be; over time it became clear that the sandboxes were too difficult to maintain, with many people finding bypasses to circumvent the limitations. There's some information on AngularJS sandboxing attempts if you are further interested in the circumventing we just mentioned, available here. We also have a short playlist covering this topic, available here.

Even though it seems on the surface like a great injection metric, since it shows you if your XSS injection is critically exploitable, this is not the case. Having a window pop up is not necessarily the proof that there is a security vulnerability, in fact. Let's have a look at why this is the case using Google's own Blogger service.

Just a quick note: keep in mind that the bug bounty scope may have changed between the time when we recorded the video and wrote this post, and the time when you are reading this. At the time of recording the video and writing the blog post, the services in scope include:

- *.google.com
- *.youtube.com
- *.blogger.com

For our example, all subdomains of blogger.com are in scope, which is exactly what we need.
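Checking a hostname against such wildcard patterns is easy to get subtly wrong, so here is a small sketch. The pattern list is copied from the list above and may be outdated. The function isInScope() is a hypothetical helper, and treating "*.example.com" as also covering the bare domain is an assumption of this sketch, not a statement about any program's rules.

```javascript
// Scope patterns as listed above at the time of writing; they may have changed.
const IN_SCOPE = ["*.google.com", "*.youtube.com", "*.blogger.com"];

function isInScope(hostname) {
  const host = hostname.toLowerCase();
  return IN_SCOPE.some((pattern) => {
    const base = pattern.replace(/^\*\./, "");
    // Assumption: "*.example.com" covers subdomains and the bare domain itself.
    // The leading dot in the suffix check avoids matching "evilblogger.com".
    return host === base || host.endsWith("." + base);
  });
}

console.log(isInScope("www.blogger.com")); // true
```

The hostname to feed into such a check is the one the payload actually runs on, which is not necessarily the one shown in the address bar.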

XSS with Google Blogger

If you've started using Blogger and taken the time to explore the features, you might have noticed that you can inject some HTML and JavaScript. To do so, create a new blog post and head over to the Layout menu on the left sidebar. There, click on Add Gadget and then on HTML/JavaScript. As the name implies, it allows you to inject a script with an alert(1), like so:

    <script>alert(1)</script>

Now, we don't know where this script actually gets executed, so let's just keep using the Blogger platform to finish our blog post. We type up a well-known snippet of text, and hit the Preview button on the top-right of the blog post page to see what we've come up with so far.

And look who's here: the alert(1) XSS payload trigger! Check out the browser address bar: the URL reads https://www.blogger.com/blog/post/edit/preview/..., so the site is in scope and this means we have found a bug, right?

Not quite, unfortunately. Let's examine why. By changing alert(1) to alert(document.domain) in our code, we have a payload that will tell us what domain we're actually injecting the code into. In our case, it's usersubdomain.blogspot.com, and not blogger.com. The reason for this becomes clear if you use the developer tools to look at the webpage code. You'll see that the blogger.com webpage embeds the usersubdomain.blogspot.com site in an iframe, and the payload is sent to the latter domain, which explains why the trigger didn't output blogger.com but instead usersubdomain.blogspot.com.
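A payload can report both values at once. The sketch below is one possible way to write that; describeExecutionContext() is a hypothetical helper name, and it takes a window-like object as a parameter only so that it can also be tried outside a browser.

```javascript
// Builds the message a proof-of-concept payload could pass to alert() or
// console.log(). `win` is a window-like object; in a browser, pass `window`.
function describeExecutionContext(win) {
  const domain = win.document && win.document.domain
    ? win.document.domain
    : "(empty)";
  const origin = win.origin ?? "(unavailable)";
  return `domain=${domain} origin=${origin}`;
}

// In a browser: alert(describeExecutionContext(window));
```

Whatever this prints is the context the script really runs in, and that value is the one to compare against the bug bounty scope.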

An important question we asked ourselves at this point is: why would Google use two different domains to implement the blogger service? Well, XSS is the reason. To protect themselves and their users, they use sandboxes, as mentioned here.

Sandboxes

Google specifically stipulates that they use

a range of sandbox domains to safely host various types of user-generated content. Many of these sandboxes are specifically meant to isolate user-uploaded HTML, JavaScript, or Flash applets and make sure that they can't access any user data.

So what's important about all of this? The point of an XSS attack is to access data that you supposedly aren't allowed to access. Take for example another user's cookies; these will be on the blogger.com domain, so an XSS attack launched from the usersubdomain.blogspot.com website cannot access the cookies, due to the same-origin policy. The same-origin policy ensures that a script contained in a first webpage can only access data in a second webpage if both pages have the same origin, meaning the same scheme, host, and port. In our case, we have our blog and its script in our sandbox, but the script cannot access anybody else's sandbox, since these webpages do not have the same origin.
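The origin comparison itself can be sketched in a few lines using the standard URL class. sameOrigin() is a name chosen for this illustration, and the check only models the comparison of origins, not everything a browser enforces.

```javascript
// Two URLs share an origin only if scheme, host and port all match.
function sameOrigin(a, b) {
  return new URL(a).origin === new URL(b).origin;
}

console.log(sameOrigin("https://www.blogger.com/", "https://usersubdomain.blogspot.com/")); // false
```

Because the blog content is served from a different host than the editor page, the comparison fails and the injected script stays confined to its own origin.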

usersubdomain.blogspot.com cannot reach cookies on blogger.com.

This is the reason why we want to use the alert(document.domain) or alert(window.origin) payloads; doing so tells us exactly on which domain the XSS is getting executed, which really is the domain that we can access. In this case, it's usersubdomain.blogspot.com.

To summarize, Google lets users add custom HTML - and thus JavaScript - functionality to their blogs so that users have a chance to further customize the content. It's a great feature! To ensure that this feature cannot be used to attack other blog(ger)s with XSS injections, they placed each user's data in its sandboxed environment and then embedded it into the blogger.com domain using an iframe. So, when using an XSS payload, use alert(document.domain) or alert(window.origin) so you can be sure about what domain or subdomain the XSS is getting executed on. This is a deciding factor to establish whether you've found an actual security issue, or a dud.

Sandboxed iframes

Apart from sandboxing domains, it is also possible to sandbox iframes. We've actually discussed some of it before in a previous video (here); there, Google implemented a JSONP sandbox, where they injected an iframe with a user-controlled XSS payload, but also set the sandbox attribute on the iframe. Why? Let's have a look!

We implemented a simple tool that allows us to execute JavaScript expressions via eval.

    function unsafe(t) {
        document.getElementById("result").innerText = eval(t);
    }

For instance, we type 1+2 in the expression box, and the result returned is 3 (no surprises here!).

We've also implemented a secret session token,

    document.secret = "SESSION_TOKEN";

which we can steal by injecting alert(document.secret), as the resulting window pop-up reads SESSION_TOKEN, demonstrating the success of the method.

Let's now modify the script a little to have the script execute within an iframe. We write the new unsafe function as

    function unsafe(t) {
        var i = document.getElementById('result'); // get the <iframe>
        i.srcdoc = "<body><script>document.write("+t+");<"+"/script></body>";
    }

Note that the iframe is given the sandbox attribute:

    <iframe id="result" sandbox="allow-scripts allow-modals"></iframe>

Our previous example of summing 1 and 2 still works.

If we execute alert(1), we get our pop-up window with the result 1, which demonstrates that this code is as vulnerable as the previous example, right?

To find out, we try to get the secret session token with alert(document.secret).

It doesn't work! Let's see what the code yields if we input alert(window.origin) or alert(document.domain): the former shows null and the latter shows nothing at all. Why is this?

This is different from the sandboxed subdomains we saw in the Google Blogger example, but there are parallels. Just like the subdomains with the blogging platform, here the iframes are also isolated from the website they are embedded into, so you cannot access the secret session token.

The result of this experiment reinforces the value of using alert(document.domain) and alert(window.origin): they are extremely helpful in determining whether you have a valid security issue to submit as a bug bounty.

Console Logs

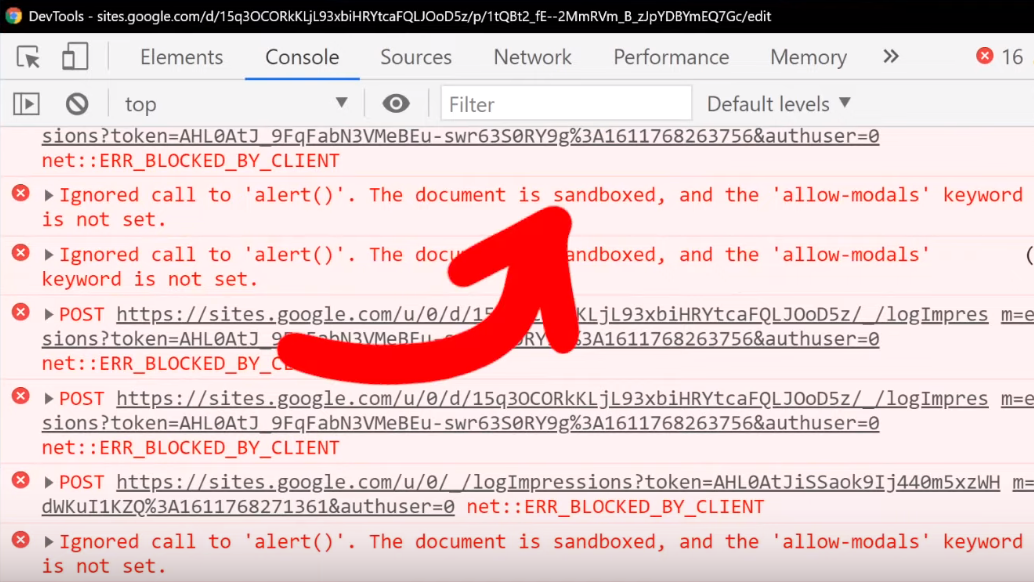

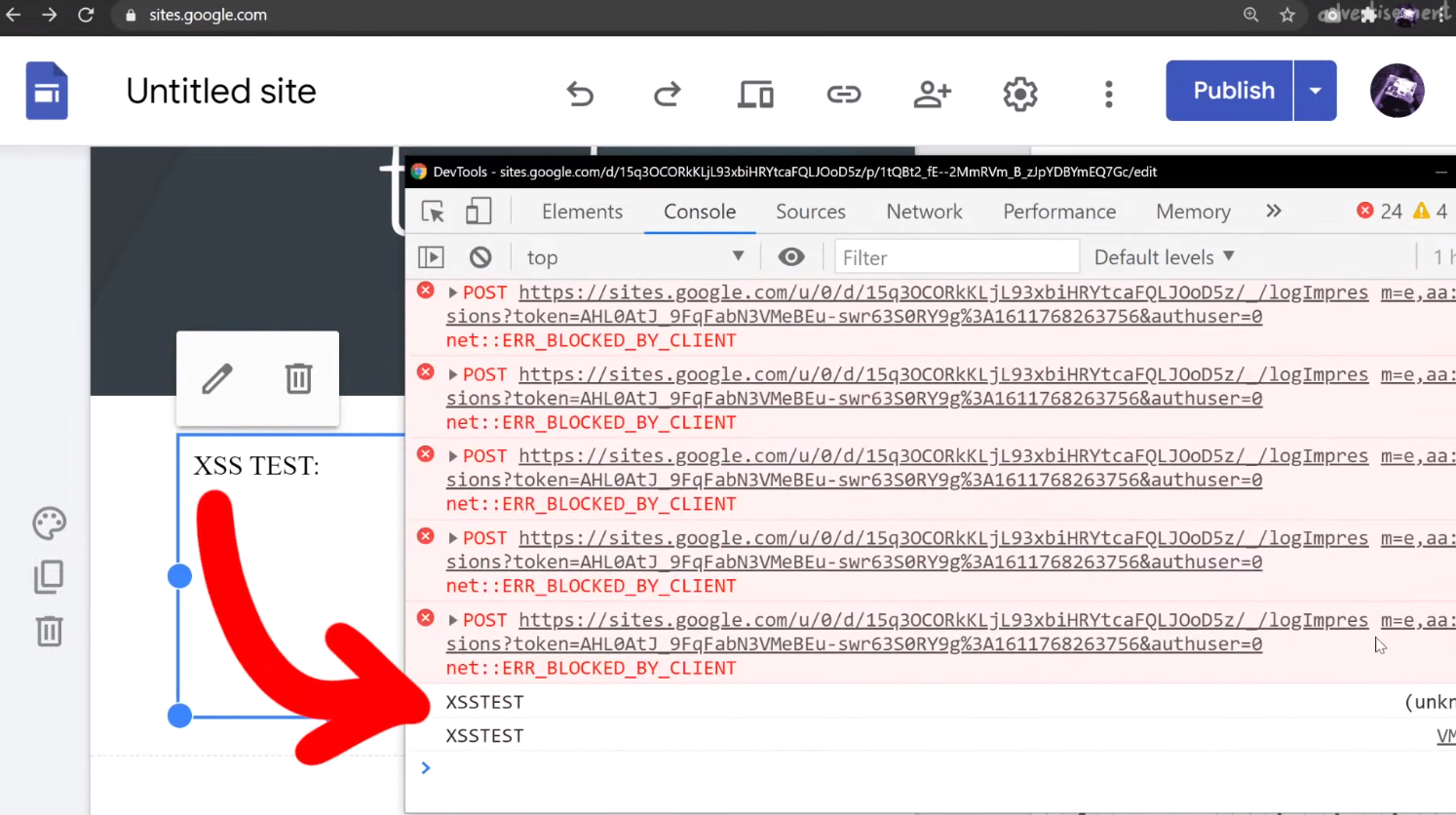

When you find an injection into a sandboxed iframe, typically the allow-modals option in the sandbox attribute is not enabled. For instance, with sites.google.com, we can create our own webpage. Let's use it to embed some raw HTML and JavaScript!

    XSS TEST:
    <script>alert(1)</script>

However, when we inject this payload, nothing happens.

We can investigate further by opening the console in the browser's developer tools. You might notice in the log that the alert(1) call is blocked by the sandboxed iframe. Remember to use filtering if you can't find it in the log.

In this case, it's actually better to use

    XSS TEST:
    <script>console.log("XSSTEST")</script>

to see where, or even whether, your XSS payload is being executed. You'll find that in the console log, there will be the word XSSTEST, or whatever you might specify when you try. Clearly, the payload was executed. Is this a bug?

Again, this unfortunately is not a vulnerability. Let's look at the actual execution context by printing (you guessed it) document.domain or window.origin. Because alert() is blocked here, log the value instead by modifying your script to read

    XSS TEST:
    <script>console.log("XSSTEST"+window.origin)</script>

then you'll see that we are once more using a sandboxed domain, and that's exactly where the payload is being executed.

Closing Remarks

Throughout this article, we showed you that using alert(document.domain) and alert(window.origin) would tell you what domain or iframe your XSS payload is being executed in. In each example, we saw that the payloads were executed in isolated, sandboxed environments. This meant that none could access the object of interest, whether a secret session token, or another user's information.

So, why should you still investigate XSS injections in a sandboxed iframe or subdomain? It doesn't qualify for a bounty, so where is the incentive?

Take for example a website with an embedded (and sandboxed) JSONP iframe. The website typically will communicate with the iframe using a postMessage, and so there could be a way to exploit the messaging system between the website and the iframe to have the XSS payload be pushed to the website and executed there.
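What makes such a messaging channel exploitable is usually a handler on the embedding page that trusts whatever arrives. As a contrast, here is a hedged sketch of a cautious handler. The names handleFrameMessage, frameWindow and showText are hypothetical, and the wiring in the trailing comment is an assumption about how a page might use it, not any real site's code.

```javascript
// Sketch of a parent-page handler for messages coming from a sandboxed iframe.
// `frameWindow` would be iframe.contentWindow; `showText` is a hypothetical
// sink that writes to textContent (never innerHTML or eval).
function handleFrameMessage(event, frameWindow, showText) {
  if (event.source !== frameWindow) return false;   // not from our iframe
  if (typeof event.data !== "string") return false; // accept plain text only
  showText(event.data);
  return true;
}

// In a browser (assumed wiring):
// window.addEventListener("message", (e) =>
//   handleFrameMessage(e, iframe.contentWindow, (s) => { output.textContent = s; }));
```

When auditing, the interesting handlers are the ones that skip checks like these, for example by writing event.data into the page as HTML or passing it to eval.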

This is basically a sandbox escape, though the vulnerability is not with the first XSS in the sandbox, but rather the ability to escape and trigger the XSS on the website.

In summary, Google allows XSS by design in sandboxed subdomains, and a simple alert is not enough to prove that you have uncovered a serious XSS issue. You should always check what domain the XSS is executed on by using the alert(document.domain) or alert(window.origin) payloads. Hopefully you can appreciate the value of sandboxing domains and iframe environments, at least from a defense standpoint. Don't be discouraged though! Having an alert(1) execute could be the start of uncovering something bigger, so keep at it and take notes! We just want you to understand the broader context so that you can better investigate and see if you can find a bug that can get you a bounty. Good luck hunting!

The Video

Introduction

In the last episode of the sudo vulnerability series (CVE-2021-3156), we ironed out some buffer overflow issues with AFL and set up four fuzzing processes. These corresponded to fuzzing sudo and sudoedit using both AFL and AFL++. This is an interesting experiment, as it tests whether AFL or AFL++ can find the vulnerability through fuzzing, and if so, whether it needs a broad or a more specific test case input.

In this article, we pick up from there and analyze the results from this overnight fuzzing session. We also attempt to minimize some testcases for further fuzzing.

Fuzzing Results

Since we let these run overnight, the first thing we did when we rolled out of bed was – naturally – go to check the fuzzing results. Here's what we found:

- The first process, running AFL with the sudo test case, had no crashes and just a couple thousand timeouts to report.
- The second process, with AFL and sudoedit, was running slow and had a whopping 11.6 thousand timeouts, though only 61 were unique. We eventually let that one run for a week and it got some speed going again, but we digress.
- The third, with AFL++ fuzzing just sudo, had no crashes to report, which we found rather interesting. We can however tell you that this process eventually found sudoedit.
- The fourth fuzzing process, running AFL++ and the sudoedit test case, showed 1 crash (and therefore 1 unique crash).

With the "last new path" time counter starting to tick into "a few hours" territory, we figured that the fuzzers might have reached the point where they had already found all the easy paths and that further results would take several more hours. It's difficult to decide whether a little more than an hour is enough or if we should let the fuzzing run for longer; we don't have the experience to judiciously make this decision. Eventually, we decided to call it good and have a look at the unique crash from the fourth fuzzing process.

sudoedit Crash Investigation

Checking the Input File

First, we checked the crash input file itself, looking at it with hexdump. We would have expected the fuzzer to find a variant of the vulnerability with sudoedit -s or something like that. Apparently, this was not the case. However, this input does have a backslash at the end of the argument, so maybe we stumbled on another input variant that triggers the sudoedit bug? Or, it might be a false positive. Let's run this input file through a series of tests to determine whether it's legitimate, or a false positive.
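The "does any argument end in a backslash?" check can also be scripted instead of eyeballed in a hex dump. The sketch below assumes the crash file encodes the arguments as NUL-separated strings (the format our wrapper consumes); the helper names and the sample bytes are made up for illustration and are not the actual crash input.

```javascript
// Assumption: the fuzz input encodes argv as NUL-separated strings,
// for example "sudo\0-l". latin1 keeps a 1:1 mapping between bytes and chars.
function splitArgs(buf) {
  return buf.toString("latin1").split("\0").filter((arg) => arg.length > 0);
}

// Returns the arguments whose last character is a backslash.
function argsEndingInBackslash(buf) {
  return splitArgs(buf).filter((arg) => arg.endsWith("\\"));
}

// Hypothetical sample input, NOT the real crash file:
const sample = Buffer.from("sudoedit\0-s\0AAAA\\", "latin1");
console.log(argsEndingInBackslash(sample)); // [ 'AAAA\\' ]
```

With Node.js, the real file could be loaded via fs.readFileSync("/tmp/crash") and passed to the same function.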

Testing the Input

We copied the crash to /tmp/crash, then piped it as input for the AFL instrumented version of the sudo binary with

cat /tmp/crash | /pwd/sudo-1.8.31p2/src/sudoand watched as a malloc(): invalid next size (unsorted) error popped up immediately.

Maybe this is just a result of using our instrumented binary, so we tried it with our neat wrapper that we wrote in the last article.

cat /tmp/crash | ./afl2sudoWe get once again the very same error.

malloc() error.This is a good sign! Let's do one last test, though. You might have noted that we conducted these tests as root, which we decided to do (a while ago) to facilitate reaching the error. With that in mind, it's important to remember that CVE-2021-3156 involves an unauthorized user privilege escalation, so it makes sense to try to encounter the vulnerability as an unprivileged user instead of as root. We switched users using su user, and ran the same command we immediately did... and it works!

The malloc() error is popping up for the unprivileged user. That's a bingo!

This input passes our three tests. It is thus time for us to look at it in much greater detail.

Inner Workings of the Crash

We used gdb to further investigate the cause of the crash. To help us, we used the gdb extension called GEF, or GDB Enhanced Features, which you can find here. You could also use pwndbg, which is available here. We open up our afl2sudo wrapper with gdb, to properly set up the arguments, and run the /tmp/crash input.

Our binary will execute, set up the arguments, and then execute the real sudo binary... and then we crash, this time with a SIGABRT signal, instead of a segmentation fault. We say "crash", but it's more of an "abort", to be technically accurate. So why did that happen?

Apparently malloc detected an inconsistency with the next size, and decided to bail out and stop the program execution before something broke. This however still means that we must've corrupted something on the memory heap.

malloc aborted as soon as it detected the inconsistency.

The backtrace gets us a list of the functions that were executed, and leads us step-by-step to the point where malloc aborted the program. Some of these functions are from malloc, so they belong to libc, whereas the last one was from sudo, namely, sudo_getgrouplist2_v1. When we use the up command to move into that stack frame, gdb can also show us the source code line that caused the program to abort. In our present case, the function tried to reallocate something, but malloc detected a heap inconsistency and aborted. This in turn means that we did in fact cause some kind of heap corruption. Neat.

This is of course pretty exciting, but we need to better understand this crash and the mechanism underlying it. Is this a variant of the sudoedit vulnerability? Or is this, dare we say, maybe a new and different sudo crash? After all, the test case did not contain sudoedit. Let's not get prematurely excited though. This is probably not a different sudo crash, but we figured that we could at least consider the option if only for a short, fleeting moment.

Keep in mind that the code we've run through is available on GitHub. Feel free to play around with it; perhaps you can figure out what happened!

Further Testing

The next test we carry out is to see whether the sudo program in the latest version of Ubuntu, which has been patched to address CVE-2021-3156, also crashes. If that's the case, then we would know that it's a new zero-day. We edit our afl2sudo wrapper code to use it outside of our Docker container and execute the actual sudo program that ships with Ubuntu rather than our own build; we do so simply by changing the execution path in the wrapper. We then compile our wrapper with gcc, and pipe the original input file to the new wrapper. No dice: the patched sudo does not crash.

We knew that our crash being a new zero-day was a bit of a stretch, but we had to find out for sure. It is possible however that this crash is a variant of the sudoedit CVE-2021-3156 vulnerability... but still a curious variant. Indeed, it seems to not include sudoedit. Remember, sudo shows different behaviors based on whether sudo or sudoedit is invoked (via symlinking). Also, from the console output, you can see that the program name for sudoedit is botched, while the argument lists correspond to sudo. This is quite the curious behavior.

Input Test Case Minimization

At any rate, this test case input file we have is quite complex, and has a significant amount of extraneous information. As a result, we want to minimize this file, by which we mean reduce its contents to the core material. This allows us to have a shorter file to act as a proof-of-concept test case, and it is significantly easier to work with and understand. On the AFL++ GitHub readme, you can find out more about the minimization of test cases. We have no experience with any of these, and we do want to try them all. We'll start with the actively-maintained halfempty project from Google Project Zero.

halfempty

To use this program, we have to create a small wrapper shell script that checks if the input crashed the program or not. The example code provided on the project GitHub page looks like this, for gzip:

#!/bin/sh

gzip -dc

# Check if we were killed with SIGSEGV
if test $? -eq 139; then
    exit 0 # We want this input
else
    exit 1 # We don't want this input
fi

The exit value of 139 corresponds to a segmentation fault, but we're interested in the SIGABRT signal, with code 134. We checked this by using echo $? after creating the crash by piping the input test case into the instrumented sudo binary.
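Where do 139 and 134 come from? Shells such as bash and dash report 128 plus the signal number when a child is killed by a signal, and on Linux SIGSEGV is 11 and SIGABRT is 6. Here is a minimal C sketch of that mapping; the helper names are made up for illustration, and the second helper simply forks a child that aborts so we can look at its wait status.

```c
#include <signal.h>
#include <stdlib.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Value that bash or dash would show in $? for a child killed by `sig`. */
int shell_status_for_signal(int sig)
{
    return 128 + sig;
}

/* Fork a child that calls abort(), then translate its wait status the way
 * a shell would. Returns -1 if fork() or waitpid() fails. */
int shell_status_of_aborting_child(void)
{
    pid_t pid = fork();
    if (pid < 0)
        return -1;
    if (pid == 0)
        abort();                /* raises SIGABRT in the child */

    int status = 0;
    if (waitpid(pid, &status, 0) < 0)
        return -1;
    if (WIFSIGNALED(status))    /* killed by a signal: apply the 128 + N rule */
        return shell_status_for_signal(WTERMSIG(status));
    return WEXITSTATUS(status); /* normal exit: the status is passed through */
}
```

On a typical Linux system, shell_status_for_signal(SIGSEGV) gives the 139 from the gzip example, and the aborting child yields the 134 we are after.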

We thus modify the script to fit our needs:

#!/bin/sh

/pwd/sudo-1.8.31p2/src/sudo

# Check if we were killed with SIGABRT
if test $? -eq 134; then
    exit 0 # We want this input
else
    exit 1 # We don't want this input
fi

Now, we call halfempty with the minimizing shell script and our crashing input. To our surprise, it doesn't work – the bisection strategy failed. We tried it ourselves by using the command suggested in our console, and it seemed to work, since the return exit code from the script was indeed 0, just as we wanted. At this point, we just gave up on halfempty. Let's try another method.

afl-pytmin

How about this one? It seems to be a wrapper around AFL, so let's try it! We adjusted the path to our input file and the other parameters, and hit the Return key. The console quickly prints [i] Processing /tmp/in_crash/1 file... before dropping us back at the shell prompt. Is it... done? We check the file it created, and it's empty. That's interesting. Maybe an empty input file also crashes sudo? Unfortunately, that doesn't work either.

afl-tmin

At this point, we were pretty frustrated, so we went with the minimizer that ships directly with afl. We fed it the crashing input, a destination to write the minimized file to, and finally the instrumented binary. Once again, we hit the Return key and the program runs quickly and finishes in a couple of seconds. In fact, we thought that once again we were facing a failure.

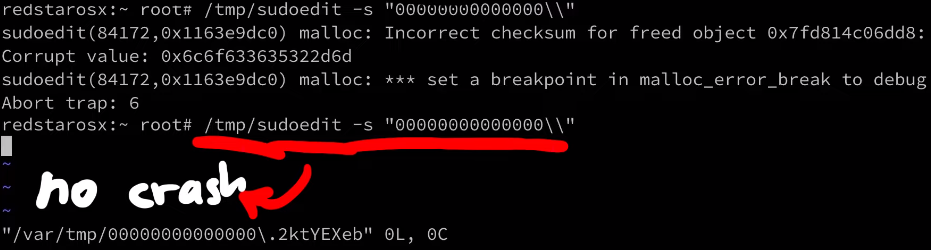

We checked the output file with hexdump, and it's very clean now, certainly a lot more than our initial test case file was. That's it, 0edit -s 00000\ is our crashing input! This looks like our sudoedit vulnerability.

Let's try it by first switching to the unprivileged user account using su user, change directory, symlink 0edit to sudo, and then punch it in with our -s 00000\\ flag and arguments (the double-backslash is due to needing to escape the backslash), and then adding a couple more 0s for good measure and... it crashes!

It's interesting that calling just 0edit prints the sudo help to the console, whereas sudoedit prints the sudoedit help as expected. Even so, 0edit still goes into the sudoedit argument parsing code and then crashes. Interesting behavior for different symlinks.

We can also play a little with the name and try different variants, and it's really weird why it works. After all, it's not like the name can be just anything, it's weirdly specific and has to follow a <name>edit format, with at least one character before the edit portion.

We mentioned in passing in a previous video that there is a check that only compares the end of the program name to "edit", but we only made this connection thanks to the test cases we just discussed.
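A suffix check of the kind described could look like the sketch below. It is an illustration based on the behavior we observed (0edit works, and at least one character is needed in front of edit), not a copy of sudo's source, and the function name is made up.

```c
#include <string.h>

/* Returns 1 when `progname` would be treated as sudoedit by a check that
 * only looks at the last four characters, and 0 otherwise. */
int invoked_as_edit(const char *progname)
{
    size_t len = strlen(progname);

    /* Require at least one character in front of "edit". */
    return len > 4 && strcmp(progname + len - 4, "edit") == 0;
}
```

With a check like this, sudoedit and 0edit both match, while edit on its own and plain sudo do not.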

Final Words

Now that we have a minimized test case, we can investigate this further. As mentioned, we are crashing when sudo tries to call realloc(). It detects that some metadata value is invalid, which means that the heap metadata was corrupted, too, having been overwritten at some earlier point. So, we now need to figure out where exactly the heap corruption occurs. In fact, you can try it out yourself. How would you find the true location of the overflow? We'll explain our methodology in our next video.

sudo vulnerability (CVE-2021-3156). The crashes are surprisingly due to buffer overflows in AFL itself, so we set out to fix it. Finally, we set up AFL and AFL++ running in parallel with different test cases to see if we could find the vulnerability.

The Video

Introduction

In our last video and article, we set up afl to fuzz the sudo binary in order to find the sudoedit vulnerability (CVE-2021-3156). We let it run over a day, and then decided it was time to check the results. afl's dashboard indicated that we had some crashes (49 unique ones), so there was definitely a chance that we'd found the sudoedit vulnerability! You know it: we have to investigate.

Checking Crashes

Finding the Fuzzed Argument

If you remember the way afl works and is set up, you'll know that afl stores each input that crashed the fuzzed binary in the out folder, which for our purposes is located at /tmp/out3/.... Since we ran four parallel fuzzing processes last time, we also have four output folders in the aforementioned directory, aptly named f1, f2, f3, and f4. Each of these folders contains a crashes subfolder, which itself contains the crashing inputs. Since they are binary files, we use hexdump to read their contents:

hexdump -C /tmp/out3/f2/crashes/id:000000,sig:11,src:000641,op:havoc,rep:8

hexdump to the rescue.

The output needs to be read as a list of command arguments separated by null bytes. The first one should be the program name, in argv[0]. However, our test case shows neither sudo nor sudoedit in argv[0]. Could afl even have found the sudoedit vulnerability?

To go faster, we used grep to parse through all the output files for the keyword sudoedit using:

grep -R sudoedit .

This lovely command should sort us out. It returns... nothing. Absolutely nothing. This is not at all what we were hoping to see. On one hand, we have some crashes, and on the other, none seem to be sudoedit. But, we might have found another zero-day in sudo, and that is exciting! You know what we have to do: keep digging.

Internal Testing

As a first test, we can cat (that is, output) the contents of a crashing input and directly pipe them to our sudo binary using

cat id:000049,* | /pwd/sudo-1.8.31p2/src/sudo

and we can in fact confirm that this input crashes the binary (via a segmentation fault). Excellent!

To better understand how the crash occurs, we use gdb in conjunction with the input, typing in the following into our shell:

gdb /pwd/sudo-1.8.31p2/src/sudo

Just a quick note: we installed the pwndbg plugin into the container to get a nicer gdb output. The installation consists of copy/pasting three commands, which you can find here, on the GitHub page.

We run the binary, piping one of the crashes in. As expected, the crash occurs, and we get the details about the reason. It turns out that... afl_init_argv.in_buf() is to blame? What? But afl_init_argv() is from the header file! The afl_init_argv() function also has an in_buf[MAX_CMDLINE_LEN] static char variable that holds the fake argv arguments. What's going on here?

If we take a look around with gdb, and specifically looking at the step-by-step of what happened before the crash, we find that indeed we crash inside of sudo, and we find a call rax to a function pointer that is in sudo_warn_gettext_v1. We thus have a reason to be fairly suspicious of what is going on here.

Before we continue, let's consider the possible reasons for what we are seeing, and think about how we can sort this out.

Proof of Concept

Before we carry forward with this, we wanted to create a simple proof of concept that crashes regular sudo, not the afl instrumented one, the actual system sudo. If the input crashes the system sudo, then it's a "legitimate" input. If not, we'll have a clear sign that something else is wrong in our methodology. Sounds good? Let's set it up.

The first step is to convert the crash input into an actual set of arguments we can use to execute sudo. We can actually reuse the argv-fuzz-inl.h header file to create a simple wrapper to use the argv macro to pass in the fake arguments and execute the real sudo binary with these fake arguments. Our code looks like this:

#include <stdio.h>
#include <unistd.h>

#include "argv-fuzz-inl.h"

int main(int argc, char *argv[], char *envp[])
{
    AFL_INIT_ARGV(); // argv is now the fake argv, read from stdin
    execve("/usr/local/bin/sudo", argv, envp);
    perror("execve"); // only reached if execve itself failed
    return 1;
}

argv are being passed into the execve function to be used as arguments with the real sudo.

It's test time: running

echo -en "sudo\x00-l\x00" | ./afl2sudo

should execute sudo -l, and in fact it does! So the wrapper works. Cool, so let's try the crash file, now!

cat /tmp/crash | ./afl2sudo

and a lovely Segmentation fault (core dumped) gets output to the console. This is excellent! This could be another zero-day! Let's further investigate with gdb, by debugging our own wrapper (yes, the one we just wrote) and piping in the crash input.

We quickly see that we crash inside of our own program, and once again, it's inside the AFL_INIT_ARGV() function; we didn't even reach the execve function to execute sudo that we called in the next line of our wrapper. Therefore, this leads us to the conclusion that we have a bug in our fuzzing setup. It's not what we wanted to conclude, but it is the reality of the problem. Let's fix it!

Counting High

We decided that the first step in our solution should be the classic "add printf statements to see what the program is doing" approach. It is certainly crude, but it works. In the afl_init_argv() function from the argv-fuzz-inl.h header file, we added the following line,

printf("[*] rc=%d ptr=%p -> %c\n", rc, ptr, *ptr);

which gives us a readout of the values of rc and the pointer. After compiling and running, we immediately see that rc's value is climbing fast, quickly going past 1000... even though MAX_CMDLINE_PAR, which sizes the array of fake argv pointers, is fixed at 1000. This means that we are in fact causing a buffer overflow: we are writing pointers from our fake argv past the end of that array, into some other memory location. This also explains why we see crashes with afl. Therefore, the crash afl found through fuzzing is a bug in afl's experimental argv wrapper code.

Since our fake argv lives in a static buffer, which is basically a global variable, our input must've caused rc to go past 1000, thereby overwriting data it shouldn't be touching. This includes a function pointer used by sudo_warn_gettext_v1, which got overwritten by a pointer from our fake argv.

Though this did not immediately crash sudo, in some instances the sudo_warn_gettext_v1 function was executed using the overwritten pointer, causing the crash that got logged by afl.

The Solution

Fixing Wrappers

With this issue clarified, we fixed the wrapper by ensuring that rc can never exceed MAX_CMDLINE_PAR's value of 1000.

while (*ptr) {
    if (rc >= MAX_CMDLINE_PAR) {
        break;
    }
    ret[rc] = ptr;
    // a lot more code goes in here, but we like properly closing the while loop for code cleanliness.
}

rc doesn't exceed MAX_CMDLINE_PAR.

This should address our false positive crashes. We once again recompiled sudo, and prepared the fuzzing setup.
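To make the idea concrete, here is a self-contained sketch of a bounded splitter for a null-separated fake argv. It is not afl's actual code: the function name and parameters are made up, it assumes the input buffer ends with two null bytes, and it goes one step further than our fix by keeping one slot free for the NULL pointer that execve expects at the end of argv.

```c
#include <stddef.h>

/* Split `buf` into null-separated arguments. `buf` must end with two null
 * bytes (an empty argument ends the list). At most max_slots - 1 pointers
 * are stored in `out`, followed by a terminating NULL. Returns the number
 * of arguments stored. */
int split_fake_argv(char *buf, char **out, int max_slots)
{
    int rc = 0;
    char *ptr = buf;

    if (max_slots < 1)
        return 0;

    while (*ptr) {
        if (rc >= max_slots - 1)  /* keep one slot for the NULL terminator */
            break;
        out[rc++] = ptr;
        while (*ptr)              /* advance to the end of this argument */
            ptr++;
        ptr++;                    /* step over the separating null byte */
    }
    out[rc] = NULL;
    return rc;
}
```

Feeding it the sudo\0-l\0 input from earlier yields two arguments; with room for only two slots it stops after the first one instead of writing past the array.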

Running the Fuzzer

This time, we decided to spice things up a little and get some further experimentations going. Let's summarize the setup:

- The first fuzzing process is the same as what we've been using previously. We added a test case with argument flags from the sudo manual page, so that afl can use some correct arguments in its fuzzing strategy.
- The second fuzzing process includes the same flags as the first process, in addition to a sudoedit test case, to see if the fuzzer can find the vulnerability. Using this approach helps accelerate the fuzzing, as we are leaving less information for the fuzzer to go through. This test case therefore allows us to see if our setup can find the vulnerability altogether.

Both of the previously-described cases use afl. We decided to use afl++ for the remaining two cases, which mirror the first two in their test cases. afl++ is said to be

a superior fork to Google's afl - more speed, more and better mutations, more and better instrumentation, custom module support, etc.

as mentioned on the GitHub page. To facilitate the setup if you want to follow along with us, we added the installation to the Docker file for this episode. Instead of afl, the image installs afl++. The usage is pretty similar, so we didn't need to change anything except for using afl-cc as the compiler:

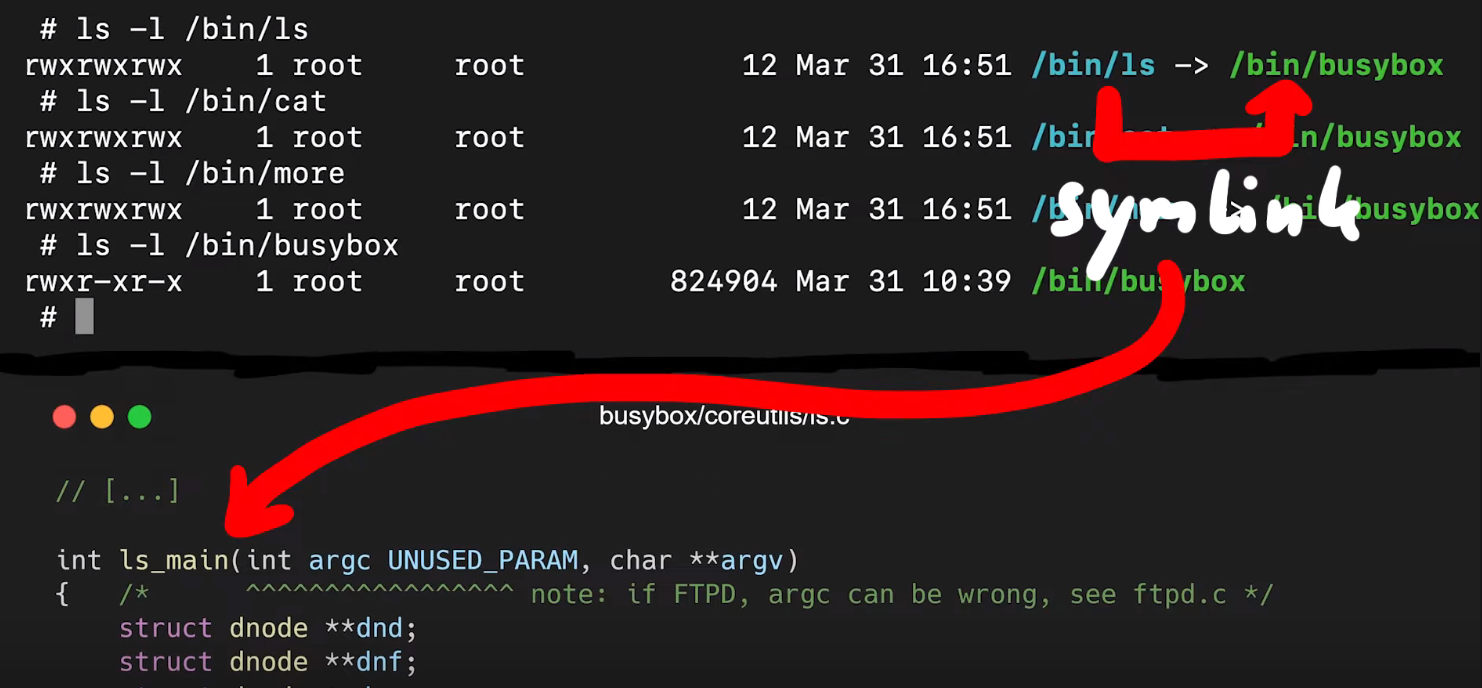

CC=afl-cc ./configure --disable-shared && make

Any other variant, such as afl-clang-fast, is just a symbolic link (or "symlink") to afl-cc. With the configuration done, make builds sudo. Now, we use the same input test cases as mentioned above, this time with afl++ instead of afl. Our four fuzzing processes are thus:

- afl fuzzer, flags from the sudo manpage
- afl fuzzer, flags from the sudo manpage and the sudoedit test case
- afl++ fuzzer, flags from the sudo manpage
- afl++ fuzzer, flags from the sudo manpage and the sudoedit test case

With those four processes now running, we have a good way of seeing the differences across the test cases and fuzzers.

The Fuzzing Dashboard

This entire time, we haven't actually discussed what the afl (or afl++) dashboard looks like, and what information it contains. We only mentioned that it was pretty to look at; it's time to talk about what we can learn from it.

There are various sections of interest on the dashboard. The process timing section presents how long the fuzzer has been running for (the wall time, to be very specific), as well as the last new path, unique crash, and unique hang. Using this section is a great way to decide early on if your fuzzing approach is working. This brings us to the question of time, and deciding when to call it quits.

The process timing section of the afl dashboard.

Time spent fuzzing is relative. Some projects can involve fuzzing that is completed in a matter of minutes. Some could require multiple days or (dare we say it) weeks. When to decide to cut your (time) losses is entirely up to you.

The stage progress section in particular contains information about the fuzzing speed. This is a great way to see how many executions are occurring every second, but it is also a way of spotting whether you're running into a resource problem, as we did in a previous episode of this series. Look at the number though: something like 200 or 300 executions per second is really nice when you think about it! That's a lot of executions of the sudo binary. Again we need to think about the project scale, though. For us, this is probably enough. For larger fuzzing campaigns, 200 or 300 executions per second might be on the low side. The section also features the total tally of executions. Expect this number to be large.

stage progress, with the execution speed.

The findings in depth section gives you information about crashes, including the total number of crashes (including unique ones). The number of timeouts is also provided; timeouts are for when the program runs into an endless loop or a lengthy calculation. After a given amount of time, a timeout is considered to have occurred and the relevant tally increases. If your timeout counter is really large, either you need to increase the timeout cutoff value, or investigate further and find out what is causing the timeout to occur on the fuzzed program's side.

The findings in depth section gives you more detailed information about crashes, timeouts, and the edges and favored paths taken by the fuzzer.

The fuzzing strategies section details some mutations between runs, but we won't pretend that we are fuzzing experts, so we cannot say for sure whether some of the values showing up in this section of the dashboard indicate something weird occurring with either the fuzzer or the fuzzed program.

At any rate, let's let the fuzzers run, and we'll see later what it is that they have come up with.

Final Words

In this episode of the CVE-2021-3156 sudo vulnerability series, we found some crashes using afl! Upon further investigation, we found that these crashes were not related to sudoedit. In fact, the crashes were happening instead in afl_init_argv.in_buf(), which is part of the afl_init_argv() function from the header file we used to set up the fuzzing! There, we were causing a buffer overflow, itself causing the crashes afl reported.

After ironing out this issue with a proof of concept and enforcing a limitation on the value of the rc counter, we returned to fuzzing, this time running two cases for both the afl and afl++ fuzzers. This way, we can find out what fuzzer works best, and whether they are even able to find the sudo vulnerability using either the sudo flags by themselves, or with some help by pointing the fuzzer directly at sudoedit in addition to fuzzing with the flags.

In our quest to rediscover the vulnerability, we have set up a fuzzer, crashed it, modified it, run it with test cases, crashed it again, run out of computing resources, gotten false positives... Finding a bug like this sudo vulnerability using fuzzing is not an easy task, so we are happy with the progress we have made! It has been quite the learning experience so far. In the next episode, we will go over the results of this latest fuzzing iteration, and continue on. In the meantime, happy fuzzing!

You can find the files on GitHub here: https://github.com/LiveOverflow/pwnedit/tree/main/episode04

In our quest to find the CVE-2021-3156 vulnerability through fuzzing, we found that afl was causing our computer CPU and disk resources to get all used up. Using a couple of lines of code, we turned that to our advantage, so afl can now tell us if a file has been created while it fuzzes sudo. We also address some userid issues so that we can fuzz as root but invoke sudo as an unprivileged user.

The Video

Introduction

In the last article of the CVE-2021-3156 vulnerability (re)discovery series, we successfully got AFL to fuzz sudo using the LLVM compiler and modifying a bit of code in progname.c. We then left AFL to fuzz sudo overnight.

So far in this series, what we've found out is that fuzzing sudo is not as trivial as we anticipated. In this article, we'll pick up where we left off, discussing the result of this bout of fuzzing, and making further progress.

Parallelization and Resource Usage

Running Out of CPU...

After only running our four parallel processes for an hour, we noticed that the execution speed reported by afl was struggling at around 30 executions per second, which afl itself flagged as (slow!). This wasn't going to get us to the vulnerability very quickly! Time to investigate.

We decided to stop and restart one fuzzing process to see what was going on. Stopping was no issue, but on restart, the program aborted because our machine had run out of CPU cores to allocate to it. How's that?!

Using ps aux to pull up the running processes list, we found tons of /usr/bin/vi processes. It seemed to us like afl, in its fuzzing frenzy, found arguments that launched the vi editor... but didn't close it. So we wound up with an accumulation of vi editor processes that were eating up our available computational resources. We set everything straight by killing the relevant processes with pkill vi. We started fuzzing again, with no more issues. Great! This is just a band-aid fix though, and we figured that it would be better to permanently sort this.

pkill all of those pesky vi processes.

Let's take a step back, consider the big picture, and think about this for a second. The fuzzing operation started the vi editor multiple times, and kept the processes running. Who is to say that it wouldn't launch other programs that would also consume precious computational resources? The best way to address this is to prevent any launching of programs. This is not to say that you cannot find a privilege escalation vulnerability through code execution, but we are specifically interested in a memory corruption issue. Therefore, it makes sense for us to prevent the fuzzer from launching other programs.

There are several variations of exec that are available in Linux, such as execl, execlp, execle, execv... so we searched for these terms in the sudo source code and commented each relevant line out. This is akin to having every exec call fail.

Commenting out the execs and their variations. Don't forget to recompile!

Good news: the fuzzing speed has now improved! Time to catch a bit of sleep overnight.
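As an aside, an alternative to commenting the calls out would be to route them to a stub that always fails. The sketch below is a hypothetical illustration of that idea, not what we did in the sudo source; the name fuzz_execve and the choice of EACCES as the error are assumptions.

```c
#include <errno.h>

/* Hypothetical stand-in for execve() in a fuzzing build: it never launches
 * a program and reports failure the way a failed execve() would. */
int fuzz_execve(const char *path, char *const argv[], char *const envp[])
{
    (void)path;   /* arguments are deliberately ignored */
    (void)argv;
    (void)envp;
    errno = EACCES;  /* pretend we were not allowed to run the program */
    return -1;
}
```

The caller then takes its normal error path, so no vi (or anything else) gets spawned while fuzzing.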

... And of Disk Space

In the morning, we checked the afl fuzzing dashboard, excited to see what we had missed while we were getting some well-deserved rest. Oh... we got an error message: unable to create /tmp/out/f2/queue/... due to the lack of remaining available space on the storage device.

Interesting. We couldn't even create a new file ourselves in the terminal. Using df -h, we checked out the disk usage. Curiously enough, the shell output showed only 32% of disk usage. So how could we have run out of space? Thanks to some extensive googling, we found out that we could check the allocated inodes using df -i. Sure enough, as indicated by the shell, all 100% of the inodes were used. What are inodes, anyways? As summarized in the corresponding Wiki,

The inode (index node) is a data structure in a Unix-style file system that describes a file-system object such as a file or a directory. Each inode stores the attributes and disk block locations of the object's data.

The number of inodes a file system can hold is limited. Effectively, as the article mentions, it is possible to run out of inodes without actually having filled the disk. In our case, this is a sign that afl created tons of small, individual files. We tried to track where all of these files actually were. It turns out that many of them were housed in /var/tmp, and by many, we mean a whopping 2.3 million files (2,328,889 files, to be specific). That's an issue, as the fuzzer should not be creating files via sudo, as that's not what we are interested in! With all that noted, we noticed that the filenames in this directory were pretty long and random, which indicates that user input can control the file name. Since sudo runs as root, maybe there is a path traversal where we can inject ../ into the /var/tmp filepath and write a root-owned file somewhere.
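If you want to keep an eye on inode usage from code rather than from df -i, the POSIX statvfs() call exposes the total and free inode counts. The sketch below computes roughly the percentage that df -i shows; the function name is made up for illustration, and df's own number can differ slightly because it accounts for inodes reserved for root.

```c
#include <sys/statvfs.h>

/* Percentage of inodes in use on the file system containing `path`.
 * Returns -1.0 if statvfs() fails, and 0.0 if the file system does not
 * report an inode count. */
double inode_usage_percent(const char *path)
{
    struct statvfs st;

    if (statvfs(path, &st) != 0)
        return -1.0;
    if (st.f_files == 0)
        return 0.0;

    double used = (double)(st.f_files - st.f_ffree);
    return 100.0 * used / (double)st.f_files;
}
```

Calling inode_usage_percent("/var/tmp") during a fuzzing run would have shown the number creeping toward 100 long before afl failed to create new queue files.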

Unlike exec, which we promptly removed on discovery, we want to use the file generation to our advantage. afl logs the arguments that caused a crash. By forcing a crash to occur when a file is about to be created, we can make afl tell us which arguments of sudo create a file. This is a fuzzing trick that has nothing to do with memory corruption, but we can use it so that afl signals to us that it encountered conditions that we are interested in. We use

printf("mkstemps(%s)\n", suff);
*(int*)0 = 0; // force crash
tfd = mkstemps(*tfile, suff ? strlen(suff) : 0);

The second line of this code snippet forces a null dereference, causing a segmentation fault that triggers the afl logging of the crash. The offending file's path is also output, so we can find it at a later time. Now, when afl finds a crash, we should get an example argument list that causes the crash, as well as the location of the new tmp file that was created. With the compilation and restart of the fuzzing processes completed, afl quickly began picking up crashes, meaning that we could start investigating.

afl is picking up crashes. Neat!

Let's consider an example input. We used hexdump to output the file contents both into the shell as well as a file located in /tmp/mktemp. We can then use cat to pipe the file contents as an input to sudo. Good news: we get the expected crash.

There is a caveat though.

To root Or Not To root...

Throughout this entire procedure, we were running and fuzzing as root. Can a regular user even reach the arguments that lead to temporary file creation? Time to find out. We switched users to a "normal" one (aptly named user) and ran the same tests, but we get an error stating that the effective uid is not zero, indicating that we are not root. Is sudo installed setuid root?

We copied the binary we had to /tmp/sudo and set the proper uid permission bit. Now, we have a setuid sudo binary and so we can try our payload once again.

We get a password prompt. Yikes! This is in fact a fuzzing issue that we hadn't even considered. Effectively, we are targeting a binary that is set up such that it runs with setuid, and we are interested in the special case where the binary is invoked by an unprivileged user but runs as root with setuid. However, we cannot simply fuzz a setuid binary as a user, as afl would not work due to not being able to communicate with the privileged setuid process.

Turns out afl can fuzz a setuid process, but I must have made some other mistake and misinterpreted the error. I concluded it wouldn't work. Either way, the solution still shows an important technique when fuzzing: modifying the target to help the fuzzing efforts.

Therefore, we either run completely as user or as root, but we cannot do both (one for fuzzing and one for running). Using both is not representative of normal sudo usage, therefore, we want to avoid this setup entirely. In light of this, we need another plan.

... That Is the Question!

We wound up deciding to fuzz and run as root, but somewhere in this process, sudo should get the current user. If we find that location, we can thus modify it and force it to think that an unprivileged user invoked it. The typical function to get the userid of the user that executed the program is (drum roll, please) getuid().

Searching for all the uses of getuid(), we found the get_user_info() function in sudo.c. Instead of letting getuid() return 0 for the root user, we simply hardcoded the value of 1000, which is the userid for a regular user. After compiling, we used our /tmp/mktemp payload once more. Instead of facing the temporary file creation as we did running as root, we now were staring straight at a password prompt, just as we would if we were an unprivileged user. Bingo!
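In spirit, the change boils down to something like the sketch below. It is a simplified illustration rather than the actual patch to sudo.c: the helper name, the fuzzing_build switch, and the FUZZ_FAKE_UID constant are all made up, and 1000 is just the uid a first regular user typically gets on many Linux distributions.

```c
#include <sys/types.h>
#include <unistd.h>

#define FUZZ_FAKE_UID 1000  /* assumed uid of a regular, unprivileged user */

/* Hypothetical replacement for a getuid() call in a fuzzing build: report a
 * fixed unprivileged uid when fuzzing, and the real uid otherwise. */
uid_t invoking_uid(int fuzzing_build)
{
    if (fuzzing_build)
        return (uid_t)FUZZ_FAKE_UID;
    return getuid();
}
```

Keeping the real getuid() path around behind a switch makes it easy to build the same source both for fuzzing and for normal use.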

This looks like it should work, then. It should also resolve our issue with file creation and running out of inodes, as these temporary files were only created by running sudo, already being root. This means that after all this tinkering, we should finally have a pretty good sudo-fuzzing setup. This in turn means that we should go for yet another round of fuzzing.

You know the drill: get in your PJs, get comfortable, and just watch the afl dashboard as the program does its thing. What a pretty sight.

Final Words

With afl now running and most kinks ironed out (that we know of, at least), we want to hear from you. Do any of you have experience with other fuzzers or fuzzing frameworks, such as libFuzzer or honggfuzz? If you can set up a minimal fuzzing environment for sudo and share it with us, we would be very happy to have a look! We have no experience with these tools and we would love to compare their setup, associated workflows, and performance with our specific use-case against afl.

Also, if you have any recommendations regarding how to optimize the afl files, please do share your fuzzing setup as well. We know about afl++ and as mentioned before, we will be switching to it in due course, but we want to hear about the tricks you might have for afl itself.

In the next video, we'll check out the results from our fuzzing campaign. Spoiler alert: there will be more technical hurdles to overcome. Keep an eye out for the next video and article!

The Video

Introduction

First things first, I'm not testing pens (unfortunately). Now... my professional job is "penetration tester" (often shortened to "pentester"), but I'm not really a pentester. I don't like saying that I am one because people tend to think that all I do is hack companies. That's not accurate, so I need to find a new name for what I do. I specifically want to discuss pentesting, "pentesting", and where bug bounties fit in all of this.

Pentesting vs. "Pentesting"

When I say the word "pentesting," what comes to your mind? I asked you via polls on Twitter and YouTube, and this is what you had to say:

nmap, Metasploit, responder, and wifi hacking tools.

Most people responded that nmap, Metasploit, responder, and wifi hacking were what they thought about first. This is really the area that red-teaming addresses. Red-teaming is an exercise often used in IT security where a group representing an enemy or threat (the red team) tries to get access to information or gain control of a network that they are not allowed to access. The blue team, conversely, must defend the information or the network by implementing the right safeguards. Red teams can be either groups internal to companies, or outside contractors hired to test a company's security.

An example of red-teaming is a contractor that comes onsite, tries to hack the wifi, then uses nmap to scan the network, find some outdated servers where they can use Metasploit to find some potential exploits, while running responder to win against the blue team... You get the idea. I haven't actually done this, so don't quote me on the methodology.

That is pentesting.

I am a "pentester". I don't actually use tools like this to do my job. Instead, I read code and carry out black-box testing. My job typically consists of testing the security of websites, native applications, or mobile applications. I look at the code, I run dynamic testing, I use Burp and look at the API requests, and I maybe do a bit of fuzzing, too. The whole point is to find new vulnerabilities that were not known before. Then, I gather all of my findings and write an extensive report, which I send to the client. This report contains all of the technical findings, of course, but also how I feel about their website or application.

Different Extremes

These are not quite the same definition of the word "pentesting", then. In fact, I'd say that the two sit at opposite extremes of the scale. Pentesting is usually done at the corporation level. Sometimes, pentesting will involve working with products, but its general scope is typically very broad. Pentesters have at their disposal a variety of different tools to carry out their hacking task. Essentially, pentesting is about hacking a company.

"Pentesting" on the other hand is done at the zoomed-in application or website level. It is instead focused on the nitty-gritty technical details, trying to ascertain whether the customer data is safe, for instance. In fact, sometimes finding a vulnerability in a web application doesn't even affect the company directly, since the service is hosted on third party server equipment outside of the company itself.

It is important to note that companies should carry out both pentesting and "pentesting", either internally or via outsourcing. Having robust networks and websites and/or applications that can be secure and thwart hackers' attacks is essential for any company. This is to say, one is not better than the other; they are complementary, not exclusive.

I tend to gravitate towards "pentesting". I don't really like the corporate side as much as I do the application-level work. Focusing on details is not as easy with the former, whereas with the latter, details are the bread and butter. The reliance on a suite of different tools also isn't something that I am necessarily fond of. This is how I feel about it, and it is in no way a judgment call on the value of pentesting vs. "pentesting".

Across the Pond...

I've also noticed a cultural difference between Europe and the United States when it comes to what people think the word "pentesting" means. I don't mean to exclude the rest of the world; I have simply worked more often with people from those regions, and therefore, I am more attuned to their respective takes on the word. In my (anecdotal) experience, Europeans consider the word "pentesting" to denote the application-level one, whereas Americans would think more readily about red-teaming and corporate pentesting. I obviously haven't conducted an in-depth study on the reason for this difference; I have simply observed it as part of my interactions with people in the IT security sector. The polls you answered point to the same conclusion.

I Need A New Name

Calling both activities the word "pentesting" is an issue, then. We need a better naming system to better separate them and remove the ambiguity that using the same term poses. Once again, I asked you all what you thought about it; the best answer to me was "application security tester". So, for the remainder of this article, for clarity's sake, I'll call the corporate, high-level pentesting "pentesting", and the application-level vulnerability evaluation "appsec", short for "application security".

By the way, if you look at my YouTube channel, you'll find a video I recently made giving an overview of all the topics that I've covered on my channel. You might notice that I've never made a video on pentesting, whereas I have countless videos on application security. I don't have a single video where I've used Metasploit, I've probably used nmap once, and I most certainly have never brought up Active Directory, pass the hash, or wifi hacking tools. It's just not my kind of work. Again, I am not passing judgment here; it's just a preference.

I also do a lot of CTFs (Capture The Flag). They are more fun to me and are a great way to keep up with the industry and learn new things. Some say that CTFs are unrealistic, however. This point of view makes sense if you consider it from the pentesting perspective, where the scope is typically broad, and diving into the intricate details of the programming of an application is generally outside of the red-teaming scope. For an application security tester, the very detail-oriented nature of CTFs is an excellent way to dig deep into the technology and hunt for little mistakes that could lead to a vulnerability. It is excellent practice for personal methodology and workflow, as well as for getting into the mindset of application security.

It seems that with the ambiguity of the "pentesting" term that we initially discussed in this article, we've talked past each other a few times, between the pentesting and appsec sides. When people talk about jobs in IT security, they might mean the pentesting side of security, whereas I mean the appsec side. This extends from the offensive aspect of IT security to the defensive side, too. For instance, blue-teaming, the opposite of red-teaming, involves analysts in Security Operation Centers (SOCs) or Active Directory administrators who protect the corporation. On the application security side, there are programmers, software engineers, architects... their role is to protect customers' data, for example. With a clearer "name" for myself, I can better situate myself on the scale between application security and pentesting, and better relate the content that I've been publishing on YouTube to my skillset.

Security Education

Knowing how to use tools and techniques such as Metasploit, Nessus, responder, wifi hacking, and RAT implants is an important skill set for the corporate hacking world. There are plenty of resources out there to learn about how to use those tools well. I haven't worked with them though; I find that the pentesting job market is a little limited, and I am more interested in application security anyway. On the appsec side, there are tons of developers. I think that my channel's success is due to the greater applicability of my content for developers. The topics I cover encourage people to be critical of their own code and implementation logic and methods. I also think that CTFs benefit developers the most: you are probably a much better developer if you write code with security in mind. CTFs offer a great way to sharpen the skills you need and see what code works and what code doesn't when it comes to implementing specific programming features.

I also think that being a developer or working in devops is a lot closer to the application security work that I do than to the pentesting side. Personally, I'd much rather do software development with security in mind than do corporate pentesting as either blue team or red team. Again, it's a preference; both appsec and pentesting have their roles in the IT security industry.

This is all great, but where do bug bounties fall in all of this?

Bug Bounty's Place

I'm pretty sure you expected this, but I'd place bug bounties right in the middle between pentesting and appsec. It borrows aspects from both! You have a large scope to work with, and you approach the bug bounty from the outside. Some of the applications you target may be either products or part of the corporate side. Bug bounties also involve the use of tools and a strong knowledge of technical details and intricacies of programming, so that you can take a deep dive on an API or find some exploits for application-specific vulnerabilities. This is also a place where the CTF mindset is helpful, though you are still approaching the exercise from the outside.

Doing pentesting or appsec in collaboration with a company gives you access to white-box knowledge, such as source code or beta software. This saves heaps of time, as you don't need to toil away, trying to bruteforce things such as API endpoints. You just look up all the configured routes and specifically audit the API endpoints. Generally, application security sits a little earlier in the software development pipeline, with testing carried out on a staging or development build before the code is released into production. If all goes well, you catch all the bugs and issues before the product is rolled out to customers. Or, you might report back to the company and tell them where they stand security-wise.

There is no guarantee that software is 100% vulnerability-free. That's why bigger companies such as Google run bug bounty programs. They have their own internal teams auditing code, and it's important to carry out the internal application security work ideally before the production stage, but bugs will be missed... bugs that bug bounty hunters can find at a later date.

Final Words

I hope that these thoughts and the resulting discussion helped you to get a better overview of the different areas within the IT security sector. I also hope that it helps you better focus on what you should learn for the job that you are interested in.

Using LLVM and clang, we were able to fuzz Linux programs in the command line using the AFL fuzzer. Exploiting the fact that sudoedit is symlinked to sudo, we tried to find the CVE-2021-3156 vulnerability using fuzzing methods.

The Video

Introduction

In the last article in the series, we talked about the critical sudo vulnerability (CVE-2021-3156) allowing an unprivileged user who is not part of the sudo group to elevate their own privileges to root. We set up American Fuzzy Lop to fuzz the program's command-line arguments instead of its standard input. However, when we tried to run it, we hit a segmentation fault, and we're not sure why.
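As a reminder of what fuzzing arguments through standard input boils down to, here is a minimal sketch of the splitting step. It is a simplified illustration and not the code from AFL's argv-fuzz-inl.h: the function name split_args() and the MAX_ARGS cap are assumptions made for this example. The fuzzer supplies a buffer of NUL-separated strings, and the harness turns it into an argv-style array before the real argument parsing runs.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_ARGS 16   /* arbitrary cap chosen for this sketch */

/*
 * Split `len` bytes of NUL-separated strings into an argv-style array.
 * An empty string (or the end of the buffer) marks the end of the list.
 * Returns argc; argv[argc] is set to NULL, as main() would expect.
 */
static int split_args(char *buf, size_t len, char *argv[MAX_ARGS + 1])
{
    assert(buf != NULL && argv != NULL);

    int argc = 0;
    size_t i = 0;

    if (len > 0)
        buf[len - 1] = '\0';          /* make sure the last string is terminated */

    while (i < len && buf[i] != '\0' && argc < MAX_ARGS) {
        argv[argc++] = &buf[i];       /* start of the next argument */
        while (i < len && buf[i] != '\0')
            i++;                      /* skip to the end of this argument */
        i++;                          /* step over the separating NUL */
    }

    argv[argc] = NULL;
    return argc;
}
```

In this sketch the first string becomes argv[0], so an input beginning with "sudoedit" makes the program believe it was invoked under that name, much like running it through the sudoedit symlink.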

It's important to consider that we are not following the method that the researchers used to find the vulnerability. Instead, we're choosing our own approach, relying on the actual documented methodology used by the researchers and others on the internet as a crutch when we run into some technical issues. This allows us to explore the context around this vulnerability in our own way, and in doing so, we learn. That is valuable.

In today's article, we'll try to find a way around the segmentation fault that we encountered last time, so we can discover, analyze, and exploit the sudo vulnerability.

AFL-gcc vs. LLVM

After the segmentation fault, we tried checking a few more things with gdb, to no avail. So we did what anyone else would do when they hit the proverbial wall: we googled it. Using segmentation fault __afl_setup_first as our query, we tried seeing if anyone else had had the issue. We didn't find anything conclusive; between gdb and our googling, we figured that it was time for a peek at what others had done in terms of fuzzing sudo.

gdb.

We stumbled across a blog post by a certain milek7 (available here), titled "How long it would have taken to fuzz recent buffer overflow in sudo?". In this post, milek7 sets out all the steps to follow in order to fuzz sudo, with a notable appearance of the argv-fuzz-inl.h header file and the AFL_INIT_ARGV function we've discussed in the previous article in this series. The other important bit of information that milek7 wrote is that

For some reason afl-gcc instrumentation didn’t work, so I used LLVM-based one. We just need to override CC for ./configure

which they followed up with this code snippet:

CC=afl-clang-fast ./configure

The blog post goes on to mention a few more things to do to get the fuzzing running successfully. But remember, we're trying to figure out most of it on our own and only rely on others' work when absolutely necessary... like when dealing with a mostly non-descript segmentation fault. So, we'll skip reading the rest and just focus on using the LLVM-based instrumentation.