The result is a simple, self-hosted analytics system built entirely on Next.js API routes and PostgreSQL. No cookies, no third-party scripts, no vendor lock-in. All collected data is displayed publicly on /about-this-site.

The database schema

Everything is stored in a single pageviews table:

CREATE TABLE IF NOT EXISTS pageviews (

id SERIAL PRIMARY KEY,

path TEXT NOT NULL,

referrer TEXT,

visitor_hash TEXT NOT NULL,

country TEXT,

city TEXT,

latitude REAL,

longitude REAL,

user_agent TEXT,

device_type TEXT,

browser TEXT,

os TEXT,

language TEXT,

screen_width INT,

created_at TIMESTAMPTZ DEFAULT NOW()

);

Each row represents a single pageview. There are no sessions, no persistent user IDs and no cookies. Unique visitors are identified by a daily rotating hash described below.

To keep queries fast I added partial indexes on the columns used most often:

CREATE INDEX ON pageviews (created_at);

CREATE INDEX ON pageviews (path);

CREATE INDEX ON pageviews (visitor_hash, created_at);

CREATE INDEX ON pageviews (latitude, longitude) WHERE latitude IS NOT NULL AND longitude IS NOT NULL;

Tracking pageviews from the client

The tracking component is a small React component included in the root layout:

"use client";

import { usePathname } from "next/navigation";

import { useEffect, useRef } from "react";

export default function PageviewTracker() {

const pathname = usePathname();

const lastTrackedPath = useRef<string | null>(null);

useEffect(() => {

if (pathname === lastTrackedPath.current) return;

if (localStorage.getItem("notrack") === "1") return;

lastTrackedPath.current = pathname;

const payload = JSON.stringify({

path: pathname,

referrer: document.referrer || null,

screenWidth: window.screen?.width || null,

});

if (navigator.sendBeacon) {

const blob = new Blob([payload], { type: "application/json" });

navigator.sendBeacon("/api/pageview", blob);

} else {

fetch("/api/pageview", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: payload,

keepalive: true,

}).catch(() => {});

}

}, [pathname]);

return null;

}

It uses usePathname to detect route changes in the Next.js App Router and fires on every navigation. navigator.sendBeacon is preferred over fetch because it is non-blocking and survives page unloads reliably.

The pageview API route

The API route at /api/pageview handles each incoming event. It does several things before writing to the database:

Rate limiting rejects more than 30 requests per minute from the same IP. The limiter is a plain Map in memory. Each IP gets a counter and a reset timestamp. No Redis, no external dependency:

const rateLimitMap = new Map<string, { count: number; resetTime: number }>();

if (!entry || now > entry.resetTime) {

rateLimitMap.set(ip, { count: 1, resetTime: now + interval });

return { success: true, remaining: limit - 1 };

}

if (entry.count >= limit) {

return { success: false, remaining: 0 };

}

The client IP is read from x-real-ip first (set by nginx), falling back to the last entry in x-forwarded-for. Using the last entry rather than the first prevents clients from spoofing the header by prepending a fake IP.

Bot filtering tests the User-Agent against a regex of known crawler patterns before doing any database work.

Same-origin validation rejects requests that did not originate from the site. It parses the Origin or Referer header with new URL() and compares the host against the request Host header. A simple substring check would allow evil-mxd.codes to pass, so exact host matching is important. Requests with no Origin or Referer are allowed through. Those are same-site form submissions or direct navigations where the browser does not send either header.

Geolocation looks up the visitor's IP using the MaxMind GeoLite2 City database. The .mmdb file is loaded on the first request and cached in memory via @maxmind/geoip2-node and reused for every request with no external API call and no network latency. Coordinates are rounded to one decimal place to reduce precision:

latitude: Math.round(response.location.latitude * 10) / 10

User-Agent parsing is done with plain regex functions instead of a library. The browser, OS and device type are each extracted with a short chain of pattern checks. The order matters. Edge and Opera both include chrome in their User-Agent string, so they have to be matched first.

Visitor hashing creates a daily identifier without storing any persistent user data. The hash is a SHA-256 of the IP address, a server-side secret and the current date. The same visitor gets the same hash all day, which makes unique visitor counting possible. On the next day the hash is different, so there is no cross-day tracking.

Querying the data

All analytics queries live in src/lib/analytics.ts. The functions cover the most common dimensions:

getAnalyticsStats()returns total pageviews and total unique visitor-daysgetCurrentVisitors()counts distinct hashes seen in the last 5 minutesgetTopPages(limit)groups by path and orders by view countgetTopReferrers(limit)filters out self-referrals and empty referrersgetTopBrowsers,getTopOS,getTopLanguages,getTopCountries,getTopCitieseach group by their columngetDeviceBreakdown()splits into mobile, tablet and desktopgetScreenWidthDistribution()buckets screen widths into four categoriesgetPageviewsOverTime(days)returns a daily count for the last N days for the sparkline chartgetVisitorLocations()returns grouped coordinates for the visitor map

The /api/stats endpoint handles the overall pageview and visit counts, current visitor count and a few additional counts from other tables (comments, webmentions, emoji reactions, subscribers). It caches the result in memory for 24 hours. The more detailed breakdown queries (top pages, referrers, device types, countries and so on) are called directly from the about-this-site page on the server at request time.

Rendering the dashboard

Everything is displayed at /about-this-site, a server-rendered page with dynamic = "force-dynamic" so it always shows fresh data. The page fetches all the analytics functions in parallel on the server and passes the results down as props.

The 30-day pageview trend is rendered as an SVG sparkline chart built without any charting library:

const w = 400;

const h = 80;

const padding = 4;

const range = maxViews - minViews || 1;

const coords = data.map((d, i) => {

const x = (i / (data.length - 1)) * (w - padding * 2) + padding;

const y = h - padding - ((d.views - minViews) / range) * (h - padding * 2);

return { x, y };

});

const polyline = coords.map((c) => `${c.x},${c.y}`).join(" ");

const areaPath = `M${coords[0].x},${coords[0].y} ${coords

.slice(1)

.map((c) => `L${c.x},${c.y}`)

.join(" ")} L${w - padding},${h - padding} L${padding},${h - padding} Z`;

The area fill uses an SVG <path> element and the line is a <polyline>. Normalizing against the range (maxViews - minViews) rather than the absolute max keeps the chart readable even on low-traffic days. Colors come from the CSS custom property --secondary-color so the chart respects the site's light and dark theme automatically. Here is the live chart for this site:

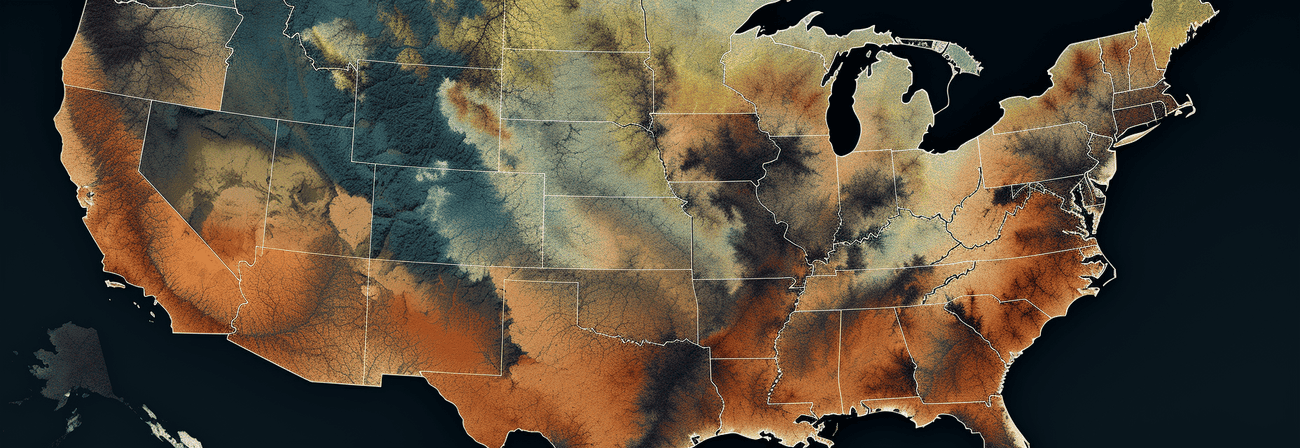

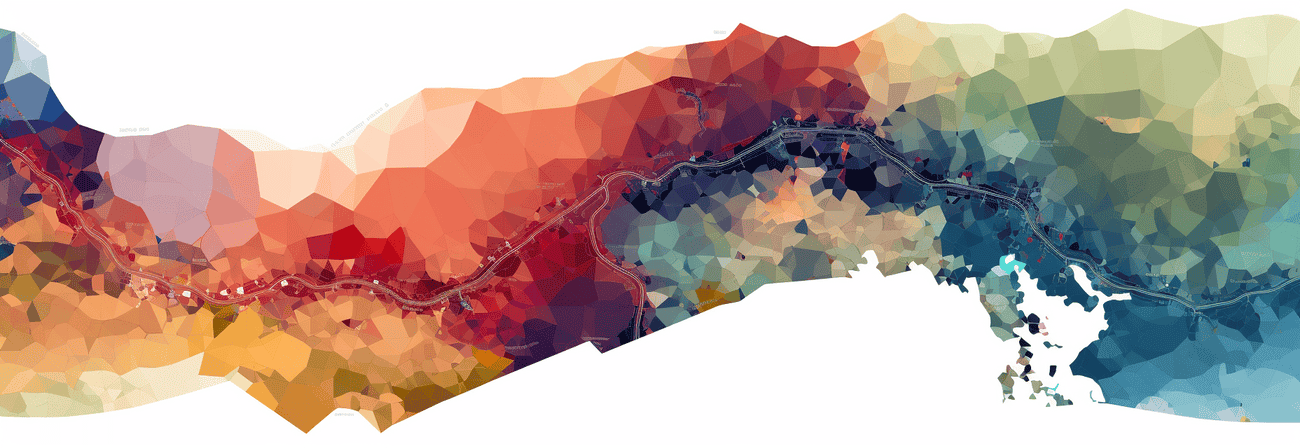

Visitor locations are rendered on an interactive map using OpenLayers with cluster styling that scales logarithmically with the number of visits from each location.

The rest of the page uses simple HTML lists and inline percentage bars rendered with CSS width set proportionally to the maximum value in each group.

Privacy

No cookies are set. No data is shared with third parties. The IP address is used only to look up a rough location and to create the daily hash, then it is discarded. Coordinates are stored with reduced precision.

The full analytics dashboard is publicly visible at /about-this-site, which I think is a reasonable trade-off: if I am collecting data, I should be transparent about what it shows.

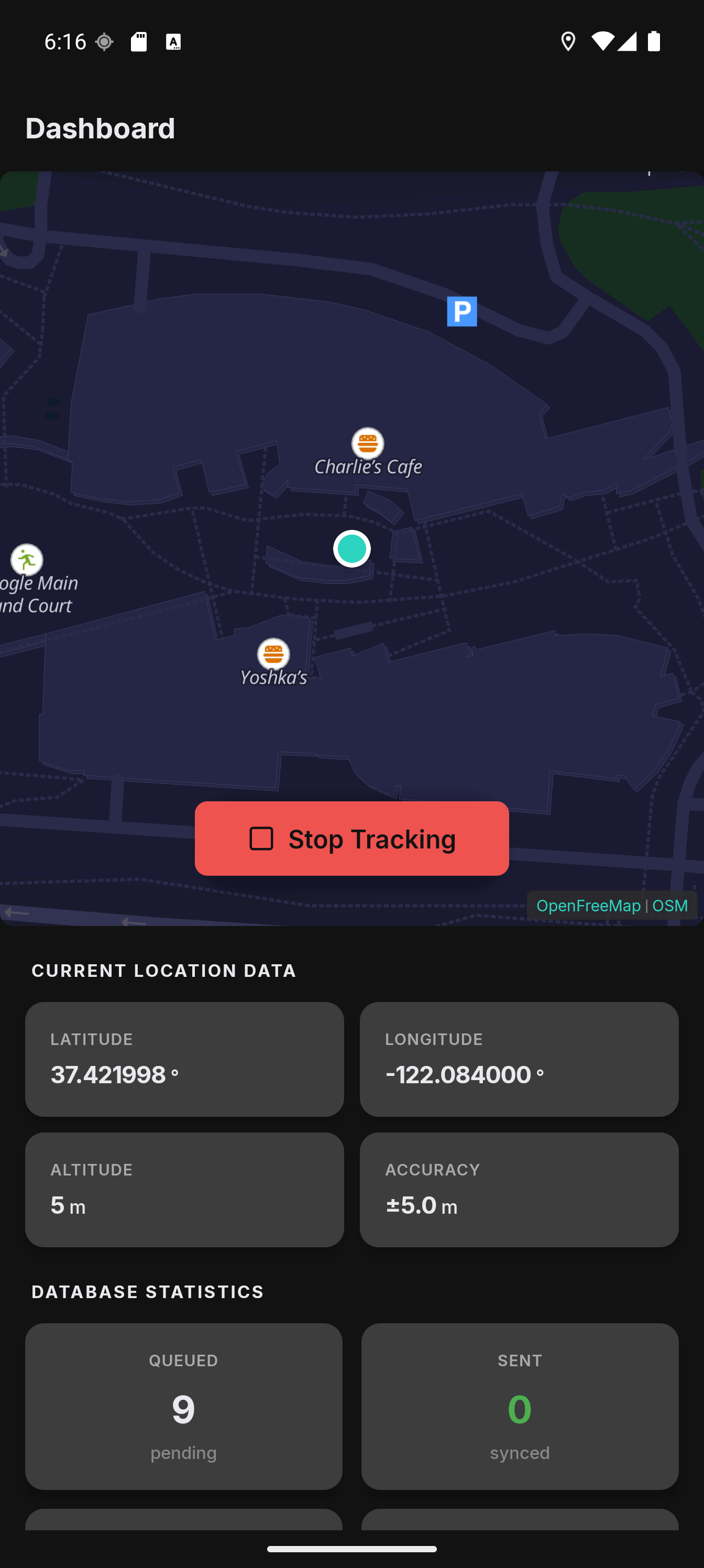

]]>I have been using Colota daily since the closed testing phase and kept running into things that bothered me. The WebView-based maps felt sluggish, switching GPS settings between walking and driving was annoying, and I did not want to record my location while sitting at my desk all day. Version 1.1.0 is the result of fixing all of that.

Native Maps with MapLibre GL

The biggest change is the map engine. In v1.0 the maps were rendered using OpenLayers inside a WebView. Panning had noticeable lag, pinch-to-zoom was not smooth, and memory usage was higher than it should be.

I replaced the entire map stack with MapLibre GL Native via @maplibre/maplibre-react-native. The maps now render on the GPU. Panning and zooming that used to stutter are instant now, even with geofence overlays and accuracy circles drawn on top.

The tile source is OpenFreeMap which provides free vector tiles based on OpenStreetMap data without requiring an API key. This keeps Colota fully FOSS-compatible, which matters for the F-Droid build.

Dark Mode

With vector tiles and MapLibre I could add a proper dark mode for the map. The app fetches the OpenFreeMap style JSON once, transforms the paint properties to dark colors, and caches the result. No extra network request after the first load.

The color palette uses a navy/indigo family that fits well with the rest of the dark theme. Water is almost black, buildings are a subtle purple-gray, and text labels use a light gray with a dark halo for readability.

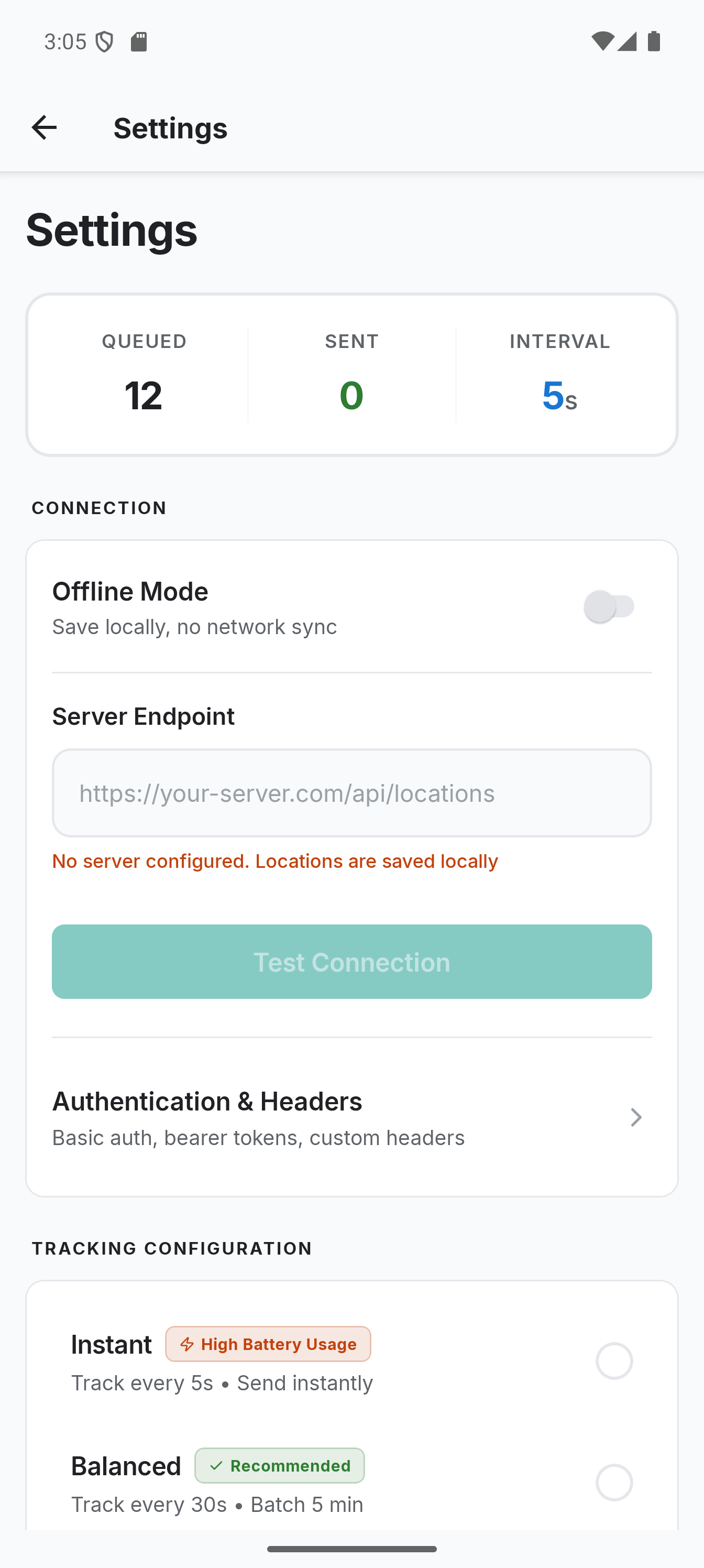

Tracking Profiles

This was the feature I wanted most for my own use. The GPS settings that work well for walking (high frequency, small distance threshold) drain the battery when driving. And the settings that work for driving miss too many points when walking.

Tracking profiles solve this by automatically switching GPS settings based on conditions. You can define profiles that activate when:

- The phone is charging

- Android Auto is connected (car mode)

- Speed is above a threshold

- Speed is below a threshold

![]()

For example, I have a "Driving" profile that activates when Android Auto connects. It sets the GPS interval to 4 seconds with a 20m distance threshold. When I disconnect from the car, it switches back to my default settings (2 second interval, 2m threshold).

The profile system uses priority-based resolution when multiple profiles match. It also has a deactivation delay to prevent rapid toggling when your speed fluctuates around the threshold.

Pause Zones

Sometimes you do not want to record your location at all. I do not need a GPS point every second while sitting at my desk at home or at the office.

Pause zones are geofences that automatically stop location recording when you enter them. You define a center point and a radius on the map, give it a name, and the app handles the rest. When you leave the zone, recording resumes automatically.

The distance calculation uses the haversine formula. The geofence check runs on every GPS fix inside the foreground service, so it works even when the React Native UI is not active. The zones are also visible on the dashboard map as colored circles with labels.

Speed-Colored Tracks

The location history map got a visual upgrade. Track segments are now colored by speed using a green-to-yellow-to-red gradient. This makes it easy to see at a glance where you were walking, cycling, or driving.

Each point on the track is tappable. A popup shows the exact coordinates, speed, accuracy, altitude, and timestamp. I also added a daily distance counter that shows how far you moved on any given day.

Deep Link Setup

Setting up the app with all the server details (endpoint URL, auth credentials, sync settings) is tedious to do manually. Colota now supports a colota://setup deep link that lets you encode the entire configuration in a base64 payload.

The URL format looks like this:

colota://setup?config=eyJlbmRwb2ludCI6Imh0dHBzOi8vZXhhbXBsZS5jb20vYXBpL2xvY2F0aW9ucyIsInVzZXJuYW1lIjoidXNlciJ9

The base64 payload decodes to a JSON object with all configuration fields. You can generate a setup link on your server and share it. Scanning or tapping it on the phone configures everything in one step.

Settings and Sync Presets

The settings screen got a cleanup. Sync presets (Instant, Balanced, Power Saver) make it easier to pick the right tradeoff between freshness and battery life without touching individual values.

Smaller Improvements

- Improved About screen with debug info copy and build variant display

- Better data management with sync progress indicators and database vacuum feedback

- Fixed map panning being blocked during active tracking (the user location overlay was re-rendering every 250ms, removed the animation and memoized the marker coordinates)

- Fixed stale tracking state after Android revokes location permission in the background

- Reliable Android Auto detection using the CarConnection API instead of broadcast hacks

New Icon

The app has a new icon. The old one was a placeholder I threw together in five minutes. The new one follows the Android adaptive icon guidelines and actually looks decent on both light and dark launchers.

Try It Out

The app is available on Google Play, F-Droid (pending review), and as a direct APK download on GitHub. The full source code is AGPL-3.0 licensed.

For setup instructions with different backends (Traccar, Home Assistant, OwnTracks, Dawarich, PhoneTrack) check the documentation. If you run into issues with background tracking being killed by your phone manufacturer, have a look at the battery optimization guide.

If you have been following along from my earlier post about location tracking with OwnTracks and Node.js, Colota is basically the evolution of that setup. The tracking app is now my own, fully open-source, and does not depend on OwnTracks anymore. The server-side stack (PostgreSQL, GeoServer, MapProxy) still works the same way. You just point Colota to your webhook endpoint and it sends the same kind of location payloads.

]]>

Securing web applications with HTTPS is a must, and Let’s Encrypt makes it easy by offering free SSL certificates. But what if you want a wildcard certificate to cover all subdomains under a domain? Fortunately, Let’s Encrypt supports wildcard certificates via the DNS-01 challenge, which requires updating DNS TXT records.

This guide is specific to using Cloudflare as your DNS provider, using their API to automate DNS updates during certificate issuance and renewal. Let’s walk through the process step by step.

Prerequisites

Before we dive in, make sure you have:

- A registered domain managed via Cloudflare (e.g.,

example.com) - A Cloudflare API token with DNS edit permissions (see Step 2)

- Docker and Docker Compose installed

If you use other DNS providers like DigitalOcean or AWS Route 53, you’ll need different DNS plugins and API credentials. This guide is tailored specifically for Cloudflare.

Step 1: Set Up the Docker Environment

Create a directory for your setup:

mkdir nginx-wildcard-ssl && cd nginx-wildcard-ssl

Create a docker-compose.yml file with the following content:

version: '3'

services:

nginx:

image: nginx:latest

container_name: nginx

restart: unless-stopped

ports:

- "80:80"

- "443:443"

volumes:

## Config

- ./nginx/nginx.conf:/etc/nginx/nginx.conf:ro

- ./nginx/sites-available:/etc/nginx/sites-enabled:ro

## SSL

- /etc/ssl:/etc/ssl

- /data/containers/nginx/ssl/dhparam.pem:/etc/ssl/dhparam.pem:ro

- /data/containers/certbot/conf:/etc/letsencrypt:ro

## Logs (optional)

#- /data/containers/nginx/logs:/var/log/nginx:rw

command: /bin/sh -c "while :; do sleep 6h & wait $${!}; nginx -s reload; done & nginx -g 'daemon off;'"

networks:

- web

- internal

certbot:

container_name: certbot

image: certbot/dns-cloudflare

restart: unless-stopped

volumes:

- /data/containers/certbot/conf:/etc/letsencrypt:rw

- /data/containers/certbot/www:/var/www/certbot:rw

entrypoint: "/bin/sh -c 'trap exit TERM; while :; do certbot renew --dns-cloudflare --dns-cloudflare-credentials /etc/letsencrypt/.secrets/cloudflare.ini; sleep 48h & wait $${!}; nginx -s reload; done;'"

networks:

- internal

networks:

web:

external: true

name: nginx

internal:

driver: bridge

Step 2: Prepare Your Cloudflare API Token

To allow Certbot to update DNS TXT records automatically for the DNS-01 challenge, you need a Cloudflare API token with DNS edit permissions.

How to create the API token:

- Log into your Cloudflare dashboard: https://dash.cloudflare.com/.

- Click your user icon → My Profile → API Tokens.

- Click Create Token.

- Use the Edit zone DNS template or create a custom token with:

- Permissions: Zone → DNS → Edit

- Zone Resources: Restrict to your domain(s) for security.

- Name your token (e.g.,

Certbot DNS Token). - Click Continue to summary and then Create Token.

- Copy the token immediately — you won't be able to see it again.

Save the token securely

Create a file /data/containers/certbot/conf/.secrets/cloudflare.ini with:

dns_cloudflare_api_token = your_cloudflare_api_token_here

Important: This file contains sensitive credentials!

So it's recommended to restrict permissions for the file. Therefore secure the credentials file with:

chmod 600 /data/containers/certbot/conf/.secrets/cloudflare.ini

This command sets file permissions so only the owner can read and write the file. It prevents other users on the system from reading your API token, enhancing security.

Step 3: Request Your Wildcard Certificate

Request your wildcard certificate by running:

docker run --rm \

-v /data/containers/certbot/conf:/etc/letsencrypt \

-v /data/containers/certbot/www:/var/www/certbot \

certbot/dns-cloudflare certonly \

--dns-cloudflare \

--dns-cloudflare-credentials /etc/letsencrypt/.secrets/cloudflare.ini \

--email [email protected] \

--agree-tos \

--no-eff-email \

-d example.com \

-d "*.example.com"

What this command does:

- Runs the Certbot Docker container with the Cloudflare DNS plugin.

- Mounts your configuration and webroot folders inside the container.

- Uses the Cloudflare API token to create temporary DNS TXT records required by Let’s Encrypt to prove domain ownership.

- Requests certificates for both your apex domain (example.com) and all subdomains (*.example.com).

- Stores the certificates in /etc/letsencrypt/live/example.com/ (inside the container, mapped to your host folder).

- Agrees to the Let's Encrypt terms of service.

- Uses your email for urgent notices (expiry reminders).

If successful, you’ll see something like:

IMPORTANT NOTES:

- Congratulations! Your certificate and chain have been saved at:

/etc/letsencrypt/live/example.com/fullchain.pem

Your key file has been saved at:

/etc/letsencrypt/live/example.com/privkey.pem

- Your certificate will expire on 2025-09-15. To obtain a new or tweaked

version of this certificate in the future, simply run certbot again.

- If you like Certbot, please consider supporting our work by:

Donating to ISRG / Let's Encrypt: https://letsencrypt.org/donate

Step 4: Configure Nginx to Use the Wildcard Certificate

Create a virtual host config, for example ./nginx/sites-available/example.conf:

server {

listen 443 ssl;

server_name example.com *.example.com;

ssl_certificate /etc/letsencrypt/live/example.com/fullchain.pem;

ssl_certificate_key /etc/letsencrypt/live/example.com/privkey.pem;

ssl_dhparam /etc/ssl/dhparam.pem;

location / {

proxy_pass http://your_backend;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

}

}

server {

listen 80;

server_name example.com *.example.com;

return 301 https://$host$request_uri;

}

You’ll also need a basic nginx.conf to include your sites:

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

# Logging

access_log /var/log/nginx/access.log;

error_log /var/log/nginx/error.log;

# Performance

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

# Gzip Compression

gzip on;

gzip_disable "msie6";

gzip_vary on;

gzip_proxied any;

gzip_comp_level 6;

gzip_buffers 16 8k;

gzip_min_length 1024;

gzip_types text/plain text/css application/json application/javascript text/xml application/xml application/xml+rss text/javascript;

# Security Headers (can be overridden in virtual hosts)

add_header X-Frame-Options "SAMEORIGIN";

add_header X-Content-Type-Options "nosniff";

# SSL Defaults (override per-site)

ssl_protocols TLSv1.2 TLSv1.3;

ssl_prefer_server_ciphers on;

# Include Virtual Hosts

include /etc/nginx/sites-enabled/*.conf;

}

Restart the stack or reload Nginx to apply changes:

docker-compose up -d

Step 5: Automate Renewal and Nginx Reload

The Certbot container is configured to:

- Automatically attempt certificate renewal every 48 hours.

- After successful renewal, it triggers an Nginx reload to apply the updated certificates without downtime.

This is handled by the command in docker-compose.yml:

entrypoint: "/bin/sh -c 'trap exit TERM; while :; do certbot renew --dns-cloudflare --dns-cloudflare-credentials /etc/letsencrypt/.secrets/cloudflare.ini; sleep 48h & wait $${!}; nginx -s reload; done;'"

You can test renewal manually with:

docker run --rm \

-v /data/containers/certbot/conf:/etc/letsencrypt \

certbot/certbot renew --dry-run

Summary

- This guide is tailored for domains managed on Cloudflare.

- You set up Docker containers running Nginx and Certbot with the Cloudflare DNS plugin.

- Created a Cloudflare API token to allow DNS automation.

- Requested and installed a wildcard SSL certificate covering your domain and all subdomains.

- Nginx uses the wildcard certificate for HTTPS.

- Automatic certificate renewal and zero-downtime Nginx reload are configured.

If you use another DNS provider, look for the appropriate Certbot DNS plugin and adjust the API credentials accordingly.

Feel free to ask if you want me to help with other providers or configurations!

]]>

Plausible Analytics is a lightweight, privacy-focused, cookie-free analytics solution. In this article, we’ll implement it inside a Next.js App Router project in a way that bypasses ad blockers using proxying. By proxying the tracking script and API requests through your own domain, you significantly reduce the chance of them being blocked by common ad-blocking extensions.

We’ll also ensure that page views are correctly tracked on every route change in a client-side rendered app.

This guide assumes you are self-hosting Plausible on a custom subdomain such as

analytics.yourdomain.com.

Proxy the Plausible Script and API

To reduce the chance of being blocked by ad blockers, we'll proxy Plausible's script and API through your own domain.

In your next.config.js, add the following:

async rewrites() {

return [

{

source: "/js/script.js",

destination:

"https://analytics.yourdomain.com/js/script.file-downloads.hash.outbound-links.pageview-props.revenue.tagged-events.js",

},

{

source: "/api/event",

destination: "https://analytics.yourdomain.com/api/event",

},

];

}

Next.js uses this to route requests internally without triggering a redirect (i.e., the user sees the original URL in their browser).

- When a user or script on your site requests

/js/script.jsor/api/event, the request is internally proxied to the external URL. - The browser still thinks it loaded locally, but the content is coming from

analytics.yourdomain.com.

What is this script variant?

The URL points to a self-hosted and enhanced version of the Plausible tracking script:

script.file-downloads.hash.outbound-links.pageview-props.revenue.tagged-events.js

This version includes support for:

- File downloads

- URL hash tracking

- Outbound link clicks

- Custom pageview properties

- Revenue tracking

- Tagged events

This is ideal if you want richer insights without modifying your app further.

Create a Route Tracker Component

Create a new file: app/RouteTracker.tsx

'use client';

import { usePathname } from 'next/navigation';

import { useEffect } from 'react';

const RouteTracker = () => {

const pathname = usePathname();

useEffect(() => {

// 1. Add the Plausible script to the DOM if not already added

if (!document.getElementById("next-p")) {

const script = document.createElement("script");

script.id = "next-p";

script.async = true;

script.defer = true;

script.setAttribute("data-domain", "yourdomain.com");

script.src = "/js/script.js"; // Note: this uses the proxied script

document.head.appendChild(script);

}

// 2. Add Plausible's minimal global initializer

if (!document.getElementById("next-p-init")) {

const initScript = document.createElement("script");

initScript.id = "next-p-init";

initScript.innerHTML =

"window.plausible = window.plausible || function() { (window.plausible.q = window.plausible.q || []).push(arguments) }";

document.head.appendChild(initScript);

}

// 3. Manually track a pageview when route changes

const trackPageview = (url: string) => {

const eventData = {

name: "pageview",

url,

domain: window.location.hostname,

...(document.referrer && { referrer: document.referrer }),

};

fetch("/api/event", {

method: "POST",

headers: {

"Content-Type": "application/json",

},

body: JSON.stringify(eventData),

}).catch((err) => console.error("Error tracking pageview:", err));

};

trackPageview(pathname);

}, [pathname]);

return null;

};

export default RouteTracker;

What this does

- Dynamically injects the Plausible script into the page, ensuring it's only loaded once.

- Adds a global Plausible initializer, so custom events can be tracked if needed.

- Manually sends a pageview event whenever the route changes in the client, which is essential in App Router / SPA apps.

Use the Component in Your Layout

Open your root layout file (app/layout.tsx) and import the tracker:

import RouteTracker from "@/src/hooks/plausible"; // adjust path to match your project

Then include it in your layout:

<body>

<RouteTracker />

{children}

</body>

Final Notes

- This implementation ensures Plausible is loaded only on the client and is less likely to be blocked.

- All route changes are tracked, making it perfect for SPA-like behavior with App Router.

- You benefit from all of Plausible’s extended features using the custom script variant.

By combining script proxying and client-side tracking, you get powerful, privacy-compliant analytics without sacrificing usability or insight.

]]>

When running databases like PostgreSQL and MariaDB on a server, ensuring regular backups is crucial for protecting your data from unexpected events such as crashes, human error, or system failure. While there are several ways to create backups, scripting a backup solution gives you complete control and automation.

In this article, we'll show you how to create a simple yet effective backup script for PostgreSQL and MariaDB running in Docker containers on a server. We'll automate the process to ensure that your databases are regularly backed up without you needing to manually intervene.

Why You Need Backups?

Before diving into the script, let’s take a moment to highlight why having regular backups is essential:

- Protection Against Data Loss: Regular backups prevent loss of valuable data due to failures or accidents.

- Disaster Recovery: Having up-to-date backups makes it easier to restore services quickly after a failure.

- Compliance and Audits: Some industries require maintaining backup copies of data for regulatory compliance.

- Peace of Mind: Knowing your data is safe provides confidence and good sleep.

Prerequisites

Before creating the backup script, make sure you have:

- A VPS running a Linux-based operating system.

- Docker installed and running.

- PostgreSQL and MariaDB running inside Docker containers.

- Basic knowledge of using the terminal and running Docker commands.

For the purpose of this tutorial, let’s assume your PostgreSQL and MariaDB containers are named postgres_container and mariadb_container.

Create a Backup Directory

Start by creating a directory on your server where the backups will be stored. This will help keep everything organized.

mkdir -p /home/youruser/backup

Replace /home/youruser/backup with the location where you'd like to store your backups.

Create the Backup Script

Now let’s create a bash script that will run daily backups for both PostgreSQL and MariaDB databases. Open your favorite text editor and create a file named backup_databases.sh.

#!/bin/bash

set -euo pipefail

# Backup a PostgreSQL database into a daily file.

BACKUP_DIR="/data/backups"

LOG_FILE="/var/log/db_backup.log"

DAYS_TO_KEEP=30

POSTGRESDATABASES=("db1" "db2") # PostgreSQL DBs to backup

MARIADBDATABASES=("db1") # MariaDB DBs to backup

POSTGRESCONTAINER="postgres_container"

MARIADBCONTAINER="mariadb_container"

# Create necessary directories

mkdir -p "${BACKUP_DIR}"

mkdir -p "$(dirname "${LOG_FILE}")"

# Create backup directory if it doesn't exist

mkdir -p "${BACKUP_DIR}"

# Function to log messages

log() {

local level="$1"

local message="$2"

local timestamp

timestamp=$(date +"%Y-%m-%d %H:%M:%S")

# Log to both stdout and a log file

echo -e "${timestamp} [${level}] ${message}" | tee -a "${LOG_FILE}"

}

for DATABASE in "${POSTGRESDATABASES[@]}"; do

TIMESTAMP=$(date +"%Y%m%d%H%M")

FILE="${TIMESTAMP}_${DATABASE}.sql.gz"

OUTPUT_FILE="${BACKUP_DIR}/${FILE}"

log "INFO" "Starting backup for database: ${DATABASE}"

# Perform the backup and compress the output

if docker exec -i "${POSTGRESCONTAINER}" /usr/bin/pg_dump -U "${USER}" "${DATABASE}" | gzip -9 > "${OUTPUT_FILE}"; then

log "SUCCESS" "Backup created: ${OUTPUT_FILE}"

ls -l "${OUTPUT_FILE}" | tee -a "${LOG_FILE}"

else

log "ERROR" "Backup failed for database ${DATABASE}" >&2

continue

fi

# Prune old backups

find "${BACKUP_DIR}" -maxdepth 1 -mtime +"${DAYS_TO_KEEP}" -name "*${DATABASE}.sql.gz" -exec rm -f {} \; \

&& log "INFO" "Old backups deleted for database ${DATABASE}" \

|| log "ERROR" "Failed to delete old backups for ${DATABASE}" >&2

done

## MariaDB Backup

for DATABASE in ${MARIADBDATABASES[@]}; do

TIMESTAMP=$(date +"%Y%m%d%H%M")

FILE="${TIMESTAMP}_${DATABASE}.sql.gz"

OUTPUT_FILE="${BACKUP_DIR}/${FILE}"

# Perform the database backup (dump)

if docker exec ${MARIADBCONTAINER} /usr/bin/mariadb-dump -u root --password=yourpassword ${DATABASE} | gzip -9 > ${OUTPUT_FILE}; then

log "SUCCESS" "Backup created: ${OUTPUT_FILE}"

ls -l "${OUTPUT_FILE}" | tee -a "${LOG_FILE}"

else

log "ERROR" "Backup failed for database ${DATABASE}" >&2

continue

fi

# Prune old backups

find "${BACKUP_DIR}" -maxdepth 1 -mtime +"${DAYS_TO_KEEP}" -name "*${DATABASE}.sql.gz" -exec rm -f {} \; \

&& log "INFO" "Old backups deleted for database ${DATABASE}" \

|| log "ERROR" "Failed to delete old backups for ${DATABASE}" >&2

done

log "INFO" "Finished database backups!"

Explanation:

- Variables: The script starts by defining a backup directory and more parameters like days to keep backups and individual databases to backup.

- PostgreSQL Backup: It uses

docker execto run thepg_dumpcommand inside the PostgreSQL container to dump previously defined databases. - MariaDB Backup: Similarly, it uses

docker execto run themysqldumpinside the MariaDB container and backup previously defined databases. - Compression: All backups are compressed using

gzipto save space. - Backup Cleanup: Finally, the script deletes backups older than

DAYS_TO_KEEPdays to prevent disk space issues.

Customizing the Script:

- Change

BACKUP_DIRto the directory where you want to store your backups. - Replace

DAYS_TO_KEEPwith the days how long you want to keep backups. - Replace

POSTGRESDATABASESwith the PostgreSQL databases to backup. - Replace

MARIADBDATABASESwith the MariaDB databases to backup. - Replace

POSTGRESCONTAINERandMARIADBCONTAINERwith the name of your PostgreSQL and MariaDB containers. - Replace

yourpasswordwith the password for the root user in MariaDB..

Make the Script Executable

After saving the script, make it executable:

chmod +x /path/to/backup_databases.sh

Automate the Backup with Cron

To schedule automatic backups, set up a cron job.

- Open the crontab editor:

crontab -e

- Add the following line to run the backup script daily at 2:00 AM:

0 2 * * * /path/to/backup_databases.sh

This will execute the backup script every day at 2:00 AM.

Make sure to adjust the path /path/to/backup_databases.sh to the correct location of your script.

Verify the Backup

It’s always a good idea to manually run the backup script once to ensure everything is working correctly.

/path/to/backup_databases.sh

Check the backup directory to ensure that the backup files have been created and compressed.

Restore from Backup (Bonus)

In case you need to restore a backup, you can use the following commands to load the backups back into your PostgreSQL and MariaDB containers.

PostgreSQL Restore:

docker exec -i postgres_container psql -U postgres -d database < /home/youruser/backup/DATE_DATABASE.sql

MariaDB Restore:

docker exec -i mariadb_container mariadb u root --password=yourpassword database < /home/youruser/backup/DATE_DATABASE.sql

Replace DATE and DATABASE with the appropriate backup file’s date and database name

By following these steps, you've created a simple and automated backup solution for your PostgreSQL and MariaDB databases running inside Docker containers. Regular backups are essential for protecting your data, and this script ensures that your backups run smoothly without manual intervention. You can also use this script to backup databases e.g. in Unraid with the User Scriptplugin.

You can further enhance this backup strategy by sending notifications, backing up to remote storage (e.g., AWS S3 or Google Cloud), or setting up encryption for additional security.

With your databases securely backed up, you can rest easy knowing your data is safe and easily recoverable in case of an emergency.

]]>

Webmentions are a powerful tool for adding decentralized social interactions, such as comments, likes, reposts, and replies, directly on your website. If you're building a dynamic site with Next.js, integrating Webmentions can help encourage cross-site conversations, boost SEO, and enhance user engagement. In this guide, I will show you how to implement Webmentions into your Next.js project with PostgreSQL for storing and displaying them.

What Are Webmentions?

Webmention is an open web standard (W3C Recommendation) that enables decentralized cross-site interactions.

In simpler terms, Webmentions allow users to interact with your content across the web by leaving comments, likes, reposts and other responses on other sites. These interactions enrich your site’s user experience, and they help establish meaningful connections with others.

When you link to a webpage, you can send a Webmention notification. If the receiving site supports Webmentions, it may display your post as a comment, like, or response—enabling rich cross-site conversations.

Why Should You Use Webmentions?

- Encourage Engagement: Promote cross-site discussions and interactions.

- Boost Social Proof: Display interactions from well-known sources to establish credibility.

- Enhance SEO: Webmentions generate backlinks, improving visibility and search engine rankings.

Here’s an example of how Webmentions appear on my site:

You can check a live version of this in the Webmentions section of this article: [/articles/fetching-and-storing-activities-from-garmin-connect-with-strapi-and-visualizing-them-with-next-js#replies].

A typical webmentions structure in JSON looks like this:

{

"type": "entry",

"author": {

"type": "card",

"name": "Some Name",

"photo": "URL to author image",

"url": "URL to author profile"

},

"url": "Webmention URL",

"wm-received": "Date of Webmention",

"wm-id": 1876563,

"wm-source": "Source URL",

"wm-target": "Target URL",

"wm-property": "Type of mention (e.g., like-of, repost-of)",

"wm-private": false

}

To keep your Webmentions accessible even if an external service is discontinued, it’s a good idea to store them locally. In this tutorial, we’ll guide you through setting up a PostgreSQL database to store Webmentions and display them dynamically in your Next.js app.

Setting Up PostgreSQL

Before we dive into Webmentions, ensure you have PostgreSQL installed on your server. If not, check on of these guides.

Once PostgreSQL is ready:

- Create a New Database:

# Create a new database for storing Webmentions

createdb personalwebsite

- Create a Table to Store Webmentions: Here's the SQL structure to store Webmentions in your database:

-- Define a table structure to store Webmentions

-- DROP TABLE public.webmentions;

CREATE TABLE public.webmentions (

id serial4 NOT NULL,

wm_id int8 NOT NULL,

wm_source text NOT NULL,

wm_target text NOT NULL,

wm_property text NOT NULL,

url text NULL,

author_name text NULL,

author_photo text NULL,

author_url text NULL,

content_html text NULL,

content_text text NULL,

published_at timestamp NULL,

received_at timestamp DEFAULT CURRENT_TIMESTAMP NULL,

CONSTRAINT webmentions_pkey PRIMARY KEY (id),

CONSTRAINT webmentions_wm_id_key UNIQUE (wm_id)

);

- Create a Log Table for Fetching Webmentions: This table logs each fetch, allowing you to track when Webmentions were last retrieved and avoid overloading the service:

-- public.webmention_fetch_log definition

-- Drop table

-- DROP TABLE public.webmention_fetch_log;

CREATE TABLE public.webmention_fetch_log (

id serial4 NOT NULL,

last_fetch timestamptz NOT NULL,

CONSTRAINT webmention_fetch_log_pkey PRIMARY KEY (id)

);

Using Webmention.io to Receive Webmentions

Before we store webmentions to display them we have to get them somewhere. If you dont want to implement your own Webmentions Receiver I recommend to use Webmention.io which is a service to easily receive webmentions.

Steps:

- Set Up IndieAuth for Your Site: Follow these IndieAuth setup instructions

- Sign Up for Webmention.io: Create an account at Webmention.io

- Add the Webmention Link to Your

_app.tsx:

<Head>

...

<link rel="webmention" href="proxy.php?url=https://webmention.io/username/webmention" />

...

</Head>

From here on, Webmention.io will collect all the Webmentions for your site. Now, let’s create a script that fetches and stores them every ten minutes in the PostgreSQL database

Fetching Webmentions from Webmention.io API

To keep Webmentions up-to-date, we'll fetch them periodically. Here’s the logic of the script.

Create a script src/utils/fetch-webmentions.js to fetch and store Webmentions:

import fetch from "node-fetch"

import { Pool } from "pg"

const pool = new Pool({

user: process.env.PGUSER,

host: process.env.PGHOST,

database: process.env.PGDATABASE,

password: process.env.PGPASSWORD,

port: process.env.PGPORT,

})

function isNotOlderThanTenMinutes(date: Date) {

if (!(date instanceof Date) || isNaN(date.getTime())) return false

return Date.now() - date.getTime() <= 10 * 60 * 1000

}

export async function fetchAndStoreWebmentions() {

const client = await pool.connect() // Use a client for transaction safety

try {

console.log("🔄 Checking last webmention fetch...")

// Get the latest fetch timestamp

const { rows } = await client.query(

`SELECT last_fetch FROM webmention_fetch_log ORDER BY last_fetch DESC LIMIT 1`

)

const lastFetchDate = rows[0]?.last_fetch

if (isNotOlderThanTenMinutes(lastFetchDate)) {

console.log("✅ Webmentions are already updated!")

return

}

// Insert new fetch timestamp

const now = new Date().toISOString()

await client.query(`INSERT INTO webmention_fetch_log (last_fetch) VALUES ($1)`, [now])

console.log("📌 Updated Webmentions fetch log")

// Generate Webmention API URL

const baseUrl = `https://webmention.io/api/mentions.jf2?domain=mxd.codes&per-page=1000&page=0&token=${process.env.WEBMENTION_IO_TOKEN}`

const webmentionsUrl =

lastFetchDate instanceof Date && !isNaN(lastFetchDate.getTime())

? `${baseUrl}&since=${lastFetchDate.toISOString()}`

: baseUrl

// Fetch new Webmentions from Webmention.io

console.log("🔄 Fetching webmentions from Webmention.io...")

const response = await fetch(webmentionsUrl)

const { children: webmentions } = await response.json()

if (!Array.isArray(webmentions) || webmentions.length === 0) {

console.log("⚠️ No new webmentions found.")

return

}

console.log(`📥 Processing ${webmentions.length} webmentions...`)

// Prepare batch insert query

const insertQuery = `

INSERT INTO webmentions (

wm_id, wm_source, wm_target, wm_property, url,

author_name, author_photo, author_url, content_html, content_text, published_at, received_at

) VALUES ($1, $2, $3, $4, $5, $6, $7, $8, $9, $10, $11, $12)

ON CONFLICT (wm_id) DO NOTHING;

`

for (const mention of webmentions) {

const values = [

mention["wm-id"],

mention["wm-source"],

mention["wm-target"],

mention["wm-property"],

mention["url"],

mention.author?.name || null,

mention.author?.photo || null,

mention.author?.url || null,

mention.content?.html || null,

mention.content?.text || null,

mention.published ? new Date(mention.published) : null,

new Date(mention["wm-received"]),

]

await pool.query(insertQuery, values)

}

console.log(`✅ Stored ${webmentions.length} webmentions successfully!`)

} catch (error) {

console.error("❌ Error fetching or storing webmentions:", error)

} finally {

client.release() // Ensure client is released back to the pool

}

}

// Run the function

fetchAndStoreWebmentions()

This function can now be called everytime before webmentions are queried for a page from PostgreSQL database. Ideally, you should abstract the logic for determining whether new webmentions need to be fetched into an API layer. This prevents unnecessary database queries with every request, but this is out of scope for this article.

Retrieving Webmentions for a Page

To retrieve Webmentions dynamically for a page, we create an API route in pages/api/get-webmentions.js. This route allows us to fetch mentions for a specific target URL stored in our PostgreSQL database.

import { Pool } from "pg"

import { fetchAndStoreWebmentions } from "@/src/utils/fetch-webmentions"

const pool = new Pool({

user: process.env.PGUSER,

host: process.env.PGHOST,

database: process.env.PGDATABASE,

password: process.env.PGPASSWORD,

port: process.env.PGPORT,

})

export default async function handler(req, res) {

if (req.method !== "GET")

return res.status(405).json({ error: "Method not allowed" })

const { target } = req.query

if (!target) return res.status(400).json({ error: "Missing target URL" })

// Updating Webmentions before selecting for page url

await fetchAndStoreWebmentions()

const query = `SELECT wm_id, wm_source, wm_target, wm_property, url, author_name, author_photo, author_url, content_text, published_at FROM webmentions WHERE wm_target LIKE '%${target}%' ORDER BY received_at DESC;`

const result = await pool.query(query)

res.json(result.rows)

}

Now you can call this API route and pass a query param target with the URL to get all Webmentions for a page.

Displaying Webmentions in Next.js

To visually display Webmentions on our website, we create a dedicated React component components/Webmentions.js. This component fetches the Webmentions from our API and renders them.

import { useEffect, useState } from "react";

// React component to display Webmentions for a given page

const Webmentions = ({ targetUrl }) => {

const [mentions, setMentions] = useState([]);

useEffect(() => {

// Fetch Webmentions for the target URL from the API route

fetch(`/api/get-webmentions?target=${encodeURIComponent(targetUrl)}`)

.then((res) => res.json())

.then((data) => setMentions(data));

}, [targetUrl]);

return (

<div>

<h3>Webmentions</h3>

{mentions.length === 0 ? (

<p>No webmentions yet.</p>

) : (

mentions.map((mention) => (

<div className="vcard h-card p-author" key={mention.wm_id} style={{ border: "1px solid #ddd", padding: "10px", marginBottom: "10px" }}>

{mention.author_photo && (

// Display author profile picture if available

<img src={mention.author_photo} alt={mention.author_name} className="u-photo" style={{ width: "40px", height: "40px", borderRadius: "50%" }} />

)}

<p>

<strong>{mention.author_name}</strong> {mention.wm_property.replace("-", " ")}

{mention.wm_property === "like-of" && " ❤️"}

{mention.wm_property === "repost-of" && " 🔁"}

{mention.wm_property === "in-reply-to" && " 💬"}

</p>

<a className="u-url" href={mention.url || mention.wm_source} target="_blank" rel="noopener noreferrer">View Mention</a>

</div>

))

)}

</div>

);

};

export default Webmentions;

I highly recommend periodically verifying the authenticity of Webmention sources to prevent spam.

Testing Webmentions

To ensure your Webmentions setup works correctly, use the following tools:

- Webmention Rocks! – A tool for testing Webmention implementations.

By integrating Webmentions into your Next.js site, you can create an interactive and engaging web community. Whether you're running a blog, portfolio, or e-commerce site, Webmentions provide a powerful way to enhance content, boost SEO, and encourage meaningful connections.

If you have created a response to this post you can send me a webmention and it will appear below the post.

More Links

- IndieWeb Webmention

- You can read more about Webmention and the IndieWeb on the https://indieweb.org/.

Many developers rely on Google’s Static Maps API to generate map images, but this has limitations such as:

- API rate limits

- Cost for high-volume usage

- Potential data privacy concerns

- Dependency on an external service

For a long time I was looking for an alternative to Maps Static API from Google which can be selfhosted but I couldn't find anything which seemed to fit my needs.

However I found staticmaps which is a Node.JS library for creating map images with markers, polylines, polygons and text. But the library doesn't provide a web interface so I decided to built one on top of it with express and containerized the staticmaps API.

Docker Static Maps API

docker-staticmaps is a containerized web version for staticmaps with express.

In general docker-staticmaps provides a self-hosted alternative that allows you to generate static map images on your own server without relying on third-party APIs.

Demo

Usage

To get a static map from the endpoint /staticmaps several prameters have to be provided.

center- Center coordinates of the map in the formatlon, latzoom- Set the zoom level for the map.width- default300- Width in pixels of the final imageheight- default300- Height in pixels of the final imageformat- defaultpng(e.g.png,jpgorwebp)basemap- defaultosm- Map base layer

Basemaps

For different basemaps docker-staticmaps is using exisiting tile-services from various providers. Be sure to check their Terms of Use for your use case or use a custom tileserver with the tileUrl parameter!

basemap- default "osm" - Select the basemaposm- default - Open Street Mapstreets- Esri street basemapsatellite- Esri's satellite basemaphybrid- Satellite basemap with labelstopo- Esri topographic mapgray- Esri gray canvas with labelsgray-background- Esri gray canvas without labelsoceans- Esri ocean basemapnational-geographic- National Geographic basemapotm- OpenTopoMapstamen-toner- Stamen Toner black and white map with labelsstamen-toner-background- Stamen Toner map without labelsstamen-toner-lite- Stamen Toner Light with labelsstamen-terrain- Stamen Terrain with labelsstamen-terrain-background- Stamen Terrain without labelsstamen-watercolor- Stamen Watercolorcarto-light- Carto Free usage for up to 75,000 mapviews per month, non-commercial services only.carto-dark- Carto Free usage for up to 75,000 mapviews per month, non-commercial services only.carto-voyager- Carto Free usage for up to 75,000 mapviews per month, non-commercial services only.custom- Pass through the tile URL using parametertileurl

Polylines

With the parameter polyline you can add a polyline to the map in the following format:

polyline=polylineStyle|polylineCoord1|polylineCoord2|...

polylineCoord- required - in formatlat,lonand seperated by|. Atleast two locations are needed to draw a polyline.

The polylineStyle consists of the following two parameters separated by |.

weight- default5- Weight of the polyline in pixels, e.g.weight:5color- defaultblue-24-Bit-color hex value, e.g.color:0000ff

If no center is specified, the polyline will be centered.

Polyline with no

zoom, weight:6 and color:0000ff

http://localhost:3000/staticmaps?width=600&height=600&polyline=weight:6|color:0000ff|48.726304979176675,-3.9829935637739382|48.72623035828412,-3.9829726446543385|48.726126671101639,-3.9829546542797467|48.725965124843256,-3.9829070729298808|48.725871429380568,-3.9828726793245273|48.725764250990267,-3.9828064532306628|48.725679557682362,-3.9827385375789146|48.72567025076134,-3.9827310750289113|48.725529844164292,-3.9826617613709225|48.725412537198615,-3.9826296635284164|48.725351694726704,-3.9826201452878531|48.725258599474508,-3.9826063049230411|48.725157520450125,-3.9825900299314232|48.725077863838543,-3.9825779905509102|48.724930435729831,-3.9825514102373938|48.724815578113535,-3.9825237355887291|48.724760905376989,-3.9825013965800564|48.724677938456551,-3.9824534296566916|48.724379435330384,-3.9822469276001118|48.724304509274596,-3.9821850264836076|48.7242453124599,-3.9821320570321772|48.724206187829317,-3.9821063430223207|48.724117073204575,-3.9820862134785551

Polygons

With the parameter polygon you can add a polygon to the map in the following format:

polygon=polygonStyle|polygonCoord1|polygonCoord2|...

polygonCoord- required - in formatlat,lonand seperated by|. First and last locations have to be the same to close the polygon.

The polygonStyle consists of the following two parameters separated by |.

color- defaultblue-24-Bit-color hex value, e.g.color:4874dbweight- default5- Weight of the polygon in pixels, e.g.weight:5fill- defaultgreen-24-Bit-color hex value, e.g.fill:eb7a34

If no center is specified, the polygon will be centered.

http://localhost:3000/staticmaps?width=600&height=600&polygon=color:4874db|weight:7|fill:eb7a34|41.891169,12.491691|41.890633,12.493697|41.889012,12.492989|41.889467,12.490811|41.891169,12.491691Polygon with no

zoom, color:4874db,weight:7 and fill:eb7a3

Markers

With the parameter markers you can draw one or multiple markers depending on how much pair of coordinates you pass to the parameter

markers=markerCoord1|markerCoord2|...

markerCoord- required - in formatlat,lonand separated by|. Atleast one coordinate is needed to draw a marker.

If no center is specified, the markers will be centered.

Markers

http://localhost:3000/staticmaps?width=600&height=600&markers=48.726304979176675,-3.9829935637739382|48.724117073204575,-3.9820862134785551

Circles

With the parameter circle you can add a circle to the map in the following format:

circle=circleStyle|circleCoord

circleCoord- required - in formatlat,lonand separated by|. Atleast one locations is needed to draw a marker.

The circleStyle consists of the following parameters seperated by |.

radius- required - Circle radius in meter, e.g.radius:500color- default#0000bb- Stroke color of the circle, e.g.color:#0000bbwidth- default3- Stroke width of the circle, e.g.width:3fill- default#AA0000- Fill color of the circle, e.g.fill:#AA0000

If no center is specified, the circle will be centered.

Circle with no zoom

http://localhost:3000/staticmaps?width=600&height=600&basemap=osm&circle=radius:100|48.726304979176675,-3.9829935637739382

More usage Examples

Minimal example:

center and zoom

http://localhost:3000/staticmaps?center=-119.49280,37.81084&zoom=9

width=500, height=500, center=-73.99515,40.76761, zoom=10, format=webp, basemap=carto-voyager

http://localhost:3000/staticmaps?width=500&height=500¢er=-73.99515,40.76761&zoom=10&format=webp&basemap=carto-voyager

Markers and Polyline

http://localhost:3000/staticmaps?width=600&height=600&polyline=weight:6|color:0000ff|48.726304979176675,-3.9829935637739382|48.72623035828412,-3.9829726446543385|48.726126671101639,-3.9829546542797467|48.725965124843256,-3.9829070729298808|48.725871429380568,-3.9828726793245273|48.725764250990267,-3.9828064532306628|48.725679557682362,-3.9827385375789146|48.72567025076134,-3.9827310750289113|48.725529844164292,-3.9826617613709225|48.725412537198615,-3.9826296635284164|48.725351694726704,-3.9826201452878531|48.725258599474508,-3.9826063049230411|48.725157520450125,-3.9825900299314232|48.725077863838543,-3.9825779905509102|48.724930435729831,-3.9825514102373938|48.724815578113535,-3.9825237355887291|48.724760905376989,-3.9825013965800564|48.724677938456551,-3.9824534296566916|48.724379435330384,-3.9822469276001118|48.724304509274596,-3.9821850264836076|48.7242453124599,-3.9821320570321772|48.724206187829317,-3.9821063430223207|48.724117073204575,-3.9820862134785551&markers=48.726304979176675,-3.9829935637739382|48.724117073204575,-3.9820862134785551

Deployment

with Docker

docker run -d \

--name='static-maps-api' \

-p '3003:3000/tcp' \

'mxdcodes/docker-staticmaps:latest'

with Node.js

git clone https://github.com/dietrichmax/docker-staticmaps

npm i

npm run start

Links

]]>

In general there are two possibilies to use GoogleAdsense on your GatsbyJS website:

- Auto Ads and

- custom ad blocks.

Depending on whether you choose to include Adsense ads on certain spots or whether you will leave this job to the Google AI, you can choose one/and or the other.

Auto Ads

With Auto Ads, the optimal positions for an advertising banner are determined using a Google AI and a display ad is automatically switched there. All you have to do is place the following AdSense code in html.js.

<script data-ad-client="ca-pub-0037698828864449" async src="proxy.php?url=https://pagead2.googlesyndication.com/pagead/js/adsbygoogle.js"></script>

and activate Auto ads in Adsense.

and activate Auto ads in Adsense.

On GIS-Netzwerk.com I used Auto ads and I'm honestly surprised how well it works. Ads are displayed in a text every few paragraphs and are also responsive.

Ad loads

You also have the option of increasing or decreasing the number of ads in the settings. Unfortunately, you cannot specify a specific number of ads.

In my opinion, a lot of ads are shown even when you set it to "min". You can play around with the ad load and find out the best setting for your purposes. Somestimes it can take a few minutes until the new ad load is effective.

Display formats

You can also influence the ad formats. Basically there are:

- In-page ads (displayed in the main part of the page)

- Matched content (tool for content promotion)

- Anchor ads (mobile ads on the edge of the screen)

- Vignette ads (mobile full screen display, at page transitions)

I have only deactivated anchor texts, because I personally find them very annoying.

In addition, you can also completely exclude individual pages from advertisements.

Adsense with GatsbyJS

If you want to use Auto ads on your GatsbyJS page, you can do it super easily with the plugin gatsby-plugin-google-adsense.

Install

npm install --save gatsby-plugin-google-adsense

or

yarn add gatsby-plugin-google-adsense

modify gatsby-config.js

// In your gatsby-config.js file

plugins: [

{

resolve: `gatsby-plugin-google-adsense`,

options: {

publisherId: `ca-pub-xxxxxxxxxx`

},

},

]

The remaining settings can then be adjusted on Adsense.

Ad units

In addition to auto ads, there is also the "classic" option of inserting individual ad units at specific positions. With the React Component react-adsense you can insert Google AdSense and Baidu ads in any place.

npm install --save react-adsense

or

yarn add react-adsense

In order for the components to be rendered, you still need the AdSense script code. You can either insert this manually in the html.js file or, if you want to combine individual ad units with Auto ads, you can also use the plug-in already mentioned to insert the script.

When auto ads and individual ad units are combined, the individual ad units always have a higher "priority". This means that all ad units that are inserted manually are usually also rendered and, if the text / ads ratio permits, additional ads from Auto ads are automatically inserted.

If the script has been integrated and react-adsense has been installed, you can use

import React from 'react';

import AdSense from 'react-adsense';

// ads with no set-up

<AdSense.Google

client='ca-pub-7292810486004926'

slot='7806394673'

/>

// ads with custom format

<AdSense.Google

client='ca-pub-7292810486004926'

slot='7806394673'

style={{ width: 500, height: 300, float: 'left' }}

format=''

/>

// responsive and native ads

<AdSense.Google

client='ca-pub-7292810486004926'

slot='7806394673'

style={{ display: 'block' }}

layout='in-article'

format='fluid'

/>

// auto full width responsive ads

<AdSense.Google

client='ca-pub-7292810486004926'

slot='7806394673'

style={{ display: 'block' }}

format='auto'

responsive='true'

layoutKey='-gw-1+2a-9x+5c'

/>

to insert components for the ad units.

The respective client id

client='ca-pub-7292810486004926'

and the ad slot

slot='7806394673'

must always be specified.

The rest is optional.

Optional props:

className:

style:

layout:

layoutKey:

format:

responsive:

In case you have more questions there is also ad Adsense community where you can get some answers. Google AdSense Help Community

]]>

Maps have long been a fundamental element in web development, transforming static websites into dynamic, location-aware applications. Whether you're navigating through the bustling streets of a city, planning a route for your next adventure, or visualizing data in a geographic context, maps play a crucial role in enhancing user experiences.

Overview of OpenLayers and its Capabilities

OpenLayers, a robust open-source JavaScript library, stands at the forefront of enabling you to seamlessly integrate interactive maps into web applications. Its versatile and feature-rich nature makes it a go-to choice for projects requiring dynamic geospatial visualizations.

At its core, OpenLayers provides a comprehensive set of tools to manipulate maps, overlay data, and interact with geographic information. Its capabilities extend from simple map displays to complex GIS applications, offering you the flexibility to create compelling and interactive mapping solutions. OpenLayers supports a modular and extensible architecture, allowing you to tailor their maps precisely to project requirements.

Explanation of Key Concepts: Maps, Layers, Views, and Sources

Understanding the key concepts within OpenLayers is fundamental to harnessing its full potential:

Map

In OpenLayers, a map is a container for various layers and the view, serving as the canvas where geographical data is displayed. you can create multiple maps within an application, each with its set of layers and views.

The markup below could be used to create a <div> that contains your map.

<div id="map" style="width: 100%; height: 400px"></div>

The script below constructs a map that is rendered in the <div> above, using the map id of the element as a selector.

import Map from 'ol/Map.js';

const map = new Map({target: 'map'});

API Doc: ol/Map

View

The view in OpenLayers determines the center, zoom and projection of the map. It acts as the window through which users observe the geographic data. you can configure different views to represent varying perspectives or zoom levels within a single map.

import View from 'ol/View.js';

map.setView(new View({

center: [0, 0],

zoom: 2,

}));

The projection determines the coordinate system of the center and the units for map resolution calculations. If not specified (like in the above snippet), the default projection is Spherical Mercator (EPSG:3857), with meters as map units.

The available zoom levels are determined by maxZoom (default: 28), zoomFactor (default: 2) and maxResolution (default is calculated in such a way that the projection's validity extent fits in a 256x256 pixel tile).

API Doc: ol/View

Source

Sources provide the data for layers. OpenLayers supports different sources, including Tile sources for raster data, Vector sources for vector data, and Image sources for static images. These sources can fetch data from various providers or be customized to handle specific data formats.

To get remote data for a layer you can use the ol/source subclasses.

import OSM from 'ol/source/OSM.js';

const source = OSM();

API Doc: ol/source.

Layer

Layers define the visual content of the map. OpenLayers supports various layer types, such as Tile layers for raster data, Vector layers for vector data, and Image layers for rendering images. Layers can be stacked to combine different types of information into a single, coherent map.

ol/layer/Tile- Renders sources that provide tiled images in grids that are organized by zoom levels for specific resolutions.ol/layer/Image- Renders sources that provide map images at arbitrary extents and resolutions.ol/layer/Vector- Renders vector data client-side.ol/layer/VectorTile- Renders data that is provided as vector tiles.

import TileLayer from 'ol/layer/Tile.js';

// ...

const layer = new TileLayer({source: source});

map.addLayer(layer);

API Doc: ol/slayer.

Installing OpenLayers

To start your journey into the world of interactive maps with OpenLayers and React, the first step is to install OpenLayers using your preferred package manager – npm or yarn. Open a terminal and execute one of the following commands:

npm install ol

# or

yarn add ol

This command fetches the latest version of OpenLayers and installs it as a dependency in your project. With the library now available, you're ready to embark on the next steps of integrating OpenLayers with React.

Setting Up a Basic React Component for the Map

Now that OpenLayers is part of your project, the next crucial step is to create a React component that will serve as the container for your interactive map.

If you try to render the Map before the component has been mounted (meaning outside of useEffect) like following you will get an error message.

const MapComponent = () => {

const mapRef = useRef()

// Incorrect: Rendering content before the component has mounted

const map = new Map({

target: mapRef.current

...

})

return <div ref={mapRef} style={{ width: '100%', height: '400px' }}></div>;

};

Solution:

Ensure that you only render content when the component has properly mounted. You can use lifecycle methods like componentDidMount in class components or useEffect in functional components.

const MapComponent = () => {

const mapRef = useRef()

useEffect(() => {

// Code here runs after the component has mounted

const map = new Map({

target: mapRef.current,

...

}

return () => map.setTarget(undefined)

}, []);

return <div ref={mapRef} style={{ width: '100%', height: '400px' }}></div>;

};

The return function will reponsible for resource cleanup for the map.

So a basic OpenLayers React example could look like the following:

// MapComponent.js

import React, { useEffect, useRef } from "react"

import { Map, View } from "ol"

import TileLayer from "ol/layer/Tile"

import OSM from "ol/source/OSM"

import "ol/ol.css"

function MapComponent() {

const mapRef = useRef<HTMLDivElement | null>(null)

useEffect(() => {

const osmLayer = new TileLayer({

preload: Infinity,

source: new OSM(),

})

const map = new Map({

target: mapRef.current,

layers: [osmLayer],

view: new View({

center: [0, 0],

zoom: 0,

}),

})

return () => map.setTarget(undefined)

}, [])

return (

<div

style={{ height: "300px", width: "100%" }}

ref={mapRef}

className="map-container"

/>

)

}

export default MapComponent

In this example, the MapComponent initializes an OpenLayers map with a simple OpenStreetMap layer and the useEffect hook ensures that the map is created when the component mounts.

To ensure the correct styling and functionality of OpenLayers, it's crucial to import the necessary CSS and modules. In the MapComponent.js file, notice the import statement for the OpenLayers CSS:

import 'ol/ol.css'; // Import OpenLayers CSS

This line imports the essential stylesheets required for OpenLayers to render properly. Additionally, other modules from OpenLayers, such as Map, View, TileLayer, and OSM, are imported to create the map instance and layers.

By following these steps, you've successfully set up a basic React component housing an OpenLayers map. You're now ready to delve deeper into the capabilities of OpenLayers and explore advanced features for creating dynamic and interactive maps within your React applications.

Also I created two examples for React and Openlayers:

- OpenLayers 6 React example using a functional component

- OpenLayers 6 React example using a class component

Markers, Popups, and Custom Overlays

Markers, popups, and custom overlays enhance the visual storytelling capabilities of a map, providing users with valuable context. OpenLayers simplifies the process of adding these elements:

- Markers: Representing specific points of interest on a map becomes intuitive with markers. you can add markers to highlight locations, making the map more informative and engaging.

- Popups: Interactive popups can be attached to markers, providing additional information when users click on specific map features. This allows for a more detailed exploration of the data.

- Custom Overlays: OpenLayers allows you to create custom overlays, enabling the display of additional information in a tailored manner. This could include tooltips, legends, or any other supplementary elements.

Here's a simplified example demonstrating the addition of a marker with a popup:

// MarkerPopupMap.js

import { useEffect, useRef } from "react"

import "ol/ol.css"

import Map from "ol/Map"

import View from "ol/View"

import Overlay from "ol/Overlay"

import { toLonLat } from "ol/proj.js"

import { toStringHDMS } from "ol/coordinate.js"

import styled from "styled-components"

import { Icon, Style } from "ol/style.js"

import Feature from "ol/Feature.js"

import { Vector as VectorSource } from "ol/source.js"

import { Tile as TileLayer, Vector as VectorLayer } from "ol/layer.js"

import Point from "ol/geom/Point.js"

const Popup = styled.div`

background-color: var(--content-bg);

padding: var(--space-sm);

`

const MarkerPopupMap = () => {

const mapRef = useRef()

const popupRef = useRef()

const osm = new TileLayer({

preload: Infinity,

source: new OSM(),

})

const iconFeature = new Feature({

geometry: new Point([0, 0]),

name: "Null Island",

population: 4000,

rainfall: 500,

})

const iconStyle = new Style({

image: new Icon({

anchor: [0.5, 46],

anchorXUnits: "fraction",

anchorYUnits: "pixels",

src: "https://openlayers.org/en/latest/examples/data/icon.png",

}),

})

iconFeature.setStyle(iconStyle)

const vectorSource = new VectorSource({

features: [iconFeature],

})

const vectorLayer = new VectorLayer({

source: vectorSource,

})

useEffect(() => {

const overlay = new Overlay({

element: popupRef.current,

autoPan: {

animation: {

duration: 250,

},

},

})

const map = new Map({

target: mapRef.current,

layers: [osm, vectorLayer],

view: new View({

center: [0, 0],

zoom: 3,

}),

overlays: [overlay],

})

/**

* Add a click handler to the map to render the popup.

*/

map.on("singleclick", function (evt) {

// Get Coordinates of click

const coordinate = evt.coordinate;

const hdms = toStringHDMS(toLonLat(coordinate));

// Show popup at clicked position

overlay.setPosition(coordinate);

if (popupRef.current) {

popupRef.current.innerHTML = `<p>You clicked here:</p><code>` + hdms + `</code>`;

}

overlay.setPosition(coordinate)

})

return () => map.setTarget(undefined)

}, [])

return (

<div>

<div ref={mapRef} style={{ width: "100%", height: "400px" }} />

<div ref={popupRef} className="ol-popup" style={popupStyle} />

</div>

)

}

const popupStyle = {

position: "absolute",

backgroundColor: "white",

padding: "5px",

borderRadius: "5px",

border: "1px solid black",

transform: "translate(-50%, -100%)",

pointerEvents: "none",

width: "220px",

color: "black"

};

export default MarkerPopupMap

Click anywhere on the map to create a popup:

Handling Map Events and User Interactions:

Interactive maps come to life when you handle events and user interactions effectively. OpenLayers simplifies this process by providing robust event handling mechanisms. Consider the following example demonstrating how to capture a click event on the map:

// Handle a click event on the map

map.on('click', (event) => {

const clickedCoordinate = event.coordinate;

console.log('Clicked Coordinate:', clickedCoordinate);

});

This example displays OpenLayers' event handling to log the coordinates of a click event on the map. you can extend this functionality to respond to various user interactions, such as dragging, zooming, or even custom gestures.

useState for Managing State: Use the useState hook to manage state within the React component. This is particularly useful for dynamic changes to the map, such as updating the center or zoom level based on user interactions.

const [mapCenter, setMapCenter] = useState([0, 0]);

// Update the map's center based on user interaction

const handleMapInteraction = (event) => {

const newCenter = event.map.getView().getCenter();

setMapCenter(newCenter);

};

Advanced OpenLayers Map Features

Adding Vector Layers and Working with GeoJSON Data

Vector layers in OpenLayers allow you to display and interact with vector data, opening up possibilities for intricate and detailed map representations. Leveraging GeoJSON, a popular format for encoding geographic data, is a common practice. Below is an example of incorporating a vector layer with GeoJSON data into a React component:

// VectorLayerMap.js

import { useEffect, useRef } from "react"

import "ol/ol.css"

import Map from "ol/Map"

import View from "ol/View"

import TileLayer from "ol/layer/Tile"

import OSM from "ol/source/OSM"

import VectorLayer from "ol/layer/Vector"

import VectorSource from "ol/source/Vector"

import GeoJSON from "ol/format/GeoJSON"

import {getCenter} from 'ol/extent';

const VectorLayerMap = () => {

const mapRef = useRef()

// read geojson feature

const geoJSONFeatures = new GeoJSON().readFeatures(geojsonObject)

// create vector source

const vectorSource = new VectorSource({

features: geoJSONFeatures,

})

// create vector layer with source

const vectorLayer = new VectorLayer({

source: vectorSource,

})

// default view

const view = new View({

center: [0, 0],

zoom: 2,

})

useEffect(() => {

const map = new Map({

target: mapRef.current,

layers: [

new TileLayer({

source: new OSM(),

}),

vectorLayer,

],

view: view

})

// fit view to geometry of geojson feature with padding

view.fit(geoJSONFeatures[0].getGeometry().getExtent(), { padding: [100, 100, 100, 100]});

return () => map.setTarget(undefined)

}, [])

return (

<div

ref={mapRef}

style={{ position: "relative", width: "100%", height: "400px" }}

></div>

)

}

export default VectorLayerMap

const geojsonObject = {

type: "Feature",

geometry: {

type: "MultiLineString",

coordinates: [

[

[-1e6, -7.5e5],

[-1e6, 7.5e5],

],

[

[1e6, -7.5e5],

[1e6, 7.5e5],

],

[

[-7.5e5, -1e6],

[7.5e5, -1e6],

],

[

[-7.5e5, 1e6],

[7.5e5, 1e6],

],

],

},

}

Optimizing React and OpenLayers Integration: Strategies for Rendering Performance

1. Addressing Rendering Performance Concerns:

Efficient rendering is paramount in any web application, and integrating OpenLayers with React requires careful consideration of performance concerns. Here are some strategies to address rendering performance:

Debouncing and Throttling: When handling events that trigger frequent updates, such as map movements or zoom changes, implement debouncing or throttling techniques. This prevents excessive re-renders and ensures that updates are processed at a controlled rate.

Batched State Updates: Use React's

setStatebatching mechanism to group multiple state updates into a single render cycle. This reduces the number of renders triggered by multiple state changes, resulting in a more efficient rendering process.

2. Implementing Lazy Loading for Map Components:

To enhance overall application performance, especially in scenarios where maps are not initially visible or are part of larger applications, consider implementing lazy loading for map components. This ensures that the OpenLayers library and associated map components are only loaded when needed.

- Dynamic Imports: Use dynamic imports and React's

React.lazyto load OpenLayers and map components lazily. This approach allows you to split your code into smaller chunks that are loaded on-demand, reducing the initial page load time.

// Example using React.lazy

const LazyLoadedMap = React.lazy(() => import('./LazyLoadedMap'));

const App = () => (

<div>

{/* Other components */}

<React.Suspense fallback={<div>Loading...</div>}>

<LazyLoadedMap />

</React.Suspense>

</div>

);