Artificial Intelligence (AI) is no longer a futuristic concept confined to science fiction. It's here, and it's everywhere. From our web browsers to our operating systems, AI is becoming an integral part of our digital lives. But as AI continues to permeate every aspect of our daily routines, one question arises: Is this always necessary?

The AI Everywhere Phenomenon

AI is being added to products and services at an unprecedented rate. Google recently announced AI integration in their Chrome browser, promising a more personalized browsing experience. OnePlus has introduced AI in its latest Oxygen OS 16 to create a Mind Space that collects and queries user data. Even Microsoft's SQL Server Management Studio (SSMS) version 22 comes with Copilot, an AI-powered assistant designed to streamline database management tasks.

While these advancements sound impressive, they also raise important questions about the necessity of AI in every tool we use. Is AI always an improvement, or are we risking overcomplication and potential security vulnerabilities?

In today's market, the mere mention of AI can be a powerful selling point. Products and services that incorporate AI often attract more attention and can command higher prices, simply because AI is seen as cutting-edge and innovative. This phenomenon is driven by a combination of consumer curiosity, the fear of missing out (FOMO), and the perception that AI-equipped products are more advanced or superior. However, this can lead to a situation where AI is added to products not because it genuinely enhances functionality or user experience, but because it is perceived as a valuable marketing tool. Companies may feel pressured to integrate AI to keep up with competitors, even if the actual benefits to the user are minimal or nonexistent.

The Problem with Forced AI Integration

One of the main issues with the current trend of AI integration is the lack of user choice. Many AI features are forced upon users without the option to opt out. This can be frustrating for those who prefer simplicity or have concerns about privacy. Imagine your grandparents trying to use a web browser or an operating system with AI features they don't understand or need. For them, these tools should be simple and intuitive, not filled with complex features they never asked for. For many, these tools are meant to be straightforward and efficient, and adding AI might complicate things without adding significant value.

Moreover, the forced integration of AI can lead to unnecessary complexity. Take the example of Google Chrome. For most users, a browser is a tool to access the internet quickly and securely. Adding AI might introduce new features, but it could also slow down the browser or introduce new vulnerabilities. The focus should be on improving core functionalities rather than adding flashy features that may not provide real value to the user.

Privacy and Security Concerns

With AI collecting and analyzing user data, there are significant implications for privacy and security. Users may be unaware of the extent to which their data is being collected and used. Transparency about data usage and robust security measures are essential to protect user information. Companies should provide clear options for users to control their data and opt out of AI-driven features if they choose.

Consider OnePlus's Oxygen OS 16, which uses AI to create a "mind space" that collects and queries user data. While this might sound innovative, it raises questions about the necessity of such features and the potential risks to user privacy. Do users really need an AI to manage their digital lives, or is this just another gimmick to stay competitive?

Consider the following scenario: A hacker gains access to the personalized AI models used by a popular operating system. Each user's AI model is tailored to their habits, preferences, and behaviors. With this information, the hacker can craft highly targeted phishing attacks, impersonate users with uncanny accuracy, and even manipulate users into revealing sensitive information. The impact of such a breach could be devastating, leading to identity theft, financial loss, and a loss of trust in AI technologies. This underscores the importance of robust security measures to protect user data and ensure that AI systems are not vulnerable to exploitation.

The Impact on Critical Thinking

One of the concerning trends with the increasing reliance on AI is the tendency for people to reduce their own critical thinking and reflection. Instead of taking the time to think through a problem or question, many individuals turn to AI for quick answers. While AI can provide information rapidly, it's not always accurate or tailored to the individual's specific context.

This over-reliance on AI can lead to a decrease in problem-solving skills and independent thinking. It's essential for users to understand that AI should be used as a tool to assist, rather than replace, their own cognitive processes. By relying too heavily on AI, we risk losing our ability to think critically and make well-informed decisions.

The Limitations of AI Training Data

It's crucial to remember that AI systems are trained on data created by humans. This data can come from various sources like code on GitHub, articles on Wikipedia, or other online content. However, not all of this data is accurate, relevant, or up-to-date.

For instance, code on GitHub may contain bugs or outdated practices, and articles on Wikipedia can have errors or biases. As a result, AI systems can inadvertently learn and propagate these inaccuracies. This highlights the importance of critically evaluating the information provided by AI and not assuming that it is always correct or unbiased.

The Economic Impact on Documentation Platforms

The increasing reliance on AI for quick answers and solutions is also having a significant impact on platforms that traditionally rely on advertising revenue from their documentation. For instance, Tailwind, a popular CSS framework, has seen an 80% decline in revenue due to a decrease in traffic to their documentation pages.

Similarly, journalism platforms are experiencing a drop in readership as users turn to AI for instant news summaries and answers. As more users turn to AI for instant answers, platforms like Tailwind and journalism websites are struggling to maintain their revenue streams. This shift highlights the broader economic implications of AI on the tech ecosystem and the need for alternative revenue models for open-source projects and documentation platforms.

As AI continues to reshape the digital landscape, even our favorite programming libraries and tools are at risk. How would you feel if your favorite programming library started to decline because users are turning to AI for quick answers instead of consulting the documentation? Would you be willing to see your favorite tools struggle to maintain their relevance and revenue streams?

The Balance Between Innovation and Necessity

Striking a balance between innovation and necessity is crucial. While AI has the potential to bring about groundbreaking improvements, it should not come at the cost of simplicity and usability. Companies should focus on identifying areas where AI can genuinely make a difference and avoid implementing it just because it's the latest trend.

For instance, Microsoft's SSMS version 22 with Copilot could potentially streamline database management tasks. However, it's important to ask whether AI is necessary for every tool or if we're risking overcomplication. User feedback and thorough testing can help determine where AI adds value and where it might be superfluous.

The Importance of User Choice

Giving users the choice to opt in or out of AI features is essential. This approach respects user preferences and allows individuals to customize their experience according to their needs and comfort levels. When companies force AI on users without providing an option to disable it, they risk alienating those who prefer a simpler, more straightforward experience.

The importance of user choice cannot be overstated. Currently, the extent of what AI gathers from users is not always clear. Users should have the ability to clearly define what data can and cannot be used by AI systems. This could be achieved through a transparent and user-friendly consent mechanism, similar to the cookie consent pop-ups we see on websites. Just as users can choose which cookies to allow, they should be able to specify what data AI can collect and how it can be used. This level of transparency and control would not only empower users but also build trust in AI technologies. Companies should prioritize clear communication about data usage and provide straightforward options for users to manage their preferences.

The Future of AI: Striking the Right Balance

As AI continues to evolve, it's important for companies to consider the actual needs and preferences of their users. AI should be an opt-in feature rather than a forced addition. By prioritizing simplicity, security, and user choice, we can ensure that AI is used responsibly and effectively.

User feedback and thorough testing are crucial in determining where AI adds value and where it might be superfluous. Companies should focus on areas where AI can genuinely make a difference and avoid implementing it just because it's the latest trend.

Conclusion

While AI has the potential to revolutionize many aspects of our lives, its widespread integration into every product and service is not always necessary or beneficial. It's crucial for companies to consider the actual needs and preferences of their users. By prioritizing simplicity, security, and user choice, we can ensure that AI is used responsibly and effectively.

However, there is hope on the horizon. Innovations in privacy-focused AI, such as Confer, created by the founder of Signal, are emerging. These tools prioritize user privacy and data security, demonstrating that it is possible to harness the power of AI without compromising personal information. As these privacy-first AI solutions continue to develop, they offer a promising alternative to the current trend of data-hungry AI systems.

What are your thoughts on the proliferation of AI in everyday tools? Do you think it's necessary or just a trend?

]]>It was 5:06 AM on December 6, 2025, when my phone lit up with a notification from my server hosting provider:

Your VPS plan has reached its CPU limit, which may cause your website or application to slow down, or even become temporarily unavailable.

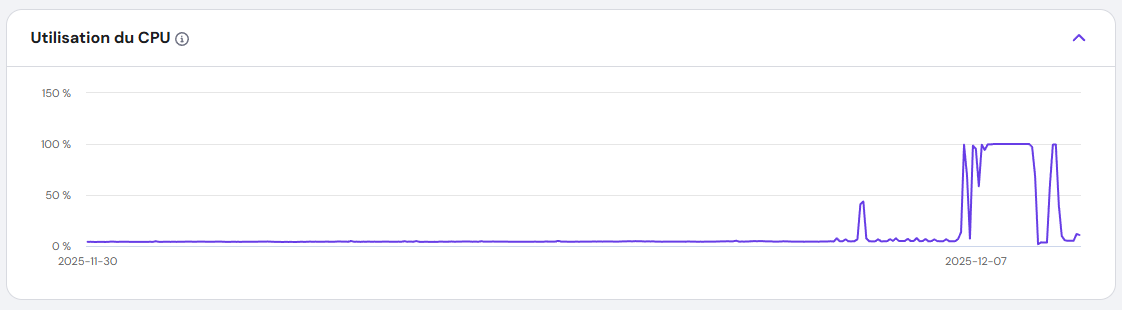

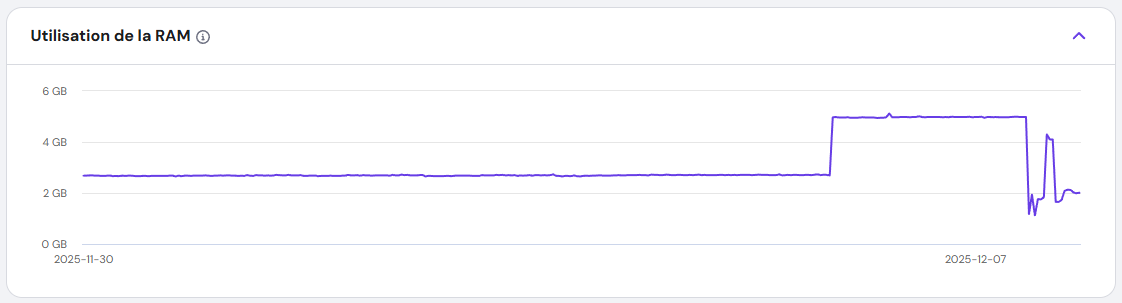

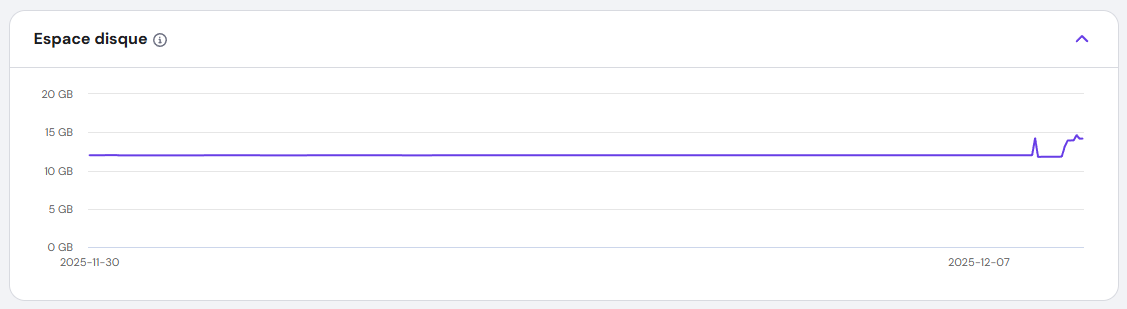

I rubbed my eyes, assuming it was a false alarm. But when I logged into my dashboard, the CPU usage graph told a different story: a flat line at 100%, stretching back over an hour. My VPS, which usually idled at a quiet 4%, was now screaming under full load.

I hadn’t touched the server in days. No new deployments. No configuration changes. Something was wrong.

Everything else seemed OK from my point of view.

The Hunt for the Culprit: Stopping the Bleeding

First Suspicions: DDoS or a Runaway Process?

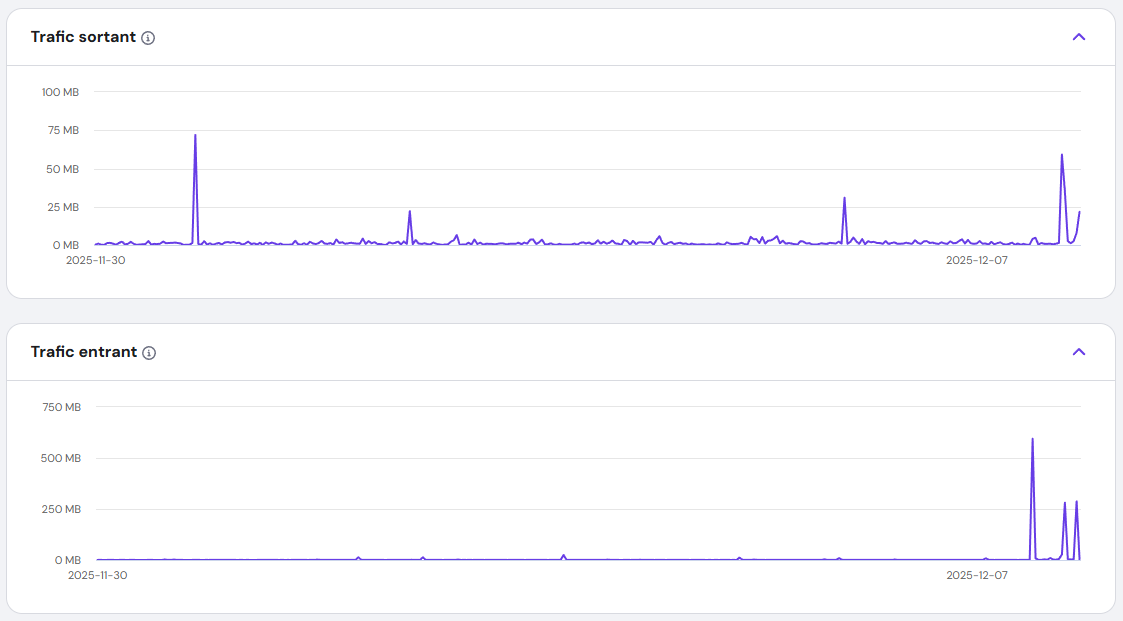

I ruled out a DDoS attack quickly—my traffic logs were normal. Next, I wondered if one of my applications had gone into an infinite loop. I restarted the VPS, but the problem persisted. The CPU usage remained stubbornly at 100%.

The Breakthrough: Umami Analytics

I run multiple Docker containers managed by Dokploy. One by one, I stopped each container, watching the CPU graph like a hawk. And then—success. When I stopped the Umami Analytics container, the CPU usage plummeted.

Umami? It’s just an analytics tool. Why was it suddenly consuming all my resources?

Uncovering the Malware: A Hidden Crypto Miner

The Virus Scan

I installed a virus scanner and let it run. Within minutes, it flagged a suspicious binary: fghfg, buried in a Docker volume. But the real shock came when I found apache.sh, a shell script connecting to https://tr.earn.top.I didn't know exactly what it was, but it knew it was not something good.

The Next.js Connection

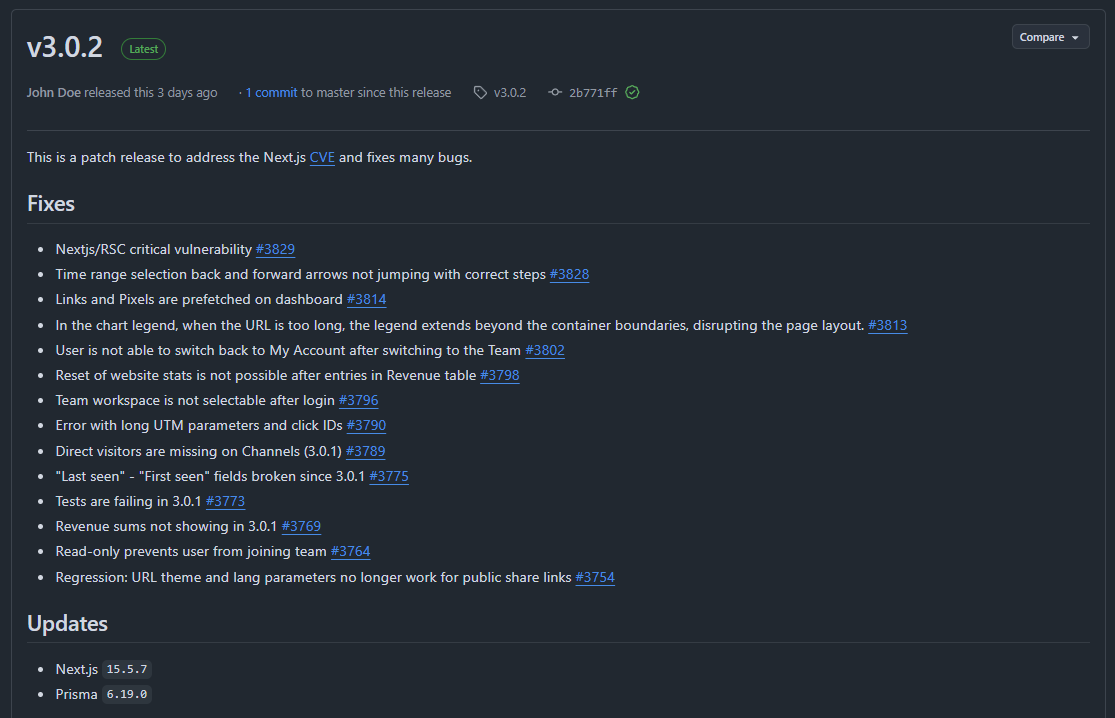

The files were in a folder named Nextjs. That’s when it clicked. Earlier that week, I’d read about CVE-2025-55182 and CVE-2025-66478, a critical vulnerability in React Server Components. I checked the Umami Analytics GitHub repo and saw the confirmation: a recent patch for the compromised Next.js 15.3.1 dependency.

I was running Umami 2.18.1, which used the vulnerable version. The Next.js team’s official blog post confirmed my fears: attackers were exploiting this flaw to inject malicious code.

The Fix: Reclaiming My Server

Delete the Malware

I immediately deleted fghfg and apache.sh, but I knew the damage might run deeper.

Update and Rebuild

I pulled the latest Umami Analytics (3.0.2), which used the patched Next.js version. Then, I rebuilt the Docker container from scratch, ensuring no traces of the malware remained.

Watch the Recovery

I refreshed the CPU graph. The line dropped from 100% to normal levels within minutes. My VPS was finally breathing again.

The Bigger Lesson: Supply Chain Attacks in Node.js

This wasn’t just bad luck—it was a supply chain attack. Someone had exploited a vulnerability in a trusted library (Next.js) to turn my server into a crypto-mining slave. The scariest part? I never saw it coming.

Why This Matters

- Outdated dependencies are a ticking time bomb. Even one unpatched library can open the door to attackers.

- Docker volumes can hide malware. If you’re not scanning them, you might miss hidden threats.

- Supply chain attacks are rising. The Node.js ecosystem, with its vast dependencies, is a prime target.

How to Protect Yourself: Lessons from the Front Lines

Automate Updates

Set up automated dependency updates for your projects. Tools like npm audit and yarn audit can catch vulnerabilities before they become disasters.

Scan Your Containers

Use Trivy or Clair to scan Docker images for hidden threats. Don’t assume your containers are safe—verify it.

Monitor Relentlessly

CPU spikes? Unusual processes? Investigate immediately. Tools like Netdata or Prometheus can alert you before things spiral out of control.

Minimize Your Attack Surface

Use minimal base images for Docker. The fewer packages you have, the fewer opportunities for attackers.

Conclusion: A Wake-Up Call

That night, I learned a hard lesson: Security isn’t just about firewalls—it’s about vigilance. A single outdated dependency turned my VPS into a crypto-mining rig. But by acting fast—updating, scanning, and monitoring—I took back control.

While this was a serious cyber attack, there’s something oddly rewarding about facing such challenges. It forced me to dig deeper, learn more, and grow as a developer and sysadmin. Every problem is an opportunity to sharpen your skills, and this one reminded me just how important it is to stay proactive in the ever-evolving world of cybersecurity.

The digital world is full of hidden threats, but you don’t have to be a victim. Stay updated, stay alert, and never let your guard down. And remember: even the toughest challenges can become valuable lessons.

]]>Imagine your self-hosted Azure DevOps agents as a team of hardworking robots. They build, test, and deploy your code day in and day out—no coffee breaks, no complaints. But just like your laptop after months of downloads, they start to slow down, cluttered with old files, cached data, and forgotten artifacts.

Enter Azure DevOps Maintenance Jobs: the automated cleanup crew that keeps your agents running like they just rolled off the assembly line. No more manual deletions, no more "why is my pipeline failing?" mysteries—just smooth, efficient automation.

In this guide, we’ll walk through:

- What Azure DevOps is and why it’s a developer’s best friend

- The lowdown on agent pools, pipelines, and releases

- Self-hosted agents: the good, the bad, and the "why did my disk fill up?"

- A step-by-step guide to setting up maintenance jobs

- Why this tiny feature is a game-changer for your CI/CD workflow

Ready to give your agents the TLC they deserve? Let’s get started!

What Is Azure DevOps?

Azure DevOps is Microsoft’s all-in-one platform for planning, developing, testing, and deploying software. It combines:

- Repos (Git repositories)

- Pipelines (CI/CD automation)

- Boards (Agile project management)

- Test Plans (manual and automated testing)

- Artifacts (package management)

Whether you’re a solo developer or part of a large enterprise, Azure DevOps helps streamline workflows, reduce manual errors, and speed up releases.

What Are Agent Pools, Pipelines, and Releases?

Agent Pools

An agent pool is a collection of machines (agents) that execute jobs in your pipelines. Azure DevOps offers two types:

- Microsoft-hosted agents (managed by Azure, no maintenance required)

- Self-hosted agents (run on your own infrastructure, giving you full control)

Pipelines & Releases

- Pipelines automate builds, tests, and deployments.

- Releases manage the deployment of your application across different environments (dev, staging, production).

Self-hosted agents are great for customization but require manual maintenance—unless you set up maintenance jobs!

Self-Hosted Agents: Pros & Cons

Pros

- Full control over hardware, software, and security

- Cost-effective for long-running or high-frequency jobs

- Custom environments (specific tools, dependencies, or configurations)

Cons

- Maintenance overhead (updates, cleanup, monitoring)

- Risk of clutter (old jobs, cached files, disk space issues)

- Manual intervention required if not automated

Solution? Maintenance jobs!

How to Configure Maintenance Jobs in Azure DevOps

Maintenance jobs help automatically clean up old jobs, free disk space, and keep agents running efficiently. Here’s how to set them up:

Step-by-Step Guide

Go to Organization Settings. From your Azure DevOps home page, click on Organization settings on the bottom left corner.

Navigate to Agent Pools. Under Pipelines, select Agent pools.

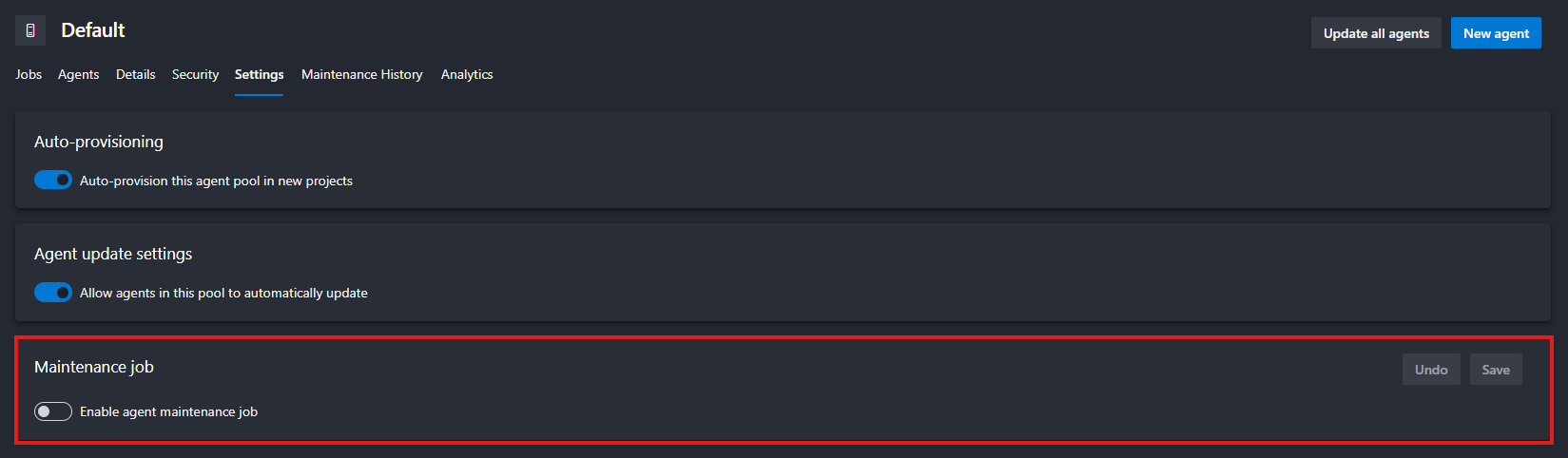

Choose the pool you want to configure (e.g., Default) then click the Settings tab at the top.

Toggle Enable maintenance jobs to On.

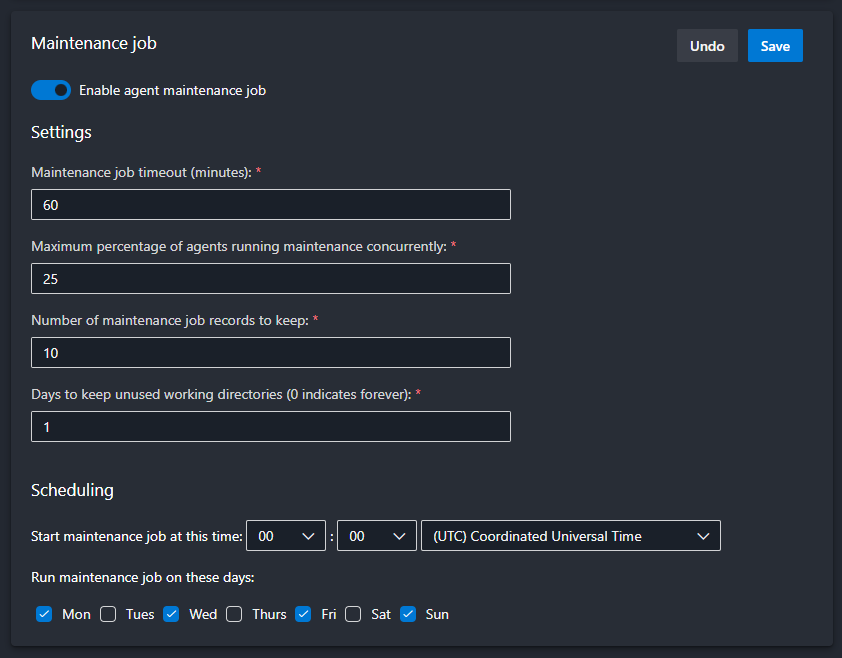

Configure the Schedule

- Set the frequency (daily, weekly, custom).

- Define retention policies (e.g., delete jobs older than 30 days).

Don't forget to Save at the end.

Why Maintenance Jobs Are a Game-Changer

- Prevents disk space issues (no more failed jobs due to full storage!)

- Reduces manual cleanup (set it and forget it)

- Improves agent performance (faster builds, fewer errors)

- Enhances security (removes old, potentially vulnerable files)

Final Thoughts

Self-hosted agents give you power, but with great power comes great responsibility. Maintenance jobs are your secret weapon to keep things running smoothly without the hassle.

]]>Let’s be honest: most of us don’t think about online privacy until something goes wrong. Maybe it’s a creepy ad that just happens to know you were talking about buying a new toaster. Or maybe it’s the sinking feeling when you realize that photo of your kids you posted five years ago is now floating around on some shady data broker’s website.

Here’s the truth: The internet wasn’t built for privacy. It was built for convenience, for connection, and—let’s not sugarcoat it—for companies to make money off your data. But here’s the good news: You don’t have to accept that.

Now, before we dive in, let’s clarify what this article isn’t about. We’re not here to lecture you on multi-factor authentication (MFA), strong passwords, or random usernames—though those are important! Instead, we’re focusing on what you share, who sees it, and how to take back control of your digital life.

This isn’t about becoming a paranoid hermit who communicates only via carrier pigeon. It’s about making conscious choices—deciding what you share, with whom, and on your own terms. Because, let’s face it, the world doesn’t need to know what you had for breakfast, where you went on vacation, or what you Googled at 2 a.m. after binge-watching conspiracy theories.

So, why should you care? Let’s break it down.

The Social Issue: Oversharing in the Age of Algorithms

The World Doesn’t Need to Know Everything About Me

Remember the days when sharing your life meant inviting neighbors over for a slide show of your vacation photos? You’d gather in the living room, flip through a few dozen prints, and maybe endure a few eye rolls at Uncle Bob’s 200th sunset shot. It was personal. It was contained. And most importantly, it wasn’t permanent.

Fast forward to today, and we’ve replaced those slide shows with real-time broadcasts of our lives. Every meal, every gym session, every minor achievement—even the mundane, like what we had for breakfast or how we organized our sock drawer—gets uploaded, shared, and archived for hundreds, if not thousands, of people to see. Many of whom? Strangers. People we’ve never met, whose intentions we don’t know, and who—let’s be honest—probably don’t care as much as we think they do.

The Problem With Treating Life Like a Reality Show

We’ve been conditioned to believe that sharing equals connection. But at what cost?

- Your personal life isn’t a reality show. That photo of your kid’s first day of school? It’s adorable now, but in 10 years, will your child thank you for making it searchable forever? Kids grow up with digital footprints they never consented to—and once something’s online, it’s nearly impossible to erase. Imagine your most embarrassing childhood moment following you into job interviews. That’s the reality we’re creating for the next generation.

- Your home isn’t a public exhibit. Posting pictures of your house, your car, or even your daily routine might seem harmless, but it’s essentially handing out a map of your life to strangers. Burglars do use social media to scope out targets—vacation posts are basically neon signs saying, “My house is empty!” And let’s not forget the creepier side: geotagging your location in real-time is like sending an open invitation to anyone who wants to find you.

- Algorithms thrive on drama (and so do trolls). Ever notice how the more you engage with polarizing content, the more the algorithm shoves it in your face? That’s not an accident. Social media platforms profit from outrage, anxiety, and addiction. The more you react, the more they learn about you—and the more they can manipulate what you see. Before you know it, you’re stuck in a digital echo chamber, surrounded by content that’s designed to keep you scrolling, not to inform or uplift you.

- Would you hand a stranger your photo album? Probably not. So why do we willingly post our most intimate moments for the world to see, judge, and potentially exploit? We’ve blurred the line between sharing with friends and performing for an audience—and in the process, we’ve lost control over our own narratives.

The Psychological Toll of Living in a Fishbowl

Here’s the thing: Oversharing doesn’t just put your privacy at risk—it messes with your head.

- Comparison culture. When everyone’s life looks like a highlight reel, it’s easy to feel like you’re falling behind. But remember: No one posts their failures, their bad days, or their mundane moments. (Well, except for that one friend who loves complaining about their commute. You know who you are.)

- The pressure to perform. If you’re constantly documenting your life, you’re not just living—you’re directing. That’s exhausting. When did we decide that every moment needs to be content?

- Digital exhaustion. The more you share, the more you invite unsolicited opinions, judgments, and even harassment. Not everything needs an audience. Some memories are better kept just for you.

How to Share Mindfully (Without Becoming a Hermit)

Look, we’re not saying you should delete all your social media and move to a cabin in the woods (unless that’s your thing—no judgment). But it’s worth asking: What am I really gaining from this post?

- The 10-Year Rule: Before posting, ask yourself: Would I want this to be public in 10 years? If the answer is no, hit delete.

- The Stranger Test: If you wouldn’t show it to a random person on the street, don’t post it online.

- The Algorithm Audit: Notice how certain posts make you feel. If it’s stress, anxiety, or emptiness, it’s time to reevaluate.

Your life is yours. Not the internet’s. Not the algorithms’. Yours. And the beautiful thing about memories? The best ones don’t need an audience.

The Security Issue: Cybersecurity Nightmares

In Case of a Cyber Attack, the World Can Know Everything About You

Let’s talk about data breaches—those digital disasters that seem to happen almost weekly. You’ve heard the horror stories: hackers leaking passwords, credit card numbers, private messages, and even medical records. But here’s the part no one really talks about: Once your data is out there, it’s out there forever.

Think of the internet like a tattoo. You can try to cover it up, but it’s always there under the surface. That “deleted” tweet? Archived by multiple services. That “private” Facebook post? Screenshotted, shared, and potentially weaponized. That embarrassing photo from your college days? Living rent-free on some obscure server, just waiting to resurface at the worst possible moment.

And it’s not just about embarrassment. Your digital footprint is a goldmine for cybercriminals.

How Your Data Becomes a Weapon

- The "Deleted" Myth

- When you hit "delete," you’re not erasing your data—you’re just hiding it from your view.

- Wayback Machine, Google Cache, and data brokers ensure that almost nothing truly disappears.

- Example: Remember when old tweets from celebrities and politicians resurfaced years later, ruining careers? That could be you.

- Oversharing = A Hacker’s Treasure Map

- Phishing scams often start with publicly available info. Your pet’s name? Your mother’s maiden name? Your high school mascot? All common security questions—and all easily found on social media.

- Identity theft isn’t just about stealing credit card numbers. With enough personal details, criminals can open accounts, take out loans, or even file taxes in your name.

- Blackmail and doxxing are on the rise. That “harmless” rant you posted? It could be used against you.

- Your Data Is for Sale (Whether You Like It or Not)

- Ever gotten a call from "Microsoft Support" about a virus you definitely didn’t have? Congrats, your data was sold.

- Data brokers buy and sell your info like it’s a commodity. Your phone number, email, address, and even your shopping habits are packaged and traded in the shadows of the internet.

- Scammers don’t need to hack you—they just need to buy your data.

- The Domino Effect of a Single Breach

- One leaked password can unlock multiple accounts if you reuse credentials (which, let’s be honest, most of us do).

- Email breaches expose not just your inbox but every service tied to that email—banking, social media, subscriptions.

- Even "harmless" data (like your Netflix watch history) can be used to profile you for scams, ads, or even discrimination.

Real-Life Consequences (Because This Isn’t Just Theory)

- Job Loss: Employers do check social media. One ill-advised post can cost you a career.

- Financial Ruin: Identity theft can destroy your credit score in hours.

- Reputation Damage: Once something goes viral for the wrong reasons, you can’t unring that bell.

- Physical Safety: Stalkers and criminals use overshared info to track victims.

The Uncomfortable Truth: Nothing Is Truly Private Online

We like to think we’re in control. We set our profiles to "private," we delete old posts, we ignore those Terms & Conditions pop-ups. But here’s the hard truth: If it’s online, it’s vulnerable.

- Cloud backups? Hackable.

- Direct messages? Leakable.

- "Private" groups? Screenshotable.

The only real privacy is the data you never share in the first place.

What You Can Do (Without Becoming a Paranoid Recluse)

You don’t need to delete all your accounts and live off-grid (unless you want to). But you do need to adopt a mindset of digital minimalism.

- Assume Everything You Post Is Public—Forever.

- If you wouldn’t want it on a billboard, don’t put it online.

- Audit Your Digital Footprint.

- Google yourself. See what’s out there.

- Delete old accounts you no longer use.

- Use tools like HaveIBeenPwned to check if your data has been leaked.

- Lock Down What You Can’t Delete.

- Enable two-factor authentication (yes, even though we said we wouldn’t focus on security, this is non-negotiable).

- Use a password manager to avoid reusing passwords.

- Adjust privacy settings on social media—but remember, “private” ≠ “safe.”

- Be Skeptical of "Free" Services.

- If you’re not paying for the product, you are the product.

- Opt for privacy-focused alternatives (we’ll cover those later).

- Think Before You Share.

- Do you really need to post that? Will it add value to your life, or just clutter the internet?

- Who is this for? Friends? Family? Strangers? Adjust your audience accordingly.

Bottom Line: The Less You Share, the Less There Is to Steal

The internet is forever. And in a world where data breaches are inevitable, the best defense is not giving hackers, scammers, and data brokers anything to work with.

Your privacy isn’t just about hiding—it’s about protecting the life you’ve built. And trust us, future you will be grateful.

A Matter of Principle: Who Really Benefits From Your Data?

Companies Use Your Data to Sell It to Data Brokers—and You’re Footing the Bill

Let’s start with a hard truth: If you’re not paying for a product, you are the product. That “free” app, social network, or service isn’t doing you a favor. It’s trading your personal information for profit—and you’re none the wiser.

Your Data Is Big Business (And You’re Not Getting a Cut)

Imagine this: Every click, like, search, and location ping is collected, analyzed, and sold to the highest bidder. Companies like Google, Facebook (Meta), Amazon, and even lesser-known data brokers don’t just have your data—they package it, auction it, and use it to influence your behavior.

Ever had an ad so creepily accurate it felt like your phone was reading your mind? That’s not a coincidence. That’s surveillance capitalism—a business model where your attention, habits, and personal details are the currency.

- Google doesn’t just track your searches—it logs your location, emails, YouTube history, and even voice recordings (thanks, "Hey Google").

- Facebook (Meta) doesn’t just know who your friends are—it tracks your off-platform activity through pixels and third-party apps.

- Amazon doesn’t just sell you products—it monetizes your shopping habits, Alexa queries, and even what you almost bought.

This isn’t just advertising—it’s manipulation. The more they know about you, the better they can nudge you toward purchases, opinions, and even political beliefs.

But They Need My Data to Offer a Better Service! (Do They, Though?)

Sure, some data collection is necessary for functionality. If Spotify didn’t know what songs you listened to, it couldn’t generate your Discover Weekly playlist. If Google Maps didn’t access your location, it couldn’t give you directions.

But here’s the catch: Most apps demand far more data than they need—and they’re not always honest about why.

- Does a weather app really need access to your contacts, microphone, and precise location 24/7? (No.)

- Does a mobile game really need to scan your photos and files? (Absolutely not.)

- Does a flashlight app really need to track your location? (You already know the answer.)

These permissions aren’t just invasive—they’re a security risk. Every extra piece of data you hand over is another potential entry point for hackers, scammers, and data brokers.

The Slippery Slope of App Permissions: How "Convenience" Becomes Exploitation

Mobile apps have normalized outrageous data collection under the guise of "improving user experience." But let’s call it what it is: exploitation.

Here’s how it works:

- They Ask for More Than They Need

- Example: A fitness app asks for access to your contacts, camera, and browsing history. Why? Because they can sell that data—or use it to profile you for ads.

- They Bury the Real Reason in Legal Jargon

- Ever read a Terms & Conditions document? Neither have we. That’s the point. Companies count on you not paying attention.

- They Make It Hard to Say No

- "Allow access to proceed" isn’t a choice—it’s coercion. Deny permissions, and suddenly the app "doesn’t work properly." (Spoiler: It works fine. They just want your data.)

- They Sell Your Data to Third Parties

- Your info gets bundled and sold to data brokers, advertisers, and even government agencies. Ever wonder why you get spam calls from numbers you’ve never seen? Now you know.

The Rare Exceptions: When Data Collection Actually Benefits You

Not all data collection is evil. Some companies use your data for you—not for profit.

- Spotify’s Yearly Wrap is a fun, personalized recap because it’s designed for your enjoyment, not to sell you something.

- Duolingo’s streaks and progress tracking help you learn a language—not to manipulate you into buying ads.

- Password managers store your credentials to keep you secure, not to monetize your logins.

The key difference? These services give you value in return—they don’t exploit your data for hidden profits.

The Real Question: Is Convenience Worth the Cost?

We’ve been conditioned to trade privacy for convenience. But at what point do we ask: Is it really worth it?

- Do you really need to log in with Facebook to use a random quiz app?

- Is it really necessary to give a food delivery app access to your contacts?

- Are personalized ads really improving your life—or just making corporations richer?

Here’s the thing: You don’t have to quit the internet to protect your privacy. But you should question why companies want your data—and what they’re really doing with it.

What You Can Do: Take Back Control

You don’t have to boycott all tech to push back against surveillance capitalism. Start with these small but powerful steps:

- Deny Unnecessary Permissions

- If an app asks for access it doesn’t need, say no. (Yes, even if it complains.)

- Use Privacy-Focused Alternatives

- Ditch Google for DuckDuckGo.

- Swap Gmail for Proton Mail.

- Replace Chrome with Brave or Firefox.

- Opt Out of Data Collection

- Disable ad tracking in your phone settings.

- Use a VPN to mask your browsing activity.

- Request your data from companies (GDPR gives you this right!) and delete what you can.

- Support Ethical Companies

- Choose services that prioritize privacy (like Signal over WhatsApp, or Nextcloud over Google Drive).

- Pay for premium versions if it means no ads, no tracking.

- Spread the Word

- Talk about privacy with friends and family. The more people demand better, the more companies will have to listen.

Bottom Line: Your Data Is Yours—Act Like It

At the end of the day, your data belongs to you—not to Mark Zuckerberg, not to Google, and certainly not to some shady data broker.

Privacy isn’t about hiding—it’s about choice. It’s about deciding who gets to know what about you, and why.

So next time an app asks for more than it needs, ask yourself: Who’s really benefiting from this? And if the answer isn’t you—hit decline.

Best Practices: How to Protect Your Privacy Without Losing Your Mind

Let’s get one thing straight: You don’t need to live like a digital recluse to protect your privacy. You don’t have to delete all your social media, communicate in Morse code, or move to a cabin in the woods (unless you want to, in which case, more power to you). Privacy isn’t about paranoia—it’s about making smarter choices.

Here’s how to lock down your digital life without losing your mind—one simple step at a time.

Ask Yourself: "Do I Really Need an Account Here?"

We’ve all been there: You want to read an article, try a new app, or enter a giveaway, and suddenly, you’re forced to create an account. But before you hand over your email (or worse, your phone number), ask yourself:

- Would I give this information to a stranger on the street? If the answer is no, don’t give it to a random website.

- Is there a guest checkout option? Use it. Fewer accounts = fewer ways your data can leak.

- Do I actually need this service? If it’s a one-time thing, use a temporary email (like Temp-Mail) or skip it entirely.

Pro tip: If a service insists you create an account for something trivial (like reading a blog post), it’s not worth your privacy. Walk away.

Read the Terms and Conditions (Yes, Really)

We get it—no one actually reads the Terms & Conditions. They’re longer than a Game of Thrones novel and written in legalese so dense it could stop a bullet. But here’s the thing: If a company’s privacy policy is longer than a Harry Potter book, that’s a huge red flag.

You don’t need to read every word. Just skim for these key details:

What data do they collect?

- Are they tracking your location, contacts, browsing history, or biometrics? If so, why?

Who do they share it with?

- Do they sell your data to third-party advertisers, data brokers, or "partners"?

- Look for phrases like "we may share your data with trusted partners"—that’s code for "we sell your info."

How long do they keep it?

- Some companies delete your data after a set time. Others keep it forever. Guess which ones you should avoid?

Can you delete your data?

- If the answer is no (or buried in fine print), run.

Quick hack: Use tools like ToS;DR (Terms of Service; Didn’t Read) to get plain-English summaries of privacy policies.

Prioritize Tools That Respect Legal Standards

Not all countries (or companies) treat your privacy equally. Some have strong laws. Others? Not so much.

GDPR Compliance (EU Users, Rejoice!)

- The General Data Protection Regulation (GDPR) is one of the strictest privacy laws in the world.

- If a company is GDPR-compliant, it means they can’t just do whatever they want with your data.

- Look for this when choosing services—especially if you’re in the EU.

Avoid Tools Based in Countries with Weak Privacy Laws

- Some countries (looking at you, certain U.S. states) have lax data protection laws, meaning companies can sell your data with little oversight.

- China, Russia, and some Middle Eastern countries also have questionable data practices—be cautious with apps based there.

Rule of thumb: If a company is based in a country with strong privacy laws (like Switzerland, Germany, or Canada), they’re more likely to respect your data.

Choose Tools That Are Actually Private

Not all apps are created equal. Here’s what to look for in a truly privacy-focused tool:

End-to-End Encrypted (E2EE)

- No one—not even the company—can read your data.

- Examples: Signal (messaging), Proton Mail (email), Tresorit (cloud storage).

Offline-First (Or At Least Offline-Friendly)

- Less cloud dependency = less exposure to breaches.

- Examples: Joplin (notes), Cryptomator (encrypted files), KeePassXC (passwords).

Open-Source (Because Transparency Matters)

- Open-source tools let anyone inspect the code—so you know there’s no hidden tracking.

- Examples: Signal, Linux, Nextcloud, Firefox.

Easy to Delete (No Hostage Situations)

- If you can’t leave easily, it’s a trap.

- Red flag: Services that make you jump through hoops to delete your account.

- Green flag: Companies like Proton Mail and Tutanota let you nuke your data with one click.

Bonus: Look for tools that don’t require a phone number or real email to sign up. The less they know about you, the better.

Use Email Aliases to Catch Data Leaks (Like a Spy)

Ever wonder who’s selling your email to spammers? Here’s how to find out—and stop it.

Services like:

- SimpleLogin (now part of Proton)

- Firefox Relay

- AnonAddy

let you create unique email aliases for every site. Here’s why that’s genius:

Track Who Sells Your Data

- If you start getting spam at

[email protected], you know Amazon (or one of its partners) leaked your info.

Block Spam Before It Starts

- Disable an alias if it gets compromised. No more endless unsubscribe clicks.

Keep Your Real Email Private

- Never give out your real email again. Use aliases for everything—even friends who love forwarding chain emails.

Pro tip: Use a different alias for every service. That way, if one gets hacked, the rest stay safe.

But What Happens When Companies Refuse to Let You Use Aliases?

Just when you think you’ve got your privacy locked down, some companies actively work against you. A few years ago, a major gaming platform (you know the one) banned email aliases entirely, forcing users to hand over their real email addresses if they wanted to play. Suddenly, privacy tools like SimpleLogin and FastMail were off the table—and users had to choose between access and anonymity.

Why does this matter? Because it’s not just about gaming—it’s about control. And it’s a warning sign for all of us.

- Forcing Real Emails = More Data Collection

- The company claimed this was to "prevent fraud" and "improve security." But in reality, it was about controlling user data.

- By banning aliases, they ensured every user was tied to a real, traceable email, making it easier to track, profile, and monetize players.

- A Direct Attack on Privacy Tools

- Email aliases (like those from FastMail, SimpleLogin, or AnonAddy) are a key privacy tool. They let users mask their real email, reducing spam and tracking.

- By blocking aliases, the company removed a layer of privacy that many users relied on.

- Setting a Dangerous Precedent

- This wasn’t the first company to do this, and it won’t be the last. When big platforms force users to hand over real emails, it normalizes the idea that privacy is optional.

- Message to users: "Your convenience is less important than our data collection."

- The Hypocrisy of "Security" Claims

- The company framed this as a security measure, but real security doesn’t require sacrificing privacy.

- If they really cared about fraud, they could’ve implemented better verification methods—like two-factor authentication (2FA)—without banning aliases outright.

Prioritize Tools That Let You Actually Delete Your Account

Here’s a hard truth: If a company makes it difficult to leave, they don’t respect you. They see you as a product, not a person.

Red Flags (Run Away!)

- "Contact support to delete your account" (Why should you beg to leave?)

- "Your data will be retained for [vague time period]" (Translation: We’ll keep it forever.)

- No delete option at all (Yes, some apps still do this.)

Green Flags (Stay a While)

- "Delete my account" button in settings. (Looking at you, Proton Mail and Tutanota.)

- Automatic data deletion after inactivity.

- Clear explanations of what happens to your data when you leave.

Remember: If a service treats leaving like a breakup ("But why?! We can change!"), it’s not worth your time.

Beware of Default Settings: The Sneaky Data Leaks You Didn’t Sign Up For

Here’s a dirty little secret: Most apps and services are designed to share your data by default. They count on you not checking the settings—because if you did, you’d probably turn half of it off.

Why Default Settings Are a Privacy Nightmare

- They’re Set to "Share Everything"

- Example: That new app you downloaded? It’s probably sending your usage data, location, and device info to "improve the service" (aka sell to advertisers).

- Example: Windows 10/11 tracks your keystrokes, app usage, and even your voice data unless you manually disable it.

- They Bury the Opt-Out in Menus

- Example: Instagram’s default settings allow it to track your activity across other apps and websites. You have to dig into Settings > Ads > Ad Preferences to turn it off.

- Example: Many smart TVs send your viewing habits to manufacturers unless you opt out during setup (which most people skip).

- They Use Vague, Misleading Language

- "Help improve our services" = We’re selling your data.

- "Personalized experience" = We’re tracking everything you do.

- "Diagnostics and usage data" = We’re logging your every move.

- They Reset After Updates

- Example: iOS and Android sometimes re-enable tracking after updates. Always double-check your settings after installing a new version.

How to Fight Back: A Quick Settings Checklist

Before you use any new app or service, do this first (it takes 2 minutes and saves years of regret):

Check Privacy Settings Immediately

- Look for options like:

- "Data Sharing" (turn it OFF)

- "Analytics" (disable it)

- "Personalized Ads" (opt out)

- "Location Access" (set to "Never" or "While Using")

Deny Unnecessary Permissions

- Does a calculator app need your contacts? No.

- Does a game need your microphone? Probably not.

- Rule of thumb: If it doesn’t need the permission to function, don’t grant it.

Opt Out of "Improvement Programs"

- These are just fancy names for data collection.

- Example: Windows’ "Diagnostic Data" or Apple’s "iCloud Analytics."

Disable "Personalized Ads"

- Google: Ad Settings → Turn off ad personalization.

- Facebook/Instagram: Settings → Ads → Ad Preferences → Reset or turn off.

- iOS/Android: Go to your advertising ID settings and opt out.

Turn Off "Usage Data" Sharing

- Example: In Zoom, go to Settings → Telemetry and disable it.

- Example: In Spotify, turn off "Share my listening activity on Facebook."

Use a Burner Email for Sign-Ups

- SimpleLogin, Firefox Relay, or AnonAddy let you create aliases so your real email stays private.

Review App Permissions Regularly

- iOS: Settings → Privacy → See which apps have access to what.

- Android: Settings → Apps → Permissions → Revoke anything suspicious.

The Golden Rule: Assume the Worst and Adjust Accordingly

Companies want your data—it’s how they make money. Their default settings are designed to maximize collection, not protect you.

Your move:

- Before using any new app, check the settings.

- Opt out of everything that’s not essential.

- Repeat this every time you update an app.

It’s not paranoia—it’s basic digital hygiene. You wouldn’t eat food without checking the expiration date. Don’t use an app without checking its privacy settings.

Remember: If a company really cared about your privacy, they’d make it easy to opt out. The fact that they don’t? That’s your answer.

Bonus: Small Habits for Big Privacy Wins

You don’t need to overhaul your entire digital life to make a difference. Start with these easy, low-effort habits:

Log Out of Accounts When You’re Done

- Staying logged in = more tracking. Sign out when you’re not using a service.

Use Private/Incognito Mode (But Know Its Limits)

- It doesn’t make you anonymous, but it reduces tracking between sessions.

Turn Off Unused App Permissions

- Go to your phone’s settings and revoke access for apps that don’t need it.

Set a Quarterly "Privacy Cleanup" Reminder

- Delete old posts, clear cookies, and review app permissions every few months.

Think Before You Post

- Ask yourself: "Would I be okay with this being public forever?" If not, don’t hit share.

Tool Recommendations: Privacy-First Alternatives (Because You Deserve Better)

Tired of feeling like every app, website, and service is spying on you? You’re not alone. The good news? There are better, safer alternatives for almost everything. Here’s your privacy upgrade guide—no tech degree required.

Email: Ditch Gmail (And Reclaim Your Inbox)

Gmail is convenient, but it’s also a data collection machine. Google scans your emails for ads, tracks your activity, and shares your data with third parties. Time to switch.

Proton Mail (proton.me)

- End-to-end encrypted (even Proton can’t read your emails).

- Swiss-based (strong privacy laws).

- Ad-free, no tracking.

- Free tier available (with paid upgrades for more storage).

- Bonus: Works seamlessly with Proton VPN and Proton Calendar.

SimpleLogin (simplelogin.io)

- Mask your real email with unlimited aliases.

- Forward emails to your main inbox without exposing your address.

- Now owned by Proton, so it integrates perfectly with Proton Mail.

- Use case: Sign up for newsletters, online shops, or services without giving away your real email.

Fastmail (fastmail.com)

- Privacy-focused, with no ads or tracking.

- Custom domains (use your own email address).

- Great for professionals who need reliability + security.

- Bonus: Supports email aliases natively.

Tutanota (tutanota.com)

- Fully encrypted (emails, contacts, and calendar).

- Open-source and German-based (GDPR-compliant).

- Free plan available.

Why it matters:

- No more targeted ads based on your emails.

- No more data breaches exposing your private conversations.

- You control who sees your real email.

Browsers: Stop Feeding the Tracking Beast

Chrome, Edge, and Safari track your every move. These browsers don’t.

Brave (brave.com)

- Blocks ads and trackers by default (no extensions needed).

- Built-in Tor mode for extra privacy.

- Rewards you with crypto for viewing privacy-respecting ads (if you opt in).

- Faster than Chrome (because it’s not bogged down by trackers).

Firefox (mozilla.org)

- Open-source and non-profit (no corporate agenda).

- Strong privacy protections out of the box.

- Customizable with add-ons like:

- uBlock Origin (blocks ads and trackers).

- Privacy Badger (stops hidden trackers).

- HTTPS Everywhere (forces secure connections).

- Bonus: Works with Firefox Relay for email masking.

LibreWolf (librewolf.net)

- A hardened, privacy-focused fork of Firefox.

- No telemetry, no tracking, no BS.

- Pre-configured for security (no tweaking needed).

Why it matters:

- Fewer ads = faster browsing.

- No more creepy "recommended for you" content.

- Less risk of malware and phishing.

Search Engines: No More Google Snooping

Google logs every search—even in "incognito mode." These alternatives don’t.

DuckDuckGo (duckduckgo.com)

- No tracking, no search bubbles (you get unbiased results).

- Bang shortcuts (!Amazon, !Wikipedia) for quick searches.

- Blocks hidden trackers on websites you visit.

- Mobile app available (with built-in tracker blocking).

Qwant (qwant.com)

- European-based (strong privacy laws).

- No personalized ads or profiling.

- Separate search categories (news, social, images).

- Bonus: Qwant Maps is a privacy-friendly alternative to Google Maps.

Startpage (startpage.com)

- Gives you Google results without the tracking.

- Anonymous View lets you visit websites without leaving traces.

- No IP logging.

SearX (Self-hosted or searx.me)

- Open-source, decentralized search.

- No logs, no tracking.

- Customizable (you can host your own instance).

- Use case: For tech-savvy users who want full control.

Why it matters:

- Your searches stay private.

- No more ads following you around the web.

- No filter bubbles (you see the real internet, not just what algorithms think you want).

Note-Taking: Keep Your Thoughts Actually Private

Evernote, OneNote, and Google Keep store your notes in the cloud—where they can be hacked, leaked, or scanned. These tools keep your thoughts secure.

Standard Notes (standardnotes.com)

- End-to-end encrypted (only you can read your notes).

- Simple, distraction-free interface.

- Works offline (syncs when you’re online).

- Extensions for editors, spreadsheets, and tasks.

- Free plan available (with paid upgrades for more features).

Joplin (joplinapp.org)

- Open-source and self-hostable (you control your data).

- Markdown support (great for coders and writers).

- Syncs with Nextcloud, Dropbox, or WebDAV.

- Encrypted backups.

- Use case: Perfect for students, researchers, and writers who need secure, organized notes.

Obsidian (obsidian.md)

- Local-first (your notes stay on your device).

- Plain-text files (no vendor lock-in).

- Plugins for encryption, backlinks, and more.

- Use case: Ideal for knowledge workers and long-term note-takers.

CryptPad (cryptpad.fr)

- Encrypted, real-time collaboration (like Google Docs, but private).

- No account needed (just create a pad and share the link).

- Use case: Great for teams or sensitive projects.

A Physical Notebook (Yes, Really)

- No hacks, no breaches, no tracking.

- Bonus: Writing by hand boosts memory and creativity.

- Use case: For brainstorming, journaling, or anything you want to keep 100% offline.

Why it matters:

- Your ideas stay yours.

- No risk of cloud leaks or ransomware.

- Peace of mind knowing no one else can access your notes.

Video Streaming: Watch Without the Tracking

YouTube tracks everything you watch, serves you creepy ads, and recommends increasingly extreme content to keep you hooked. These alternatives let you watch in peace.

Invidious (invidious.io)

- Open-source YouTube front-end (no ads, no tracking).

- No account needed.

- Dark mode, keyboard shortcuts, and no algorithmic manipulation.

- Multiple instances available (if one goes down, try another).

- Use case: For casual viewers who want a cleaner YouTube experience.

PeerTube (joinpeertube.org)

- Decentralized, open-source video platform.

- No ads, no tracking, no algorithms.

- Anyone can host a server (decentralized = no single point of failure).

- Use case: For creators and viewers who want ethical, censorship-resistant video hosting.

Why it matters:

- No more ads interrupting your videos.

- No more "recommended" rabbit holes.

- Support for creators who aren’t algorithm-driven.

Bonus: More Privacy Tools You Might Not Know About

- Cloud Storage: Nextcloud (self-hosted) or Tresorit (end-to-end encrypted).

- Messaging: Signal (for texts/calls) or Element (for decentralized chat).

- Maps: OpenStreetMap or Organic Maps (offline maps).

- Calendars: Proton Calendar or EteSync (end-to-end encrypted).

Conclusion

Privacy Isn’t Paranoia—It’s Power and Basic Dignity

Look, we’re not saying you need to live in a bunker, communicate in smoke signals, or wrap your router in tinfoil (though if that’s your thing, we won’t judge). But in a world where your smart fridge might be gossiping about your grocery habits and your fitness tracker could be selling your heart rate to insurance companies, a little caution isn’t paranoia—it’s common sense.

Privacy isn’t about hiding—it’s about dignity. It’s like locking the bathroom door: You don’t do it because you’re ashamed of what’s happening in there. You do it because some things are none of anyone else’s business. The same goes for your emails, searches, location, and personal life. You’re not a criminal for wanting boundaries—you’re a human being who values control over your own information.

"I Have Nothing to Hide" Is a Dangerous Myth

We’ve all heard it: "I don’t care about privacy—I have nothing to hide!" But let’s be real—would you be okay with someone filming you in the bathroom just because you’re "not doing anything wrong"? Of course not. Privacy isn’t about guilt—it’s about basic respect.

Start Small (Because Even a Little Privacy Goes a Long Way)

You don’t need to go full hermit mode to protect yourself. Small, intentional changes make a big difference. Here’s how to start:

Swap one tool this week.

- Ditch Gmail for Proton Mail.

- Replace Chrome with Brave.

- Try DuckDuckGo instead of Google.

Read one privacy policy before signing up.

- Look for: What data they collect, who they share it with, and if you can delete it.

- Use ToS;DR for the SparkNotes version.

Ask yourself: "Would I be okay with this if the bathroom door were open?"

- Would I want this post/photo/data public forever?

- Who benefits from this? (If it’s not you, think twice.)

Delete one old account you no longer use.

- Use JustDeleteMe to find the escape hatch.

Turn off one unnecessary app permission.

- Does your flashlight app really need your location? (Spoiler: No.)

Set up one email alias for sign-ups.

- Use SimpleLogin or Firefox Relay to keep your real email under wraps.

Remember: The Internet Doesn’t Forget (But You Can Control What It Remembers)

Every photo, post, search, and click leaves a digital trail—and once it’s out there, it’s out there forever. But here’s the good news: You don’t have to be perfect. Every small step reduces your risk and puts you back in control.

- Switched to a privacy-focused browser? 🎉 Win.

- Deleted an old tweet? 🎉 Win.

- Actually read a Terms & Conditions page? 🎉 Legendary win.

So, What’s the First Change You’ll Make?

Maybe it’s installing a privacy tool, adjusting your settings, or just pausing before you hit "post."

Your data. Your dignity. Your power.

Now go lock that digital bathroom door—before someone walks in on your metaphorical privacy. (And remember: "I have nothing to hide" is the new "I’ll just leave the door unlocked—what’s the worst that could happen?")

]]>Git is a powerful version control system that allows developers to track changes in their codebase. While most commits include modifications to files, Git also supports empty commits—commits that contain no changes to the repository’s files. At first glance, empty commits might seem pointless, but they can be surprisingly useful in specific scenarios.

In this article, we’ll explore:

- What empty commits are

- How to create them

- Practical use cases

- Best practices and potential pitfalls

What Are Empty Commits?

An empty commit is a Git commit that doesn’t modify any files in the repository. It only updates the commit history with a new entry, timestamp, and message. Empty commits are created using the --allow-empty flag with the git commit command.

How to Create an Empty Commit

To create an empty commit, run:

git commit --allow-empty -m "Your commit message here"This command adds a new commit to the branch without staging any changes.

Why Use Empty Commits?

While empty commits might seem unnecessary, they serve several practical purposes:

1. Triggering CI/CD Pipelines

Some continuous integration/continuous deployment (CI/CD) systems trigger builds or deployments based on new commits. If you need to manually trigger a pipeline without changing code (e.g., to redeploy a configuration or test a build environment), an empty commit can be a quick solution.

2. Marking Significant Events

Empty commits can act as milestones in your repository’s history. For example:

- Marking the start or end of a sprint

- Documenting a decision or event (e.g., "Project paused due to dependency issues")

- Creating a placeholder for future work

3. Testing Git Hooks

If you’re developing or debugging Git hooks (e.g., pre-commit or post-commit hooks), empty commits allow you to test them without altering your codebase.

4. Resolving Merge Conflicts

In rare cases, empty commits can help resolve merge conflicts by creating a merge commit without introducing new changes.

Best Practices for Using Empty Commits

While empty commits can be useful, they should be used judiciously to avoid cluttering your repository’s history. Here are some best practices:

1. Use Descriptive Commit Messages

Always include a clear, descriptive message explaining the purpose of the empty commit. For example:

git commit --allow-empty -m "Trigger CI build for environment testing"2. Avoid Overuse

Empty commits should be an exception, not a rule. Frequent empty commits can make the commit history harder to navigate and understand.

3. Document Their Purpose

If your team uses empty commits for specific workflows (e.g., triggering deployments), document this practice in your project’s contribution guidelines.

4. Consider Alternatives

Before using an empty commit, ask yourself if there’s a better alternative:

- For CI/CD triggers, consider using a dedicated API or webhook.

- For milestones, use Git tags or annotations instead.

Potential Pitfalls

While empty commits are generally harmless, they can cause issues if misused:

1. Cluttered History

Too many empty commits can make it difficult to track meaningful changes in your repository.

2. Confusion Among Team Members

Team members unfamiliar with empty commits might find them confusing or unnecessary. Always communicate their purpose clearly.

3. Unintended CI/CD Triggers

If your CI/CD pipeline is configured to run on every commit, empty commits might trigger unnecessary builds, wasting resources.

Conclusion

Empty commits are a niche but powerful feature in Git. When used thoughtfully, they can help trigger CI/CD pipelines, mark important events, and test Git hooks. However, it’s essential to use them sparingly and document their purpose to maintain a clean and understandable commit history.

By understanding the use cases and best practices for empty commits, you can leverage them effectively in your development workflow.

]]>Data deletion is a deceptively complex problem in modern software systems.

Pressing delete in your application might seem harmless, but in production, it can mean permanent data loss, broken relationships, and compliance nightmares.

That’s where soft delete comes in — a development pattern that marks data as deleted instead of removing it entirely. It lets you safely hide, recover, or audit records without losing critical information.

In this article, we’ll explore:

- Why soft delete is important in real-world .NET applications

- How to implement it in Entity Framework Core using query filters and client-side cascade deletion

- Pros and cons of soft delete versus hard delete

- The privacy challenge it introduces under regulations like GDPR and how to handle user deletion requests correctly

By the end, you’ll understand not just how to implement soft delete in .NET, but also when to use it — and how to stay both safe and compliant.

Why Soft Delete Is Important

In any system with users, orders, or transactions, deleting records outright can be risky. Accidental deletions, debugging needs, or audit trails often require access to “deleted” data.

Soft delete solves this by introducing a simple boolean flag (IsDeleted) or timestamp (DeletedAt) that hides data from queries instead of removing it.

Key benefits include:

- Easy recovery from accidental deletions

- Full audit and compliance traceability

- Preserved referential integrity between related entities

- Safe testing and debugging environments

But it’s not just about convenience — it’s about data safety and trust.

How to Implement Soft Delete in EF Core

Entity Framework Core (EF Core) makes implementing soft delete straightforward with global query filters and client cascade relationships.

- Add a soft delete flag to your entities (

IsDeleted,DeletedAt). - Apply a global filter with

HasQueryFilter()to exclude soft-deleted rows from all queries. - Configure relationships with

.OnDelete(DeleteBehavior.ClientCascade)so related entities are also marked as deleted automatically. - Override

SaveChanges()to intercept EF’sEntityState.Deletedentries and turn them into soft deletes instead of physical deletes.

With this pattern, calling _db.Remove(entity) doesn’t issue a DELETE SQL statement.

Instead, EF Core updates the entity and its dependents to set IsDeleted = true — safe, reversible, and consistent.

Define the Soft Deletable Interface and Base Class

public interface ISoftDeletable

{

bool IsDeleted { get; set; }

DateTime? DeletedAt { get; set; }

}

public abstract class SoftDeletableEntity : ISoftDeletable

{

public bool IsDeleted { get; set; }

public DateTime? DeletedAt { get; set; }

}This base abstraction makes it easy to apply the same logic across all your entities.

Create Your Entities

public class Customer : SoftDeletableEntity

{

public int Id { get; set; }

public string Name { get; set; } = default!;

public List<Order> Orders { get; set; } = new();

}

public class Order : SoftDeletableEntity

{

public int Id { get; set; }

public string Description { get; set; } = default!;

public int CustomerId { get; set; }

public Customer Customer { get; set; } = default!;

}Both entities inherit from the soft delete base class, meaning they’ll automatically support the IsDeleted flag and deletion timestamp.

Configure EF Core Model and Query Filters

public class AppDbContext : DbContext

{

public DbSet<Customer> Customers => Set<Customer>();

public DbSet<Order> Orders => Set<Order>();

public AppDbContext(DbContextOptions<AppDbContext> options)

: base(options) { }

protected override void OnModelCreating(ModelBuilder modelBuilder)

{

base.OnModelCreating(modelBuilder);

// Apply soft delete global filters

modelBuilder.Entity<Customer>().HasQueryFilter(c => !c.IsDeleted);

modelBuilder.Entity<Order>().HasQueryFilter(o => !o.IsDeleted);

// Relationship: Client-side cascade for soft delete propagation

modelBuilder.Entity<Order>()

.HasOne(o => o.Customer)

.WithMany(c => c.Orders)

.OnDelete(DeleteBehavior.ClientCascade);

}

// Intercept SaveChanges to convert deletes into soft deletes

public override int SaveChanges()

{

ConvertDeletesToSoftDeletes();

return base.SaveChanges();

}

public override Task<int> SaveChangesAsync(CancellationToken cancellationToken = default)

{

ConvertDeletesToSoftDeletes();

return base.SaveChangesAsync(cancellationToken);

}

private void ConvertDeletesToSoftDeletes()

{

// EF Core automatically marks related entities as Deleted due to ClientCascade

var deletedEntries = ChangeTracker.Entries()

.Where(e => e.State == EntityState.Deleted && e.Entity is ISoftDeletable)

.ToList();

foreach (var entry in deletedEntries)

{

var entity = (ISoftDeletable)entry.Entity;

entity.IsDeleted = true;

entity.DeletedAt = DateTime.UtcNow;

// Prevent EF from issuing a physical DELETE

entry.State = EntityState.Modified;

}

}

}Key points:

- The global query filters ensure deleted rows are hidden from normal queries.

- The

ClientCascadeensures related entities are also marked as deleted in the change tracker. - The

ConvertDeletesToSoftDeletes()method intercepts before EF runs SQL, converting allDeletedentities into updates instead.

Usage Example

public class CustomerService

{

private readonly AppDbContext _db;

public CustomerService(AppDbContext db)

{

_db = db;

}

public async Task DeleteCustomerAsync(int customerId)

{

var customer = await _db.Customers

.Include(c => c.Orders)

.FirstOrDefaultAsync(c => c.Id == customerId);

if (customer == null)

throw new InvalidOperationException("Customer not found");

_db.Customers.Remove(customer);

await _db.SaveChangesAsync();

}

public async Task<List<Customer>> GetActiveCustomersAsync()

{

// Soft-deleted entities are automatically excluded

return await _db.Customers.Include(c => c.Orders).ToListAsync();

}

public async Task<List<Customer>> GetAllCustomersIncludingDeletedAsync()

{

// Use IgnoreQueryFilters to view all entities, even deleted ones

return await _db.Customers

.IgnoreQueryFilters()

.Include(c => c.Orders)

.ToListAsync();

}

}When you call:

await customerService.DeleteCustomerAsync(1);EF Core:

- Marks the

Customerand relatedOrdersas deleted in the ChangeTracker (due toClientCascade). - The

ConvertDeletesToSoftDeletes()method intercepts these changes. - EF executes UPDATE statements, not DELETEs:

UPDATE Customers SET IsDeleted = 1, DeletedAt = '2025-10-23' WHERE Id = 1;

UPDATE Orders SET IsDeleted = 1, DeletedAt = '2025-10-23' WHERE CustomerId = 1;All without manually traversing relationships or risking physical data loss.

Pros and Cons of Soft Delete

| Pros | Cons |

|---|---|

| ✅ Prevents accidental data loss | ⚠️ Data still exists (can be privacy-sensitive) |

| ✅ Enables recovery and undo | ⚠️ Requires custom handling for unique constraints |

| ✅ Maintains relational integrity | ⚠️ Can bloat database size over time |

| ✅ Improves auditability | ⚠️ Adds logic complexity for queries and updates |

The biggest downside? Privacy compliance — which brings us to the tricky part.

The Privacy Challenge: Handling User Deletion Requests with Anonymization

Soft delete ensures you don’t lose data accidentally — but for privacy compliance (GDPR, CCPA, etc.), you need to ensure personally identifiable information (PII) can’t be reconstructed once a user asks to be forgotten.

Instead of deleting rows entirely, you can anonymize user-related fields.

This way:

- You preserve relationships and historical data (for audits or reports)

- You remove personal identifiers

- You satisfy privacy requirements

Updated User Entity for Anonymization

Here’s an example of a user entity that supports both soft delete and anonymization:

public class User : SoftDeletableEntity

{

public int Id { get; set; }

public string Email { get; set; } = default!;

public string FullName { get; set; } = default!;

public bool IsAnonymized { get; set; }

}Anonymization Service

Instead of deleting, we update sensitive fields with anonymized values. This preserves the row but renders it untraceable to the original user.

public class UserService

{

private readonly AppDbContext _db;

public UserService(AppDbContext db)

{

_db = db;

}

public async Task SoftDeleteUserAsync(int userId)

{

var user = await _db.Users.FindAsync(userId);

if (user == null) return;

user.IsDeleted = true;

user.DeletedAt = DateTime.UtcNow;

await _db.SaveChangesAsync();

}

public async Task AnonymizeUserAsync(int userId)

{

var user = await _db.Users

.IgnoreQueryFilters()

.FirstOrDefaultAsync(u => u.Id == userId);

if (user == null || user.IsAnonymized)

return;

user.IsDeleted = true;

user.IsAnonymized = true;

user.DeletedAt = DateTime.UtcNow;

// Anonymize identifiable information

user.FullName = "Deleted User";

user.Email = $"deleted-{Guid.NewGuid()}@example.com";

await _db.SaveChangesAsync();

}

}What happens here:

- The record remains in the database (maintains foreign keys, reports, logs).

- Personal data is scrubbed.

- The record is clearly flagged as deleted and anonymized.

Optional: Automate Anonymization for Deletion Requests

If your application needs to delay anonymization (e.g., allow a “grace period” for account recovery), you can extend your model:

public class User : SoftDeletableEntity

{

public bool PendingAnonymization { get; set; }

public bool IsAnonymized { get; set; }

public string Email { get; set; } = default!;

public string FullName { get; set; } = default!;

}Then schedule anonymization through a background job (e.g., Hangfire, Quartz.NET):

public class AnonymizationJob : BackgroundService

{

private readonly IServiceScopeFactory _scopeFactory;

public AnonymizationJob(IServiceScopeFactory scopeFactory)

{

_scopeFactory = scopeFactory;

}

protected override async Task ExecuteAsync(CancellationToken stoppingToken)

{

while (!stoppingToken.IsCancellationRequested)

{

using var scope = _scopeFactory.CreateScope();

var db = scope.ServiceProvider.GetRequiredService<AppDbContext>();

var cutoff = DateTime.UtcNow.AddDays(-30); // 30-day retention

var users = db.Users

.IgnoreQueryFilters()

.Where(u => u.PendingAnonymization && !u.IsAnonymized && u.DeletedAt < cutoff);

await foreach (var user in users.AsAsyncEnumerable())

{

user.IsAnonymized = true;

user.Email = $"deleted-{Guid.NewGuid()}@example.com";

user.FullName = "Deleted User";

}

await db.SaveChangesAsync();

await Task.Delay(TimeSpan.FromHours(12), stoppingToken);

}

}

}Why Anonymization Is Better Than Hard Delete

| Aspect | Hard Delete | Anonymization |

|---|---|---|

| Data loss | Permanent | Reversible for non-sensitive data |

| Referential integrity | Can break foreign keys | Preserved |

| Audit trails | Destroyed | Maintained |

| Privacy compliance | ✅ (if total removal) | ✅ (if properly scrubbed) |

| Business continuity | ⚠️ Risky | ✅ Safe and compliant |

In most enterprise or SaaS systems, anonymization is preferred because you often need to:

- Keep invoices, logs, or analytics records

- Retain relational data for business operations

- Ensure that data cannot identify the original user

Anonymization gives you privacy without losing data integrity.

Conclusion

Soft delete with EF Core gives you a safety net against accidental data loss — but it’s not the full story.

To stay compliant with privacy regulations, you must ensure that user data can’t be reconstructed after a deletion request.

That’s where anonymization shines:

it lets you keep business-critical and relational data intact while ensuring no personally identifiable information remains in your database.

In summary:

- Use soft delete for reversible, application-level deletes

- Use ClientCascade for automatic relationship propagation

- Use anonymization for privacy-compliant user deletion

Together, they form a robust, compliant, and developer-friendly data deletion strategy that protects both your system’s integrity and your users’ privacy.

]]>In PowerShell, many object properties are backed by enumerations (enums). Enums define a fixed set of values that a property can take. A common example is the StartType property of a Windows service, which is defined by the ServiceStartMode enum.

If you want to write reliable scripts or automate system tasks, it’s useful to know both the enum type and all the values it supports. This guide shows you how to:

- Determine the enum type of a Windows Service property

- Retrieve all available enum values with their names and numeric representations

Identify the Enum Type of a Property

Start by examining a service object. Running the following command returns the start type of the first service on your system:

(Get-Service)[0].StartTypeWhile this displays the value, it doesn’t reveal the actual enum type. To inspect the type, convert the property to XML:

(Get-Service)[0].StartType | ConvertTo-Xml -As StringInside the XML output, you’ll find a line like this:

<Object Type="System.ServiceProcess.ServiceStartMode">This shows that the property is based on the System.ServiceProcess.ServiceStartMode enum.

If the type doesn’t appear, you can increase the depth of the XML conversion:

(Get-Service)[0].StartType | ConvertTo-Xml -As String -Depth 10This command will display the FQCN (Fully-Qualified Class Name) of the associated enum.

You can also get the actual enum type using the GetType() method:

$service = Get-Service | Select-Object -First 1

$enumType = $service.StartType.GetType()List All Values of the Enum

Once you know the enum type, you can use [enum]::GetValues() to display all available options. Here’s a script that outputs both the name and the integer value:

[enum]::GetValues([System.ServiceProcess.ServiceStartMode]) |

ForEach-Object {

[PSCustomObject]@{

Name = $_.ToString()

Value = [int]$_

}

} | Format-Table -AutoSizeThe result is a neat table showing every possible setting, such as:

- Automatic

- Manual

- Disabled

along with the integer values that represent them internally.

This command may throw en erorr like TypeNotFound.

To fix it, you'll have to manually specify the namespace of the library that contains the enum type like:

Add-Type -AssemblyName System.ServiceProcessIf you encounter this error, don't worry. You can use the second script from the previous section to use the actual enum type instead of the FQCN:

[enum]::GetValues($enumType) |

ForEach-Object {

[PSCustomObject]@{

Name = $_.ToString()

Value = [int]$_

}

} | Format-Table -AutoSize It will generate the same output and works in all environments.