While sometimes Claude is writing commit messages for the work it's doing, I still, gasp, write code manually from time-to-time, and outside of my day job I'm not the best at writing descriptive commit messages. Which is a shame, because I do find myself grepping old commit messages fairly often trying to find things.

So I (...Claude) wrote a zsh function that stages everything, passes the diff to Claude, and gets back a properly formatted commit message. Here's what it looks like in action:

...which resulted in this commit.

The function itself is pretty straightforward:

gcam() {

# Stage all changes

git add -A

# Get the staged diff

local diff=$(git diff --cached)

# Check if there are staged changes

if [ -z "$diff" ]; then

echo "No changes to commit"

return 1

fi

# Generate commit message using Claude and edit it with nvim via vipe

local final_msg=$(echo "$diff" | claude -p "Generate a simple, straightforward git commit message for these changes. Be concise but specific about what was changed. Include specific keywords and technical terms (function names, file types, configuration settings, etc.) that would be useful for searching commit history later. Do not be overly detailed. Use proper sentences with correct capitalization and punctuation, including periods at the end of sentences. Return only the commit message without any explanation or formatting." --output-format text | EDITOR=nvim vipe)

# If the message is not empty, commit with it

if [ -n "$final_msg" ]; then

git commit -m "$final_msg"

else

echo "Commit aborted (empty message)"

return 1

fi

}

The stack here is simple: zsh function → git diff → Claude Code → vipe (for piping in-and-out of $EDITOR) -> nvim → git commit. Everything gets staged automatically, Claude generates a searchable commit message with the right technical terms, and you get a chance to edit it in nvim before it's committed. 🎉

Edit: Hello! I've decided to sunset the JobsByReferral.com project (which might have redirected you here). It was super fun while it lasted, but wasn't free to operate!

Throughout June 2025, several of my developer friends had been laid off.

We were discussing the state of the job market in tech (which is... Not Great, Bob), and were collectively surprised to find that searching for jobs on LinkedIn no longer had an "In My Network" filter (but more on this later -- it's still there, sorta).

The conversation kept coming back to how frustrating the job search had become in general. Cold applying to jobs has become ineffective when you're competing with hundreds or thousands of applicants per job post. AI tools make it easy to tailor resumes and cover letters to each position, so companies are drowning in applications.

In my 20 years in tech, the majority of roles I've landed were through a referral of some sort. Knowing what roles are available is the first step in that process -- you can't leverage your network if you don't know where the opportunities are.

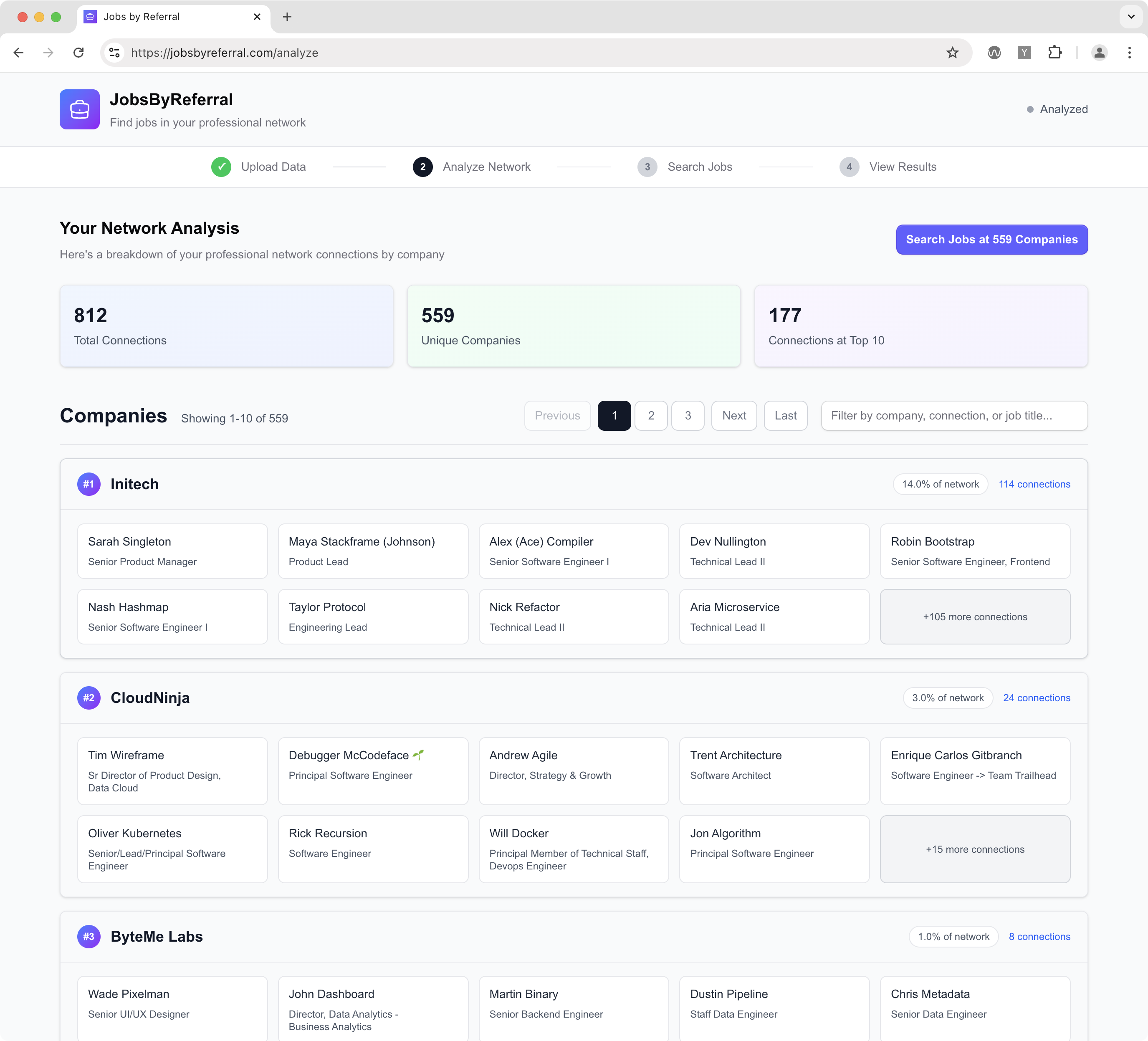

I was quickly nerd-sniped and spent a couple of hours with Claude Code vibe-coding an app that would do something simple: take your personal LinkedIn connections data and show you open jobs at companies they worked at: JobsByReferral.com.

The 15-Minute MVP

Within 15 minutes I had a working approach: you could request your own personal LinkedIn data archive which contains a Connections.csv file. This has your connections' names, company names, and email addresses. From there the app I created would analyze your network and show you the companies you're connected to (and who works there):

Then, the app runs each company through JSearch, which uses the Google for Jobs API:

I shared this with my friends who were job hunting, and some of them found it pretty useful. They were finding companies they didn't realize they were connected to, and it gave them a systematic way to approach their network rather than just pouring through job boards.

Tech Stack

I built this with Next.js on Vercel, and used jszip to do the data archive ZIP extraction entirely on the client. This was one of my core tenets of this project: don't let personal data touch a server. As I had noted in this HN comment:

we do not pass user data to the backend endpoints, which you can verify by viewing the network calls. When you upload the ZIP or CSV the extraction/parsing happens entirely client-side, and then we use auto-generated IDs to map connection data from the JSearch API response to the client-side stored connection data.

Searching for "In My Network" jobs on LinkedIn

As I had noted above, turns out we were somewhat mistaken on the lack of an "In My Network" feature in LinkedIn Jobs search. They're rolling out a new AI-powered Jobs Search platform, so the previous filter for "In My Network" is built into the query itself instead of being a toggle you could flip, like the old search.

So the functionality is still there but it's less discoverable, which is what motivated this little experiment in the first place. I think there's an interesting sidebar here about how AI/LLM integrations in products are hiding advanced features that many folks rely on and expect from tools, but that's another post!

24 hours on the front page of Hacker News

I threw it on Hacker News as a Show HN post and rushed out the door to take my kid to dance class. A couple hours later I noticed 50+ realtime users on my Fathom dashboard, and knew it had popped to the homepage - where it sat for about 24 hours!

This was a fun project to build and was neat to see some traction, though the popularity is likely driven by the state of the job market, which is more than a little bittersweet.

]]>I write up a GH issue in detail, including links to lines, files, and all the context needed for implementation.

I can add images in the GH issue that will be used in the implementation (ie., to put onto a website).

I give it a budget: "Max budget: $10.00" to keep scope manageable. Claude Code won't accept the issue if there's no budget specified.

I assign that issue to Claude Code

Claude Code immediately starts working:

- It clones the repo and checks out a new branch.

- It pours all of my input into context.

- It downloads any images I had attached to the issue and puts them in the appropriate place in the repo.

- It runs the Next.js app and iterates through doing the work until it hits the max budget. It should visually verify the changes it's making.

When the max budget is reached or it thinks it's sufficiently complete, it submits a PR.

I review the PR using GitHub's standard review process - adding line comments and feedback.

Once I submit my review, if it's "Request changes," Claude goes back to work (I'll give it a new budget for the fixes).

We iterate until the PR is solid

I approve the PR and Claude merges it, triggering auto deploy and CI processes

Does this exist? Let me know!

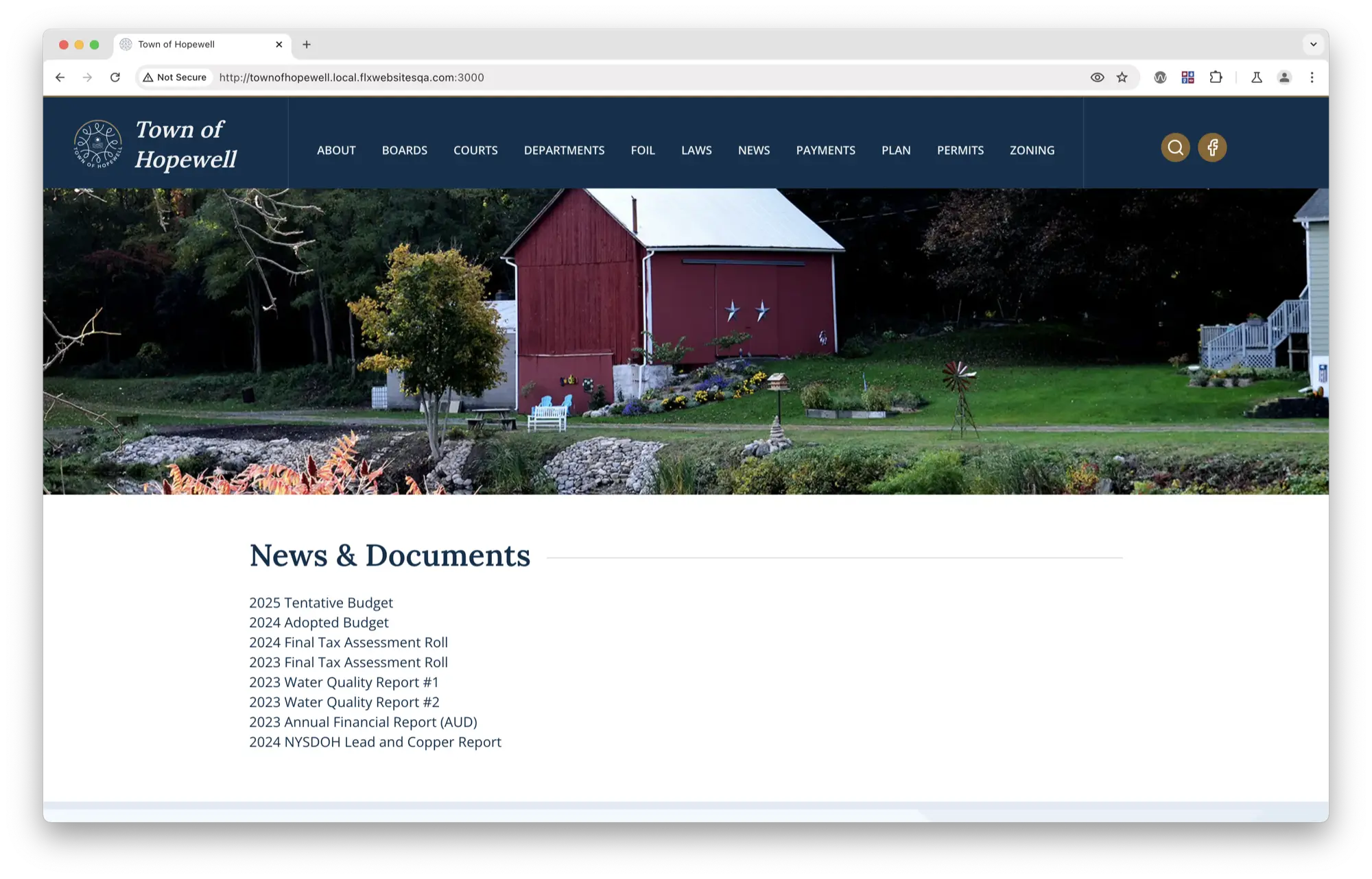

]]>Chrome:

Safari:

At some point in the past, we used to be able to fix this with:

-webkit-font-smoothing: antialiased;

-moz-osx-font-smoothing: grayscale;

But, this no longer seems to have an effect. I landed on this Stack Overflow answer and indeed that fixes the issue:

Safari with font-synthesis: style:

So by not providing font-synthesis: weight, we can tell the browser "stop artificially modifying this font and faithfully render the weights provided to you by the font". Which sort of feels like should be the default (and appears to be in Chrome), but... 🤷♂️.

const header = headers();

const ip = header.get('x-forwarded-for');

if (!['1.2.3.4', '::1'].includes(ip || '')) {

return notFound();

}

Sure enough, removing that reverts back to Next.js's typical app-router caching behavior.

The docs are also quite clear on this, which is nice:

]]>headers is a Dynamic API whose returned values cannot be known ahead of time. Using it in will opt a route into dynamic rendering.

I recently started using an RSS reader again after a failed attempt at building our own. Landed on NetNewsWire and it really is quite lovely. Also easy to tinker with if you're into learning some macOS dev / Swift (GitHub).

This blog itself isn't open source because it's running on our website platform that we've developed for FLX Websites (which I founded ~5 years ago).

Some bloggers I've been enjoying lately:

- Cole's Blog (who also runs a website business similar to FLX Websites!)

- Jack Baty

- Kev Quirk (who's also into watches!)

- Jason Cohen

Anyone else I should start following?

]]>What is this post?

This post identifies a growing technical challenge that some companies might experience after working with a large monolith (such as Rails or Django) that is tightly coupled to a front-end application (JavaScript).

I'll provide an overview of the problems you might be facing today, and what those problems might lead to. I'll cover the major pieces of the back-end-to-front-end relationship, and what each of them handles.

Finally, I'll talk about how you might fix these problems by moving to a front-end heavy web application (if that makes sense for your application), by implementing a common and consistent REST API on the back-end.

The role of the back-end

Let's assume Rails is your back-end application framework of choice. It's responsible for most of the heavy lifting and integration work of our product. It handles data:

- storage

- retrieval

- relationships

- caching

Your Rails back-end is the source of truth for the business-logic of your product. It defines terminology and behavior (what is an application? what is an organization?), and it's charged with processing and massaging that data into more useful data, such as graphs, aggregation, association, etc. It also provides a platform for processing data outside of the typical user-to-product relationship:

- Email delivery

- Transactional messaging

- Third-party associations: Twitter <-> applicationFacebook <-> application

In support of these many responsibilities, your Rails back-end might interface with several systems:

- Web browsers via HTML rendering and JSON endpoints (currently Rails resource endpoints)

- Our datastore via database connections

- Third-party APIs such as Zuora, NetSuite, SendGrid, Salesforce, etc.

The relationship between the Rails application and web browsers is overloaded. Delivering HTML, JavaScript, and resource data to the browser and then patching business logic via tightly-coupled JavaScript applications is what this post is intended to address.

The role of the front-end

The role of the front-end in a modern web application is to deliver the visual representation of your product, and its purpose, to users. It portrays how your application looks and how it works.

The components of a web front-end are:

- HTML rendering

- CSS styling

- JavaScript for application and business logic

The JavaScript part of your front-end is a piece that gets a large amount of attention, as significant amounts of business logic have been moving from the back-end to the front-end in recent years. Some examples of application and business logic that JavaScript might now be responsible for are:

- Code that combines resources (data) according to known relationships, and presents that information to the user.

- Input validation from a user before sending data to the back-end.

- State management: application viewsstate storage in URLs, local storage, etc.

Differences between back-end heavy and front-end heavy applications

When web applications were in their infancy, they tended to be back-end heavy. Servers would send HTML to a web browser, respond to user input, crunch some data and send back more HTML. This traditional request/response cycle was useful and necessary in the early days of the web. It had some benefits:

- Browsers had poor support for handling much more than the typical request/response cycle.

- Servers were more powerful than personal computers (this one is making a comeback).

There are still some significant benefits to writing back-end heavy applications today. They tend to shine best when users of your application will typically only do a small number of things during their visit. You can do all the back-end processing on a powerful server and deliver a single response with everything the user needs to do that one thing. Consider Wikipedia as an example here: the majority of visitors are landing on the page from a Google search, and it makes sense to deliver that page as fast as possible with no additional overhead than what's needed to display that page.

But with more technologically advanced browsers came an opportunity for application developers to build systems that could front-load processing on a user's device and only request smaller updates from a server incrementally, instead of making large requests on every single view. This shift drove the rapid development of front-end application frameworks such as jQuery, Backbone, Angular, Ember, and React.

Let's imagine two cycles: one back-end heavy and one front-end heavy:

Back-end heavy cycle

- User visits a page that lists the comments on one of their blog posts.

- The server looks at the request, sees the supplied cookie, and starts hitting the database: Who's this user? Get the user's blog post that matches this request / URL. Get the blog post's comments that match this result. Get any extra data you might need to render the page (say, user's organization, a count of all blog posts, etc). Do a templating cycle that renders a page based on any supplied templates (headers, footers, blog post, comments, etc). Compile the templates into a string. Send the HTML back to the browser.

This is all fine and well, until the user visits another view. The back-end heavy application has to do this all over again. This is computationally expensive both on the server and the client, and is a waste of resources.

Front-end heavy cycle

- Do all of the things above, but include a heap of JavaScript that sets up an application that will then handle any future route changes via pushState.

- When a route changes or an action happens on the page, the browser can ask the server "can I have this user's blog posts?", and the server can deliver just the blog posts, not a payload that requires an entire cycle of extraneous data, templating, compiling, etc.

Of course, this introduces a new layer: processing on the client. It means that the first time the page loads, the browser on the client now must do some processing to establish code that will handle future actions and route changes.

Why do this? If your users will typically visit more than one route or perform more than one action, you'll save measurable cycles on both the server and the client. This doesn't just save cycles for the current session - for the entire time the user is logged-in and has cached JavaScript, you benefit from this lightweight data-transport approach.

When you have an application that encourages users to visit multiple views or perform many actions, and you provide a back-end heavy implementation, you will eventually deal with performance bottlenecks that will eat engineering time. You can always layer on more caching, but it's far easier to cache raw data than entire request/response cycles and the complexities around that. This is why front-end heavy applications have become commonplace.

Where do you go from here?

There are short-term wins that you can start doing to help facilitate the switch to a front-end heavy application:

- Instate new front-end build tools (Grunt, Gulp, etc.)

- Separate the front-end from Rails. This means HTML, CSS, and JavaScript. Say goodbye to

.erbtemplates. - Use modern development practices: switch to ES6, adopt a stricter component architecture, and streamline tests.

In the long-term, you should hope to have clear separation between the Rails application and the front-end. This will allow you to hire specialists on both the front-end and the Rails side, and help you strengthen your codebase at a faster rate.

How do you change?

You change by discussing this post as an organization and proposing incremental actions. As mentioned above, you might start with:

- Improving the JS and CSS build process with tools such as Gulp.

- Supporting ES6 with Rails 4 + Sprockets (or similar workflows for other back-ends)

Your next steps are to identify exactly how you make technical and workflow changes, in addition to what you've already done.

A common, consistent, and scalable API.

What is the point of an API? It's an interface for data, which systems (and humans) can use. A good API is one which employs consistency, intelligible interfacing, and complete documentation.

A common interface is one which is guessable, across resources:

/api/users/api/organizations/api/users/1/api/organizations/1/api/users/1/organizations

HATEOAS

We're all familiar with REST APIs, and HATEOAS has gotten a fair amount of coverage over the years. HATEOAS is a specification of a REST implementation that primarily differentiates how resources are represented and linked to via the system. HATEOAS is not an excuse for a lack of documentation, and it does provide usability benefits for both human and computer driven clients. It allows the server to define resource locations dynamically and seamlessly upgrade resource relationships. An example of a HATEOAS-style resource endpoint:

/api/users/1

{

id: 1,

username: 'nick',

organization: 'https://api.app.com/organizations/60',

applications: 'https://api.app.com/users/1/applications'

}

The endpoint provides enough information for us to subsequently access additional related resources, but doesn't make assumptions about what we want to do with the resource.

Endpoints could also filter via querystring:

/api/applications?user=1

[

{

id: 123

}

]

HATEOAS-style APIs can reduce computational load on the API server by reducing the number of relationships which must be calculated and retrieved on endpoints. It's up to the client to make subsequent requests to retrieve the data it needs to paint a complete picture of resources and their relationships. This means a greater number of requests from client-to-server, but ideally the lighter data load on the API (as far as relationships go) should allow us to more easily optimize the performance of individual endpoints.

A happy side effect for front-end developers building on top of a HATEOAS-style API is the ability to click through an API via standard web browser. Surprisingly this can make debugging even production systems much more pleasant (ie., share the resource URL via the API instead of a full UI).

Complex and non-traditional endpoints

While HATEOAS/REST specifications dictate that a strict coherence to resource structure must be followed, in the real-world there are sometimes edge cases for complex requests. Any divergence from the traditional REST structure makes the API less consistent, less discoverable, and more difficult to develop with. The edge case must present a strong-enough benefit to diverge (such as performance, security, etc), but sometimes that might happen.

Some examples of non-standard endpoints might include:

- /api/applications/1/usage-graph?from=01-01-2015

- /api/applications/1/recent-actions?include=users

Any endpoint that returns data that would not strictly be considered a "resource" would be a non-traditional endpoint and should be treated with care. As mentioned, exceptions can be made, but only when the trade-offs are clear and the endpoint is well documented.

Transactional endpoints

Sometimes it's necessary to build an endpoint that takes a request, performs some background action, and returns the result. An example of this might be uploading a user's public key via a PATCH to the endpoint:

PATCH /api/users/1

Request body:

{

ssh_key: 'ssh-dss AAAB'

}

You might want to take that key and store it on S3, perhaps. What happens if there's an error uploading the file to S3, though? Instead of having the endpoint respond immediately, one alternative is to respond after the upload is finished, but this introduces latency with the API and can create disconnects between the server and client (e.g. the user closes their browser). One potential solution is to respond with a request identifier that the server maintains and that both the client and server can work with later. An example response to our sample request above:

{

status: 'working',

message: 'Uploading key to S3.',

request: 'https://api.app.com/requests/12345'

}

This response can be instantly returned - even before we begin any background process. Later, the client can ask the server for information on the request:

/api/requests/12345

{

status: 'complete'.

message: 'Key uploaded successfully.'

}

This type of request handling is non-standard within the REST/HATEOAS spec, but again, sometimes exceptions must be made to ensure performance and data consistency between systems.

Authentication

With a completely separated front-end and back-end, authentication and authorization become the primary mechanisms for identifying who a user is and what they can do. With a typical Rails app, this is handled via cookies / session data stored on the server. With an API you need to authenticate first, then give that client a secret they can use later to identify themselves with every call. This is most often done via OAuth.

Mocking API endpoints

One of the primary benefits a common API provides to a front-end team is the ability to mock endpoints between the client and the server. This can be very useful during development, as the front-end team can build features against an API that will eventually exist.

It can also be helpful for the back-end team, in that they can see exactly what the front-end team has built a feature against. There are many libraries that exist to facilitate the creation of a mock API, and it is trivial to build one from scratch, as well. Being able to create mock endpoints is pointless, though, without a common and consistent API that exists for the production back-end.

Documentation

Along with a clean and consistent API comes a responsibility for writing equally clean and consistent documentation for that API. As mentioned, HATEOAS is not an excuse for a lack of documentation. An API is only as good as its documentation.

Every endpoint should be documented, clearly explaining the following:

- Acceptable request methods.

- Response format for each method.

- Available request parameters.

- Any special cases and/or caveats.

Additionally, each endpoint in the doc should provide examples of request/response pairs, and explain (generally) the usage and purpose of the endpoint.

Many types of API doc frameworks exist, but from a publication standpoint, GitHub's API documentation is an excellent example (and is open-source).

Why should we change?

Velocity

A strong separation of concerns allows teams to move faster through the use of improved documentation, clear boundaries, and better cross-team communication.

Testability

By cleanly separating the front-end from the back-end, each respective codebase can adopt best practices for testing with regards to build tools, testing platforms, etc.

Scalability

In a world where the front-end and the back-end are completely separate, systems engineers can more appropriately delegate resources for each system. Front-ends and back-ends have different hardware requirements, and keeping them separate allows us to more appropriately delegate those resources. It also makes fine-tuning performance easier - the front-end need not be burdened by the computational load of the back-end.

When you have them properly separated, the front-end can deliver a controlled experience to the user faster - and we can manage load-time expectations from the back-end much more quickly.

Hire-ability

Front-end and back-end engineering have been going through a specialization phase in the industry for a few years. Front-end engineers have increasingly grown fond of working only with JavaScript, interfacing with an API somewhere. Back-end engineers have begun taking roles exclusively building APIs for applications.

By separating the front-end from the back-end, we're not precluding full-stack engineers from working on either piece - you're making it easier to hire specialists and in return also lowering the barrier for engineers with cross-platform interests to work between the two.

Workflow changes post-API

Once you've developed a stronger separation between the back-end and the front-end, you'll begin to see shifts in what specific types of engineers are interested in working with.

Rails engineers can focus on data, business logic, storage, caching, tests, permissions, etc. Front-end engineers can focus on things such as routing, defining stricter view components, handling data/resources from the API, and more fine-grained tests based on the assumption of a common, consistent API.

From a project management perspective, resources may eventually become more specific. You'll likely have dedicated front-end engineers and dedicated back-end engineers working on their respective pieces. Project managers will need to re-allocate engineering resources according to how workflows eventually shift. Ideally, the separation of concerns between the front-end and the back-end will make individual engineers more effective and efficient with their work.

]]>AWS Lambda is an on-demand computation service that allows you to write code that responds to events, and can be executed at scale within the AWS ecosystem. It has some unique benefits that make working with it particularly desirable. It's cost-effective, scalable, and presents an alternative to spinning up heavy servers to do straightforward event-based work.

At Localytics, we process billions of data points in real-time. At the end of our processing pipeline we output our data to Kinesis streams and S3 buckets. This allows teams to process either live data via the stream or historical data via S3. The format of the data is identical. Lambda was an ideal fit for handling both data sources, as we could write the event handling logic as a single Lambda, and make our data-processing code source-agnostic.

Event sources

Lambda responds to events from a variety of sources. For our purposes we were focused on handling Kinesis stream events and S3 PUT events. See here if you'd like to learn more about the types of events that Lambda supports.

We were tasked with creating a new service that could process historical and live data. As we've made the format identical between S3 and Kinesis data sources, we were able to write a single lambda to handle both event sources. This reduced the surface area of our code that needed to be maintained and clarified the deploy process.

S3 PUT Events

Our Lambda will receive an event when invoked from an S3 PUT notification. It looks like this:

{

"Records":[

{

"eventVersion":"2.0",

"eventSource":"aws:s3",

"awsRegion":"us-east-1",

"eventTime":"1970-01-01T00:00:00.000Z",

"eventName":"ObjectCreated:Put",

"userIdentity":{

"principalId":"EXAMPLE"

},

"requestParameters":{

"sourceIPAddress":"127.0.0.1"

},

"responseElements":{

"x-amz-request-id":"EXAMPLE",

"x-amz-id-2":"EXAMPLE"

},

"s3":{

"s3SchemaVersion":"1.0",

"configurationId":"testConfigRule",

"bucket":{

"name":"sourcebucket",

"ownerIdentity":{

"principalId":"EXAMPLE"

},

"arn":"arn:aws:s3:::mybucket"

},

"object":{

"key":"HappyFace.jpg",

"size":1024,

"eTag":"d41d8cd98f00b204e9800998ecf8427e"

}

}

}

]

}

It's important to note that we're only given metadata about the object (not the data itself). It's on us to get that object from S3. Also, we store our data gzipped, so we need to ungzip the data before we can do something with it.

Here's the functional code that handles this in our lambda (we'll show a complete example later on):

async.waterfall([

function download(next) {

s3.getObject({

Bucket: record.s3.bucket.name,

Key: record.s3.object.key

}, function(err, data) {

next(err, data);

});

},

function gunzip(response, next) {

var buffer = new Buffer(response.Body);

zlib.gunzip(buffer, function(err, decoded) {

next(err, decoded && decoded.toString());

});

},

function doSomething(data, next) {

// `data` is raw data, ready for use.

}

], function(e, r) {

if (e) throw e;

});

Kinesis Events

Our Kinesis stream is always on and channeling data, so our lambda simply listens to the stream and acts upon it.

When Lambda responds to a Kinesis stream event, our event source looks like this:

{

"Records":[

{

"kinesis":{

"partitionKey":"partitionKey-3",

"kinesisSchemaVersion":"1.0",

"data":"TG9jYWx5dGljcyBFbmdpbmVlcmluZyBpcyBoaXJpbmchIGh0dHA6Ly9iaXQubHkvMURqN2N1bA==",

"sequenceNumber":"EXAMPLE"

},

"eventSource":"aws:kinesis",

"eventID":"shardId-000000000000:EXAMPLE",

"invokeIdentityArn":"arn:aws:iam::EXAMPLE",

"eventVersion":"1.0",

"eventName":"aws:kinesis:record",

"eventSourceARN":"arn:aws:kinesis:EXAMPLE",

"awsRegion":"us-east-1"

}

]

}

Records[0].kinesis.datais what we want. The beauty of this event source is that it contains base64 encoded data. Very simple to decode and use in our lambda:

var data = new Buffer(Records[0].kinesis.data, 'base64').toString('utf8');

Creating a dual-purpose lambda

Let's walk through creating and deploying a single lambda that can handle both S3 PUT notifications as well as Kinesis stream events. The full codebase for this example can be found on GitHub.

Permissions

First off, there are two specific permissions that you'll need:

- User permission for

iam:PassRole. This policy needs to be applied to the user who is creating the lambda: - Lambda execution role. You need to create a new role that the Lambda will run as. We assume that role is named

lambda_basic_executionfor the purposes of this project. That role must have (at least) this policy applied:

{

"Version": "2012-10-17",

"Statement": [

{

"Sid": "Stmt1429124462000",

"Effect": "Allow",

"Action": [

"iam:PassRole"

],

"Resource": [

"arn:aws:iam::<account_id>:role/lambda_basic_execution"

]

}

]

}

{

"Version": "2012-10-17",

"Statement": [

{

"Effect": "Allow",

"Action": [

"logs:*"

],

"Resource": "arn:aws:logs:*:*:*"

}

]

}

Your lambda execution role will also need permissions for whatever services you want to use within your function. If you intend to be working with S3, for example, you need to specifically grant your execution role permissions for whatever you intend to do with S3.

Source code walkthrough for a dual-purpose lambda

Let's create a file namedMyLambda.js, and require some things:

var async = require('async');

var AWS = require('aws-sdk');

var fs = require('fs');

var zlib = require('zlib');

We'll be usingasyncas mentioned previously to pull objects from S3 and unzip them withzlib.gunzip.aws-sdkis required for working with S3.

Let's initialize the SDK:

var s3 = new AWS.S3();

Since our code is running as a role within the Lambda system, we don't need to provide credentials. The SDK will happily make any requests you ask of it, and the role's permissions will dictate what we can and cannot do.

Let's write some code that will handle Kinesis events:

exports.kinesisHandler = function(records, context) {

var data = records

.map(function(record) {

return new Buffer(record.kinesis.data, 'base64').toString('utf8');

})

.join();

doWork(data);

context.done();

};

When we get a Kinesis stream event, we could have any number of records to process. Our code expects that, maps the base64-encoded value and joins them to provide a single base64-decoded string that we can work with.

Then we calldoWork(data). In the real world you might be doing asynchronous work on the data (and you may be interested in reading Better Asynchronous JavaScript).

context.done()is how we let Lambda know that we're finished doing work.

That's all we need to do to handle Kinesis event streams, so let's move on to S3 PUT events.

exports.s3Handler = function(record, context) {

async.waterfall([

function download(next) {

s3.getObject({

Bucket: record.s3.bucket.name,

Key: record.s3.object.key

}, function(err, data) {

next(err, data);

});

},

function gunzip(response, next) {

var buffer = new Buffer(response.Body);

zlib.gunzip(buffer, function(err, decoded) {

next(err, decoded && decoded.toString());

});

},

function doSomething(data, next) {

doWork(data);

context.done();

}

], function(err) {

if (err) throw err;

});

};

This should look familiar from earlier in this post. When we get a S3 PUT event, we know that we'll only ever have a single record to work with. So we pass that record to ours3Handler, download the object, unzip the object, and finallydoSomethingwith the data.

Now that we have our two specific handlers for each event type we intend to support, we need to handle the direct event source from Lambda:

exports.handler = function(event, context) {

var record = event.Records[0];

if (record.kinesis) {

exports.kinesisHandler(event.Records, context);

} else if (record.s3) {

exports.s3Handler(record, context);

}

};

Our actual handler is very simple. If the event looks like an S3 event, let thes3Handlerdo the work. Otherwise, if it looks like a Kinesis event, letkinesisHandlerdo the work.

This is all of the code that's necessary to write your first lambda that supports both S3 and Kinesis.

Deployment

Now that we have our code that we want to deploy to Lambda, it's time to actually upload it.

A few basic first steps:

- Install the AWS CLI via

pip install awscli - Configure your AWS credentials at

~/.aws/credentials: - Ensure you've given your user permissions for

iam:PassRole. - Create the

lambda_basic_executionrole as directed above.

[default]

aws_access_key_id = ...

aws_access_key_secret = ...

Once those are set, we need to package our module up:

npm initnpm install async aws-sdk --savenpm installzip -r ./MyLambda.zip *

Now we can upload the module:

aws lambda create-function \

--region us-east-1 \

--function-name node-lambda-starter \

--zip-file fileb://MyLambda.zip \

--handler MyLambda.handler \

--runtime nodejs \

--role arn:aws:iam::<account_id>:role/lambda_basic_execution

If your upload was successful, you should receive a response like this:

{

"FunctionName": "node-lambda-starter",

"CodeSize": 1158014,

"MemorySize": 128,

"FunctionArn": "arn:aws:lambda:us-east-1:<account_id>:function:node-lambda-starter",

"Handler": "MyLambda.handler",

"Role": "arn:aws:iam::<account_id>:role/lambda_basic_execution",

"Timeout": 3,

"LastModified": "2015-04-23T20:58:17.586+0000",

"Runtime": "nodejs",

"Description": ""

}

You can see your uploaded lambda on your dashboard. From there you can also edit/invoke with sample data.

Add event sources

Now that your lambda has been created and uploaded, you can add event sources to it via the dashboard. As mentioned, both S3 PUT events and Kinesis streams will work properly with this lambda we've created.

Starter module

To make working with Lambda a bit easier, we wrote a starter Lambda module. We defined a handful of Make targets which can make managing a single lambda a bit easier:

make upload-- upload your function for the first time.make update-- upload new function code.make get-- retrieve details of your existing function on Lambda.make invoke-- invoke your function with sample data provided within the repomake delete-- remove this function from Lambda.

We hope you find it useful! Be sure to drop an issue on GitHub for any questions / bugs.

Conclusion

Lambda presents a new way of programming application logic around events instead of infrastructure. We think this has the potential to bring entirely new types of applications and workflows to market, and it fills a gap in AWS's cloud computing lineup that makes it easier and faster to do real-time work on data within the ecosystem.

Even aside from the affordability and durability of Lambda, being able to direct chunks of logic to process individual events from systems represents an opportunity for data-heavy organizations to drastically streamline their technical infrastructure.

]]>- Running a Node app on port 80.

- Running nginx on port 80.

- Running a watched test-suite for a Node app.

- Auto-compiling SCSS source files to CSS.

- Running a Django app with Gunicorn.

- Running a Django app with the built-in dev server.

There are a few things that make Supervisor well-suited for the above tasks. All of the above tasks:

- I prefer to have running in the background.

- I need quick access to logs, especially streaming via

tail -f. - I'd like to have a common interface for starting, stopping, and restarting.

My favorite part of having Supervisor wrap all of these processes is the common interface for accessing logs. Let's say I want to watch the logs for my Django app I'm running via the built-in dev server (wheresniptis the program name I configured -- more on that later):

> sudo supervisorctl tail -f snipt stderr

The output streams to my terminal:

> sudo supervisorctl tail -f snipt stderr

==> Press Ctrl-C to exit <==

"GET /static/js/projects.js HTTP/1.1" 304 0

[08/May/2013 18:05:42] "GET /login/?next=/ HTTP/1.1" 200 3811

...

Or if I just want to dump all of the logs:

> sudo supervisorctl tail snipt stderr

You get the idea. If you want to learn more about what Supervisor can really do, check out the docs.

Installation

Installing Supervisor on OS X is simple:

> sudo pip install supervisor

This assumes you havepip. If you don't:

> curl -O http://python-distribute.org/distribute_setup.py

> python distribute_setup.py

> sudo easy_install pip

> sudo pip install supervisor

Or if you use Homebrew:

> brew install distribute

> sudo easy_install pip

> sudo pip install supervisor

Or you could install everything from source. Good luck with that.

Regardless of how you've done it, once you've successfully installed you should be able to runsupervisorctland get something like:

Error: No config file found at default paths

That's fine, that means Supervisor is installed and ready to configure. On to bigger and better things (Configuration).

Configuration

The Supervisor documentation provides excellent information on configuration (as well as everything else). For the sake of getting this running on OS X, however, here's what mysupervisord.conflooks like:

[unix_http_server]

file=/tmp/supervisor.sock

chmod=0700

[supervisord]

logfile = /Users/Nick/Sources/dotfiles-private/supervisor/logs/supervisord.log

logfile_maxbytes = 50MB

logfile_backups=10

loglevel = info

pidfile = /tmp/supervisord.pid

nodaemon = False

minfds = 1024

minprocs = 200

umask = 022

identifier = supervisor

directory = /tmp

nocleanup = true

childlogdir = /tmp

[supervisorctl]

serverurl = unix:///tmp/supervisor.sock

[rpcinterface:supervisor]

supervisor.rpcinterface_factory = supervisor.rpcinterface:make_main_rpcinterface

[include]

files = /usr/local/share/supervisor/conf.d/*.conf

You may want to configure some of these to your liking if you know what you're after, otherwise just change the directories to point to your log file, configuration directory, etc. The configuration directory can be anywhere, just make sure yoursupervisord.confis directed to include configuration files from there.

Supervisor is going to look for thissupervisord.conffile in a few places by default:

/usr/local/share/etc/supervisord.conf

/usr/local/share/supervisord.conf

./supervisord.conf

./etc/supervisord.conf

/etc/supervisord.conf

You can place it anywhere, though, as we'll be running the Supervisor daemon with a configuration flag (see below).

Running Supervisor

Now that we have a main configuration file somewhere, we can run the daemon. Oh, about that: there are two parts to Supervisor: the daemon (supervisord) and the client (supervisorctl). The daemon runs in the background and does all of the hard work, and the client provides a nice little UI for... doing stuff.

You can run the daemon manually like this:

> supervisord -c /path/to/supervisord.conf

But we're not interested in that. We want Supervisor to run on startup as root so we can have Supervisor do things with root-level priviliges (like running servers on port 80).

So in order to start Supervisor on startup, we need to use OS X'slaunchdsystem, which loads programs asrooton startup. You don't actually use thelaunchdprogram, you write a configuration file and you load it withlaunchctl. Here's the file:

/Library/LaunchDaemons/com.agendaless.supervisord.plist

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">

<plist version="1.0">

<dict>

<key>KeepAlive</key>

<dict>

<key>SuccessfulExit</key>

<false/>

</dict>

<key>Label</key>

<string>com.agendaless.supervisord</string>

<key>ProgramArguments</key>

<array>

<string>/usr/local/share/python/supervisord</string>

<string>-n</string>

<string>-c</string>

<string>/usr/local/share/supervisor/supervisord.conf</string>

</array>

<key>RunAtLoad</key>

<true/>

</dict>

</plist>

Note: The "agendaless" connotation is the organization that created Supervisor. You can use whatever you want there, but "agendaless" makes the most sense.

This plist file tells OS X to run thesupervisordprogram on startup (theRunAtLoadpart), and to run the program as "nodaemon" (the-nflag), meaning we want it in the foreground. Then we pass the configuration file (the-cpart followed by the .conf path). Here's where you'd change the path to your configuration file, if needed.

To register this plist, run this:

> launchctl load /Library/LaunchDaemons/com.agendaless.supervisord.plist

If everything worked well, you should be able to runsudo supervisorctland see something like this:

\t> sudo supervisorctl supervisor>

If you see that, you're in the Supervisor terminal-based UI, the Supervisor daemon is running properly, and thesupervisorctlprogram has found thesupervisord.conffile correctly. This means you're ready to start configuring programs.

If you see anything other thansupervisor>, something is probably wrong. First off: reboot your computer. It's possible that you need to get OS X to loadsupervisordfromlaunchdas root on startup in order to get things to work. If you've rebooted and you're still having problems, drop a note in the comments and we'll see if we can figure it out :)

Otherwise, you're ready to start configuring programs.

Program configurations

In Supervisor, you write configurations like this. It's quite simple, and the configuration options for program definitions are robust. The simplest program configuration looks like this:

[program:foo]

command=/bin/cat

That would be the content of the file/usr/local/share/supervisor/conf.d/foo.conf(or wherever you pointed your configuration files at insupervisord.conf.

Let's pretend for a moment that the programcatruns in the foreground and you just want to run it via Supervisor:

- Hop into the Supervisor UI:

> supervisorctl. - Tell Supervisor to read all of the configuration files:

reread. - You should get a notice that

foois available. - Add the

fooprogram:add foo. foois now ready to be managed by Supervisor.- Type

statusto see a list of programs:fooshould be in there, with a status ofSTOPPED.

At this point, thefooprogram is ready to be managed by Supervisor, and is stopped. Here are some common operations:

start foo

restart foo

stop foo

tail foo stderr

tail foo stdout

tail -f foo

tail -f foo stderr

...etc, etc. For a complete list of commands, typehelpin the Supervisor UI, orsudo supervisorctl help. Which brings up a good point: you can run Supervisor commands either inside the UI (viasudo supervisorctl) or directly from the commandline, like this:

sudo supervisorctl start foo

That's about it for creating a basic program to be managed by Supervisor.

Here are some program-specific examples of configuration files:

Django app with built-in dev server

[program:myawesomeprogram]

command=/path/to/python /path/to/project/manage.py runserver localhost:4000

directory=/path/to/project

autostart=false

autorestart=true

stopsignal=KILL

killasgroup=true

stopasgroup=true

Note: thekillasgroupandstopasgroupdeclarations are very important when running the Django dev server via Supervisor.

Django app with gunicorn process

[program:anotherawesomeprogram]

command=/path/to/bin/gunicorn -c /path/to/gunicorn.conf.py debug_wsgi:application

directory=/path/to/project

autostart=false

autorestart=true

Node app

[program:yetanotherprogram]

command=sudo /usr/local/bin/node app 80

directory=/path/to/node/project

autostart=false

autorestart=true

Auto-compiling SCSS source files to CSS

[program:css]

command=/usr/local/opt/ruby/bin/scss --watch hi.scss:hi.css

directory=/path/to/css

autostart=false

autorestart=true

Nginx

[program:nginx]

command=sudo /usr/local/sbin/nginx

autostart=false

autorestart=true

# Note: in your nginx.conf, make sure to set `daemon off;`.

# Also note: since Supervisor is run as root, you can configure Nginx to run on port 80 without trouble.

It's important to provide absolute paths to programs, especially if those programs were user-installed, like Ruby gems, Python programs, etc. Remember, thesupervisordprogram does all the work, and it's run as root, which won't source user-level PATHs.

Django settings

I ran into an issue with some app complaining about not being able to work with naive timezones. You'll need to add this to your settings.py:

USE_TZ = True

Tag changes

The URL tag has changed to take a path to a view function as a string. Previously this wasn't stringified.

{% url whatever args %} -> {% url 'whatever' args %}

direct_to_template

The olddirect_to_templategeneric view has been changed to a more traditional class. Here's the new structure:

from django.views.generic import TemplateView

...

url(r'^500/$', TemplateView.as_view(template_name='500.html')),

django-registration

The quite-popular django-registration app took some time to get proper 1.5 support, but the awesome Mr. Bennett was able to get support rolling during a PyCon sprint. You should Gittip him.

Primarily,django-registrationhas been rewritten to use class-based views. If you had a custom form you were passing to your registration app, you'll need to do some things.

On Snipt I use a custom registration form that further restricts characters in usernames, as well as forces uniqueness of email site-wide. Here's what you need to do to get 1.5 support fromdjango-registrationwith custom forms:

- First off, make sure you're using the

django-registrationfrom Bitbucket, not pip. It should land on pip soon, but for now do this. When you install it, you must also have Django installed first. I have no idea why this is the case. - Second, set up your custom registration_register URL.

- Create your custom registration form.

- Create your custom registration view and point it to the form.

- Make sure your custom registration view sub classes the

RegistrationViewfromregistration.backends.default.views.

django-haystack

This may or may not be 1.5-specific, but you need to make sure yourindex_querysetis accepting**kwargs, like this.

pyelasticsearch

This was fun. Both of thesepyelasticsearchrepos will fail in 1.5:

You need to specifically install version 0.3 frompip.

@mixin border-radius($radius: 5px) {

-webkit-background-clip: padding-box;

-webkit-border-radius: $radius;

-moz-background-clip: padding-box;

-moz-border-radius: $radius;

border-radius: $radius;

background-clip: padding-box;

}

@mixin box-shadow($horizontal: 0px, $vertical: 1px, $blur: 2px, $color: #CCC) {

-webkit-box-shadow: $horizontal $vertical $blur $color;

-moz-box-shadow: $horizontal $vertical $blur $color;

box-shadow: $horizontal $vertical $blur $color;

}

@mixin inset-box-shadow($horizontal: 0px, $vertical: 1px, $blur: 2px, $color: #CCC) {

-webkit-box-shadow: inset $horizontal $vertical $blur $color;

-moz-box-shadow: inset $horizontal $vertical $blur $color;

box-shadow: inset $horizontal $vertical $blur $color;

}

@mixin multi-color-border($top, $sides, $bottom) {

border-top: 1px solid $top;

border-left: 1px solid $sides;

border-right: 1px solid $sides;

border-bottom: 1px solid $bottom;

}

@mixin multi-border-radius($topLeft: 5px, $topRight: 5px, $bottomRight: 5px, $bottomLeft: 5px) {

-webkit-border-top-left-radius: $topLeft;

-webkit-border-top-right-radius: $topRight;

-webkit-border-bottom-right-radius: $bottomRight;

-webkit-border-bottom-left-radius: $bottomLeft;

-moz-border-radius-topleft: $topLeft;

-moz-border-radius-topright: $topRight;

-moz-border-radius-bottomright: $bottomRight;

-moz-border-radius-bottomleft: $bottomLeft;

border-top-left-radius: $topLeft;

border-top-right-radius: $topRight;

border-bottom-right-radius: $bottomRight;

border-bottom-left-radius: $bottomLeft;

}

@mixin vertical-gradient($start: #000, $stop: #FFF) { background: ($start + $stop) / 2;

background: -webkit-gradient(linear, left top, left bottom, from($start), to($stop));

background: -moz-linear-gradient(center top, $start 0%, $stop 100%);

background: -moz-gradient(center top, $start 0%, $stop 100%);

}

@mixin vertical-gradient-with-image($image, $start: #000, $stop: #FFF) {

background: ($start + $stop) / 2 $image;

background: $image, -webkit-gradient(linear, left top, left bottom, from($start), to($stop));

background: $image, -moz-linear-gradient(center top, $start 0%, $stop 100%);

background: $image, -moz-gradient(center top, $start 0%, $stop 100%);

}

@mixin opacity($op) {

-khtml-opacity: $op;

-moz-opacity: $op;

opacity: $op;

}

]]>"Supervisor is a client/server system that allows its users to monitor and control a number of processes on UNIX-like operating systems."

Steve Losh said that the trick to getting anything working with Supervisor is to make sure it doesn't daemonize. But, alas, there are other tricks.

This blog post concerns getting PostgreSQL working with Supervisor.

First, install PostgreSQL normally. On Ubuntu 10.10, the default way of running PostgreSQL is via Unix socket. This socket lives at/var/run/postgresql. Now, believe it or not, this creates a problem when trying to run PostgreSQL in foreground mode, as required by Supervisor. More on that later.

To understand the problem, you have to understand how PostgreSQL runs normally. Typically, you run PostgreSQL via init script, like/etc/init.d/postgresql start. This script runs a bunch of code, some of which actually creates the dir/var/run/postgresqland sets the proper permissions.

Problem: when you run PostgreSQL in foreground mode with Supervisor, it tries to open the socket in/var/run/postgresql. This will work if you've previously run PostgreSQL with the init script, because the init script created and set the permissions for that dir. However, after reboot, that dir will be gone, and PostgreSQL won't be able to open the socket there, resulting in failure. So, we either need to move the socket (meaning a config change with your app), or make PostgreSQL run on a TCP port instead. TCP port it is.

To get things running with Supervisor:

- Stop PostgreSQL:

sudo /etc/init.d/postgresql stop - Move the init script somewhere safe:

sudo mv /etc/init.d/postgresql ~/somewhere-safe(so it won't startup in daemon mode) - Edit the PostgreSQL config:

sudo vim /etc/postgresql/8.4/main/postgresql.conf - Line 49: comment out

external_pid_file(not needed for TCP mode) - Line 63: comment out port (unless you want to change the default port of 5432)

- Line 68: change

unix_socket_directoryto/tmp

With this config, PostgreSQL will default to using TCP port 5432 instead of a Unix socket.

Here's what my Supervisor conf looks like for PostgreSQL:

[program:postgresql]

user=postgres

command=/usr/lib/postgresql/8.4/bin/postmaster -D "/var/lib/postgresql/8.4/main"

process_name=%(program_name)s

stopsignal=INT

autostart=true

autorestart=true

redirect_stderr=true

Now, you might also get an error about PostgreSQL not being able to read the config file at/var/lib/postgresql/8.4/main/postgresql.conf.

I had to symlink:

sudo ln -s /etc/postgresql/8.4/main/postgresql.conf /var/lib/postgresql/8.4/main/postgresql.conf

Your mileage may vary. Good luck.

]]>When Pete and I first arrived in Boston (me from Rochester, him from Acton, MA), we started talking about trying to build something that might make a splash at the event. We knew these conferences get a lot of coverage on Twitter, Flickr, Facebook, etc. We thought it'd be convenient to have one place to view a live stream of activity that covered all of the major sources, instead of having to bounce through Twitter hashtag searches, Flickr tag searches, etc. So we decided to build a feed aggregator.

We called it A Feed Apart, and it aggregates the conference hashtags from Twitter and tags Flickr, and does so in real-time (currently switched off). Check out Pete's posts on what we learned, and how we built it.

As some of you may know, I'm a terrible notetaker. So, my reactions / recaps / thoughts listed below are a combination of my memory of the presentations, the slides (available only to AEA attendees), and the reactions from people on Twitter (which you can find here: http://afeedapart.com/sessions). If I misquoted you, or you want direct attribution, drop me a line at nick at this domain.com.

By the way, be sure to check out Jeremy Keith's (@adactio) liveblogging journals, which I've linked to at the beginning of each session from the first day below. Excellent stuff there - thanks Jeremy, you rock.

Revealing Design Treasures from The Amazon: Jarod Spool (@jmspool)

- Jeremy Keith's liveblog of the presentation

- Tuscan Whole Milk, 1 Gallon, 128 fl oz (read reviews)

- 71,431,000 amazon.com visitors in December, 2008

- Amazon: 1 of every 5 purchases comes from a review

- The question "Was this review helpful to you?" was responsible for an additional $2.7B of revenue

- 0.07% of users leave reviews after purchasing a product

- Denon AKDL1 Dedicated Link Cable (read tags)

- "Risk adverse companies produce crap" - brilliant

- Experimenting with new ideas is a critical part of innovation

- Amazon took 12 weeks to roll out navigation changes, and they studied the user experience in phases

- Amazon turns over its inventory every 20 days - nuts

- Understand where people are spending time

- Design is about creating an experience that meets a business objective

Content First: Kristina Halvorson (@halvorson)

- Jeremy Keith's liveblog of the presentation

- Have a content strategy for every project

- Content should focus on the user, not on products

- Content is not a feature - it's a living-breathing thing that evolves & involves multiple avenues of input

- Content is cyclical, you can't "set it and forget it"

- Someone needs to own the content from the beginning of the process

- Lorem ipsum must die

- You can't just put icons on the page and expect users to find their way

- Crappy content == crappy user experience

Thinking Small: Jason Santa Maria (@jasonsantamaria)

- Jeremy Keith's liveblog of the presentation

- Use grid-based layouts

- Use a notebook for sketching designs before any real design work happens

- "Sketchbooks are not about being a good artist, they're about being a good thinker"

- Arial is like fishing gum out of a urinal

Future Shock Treatment: Jeremy Keith (@adactio)

- Jeremy Keith's PDF of his presentation

- Write for your future self, because you don't want to piss off your future self

- Use a JavaScript framework

- Don't use a CSS framework

- Standards are like sausages - they're delicious but you don't want to see how they're made

- http://dowebsitesneedtolookexactlythesameineverybrowser.com/ (view in multiple browsers)

- HTML5 is coming sooner than you think, and you should be ready for it now

- Create your own reusable CSS libraries for things like lists, forms, tables, clearfix, etc.

- Choose a framework based on its philosophy - work with the framework rather than against it

Designing With Psychology in Mind: Joshua Porter (@bokardo)

- Jeremy Keith's liveblog of the presentation

- People are easily swayed to being bad (Stanford prison experiment)

- Changing behavior is what web designers do, and what psychologists study

- Behavior first, design second

- We can't change the person but we can change the environment - thus changing the experience

- Websites are the most common form of persuasive technology today

- http://hunch.com

- The behavior you're seeing is the behavior you designed for, whether intentional or not

- Awareness test: http://www.dothetest.co.uk/

DIY UX: Give Your Users an Upgrade (Without Calling In a Pro): Whitney Hess (@WhitneyHess)

- Jeremy Keith's liveblog of the presentation

- Creative spaces and tools allow for creative thinking

- "Never tell your UX Designer 'it looks good' - they'll punch you in the face. Tell them what an epic fail it is, instead."

- Customers that feel they're being heard translates to word of mouth (and more business)

- "You need to have humility and listen. Users aren't always right but you need to hear them."

- "The key to a company's success is the 'culture of iteration'."

Implementing Design: Bulletproof A-Z: Dan Cederholm (@simplebits)

- Jeremy Keith's liveblog of the presentation

- "There's like 50 letters in the alphabet, or something"

- "It's the craftsmanship stupid"

- RGBa = Red, Green, Blue, AWESOME

- Neuropol is the best font in the universe

Beyond Pixel Pushing: A Simple Way to Better Websites and Happier Clients: Brett Welch (@higoodbarry)

- "Lowering prices is a race to the bottom - we need to sell our design value more effectively"

- "A website needs a marketing plan. Always."

- "When dealing with a scrooge client, cut your scope, not your price"

A Site Redesign: Jeffrey Zeldman (@zeldman)

- Any project not for yourself should start with research. Research produces achievable goals

- Research makes a you a credible partner, not a nag or another mouth to feed

- User research is not market research - it's about how people act and think

- Find people that represent real users and define their personas. Design your site around those personas

- Have a content strategy, and place real content in your designs instead of placeholder text

- Zeldman likes bacon, mayonnaise, and coffee

- Clients have a lot of stuff on their mind. Use the Alzheimer's method of repetition in a non-condescending fashion; remind them of steps / changes, and keep in contact

- "Sell ideas, not pixels"

- Always avoid combating the client, you want them to always be your friend

- More sexy time

- Working with text in Photoshop is hard - writing a few lines of CSS is not

- "Beta testing is good - it gives the impression that you care"

- "Mostly I have really terrible ideas one after another, and eventually I get to mediocrity and feel so relieved"

- "I would kill myself if I had to listen to all of my bad ideas"

- Simply re-skinning a site is a missed opportunity

Flash and Web Standards - Getting Along on the Playground: Daniel Mall (@danielmall)

- "Flash is the smelly kid." (joking)

- Jakob Nielson's article in 2000 calling Flash 99% bad was very hurtful to the Flash community, but 100% true

- "Why'd you create Comic Sans? - 'beacuse sometimes it's better than Times New Roman.'"

- In design, there is no bad - just appropriate and inappropriate

- Flash is another tool in your arsenal

- The web is a playground - our job is to figure out how to get all of our 'friends' (HTML/CSS/JS/Flash) to play along

- Be willing to compromise and use whatever technique that gets the task done

Accessibility - Experiments at the Edges of Experience: Derek Featherstone (@feather)

- The coolest thing ever can become passe more quickly than you can even realize

- The API (generally speaking) is the best tool you have

- "I have no idea if this is useful, but we'll never know unless we try"

- "We have to push our limits so that others may break through theirs" - brilliant

- "When you think you're at your 'accessibility limit', push through and find the next limit"

Findability Bliss Through Web Standards: Aarron Walter (@aarron)

- "If its natural and OK, put keywords in your URLs. If not, then you are negatively impacting your findability"

- Keep your SEO keyword usage placement \<= 7% to avoid being penalized

- Meta descriptions do not help boost your SEO, but help your users tremendously in findability

- Google is now recognizing (some) Microformats

- http://microformats.org/

- http://sensational-seo.com/

- http://buildingfindablewebsites.com/

Change the World (Wide Web)?: Scott Thomas (@simplescott)

- Deliver clear and concise messaging, focused on the "we" rather than the "he" (Obama)

- "We were building a plane while in flight" - had to deliver materials day-to-day while contributing to the longer-term mission

- The fold is dead

- Websites are living-breathing-evolving organisms

- "Empower other people in the process, and really wonderful things can happen"

- http://data.gov

Surprise & Delight: Heather Champ (@hchamp)

- Respect your members

- 'Archer' is very six months ago

- Provide guidelines for good citizenship

- Leaderboards will bite you in the ass

- http://www.flickr.com/explore/panda

- Put more tools into the hands of your users

- A tsunami of feedback has a lifecycle

- Site changes make users react "like we were throwing kittens out of helicopters on top of small children playing with glass"

- Be as transparent as you can

- "Things will happen. It's how you step up, move forward, and change - you'll always make mistakes"

- Make Lemonade

- Embrace the chaos

- noindex all of your abuse / report pages so they don't appear in searches

- Greenland?

Walls Come Tumbling Down: Andy Clarke (@malarkey)

- Andy Clarke puts the sexy in CSS

- "Limitations imposed by a recession make us think & work in new ways, help us to focus, sharpen our skills, and make us more competitive"

- "We own our skills, no one else - now is the time to improve ourselves"

- It's time to discard outdated workflows

- Develop new workflows based on creativity

- Embrace agile design / development

- "Designing static visuals that will live in a browser fails by definition"

- "It's time to stop showing clients static design visuals"

- "Static visuals reinforce the misconception that websites should look exactly the same in every browser"

- As the browser landscape gets more diverse, it becomes uneconomical and undesirable to seek "cross-browser, pixel-perfection"

- "You know, sometimes I think that web designers have got no fucking balls"

- "Different does not mean broken"

- Does your aunt fire up two browsers and say, 'It doesn't fucking look the same in Firefox!'?

- "HTML/CSS should be a tool in the work belt of developers AND designers"

- Design systems, not individual pages

- "Clients are not paying you for the hours you work, but for the years of knowledge you have"

- Do not charge less just because you can work faster

- Focus efforts on redefining why we do what we love so much

Fabric is:

...a simple pythonic remote deployment tool. It is designed to upload files to, and run shell commands on, a number of servers in parallel or serially. These commands are grouped in tasks (regular python functions) and specified in a 'fabfile.' It is a bit like a dumbed down Capistrano, except it's in Python, doesn't expect you to be deploying Rails applications, and the 'put' command works. Unlike Capistrano, Fabric wants to stay small, light, easy to change and not bound to any specific framework.

It is awesome. But don't let me tell you, let me show you.

We have lots of projects floating all over the place, all neat and tidy in our Subversion repository. When I'm ready to start building a new feature or fix a bug, I like to have a copy of the production database for that application on my local machine for development. Most of the time, these applications are Drupal or Django based. When I'm ready to start building, I do something like this:

The shell script I run essentially does this:

- log into the remote production server

- take a snapshot of the production database

- save the dumpfile

- log out of the server

- transfer the file from the production server to my workstation

- remove the existing development database from my local MySQL installation

- create a new database to contain the production database

- import the production database into the new database

It's nice that the script takes care of all of that for me - but, you see, there are 10-20 of those files (one for each project). This becomes an enormous headache when we change servers or add a new feature to the script. We needed a better solution.

Enter Fabric. I got it up and running in about 5 minutes, and began to migrate that shell script into one 'fabfile'. However, since I needed to use exactly the same functions for every project, we needed to share the functions with all projects. Fabric makes this easy.

In each project root, there is a file named 'fabfile.py', with the following contents:

Important note: The reason I use config.fab_hosts instead of 'set(fab_hosts = ['...'])' is because I've built my Fabric installation from the git master branch. If you've downloaded the 0.0.9 package, use:

The fabfile sets some basic variables for the server we're connecting to, the user we connect with, and MySQL credentials. The very first thing the file does is import a file named 'fabric_global.py', which contains the following:

At first glance, it may look a little confusing, but this code is something like 10% of what it would be if it were duplicated for each project (and with all the additional commands).

The 'fabric_global.py' file defines two functions that we can run on a codebase. One is for updating the development database with the production database, and the other is for simply committing file changes and updating them on the server.

Now, when I need to grab the production database at the beginning a project, I simply do this:

Well crap, that's a lot easier.

When it's time to deploy my code changes, I simply do:

If I had already committed my changes and simply wanted to update them on the production server, I would just do:

Quite amazing, if you ask me.

Also, Fabric can do a whole lot more than what I just demonstrated, so checkout the docs.

I'd like to thank the Fabric team for probably preserving a few years of my lifespan. Also, it should be noted that the current version of Fabric is 0.0.9, which should give you an idea of the amount of awesomeness to eventually come to newer releases.

]]>We also build a lot of other generic web applications separate from our Zope4Media installations. Naturally, it makes sense for us to investigate the use of Zope 3 for use as our primary development environment for these other applications.

UPDATE: Aaron Lehmann of Zope Corporation was kind enough to respond to many of the points I make in this post. I've added his responses inline below.

What is Zope 3?

Zope 3 is the latest in the line of Zope-powered web application servers. It was released nearly 4 years ago on November 6th, 2004. It is a standalone server written in the excellent Python programming language that provides a framework for developing applications such as content management systems, intranets, and other dynamic web-based tools. It provides data storage in the form of the ZODB (Zope Object Database) which operates as transparent and persistent storage of Python objects.

Aaron Lehmann: Zope 3 is technically not a web server but a group of libraries that can be used together. While some of the packages handle web publishing, it is possible to write sophisticated applications in Zope 3 that have nothing to do with web publishing.

In fact, the heart and soul of Zope 3 is the Component Architecture, which allows behaviors to be loosely coupled to the data involved.

Zope 3, like other modern development platforms, follows a loose interpretation of the MVC (Model-View-Controller) design pattern. By nature of it being a Python application, it encourages clean and intelligently designed code.

The purpose of this blog post is to journey through the installation, configuration and development of a simple Zope 3 web application.

Installation

First things first, we need to download and install Zope 3. It must be installed and configured on the server before you may begin development.

Since I'm a Subversion junkie, I want to grab a checkout of the Zope 3 application, rather than download.

Aaron Lehmann: The best way to get hold of the packages you need is to make a buildout (analogous to a Makefile) and use zc.buildout to get the necessary dependencies. To ensure the packages you get all work together, you'll want to nail the versions. There is a known good set, which has been consistently inter-compatible. For a very quick start and a sample buildout, you might try the zopeproject egg, which can be gotten from PyPI.

For a less steep learning curve some folks recommend the Grok project, which is built on top of the Zope 3 libraries.

Head over to the Zope 3 website and look for their SVN repository. There's a link in the left sidebar for Zope SVN, so that's probably a good place to start. This page, however, doesn't seem to have any information on where the SVN repo lives, so we hit up the SVN FAQ. The first thing I see on this page is:

svn co svn://svn.zope.org/repos/main/Zope/trunk Zope

That looks to be exactly what we're after, so I go ahead and try to checkout. Unfortunately, this appears to checkout Zope 2, not Zope 3. There's also no indiciation on their FAQ that this is or isn't the case.

I'll save you the headache of trying to figure out just where Zope 3 lives on their repository, since it's not really documented anywhere. The SVN list of Zope 3 releases really lives here:

svn ls svn://svn.zope.org/repos/main/Zope3/tags

From there, you'll see a list of Zope 3 releases. We're interested in the latest release, which from what I can see from here, is 3.3.1. We grab that release by checking out with the following:

svn co svn://svn.zope.org/repos/main/Zope3/tags/3.3.1/ zope

The checkout will take a while. After it's finished, it looks like the entire checkout clocks in at around 111 MB. Quite large.

Now that we've got the latest version of Zope 3 in our hands, we need to install it on our system. Here's where I picked up Benji York's excellent Zope 3 quick start guide.

Following from Benji's tutorial, I run the installation command for Linux, since I'm on OS X:

python setup.py install_data --install-dir .

All went well with installation, so now's time to setup our first instance of Zope 3 (a standalone application):

python zope/bin/mkzopeinstance

The initial instances weighs in at 376k.

Running the server

Our Zope instances makes available to us the Zope 3 application server to host our instance. We do so with the following command from within the instance path:

bin/runzope

Your Zope 3 application should be accessible at http://localhost:8080.

At this point, I'll be completely honest with you. I wrote this tutorial in segments, meaning I wrote down my initial steps first, then iterate on the instructions and comments to increase accuracy. However, the second time around, when trying to run the server for the application I had already built, I got the following error:

ImportError: No module named zope.app.twisted.main

Now, I am completely certain I had a working application when I last touched it. That said, I decided to start fresh with the instructions above. I checked out a fresh copy of Zope 3, reinstalled, and attempted to create a new instance. This is what I get when trying to run 'mkzopeinstance':

ImportError: No module named _zope_proxy_proxy

I honestly have no clue what's going on here. I'm beginning to think the SVN location above is incorrect, but there is absolutely no (good) information on Zope.org to point me in the right direction. Even the official 3.3.1 release page has no reference of the SVN location.

Unfortunately, the list of releases in the Zope 3 SVN project makes it quite hard to figure out what release is correct:

3.2.2/3.2.3/3.3.0/3.3.0-zope.interface/3.3.0b2/3.3.0c1/3.3.1/3.3.2/3.4.0a1/3.4.0b1/3.4.0b2/3.4.0c1/Zope-3.1.0/Zope-3.1.0b1/Zope-3.1.0c1/Zope-3.1.0c2/Zope-3.1.0c3/Zope-3.2.0/Zope-3.2.0b1/Zope-3.2.0b2/Zope-3.2.0b3/Zope-3.2.1/Zope-3.3.0b1/Zope3-29921/

Naturally, '3.3.1/' appears to be (the most) correct.

Anyway, I'm going to continue with the tutorial assuming you finally got it working, somehow.

Zope Management Interface (ZMI)

The Zope Management Interface is the default interface you see when accessing your application for the first time. It goes without saying that this interface is incredibly intimidating to those who are using Zope 3 for the first time. Even after working with the interface a bit, I'm still not sure exactly what everything does, and why everything is there.

Aaron Lehmann: I agree somewhat with you about the Rotterdam Skin (the default ZMI Look and Feel). This is an example where getting a monolithic package works against you, as a custom-assembled application can avoid using it entirely.

That said, I'm sure everything is there for a reason. It'd be nice to have a more formal beginners documentation regarding exactly what everything in the ZMI does.

Aaron Lehmann: The ZMI is intended to be a way to manage all of one's configurations through the web. It's a philosophical holdover from Zope 2. We don't use it much in Z4M, but it does allow quite a lot of options for through the web control.

My biggest pet peeve with ZMI is that there's no way to log out of the application. I can successfully login using the credentials I provided when creating the instance, but there is no logout link anywhere. After a few tries at guessing what the logout URL might be, it turns out that it is:

http://localhost:8080/logout.html

That should come in handy.

Zope Object Database (ZODB)

As mentioned, Zope 3 stores data using the ZODB. From Wikipedia:

The Zope Object Database (ZODB) is an object-oriented database for transparently and persistently storing Python objects. It is included as part of the Zope web application server, but can also be used independently of Zope.

Essentially, this means data is read and written from the database transparently through the use of Python objects, which also means there is no need for SQL. Unfortunately, this also means data portability is somewhat affected, since it's not as easy to import or extract data from ZODB (at least, not apparently).