Out of the box, LLMs cannot access private company data such as internal documentation, customer records, product specifications, and HR processes. When employees ask about company HR policies, a standard LLM can only give generic responses. Likewise, when customers ask about specific products, it can only fall back on common patterns learned from public internet data.

Retrieval-Augmented Generation (RAG) solves this problem by giving AI systems access to specific documents and data.

Instead of depending solely on what the model learned during training, RAG lets the system look up relevant information in a particular document collection before generating a response.

What is Retrieval-Augmented Generation (RAG)?

Retrieval-augmented generation (RAG) is a generative AI technique that improves LLM responses by combining the model’s general world knowledge with custom or private knowledge. Combining these two sources of knowledge is helpful for several reasons:

- Providing LLMs with up-to-date information: LLM training data can be incomplete, and it becomes outdated over time. RAG lets you add new or updated knowledge without retraining the LLM.

- Reducing AI hallucinations: The more accurate and relevant the information in an LLM’s context, the less likely it is to invent facts or give answers that are out of context.

- Maintaining a dynamic knowledge base: Custom documents can be added, updated, or removed at any time, which keeps a RAG system current without retraining.

How Does RAG Work?

RAG works in two phases:

- Document preparation phase: runs when the system is first set up, and again whenever new documents or sources of information are added.

- Query processing phase: runs in real time whenever a user asks a question.

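This two-phase approach is powerful because it separates the computationally intensive document preparation phase from the latency-sensitive query phase. The expensive work of processing and embedding documents happens ahead of time, so answering a query only requires embedding one question and searching the prepared index.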

1. Document Preparation Phase

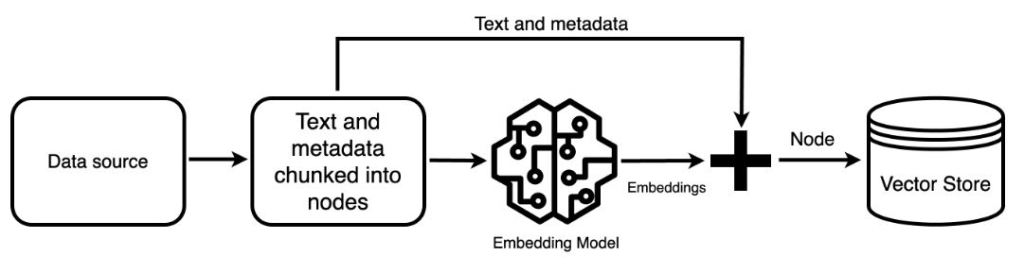

In this phase, the documents are first collected and converted into plain text, whether they are PDFs, Word documents, web pages, or database records.

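Once the text is extracted, the system splits it into smaller chunks. Chunking is necessary because whole documents are usually too long to process as single units: embedding models have input length limits, and retrieving an entire document would fill the prompt with mostly irrelevant text. A 100-page technical manual might be split into hundreds of smaller passages, each containing a few paragraphs.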
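The chunking step can be sketched as a simple word-based splitter. This is a minimal sketch, assuming chunk size is measured in words and that neighboring chunks share a fixed overlap; `chunk_text` and its default sizes are illustrative choices, not taken from any particular library. Real pipelines often split on tokens, sentences, or document structure instead.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words, so text near a chunk boundary
    also appears in the neighboring chunk with some surrounding context.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```

With a 500-word input, `chunk_size=200`, and `overlap=40`, the window advances 160 words at a time and produces three chunks. The overlap is a design choice: repeating a little text at each boundary means a passage cut in half still has some context in the neighboring chunk.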

The next step transforms these text chunks into numerical representations known as embeddings. These vectors of numbers encode the meaning of the text in a way that allows mathematical comparison. Similar concepts produce similar vectors, which lets the system find related content even when different words are used.
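“Mathematical comparison” usually means a similarity measure such as cosine similarity, which scores two vectors by the angle between them. The sketch below computes it in plain Python. The three short vectors are hypothetical values invented for illustration; real embedding models output hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional "embeddings", made up for this example only.
refund_policy = [0.8, 0.1, 0.3]
return_rules = [0.7, 0.2, 0.35]
office_hours = [0.1, 0.9, 0.0]

print(cosine_similarity(refund_policy, return_rules))
print(cosine_similarity(refund_policy, office_hours))
```

With these made-up numbers, the two related vectors score about 0.99 while the unrelated pair scores about 0.22, which is the kind of gap a retrieval system relies on.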

These embeddings, along with the original text chunks and their metadata (such as source and date), are then stored in a specialized vector database. This database is optimized for finding similar vectors: it indexes the embeddings in a way that allows rapid similarity searches across millions of chunks.
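What a vector database stores can be sketched with a tiny in-memory class. This `InMemoryVectorStore` is hypothetical and assumes the embeddings have already been computed. It normalizes each vector on insert so that cosine similarity reduces to a dot product, and it searches by brute force. That is fine for a few thousand chunks, but real vector databases use approximate nearest-neighbor indexes to scale to millions.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


def _normalize(vector: list[float]) -> list[float]:
    """Scale a vector to unit length so cosine similarity becomes a dot product."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        raise ValueError("cannot store or query a zero vector")
    return [x / norm for x in vector]


@dataclass
class StoredChunk:
    embedding: list[float]  # unit-length vector
    text: str  # original chunk text, later passed to the LLM
    metadata: dict = field(default_factory=dict)  # e.g. source, date


class InMemoryVectorStore:
    """Brute-force stand-in for a vector database (illustration only)."""

    def __init__(self) -> None:
        self._chunks: list[StoredChunk] = []

    def add(self, embedding: list[float], text: str, metadata: Optional[dict] = None) -> None:
        self._chunks.append(StoredChunk(_normalize(embedding), text, metadata or {}))

    def search(self, query_embedding: list[float], k: int = 3) -> list[tuple[float, StoredChunk]]:
        """Return up to k (score, chunk) pairs, most similar first."""
        query = _normalize(query_embedding)
        scored = [
            (sum(q * c for q, c in zip(query, chunk.embedding)), chunk)
            for chunk in self._chunks
        ]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]
```

Keeping the original text and metadata next to each vector is what lets the query phase hand readable passages, not numbers, to the language model.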

2. Query Processing Phase

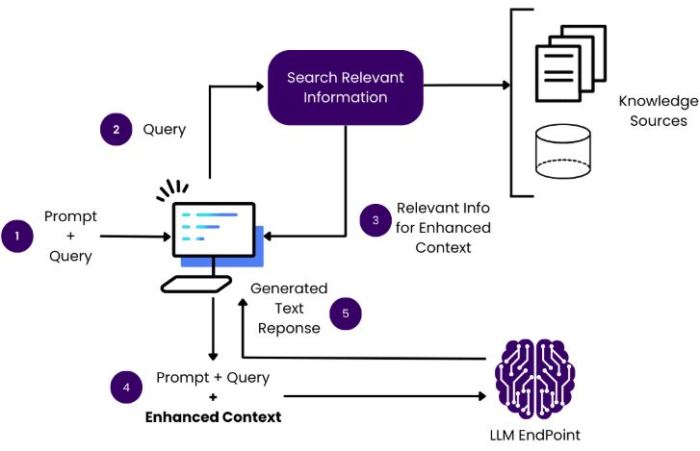

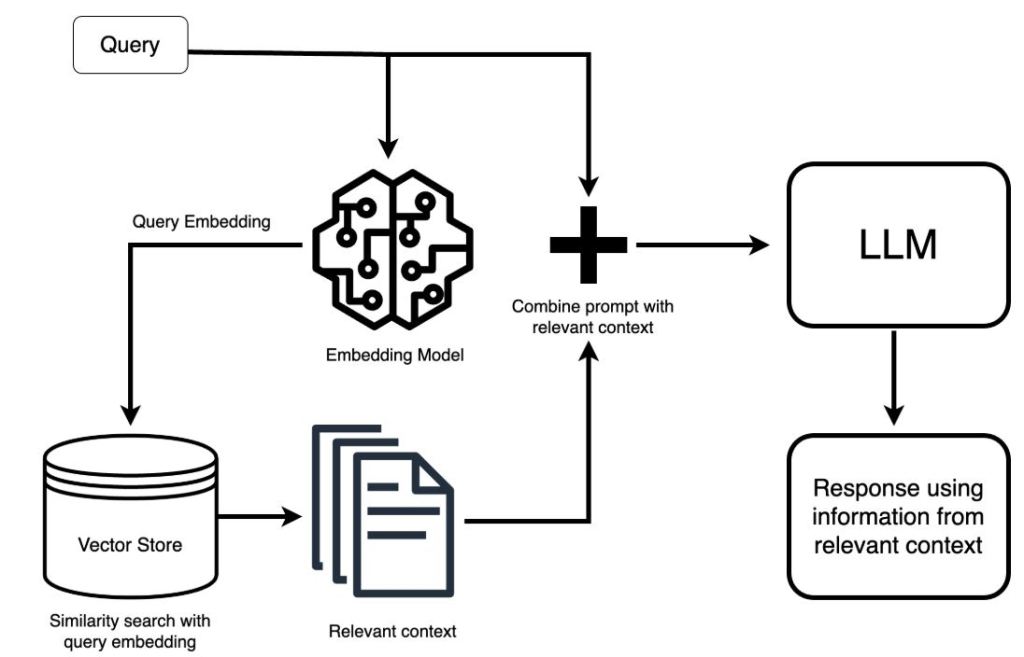

Query processing starts when the user enters a question. That question first goes through the same embedding process as the document chunks.

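For example, the question “What is our refund policy for electronics?” is converted into its own numerical vector using the same embedding model that processed the documents. Using the same model matters, because vectors produced by different embedding models are not comparable.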

With the query now in vector form, the system searches the vector database for the most similar document chunks. This search is fast because it compares numbers rather than text and relies on specialized indexing algorithms, typically approximate nearest-neighbor search. Even when the database contains millions of chunks, these algorithms can find the most relevant ones in milliseconds. Typically, the system retrieves the top 3 to 10 chunks based on their similarity scores.
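Selecting the top results from a set of similarity scores can be sketched with Python’s standard `heapq` module. The chunk IDs and scores below are hypothetical. A real vector database performs this selection internally, usually over an approximate nearest-neighbor index rather than a full list of scores.

```python
import heapq


def top_k_chunks(scores: dict[str, float], k: int = 5) -> list[tuple[str, float]]:
    """Return the k (chunk_id, score) pairs with the highest similarity scores.

    heapq.nlargest runs in O(n log k), so it avoids sorting every score
    when only a handful of results are needed.
    """
    return heapq.nlargest(k, scores.items(), key=lambda item: item[1])


# Hypothetical similarity scores between one query and five stored chunks.
scores = {
    "refund-policy#2": 0.91,
    "warranty#1": 0.78,
    "shipping#4": 0.42,
    "refund-policy#3": 0.88,
    "careers#1": 0.05,
}
print(top_k_chunks(scores, k=3))
# [('refund-policy#2', 0.91), ('refund-policy#3', 0.88), ('warranty#1', 0.78)]
```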

These retrieved chunks then need to be prepared for the language model. The system assembles them into a context, often ranking them by relevance and sometimes filtering them by metadata or business rules. For example, more recent documents might receive priority over older ones, and some sources might be treated as more authoritative than others.
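The ranking and filtering step can be sketched as a re-scoring function. Everything specific here is a hypothetical assumption for illustration: the `RetrievedChunk` fields, the per-source authority weights in `SOURCE_WEIGHTS`, the linear recency bonus that fades over a year, and the `blocked_sources` rule. Real systems tune such rules to their own data.

```python
from collections.abc import Container
from dataclasses import dataclass
from datetime import date


@dataclass
class RetrievedChunk:
    text: str
    similarity: float  # score returned by the vector search
    source: str  # e.g. "policy_handbook", "forum_post"
    updated: date  # last modification date of the source document


# Hypothetical business rules: authority weight per source type.
SOURCE_WEIGHTS = {"policy_handbook": 1.0, "faq": 0.9, "forum_post": 0.6}
MAX_RECENCY_BONUS = 0.1  # bonus for a document updated today, fading to 0 over a year


def rerank(
    chunks: list[RetrievedChunk],
    today: date,
    blocked_sources: Container[str] = (),
) -> list[RetrievedChunk]:
    """Drop chunks from blocked sources, then sort the rest by an adjusted score."""

    def adjusted_score(chunk: RetrievedChunk) -> float:
        age_days = (today - chunk.updated).days
        recency_bonus = MAX_RECENCY_BONUS * max(0.0, 1 - age_days / 365)
        authority = SOURCE_WEIGHTS.get(chunk.source, 0.5)  # unknown sources get 0.5
        return chunk.similarity * authority + recency_bonus

    allowed = [c for c in chunks if c.source not in blocked_sources]
    return sorted(allowed, key=adjusted_score, reverse=True)
```

With these weights, a recent policy-handbook chunk can outrank an older forum post even if the forum post had a slightly higher raw similarity score.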

The language model now receives both the user’s original question and the relevant context.

The prompt typically contains:

- The retrieved context chunks

- The user’s question

- Instructions to answer based on the provided context

- Guidelines for handling questions the context does not answer
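A prompt containing those four parts can be sketched as a simple template function. The wording of the instructions and the `[n]` numbering of chunks are illustrative assumptions, not a required format; numbering the chunks just makes it easy to ask the model for citations later.

```python
def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Assemble a RAG prompt from retrieved chunks and the user's question."""
    # Number each chunk so the model (and post-processing) can refer to it.
    context = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(context_chunks, start=1)
    )
    return (
        # Instruction to answer from the provided context.
        "Answer the question using only the context below, citing chunks as [n].\n"
        # Guideline for information that is not in the context.
        "If the context does not contain the answer, say that you don't know "
        "instead of guessing.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )
```

For example, `build_prompt("What is our refund policy for electronics?", ["Electronics may be returned within 30 days."])` would place that (hypothetical) policy text under `[1]` in the context section, followed by the question.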

The language model processes this augmented prompt and generates a response. Since it has specific, relevant information in its context, the response can be accurate and detailed rather than generic.

Finally, the response often goes through post-processing before reaching the user. This may involve adding citations that link back to source documents, formatting the response for readability, and checking that the answer actually addresses the question.
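Adding citations can be sketched as a small post-processing function. This assumes the model was instructed to cite context chunks with markers like `[1]` and `[2]`, and that the application kept a list mapping each chunk number to its source document; `add_citations` is a hypothetical helper, not part of any framework.

```python
import re


def add_citations(answer: str, sources: list[str]) -> str:
    """Append the source documents referenced by [n] markers in the answer.

    `sources[n - 1]` is assumed to be the document that chunk [n] came from.
    Markers that do not match a known chunk are not listed.
    """
    cited = sorted({int(n) for n in re.findall(r"\[(\d+)\]", answer)})
    lines = [f"[{n}] {sources[n - 1]}" for n in cited if 1 <= n <= len(sources)]
    if not lines:
        return answer  # nothing to cite
    return answer + "\n\nSources:\n" + "\n".join(lines)
```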

Embeddings – The Numerical Language of AI

Someone might ask about a computer problem in various ways. They could say, “laptop won’t start,” “computer fails to boot,” “system not powering on,” or “PC is dead.” These phrases share almost no common words, yet they all describe the same issue.

A keyword-based system would treat these as completely different queries and miss troubleshooting guides that use different terminology.

Embeddings solve this by capturing semantic meaning rather than surface-level word matches, so all four phrasings end up as nearby vectors.
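The keyword problem is easy to check. The sketch below measures word overlap between the four phrases using Jaccard similarity on lowercase word sets, a simple stand-in for keyword matching. Every pair scores zero, so a pure keyword matcher sees no connection between them, whereas an embedding model would be expected to place them close together.

```python
from itertools import combinations


def keyword_overlap(a: str, b: str) -> float:
    """Jaccard similarity of the two phrases' lowercase word sets."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    union = words_a | words_b
    if not union:
        return 0.0
    return len(words_a & words_b) / len(union)


phrases = [
    "laptop won't start",
    "computer fails to boot",
    "system not powering on",
    "PC is dead",
]

for a, b in combinations(phrases, 2):
    print(f"{a!r} vs {b!r}: {keyword_overlap(a, b):.2f}")  # all six pairs print 0.00
```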

Embedding Models vs. Large Language Models (LLMs) in RAG

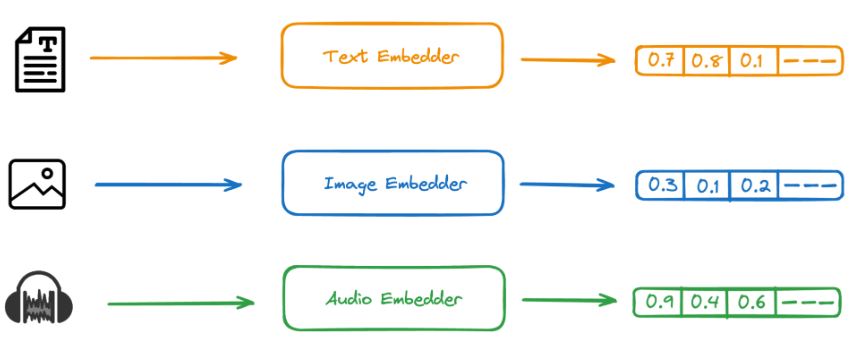

An embedding model converts non-numeric data, such as words, sentences, or images, into dense numerical vectors that capture the semantic meaning of the input.

In contrast, an LLM is a much larger and more sophisticated deep-learning model that generates human-like text and performs complex language tasks. In a RAG system, it does not consume the embedding model’s vectors; it receives the retrieved text chunks as part of its prompt.

This specialization is why RAG systems use two separate models. The embedding model efficiently converts all the documents and queries into vectors, enabling fast similarity search. The LLM then takes the retrieved relevant documents and generates intelligent, contextual responses.
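This division of labor can be sketched as a function that receives the two models as plain callables. It is a minimal sketch under stated assumptions: `embed`, `search`, and `generate` are hypothetical placeholders for whatever embedding model, vector database client, and LLM API a system actually uses. Note that the vectors never reach the LLM; only the retrieved text does.

```python
from typing import Callable

Vector = list[float]


def answer_question(
    question: str,
    embed: Callable[[str], Vector],  # embedding model: text -> vector
    search: Callable[[Vector, int], list[str]],  # vector DB lookup: vector -> chunk texts
    generate: Callable[[str], str],  # LLM: prompt text -> answer text
    k: int = 3,
) -> str:
    """Wire the embedding model and the LLM together for one RAG query."""
    query_vector = embed(question)  # same embedding model used for the documents
    chunks = search(query_vector, k)  # similarity search works on vectors only
    context = "\n\n".join(chunks)
    prompt = f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    return generate(prompt)  # the LLM only ever sees text
```

Passing the models in as callables keeps the pipeline independent of any particular vendor, so either model can be swapped without changing the retrieval logic.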

Final Point

Retrieval-Augmented Generation represents a practical solution to the very real limitations of LLMs in business applications. RAG combines the power of semantic search through embeddings with the generation capabilities of LLMs. This combination enables AI systems to provide accurate and specific answers based on the organization’s own documents and data.