We often hear people ask how to install Oracle Service Bus, and its Eclipse-based integrated development environment, on 64-bit systems. The confusion comes from the apparent unavailability of 64-bit installers for WebLogic Server and Oracle Enterprise Pack for Eclipse, which leads to the perception that the IDE can only be run in 32-bit mode.

In this post, we will attempt to quell the confusion by showing you how to easily install both OSB 11.1.1.5 and the IDE in 64-bit mode on 64-bit Oracle Linux.

Getting ready

First, you will need to download the necessary files. You will need the following:

- A 64-bit version of the JDK (we recommend 1.6.0_26): jdk-6u26-linux-x64.bin
- The 64-bit version of OEPE (note that this must be this exact version): oepe-helios-all-in-one-11.1.1.7.2.201103302044-linux-gtk-x86_64.zip
- The ‘generic’ version of the Oracle Service Bus 11.1.1.5 installer: ofm_osb_generic_11.1.1.5.0_disk1_1of1.zip
- The ‘generic’ version of WebLogic Server 10.3.5: wls1035_generic.jar
- And the Linux version of the Repository Creation Utility: ofm_rcu_linux_11.1.1.5.0_disk1_1of1.zip
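Before going any further, it can save time to confirm that all five files really are in your download directory. The following is a minimal sketch, assuming a POSIX shell such as bash; the function name missing_downloads and the ~/Downloads path in the usage comment are our own illustration and not part of any Oracle tooling.

```shell
# The five file names from the list above, one per line.
REQUIRED_FILES="jdk-6u26-linux-x64.bin
oepe-helios-all-in-one-11.1.1.7.2.201103302044-linux-gtk-x86_64.zip
ofm_osb_generic_11.1.1.5.0_disk1_1of1.zip
wls1035_generic.jar
ofm_rcu_linux_11.1.1.5.0_disk1_1of1.zip"

# Print the name of every required file that is NOT present in the
# given directory (hypothetical helper, for illustration only).
missing_downloads() {
    dir="$1"
    for f in $REQUIRED_FILES; do
        [ -f "$dir/$f" ] || echo "$f"
    done
}

# Usage (the path is an assumption, adjust to where you saved the files):
#   missing_downloads ~/Downloads
```

If the function prints nothing, everything is in place. Any file name it does print still needs to be downloaded.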

The key thing here is to make sure you get the correct version of the Oracle Enterprise Pack for Eclipse (OEPE) installer. You need to get the exact version mentioned here, not an older version like 11.1.1.5, not a newer version like 11.1.1.8, but the exact version we have listed. You can find the OEPE download page on Oracle Technology Network here. Yes, we can understand your confusion 🙂

Update: For OSB 11.1.1.7, you need OEPE 11.1.1.8. You can get it from here:

Prepare your system and install a database

We have covered this before in other posts, so we won't repeat all the details here. You will need to prepare your system by making sure it is up to date, installing the oracle-validated package (using yum install oracle-validated) and then installing a suitable database. On our system, we are using Oracle Database 11gR2 (11.2.0.1) for 64-bit Linux. You will also want to make sure your database is configured with adequate sessions and processes.

If you need more information, this is covered in this older post.

Install Java

Our first step is to install the Java Development Kit. We recommend that you use 1.6.0_26. We have seen some issues with later releases of the JDK and this version of OSB. Install the JDK using the following command:

sh ./path_to_download/jdk-6u26-linux-x64.bin

When this is complete, you need to edit your .profile to add this JDK to your PATH variable. You will need to add a line like this:

PATH="$HOME/jdk1.6.0_26/bin:$PATH"
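If you would rather script that edit than open an editor, something along these lines should work. This is only a sketch, assuming a POSIX shell; add_jdk_to_profile is a hypothetical helper name of ours, and it assumes the JDK was unpacked into your home directory as in the line above.

```shell
# Append the PATH line to a profile file, but only if that exact
# line is not already there (so running it twice is harmless).
add_jdk_to_profile() {
    profile="$1"     # e.g. "$HOME/.profile"
    jdk_home="$2"    # e.g. '$HOME/jdk1.6.0_26' (single quotes keep $HOME literal)
    line="PATH=\"$jdk_home/bin:\$PATH\""
    grep -qxF "$line" "$profile" 2>/dev/null || echo "$line" >> "$profile"
}

# Usage:
#   add_jdk_to_profile "$HOME/.profile" '$HOME/jdk1.6.0_26'
```

Passing the JDK location in single quotes writes the literal text $HOME into the file, so the line matches the one shown above.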

You should then source the .profile in your current shell, or just close it and open a new shell. Then use the following commands to confirm you are finding the correct version of the JDK:

which java
java -version

The output should show you that you are using the 64-bit version of JDK 1.6.0_26 that you just installed. If not, go back and check that you set your path correctly.
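If you want to automate that check, the sketch below inspects the text printed by java -version. It assumes, as is the case for the Sun/Oracle HotSpot JDKs we have used, that a 64-bit JVM includes the string 64-Bit in its version banner and that the banner goes to standard error. The function name check_java_version_output is our own.

```shell
# Check the captured output of "java -version" for the expected
# version string and for the 64-Bit marker (hypothetical helper).
check_java_version_output() {
    output="$1"      # text printed by java -version
    expected="$2"    # e.g. 1.6.0_26
    case "$output" in
        *"\"$expected\""*) ;;
        *) echo "unexpected JDK version"; return 1 ;;
    esac
    case "$output" in
        *64-Bit*) echo "ok" ;;
        *) echo "not a 64-bit JVM"; return 1 ;;
    esac
}

# Usage (java -version writes to stderr, hence the 2>&1):
#   check_java_version_output "$(java -version 2>&1)" 1.6.0_26
```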

Prepare your database

Next we need to prepare our database by running the Repository Creation Utility (RCU). Unzip the rcu file you downloaded into a temporary directory, then change into this directory, then the rcuHome/bin directory and run rcu:

cd /path_to_unzipped_rcu
cd rcuHome/bin
./rcu

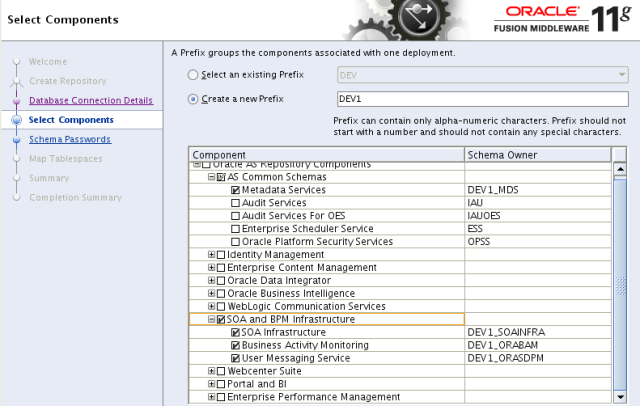

Again, we have been through the whole RCU process in earlier posts, like the one mentioned above, so we will just present highlights here. When you get to the Select Components screen, as shown below, you just need to select the category called SOA and BPM infrastructure. This will select all of the necessary options for Service Bus.

Install WebLogic Server

Now we need to install WebLogic Server. OSB 11.1.1.5 works with WebLogic Server 10.3.5, and we want to use the ‘generic’ version so that we will get a 64-bit install. The generic version will produce a 64-bit runtime if you install it with a 64-bit JDK or a 32-bit runtime if you install it with a 32-bit JDK. So be careful you have the right JDK on your path! You can start the installation using this command:

java -jar wls1035_generic.jar

Update: You should also use the -d64 argument to make ‘extra sure’ that you get a 64-bit WebLogic Server. Personally I have only seen this needed on Solaris; however, Jian tells me that you can run into problems trying to deploy web services developed in eclipse to WebLogic Server if you don’t use this switch.
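Putting the command and the -d64 switch together, the launch could be wrapped as shown here. This is a sketch rather than a required step; run_wls_installer is a name we made up, and it assumes the java on your PATH is the 64-bit JDK 6 installed earlier (the -d64 switch was removed from much later Java releases).

```shell
# Launch the generic WebLogic Server installer with a 64-bit JVM
# (hypothetical wrapper, for illustration only).
run_wls_installer() {
    installer="${1:-wls1035_generic.jar}"
    if [ ! -f "$installer" ]; then
        echo "installer not found: $installer" >&2
        return 1
    fi
    # -d64 asks the JVM to run in the 64-bit data model.
    java -d64 -jar "$installer"
}

# Usage:
#   run_wls_installer /path_to_download/wls1035_generic.jar
```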

Again, we have covered this a number of times before, so we will just review the highlights. Click on Next on the first screen, then select your middleware home, then click on Next, bypass the email notification screens (or fill them in if you want to) and click on Next, choose a typical install and click on Next, choose your 64-bit JDK and click on Next, check the directories and click on Next, review the summary and click on Next. WebLogic Server will now be installed. When it is done, deselect the box to run quickstart and then click on Done.

Install Oracle Enterprise Pack for Eclipse

Next we want to install the OEPE using the full bundled installer that we downloaded earlier. Check once more that you have the exact right version, then we will go ahead and install it.

Create a directory inside your newly created middleware home to hold OEPE, for example using the command:

mkdir ~/fmwhome/oepe

Now change into that directory and unzip the OEPE bundle, for example:

unzip ~/path_to/oepe-helios-all-in-one-11.1.1.7.2.201103302044-linux-gtk-x86_64.zip
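Since the exact OEPE version matters so much, you may want to guard the unzip step with a file name check. The following sketch does that for the OSB 11.1.1.5 case described in this post (for OSB 11.1.1.7 you would change the expected name). The function install_oepe and its arguments are our own illustration.

```shell
# The exact bundle this post calls for (OSB 11.1.1.5).
EXPECTED_OEPE="oepe-helios-all-in-one-11.1.1.7.2.201103302044-linux-gtk-x86_64.zip"

# Refuse to unpack anything other than the expected bundle, then
# unzip it into an oepe directory inside the middleware home.
install_oepe() {
    zip_path="$1"    # where you downloaded the OEPE bundle
    mw_home="$2"     # your middleware home, e.g. ~/fmwhome
    if [ "$(basename "$zip_path")" != "$EXPECTED_OEPE" ]; then
        echo "unexpected OEPE bundle: $(basename "$zip_path")" >&2
        return 1
    fi
    mkdir -p "$mw_home/oepe" && unzip -q "$zip_path" -d "$mw_home/oepe"
}

# Usage:
#   install_oepe ~/path_to/$EXPECTED_OEPE ~/fmwhome
```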

Install Oracle Service Bus

Now we have all the prerequisites in place and we are ready to install the OSB itself. Make a temporary directory to hold the OSB installer and unzip the OSB installer that you downloaded into this new directory. Now change into this directory and run the installer, for example using the following commands:

mkdir osb
cd osb
unzip ../ofm_osb_generic_11.1.1.5.0_disk1_1of1.zip
cd Disk1
./runInstaller

When you are prompted, enter your JDK location, then the installer will start. If you are prompted to create the Oracle Inventory directory and/or to run some scripts as root, go ahead and do this now.

Proceed through the installer as follows: On the first page, click on Next, skip the software updates section and click on Next, choose your Oracle middleware home and click on Next, choose a custom installation and click on Next, deselect the samples (we do not want to install them) and click on Next. Now you will see that it has found your WebLogic Server and OEPE install locations. If you installed the wrong version of OEPE, you would see an empty box here, and you would need to go back, get the right version of OEPE installed and try again. Otherwise, click on Next and then Install to continue.

Now OSB will be installed. When it is done, click on Next then Finish.

Setting up an OSB domain

Now you are ready to create your OSB domain. This is done using the domain creation wizard. Again we have covered this before, so just the highlights. Start the domain creation wizard with the following command:

/path_to_middleware_home/oracle_common/common/bin/config.sh

Follow through the steps, which should be fairly self-explanatory. Select an OSB for Developers installation and add in the Enterprise Manager. You can just take the defaults for everything else for the purposes of this post. This will create a new single server OSB domain for you in /path_to_middleware_home/user_projects/domains/base_domain.

Start up your OSB server

Now we can start the OSB server using the following command:

/path_to_middleware_home/user_projects/domains/base_domain/startWebLogic.sh

When the server is up and running, you can access its WebLogic Server console at http://yourserver:7001/console and the OSB console at http://yourserver:7001/sbconsole. Try these to make sure they work.
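If you are working over ssh and do not have a browser handy, a quick check with curl can tell you whether the two consoles are answering. This is a rough sketch that assumes curl is installed and that the server listens on port 7001 as above; console_urls and check_consoles are hypothetical helper names.

```shell
# Print the two console URLs for a given host (port defaults to 7001).
console_urls() {
    host="$1"
    port="${2:-7001}"
    echo "http://$host:$port/console"
    echo "http://$host:$port/sbconsole"
}

# Request each console and print the HTTP status code that comes back.
check_consoles() {
    for url in $(console_urls "$@"); do
        code=$(curl -s -o /dev/null -w '%{http_code}' "$url")
        echo "$url -> HTTP $code"
    done
}

# Usage:
#   check_consoles yourserver
```

A 2xx or 3xx status (the consoles usually redirect to a login page) suggests the application is deployed and responding. A status of 000 means curl could not connect at all.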

Set up the IDE

Now we are ready to set up OEPE. Start it up using the following command:

/path_to_middleware_home/oepe/eclipse &

Once it starts up, open the Oracle Service Bus perspective by choosing Window then Open Perspective then Other from the main menu bar. In the dialog box, choose Oracle Service Bus and click on OK.

In the Servers window, in the bottom pane, right click and select New then Server from the pop up menu.

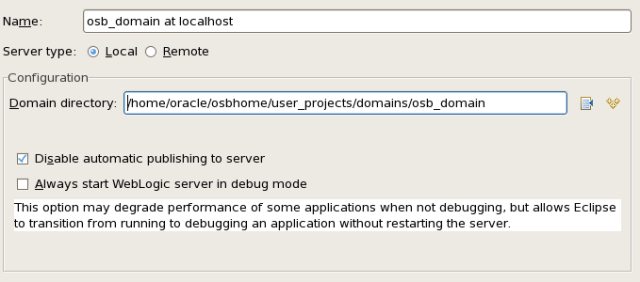

In the dialog box (shown above) select Oracle WebLogic Server 11gR1 PatchSet 4 as the server type, and then enter the hostname, a name for your server (this is just the name it will be known by in eclipse) and then find the correct runtime environment. Then click on Next.

On this page (above) you need to enter the domain directory. Note that yours will probably be different to the one shown in the example. We recommend that you leave the Disable automatic publishing to server option selected, otherwise you will have to wait for eclipse to republish every time you touch anything, which can get very tiring.

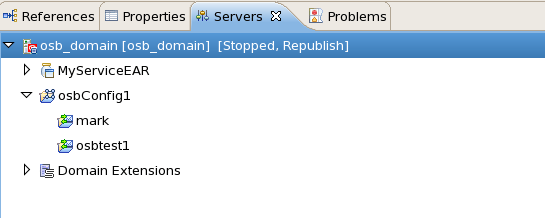

You should now see your shiny new 64-bit OSB server in eclipse:

Yours obviously won't have any projects deployed on it yet, but you are now all ready to go and define some and deploy them.

So, there you have a 64-bit version of Oracle Service Bus and a 64-bit version of its IDE running on eclipse on 64-bit Linux. A special thanks to Jian Liang for his assistance with this process.

http://www.oracle.com/technetwork/developer-tools/eclipse/downloads/oepe-archive-1716547.html
