Prefer to watch a video? Watch this content on YouTube instead

To start up a database, you can use this command, just replace the “xxxxxx”s with proper passwords. Note that the volume is needed so data will be persisted across container restarts, if you leave that out, you’ll get a new empty database every time you restart the container:

docker run -d \

-p 1521:1522 \

-p 1522:1522 \

-p 8443:8443 \

-p 27017:27017 \

-e WALLET_PASSWORD=xxxxxx \

-e ADMIN_PASSWORD=xxxxxx \

--cap-add SYS_ADMIN \

--device /dev/fuse \

--volume adb-free-volume:/data \

--name adb-free \

container-registry.oracle.com/database/adb-free:latest-23aiThe ports listed are for the following access methods:

- 1521 TLS

- 1522 mTLS

- 8443 HTTPS port for ORDS, APEX and Database Actions

- 27017 MongoDB API

Once the database has started up, you can access the web UI using these URLs:

- APEX https://localhost:8443/ords/apex

- Database Actions https://localhost:8443/ords/sql-developer

Here’s what the Database Actions login page looks like, you can log in with the user “admin” and the password you specified:

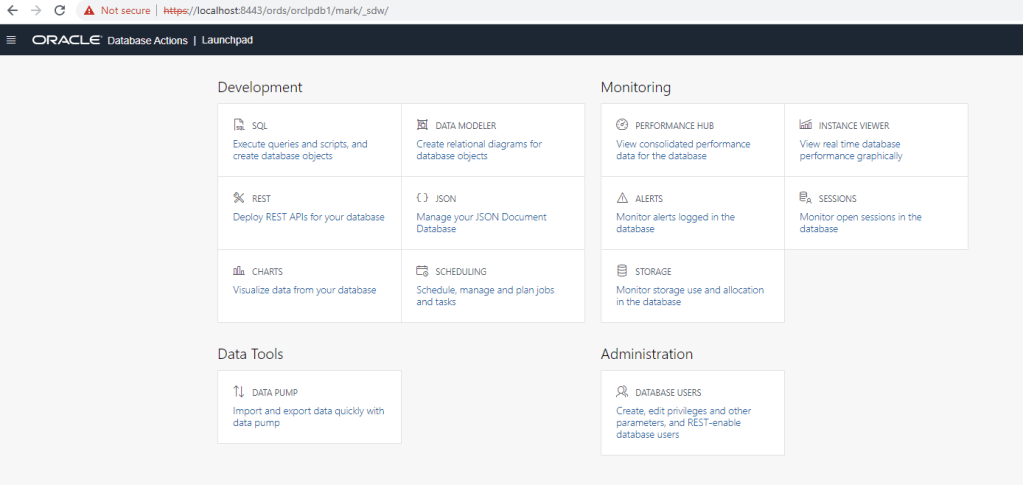

When you sign in, you will see the launchpad, from where you can access various tools:

For example, you could open the SQL tool and try executing a statement:

You may also want to connect to your database using other tools like Oracle SQL Developer (which is a Visual Studio Code extension) or SQLcl (which is a command line tool), or from a program. To do this, you will probably want to grab the wallet, read on!

Connecting the the database

If you want to use mTLS, you can get the wallet by copying it from the image using this command, just provide the desired destination path in the last argument:

docker cp adb-free:/u01/app/oracle/wallets/tls_wallet /path/to/walletNote that the address will be ‘localhost’ in the tnsnames.ora, so you will need to update that if necessary.

To use the wallet, set your TNS_ADMIN environment variable:

export TNS_ADMIN=/path/to/walletThe following TNS aliases are provided, for mTLS:

- myatp_medium

- myatp_high

- myatp_low

- myatp_tp

- myatp_tpurgent

And for TLS:

- myatp_medium_tls

- myatp_high_tls

- myatp_low_tls

- myatp_tp_tls

- myatp_tpurgent_tls

Here’s an example of connecting with SQLcl:

$ TNS_ADMIN=/path/to/wallet sql admin/xxxxxx@myatp_high

SQLcl: Release 24.1 Production on Fri Apr 25 10:41:46 2025

Copyright (c) 1982, 2025, Oracle. All rights reserved.

Last Successful login time: Fri Apr 25 2025 10:41:48 -04:00

Connected to:

Oracle Database 23ai Enterprise Edition Release 23.0.0.0.0 - Production

Version 23.6.0.24.11

SQL> select sysdate;

SYSDATE

____________

25-APR-25Here’s an example of connecting from SQL Developer. When you create the connection, just choose the location of the wallet (tnsnames.ora file) and it will let you select the TNS name to connect to:

Enjoy!

]]>Let’s assume you have a Kubernetes cluster running and you have configured kubectl access to the cluster.

The first step is to install Cert Manager, which is a pre-requisite for the Oracle Database Operator:

kubectl apply -f https://github.com/jetstack/cert-manager/releases/latest/download/cert-manager.yamlIt will take probably less than a minute to start up. You can check on it with this command:

kubectl -n cert-manager get pods

NAME READY STATUS RESTARTS AGE

cert-manager-8f49b54c8-xxd5v 1/1 Running 0 7d5h

cert-manager-cainjector-678548868-x5ljp 1/1 Running 0 7d5h

cert-manager-webhook-898d9d956-57m76 1/1 Running 0 7d5hNext, install the Oracle Database Operator itself:

kubectl apply -f https://raw.githubusercontent.com/oracle/oracle-database-operator/main/oracle-database-operator.yaml

That will start up pretty quickly too, and you can check with this command:

kubectl -n oracle-database-operator-system get pods Let’s create a Single Instance Database. The Oracle Database Operator will let you create other types of databases too, including sharded and multitenant databases, and to manage cloud database instances like Autonomous Database and Database Cloud Service. But today, I’m going to stick with a simple single instance.

Here’s the Kubernetes YAML file to describe the database we want, I called this sidb.yaml:

apiVersion: database.oracle.com/v1alpha1

kind: SingleInstanceDatabase

metadata:

name: sidb-sample

namespace: default

spec:

sid: ORCL1

edition: enterprise

adminPassword:

secretName: db-admin-secret

secretKey: oracle_pwd

keepSecret: true

charset: AL32UTF8

pdbName: orclpdb1

flashBack: false

archiveLog: false

forceLog: false

enableTCPS: false

tcpsCertRenewInterval: 8760h

image:

pullFrom: container-registry.oracle.com/database/enterprise:latest

pullSecrets: oracle-container-registry-secret

persistence:

size: 100Gi

storageClass: "oci-bv"

accessMode: "ReadWriteOnce"

loadBalancer: false

serviceAccountName: default

replicas: 1If you have not before, head over to Oracle Container Registry and go to the Database group, and accept the license agreement for the Enterprise option. You’ll also want to create a Kubernetes secret with your credentials so it can pull the image:

kubectl create secret docker-registry oracle-container-registry-secret \

--docker-server=container-registry.oracle.com \

--docker-username='[email protected]' \

--docker-password='whatever' \

--docker-email='[email protected]'You will want to change the storageClass to match your cluster. I am using Oracle Container Engine for Kuberentes in this example, so I used the “oci-bv” storage class. If you are using a different flavor of Kubernetes you should check what storage classes are available and use one of them.

This YAML describes a databse with the SID ORCL1 and a PDB called orclpdb1. It will get the password for sys, pdbadmin, etc., from a Kubernetes secret – so let’s create that:

kubectl create secret generic db-admin-secret --from-literal=oracle_pwd=Welcome12345Now we can create the database by applying that YAML file to our cluster:

kubectl apply -f sidb.yamlIt will take few minutes to start up fully – it has to pull the image (which took 3m30s on my cluster, for the “enterprise” image which is the biggest one), create the database instance the first time (mine took 8m), and apply any patches that are required (just over 1m for me). Subsequent startups will be much faster of course (I stopped it by scaling to zero replicas, then started it again by scaling back to one replica and it reached ready/healthy status in about 90s). For reference, my cluster had two nodes each with one OCPU and 16 GB of RAM. You can check on the progress with this command:

kubectl get singleinstancedatabases -o wide -w

As the database starts up, you will see the connection string and other fields populate in the output.

Now, let’s add Oracle REST Data Services. Here’s a Kubernetes YAML file that describes what we want, I called this ords.yaml:

apiVersion: database.oracle.com/v1alpha1

kind: OracleRestDataService

metadata:

name: ords-sample

namespace: default

spec:

databaseRef: "sidb-sample"

adminPassword:

secretName: db-admin-secret

ordsPassword:

secretName: ords-secret

image:

pullFrom: container-registry.oracle.com/database/ords:21.4.2-gh

restEnableSchemas:

- schemaName: mark

enable: true

urlMapping: markYou’ll need to create a secret to hold the password, for example:

kubectl create secret generic ords-secret --from-literal=oracle_pwd=Welcome12345You can apply that to your cluster with this command:

kubectl apply -f ords.yamlAnd we can check on progress with this command:

kubectl get oraclerestdataservice -wAs it becomes ready, you will see the URLs for the Database API REST endpoint and for Database Actions. Mine took about 2m to reach ready/healthy status.

If your nodes are on a private network, the quickest way to access the REST APIs and Database Actions is to use a port forward. You can get the name of the ORDS pod and start a port forwarding session with commands like this:

kubectl get pods

kubectl port-forward pod/ords-sample-g4wc7 8443Now you can hit the Database API REST endpoint with curl:

curl -k https://localhost:8443/ords/orclpdb1/_/db-api/stable/

{"links":[{"rel":"self","href":"https://localhost:8443/ords/orclpdb1/_/db-api/stable/"},{"rel":"describedby","href":"https://localhost:8443/ords/orclpdb1/_/db-api/stable/metadata-catalog/"}]}And you can access Database Actions at this address: http://localhost:8443/ords/sql-developer

On the login page, enter ORCLPDB1 for the PDB Name and mark as the user. Then on the password page enter Welcome12345, and you are good to go!

While we are at it, let’s also get SQLcl access to the database.

Again, we can use port forwarding to access the database from outside the cluster:

kubectl port-forward svc/sidb-sample 1521 &And then connect from SQLcl (if you have not checked out SQLcl yet, you should, it’s got cool features like command line completion and history):

sql mark/Welcome12345@//localhost:1521/orclpdb1

SQLcl: Release 22.2 Production on Mon May 01 14:32:57 2023

Copyright (c) 1982, 2023, Oracle. All rights reserved.

Last Successful login time: Mon May 01 2023 14:32:56 -04:00

Connected to:

Oracle Database 21c Enterprise Edition Release 21.0.0.0.0 - Production

Version 21.3.0.0.0

SQL> select * from dual;

DUMMY

________

X

SQL>There you go! That was super quick and easy! Enjoy!

]]>We also published updated source and sink Kafka connectors for Transactional Event Queues – but I’ll cover those in a separate post.

Let’s build a Kafka producer and consumer using the updated Kafka-compatible APIs.

Prepare the database

The first thing we want to do is start up the Oracle 23c Free Database. This is very easy to do in a container using a command like this:

docker run --name free23c -d -p 1521:1521 -e ORACLE_PWD=Welcome12345 container-registry.oracle.com/database/free:latestThis will pull the image and start up the database with a listener on port 1521. It will also create a pluggable database (a database container) called “FREEPDB1” and will set the admin passwords to the password you specified on this command.

You can tail the logs to see when the database is ready to use:

docker logs -f free23c

(look for this message...)

#########################

DATABASE IS READY TO USE!

#########################

Also, grab the IP address of the container, we’ll need that to connect to the database:

docker inspect free23c | grep IPA

"SecondaryIPAddresses": null,

"IPAddress": "172.17.0.2",

"IPAMConfig": null,

"IPAddress": "172.17.0.2",

To set up the necessary permissions, you’ll need to connect to the database with a client. If you don’t have one already, I’d recommend trying the new SQLcl CLI which you can download here. Start it up and connect to the database like this (note that your IP address and password may be different):

sql sys/Welcome12345@//172.17.0.2:1521/freepdb1 as sysdba

SQLcl: Release 22.2 Production on Tue Apr 11 12:36:24 2023

Copyright (c) 1982, 2023, Oracle. All rights reserved.

Connected to:

Oracle Database 23c Free, Release 23.0.0.0.0 - Developer-Release

Version 23.2.0.0.0

SQL>

Now, run these commands to create a user called “mark” and give it the necessary privileges:

SQL> create user mark identified by Welcome12345;

User MARK created.

SQL> grant resource, connect, unlimited tablespace to mark;

Grant succeeded.

SQL> grant execute on dbms_aq to mark;

Grant succeeded.

SQL> grant execute on dbms_aqadm to mark;

Grant succeeded.

SQL> grant execute on dbms_aqin to mark;

Grant succeeded.

SQL> grant execute on dbms_aqjms_internal to mark;

Grant succeeded.

SQL> grant execute on dbms_teqk to mark;

Grant succeeded.

SQL> grant execute on DBMS_RESOURCE_MANAGER to mark;

Grant succeeded.

SQL> grant select_catalog_role to mark;

Grant succeeded.

SQL> grant select on sys.aq$_queue_shards to mark;

Grant succeeded.

SQL> grant select on user_queue_partition_assignment_table to mark;

Grant succeeded.

SQL> exec dbms_teqk.AQ$_GRANT_PRIV_FOR_REPL('MARK');

PL/SQL procedure successfully completed.

SQL> commit;

Commit complete.

SQL> quit;Create a Kafka topic and consumer group using these statements. Note that you could also do this from the Java code, or using the Kafka-compatible Transactional Event Queues REST API (which I wrote about in this post):

begin

-- Creates a topic named TEQ with 5 partitions and 7 days of retention time

dbms_teqk.aq$_create_kafka_topic('TEQ', 5);

-- Creates a Consumer Group CG1 for Topic TEQ

dbms_aqadm.add_subscriber('TEQ', subscriber => sys.aq$_agent('CG1', null, null));

end;

/You should note that the dbms_teqk package is likely to be renamed in the GA release of Oracle Database 23c, but for the Oracle Database 23c Free – Developer Release you can use it.

Ok, we are ready to start on our Java code!

Create a Java project

Let’s create a Maven POM file (pom.xml) and add the dependencies we need for this application. I’ve also iunclude some profiles to make it easy to run the two main entry points we will create – the producer, and the consumer. Here’s the content for the pom.xml. Note that I have excluded the osdt_core and osdt_cert transitive dependencies, since we are not using a wallet or SSL in this example, so we do not need those libraries:

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.example</groupId>

<artifactId>okafka-demo</artifactId>

<version>0.0.1-SNAPSHOT</version>

<name>okafka-demo</name>

<description>OKafka demo</description>

<properties>

<java.version>17</java.version>

<maven.compiler.target>17</maven.compiler.target>

<maven.compiler.source>17</maven.compiler.source>

</properties>

<dependencies>

<dependency>

<groupId>com.oracle.database.messaging</groupId>

<artifactId>okafka</artifactId>

<version>23.2.0.0</version>

<exclusions>

<exclusion>

<artifactId>osdt_core</artifactId>

<groupId>com.oracle.database.security</groupId>

</exclusion>

<exclusion>

<artifactId>osdt_cert</artifactId>

<groupId>com.oracle.database.security</groupId>

</exclusion>

</exclusions>

</dependency>

</dependencies>

<profiles>

<profile>

<id>consumer</id>

<build>

<plugins>

<plugin>

<groupId>org.codehaus.mojo</groupId>

<artifactId>exec-maven-plugin</artifactId>

<version>3.0.0</version>

<executions>

<execution>

<goals>

<goal>exec</goal>

</goals>

</execution>

</executions>

<configuration>

<executable>java</executable>

<arguments>

<argument>-Doracle.jdbc.fanEnabled=false</argument>

<argument>-classpath</argument>

<classpath/>

<argument>com.example.SimpleConsumerOKafka</argument>

</arguments>

</configuration>

</plugin>

</plugins>

</build>

</profile>

<profile>

<id>producer</id>

<build>

<plugins>

<plugin>

<groupId>org.codehaus.mojo</groupId>

<artifactId>exec-maven-plugin</artifactId>

<version>3.0.0</version>

<executions>

<execution>

<goals>

<goal>exec</goal>

</goals>

</execution>

</executions>

<configuration>

<executable>java</executable>

<arguments>

<argument>-Doracle.jdbc.fanEnabled=false</argument>

<argument>-classpath</argument>

<classpath/>

<argument>com.example.SimpleProducerOKafka</argument>

</arguments>

</configuration>

</plugin>

</plugins>

</build>

</profile>

</profiles>

</project>This is a pretty straightforward POM. I just set the project’s coordinates, declared my one dependency, and then created the two profiles so I can run the code easily.

Next, we are going to need a file called ojdbc.properties in the same directory as the POM with this content:

user=mark

password=Welcome12345The KafkaProducer and KafkaConsumer will use this to connect to the database.

Create the consumer

Ok, now let’s create our consumer. In a directory called src/main/jaba/com/example, create a new Java file called SimpleConsumerOKafka.java with the following content:

package com.example;

import java.util.Properties;

import java.time.Duration;

import java.util.Arrays;

import org.oracle.okafka.clients.consumer.KafkaConsumer;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.common.header.Header;

import org.apache.kafka.clients.consumer.Consumer;

import org.apache.kafka.clients.consumer.ConsumerRecord;

public class SimpleConsumerOKafka {

public static void main(String[] args) {

// set the required properties

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("group.id" , "CG1");

props.put("enable.auto.commit","false");

props.put("max.poll.records", 100);

props.put("key.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("value.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

// create the consumer

Consumer<String , String> consumer = new KafkaConsumer<String, String>(props);

consumer.subscribe(Arrays.asList("TEQ"));

int expectedMsgCnt = 4000;

int msgCnt = 0;

long startTime = 0;

// consume messages

try {

startTime = System.currentTimeMillis();

while(true) {

try {

ConsumerRecords <String, String> records =

consumer.poll(Duration.ofMillis(10_000));

for (ConsumerRecord<String, String> record : records) {

System.out.printf("partition = %d, offset = %d, key = %s, value = %s\n ",

record.partition(), record.offset(), record.key(), record.value());

for(Header h: record.headers()) {

System.out.println("Header: " + h.toString());

}

}

// commit the records we received

if (records != null && records.count() > 0) {

msgCnt += records.count();

System.out.println("Committing records " + records.count());

try {

consumer.commitSync();

} catch(Exception e) {

System.out.println("Exception in commit " + e.getMessage());

continue;

}

// if we got all the messages we expected, then exit

if (msgCnt >= expectedMsgCnt ) {

System.out.println("Received " + msgCnt + ". Expected " +

expectedMsgCnt +". Exiting Now.");

break;

}

} else {

System.out.println("No records fetched. Retrying...");

Thread.sleep(1000);

}

} catch(Exception e) {

System.out.println("Inner Exception " + e.getMessage());

throw e;

}

}

} catch(Exception e) {

System.out.println("Exception from consumer " + e);

e.printStackTrace();

} finally {

long runDuration = System.currentTimeMillis() - startTime;

System.out.println("Application closing Consumer. Run duration " +

runDuration + " ms");

consumer.close();

}

}

}Let’s walk through this code together.

The first thing we do is prepare the properties for the KafkaConsumer. This is fairly standard, though notice that the bootstrap.servers property contains the address of your database listener:

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("group.id" , "CG1");

props.put("enable.auto.commit","false");

props.put("max.poll.records", 100);

props.put("key.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("value.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");Then, we add some Oracle-specific properties – oracle.service.name is the name of the service we are connecting to, in our case this is freepdb1; oracle.net.tns_admin needs to point to the directory where we put our ojdbc.properties file; and security.protocol controls whether we are using SSL, or not, as in this case:

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

With that done, we can create the KafkaConsumer and subscribe to a topic. Note that we use the Oracle version of KafkaConsumer which is basically just a wrapper that understand those extra Oracle-specific properites:

import org.oracle.okafka.clients.consumer.KafkaConsumer;

// ...

Consumer<String , String> consumer = new KafkaConsumer<String, String>(props);

consumer.subscribe(Arrays.asList("TEQ"));The rest of the code is standard Kafka code that polls for records, prints out any it finds, commits them, and then loops until it has received the number of records it expected and then exits.

Run the consumer

We can build and run the consumer with this command:

mvn exec:exec -P consumerIt will connect to the database and start polling for records, of course there won’t be any yet because we have not created the producer. It should output a message like this about every ten seconds:

No records fetched. Retrying...Let’s write that producer!

Create the producer

In a directory called src/main/jaba/com/example, create a new Java file called SimpleProducerOKafka.java with the following content:

package com.example;

import org.oracle.okafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.clients.producer.RecordMetadata;

import org.apache.kafka.common.header.internals.RecordHeader;

import java.util.Properties;

import java.util.concurrent.Future;

public class SimpleProducerOKafka {

public static void main(String[] args) {

long startTime = 0;

try {

// set the required properties

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("key.serializer",

"org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer",

"org.apache.kafka.common.serialization.StringSerializer");

props.put("batch.size", "5000");

props.put("linger.ms","500");

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

// create the producer

Producer<String, String> producer = new KafkaProducer<String, String>(props);

Future<RecordMetadata> lastFuture = null;

int msgCnt = 4000;

startTime = System.currentTimeMillis();

// send the messages

for (int i = 0; i < msgCnt; i++) {

RecordHeader rH1 = new RecordHeader("CLIENT_ID", "FIRST_CLIENT".getBytes());

RecordHeader rH2 = new RecordHeader("REPLY_TO", "TOPIC_M5".getBytes());

ProducerRecord<String, String> producerRecord =

new ProducerRecord<String, String>(

"TEQ", String.valueOf(i), "Test message "+ i

);

producerRecord.headers().add(rH1).add(rH2);

lastFuture = producer.send(producerRecord);

}

// wait for the last one to finish

lastFuture.get();

// print summary

long runTime = System.currentTimeMillis() - startTime;

System.out.println("Produced "+ msgCnt +" messages in " + runTime + "ms.");

producer.close();

}

catch(Exception e) {

System.out.println("Caught exception: " + e );

e.printStackTrace();

}

}

}This code is quite similar to the consumer. We first set up the Kafka properties, including the Oracle-specific ones. Then we create a KafkaProducer, again using the Oracle version which understands those extra properties. After that we just loop and produce the desired number of records.

Make sure your consumer is still running (or restart it) and then build and run the producer with this command:

mvn exec:exec -P producerWhen you do this, it will run for a short time and then print a message like this to let you know it is done:

Produced 4000 messages in 1955ms.Now take a look at the output in the consumer window. You should see quite a lot of output there. Here’s a short snippet from the end:

partition = 0, offset = 23047, key = 3998, value = Test message 3998

Header: RecordHeader(key = CLIENT_ID, value = [70, 73, 82, 83, 84, 95, 67, 76, 73, 69, 78, 84])

Header: RecordHeader(key = REPLY_TO, value = [84, 79, 80, 73, 67, 95, 77, 53])

Committing records 27

Received 4000. Expected 4000. Exiting Now.

Application closing Consumer. Run duration 510201 msIt prints out a message for each record it finds, including the partition ID, the offset, and the key and value. It them prints out the headers. You will also see commit messages, and at the end it prints out how many records it found and how long it ws running for. I left mine running while I got the producer ready to go, so it shows a fairly long duration  But you can run it again and start the producer immediately after it and you will see a much shorter run duration.

But you can run it again and start the producer immediately after it and you will see a much shorter run duration.

Well, there you go! That’s a Kafka producer and consumer using the new updated 23c version of the Kafka-compatible Java API for Transactional Event Queues. Stay tuned for more!

]]>The new Oracle Database 23c Free – Developer Release is now available.

Oracle Database 23c Free – Developer Release is the first release of the next-generation Oracle Database, allowing developers a head-start on building applications with innovative 23c features that simplify development of modern data-driven apps. The entire feature set of Oracle Database 23c is planned to be generally available within the next 12 months.

It has heaps of new developer-focused features and its completely free! And easy to download and use!

My two favorite features are:

- the new JSON Relational Duality Views which allow you to create a JSON document representation from a number of existing tables, and they are read/write! So you can use JSON in your applications and have the underlying data stored in relational tables. Of course you can store it in JSON too if you want to!

- JavaScript Stored Procedures, or as I like to think of them – in-database microservices which can scale to zero, with fast startup and scaling, and resource management to prevent noisy neighbors!

I look forward to writing posts about those, and some other exicting new features really soon.

You can find it here: https://www.oracle.com/database/free/

]]>In this post I will start with a really simple example that just gets the exporter up and running and collects a few simple metrics from the database into Prometheus. In subsequent posts, I’ll go further and look at dashboards in Grafana, and also cover the logging and metrics capabilities! But you have to start somewhere right!

First thing we need is a database of course! I just fired one up in a container like this:

docker run -d \

--name oracle-db \

-p 1521:1521 \

-e ORACLE_PWD=Welcome123 \

-e ORACLE_SID=ORCL \

-e ORACLE_PDB=PDB1 \

container-registry.oracle.com/database/enterprise:21.3.0.0If you have not used this image before, you will first need to go to Oracle Container Registry at https://container-registry.oracle.com, log in, and navigate to the Database category and then the “enterprise” image and accept the license agreement. You will also need to login your docker client so you can pull the image:

docker login container-registry.oracle.com

# this will prompt you for your username and passwordThe image will take a short time to pull the first time, and the first startup will actually create the database instance, and that takes a few minutes too. You can watch the logs to see when the database is ready:

docker logs -f oracle-dbYou only need to have these delays the first time you start the image. After that you can stop and start the container as needed and it will retain the data and startup very quickly.

# to stop the container:

docker stop oracle-db

# to start the container:

docker start oracle-dbOk, so now we have a database available. Let’s connect to it and create some data to play with. You can use your favorite client – there’s SQL*Plus in that image if you don’t have anything else available. You can start it and connect to the database like this:

docker exec -ti oracle-db sqlplus pdbadmin/Welcome123@//localhost:1521/pdb1Note: If you have not already, you might want to check out the new SQLcl command line tool which features command line completion and many other great features – check it out at https://www.oracle.com/database/sqldeveloper/technologies/sqlcl/

Let’s create a “customer” table and add a record:

create table customer (id number, name varchar2(256));

insert into customer (id, name) values (1, 'mark');

commit;Great, and let’s just leave that session connected – that will come in handy later!

Now, let’s get the observability exporter and set it up.

First, you’ll need to clone the project from GitHub:

git clone https://github.com/oracle/oracle-db-appdev-monitoring

cd oracle-db-appdev-monitoringYou can build the project and create a container image (assuming you have Maven, Java and Docker installed) like this:

mvn clean package -DskipTests

docker build -t observability-exporter:0.1.0 .If you don’t have those installed and you don’t want to – you can skip this step and just grab a pre-built container image from Oracle Container Registry:

docker pull container-registry.oracle.com/database/observability-exporter:0.1.0 If you do it this way, make sure to use the full name later when we start the exporter, not the short version!

Now we need to create a configuration file and define our metrics. I called mine mark-metrics.toml and here’s the content:

[[metric]]

context = "customers"

request = "SELECT count(*) as num_custs FROM customer"

metricsdesc = { num_custs = "Number of customers." }

[[metric]]

context = "system"

request = "select count(*) as session_count from v$session where username is not null and type = 'USER' and con_id = sys_context('userenv','con_id')"

metricsdesc = { session_count = "Current session count." }

[[metric]]

context = "system"

request = "select count(*) as active_sessions from v$session where username is not null and type = 'USER' and status = 'ACTIVE' and con_id = sys_context('userenv','con_id')"

metricsdesc = { active_sessions = "Active sessions." }

[[metric]]

context = "system"

request = "select (c.session_count - a.active_sessions) as inactive_sessions from (select count(*) as session_count from v$session where username is not null and type = 'USER' and con_id = sys_context('userenv','con_id')) c, (select count(*) as active_sessions from v$session where username is not null and type = 'USER' and status = 'ACTIVE' and con_id = sys_context('userenv','con_id')) a"

metricsdesc = { inactive_sessions = "Inactive sessions." }

[[metric]]

context = "system"

request = "select b.session_count as blocked_sessions from (select count(*) as session_count from v$session where username is not null and type = 'USER' and blocking_session_status = 'VALID' and con_id = sys_context('userenv','con_id')) b"

metricsdesc = { blocked_sessions = "Blocked sessions." }I defined five metrics in this file. Each metric starts with the [[metric]] heading and can have several fields. You can see more information in the documentation here. In the spirit of keeping this first post simple, I just created basic metrics with no labels or anything fancy

Let’s take a close look at the first metric, here it is again:

[[metric]]

context = "customers"

request = "SELECT count(*) as num_custs FROM customer"

metricsdesc = { num_custs = "Number of customers." }It is in the context (or group) called customers. The metric itself is called num_custs. You can see how we use the metricsdesc to create a human-readable documentation/description for the metric. And the metric itself is defined with an SQL statement. Wow! That’s pretty cool, right? That means that anything I can write an SQL statement to get from the database can be exported as a metric! In this one I just count the number of entries in that customer table we just created.

The other four metrics are some simple queries that get the number of sessions in the database as well as how many are active, inactive and blocked. These are all in the system context. You can define whatever contexts you like.

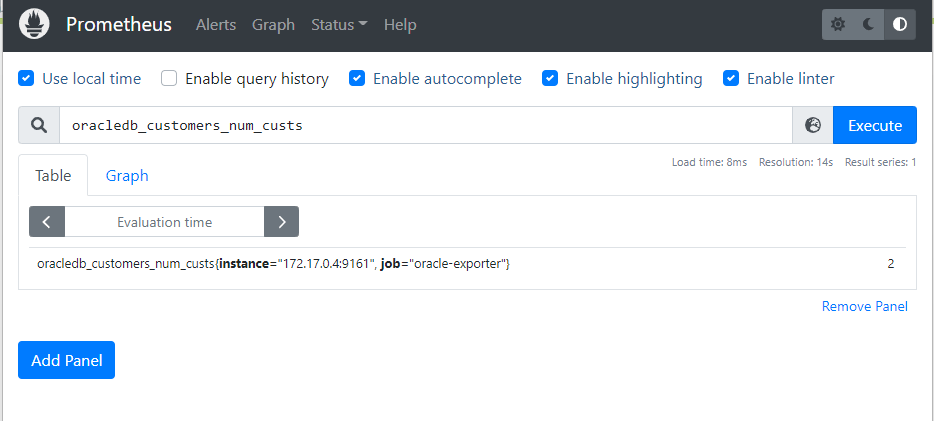

When you later look at a metric in Prometheus its name will be something like this:

oracledb_customers_num_custsNotice how the context (customers) and the metric name (num_custs) are in there.

Ok, now that we have defined our metrics, we can start up the exporter. Let’s run it in another container, alongside the database. We can start it like this:

docker run -d \

-v /home/mark/oracle-db-appdev-monitoring/mark-metrics.toml:/metrics.toml \

-p 9161:9161 \

-e DEFAULT_METRICS=/metrics.toml \

-e DATA_SOURCE_NAME=pdbadmin/[email protected]:1521/pdb1 \

--name exporter \

observability-exporter:0.1.0There’s a couple of things to note here. First, I am providing the configuration file we just created using the -v mount. This will give the exporter access to the metrics definitions. Second, we need to tell it how to connect to the database. You’ll need to get the IP address of the database container using this command:

docker inspect oracle-db | grep IPAddressYours will probably be diffrent to mine, so you’ll need to update the value of DATA_SOURCE_NAME to match your environment. And finally, a reminder – if you pulled the pre-built image down from Oracle Container Registry, you’ll need to use the fully qualified name on the last line.

Once this container starts up, grab its IP address too, we’ll need that in a minute:

docker inspect exporter | grep IPAddressThe exporter should start right up, and assuming we got the address right and no typos, it should be working and we can get metrics like this:

$ curl localhost:9161/metrics

# HELP oracledb_system_inactive_sessions Inactive sessions.

# TYPE oracledb_system_inactive_sessions gauge

oracledb_system_inactive_sessions 1.0

# HELP oracledb_up Whether the Oracle database server is up.

# TYPE oracledb_up gauge

oracledb_up 1.0

# HELP oracledb_system_blocked_sessions Blocked sessions.

# TYPE oracledb_system_blocked_sessions gauge

oracledb_system_blocked_sessions 0.0

# HELP oracledb_customers_num_custs Number of customers.

# TYPE oracledb_customers_num_custs gauge

oracledb_customers_num_custs 2.0

# HELP oracledb_system_active_sessions Active sessions.

# TYPE oracledb_system_active_sessions gauge

oracledb_system_active_sessions 1.0

# HELP oracledb_system_session_count Current session count.

# TYPE oracledb_system_session_count gauge

oracledb_system_session_count 2.0If you don’t see this, check the container logs to see what the error was:

docker logs exporterAssuming everything is working now, let’s start up Prometheus and configure it to scrape these metrics.

First, let’s create a configuration file called prometheus.yml with this content:

global:

scrape_interval: 10s

evaluation_interval: 10s

scrape_configs:

- job_name: 'prometheus'

static_configs:

- targets: ['127.0.0.1:9090']

- job_name: 'oracle-exporter'

metrics_path: '/metrics'

scrape_interval: 10s

scrape_timeout: 8s

static_configs:

- targets: ['172.17.0.4:9161']The only thing you’ll need to change here is the very last line – you need to put the IP address of your exporter container in there.

Then you can start up Prometheus using this configuration like this:

docker run -d \

--name prometheus \

-p 9090:9090 \

-v /home/mark/prometheus.yml:/etc/prometheus/prometheus.yml \

prom/prometheus --config.file=/etc/prometheus/prometheus.ymlIt should start right up and you can access it at http://localhost:9090

The user interface looks like this, and you can type into that search field to find a metric. If you start typing “num_custs” it should find our metric. Then hit enter, or click on the Execute button to see the value of the metric. It might take up to 10 seconds for data to be available, since we configured the scrape interval as 10 seconds in our configuration file. You should see something like this – yours will probably say 1, not 2:

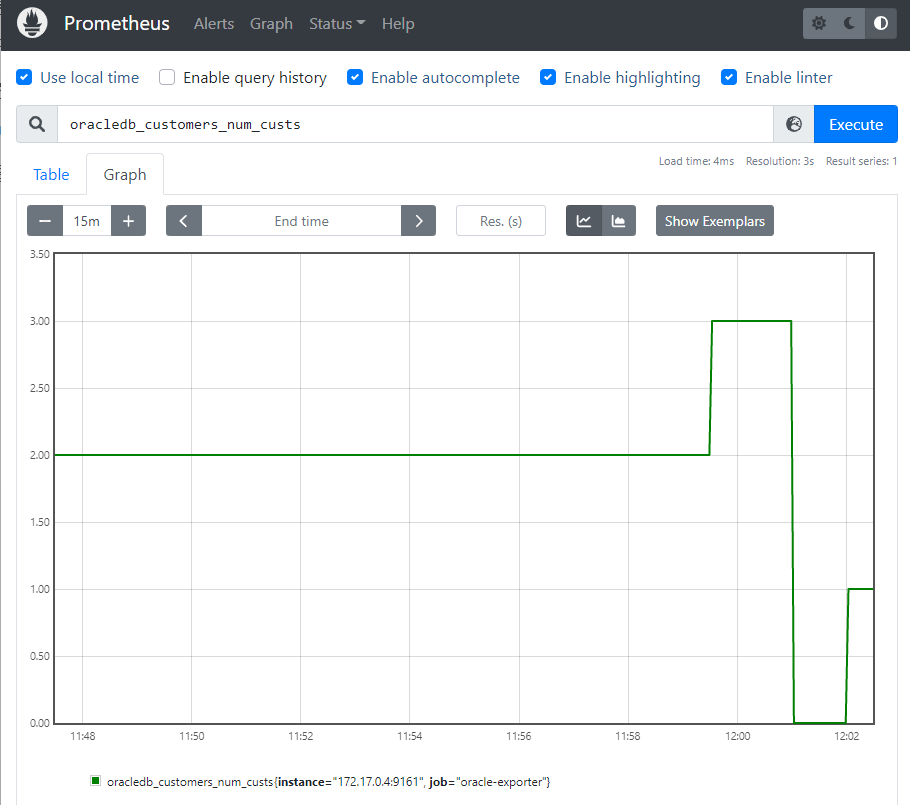

If you go insert some more records into that table and then check again, you’ll see the value is updated. You can also click on the Graph tab to view that as a time series graph. Try adding and removing records to see what happens. Remember to wait a little while between each update so that new metrics are collected.

You can also try the other metrics we created! So there we go, that’s covered the very basic starting steps of defining some metrics, running the exporter and scraping the metrics into Prometheus! Stay tuned for some follow up posts where I will build dashboards in Grafana, and also look at exporting logs and distributed tracing!

Bonus info: If you use WSL2 like I do, you might see a warning on the Prometheus web user interface about clock skew. If you do, you can fix that by updating the time in WSL like this:

sudo hwclock -s

The motivation for looking at Docker is twofold. Firstly, there are a lot of virtual machines images created for training purposes, e.g. for SOA Suite. These are then distributed to Oracle folks and partners around the world. They tend to be in the 20-40 GB range in terms of size. This means that downloading them takes time, unzipping them takes time, and you need to have plenty of space to store them and so on. Publishing updates to these images is hard, and in reality means you need to go download them again. It would be nice to have a better way to distribute pre-built environments like this – a method that allowed for much smaller downloads, and the ability to publish updates easily, without sacrificing the control over the configuration of the environment – so that you still know what you are going to end up with when you start up the ‘image’.

Secondly, as many of you know, I have a strong interest in Continuous Delivery and automation of the build-test-release lifecycle. Being able to quickly create environments that are in a known state, to use for testing automation, is a key capability we would want when building a delivery pipeline.

Docker provides some capabilities that could really help in both of these areas. In this post, we are just going to focus on the first one, and while we are exploring, let’s also look at how well Docker integrates with other tools we care about – like Vagrant, Chef and Puppet for example.

Introducing Docker

Docker is a virtualization technology that uses containers. A container is a feature that was added to the Linux kernel recently. Solaris has had containers (or ‘zones’) for a long time. A container is basically a virtual environment (like a VM) where you can run applications in isolation – protected from other applications in other containers or on the ‘host’ system. Unlike a VM, it does not emulate a processor and run its own copy of the operating system, with its own memory, and virtual devices. Instead, it shares the host operating system, but has its own file system, and uses a layering technology to overlay sparse file systems on top of each other to create its file system – you’ll see what this means in practice later on. When you are ‘in’ the container, it looks like you are on a real machine, just like when you are ‘in’ a VM. The difference is that the container approach uses a lot less system resources than the VM approach, since it is not running another copy of the operating system.

This means that more of your physical memory is available to run the actual application you care about, and less of it is consumed by the virtualization software and the virtualized operating system. When you are running VMs – this impact can be significant, especially if you need to run two or three VMs.

Containers are pretty mainstream – as we said, Solaris has had them for years, and people have been using them to isolate production workloads for a long time.

You can use Linux containers without using Docker. Docker just makes the whole experience a lot more pleasant. Docker allows you to create a container from an ‘image’, and to save the changes that you make, or to throw them away when you are done with the container.

These images are versioned, and they are layered on top of other images. So they are reusable. For example, if you had five demo/training environments you wanted to use, but they all have SOA Suite, WebLogic, JDK, etc., in them – you can put SOA Suite into one image, and then create five more images for each of the five demo/training environments – each of these as a layer on top of the SOA image. Now if you had one of those five ‘installed’ on your machine and you wanted to fire up one of the others, Docker allows you to just pull down that relatively small demo image and run it right on top of the relatively large SOA image you already have.

If you customize the demo and want to share the customizations with others, you can ‘push’ your image back to a shared registry (repository) of images. Docker is just going to push the (relatively very small) changes you made, and other people can then use them by running that image on top of the ones they already have.

Running your customized image on top of the others they have will not change the others, so they can still use them any time they need them. And this is done without the need for the relatively large ‘snapshots’ that you would create in a VM to achieve the same kind of flexibility.

Probably the best way to learn about Docker is to try it out. If you have never used container-based virtualization before, things are going to seem a little weird, and it might take a while to get it straight in your head. But please do persevere – it is worth it in the long run.

To follow along with this post you need a Ubuntu 14.04 desktop environment. Inside a VM/VirtualBox is fine for the purposes of learning about Docker. Just do a normal installation of Ubuntu.

Optional Section – Vagrant

Or, if you prefer not to install from scratch, you can follow the following vagrant steps

First install vagrant ( http://vagrantup.com/ ) and virtualbox. Then create a directory to hold your Ubuntu image.

# mkdir ubuntu-desktop # cd ubuntu-desktop/ # vagrant init janihur/ubuntu-1404-desktop

Before you start the image edit the Vagrantfile and uncomment the following section to assign some more memory to the Virtualbox image:

config.vm.provider "virtualbox" do |vb| # Don't boot with headless mode vb.gui = true # Use VBoxManage to customize the VM. For example to change memory: vb.customize ["modifyvm", :id, "--memory", "4096"] end

Start the image with:

# vagrant up

This will download ubuntu 14.04 with a disk space of 100GB and the installed desktop is LXDE based Lubuntu.

To authenticate you can use vagrant/vagrant, vagrant can also sudo without a password.

For more info see https://bitbucket.org/janihur/ubuntu-1404-server-vagrant

(End optional section)

Once you have a Ubuntu VM (or native install), then install docker:

(as root) # curl -sSL https://get.docker.com/ubuntu | /bin/sh

This will download the apt registry keys for Docker and install the Docker program. After it is done you can run ‘docker -v’ and it should report version 1.3.1 (at the time of writing).

For the rest of this post, we will display prompts as follows:

host# - indicating a command you type on the host system (as root) container# - indicating a command you type inside the container (as root) container$ - indicating a command you type inside the container (as a non-root user)

Now, let’s explore some of the basics.

Basic Docker Usage

The first obvious thing to do is to start up a container and see what it looks like. Let’s fire up an empty Ubuntu 14.04 container:

host# docker run ubuntu:14.04 ls

Since this is likely the first time you are using this ubuntu:14.04 container, it will be downloaded first then started up. You will see some output like this:

bin boot ... var

That’s just the output of running the ‘ls’ command inside a container that we just created. When the ‘ls’ command finished, the container stopped too. You can see its remnants using the following command (your container ID and name will be different):

host# docker ps -a CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 3f67ac6342d8 ubuntu:14.04 "ls" 6 seconds ago Exited (0) 5 seconds ago hungry_mcclintock

Also, we downloaded that ubuntu:14.04 image – you can see your images using this command:

host# docker images REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE ubuntu 14.04 5506de2b643b 2 weeks ago 199.3 MB

Now that was not terribly interesting – the container only ran for milliseconds. Notice there was no waiting for the operating system to boot! Let’s fire up another container and run a shell in it so we can look around. Try this command:

host# docker run -ti ubuntu:14.04 /bin/bash

This will start up a new container, attach a terminal to it (-t), make it interactive (-i) as opposed to running it in the background, and run /bin/bash. Almost instantly you will be sitting at the bash prompt. Take a look around – just don’t exit the shell yet. You will notice that the container has its own file system, but you cannot see out to the host file system. It looks very much like being inside a ‘traditional’ VM.

Create a file and put some content into it, for example:

container# date > myfile

Now let’s exit the container and take a look around from the outside.

container# exit

You will be back at your host Ubuntu system’s bash prompt now. Try the ‘docker ps- a’ command again. Notice you have another container now.

Let’s see what happened in that container. Grab the name or the hex ID of the container and try this command – substituting in the right ID or name:

host# docker diff abcd12345abc

Note: For the rest of this post, we will just put “ID” or “SOA_CONTAINER_ID” or something like that, since the ID’s that you get will be different to ours anyway. When you see “ID” just substitute in the correct container or image ID depending on the context.

It will show you all the files you have changed in the container! You can go ahead and start up a new container with the same command, and it will be just like the last one was when you started it – those changes you made will not be there. So you can fire up as many containers as you like and have them in exactly the same state each time – that is pretty useful for demos and training environments.

But what if you want to keep those changes? You just need to save them as a new image. Try these commands:

host# docker commit abcd12345abc host# docker images

Now you will see that you have a new image. It is probably pretty small, in fact if all you did was create that one new file, it will probably show as the same size as the ubuntu:14.04 image you created it from because the change was so small it was a rounding error in the output.

You can start a new container using your image – just grab the ID of the image and try a command like this:

host# docker run -ti ff77ff66ff55 /bin/bash

Again, you will be dropped into the bash prompt inside a new container. Take a look – your file is there. You can exit out of this container, and start another one using ubuntu:14.04 and it looks just like it did before.

Each image is essentially a delta/change set on top of the image it was based on. So you can build up layers of images, or you can create as many images as you like (say for different demos) on top of a common base image. These are going to be very small in size, and much easier to manage than trying to share a single VM for a bunch of demos, or trying to manage snapshots, or multiple copies of VMs. And since that common base image will be the underlying operating system (Ubuntu in our case) all the people you share your image with are likely to have that already downloaded.

Now we have been playing around a bit, and we have a bunch of old containers laying around. Let’s clean up a bit. You can remove the old containers using the command:

host# docker rm abcde12345abc

Just put in the appropriate ID’s that you got in the output of ‘docker ps -a’.

Let’s move on to creating some useful containers now that we have a feel for the basics.

Creating your own containers

Let’s create a container with some more useful things in it now. Later we are going to build a container with SOA Suite in it and one with the Oracle Database in it. There are a few common things we will want in both of those, so let’s set those up in a container now, and make a base image from that container which we can then use to create both the SOA and the database containers later on.

What do we want in our container?

- First we want an oracle user and group

- We want to be able to run the GUI install programs, so let’s install the X libraries we will need for that too

- We may as well put the JDK in there as well

Ok, we have a plan. So let’s go ahead and create the container. We start by firing up a new container from the base ubuntu:14.04 image that we used before:

host# docker run -ti ubuntu:14.04 /bin/bash

Now let’s create our users and groups:

container# groupadd oracle container# useradd -m -d /home/oracle -s /bin/bash -g oracle oracle container# passwd oracle (type password in twice)

And, let’s install the X libraries we need:

container# apt-get install libxrender-dev libxtst-dev

And then we can install the JDK – oh wait, how do we get the JDK installer into the container? We don’t have a browser in the container to download it with. Well, we could install a browser:

container# apt-get install firefox

But that will make our container a bit bigger and we don’t really need it. So let’s choose a different approach. Download the JDK using the browser on the host and put it in a directory on the host, let’s say /home/oracle/Downloads for example. Now we need to mount that directory in our container. Go ahead and exit the container:

container# exit

Now get it’s ID:

host# docker ps -a

Create a ‘work in progress’ image from that container:

host# docker commit -a “user” -m “installed X libs and created oracle user” ID host# docker images

Grab the ID of the new image, and fire up a new container:

host# docker run -ti -v /home/oracle/Downloads:/home/oracle/Downloads ID /bin/bash

This will drop you into the root bash shell in the container. Change to the oracle user:

container# su - oracle

Take a look in your Downloads directory – it is a mirror of the one on the host – and there is your JDK tar file. Let’s untar it into our home directory:

container$ cd container$ tar xzvf Downloads/jdk1.7.0_71.tar.gz

Great, now we have everything we wanted. Before we leave the container, let’s just remove any unnecessary files in /tmp (there probably aren’t any right now, but it is good to get into this practice to keep image sizes as small as possible).

container$ exit container# rm -rf /tmp/* container# exit

Now let’s save our changes. Get the container ID and make a new image:

host# docker ps -a host# docker commit -a “user” -m “installed JDK” ID host# docker images

There is our new image. Let’s give it a name so we don’t have to keep using that ID. Docker uses a tag and a version to name images. Let’s call ours “oracle-base” and version ‘“1.0”:

host# docker tag ID oracle-base:1.0 host# docker images

That’s a lot nicer!

If you want to keep things clean, you can remove the older images that we no longer need with a command like:

host# docker rmi ID

Note that is rmi with an “i” on the end – rmi is for images. rm (without the “i”) is for containers.

Now, before we go on to create our SOA and database images, let’s learn about some more interesting and useful features of Docker.

More Interesting Stuff

Now we are going to want to be able to get ‘in’ to our containers from the outside – so we are going to need to expose some ports. Let’s look at that first.

Publishing ports

Docker allows you to publish ports from the container – this means that a port on the host will be forwarded to the container. So if we have WebLogic in the container listening on port 7001, we can have Docker make that available as a port on the host. This is done by adding some more details when we start a container, for example:

host# docker run -ti -p 0.0.0.0:7001:7001 oracle-base:1.0 /bin/bash

This will start up a new container, using our base image, and will have the host listen to port 7001 on all interfaces, and forward any traffic to port 7001 in the container. You can publish as many ports as you like by adding multiple -p options. Later (when we have WebLogic Server installed) we will see this in action.

Sharing the X display

Although it is usually possible to get by without a GUI when we are installing and configuring the FMW software, it might just be easier with one. So let’s see how to share the host’s X display with a container. Again, this is done with extra details when starting a container, like this:

host# docker run -ti -p 0.0.0.0:7001:7001 -v /tmp/.X11-unix:/tmp/.X11-unix -e DISPLAY=$DISPLAY oracle-base:1.0 /bin/bash

So the trick is to set a new environment variable (using -e) and to share the special file by mounting with -v. A very big “thank you” to Fábio Rehm for publishing this method at http://fabiorehm.com/blog/2014/09/11/running-gui-apps-with-docker/

Having done this, when you launch an X program in a container with the X libraries installed in it, the window will appear on the host’s display. Try it out:

container# su - oracle container$ jconsole

Making images smaller

Since keeping images as small as possible is a key goal, it is worth knowing the following method to reduce the size of an image after you have deleted files from it. For example, suppose you just installed SOA Suite in your container. That will have created a bunch of files in /tmp. Suppose you then deleted them. Maybe your image is still 6GB in size. You can use this method to make it as small as possible:

host# docker export ID | docker import - new:name

The ‘docker export’ command works on (from) a container, there is also ‘docker save’ which works from an image – but ‘docker save’ will include all the layers, so it wont necessarily give you a smaller image.

This will create a new image called new:name that has exactly the same content but is as small as possible. When I did this on that 6GB image I installed SOA in, it went down to about 3GB.

Daemon Mode

The other useful thing to know about is ‘deamon mode’ – this lets you start up a container without it taking over your session – it will just run in the background. To do this, you just add the -d option. For example, this will start up our oracle-base container in the background:

host# docker run -dt oracle-base:1.0 /bin/bash

This is also useful when containers have a predefined command to run, then you do not need to even specify the command (like /bin/bash) – it will just start itself up. More on this later.

Setting up a private registry

Before long, you are going to want to share images with other people. Docker provides a mechanism to do this for public images. But if you have some of your own data or licensed software in the image, you might want to set up a private Docker registry. The fastest way to do this is to just fire up a Docker container with the registry in it, and map port 5000 to its host:

host# docker run -ti -p 0.0.0.0:5000:5000 --name registry-server registry

This will pull down the registry image from the Docker Hub (the public one) and start it up. You can check it is working by hitting the following url:

http://host.domain:5000/v1/search

Substitute in the correct name of your host. This will give you back a little bit of JSON telling you there are no images in the registry right now.

You can also search the registry using the docker command:

host# docker search host.domain:5000/name

Substitute the correct host name in, and change ‘name’ to the name of the image you want, e.g. oracle-base. Of course, your new registry will not have any images in it yet. So let’s see how to publish an image to the registry.

Publishing an image

Publishing an image is a two step process – first we tag it with the registry’s information, then we do a ‘push’:

host# docker tag ID host.domain:5000/oracle-base:1.0 host# docker push host.domain:5000/oracle-base:1.0

Docker will make sure any other images that this one depends on (layers on top of) are also in the registry.

Now, your friends can use that image. Let’s see how:

host# docker pull host.domain:5000/oracle-base:1.0 host# docker run -ti oracle-base:1.0 /bin/bash

Easy, hey!

It is also possible to distribute images as tar files (if you don’t have or want to have a registry). To do this, you just run export against a container to create the tar, and then import on the other end:

host# docker export ID > my.tar host# cat my.tar | docker import - ID

Building Fusion Middleware containers

Ok, so now we have learned what we need to know about Docker, let’s actually use it to create, publish and use some containers with Fusion Middleware in them.

Creating a container with WebLogic Server

Let’s start by creating a container with a simple WebLogic Server installation. Let’s assume that you have downloaded the WebLogic Server installer and you have it in your /home/oracle/Downloads directory.

Here is the command to start up the container:

host# docker run -ti -p 0.0.0.0:7001:7001 -v /tmp/.X11-unix:/tmp/.X11-unix -v /home/oracle/Downloads:/home/oracle/Downloads -e DISPLAY=$DISPLAY oracle-base:1.0 /bin/bash

And now for the installation:

container# su - oracle container$ java -jar /home/oracle/Downloads/fmw_12.1.3.0.0_wls.jar (follow through the installer and then the config wizard to create a domain) container$ exit container# rm -rf /tmp/* container# exit host# docker commit -a “user” -m “wls installed and domain created” ID host# docker images

Now, let’s create a container using that new image and fire up WLS:

host# docker run -ti -p 0.0.0.0:7001:7001 -v /tmp/.X11-unix:/tmp/.X11-unix -v /home/oracle/Downloads:/home/oracle/Downloads -e DISPLAY=$DISPLAY IMAGE_ID /bin/bash container# su - oracle container$ wlshome/user_projects/domains/base_domain/startWebLogic.sh

Now, go and fire up a browser on the host and point it to http://localhost:7001/console and you will see the WebLogic Server console from inside the container! Have a play around, and shut down the container when you are finished.

Just for good measure, let’s also publish this image for others to use:

host# docker tag ID host.domain:5000/weblogic:12.1.3 host# docker push host.domain:5000/weblogic:12.1.3

Creating a container with the oracle database

As well as creating your images manually, you can also automate the image creation using something called a Dockerfile. Let’s use this approach to create the database image, so we can see how that works too. We can also use tools like Chef and Puppet to help with the configuration. In this section, let’s use Edwin’s Puppet module for the Oracle Database.

With using a Dockerfile we are able to reproduce our steps and easily change something without forgetting something important. (Infrastructure as code). The Dockerfile will do all the operating system actions like installing the required packages, upload the software etc. Puppet or Chef will do the Oracle database provisioning. To do it all in a Dockerfile is probably too complex and will lead to some many layers ( every step in a Dockerfile is a layer) so it probably better to re-use already proven Puppet modules or Chef cookbooks.

Puppet and Chef have their own configuration files (Manifests and Recipes respectively) which invoke the Puppet Resources/Modules or Chef Cookbooks to actually perform the configuration. So when we want to change the Database service name, add some schemas or change some init parameters we can do this in a Puppet Manifest/Chef Recipe.

For the database we will install the Oracle Database Standard Edition(12.1.0.1). You can also XE but this can lead to some unexpected FMW issues and installing the Enterprise Edition is too much for our use case.

We start by creating a Dockerfile. The whole project can also be found here https://github.com/biemond/docker-database-puppet. Let’s take a look through our Dockerfile.

The first line or instruction of a Dockerfile should be the FROM command, which details what our starting or base image is. In this case we will use CentOS version 6 because this comes the closest to Oracle Enterprise Linux and version 7 is probably too new. These minimal images are maintained by CentOS and can be found here at the docker registry https://registry.hub.docker.com/_/centos/

FROM centos:centos6

The next step is installing the required packages. One of the first things we need to do, is to install the open source edition of Puppet or Chef and its matching librarian program which retrieves the required modules from Git or Puppet Forge (in Chef this is called supermarket).

In CentOS we will use yum for this.

# Need to enable centosplus for the image libselinux issue RUN yum install -y yum-utils RUN yum-config-manager --enable centosplus RUN yum -y install hostname.x86_64 rubygems ruby-devel gcc git RUN echo "gem: --no-ri --no-rdoc" > ~/.gemrc RUN rpm --import https://yum.puppetlabs.com/RPM-GPG-KEY-puppetlabs && \ rpm -ivh http://yum.puppetlabs.com/puppetlabs-release-el-6.noarch.rpm # configure & install puppet RUN yum install -y puppet tar RUN gem install librarian-puppet -v 1.0.3 RUN yum -y install httpd; yum clean all

Now we will upload the Puppet manifest using the ADD command. This will copy the file from your local to the docker filesystem.

ADD puppet/Puppetfile /etc/puppet/ ADD puppet/manifests/site.pp /etc/puppet/

Puppetfile contains all the required Puppet modules which will be downloaded from Git or Puppetforge by the librarian program we installed previously.

site.pp is the Puppet manifest which contains our configuration for the database installation.You can see a small fragment of the site.pp file below (https://github.com/biemond/docker-database-puppet/blob/master/puppet/manifests/site.pp). This will create the oracle user, the oracle database groups and the required database packages:

For more information about the Puppet Oracle database provisioning you can take a look at github. https://github.com/biemond/biemond-oradb

Back to the Dockerfile commands…

With the WORKDIR we can change the current directory ( like the Linux ‘cd’ command ) on the docker filesystem and startup librarian with the RUN command.

WORKDIR /etc/puppet/ RUN librarian-puppet install

Everything is ready to start Puppet but first we need to create some directories and upload the database software.

# upload software RUN mkdir /var/tmp/install RUN chmod 777 /var/tmp/install RUN mkdir /software RUN chmod 777 /software COPY linuxamd64_12c_database_1of2.zip /software/ COPY linuxamd64_12c_database_2of2.zip /software/

Time to start up puppet:

RUN puppet apply /etc/puppet/site.pp --verbose --detailed-exitcodes || [ $? -eq 2 ]

After this we can do a cleanup. For now this will not make our docker image smaller because every step is a separate layer but we can export/ import the container later which will significantly reduce the image

# cleanup RUN rm -rf /software/* RUN rm -rf /var/tmp/install/* RUN rm -rf /var/tmp/* RUN rm -rf /tmp/*

We also need to add a script which will start the dbora service (which is created by Puppet). This will startup the Oracle database and the Oracle Listener.

ADD startup.sh / RUN chmod 0755 /startup.sh

Here is a snippet of the startup.sh script:

The most important part of this script to determine the current hostname (you can’t have a fixed hostname because docker will change it every time you create a new container, also localhost won’t work when you try to connect from outside this container) and change the host in the listener.ora.

The last part of the Dockerfile is to add commands so this image will work well in daemon mode. We need to expose the Oracle database listener port and add a default command. This will first start /startup.sh and after this bash, so the container will keep on running after the startup.sh script.

WORKDIR / EXPOSE 1521 CMD bash -C '/startup.sh';'bash'

So we are ready to build the database image, we can do this with the docker build command.

First we need to change directory on the host to the location of the Dockerfile.

host# docker build -t oracle/database12101_centos6 .

This probably will generate an image of 13GB, so when you do this from a Docker VM make sure you have enough space. With export and import all the layers will be removed and this image will shrink to 7GB

To test our database image we can try to start a new container. This will use the default command specified in the Dockerfile.

host# docker run -i -t -p 1521:1521 oracle/database12101_centos6:latest

Or with /bin/bash we can start it ourselves

host# docker run -i -t -p 1521:1521 oracle/database12101_centos6:latest /bin/bash container# /startup.sh

Creating a container with SOA

Let’s continue by creating a container with a SOA Suite installation. This time, for contrast, we will use the manual approach. Let’s assume that you have downloaded the SOA Suite and FMW Infrastructure installers and you have them in your /home/oracle/Downloads directory.

Here is the command to start up the container:

host# docker run -ti -p 0.0.0.0:7001:7001 -v /tmp/.X11-unix:/tmp/.X11-unix -v /home/oracle/Downloads:/home/oracle/Downloads -e DISPLAY=$DISPLAY oracle-base:1.0 /bin/bash

And now for the installation:

container# su - oracle container$ java -jar /home/oracle/Downloads/fmw_12.1.3.0.0_infrastructure.jar (follow through the installer - when it fails the prereq test click on the Skip button) container$ java -jar /home/oracle/Downloads/fmw_12.1.3.0.0_soa.jar (follow through the installer - when it fails the prereq test click on the Skip button) container$ exit container# rm -rf /tmp/* container# exit host# docker commit -a “user” -m “soa installed” ID host# docker images

Now we are going to need to fire up a container with the database in it and one with SOA and connect them together before we start working on the RCU installation and create the SOA domain. Starting up multiple containers does not have the same kind of overhead that starting up multiple VMs does – remember the containers are not running their own copy of the operating system.

Linking containers

We want to start up the database container we created earlier, run it in the background (in daemon mode) and have it available for our SOA container to connect to. As part of this, we want to give it a name, we will use ‘db’:

host# docker run -dt --name db -p 1521:1521 oracle-db:12.1.0.1

Now we can start up our SOA container and connect it to the database container:

host# docker run -ti --name soa -p 7001:7001 -p 7004:7004 -e DISPLAY=$DISPLAY -v /tmp/.X11-unix:/tmp/.X11-unix --link db:db oracle-soa:12.1.3 /bin/bash

The linking will make the ‘db’ container visible to the ‘soa’ container as ‘db’ – without exposing any ports publicly, i.e. it puts them on a private network. So from the soa container, you can ‘ping db’ and see the db container.

Now we are ready to run RCU:

container# su - oracle container$ soahome/oracle_common/bin/rcu

Follow through the RCU wizard. The database connect string will be db:1521:orcl.

Now we can create a SOA domain.

container$ soahome/oracle_common/common/bin/config.sh

Follow through the config wizard to create the domain.

Before we go on, it would be a good idea to image the two containers. Exit from both containers then create images:

host# docker ps -a host# docker commit -a “user” -m “db with soa schemas” ID host# docker tag ID oracle-soa-db:12.1.3 host# docker commit -a “user” -m “soa with domain” ID host# docker tag ID oracle-soa:12.1.3

We gave the db container a new name ‘oracle-soa-db’ so we can use the empty database for other things too, but the SOA container we just update. You should tag and push them to your private registry for others to use as well.

Now you can fire up some containers using these new images, and link them, and start up the servers!

host# docker rm db host# docker run -dt --name db -p 1521:1521 oracle-soa-db:12.1.3 (db)container# ./startup.sh host# docker rm soa host# docker run -ti --name soa -p 7001:7001 -p 7004:7004 -e DISPLAY=$DISPLAY -v /tmp/.X11-unix:/tmp/.X11-unix --link db:db oracle-soa:12.1.3 /bin/bash (soa)container# su - oracle container# nohup soahome/user_projects/domains/soa_domain/startWebLogic.sh > adminserver.log & container# nohup soahome/user_projects/domains/soa_domain/bin/startManagedWebLogic.sh soa_server1 t3://localhost:7001 > soa_server1.log &

Now point a browser to http://host:7001/em and take a look around.

Create a container with SOA demo content

Now we have a nice reusable SOA image that we can use as the basis for a small ‘demo’ SOA environment. We are going to leave this as an exercise for you to try out by yourself! If you have followed through this post, you already know everything you need to do this.

Start up and link your two containers (if they are not still running). Then fire up JDeveloper and create a simple BPEL project and deploy it onto the SOA server. We won’t go through how to do this in detail – if you are reading this you will already know how to make a BPEL process and deploy it.

Once you have that done, shutdown the containers and commit the changes into new images:

host# docker ps -a host# docker commit -a “user” -m “my soa demo db” DB_CONTAINER_ID host# docker commit -a “user” -m “my soa demo” SOA_CONTAINER_ID

Now you can fire up containers from these two new images to use your demo environment, or you can fire up containers from the previous two images to go back to a clean SOA environment – perhaps to build another demo, or to do some training or POC work.

Conclusion

Well hopefully this has given you a good overview of Docker, not just the basics in abstract, but a concrete example of using Docker in a Fusion Middleware environment, and demonstrated creating and distributing images, linking images, the lightweight virtualization, and also some (bonus) integration with Vagrant, Chef and Puppet!

We think that Docker is a pretty good fit for the first scenario we described in the motivation section – creating, managing and distributing demo and training environments from a centralized repository, with smaller image sizes, faster downloads, lower overhead and more flexible updates compare to the way a lot of people do it today – with VM images.

Stay tuned to hear some thoughts and experiences about using Docker in a Continuous Delivery environment – but that’s another post!

]]>I recommend you take a look!

]]>

Special thanks to Jacco H. Landlust, Software Architect, Web-DBA and Oracle ACE for his highly valuable input.

In order to continue to get good performance from your SOA or BPM 11g server, you will want to periodically check your database – the one you are storing your SOAINFRA schema in – to see if there are any performance issues there. You need to keep doing this, as the workload changes and the usage of space in the database changes. Depending on the volume of traffic going through your system, you might want to think about tuning the database every week or every month for example.

Tuning an Oracle database is a specialist task. It requires a highly trained and experienced DBA to do it well. It is not the kind of thing that you can learn from a short blog post, like this one for example. This post is not intended to teach you how to tune an Oracle database, but rather to just give a few pointers that might help your DBA, or that you can experiment with in your own environment if you don’t have the services of a good DBA.

If you are lucky enough to have a good DBA running your SOAINFRA database, then they will probably already know how to use AWR to tune and Oracle database. If this is the case, you should just let them know that common issues in SOA/BPM databases are SGA sizing, statistics, missing indexes and high watermark contention. They should know what to do with that information.

If, however you do not have a good DBA managing your database, perhaps you only have the database because it is needed for SOA/BPM, and it is being managed by a middleware-style systems administrator, then you might want to read on… but please keep in mind that this advise is not intended to replace the need for a well trained specialist. You should probably try to get a DBA on staff, or contract, to keep your database performing well.

This article provides a very brief introduction to the use of the Automatic Workload Repository (AWR) in the Oracle Database and what to look for in the reports for your SOA/BPM environment.

Before you start playing with AWR, it is a good idea to go and read a bit about it. A good place to start would be Overview of the Automatic Workload Repository and Managing the Automatic Workload Repository. You should pay particular attention to making sure you develop an understanding of the concept of ‘DB TIME,’ without which extracting much meaning from AWR reports will be difficult.

AWR is a built in feature of the Oracle Database. Your database will automatically collect performance information and create snapshots every hour. It will also automatically age and remove these over time.

You can also tell the database to take a snapshot manually using this command, which you will need to issue as a SYSDBA user in SQLPlus:

SELECT DBMS_WORKLOAD_REPOSITORY.Create_Snapshot FROM DUAL;

So the process is as follows:

- Create a snapshot (using the SQL above),

- Run your tests,

- Create another snapshot.

Your tests should be some kind of representative and repeatable workload if you are doing this in a test environment.

It is also safe to run these reports against your production environment. In this case, you need not create the snapshots manually, you can just use the hourly ones that the database creates for you automatically.

Once you have your snapshots, you are ready to create a report. You use the following command, again as a SYSDBA, to create the report:

@?/rdbms/admin/awrrpt.sql

This will ask you to select the start and end snapshots and for other details like the format and file name for the output.

After you have done this, open up your report and take a look. Be warned – it is a pretty big report.

Here is an example of the first page of the report, this is from a VM with BPM 11.1.1.5 plus the Feature Pack, running on Oracle Linux 5, with 10GB of memory, everything in the one VM – so not an ideal production environment, which is good, because we should be able to see some issues in the report.

WORKLOAD REPOSITORY report for

DB Name DB Id Instance Inst Num Startup Time Release RAC

------------ ----------- ------------ -------- --------------- ----------- ---

ORCL 1292287891 orcl 1 24-Nov-11 11:03 11.2.0.1.0 NO

Host Name Platform CPUs Cores Sockets Memory(GB)

---------------- -------------------------------- ---- ----- ------- ----------

bpmfp.mark.oracl Linux x86 64-bit 8 8 2 9.78

Snap Id Snap Time Sessions Curs/Sess

--------- ------------------- -------- ---------

Begin Snap: 468 24-Nov-11 11:27:20 41 5.0

End Snap: 469 24-Nov-11 11:33:44 42 6.8

Elapsed: 6.41 (mins)

DB Time: 0.42 (mins)

Cache Sizes Begin End

~~~~~~~~~~~ ---------- ----------

Buffer Cache: 252M 252M Std Block Size: 8K

Shared Pool Size: 396M 396M Log Buffer: 5,424K

Load Profile Per Second Per Transaction Per Exec Per Call

~~~~~~~~~~~~ --------------- --------------- ---------- ----------

DB Time(s): 0.1 0.0 0.00 0.00

DB CPU(s): 0.0 0.0 0.00 0.00

Redo size: 36,700.2 7,761.1

Logical reads: 244.9 51.8

Block changes: 158.5 33.5

Physical reads: 1.2 0.3

Physical writes: 3.6 0.8

User calls: 242.8 51.3

Parses: 33.9 7.2

Hard parses: 0.5 0.1

W/A MB processed: 0.1 0.0

Logons: 0.1 0.0

Executes: 69.1 14.6

Rollbacks: 0.8 0.2

Transactions: 4.7

Instance Efficiency Percentages (Target 100%)