Now, with 4.4, we are seeing some really cool incubating features becoming more stable, including Helidon Data Repositories, Helidon SE Declarative and Helidon AI. I am working on a sample project called Helidon-Eats to show how these work together. In this installment, I am looking at the first two.

The “No-Magic” Power of Helidon SE Declarative

If you’ve used Helidon MP (MicroProfile), or if you are more familiar with Spring Boot’s Inversion of Control approach (like me), then you’re used to the convenience of dependency injection and annotations. Helidon SE, on the other hand, has always focused on transparency and avoiding “magic.” While that’s great for performance, it usually meant writing more boilerplate code to register routes and manage services manually.

Helidon SE Declarative changes that. It gives you an annotation-driven model like MP, but here is the trick: it does everything at build-time. Using Java annotation processors, Helidon generates service descriptors during compilation. This means you get the clean, injectable code you want, but without the runtime reflection overhead that slows down startup and eats memory. Benchmarks have even shown performance gains of up to +295% over traditional reflection-based models on modern JDKs. (see this article)
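To make the build-time idea a bit more concrete, here is a minimal plain-Java sketch of wiring services through factories that are known at compile time, with no classpath scanning or reflection. This is not Helidon's generated code; the Registry, Greeter, GreetEndpoint and generatedBinding names are all hypothetical and only stand in for what an annotation processor might emit.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical sketch: NOT Helidon's generated code, just the general idea of
// wiring services through plain factories instead of runtime reflection.
class BuildTimeWiringSketch {

    interface Greeter {
        String greet(String name);
    }

    static final class PoliteGreeter implements Greeter {
        public String greet(String name) {
            return "Hello, " + name;
        }
    }

    static final class GreetEndpoint {
        private final Greeter greeter;

        GreetEndpoint(Greeter greeter) {
            this.greeter = greeter;
        }

        String handle(String name) {
            return greeter.greet(name);
        }
    }

    // A tiny registry: each service type maps to a factory that knows its dependencies.
    static final class Registry {
        private final Map<Class<?>, Function<Registry, ?>> factories = new LinkedHashMap<>();
        private final Map<Class<?>, Object> singletons = new LinkedHashMap<>();

        <T> void register(Class<T> type, Function<Registry, ? extends T> factory) {
            factories.put(type, factory);
        }

        <T> T get(Class<T> type) {
            Object existing = singletons.get(type);
            if (existing == null) {
                Function<Registry, ?> factory = factories.get(type);
                if (factory == null) {
                    throw new IllegalStateException("No service for " + type.getName());
                }
                // The factory may call get() again to resolve its own dependencies.
                existing = factory.apply(this);
                singletons.put(type, existing);
            }
            return type.cast(existing);
        }
    }

    // Stand-in for the class an annotation processor would write during compilation.
    static Registry generatedBinding() {
        Registry registry = new Registry();
        registry.register(Greeter.class, r -> new PoliteGreeter());
        registry.register(GreetEndpoint.class, r -> new GreetEndpoint(r.get(Greeter.class)));
        return registry;
    }

    public static void main(String[] args) {
        Registry registry = generatedBinding();
        System.out.println(registry.get(GreetEndpoint.class).handle("Helidon"));
    }
}
```

The point of the sketch is that every constructor call is ordinary Java the compiler can see, so nothing has to be discovered at startup.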

Now, to be completely fair, I am not completely sold on the build-time piece yet. For example, the @GenerateBinding annotation (if I am not mistaken) causes an ApplicationBinding class to be generated at build time, and that lives in your target/classes directory. I found during refactoring that you have to be careful to mvn clean each time to make sure it stays in sync with your code; just doing a mvn compile or package could get you into trouble. And I am not sure I am happy with it not being checked into the source code repository. But I’ll withhold judgment until I have worked with it a bit more!

Simplifying Persistence with Helidon Data

The Helidon Data Repository is another big addition. It’s a high-level abstraction that acts as a compile-time alternative to heavy runtime ORM frameworks. Instead of writing JDBC code, you define a Java interface, and Helidon’s annotation processor generates the implementation for you.

It supports standard patterns like CrudRepository and PageableRepository, which I used in this project to handle the recipe collection. The framework can even derive queries directly from your method names (like Spring Data does) – so a method like findById is automatically turned into the correct SQL at build-time.
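If you have not seen query derivation before, the idea can be sketched in a few lines of plain Java. This is a deliberately naive illustration and not Helidon Data's actual algorithm: the deriveJpql helper is hypothetical, and it only understands findBy followed by property names joined with And.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of method-name query derivation. This is NOT Helidon Data's
// real implementation; it only handles "findBy" with properties joined by "And".
class QueryNameSketch {

    static String deriveJpql(String entity, String methodName) {
        String prefix = "findBy";
        if (!methodName.startsWith(prefix) || methodName.length() == prefix.length()) {
            throw new IllegalArgumentException("Unsupported method name: " + methodName);
        }
        List<String> conditions = new ArrayList<>();
        for (String property : methodName.substring(prefix.length()).split("And")) {
            if (property.isEmpty()) {
                throw new IllegalArgumentException("Unsupported method name: " + methodName);
            }
            // "Category" becomes "category": entity fields start with a lower-case letter
            String field = Character.toLowerCase(property.charAt(0)) + property.substring(1);
            conditions.add("e." + field + " = :" + field);
        }
        return "SELECT e FROM " + entity + " e WHERE " + String.join(" AND ", conditions);
    }

    public static void main(String[] args) {
        System.out.println(deriveJpql("Recipe", "findById"));
        System.out.println(deriveJpql("Recipe", "findByCategoryAndSubcategory"));
    }
}
```

A real implementation also has to deal with operators, ordering and paging keywords, which is exactly the kind of work you want a framework to do for you at build time.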

The Backend: Oracle AI Database 26ai

For this sample, I’m using Oracle AI Database 26ai Free. I sourced some public domain recipe data from Kaggle that comes as a line-by-line JSON file (LDJSON).

Normally, if you want to store hierarchical JSON in relational tables, you have to write complex mapping logic in your application. But I wanted to try a more novel approach using JSON Relational Duality Views (DV).

Duality Views are a game-changer because they decouple how data is stored from how it is accessed. My data is stored in three normalized tables (RECIPE, INGREDIENT, and DIRECTION) which ensures ACID consistency and no data duplication. The database can surface this data to applications as a single, hierarchical JSON document. I am not using that feature in this post, but I will in the future!

GraphQL-Based View Creation and Loading

One of the coolest parts of Oracle AI Database 26ai is that you can define these views using a GraphQL-based syntax. The database engine automatically figures out the joins based on the foreign key relationships.

Here is how I defined the recipe_dv:

CREATE OR REPLACE JSON RELATIONAL DUALITY VIEW recipe_dv AS

recipe @insert @update @delete

{

recipeId: id,

recipeTitle: title,

description: description,

category: category,

subcategory: subcategory,

ingredients: ingredient @insert @update @delete

[

{

id: id,

item: item

}

],

directions: direction @insert @update @delete

[

{

id: id,

step: step

}

]

};

Isn’t that just the cleanest piece of SQL that deals with JSON that you’ve ever seen?

Because the view is “updatable” (@insert, @update), I used it to actually load the data. Instead of a complex ETL process, my startup script just reads the LDJSON file line-by-line and does a simple SQL insert directly into the view. The database engine takes that single JSON object and automatically decomposes it into rows for the three underlying tables.
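The loading step described above could look roughly like this if you did it from Java with plain JDBC. Treat it as a sketch under assumptions rather than my actual startup script: the class and method names are made up, and I am assuming the driver accepts the JSON text as a simple string bind when inserting into the view's single JSON column.

```java
import java.io.BufferedReader;
import java.io.StringReader;
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import java.util.List;

// Hypothetical sketch: load line-delimited JSON into the recipe_dv duality view.
class DualityViewLoaderSketch {

    // One JSON document per row; the database splits it across the underlying tables.
    static final String INSERT_SQL = "INSERT INTO recipe_dv VALUES (?)";

    // Reads an LDJSON stream and returns the non-blank lines, one document each.
    static List<String> documents(BufferedReader reader) {
        return reader.lines()
                .map(String::trim)
                .filter(line -> !line.isEmpty())
                .toList();
    }

    // Inserts every document in one batch and returns how many statements were sent.
    static int load(Connection connection, List<String> documents) throws SQLException {
        try (PreparedStatement insert = connection.prepareStatement(INSERT_SQL)) {
            for (String document : documents) {
                insert.setString(1, document); // assumption: JSON text bound as a string
                insert.addBatch();
            }
            return insert.executeBatch().length;
        }
    }

    public static void main(String[] args) {
        // No database needed for this part: just show how the file is split into documents.
        String sample = "{\"recipeId\": 1, \"recipeTitle\": \"Toast\"}\n\n{\"recipeId\": 2, \"recipeTitle\": \"Tea\"}\n";
        List<String> docs = documents(new BufferedReader(new StringReader(sample)));
        System.out.println(docs.size() + " documents ready for: " + INSERT_SQL);
    }
}
```

To actually run the load you would pass in a Connection obtained from your datasource; the keys in each JSON line need to match the field names defined in the view.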

Modeling the Service

On the Java side, I modeled the Recipe entity to handle the parent-child relationships using standard @OneToMany collections.

One detail I want to highlight is the use of @JsonbTransient. When you build a REST API, you often have internal metadata like database primary keys or sort ordinals that you don’t want messing up the JSON that the end user gets to see. By annotating those fields with @JsonbTransient, they are excluded from the final JSON response. This keeps the API response clean and focused only on the recipe data.

@Entity

@Table(name = "RECIPE")

public class Recipe {

@Id

@Column(name = "ID")

private Long recipeId;

private String recipeTitle;

@JsonbTransient

private Long internalId; // Hidden from the API

@OneToMany(mappedBy = "recipe")

private List<Ingredient> ingredients;

//...

}

In the repository object, you can use the method naming conventions to automatically create queries (like Spring Data) and you can also write your own JPQL (not SQL) queries, as I did in this case (also like Spring Data):

package com.github.markxnelson.helidoneats.recipes.model;

import java.util.Optional;

import io.helidon.data.Data;

@Data.Repository

public interface RecipeRepository extends Data.CrudRepository<Recipe, Long> {

@Data.Query("SELECT DISTINCT r FROM Recipe r "

+ "LEFT JOIN FETCH r.ingredients "

+ "LEFT JOIN FETCH r.directions "

+ "WHERE r.recipeId = :recipeId")

Optional<Recipe> findByRecipeIdWithDetails(Long recipeId);

}

Wiring and Startup

The configuration is handled in the application.yaml, where I point Helidon to the Oracle instance using syntax that again is very reminiscent of what I’d do in Spring Boot.

server:

port: 8080

host: 0.0.0.0

app:

greeting: "Hello"

data:

sources:

sql:

- name: "food"

provider.hikari:

username: "food"

password: "Welcome12345##"

url: "jdbc:oracle:thin:@//localhost:1521/freepdb1"

jdbc-driver-class-name: "oracle.jdbc.OracleDriver"

persistence-units:

jakarta:

- name: "recipe"

data-source: "food"

properties:

hibernate.dialect: "org.hibernate.dialect.OracleDialect"

jakarta.persistence.schema-generation.database.action: "none"

With Declarative SE, injecting the repository into my endpoint is simple. I just use @Service.Inject on the constructor, which allows me to keep my fields private final.

@Service.Singleton

@Http.Path("/recipe")

public class RecipeEndpoint {

private final RecipeRepository repository;

@Service.Inject

public RecipeEndpoint(RecipeRepository repository) {

this.repository = repository;

}

@Http.GET

@Http.Path("/{id}")

public Optional<Recipe> getRecipe(Long id) {

return repository.findById(id);

}

}

Finally, the Main class uses @Service.GenerateBinding. This tells the annotation processor to generate the “wiring” code that starts the server and initializes the service registry without needing to scan the classpath at runtime.

@Service.GenerateBinding

public class Main {

public static void main(String[] args) {

LogConfig.configureRuntime();

ServiceRegistryManager.start(ApplicationBinding.create());

}

}

In this context, the “service registry” is something in Helidon that keeps track of the services in the application and handles injection and so on. It’s a lot like the way Spring Boot scans for beans and wires/injects them where needed.

The Result

When you hit the service, you get a clean, well-structured JSON response that masks all the complexity of the underlying three-table relational join.

Example Response for http://localhost:8080/recipe/22387:

{

"category": "Appetizers And Snacks",

"description": "I came up with this rhubarb salsa while trying to figure out what to do with an over-abundance of rhubarb...",

"directions":,

"ingredients": [

"2 cups thinly sliced rhubarb",

"1 small red onion, coarsely chopped",

"3 roma (plum) tomatoes, finely diced"

],

"recipeId": 22387,

"recipeTitle": "Tangy Rhubarb Salsa",

"subcategory": "Salsa"

}

Wrap Up

Helidon 4.4 is making the SE flavor feel a lot more like a high-productivity framework without sacrificing performance. By shifting the data transformation logic to the database with Duality Views and using build-time code generation for injection, we can build services that are both incredibly fast and easy to maintain.

Now, you may have noticed that I said “like Spring” a lot in this post, and that’s because of two things: I do happen to use Spring a lot more than I use Helidon, and I like it. So I am very happy that Helidon is looking more like Spring. It makes it a lot easier to switch between the two, and I think it lowers the barrier to entry for people who are coming from the Spring world.

Grab the code from the Helidon-Eats repo and let me know what you think – and stay tuned for the next steps as I explore Helidon AI!

This is a pattern you’ll need when you have a single application that needs to connect to multiple databases. Maybe you have different domains in separate databases, or you’re working with legacy systems, or you need to separate read and write operations across different database instances. Whatever the reason, Spring Boot makes this pretty straightforward once you understand the configuration pattern.

I’ve put together a complete working example on GitHub at https://github.com/markxnelson/spring-multiple-jpa-datasources, and in this post I’ll walk you through how to build it from scratch.

The Scenario

For this example, we’re going to build a simple application that manages two separate domains:

- Customers – stored in one database

- Products – stored in a different database

Each domain will have its own datasource, entity manager, and transaction manager. We’ll use Spring Data JPA repositories to interact with each database, and we’ll show how to use both datasources in a REST controller.

I am assuming you have a database with two users called customer and product and some tables. Here’s the SQL to set that up:

$ sqlplus sys/Welcome12345@localhost:1521/FREEPDB1 as sysdba

alter session set container=freepdb1;

create user customer identified by Welcome12345;

create user product identified by Welcome12345;

grant connect, resource, unlimited tablespace to customer;

grant connect, resource, unlimited tablespace to product;

commit;

$ sqlplus customer/Welcome12345@localhost:1521/FREEPDB1

create table customer (id number, name varchar2(64));

insert into customer (id, name) values (1, 'mark');

commit;

$ sqlplus product/Welcome12345@localhost:1521/FREEPDB1

create table product (id number, name varchar2(64));

insert into product (id, name) values (1, 'coffee machine');

commit;

Step 1: Dependencies

Let’s start with the Maven dependencies. Here’s what you’ll need in your pom.xml:

<dependencies>

<!-- Spring Boot Starter Web for REST endpoints -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<!-- Spring Boot Starter Data JPA -->

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-jpa</artifactId>

</dependency>

<!-- Oracle Spring Boot Starter for UCP -->

<dependency>

<groupId>com.oracle.database.spring</groupId>

<artifactId>oracle-spring-boot-starter-ucp</artifactId>

<version>25.3.0</version>

</dependency>

</dependencies>

The key dependency here is the oracle-spring-boot-starter-ucp, which provides autoconfiguration for Oracle’s Universal Connection Pool. UCP is Oracle’s high-performance connection pool implementation that provides features like connection affinity, Fast Connection Failover, and Runtime Connection Load Balancing.

Step 2: Configure the Datasources in application.yaml

Now let’s configure our two datasources in the application.yaml file. We’ll define connection properties for both the customer and product databases:

spring:

application:

name: demo

jpa:

customer:

properties:

hibernate.dialect: org.hibernate.dialect.OracleDialect

hibernate.hbm2ddl.auto: validate

hibernate.format_sql: true

hibernate.show_sql: true

product:

properties:

hibernate.dialect: org.hibernate.dialect.OracleDialect

hibernate.hbm2ddl.auto: validate

hibernate.format_sql: true

hibernate.show_sql: true

datasource:

customer:

url: jdbc:oracle:thin:@localhost:1521/freepdb1

username: customer

password: Welcome12345

driver-class-name: oracle.jdbc.OracleDriver

type: oracle.ucp.jdbc.PoolDataSourceImpl

oracleucp:

connection-factory-class-name: oracle.jdbc.pool.OracleDataSource

connection-pool-name: CustomerConnectionPool

initial-pool-size: 15

min-pool-size: 10

max-pool-size: 30

shared: true

product:

url: jdbc:oracle:thin:@localhost:1521/freepdb1

username: product

password: Welcome12345

driver-class-name: oracle.jdbc.OracleDriver

type: oracle.ucp.jdbc.PoolDataSourceImpl

oracleucp:

connection-factory-class-name: oracle.jdbc.pool.OracleDataSource

connection-pool-name: ProductConnectionPool

initial-pool-size: 15

min-pool-size: 10

max-pool-size: 30

shared: true

Notice that we’re using custom property prefixes (spring.datasource.customer and spring.datasource.product) instead of the default spring.datasource. This is because Spring Boot’s autoconfiguration will only create a single datasource by default. When you need multiple datasources, you need to create them manually and use custom configuration properties.

In this example, both datasources happen to point to the same database server but use different schemas (users). In a real-world scenario, these would typically point to completely different database instances.
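To picture what a custom prefix buys you, here is a small plain-Java sketch that pulls out only the settings under one prefix from a flat set of properties. It is not how Spring Boot implements @ConfigurationProperties; the underPrefix helper is hypothetical and just illustrates the idea that each datasource gets its own slice of the configuration.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical sketch: select the configuration keys that live under one prefix.
// Spring Boot's real binder is far more capable; this only shows the concept.
class PrefixBindingSketch {

    static Map<String, String> underPrefix(Map<String, String> all, String prefix) {
        String dotted = prefix + ".";
        Map<String, String> result = new TreeMap<>();
        all.forEach((key, value) -> {
            if (key.startsWith(dotted)) {
                // Strip the prefix so "spring.datasource.customer.username" becomes "username"
                result.put(key.substring(dotted.length()), value);
            }
        });
        return result;
    }

    public static void main(String[] args) {
        Map<String, String> flat = Map.of(
                "spring.datasource.customer.username", "customer",
                "spring.datasource.customer.url", "jdbc:oracle:thin:@localhost:1521/freepdb1",
                "spring.datasource.product.username", "product",
                "spring.datasource.product.url", "jdbc:oracle:thin:@localhost:1521/freepdb1");

        System.out.println(underPrefix(flat, "spring.datasource.customer"));
        System.out.println(underPrefix(flat, "spring.datasource.product"));
    }
}
```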

Step 3: Configure the Customer Datasource

Now we need to create the configuration classes that will set up our datasources, entity managers, and transaction managers. Let’s start with the customer datasource.

Create a new package called customer and add a configuration class called CustomerDataSourceConfig.java:

package com.example.demo.customer;

import javax.sql.DataSource;

import org.springframework.beans.factory.annotation.Qualifier;

import org.springframework.boot.autoconfigure.jdbc.DataSourceProperties;

import org.springframework.boot.context.properties.ConfigurationProperties;

import org.springframework.boot.orm.jpa.EntityManagerFactoryBuilder;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.context.annotation.Primary;

import org.springframework.data.jpa.repository.config.EnableJpaRepositories;

import org.springframework.orm.jpa.JpaTransactionManager;

import org.springframework.orm.jpa.LocalContainerEntityManagerFactoryBean;

import org.springframework.transaction.PlatformTransactionManager;

import org.springframework.transaction.annotation.EnableTransactionManagement;

@Configuration

@EnableTransactionManagement

@EnableJpaRepositories(entityManagerFactoryRef = "customerEntityManagerFactory", transactionManagerRef = "customerTransactionManager", basePackages = {

"com.example.demo.customer" })

public class CustomerDataSourceConfig {

@Bean(name = "customerProperties")

@ConfigurationProperties("spring.datasource.customer")

public DataSourceProperties customerDataSourceProperties() {

return new DataSourceProperties();

}

/**

* Creates and configures the customer DataSource.

*

* @param properties the customer datasource properties

* @return configured DataSource instance

*/

@Primary

@Bean(name = "customerDataSource")

@ConfigurationProperties(prefix = "spring.datasource.customer")

public DataSource customerDataSource(@Qualifier("customerProperties") DataSourceProperties properties) {

return properties.initializeDataSourceBuilder().build();

}

/**

* Reads customer JPA properties from application.yaml.

*

* @return Map of JPA properties

*/

@Bean(name = "customerJpaProperties")

@ConfigurationProperties("spring.jpa.customer.properties")

public java.util.Map<String, String> customerJpaProperties() {

return new java.util.HashMap<>();

}

/**

* Creates and configures the customer EntityManagerFactory.

*

* @param builder the EntityManagerFactoryBuilder

* @param dataSource the customer datasource

* @param jpaProperties the JPA properties from application.yaml

* @return configured LocalContainerEntityManagerFactoryBean

*/

@Primary

@Bean(name = "customerEntityManagerFactory")

public LocalContainerEntityManagerFactoryBean customerEntityManagerFactory(EntityManagerFactoryBuilder builder,

@Qualifier("customerDataSource") DataSource dataSource,

@Qualifier("customerJpaProperties") java.util.Map<String, String> jpaProperties) {

return builder.dataSource(dataSource)

.packages("com.example.demo.customer")

.persistenceUnit("customers")

.properties(jpaProperties)

.build();

}

@Bean

@ConfigurationProperties("spring.jpa.customer")

public PlatformTransactionManager customerTransactionManager(

@Qualifier("customerEntityManagerFactory") LocalContainerEntityManagerFactoryBean entityManagerFactory) {

return new JpaTransactionManager(entityManagerFactory.getObject());

}

}

Let’s break down what’s happening here:

- @EnableJpaRepositories – This tells Spring Data JPA where to find the repositories for this datasource. We specify the base package (com.example.demo.customer), and we reference the entity manager factory and transaction manager beans by name.

- @Primary – We mark the customer datasource as the primary one. This means it will be used by default when autowiring a datasource, entity manager, or transaction manager without a @Qualifier. You must have exactly one primary datasource when using multiple datasources.

- customerDataSource() – This creates the datasource bean using Spring Boot’s DataSourceBuilder. The @ConfigurationProperties annotation binds the properties from our application.yaml (with the spring.datasource.customer prefix) to the datasource configuration.

- customerEntityManagerFactory() – This creates the JPA entity manager factory, which is responsible for creating entity managers. We configure it to scan for entities in the customer package and set up Hibernate properties.

- customerTransactionManager() – This creates the transaction manager for the customer datasource. The transaction manager handles transaction boundaries and ensures ACID properties.
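The interplay between @Primary and @Qualifier described in the list above can be sketched without Spring at all. The Candidates class below is hypothetical and much simpler than Spring's real resolution rules, but it captures the behaviour that matters here: a qualifier picks a bean by name, and without one the primary bean wins.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch of "primary unless qualified" bean selection.
// This is NOT Spring's implementation, only the rule of thumb from the text.
class PrimaryResolutionSketch {

    static final class Candidates<T> {
        private final Map<String, T> byName = new LinkedHashMap<>();
        private String primaryName;

        void add(String name, T bean, boolean primary) {
            if (primary) {
                if (primaryName != null) {
                    throw new IllegalStateException("More than one primary bean: " + primaryName + ", " + name);
                }
                primaryName = name;
            }
            byName.put(name, bean);
        }

        // qualifier == null models an injection point without @Qualifier
        T resolve(String qualifier) {
            if (qualifier != null) {
                T bean = byName.get(qualifier);
                if (bean == null) {
                    throw new IllegalStateException("No bean named " + qualifier);
                }
                return bean;
            }
            if (byName.size() == 1) {
                return byName.values().iterator().next();
            }
            if (primaryName == null) {
                throw new IllegalStateException("Ambiguous: " + byName.keySet());
            }
            return byName.get(primaryName);
        }
    }

    public static void main(String[] args) {
        Candidates<String> dataSources = new Candidates<>();
        dataSources.add("customerDataSource", "customer-pool", true);
        dataSources.add("productDataSource", "product-pool", false);

        System.out.println(dataSources.resolve(null));
        System.out.println(dataSources.resolve("productDataSource"));
    }
}
```

Without a primary, the unqualified lookup in this sketch fails as ambiguous, which is why one of the two configurations needs to carry @Primary.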

Step 4: Configure the Product Datasource

Now let’s create the configuration for the product datasource. Create a new package called product and add ProductDataSourceConfig.java:

package com.example.demo.product;

import javax.sql.DataSource;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.beans.factory.annotation.Qualifier;

import org.springframework.boot.autoconfigure.jdbc.DataSourceProperties;

import org.springframework.boot.context.properties.ConfigurationProperties;

import org.springframework.boot.orm.jpa.EntityManagerFactoryBuilder;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.data.jpa.repository.config.EnableJpaRepositories;

import org.springframework.orm.jpa.JpaTransactionManager;

import org.springframework.orm.jpa.LocalContainerEntityManagerFactoryBean;

import org.springframework.transaction.PlatformTransactionManager;

import org.springframework.transaction.annotation.EnableTransactionManagement;

@Configuration

@EnableTransactionManagement

@EnableJpaRepositories(entityManagerFactoryRef = "productEntityManagerFactory", transactionManagerRef = "productTransactionManager", basePackages = {

"com.example.demo.product" })

public class ProductDataSourceConfig {

@Bean(name = "productProperties")

@ConfigurationProperties("spring.datasource.product")

public DataSourceProperties productDataSourceProperties() {

return new DataSourceProperties();

}

@Bean(name = "productDataSource")

@ConfigurationProperties(prefix = "spring.datasource.product")

public DataSource productDataSource(@Qualifier("productProperties") DataSourceProperties properties) {

return properties.initializeDataSourceBuilder().build();

}

/**

* Reads product JPA properties from application.yaml.

*

* @return Map of JPA properties

*/

@Bean(name = "productJpaProperties")

@ConfigurationProperties("spring.jpa.product.properties")

public java.util.Map<String, String> productJpaProperties() {

return new java.util.HashMap<>();

}

/**

* Creates and configures the product EntityManagerFactory.

*

* @param builder the EntityManagerFactoryBuilder

* @param dataSource the product datasource

* @param jpaProperties the JPA properties from application.yaml

* @return configured LocalContainerEntityManagerFactoryBean

*/

@Bean(name = "productEntityManagerFactory")

public LocalContainerEntityManagerFactoryBean productEntityManagerFactory(@Autowired EntityManagerFactoryBuilder builder,

@Qualifier("productDataSource") DataSource dataSource,

@Qualifier("productJpaProperties") java.util.Map<String, String> jpaProperties) {

return builder.dataSource(dataSource)

.packages("com.example.demo.product")

.persistenceUnit("products")

.properties(jpaProperties)

.build();

}

@Bean

@ConfigurationProperties("spring.jpa.product")

public PlatformTransactionManager productTransactionManager(

@Qualifier("productEntityManagerFactory") LocalContainerEntityManagerFactoryBean entityManagerFactory) {

return new JpaTransactionManager(entityManagerFactory.getObject());

}

}

The product configuration is almost identical to the customer configuration, with a few key differences:

- No @Primary annotations – Since we already designated the customer datasource as primary, we don’t mark the product beans as primary.

- Different package – The @EnableJpaRepositories points to the product package, and the entity manager factory scans the product package for entities.

- Different bean names – All the beans have different names (productDataSource, productEntityManagerFactory, productTransactionManager) to avoid conflicts.

Step 5: Create the Domain Models

Now let’s create the JPA entities for each datasource. First, in the customer package, create Customer.java:

package com.example.demo.customer;

import jakarta.persistence.Entity;

import jakarta.persistence.Id;

@Entity

public class Customer {

@Id

public int id;

public String name;

public Customer() {

this.id = 0;

this.name = "";

}

}

And in the product package, create Product.java:

package com.example.demo.product;

import jakarta.persistence.Entity;

import jakarta.persistence.Id;

@Entity

public class Product {

@Id

public int id;

public String name;

public Product() {

this.id = 0;

this.name = "";

}

}

Step 6: Create the Repositories

Now let’s create Spring Data JPA repositories for each entity. In the customer package, create CustomerRepository.java:

package com.example.demo.customer;

import org.springframework.data.jpa.repository.JpaRepository;

public interface CustomerRepository extends JpaRepository<Customer, Integer> {

}

And in the product package, create ProductRepository.java:

package com.example.demo.product;

import org.springframework.data.jpa.repository.JpaRepository;

public interface ProductRepository extends JpaRepository<Product, Integer> {

}

Step 7: Create a REST Controller

Finally, let’s create a REST controller that demonstrates how to use both datasources. Create a controllers package and add CustomerController.java:

package com.example.demo.controllers;

import java.util.List;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.RestController;

import com.example.demo.customer.Customer;

import com.example.demo.customer.CustomerRepository;

@RestController

public class CustomerController {

final CustomerRepository customerRepository;

public CustomerController(CustomerRepository customerRepository) {

this.customerRepository = customerRepository;

}

@GetMapping("/customers")

public List<Customer> getCustomers() {

return customerRepository.findAll();

}

}

A few important things to note about the controller:

- Transaction Managers – When you have multiple datasources, you need to explicitly specify which transaction manager to use. Notice the @Transactional("customerTransactionManager") and @Transactional("productTransactionManager") annotations on the write operations. If you don’t specify a transaction manager, Spring will use the primary one (customer) by default.

- Repository Autowiring – The repositories are autowired normally. Spring knows which datasource each repository uses based on the package they’re in, which we configured in our datasource configuration classes.

- Cross-datasource Operations – The initializeData() method demonstrates working with both datasources in a single method. However, note that these operations are not in a distributed transaction – if one fails, the other won’t automatically roll back. If you need distributed transactions across multiple databases, you would need to use JTA (Java Transaction API).
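The last point in the list above is easy to underestimate, so here is a tiny simulation of it in plain Java. There is no Spring or JDBC involved; FakeDatabase and this version of initializeData are hypothetical stand-ins that only show what "two independent transactions" means in practice.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical simulation: two databases, each with its own local transaction.
class IndependentTransactionsSketch {

    static final class FakeDatabase {
        private final List<String> committed = new ArrayList<>();

        // Either all rows are committed, or (on failure) none of them are.
        void inTransaction(List<String> rows, boolean fail) {
            List<String> pending = new ArrayList<>(rows);
            if (fail) {
                throw new IllegalStateException("simulated failure, rolled back " + pending);
            }
            committed.addAll(pending);
        }

        List<String> committed() {
            return List.copyOf(committed);
        }
    }

    // Writes to both databases; each write commits or rolls back on its own.
    static void initializeData(FakeDatabase customers, FakeDatabase products, boolean productFails) {
        customers.inTransaction(List.of("mark"), false);
        products.inTransaction(List.of("coffee machine"), productFails);
    }

    public static void main(String[] args) {
        FakeDatabase customers = new FakeDatabase();
        FakeDatabase products = new FakeDatabase();
        try {
            initializeData(customers, products, true);
        } catch (IllegalStateException e) {
            System.out.println("product write failed: " + e.getMessage());
        }
        // The customer row is already committed even though the product write failed.
        System.out.println("customers committed: " + customers.committed());
        System.out.println("products committed: " + products.committed());
    }
}
```

If that leftover customer row would be a problem for you, that is the signal to look at JTA or at compensating logic in the application.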

Let’s also create ProductController.java:

package com.example.demo.controllers;

import java.util.List;

import org.springframework.web.bind.annotation.GetMapping;

import org.springframework.web.bind.annotation.RestController;

import com.example.demo.product.Product;

import com.example.demo.product.ProductRepository;

@RestController

public class ProductController {

final ProductRepository productRepository;

public ProductController(ProductRepository productRepository) {

this.productRepository = productRepository;

}

@GetMapping("/products")

public List<Product> getProducts() {

return productRepository.findAll();

}

}

Testing the Application

Now you can run your application! Make sure you have two Oracle database users created (customer and product), or adjust the configuration to point to your specific databases.

Start the application:

mvn spring-boot:run

Then you can test it with some curl commands:

# Get all customers

$ curl http://localhost:8080/customers

[{"id":1,"name":"mark"}]

# Get all products

$ curl http://localhost:8080/products

[{"id":1,"name":"coffee machine"}]

Wrapping Up

And there you have it! We’ve successfully configured a Spring Boot application with multiple datasources using Spring Data JPA and Oracle’s Universal Connection Pool. The key points to remember are:

- Custom configuration properties – Use custom prefixes for each datasource in your application.yaml

- Manual configuration – Create configuration classes for each datasource with beans for the datasource, entity manager factory, and transaction manager

- Primary datasource – Designate one datasource as primary using @Primary

- Package organization – Keep entities and repositories for each datasource in separate packages

- Explicit transaction managers – Specify which transaction manager to use for write operations with @Transactional

This pattern works great when you need to connect to multiple databases, whether they’re different types of databases or different instances of the same database. Oracle’s Universal Connection Pool provides excellent performance and reliability for your database connections.

I hope this helps you work with multiple datasources in your Spring Boot applications! The complete working code is available on GitHub at https://github.com/markxnelson/spring-multiple-jpa-datasources.

Happy coding!

We also published updated source and sink Kafka connectors for Transactional Event Queues – but I’ll cover those in a separate post.

Let’s build a Kafka producer and consumer using the updated Kafka-compatible APIs.

Prepare the database

The first thing we want to do is start up the Oracle 23c Free Database. This is very easy to do in a container using a command like this:

docker run --name free23c -d -p 1521:1521 -e ORACLE_PWD=Welcome12345 container-registry.oracle.com/database/free:latest

This will pull the image and start up the database with a listener on port 1521. It will also create a pluggable database (a database container) called “FREEPDB1” and will set the admin passwords to the password you specified on this command.

You can tail the logs to see when the database is ready to use:

docker logs -f free23c

(look for this message...)

#########################

DATABASE IS READY TO USE!

#########################

Also, grab the IP address of the container. We’ll need that to connect to the database:

docker inspect free23c | grep IPA

"SecondaryIPAddresses": null,

"IPAddress": "172.17.0.2",

"IPAMConfig": null,

"IPAddress": "172.17.0.2",

To set up the necessary permissions, you’ll need to connect to the database with a client. If you don’t have one already, I’d recommend trying the new SQLcl CLI which you can download here. Start it up and connect to the database like this (note that your IP address and password may be different):

sql sys/Welcome12345@//172.17.0.2:1521/freepdb1 as sysdba

SQLcl: Release 22.2 Production on Tue Apr 11 12:36:24 2023

Copyright (c) 1982, 2023, Oracle. All rights reserved.

Connected to:

Oracle Database 23c Free, Release 23.0.0.0.0 - Developer-Release

Version 23.2.0.0.0

SQL>

Now, run these commands to create a user called “mark” and give it the necessary privileges:

SQL> create user mark identified by Welcome12345;

User MARK created.

SQL> grant resource, connect, unlimited tablespace to mark;

Grant succeeded.

SQL> grant execute on dbms_aq to mark;

Grant succeeded.

SQL> grant execute on dbms_aqadm to mark;

Grant succeeded.

SQL> grant execute on dbms_aqin to mark;

Grant succeeded.

SQL> grant execute on dbms_aqjms_internal to mark;

Grant succeeded.

SQL> grant execute on dbms_teqk to mark;

Grant succeeded.

SQL> grant execute on DBMS_RESOURCE_MANAGER to mark;

Grant succeeded.

SQL> grant select_catalog_role to mark;

Grant succeeded.

SQL> grant select on sys.aq$_queue_shards to mark;

Grant succeeded.

SQL> grant select on user_queue_partition_assignment_table to mark;

Grant succeeded.

SQL> exec dbms_teqk.AQ$_GRANT_PRIV_FOR_REPL('MARK');

PL/SQL procedure successfully completed.

SQL> commit;

Commit complete.

SQL> quit;

Create a Kafka topic and consumer group using these statements. Note that you could also do this from the Java code, or using the Kafka-compatible Transactional Event Queues REST API (which I wrote about in this post):

begin

-- Creates a topic named TEQ with 5 partitions and 7 days of retention time

dbms_teqk.aq$_create_kafka_topic('TEQ', 5);

-- Creates a Consumer Group CG1 for Topic TEQ

dbms_aqadm.add_subscriber('TEQ', subscriber => sys.aq$_agent('CG1', null, null));

end;

/

You should note that the dbms_teqk package is likely to be renamed in the GA release of Oracle Database 23c, but for the Oracle Database 23c Free – Developer Release you can use it.
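If you would rather create the topic and consumer group from Java, one option is to run the same PL/SQL block over plain JDBC. This is only a sketch under assumptions: the class and method names are made up, it simply wraps the statements shown above with bind variables, and it is a different route from the Kafka-style admin API.

```java
import java.sql.CallableStatement;
import java.sql.Connection;
import java.sql.SQLException;

// Hypothetical sketch: run the topic and consumer group setup through JDBC.
class CreateTopicSketch {

    // Same calls as the PL/SQL block above, with bind variables for the names.
    static final String CREATE_TOPIC_BLOCK =
            "begin "
            + "dbms_teqk.aq$_create_kafka_topic(?, ?); "
            + "dbms_aqadm.add_subscriber(?, subscriber => sys.aq$_agent(?, null, null)); "
            + "end;";

    static void createTopic(Connection connection, String topic, int partitions, String consumerGroup)
            throws SQLException {
        try (CallableStatement call = connection.prepareCall(CREATE_TOPIC_BLOCK)) {
            call.setString(1, topic);         // topic name
            call.setInt(2, partitions);       // number of partitions
            call.setString(3, topic);         // queue to subscribe to
            call.setString(4, consumerGroup); // consumer group name
            call.execute();
        }
    }

    public static void main(String[] args) {
        // Printing the statement only; calling createTopic needs a live connection
        // for a user with the grants shown earlier.
        System.out.println(CREATE_TOPIC_BLOCK);
    }
}
```

With a connection for the mark user, a call such as createTopic(connection, "TEQ", 5, "CG1") would correspond to the block above.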

Ok, we are ready to start on our Java code!

Create a Java project

Let’s create a Maven POM file (pom.xml) and add the dependencies we need for this application. I’ve also included some profiles to make it easy to run the two main entry points we will create – the producer, and the consumer. Here’s the content for the pom.xml. Note that I have excluded the osdt_core and osdt_cert transitive dependencies, since we are not using a wallet or SSL in this example, so we do not need those libraries:

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.example</groupId>

<artifactId>okafka-demo</artifactId>

<version>0.0.1-SNAPSHOT</version>

<name>okafka-demo</name>

<description>OKafka demo</description>

<properties>

<java.version>17</java.version>

<maven.compiler.target>17</maven.compiler.target>

<maven.compiler.source>17</maven.compiler.source>

</properties>

<dependencies>

<dependency>

<groupId>com.oracle.database.messaging</groupId>

<artifactId>okafka</artifactId>

<version>23.2.0.0</version>

<exclusions>

<exclusion>

<artifactId>osdt_core</artifactId>

<groupId>com.oracle.database.security</groupId>

</exclusion>

<exclusion>

<artifactId>osdt_cert</artifactId>

<groupId>com.oracle.database.security</groupId>

</exclusion>

</exclusions>

</dependency>

</dependencies>

<profiles>

<profile>

<id>consumer</id>

<build>

<plugins>

<plugin>

<groupId>org.codehaus.mojo</groupId>

<artifactId>exec-maven-plugin</artifactId>

<version>3.0.0</version>

<executions>

<execution>

<goals>

<goal>exec</goal>

</goals>

</execution>

</executions>

<configuration>

<executable>java</executable>

<arguments>

<argument>-Doracle.jdbc.fanEnabled=false</argument>

<argument>-classpath</argument>

<classpath/>

<argument>com.example.SimpleConsumerOKafka</argument>

</arguments>

</configuration>

</plugin>

</plugins>

</build>

</profile>

<profile>

<id>producer</id>

<build>

<plugins>

<plugin>

<groupId>org.codehaus.mojo</groupId>

<artifactId>exec-maven-plugin</artifactId>

<version>3.0.0</version>

<executions>

<execution>

<goals>

<goal>exec</goal>

</goals>

</execution>

</executions>

<configuration>

<executable>java</executable>

<arguments>

<argument>-Doracle.jdbc.fanEnabled=false</argument>

<argument>-classpath</argument>

<classpath/>

<argument>com.example.SimpleProducerOKafka</argument>

</arguments>

</configuration>

</plugin>

</plugins>

</build>

</profile>

</profiles>

</project>

This is a pretty straightforward POM. I just set the project’s coordinates, declared my one dependency, and then created the two profiles so I can run the code easily.

Next, we are going to need a file called ojdbc.properties in the same directory as the POM with this content:

user=mark

password=Welcome12345

The KafkaProducer and KafkaConsumer will use this to connect to the database.
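Since the clients depend on this file, it may be worth sanity-checking it before running anything. Here is a minimal sketch that uses only the JDK to confirm both keys are present. The class name OjdbcPropertiesCheck and its method are made up for illustration and are not part of OKafka or the original sample:

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Properties;

// Hypothetical helper for illustration only.
final class OjdbcPropertiesCheck {

    // Parses properties text and fails fast if "user" or "password" is missing or blank.
    static Properties parseAndValidate(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        for (String key : new String[] {"user", "password"}) {
            String value = props.getProperty(key);
            if (value == null || value.trim().isEmpty()) {
                throw new IllegalStateException("ojdbc.properties is missing '" + key + "'");
            }
        }
        return props;
    }

    public static void main(String[] args) throws IOException {
        // Defaults to ./ojdbc.properties, the directory used for oracle.net.tns_admin in this post.
        Path file = Paths.get(args.length > 0 ? args[0] : "ojdbc.properties");
        String text = new String(Files.readAllBytes(file), StandardCharsets.ISO_8859_1);
        Properties props = parseAndValidate(text);
        System.out.println("ojdbc.properties looks OK for user " + props.getProperty("user"));
    }
}
```

Running it from the project directory should either print the user name or throw with the name of the missing key.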

Create the consumer

Ok, now let’s create our consumer. In a directory called src/main/java/com/example, create a new Java file called SimpleConsumerOKafka.java with the following content:

package com.example;

import java.util.Properties;

import java.time.Duration;

import java.util.Arrays;

import org.oracle.okafka.clients.consumer.KafkaConsumer;

import org.apache.kafka.clients.consumer.ConsumerRecords;

import org.apache.kafka.common.header.Header;

import org.apache.kafka.clients.consumer.Consumer;

import org.apache.kafka.clients.consumer.ConsumerRecord;

public class SimpleConsumerOKafka {

public static void main(String[] args) {

// set the required properties

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("group.id" , "CG1");

props.put("enable.auto.commit","false");

props.put("max.poll.records", 100);

props.put("key.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("value.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

// create the consumer

Consumer<String , String> consumer = new KafkaConsumer<String, String>(props);

consumer.subscribe(Arrays.asList("TEQ"));

int expectedMsgCnt = 4000;

int msgCnt = 0;

long startTime = 0;

// consume messages

try {

startTime = System.currentTimeMillis();

while(true) {

try {

ConsumerRecords <String, String> records =

consumer.poll(Duration.ofMillis(10_000));

for (ConsumerRecord<String, String> record : records) {

System.out.printf("partition = %d, offset = %d, key = %s, value = %s\n ",

record.partition(), record.offset(), record.key(), record.value());

for(Header h: record.headers()) {

System.out.println("Header: " + h.toString());

}

}

// commit the records we received

if (records != null && records.count() > 0) {

msgCnt += records.count();

System.out.println("Committing records " + records.count());

try {

consumer.commitSync();

} catch(Exception e) {

System.out.println("Exception in commit " + e.getMessage());

continue;

}

// if we got all the messages we expected, then exit

if (msgCnt >= expectedMsgCnt ) {

System.out.println("Received " + msgCnt + ". Expected " +

expectedMsgCnt +". Exiting Now.");

break;

}

} else {

System.out.println("No records fetched. Retrying...");

Thread.sleep(1000);

}

} catch(Exception e) {

System.out.println("Inner Exception " + e.getMessage());

throw e;

}

}

} catch(Exception e) {

System.out.println("Exception from consumer " + e);

e.printStackTrace();

} finally {

long runDuration = System.currentTimeMillis() - startTime;

System.out.println("Application closing Consumer. Run duration " +

runDuration + " ms");

consumer.close();

}

}

}

Let’s walk through this code together.

The first thing we do is prepare the properties for the KafkaConsumer. This is fairly standard, though notice that the bootstrap.servers property contains the address of your database listener:

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("group.id" , "CG1");

props.put("enable.auto.commit","false");

props.put("max.poll.records", 100);

props.put("key.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

props.put("value.deserializer",

"org.apache.kafka.common.serialization.StringDeserializer");

Then, we add some Oracle-specific properties – oracle.service.name is the name of the service we are connecting to, in our case this is freepdb1; oracle.net.tns_admin needs to point to the directory where we put our ojdbc.properties file; and security.protocol controls whether we are using SSL, or not, as in this case:

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

With that done, we can create the KafkaConsumer and subscribe to a topic. Note that we use the Oracle version of KafkaConsumer, which is basically just a wrapper that understands those extra Oracle-specific properties:

import org.oracle.okafka.clients.consumer.KafkaConsumer;

// ...

Consumer<String , String> consumer = new KafkaConsumer<String, String>(props);

consumer.subscribe(Arrays.asList("TEQ"));

The rest of the code is standard Kafka code that polls for records, prints out any it finds, commits them, and then loops until it has received the number of records it expected and then exits.

Run the consumer

We can build and run the consumer with this command:

mvn exec:exec -P consumer

It will connect to the database and start polling for records. Of course, there won’t be any yet because we have not created the producer. It should output a message like this about every ten seconds:

No records fetched. Retrying...

Let’s write that producer!

Create the producer

In a directory called src/main/java/com/example, create a new Java file called SimpleProducerOKafka.java with the following content:

package com.example;

import org.oracle.okafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.Producer;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.clients.producer.RecordMetadata;

import org.apache.kafka.common.header.internals.RecordHeader;

import java.util.Properties;

import java.util.concurrent.Future;

public class SimpleProducerOKafka {

public static void main(String[] args) {

long startTime = 0;

try {

// set the required properties

Properties props = new Properties();

props.put("bootstrap.servers", "172.17.0.2:1521");

props.put("key.serializer",

"org.apache.kafka.common.serialization.StringSerializer");

props.put("value.serializer",

"org.apache.kafka.common.serialization.StringSerializer");

props.put("batch.size", "5000");

props.put("linger.ms","500");

props.put("oracle.service.name", "freepdb1");

props.put("oracle.net.tns_admin", ".");

props.put("security.protocol","PLAINTEXT");

// create the producer

Producer<String, String> producer = new KafkaProducer<String, String>(props);

Future<RecordMetadata> lastFuture = null;

int msgCnt = 4000;

startTime = System.currentTimeMillis();

// send the messages

for (int i = 0; i < msgCnt; i++) {

RecordHeader rH1 = new RecordHeader("CLIENT_ID", "FIRST_CLIENT".getBytes());

RecordHeader rH2 = new RecordHeader("REPLY_TO", "TOPIC_M5".getBytes());

ProducerRecord<String, String> producerRecord =

new ProducerRecord<String, String>(

"TEQ", String.valueOf(i), "Test message "+ i

);

producerRecord.headers().add(rH1).add(rH2);

lastFuture = producer.send(producerRecord);

}

// wait for the last one to finish

lastFuture.get();

// print summary

long runTime = System.currentTimeMillis() - startTime;

System.out.println("Produced "+ msgCnt +" messages in " + runTime + "ms.");

producer.close();

}

catch(Exception e) {

System.out.println("Caught exception: " + e );

e.printStackTrace();

}

}

}

This code is quite similar to the consumer. We first set up the Kafka properties, including the Oracle-specific ones. Then we create a KafkaProducer, again using the Oracle version which understands those extra properties. After that we just loop and produce the desired number of records.
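Because the producer and consumer repeat the same connection settings, one option is to keep them in a single place. The sketch below is just one way to do that with plain java.util.Properties. The class OKafkaProps and the OKAFKA_BOOTSTRAP environment variable are names I made up for illustration; the property keys and values are the ones used in this post:

```java
import java.util.Properties;

// Hypothetical helper; the classes in this post set these properties inline instead.
final class OKafkaProps {

    // Connection settings shared by the producer and the consumer.
    static Properties common(String bootstrapServers, String serviceName) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("oracle.service.name", serviceName);
        props.put("oracle.net.tns_admin", ".");
        props.put("security.protocol", "PLAINTEXT");
        return props;
    }

    // Consumer settings, same values as SimpleConsumerOKafka.
    static Properties consumer(String bootstrapServers, String serviceName, String groupId) {
        Properties props = common(bootstrapServers, serviceName);
        props.put("group.id", groupId);
        props.put("enable.auto.commit", "false");
        props.put("max.poll.records", 100);
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }

    // Producer settings, same values as SimpleProducerOKafka.
    static Properties producer(String bootstrapServers, String serviceName) {
        Properties props = common(bootstrapServers, serviceName);
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("batch.size", "5000");
        props.put("linger.ms", "500");
        return props;
    }

    public static void main(String[] args) {
        // OKAFKA_BOOTSTRAP is an assumed variable name; it falls back to the address used in this post.
        String bootstrap = System.getenv().getOrDefault("OKAFKA_BOOTSTRAP", "172.17.0.2:1521");
        System.out.println(consumer(bootstrap, "freepdb1", "CG1"));
    }
}
```

With something like this in place, the two main classes could pass OKafkaProps.consumer(...) or OKafkaProps.producer(...) to the KafkaConsumer and KafkaProducer constructors, and the listener address would only need to change in one spot.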

Make sure your consumer is still running (or restart it) and then build and run the producer with this command:

mvn exec:exec -P producer

When you do this, it will run for a short time and then print a message like this to let you know it is done:

Produced 4000 messages in 1955ms.

Now take a look at the output in the consumer window. You should see quite a lot of output there. Here’s a short snippet from the end:

partition = 0, offset = 23047, key = 3998, value = Test message 3998

Header: RecordHeader(key = CLIENT_ID, value = [70, 73, 82, 83, 84, 95, 67, 76, 73, 69, 78, 84])

Header: RecordHeader(key = REPLY_TO, value = [84, 79, 80, 73, 67, 95, 77, 53])

Committing records 27

Received 4000. Expected 4000. Exiting Now.

Application closing Consumer. Run duration 510201 ms

It prints out a message for each record it finds, including the partition ID, the offset, and the key and value. It then prints out the headers. You will also see commit messages, and at the end it prints out how many records it found and how long it was running for. I left mine running while I got the producer ready to go, so it shows a fairly long duration. But you can run it again and start the producer immediately after it and you will see a much shorter run duration.
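The header values in that output show up as raw byte arrays because the producer stored them with getBytes(). Here is a minimal sketch, using only the JDK, of turning them back into text. The class name HeaderValueDecoder is made up for illustration, and decoding as UTF-8 is an assumption (the producer used the platform default charset, which agrees with UTF-8 for these ASCII values):

```java
import java.nio.charset.StandardCharsets;

// Hypothetical helper for illustration only.
final class HeaderValueDecoder {

    // Decodes a header value as UTF-8 text; returns null for a null value.
    static String decode(byte[] value) {
        return value == null ? null : new String(value, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // The two byte arrays copied from the consumer output above.
        byte[] clientId = {70, 73, 82, 83, 84, 95, 67, 76, 73, 69, 78, 84};
        byte[] replyTo = {84, 79, 80, 73, 67, 95, 77, 53};
        System.out.println("CLIENT_ID = " + decode(clientId)); // prints CLIENT_ID = FIRST_CLIENT
        System.out.println("REPLY_TO = " + decode(replyTo));   // prints REPLY_TO = TOPIC_M5
    }
}
```

In the consumer, the same idea could be applied by printing h.key() and the decoded h.value() instead of h.toString().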

Well, there you go! That’s a Kafka producer and consumer using the new updated 23c version of the Kafka-compatible Java API for Transactional Event Queues. Stay tuned for more!

Personally, I work on Windows 11 with the Windows Subsystem for Linux and Ubuntu 20.04. Of course you can adjust these instructions to work on macOS or Linux.

Java

First thing we need is the Java Development Kit. I used Java 17, here’s a permalink to download the latest tar for x64: https://download.oracle.com/java/17/latest/jdk-17_linux-x64_bin.tar.gz

You can just decompress that in your home directory and then add it to your path:

export JAVA_HOME=$HOME/jdk-17.0.3

export PATH=$JAVA_HOME/bin:$PATH

You can verify it is installed with this command:

$ java -version

java version "17.0.3" 2022-04-19 LTS

Java(TM) SE Runtime Environment (build 17.0.3+8-LTS-111)

Java HotSpot(TM) 64-Bit Server VM (build 17.0.3+8-LTS-111, mixed mode, sharing)

Great! Now, let’s move on to build automation.

Maven

You can use Maven or Gradle to build Spring Boot projects, and when you generate a new project from Spring Initializr (more on that later) it will give you a choice of these two. Personally, I prefer Maven, so that’s what I document here. If you prefer Gradle, I’m pretty sure you’ll already know how to set it up.

I use Maven 3.8.6, which you can download from the Apache Maven website in various formats. Here’s a direct link for the tar.gz file: https://dlcdn.apache.org/maven/maven-3/3.8.6/binaries/apache-maven-3.8.6-bin.tar.gz

You can also just decompress this in your home directory and add it to your path:

export PATH=$HOME/apache-maven-3.8.6/bin:$PATH

You can verify it is installed with this command:

$ mvn -v

Apache Maven 3.8.6 (84538c9988a25aec085021c365c560670ad80f63)

Maven home: /home/mark/apache-maven-3.8.6

Java version: 17.0.3, vendor: Oracle Corporation, runtime: /home/mark/jdk-17.0.3

Default locale: en, platform encoding: UTF-8

OS name: "linux", version: "5.10.102.1-microsoft-standard-wsl2", arch: "amd64", family: "unix"

Ok, now we are going to need an IDE!

Visual Studio Code

These days I find I am using Visual Studio Code for most of my coding. It’s free, lightweight, has a lot of plugins, and is well supported. Of course, you can use a different IDE if you prefer.

Another great feature of Visual Studio Code that I really like is the support for “remote coding.” This lets you run Visual Studio Code itself on Windows but it connects to a remote Linux machine and that’s where the actual code is stored, built, run, etc. This could be an SSH connection, or it could be connecting to a WSL2 “VM” on your machine. This latter option is what I do most often. So I get a nice friendly, well-behaved native desktop application, but I am coding on Linux. Kind of the best of both worlds!

You can download Visual Studio Code from its website and install it.

I use a few extensions (plugins) that you will probably want to get too! These add support for the languages and frameworks and give you things like completion and syntax checking and so on:

You can install these by opening the extensions tab (Ctrl-Shift-X) and using the search bar at the top to find and install them.

Containers and Kubernetes

Since our microservices applications are almost certainly going to end up running in Kubernetes, it’s a good idea to have a local test environment. I like to use “docker compose” for initial testing locally and then move to Kubernetes later.

I use Rancher Desktop for both containers and Kubernetes on my laptop. There are other options if you prefer to use something different.

Oracle Database

And last, but not least, you will need the Oracle Database container image so we can run a local database to test against. If you don’t already have it, you will need to go to Oracle Container Registry first, and navigate to “Database,” then “Enterprise,” and accept the license agreement, then pull the image with these commands:

docker login container-registry.oracle.com -u [email protected]

docker pull container-registry.oracle.com/database/enterprise:21.3.0.0

Then you can start a database with this command:

docker run -d \

--name oracle-db \

-p 1521:1521 \

-e ORACLE_PWD=Welcome123 \

-e ORACLE_SID=ORCL \

-e ORACLE_PDB=PDB1 \

container-registry.oracle.com/database/enterprise:21.3.0.0

The first time you start it up, it will create a database instance for you. This takes a few minutes; you can watch the logs to see when it is done:

docker logs -f oracle-db

You will see this message in the logs when it is ready:

#########################

DATABASE IS READY TO USE!

#########################

You can then stop and start the database container as needed – you won’t need to wait for it to create the database instance each time, it will stop and start in just a second or two.

docker stop oracle-db

docker start oracle-db

You are going to want to grab its IP address for later on, you can do that with this command:

docker inspect oracle-db | grep IPAddress

This container image has SQL*Plus in it, and you can use that as a database command line client, but I prefer the new Oracle SQLcl which is a lot nicer – it has completion and arrow key navigation and lots of other cool new features. Here’s a permalink for the latest version: https://download.oracle.com/otn_software/java/sqldeveloper/sqlcl-latest.zip

You can just unzip this and add it to your path too, like Maven and Java.

You can connect to the database using SQLcl like this (use the IP address you got above):

sql sys/Welcome123@//172.12.0.2:1521/pdb1 as sysdba

Well, that’s about everything we need! In the next post we’ll get started building a Spring Boot microservice!

I’m looking forward to trying it out in Java 19.

Read all the details in this article by Nicolai Parlog – https://blogs.oracle.com/javamagazine/post/java-loom-virtual-threads-platform-threads

The first thing you are going to need is an Oracle Autonomous Database instance. If you are reading this post, you probably already know how to get one. But just in case you don’t – here’s a good reference to get you started – and remember, this is available in the “always free” tier, so you can try this out for free!

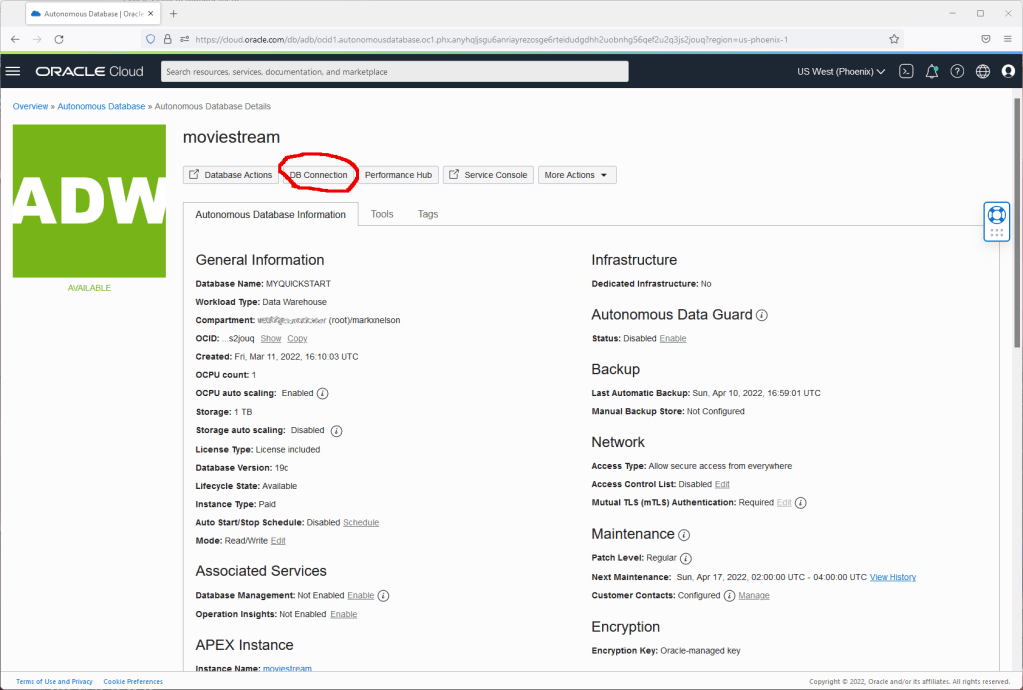

When you look at your instance in the Oracle Cloud (OCI) console, you will see there is a button labelled DB Connection – go ahead and click on that:

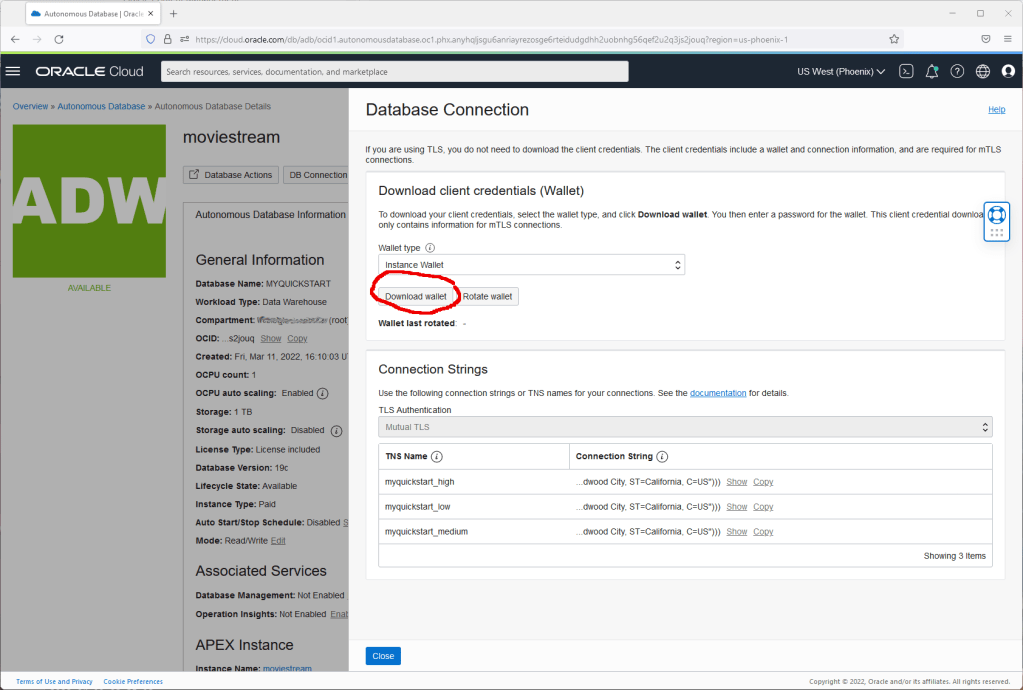

In the slide out details page, there is a button labelled Download wallet – click on that and save the file somewhere convenient.

When you unzip the wallet file, you will see it contains a number of files, as shown below, including a tnsnames.ora and sqlnet.ora to tell your client how to access the database server, as well as some wallet files that contain certificates to authenticate to the database:

$ ls

Wallet_MYQUICKSTART.zip

$ unzip Wallet_MYQUICKSTART.zip

Archive: Wallet_MYQUICKSTART.zip

inflating: README

inflating: cwallet.sso

inflating: tnsnames.ora

inflating: truststore.jks

inflating: ojdbc.properties

inflating: sqlnet.ora

inflating: ewallet.p12

inflating: keystore.jks

The first thing you need to do is edit the sqlnet.ora file and make sure the DIRECTORY entry matches the location where you unzipped the wallet, and then add the SSL_SERVER_DN_MATCH=yes option to the file. It should look something like this:

WALLET_LOCATION = (SOURCE = (METHOD = file) (METHOD_DATA = (DIRECTORY="/home/mark/blog")))

SSL_SERVER_DN_MATCH=yes

Before we set up Mutual TLS – let’s review how we can use this wallet as-is to connect to the database using a username and password. Let’s take a look at a simple Java application that we can use to validate connectivity – you can grab the source code from GitHub:

$ git clone https://github.com/markxnelson/adb-mtls-sample

This repository contains a very simple, single class Java application that just connects to the database, checks that the connection was successful and then exits. It includes a Maven POM file to get the dependencies and to run the application.

Make sure you can compile the application successfully:

$ cd adb-mtls-sample

$ mvn clean compile

Before you run the sample, you will need to edit the Java class file to set the database JDBC URL and user to match your own environment. Notice these lines in the file src/main/java/com/github/markxnelson/SimpleJDBCTest.java:

// set the database JDBC URL - note that the alias ("myquickstart_high" in this example) and

// the location of the wallet must be changed to match your own environment

private static String url = "jdbc:oracle:thin:@myquickstart_high?TNS_ADMIN=/home/mark/blog";

// the username to connect to the database with

private static String username = "admin";

You need to update these with the correct alias name for your database (it is defined in the tnsnames.ora file in the wallet you downloaded) and the location of the wallet, i.e. the directory where you unzipped the wallet, the same directory where the tnsnames.ora is located.

You also need to set the correct username that the sample should use to connect to your database. Note that the user must exist and have at least the connect privilege in the database.
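If you are not sure you picked the right alias, a small check can help. The following is a rough sketch, using only the JDK, that builds a URL in the same shape as the sample and looks for the alias in tnsnames.ora. The class WalletUrl and its methods are hypothetical, and the check assumes each entry starts a line in the form alias = (description=...), which is how the downloaded file is usually laid out:

```java
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; not part of the sample repository.
final class WalletUrl {

    // Builds a JDBC URL in the same shape as the one in SimpleJDBCTest.java.
    static String jdbcUrl(String alias, String walletDir) {
        return "jdbc:oracle:thin:@" + alias + "?TNS_ADMIN=" + walletDir;
    }

    // Returns true if some line of the tnsnames.ora text starts with "<alias> =" (case-insensitive).
    static boolean definesAlias(String tnsnamesText, String alias) {
        Pattern entry = Pattern.compile(
                "^\\s*" + Pattern.quote(alias) + "\\s*=",
                Pattern.MULTILINE | Pattern.CASE_INSENSITIVE);
        return entry.matcher(tnsnamesText).find();
    }

    public static void main(String[] args) throws Exception {
        // Defaults are the example values from this post; pass your own alias and wallet directory.
        String alias = args.length > 0 ? args[0] : "myquickstart_high";
        String walletDir = args.length > 1 ? args[1] : "/home/mark/blog";
        byte[] raw = Files.readAllBytes(Paths.get(walletDir, "tnsnames.ora"));
        String text = new String(raw, StandardCharsets.UTF_8);
        if (!definesAlias(text, alias)) {
            throw new IllegalArgumentException("Alias " + alias + " not found in tnsnames.ora");
        }
        System.out.println(jdbcUrl(alias, walletDir));
    }
}
```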

Once you have made these updates, you can compile and run the sample. Note that this code expects you to provide the password for that user in an environment variable called DB_PASSWORD:

$ export DB_PASSWORD=whatever_it_is

$ mvn clean compile exec:exec

You will see the output from Maven, and toward the end, something like this:

[INFO] --- exec-maven-plugin:3.0.0:exec (default-cli) @ adb-mtls-sample ---

Trying to connect...

Connected!

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

Great! We can connect to the database normally, using a username and password. If you want to be sure, try commenting out the two lines that set the user and password on the data source and run this again – the connection will fail and you will get an error!

Now let’s configure it to use mutual TLS instead.

I included a script called setup_wallet.sh in the sample repository. If you prefer, you can just run that script and provide the username and passwords when asked. If you want to do it manually, then read on!

First, we need to configure the Java class path to include the Oracle Wallet JAR files. Maven will have downloaded these from Maven Central for you when you compiled the application above, so you can find them in your local Maven repository:

- $HOME/.m2/repository/com/oracle/database/security/oraclepki/19.3.0.0/oraclepki-19.3.0.0.jar

- $HOME/.m2/repository/com/oracle/database/security/osdt_core/19.3.0.0/osdt_core-19.3.0.0.jar

- $HOME/.m2/repository/com/oracle/database/security/osdt_cert/19.3.0.0/osdt_cert-19.3.0.0.jar

You’ll need these for the command we run below – you can put them into an environment variable called CLASSPATH for easy access.

export CLASSPATH=$HOME/.m2/repository/com/oracle/database/security/oraclepki/19.3.0.0/oraclepki-19.3.0.0.jar

export CLASSPATH=$CLASSPATH:$HOME/.m2/repository/com/oracle/database/security/osdt_core/19.3.0.0/osdt_core-19.3.0.0.jar

export CLASSPATH=$CLASSPATH:$HOME/.m2/repository/com/oracle/database/security/osdt_cert/19.3.0.0/osdt_cert-19.3.0.0.jar

Here’s the command you will need to run to add your credentials to the wallet (don’t run it yet!):

java \

-Doracle.pki.debug=true \

-classpath ${CLASSPATH} \

oracle.security.pki.OracleSecretStoreTextUI \

-nologo \

-wrl "$USER_DEFINED_WALLET" \

-createCredential "myquickstart_high" \

$USER >/dev/null <<EOF

$DB_PASSWORD

$DB_PASSWORD

$WALLET_PASSWORD

EOF

First, set the environment variable USER_DEFINED_WALLET to the directory where you unzipped the wallet, i.e. the directory where the tnsnames.ora is located.

export USER_DEFINED_WALLET=/home/mark/blog

You’ll also want to change the alias in this command to match your database alias. In the example above it is myquickstart_high. You get this value from your tnsnames.ora – it’s the same one you used in the Java code earlier.

Now we are ready to run the command. This will update the wallet to add your user’s credentials and associate them with that database alias.

Once we have done that, we can edit the Java source code to comment out (or remove) the two lines that set the user and password:

//ds.setUser(username);

//ds.setPassword(password);

Now you can compile and run the program again, and this time it will get the credentials from the wallet and will use mutual TLS to connect to the database.

$ mvn clean compile exec:exec

... (lines omitted) ...

[INFO] --- exec-maven-plugin:3.0.0:exec (default-cli) @ adb-mtls-sample ---

Trying to connect...

Connected!

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

There you have it! We can now use this wallet to allow Java applications to connect to our database securely. The example we used was pretty simple, but you could imagine perhaps putting this wallet into a Kubernetes secret and mounting that secret as a volume for a pod running a Java microservice. This provides separation of the code from the credentials and certificates needed to connect to and validate the database, and helps us to build more secure microservices. Enjoy!

Match on Strings in a switch statement

No longer do we have to write those ugly if statements like the one shown below. Some people put the variable and the constant the other way around, but then you might get a NullPointerException, so you have to check for that too, which is even more ugly. I prefer to do it the way shown, but every time I type one, I can’t help feeling a little bit of distaste.

if ("something".equals(whatever)) { /* handle it */ }

But now, we can write these much more elegantly. Here is an example:

public class Test {

public static void main(String[] args) {

if (args.length != 1) {

System.out.println("You must specify exactly one argument");

System.exit(0);

}

switch (args[0]) {

case "fred":

System.out.println("Hello fred");

break;

case "joe":

System.out.println("Hello joe");

break;

default:

break;

}

}

}
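One thing to watch for: switching on a String that is null throws a NullPointerException, and the case labels are matched case-sensitively. Here is a small sketch of the same idea wrapped in a method with a null guard. The class name Greeter and the "Hello stranger" fallback are made up for illustration:

```java
// Hypothetical example class for illustration only.
final class Greeter {

    // Returns a greeting; guards against null because switch (null) would throw.
    static String greet(String name) {
        if (name == null) {
            return "Hello stranger";
        }
        switch (name) {
            case "fred":
                return "Hello fred";
            case "joe":
                return "Hello joe";
            default:
                // Also reached for "Fred", since matching is case-sensitive.
                return "Hello stranger";
        }
    }

    public static void main(String[] args) {
        System.out.println(greet(args.length == 1 ? args[0] : null));
    }
}
```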

Try with resources

Java 7 introduces a ‘try with resources’ construct which allows us to define our resources for a try block and have them automatically closed after the try block is completed – either successfully or when an exception occurs. This makes things a lot neater. Let’s take a look at the example below which includes both the ‘old’ way and the ‘new’ way:

import java.io.File;

import java.io.FileInputStream;

import java.io.InputStream;

import java.io.IOException;

import java.io.FileNotFoundException;

public class Try {

public static void main(String[] args) {

// the old way...

InputStream oldfile = null;

try {

oldfile = new FileInputStream(new File("myfile.txt"));

// do something with the file

} catch (IOException e) {

e.printStackTrace();

} finally {

// we need to check if the InputStream oldfile is still open

try {

if (oldfile != null) oldfile.close();

} catch (IOException ioe) {

// ignore

}

}

// the new way...

try (InputStream newfile = new FileInputStream(new File("myfile.txt"))) {

// do something with the file

} catch (IOException e) {

e.printStackTrace();

}

// the InputStream newfile will be closed automatically

}

}

When we do things the old way, there are a few areas of ugliness:

- We are forced to declare our resource in the scope outside the try so that it will still be visible in the finally,

- We have to assign it a null value, otherwise the compiler will complain (correctly) that it may not have been initialized when we try to use it in the finally block,

- We need to write a finally block to clean up after ourselves,

- In our finally block, we first have to check if things worked or not, and if they did not, then we have to clean up,

- We need another try inside the finally since the cleanup operation in the finally is usually one that could throw another exception!

The new way is a lot cleaner. We simply define our resources in parentheses after the try and before the opening brace of the try code block. Multiple resources can be declared by separating them with semicolons (;). No need to define the resource in the outer scope (unless you actually want it to be available out there).

Also, you don’t have to write a finally block at all. Any resources defined this way will automatically be released when the try block is finished. Well not any resource, but any that implement the java.lang.AutoCloseable interface. Several standard library classes, including those listed below, implement this interface.

- java.nio.channels.FileLock

- javax.imageio.stream.ImageInputStream

- java.beans.XMLEncoder

- java.beans.XMLDecoder

- java.io.ObjectInput

- java.io.ObjectOutput

- javax.sound.sampled.Line

- javax.sound.midi.Receiver

- javax.sound.midi.Transmitter

- javax.sound.midi.MidiDevice

- java.util.Scanner

- java.sql.Connection

- java.sql.ResultSet

- java.sql.Statement

Much nicer!
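Your own classes can take part too, simply by implementing AutoCloseable. Here is a minimal sketch with two resources in one try, which also shows that they are closed in the reverse order of their declaration. The class names CloseOrderDemo and Tracked are made up for illustration:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical example class for illustration only.
final class CloseOrderDemo {

    // A trivial resource that records when it is opened and closed.
    static final class Tracked implements AutoCloseable {
        private final String name;
        private final List<String> log;

        Tracked(String name, List<String> log) {
            this.name = name;
            this.log = log;
            log.add("open " + name);
        }

        @Override
        public void close() {
            log.add("close " + name);
        }
    }

    // Opens two resources in one try and returns the recorded sequence of events.
    static List<String> run() {
        List<String> log = new ArrayList<>();
        try (Tracked first = new Tracked("first", log);
             Tracked second = new Tracked("second", log)) {
            log.add("body");
        }
        return log;
    }

    public static void main(String[] args) {
        // prints [open first, open second, body, close second, close first]
        System.out.println(run());
    }
}
```

Because Tracked.close() does not declare any checked exception, the try block here does not need a catch clause at all.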

Catch multiple-type exceptions

Ever found yourself writing a whole heap of catch blocks with the same code in them, just to handle different types of exceptions? Me too! Java 7 provides a much nicer way to deal with this:

try {

// do something

} catch (JMSException | IOException | NamingException e) {

System.out.println("Oh no! Something bad happened");

// do something with the exception object...

e.printStackTrace();

}

You can list the types of exceptions you want to catch in one catch block using a pipe (|) separator. You can still have other catch blocks as well.
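Two details worth knowing: the types in a multi-catch cannot be subclasses of one another, and the exception variable is implicitly final. Here is a self-contained sketch using two unrelated JDK exceptions instead of the JMS and naming ones above, so it runs without any extra libraries. The class and method names are made up for illustration:

```java
// Hypothetical example class for illustration only.
final class MultiCatchDemo {

    // Parses two integers and divides them, handling both failure modes in one catch block.
    static String parseAndDivide(String numerator, String denominator) {
        try {
            int n = Integer.parseInt(numerator);
            int d = Integer.parseInt(denominator);
            return String.valueOf(n / d);
        } catch (NumberFormatException | ArithmeticException e) {
            // e is implicitly final here, so it cannot be reassigned
            return "error: " + e.getClass().getSimpleName();
        }
    }

    public static void main(String[] args) {
        System.out.println(parseAndDivide("10", "2"));  // prints 5
        System.out.println(parseAndDivide("10", "0"));  // prints error: ArithmeticException
        System.out.println(parseAndDivide("ten", "2")); // prints error: NumberFormatException
    }
}
```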

Type Inference

I never thought I would see the day, and it is still a long way away from the type inference in languages like Scala, but we can now omit types when initializing a generic collection. One small step for Java… one huge leap for mankind!

Map<String, List<String>> stuff = new HashMap<>();

The ‘diamond operator’ (<>) is used for type inference. Saves a bunch of wasteful typing. However, it only works when the parameterised type is obvious from the context. So this won’t compile on Java 7 (Java 8 and later improved the inference and accept it):

List<String> list = new ArrayList<>();

list.addAll(new ArrayList<>());

But this will:

List<String> list = new ArrayList<>();

List<? extends String> list2 = new ArrayList<>();

list.addAll(list2);

Why? The addAll() method has a parameter of Collection<? extends String>. In the first example, since there is no context, the compiler will infer the type of new ArrayList<>() in the second line to be ArrayList<Object> and Object does not extend String.

The moral of the story: just because you might be able to use <> in a method call – probably you should try to limit its use to just variable definitions.

Nicer Numeric Literals

There are also a couple of small enhancements to numeric literals. Firstly, support for binary numbers using the ‘0b’ prefix (C programmers everywhere breathe a sigh of relief) and secondly, you can put underscores (_) between digits to make numbers more readable. That’s between digits only, nowhere else, not first, or last, or next to a decimal point or type indicator (like ‘f’, ‘l’, ‘b’, ‘x’).

Here are some examples:

// this is a valid AMEX number used for test transactions, you can't buy anything with it

// but it will pass the credit card number validation tests

long myCreditCardNumber = 3782_822463_10005L;

long myNameInHex = 0x4D_41_52_4B;

long myNameInBinary = 0b01001101_01000001_01010010_01001011;

long populationOfEarth = 6_852_472_823L; // I'm glad I didn't have to count this!
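To convince yourself that the underscores and the binary prefix do not change the value, here is a small sketch that compares a hex and a binary literal and decodes the bytes back to text. The class name LiteralDemo and its helper are made up for illustration, and the literals are my own spelling of the letters M, A, R, K as bytes:

```java
// Hypothetical example class for illustration only.
final class LiteralDemo {

    // Interprets the four bytes of the value as ASCII characters, high byte first.
    static String fourBytesToAscii(int value) {
        char[] chars = new char[4];
        for (int i = 0; i < 4; i++) {
            chars[i] = (char) ((value >>> (24 - 8 * i)) & 0xFF);
        }
        return new String(chars);
    }

    public static void main(String[] args) {
        int hex = 0x4D_41_52_4B;
        int binary = 0b01001101_01000001_01010010_01001011;
        System.out.println(hex == binary);         // prints true
        System.out.println(fourBytesToAscii(hex)); // prints MARK
        System.out.println(1_000_000 == 1000000);  // prints true
    }
}
```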

New Look and Feel for Swing

There is a new look and feel for Swing (which it desperately needed!) called ‘Nimbus.’ It looks a bit like this:

Or this:

It was kinda there as a draft for a little while already in Java 6. Why is it good? It can be rendered at any resolution, and it is a lot faster (yeah!) and arguably better looking than those boring old ones!

Better parallel performance

I attended a presentation by Doug Lea a couple of days ago, and he told us proudly that Java 7 also has some of his code buried in there that has long been waiting to see the light of day. A new work stealing queue implementation in the scheduler which should provide a good boost to performance of parallel tasks. This should be especially helpful as we write more and more parallel software in these days of more cores rather than higher frequencies. Especially nice if you are writing using an easy concurrent programming model like Actors in Scala, but still good if you are struggling through threads, locks, latches and all that. Thanks Doug!
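I am assuming here that this refers to the fork/join framework in java.util.concurrent, which arrived in Java 7 and uses work stealing between its worker threads. Here is a minimal sketch of how it can be used to sum an array in parallel. The class names and the threshold of 1,000 elements are arbitrary choices for illustration, not tuned values:

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Hypothetical example class for illustration only.
final class ForkJoinSum {

    // Recursively splits the range in half until it is small enough to sum directly.
    static final class SumTask extends RecursiveTask<Long> {
        private static final int THRESHOLD = 1_000;
        private final long[] data;
        private final int from;
        private final int to;

        SumTask(long[] data, int from, int to) {
            this.data = data;
            this.from = from;
            this.to = to;
        }

        @Override
        protected Long compute() {
            if (to - from <= THRESHOLD) {
                long sum = 0;
                for (int i = from; i < to; i++) {
                    sum += data[i];
                }
                return sum;
            }
            int mid = (from + to) >>> 1;
            SumTask left = new SumTask(data, from, mid);
            SumTask right = new SumTask(data, mid, to);
            left.fork();                     // queue the left half; idle workers may steal it
            long rightSum = right.compute(); // work on the right half in this thread
            return left.join() + rightSum;
        }
    }

    // Sums the array using a pool sized to the available processors.
    static long sum(long[] data) {
        ForkJoinPool pool = new ForkJoinPool();
        try {
            return pool.invoke(new SumTask(data, 0, data.length));
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        long[] data = new long[1_000_000];
        for (int i = 0; i < data.length; i++) {
            data[i] = i + 1;
        }
        System.out.println(sum(data)); // prints 500000500000
    }
}
```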

Summary

Well, there you have it: a few small steps forward. I am looking forward to seeing a few bigger steps in Java 8!
