Team Members: Dr. Phil: Sebastian Ingino (ingino[at]stanford[dot]edu), Andrew (Drew) Woen (amwoen[at]stanford[dot]edu), Vidur Gupta (vidur[at]stanford[dot]edu), Max Cura (mcura[at]stanford[dot]edu)

Original Authors: Shengkai Lin, Shizhen Zhao, Peirui Cao, Xinchi Han, Quan Tian, Wenfeng Liu, Qi Wu, Donghai Han, and Xinbing Wang

Source: Lin, S., Zhao, S., Cao, P., Han, X., Tian, Q., Liu, W., Wu, Q., Han, D., and Wang, X. ONCache: A Cache-Based Low-Overhead container overlay network. In 22nd USENIX Symposium on Networked Systems Design and Implementation (NSDI 25) (Philadelphia, PA, Apr. 2025), USENIX Association, pp. 979–998. https://www.usenix.org/system/files/nsdi25-lin-shengkai.pdf.

Key Results: Compared to standard overlay networks (Cilium and Antrea), ONCache improves throughput to near-bare-metal levels, significantly reduces latency, and lowers CPU utilization. Our results find that ONCache, compared to Antrea for a single flow, provides 17% better throughput and a 38% better request-response transaction rate for TCP (119% and 25% respectively for UDP).

Introduction: Containers are lightweight, standalone execution environments that bundle an application with its dependencies and isolate it from the host. Their low overhead makes them ideal for running multiple services on the same machine. Kubernetes orchestrates the deployment and management of containerized applications across a cluster of multiple hosts. However, having multiple hosts means that container-to-container traffic must travel across the host network, which makes routing container-to-container communication more difficult. To solve this, Kubernetes requires a Container Network Interface (CNI) plugin to handle IP address assignment to containers and facilitate such communication.

While there are many ways to achieve this, one of the most flexible and transparent solutions is an “overlay network”, which assigns IP addresses to containers, encapsulates packets using a tunneling protocol (e.g., VXLAN or GENEVE), and then sends them over the physical network. This adds significant overhead to inter-container communication: encapsulation and decapsulation add layers to the packet egress and ingress paths, each of which must look up information about the current container topology. While less performant, overlay networks fully decouple the container from the underlying physical network and require no changes to the container.

The original paper analyzes the tunneling behavior in existing overlay networks and finds key invariants that allow the results of these processes to be cached and bypassed for later packets. Specifically, connection tracking, packet filtering, intra-host routing decisions, and outer header processing are constant during the lifetime of a flow, assuming no changes in the overlay network. As such, these invariants can be cached to bypass large parts of the overhead introduced into the ingress and egress paths by the overlay network. They then present ONCache, an extension to existing overlay networks that uses eBPF programs–sandboxed code running directly in the kernel–to perform this caching and provide ingress and egress fast-paths. To do so, the egress and ingress paths are hooked with eBPF programs at both ends. When a packet enters either the ingress or egress path, ONCache will do a cache lookup; if it hits, the overlay network’s extra layers can be skipped, and if it misses, ONCache will tag the packet so that the corresponding eBPF program at the other end of the path can read where the packet was routed to and fill in the cache accordingly (this is shown in Fig. 1 below; the bee icons represent eBPF programs). ONCache is transparent to the overlay and host networks, and provides a major performance improvement with minimal effort.
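The miss, tag, and fill cycle described above can be sketched in a few lines of Python. This is only an illustrative model under stated assumptions, not ONCache's eBPF code: the `Packet` and `PathEnd` names, the flow key layout, and the string standing in for the cached routing decision are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Packet:
    flow: tuple             # hypothetical flow key: (src_ip, dst_ip, src_port, dst_port, proto)
    miss_tag: bool = False  # set by the entry hook when the cache lookup misses


@dataclass
class PathEnd:
    """One direction (egress or ingress) of the overlay path, hooked at both ends."""
    cache: dict = field(default_factory=dict)
    slow_path_runs: int = 0

    def entry_hook(self, pkt):
        # Hook at the start of the path: look up the flow, tag the packet on a miss.
        decision = self.cache.get(pkt.flow)
        if decision is None:
            pkt.miss_tag = True
        return decision

    def slow_path(self, pkt):
        # Stand-in for the overlay's own processing (filtering, routing, encapsulation).
        self.slow_path_runs += 1
        return f"route-for-{pkt.flow[1]}"

    def exit_hook(self, pkt, decision):
        # Hook at the end of the path: a tagged packet fills the cache for its flow.
        if pkt.miss_tag:
            self.cache[pkt.flow] = decision
            pkt.miss_tag = False

    def send(self, pkt):
        decision = self.entry_hook(pkt)
        if decision is not None:
            return decision, "fast"   # cache hit: overlay layers are skipped
        decision = self.slow_path(pkt)
        self.exit_hook(pkt, decision)
        return decision, "slow"


path = PathEnd()
flow = ("10.0.1.2", "10.0.2.3", 40000, 5201, "tcp")
first = path.send(Packet(flow))   # miss: goes through the overlay and fills the cache
second = path.send(Packet(flow))  # hit: served from the cache
```

Only the first packet of a flow pays for the overlay's layers in this model; later packets reuse the cached decision until the entry is invalidated, which the sketch does not cover.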

Fig. 1 Architecture of ONCache. Diagram from original paper, pg. 983 (originally Fig. 1).

We attempted to reproduce the original paper’s Figure 5 (below–we label it Fig. 2), which the authors used to demonstrate the increased throughput, increased request-response transaction rate, and decreased CPU utilization for both TCP and UDP across multiple flow counts. Furthermore, we wanted to corroborate the following claim based on Figure 5:

“Compared to the standard overlay networks, ONCache improves throughput and request-response transaction rate[] by 12% and 36% for TCP (20% and 34% for UDP), respectively, while significantly reducing per-packet CPU overhead” (Lin et al., 979)

We selected this figure because it clearly demonstrates the value proposition of ONCache across two measures of performance and how it is affected by the quantity of simultaneous flows. Note that we test a subset of the original comparisons: bare metal and two existing overlay networks (Antrea and Cilium). Additionally, we do not implement the optional kernel extension for optimizing the egress data path presented in 3.6; this is not tested in Figure 5.

Fig 2. Original results. Graphs from original paper, pg. 987 (originally Fig. 5).

Their Methods: To evaluate ONCache, the authors use three c6525-100g nodes on CloudLab, each with a 24-core (48 hyper-thread) AMD 7402P processor at 2.80 GHz, 128 GB ECC memory, and a Mellanox ConnectX-5 Ex 100 Gb NIC. They do not specify the network setup. Each machine uses Ubuntu 20.04 with Linux kernel 5.14. The testing was performed on Kubernetes v1.23 with Antrea v1.9.0 and Cilium v1.12.4. The authors do not specify the Kubernetes topology.

The authors specify that they conduct tests with one host having all of the client containers and a second having all of the server containers where all client containers are simultaneously making requests to the server containers. They use iperf3 for testing throughput and netperf for testing the request-response (RR) transaction rate. From personal correspondence, we know the authors used a 1 MB TCP payload with iperf3 and a 60-second netperf RR test in receive mode. The CPU utilization is measured on the server side using mpstat. They do not specify the iperf3 or netperf versions they used.

Our Methods: We use the same configuration on CloudLab (three c6525-100g nodes), and assume that the authors used a single switch connected to each node along a 100 Gbps link. Note that this is the only possible configuration as each NIC only has one port available for experiment use. We use the same version of Ubuntu (20.04.6 LTS), Linux kernel (5.14.21), Antrea (v1.9.0), and Cilium (v1.12.4). We use a newer version of Kubernetes (v1.32); we made this decision as the authors’ version (v1.23) reached end-of-life on February 28, 2023 and is thus extremely difficult to install. We verified that Kubernetes has made no changes to their treatment of container networks (by examining Kubernetes changelogs), which was expected as container networks are implemented by CNI plugins–Kubernetes does not handle inter-container networking itself. We also performed limited empirical verification by checking our results across several Kubernetes versions and observed no changes.

Our topology uses one controller node and two worker nodes; this is the most logical configuration as Kubernetes prevents scheduling containers on the controller by default. At the beginning of each test, we deploy the maximum number of containers needed to each node (32 for our tests); while we could’ve chosen to deploy only the required number of containers for each test iteration, the unused containers are either indefinitely sleeping or awaiting a connection and therefore use a negligible amount of resources. Additionally, we reuse the containers for each flow count (1 to 32) and packet type (TCP or UDP) which has a negligible effect on performance, as ONCache initializes its fast path extremely quickly (~30μs) compared to the length of the tests (60 seconds).

We conduct 60-second tests in the same manner as the original paper, with the same split between client and server containers by worker. For iperf3, we use v3.7-3 on bare metal (Ubuntu 20.04 LTS) and v3.12-1 (Debian bookworm) in the container (using image networkstatic/iperf3); we found no performance differences between the two versions in testing. For measuring TCP throughput, we use a 1MB buffer, and for measuring UDP throughput we disable iperf3’s bandwidth limit and use an 8KB buffer. For netperf, we use v2.7.0 on bare metal and the containers (using image networkstatic/netperf) with the same configuration as the original paper. However, rather than using mpstat to measure the CPU utilization, we use the built-in CPU utilization reporting for both iperf3 and netperf; we found these values to be more consistent, easier to measure, and possibly more accurate than those from mpstat. They may be more accurate because self-reported CPU utilization accounts only for the user and system time that iperf3 and netperf spend processing packets, and ignores overhead from other workloads on the machine, such as handling Kubernetes management traffic.

Tests are run sequentially by number of flows (powers of two from 1 to 32), packet type (TCP or UDP), and benchmark (throughput or RR). After deploying all of the containers for the benchmark, we wait for all containers to stabilize as reported by Kubernetes before running any workloads. We do not use a delay between any tests or a warm-up period as there is no indication that the original paper does so.

Our Result: We were able to replicate the results from the original paper, showing that ONCache reduces a significant amount of overhead in overlay networks. As done in the original paper, all data is the average of a single flow. Additionally, for all “CPU” graphs, utilization is normalized by the associated benchmark (throughput or RR) and scaled by Antrea’s value.
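As a rough illustration of the normalization used for the “CPU” graphs, the sketch below divides CPU utilization by the benchmark value and then scales every network by Antrea’s result. The function names and the sample measurements are hypothetical and are not our data.

```python
def normalized_cpu(cpu_percent, benchmark_value):
    """CPU utilization per unit of work (per Gbps, or per transaction/s)."""
    return cpu_percent / benchmark_value


def scale_to_baseline(per_unit, baseline="Antrea"):
    """Express every network's normalized CPU relative to the baseline's value."""
    base = per_unit[baseline]
    return {name: value / base for name, value in per_unit.items()}


# Hypothetical measurements, NOT our data: (CPU %, throughput in Gbps).
samples = {"Antrea": (50.0, 20.0), "ONCache": (45.0, 30.0)}
per_unit = {name: normalized_cpu(cpu, gbps) for name, (cpu, gbps) in samples.items()}
scaled = scale_to_baseline(per_unit)
```

With these made-up inputs, Antrea is 1.0 by construction and ONCache comes out at 0.6, meaning it spends 40% less CPU per Gbps than Antrea.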

Fig 3. Replicated results.

Our results find that ONCache, compared to Antrea for a single flow, provides 17% better throughput and a 38% better request-response transaction rate for TCP (119% and 25% respectively for UDP). Figures 4 and 5 below are the same graphs paired with the original paper’s results, with our replication on the bottom of both sets of graphs. Major differences to note are: significantly lower Cilium throughput than expected, leading to the extremely high Cilium UDP throughput CPU utilization after scaling; and significantly higher bare-metal RR than expected, leading to lower RR CPU utilization after scaling.

Fig 4. Original results (top) and replicated results (bottom): TCP

Fig 5. Original results (top) and replicated results (bottom): UDP

Discussion: Examining the TCP throughput figure, we see that ONCache (light-blue) matches bare-metal throughput almost perfectly at a slightly higher value than the original paper. We see Antrea match after 4 flows as the 100 Gbps link is saturated, and Cilium joins at 16 flows. The difference for UDP throughput is even more drastic; we can see ONCache almost perfectly matches the bare-metal performance, which is significantly higher than both Antrea and Cilium. As previously mentioned, Cilium throughput was lower than expected for both packet types, and we can also see that UDP throughput for Antrea is much lower compared to the original results; we do not have a good explanation for these at this time. While the TCP throughput CPU utilization is not as clear as the original plot, we still see a measurable improvement over Antrea, with a clear win for ONCache in UDP throughput CPU utilization.

The request-response results also show an obvious improvement for ONCache, with the results closely matching the original paper except for bare metal. Our RR CPU utilization for ONCache is slightly higher than expected (for both TCP and UDP) but is still lower than Antrea and Cilium. As previously mentioned, bare-metal RR performance is much higher than expected for both packet types; our best explanation for this is that the original authors ran this benchmark after setting up the Kubernetes cluster while ours were done on fresh hosts.

Implementation Details and Additional Findings: While ONCache features a relatively simple pitch–bypass layers of the networking stack via caching–there are many key details that affect performance and overall behavior. Our code contains a large number of comments detailing all of these; we will discuss a subset of these here.

First, while the paper focuses on VXLAN (RFC 7348) as the encapsulation protocol which we initially designed our implementation for, Antrea uses GENEVE (RFC 8926) by default. VXLAN and GENEVE are quite similar as both encapsulate the underlying packet in a UDP packet plus an additional header. Since the destination port is always the same depending on the protocol, the source port does not matter for correctness. However, the source port matters significantly for performance, and both RFCs specify that the source port be calculated using a hash of the encapsulated packet’s headers such that all packets in the same flow have the same source port. This allows the receiver’s Linux kernel to properly perform packet/flow steering for multiprocessor systems and thus achieve greater parallelism. We initially had a bug in our implementation that incorrectly calculated the source port, leading to poor UDP RR performance at higher flow counts. Note that this behavior is additionally important for equal-cost multi-path (ECMP) routing, which we do not observe the effects of in our setup.
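The source-port rule can be sketched as follows. This is a minimal illustration, not our eBPF code. CRC32 is only a stand-in for whatever hash a real implementation uses. The port range is the dynamic/private range that RFC 7348 recommends for VXLAN. The function name and the string-based flow key are assumptions made for the example.

```python
import zlib

VXLAN_PORT = 4789    # IANA-assigned VXLAN destination port (RFC 7348)
GENEVE_PORT = 6081   # IANA-assigned GENEVE destination port (RFC 8926)
PORT_MIN, PORT_MAX = 49152, 65535  # dynamic/private range recommended by RFC 7348


def tunnel_source_port(src_ip, dst_ip, src_port, dst_port, proto):
    """Derive the outer UDP source port from the inner packet's flow headers."""
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    flow_hash = zlib.crc32(key)  # stand-in hash; an assumption, not the real one
    return PORT_MIN + flow_hash % (PORT_MAX - PORT_MIN + 1)


# Every packet of one inner flow maps to the same outer source port ...
port_a = tunnel_source_port("10.0.1.2", "10.0.2.3", 40000, 5201, "udp")
port_b = tunnel_source_port("10.0.1.2", "10.0.2.3", 40000, 5201, "udp")
# ... while different inner flows are spread across the range.
ports = {tunnel_source_port("10.0.1.2", "10.0.2.3", p, 5201, "udp")
         for p in range(40000, 40032)}
```

Because the outer 5-tuple then differs per inner flow, receive-side steering on the destination host can place different flows on different cores while keeping each flow's packets together.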

Secondly, while the paper focuses on the implementation of the caching layer and fast path, we found the user-space daemon to be more complicated in terms of ONCache initialization. To understand initialization, it’s important to note that the smallest deployable unit in Kubernetes is not a container but rather a pod: a group of one or more co-located containers. When a pod is fully deployed (called “ready” by Kubernetes), the daemon must iterate through all containers and attach the egress eBPF program to their outer virtual ethernet interface (in the root namespace) and the ingress initialization eBPF program to the inner interface (in the container namespace). The process of doing the latter is complicated; the daemon must determine the PID of the container, step into its namespace, and attach the program. As our daemon is written in Go and makes use of goroutines which can be scheduled across multiple OS threads, it is important to lock the OS thread during this operation or else another goroutine could run before the container namespace is exited.

Lastly, we discovered that both throughput and request-response rate vary drastically depending on the Linux kernel version used. For instance, while Linux 5.14 provides a bare-metal TCP throughput of 39.3 Gbps, Linux 5.15 gets 33.9 Gbps (-14%) and Linux 6.8 gets 30.1 Gbps (-23%). The same is true for TCP RR: -31% for Linux 5.15 and -34% for Linux 6.8 compared to Linux 5.14. We have no explanation for this behavior at this time. Interestingly, we found no difference between Linux 5.14 on Ubuntu 20.04.6 LTS (which shipped with Linux 5.4) and Linux 5.14 on Ubuntu 22.04.5 LTS (which shipped with Linux 5.15).
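The throughput percentages above follow directly from the measured values; a short check, using only the numbers quoted in the text (the helper name is ours):

```python
def percent_change(new, baseline):
    """Relative change of `new` against `baseline`, in percent."""
    return (new - baseline) / baseline * 100


baseline_gbps = 39.3  # bare-metal TCP throughput on Linux 5.14
for kernel, gbps in {"5.15": 33.9, "6.8": 30.1}.items():
    print(f"Linux {kernel}: {percent_change(gbps, baseline_gbps):+.0f}%")
```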

Lessons Learned: Throughout the process of implementing ONCache, we learned a significant amount about encapsulation protocols and how the kernel can have a major impact on networking performance. As discussed in the previous section, our mistake in computing the UDP encapsulation source port was detrimental to performance and difficult to triage. It highlights the importance of following the specification as closely as possible; the authors also noted this issue.

Our experiences also underscore the importance of using the same software version to achieve comparable results, and also the importance of reproducible builds and tests. We reproduced the authors’ numbers after downgrading from Linux 6.8 to 5.14. With the variance of results across different software versions, it is extremely helpful for papers to include details of the experimental setup and even better if the testing apparatus is published along with the artifacts; we thank the authors for responding to our questions on this.

Acknowledgements: We’d like to thank Shengkai Lin, the first author of “ONCache: A Cache-Based Low-Overhead Container Overlay Network” for answering our questions surrounding testing and implementation details. We’d also like to thank Keith Winstein for his advice on network performance across Linux kernel versions.

Reproduction: Code and instructions are available at https://github.com/sebastianingino/CS244-oncache/

Team Members: Atindra Jha ([email protected]); Onkar Deshpande ([email protected])

Source: https://dl.acm.org/doi/10.1145/1282427.1282421

Key result: Delivering web pages over the Structured Stream Transport (SST) protocol is as fast as delivering them over TCP with HTTP/1.1 pipelining.

BACKGROUND

Structured Stream Transport:

Structured Stream Transport (SST) is presented as a transport-layer “sweet spot” that combines the complementary strengths of TCP and UDP while avoiding many of their well-known drawbacks. Modern web stacks frequently load a single page through a patchwork of connections: small, latency-sensitive objects (voice samples, video frames, RPCs) often ride over UDP, whereas anything that must arrive intact (HTML, CSS, JavaScript bundles, JSON APIs) travels on one or more TCP streams. This split is messy. Every TCP flow pays for a three-way handshake, the TIME_WAIT drain on port state, and often contends unfairly for bandwidth when applications open dozens of parallel connections. UDP avoids those costs, but its loss probability skyrockets once packets grow beyond a single MTU, forcing applications to build ad-hoc fragmentation, reassembly, and reliability logic. Worse, developers must stitch together state across both transports to understand which datagrams correspond to which stream or request.

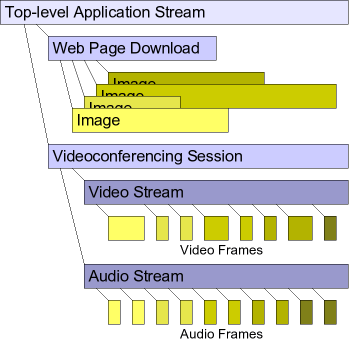

Figure 1: The high level architecture of SST. The protocol is split into 3 sub-protocols: The negotiation protocol sets up the channel and other initialization parameters. The channel protocol handles congestion control and ack delivery to the higher level stream protocol. The stream protocol handles the logical streams and optional packet retransmission for reliable delivery.

Why classic HTTP delivery struggles

A typical HTTP/1.x page triggers tens or hundreds of fetches: the base document, then images, style sheets, scripts, fonts, and so on. Over a single persistent TCP connection this mix suffers head-of-line blocking: one lost segment stalls every subsequent response. HTTP pipelining lets the client queue requests aggressively, but the server must still reply in order, so a slow or dropped response blocks the rest. Firing up six to eight parallel TCP sockets (the general browser limit) mitigates blocking, yet re-introduces handshake delay, port and state churn, and unfair sharing of bandwidth.

SST’s core abstraction: channels and lightweight streams

SST introduces a two-level hierarchy. A channel is the long-lived, congestion-controlled pipe between peers, conceptually similar to a TCP connection (except it does not handle retransmission, only ack delivery). Inside that channel an application can spawn structured streams, which are cheap, sub-flow entities that inherit the parent channel’s congestion window but maintain their own sequence space, acknowledgments, and retransmissions. Creating or closing a child stream requires no handshake, no extra sockets, and no TIME_WAIT pause, so thousands of micro-transactions can coexist without bloating kernel state or congestion control metadata. Because each stream retransmits independently, the loss of one object no longer freezes its siblings. Head-of-line blocking disappears even while all streams share a single congestion context.

Unifying datagrams and reliable delivery

Within a channel a stream can be declared reliable or best-effort. Reliable streams behave like miniature TCP connections: every byte is acknowledged and retransmitted as needed. Best-effort streams look like UDP, except they still benefit from path-friendly congestion control. This duality elegantly solves the “large datagram” problem: instead of chunking a video frame across multiple UDP packets whose combined loss probability grows exponentially, an application can send the frame as a best-effort stream.
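To make the “large datagram” problem concrete: if a datagram is split into n packets and each packet is lost independently with probability p (independence is a simplifying assumption, and the function name is ours), the whole datagram survives only when every packet does.

```python
def datagram_loss_probability(packet_loss, fragments):
    """Chance that at least one of `fragments` packets is lost.

    Assumes independent per-packet loss, which real networks only approximate.
    """
    return 1 - (1 - packet_loss) ** fragments


single = datagram_loss_probability(0.01, 1)    # one-packet datagram
tenfold = datagram_loss_probability(0.01, 10)  # datagram split into 10 packets
```

At 1% packet loss, a ten-packet datagram is lost about 9.6% of the time, almost ten times as often as a single-packet one.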

In sum, SST offers a coherent transport model in which applications create as many lightweight streams as their workload demands, enjoying TCP-grade fairness and optional reliability without paying TCP’s handshake, serialization, or TIME_WAIT taxes. By decoupling congestion control from retransmission granularity, it provides an attractive middle ground for the heterogeneous demands of modern web applications.

ORIGINAL RESULT AND METHODOLOGY

To evaluate the performance of SST, the authors compare HTTP over SST with HTTP over various TCP variants under simulated conditions. SST is implemented as a user space protocol running over UDP. The experiment models a typical residential internet connection using a 1.5 Mbps DSL link (with about 50ms minimum latency) and leverages the “UC Berkeley Home IP Web Traces” dataset. This dataset provides detailed information about real-world web activity, including request and response sizes.

To reconstruct realistic browsing sessions, the authors group requests by client IP and assume that any request ending in .html signifies the start of a new webpage. All subsequent requests are treated as secondary objects (such as images, stylesheets, or scripts) associated with the most recent .html page. These grouped page loads are then replayed in the simulator using a single client and server setup.

Under this setup, response times scale predictably with each transport variant. The results show that SST performs comparably to, or better than, traditional TCP variants in terms of total request–response time per web page, especially when dealing with pages that require a large number of secondary requests.

The paper mentions in Section 5.5 that they used “a fragment of the UC Berkeley Home IP web client traces available from the Internet Traffic Archive” but does not specify the exact filename. Nor does it state how long the simulations ran. Figure 8 in the paper shows scatter plots of page load times against total page size for different numbers of requests per page, but does not indicate how many pages were simulated in total or whether there was a simulation time limit. The paper is more concerned with relative performance between HTTP variants than with absolute simulation parameters, which is typical for protocol comparison studies.

OUR RESULT AND METHODOLOGY

We were able to replicate the results using a simulated link. We ran the simulation using ns-3 over docker.

We started by parsing UCB trace data into WebPage structures with primary/secondary request classification. Since the traces do not indicate which requests belong to one web page, we followed the authors in approximating this information by classifying requests by extension into “primary” (e.g., ‘.html’ or no extension) and “secondary” (e.g., ‘.gif’, ‘.jpg’, ‘.class’), and then associating each contiguous run of secondary requests with the immediately preceding primary request. For our evaluation we used the 4 hour snippet of trace data UCB-home-IP-848278026-848292426.tr.gz, which we parsed using a trace parser that we wrote. We ran the simulation with time=1000 (seconds) for each TCP flavor as well as SST. We kept the one-way propagation delay at 25 ms and bandwidth at 1.5 Mbps to match the conditions under which the authors conducted their experiments.
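The grouping heuristic can be sketched as below. This is a simplified illustration rather than our actual trace parser: the input is assumed to be already decoded into (client, URL, size) tuples, the helper names are hypothetical, and treating every extension other than ‘.html’ or none as secondary is an assumption made for brevity.

```python
import os

PRIMARY_EXTENSIONS = {".html", ""}  # ".html" or no extension starts a new page


def is_primary(url):
    """Classify a request by the extension of its path (query string ignored)."""
    path = url.split("?", 1)[0]
    return os.path.splitext(path)[1].lower() in PRIMARY_EXTENSIONS


def group_pages(requests):
    """Group (client_ip, url, response_bytes) tuples, in trace order, into pages."""
    pages = []
    current = {}  # client_ip -> page currently being built for that client
    for client, url, size in requests:
        if is_primary(url):
            page = {"client": client, "primary": (url, size), "secondary": []}
            pages.append(page)
            current[client] = page
        elif client in current:
            current[client]["secondary"].append((url, size))
        # Secondary requests seen before any primary are dropped (an assumption).
    return pages


trace = [
    ("1.1.1.1", "/index.html", 4000),
    ("1.1.1.1", "/logo.gif", 1200),
    ("1.1.1.1", "/photo.jpg", 9000),
    ("1.1.1.1", "/news/", 3000),
    ("1.1.1.1", "/applet.class", 700),
]
pages = group_pages(trace)
```

The example trace yields two pages: `/index.html` with two secondary objects and `/news/` with one.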

In our TCP: HTTP/1.0 serial implementation (file titled http-trace-simulation.cc in our Github repository linked at the bottom of this page), HttpSerialClient processes web pages sequentially by making one request at a time within each page, which matches the paper’s description of “early web browsers that load pages by opening TCP connections for each request sequentially.” Key implementation details include using separate TCP connections for each HTTP request via the HttpSerialClient that creates new sockets per request; and measuring page load times from the start of the primary request to completion of all secondary requests.

Our TCP: HTTP/1.0 parallel implementation (http-parallel-simulation.cc in our Github repository) accurately captures the paper’s description of browsers that “open up to eight single-transaction TCP streams in parallel.” The HttpParallelClient manages a pool of 8 ParallelConnection objects, where each request gets its own dedicated TCP connection that closes after completion (true HTTP/1.0 behavior). Our implementation correctly follows the two-phase loading pattern described in the paper: first the primary HTML request completes, then all secondary requests (images, etc.) are launched simultaneously up to the 8-connection limit, with additional requests queued until connections become available. The key architectural difference from the serial version is the connection pooling mechanism (ProcessPendingRequests(), GetAvailableConnectionIndex()) that enables concurrent request processing rather than sequential execution. As evident in Figure 4 above, this approach significantly reduces page load times compared to the serial version when the number of requests per page is three or more (the three rightmost plots). When there are fewer than three requests per page, TCP: HTTP/1.0 parallel matches the performance of TCP: HTTP/1.0 serial (the two leftmost plots). This matches the authors’ observation and results in Figure 8 of the paper (Figure 3 in this blog).
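A toy model of the two-phase pattern with a bounded connection pool is sketched below. It is not the ns-3 code: each request is reduced to a fixed duration, and handshakes, bandwidth sharing, and congestion control are ignored, so only the queueing behaviour is shown. The function name and the durations are hypothetical.

```python
import heapq


def page_load_time(primary, secondary, max_connections=8):
    """Completion time of a page, given per-request durations in seconds."""
    # Phase 1: the primary request must finish before anything else starts.
    now = primary
    # Phase 2: each pool slot is tracked by the time at which it becomes free.
    free_at = [now] * max_connections
    heapq.heapify(free_at)
    finish = now
    for duration in secondary:           # pending requests are served in FIFO order
        start = heapq.heappop(free_at)   # earliest available connection
        end = start + duration
        finish = max(finish, end)
        heapq.heappush(free_at, end)
    return finish


eight = page_load_time(1.0, [1.0] * 8)   # all secondaries fit in the pool at once
nine = page_load_time(1.0, [1.0] * 9)    # the ninth request waits for a free slot
serial = page_load_time(1.0, [1.0] * 9, max_connections=1)
```

In this model a page with eight equal secondary requests finishes in 2 s, a ninth request adds a full extra round (3 s), and the same page loaded serially takes 10 s.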

Our HTTP/1.1 persistent implementation (http-persistent-simulation.cc in our Github repository) accurately reflects the paper’s description of “modern browsers that use up to two concurrent persistent TCP streams as per RFC 2616.” The HttpPersistentClient maintains exactly 2 PersistentConnection objects that use HTTP/1.1 with “Connection: keep-alive” headers, allowing multiple requests to be sent sequentially over the same TCP connection without the overhead of establishing new connections for each request. Critically, our implementation avoids pipelining – each connection waits for the current request to complete (tracked via the isBusy flag) before sending the next request, which matches the paper’s note that this represents “persistent but non-pipelined TCP streams” that were the “current common case” when the paper was written. The server-side HttpPersistentServer properly supports connection reuse by maintaining per-socket buffers and processing multiple sequential requests. This approach reduces the connection establishment overhead of HTTP/1.0 while avoiding the complexity and compatibility issues of pipelining.

For our HTTP/1.1 pipelined implementation we tried the set-up with “two concurrent streams with up to four pipelined requests each” as described in the paper but also went beyond the paper’s basic description to try an “optimized” version using 6 connections (m_maxConnections(6)) with load balancing based on pending bytes (totalPendingBytes), size-based request sorting to minimize head-of-line blocking, and adaptive pipeline depth for small requests. The core pipelining mechanism correctly sends multiple requests without waiting for responses (using sentRequests and pendingRequests queues) while processing responses in FIFO order to maintain HTTP semantics. Surprisingly, our optimizations did not make any noticeable difference in the results. There was a slight difference in the number of pages completed and average page load time (2-3%) between the non-optimized and optimized versions, but the average request time and the plotted results were nearly identical.
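The two-queue pipelining mechanism can be illustrated with a small model. The class below is a hypothetical simplification, not our ns-3 client: it tracks only request identifiers and a fixed pipeline depth of four, with `pending` and `sent` playing the roles of the pendingRequests and sentRequests queues.

```python
from collections import deque


class PipelinedConnection:
    """One persistent connection with a bounded pipeline of outstanding requests."""

    def __init__(self, max_depth=4):
        self.max_depth = max_depth
        self.pending = deque()  # requests not yet sent
        self.sent = deque()     # requests sent and awaiting a response, in order

    def enqueue(self, request_id):
        self.pending.append(request_id)
        self._fill_pipeline()

    def _fill_pipeline(self):
        # Send without waiting for responses, up to the pipeline depth.
        while self.pending and len(self.sent) < self.max_depth:
            self.sent.append(self.pending.popleft())

    def on_response(self):
        # Responses arrive in request order, so the oldest sent request completes.
        done = self.sent.popleft()
        self._fill_pipeline()
        return done


conn = PipelinedConnection()
for request_id in range(6):
    conn.enqueue(request_id)
in_flight_before = list(conn.sent)   # first four requests are in flight
completed = conn.on_response()       # oldest request completes first
in_flight_after = list(conn.sent)    # a queued request takes the freed slot
```

Because responses can only complete from the head of `sent`, a slow response at the front delays everything behind it, which is the head-of-line blocking that size-based sorting tries to reduce.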

Our SST implementation (http-sst-implementation.cc in our Github repository) represents a faithful translation of Ford’s SIGCOMM’07 protocol design, implementing the complete layered architecture with a UDP-based channel protocol that provides packet sequencing, acknowledgments, and shared TCP-friendly congestion control across all streams. The implementation captures SST’s key innovation for web workloads: using HTTP/1.0 semantics (one transaction per stream) while eliminating TCP’s connection establishment overhead through lightweight stream creation using Local Stream IDs (LSIDs). HttpSstClient creates unlimited parallel streams without handshaking delays after the initial channel setup, with all streams sharing a single congestion window – exactly matching the paper’s description of how “SST: HTTP/1.0 parallel” achieves the performance of HTTP/1.1 pipelining with lower complexity. The packet format follows Figure 3 from the paper with proper channel and stream headers, and the retransmission mechanism correctly assigns new sequence numbers to retransmitted packets (a key SST requirement).
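The rule that retransmissions get fresh sequence numbers can be shown with a minimal channel model. The `Channel` class and its method names are hypothetical, and congestion control, timers, and packet formats are left out.

```python
class Channel:
    """Toy channel: every transmission, including a retransmission, gets a new number."""

    def __init__(self):
        self.next_seq = 0
        self.in_flight = {}  # channel sequence number -> (stream_id, payload)

    def send(self, stream_id, payload):
        seq = self.next_seq
        self.next_seq += 1
        self.in_flight[seq] = (stream_id, payload)
        return seq

    def ack(self, seq):
        # An acknowledged packet no longer needs tracking.
        self.in_flight.pop(seq, None)

    def retransmit(self, lost_seq):
        # The lost packet's data is sent again under a NEW sequence number.
        stream_id, payload = self.in_flight.pop(lost_seq)
        return self.send(stream_id, payload)


channel = Channel()
first = channel.send(stream_id=1, payload=b"GET /index.html")
second = channel.send(stream_id=2, payload=b"GET /logo.gif")
resent = channel.retransmit(first)  # stream 1's data now travels as a new packet
channel.ack(second)
```

Since sequence numbers are never reused in this model, an acknowledgment always refers to exactly one transmission, so there is no ambiguity about whether it covers the original packet or the retransmitted copy.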

It is evident in Figure 4 above that we were able to replicate the results that the authors presented in Figure 8 of the paper (Figure 3 above). Our SST implementation achieved performance in-line with our TCP: HTTP/1.1 pipelined implementation. Similar to the authors’ observation, the difference between the various TCP flavors and the SST implementation became more pronounced as the number of requests per page increased (as we move from the leftmost plot towards the right).

On the rightmost plot in Figure 4 above (9+ requests per page), where the differences between our various implementations are the most pronounced, we can see that TCP: HTTP/1.0 serial had the largest Request+Response time. The TCP: HTTP/1.1 persistent implementation is noticeably faster than our serial implementation and was very close to the performance of TCP: HTTP/1.0 parallel. Finally, the TCP: HTTP/1.1 pipelined and SST: HTTP/1.0 parallel implementations perform very similarly and have the lowest Request+Response time. The ever-so-slight differences between the plots in Figure 3 and Figure 4 above are because: (1) we ran the simulation for 1000 seconds and thus have significantly more data points; and (2) the exact name and portion of the trace file that the authors used for their simulation (of the five files on the UC Berkeley Home IP Web Traces website) were not mentioned in the paper (so we used the small 4 hour snippet provided on the website).

CHALLENGES, OBSERVATIONS, AND LESSONS LEARNED

The foremost challenge we faced when replicating the aforementioned results was the amount of code that had to be written: roughly 6000 to 7000 lines. Additionally, implementing TCP: HTTP/1.1 pipelined showed us why most browsers had the feature turned off – it had several parameters (number of concurrent connections, pipeline depth), implementation complexities associated with the pipelining mechanism, and optimization choices (like size-based request sorting to minimize head-of-line blocking). Surprisingly, removing some of these optimizations (like size-based sorting, which would not be possible in a realistic scenario) and changing the number of concurrent connections and the maximum pipeline depth did not change the resulting plot noticeably.

Moreover, congestion control is hard. The congestion control algorithm might seem easy but there are many pitfalls with ACK delivery, retransmission of packets and multiplexing of connections. We noticed that packet loss had little effect on the results of our SST: HTTP/1.0 parallel implementation. This might be an artifact of the evaluation testbed. Since it’s a single client-server point to point connection, there is very little packet loss and thus the results did not change much when we added packet retransmission in our implementation.

STEPS TO REPLICATE OUR RESULTS

- Install Docker

- Clone our repo: https://github.com/merceod/CS244_SST.git

- The Dockerfile installs ns-3 among other dependencies and downloads/parses the traces. It contains 5 ENTRYPOINTs. Run the next step with each ENTRYPOINT one-by-one (each one produces the output log for one of the four TCP variants or our SST simulation).

- In the repo run this command (REMEMBER to change “output.txt” to an appropriate name for each of the 5 runs):

docker build . && docker run -it -v ./scratch:/ns-allinone-3.44/ns-3.44/scratch $(docker build -q . ) > output.txt

- Produce the plots using our graphing script as follows (the .txt files below are the output logs for each of the 5 runs in the previous step):

python new_graphing.py --files trace_serial_time_1000_mP_0.txt trace_pipelined_time_1000_mP_0.txt trace_parallel_time_1000_mP_0.txt trace_persistent_time_1000_mP_0.txt trace_sst_time_1000_mP_0.txt --labels "TCP: HTTP/1.0 serial" "TCP: HTTP/1.1 pipelined" "TCP: HTTP/1.0 parallel" "TCP: HTTP/1.1 persistent" "SST: HTTP/1.0 parallel" --output ser_pipe_para_pers_sst_time_1000_mP_0.png --stats --show

REFERENCES

- Structured Streams: a New Transport Abstraction https://bford.info/pub/net/sst/#x1-350005.3 Bryan Ford, MIT. ACM SIGCOMM 2007

- UC Berkeley Home IP Web Traces https://ita.ee.lbl.gov/html/contrib/UCB.home-IP-HTTP.html

- ns-3 | a discrete-event network simulator for internet systems https://www.nsnam.org

Team Members: Francis Chua (fqchua[at]stanford[dot]edu), Adam Lambert (sfadam[at]stanford[dot]edu), June Lee (junelee1[at]stanford[dot]edu)

Source Paper: Arghavani, Mahdi, et al. “SUSS: Improving TCP Performance by Speeding up Slow-Start.” Proceedings of the ACM SIGCOMM 2024 Conference, 4 Aug. 2024, pp. 151–165, https://doi.org/10.1145/3651890.3672234.

Key Result: We were able to replicate the speedup of CUBIC with SUSS compared to CUBIC without SUSS.

Introduction

The paper our group chose to replicate was “SUSS: Improving TCP Performance by Speeding Up Slow Start” by Mahdi Arghavani, Haibo Zhang, David Eyers, and Abbas Arghavani. This paper aims to address the problems that come with traditional TCP slow-start algorithms, including low bandwidth utilization, slow increase of data delivery rate, and high flow completion time (FCT), especially for smaller flows, which make up much of current network traffic. To solve these issues, the authors propose SUSS (Speeding Up Slow Start), a sender-side add-on to TCP slow start. SUSS aims to predict whether the congestion window (cwnd) is likely to grow exponentially in the next RTT. If so, the SUSS algorithm grows the cwnd in the current RTT.

The SUSS paper contains two key contributions.

First, SUSS predicts whether the exponential growth of cwnd will continue in the next round. SUSS bases this prediction on the exit conditions in HyStart, the default Linux slow-start approach used in the CUBIC congestion control algorithm. For each round of sent packets, the two conditions are as follows:

- The time between the start of the current round (the receipt of the first acknowledgement in the last “train” of packets) and the receipt of the last acknowledgement (ACK) in the “train” of packets must be within half the minimum RTT.

- The minimum observed RTT of the current round must be less than 1.125 times the overall minimum RTT.

If these two conditions are met, SUSS predicts that cwnd growth will continue in the next RTT and accelerates the growth of cwnd in the current round, increasing the cwnd by two upon each ACK.
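The prediction step described above can be sketched in a few lines of Python. This is a minimal illustration and not the kernel code from the paper or from our implementation: the names `RoundSample`, `predicts_continued_growth`, and `cwnd_increment_per_ack` are hypothetical, all times are assumed to be in seconds, and treating "within half the minimum RTT" as a less-than-or-equal comparison is our assumption.

```python
from dataclasses import dataclass


@dataclass
class RoundSample:
    """Measurements for one slow-start round (hypothetical container, times in seconds)."""
    ack_train_duration: float  # time from the first to the last ACK of the round's packet train
    round_min_rtt: float       # smallest RTT observed during this round
    min_rtt: float             # smallest RTT observed over the whole connection


def predicts_continued_growth(s: RoundSample) -> bool:
    """Return True when both conditions listed above hold for this round."""
    # Condition 1: the ACK train fits within half the minimum RTT (<= is an assumption).
    train_ok = s.ack_train_duration <= s.min_rtt / 2
    # Condition 2: this round's minimum RTT is below 1.125 times the overall minimum RTT.
    delay_ok = s.round_min_rtt < 1.125 * s.min_rtt
    return train_ok and delay_ok


def cwnd_increment_per_ack(s: RoundSample) -> int:
    """Segments added to cwnd per ACK: 2 when accelerated, 1 as in ordinary slow start."""
    return 2 if predicts_continued_growth(s) else 1
```

For example, with a 50 ms minimum RTT, a round whose ACK train lasts 10 ms and whose minimum RTT is 52 ms satisfies both conditions, while a 30 ms ACK train or a 60 ms round minimum RTT does not.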

Second, SUSS aims to prevent the consequences that overly rapid cwnd growth could bring, such as burstiness or packet loss. It does so by using a combination of ACK clocking and packet pacing. SUSS has three distinct phases: the ACK clocking phase (shown in blue in the figure below), the packet pacing phase (shown in red), and the guard phase (shown in grey). In the clocking phase, SUSS sends out a train of packets whose length grows by a factor of two each round, just as in normal HyStart. During the pacing phase, the remaining data packets for the round that were not sent during clocking are sent at a predetermined, spaced-out pace. Finally, the guard phase acts as a buffer between the pacing and clocking phases to reduce the interference of pacing with the measurements taken during the clocking phase of the next round. By sending only the typical number of packets during clocking, as in normal HyStart, SUSS avoids causing undue congestion from the exponentially faster growth.

The results of the SUSS paper are especially relevant today, as traditional TCP’s issues, such as high flow completion time, become relatively worse for smaller flows. Small flows such as website assets or short social media videos would benefit greatly from speedups in transmission rate upon startup.

Chosen Claim to Replicate

We chose to replicate the authors’ claims that CUBIC with SUSS outperforms CUBIC without SUSS with minimal drawbacks and that accelerating slow start provides significant performance gains for small flows. We chose to replicate 3 figures:

Figure 10 shows the total amount of data delivered over time with and without SUSS on an unspecified testing configuration. We aimed to replicate the authors’ claim that CUBIC with SUSS on reaches the same optimal sending rate as CUBIC without SUSS, but more rapidly.

Figure 11 shows the performance of BBR, CUBIC with SUSS on, and CUBIC with SUSS off, tested using a Google Cloud server in Tokyo and clients in Sweden and New Zealand. The shaded area shows the standard deviation of 50 aggregate trials. We aimed to replicate the authors’ claim about the relative performance of CUBIC with and without SUSS on paths from end-user devices located on Stanford’s campus to Google Cloud instances that displayed similar minimum RTTs.

Figure 12 is derived from Figure 11 and shows the relative improvement of CUBIC with SUSS over CUBIC without SUSS across each tested scenario.

Process / Implementation Details

In this section, we discuss the implementation details of our code. The two figures below outline SUSS and the modifications the authors made to HyStart, which were the main focus of our implementation.

We modified the base code of CUBIC, which included code for HyStart. To compute Gi, the growth factor for the next round, we created variables to store packet sequence numbers tracking the first and last blue packets sent for the current round. We also calculated the duration of the blue (clocking) ACK train (data packets sent) using several other new variables, such as the number of blue packets sent and the current round’s start time, which we used to estimate the duration of the total sequence of ACKs for data packets sent in a round. Using all of these values, we were able to check whether the two key conditions for SUSS were met and set the growth factor accordingly. We also used variables such as the number of exponential growth rounds conducted thus far to calculate the ratio in the flowchart above and to check when we needed to end SUSS and go back to the original CUBIC slow start algorithm.

For transmissions, each arrival of an acknowledgement (ACK) would trigger the clocking phase, in which we would send twice the amount of data from the previous clocking phase. Using the cwnd ratio, we would calculate guard intervals within the code to provide a buffer between our clocking and pacing phases, and send the remaining data in the pacing phase to end up sending twice the data acknowledged overall.

During our implementation process, we realized there were certain ambiguities in the flowchart and in the paper. For example, some of the modifications the authors made to the HyStart flowchart, such as the count of blue ACKs received in the current round, were difficult to implement solely inside the HyStart functions. We ended up splitting logic across several different CUBIC and HyStart functions, as there was not a direct mapping from the flowchart to what was actually implemented in the kernel. Differences in how we did this were likely part of the reason why our SUSS implementation’s performance did not match the high level that the authors reported. It is also possible that the authors had optimizations in their code that we did not, which would contribute to our lower relative performance as well. That being said, we were able to replicate an improvement for CUBIC with SUSS over CUBIC without SUSS to a certain extent.

Methodology

The authors implemented SUSS as a modification to the HyStart slow start algorithm in the TCP CUBIC congestion control module in version 5.19.10 of the Linux kernel. The authors then recompiled the kernel with their modifications and launched instances running an Apache2 web server in seven locations: a server on a university campus in New Zealand, three servers on Google Cloud in the eastern United States, Tokyo, and Singapore, and three servers on Oracle Cloud Infrastructure in the western United States, Sydney, and London. They also reported running servers on Microsoft Azure and testing netcat and iperf web servers, but did not report results from Microsoft Azure due to space constraints, and found no difference in performance when using netcat or iperf over Apache2 as the web server. Additionally, the authors utilized a local testbed containing five Linux-based client-server pairs in a dumbbell topology using two routers. The bottleneck router ran netem, a Linux traffic control utility, enabling the authors to configure parameters like link speed and buffer size for the bottleneck router.

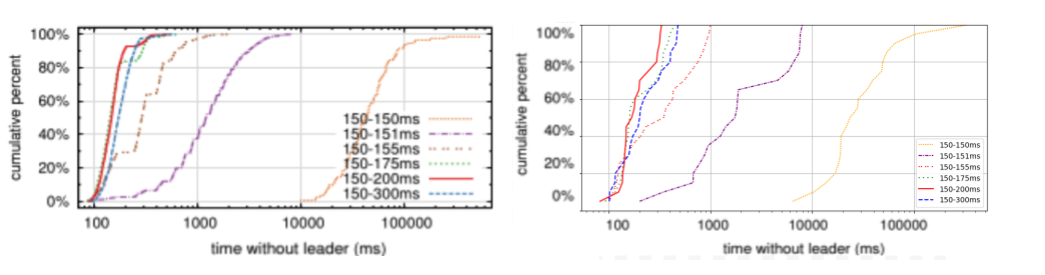

Clients were located in Sweden and New Zealand; the authors used desktop computers running Windows 10 with wired Ethernet connections, laptops running Linux 5.19.10 using Wifi, and mobile phones using Android 12 and iOS 17.3 over 4G and 5G links. To avoid interference by web browsers, the authors downloaded files using wget and curl from the command line. Each testing scenario (client-server pairing) consisted of downloading a file from the server 50 times, alternating between SUSS being on or off for each download. Server-side logging was then used to provide precise measurements of server-side connection state. The authors produced graphs of flow completion time (FCT) when using CUBIC with SUSS, CUBIC without SUSS, and BBR for 12MB file downloads using clients whose last hop was over a 5G link and over a wired link located in Sweden and clients whose last hop was over a Wifi link and a 4G link located in New Zealand.

In comparison, we rented instances on Google Cloud in the us-central1-c (Iowa) region with 12 x86 vCPUs and 6 GB of RAM with the 20.04 LTS Ubuntu image. We based our SUSS implementation on the 5.19.10 Linux kernel version as cited in the SUSS paper. We ran an Apache2 web server with files of various sizes and randomized contents and used curl to retrieve the files. In particular, we added the header “Connection: close” to ensure that the same TCP connection would not be reused across measurement runs. We used both curl trace logs and kernel timing logs to determine the transfer rate of our SUSS implementation.

Similarly to the authors’ testbed, our server included a filter for all traffic on port 80 to add a configurable delay via netem. Instead of choosing geographically distributed cloud providers to induce varying minRTTs, we used netem to get consistent and fine-grained control over the delay. Varying this parameter allowed us to see how our implementation of SUSS gives no speedup in flow completion time when the minRTT is low – even for small flows – contradicting the results in Table 1, but a very large speedup when the minRTT is artificially high (~500ms).
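As a rough illustration of the kind of netem setup described above, the sketch below builds (but does not run) a set of `tc` commands that delay only traffic sent from port 80. It is not the exact configuration we used: the function name `netem_delay_commands`, the interface name `eth0`, and the choice of a `prio` root qdisc with netem attached to band 1:3 are assumptions made for the example.

```python
def netem_delay_commands(delay_ms, dev="eth0", port=80):
    """Build tc commands that add `delay_ms` of delay to packets sent from `port`.

    The interface name and the prio/band layout are illustrative assumptions.
    The commands are returned as argument lists and are not executed here.
    """
    return [
        # Root priority qdisc so that a filter can steer selected traffic into one band.
        ["tc", "qdisc", "add", "dev", dev, "root", "handle", "1:", "prio"],
        # Attach netem with the requested delay to band 1:3.
        ["tc", "qdisc", "add", "dev", dev, "parent", "1:3", "handle", "30:",
         "netem", "delay", f"{delay_ms}ms"],
        # Send packets whose source port matches (e.g. HTTP responses) to the delayed band.
        ["tc", "filter", "add", "dev", dev, "protocol", "ip", "parent", "1:0",
         "prio", "3", "u32", "match", "ip", "sport", str(port), "0xffff",
         "flowid", "1:3"],
    ]


if __name__ == "__main__":
    for cmd in netem_delay_commands(500):
        print(" ".join(cmd))
```

Running the printed commands requires root privileges; changing the delay between experiments would amount to replacing the netem qdisc with a different `delay` value.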

Results / Analysis

Overall, we found somewhat similar results to the authors’ conclusions. In all graphs below, the blue line represents our results when using CUBIC with SUSS off, and the red line represents our results when using CUBIC with SUSS on.

Figure 10:

Original Fig. 10

Fig. 10 replication: On the left, additional 0ms induced. On the right, additional 500 ms induced.

Above, we see our replication compared to the authors’ original Figure 10 result. We show that our replication of CUBIC with SUSS outperforms CUBIC without SUSS when we artificially induce an additional 500 ms of delay and deliver 12 MB of random data. However, when no additional delay was induced, we found that CUBIC performed the same whether SUSS was on or off.

Figure 11 & 12:

Original Fig. 11

Replicated Fig. 11 with 500 ms induced

Original Fig. 12

Fig. 11, 12 replication: On the left, additional 0ms induced. On the right, additional 500 ms was induced.

Above, we see our replication compared to the authors’ original Figures 11 and 12 results. We specifically focused on replicating their WiFi link results. We show that when we induce 500ms of delay, the flow completion time for CUBIC with SUSS is shorter than the flow completion time for CUBIC without SUSS. We were surprised by our very large RTT as well as the high improvement in flow completion time. We believe, however, that this is due to our testing with >500ms RTT, which the authors never tested. These two extremes between 0ms and 600ms were chosen during our testing to understand exactly when our implementation was behaving as desired.

Moreover, despite the improvement, we believe our standard deviation was higher than the authors’, which we show in our replicated graph by graphing multiple flows instead of a shaded area. This could also be attributed to different implementation details or testing methods. However, we are still unsure why the authors’ graphed FCT improvement in Figure 12 slopes down after each jump instead of sloping up and jumping down as seen in our graph. This might be an artifact of our graphing, since the flow sizes are more fine-grained for normal TCP than for the SUSS implementation. Overall, we believe we were able to replicate the results of the SUSS paper, though our implementation’s results were more variable than those the authors report in the paper.

Lessons Learned

We learned several lessons while working on replicating SUSS. The most significant was how difficult kernel modification can be. Debugging kernel code was especially difficult, due both to the complexity of the code and to long build times (20+ minutes). Even with incremental recompilation, rebuilding the kernel after each modification took a significant amount of time, which made incremental development slow. We also learned that, to replicate an algorithm such as SUSS, it is essential to start implementing early. We initially spent a long time reading through the code and paper to make sense of the concepts and details, but starting to code earlier would have given us a greater understanding of what was going on.

We also believe that our testing and debugging process could have been greatly simplified if we had carried out userspace testing instead of working with the kernel. One possibility would have been to compile the relevant parts of our SUSS implementation as a userspace program and test them in a more easily controlled environment or sandbox. Because we never simulated traffic on a testbed and instead recorded live traffic, it was much more difficult for us to collect data and test. Overall, the process of replicating the SUSS paper gave us a deeper understanding of the difficulty of carrying out research and of the importance of planning and beginning development early.

Key Result(s): one-sentence, easily accessible description of each result.

Source(s): Dave Levin, Katrina LaCurts, Neil Spring, and Bobby Bhattacharjee. 2008. Bittorrent is an auction: analyzing and improving bittorrent’s incentives. SIGCOMM Comput. Commun. Rev. 38, 4 (October 2008), 243–254. https://doi.org/10.1145/1402946.1402987

Contacts: Yousef AbuHashem, Fabio Ibanez, Shounak Ray, Jacob Roberts-Baca

Introduction & Background

What is BitTorrent

BitTorrent is a peer-to-peer file sharing protocol that distributes large files efficiently by dividing them into small pieces and allowing peers to simultaneously download from and upload to multiple other peers. The protocol operates with seeders (peers with complete files), leechers (peers downloading files), and trackers (servers coordinating peer discovery). BitTorrent employs rarest-first piece selection and peer management through choking/unchoking mechanisms. Regular unchoking selects the fastest uploaders based on recent performance, while optimistic unchoking periodically gives chances to new peers. This design creates incentives for uploading while maximizing download efficiency across the swarm.

Project Motivation

BitTorrent has long been celebrated as a robust peer-to-peer file-sharing protocol, with many believing it uses a “tit-for-tat” mechanism that naturally incentivizes cooperation. However, research by Levin et al. revealed that BitTorrent doesn’t actually use tit-for-tat, and its incentive mechanisms are more vulnerable to gaming than previously thought.

The Core Problem

The researchers showed that BitTorrent’s unchoking algorithm, which is often described as a tit-for-tat system, actually functions more like an auction. In practice, peers “bid” for bandwidth by uploading data to their neighbors. Each peer selects a small number of other peers (typically three or four) to unchoke and send data to, based on who has uploaded the most to them recently. However, once selected, each of these top bidders receives an equal bandwidth allocation, regardless of how much they contributed. This design leads to a strategic behavior where peers aim to contribute just enough to remain among the top bidders, resulting in an incentive structure that encourages minimal cooperation.
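To make the auction behavior described above concrete, here is a minimal sketch of the regular unchoking step. It is an illustration only: the function name `auction_unchoke`, the dictionary of per-peer byte counts, and the tie-breaking by peer id are assumptions, and optimistic unchoking is deliberately left out.

```python
def auction_unchoke(received_bytes, upload_capacity, slots=4):
    """Split `upload_capacity` equally among the top `slots` recent uploaders.

    `received_bytes` maps a peer id to the bytes that peer recently uploaded to us.
    Peers that uploaded nothing never win a slot in this simplified model.
    """
    # Rank neighbors by recent contribution (largest first); ties broken by peer id.
    ranked = sorted(received_bytes, key=lambda p: (-received_bytes[p], p))
    winners = [p for p in ranked[:slots] if received_bytes[p] > 0]
    if not winners:
        return {}
    # Every winner gets the same share, regardless of how much it contributed.
    share = upload_capacity / len(winners)
    return {p: share for p in winners}


if __name__ == "__main__":
    recent = {"a": 900, "b": 500, "c": 100, "d": 90, "e": 10}
    print(auction_unchoke(recent, upload_capacity=100))
```

In this example peer "a" uploads ten times as much as peer "d", yet both receive the same share, which is why a strategic peer gains nothing from contributing more than the minimum needed to stay among the winners.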

This dynamic opens the door for exploitation. Some clients have been developed specifically to take advantage of these weaknesses:

- BitTyrant strategically determines the smallest upload contribution necessary to remain unchoked, maximizing download-to-upload ratio while minimizing actual contributions.

- BitThief avoids uploading almost entirely, yet still receives data by exploiting BitTorrent’s “optimistic unchoking” feature, which periodically unchokes random peers regardless of their past behavior in order to estimate their bandwidth.

These manipulations reduce overall system fairness and degrade performance for honest participants.

The PropShare Solution

To address these incentive misalignments, the authors propose PropShare, a new bandwidth allocation mechanism that replaces the auction-like system with a proportional sharing approach. Under PropShare, peers allocate their upload bandwidth in proportion to the amount of data received from each neighbor. This means that if a peer uploads twice as much to you as another peer does, it receives twice as much bandwidth in return. This proportional reciprocity is a better reflection of the tit-for-tat principle: the more you give, the more you get.
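The proportional rule described above can be written down directly. The following is a minimal sketch rather than the authors’ implementation: the name `propshare_allocate` and the per-peer byte-count dictionary are hypothetical, and it ignores how a client bootstraps when it has received nothing yet.

```python
def propshare_allocate(received_bytes, upload_capacity):
    """Allocate `upload_capacity` in proportion to what each neighbor uploaded to us.

    `received_bytes` maps a peer id to the bytes received from that peer.
    Returns a mapping from peer id to its allocated share of upload bandwidth.
    """
    total = sum(received_bytes.values())
    if total == 0:
        # Nothing received yet: this simple model has no basis for an allocation.
        return {}
    return {
        peer: upload_capacity * amount / total
        for peer, amount in received_bytes.items()
        if amount > 0
    }


if __name__ == "__main__":
    # Peer "a" uploaded twice as much as peer "b", so it gets twice the bandwidth.
    print(propshare_allocate({"a": 200, "b": 100}, upload_capacity=90))
```

Unlike an equal split among top bidders, every additional byte a neighbor contributes here increases its share, so there is no threshold to aim for.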

The researchers show that PropShare significantly improves fairness. It is much more difficult for selfish or malicious clients to game the system, because rewards scale with actual contribution. Additionally, PropShare is more robust against Sybil attacks (where a single attacker creates many fake identities to gain bandwidth) and collusion (where groups of clients share resources to manipulate outcomes).

Despite enforcing stronger fairness constraints and in many cases uploading less than the baseline BitTorrent protocol, Levin et al. demonstrate experimentally that PropShare maintains download speeds that are competitive with and often better than those achieved using BitTorrent’s original unchoking algorithm. Because the paper gives next to no explanation for why this is the case, we wanted to investigate this claim further, and it ultimately became the focus of our replication efforts.

What We’re Replicating

Our project aims to reproduce Figure 8 from the paper, which shows the performance comparison between PropShare and standard BitTorrent clients. Specifically, we want to replicate the graph that measures:

- X-axis: Fraction of PropShare clients in the swarm (0.0 to 1.0)

- Y-axis: Average download times in seconds

- Two series: One for BitTorrent clients, one for PropShare clients

Original Experimental Setup

The original research was conducted using the following configuration:

- Platform: PlanetLab distributed testbed with approximately 110 nodes

- Network setup: 3 seeders per file with 128 KBps combined upload capacity

- File characteristics: 5MB files with locally run tracker

- Bandwidth distribution: Heterogeneous bandwidth caps per peer (using Piatek et al.’s distribution)

- Statistical rigor: Each data point averaged over at least 3 runs with 95% confidence intervals

- Protocol: Peers departed immediately upon download completion, different files used between runs to avoid tracker limitations

Key Research Findings

The original research showed that:

- PropShare performs as well as or better than the traditional BitTorrent client implementation

- PropShare performance remains stable even as more peers adopt it (unlike BitTyrant, which suffers from tragedy of the commons)

- System-wide performance improves as more peers adopt PropShare

- PropShare maintains consistent low download times across all client fraction ratios, while BitTorrent clients experience increased download times as PropShare adoption grows

Methodology

Overview

To faithfully replicate the original study, we established a distributed experimental testbed using AWS. This approach was necessary because the original paper utilized PlanetLab, a global testbed for networking research that is no longer available. We developed infrastructure automation code to facilitate easy configuration and iteration of our experiments.

Our testbed consisted of 16 nodes distributed across multiple AWS regions, with a computational free-tier limit of 32 vCPUs. We deployed t2.micro Ubuntu instances configured with either proportional share or vanilla BitTorrent clients, mirroring the experimental design of the original paper. The deployment process included automatic security group creation with all-to-all communication rules and automatic instance termination to ensure clean experimental runs (specifically, ensuring that no unterminated instances leaked across runs).

BitTorrent Client Implementation

We implemented our vanilla BitTorrent client by modifying an existing PyTorrent implementation to follow the original BEP 3 BitTorrent specification. Our modifications include:

- Peer-to-peer seeding

- Peer discovery mechanisms

- Optimistic unchoking algorithm

- Auction-based unchoking algorithm

- Bandwidth tracking

- Increased frequency of peer exchange and discovery to ensure leechers are unchoked more rapidly

To ensure consistent experimental conditions, we implemented a torrent source generation function that creates fresh torrent files and maintains empty peer lists on a global tracker (rotating across different links) for each experimental run, guaranteeing a clean startup process. This results in leechers not having to idly wait to connect with other peers.

Experimental Setup

We used a declarative configuration setup using YAML, allowing for precise specification of node roles and distribution. The configuration included:

    aws:
      instance_type: t2.micro
      security_group: default
    bittorrent:
      github_repo: https://github.com/fabioibanez/Rogue-Packet.git
      seed_fileurl: https://raw.githubusercontent.com/fabioibanez/Rogue-Packet/vplex-hopeful/seeder_sources/torrent_1.dat
      torrent_url: https://raw.githubusercontent.com/fabioibanez/Rogue-Packet/vplex-hopeful/torrents/torrent_1.dat.torrent
    controller:
      port: 8080
    regions:
      - leechers: 1
        name: us-west-1
        seeders: 3
      - leechers: 4
        name: us-west-1
        seeders: 1
      ...more regions...
    timeout_minutes: 30

The testbed utilized 3 seeder nodes with a configurable fraction of proportional share machines among all leechers. We initially planned for multi-region distribution, but ultimately concentrated our deployment in the us-west-1 region since we were encountering unusually long latencies in other regions – which slowed down deployment times.

Deployment and Data Collection

Our deployment process followed a strict sequence to ensure experimental validity:

- Dependency Installation: Automated setup and dependency installation across all instances

- Controlled Launch Sequence: Leecher clients were launched first in parallel, followed by seeders launching in parallel, ensuring all leechers were running before seeders became available

- Real-time Monitoring: Streamed logs from instance VMs using an API server for real-time monitoring

- Data Collection: Download times and performance metrics were captured through streamed logs and stored both in on-disk CSV files and transmitted to a central controller VM

This controlled launch sequence addressed potential fairness issues where peers might miss initial discovery windows and only begin downloading after subsequent discovery cycles. The original paper did not specify the distribution strategy for leechers and seeders across regions, so we allocated these roles proportionally across our selected deployment areas.

We chose to implement custom infrastructure automation rather than using container orchestration platforms like Kubernetes or infrastructure-as-code tools like Terraform to maintain explicit control over the order of operations, including VM launches, software installation, and log communication timing. In retrospect, however, using one of the pre-existing options may have been simpler.

Results

In the end, we produced this figure:

There are a few things to note about the figure above. For one, there are missing data points: due to the difficulty of orchestrating the AWS nodes used for the experiment, as well as limitations on the number of cores, we only ran the experiment three times:

- 10 baseline clients, 5 PropShare clients (0.33)

- 6 baseline clients, 9 PropShare clients (0.6)

- 5 PropShare clients (1.0)

It should also be noted that the scale of the axes differs in our replicated experiments (average download times ranging from 20-40 seconds compared to 125-225 seconds in the original paper) due to the different download file sizes. We felt that this was unimportant, as we are more concerned with the performance of PropShare clients relative to baseline ones than with their absolute download times.

We see that in our data, as compared to Levin et al., PropShare clients operating together appear to download a file faster than a heterogeneous swarm composed of both client types. However, it is difficult to ignore the lurking variable of the number of clients in the swarm: the last experiment had only 5 clients, so perhaps less bandwidth was devoted to the metadata that clients need to, e.g., determine which pieces are available, and this resulted in a faster download time. In terms of relative performance, for the two heterogeneous trials, there does not appear to be a compelling statistical difference: the difference may as well have been due to randomness rather than to PropShare being a different implementation. We see this partly in the fact that PropShare outperforms the baseline in the first experiment while underperforming in the second, and partly in their widely overlapping confidence intervals.

We attribute the differences between our results and Levin et al.’s to three broad categories: unstable experimental structure, divergent methodology, and underspecification of proportional sharing.

For one, we are unsure if the results shown in Levin et al. are the result of randomness themselves. The paper mentions running three trials and averaging the results, such that each data point in the figure we are reproducing is the culmination of these three trials. That said, the original system run on PlanetLab is a complicated system with many dynamics at play, and there is reason to believe that without many more trials to verify this result, there may be a butterfly effect at play that results in an experimental structure with high variance. This is equally true of our own replication methodology.

Of course, our replication is not the same as Levin et al.’s–it could not be. The biggest reason for this is that PlanetLab has been discontinued, and so we could not run the experiment on the same hardware that Levin et al. used. Instead, we chose to run on a handful of AWS EC2 instances distributed across regions with the aim of approximating the original PlanetLab experiment. There are non-negligible differences with this approach–for example, PlanetLab nodes are geographically isolated, whereas EC2 instances in the same data center will have much lower latencies than those across regions.

We also felt that Levin et al.’s description of the implementation details of proportional share was lacking. For simplicity, we chose to implement proportional share probabilistically (e.g. if a client is responsible for 40% of the incoming bandwidth, then we will send them a requested piece randomly 40% of the time). This is not the only method: for example, if we knew our maximum outbound bandwidth, we could also have applied a leaky bucket or token bucket algorithm to limit the bandwidth. Additionally, we faced another implementation challenge: how do we account for the fact that, in a swarm in which one node has a monopoly on a file (e.g. at the start of an experiment), that node will be responsible for 100% of the upload bandwidth to other peers and will thus starve other nodes? We could solve this by, for example, always allotting some baseline bandwidth to requesting peers, but this would open the door to the same kinds of Sybil attacks that Levin et al. aimed to solve. These implementation challenges were not addressed in Levin et al. but have an outsized effect on the performance of the PropShare client, perhaps leading to the results shown above.
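The probabilistic approach mentioned above can be sketched as follows. This is a simplified illustration and not a copy of our client code: the names `serve_probability` and `should_serve` are hypothetical, and the random number generator is passed in explicitly so the behavior can be reproduced.

```python
import random


def serve_probability(peer, received_bytes):
    """Fraction of our incoming bytes that came from `peer` (0.0 if none received)."""
    total = sum(received_bytes.values())
    if total == 0:
        return 0.0
    return received_bytes.get(peer, 0) / total


def should_serve(peer, received_bytes, rng):
    """Decide randomly whether to answer one piece request from `peer`.

    A peer responsible for 40% of our incoming bytes is served about 40% of the time.
    """
    return rng.random() < serve_probability(peer, received_bytes)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed, only to make the demo repeatable
    received = {"a": 40, "b": 60}
    served = sum(should_serve("a", received, rng) for _ in range(10_000))
    print(f"peer a served in {served / 10_000:.1%} of requests")
```

The sketch also makes the starvation problem visible: a peer that has not yet uploaded anything has a serve probability of exactly zero, so without some baseline allowance it can never obtain its first piece from a PropShare client.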

Lessons Learned

One of the biggest challenges when replicating this paper was that we had to make assumptions about specific details of the experimental methodology. In particular, the paper forgoes any discussion of which BitTorrent client it used as a baseline, and while BitTorrent is agnostic to implementation details as long as the specification is followed, implementation details can undoubtedly affect the ease of reproducibility. Another lesson learned is that mechanical orchestration of AWS infrastructure is a hefty lift. If we were to do this replication project again, we would look into existing orchestration solutions like Kubernetes or an existing testbed with easy access.

Another thing we would do differently is implement a BitTorrent client from scratch rather than modify an existing one to meet PropShare’s needs. We incurred significant technical debt by getting PyTorrent up to speed: we made assumptions about PyTorrent’s capabilities, but it turned out that what PyTorrent claimed to be was not congruent with reality.

Source Paper: “Timely Classification and Verification of Network Traffic Using Gaussian Mixture Models” by Alizadeh et al., 2020, linked here.

Key Result: We were unable to replicate the high accuracy scores reported in the original paper. We conducted extensive analysis and literature review, tried a comprehensive suite of methodological and technical remedies, and contacted the original authors.

Introduction

“Timely Classification and Verification of Network Traffic Using Gaussian Mixture Models” presents a lightweight alternative to deep learning for classifying encrypted network traffic. Specifically, the goal is to identify the application that generated a flow using only header content, without inspecting the payload. The authors propose to use Gaussian Mixture Models (GMMs) trained on a large number of statistical features derived from the first few packets of network flows. Their approach reports high accuracy, up to 97.7%, using only nine initial packets per flow. Hence, it appears to be suitable for real-time traffic classification and anomaly detection. The paper’s contributions are practical given the increasing use of encrypted traffic and the need for quick analysis in security-sensitive environments.

Chosen Results

We decided to replicate the paper’s traffic classification results using Reference Packet Count (RPC) = 9, corresponding to the first 9 packets per network flow, for GMM experiments. This RPC value is the best-performing and most prominently reported in the paper. We also tried all RPC values from 3 to 10 for three of the baselines: Naive Bayes, KNN, and Decision Trees. We focus on traffic classification rather than verification because it is central to the paper’s claims and the authors’ core focus. Hence, we mainly try to replicate the relevant parts of Figure 3 and Table 3 in the original paper: the RPC = 9 results for GMM, and the RPC = 3 to 10 results for the 3 baselines we implement.

Original Paper’s Methodology

The authors collect TCP flows from the UNIBS-2009 dataset (consisting of ~79,000 flows collected over 3 days at the University of Brescia) and extract 59 statistical features using Netmate-flowcalc. The flows are grouped into five primary application classes: MAILS, P2P, SKYPE, WEB, and OTHERS. The authors use a Sequential Forward Selection (SFS) algorithm to choose the most informative subset of features for each value of RPC and each trained model that maximizes the evaluation function (in this case, the F-1 score). For each class, a GMM is trained using a component-wise expectation maximization (CEM) algorithm, which includes automatic model selection to determine the number of mixture components. Flows are then classified using posterior estimates over these models. Final results are reported as both accuracy and F-1 scores (based on precision and recall). The authors also train 6 baseline classification models per experiment: Naive-Bayes (NB), Decision Tree (DT), K-Nearest Neighbors (KNN), Support Vector Machine (SVM), Random Forest (RF), and Gradient Boosted Trees (GBT). They show high performance using all models, with GMMs performing best over the baselines.

Our Methodology

We closely followed the original authors’ pipeline from beginning to end, including contacting the original UNIBS-2009 authors for their data, but with some necessary changes:

- Feature extraction: We reimplemented feature extraction using Python and dpkt, since Netmate-flowcalc is an outdated and unmaintained library. We matched the authors’ flow definitions and selection criteria (non-zero payload, bidirectional, etc.) as closely as possible. However, many features were not defined in detail, and no example values or ranges were provided, which caused difficulties that we discuss later.

- Data processing: We matched each flow to its ground-truth application using the dataset’s provided groundtruth log file, grouped the data into the 5 major application classes using the exact application list for each class provided by the authors (we had a similar distribution and flow numbers across classes), and split the flows into train (day 1 flows) and test (day 2 and 3 flows), also following the authors exactly.

- Modeling: We used a Python port of the Figueiredo & Jain GMM algorithm (used by the authors to train their GMM models, paper here) instead of the original MATLAB code. This is because the original MATLAB code is outdated and unmaintained, we did not have the necessary MATLAB licenses, and our group did not have MATLAB expertise. Thankfully, the Python port was complete, one-to-one, and validated by several independent parties. We updated the code (since it was also quite outdated), and validated the implementation with toy data and tests (provided by the GitHub authors). Toy test visualizations can be seen below. We then wrote a training script that exactly matches the authors’ specifications and hyperparameters (regularization factor, stopping threshold, and so forth).

- SFS and preprocessing: We implemented the SFS algorithm manually (see Algorithm 1 below, taken directly from their paper) and exactly as specified. We performed extensive testing, and also experimented with z-score normalization of the features, since they varied heavily in range (see the Feature Analysis section later for more). SFS worked well for some baselines but unfortunately not for the other baselines or GMMs. This already pointed to potential issues with the data and/or extracted features, which we discuss more later. For example, for GMMs and RPC=9, our best selected feature is the standard deviation of the backward packet length, but this is not even among the authors’ 4 selected features. As such, we ran experiments both with our SFS-selected features and with the authors’ selected features hardcoded.

- Training and evaluation: We trained on Day 1 data and tested on Days 2 and 3, as described in the paper. We experimented with both MLE and MAP classification (described later), and evaluated using macro, weighted, and pruned F-1 score across flows, packets, and bytes.
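The greedy selection loop at the heart of SFS can be sketched as follows. This is a generic illustration and not a transcription of the authors’ Algorithm 1: the stopping rule (stop when no candidate improves the score) is our assumption, `evaluate` stands in for training a model on a feature subset and returning its F-1 score, and the feature names and gains in the toy scorer are made up.

```python
def sequential_forward_selection(features, evaluate):
    """Greedy SFS: repeatedly add the single feature that most improves
    evaluate(subset); stop when no remaining feature improves the score."""
    selected, best_score = [], float("-inf")
    remaining = list(features)
    while remaining:
        # Score every candidate subset formed by adding one more feature.
        scored = [(evaluate(selected + [f]), f) for f in remaining]
        score, feature = max(scored, key=lambda pair: pair[0])
        if score <= best_score:
            break  # assumed stopping rule: no improvement
        selected.append(feature)
        remaining.remove(feature)
        best_score = score
    return selected, best_score

def toy_score(subset):
    """Hypothetical stand-in for 'train a model, return F-1'."""
    gains = {"bpktl_std": 0.5, "fpktl_mean": 0.3, "duration": 0.0}
    return sum(gains[f] for f in subset) - 0.05 * len(subset)

chosen, score = sequential_forward_selection(
    ["duration", "fpktl_mean", "bpktl_std"], toy_score
)
print(chosen, score)
```

Because each step retrains and rescores one model per remaining candidate, the cost grows quickly with 59 features, which is one reason the choice of evaluation function and stopping rule matters in practice.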

Replication Process Difficulties

We faced a couple of key challenges (among many potential others), which may be reasons for the discrepancy in our results:

- Feature ambiguity: some features can be defined in multiple ways, and we tried several definitions (e.g. payload and header length calculations, and whether to calculate/normalize flow duration and byte counts by RPC-limited packets or the full number of packets). Results remained poor no matter how we defined the ambiguous features. This is likely because of poor feature separability, which we show in the Feature Analysis section later. A full list of the authors’ original 59 features is included in Table 1 below (taken directly from the original paper).

- Class imbalance: the UNIBS-2009 dataset is highly unbalanced, with two of the classes making up 82% of the flows and 95% of the data, as seen in Table 2 below (taken directly from the original paper). We tried class balancing for some experiments, but this did not appear to help much.

Note: this table is the only information given for each feature.

Note: our distribution and number of flows per application category is very similar to the above.

Remedies We Tried

Clearly, our results were not replicating the original authors’ scores, potentially due to several difficulties. We tried a comprehensive suite of remedies to potentially increase performance, including but not limited to the following:

- Removal of zero payload packets during pre-filtering

- Removal of out-of-order packets with tolerance ranges from 1000 to 3000 packets

- Separate reference packet count (RPC) data files for each RPC from 3 to 10 (focused on RPC=9 for the majority of the experiments, but tried more for the baselines)

- Dropping zero variance features

- Different definitions for ambiguous features

- Training on different subsets of features and even all features (for Transformer experiments discussed later)

- Feature normalization and class balancing (for GMM and Transformer experiments)

- Different formulas for GMM classification (MAP vs. MLE, discussed below)

- Different formulas for the final metrics (average vs. weighted vs. pruned F-1)

- SOTA Transformer models for classification

- Looking at related works and cited papers for info (see last section)

- Contacting the original authors for help (to no avail)

Results corresponding to some of the above remedies we tried are discussed more in the following sections.

Our Results vs. Original Results

Baselines

The original paper included 6 baselines. We experimented with four of them and ran our final experiments on three: Naive Bayes, KNN, and Decision Trees. We also tried SVM, but our model had trouble converging on the given data and was slow to run. None of our results matched those reported in the paper. Just like with GMM (discussed below), we tried different F-1 scoring methods, but none of them brought us to the reported numbers. We ran our SFS algorithm in multiple different ways and hardcoded the SFS features from the paper, none of which resulted in a gain in accuracy. The table below reports an example of our Naive Bayes baseline results for RPC = 4 with our SFS algorithm for feature selection.

As you can see, we achieve 0 precision, recall, and F-1 across 3 out of 5 classes, and only obtain positive results for P2P and WEB. Further, our overall accuracy is quite low at 61%. Essentially, the baselines are guessing whether the classification is WEB or P2P, the two most common tags.

We also plot our three baselines’ accuracy by RPC (b, below) against the accuracy graph given by the original authors (a, below; extracted directly from their paper). Accuracy is assessed on a per-flow basis, as opposed to a per-packet or per-byte basis, in both graphs. Across all RPC values, we do noticeably worse than the original authors. Naive Bayes starts out quite strong, but does not improve with RPC, unlike what the authors report. Our other baselines start noticeably lower and do not improve with RPC either. This suggests that our extracted features do not become more discriminative as more packets are observed, which points to a problem in the underlying data or features.

a)

b)

GMMs: First Try

For our GMM experiments, we focused on RPC = 9 (the authors’ best-performing setting, and the one most of their reported results use). Unfortunately, accuracy and F-1 are noticeably below what the authors report, as seen in the table below. Skype results are extremely poor, but this is unsurprising since the class has very few examples, a symptom of poor class balance. Macro F-1 refers to simply averaging the F-1 of each class.

| Metric (RPC = 9) | Paper | Ours (Initial) |
| --- | --- | --- |
| Flow Macro F1 | 93.31 | 20.02 |
| Packet Macro F1 | 77.98 | 22.72 |
| Byte Macro F1 | 68.74 | 22.85 |
| Flow Acc | 97.74 | 26.00 |
| Packet Acc | 97.61 | 55.12 |
| Byte Acc | 98.03 | 55.91 |
| Mails Flow F1 | 92.21 | 13.25 |
| P2P Flow F1 | 97.74 | 49.16 |
| Web Flow F1 | 98.51 | 17.34 |
| Skype Flow F1 | 84.78 | 0.30 |

Our GMM implementation is confirmed correct through toy tests and other sanity checks, and we match the authors’ hyperparameters and specifications exactly. As such, we try a few other remedies:

- Normalize the features, which in this case have heavily varying ranges. This is likely important for GMMs in particular, since features on very different scales can dominate the covariance estimates and the likelihood during EM fitting. To address this, we tried z-score normalization.

- Switch from MLE-based classification to MAP-based classification (to incorporate the prior probability of each class). See below for a more in-depth explanation including mathematical formulas.

- Try different versions of the final F1 calculation: weighted vs. macro average, removing worst classes with low F1 (below 0.1 in this case, which mostly results in removing Skype), and so forth.

- Try a completely different GMM implementation. This did not improve the results, so we do not discuss it further here.
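To make the three F-1 aggregations in the list above concrete, here is a small sketch. It reflects our reading of the terms (macro = unweighted mean over classes, weighted = mean weighted by class size, pruned = macro over classes at or above a threshold); the function name, the class labels, and the numbers are illustrative and are not results from our experiments.

```python
def f1_variants(per_class_f1, support, prune_below=0.1):
    """Return (macro, weighted, pruned-macro) F-1.

    per_class_f1: dict class -> F-1 in [0, 1]
    support:      dict class -> number of test examples in that class
    """
    classes = list(per_class_f1)
    # Macro: every class counts equally, however rare it is.
    macro = sum(per_class_f1[c] for c in classes) / len(classes)
    # Weighted: each class counts in proportion to its size.
    total = sum(support[c] for c in classes)
    weighted = sum(per_class_f1[c] * support[c] for c in classes) / total
    # Pruned: macro average after dropping classes below the threshold.
    kept = [c for c in classes if per_class_f1[c] >= prune_below]
    pruned = sum(per_class_f1[c] for c in kept) / len(kept) if kept else 0.0
    return macro, weighted, pruned

macro, weighted, pruned = f1_variants(
    {"A": 0.8, "B": 0.4, "C": 0.0}, {"A": 70, "B": 20, "C": 10}
)
print(macro, weighted, pruned)
```

In this toy case the macro score is about 0.40, the weighted score about 0.64, and the pruned score about 0.60, which shows how a single rare, badly classified class pulls the macro average down far more than the other two.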

MLE vs. MAP Explanation

A typical way of conducting prediction with GMMs is the following Maximum Likelihood Estimate (MLE) rule:

ĉ = argmax_c P(x∣θc)

where θc are the parameters of the GMM trained on class c, and P(x∣θc) is the likelihood that x was generated by class c.

The Maximum A Posteriori Estimate (MAP) is a modification of this that includes the prior probability of each class c, estimated from the training data using the formula: p(c) = # of examples in class c / total training examples.

We add log p(c) directly to the log-likelihood during prediction. This essentially modifies MLE to include the weight of each class during prediction, which may help in cases such as ours with such an unbalanced dataset.
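The difference between the two rules is easiest to see on a toy example. The sketch below uses one-dimensional mixtures in pure Python so that it stays self-contained; it is not our training or inference code, and the mixture parameters, class counts, and helper names are all made up for illustration.

```python
import math

def gmm_log_likelihood(x, components):
    """log P(x | theta_c) for a 1-D mixture of (weight, mean, std) components."""
    terms = [
        math.log(w) - 0.5 * math.log(2 * math.pi * s * s) - (x - m) ** 2 / (2 * s * s)
        for w, m, s in components
    ]
    peak = max(terms)
    # Log-sum-exp over components for numerical stability.
    return peak + math.log(sum(math.exp(t - peak) for t in terms))

def priors_from_counts(counts):
    """p(c) = number of training examples in class c / total training examples."""
    total = sum(counts.values())
    return {c: n / total for c, n in counts.items()}

def classify(x, models, priors=None):
    """MLE rule when priors is None; MAP rule (adds log p(c)) otherwise."""
    def score(c):
        s = gmm_log_likelihood(x, models[c])
        return s + math.log(priors[c]) if priors else s
    return max(models, key=score)

# Hypothetical single-component models for two classes.
models = {"WEB": [(1.0, 0.0, 1.0)], "SKYPE": [(1.0, 1.0, 1.0)]}
priors = priors_from_counts({"WEB": 900, "SKYPE": 100})
print(classify(0.6, models), classify(0.6, models, priors))
```

Here the point x = 0.6 lies slightly closer to the rare class, so MLE picks SKYPE, while MAP picks WEB because the prior outweighs the small likelihood gap. This is the same pull toward the majority class that shows up in our MAP results.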

GMMs: Remedied

We tried the above remedies and report the results in the table below, compared to our initial results and the authors’ original results. Firstly, feature normalization noticeably improves scores (especially F-1 and flow-level accuracy) across the board. There are major improvements on Skype and Web, but lowered performance on Mails. Secondly, weighting by class size and excluding classes below 0.1 F-1 (mainly Skype) improves results to a reasonable extent. Lastly, using MAP (instead of MLE) with normalization further improves certain metrics but hurts others. Unsurprisingly, since MAP weights by prior class probability, Web (the most prominent class) has much higher scores, but the other classes do worse, with Skype dropping all the way to 0. MAP thus presents a trade-off with mixed results. Overall, while our remedies improve scores noticeably, they are still far below the authors’ reported numbers.

| Metric (RPC = 9) | Paper | Ours (Initial) | Ours (Normalized) | Ours (Normalized + MAP) |
| --- | --- | --- | --- | --- |
| Flow Macro F1 | 93.31 | 20.02 | 29.37 | 23.11 |
| Weighted Flow F1 | n/a | 26.73 | 49.72 | 47.77 |
| Pruned Flow Macro F1 | n/a | 26.59 | 38.18 | 41.86 |
| Packet Macro F1 | 77.98 | 22.72 | 25.05 | 25.77 |
| Byte Macro F1 | 68.74 | 22.85 | 25.19 | 26.15 |
| Flow Acc | 97.74 | 26.00 | 45.67 | 54.94 |
| Packet Acc | 97.61 | 55.12 | 56.01 | 54.12 |
| Byte Acc | 98.03 | 55.91 | 57.35 | 54.89 |
| Mails Flow F1 | 92.21 | 13.25 | 10.70 | 8.74 |
| P2P Flow F1 | 97.74 | 49.16 | 48.02 | 13.01 |
| Web Flow F1 | 98.51 | 17.34 | 55.82 | 70.70 |
| Skype Flow F1 | 84.78 | 0.30 | 2.94 | 0.00 |

Feature Analysis

In search of a reasonable explanation for the poor performance of all of our models, baselines included, we decided to conduct an extensive analysis of the structural qualities of the features that the authors choose to extract from the dataset.

To Normalize or Not To Normalize…