There seems to be a lot of recent interest in, and excitement about, the promise of “agentic AI” (tools like Claude Code or Cursor) for improving productivity in scientific research. The idea, or perhaps more accurately the hope, seems to be that by automating key steps in scientific workflows, agentic AI tools can massively improve the productivity of practising scientists. It’s not uncommon these days to hear claims such as: “What used to take me weeks now only takes a few hours.” The general message (the discourse, the narrative, the zeitgeist, the vibe of the times) seems to be that if you’re a practising scientist, whatever your field, you should be using these tools, because if you’re not, you’re going to be left far behind. This constant drumbeat of “use these tools or become obsolete” messaging seems to create a lot of FOMO-induced anxiety among scientists and researchers who do not use these tools, or who do not find them as useful as their more tech-savvy or technophile colleagues seem to (“Am I doing something wrong?”).

In the last week alone, I saw two pieces projecting this kind of message: this post by the machine learning researcher Tim Dettmers and this hour-long YouTube video by the astronomer David Kipping.

In this post, I’d like to argue that expectations of massive productivity boosts in scientific research from agentic AI tools are completely unrealistic for one very simple and obvious reason: these tools do not address the main bottleneck in scientific research, which is experimentation (broadly construed to include running simulations and other in silico experiments) or, more precisely, the limited resources available for experimentation.
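One standard way to put numbers on this kind of bottleneck argument is Amdahl’s law: if a fraction p of a project’s time goes to a task that a tool speeds up by a factor s, the whole project speeds up by only 1 / ((1 - p) + p / s). The minimal Python sketch below assumes, purely for illustration, that 20% of project time is spent writing code and 80% on experimentation that the tool does not speed up; that split is hypothetical rather than measured in any field, and `overall_speedup` is an illustrative name.

```python
import math


def overall_speedup(fraction_accelerated: float, factor: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the work gets faster.

    fraction_accelerated: share of total project time spent on the task the
        tool speeds up (e.g. writing code). Illustrative, not measured.
    factor: how many times faster that task becomes (math.inf allowed).
    """
    if not 0.0 <= fraction_accelerated <= 1.0:
        raise ValueError("fraction_accelerated must be in [0, 1]")
    if factor <= 0:
        raise ValueError("factor must be positive")
    # Time left after acceleration, as a fraction of the original total.
    remaining = (1.0 - fraction_accelerated) + fraction_accelerated / factor
    if remaining == 0.0:
        # Everything was accelerated infinitely: no time left at all.
        return math.inf
    return 1.0 / remaining


if __name__ == "__main__":
    # Hypothetical split: 20% of project time writing code, 80% spent on
    # compute, lab work, and instrument time that the tool does not speed up.
    coding_share = 0.2
    for factor in (2, 10, 100, math.inf):
        label = "unboundedly" if math.isinf(factor) else f"{factor}x"
        print(f"coding {label} faster -> project "
              f"{overall_speedup(coding_share, factor):.2f}x faster")
```

Under that assumed split, even infinitely fast coding caps the end-to-end speedup at 1 / 0.8 = 1.25x, because the untouched 80% dominates.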

If you’re an academic machine learning researcher, you typically have access to a limited amount of compute on your university’s HPC cluster (or on a national supercomputing resource, or on some cloud computing service). This puts hard and severe limits on the number of projects you can run simultaneously, the number of experiments you can run per project, and the scale at which you can run them. Every machine learning researcher knows very well that this is the single most significant bottleneck throttling their work. Yet agentic AI tools (or any other AI tools, for that matter) do absolutely nothing to address this bottleneck: if you previously had ~50 H100 days of compute per month on your local HPC cluster (a realistic figure at my last academic employer), you still have ~50 H100 days of compute per month with your Claude subscription.

Hard sciences where experiments interface with the real world are even more strongly bottlenecked by the resources available for experimentation, because their experiments are more time-consuming and require at least one human in the loop. If you’re running a wet biology lab, for instance, you can only run so many animal experiments, and only analyze, image, or test so many biological samples, in a given amount of time. Again, every biologist knows that these resource constraints are the main bottleneck limiting their work, and AI does absolutely nothing to change them. Research that relies on large-scale instruments like the Large Hadron Collider (LHC), the James Webb Space Telescope (JWST), or supercomputers is maximally resource-constrained, so I’m afraid your overpriced Claude subscription will help even less here. Incidentally, this is why I find it so strange to see David Kipping, an astronomer whose work relies on large-scale and very expensive telescopes like the JWST, join this “use these tools or become obsolete” bandwagon.

Or consider the following example from Tim Dettmers’s blog post (linked above). Dettmers claims that he uses agentic AI tools to help him write grant proposals. This would make sense if there were a million funding opportunities and you wanted to automate the submission of a broad range of high-quality ideas to maximize your chances of success (such automated generation and submission of ideas would raise questions about your actual ownership of those ideas, but let’s leave these aside for now, since the scenario is already unrealistic enough). In this hypothetical world of plenty, scientists would be genuinely limited by the time it takes to write a grant proposal, so automating the writing would make sense. But here’s the thing: there aren’t a million funding opportunities for scientific research projects! There aren’t even a thousand, or even a hundred. At best, most scientists have perhaps 10 such opportunities in any given year (if that), so writing grant proposals is not even remotely the main productivity bottleneck for scientists, even if they work meticulously on every single one.

Perhaps some will argue that although AI doesn’t change the resource constraints scientists are subject to, it may enable them to use those resources much more effectively, and thus still massively boost productivity. For example, you may still have ~50 H100 days of compute per month on your local HPC cluster, but perhaps you can now run 10x more experiments, or 10x better experiments in some sense, with those ~50 H100 days when you give the reins to Claude Code. Or take Dettmers’s grant-writing example. Maybe the idea is that Claude Code will help you write 10x better grant proposals that massively boost your chances of receiving funding, even though it obviously won’t increase the total amount of available funding (of course, given limited resources, it would be mathematically impossible for Claude Code to massively improve everybody’s chances simultaneously, but again let’s ignore this technicality for now). The idea that Claude Code will write 10x faster code, or that it will generate 10x better ideas to test, explore, or experiment with (whatever “10x better” may mean in this context), doesn’t even pass the smell test, but let’s scrutinize it a bit further for a reality check.
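The parenthetical point about limited funding can be checked with a toy model. The minimal Python sketch below assumes, purely hypothetically, that the number of awards is fixed and that each applicant’s chance of funding is proportional to their proposal’s quality; real review panels do not work this way, and `success_chances`, `qualities`, and `awards` are illustrative names only.

```python
def success_chances(qualities: list[float], awards: int) -> list[float]:
    """Toy model (hypothetical): a fixed number of awards, and each
    applicant's chance of funding proportional to their proposal quality."""
    total = sum(qualities)
    if total <= 0:
        raise ValueError("qualities must sum to a positive number")
    chances = [awards * q / total for q in qualities]
    if any(c > 1.0 for c in chances):
        raise ValueError("toy model breaks down: a chance exceeds 1")
    return chances


if __name__ == "__main__":
    before = success_chances([1.0, 2.0, 3.0, 4.0], awards=1)
    # Everyone's proposals get "10x better" at the same time.
    after = success_chances([10.0, 20.0, 30.0, 40.0], awards=1)
    print(before)
    print(after)
    # The expected number of funded proposals always equals the awards.
    print(sum(before), sum(after))
```

In this model, scaling every proposal by the same factor leaves every individual chance unchanged, and the chances always sum to the fixed number of awards, so any gain one applicant makes comes out of someone else’s share.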

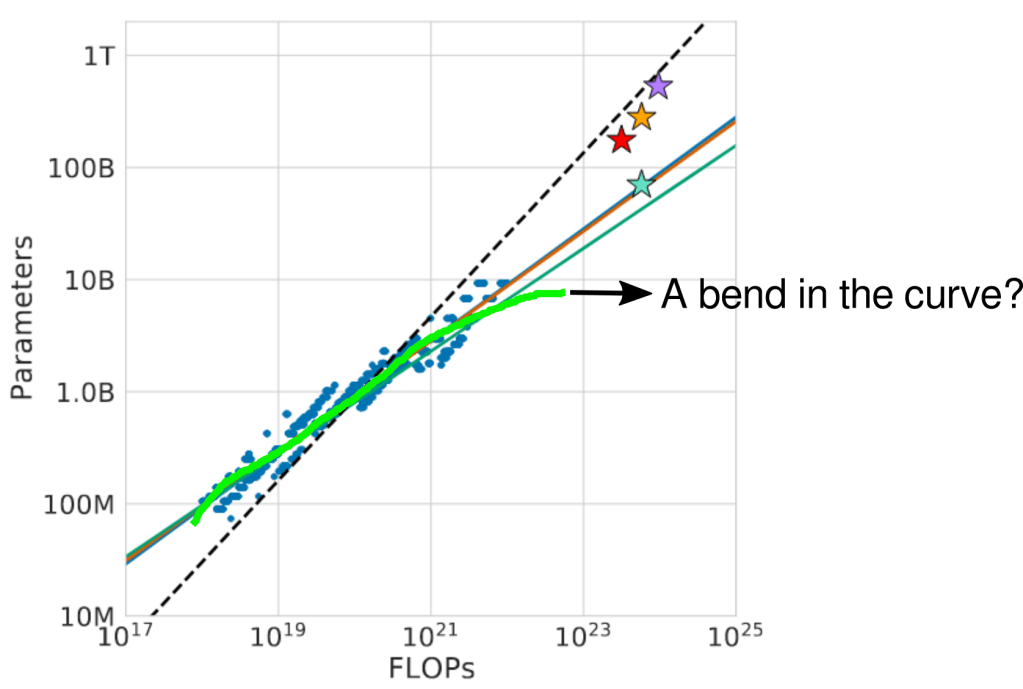

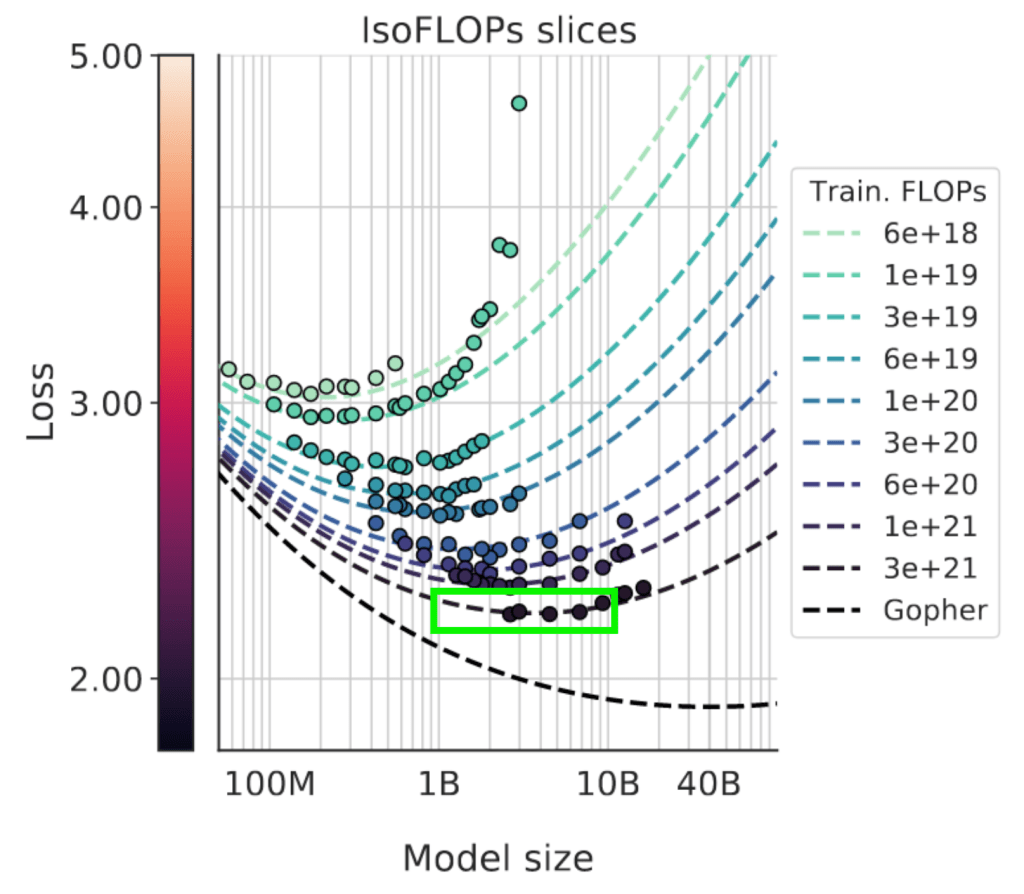

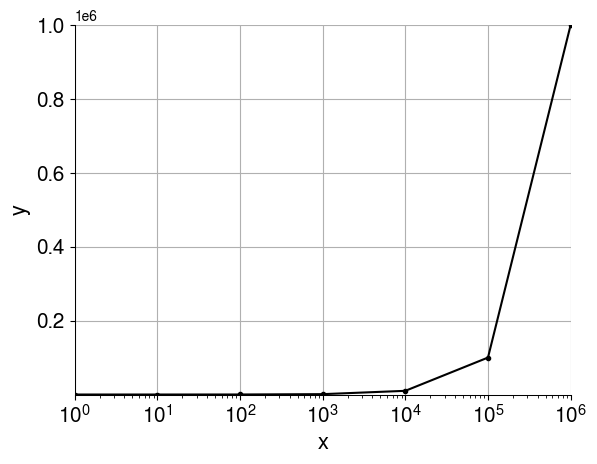

Let’s be as charitable to AI agents as possible and pick a domain where they are expected to excel, their home turf so to speak: writing code. To give a concrete example, let’s look at this very recent paper, which introduces a new state-of-the-art method for doing “discovery” with LLMs, i.e., finding novel, highly performant solutions to specific quantifiable problems. One of the problems the authors consider is writing performant GPU kernels for specific matrix operations (e.g., triangular matrix multiplication). Note that this is a case where one can explicitly write down an unambiguous, well-defined, and easily verifiable reward function, namely the inverse runtime of the produced kernel, which can then be optimized directly in silico through reinforcement learning. For the H100 GPU, the best triangular matrix multiplication kernel this method came up with is only 18% better than the best human-written code (for the B200, the figure is closer to 13%; see Table 4). For another kernel-writing problem (MLA Decode on the MI300X), the method fails to discover a kernel more performant than the best human-written ones (see Table 5). So we get a grand improvement of less than 20% in the absolute best case: the most optimistic scenario for AI, where the reward is explicit and verifiable and can be optimized directly in silico. Needless to say, writing down such a reward function is not going to be even remotely possible for most scientific applications.

How to actually boost scientific productivity massively

There’s actually a very simple and straightforward way to significantly boost scientific productivity, and every economist knows it: if you want to improve productivity significantly, you have to make significant capital investments, including in human capital (no pain, no gain). There are no shortcuts, no silver bullets, no magic tricks, no free lunches. If you want to boost the productivity of your machine learning researchers 10x, for example, you have to buy them 10x more and/or 10x better GPUs. If you want to boost the productivity of your molecular biologists 10x, you have to buy them 10x more or 10x better microscopes (plus all the other instruments and devices that make cutting-edge research in biology possible), give them 10x more lab space to run their experiments in, etc. If you want to boost the productivity of your astronomers 10x, you have to buy them 10x more or 10x more powerful telescopes. In general, if you want to boost the productivity of your scientists 10x, you have to be willing to increase their funding 10x (or something like that). Of course, this is much harder and much more expensive than simply buying them $20/month Claude subscriptions, and I suspect that one reason the idea of agentic AI massively boosting scientific productivity seems so alluring is its “get rich quick” nature. I’m sorry to have to remind you that “get rich quick” schemes are almost always scams.

Conclusion

Current AI tools are extremely useful for a limited set of tasks, chief among them writing code. For this subset of tasks, these tools likely do boost productivity significantly. However, writing code is not the main productivity bottleneck in scientific research (for most scientists in most fields), and it never has been (if it were, scientific research organizations would employ far more professional software engineers than they currently do). By far the main bottleneck in science is the (often severely) limited resources available for experimentation. Current AI tools do absolutely nothing to address this bottleneck, which is why they will have a very limited impact on scientific productivity in the short term. In the long run, AI is, of course, part of the process that improves our instruments of experimentation, making our GPUs, telescopes, microscopes, etc. more efficient and more powerful, but this is a much slower process.