Real-Time AI Inference — On Live Production Data

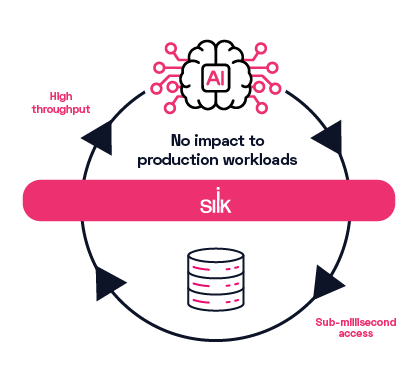

Silk enables real-time AI inference – without destabilizing production systems or driving up cloud costs.

Watch: AI Inference ExplainedReal-Time AI Breaks at the Data Layer

AI inference introduces bursty, non-human access patterns – often equivalent to thousands of concurrent users – against the same mission-critical applications that run the business. Traditional infrastructure can’t absorb these spikes without introducing latency, risk, or runaway overprovisioning.

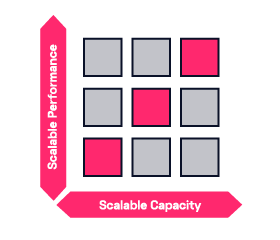

Silk eliminates the root cause: performance tied to capacity and static configuration. As a software-defined SAN and cloud acceleration layer, Silk delivers an unlimited data layer beneath applications so AI, analytics, and transactional systems can run against the same live production data – each receiving the performance they need automatically.

Real-Time AI, Without Disruption

Live-Context Inferencing on Production Data

Silk enables AI models to run directly against authoritative, real-time enterprise data. Instead of relying on delayed replicas or stale pipelines, inferencing stays grounded in live production context — improving accuracy and business relevance.

Predictable Latency Under Inference Spikes

AI applications generate bursty, unpredictable demand that can overwhelm traditional storage. Silk keeps latency stable even under heavy inference spikes, so real-time AI performance remains consistent as usage scales.

Before Silk

With Silk

No Noisy Neighbors Between AI and OLTP

Inference, analytics, and transactional systems all compete for the same data layer. Silk dynamically governs performance across applications with conflicting access patterns, eliminating contention and ensuring production applications stay protected.

Before Silk

With Silk

Scale AI Without Overprovisioning

Most organizations oversize infrastructure just to absorb peak inference demand. Silk decouples performance from capacity, allowing AI deployments to scale efficiently without driving up compute and storage costs.

Before Silk

With Silk

Eliminate Replicas and Delayed Pipelines

Copy-based workflows introduce complexity, cost, and operational lag. Silk reduces the need for full replicas and delayed pipelines by enabling governed, real-time access to live production data — accelerating AI without duplication.

Enterprise AI That’s Production-Safe by Design

Real-time inference shouldn’t come at the expense of stability. Silk makes AI applications safe to run alongside systems of record by delivering predictable performance and controlled access at the data layer.

Sentara's AI Initiative Is Enabled By Silk's Faster Performance and Reduced Cloud Costs

AI Inference Didn’t Break Your Architecture — It Reveals What Comes Next

Discover how leading enterprises are adapting their cloud architecture to handle real-time AI inferencing without costly re-architecture.

Watch the Webinar