TL;DR

This post investigates the following questions: Where should we steer a model? And how expressive can steering actually be?

By comparing steering and finetuning through a first-order lens, we find that often the best place to steer is after the skip connection, where attention and MLP outputs meet. Steering here is more expressive than steering individual submodules, and it ends up looking a lot closer to what finetuning does. Using this insight, we build lightweight post-block adapters that train only a fraction of the model’s parameters and achieve remarkably close performance to SFT.

Note: This post is aimed at readers comfortable with transformers and some linear algebra. We’ll keep the math light but precise.

The Paradigm: Activation Steering vs. Finetuning

Activation steering is an alternative to parameter-efficient finetuning (PEFT). Instead of updating weights, it directly edits a model’s hidden activations at inference time, cutting the number of trainable parameters by an order of magnitude. ReFT [1], for example, reaches LoRA-level performance while using 15×–65× fewer parameters. If we can reliably match finetuning with so few parameters, that changes what’s feasible: we can adapt larger models, run more expensive training objectives, or simply get more done under the same compute budget.

Existing steering methods mainly differ in where they apply these interventions: ReFT modifies MLP outputs (post-MLP), LoFIT [2] steers at attention heads (pre-MLP), and JoLA [3] jointly learns both the steering vectors and the intervention locations.

The Problem: We Are Steering in the Dark

Despite empirical success, we still lack a clear understanding of why steering works and how to reason about the best location to steer. Two key questions remain open:

- The Location Dilemma. Why do some steering locations outperform others? And does understanding how activation steering approximates weight updates help reveal which modules are best to steer? (Spoiler: it does)

- The Expressivity Gap. SFT and LoRA leverage the model’s nonlinear transformations, while most steering methods rely on linear adapters. How much does this limit their ability to mimic the effects of weight updates?

This work investigates both questions.

First: Where should we steer? We begin with a simple, linearized analysis of a GLU’s output changes under weight updates compared to activation steering. This shows us that steering at some locations can easily match the behavior of certain weight updates, but not others. However, we notice that linear steering at these locations cannot completely capture the full behavior of weight updates.

Then: How expressive can steering really be? We then experiment with oracle steering, which, while not a practical method, provides a principled way to test which locations are best to steer at. With this tool, one pattern stands out: the most expressive intervention point is the block output, after the skip connection. Steering here can draw on both the skip-connection input and the transformed MLP output, instead of relying solely on either the MLP or attention pathway.

Motivated by this, we introduce a new activation adapter placed at each block output. It retains a LoRA-like low-rank structure but incorporates a non-linearity after the down-projection. This allows it to capture some of the nonlinear effects of SFT, giving activation steering a more expressive update space.

And finally: A bit of theory. Whatever form the steering adapter takes, if it is expressive enough to match the fine-tuned model at each layer, the steered model will match the fine-tuned model. So, how accurate must we be at each layer to match a fine-tuned model closely?

We also show that, at least in some settings relating to the geometry of these hidden states and the residuals at each module, post-block steering can replicate post-MLP steering. We further show that under some (very specific) parameter settings, post-MLP steering cannot learn anything, while post-block steering still can. The exact-replication result can be extended to an approximation showing that, in broader, more applicable settings, post-block steering can get quite close to post-MLP steering.

Some notation/background

Throughout this article, we will be looking at a number of different places to steer, along with different ways that we can steer. Even if some of these choices don’t make sense at the moment, don’t worry! A lot of this will be explained much more throughout this article. Use this section as a reference for any unclear notation/names as you read.

We will use $\delta\cdot$ to represent small induced changes in our analysis, and $\Delta\cdot$ will represent changes to parameters, such as the finetuning updates to matrices $\Delta W$ or steering vector $\Delta h$.

First, a Transformer model is built from transformer blocks. Each block contains an Attention module and an MLP module. Unless otherwise specified, the MLP modules will be specifically GLU layers, a popular variant of standard 1-layer MLPs. The inputs to each submodule of each layer will pass through a LayerNorm. Each layer will involve two skip-connections, one around each submodule. This all can be seen in the picture below.

As for steering, there are 3 main variants we consider. (1) pre-MLP steering involves steering attention outputs, most commonly by steering the output of individual attention heads, before skip-connection and normalization; (2) post-MLP steering involves steering the output of the MLP/GLU layer before it goes through the skip-connection; (3) post-block steering involves steering the output of each block, which can be seen as equivalently steering the output of the MLP/GLU layer after it goes through the skip connection. In our notation, a GLU is represented as

\[y_{\mathrm{GLU}}(h) = W_d(\sigma(W_g h) \odot W_u h).\]The matrices $W_d, W_g, W_u$ are called the down-projection, gate, and up-projection matrices respectively. When convenient, we will also write

\[y(h)=W_d m(h), \quad m(h) = \sigma(a_g) \odot a_u, \quad a_g = W_g h, \quad a_u = W_u h.\]For mathematical notation, the hidden state will be represented as a vector $h$ and steering will be represented as $\Delta h$. So, steering works by replacing $h$ with $h + \Delta h$.
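To make the three steering locations concrete, here is a minimal NumPy sketch of a single block with a hook at each location. It is only an illustration under simplifying assumptions: the attention module is replaced by a single linear map (`toy_attn`), all weights are random, and the names `block`, `pre`, `post_mlp`, and `post_block` are hypothetical, not taken from any of the cited methods.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_ff = 8, 16

def silu(z):
    return z / (1.0 + np.exp(-z))

def layer_norm(h, eps=1e-5):
    return (h - h.mean()) / np.sqrt(h.var() + eps)

# Random stand-ins for trained weights.
W_g = rng.normal(size=(d_ff, d_model)) / np.sqrt(d_model)
W_u = rng.normal(size=(d_ff, d_model)) / np.sqrt(d_model)
W_d = rng.normal(size=(d_model, d_ff)) / np.sqrt(d_ff)
W_attn = rng.normal(size=(d_model, d_model)) / np.sqrt(d_model)

def glu(h):
    # y_GLU(h) = W_d (sigma(W_g h) ⊙ W_u h)
    return W_d @ (silu(W_g @ h) * (W_u @ h))

def toy_attn(h):
    # Placeholder for attention: one linear map, for illustration only.
    return W_attn @ h

def block(x, pre=None, post_mlp=None, post_block=None):
    """One block; each hook maps the activation it sees to a steering vector."""
    a = toy_attn(layer_norm(x))
    if pre is not None:          # pre-MLP: steer the attention output
        a = a + pre(a)
    r = x + a                    # first skip connection
    y = glu(layer_norm(r))
    if post_mlp is not None:     # post-MLP: the steer sees only the GLU output
        y = y + post_mlp(y)
    out = r + y                  # second skip connection
    if post_block is not None:   # post-block: the steer sees skip + GLU
        out = out + post_block(out)
    return out

x = rng.normal(size=d_model)
v = 0.1 * rng.normal(size=d_model)
base = block(x)
steered_mlp = block(x, post_mlp=lambda y: v)
steered_blk = block(x, post_block=lambda o: v)
```

For a fixed vector the post-MLP and post-block steers give the same block output. They only differ once the steer depends on its input, because the post-block hook receives `r + y` while the post-MLP hook receives `y` alone.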

Note: $\Delta h$, the steering vector, can depend on the input. Sometimes this is written explicitly as $\Delta h(h)$, but other times it is omitted.

- Fixed-vector: The simplest form of steering adds a fixed vector $v$ to the hidden state, so $\Delta h = v$.

- Linear/ReFT: Linear steering involves some matrix $A$ and bias vector $b$, where the steering vector is a linear (affine) function of the hidden state, $\Delta h(h) = Ah + b$. However, the matrix $A$ is usually replaced by a low-rank matrix (usually written as $W_uW_d^\top$ or $AB^\top$). The rank of this matrix is written as $r$ when necessary.

- Non-linear: This steering is parameterized by a 1-layer MLP with matrices $W_d, W_u$ as the down- and up-projections and SiLU activation $\phi$: the hidden state is down-projected, passed through $\phi$, and then up-projected.

- Freely parametrized/Oracle: In this case, the steering vector has no explicit parameterization. It can depend on the hidden state $h$ in any way. The oracle specifically is the difference between the hidden states of the fine-tuned and base models, $\Delta h_{\mathrm{oracle}} = h_{\mathrm{FT}} - h_{\mathrm{base}}$.
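The four parameterizations can be written down in a few lines of NumPy. This is a sketch rather than the exact adapters used in the experiments: the names `W_down` and `W_up` stand in for the adapter's $W_d$ and $W_u$, and the precise form of the non-linear adapter (no biases, SiLU applied between the two projections) is an assumption.

```python
import numpy as np

rng = np.random.default_rng(0)
d, r = 8, 2

def silu(z):
    return z / (1.0 + np.exp(-z))

v = rng.normal(size=d)              # fixed steering vector
W_down = rng.normal(size=(d, r))    # adapter down-projection (W_d in the text)
W_up = rng.normal(size=(d, r))      # adapter up-projection (W_u in the text)
b = rng.normal(size=d)

def steer_fixed(h):
    # Same vector for every hidden state.
    return v

def steer_linear(h):
    # Low-rank affine map: (W_up W_down^T) h + b, rank at most r.
    return W_up @ (W_down.T @ h) + b

def steer_nonlinear(h):
    # Down-project, apply SiLU, up-project (biases omitted: an assumption).
    return W_up @ silu(W_down.T @ h)

def steer_oracle(h_base, h_ft):
    # Needs the fine-tuned hidden state, so it is a diagnostic, not a method.
    return h_ft - h_base

h = rng.normal(size=d)
h_ft = h + rng.normal(size=d)       # stand-in for a fine-tuned hidden state
h_steered = h + steer_oracle(h, h_ft)
```

Both the linear and the non-linear variants can only write into the column space of `W_up`, which is what keeps them low-rank.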

With notation in place, we are now ready to begin our analysis!

Where should we steer?

Let’s start with the main question that drives the rest of our analysis:

At which points in the network can steering match the effect of updating the weights in that same module?

We will examine pre-MLP (steering attention outputs, like LoFIT) and post-MLP (steering the MLP output before the skip connection, like ReFT) steering as they are the most common choices in the literature. These spots nicely sandwich the MLP, so the most immediate module affected by steering at these points is the MLP itself. Our first step is simple: compare the output changes caused by steering at these locations to the output changes caused by tuning the MLP weights.

Note: Before we start, it will feel like there is a lot of math here, but we promise, everything in this section is linear algebra.

Finetuning

Let the MLP output be

\[y(h)=W_d m(h), \quad m(h) = \sigma(a_g) \odot a_u, \quad a_g = W_g h, \quad a_u = W_u h.\]Finetuning the weights gives us

\[W_g \mapsto W_g + \Delta W_g, \quad W_u \mapsto W_u + \Delta W_u, \quad W_d \mapsto W_d + \Delta W_d.\]The updates $\Delta W_g$ and $\Delta W_u$ induce the following changes in their immediate outputs:

\[\delta a_g = (\Delta W_g) h, \quad \delta a_u = (\Delta W_u) h.\]A first order Taylor expansion of $m = \sigma(a_g) \odot a_u$ gives

\[\delta m = (\sigma'(a_g) \odot a_u) \odot \delta a_g + \sigma(a_g) \odot \delta a_u + \text{(higher order terms)}.\]Plugging in $\delta m$ into finetuning output gives us

\[y_{\mathrm{FT}} (h) = (W_d + \Delta W_d)(m+\delta m) \approx W_d m + \Delta W_d m + W_d \delta m.\]This yields the first-order shift caused by finetuning:

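\[\boxed{ \begin{aligned} \delta y_{\mathrm{FT}} &\equiv y_{\mathrm{FT}}(h) - y(h) \\ &\approx (\Delta W_d) m + W_d [ (\sigma'(a_g) \odot a_u) \odot ((\Delta W_g) h) + \sigma(a_g) \odot ((\Delta W_u) h) ]. \end{aligned} }\]

Pre-MLP steering

Pre-MLP steering leaves the weights untouched and replaces $h$ with $h + \Delta h$, which induces

\[\delta a_g = W_g \Delta h, \quad \delta a_u = W_u \Delta h.\]Plugging these into the same first-order expansion of $\delta m$, and then into $y = W_d m$, gives us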

\[\boxed{ \delta y_{\mathrm{pre}} \approx W_d [(\sigma'(a_g) \odot a_u) \odot (W_g \Delta h) + \sigma (a_g) \odot (W_u \Delta h)]. }\]Let’s compare the finetuning and pre-MLP steering output shifts side by side

\[\begin{align*}\delta y_{\mathrm{FT}} &\approx (\Delta W_d) m + & W_d [ (\sigma'(a_g) \odot a_u) \odot &((\Delta W_g) h) + \sigma(a_g) \odot ((\Delta W_u) h) ], \\ \delta y_{\mathrm{pre}} &\approx &W_d [(\sigma'(a_g) \odot a_u) \odot &(W_g \Delta h) + \sigma (a_g) \odot (W_u \Delta h)]. \end{align*}\]Notice that the second term in $\delta y_{\mathrm{FT}}$ is structurally similar to $\delta y_{\mathrm{pre}}$. What does this imply?

In principle, pre-MLP steering can match the shift caused by the updates $\Delta W_u$ and $\Delta W_g$, if there exists a single $\Delta h$ such that $W_g \Delta h \approx (\Delta W_g) h$ and $W_u \Delta h \approx (\Delta W_u) h$.
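As a sanity check on the two first-order formulas, the sketch below compares them against exact differences on a random GLU. Everything here is illustrative: the weights are random, SiLU is assumed for $\sigma$, and the perturbation scale `eps` is an arbitrary small number chosen so that higher-order terms are negligible.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_ff, eps = 6, 12, 1e-4

def silu(z):
    return z / (1.0 + np.exp(-z))

def silu_grad(z):
    s = 1.0 / (1.0 + np.exp(-z))
    return s * (1.0 + z * (1.0 - s))

def glu(h, W_g, W_u, W_d):
    return W_d @ (silu(W_g @ h) * (W_u @ h))

W_g = rng.normal(size=(d_ff, d_model))
W_u = rng.normal(size=(d_ff, d_model))
W_d = rng.normal(size=(d_model, d_ff))
h = rng.normal(size=d_model)

a_g, a_u = W_g @ h, W_u @ h
m = silu(a_g) * a_u

# Small weight updates (finetuning) and a small steering vector (pre-MLP).
dW_g = eps * rng.normal(size=W_g.shape)
dW_u = eps * rng.normal(size=W_u.shape)
dW_d = eps * rng.normal(size=W_d.shape)
dh = eps * rng.normal(size=d_model)

def delta_m(da_g, da_u):
    # First-order Taylor expansion of m = sigma(a_g) ⊙ a_u.
    return (silu_grad(a_g) * a_u) * da_g + silu(a_g) * da_u

y = glu(h, W_g, W_u, W_d)

exact_ft = glu(h, W_g + dW_g, W_u + dW_u, W_d + dW_d) - y
first_ft = dW_d @ m + W_d @ delta_m(dW_g @ h, dW_u @ h)

exact_pre = glu(h + dh, W_g, W_u, W_d) - y
first_pre = W_d @ delta_m(W_g @ dh, W_u @ dh)

err_ft = np.linalg.norm(exact_ft - first_ft) / np.linalg.norm(exact_ft)
err_pre = np.linalg.norm(exact_pre - first_pre) / np.linalg.norm(exact_pre)
print(f"relative error, finetuning: {err_ft:.2e}; pre-MLP steering: {err_pre:.2e}")
```

Both relative errors shrink roughly in proportion to `eps`, which is what one expects when only second-order terms are being dropped.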

Wait, what about the first term $(\Delta W_d) m$? For a $\Delta h$ to match this term, $(\Delta W_d) m$ must lie in a space reachable by pre-MLP steering. Let’s factor out $\Delta h$ to see what that space looks like:

\[\delta y_{\mathrm{pre}} \approx W_d [(\sigma'(a_g) \odot a_u) \odot W_g + \sigma(a_g) \odot W_u] \Delta h.\]Define $J(h) = (\sigma'(a_g) \odot a_u) \odot W_g + \sigma(a_g) \odot W_u$ and $A(h) = W_d J(h)$, where the elementwise product between a vector and a matrix is performed row-wise, i.e. $a \odot W = (a\mathbf{1}^\top) \odot W = \mathrm{diag}(a)\,W$.

We can rewrite \(\delta y_{\mathrm{pre}} \approx A(h) \Delta h.\)

For pre-MLP steering to match the MLP shift caused by finetuning, we must have: \((\Delta W_d) m \in \text{col}(A(h)).\) What does this mean?

- Since $\text{col}(A(h)) = \text{col}(W_d J(h)) \subseteq \text{col}(W_d)$, any part of $(\Delta W_d) m$ orthogonal to $\text{col}(W_d)$ is unreachable.

- If finetuning pushes $\Delta W_d$ to new directions not spanned by $A(h)$, pre-MLP steering cannot match it.

- Note that as this condition must hold for every token $h$, this becomes really restrictive.
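The reachability condition can be probed numerically with a least-squares solve: find the best $\Delta h$ and look at what is left over. The sketch below is a toy setup, not a measurement on a real model. In particular, `W_d` is deliberately built with rank `k` smaller than `d_model` so that $\text{col}(W_d)$ is a strict subspace, and `dW_d` is a generic random update.

```python
import numpy as np

rng = np.random.default_rng(1)
d_model, d_ff, k = 8, 16, 5     # k: rank of W_d, below d_model on purpose

def silu(z):
    return z / (1.0 + np.exp(-z))

def silu_grad(z):
    s = 1.0 / (1.0 + np.exp(-z))
    return s * (1.0 + z * (1.0 - s))

W_g = rng.normal(size=(d_ff, d_model))
W_u = rng.normal(size=(d_ff, d_model))
W_d = rng.normal(size=(d_model, k)) @ rng.normal(size=(k, d_ff))

h = rng.normal(size=d_model)
a_g, a_u = W_g @ h, W_u @ h
m = silu(a_g) * a_u

# J(h): rows of W_g and W_u rescaled elementwise, i.e. diag(a) W.
J = (silu_grad(a_g) * a_u)[:, None] * W_g + silu(a_g)[:, None] * W_u
A = W_d @ J                     # d_model x d_model, rank at most k

def best_steer_residual(target):
    """Relative residual of the best Δh for A Δh ≈ target (least squares)."""
    dh, *_ = np.linalg.lstsq(A, target, rcond=None)
    return np.linalg.norm(A @ dh - target) / np.linalg.norm(target)

dW_d = rng.normal(size=W_d.shape)          # generic down-projection update
res_generic = best_steer_residual(dW_d @ m)

# A target built inside col(A) is reachable by construction.
res_inside = best_steer_residual(A @ rng.normal(size=d_model))
print(f"generic update: {res_generic:.2f}, target in col(A): {res_inside:.1e}")
```

A generic $(\Delta W_d) m$ leaves a sizeable residual, while a target already inside $\text{col}(A(h))$ is matched to numerical precision. With a full-rank `W_d` a single token can always be matched, so the restriction then comes from needing one steering rule that works for every token.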

Bottom line:

Pre-MLP steering can partially imitate MLP finetuning (the $\Delta W_g$ and $\Delta W_u$ effects), but matching the full MLP update is generally very hard, if not impossible.

Post-MLP steering

Post-MLP steering directly modifies the output of the MLP $y$.

Because it acts after all the non-linearities inside the MLP, a sufficiently expressive parameterization could, in principle, reproduce any change made by finetuning the MLP weights. For example, if we were allowed a fully flexible oracle vector,

\[\Delta y_{\mathrm{oracle}}(h) = y_{\mathrm{FT}}(h) - y(h),\]then adding this vector would give us exactly the fine-tuned MLP output.

This already puts post-MLP steering in a much better position than pre-MLP steering when it comes to matching MLP weight updates. So are we all set with post-MLP steering as the way to go? Not quite.

The missing piece: the skip connection

Let’s look back at the structure of a Transformer block:

Post-MLP steering only modifies the MLP term.

But the block output is the sum of:

- the MLP contribution (which we can steer), and

- the skip-connected input (I’) (which remains unchanged under post-MLP steering).

So even if post-MLP steering perfectly matches the MLP shift, it will not modify the skip-connection term, which would be needed to mimic a fine-tuned model. This is not to say that post-MLP cannot learn some more complex steering from linear layers to mimic the same effect, but that is, in a way, less natural. This ‘naturality’ shows itself in the experiments below.

How big is this mismatch?

Here’s a look at the relative scale of the outputs of the MLP and the Attention modules at different layers in an LLM. Across layers, post-MLP steering covers at most ~70% of the block output that finetuning changes, and in some layers, as little as ~40%.

The rest remains untouched, meaning post-MLP steering on a block-specific level cannot fully replicate the effect of finetuning at that block.

Summary

- Post-MLP steering is better positioned than pre-MLP steering when it comes to matching arbitrary MLP updates.

- However, it is not well-positioned for the changes happening through the skip-connection.

- Matching MLP behavior is not enough; we also need a way to account for the skip path.

Now that we’ve sorted out the “where to steer” part of the story, the next piece of the puzzle is how much steering can actually do. In other words: how expressive can activation steering be? Since ReFT is the most widely used steering method today, and represents the strongest linear steering baseline, it’s the right place to begin.

ReFT performs post-MLP steering parameterized by

\[\delta y_{\mathrm{ReFT}} = \textbf{R}^\top (\textbf{W} y + \textbf{b} - \textbf{R}y).\]We use $\delta y$ to indicate this is happening after the MLP rather than before. Here $\textbf{R}, \textbf{W}, \textbf{b}$ are learned parameters, where $\textbf{R} \in \mathbb{R}^{r \times d_{\mathrm{model}}}$ has rank $r$ and orthonormal rows, $\textbf{W} \in \mathbb{R}^{r \times d_{\mathrm{model}}}$, and $\mathbf{b} \in \mathbb{R}^r$.

Recall that the output of the MLP layer is $y = W_d m$, so we can write

\[\begin{align*} \delta y_{\mathrm{ReFT}} &= y_{\mathrm{ReFT}} - y = (\textbf{R}^\top \textbf{W} - \textbf{R}^\top\textbf{R})W_d m + \textbf{R}^\top \textbf{b} \\ &= \Delta W_d ^{\mathrm{eff}} m + \textbf{R}^\top \textbf{b}, \quad \quad \Delta W_d ^{\mathrm{eff}} = (\textbf{R}^\top \textbf{W} - \textbf{R}^\top\textbf{R})W_d \end{align*}\]Now, let’s compare $\delta y_{\mathrm{ReFT}}$ with the $\delta y_{\mathrm{FT}}$ we have from before. ReFT can induce a $\Delta W_d$-like update, but only within the subspace spanned by $\textbf{R}$. So its ability to mimic full finetuning depends on the nature of the $\Delta W_d$ update: whether it is low-rank enough to fit inside that subspace.

The second term, $\textbf{R}^\top\textbf{b}$, is a constant offset. Together with the linear term, it can only reproduce the shift that $\Delta W_u$ and $\Delta W_g$ induce in $\delta y_{\mathrm{FT}}$ if that shift is approximately an affine function of the post-MLP output $y$. This depends on how locally linear the mapping $h \mapsto y$ is. When these conditions hold, ReFT can approximate the effects of MLP weight updates reasonably well. However, as we show in our experiments (Table 1 in the next section), there are many situations where this does not hold, with ReFT performing significantly below the SFT model.
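The rewriting of the ReFT shift as an effective $\Delta W_d$ update plus a constant can be checked directly. This is a small NumPy sketch with random parameters, not the ReFT library's implementation. `R` is given orthonormal rows through a QR factorization, and `m` is a random stand-in for the gated activation $m(h)$.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_ff, r = 8, 16, 2

# R: r x d_model with orthonormal rows (transpose of a reduced-QR Q factor).
Q, _ = np.linalg.qr(rng.normal(size=(d_model, r)))
R = Q.T
W = rng.normal(size=(r, d_model))
b = rng.normal(size=r)

W_d = rng.normal(size=(d_model, d_ff))
m = rng.normal(size=d_ff)        # stand-in for m(h)
y = W_d @ m                      # post-MLP output

# ReFT shift written as an activation edit ...
delta_reft = R.T @ (W @ y + b - R @ y)

# ... and as an effective down-projection update plus a constant offset.
dW_eff = (R.T @ W - R.T @ R) @ W_d
delta_as_update = dW_eff @ m + R.T @ b

# The shift never leaves the r-dimensional subspace spanned by the rows of R.
outside = (np.eye(d_model) - R.T @ R) @ delta_reft
```

The two expressions agree, `dW_eff` has rank at most `r`, and `outside` is zero up to rounding, which is the subspace restriction discussed above.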

How expressive can steering really be?

Now that we’ve seen that steering after the skip-connection gives us the most expressivity, let’s see how good it can really be! In fact, our goal will be to match SFT, so let’s see how far we get.

The simplest (and strongest) thing when given the SFT model would be to let the steering vectors be the oracle from above. Just to recall,

\[\Delta h_{\mathrm{oracle}} = h_{\mathrm{FT}} - h_{\mathrm{base}}\]so, quite literally,

\[h_{\mathrm{steer}} = h_{\mathrm{base}} + \Delta h_{\mathrm{oracle}} = h_{\mathrm{FT}}\]

Okay, that’s a bit much. This is simply overwriting the hidden state of the base model with the hidden state of the fine-tuned model. However, this still provided a lot of insight into where to steer, and the properties of a desired steering method.

Taking all of these different oracle steering vectors, their properties can be looked at for patterns to exploit. We quickly found that these vectors were close to low-rank (their covariance had a concentrated spectrum). But be careful! Just because the oracle steering vectors almost lie in some low-dimensional subspace, it does not mean the transformation from hidden states to steering vectors is linear! Sometimes it might be, sometimes it won’t.

In fact, if we try and replace the oracle with the best linear approximation of the map between hidden states and steering vectors, we find that the oracle goes from perfect matching to similar-to-or-worse-than ReFT!

Here, we are taking generations on some prompts and comparing the average KL divergence between the fully fine-tuned model’s output probabilities and those of ReFT’s and the linearized oracle’s. The oracle often deviates further from the fine-tuned model than ReFT does, although not by much.
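The distinction between "low-rank" and "linear" is easy to reproduce on synthetic data. The sketch below does not use the oracle vectors from our experiments. It builds hypothetical steering vectors that live exactly in a rank-`r` subspace but depend on the hidden state through a `tanh`, and then fits the best affine map by least squares.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d, r = 2000, 16, 2

# Orthonormal read and write directions for a rank-r, non-linear steer.
U, _ = np.linalg.qr(rng.normal(size=(d, r)))
V, _ = np.linalg.qr(rng.normal(size=(d, r)))

H = rng.normal(size=(n, d))             # hidden states, one per row
dH = np.tanh(3.0 * H @ V) @ U.T         # synthetic "oracle" steering vectors

# Spectrum of the steering vectors: how much energy sits in the top r directions?
s = np.linalg.svd(dH - dH.mean(axis=0), compute_uv=False)
energy_top_r = (s[:r] ** 2).sum() / (s ** 2).sum()

# Best affine map from hidden states to steering vectors (least squares).
X = np.hstack([H, np.ones((n, 1))])
coef, *_ = np.linalg.lstsq(X, dH, rcond=None)
rel_resid = np.linalg.norm(X @ coef - dH) / np.linalg.norm(dH)

print(f"energy in top {r} directions: {energy_top_r:.4f}")
print(f"relative residual of best affine fit: {rel_resid:.2f}")
```

Essentially all of the energy sits in two directions, yet the best affine fit still leaves a large residual. A concentrated spectrum alone therefore says nothing about whether a linear adapter will be enough.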

The best way around this would be to learn a low-rank, non-linear function as the map between the hidden states and the steering vectors. What better than a small autoencoder! Its output space would be constrained by the column space of the up-projection, so it will still be low-rank. Steering post-skip-connection isn’t a new idea (see [4] and [5], for example), but the systematic justification presented here is: when steering block-by-block, post-block steering will be the most expressive.

At this point, it seemed like we understood what would likely work as a steering vector, so we moved away from using the oracle as the gold-standard to match and now worked on training these adapters end-to-end.

Post-Block Performance

Now we train these steering vectors without a guide. We add low-rank steering modules at the end of each block (similar in spirit to LoRA/PEFT), but applied to activations rather than weights. Based on the discussion above, we test two variants:

- Linear adapters: simple low-rank adapters.

- Non-linear adapters: a down-projection, followed by a nonlinearity, then an up-projection (essentially a tiny autoencoder).

The nonlinear version is motivated by our earlier observation (and by [4] and [5]) that the map from hidden states to steering vectors may itself be nonlinear, while still being largely confined to a low-rank subspace.

Here are the results we’re currently seeing for 1B-parameter models:

For a fair comparison, we match the parameter counts of our adapters to the baselines.

- Against ReFT, we use rank-8 adapters for both ReFT and our method.

- Against LoFIT, we train fixed vectors (rather than adapters) per layer.

- Against JoLA, we train rank-1 adapters.

Across both Llama-1B and Gemma-1B, the trend is consistent: simply moving the steering location to post-block leads to a substantial boost in performance. Under identical parameter budgets, our linear post-block steering outperforms ReFT, and our fixed-vector and rank-1 variants outperform LoFIT and JoLA respectively.

In a few cases, linear steering even outperforms LoRA (learning an adapter on every Linear module) with the same rank, and occasionally out-performs SFT using full rank! This shows there are situations where steering is a better choice than finetuning.

What is surprising about these results is that linear steering performs better than non-linear steering. Non-linear steering is not necessarily more expressive than linear steering (with the same rank), and for these tasks, it seems that the steering really is linear-like, validating the choice in ReFT. In this situation, it would be better to give the steering more rank as a pure linear model than to have a non-linearity interfering with this structure.

Now compare this to some larger, 4B-parameter models:

The behavior is different! Now, non-linear steering typically performs better than linear steering (except notably in Winogrande). This shift is likely due to optimization effects of the different scales of model tested here. At the larger scale, the loss function typically ends up being smoother/flatter than smaller scale models. This could mean the larger models can learn the more-expressive non-linear adapter over the easier-to-learn linear adapter.

Additionally, this shift could mean something more fundamental to the best adapters as well. If the adapter is well-suited as a linear adapter with the correct rank, the non-linear adapter would have to work around its non-linearity with something like large scale parameters to match the linear adapter. So, it’s possible that the smaller models need a small-rank linear adapter while the larger models need a large-rank non-linear adapter, but this is left to future work.

Theory

Post-block is better than Post-MLP

Now that we’ve seen that there is a clear difference between post-MLP and post-block steering, we should ask why there is such a difference. What makes post-block steering better than post-MLP?

The difference, already highlighted, is that a post-block steer can depend on outputs of the attention layer without passing through the GLU. To gain some simple intuition, let’s take a 1-layer model, made of one Attention layer and one GLU:

\[y(x) = x + \mathrm{Attn}(x) + \mathrm{GLU}(x + \mathrm{Attn}(x))\]where $x$ is the input of the model.

The two steerings we will be comparing are a post-MLP steer, that only depends on the outputs of the GLU, and a post-block steer, which steers the model after the skip-connection is added back into the model (which for this model, will just be another steer at the very end that depends on the original model outputs). Let’s remind ourselves of the structure of the GLU/MLP:

\[y_{\mathrm{GLU}}(h) = W_d(\sigma(W_g h) \odot W_u h)\]Consider the (fairly extreme) case where $W_d = 0$. In this situation, the GLU will be identically 0, so any input-dependent post-MLP steering $\Delta y_{\mathrm{MLP}}$ collapses to a fixed vector. Compare this to the post-block steering, which can still depend on $x + \mathrm{Attn}(x)$. This also extends to cases where $W_d$ is not full-rank: the steering cannot depend on the directions in the null-space of $W_d^\top$, since the only contribution of a post-MLP steer in the directions perpendicular to $\text{col}(W_d)$ is a fixed vector, while post-block steering does not have this restriction.

This tells us:

There are some situations in which post-MLP steering does not perform well while post-block steering does.

Is it the case that post-block steering can always do what post-MLP steering can do? Unfortunately, no, not always. After the skip-connection is added back, the sum is not necessarily invertible, meaning the skip-connection and GLU terms cannot always be told apart; their subspaces might overlap with each other. When the subspaces do not overlap, post-block steering can match post-MLP steering perfectly.

To gain some intuition, let’s restrict ourselves to the linear case, where post-MLP steering is a steering in the style of ReFT. Now, to ensure invertibility, assume that the subspace spanned by the skip-connection and the subspace spanned by the MLP outputs have trivial intersection. That is to say that there is a projection map $P$ such that

\[P(h + \mathrm{Attn}(h) + \mathrm{GLU}(h + \mathrm{Attn}(h))) = h + \mathrm{Attn}(h)\]At this point, the equivalence is easy to see. Since $P$ keeps only the skip term, $I - P$ recovers the GLU output from the block output. If $A$ is the rank-$r$ linear map used for post-MLP steering, then the post-block steer $\Delta h = A (I - P)\, h_{\mathrm{block}}$, where $h_{\mathrm{block}}$ is the block output, will match the post-MLP steering perfectly. It will also be rank-$r$ (or less), since $A(I - P)$ has rank at most $r$.
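Here is a toy numerical version of this argument. Since $P$ as defined keeps the skip part of the block output, the sketch uses the complementary projector `Q` (equal to $I - P$ on the span of the two subspaces) to recover the GLU output that a post-MLP steer would see. The subspace dimensions and the random bases `B_s` and `B_g` are hypothetical choices made only for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
d, k_s, k_g, r = 8, 4, 3, 2

B_s = rng.normal(size=(d, k_s))     # basis of the skip-path subspace
B_g = rng.normal(size=(d, k_g))     # basis of the GLU-output subspace
B = np.hstack([B_s, B_g])           # full column rank, so trivial intersection
coords = np.linalg.pinv(B)          # coordinates of any vector in span(B)

P = B_s @ coords[:k_s]              # keeps the skip part, removes the GLU part
Q = B_g @ coords[k_s:]              # keeps the GLU part; I - P on span(B)

# Rank-r linear post-MLP steer.
A = rng.normal(size=(d, r)) @ rng.normal(size=(r, d))

skip = B_s @ rng.normal(size=k_s)       # stand-in for h + Attn(h)
glu_out = B_g @ rng.normal(size=k_g)    # stand-in for GLU(h + Attn(h))
block_out = skip + glu_out

post_mlp_steer = A @ glu_out            # sees only the GLU output
post_block_steer = (A @ Q) @ block_out  # sees the full block output
```

The two steering vectors coincide, and `A @ Q` has rank at most `r`, so the post-block steer needs no more capacity than the post-MLP steer it imitates.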

Of course, this setting where these two vector spaces are completely separate is a bit extreme. Steering might need to be done in the same direction as the steered vector. At this point, some probability needs to be involved since any projection will no longer be perfect. However, we save this for the paper.

Error Control

One last thing to mention is that, since we have an oracle at each layer, it’s now possible to measure how similar we are to that oracle. This is, of course, assuming that we have access to the fine-tuned model we want to mimic. Soon we will remove this and move towards a more oracle-free understanding, but for the moment, assume we have access to this oracle. We also now have fine-grained control over errors and how they grow through the model! At each layer, it’s possible to develop an approximate steering vector $\Delta h'$ to match the oracle $\Delta h_{\mathrm{oracle}}$ closely enough that no layer has an error between them of more than some $\epsilon$.

Importantly, this gives us knowledge of how complex our adapters need to be. For example, if our update is nearly linear, we can take enough rank $r$ so that the approximation is within $\epsilon$, ensuring that the error does not grow out of control through the model.

The exact form of this required control is beyond the scope of this blog. However, there are a few key insights.

- The layer-norms help control the error significantly since in most models, their vector-wise standard deviation is somewhat large.

- Differences in hidden-states are amplified by the 2-norms of the matrices of each layer times $\sqrt{n}$, where $n$ is the representation dimension. The difference in 2-norm between the matrices ends up with a factor of $n$ instead, so it is more important that steering is more expressive on layers where the difference in matrices is larger (unsurprisingly so).

We plan to further improve these bounds with nicer assumptions based on the behavior of real models soon!

Note: The model we analyze in this theory section is a drastically simplified stand-in for a real block, but the same geometry (post-block can see both Attn and GLU, post-MLP only sees GLU) persists in deeper models.

Where this leaves us

If you’ve made it this far—kudos! That’s pretty much all we have to say (for now, wink). To conclude, here are some highlights of everything we unpacked:

- Pre-MLP vs. Post-MLP: They behave very differently, and post-MLP generally does a better job matching MLP weight updates.

- But Post-Block is generally better than Post-MLP. Steering the residual stream, not individual module outputs, is the real sweet spot.

- With only 0.04% trainable parameters (compared to LoRA’s 0.45% using the same rank), our method at this post-block location gets remarkably close to SFT, which updates all parameters.

Where do we go from here?

Lowering the number of trainable parameters is more than an efficiency win. It buys us compute headroom for adapting larger models and for running more expensive algorithms like RL, all within realistic compute budgets.

Because our framework reasons about steering and weight updates in their most general form (arbitrary $\Delta h$ and arbitrary $\Delta W$), there’s nothing stopping those updates from being learned by far more expensive methods (e.g., GRPO or other RL-style objectives). So the natural next step is clear: test whether our steering can plug into these stronger algorithms and deliver the same performance with a fraction of the trainable parameters.

And now that we know it’s possible to learn nearly as well in activation space as we can in weight space, in even more settings than ReFT originally could, an even more ambitious idea opens up:

What happens if we optimize in both spaces together?

Perhaps, jointly optimizing them might help us break past the limitations or local minima that each space hits on its own?

Stay tuned to find out!

🧪 Want to try it yourself?

We’ve packaged everything — activation adapters, post-block steering layers, training scripts — into a clean, lightweight library.

👉 **Try our code on GitHub** 🐙

References

[1] Wu, Z., Arora, A., Wang, Z., Geiger, A., Jurafsky, D., Manning, C., & Potts, C. (2024). ReFT: Representation Finetuning for Language Models.

[2] Yin, F., Ye, X., & Durrett, G. (2024). LoFiT: Localized Fine-tuning on LLM Representations. In The Thirty-eighth Annual Conference on Neural Information Processing Systems.

[3] Lai, W., Fraser, A., & Titov, I. (2025). Joint Localization and Activation Editing for Low-Resource Fine-Tuning. arXiv preprint arXiv:2502.01179.

[4] Houlsby, N., Giurgiu, A., Jastrzebski, S., Morrone, B., De Laroussilhe, Q., Gesmundo, A., … & Gelly, S. (2019). Parameter-efficient transfer learning for NLP. In International conference on machine learning.

[5] Tomanek, K., Zayats, V., Padfield, D., Vaillancourt, K., & Biadsy, F. (2021). Residual adapters for parameter-efficient ASR adaptation to atypical and accented speech. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing.

Appendix

Implementation details:

For all methods, we sweep across 5 learning rates and keep other hyperparameters constant (batch size, scheduler, warmup ratio, weight decay). We keep the same learning rate sweep space for ours, ReFT, JoLA, and LoFIT, and slightly shift to smaller values for SFT and LoRA (since they train substantially more parameters).

The ReFT paper also treats the tokens to steer as a hyperparameter. After selecting the best learning rate, we sweep over two locations: the last prompt token (the default value) and (prefix+7, suffix+7) (the best configuration reported for GSM8K). Our method does not require this sweep, since it intervenes at all token positions (both prompt and generated).

- SFT sweep values: [5e-6, 1e-5, 2e-5, 5e-5, 1e-4]

- LoRA sweep values: [5e-5, 1e-4, 3e-4, 7e-4, 1.5e-3]

- Activation steering methods sweep values: [5e-4, 1e-3, 2e-3, 3e-3, 5e-3]


Over the past decade, natural language processing (NLP) has evolved significantly, beginning with word2vec in 2013. Word2vec’s word embeddings can capture nuanced lexical relationships through vector arithmetic. The famous equation “king - man + woman ≈ queen” is a prime example of how word co-occurrences were used to embed semantic meaning. This approach’s emphasis on maintaining synonym and hypernym relationships stands in contrast to CLIP’s text encoder, which is primarily optimized for aligning images with text rather than preserving these detailed linguistic structures. As NLP developed, GloVe in 2014 improved word representations using global co-occurrence statistics. In 2018, BERT introduced a transformer-based approach, providing context-dependent word representations and setting new performance benchmarks. Following this, large language models (LLMs) like GPT-2 and GPT-3 emerged and further refined language understanding. As these models shifted focus toward richer contextual embeddings, the legacy of explicitly encoding relational properties gradually diminished, leaving modern VLMs like CLIP sensitive to linguistic variations.

We will start our story with the following observation:

Semantic Disconnection: Uncovering CLIP’s Latent Space Limitations

One major issue is that CLIP’s latent space struggles to capture semantic differences after its pretraining stage. Consider these observations:

- Synonyms vs. Antonyms: The cosine similarity between synonyms can be even lower than that between obvious opposites. For example

- $\cos(\textit{happy}, \textit{sad}) = 0.8 \quad > \quad \cos(\textit{happy}, \textit{cheerful}) = 0.79$

- $\cos(\textit{smart}, \textit{dumb}) = 0.8 \quad > \quad \cos(\textit{smart}, \textit{intelligent}) = 0.75$

And this is a common phenomenon for words across the WordNet hierarchy!

- Template Variability: When words are embedded in different prompt templates, experiments reveal that only about 30% of expected semantic relationships remain consistent.

These challenges underscore why traditional methods—such as manual prompt engineering or static fine-tuning—often fall short. They are typically time-consuming, narrowly focused, and lack the ability to generalize across varied datasets or scenarios.

Methodology - Power of Wu-Palmer Similarity

Our approach focuses exclusively on fine-tuning the text encoder while leaving the image encoder unchanged. The key idea is to explicitly incorporate lexical relationships and adjust the text embedding space such that:

- Equivalent Expressions are mapped closer together.

- Other Words are pushed further apart.

Inspired by network embedding methods that are explicitly designed to preserve structure, we seek a metric that captures these inherent relationships. This motivation leads us to the Wu-Palmer Similarity, which is a measure used to quantify the semantic similarity between two concepts within a taxonomy, like WordNet. It relies on the depth of the concepts and their Least Common Subsumer (LCS), which is the most specific ancestor common to both concepts.

Wu-Palmer Similarity

The similarity between two concepts $c_1$ and $c_2$ is given by:

\[\text{sim}_{wup}(c_1, c_2) = 2 \times \frac{\text{depth}(LCS(c_1, c_2))}{\text{depth}(c_1) + \text{depth}(c_2)}\]where:

- $depth(c)$ denotes the depth of concept $c$ in the taxonomy.

- $LCS(c_1, c_2)$ is the least common subsumer of $c_1$ and $c_2$.

- Range: The similarity score lies between 0 and 1, so it falls inside the $[-1, 1]$ range of cosine similarity and can be used directly as a target for it.

- Higher Score: A higher score indicates a greater degree of semantic similarity, as the concepts share a more specific (deeper) common ancestor.
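To make the formula concrete, here is a minimal sketch on a made-up five-node taxonomy. The `PARENT` table and the helper names are illustrative assumptions, not part of the post's code, and depth is counted in nodes so that the root has depth 1 (one common convention).

```python
# Toy taxonomy (hypothetical): child -> parent. The root has parent None.
PARENT = {
    "entity": None,
    "animal": "entity",
    "dog": "animal",
    "cat": "animal",
    "puppy": "dog",
}

def path_to_root(concept):
    """Return [concept, parent, ..., root]."""
    path = []
    while concept is not None:
        path.append(concept)
        concept = PARENT[concept]
    return path

def depth(concept):
    """Depth counted in nodes, so the root has depth 1."""
    return len(path_to_root(concept))

def lcs(c1, c2):
    """Least common subsumer: the deepest ancestor shared by c1 and c2."""
    ancestors_of_c1 = set(path_to_root(c1))
    for node in path_to_root(c2):  # walks upward, so the first hit is the deepest
        if node in ancestors_of_c1:
            return node
    raise ValueError("concepts do not share a root")

def wu_palmer(c1, c2):
    """2 * depth(LCS) / (depth(c1) + depth(c2))."""
    return 2 * depth(lcs(c1, c2)) / (depth(c1) + depth(c2))

print(wu_palmer("dog", "cat"))    # siblings under "animal"
print(wu_palmer("dog", "puppy"))  # parent and child
```

In this toy tree, the parent/child pair scores higher than the sibling pair because its common subsumer sits deeper in the hierarchy.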

Custom Loss Function

Our training objective is to fine-tune the text encoder using a composite loss that consists of two parts: a distance loss and a regularization loss. Given two tokenized words $w_i$ and $w_j$ and the model $M$ that embeds them, the losses are defined as follows:

1. Distance Loss

This component enforces that the cosine similarity between the embeddings $M(w_i)$ and $M(w_j)$ approaches a target similarity derived from the Wu-Palmer metric. Mathematically, it is given by:

\[\mathcal{L}_{\text{distance}}(w_i, w_j) = \left(c\times \Bigl( s_{\text{WP}}(w_i, w_j) - \cos\theta \bigl(M(w_i), M(w_j)\bigr) \Bigr) \right)^2\]where:

- $s_{\text{WP}}(w_i, w_j)$ is the Wu-Palmer similarity between $w_i$ and $w_j$, and $c$ is a constant scaling factor.

- $\cos\theta \bigl(M(w_i), M(w_j)\bigr)$ is the cosine similarity between their embeddings, generated by $M$ in its current state.

2. Regularization Loss

To prevent the fine-tuning process from deviating too far from the original embeddings, we introduce a regularization term. This is computed as the Euclidean Distance between the current embedding $M(w)$ and the precomputed original embedding $M_0(w)$ for each word $w$:

\[\mathcal{L}_{\text{reg}}(w) = \text{Euclidean}\Bigl( M(w), \, M_0(w) \Bigr)\]This term is scaled by a regularization strength multiplier $\lambda$.

Overall Loss Function

The combined loss function that is minimized during training is:

\[\mathcal{L} = \mathcal{L}_{\text{distance}} + \lambda \, \mathcal{L}_{\text{reg}}\]This loss encourages the model to adjust its embeddings so that:

- The cosine similarity between $M(w_i)$ and $M(w_j)$ closely matches the target Wu-Palmer similarity.

- The embeddings do not stray too far from their original values, thanks to the regularization term.
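The composite loss can be sketched in a few lines of plain Python for a single word pair. This is an illustration under assumptions, not the released training code: the function names, the default values of `c` and `lam`, and the choice to sum the regularization term over both words of the pair are all hypothetical.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def distance_loss(emb_i, emb_j, s_wp, c=1.0):
    """(c * (s_WP - cos(M(w_i), M(w_j)))) ** 2"""
    return (c * (s_wp - cosine(emb_i, emb_j))) ** 2

def reg_loss(emb, emb_orig):
    """Euclidean distance between the current and the original embedding."""
    return math.dist(emb, emb_orig)

def total_loss(emb_i, emb_j, orig_i, orig_j, s_wp, c=1.0, lam=0.1):
    # Assumption: the regularizer is summed over both words of the pair.
    reg = reg_loss(emb_i, orig_i) + reg_loss(emb_j, orig_j)
    return distance_loss(emb_i, emb_j, s_wp, c) + lam * reg

# Orthogonal embeddings (cosine 0) with a Wu-Palmer target of 0.5.
print(total_loss([1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0], s_wp=0.5))
```

In a real training loop the same quantities would be computed on tensors from the text encoder so that gradients can flow back into $M$.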

Training Loop

The training process involves iteratively updating the text encoder based on the computed loss over all word pairs. The high-level algorithm is as follows:

Benefit

By applying our method, we can

- Improve Adaptability: Enhance CLIP’s robustness when dealing with the inherent variability of natural language.

- Minimize Overhead: Achieve these improvements with minimal computational cost, all without requiring additional image content.

- Boost Accuracy: Preliminary results indicate that our approach noticeably improves image classification accuracy when class names are replaced with synonyms or hypernyms, as well as accuracy with the original class names, across multiple datasets.

This method takes a step toward overcoming the linguistic rigidity of CLIP, potentially paving the way for more robust and versatile vision-language applications.

Stay tuned as we delve deeper into the experimental insights and future directions of this research.

Experiments

Datasets

- FER2013: The Facial Expression Recognition 2013 (FER2013) dataset has 7 classes. We use it as a small-scale showcase because its class names have rich synonyms.

- ImageNet: ImageNet is a large-scale image database designed to advance visual recognition research and organized according to the WordNet hierarchy. It is also the only large image-classification dataset with an official mapping to WordNet, which our method currently relies on.

- OpenImage: The OpenImages dataset is similar to ImageNet. We carefully select 200 classes and map their class names to WordNet using GPT-4o, with human verification.

Subset Creation

In this experiment, we focus exclusively on subsets of class labels for which synonyms or hypernyms are available in WordNet. To minimize potential disturbances, we ensure that all words within each subset are unique during the subset creation process.

For the hypernym setting, we limit our use to direct (level-1) hypernyms in the WordNet hierarchy, as higher-level hypernyms tend to be overly abstract and less applicable in practical real-world scenarios.

Evaluation Strategy

To evaluate the effectiveness of our method, we compare the zero-shot classification accuracy of the original pre-trained model and our fine-tuned version on two sets of class labels: the original class names and the class names replaced by their corresponding synonyms or hypernyms. We select distinct subsets and combinations to reduce variation.
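The evaluation protocol can be sketched as follows: classify each image by its most similar class-name embedding, once with the original names and once with the replaced names. The two-dimensional vectors below are made-up stand-ins for CLIP embeddings, and `zero_shot_accuracy` is a hypothetical helper, not a function from the released code.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def zero_shot_accuracy(image_embs, labels, class_text_embs):
    """class_text_embs maps class id -> text embedding of the name used for that class."""
    correct = 0
    for img, y in zip(image_embs, labels):
        pred = max(class_text_embs, key=lambda c: cosine(img, class_text_embs[c]))
        correct += pred == y
    return correct / len(labels)

# Toy data (assumed): two images, two classes.
images = [[1.0, 0.1], [0.1, 1.0]]
labels = [0, 1]
original_names = {0: [1.0, 0.0], 1: [0.0, 1.0]}
# Same classes, but class 0 is named by a synonym whose embedding has drifted.
synonym_names = {0: [-0.2, 0.98], 1: [0.0, 1.0]}

print(zero_shot_accuracy(images, labels, original_names))
print(zero_shot_accuracy(images, labels, synonym_names))
```

The gap between the two printed numbers is the kind of synonym sensitivity the fine-tuning is meant to close.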

Results

Our method improves classification performance in both the synonym setting and the hypernym setting across different datasets. On ImageNet, we also run an experiment that mixes synonyms and hypernyms.

FER2013

As a starting point, we test our method on the FER2013 dataset. The tuned model shows a notable increase in accuracy for both the original class labels and those replaced by synonyms whenever we use a sentence template for classification.

ImageNet

Our ImageNet experiments show that fine-tuning not only boosts accuracy when we swap label names with synonyms or hypernyms, but it also improves zero-shot performance using the default labels. This means our method helps the base model get a better handle on semantics and generalize more effectively.

We conduct experiments in all three settings below.

OpenImage

To show the adaptability of our approach, we also craft a subset of OpenImage and run experiments similar to those on ImageNet. We observe a similar pattern of improvement.

Generalization

Now, let’s see how well our method generalizes. We’ll show that a model fine-tuned on different ImageNet subsets can also boost the classification accuracy on the OpenImage subset.

Discussions & Conclusions

In this post, we explored a simple way to fine-tune CLIP’s text encoder without heavy computation: aligning synonyms and hypernyms in the text embedding space. This tweak improves zero-shot classification accuracy across various datasets without even needing image content. Looking ahead, we’ll refine this approach and test its real-world applications to better connect language and vision.

Takeaways and Future Work

Our exploration of fine-tuning CLIP’s text encoder has revealed several critical challenges and exciting directions for future research:

- Scalability/Polysemy Challenges: In WordNet, 31989 out of 148730 words are polysemous. This inherent ambiguity could compromise the integrity of the underlying data structure as we scale up, necessitating advanced techniques to manage multiple word meanings effectively. In our experiments, we also observe diminishing marginal gains from our method as the number of classes increases. And while 53811 out of 117659 words have synonyms in WordNet, scaling remains a concern. Both points underscore the need for scalable and robust solutions.

- Adapting to Image-Caption Datasets: For broader applicability, we need to adjust the current methodology to work with image-caption datasets like LAION and Conceptual Captions. This adaptation could pave the way for more versatile and comprehensive vision-language models.

- Limitations with Propositional Words: Frameworks like CLIP struggle with propositional terms such as not, is a, or comparative expressions like more/less than. These limitations hinder the model’s ability to fully grasp complex semantic relationships.

We hope you enjoyed our post! Our code is also released on GitHub.


Why is synthetic tabular data useful?

The ability to generate high-quality synthetic tabular data has far-reaching applications across numerous domains. One of the most compelling use cases is medical research, where strict privacy regulations prevent real patient datasets from being widely shared. These restrictions, while essential for protecting sensitive information, can slow down collaboration and innovation. If researchers could instead generate and share synthetic patient datasets that mimic real data while ensuring privacy, medical discoveries could be accelerated on a global scale—enabling scientists to uncover insights without compromising patient confidentiality.

Table synthesis also plays crucial roles in data augmentation and missing value imputation. In many real-world scenarios, such as observations of rare weather events, collecting large, high-quality datasets is expensive or impractical (feel free to check out my current work at NASA on this exact topic, for atypically powerful and destructive hurricanes). Additionally, many datasets suffer from incomplete records, and advanced synthesis techniques can be used to intelligently fill in missing values, preserving the integrity and usability of the data. For example, missing data is a common challenge in air traffic control when analyzing flight patterns and schedules. Reliable tabular missing value imputation methods would allow aviation authorities to reconstruct missing flight paths, estimate delay probabilities, and optimize air traffic flow—even when real-time data is incomplete.

How Tabby works

Tabby introduces a set of architectural modifications that can be applied to any transformer-based language model (LM), enabling it to generate high-fidelity synthetic tabular data. At its core, Tabby incorporates Gated Mixture-of-Experts (MoE) layers with column-specific parameter sets, allowing the model to better represent relationships between different table columns. These modifications introduce the necessary inductive biases that help the LM model structured tabular data rather than free-form text.

The figure below compares a Tabby model to a standard, non-Tabby “base” LLM, when intended for use with a dataset that has V columns:

Despite these significant architectural changes, Tabby’s fine-tuning process remains straightforward—closely mirroring the pre-existing approaches for adapting LMs to tabular data. Moreover, Tabby is designed to retain and leverage the knowledge gained during the large-scale text pre-training phase, allowing for faster and more efficient adaptation to structured datasets.

Beyond Tabby itself, we also introduce Plain—a lightweight yet powerful training method for fine-tuning LMs (both Tabby and non-Tabby) on tabular data. Plain consistently improves the quality of synthetic data generation, regardless of the underlying LM. If you’re curious about how it works, check out our paper for the full details!

Evaluations: Tabby synthetic data reaches parity with non-synthetic data!

To assess Tabby’s performance, we follow standard benchmarks for tabular data synthesis, using a diverse set of datasets and the widely-accepted Machine Learning Efficacy (MLE) metric to measure data quality.

Datasets: We train Tabby models on six datasets spanning various domains and sizes:

| Name | # Rows | # Columns | Domain |
|---|---|---|---|
| Diabetes | 576 | 9 | Medical |
| Travel | 715 | 7 | Business |
| Adult | 36631 | 15 | Census |
| Abalone | 3132 | 9 | Biology |
| Rainfall | 12566 | 4 | Weather |
| House | 15480 | 9 | Geographical |

Metrics: MLE measures how well synthetic data preserves real-world patterns by comparing the performance of machine learning models trained on synthetic vs. real data. The closer the synthetic data’s MLE score is to the real data’s, the higher the fidelity of the synthetic dataset.
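As a rough illustration of the MLE idea, the sketch below trains the same downstream model once on real rows and once on synthetic rows, then scores both on held-out real rows. The nearest-centroid classifier, the accuracy score, and the toy one-feature data are stand-ins chosen to keep the example self-contained; they are assumptions, not the downstream models or metrics used in the paper.

```python
def fit_centroids(rows):
    """rows: list of (features, label). Returns label -> mean feature vector."""
    sums, counts = {}, {}
    for x, y in rows:
        acc = sums.setdefault(y, [0.0] * len(x))
        for k, v in enumerate(x):
            acc[k] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in s] for y, s in sums.items()}

def predict(centroids, x):
    """Pick the label whose centroid is closest in squared Euclidean distance."""
    return min(centroids, key=lambda y: sum((a - b) ** 2 for a, b in zip(x, centroids[y])))

def mle_score(train_rows, real_test_rows):
    """Train the downstream model on train_rows, score it on held-out real rows."""
    centroids = fit_centroids(train_rows)
    hits = sum(predict(centroids, x) == y for x, y in real_test_rows)
    return hits / len(real_test_rows)

# Toy data (assumed): one feature, two classes.
real_train = [([0.0], 0), ([1.0], 0), ([4.0], 1), ([5.0], 1)]
synthetic = [([0.5], 0), ([4.5], 1)]
real_test = [([0.2], 0), ([1.5], 0), ([4.8], 1)]

print(mle_score(real_train, real_test), mle_score(synthetic, real_test))
```

When the two scores match, the synthetic rows carry the same task-relevant signal as the real ones, which is what parity refers to in the results table.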

Results

In the table below, the first row represents the MLE score of real (non-synthetic) data. Any synthetic method that matches or surpasses this score is considered to have reached parity with real data.

Tabby achieves parity with real data on three out of six datasets (Diabetes, Travel, and Adult). Additionally, Tabby outperforms the prior best LLM-based tabular synthesis method on all six datasets.

| Method | Diabetes | Travel | Adult | Abalone | Rainfall | House |
|---|---|---|---|---|---|---|
| Upper Bound (real data) | 0.73 | 0.87 | 0.85 | 0.45 | 0.54 | 0.61 |
| Our Best Tabby Model | 0.74 ✅ | 0.88 ✅ | 0.85 ✅ | 0.43 | 0.49 | 0.60 |
| Prior best LM approach | 0.72 | 0.87 | 0.83 | 0.40 | 0.05 | 0.55 |

✅: Parity with real data!

Extensions

One of the most exciting discoveries in our work is that Tabby is not limited to tabular data. Unlike previous tabular synthesis models, Tabby successfully adapts to other structured data formats too, such as nested JSON records.

Why does this matter?

Most prior tabular synthesis methods struggle when faced with hierarchical or nested structures, which are common in web data, API responses, and metadata-rich datasets. Tabby’s architecture enables it to capture these structures effectively, opening up new possibilities for structured data generation.

We’re excited to explore this direction further and believe Tabby could be a foundation for generating a much broader class of structured synthetic data beyond just tables.

Takeaways

Tabby is a highly promising and easy-to-use approach for generating realistic synthetic tabular data. By leveraging Mixture-of-Experts (MoE) layers and the Plain training process, Tabby achieves parity with real data while outperforming previous tabular synthesis methods.

In our paper, we dive deeper into:

✅ The technical details behind Tabby’s architecture

✅ The Plain training process, which boosts data quality across different LLMs

✅ Extensive evaluations and comparisons against prior methods

For a deeper look, check out our paper or code. Feel free to reach out at [email protected]—I’d love to hear your thoughts!

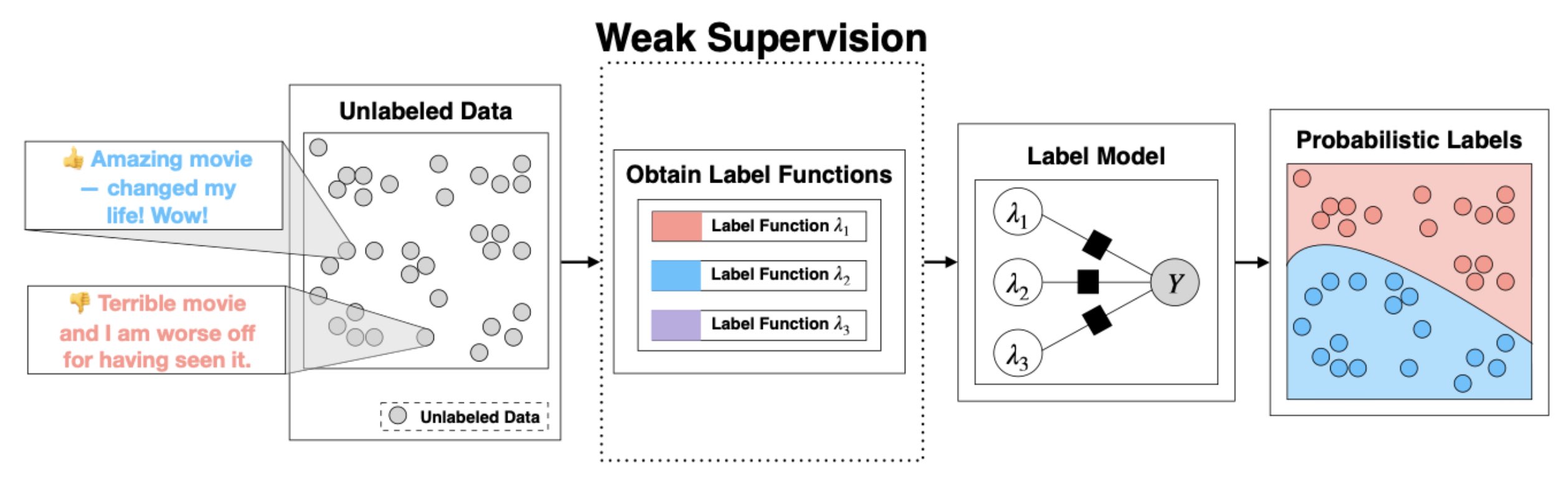

For instance, labeling a dataset of 7,569 points with GPT-4 could cost over $1,200. Even worse, the resulting labels are static, making them difficult to tweak or audit.

A Novel Approach to Data Labeling.

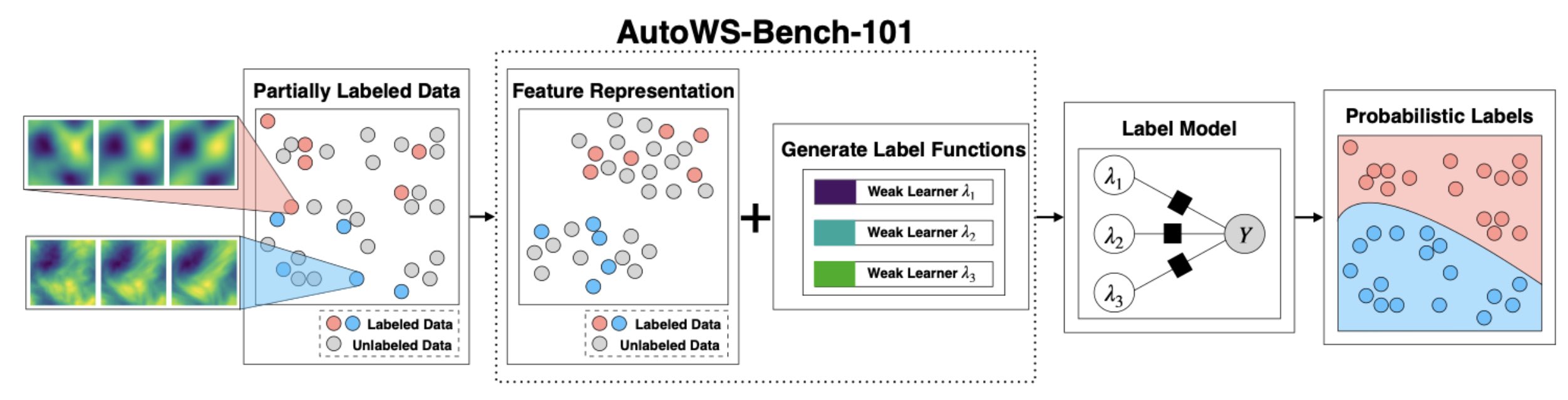

Instead of directly prompting LLMs for labels, we ask LLMs to generate programs that act as annotators. These synthesized programs encode the LLM’s labeling logic and can either label data directly or label a training dataset used to train a distilled specialist model.

Why Is This Better?

- Massive Cost Savings: Instead of making one API call per data point, we only need a handful of API calls to generate labeling programs (10 to 20 in our case). For the dataset mentioned above, the number of GPT-4 calls dropped from 7,569 to just 10, cutting costs from $1,200 to $0.70, a roughly 1,700× reduction.

- Unlimited Local Predictions: Once generated, these programs can label as much data as needed without additional API costs.

- Transparency & Adaptability: The generated code is human-readable, allowing subject matter experts (SMEs) to inspect, refine, and adapt the labeling rules as needed.
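The cost figures quoted above can be checked with a few lines of arithmetic. The variable names are ours; the numbers are the ones stated in the post.

```python
calls_direct, calls_programs = 7_569, 10    # one call per data point vs. one per program
cost_direct, cost_programs = 1_200.00, 0.70  # USD, as quoted above

cost_reduction = cost_direct / cost_programs    # how many times cheaper
call_reduction = calls_direct / calls_programs  # how many times fewer API calls
cost_per_label_direct = cost_direct / calls_direct

print(f"cost reduction: {cost_reduction:.0f}x")
print(f"call reduction: {call_reduction:.0f}x")
print(f"direct labeling: ${cost_per_label_direct:.3f} per data point")
```

The cost ratio comes out slightly above the rounded 1,700× figure, and once the programs exist, labeling additional data adds no further API cost.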

Introducing Alchemist: Our Automated Labeling System.

We built Alchemist, a system that implements this approach. Empirically, Alchemist improves labeling performance on five out of eight datasets, with an average accuracy boost of 12.9%, while reducing costs by approximately 500×.

How Alchemist Works

Step 1: Generate Labeling Programs We start with an unlabeled dataset (e.g., YouTube comments or medical abstracts) and give an LLM a simple prompt instructing it to generate a labeling program, such as a function that labels comments as spam (1) or ham (0). These prompts can integrate relevant information and may vary in their design, allowing for the synthesis of multiple programs.

Example prompt:

[Task Description] Write a bug-free Python function to label YouTube comments as spam or ham.

[Labeling Instruction] Return 1 for spam, 0 for ham, -1 if unsure.

[Function Signature] def label_spam(text_comment):

And the generated program:

    def label_spam(text_comment):
        """
        Classifies YouTube comments as spam (1), ham (0), or unsure (-1).
        """
        if not isinstance(text_comment, str) or not text_comment.strip():
            return -1

        text = text_comment.lower()

        # Key spam indicators
        spam_phrases = ["sub4sub", "subscribe to my", "check out my channel", "follow me",
                        "make money", "click here", "free gift", "www.", "http", ".com"]

        # Check for spam indicators
        if any(phrase in text for phrase in spam_phrases):
            return 1

        # Check for suspicious patterns
        suspicious = (
            text.count('!') > 3 or
            text.count('?') > 3 or
            (len(text) > 10 and text.isupper()) or
            any(char * 3 in text for char in "!?.,$*") or
            any(segment.isdigit() and len(segment) >= 10 for segment in text.split())
        )

        return -1 if suspicious else 0

A single program might not capture all aspects of the labeling logic. To improve robustness, Alchemist generates multiple programs with diverse heuristics—some using keyword matching, others leveraging more complex patterns.

Step 2: Aggregate Labels with Weak Supervision The generated programs may be noisy or inconsistent. To address this, Alchemist uses a weak supervision framework (such as Snorkel) to aggregate their outputs into a single, high-quality set of pseudolabels.
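To give a feel for the aggregation step, here is a deliberately simplified stand-in: a majority vote over program outputs that ignores abstentions. Weak supervision frameworks typically go further and weight each program by its estimated accuracy, so treat this as a sketch of the interface rather than of Alchemist's actual aggregator. The function names are hypothetical.

```python
from collections import Counter

ABSTAIN = -1  # matches the "-1 if unsure" convention in the prompt above

def majority_vote(votes):
    """Aggregate one data point's votes; abstentions are ignored, ties abstain."""
    counts = Counter(v for v in votes if v != ABSTAIN)
    if not counts:
        return ABSTAIN
    (top, n_top), *rest = counts.most_common()
    if rest and rest[0][1] == n_top:
        return ABSTAIN  # two labels tied for first place
    return top

def aggregate(label_matrix):
    """label_matrix[i][j] is the label program j assigned to data point i."""
    return [majority_vote(row) for row in label_matrix]

# Four data points labeled by three programs.
votes = [
    [1, 1, 0],     # two programs say spam
    [-1, -1, -1],  # every program abstains
    [0, 1, -1],    # one vote each: tie
    [0, 0, -1],    # two programs say ham
]
print(aggregate(votes))
```

Data points that end up with an abstain pseudolabel would simply be left out when training the downstream model.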

Step 3: Train a Local Model We can either use the pseudolabels directly or train a small, specialized model (e.g., a fine-tuned BERT model) to generalize the labeling logic. This allows completely local execution, with no further API calls required.

Handling Complex Data Modalities.

Alchemist isn’t limited to text data. For non-text modalities like images, we introduce an intermediate step:

Concept Extraction: We first prompt the LLM to identify key concepts relevant to the classification task. For example, in a waterbird vs. landbird categorization task, the model may identify “wing shape,” “beak shape,” or “foot type” as distinguishing characteristics.

Feature Representation: A local multimodal model (e.g., CLIP) extracts features corresponding to these concepts. This step generates low-dimensional feature vectors that can be effectively used by the labeling programs.

Program Synthesis: Using the extracted features and their similarity scores, we prompt the LLM to generate labeling programs that automate the annotation process.
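The three steps above can be sketched end to end on toy data. Everything here is illustrative: the two concept names, the two-dimensional embeddings standing in for CLIP features, the 0.05 margin, and the `label_bird` program are assumptions made for the example, not outputs of Alchemist.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def concept_scores(image_emb, concept_embs):
    """Similarity of one image embedding to each concept's text embedding."""
    return {name: cosine(image_emb, emb) for name, emb in concept_embs.items()}

def label_bird(scores):
    """Hypothetical synthesized program: 1 = waterbird, 0 = landbird, -1 = unsure."""
    water = scores["webbed feet"]
    land = scores["perching feet"]
    if abs(water - land) < 0.05:  # scores too close to call
        return -1
    return 1 if water > land else 0

# Made-up concept embeddings for two "foot type" concepts.
concepts = {"webbed feet": [1.0, 0.0], "perching feet": [0.0, 1.0]}

print(label_bird(concept_scores([0.9, 0.1], concepts)))
print(label_bird(concept_scores([0.5, 0.5], concepts)))
```

Because the program only sees scores for task-relevant concepts, it has no direct access to background cues in the image.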

Experimental Result (1).

We use eight text domain datasets to evaluate Alchemist. We use GPT-3.5 to generate 10 labeling programs for each dataset and compare labeling performance to LLM zero-shot prompting. Here are the results.

Experimental Result (2).

Next, we validate the extension of Alchemist to richer modalities. We extract features for the key recognized concepts by employing CLIP as our local feature extractor. Then, we convert these feature vectors into a set of similarity scores. Armed with these scores, we describe the scores and their associated concepts in prompts and ask GPT-4o and Claude 3 for 10 programs. Results show that Alchemist achieves comparable performance on average accuracy while improving robustness to spurious correlations. This is a key strength of Alchemist: targeting salient concepts to be used as features may help move models away from spurious shortcuts found in the data. This validates Alchemist’s ability to handle complex modalities while improving robustness.

Conclusion.

We propose an alternative approach to costly annotation procedures that require repeated API requests for labels. Our solution introduces a simple notion of prompting programs to serve as annotators. We developed an automated labeling system called Alchemist to embody this idea. Empirically, our results indicate that Alchemist demonstrates comparable or even superior performance compared to language model-based annotation, improving five out of eight datasets with an average enhancement of 12.9%.

🙋🏻 Still prompting ChatGPT for your labels over and over? Try generating labeling programs instead and cut your project expenses!

- Full paper link: https://arxiv.org/abs/2407.11004

- Github repo: https://github.com/SprocketLab/Alchemist

- Twitter post: https://x.com/zihengh1/status/1843401287351824783

Imagine you could steer a language model’s behavior on the fly: no extra training, no rounds of fine-tuning, just on-demand alignment. In our paper, “Alignment, Simplified: Steering LLMs with Self-Generated Preferences”, we show that this isn’t just possible; it’s practical, even in complex scenarios like pluralistic alignment and personalization.

The Traditional Alignment Bottleneck

Traditional LLM alignment requires two critical components: (1) collecting large volumes of preference data, and (2) using this data to further optimize pretrained model weights to better follow these preferences. As models continue to scale, these requirements become increasingly prohibitive—creating a bottleneck in the deployment pipeline.

This problem intensifies when facing the growing need to align LLMs to multiple, often conflicting preferences simultaneously (Sorensen et al., 2024), alongside mounting demands for rapid, fine-grained individual user preference adaptation (Salemi et al., 2023).

Our research challenges this status quo: Must we always rely on expensive data collection and lengthy training cycles to achieve effective alignment?

The evidence suggests we don’t. When time and resources are limited—making it impractical to collect large annotated datasets—traditional methods like DPO struggle significantly with few training samples. Our more cost-effective approach, however, consistently outperforms these conventional techniques across multiple benchmarks, as demonstrated below:

These results reveal a clear path forward: alignment doesn’t have to be a resource-intensive bottleneck in your LLM deployment pipeline. Enter AlignEZ—our novel approach that reimagines how models can adapt to preferences without the traditional overhead.

On-the-Fly Alignment: The EZ Solution

At its core, AlignEZ enables the (non-trivial) combination of the two most cost-efficient choices of data and algorithm: it uses self-generated preference data, and it cuts compute cost by replacing fine-tuning with embedding editing. This combination is non-trivial for several reasons:

- Model-generated signal is often noisier in nature, necessitating an approach that can effectively separate the alignment signal from the noise.

- On top of assuming access to clean human-labeled data, current embedding editing approaches assume that a single vector fits all when steering the LLM embeddings. By operating in carefully identified subspaces, AlignEZ extends seamlessly to multi-objective alignment scenarios.

Now that we have hopefully convinced you that this is the way to go, let’s break down how AlignEZ works, in plain English:

Step 1: Self-generated Preference Data

Instead of collecting human-labeled preference data, AlignEZ lets the model create its own diverse preference pairs. Diversity is key to capturing as broad a range of alignment signals as possible. We achieve this through a two-step prompting strategy:

- For each test query, we prompt the model to explicitly identify characteristics that distinguish “helpful” from “harmful” responses. This process is customized to the specific task at hand. For example, in a writing task, we might contrast attributes like “creative” versus “dull” to tailor the alignment signals appropriately.

- The model then generates multiple responses to the test query, each deliberately conditioned on the identified characteristics.

By applying this process across our dataset, we develop a rich preference dataset where each query is paired with multiple responses that reflect various dimensions of “helpful” and “unhelpful” behavior.

Importantly, we recognize that the initial batch of generated data may contain significant noise, often because the model fails to properly follow the conditioned characteristic. As a critical first filtering step, we eliminate samples that are too similar in the embedding space, a property that Razin et al. (2024) have shown to increase the likelihood of dispreferred responses.
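A minimal sketch of such a similarity filter is shown below. The cosine threshold of 0.95, the `embed` callable, and the toy embeddings are assumptions made for illustration; the post does not state the actual criterion or cutoff.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def filter_pairs(pairs, embed, max_sim=0.95):
    """Drop (helpful, harmful) pairs whose embeddings are nearly identical."""
    return [(good, bad) for good, bad in pairs
            if cosine(embed(good), embed(bad)) <= max_sim]

# Toy embeddings (assumed): "c" is almost the same direction as "a".
toy_embeddings = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 0.01]}
pairs = [("a", "b"), ("a", "c")]

print(filter_pairs(pairs, toy_embeddings.__getitem__))
```

A pair whose two responses land in nearly the same place in embedding space contributes almost nothing to the difference vectors used in the next step, so dropping it loses little signal.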

Step 2: Identify Alignment Subspace

With our self-generated preference data in hand, we next identify the alignment subspace within the LLM’s latent representation. Our approach adapts classic techniques from embedding debiasing literature (Bolukbasi et al., 2016) that were originally developed to identify subspaces representing specific word groups.

Formally, let $\Phi_l$ denote the function mapping an input sentence to the embedding space at layer $l$, and denote each preference pair as $(p_i^{help}, p_i^{harm})$. First, we construct embedding matrices for helpful and harmful preferences:

\[\begin{equation} \textbf{H}_{l}^{help} := \begin{bmatrix} \Phi_{l}(p_1^{help}) \\ \vdots \\ \Phi_{l}(p_K^{help}) \end{bmatrix}^T, \quad \textbf{H}_{l}^{harm} := \begin{bmatrix} \Phi_{l}(p_1^{harm}) \\ \vdots \\ \Phi_{l}(p_K^{harm}) \end{bmatrix}^T, \end{equation}\]where $K$ is the total number of preference pairs. Next, the alignment subspace is identified by computing the difference between the helpful and harmful embeddings:

\[\begin{equation} \textbf{H}_{l}^{align} := \textbf{H}_{l}^{help} - \textbf{H}_{l}^{harm}. \end{equation}\]We then perform SVD on $\textbf{H}_{l}^{align}$:

\[\begin{equation} \begin{aligned} \textbf{H}_{l}^{align} &= \textbf{U}\Sigma\textbf{V}, \\ \Theta_l^{align} &:= \textbf{V}^T. \end{aligned} \end{equation}\]An important trick we add here is to remove subspace directions that are already well-represented in the original LLM embedding. Formally, for a query $q$:

\[\begin{equation} \Theta_{l,help}^{align}(q) := \left\{\,\theta \in \Theta_l^{align} \,\middle|\, \cos\left(\Phi_l(q),\theta\right) \leq 0 \right\}, \end{equation}\]This prevents any single direction from dominating the editing process and ensures we only add necessary new directions to the embedding space.
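The subspace identification and the per-query filter can be sketched with NumPy. This sketch makes a layout assumption that may differ from the paper's notation: each row of the difference matrix is one preference pair, so the right singular vectors returned by `np.linalg.svd` live in embedding space. The function names are hypothetical, and the sign of each singular vector is arbitrary in a plain SVD, so the filter here simply uses whatever sign NumPy returns.

```python
import numpy as np

def alignment_directions(help_embs, harm_embs):
    """Rows are preference pairs; returns one unit-norm direction per row."""
    diff = np.asarray(help_embs, dtype=float) - np.asarray(harm_embs, dtype=float)
    _, _, vt = np.linalg.svd(diff, full_matrices=False)
    return vt  # shape (min(K, d), d)

def filter_directions(directions, query_emb):
    """Keep directions whose cosine with the query embedding is <= 0."""
    q = np.asarray(query_emb, dtype=float)
    # Directions are unit-norm and ||q|| > 0 only rescales, so the sign of the
    # dot product equals the sign of the cosine.
    return directions[directions @ q <= 0]

# Toy data (assumed): the helpful/harmful difference lies along the first axis.
help_embs = [[2.0, 0.0], [3.0, 0.0]]
harm_embs = [[0.0, 0.0], [0.0, 0.0]]

dirs = alignment_directions(help_embs, harm_embs)
kept = filter_directions(dirs, [1.0, 1.0])
print(dirs.shape, kept.shape)
```

The filter runs once per query, so different queries can end up being edited along different subsets of the same global direction set.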

Step 3: Edit Embeddings During Inference

Finally, during inference when generating a new response, we modify the model’s hidden representations by projecting them in the direction of the alignment subspace $\Theta_l^{align}$. Our editing process is as follows:

\[\begin{aligned} \hat{x}_l &\leftarrow x_l,\\ \text{for each } \theta_l \in \Theta_l^{align}:\quad \hat{x}_l &\leftarrow \hat{x}_l + \alpha\,\sigma\!\bigl(\langle \hat{x}_l, \theta_l \rangle\bigr)\,\theta_l, \end{aligned}\]where $\sigma(\cdot)$ is an activation function and $\langle \cdot,\cdot \rangle$ denotes inner product. We iteratively adjust $\hat{x}_l$ by moving it toward or away from each direction $\theta_l$ in $\Theta_l^{align}$. We set $\sigma(\cdot)=\tanh(\cdot)$ with $\alpha = 1$, enabling smooth bidirectional scaling bounded by $[-1,1]$.
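The update rule translates almost line for line into code. Below is a minimal pure-Python sketch with $\sigma = \tanh$ and $\alpha = 1$ as stated; the function name and the toy vectors are our own.

```python
import math

def edit_embedding(x, directions, alpha=1.0):
    """Apply x_hat <- x_hat + alpha * tanh(<x_hat, theta>) * theta for each theta."""
    x_hat = list(x)
    for theta in directions:
        inner = sum(a * b for a, b in zip(x_hat, theta))
        coeff = alpha * math.tanh(inner)  # bounded in [-alpha, alpha]
        x_hat = [a + coeff * t for a, t in zip(x_hat, theta)]
    return x_hat

directions = [[1.0, 0.0], [0.0, 1.0]]
print(edit_embedding([1.0, 0.0], directions))   # pushed further along +theta_1
print(edit_embedding([-1.0, 0.0], directions))  # pushed further along -theta_1
```

Because the coefficient takes the sign of the inner product, the edit amplifies whatever component the representation already has along each direction, and a direction orthogonal to the current representation leaves it unchanged.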

Why It Works: The Intuition

The core insight behind AlignEZ is that alignment information already exists within the pre-trained model - we just need to find it and amplify it.

Think of it like a radio signal. The alignment “station” is already broadcasting inside the model, but it’s mixed with static. Traditional methods try to boost the signal by retraining the entire radio (expensive!). AlignEZ instead acts like a targeted equalizer that simply turns up the volume on the channels where the alignment signal is strongest.

For a more detailed explanation of our method, with more rigorous mathematical notation, check out our paper!

How Does AlignEZ Perform?

Our experiments reveal that AlignEZ achieves strong alignment gains with a fraction of the computational resources traditionally required, significantly simplifies the multi-objective/pluralistic alignment process, is compatible with and expedites more expensive alignment algorithms, and shows early promise for improving strong reasoning models.

Result 1: Boosting pre-trained Models Alignment Gain

We use the standard automatic alignment evaluation, GPT-as-a-judge (Zheng et al., 2023), and measure $\Delta\%$, defined as the win rate (W%) minus the lose rate (L%) against the base models.

AlignEZ delivers consistent improvements, achieving positive gains in 87.5% of cases with an average ∆% of 7.2%, a more reliable showing than the test-time alignment baselines (75% for ITI and 56.3% for CAA). Perhaps the most significant advantage? AlignEZ accomplishes all this without requiring ground-truth preference data, which both ITI and CAA depend on.
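For reference, the metric reduces to a short calculation over per-example judgments. The `"win"` / `"lose"` / `"tie"` encoding is an assumption about how judge outputs might be stored.

```python
def delta_percent(outcomes):
    """outcomes: list of "win" / "lose" / "tie" judgments versus the base model."""
    n = len(outcomes)
    win_rate = 100 * outcomes.count("win") / n
    lose_rate = 100 * outcomes.count("lose") / n
    return win_rate - lose_rate

print(delta_percent(["win", "win", "lose", "tie"]))
```

Ties count toward the denominator but toward neither rate, so a positive value means the steered model wins more often than it loses.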

Result 2: Enabling Fine-Grained Multi-Objective Control

Next, we test AlignEZ’s capacity for steering LLMs toward multiple preferences at once. We test for two key abilities: (1) fine-grained control across dual preference axes (demonstrating precise regulation of each axis’s influence), and (2) the ability to align to 3 preferences simultaneously. Following the setup from Yang et al. (2024), we evaluate on three preference traits: helpfulness, harmlessness, and humor.

On fine-grained control, we modulate the steering between two preference axes by applying weight pairs ($\alpha$, 1 − $\alpha$), where $\alpha$ ranges from 0.1 to 0.9 in increments of 0.1.

The result above shows that for the uncorrelated preference pairs, (helpful, humor) and (harmless, humor), AlignEZ successfully grants fine-grained control: the rewards closely track the weight pairs ($\alpha$ and (1-$\alpha$)), showing precise tuning capabilities. Steering between the correlated preference pair (helpful, harmless), however, shows limited effect. When we attempt to increase one while decreasing the other, their effects tend to counteract each other, resulting in minimal net change in model behavior.

On steering across three preference axes at once, AlignEZ simultaneously increases the desired preferences, even outperforming an RLHF-ed model prompted to generate these characteristics on the harmless and helpful axes.

Result 3: Expediting More Expensive Algorithms

Wait, there’s more? Yes! We also show that AlignEZ is compatible with classic, more expensive alignment techniques, even giving them a significant boost. We show above that AlignEZ lifts the performance of a model trained with only 1% of the data to match that of a model trained on 25% of the data.

Result 4: (PoC) Improving Powerful Reasoning Models

With this set of results showing AlignEZ’s efficacy on alignment tasks, and given its cost-efficient and practical nature, we are excited about extending it to more challenging tasks that require specialized knowledge, such as mathematical reasoning and code intelligence. As a first step in this direction, we perform a proof-of-concept experiment, applying AlignEZ to multi-step mathematical reasoning benchmarks.

Surprisingly, even when starting from a strong reasoning model, vanilla AlignEZ provides improvements! We attribute these gains to the identified subspace, which appears to strengthen the model’s tendency toward step-by-step reasoning while suppressing shortcuts to direct answers.

Concluding Thoughts and What’s Next?

AlignEZ represents a paradigm shift in how we approach LLM alignment. By leveraging self-generated preference data and targeted embedding editing, we’ve demonstrated that effective alignment doesn’t require massive datasets or expensive fine-tuning cycles. Our approach offers several key advantages:

- Resource Efficiency: AlignEZ works at inference time with minimal computational overhead, making it accessible to researchers and developers with limited resources.

- Versatility: From single-objective alignment to complex multi-preference scenarios, AlignEZ provides flexible control without sacrificing performance.

- Compatibility: As shown in our DPO experiments, AlignEZ can complement existing alignment techniques, accelerating their effectiveness even with limited training data.

- No Preference Bottleneck: By generating its own preference data, AlignEZ removes one of the most significant bottlenecks in the alignment pipeline.

Our promising results open several exciting avenues for future research. The most obvious next direction is domain-specific alignment: extending our proof-of-concept reasoning experiments, we plan to investigate how AlignEZ can enhance performance in specialized domains like medical advice, legal reasoning, and scientific research.

📜🔥: Check out our paper! https://arxiv.org/abs/2406.03642

💻 : Code coming soon! Stay tuned for our GitHub repository.

]]>What is Weak-to-Strong Generalization?

Weak-to-strong generalization describes a scenario in which a strong model, trained on pseudolabels or outputs provided by a weak model, achieves superior performance compared to the weak model itself. In such settings, the weak model is capable of making predictions on a broad range of data, while the strong model leverages these predictions as a foundation to learn additional, more complex aspects of the data. This phenomenon is critical in contexts such as data-efficient learning and has implications for building more advanced, robust systems.

Data-Centric View: Overlap Density

A key insight from our work is that the potential for weak-to-strong generalization is driven by the overlap density in the data. Overlap density quantifies the proportion of data points that contain two complementary types of informative patterns: one that the weak model can readily capture and another that requires the capacity of a strong model. More formally, consider a dataset where each input \(x\) can be decomposed as

\[x = [x_{\text{easy}}, x_{\text{hard}}],\]

with \(x_{\text{easy}}\) representing features that are easily learned by the weak model and \(x_{\text{hard}}\) representing the more challenging features. Based on this decomposition, data points can be partitioned into three regions:

- Easy-only points: Points containing only \(x_{\text{easy}}\).

- Hard-only points: Points containing only \(x_{\text{hard}}\).

- Overlap points: Points containing both \(x_{\text{easy}}\) and \(x_{\text{hard}}\).

Overlap density is defined as the proportion of overlap points in the dataset. These overlap points are critical because they serve as a bridge: the weak model can accurately label them using the easy features, while the strong model can leverage these labels to learn the challenging features. This mechanism lays the foundation for a strong model to effectively leverage supervision signals from a weak model.
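To make the definition concrete, here is a minimal sketch that computes overlap density on a toy dataset where we pretend to know which points carry easy and hard patterns. The function name `overlap_density` and the boolean masks are illustrative assumptions; in real data these masks are not observed.

```python
import numpy as np

def overlap_density(has_easy, has_hard):
    """Fraction of points that contain both easy and hard patterns."""
    has_easy = np.asarray(has_easy, dtype=bool)
    has_hard = np.asarray(has_hard, dtype=bool)
    return float(np.mean(has_easy & has_hard))

# Toy dataset of 10 points: 4 easy-only, 3 hard-only, 3 overlap (made-up masks).
has_easy = np.array([1, 1, 1, 1, 0, 0, 0, 1, 1, 1], dtype=bool)
has_hard = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1, 1], dtype=bool)

density = overlap_density(has_easy, has_hard)
print(f"overlap density: {density:.2f}")
```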

Overlap Detection in Practice

However, overlap density is not directly observable in real-world data since easy and hard features are not easily defined. To overcome this challenge, we developed an overlap detection algorithm that operates in two main steps:

- Confidence-Based Separation: The algorithm first uses the weak model’s confidence scores to separate data points. Typically, points where the weak model is less confident are likely to lack the patterns it can easily capture. By thresholding these confidence scores (using methods like change-point detection), we identify a subset of points that are likely to be dominated by the challenging patterns.

- Overlap Scoring: After the first step, we identify two groups: hard-only points (low confidence) and non-hard-only points (high confidence). Our next goal is to pinpoint the overlap points within the non-hard-only group. We achieve this by defining overlap scores based on the distance to the hard-only points. The intuition is that overlap points are closer to hard-only points because they contain some of the challenging features that easy-only points lack. Thus, among the non-hard-only points, those with small distances to the hard-only points are classified as overlap points.

This procedure yields an estimate of the overlap density in a dataset, which is crucial for understanding and enhancing weak-to-strong generalization.
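The two steps can be sketched roughly as follows. This is an illustration under stated assumptions, not the implementation from the paper: `largest_gap_threshold` is a hypothetical stand-in for change-point detection (it splits sorted values at their widest gap), the overlap score is taken to be the Euclidean distance to the nearest hard-only point, and the toy features and confidences are synthetic.

```python
import numpy as np

def largest_gap_threshold(values):
    """Hypothetical stand-in for change-point detection: split at the widest gap."""
    v = np.sort(np.asarray(values, dtype=float))
    k = int(np.argmax(np.diff(v)))
    return 0.5 * (v[k] + v[k + 1])

def detect_overlap(features, weak_confidence):
    features = np.asarray(features, dtype=float)
    conf = np.asarray(weak_confidence, dtype=float)

    # Step 1: low weak-model confidence -> treated as hard-only.
    hard_only = conf < largest_gap_threshold(conf)
    rest = ~hard_only

    # Step 2: score the remaining points by distance to the nearest hard-only point.
    diffs = features[rest][:, None, :] - features[hard_only][None, :, :]
    dist = np.linalg.norm(diffs, axis=-1).min(axis=1)
    near = dist < largest_gap_threshold(dist)

    # Small distance -> classified as an overlap point.
    overlap = np.zeros(len(conf), dtype=bool)
    overlap[np.flatnonzero(rest)[near]] = True
    return overlap, float(overlap.mean())

# Synthetic data: each feature vector is [x_easy, x_hard] plus small noise.
rng = np.random.default_rng(0)
easy = np.array([1.0, 0.0]) + 0.01 * rng.standard_normal((40, 2))
hard = np.array([0.0, 1.0]) + 0.01 * rng.standard_normal((30, 2))
both = np.array([1.0, 1.0]) + 0.01 * rng.standard_normal((30, 2))
features = np.vstack([easy, hard, both])
confidence = np.concatenate([np.full(40, 0.95), np.full(30, 0.55), np.full(30, 0.90)])

overlap_mask, density = detect_overlap(features, confidence)
print(f"estimated overlap density: {density:.2f}")
```

The sketch assumes at least one point falls below the confidence threshold; a production version would need to handle an empty hard-only group.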

Data Source Selection for Maximizing Overlap Density

Beyond estimating overlap density within a single dataset, our approach extends to selecting the best data sources from multiple candidates. The idea is to prioritize sources that exhibit a high overlap density, as these are more likely to provide supervisory signals that enable the strong model to learn the challenging aspects of the data.

Our UCB-based (Upper Confidence Bound) data selection algorithm works as follows:

- Initialization:

  - Sample a small batch from each available data source.

  - Apply the overlap detection algorithm to estimate the overlap density for each source.

- UCB Computation:

  - For each source, calculate an upper confidence bound on its overlap density, balancing exploration (sampling less-visited sources) with exploitation (focusing on sources with high estimated overlap).

- Iterative Selection:

  - Over several rounds, select the data source with the highest UCB score.

  - Sample additional data from that source, update the overlap density estimates, and repeat the process.
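The loop above can be sketched as a small bandit routine. Several pieces here are assumptions made for illustration: the exploration bonus follows the standard UCB1 form, `sample_overlap` is a hypothetical callback that would draw a batch from a source and return how many overlap points the detection algorithm found, and the toy run replaces that detector with a binomial draw from made-up densities.

```python
import numpy as np

def ucb_select_sources(sample_overlap, num_sources, rounds, batch_size, c=1.0):
    """sample_overlap(source, batch_size) -> number of detected overlap points."""
    counts = np.zeros(num_sources)  # points sampled from each source
    hits = np.zeros(num_sources)    # detected overlap points per source

    # Initialization: one small batch from every source.
    for s in range(num_sources):
        hits[s] += sample_overlap(s, batch_size)
        counts[s] += batch_size

    picks = []
    for _ in range(rounds):
        means = hits / counts  # current overlap density estimates
        # UCB1-style bonus (assumed form): larger for less-sampled sources.
        bonus = c * np.sqrt(2.0 * np.log(counts.sum()) / counts)
        s = int(np.argmax(means + bonus))
        picks.append(s)
        hits[s] += sample_overlap(s, batch_size)
        counts[s] += batch_size
    return picks, hits / counts

# Toy run: the detector is simulated by a binomial draw (made-up densities).
rng = np.random.default_rng(0)
true_density = [0.1, 0.5, 0.3]

def sample_overlap(source, batch_size):
    return rng.binomial(batch_size, true_density[source])

picks, estimates = ucb_select_sources(sample_overlap, num_sources=3, rounds=200, batch_size=20)
most_picked = int(np.bincount(picks, minlength=3).argmax())
print("most selected source:", most_picked)
print("estimated densities:", np.round(estimates, 2))
```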

Our experiments on datasets such as Amazon Polarity and DREAM demonstrate that this UCB-based strategy consistently identifies data sources with higher overlap density. When training the strong model with data selected through our algorithm, we observe improved generalization performance compared to using randomly sampled data.

Concluding Thoughts: A Path to Superintelligence

Our exploration of weak-to-strong generalization through the lens of overlap density paves the way for continuous, iterative enhancements in model performance. By identifying and leveraging data points that exhibit both easily captured and more challenging patterns, we can significantly boost the effectiveness of weak supervision. This data-focused strategy—prioritizing optimal data sources above all else—may prove crucial for developing truly advanced systems.

Weak-to-strong generalization may hold the key to achieving superintelligence. As AI evolves, the pool of human experts capable of providing meaningful supervision is shrinking. For instance, as mathematicians are increasingly tasked with annotating complex math problems, the scarcity of such expertise becomes a significant bottleneck. In the long run, efficient supervisory signals will be essential, making a deep understanding of weak-to-strong generalization vital.

Moreover, this process mirrors human academia—learning from imperfect past knowledge, generalizing it, and continuously pushing the boundaries forward. We believe our study on weak-to-strong generalization offers a principled pathway for scientific discovery.

To delve further into our theoretical analysis and experimental findings, please refer to our paper at https://arxiv.org/abs/2412.03881 and explore our implementation on GitHub at https://github.com/SprocketLab/datacentric_w2s.

]]>

Zero-shot models are impressive—they can classify images or texts they’ve never seen before. However, these models often inherit biases from their massive pretraining datasets. If a model is predominantly exposed to certain labels during training, it may overpredict those labels when deployed in new tasks. OTTER (Optimal TransporT adaptER) addresses this challenge by correcting label bias at inference time without requiring extra training data.

In our recent work, we introduce OTTER, a lightweight method that rebalances the predictions of a pretrained model to better align with the label distribution of the downstream task. The key insight is to leverage optimal transport—a mathematical framework for matching probability distributions—to adjust the model’s output.

The Core Idea: Classification = Transporting Mass from the Input Space to the Label Space

OTTER reinterprets classification as the problem of transporting probability mass from the input space to the label space. In a traditional zero-shot classifier, given a set of \(n\) data points \(\{x_1, x_2, \dots, x_n\}\), the model outputs scores \(s_\theta(x_i, j)\) for each class \(j \in \{1, \dots, K\}\). Typically, we assign each data point the label corresponding to the highest score (i.e. \(\hat{y}_i = \arg\max_{j} s_\theta(x_i, j)\)).

OTTER, however, views these scores as indicating how much “mass” should ideally be transported from each data point \(x_i\) to a class \(j\). We first represent the empirical distribution of inputs as

\[\mu = \frac{1}{n} \sum_{i=1}^{n} \delta_{x_i},\]

and we prescribe a target label distribution

\[\nu = (p_1, p_2, \dots, p_K),\]

with \(\sum_{j=1}^{K} p_j = 1\). The goal is to reassign the mass from the input points to the classes so that the overall distribution of predicted labels matches \(\nu\).

This is achieved by formulating an optimal transport problem. We define a cost matrix \(C\) where each element is given by

\[C_{ij} = -\log s_\theta(x_i, j).\]

This cost function naturally penalizes lower prediction scores, so moving mass to classes with higher scores incurs a lower cost. Then, OTTER solves for a transport plan \(\pi\) via

\[\pi = \arg\min_{\gamma \in \Pi(\mu, \nu)} \langle \gamma, C \rangle,\]

where \(\Pi(\mu, \nu)\) denotes the set of all joint distributions whose marginals are \(\mu\) and \(\nu\). In other words, the plan \(\pi\) determines how to reassign the input mass such that exactly \(n \cdot p_j\) points are assigned to class \(j\).

By computing the optimal \(\pi\) and then taking

\[\hat{y}_i = \arg\max_{j} \pi_{ij},\]

OTTER produces modified predictions that not only reflect the model’s confidence (through the cost structure) but also enforce the desired label distribution. When the target distribution \(\nu\) is chosen to match the true downstream distribution, this procedure effectively corrects for the bias introduced during pretraining.

The theoretical results in the paper show that if the cost matrix were derived from the true posterior (i.e. \(-\log P(Y = j \mid x_i)\)), then the optimal transport solution would recover the Bayes-optimal classifier. Since the true target distribution is typically unknown, OTTER uses an estimated downstream label distribution to rebalance the predictions accordingly.
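As a rough illustration of the procedure, the sketch below solves the transport problem exactly for the case where every input carries mass \(1/n\) and each class must receive \(n \cdot p_j\) points. It is not the paper's implementation: the function name `otter_predict` is made up, the problem is recast as an assignment problem and handed to SciPy's `linear_sum_assignment`, scores are assumed strictly positive, and non-integer quotas are rounded (an assumption of this sketch).

```python
import numpy as np
from scipy.optimize import linear_sum_assignment

def otter_predict(scores, target_dist):
    """scores: (n, K) positive class scores; target_dist: (K,) label distribution."""
    scores = np.asarray(scores, dtype=float)
    n, K = scores.shape
    cost = -np.log(scores)  # C_ij = -log s_theta(x_i, j)

    # Class j should receive n * p_j points; round to integers that sum to n.
    raw = n * np.asarray(target_dist, dtype=float)
    quota = np.floor(raw).astype(int)
    remainder = n - quota.sum()
    quota[np.argsort(raw - quota)[::-1][:remainder]] += 1

    # Give class j exactly quota[j] "slots", then solve a one-to-one assignment.
    slot_to_class = np.repeat(np.arange(K), quota)
    rows, cols = linear_sum_assignment(cost[:, slot_to_class])

    preds = np.empty(n, dtype=int)
    preds[rows] = slot_to_class[cols]
    return preds

# Toy example: the model favors class 0 everywhere, but the target is balanced.
scores = np.array([[0.9, 0.1],
                   [0.8, 0.2],
                   [0.7, 0.3],
                   [0.6, 0.4]])
naive = scores.argmax(axis=1)                 # plain argmax predictions
preds = otter_predict(scores, [0.5, 0.5])     # rebalanced predictions
print("argmax:", naive.tolist())              # [0, 0, 0, 0]
print("rebalanced:", preds.tolist())          # [0, 0, 1, 1]
```

The two points moved to class 1 are the ones where the model's preference for class 0 is weakest, which is the behavior the cost \(-\log s_\theta(x_i, j)\) is meant to produce.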

Theoretical Underpinnings

A key theoretical insight is that under mild conditions, OTTER recovers the Bayes-optimal classifier. Specifically, if the true target probabilities are \(P_t(Y = j \mid X = x_i)\), then OTTER’s predictions:

\[\hat{y}_i = \arg\max_{j \in [K]} \pi_{ij},\]

will match the Bayes-optimal decisions:

\[f_t(x_i) = \arg\max_{j \in [K]} P_t(Y = j \mid X = x_i).\]

Moreover, our analysis provides error bounds using perturbation theory, bounding the sensitivity of the transport plan with respect to deviations in both the cost matrix and the target distribution. This ensures that OTTER is robust in practical settings, even when the label distribution estimate is slightly noisy.

Experiment Results

We evaluated OTTER on a diverse set of image and text classification tasks, and our findings reveal several key benefits:

- Improved Zero-Shot Performance:

OTTER consistently boosts zero-shot classification accuracy, achieving an average improvement of about 4.8% on image tasks and up to 15.9% on text tasks across a variety of datasets.

- R-OTTER — Online Adaptation:

OTTER requires a potentially large batch size during prediction to function effectively. Our online variant, R-OTTER, overcomes this challenge by learning reweighting parameters from the model’s own pseudo-labels on a validation set, enabling real-time adjustments in dynamic environments without relying on additional labeled data.

- Mitigating Selection Bias:

Selection bias in LLMs refers to their tendency to favor certain answer choices in multiple-choice questions (MCQs). OTTER effectively mitigates this bias by providing a simple yet effective mechanism to ensure a more balanced and representative distribution of model outputs.

Why It Matters for Practitioners

For practitioners deploying zero-shot models, OTTER offers:

- Ease of Use: A tuning-free, plug-and-play method that adjusts predictions on the fly.

- Robust Performance: Strong theoretical guarantees and consistent improvements across various datasets.

- Flexibility: Extensions like R-OTTER enable online adjustments and can incorporate label hierarchy information to further refine predictions.

Concluding Thoughts

OTTER offers a practical approach to mitigating label bias in zero-shot models, enhancing their reliability and adaptability in real-world applications. Check out our paper: https://arxiv.org/abs/2404.08461 and code on GitHub: https://github.com/SprocketLab/OTTER.

Thank you for reading!