The post Jellyfish Can’t Swim in the Night Scape Story Experience Guide first appeared on STYLY.

Experience Period: Saturday, October 18, 2025 – Tuesday, March 31, 2026

Recommended Experience Location: Shibuya area

*Available nationwide, but experiencing it in Shibuya, the anime’s setting, is especially recommended.

Experience Method: STYLY App (iOS/Android)

Ticket Price: ¥2,200 (tax included) / Some free AR experiences also available

Ticket Purchase Page (Special Website): https://styly.cc/yorukura-scape-story/

Duration: Approximately 1-2 hours (including travel)

About the STYLY App

To experience the content, you need to download the STYLY app.

Please download from each app store.

AppStore

https://apps.apple.com/jp/app/id1477168256

GooglePlay

https://play.google.com/store/apps/details?id=com.psychicvrlab.stylymr

Notes

- Data charges for app downloads and AR experiences are the customer’s responsibility

- Please note that some smartphone models may not support the experience

- Network connection is required for the experience

Please refer to the following for AR-compatible devices.

iPhone, iPad

https://www.apple.com/jp/augmented-reality/

Android

https://developers.google.com/ar/discover/supported-devices#google_play_devices

Experience Overview

In “Jellyfish Can’t Swim in the Night – Scape Story –”, you can enjoy the following two types of content.

“Jellyfish Can’t Swim in the Night – Scape Story –” Main Story

A fully-voiced story with an original 10-episode scenario

JELEE Photo Session

A feature that allows you to take original photos with JELEE members, also available during the main story experience

“Jellyfish Can’t Swim in the Night – Scape Story –” Main Story

The main story consists of two parts.

Audio Part: You walk to the next location while listening to audio

AR Part: You experience AR at the recommended locations

*AR experiences are available anywhere nationwide (no location restrictions), but experiencing it in Shibuya is especially recommended.

Please Read First

- The content size is approximately 300MB, so be sure to follow the “Pre-download & Authentication” steps below. *The main story cannot be played until “Pre-download & Authentication” has been completed.

- Please close other apps before starting pre-download & authentication

- Wearing earphones or headphones is strongly recommended for deep immersion in the story

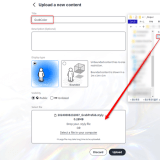

Implementing Pre-download & Authentication

When you press the button, batch downloading of content will begin. Since it’s approximately 300MB, please be mindful of your device’s storage capacity and network environment.

Steps for implementing pre-download & authentication

- Tap the [Pre-download & Authentication] button

- Content download will start

- When “Authenticate” is displayed, tap the authenticate button

- When the “Authenticated” message is displayed, you’ve succeeded

- Tap the menu button at the bottom right, then tap the exit scene button

If Pre-download & Authentication Results in an Error

Tap the [Approve] link below the [Pre-download & Authentication] button to authenticate.

Experience Flow

This content is a story that alternates between a moving part, in which you walk while listening to audio, and an AR part, in which you enjoy AR content.

Audio Part

In the audio part, tap the [Listen to broadcast] button and head to the location that becomes the stage for the AR part while listening to the audio.

When the audio starts playing, tap the [Open map] button and move to the stage following the map guidance.

When the audio ends and you arrive at the stage, tap the [Move to details] button.

AR Part

When you arrive at the location, search for the stage background yourself while being considerate of people around you, then tap the AR start button and point your camera at the surroundings.

Audio will play, so please turn off silent mode if it’s on to enjoy the experience.

AR Part Feature Introduction

Tap the jellyfish icon to access three settings: ① Position adjustment, ② Replay, and ③ Subtitles ON/OFF.

①Position adjustment: Freely adjust the character’s display position

②Replay: Play from the beginning

③Subtitles: Toggle subtitle display on/off

JELEE Photo Session

“JELEE Photo Session” is a special feature exclusive to “Scape Story” that allows you to take photos with JELEE members. You can change size, position, and effects to create one-of-a-kind original photos.

*The free version can be tried from the top page of the special website.

*The free version has some character and feature restrictions.

Tap the [Launch camera] button.

You can show/hide your favorite characters (paid version allows multiple character selection), change position, change size, as well as display grids, adjust character front position, adjust lights and shadows, and customize filters.

Precautions During AR Experience

- Please be careful not to obstruct other pedestrians or vehicle traffic

- Walking while looking at your phone is dangerous, so always stop in a safe location when operating the screen

- Please be mindful of steps and obstacles around you during the experience

- Photography and experiences may be restricted in some areas such as commercial facilities or private property. Please enjoy according to local rules

When Experiencing Outside Shibuya

The main story and JELEE Photo Session are available anywhere nationwide.

All maps displayed during the experience show various spots in Shibuya, the anime’s setting.

After experiencing it near your home, we would appreciate it if you could also experience it in Shibuya when you have the opportunity.

How to Experience with XREAL AR Glasses

By connecting your smartphone or tablet to XREAL, you can enjoy the main story of “Jellyfish Can’t Swim in the Night – Scape Story –”.

*Cannot be experienced on smartphones/tablets that cannot accept a Type-C cable.

Recommended Devices

XREAL Air2 Pro, XREAL ONE series

Setup Method

Launch the main story of “Jellyfish Can’t Swim in the Night – Scape Story –” and tap the AR glasses icon from the function button.

When the camera background turns black, connect your XREAL.

Recommended Usage Method

• XREAL Device Display Mode Settings

Experience through smartphone screen mirroring

*Cannot be experienced on smartphones/tablets that cannot accept a Type-C cable

*Use in 0DoF state (follow mode)

*3DoF or 6DoF spatial fixed modes are not recommended

Optimal Viewing Position

Start AR playback on your smartphone/tablet screen and perform AR positioning with the “Position adjustment” button. The optimal experience is achieved when the smartphone is positioned at chest height.

Device Fixation Method

Hold the smartphone so that it moves together with your body, or secure it with a neckband-style smartphone holder.

*Fixing enables a more stable experience

Notes

Depending on your usage environment, the content may not display correctly.

Contact Information

Customer Support

[email protected]

The post How to set screen rotation for each scene in the STYLY mobile app first appeared on STYLY.

Depending on the experience design, you may want to lock the screen orientation to “portrait” or “landscape” for each scene.

For example, if the scene includes a UI, supporting both portrait and landscape modes requires separate UI designs and implementations, which increases production costs.

By locking the screen orientation, you can focus on designing the UI for either portrait or landscape mode only, thereby reducing production costs and effort.

How to Set Screen Orientation

Here’s how to set the screen orientation for each scene.

Open your scene in STYLY Studio.

Click the gear icon in the hierarchy menu.

Select the screen orientation you want to lock.

- Auto: Automatically switches based on the device’s orientation

- Portrait: Always displayed in portrait mode

- Landscape: Always displayed in landscape mode

By configuring this setting in STYLY Studio, you can lock the screen orientation of scenes played on the mobile app.

This setting ensures that the scene is displayed in the specified orientation (portrait or landscape) regardless of the device’s rotation lock setting.

When Locked to Portrait

The UI in the scene is always displayed in portrait mode.

Even if the user holds their smartphone horizontally, the UI will remain in portrait mode.

When Locked to Landscape

The UI in the scene is always displayed in landscape mode.

Even if the user holds their smartphone vertically, the UI will remain in landscape mode.
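The effect of the three options can be summarized as a small decision function. The Python sketch below is purely illustrative: `resolve_orientation` and its string values are hypothetical names chosen for this example and are not part of STYLY Studio or the mobile app.

```python
def resolve_orientation(scene_setting: str, device_orientation: str) -> str:
    """Return the orientation in which a scene would be displayed.

    scene_setting: "Auto", "Portrait", or "Landscape" (the options listed above).
    device_orientation: "portrait" or "landscape", i.e. how the phone is held.
    """
    if scene_setting == "Auto":
        # Auto follows however the device is currently held.
        return device_orientation
    if scene_setting in ("Portrait", "Landscape"):
        # A locked scene ignores the way the device is held.
        return scene_setting.lower()
    raise ValueError(f"unknown setting: {scene_setting}")


print(resolve_orientation("Portrait", "landscape"))  # portrait
```

In this model, a scene locked to Portrait resolves to portrait even while the device is held horizontally, which matches the behavior described above.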

The post How to use STYLY World Canvas | How to create AR content using 3D maps first appeared on STYLY.

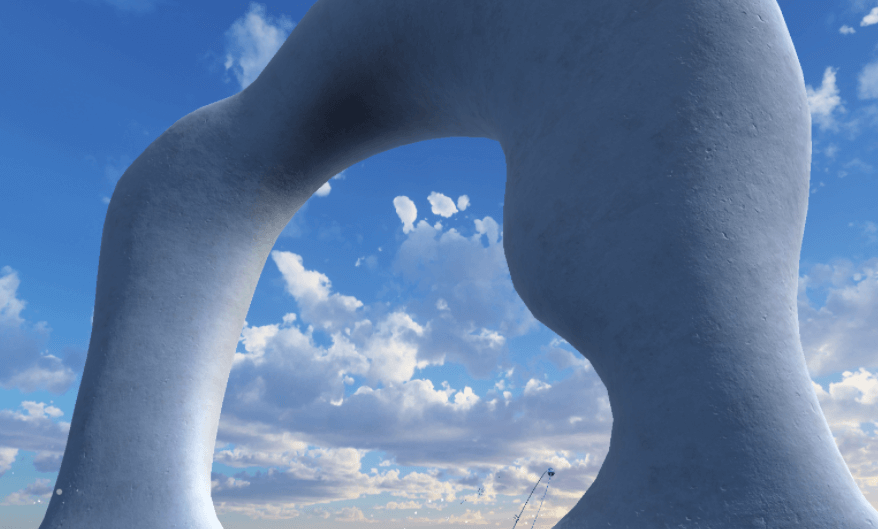

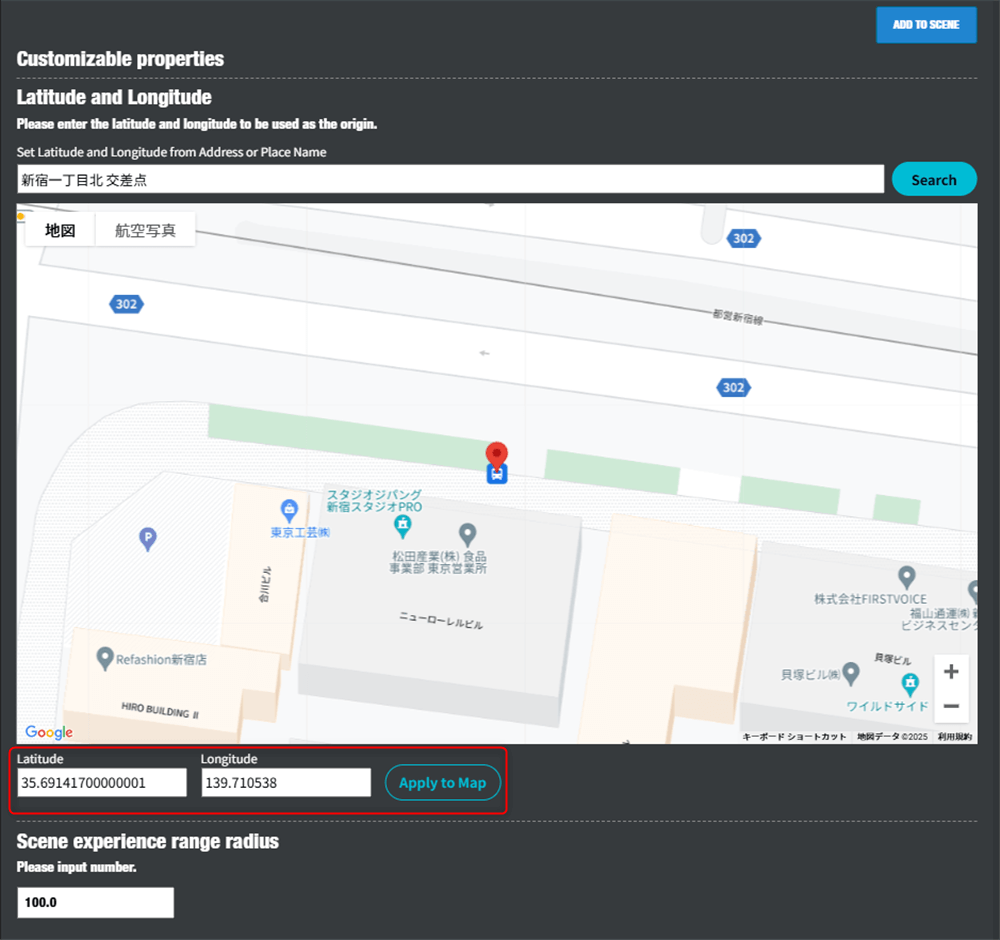

STYLY World Canvas is a feature that automatically loads 3D map data from around the world when you place the City Anchor asset in STYLY Studio, allowing you to build precise location-based XR spaces. Based on real map data, you can place assets aligned with buildings and roads, enabling seamless integration with real cities. It supports global projects and tourism-related experiences, as well as urban XR expressions that reflect local culture and characteristics.

How to use STYLY World Canvas

When creating a new scene in STYLY Studio, select the AR template.

From the Function section in the asset selector, place “AR on City” and “City Anchor” into the scene. For how to use City Anchor, please refer to the article below.

To use the World Canvas feature, you must place both the “AR on City” and “City Anchor” assets in the scene.

Once these two assets are placed in STYLY Studio, 3D map data will be loaded automatically. You can hide the 3D map data by unchecking “Show 3D Map Tiles” in the Map Mesh display at the top right.

Place assets according to the location.

Apply occlusion to buildings

You can apply occlusion to buildings by combining it with the City Occlusion asset.

Notes

Cannot be used with city templates

City templates that can be selected when creating a new scene already contain 3D model data of the city, so they cannot be used together with STYLY World Canvas.

Locations where use is difficult

- Highly dynamic areas: In places like construction sites or temporary event venues where terrain and structures change frequently, discrepancies between map data and actual conditions may make accurate display difficult.

- Locations far from roads: Since positioning is estimated based on road data, accuracy tends to decrease in areas that are far from road networks.

- Areas with many trees or outdoor natural environments: In places where trees block the view, GPS and sensor accuracy may drop, making it difficult to determine the correct position.

- Multi-layered structures: In areas such as underpasses, underground spaces, or around buildings with multiple floors, the user’s floor level may not be correctly recognized, and content may be displayed at the wrong position.

The post Visual Scripting: How to display date and current time with DateTime first appeared on STYLY.

What is DateTime?

In Unity, DateTime is a type from the .NET standard library (the System namespace), available in C#, for handling date and time information. It is useful for displaying the current time and performing time calculations.

Preparation

- Place an empty GameObject in Unity’s scene.

- Attach “Script Machine” to it from Add Component and create a new “Script Graph”.

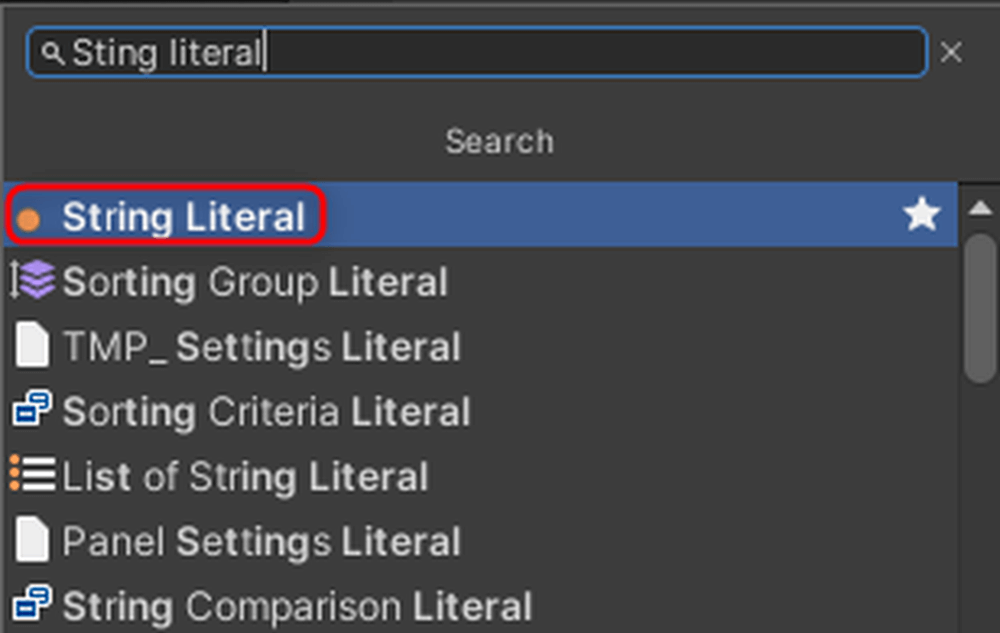

- Open Edit → Project Settings from the menu, select Visual Scripting, open Type Options, and press the + button in the lower left.

- Add DateTime and TimeSpan, then press Regenerate Nodes.

Get and Display the Current Time in Console

The following nodes can be used to display the current time:

- Date Time: Get Now: Gets the current time.

- To String: Converts the value to a String type.

- Debug.Log: Displays the string in the Console.

Point: ToString is not necessary, but it is added to facilitate text display.

Extract Year, Hour, Minute, and Second from the Current Time

Year: Get Year

Hour: Get Hour

Minute: Get Minute

Second: Get Second

These nodes allow you to obtain individual time components.
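For readers who think more easily in text code, here is a rough Python analogue of reading the individual components. It uses Python's standard `datetime` module rather than the Unity nodes, and the helper name `time_components` is made up for this example.

```python
from datetime import datetime


def time_components(moment: datetime) -> dict:
    """Split a datetime into the parts that Get Year/Hour/Minute/Second return."""
    return {
        "year": moment.year,
        "hour": moment.hour,
        "minute": moment.minute,
        "second": moment.second,
    }


if __name__ == "__main__":
    # The values printed depend on when the script is run.
    print(time_components(datetime.now()))
```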

Specify a Format for Time Display

- Add the Date Time: To String (Format) node.

- Enter the desired output format in the “Format” field. This example uses [yyyy/MM/dd H:mm:ss].

| Specifier | Meaning |
| --- | --- |
| yyyy | Year |
| MM | Month |
| dd | Day |
| H | Hour |
| mm | Minute |
| ss | Second |

The output follows the specified format. For example, if “yyyy” is changed to “yy”, 2025 will be displayed as 25.

After execution, the current time is displayed in the Console.
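To make the effect of each specifier concrete, the following Python sketch reproduces the same output by hand. It is an analogue only: it does not call the .NET formatter, the function names are invented for this example, and it assumes `/` and `:` are printed literally as separators.

```python
from datetime import datetime


def format_full(moment: datetime) -> str:
    """Mimic the pattern yyyy/MM/dd H:mm:ss."""
    return (
        # yyyy = 4-digit year, MM and dd = zero-padded month and day
        f"{moment.year:04d}/{moment.month:02d}/{moment.day:02d} "
        # H = 24-hour clock without a leading zero, mm and ss = zero-padded
        f"{moment.hour}:{moment.minute:02d}:{moment.second:02d}"
    )


def format_short_year(moment: datetime) -> str:
    """Mimic yy/MM/dd: only the last two digits of the year are kept."""
    return f"{moment.year % 100:02d}/{moment.month:02d}/{moment.day:02d}"


sample = datetime(2025, 3, 7, 9, 5, 2)
print(format_full(sample))        # 2025/03/07 9:05:02
print(format_short_year(sample))  # 25/03/07
```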

Shift Time from the Current Time

You can shift the time by adding Time Span to Date Time.

Required Nodes

- Add the Time Span: Create Time Span (Day, Hour, Minutes, Seconds) node.

- For example, set Hour to 1 to shift the current time by 1 hour.

When executed, the result is the current time shifted by 1 hour.
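The same addition can be sketched in Python, where `timedelta` plays a role similar to Time Span. This is an analogue for illustration, not the Visual Scripting graph itself, and `shift_time` is a hypothetical helper name.

```python
from datetime import datetime, timedelta


def shift_time(moment, days=0, hours=0, minutes=0, seconds=0):
    """Return `moment` moved forward by the given span (negative values move back)."""
    return moment + timedelta(days=days, hours=hours, minutes=minutes, seconds=seconds)


start = datetime(2025, 1, 1, 23, 30, 0)
print(shift_time(start, hours=1))  # 2025-01-02 00:30:00
```

Note that the shift carries over into the next day automatically, so there is no need to handle the hour and the date separately.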

Conclusion

By using Visual Scripting, you can easily manipulate date and time information. The methods introduced in this article allow you to display time, customize formats, and even perform time calculations. Apply these techniques to implement timers or event scheduling in your game.

The post Create cinematic effects with Unity’s Cinemachine! How to move objects freely with Dolly Cart first appeared on STYLY.

This time, we will introduce how to use Cinemachine in STYLY.

What is Cinemachine?

Cinemachine is a powerful tool in Unity that makes it easy to create cinematic camera effects. By using the “Cinemachine Virtual Camera,” you can intuitively set up various camera movements such as target tracking and smooth camera transitions.

Preparing Cinemachine

Open Unity’s menu bar and go to Window → Package Manager.

- Select Unity Registry and enter “Cinemachine” in the search bar at the top right.

- Select “Cinemachine” and install it.

Dolly Cart (Move an Object Along a Path)

Move an Object Along a Path Using Dolly Cart

1. Place Dolly Track with Cart

- In the Hierarchy window, select “+” → Cinemachine → Dolly Track with Cart and place it in the scene.

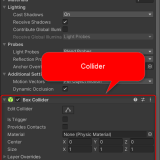

2. Prepare the Object to Move

- Place a “Cube” in the scene.

- Attach the “Cinemachine Dolly Cart” component to the Cube using Add Component.

* In this tutorial, we will show how to move any object along the track. Therefore, we won’t use the Dolly Cart that was placed automatically.

3. Set the Path

- Assign the “CinemachineSmoothPath” attached to the Dolly Track to the Path field of the “Cinemachine Dolly Cart” attached to the Cube.

- Set the Speed to “1”.

4. Enable Looping

- Select the Dolly Track and check the “Looped” option in the “CinemachineSmoothPath” settings.

5. Test the Movement

- When you run the scene, the Cube will move along the green rail (path).

Move the Cube in a Circular Path

1. Edit the Path

- In the Dolly Track’s “CinemachineSmoothPath” Waypoints, click “+” to add more points.

- Move the newly created points (spherical markers) to form a circular path.

2. Test the Movement

When you run the scene, the Cube will move along the circular path.

Upload the scene to STYLY and check the movement.

The scene is now successfully running in STYLY.
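As a rough mental model of what a cart moving at a constant speed along a looped path does, the Python sketch below computes a position on a closed path. It is a simplification under stated assumptions: the path is treated as straight segments between waypoints (CinemachineSmoothPath produces a smooth curve instead), distance is measured in scene units, and `position_on_loop` is a hypothetical helper, not a Cinemachine API.

```python
import math


def position_on_loop(waypoints, distance):
    """Point reached after travelling `distance` along a closed polyline."""
    # Close the loop by pairing each waypoint with the next (last -> first).
    segments = list(zip(waypoints, waypoints[1:] + waypoints[:1]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    if total == 0:
        return tuple(waypoints[0])
    remaining = distance % total  # wrap around, like the "Looped" option
    for (a, b), length in zip(segments, lengths):
        if length > 0 and remaining <= length:
            t = remaining / length
            # Linear interpolation between the two waypoints of this segment.
            return tuple(pa + (pb - pa) * t for pa, pb in zip(a, b))
        remaining -= length
    return tuple(waypoints[0])


square = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 0.0, 1.0), (0.0, 0.0, 1.0)]
speed = 1.0  # units per second, as in the Speed setting above
print(position_on_loop(square, speed * 1.5))  # (1.0, 0.0, 0.5)
```

With Speed set to 1, the distance travelled equals the elapsed time in seconds, so after one full lap the position wraps back to the first waypoint.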

The post Visual Scripting: How to take advantage of Custom Events first appeared on STYLY.

What is a Custom Event?

In Unity’s Visual Scripting, a Custom Event is a mechanism that allows you to define and call custom events at any time. It is useful for communication between scripts and triggering specific processes.

This time, we will create a system where pressing a button changes the color of an object.

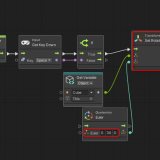

We will create a Script Graph with this structure.

The completed project can be downloaded from the following:

Preparation

Place the following objects in the scene:

- Cube (the object whose color will change)

- Button × 2 (buttons labeled “Red” and “Blue”)

Arrange the objects appropriately (refer to the sample layout).

Creating ChangeColorReceiver

1. Add a “Script Machine” component to the Cube.

2. Create a new Script Graph and name it ChangeColorReceiver.

3. Create String-type variables named “ChangeRed” and “ChangeBlue”.

Add the following nodes:

- Custom Event: Set an event name and configure it to receive a String-type argument.

- Get Material: Retrieve the Cube’s material.

- Change Color: Change the material color based on the received argument.

Creating ChangeBlueGraph and ChangeRedGraph

1. Add a “Script Machine” component to each button.

2. Create the following Script Graphs for each button:

- For Red: ChangeRedGraph

- For Blue: ChangeBlueGraph

3. Create a GameObject-type variable named “ChangeColorCube” and assign the Cube to it.

Add the following nodes:

On Button Click: Starts the process when the button is clicked.

Call Custom Event: In the CustomEvent node, enter the name of the String-type variable created in the ChangeColorReceiver step, “ChangeRed” for the Red button and “ChangeBlue” for the Blue button. The spelling must match exactly.

Execution

Pressing the buttons will change the Cube’s color to either “red” or “blue”.
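The pattern behind this setup, a receiver that reacts to named events and senders that trigger them by name, can be sketched in plain Python. This is a conceptual model rather than Unity's implementation; the `EventReceiver` class and `set_color` function are hypothetical names used only for illustration.

```python
class EventReceiver:
    """Stands in for the Script Graph on the Cube that listens for Custom Events."""

    def __init__(self):
        self.color = "white"
        self._handlers = {}

    def on(self, event_name, handler):
        # Register what should happen when an event with this name arrives.
        self._handlers[event_name] = handler

    def trigger(self, event_name, *args):
        handler = self._handlers.get(event_name)
        if handler is None:
            return False  # no listener: a misspelled name does nothing
        handler(*args)
        return True


def set_color(cube, color):
    cube.color = color


cube = EventReceiver()
cube.on("ChangeRed", lambda: set_color(cube, "red"))
cube.on("ChangeBlue", lambda: set_color(cube, "blue"))

cube.trigger("ChangeRed")   # what the "Red" button does on click
print(cube.color)           # red
cube.trigger("Change Red")  # wrong spelling: no handler is found
print(cube.color)           # red
```

The second call shows why the event name has to match exactly: the sender and receiver are connected only by that string.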

Conclusion

Custom Events are a useful mechanism in Visual Scripting that enable flexible communication between scripts. By leveraging this system, you can build simple and manageable event-driven systems.

The post STYLY for Vision Pro: How to record and playback with a microphone, Easy implementation with Unity Visual Scripting first appeared on STYLY.

Place the Microphone

1. Place a Cube in the Hierarchy for the microphone.

2. Add the following components to the Cube:

- AudioSource (Add via Add Component)

- ScriptMachine (Add via Add Component)

To enable the Visual Scripting nodes used in this tutorial, open Edit → Project Settings.

Open Type Options under the Visual Scripting tab.

Scroll to the bottom, press the + button, and add a new option.

Add Microphone.

Press Regenerate Nodes to rebuild the options.

Preparing for Recording

1. Create a new ScriptGraph in ScriptMachine.

- Example: “Voice Recorder”

2. Add the following variable to ObjectVariable:

- Name: AudioSource

- Type: “AudioSource”

3. Assign the AudioSource from the Cube in the Hierarchy to the AudioSource variable by dragging and dropping.

4. Add the following components to the Cube:

- XR Grab Interactable

- XR Poke Filter

- Add settings to the Interactable Filter in XR Grab Interactable’s Hover option.

- For more details, refer to STYLY for Vision Pro: How to Easily Implement Button Operations with the Poke Feature.

Building the ScriptGraph

Add and connect the following nodes:

Access Vision Pro’s Microphone

- Use GetDevices and FirstItem nodes to get Vision Pro’s microphone.

- Assign the microphone name to the Device Name field in the Start node.

- Settings for the Start node:

- Length Sec: Recording duration (e.g., 5 seconds)

- Frequency: Sampling rate (e.g., 44100Hz)

Setting Recording Conditions

- Use GetPosition to get the current recording position (in samples).

- Add a condition to skip the rest of the process if the position is 0 or lower (i.e., no audio has been captured).

Visual Feedback During Recording

- Create a new Material and attach it to the Cube.

- Use SetColor to indicate recording and playback states:

- Recording: Red

- Playback: Blue

Implementing Recording and Playback

Add and connect the following nodes:

- Use a Timer node to manage the recording time (5 seconds).

- Use an End node to stop recording.

- Use a Play node to play the recorded audio.
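The Length Sec and Frequency settings together determine how much audio data a recording holds. The short Python sketch below works through that arithmetic and mirrors the "0 or lower" check from the graph. The function names are hypothetical, and a single (mono) channel is assumed.

```python
def recorded_samples(length_sec: int, frequency_hz: int, channels: int = 1) -> int:
    """Number of samples in a clip of the given length and sampling rate."""
    return length_sec * frequency_hz * channels


def has_captured_audio(position: int) -> bool:
    """Mirror the graph's condition: skip when the reported value is 0 or lower."""
    return position > 0


print(recorded_samples(5, 44100))  # 220500
print(has_captured_audio(0))       # False
```

With the example values above, a 5-second recording at 44100Hz contains 220,500 samples.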

Node 2: Full Graph

Run

You can now successfully record and play back audio.

Watch with audio here:

The post STYLY Modifier Manual first appeared on STYLY.

First, in the overview section, we will cover everything from the overall concept of modifiers to their specific functions.

Overview

Modifiers are functions in STYLY Studio that allow you to add effects such as “animation” or “interaction” to assets.

These effects themselves are referred to as “Modifiers”.

Previously, adding such features in STYLY required the use of Unity or PlayMaker, but now anyone can easily add them without coding.

Modifiers added in STYLY Studio are compatible with the devices listed in the Modifier Operation Environment Table below. It is now easy to create effective scenes in VR/AR.

Modifier Operation Environment List

| Layer | Device | Distribution App |
| --- | --- | --- |
| VR | PCVR | Steam |
| VR | Standalone VR | Quest2 |
| VR | Mobile | Android/iPhone |
| AR | Mobile | Android/iPhone |
| Web | Web Browser | Web Player |

Download List

Modifier Introduction

Here are some of the modifiers available in STYLY Studio.

Interaction

Interaction allows you to add modifiers that are useful when creating interactive works such as games.

Example: Make equippable

Style change

Style change allows you to alter the appearance of assets.

However, depending on the shape and other factors, the result may not appear as expected. (Texture mapping follows the object’s UV.)

Example: Stars

Animation

You can add motion such as rotation or movement to assets.

Example: Rotate

Humanoid Animation

Humanoid Animation is for models that support Unity’s humanoid animation format “Humanoid”.

You can apply animations to humanoid assets uploaded to STYLY Studio (*To use them, convert your asset to humanoid in Unity, create a prefab, and then upload it to STYLY).

Example: Breakdance Motion

STYLY is a platform where even non-engineer, non-programmer artists can easily create and publish VR/AR works.

For example,

“I can use Blender, but not Unity…”

“I don’t know how to program in Unity”

“I only know how to use Adobe software”

—even artists and creators like this can easily create animated and interactive works.

You can also upload and use 3D models made in 3DCG software like Blender, image files such as JPG/PNG, and video files like .mp4.

Refer to the following article for how to upload.

Add modifiers to your uploaded assets and create your own unique VR/AR work!

Practical Section

In the practical section, you’ll learn how to actually use modifiers and try creating a simple scene.

You need to create an account in advance to use STYLY Studio.

Refer to the following article to create an account.

Access STYLY Studio

Let’s access STYLY Studio.

https://gallery.styly.cc/studio

Or, click the STUDIO button at the top right of the STYLY Gallery page.

Access STYLY Studio and select “Create Scene”.

If you want to create an AR scene, select AR Scene Template; if you want to create a VR scene, select VR Scene Template.

This time, we’ll select the VR Scene Template to create a VR scene.

Once you select a template, the scene will be displayed on the screen as shown below.

Scene

You’re now ready to go.

Try using Modifiers

Let’s actually place an asset in the scene and get used to using Modifiers.

First, add an asset.

Click the “Asset button” in the top left menu bar.

Asset button

The asset menu will appear. Select “3D Model” → “Model”.

Choose any 3D model you like.

This article uses “Leather Sofa Wine Red”.

Once you select the 3D model, it will be placed in the scene.

When you select the model, it will be highlighted.

In that state, click the Modifier icon.

A list of modifiers will appear.

Scroll to view various modifiers.

You can also use the search bar labeled “search…” at the top.

Use this when searching for specific modifiers.

This time, we’ll add the “Rotate” animation modifier to the sofa.

Type “Rotate” into the search bar to find the animation modifier.

Click [Animation] Rotate.

The sofa object will start rotating.

Additionally, a modifier settings panel will appear below the object icon.

Modifier List

Modifiers offer a variety of effects.

You can even create simple game-like scenes without any coding. Be sure to try out different options!

Interaction

You can equip objects to the controller, make them grabbable, or enable object destruction.

[Interaction] Make equippable

You can equip an object to the controller.

[Interaction] Make draggable

Allows you to grab and move the object.

[Interaction] Make breaker / Breakable

When a Breaker object collides with a Breakable object, it destroys the Breakable object.

Style Change

You can change the appearance of objects.

However, depending on the shape, it may not look as expected. (Texture mapping follows the UVs of the object.)

[Style Change] Stars

Changes the appearance to a star pattern

Star color: Change the color of the stars

Background color: Change the background color

Number of stars: Change the number of stars per row and column

[Style Change] Rim light

Changes the appearance to a glowing rim light effect

Light Color: Change the light color

Intensity: Adjust the light intensity

[Style Change] Gradient Color

Changes the appearance to a gradient color

Start color: Set the starting color

End color: Set the ending color

[Style Change] Dots

Changes the appearance to a polka dot pattern

Dot Color: Change the dot color

Background Color: Change the background color

Number of dots: Change the number of dots per row and column

[Style Change] Change Color

Changes the overall color of the appearance

Color: Change the overall appearance color

[Style Change] Checker board

Changes the appearance to a checkerboard pattern

Color 1: Change the color of one set of squares

Color 2: Change the color of the other set of squares

Number of squares: Change the number of squares per row

[Style Change] Wood

Changes the appearance to a wood grain pattern

[Style Change] Rock

Changes the appearance to a rocky texture

[Style Change] Marble

Changes the appearance to marble

[Style Change] Lava

Changes the appearance to lava

Animation

You can add movement such as rotation and translation to objects.

[Animation] Rotate

Rotate the object

Angular Velocity X: Change rotation speed on X-axis

Angular Velocity Y: Change rotation speed on Y-axis

Angular Velocity Z: Change rotation speed on Z-axis

[Animation] Heartbeat

Make the object expand and contract in a steady rhythm

Beat Duration: Rhythm interval

Hold Duration: Time of size transition

Amplitude: Magnitude of expansion and contraction

[Animation] Orbit

Make the object rotate in a circular orbit

Radius: Change the orbit radius

Angle Velocity X: Rotation speed on X-axis

Angle Velocity Y: Rotation speed on Y-axis

Angle Velocity Z: Rotation speed on Z-axis

[Animation] Go and back

Add animation to move back and forth between initial position and a relative destination

Destination X: Change X coordinate of destination

Destination Y: Change Y coordinate of destination

Destination Z: Change Z coordinate of destination

Trip time: Change one-way travel time

[Animation] Move straight

Add animation to move in a specified direction at a constant speed

Velocity X: Change speed on X-axis

Velocity Y: Change speed on Y-axis

Velocity Z: Change speed on Z-axis

[Animation] Go and back like spiral

Add animation to follow a spiral path

Velocity X: Speed along spiral X-axis

Velocity Y: Speed along spiral Y-axis

Velocity Z: Speed along spiral Z-axis

Trip time: Time to reach destination

Radius: Change the spiral radius

Orbit angular velocity: Change spiral rotation speed

[Animation] Go and back like waves

Add animation that follows a wavy up-and-down path

Velocity X: Movement speed on X-axis

Velocity Y: Movement speed on Y-axis

Velocity Z: Movement speed on Z-axis

Trip time: Time to reach destination

Wave height: Change height of the wave motion

Wave period: Change speed of the wave oscillation

[Animation] Move to loop

Add animation that repeatedly moves the object in a straight line relative to its initial position

Destination X: Change X coordinate of destination

Destination Y: Change Y coordinate of destination

Destination Z: Change Z coordinate of destination

Duration: Time to reach the destination
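As a rough mental model of how parameters such as Angular Velocity, Destination, and Trip time translate into motion, consider the Python sketch below. It is not STYLY's implementation: the units (degrees per second, seconds), the linear easing, and the function names are all assumptions made for illustration.

```python
def rotate_angle(angular_velocity, t):
    """Angle on one axis after t seconds, assuming degrees per second."""
    return (angular_velocity * t) % 360.0


def go_and_back(start, destination_offset, trip_time, t):
    """Position after t seconds when moving back and forth at constant speed.

    destination_offset is relative to the start position; trip_time is the
    one-way travel time.
    """
    phase = (t / trip_time) % 2.0                  # 0..1 outbound, 1..2 returning
    f = phase if phase <= 1.0 else 2.0 - phase     # fraction of the way to the destination
    return tuple(s + d * f for s, d in zip(start, destination_offset))


print(rotate_angle(90.0, 5.0))                                        # 90.0
print(go_and_back((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), 1.0, 0.5))        # (1.0, 0.0, 0.0)
```

In this model, doubling Trip time halves the speed of travel, while changing the Destination values changes how far the object moves.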

Humanoid Animation

Humanoid Animation supports models using Unity’s Humanoid animation format.

To use it, set the model as Humanoid in Unity, prefab it, and upload to STYLY.

[Humanoid Animation] Breakdancing Motion

Add a breakdancing animation

[Humanoid Animation] Standing

Add an animation of a standing pose

[Humanoid Animation] Sitting Laughing

Add an animation of sitting and laughing

[Humanoid Animation] Rumba dancing

Add a rumba dance animation

The post Manual for Creating AR Cityscapes of Major Cities in Japan first appeared on STYLY.

We will show you how to create, distribute, and experience these AR scenes in Tokyo, Osaka, Nagoya, Sapporo, Fukuoka, Kyoto, Kanazawa, Hiroshima, and Niigata.

How to Create AR Cityscapes

Select a City Template

Access STYLY Studio to get started.

A STYLY account is required, so if you do not have an account, go to https://gallery.styly.cc/signup to sign up.

Log into STYLY Studio and click the “CREATE SCENE” button. Then, a list of templates will be displayed.

Select a city template.

Enter a title for your scene and click “CREATE”.

List of available city templates:

- Sapporo Odori Park (Hokkaido)

- Tokyo Shinjuku West Exit (Tokyo)

- Tokyo Shibuya Station (Tokyo)

- Niigata Furumachi (Niigata)

- Niigata Bandaijima (Niigata)

- Kanazawa Station (Ishikawa)

- Nagoya Station (Aichi)

- Kyoto Station (Kyoto)

- Osaka Shibatamachi (Osaka)

- Osaka Dotonbori (Osaka)

- Osaka Castle (Osaka)

- Hiroshima Station (Hiroshima)

- Fukuoka Tenjin Station (Fukuoka)

Using city templates to create AR scenes linked to the real world

In STYLY, you can create AR scenes using the default assets such as 3D models and effects.

You can also upload your own 3D models, images and videos to create scenes with more originality.

For more information on how to use assets provided in STYLY Studio and how to upload your own assets, read the following article:

How to import scenes and prefabs from Unity:

How to add animation to an asset using Modifiers:

Now, let’s place the assets in STYLY Studio.

We recommend putting them on the roof or walls of a building.

Realistic scale 3D city models

The 3D city models are the same size as real world buildings.

The assets will be placed in the same location in the real world as they are positioned in STYLY Studio.

3D city models are not displayed during the AR experience

The 3D city models will not be displayed in the AR experience, as they are only used as a guide for building your scene.

Preview your scene with Your Position

Your Position allows you to view the scene from the viewer’s perspective.

Click “reset position” at the right of STYLY Studio to view the AR scene from Your Position’s perspective.

When building a scene, you will be inspecting it from various angles and heights, so it may start lacking the viewer’s ground level perspective. Go back and forth in multiple views to make sure your scene turns out exactly how you expect it to be.

Experience the AR scene anywhere from designated areas

To experience AR scenes using a city template, you must go to the actual city’s location.

For the exact areas of each city, see the section “Be at the actual city location before launching the scene”.

Using Scenes and Prefabs from Unity

Read how to upload your Unity scenes and prefabs to STYLY Studio below:

Points to note when creating AR Cityscapes

Do not delete city assets

When creating scenes using a city template, three assets are placed in the scene: the AR on City asset, the 3D city model, and the 3D city anchor.

Do not delete these three assets. If you delete them, the scene will not work as expected.

Assets that should not be deleted:

・AR on City asset

・3D City Model

・3D City Anchor

In the case of this scene, do not delete the AR on City, Tokyo Shibuya Station, and Tokyo Shibuya Station Anchor assets

How to restore city assets if you accidentally delete them

If you accidentally delete a city asset, open the Asset Selector and select the asset from Function.

You can add AR on City, the 3D city model and 3D city anchor assets from here.

If you delete the AR on City asset, add the AR on City asset to your scene.

If you delete a 3D city model, add the 3D city model that was originally in your scene.

If you delete a 3D city anchor, add the 3D city anchor that was originally in your scene.

The 3D city model and the 3D city anchor must be for the same location.

If you are using the 3D city model of Tokyo Shibuya Station, make sure to match it with the Tokyo Shibuya Station Anchor.
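The rule about keeping all three city assets, with a matching model and anchor, can be sketched as a small check. This is only an illustration, not a STYLY feature: the function name is hypothetical, and the "<city> Anchor" naming pattern is an assumption generalized from the Tokyo Shibuya Station example.

```python
# Assumption: asset names follow the Tokyo Shibuya Station example in this
# article ("<city>" for the 3D city model, "<city> Anchor" for the anchor).
AR_ON_CITY = "AR on City"


def missing_city_assets(scene_assets, city):
    """Return the required city assets that are absent from the scene."""
    required = [AR_ON_CITY, city, f"{city} Anchor"]
    return [name for name in required if name not in scene_assets]


# The anchor in this scene belongs to a different city (hypothetical name),
# so the Shibuya anchor is reported as missing.
scene = ["AR on City", "Tokyo Shibuya Station", "Kyoto Station Anchor", "My Sculpture"]
print(missing_city_assets(scene, "Tokyo Shibuya Station"))
```

An empty result would mean the AR on City asset, the city model, and the anchor for the same location are all present.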

Do not place a Skybox in your scene

When using XR Cityscape Assets, do not place a Skybox in the same scene. That applies to all Skyboxes in the Environment asset page.

If you place a Skybox in a scene, the scene will automatically switch to VR mode and you will not be able to see the environment in AR.

Do not use with the AR on Sky asset

A city template and the AR on Sky asset cannot be used together.

For more information on how to use AR on Sky, refer to the following article:

How to experience the AR Cityscape Scene

Download and Install the STYLY Mobile App

Download STYLY for iOS

https://apps.apple.com/jp/app/id1477168256

Download STYLY for Android

https://play.google.com/store/apps/details?id=com.psychicvrlab.stylymr

Devices supporting AR Cityscapes

https://developers.google.com/ar/devices

AR Cityscapes will only work on devices supporting Depth API.

1. Launch the STYLY mobile app and tap on My Page.

2. Tap the “Log In” button to log in.

3. A list of the scenes you have created will be shown, so tap the AR scene you would like to experience.

4. Tap the Download button to download the scene in advance.

Be at the actual city location before launching the scene

Make sure to be at the location of your city template before launching your scene.

For example, to experience an AR scene using the Tokyo Shibuya Station template, you will need to be in front of Shibuya Station in Tokyo.

To see the areas available for creating AR cityscapes, refer to the maps below:

AR Cityscapes Supported Areas

Sapporo Odori Park

Tokyo Shibuya Station

Nagoya Station

Kyoto Station

Osaka Dotonbori

Fukuoka Tenjin Station

Niigata Furumachi

When you arrive at the location, launch the STYLY mobile app and tap the “Play” button.

Point your camera to the surrounding buildings for the app to recognize your current location. Now you can experience the AR scene in the city!

Start the scene on ground level

Make sure to be on ground level when you start the AR scene.

Launching an AR scene on the second floor or above or on a bridge will result in misalignment.

For questions about STYLY, bug reports, and improvement requests, please contact the STYLY FORUM: https://en.forum.styly.cc/support/discussions

For business inquiries, contact us from the link below:

https://styly.cc/contact

Cautions

It will not work properly under the following circumstances:

- Roofed areas: indoors, arcade shopping streets, underground, etc.

- Dark places: at night and in areas with low outdoor lighting (the Shibuya Scramble Crossing is an exception because it is very bright even at night).

- Near the water’s edge: places with water surface reflections such as rivers, ponds, lakes, and oceans.
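The cautions above, together with the ground-level rule, can be read as a pre-launch checklist. Below is a minimal sketch of that checklist; the function and its parameters are hypothetical, and you would answer the four questions yourself, since nothing here queries the STYLY app or the device.

```python
def launch_warnings(on_ground_level, roofed, dark, near_water):
    """Return advice for each condition this article says breaks alignment."""
    checks = [
        (not on_ground_level, "start the scene on ground level"),
        (roofed, "move out from under roofs (indoors, arcades, underground)"),
        (dark, "move to a brighter place"),
        (near_water, "move away from reflective water surfaces"),
    ]
    return [message for failed, message in checks if failed]


# On a bridge, under a roof, next to a river: three problems to fix first.
for warning in launch_warnings(on_ground_level=False, roofed=True, dark=False, near_water=True):
    print("Before launching:", warning)
```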

The post Manual for Creating AR Cityscapes of Major Cities in Japan first appeared on STYLY.

The post Article describing the JACKSON kaki’s scene using the Modifier feature first appeared on STYLY.

This article introduces the highlights of the scene and explains how he uses and combines Modifiers.

About JACKSON kaki

JACKSON kaki (real name: Takaumi Arakaki) is an artist/creator who creates multi-media works such as VR/AR/MR, video, game, installation, sound art, and DJ, mainly 3DCG.

Focusing on “dimension” and “existence”, he finds the relationship between virtual space and real space in the post-Internet society.

In Japan

P.O.N.D. (PARCO MUSEUM. 2020)

AWSM ( HASSYADAI, 2020)

Yurakucho Wall Art Gallery (IDEA , 2021)

BUG4ASS ( THE PLUG, 2021)

International

DIO’ C’ E ( UltraStudio, Pescara, Italia 2020 )

Spring Attitude Festival ( EUR SOCIAL PARK, ROMA, Italia 2021 )

ARCHIVIO CONTEMPORANEO(TUBE,ROMA, Italia 2021)

(Quoted from NEWVIEW official website

https://newview.design/en/works/swiming-in-the-river )

Twitter : https://twitter.com/Kakiaraara

Instagram : https://www.instagram.com/kakiaraara

About “KANKEISEI”

This is an AR work.

I recommend that you experience it in a large space.

When you launch the work, you will see a combination of objects with motifs of human faces and bodies, and abstract structures.

In addition, the collapsed human face object moves in the space with physical expression.

The one that stands out the most is the collapsed figure dancing in the center.

The monstrous standing figure is distinctive.

This work uses the human body as a motif and depicts how it becomes an object with physical expression and animation.

Where he uses Modifiers

You can copy the scene to STYLY Studio and check it against the following explanations.

How to copy a scene to STYLY Studio

- First, log in to STYLY Gallery.

If you are not logged in, select LOGIN in the upper right corner of the STYLY Gallery screen.

- Click the Copy button below. *You must be logged in to copy the scene to your account.

- When the scene appears in the scene list in STYLY Studio, the copy is complete.

Explanation

This section explains which Modifiers are used in the scene.

A face object called Thisperson2 has Humanoid Animation added to it.

This face object was given a humanoid skeleton in Blender, then set up as a Humanoid in Unity and uploaded to STYLY.

By adding the Humanoid Animation Modifier, animation is applied to the face object.

Similarly, the humanoid object moving in the center also includes Humanoid Animation.

There are several types of Humanoid Animation. By changing the content of the animation, you can make the object move in a different way.

The Face2 object includes the Rotate Animation to rotate the object.

You can apply Animation to any object.

You can easily move the object you have uploaded.

Animation is also used for other objects.

Face1 uses Heartbeat, one of the Animation Modifiers.

With Heartbeat, you can apply an animation that changes the object’s size at a constant rhythm.

The “red objects” in this scene are all colored red by Change Color, one of the Style Change Modifiers.

The three-dimensional object Abstract2 is not only turned red by Change Color but also has Make Draggable, an Interaction Modifier, applied.

Make Draggable allows you to move the object with the controller when experiencing the scene.

As in Abstract2, two or more Modifiers can be applied at the same time.

However, if you add too many Modifiers, the scene may become difficult to manage, and some Modifiers, such as animations, may conflict with each other and behave in unintended ways.
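One way to think about such conflicts is to ask which property each Modifier drives. The sketch below is purely illustrative: the mapping from Modifier to property is an assumption made for this example, not documented STYLY behavior, and the function name is hypothetical.

```python
from collections import Counter

# Assumption: a rough guess at which property each Modifier drives.
DRIVES = {
    "Rotate": "rotation",
    "Heartbeat": "scale",
    "Humanoid Animation": "pose",
    "Change Color": "material",
    "Crystal Clear": "material",
    "Make Draggable": "position",
}


def conflicting_properties(modifiers):
    """Return the properties that more than one Modifier tries to drive."""
    counts = Counter(DRIVES[m] for m in modifiers if m in DRIVES)
    return sorted(prop for prop, n in counts.items() if n > 1)


# Abstract2 in this scene: two Modifiers that touch different properties.
print(conflicting_properties(["Change Color", "Make Draggable"]))
# Two Style Change Modifiers on one object would both touch the material.
print(conflicting_properties(["Change Color", "Crystal Clear", "Rotate"]))
```

Keeping a simple list like this per object is one way to notice overlapping Modifiers before the scene grows large.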

That concludes the explanation of how the “KANKEISEI” scene uses the Modifier feature.

How to experience an AR scene

If you are accessing this page from a smartphone, please click on the “Experience the Scene” button (*If you are experiencing the scene on a smartphone for the first time, please also refer to the following instructions).

After clicking, the following screen will be displayed.

If you have already downloaded the STYLY Mobile app, please select “Continue on Browser”.

You can then select “Play on Mobile App” to experience the scene.

If you are accessing this page from a PC (web browser), you can experience the scene by clicking the “Experience the Scene” button, selecting the Mobile icon on the scene page, and scanning the QR code.

Download the STYLY Mobile app

For those who want to know more about how to experience the scene

For more information on how to experience AR scenes, please refer to the following article.

The post Unity Plugin for STYLY How to resolve the error when uploading first appeared on STYLY.

If you encounter an error while trying to upload a Unity asset, please refer to this guide.

What is Unity Plugin for STYLY?

STYLY allows you to upload prefabs and scenes created in Unity.

The plugin used for this process is called “Unity Plugin for STYLY.”

Refer to the following article for instructions on how to upload:

Why STYLY Upload Takes Time

The role of Unity Plugin for STYLY is not just to upload prefabs and scenes to STYLY.

It also processes them for multi-platform compatibility.

Without this processing, uploaded assets will not function correctly in STYLY.

Although the upload process takes time, it is optimized to ensure a seamless XR experience for as many users as possible.

Preliminary Checks

Is Your Unity Version STYLY-Compatible?

Check the supported versions for STYLY Plugin for Unity here:

STYLY Plugin for Unity DOWNLOAD

Uploading will not work unless you use a compatible plugin version.

Are Required Modules Installed?

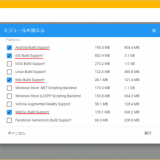

To use STYLY Plugin for Unity, certain modules must be installed in Unity beforehand.

If these modules are missing, you will see an error in the settings window like the one below.

Install the necessary modules and try uploading again.

Check the required modules at the following link:

Instructions for adding modules can be found in this article:

API Key / Email Authentication Error

If the following dialog appears during upload, an authentication error has occurred.

Authentication Error

In such cases, review the Email and API Key settings in the Asset Uploader Settings.

Not Connected to an Account

The STYLY Plugin for Unity requires a connection to an account.

If not connected, the following error will appear:

“〇〇 (Prefab name): You don’t have an account settings. [STYLY/Asset Uploader Settings]”

Connect your STYLY Plugin for Unity to your account before uploading.

Refer to this article for connection instructions:

Scripts Are Not Supported

The programming language used in Unity, C#, is not supported in STYLY.

Therefore, prefabs or scenes containing C# scripts will not function in STYLY.

To implement object control or interaction in STYLY, use “PlayMaker.”

Learn more about PlayMaker here:

Unity Upload File Size Limit

As of November 2021, STYLY recommends keeping uploaded prefabs under 20MB and scenes under 100MB.

For the latest file size limitations, refer to this page:

If the file size exceeds these limits, performance may degrade, or uploads may take longer.
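If you want to check sizes before uploading, a small script along these lines may help. It is a sketch under assumptions: the 20MB and 100MB figures are the November 2021 recommendations quoted above, "MB" is taken to mean 1024 × 1024 bytes, and the function names are hypothetical. It reads file sizes from disk and does not talk to the plugin.

```python
import os

MB = 1024 * 1024  # assumption: binary megabytes
# Recommended maximums quoted in this article (as of November 2021).
RECOMMENDED_MAX = {"prefab": 20 * MB, "scene": 100 * MB}


def exceeds_recommendation(kind, size_bytes):
    """True if an asset of this kind is larger than the recommended size."""
    return size_bytes > RECOMMENDED_MAX[kind]


def check_file(path, kind):
    """Describe whether the file at `path` fits the recommendation."""
    size = os.path.getsize(path)
    status = "over" if exceeds_recommendation(kind, size) else "within"
    return f"{path}: {size / MB:.1f} MB, {status} the recommended {kind} size"


print(exceeds_recommendation("prefab", 25 * MB))
print(exceeds_recommendation("scene", 80 * MB))
```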

To reduce asset file size, check this guide:

STYLY Storage Limit

When uploading prefabs or scenes from Unity, note that STYLY has a total storage limit.

STYLY allows up to approximately 1.7GB of assets in a scene.

If this limit is exceeded, additional assets cannot be placed in the scene.

When this happens, the following error appears:

If this message appears, you will need to reconfigure your scene.

Additionally, large file sizes may slow down scene interactions.

Manage asset sizes carefully when creating in Unity.
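The scene-wide limit can be tracked the same way while you plan a scene. The sketch below assumes the approximate 1.7GB figure above and binary gigabytes; the helper names are hypothetical, and the real limit is enforced by STYLY, not by this code.

```python
GB = 1024 ** 3  # assumption: binary gigabytes
SCENE_LIMIT_BYTES = int(1.7 * GB)  # approximate limit quoted in this article


def remaining_budget(asset_sizes):
    """Bytes left before the scene reaches the approximate limit."""
    return SCENE_LIMIT_BYTES - sum(asset_sizes)


def can_add(asset_sizes, new_asset_size):
    """True if the new asset still fits within the approximate limit."""
    return sum(asset_sizes) + new_asset_size <= SCENE_LIMIT_BYTES


placed = [GB]  # one large asset already in the scene
print(can_add(placed, GB // 2))  # 1.5 GB in total
print(can_add(placed, GB))       # 2.0 GB in total
```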

If you encounter an Out of Memory error preventing STYLY Studio from opening, refer to this guide:

How to Reduce Unity Upload Time

To shorten upload time, refer to this article:

Optimize your workflow for efficiency!

The post [STYLY Modifier] How to Use the Style Change modifier first appeared on STYLY.

Read more about STYLY Modifiers, including the Style Change Modifier, in this article:

Changing Appearances

Style Change Modifier allows you to change the appearance of an object; however, that object may not turn out as you might expect due to its shape or other factors (for example, the texture will be based on the object’s UV map).

New Style Content

We have added 44 new Style Change Modifiers to STYLY Studio, including wood, stone, glass, magma, crystal, and animated, liquid-like textures. Below, we introduce some of these basic Style Change Modifiers.

[Style Change] Crystal Clear

This Modifier makes an object look like a clear crystal and includes some animation.

Under [Style Change] Crystal Clear, use “Color” to change the color of an object.

[Style Change] Crystal Anim Frozen

This Modifier adds crystal-like animation to an object.

Under [Style Change] Crystal Anim Frozen, use “Color” to change the color of an object.

[Style Change] Liquid Anim Water

This Modifier adds liquid-like animation to an object.

Under [Style Change] Liquid Anim Water, use “Color” to change the color of an object.

[Style Change] Glass Gravel

This Modifier makes an object look like glass. Be careful: Glass Gravel, Glass Sand, and Glass Offset can make the scene very slow, so avoid applying them to objects that cover the entire screen or are extremely large.

Under [Style Change] Glass Gravel, use “Color” to change the color of an object.

[Style Change] Grass Summer

This Modifier makes an object look like a summer meadow.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.
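The relationship between the tiling value and the pattern size can be illustrated with a little arithmetic. This sketch assumes the value behaves like a standard texture tiling factor, that is, the number of times the pattern repeats across a surface; how STYLY applies it internally is not stated here, and the function name is hypothetical.

```python
def pattern_size(surface_size_m, tiling_scale):
    """Size of one pattern repeat, assuming `tiling_scale` repeats per surface."""
    if tiling_scale <= 0:
        raise ValueError("tiling_scale must be positive")
    return surface_size_m / tiling_scale


# On a 2 m wide surface, a higher tiling value gives a smaller pattern.
for tiling in (1, 2, 4):
    print(tiling, pattern_size(2.0, tiling))
```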

[Style Change] Grass Winter

This Modifier makes an object look like a meadow covered with snow.

[Style Change] Wood Maple, Flat

This Modifier gives an object a woodgrain pattern.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.

[Style Change] Wood Maple Tile, Aged

This modifier gives an object a tiled, woodgrain pattern.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.

[Style Change] Marble Gray Tile

This modifier gives an object a tiled, marbled pattern.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.

[Style Change] Marble White Offset

This modifier gives an object a displaced, tiled, marbled pattern.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.

[Style Change] Plaster Gray

This Modifier makes an object look like plaster.

The “Tilling scale” controls the pattern repeat: the higher the number, the smaller the pattern.

[Style Change] Fire Rock Anim

This Modifier adds magma-like animation to an object.

Sample Scene

In the scene below, you can view all of the Style Change Modifiers currently available in STYLY Studio. In virtual reality (VR) mode, objects can be dragged and held by hand to see even more detail.

The post Template scene/asset guide using Light Probe first appeared on STYLY.

This feature allows you to set up more realistic and lightweight spatial lighting textures for VR and AR.

STYLY Release Note: STYLY-VR v2.9.2 (2021/12/21)

Template scenes and assets that already have the “Light Probe” feature implemented can now be used in Studio.

The template scene includes Reflection Probe and LightMap in addition to the Light Probe feature, so you can create a well-lit scene.

In this section, I will explain how to use the template scene in STYLY Studio, as well as the features of the Light Probe and other lighting settings.

List of template scenes

Dim Museum

In this template, objects are displayed in a marble-based set with natural lighting. There is also a space for captions at the back, which can convey the world view of the exhibition once viewers have taken in the works.

Crypt Light

This template allows you to select your own images from custom assets to display on the wall. The European-style pillars, ceiling lights, and shadow textures are smooth, and the scene allows the viewer to concentrate on the work.

Crypt Dark

This is a dark version of the Crypt Light template. You can choose between Light and Dark scenes to match the mood of your work. You can enhance the sense of immersion by using Narration and BGM.

What is the “Light Probe” that became available in this update?

Light Probe is a setting that simulates the lighting of each section of the scene space in advance and stores the resulting lighting information in the scene. By default, Unity and STYLY simulate lights in real time, but that simulation is limited because of the processing load.

Let’s compare a scene without Light Probe and a scene with Light Probe in the images below.

With Light Probe, the lighting information of the space is stored in advance, so you do not need to place lights that are costly to simulate, such as Point Lights.

This is a scene without Light Probe. Objects do not blend well with the background in areas not directly illuminated by the directional light.

This is a scene with the Light Probe set. Even in a shaded area, the lighting of the object and the background match each other naturally.

Specifically, Light Probe has the following characteristics.

- The accuracy of lighting such as indirect light has been improved, resulting in a more natural appearance.

- Light is simulated in advance, making the scene lighter.

- When using Unity, the degree of shadow detail and the rendering range can be adjusted.
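The idea of storing lighting in advance and looking it up later can be sketched in a few lines. The example below blends the colors stored at nearby probes with inverse-distance weights. That blend is a deliberate simplification chosen for readability, not the interpolation Unity or STYLY actually uses, and the function name and data layout are hypothetical.

```python
import math


def probe_lighting(position, probes):
    """Blend pre-stored probe colors by inverse distance to `position`.

    `probes` is a list of ((x, y, z), (r, g, b)) pairs computed in advance.
    """
    weighted = []
    for probe_position, color in probes:
        distance = math.dist(position, probe_position)
        if distance == 0.0:
            return color  # standing exactly on a probe
        weighted.append((1.0 / distance, color))
    total = sum(weight for weight, _ in weighted)
    return tuple(
        sum(weight * color[i] for weight, color in weighted) / total
        for i in range(3)
    )


# One probe in a lit spot, one in shadow: an object halfway gets a blend.
probes = [((0, 0, 0), (1.0, 1.0, 1.0)), ((2, 0, 0), (0.0, 0.0, 0.0))]
print(probe_lighting((1, 0, 0), probes))
```

No light is evaluated at lookup time. The function only reads stored values, which is why this kind of lighting is cheap.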

Other lighting features set up in the template scene

LightMap

A “LightMap” is also set in the template scene.

LightMap is a feature that maps and stores the light shining on an object onto the object’s texture in advance. This is especially applicable to backgrounds such as walls and ceilings. It also simulates the light hitting the object beforehand, making the scene lighter and the objects look more natural.

The following is a scene without LightMap. If you don’t place point lights in the shaded areas, there will be dark areas. However, it is difficult to set up a point light that illuminates the entire scene naturally and does not make the scene heavy.

The following is a scene with LightMap set up. The lighting of the room’s surfaces is saved in the textures themselves, so the scene is lightweight and rendered with natural-looking light.
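As a rough picture of what "saved in the textures" means, the sketch below shades a surface by looking up a precomputed brightness value instead of evaluating lights. The tiny 2×2 grid, the nearest-texel lookup, and the function name are all assumptions made for illustration. A real lightmap is a much larger texture baked in Unity.

```python
def shade_baked(albedo, lightmap, u, v):
    """Tint a surface color by the pre-baked light stored at (u, v).

    `lightmap` is a grid of brightness values; u and v run from 0 to 1.
    """
    height = len(lightmap)
    width = len(lightmap[0])
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    light = lightmap[y][x]
    return tuple(channel * light for channel in albedo)


# Hypothetical baked values: a bright corner and dimmer areas elsewhere.
lightmap = [[0.2, 1.0], [0.5, 0.8]]
print(shade_baked((1.0, 0.5, 0.0), lightmap, 0.9, 0.1))
print(shade_baked((1.0, 0.5, 0.0), lightmap, 0.0, 0.9))
```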

Reflection Probe

In addition, a feature called “Reflection Probe” has been pre-set in the template scene. As the name suggests, this feature allows you to get a simulation of the reflected light. It can be used to show the specular reflection of metal, marble, etc. in a beautiful way.

The following is a scene without Reflection Probe set. Without setting the material and shader, the reflection will look unnatural.

The following is a scene with the Reflection Probe set. The surrounding objects are reflected naturally in the central specular object. Depending on the type of shader, this is also lighter than having the shader simulate reflections in real time, so you can expect a lower load when placing multiple objects.

How to use the template scene and assets

Let’s try using a template scene that already has the above features implemented.

This time, a “sample scene” with objects already placed in the template scene is also available, and you can start from that instead.

How to use a template scene

Template scenes can be used from STYLY Studio.

If you are new to STYLY Studio, please refer to the STYLY Starter Guide here.

To use a template scene, create a scene in STYLY Studio and open “Assets” indicated by the red frame in the upper left corner.

Click on “3D object” under Assets, then select “Featured”.

3D object

Featured

Open Featured to see the template scenes that are available.

Let’s start by explaining how to use the DimMuseum Set. Select “DimMuseum Set”.

DimMuseum Set

If you open the DimMuseum Set, you will find the following templates. Select “DimMuseum_room_sclupter”.

Select “DimMuseum_room_sclupter”

Select “DimMuseum_room_sclupter” and it will look like this.

In this case, we have already set up the lighting for the scene, so select the “Directional Light” icon and turn it off.

This will open the template.

The next step is to actually place the objects in the template scene.

This time, we will place a custom asset from STYLY Studio.

As before, click on “3D object”, then select “Primitive”.

3D object

Primitive

Select “Change Texture Sphere” and place it.

This time, I added the STYLY logo in the “Please upload an Image File” field.

To see how the specular reflection looks, I set the “Metalic” and “Smoothness” values to 1.

Once the settings are made, select the blue “ADD TO SCENE” button and place it on the pedestal.

Change Texture Sphere

This time, we will set it as shown in the red frame above

Also, select “Featured > DimMuseum Set > Dim Museum Shadow Circular” and place the round shadow shown in the following image on the pedestal, under the object.

Select “DimMuseum_shadow_circular” and place the shadow.

As you can see below, we have placed an object on the template pedestal that also reflects the simulation of the specular surface.

In this template scene, the image of the object reflected by the object placed in the scene will be the image of a virtual object.

You can also place text that will serve as a caption.

After selecting “3D object > Featured > DimMuseum Set”, select “CustomAsset” in the upper tab, and you can select “DimMuseum_ScreenText” as shown below.

Select “DimMuseum_ScreenText” from “CustomAsset”.

Enter “Title text” and “main text” here (English only) and select “ADD TO SCENE” to create a text object.

Insert text in “Title text” and “main text” and select “ADD TO SCENE”.

By creating and arranging objects in this way, you can reflect natural lighting on various types of objects, as shown in the following image. If you are a modeler or photogrammetrist, please try placing your own models in this way.

If you follow the same procedure as in DimMuseum and select “3D object > Featured > Crypt Set”, you can also use CryptLight and CryptDark templates. Here, if you select “Crypt_photoframe_wood” from Custom Asset, you can display an image with a frame that matches this template.

You can set up a framed image from “Crypt_photoframe_wood”.

You can set your favorite painting or image as the picture inside the frame, as in the following image, and display it beautifully.

How to use the sample scene

You can also copy a sample scene that already has sample objects placed in it to your own account.

After logging in to STYLY Studio, click on the URL below and the sample scene will be added to the scene list in the STUDIO screen.

If you want to check the placement image first, or if you want to use the shadows and other settings as they are, it is convenient to open this page.

I hope you will try to publish your scenes created with STYLY Studio.

In this article, I explained Light Probe, a new feature added to STYLY, and introduced the template scenes and assets that use it.

Please exhibit your own 3DCG or images and try out the texture of the lighting.

In the future, STYLY MAGAZINE will also introduce how to set up Light Probe and LightMap in Unity, so please check out those articles as well.

The post STYLY Starter Guide first appeared on STYLY.

Create a STYLY Account

In order to use STYLY, you first need to create a STYLY account. If you are new to the site, read the following article to get started with creating an account.

Creating a VR/AR Work

How to Use the STYLY Studio

The basic operation of STYLY Studio can be learned like a game with the help of tutorial scenes. Learn how to use STYLY Studio by reading the following article.

Producing More Advanced Works Using Unity

Regarding Unity and STYLY Integration

With Unity, you can create things that you would not be able to create in STYLY Studio alone (for example, by adding animation or interactivity) and then upload them to STYLY as prefabs or scenes. You can learn more about how to use your Unity creations on STYLY in the following article.

If you have never used Unity before but you would like to try to create a new piece of work, start by reading the next section, titled “Installing Unity”.

Installing Unity

When you install Unity, there are a lot of minor details including versions and settings. If you are new to Unity, you may not be familiar with them. It could also happen that you install it, but the version of Unity is not suitable for STYLY. So, if you are new to Unity, we recommend that you set it up as described in the following article.

Learning How to Use Unity

NEWVIEW SCHOOL ONLINE

Unity has so many features and specifications that a beginner cannot grasp them all on their own.

For this reason, STYLY provides a resource called STYLY Learning Material to help you understand them.

The STYLY Learning Material explains the basics of Unity, PlayMaker and the Interaction SDK, which will be explained later, so that even beginners can understand.

Click here to go to STYLY Learning Material.

Creating Interactive Works with PlayMaker and the Interaction SDK

The Interaction SDK provided by STYLY is available for free and allows you to easily place various gimmicks in your scene that can be used on STYLY.

Since STYLY cannot use C# scripting, use PlayMaker to create complex gimmicks. PlayMaker is a visual scripting tool for Unity, available for a fee in the Unity Asset Store, that lets you implement complex gimmicks visually.

Experiencing the VR/AR Work

The spaces created through STYLY can be experienced on a variety of devices. You can check out the spaces you’ve created and experience spaces created by others to refine your ideas or just enjoy XR for its own sake. Read the article below to find out how to experience this on different devices.

Frequently Asked Questions

For your convenience, the following article provides a bulleted list of issues that commonly arise when using STYLY and Unity.

This site also contains a variety of information about STYLY and Unity, which you can read to get past your own stumbling blocks and gain knowledge and skills you did not know existed. We encourage you to browse and read articles that interest you.

Go to STYLY Manual

Go to STYLY Magazine

Community

STYLY FORUM

You can use STYLY FORUM to solve problems. STYLY FORUM is a place where people can discuss service or technical issues with STYLY, or submit bug reports.

Go to STYLY FORUM

NEWVIEW

NEWVIEW is an experimental project/community that brings together people who embody contemporary culture in fashion, music, video, graphics, and other fields to pioneer and expand the design of creative expression and experiences in three-dimensional space.

NEWVIEW discovers, nurtures, and disseminates the next generation of artists and creative expression through activities such as collaborative work production, awards, and schools. Come join us in expanding NEWVIEW, a new world of hyper-experienced design.

Click here to go to NEWVIEW.

The post STYLY Studio Manual – Making a Wearable “AR Human Template” first appeared on STYLY.

For guidelines on delivering a more attractive and comfortable experience, please refer to the production guidelines section at the end of the article.

*The AR Human Template discussed here is not AR that extends specific body parts such as the face or feet, but rather AR that expands the space around a human (the subject). In other words, the experience is designed to extend the area within a few meters around the person.

This article explains two different production approaches.

One approach is to create based on the templates provided by STYLY, and the other is to build around your own assets.

By reading through to the end, you will be able to create an AR Human Template and try it out on Instagram.

Creating an AR Human Template with Modifier

The AR Human Template is created using the “Modifier” feature in STYLY Studio.

A Modifier is a function in STYLY Studio that allows you to add effects such as “animation” and “interaction” to assets.

Additionally, the effects themselves are referred to as “Modifiers.”

Previously, STYLY required the use of Unity or PlayMaker to add functions, but now, anyone can easily add movement and animation to objects directly from the browser.

For a comprehensive overview and detailed functionality of Modifiers, please check past articles.

Creating Based on Templates

Several pre-made AR Human Templates are available in STYLY Studio.

You can replace existing assets that make up the template.

By referencing them to some extent, you can create your own unique expression.

For example, you can keep the same composition shown in the template but replace only the assets, or keep the assets and change the composition instead. This can serve as a source of inspiration or a shortcut in the production process.

Since this is an AR content experience that involves using a smartphone to view the scene, beginners may find it difficult to grasp how assets placed in STYLY Studio appear in the real-world space, and what kind of effects can create an engaging visual expression.

In such cases, the template’s presentation can be a helpful reference.

Copying a Scene to STYLY Studio

- First, make sure you are logged into STYLY Gallery.

- If the top right corner of the STYLY Gallery screen shows “Not Logged In,” select “Login.”

Enter your email address and password, then select “Login.”

- If the top right corner of the STYLY Gallery screen shows “Logged In” (your icon is displayed), you are already logged in.

- Click the copy button below. *If you are not logged in, the scene will not be copied to your account.

- Once the scene has been added to the scene list in STYLY Studio, the process is complete.

Let’s open the scene right away.

From here on, we will explain based on the opened scene.

Template Structure and Key Usage Points

First, let’s go over the structure and key points of the template to get an overall understanding.

Template Structure

The template consists of two main types of elements.

These are non-interactive base assets and interactive assets.

Assets without an eye icon are essential parts required for any AR Human Template and cannot be interacted with.

Interactive assets can be modified by clicking on the Modifier icon to add movement.

The template already includes both of these types of assets.

Key Points for Using the Template

The template includes circles labeled 1m / 0.65m / 0.2m.

These circles are placed to help balance the relationship between the subject and the surrounding space when placing assets.

The 0.65m (1.3m total) area represents the Personal Space.

This is the ideal range for placing assets around a person.

The 1m (2m total) area represents the Social Space.

This is suitable for placing environmental assets.

Use the circles as guides when designing your scene.

The innermost 0.2m (0.4m total) circle represents the Intimate Space.

This area is not ideal for placing assets as they may overlap with the person.

The template we are using already includes placements that take these circles into account.
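The guide circles can also be read as simple distance thresholds. The sketch below is only an illustration and is not part of STYLY: it classifies an asset by its horizontal distance from the subject, using the three radii printed on the template. The function name `classify_zone`, the coordinate convention (x and z in metres, with the subject at the origin), and the label "Outside the guides" are assumptions made for this example.

```python
import math

# Radii of the template's guide circles, in metres (taken from the circle labels).
INTIMATE_RADIUS = 0.2
PERSONAL_RADIUS = 0.65
SOCIAL_RADIUS = 1.0


def classify_zone(x: float, z: float) -> str:
    """Return the guide zone for an asset at horizontal offset (x, z) from the subject."""
    distance = math.hypot(x, z)
    if distance <= INTIMATE_RADIUS:
        return "Intimate Space"   # likely to overlap with the person
    if distance <= PERSONAL_RADIUS:
        return "Personal Space"   # assets placed around the person
    if distance <= SOCIAL_RADIUS:
        return "Social Space"     # environmental assets
    return "Outside the guides"   # hypothetical label, not a STYLY term


if __name__ == "__main__":
    for position in [(0.1, 0.0), (0.3, 0.4), (0.0, 0.9), (1.2, 0.5)]:
        print(position, classify_zone(*position))
```

Checking a planned position this way before placing an asset in STYLY Studio gives a quick sense of whether it belongs near the person or in the surrounding environment.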

With this in mind, here are two key points to consider when using the template:

- How to place the assets

- How those assets will move

Keep these two perspectives in mind.

Now, let’s use the template to create an AR Human Template.

Creating with the Template

When you open the template, you will see several assets already placed.

Now, let’s add animation using Modifiers.

This time, we will adjust the parameters of the animations that have already been applied.

By following this process, you will get a better idea of how to create your own unique expression while using the template as a base.

- Screen when opening the copied scene

- Select “Ring” and click the Modifier icon to choose the operation options for the asset.

- In the search box, search for and select [Animation] Heartbeat.

- Confirm that the Modifier has been added and check the current parameter values.

- Adjust the parameters and apply the changes.

Here, set Beat duration to 2, Hold duration to 1, and Amplitude to 0.1, then select “Apply.”

- Select “Ring” and click the Modifier icon to add [Style change] wood maple flat.

As a result of these operations, we were able to modify the appearance and scale of the background ring.

The presentation now has a more playful feel compared to the original template.
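To build intuition for the three Heartbeat parameters, here is a rough sketch of how a pulse animation of this kind could be computed. It is not STYLY's implementation. The reading of Beat duration as the length of one pulse in seconds, Hold duration as the rest between pulses, and Amplitude as the extra scale at the peak is an assumption, as are the sine-shaped pulse and the function name `heartbeat_scale`.

```python
import math


def heartbeat_scale(t: float, beat_duration: float = 2.0,
                    hold_duration: float = 1.0, amplitude: float = 0.1) -> float:
    """Scale multiplier at time t (seconds) for a hypothetical heartbeat pulse."""
    cycle = beat_duration + hold_duration
    phase = t % cycle
    if phase >= beat_duration:
        # Hold: the asset rests at its original size until the next beat.
        return 1.0
    # Beat: half a sine wave, rising from 1.0 to 1.0 + amplitude and back.
    return 1.0 + amplitude * math.sin(math.pi * phase / beat_duration)


if __name__ == "__main__":
    for t in [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0]:
        print(f"t={t:.1f}s scale={heartbeat_scale(t):.3f}")
```

Under these assumptions, the values used in this guide (2, 1, 0.1) would make the ring swell by up to 10% over two seconds and then rest for one second before repeating.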

By utilizing or referencing existing templates, you can create your own unique expressions.

Experiencing it on STYLY Mobile

Select the globe icon at the top left of the screen to publish your scene (you can choose between public or private).

Once published, the scene can be experienced on various platforms. On desktop, you can access it from STYLY Gallery, while on smartphones, it can be experienced using the dedicated STYLY Mobile app.

Experiencing on Desktop

On desktop, you can scan the displayed QR code with your smartphone to launch the STYLY Mobile app. If you haven’t installed the STYLY Mobile app yet, you’ll need to install it first. (This QR code can always be accessed from STYLY Gallery.)

Experiencing on Smartphone

- After launching STYLY Mobile, select your scene from My Page.

- Select the button labeled “Download.”

- Select the button labeled “View.”

Follow the on-screen instructions to slowly move your smartphone over a flat surface.

After a moment, the “Tap the screen to start” message will appear, allowing you to begin the experience.

By adjusting the subject’s outfit and pose, as well as the surrounding environment, you can create even more compelling content that fits well with the AR Human Template.

Uploading to Instagram

After selecting “View,” you will find a settings button at the bottom right of the STYLY Mobile app experience screen.

From there, you can switch to recording mode.

When you record a video, the file is saved to your camera roll, and you can then upload it to Instagram.

Creating with Your Own Assets

In the AR Human Template, in addition to the pre-existing assets placed within the template, you can also use custom 3D models created with modeling software or assets purchased from websites.

Here, we will explain how to upload your own assets.

- Select the upload icon located at the top left of the screen.

- Select the “My uploads” section.

- Select the “3D model” section.

- Select the file for the asset stored on your local device from the “Select” option.

- Confirm that the asset is selected and choose “Upload.”

In this guide, we uploaded an asset stored as a local file, but you can also upload assets created in Unity.

Check out the following article for more details.

How to upload from Unity to STYLY

For guidelines on delivering a more immersive and comfortable experience, refer to the following article.

For questions about STYLY, bug reports, and feature requests, visit the STYLY FORUM

https://jp.forum.styly.cc/support/discussions

Edited by SASAnishiki

The post STYLY Studio Manual – Making a Wearable “AR Human Template” first appeared on STYLY.

The post Article describing the Naoya Hirata’s scene using the Modifier feature first appeared on STYLY.

This article introduces the highlights of the scene, how he uses Modifiers, and how he arranges them.

About Naoya Hirata

Mr. Hirata was born in Nagano, Japan in 1991 and graduated from the Department of Sculpture at Musashino Art University in 2014. While still at university, he began creating works that use free 3D data and image data, which can be collected without limit on the Internet, as materials.

He creates works based on the data he collects in a computer virtual space where he defines the numerical values of gravity and other factors. Mr. Hirata considers them to be “sculptures in virtual space.”

(Quoted from “Sculptures in the virtual space. Naoya Hirata’s ‘Incomplete Prison’ at Guardian Garden”, Bijutsu Techo, December 25, 2018.

https://bijutsutecho.com/magazine/news/promotion/18956 )

Twitter : https://twitter.com/_naoya___H__

Instagram : https://www.instagram.com/_naoya___h__/

About “Manic Day Theater”

This is a VR work.

Mr. Hirata creates ready-made 3DCG artworks in virtual space.

His main focus is usually on the sculptural objects themselves, but Manic Day Theater takes a game-like approach: you enjoy it by exploring a space laid out as a “maze”.

As you move through the maze, you will find 2D and 3D objects created by Mr. Hirata.

The objects are placed not only on the ground but also in the air, so remember to look up as you explore.

There are also high-impact places, such as the sudden appearance of a giant horse.

Once you get out of the maze, you will find Mr. Hirata’s works on display.

There are only a few places where you can appreciate Mr. Hirata’s works in VR, so this is a rare experience!

Let’s get out of the maze!

Points where he uses Modifier

You can copy the scene to STYLY Studio and check it against the explanations below.

How to copy a scene on STYLY Studio

- First, please log in to STYLY Gallery.

If you are not logged in, select LOGIN in the upper right corner of the STYLY Gallery screen.

- Click on the Copy button below. *You must be logged in to copy to your account.

- When the scene has been added to the scene list in STYLY Studio, copying is complete.

Explanation

The object named bust_of_gutenberg1 has an Animation Heartbeat applied to it.

This allows the user to add animation to a stationary object to create a “sudden movement” effect.

While viewing the work, I was surprised by the sudden increase in size of many of the objects that were basically stationary.

The bear object includes Style Change’s Gradient color to change its appearance.

Recolored in a poisonous-looking palette, the bear sculpture has a strong presence.

By changing the parameters of this color, you can create your own bear sculpture.

For the Small_fire object, Rim Light of Style Change is used.

Rim Light allows you to change the appearance of the “glowing outline”.

This allows you to create a pseudo-flame-like appearance.
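For readers curious how a “glowing outline” can arise, rim lighting in general is commonly computed from the angle between the surface normal and the direction toward the viewer: the more a surface turns away from the camera, the brighter the rim. The sketch below shows that general technique only. Whether STYLY's Rim Light Modifier works this way is not confirmed here, and the names `rim_intensity` and `power` are hypothetical.

```python
import math
from typing import Sequence


def normalize(v: Sequence[float]) -> tuple:
    """Return v scaled to unit length (v must not be the zero vector)."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def rim_intensity(normal: Sequence[float], view_dir: Sequence[float],
                  power: float = 2.0) -> float:
    """Rim factor in [0, 1]: 0 where the surface faces the viewer, 1 at the silhouette."""
    n = normalize(normal)
    v = normalize(view_dir)
    # Cosine of the angle between normal and view direction, clamped at 0.
    facing = max(0.0, sum(a * b for a, b in zip(n, v)))
    # A higher power gives a thinner, sharper outline.
    return (1.0 - facing) ** power


if __name__ == "__main__":
    print(rim_intensity((0, 0, 1), (0, 0, 1)))  # surface facing the camera
    print(rim_intensity((1, 0, 0), (0, 0, 1)))  # surface at the silhouette edge
```

Multiplying a bright color by this factor and adding it to the base color brightens only the edges of the object, which is the kind of effect that suits a small flame.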

In judge_prop, Animation’s Rotate is used to rotate the object.

judge_prop is part of Mr. Hirata’s sculpture Judge; moving that part adds “information” to the work.

The above is an introduction to Modifiers in Mr. Hirata’s work.

There are many other objects in which Modifier is used. Let’s take a look at them and experience how they are used effectively!

How to experience a VR scene

If you are accessing this page from a smartphone, please click on the “Experience the Scene” button (*If you are experiencing the scene on a smartphone for the first time, please also refer to the following instructions).

After clicking, the following screen will be displayed.

If you have already downloaded the STYLY Mobile app, please select “Continue on Browser”.

You can then select “Play on Mobile App” to experience the scene.

[VR] If you have an HMD device, click the “Experience the Scene” button from your PC (web browser), then click the VR icon on the scene page.

[AR] If you are accessing this page from a PC (web browser), you can experience the scene by clicking the “Experience the Scene” button, selecting the Mobile icon on the scene page, and scanning the QR code.

Download the STYLY Mobile app

Download the Steam version of STYLY app

https://store.steampowered.com/app/693990/STYLYVR_PLATFORM_FOR_ULTRA_EXPERIENCE/

Download the Oculus Quest version of STYLY app

https://www.oculus.com/experiences/quest/3982198145147898/

For those who want to know more about how to experience the scene

For more information on how to experience VR scenes, please refer to the following article.

The post Luna Woelle Modifier Scene Description first appeared on STYLY.

I will introduce the key points to appreciate the scene, how she used Modifiers, and how she arranged them.

About Luna Woelle

Luna Woelle

Born in Slovenia in 2000. Digital artist, graphic designer, DJ. Designer and visual curator of the experimental label “Mizuha”.

(Quoted from

https://newview.design/en/fest2021/ )

Instagram: https://www.instagram.com/wo11.e

SoundCloud: https://soundcloud.com/luna-woelle

Bandcamp: https://mizuhamizuha.bandcamp.com/album/biosphere

About “Imaginary Robotics AR”

This is an AR work.

I recommend that you experience it in a large space.

When the work is launched, a rotating robot object will appear.

Viewing in AR

Built around a white object, the robot combines mechanical movement with sculptural beauty.

Precisely crafted

Even the smallest parts are meticulously crafted.

Fun even at the micro level

The combination of objects and Modifier gives life to the sculpture and expresses its presence.

Strong presence

Modifier Application Points

You can copy the scene to STYLY Studio and check it against the explanations below.

How to copy a scene on STYLY Studio