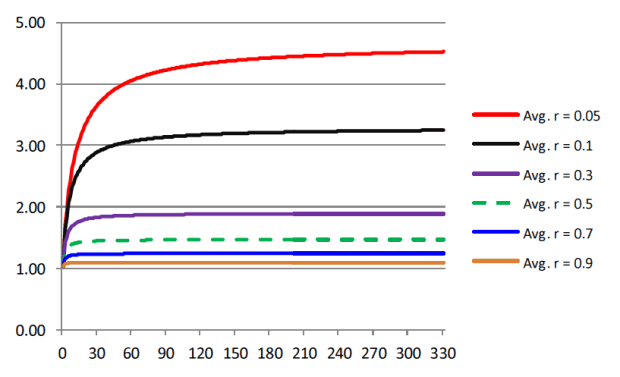

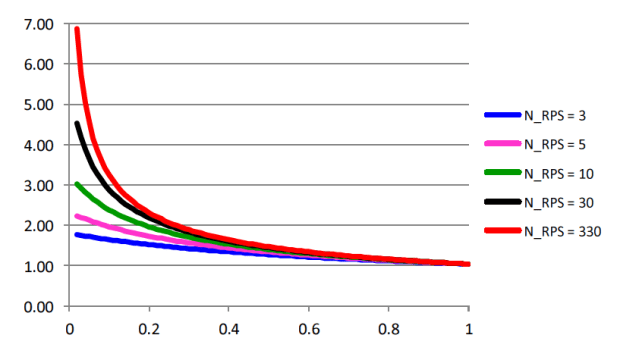

In this post, I would like to show some research I have done on using portfolio theory to allocate capital efficiently between a pair of trading systems.

Applying traditional theory outright can be problematic. As I mentioned in the previous post, the inputs it relies on, i.e. expected returns, are not stable in the long run, so the optimized portfolio's performance will deviate significantly from backtest results. This is similar to developing a system on data that no longer reflects the current market state, which will ultimately bankrupt you.

In my opinion, there are ideas within the traditional framework that are useful. The expected return of an individual asset may not be reliable, but the expected return of a well-designed system should be, in a probabilistic sense.

For the past year, I have viewed everything as a return stream. By this I mean that rather than differentiating between assets and trading systems applied to assets, one should treat them the same way. Although this may sound obvious, I will come back to this subject later and expand on it. This way of thinking has helped me go against traditional methods of system design and build more robust systems that are model-free and parameter-insensitive.

In portfolio theory, the lower the correlation between instruments, the better. In this experiment, I will be referring to two trading systems that are different in nature: Mean Reversion and Trend Following. Both of these trading systems will be applied to the same asset, SPY. System details:

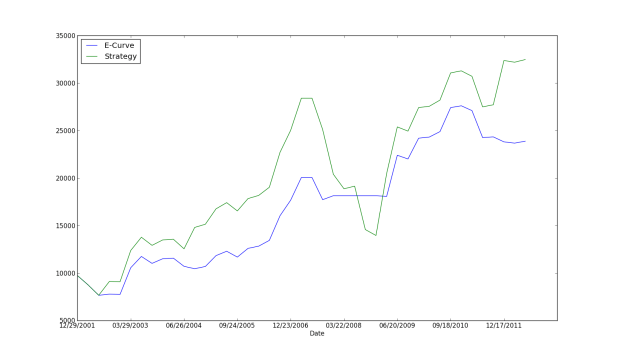

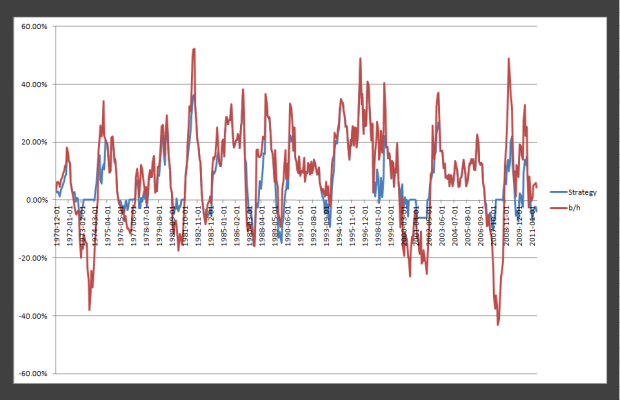

Their daily return correlation is 0.04. The following are their equity curves.
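For readers who want to reproduce a correlation figure like this on their own systems, here is a minimal Python sketch (I did the original work in R, so this is only an illustration). The function name and the synthetic return series are hypothetical placeholders, not the actual MR and TF returns.

```python
import numpy as np

def daily_return_correlation(returns_a, returns_b):
    """Pearson correlation between two aligned daily return series."""
    a = np.asarray(returns_a, dtype=float)
    b = np.asarray(returns_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("return series must be aligned and of equal length")
    # np.corrcoef returns the 2x2 correlation matrix; take the off-diagonal.
    return float(np.corrcoef(a, b)[0, 1])

# Synthetic placeholder data (ASSUMPTION: not the systems' real returns).
rng = np.random.default_rng(0)
mr_returns = rng.normal(0.0005, 0.008, size=1000)
tf_returns = rng.normal(0.0003, 0.010, size=1000)

rho = daily_return_correlation(mr_returns, tf_returns)
print(f"daily return correlation: {rho:.3f}")
```

The two series must cover the same dates, with flat days recorded as zero returns, otherwise the correlation is not comparing like with like.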

Both of them are profitable and aren’t optimized at all. The test period is 1995-2012. Daily data from Yahoo Finance are used, and no commissions or slippage were taken into account. Next is their risk-reward chart, as popularized by traditional theory.

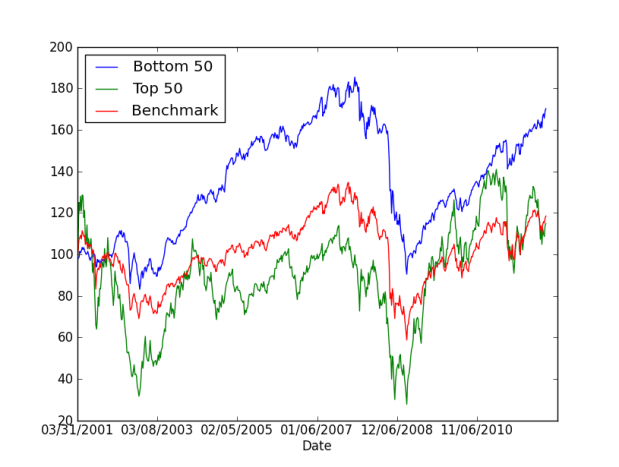

MR = mean reversion, TF = trend following, SPY = the ETF. From an asset allocation point of view, MR seems to be the most desirable of the three. Up next, I show the efficient frontier of the two systems from 2000-2004 and then forward test the minimum variance (MV) allocation from that period. More concretely, I will compare the portfolio-level equity curves from trading the two systems together.

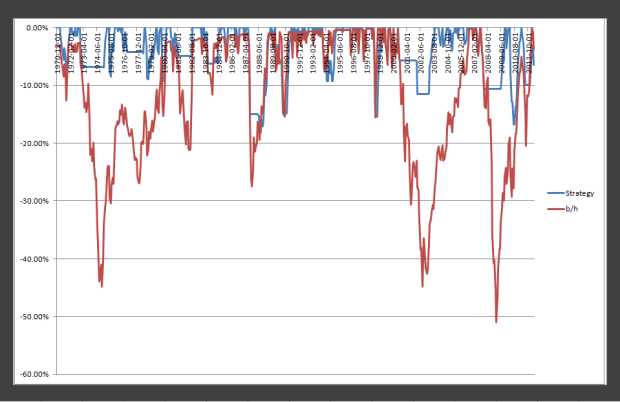
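A curve like the one above can be traced by sweeping the weight between the two return streams and recording annualized risk and return at each step. The sketch below is a rough Python illustration under my own assumptions: long-only weights, 252 trading days per year, and synthetic placeholder returns rather than the actual system results.

```python
import numpy as np

def two_system_frontier(returns_a, returns_b, n_points=101, periods_per_year=252):
    """Return rows of (weight_a, annualized_vol, annualized_return)."""
    a = np.asarray(returns_a, dtype=float)
    b = np.asarray(returns_b, dtype=float)
    mu = np.array([a.mean(), b.mean()])   # mean daily returns
    cov = np.cov(np.vstack([a, b]))       # 2x2 sample covariance (ddof=1)
    rows = []
    for w in np.linspace(0.0, 1.0, n_points):
        wv = np.array([w, 1.0 - w])
        ann_ret = periods_per_year * (wv @ mu)
        ann_vol = np.sqrt(periods_per_year * (wv @ cov @ wv))
        rows.append((w, ann_vol, ann_ret))
    return np.array(rows)

# Synthetic placeholder data (ASSUMPTION: not the systems' real returns).
rng = np.random.default_rng(1)
mr_returns = rng.normal(0.0005, 0.008, size=1250)
tf_returns = rng.normal(0.0003, 0.010, size=1250)

frontier = two_system_frontier(mr_returns, tf_returns)
mv_row = frontier[np.argmin(frontier[:, 1])]  # lowest-volatility point
print(f"MV weight on MR: {mv_row[0]:.2f}, vol: {mv_row[1]:.3f}")
```

Plotting column 1 (volatility) against column 2 (return) gives the familiar frontier shape.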

The MV allocation is the leftmost point on the curve: the allocation that minimizes portfolio variance. The following are the equity curves from 2005-2012 with different allocations.
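With only two return streams, the minimum variance weight has a textbook closed form, so no numerical optimizer is strictly needed. The Python sketch below is one way to write it. The function name is mine, the long-only clipping is an assumption, and the volatilities in the example are made-up numbers, not the ones from my test.

```python
import numpy as np

def min_variance_weight(vol_a, vol_b, rho):
    """Weight on stream A that minimizes two-stream portfolio variance.

    Minimizes w^2*vol_a^2 + (1-w)^2*vol_b^2 + 2*w*(1-w)*rho*vol_a*vol_b,
    then clips to [0, 1] (ASSUMPTION: long-only, fully invested).
    """
    cov_ab = rho * vol_a * vol_b
    denom = vol_a**2 + vol_b**2 - 2.0 * cov_ab  # variance of (A - B)
    if denom <= 0.0:
        raise ValueError("streams are perfectly correlated with equal volatility")
    w_a = (vol_b**2 - cov_ab) / denom
    return float(np.clip(w_a, 0.0, 1.0))

# Hypothetical daily volatilities, for illustration only.
w_tf = min_variance_weight(vol_a=0.012, vol_b=0.007, rho=0.04)
print(f"TF weight: {w_tf:.2%}, MR weight: {1.0 - w_tf:.2%}")
```

Because the variance is a convex quadratic in the weight, clipping the unconstrained solution to [0, 1] still gives the long-only minimum.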

Apologies for not plotting the legend! The red curve is buy and hold of SPY, the blue is an equal-weight allocation between the two systems, and the orange curve is the MV allocation (~19% TF and ~81% MR). From a pure return perspective, trading the MV allocation produced the highest return, but from a risk-reward standpoint, the equal-weight allocation is better. In my optimization process, I found that the allocation that maximizes the Sharpe ratio would be 100% to the MR system. Now the numbers…
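To compare allocations on both pure return and risk-reward, one rough approach is to blend the two daily return streams at fixed weights and compute total return and Sharpe ratio on the result. This Python sketch is not the Trading Blox simulation I ran. It assumes daily rebalancing back to the target weights, a zero risk-free rate, and 252 trading days per year, and the data are synthetic placeholders.

```python
import numpy as np

def blend(returns_a, returns_b, weight_a):
    """Daily returns of a portfolio rebalanced daily to fixed weights."""
    a = np.asarray(returns_a, dtype=float)
    b = np.asarray(returns_b, dtype=float)
    return weight_a * a + (1.0 - weight_a) * b

def total_return(daily_returns):
    """Compounded return over the whole period."""
    return float(np.prod(1.0 + np.asarray(daily_returns, dtype=float)) - 1.0)

def annualized_sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio (ASSUMPTION: zero risk-free rate)."""
    r = np.asarray(daily_returns, dtype=float)
    sd = r.std(ddof=1)
    if sd == 0.0:
        return float("nan")
    return float(np.sqrt(periods_per_year) * r.mean() / sd)

# Synthetic placeholder data (ASSUMPTION: not the systems' real returns).
rng = np.random.default_rng(2)
mr_returns = rng.normal(0.0005, 0.008, size=2000)
tf_returns = rng.normal(0.0003, 0.010, size=2000)

# Weight on MR for each allocation discussed above.
for label, w_mr in [("equal weight", 0.50), ("MV", 0.81), ("100% MR", 1.00)]:
    r = blend(mr_returns, tf_returns, w_mr)
    print(f"{label:>12}: total return {total_return(r):7.2%}, "
          f"Sharpe {annualized_sharpe(r):5.2f}")
```

A fuller comparison would also look at drawdown, since that is usually what decides whether an allocation is tradable in practice.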

If I were to choose, I would go with the equal-weight allocation, as in my opinion it has the characteristics that would let me sleep at night. I am not going to discuss the results in more depth as it's well past midnight; maybe another time I will come back and do something different.

Note: the portfolio-level testing was simulated using Trading Blox, while the optimization and plotting were done in R. If you have any questions or comments, please leave a comment below! Email me if you want the TB system files.