This is exactly the goal of the new Anatella update (version 4.06): turning generative AI into an industrial ETL building block like any other.

Here is what this concretely changes for your data flows.

1. Sovereignty first: Cloud or Local, the choice is yours

The first obstacle to AI adoption in companies is security. Sending your customer data or confidential documents to OpenAI’s US servers is often out of the question.

Anatella removes this barrier with a hybrid approach:

- Remote mode (Cloud): Connect to standard APIs (OpenAI, etc.) for quick tests or public data.

- Local mode (On-Premise): This is the major strength of this update. You can run open-source models (GPT, Llama, Mistral, Gemma, etc.) directly on your own infrastructure, thanks to the native integration of Llama.cpp.

The benefit for you? Your data never leaves your server (total sovereignty). Moreover, by using your own GPU/CPU, you eliminate the variable costs associated with API calls.

2. From “Chit-Chat” to Structured Data (Industrialization)

The problem with LLMs is that they are talkative. In an ETL process, you don’t need pleasantries; you need clean JSON.

Anatella now offers two levers to “tame” the AI:

- The System Prompt: Gives the model strict instructions (e.g. “You are a data extractor; answer only in JSON”).

- The JSON Schema: You define a strict grammar. The LLM is forced to answer in this format (e.g. an object containing “Name”, “Amount”, “Date”). If the output does not match the schema, it is rejected.

The benefit for you? You turn free text (emails, PDFs, comments) into structured tables that the following boxes of your graph can use immediately.
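The rejection rule described above can be illustrated with a minimal Python sketch. It assumes a hypothetical three-field schema (Name, Amount, Date, mirroring the example in the text) and checks the model's raw reply after the fact, using only the standard library. It does not reproduce Anatella's own mechanism, which constrains generation through a grammar rather than validating afterwards.

```python
import json
from datetime import date

# Hypothetical schema: field name -> accepted Python type(s) after json.loads.
SCHEMA = {"Name": str, "Amount": (int, float), "Date": str}

def parse_llm_output(raw: str):
    """Return the parsed record, or None if the reply does not match SCHEMA."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError:
        return None  # chatty text or broken JSON: reject
    if not isinstance(record, dict) or set(record) != set(SCHEMA):
        return None  # missing or unexpected fields: reject
    for key, expected in SCHEMA.items():
        value = record[key]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            return None
    try:
        date.fromisoformat(record["Date"])  # require an ISO 8601 date (YYYY-MM-DD)
    except ValueError:
        return None
    return record
```

Only records returned by `parse_llm_output` would be passed on to the next boxes; `None` marks a row to route to an error output or to a retry.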

3. Use cases: What can you automate today?

This new “box” opens the door to scenarios that used to be very complex to code:

- Intelligent Document Processing (IDP): Pass the content of a file (PDF, contract) as an “attachment” to your prompt. The LLM can then analyze the document to extract precise information such as IBANs or specific clauses.

- Contextual data cleaning: Use AI to normalize badly formatted addresses, infer gender from a first name, or categorize products.

- Synthetic data generation: Create realistic test datasets for your dev environments.
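For the IBAN example mentioned above, a deterministic check after extraction is a useful safeguard, since an LLM can return a plausible-looking but wrong number. The sketch below applies the standard ISO 13616 mod-97 checksum in Python. The function name is hypothetical, and the length bounds only cover the overall 15 to 34 character range of IBANs without checking each country's exact length.

```python
import re

def iban_is_valid(candidate: str) -> bool:
    """ISO 13616 mod-97 check of an IBAN string (spaces are allowed)."""
    iban = candidate.replace(" ", "").upper()
    # Country code, 2 check digits, then 11 to 30 alphanumeric characters.
    if not re.fullmatch(r"[A-Z]{2}[0-9]{2}[A-Z0-9]{11,30}", iban):
        return False
    # Move the country code and check digits to the end.
    rearranged = iban[4:] + iban[:4]
    # Replace each letter by two digits (A=10 ... Z=35); base 36 does exactly that.
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1
```

IBANs extracted by the model that fail this check can then be flagged for review instead of flowing silently into downstream tables.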

4. For Experts: JS Scripting and Control Loops

For simple cases, Simplified Mode is enough: one input column (Prompt), one output column (Answer).

But for data engineers, JS Script Mode offers full control. You can write JavaScript code to interact finely with the model:

- Validation loops: Ask the LLM for its confidence level. If confidence is low, script a loop that rephrases the question and queries the model again automatically.

- Managed memory: Manipulate the context (the previous exchanges) to build follow-up conversations over several iterations.
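The validation loop and managed memory described above can be sketched as follows. Anatella's JS Script Mode has its own API, which is not reproduced here; this Python sketch assumes a hypothetical `ask_llm` callable that receives the conversation so far and returns the answer together with a confidence score (for example, one the model was asked to report in its JSON output).

```python
from typing import Callable

# Hypothetical signature: takes the message history, returns (answer, confidence in [0, 1]).
AskFn = Callable[[list[dict]], tuple[str, float]]

def ask_with_retries(ask_llm: AskFn, question: str, rephrasings: list[str],
                     min_confidence: float = 0.8, max_history: int = 10):
    """Ask `question`, then each rephrasing in turn, until confidence is high enough."""
    history: list[dict] = []  # managed memory: the previous exchanges
    answer, confidence = "", 0.0
    for prompt in [question, *rephrasings]:
        history.append({"role": "user", "content": prompt})
        # Send only the most recent messages to keep the context window bounded.
        answer, confidence = ask_llm(history[-max_history:])
        history.append({"role": "assistant", "content": answer})
        if confidence >= min_confidence:
            break  # confident enough: stop re-querying
    return answer, confidence, history
```

Trimming to the last `max_history` messages is the simplest memory policy; summarizing older turns is a common alternative when they must not be lost entirely.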

5. Performance: TIMi efficiency applied to AI

Running AI models locally consumes video memory (VRAM). True to its optimization philosophy, Anatella manages server resources intelligently:

- Automatic management: The “Llama.exe” server starts when needed and stops afterwards to free up memory.

- CPU & GPU usage: No GPU? No problem. For lightweight models (< 5 billion parameters), the processor (CPU) is more than enough. The graphics card becomes a convenience accelerator, not a blocking prerequisite.

- Quantization support: Anatella uses compressed formats (Q4 or Q6). This makes it possible to run very large, high-performing models on a simple consumer graphics card (around 500 €) while drastically reducing the memory footprint.
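To see why quantization matters for VRAM, the memory taken by a model's weights can be estimated as parameters × bits per weight ÷ 8. The bits-per-weight values below are those of a few llama.cpp GGUF formats (Q4_0 and Q8_0 store blocks of 32 weights plus one 16-bit scale; Q6_K averages 6.5625 bits). This is a rough sketch: it covers the weights only and ignores the KV cache and runtime overhead, so real usage is higher. The 24-billion-parameter model is a hypothetical example.

```python
# Approximate storage cost per weight for a few llama.cpp GGUF formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q6_K": 6.5625, "Q4_0": 4.5}

def weight_memory_gib(n_params: float, fmt: str) -> float:
    """Memory needed for the weights alone, in GiB (KV cache and overhead excluded)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 2**30

if __name__ == "__main__":
    for fmt in ("F16", "Q6_K", "Q4_0"):
        # Hypothetical 24-billion-parameter model, for illustration only.
        print(f"24B model, {fmt}: {weight_memory_gib(24e9, fmt):.1f} GiB")
```

For that hypothetical model, the estimate drops from about 44.7 GiB at F16 to about 18.3 GiB at Q6_K and 12.6 GiB at Q4_0.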

Conclusion

Generative AI is no longer magic reserved for Python-speaking data scientists. With this update, it becomes a robust component of your ETL arsenal. Whether you want to summarize, extract or generate, you can now do it at scale and securely.

To go further, this new feature is documented in detail in section 5.9.12 of the AnatellaQuickGuide.pdf. We also recommend reading section 5.1.8, which is packed with useful information on using LLMs efficiently.

Want to try it? The feature includes an automated installation of the required components. Download the latest version of Anatella and launch your first local model in a few clicks.


- An amazing experience with the HELMo students, who gave it their all.

- Strengthening our ties with AWEX—a partner always by our side.

- A great insight into the Spanish market and its opportunities.

Huge thanks to Maxime Durka, Théo Lardinois, Marie Cravatte, and Louka Delrez from HELMo.

And as always, a big shoutout to AWEX, and to Florence Vanholsbeeck and Patrick Lefebvre, for their continuous support.

A special mention for our meeting with Ambassador Didier Nagant De Deuxchaisnes of the Embajada de Bélgica en España: truly an honor!

Click here to contact us and find out more about our solutions.

Why is this an issue? Thousands of companies still rely on Talend to orchestrate their critical data flows. Today, they find themselves stuck with a tool that is no longer maintained, no longer updated, and potentially vulnerable.

- If a bug occurs after a Java update? No support.

- If a critical data flow breaks? No fixes.

- If GDPR compliance becomes a nightmare? Not their problem.

For 18 years, we have been developing an advanced analytics platform with a clear vision: to provide a sovereign, high-performance, and reliable alternative.

Sources: The results presented are based on benchmarks conducted by IntoTheMinds, an independent consulting firm specializing in data and analytics:

1. “ETL benchmark: how long does it take to process 1 billion lines?”

2. “Data preparation: how to reduce the processing time by 85%”

We are proud to offer an ETL solution (Anatella) that stands out in several ways:

- Speed and scalability: Our users report speed-ups of 100 to 1,000 times, depending on the case.

- Advanced transformations: Pivot, cell propagation (as in Excel) and calculations across adjacent rows, with no more frustrating limitations.

- Extensive connectivity: SharePoint, OneDrive, PowerBI, MS-Dynamics, and many more. No need for makeshift workarounds.

- Smooth user experience: Clear and readable error messages.

- Designed for Data Science and Big Data: Dynamic loops, conditional transformations, and native integration with R, Python, and JavaScript.
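To make “cell propagation” and “adjacent row calculations” concrete, here is a minimal plain-Python sketch of what these two transformations typically compute on a single column. It is not Anatella's implementation, and the function names are hypothetical.

```python
from typing import Optional

def fill_down(cells: list[Optional[str]]) -> list[Optional[str]]:
    """Cell propagation: copy the last non-empty value into the empty cells below it."""
    out: list[Optional[str]] = []
    last: Optional[str] = None
    for cell in cells:
        if cell not in (None, ""):
            last = cell
        out.append(last)  # empty cells before the first value stay None
    return out

def diff_with_previous(values: list[float]) -> list[Optional[float]]:
    """Adjacent-row calculation: each row minus the row above; None for the first row."""
    if not values:
        return []
    return [None] + [cur - prev for prev, cur in zip(values, values[1:])]
```

Both operations depend on row order, which is why they are awkward to express in purely set-based tools such as plain SQL without window functions.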

And most importantly: no “Data Tax.” Unlike pricing models that charge based on volume, we keep a fixed price, ensuring total cost control.

We firmly believe that Anatella is the best on-premise ETL solution on the market today. If you are using Talend Open Studio and looking for an alternative, let’s talk.

Sovereignty and performance should not be optional.


The thing to remember is that you don’t have to keep reinventing the wheel. When you ‘save’ business knowledge in the form of an Anatella graph, you:

- avoid losing this precise knowledge if the employee decides to leave.

- take an already complex existing process and improve it even further.

To sum up, the important thing here is that TIMi enables innovation and business knowledge to be managed within the company. Without TIMi, it’s very difficult to develop this business knowledge, or even to safeguard it.

This extract is taken from an interview for the Business Inside TV programme presented by Jean-Marc Sylvestre for Forbes magazine.

Key points

- What is AutoML?

- A solution as universal as Excel for businesses.

- The benefits of no-code for business users.

- The importance of data sovereignty.

- Cheaper than traditional cloud solutions.

Read the full interview here.

—

Want to find out about other case studies? Contact us.

+32 (0) 478 48 19 25 | +33 (0) 651 98 18 65

Founded in 2007, Belgian company TIMi has established itself as a pioneer in automated machine learning and the massive exploitation of data (Big Data). Its CEO, Frank Vanden Berghen, talks about the company’s development, its innovations in data flow automation and its international ambitions.

What were your objectives when you set up TIMi?

TIMi was set up in 2007 to enable the exploitation of databases on the scale of a hundred billion lines and to produce predictive models. We were the first in the world to develop automated machine learning engines (Auto-ML). However, model creation remains a niche activity, and 95% of existing companies have no need for it.

So where are you focusing your efforts?

TIMi is now focusing its efforts on data flow automation solutions for business processes. This is because we have found that the best people to automate a business process are those who understand all the ins and outs of those processes and live those processes on a day-to-day basis. This idea is often referred to as the ‘Analytical Culture’. This automation work, which now concerns all organisations, often remains limited because of certain difficulties, starting with the code. Indeed, people ‘in the business’ often have no affinity with code. As our software works without code, we overcome this difficulty. TIMi also offers the ability to handle an almost infinite amount of data on a minimal infrastructure (a laptop, a server), access to all possible algorithms (open source or proprietary), and a large number of connectors. Thanks to innovative compression algorithms, for example, TIMi can handle a database volume of 5 TB using just 100 GB of disk space (the size of the data is typically divided by 50 compared with a traditional database).

What type of professional is your solution aimed at?

We are targeting Business Analysts, who are found particularly in corporate finance departments. We are currently working with 200 Deloitte tax specialists to optimise the accounts of the firm’s clients. Production engineers, faced with recurring breakdowns in their machines, are also looking for our solutions to analyse the causes of breakdowns and carry out predictive maintenance. Marketing specialists, who use data on customer behaviour to refine their sales strategies and increase their conversion rate, are also among our customers.

The aim of TIMi, a horizontal solution, is to become as universal as Excel. Excel is not tied to a specific sector, but is used in all company departments. As a result, we target anyone who needs to automate workflows.

What are your export ambitions?

Around 80% of our sales come from exports. We have branches in Latin America (Colombia and Peru) and are planning to extend our activities to Japan.

Sources: « L’automatisation des données, c’est l’affaire de TIMI » in the Business Inside TV programme for Forbes magazine.

FR: The content of this blog post is also available in PDF format here.

We often get asked which servers offer the best performance and value for analytics. To answer, we’ve compared Azure (representing the “US clouds”) with Hetzner in a detailed analysis.

According to the Azure documentation (https://learn.microsoft.com/en-us/azure/virtual-machines/sizes/overview), the virtual machines available in Azure are classified into 9 different “series”:

| VM Series | vCPU Range | Memory (GB) | Purpose |
|---|---|---|---|
| A-series | 1 – 8 | 2 – 64 | Entry-level, dev/test, small apps |
| B-series | 2 – 32 | 1 – 128 | Burstable, cost-efficient workloads |
| D-series | 2 – 96 | 8 – 384 | General-purpose, business apps |
| E-series | 2 – 128 | 16 – 672 | Memory-intensive apps |
| F-series | 2 – 64 | 4 – 256 | Compute-intensive workloads |
| L-series | 4 – 80 | 32 – 512 | Storage-intensive workloads |
| M-series | 8 – 416 | 192 – 11,400 | Extremely high-memory workloads |
| N-series | 6 – 96 | 56 – 672 | GPU-based tasks (AI, ML, graphics) |
| H-series | 2 – 120 | 8 – 1200 | High-performance computing (HPC) |

Here are the processors available in each of these series:

| VM Series | Processor | vCPU Range | Memory Range (GB) | Key Features |
|---|---|---|---|---|
| A-series | Intel Xeon E5 family (E5-2673 v3 and v4), Intel Xeon Platinum 8370C and 8272CL, Intel Xeon 8171M (Skylake) | 1 – 8 | 2 – 64 | Budget-friendly, entry-level, consistent CPU performance |
| B-series | AMD EPYC 7763v (Milan) and 7452, Intel Xeon Platinum 8473C (Sapphire Rapids) or 8370C (Ice Lake) | 2 – 32 | 1 – 128 | Burstable, credit-based CPU performance model |
| D-series | Intel Xeon Platinum 8573C (Emerald Rapids) and 8272CL (Cascade Lake), AMD EPYC 7763 (Milan), 4th Generation AMD EPYC 9004 (Genoa) | 2 – 96 | 8 – 384 | High CPU-to-memory ratio for general-purpose workloads |
| E-series | Intel Xeon Platinum 8370C (Ice Lake), AMD EPYC 7763 (Milan), 4th Generation AMD EPYC 9004 (Genoa) | 2 – 128 | 16 – 672 | Memory-optimized, great for relational databases and in-memory analytics |
| F-series | Intel Xeon Platinum 8272CL (Cascade Lake) | 2 – 64 | 4 – 256 | Compute-intensive workloads with a high CPU-to-memory ratio |
| L-series | Intel Xeon Platinum 8370C (Ice Lake), AMD EPYC 7763v (Milan) | 4 – 80 | 32 – 512 | Optimized for storage-intensive workloads with high IOPS |
| H-series | Intel Xeon Platinum 8168, AMD EPYC 7551 (Naples), AMD EPYC 7V12 (Rome), AMD EPYC 7V73X (Milan-X), AMD EPYC 9V33X (Genoa-X) | 2 – 120 | 8 – 1200 | High-performance computing (HPC), with enhanced interconnects for fast data transfer |

If we search the performances for each of these processors on cpubenchmark.net, we get (the higher the “Single thread rating”, the better the CPU):

| Processor | Single Thread Rating | Clock Speed [GHz] | URL |
|---|---|---|---|
| Intel Xeon E5-2673 v3 | 1,738 | 2.4 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+E5-2673+v3+%40+2.40GHz&id=2606 |
| Intel Xeon E5-2673 v4 | 2,107 | 2.3 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+E5-2673+v4+%40+2.30GHz&id=2888 |
| Intel Xeon Platinum 8575C | 2,593 | 4.0 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8575C&id=6173 |
| Intel Xeon Platinum 8370C (Ice Lake) | 2,474 | 3.5 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8375C+%40+2.90GHz&id=4486 |
| Intel Xeon Platinum 8272CL (Cascade Lake) | 2,386 | 3.0 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8275CL+%40+3.00GHz&id=3624 |
| Intel Xeon 8171M (Skylake) | 2,222 | 2.6 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8171M+%40+2.60GHz&id=3220 |
| AMD EPYC 7763v (Milan) | 2,517 | 2.45 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+7763&id=4207 |
| AMD EPYC 7452 | 1,995 | 2.35 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+7452&id=3600 |
| Intel Xeon Platinum 8280L | 2,029 | 2.7 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8280+%40+2.70GHz&id=3662 |
| AMD EPYC 7551 | 1,766 | 2.0 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+7551&id=3089 |
| AMD EPYC 7V12 (7443) | 2,907 | 2.9 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+7443&id=4708 |
| Intel Xeon Platinum 8168 | 2,092 | 2.7 | https://www.cpubenchmark.net/cpu.php?cpu=Intel+Xeon+Platinum+8168+%40+2.70GHz&id=3111 |
| 4th Gen AMD EPYC 7Hx (HBv4) | 2,022 | 2.6 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+7H12&id=3618&cpuCount=2 |
| AMD EPYC 9V33X (Genoa-X) (9334) | 2,367 | 2.7 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+EPYC+9334&id=5519 |

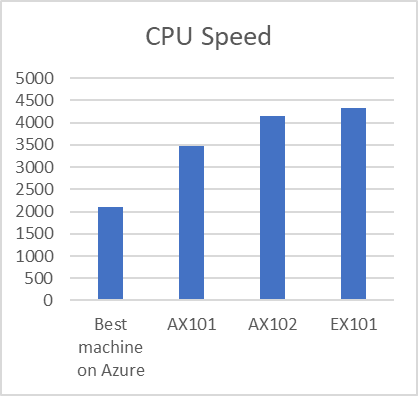

Within Azure, the processor with the best single-thread performance is therefore the AMD EPYC 7V12 (7443), with a score of 2,907. This processor is only available in the HBv2 series.
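Picking the fastest Azure processor amounts to taking the maximum of the Single Thread Rating column. The short Python sketch below re-types the ratings from the cpubenchmark.net table (with processor labels shortened) and ranks them; the dictionary name is illustrative.

```python
# Single Thread Ratings from the cpubenchmark.net table above (labels shortened).
AZURE_CPU_RATINGS = {
    "Intel Xeon E5-2673 v3": 1738,
    "Intel Xeon E5-2673 v4": 2107,
    "Intel Xeon Platinum 8575C": 2593,
    "Intel Xeon Platinum 8370C": 2474,
    "Intel Xeon Platinum 8272CL": 2386,
    "Intel Xeon 8171M": 2222,
    "AMD EPYC 7763v": 2517,
    "AMD EPYC 7452": 1995,
    "Intel Xeon Platinum 8280L": 2029,
    "AMD EPYC 7551": 1766,
    "AMD EPYC 7V12 (7443)": 2907,
    "Intel Xeon Platinum 8168": 2092,
    "AMD EPYC 7Hx (HBv4)": 2022,
    "AMD EPYC 9V33X (9334)": 2367,
}

# Sort from fastest to slowest single-thread score.
ranking = sorted(AZURE_CPU_RATINGS.items(), key=lambda kv: kv[1], reverse=True)
best_cpu, best_score = ranking[0]

if __name__ == "__main__":
    for name, score in ranking:
        print(f"{score:>5}  {name}")
```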

We’ll now run a pricing simulation for a virtual machine based on the H series.

We start here: https://azure.microsoft.com/en-us/pricing/vm-selector/

Here are the configuration steps for the Wizard on Azure:

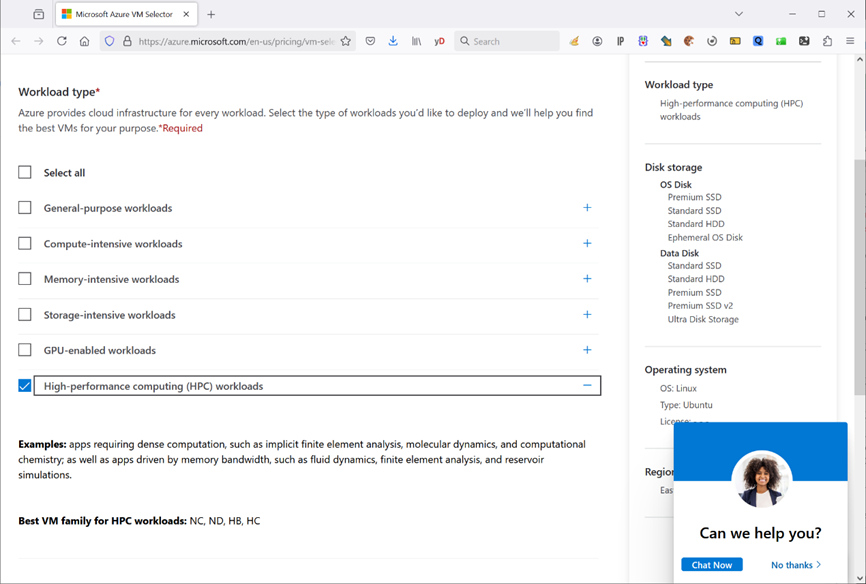

1. Select the “H series” for high-performance computing workloads:

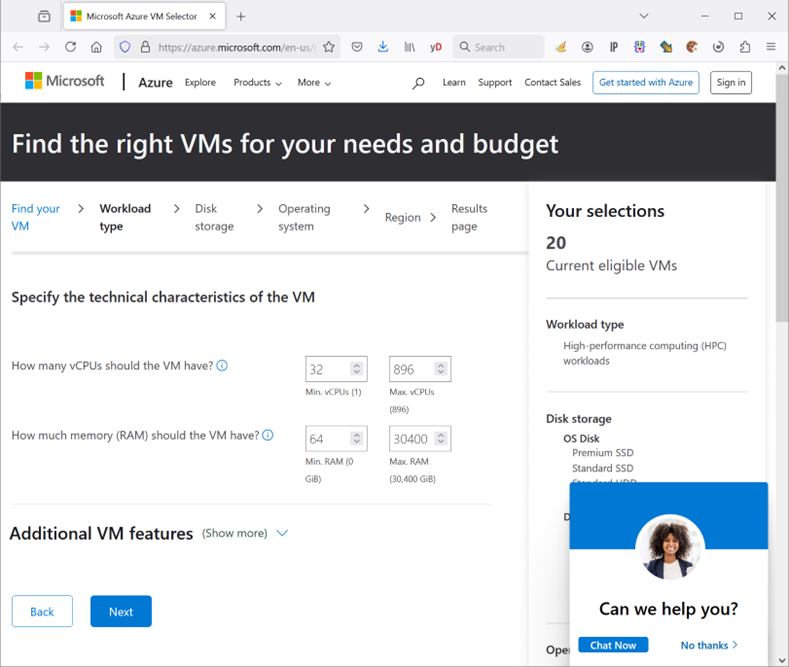

2. We want a machine with at least 32 cores and 64 GB of RAM:

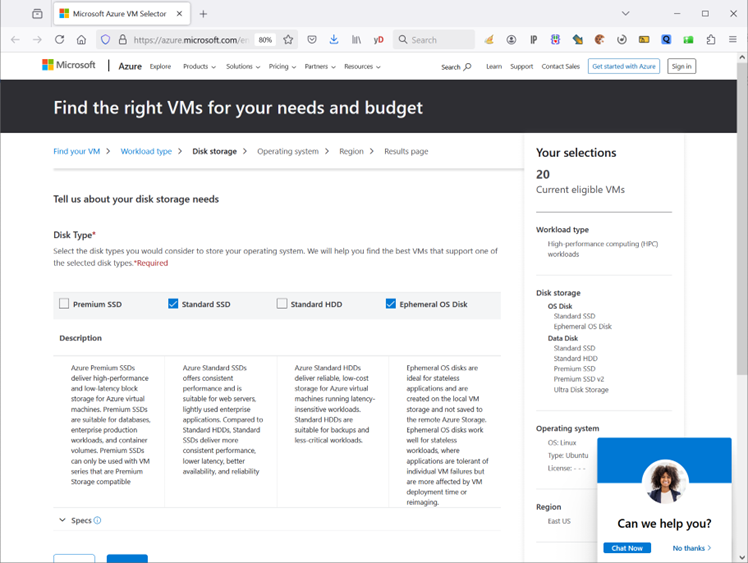

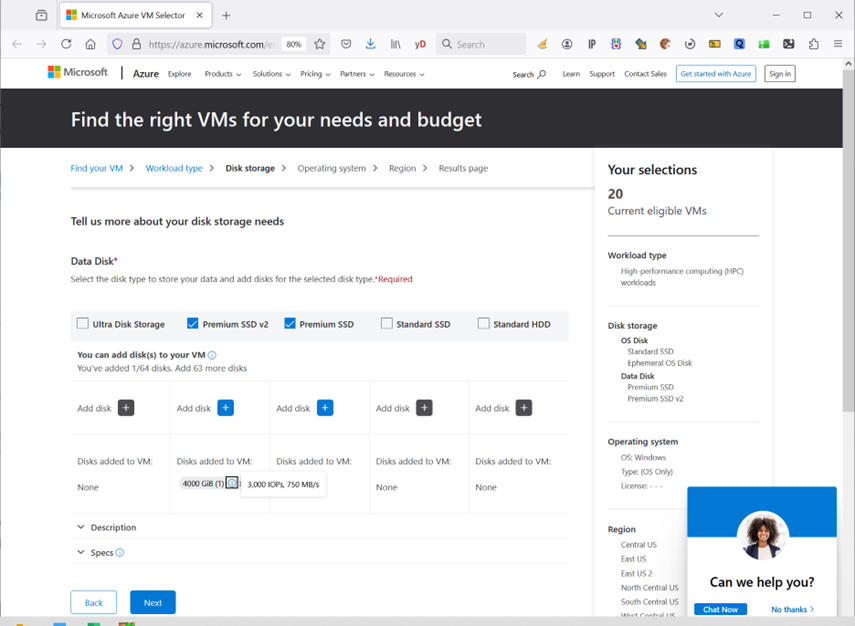

3. We want a machine with a fast SSD for the OS:

4. We want to store around 4 TB of data (the same capacity as the Hetzner machines). At this size, we can only get an SSD with a throughput of 750 MB/sec, which is quite slow. There is no way to get better speed without blowing the budget.

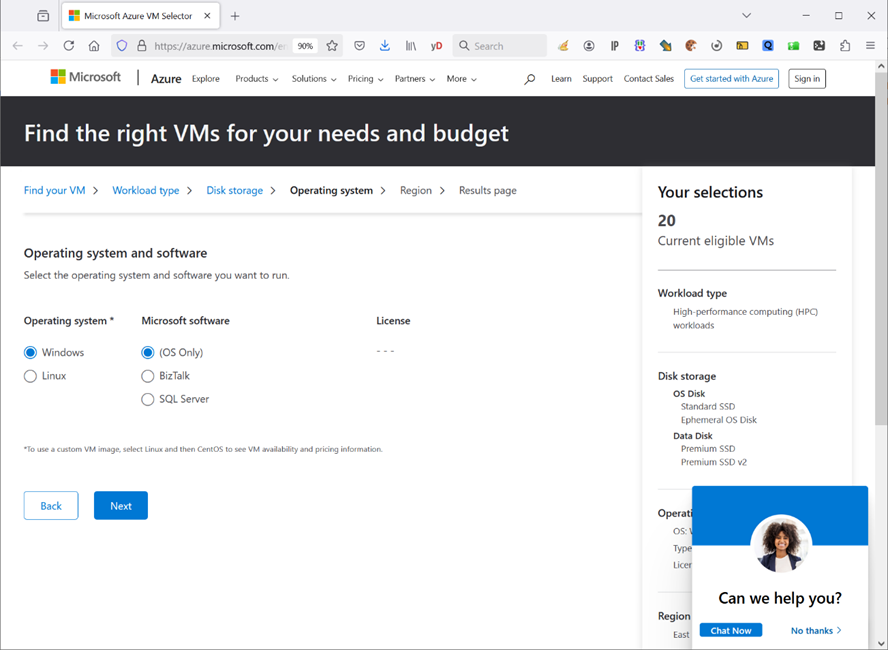

5. The OS on the machine should be Windows:

6. We want our machine in Europe:

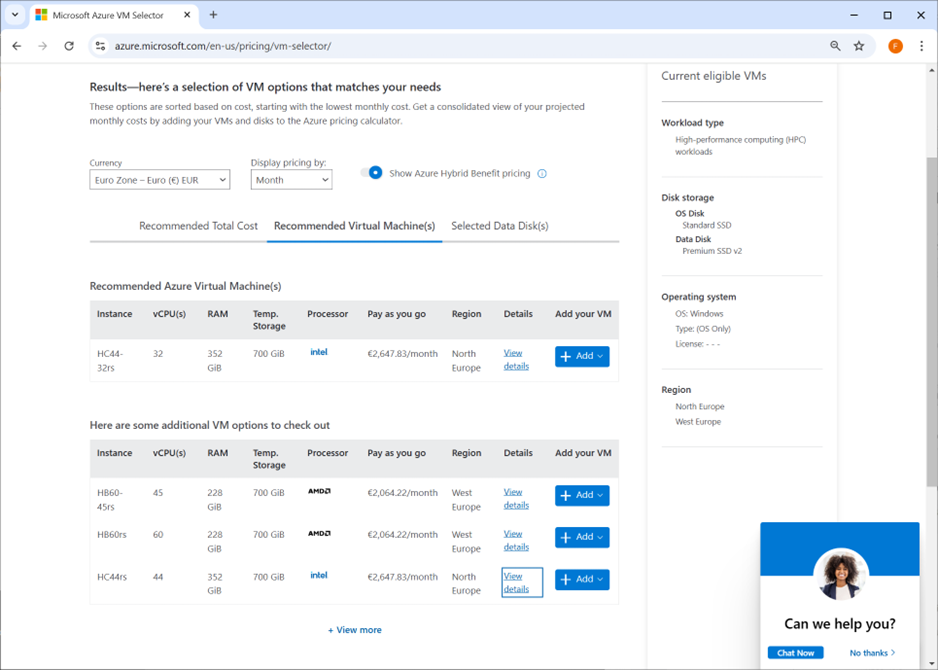

The Azure wizard finally proposes a machine from the HB60rs series.

The monthly price for the machine (excluding SSD storage) is 2647 € (see screenshot).

We ran many other simulations to try to obtain a different instance type with better CPUs. When you click ‘+ View more’, you see more machines with really poor CPUs (AMD EPYC 7002 and 7003). The only instance slightly better than the others is the HC44rs series, which has an Intel Xeon Platinum 8168 processor (you can see the exact processors by clicking “View details”) with a performance score of 2092.

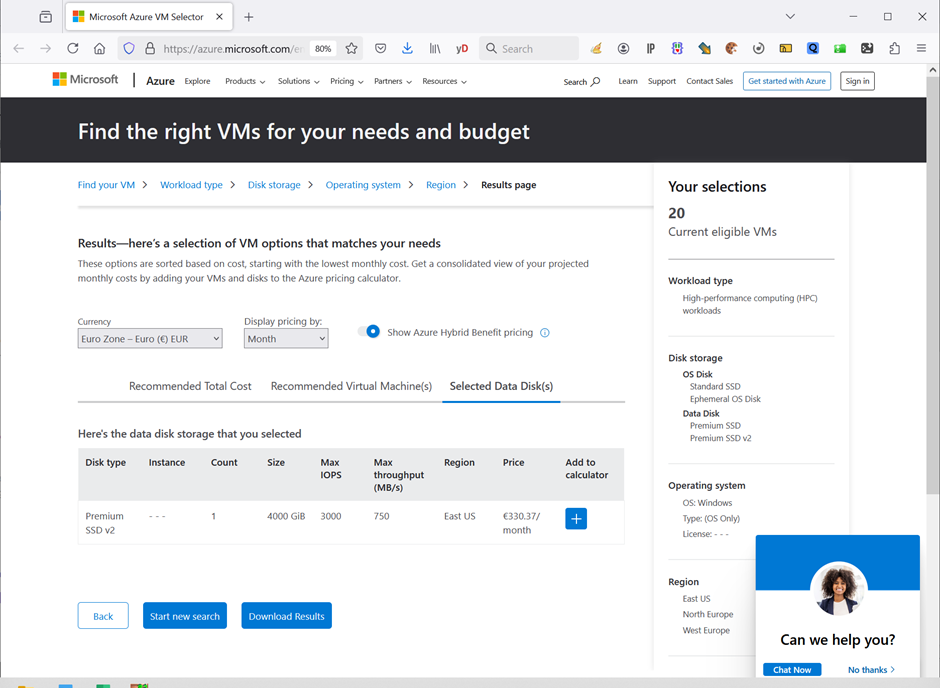

In addition to the machine cost, the monthly storage cost is around 330 €:

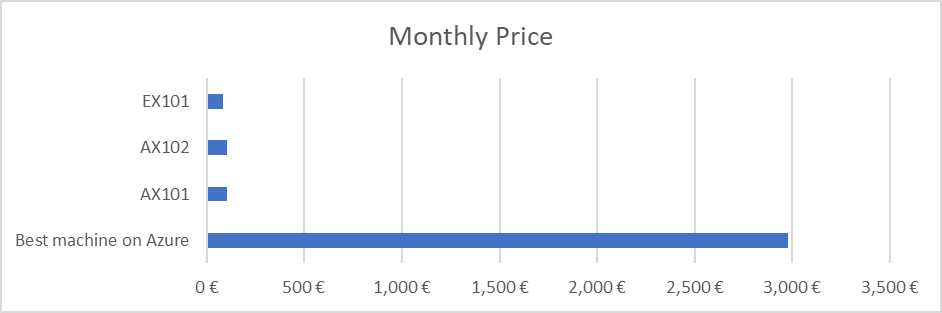

Thus, the total monthly cost for the best Azure machine is 2647 + 330 = 2977 €/month

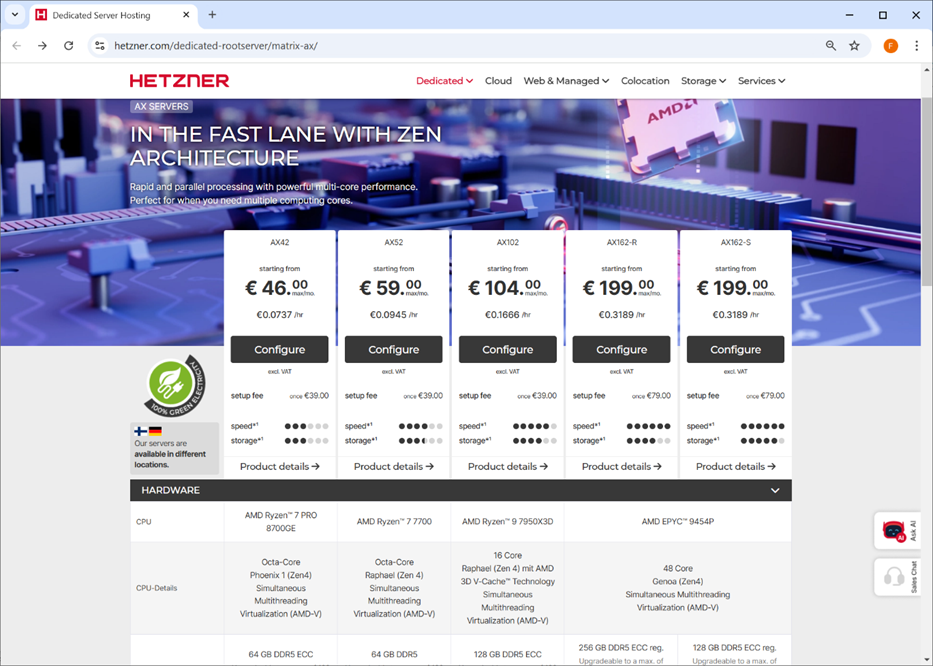

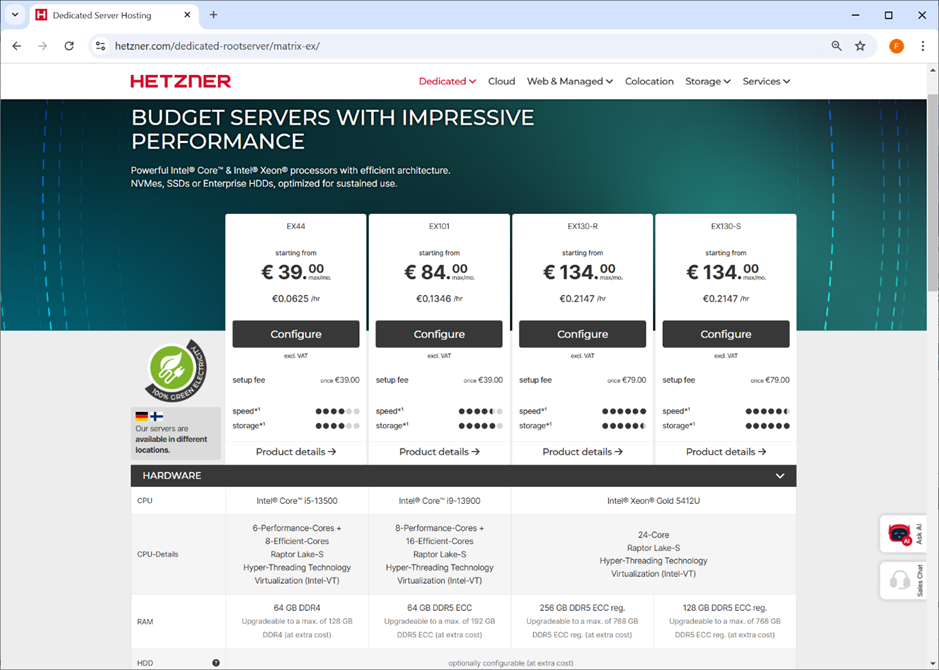

Let’s now compare the best and most efficient Azure machine with 2 machines readily available on Hetzner: the AX102 and the EX101. Since Fiberklaar’s machine is the AX101 (an older version of the AX102), we’ll also add it to the comparison.

Here is a table with the 3 processors from Hetzner:

| Server and processor | Single Thread Rating | Clock Speed [GHz] | URL |
|---|---|---|---|
| AX101 AMD Ryzen 9 5950X | 3469 | 3.4 | https://www.cpubenchmark.net/cpu.php?cpu=AMD+Ryzen+9+5950X&id=3862 |
| AX102 AMD Ryzen 9 7950X3D | 4150 | 4.2 | https://www.cpubenchmark.net/cpu.php?id=5234&cpu=AMD+Ryzen+9+7950X3D |
| EX101 Intel Core i9-13900 | 4330 | 5 | https://www.cpubenchmark.net/cpu.php?id=5176&cpu=Intel+Core+i9-13900 |

Here are the specs for the AX102: https://www.hetzner.com/dedicated-rootserver/matrix-ax/

Here are the specs for the EX101: https://www.hetzner.com/dedicated-rootserver/matrix-ex/

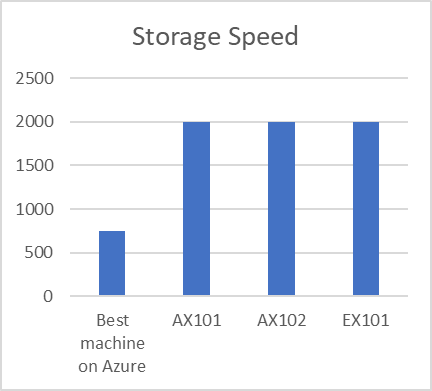

Here is the final comparison table between Hetzner and Azure:

| | Best machine on Azure | AX101 | AX102 | EX101 |
|---|---|---|---|---|
| CPU name | AMD EPYC 7551 | AMD Ryzen 9 5950X | AMD Ryzen 9 7950X3D | Intel Core i9-13900 |
| CPU speed (Single Thread Rating) | 1766 | 3469 | 4150 | 4330 |
| RAM | 200 GB | 128 GB | 128 GB | 64 GB |
| Storage capacity | 4 TB | 4 TB | 4 TB | 4 TB |
| Storage speed | 750 MB/sec | 2000 MB/sec | 2000 MB/sec | 2000 MB/sec |
| Monthly price | 2977 € | 102 € | 104 € | 84 € |

We recommend using the EX101 server on Hetzner because it is:

- about 35 times cheaper than the best available Azure server (84 € vs 2977 € per month)

- 2.45 times faster in terms of CPU than the best available Azure server

- 2.67 times faster in terms of storage access speed (reading and writing data on SSD) than the best available Azure server

- equipped with enough RAM (64 GB) to do anything you need with TIMi.
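The ratios behind this recommendation can be recomputed from the comparison table. The Python sketch below uses the monthly prices and storage speeds from that table and, for the Azure CPU, the AMD EPYC 7551's Single Thread Rating of 1,766 from the cpubenchmark.net table (the CPU named in the Azure column). The dictionary and function names are illustrative.

```python
# Figures from the tables above: monthly price (EUR), Single Thread Rating, SSD throughput (MB/s).
AZURE_HB60RS = {"price": 2647 + 330, "cpu": 1766, "storage": 750}  # machine + storage
HETZNER_EX101 = {"price": 84, "cpu": 4330, "storage": 2000}

def advantage(hetzner: dict, azure: dict) -> dict:
    """How many times cheaper / faster the Hetzner server is than the Azure one."""
    return {
        "cheaper": azure["price"] / hetzner["price"],
        "cpu_faster": hetzner["cpu"] / azure["cpu"],
        "storage_faster": hetzner["storage"] / azure["storage"],
    }

if __name__ == "__main__":
    for key, ratio in advantage(HETZNER_EX101, AZURE_HB60RS).items():
        print(f"{key}: {ratio:.2f}x")
```

With these inputs the script prints ratios of about 35.44 (price), 2.45 (CPU) and 2.67 (storage).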


Our assessment of these 4 intense days

1) New partners and potential customers from the USA, Japan, Peru, Germany, France and Belgium, with a general interest in:

- Data governance.

- Reducing CO₂ emissions.

- Processing massive volumes of data.

↳ We were in the right place at the right time.

2) Great meetings, especially during Belgian Beer Time and the Belwest evening. Special mention to WIMO, and it’s always a great pleasure to be placed next to our partners from PHOENIX AI®.

3) Top media coverage with several interviews and articles in the press:

- Interview with Thomas by Thierry Huart-Eeckhoudt of Startup Vie.

- Interview with Daniel by Marc-Lionel Gatto of myGlobalVillage.com.

- L’Echo: “CES 2025: Nvidia et la Belgique en tête d’affiche à Las Vegas”

- Trends Canal Z: “CES de Las Vegas: les entreprises wallonnes entre satisfaction et prudence”

And more to come. We’ll keep you posted.

A huge thank you to Wallonia Export & Investment Agency (AWEX), Agence du Numérique (AdN), Digital Wallonia and BELWEST – Chamber of Commerce for organizing and enabling us to be present on this international stage.

Thanks also to everyone who stopped by our stand.


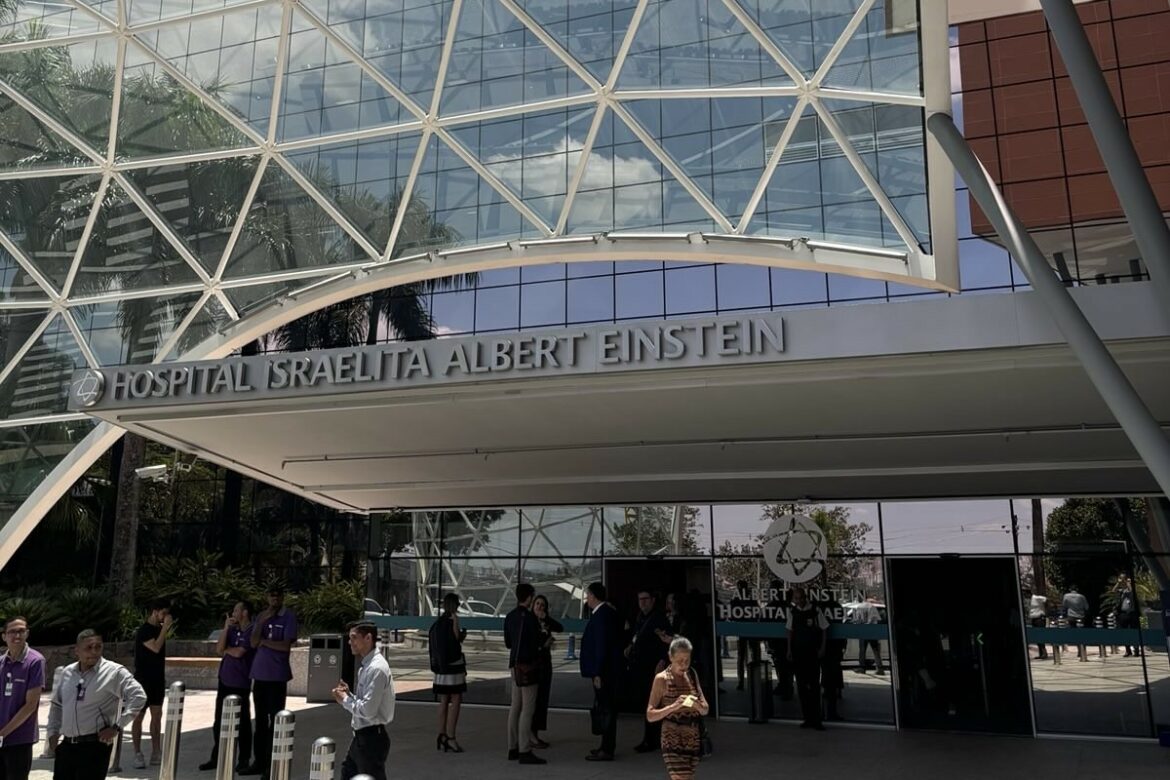

Thomas Van Heugen, our Head of Sales, took part in the Belgian economic mission to Brazil, led by HRH Princess Astrid of Belgium and organized by AWEX.

An opportunity, but also a challenge for Thomas to represent us among Wallonia’s most innovative companies.

Some highlights

- Visit to Helibras (Airbus helicopter division).

- Visit to the Hospital Albert Einstein, faced with colossal volumes of data – a challenge our tools are already solving for our customers.

- Meeting with Leonardo Aleixo de Oliveira, Latin America Director of Coface, a credit insurance specialist.

- Meeting with Embraer, a leader in civil and military aviation, where synergies similar to those developed with Safran are possible.

- Meeting with Fiocruz (research center), where our GIS technologies could help map medical data to better control epidemics.

- Meeting with Rudinei Carapinheiro, director of IPV7, interested in our predictive modeling tool, Modeler, to complete their offer.

In conclusion, this mission reinforced our conviction that we can bring real added value to these key sectors in which Brazil is investing heavily.

We are proud to have been selected among the best Walloon companies to represent our region’s expertise.

Many thanks to the Wallonia Export & Investment Agency (Agência Valã para a Exportação e para os Investimentos Estrangeiros, AWEX) and to Laurent Bernard, Olivia Timmers, Rodrigo dos SANTOS A. GARCIA and Karelle Lambert for this opportunity.

Special mention to the Belgian companies we met during the mission: UNIVERCELLS, NCP Wallonie, Shur-Lok International, Dumoulin Aero, OLYS Pharma, Skywin and Dirty Monitor.

And well done to Thomas for rising to the challenge.

Brazil is just the beginning.


Effective decision-making is the cornerstone of any successful business in today’s fast-paced world. Leveraging analytics is no longer optional but necessary for companies aiming to stay competitive. Advanced analytics transforms raw data into meaningful insights, guiding strategic decisions and driving operational efficiency.

The Importance of Data-Driven Decision-Making

Data-driven decision-making involves gathering, analyzing, and applying insights to guide business decisions. This approach is essential because it enables businesses to:

- Identify Trends and Opportunities: By analyzing historical data, companies can predict future trends and identify new market opportunities.

- Improve Operational Efficiency: Analytics helps streamline operations by pinpointing inefficiencies and suggesting improvements.

- Enhance Customer Experience: Understanding customer behavior and preferences through data analysis enables personalized marketing and better customer service.

- Mitigate Risks: Predictive analytics can foresee potential risks and enable preemptive measures to avoid them.

The Role of Advanced Analytics

Advanced analytics goes beyond traditional business intelligence (BI) by utilizing sophisticated techniques such as machine learning (ML), artificial intelligence (AI), and data mining. These methods allow for more accurate predictions and deeper insights. TIMi, a company at the forefront of advanced analytics, offers tools to optimize decision-making processes.

TIMi: Pioneering Advanced Analytics

Founded by Frank Vanden Berghen, TIMi (The Intelligent Mining Machine) has established itself as a leader in the analytics software industry. Initially named “Business-Insight SPRL,” the company rebranded to TIMi on December 28, 2017. This change reflects its core product, a comprehensive software suite that includes TIMi Modeler, Stardust, Anatella, and Kibella.

TIMi’s Software Suite

- TIMi Modeler: One of the first auto-ML (automated machine learning) tools commercially available, TIMi Modeler automates the machine learning process, making it accessible even to non-experts. This tool has shown its capabilities in leading competitions, such as the 2009 KDD Cup, where it ranked 18th out of over 1200 participants.

- Stardust: Launched in June 2010, Stardust specializes in advanced clustering (such as K-Means++) and dimensionality-reduction techniques (such as PCA), handling large datasets efficiently. It’s particularly useful in sectors with extensive customer bases, such as telecommunications and retail.

- Anatella: Introduced in January 2011, Anatella is the core component of the TIMi suite. It is an analytical ETL (Extract, Transform, Load) tool that facilitates the preparation and transformation of large datasets for advanced analytics, surpassing traditional ETL tools in speed and functionality.

- Kibella: TIMi’s latest addition, Kibella, enhances data visualization and dashboarding, making it easier for businesses to interpret complex data and share insights across teams.

Implementing Analytics for Optimal Decision-Making

To effectively use analytics for decision-making, companies need to follow a structured approach:

- Define Objectives

Clearly outline the business objectives and questions that need answering. This could range from improving customer retention rates to optimizing supply chain operations.

- Collect Relevant Data

Gather data from various sources, ensuring it is accurate and up-to-date. Integrating data from multiple systems provides a comprehensive view of the business landscape.

- Choose the Right Tools

Selecting the appropriate analytics tools is crucial. TIMi’s suite offers versatile options that cater to different analytical needs, from data transformation with Anatella to predictive modeling with TIMi Modeler.

- Use an Iterative Approach for Data Analysis

Build complex workflows gradually to simplify maintenance and improvement. Use tools like TIMi to enhance clarity and ensure the sustainability of data processes.

- Self-Service

Enable those who understand the problem to solve it, reducing dependence on specialized data teams. Foster easy collaboration between business users and technical experts (e.g., R, Python, JS coders). Ensure tools and processes support this collaborative and self-reliant approach.

- Use a Federative Approach

Provide a single platform for business analysts, data scientists, engineers, CDPOs, DBAs, and executives to collaborate seamlessly. Break down silos and promote a unified approach, leveraging diverse expertise for cohesive data strategies.

- Straightforward Automation

Simplify the automation of data processes to enhance scalability and repeatability. Ensure data science efforts have a tangible impact on business outcomes. Make sure that your solutions are effectively going into production and are not confined to the data lab. Free up human resources for higher-value tasks and improve the speed and accuracy of insights.

- Elimination of Variable Costs

Minimize variable costs associated with analytical questions to incentivize data usage. This encourages the widespread use of data analytics and experimentation. Promote a culture of continuous innovation without the fear of escalating costs, ensuring data-driven decision-making is economically viable.

By implementing these key elements, companies can foster a robust data culture that empowers all employees. This holistic approach ensures that data-driven insights are timely, relevant, and actionable, ultimately leading to improved business outcomes.

TIMi’s Impact on Decision-Making

TIMi’s software has significantly enhanced decision-making across various industries. In collaboration with telecom operators in Africa, TIMi has shown its ability to efficiently process large volumes of data. This allows operators to create comprehensive, daily updated customer profiles with extensive data points and predictive models, which are valuable for gaining customer insights, improving retention, and identifying cross-selling opportunities.

The compatibility and efficiency of TIMi’s tools, built with high-speed C and Assembler code, allow businesses to achieve these results with minimal infrastructure. This technological advancement positions TIMi as a vital partner for companies looking to enhance their decision-making processes through analytics.

Source: “How TIMi Tools Enhance Data-Driven Business Decisions” by The Chicago Journal

]]>

Big shout-out to our amazing team after this intense week at BIG DATA & AI PARIS

Huge thanks to everyone who stopped by to see us and to the event organizers. It was a pleasure to connect and share our solutions with you all.

Everyone brings their unique skills and a healthy dose of motivation. We’re super proud of what we’re achieving together.

We can’t wait to see what we’ll accomplish together next!
