In previous installments of this series we built out a fully functional plugin dedicated to tracking Bgp Peering connections. In this post we'll add final components: object permissions and API views.

Developing NetBox Plugin tutorial series

- Developing NetBox Plugin - Part 1 - Setup and initial build

- Developing NetBox Plugin - Part 2 - Adding web UI pages

- Developing NetBox Plugin - Part 3 - Adding search panel

- Developing NetBox Plugin - Part 4 - Small improvements

- Developing NetBox Plugin - Part 5 - Permissions and API

Contents

Adding permissions

Right now all users can view, edit and delete Bgp Peering objects. In the production system we would like to be able to have more granular control over who can perform a given operation. This is where the permissions system comes in.

In our plugin we will leverage Django authentication system [1] to enable permissions for views we built out.

Adding permissions to views

Below are the changes I made to views.py to add permission handling to each of the views:

# views.py

from django.contrib.auth.mixins import PermissionRequiredMixin

...

class BgpPeeringView(PermissionRequiredMixin, View):

"""Display BGP Peering details"""

permission_required = "netbox_bgppeering.view_bgppeering"

...

class BgpPeeringListView(PermissionRequiredMixin, View):

"""View for listing all existing BGP Peerings."""

permission_required = "netbox_bgppeering.view_bgppeering"

...

class BgpPeeringCreateView(PermissionRequiredMixin, CreateView):

"""View for creating a new BgpPeering instance."""

permission_required = "netbox_bgppeering.add_bgppeering"

...

class BgpPeeringDeleteView(PermissionRequiredMixin, DeleteView):

"""View for deleting a BgpPeering instance."""

permission_required = "netbox_bgppeering.delete_bgppeering"

...

class BgpPeeringEditView(PermissionRequiredMixin, UpdateView):

"""View for editing a BgpPeering instance."""

permission_required = "netbox_bgppeering.change_bgppeering"

...

I've only included bits that have changed. Below is quick breakdown of what we did:

-

We import

PermissionRequiredMixinmixin class fromdjango.contrib.auth.mixins. This class will handle permission checks logic and will plug into the NetBox's existing authorization system. -

For each view class we add

PermissionRequiredMixinto classes we subclass from. E.g.

class BgpPeeringCreateView(PermissionRequiredMixin, CreateView)

- In each of the classes we enabled for permission checks we need to specify permission, or iterable of permissions, required to access the view. To do that we use parameter

permission_required. E.g.

class BgpPeeringDeleteView(PermissionRequiredMixin, DeleteView):

"""View for deleting a BgpPeering instance."""

permission_required = "netbox_bgppeering.delete_bgppeering"

Permission names follow the following naming convention:

<app_name>.{view|add|delete|change}_<model_name>

Example permission names:

-

Allow displaying BgpPeering instance:

netbox_bgppeering.view_bgppeering. -

Allow deleting BgpPeering instance:

netbox_bgppeering.delete_bgppeering. -

Allow modifying BgpPeering instance:

netbox_bgppeering.change_bgppeering.

After reloading NetBox try logging in as a non-privileged user and accessing BgpPeering plugin. You should get Access Denied message, like the one below:

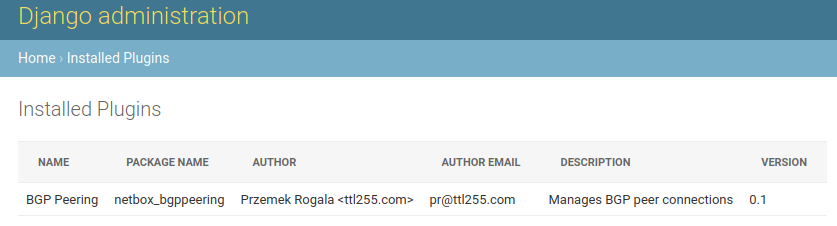

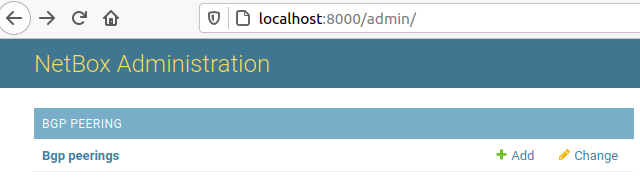

You can now switch to admin user and log into admin panel. In the admin panel if you add new permissions in User > Permissions, you should see that netbox_bgppeering > bgp peering permission now appears on the list of available object types.

Adding permissions to Web GUI elements

We now have in place permissions that control the ability to view, add, delete, and edit Bgp Peering objects. But Web GUI elements, like the edit button, will still be shown to all users. Time to change that and make this behaviour permission dependent.

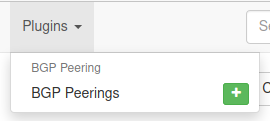

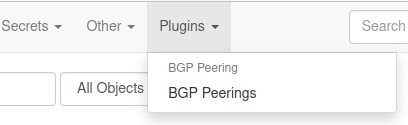

- First we set permission required to see plus,

+, button in the top plugin menu. We editnavigation.py:

# navigation.py

...

menu_items = (

PluginMenuItem(

...

buttons=(

PluginMenuButton(

...

permissions=["netbox_bgppeering.add_bgppeering"],

),

There's only one addition. We pass a new parameter, permissions, when creating a PluginMenuButton object. We set its value to an iterable of permissions, in our case it's just one permission: netbox_bgppeering.add_bgppeering.

After this is done only users who have Can add permission on BgpPeering objects will be able to see the + button.

- The final changes are to the templates that show

BgpPeeringrelated buttons. In our case those arebgppeering.htmlandbgppeering_list.html.

<!-- bgppeering.html -->

...

<div class="col-sm-8">

<div class="pull-right noprint">

{% if perms.netbox_bgppeering.change_bgppeering %}

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_edit' pk=bgppeering.pk %}" class="btn btn-warning">

<span class="{{ icon_classes.pencil }}" aria-hidden="true"></span> Edit

</a>

{% endif %}

{% if perms.netbox_bgppeering.delete_bgppeering %}

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_delete' pk=bgppeering.pk %}" class="btn btn-danger">

<span class="{{ icon_classes.trash }}" aria-hidden="true"></span> Delete

</a>

{% endif %}

</div>

</div>

...

<!-- bgppeering_list.html -->

...

<div class="pull-right noprint">

{% if perms.netbox_bgppeering.add_bgppeering %}

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_add' %}" class="btn btn-primary">

<span class="{{ icon_classes.plus }}" aria-hidden="true"></span> Add

</a>

{% endif %}

</div>

...

To check permission is set before including the element we use {% if perms.<permission_name> %} conditional.

In our case we have the below conditionals:

{% if perms.netbox_bgppeering.change_bgppeering %}to includeEditbutton.{% if perms.netbox_bgppeering.delete_bgppeering %}to includeDeletebutton.{% if perms.netbox_bgppeering.bgppeering_add %}to includeAddbutton.

If the user doesn't have one of those permissions then the corresponding button will not show in Web GUI.

And that's it. With changes to the views and Web GUI elements we have implemented a permissions system for our plugin.

Next stop, API!

Source code up to this point is in branch adding-permission if you want to check it out: https://github.com/progala/ttl255-netbox-plugin-bgppeering/tree/adding-permissions.

Adding API

Being able to interact with BgpPeering objects in Web GUI is great and useful but it's not well suited to automation. If we want to programmatically interact with our plugin we need to expose it via API. And that's what we're going to do now.

To add code handling API we need to create a new directory called api. This is where source code files related to API functionality need to go.

In case of our plugin the path that includes api directory looks like so:

../ttl255-netbox-plugin-bgppeering/netbox_bgppeering/api

In this directory we will create three source code files, serializers.py, urls.py and views.py:

├── api

│ ├── serializers.py

│ ├── urls.py

│ └── views.py

With that out of the way, let's start coding!

Building serializer for BgpPeering objects

First thing we need to do is to specify how BgpPeering model instances will be rendered into json representation.

For that purpose we will use Django REST framework [2] as well as existing NetBox serializers.

Our serializers go into the api/serializers.py file, and below is the serializer I wrote for the BgpPeering model.

# api/serializers.py

from rest_framework import serializers

from ipam.api.nested_serializers import (

NestedIPAddressSerializer,

)

from dcim.api.nested_serializers import NestedDeviceSerializer, NestedSiteSerializer

from netbox_bgppeering.models import BgpPeering

class BgpPeeringSerializer(serializers.ModelSerializer):

"""Serializer for the BgpPeering model."""

site = NestedSiteSerializer(

many=False,

read_only=False,

required=False,

help_text="BgpPeering Site",

)

device = NestedDeviceSerializer(

many=False,

read_only=False,

required=True,

help_text="BgpPeering Device",

)

local_ip = NestedIPAddressSerializer(

many=False,

read_only=False,

required=True,

help_text="Local peering IP",

)

class Meta:

model = BgpPeering

fields = [

"id",

"site",

"device",

"local_ip",

"local_as",

"remote_ip",

"remote_as",

"peer_name",

"description",

]

Few new concepts appear here so let's dig into this code.

-

from rest_framework import serializers- We import moduleserializerswhich contains base serializer class we will use. -

Next we import nested serializers [3] for NetBox objects that we link to in our model. This will allow us to:

- Return

jsonrepresentation of those objects nested inside of BgpPeering data structure. - Use of

name,slug, etc., fields for linked objects in POST/PATCH/PUT requests. Otherwise we'd only be allowed to passidfor these.

- Return

from ipam.api.nested_serializers import (

NestedIPAddressSerializer,

)

from dcim.api.nested_serializers import NestedDeviceSerializer, NestedSiteSerializer

- Final import is the model that we're building a serializer for:

from netbox_bgppeering.models import BgpPeering.

With imports out of the way we can build the serializer class.

-

BgpPeeringSerializer(serializers.ModelSerializer)- I named our classBgpPeeringSerializerand I'm subclassingModelSerializerclass. That class will automatically handle serializing of most of the fields in our model, among other things. -

In internal class

Metawe specify a model that is being serialized, hereBgpPeering. Then we specify a list of fields that we want to be included when serialization takes place. Fields that don't require special treatment will be automatically rendered thanks to theBgpPeeringSerializerclass.

class Meta:

model = BgpPeering

fields = [

"id",

"site",

"device",

"local_ip",

"local_as",

"remote_ip",

"remote_as",

"peer_name",

"description",

]

- Finally, I'm telling the serializer class that three of the model fields need to be treated differently. Namely I want linked models to be also serialized and included in the

jsonpayload returned in the API response.

This is not required but in a lot of cases it makes sense to return these data structures nested inside of the main structure. It will help us avoid multiple API calls that would be otherwise needed to retrieve details of linked objects.

It also simplifies adding/modifying objects via API, as already mentioned.

For each of the fields that need to contain nested data we must:

- Identify the nested serializer matching model this field links to.

- Instantiate the class and assign the resulting object to the parameter named after the field.

For example below is the nested serializer we use for site field:

site = NestedSiteSerializer(

many=False,

read_only=False,

required=False,

help_text="BgpPeering Site",

)

When creating NestedSiteSerializer object we need to provide a few arguments [4]:

many- set it to match the relationship type set on the field in the model. We don't have any many-to-many relationships so in our casemanyis set toFalse.read_only- set it toTrueif you want the field to be read-only and not allowed as the input in API calls. In our case all custom fields can be used as input so we set it toFalse.required- specifies whether field is required. This should follow the corresponding property we set for the model field.help_text- used to give this field description that is picked up when rendering the field.

And that's it, our serializer is completed.

Building API views

With the serializer taken care of we move onto API view. This view goes into the api/views.py file and it will handle all of the different HTTP API calls.

# api/views.py

from rest_framework import mixins, viewsets

from netbox_bgppeering.models import BgpPeering

from netbox_bgppeering.filters import BgpPeeringFilter

from .serializers import BgpPeeringSerializer

class BgpPeeringView(

mixins.CreateModelMixin,

mixins.DestroyModelMixin,

mixins.ListModelMixin,

mixins.RetrieveModelMixin,

mixins.UpdateModelMixin,

viewsets.GenericViewSet,

):

"""Create, check status of, update, and delete BgpPeering object."""

queryset = BgpPeering.objects.all()

filterset_class = BgpPeeringFilter

serializer_class = BgpPeeringSerializer

Not too much code but some new concepts, let's break it down:

viewsets.GenericViewSet- This is aViewSet[5] class that lets us combine multiple different views into one view. Normally we would have to build separate views for adding, editing, deleting, etc. objects. We then would need to separately add each of these to URL conf. WithViewSetwe need just one view and we will only need one entry in URL conf.

Next, we subclass a number of mixin classes implementing different actions that will allow us to support HTTP request methods.

mixins.CreateModelMixin- This class takes care of creating and saving a new model instance. Responds to HTTP POST.mixins.DestroyModelMixin- This class will handle deletion of model instances. Responds to HTTP DELETE.mixins.ListModelMixin- This class allows returning a list of instances in API response. Used with HTTP GET.mixins.RetrieveModelMixin- This class handles retrieval of a single model instance. Used with HTTP GET.mixins.UpdateModelMixin- Finally, this class enables edits, both merge and replace. Used with HTTP PUT and PATCH.

In the body of the class we have three attributes:

-

queryset- This is like in a normal view, we specify BgpPeering objects that are of interest. Here we want all objects to be up for grabs in API calls:queryset = BgpPeering.objects.all() -

filterset_class- This specifies a filter class that can be used to apply search queries when retrieving objects via API. We're re-usingBgpPeeringFilterwhich we built for the Search Panel. This means we can filter objects through API using the same queries we used in Web GUI.filterset_class = BgpPeeringFilterFor example, if we wanted to get Bgp Peering objects that have string

primaryin description we could use general query parameterq:http://localhost:8000/api/plugins/bgp-peering/bgppeering/?q=primary -

serializer_class- Finally we have a serializer class that we just built. This will be used to render our model intojsonrepresentation.serializer_class = BgpPeeringSerializer

Defining URL for API calls

With serializer and view classes in place we just need to tie it together and expose API endpoints via URL. Code for that goes into the api/urls.py file.

# api/urls.py

from rest_framework import routers

from .views import BgpPeeringView

router = routers.DefaultRouter()

router.register(r"bgppeering", BgpPeeringView)

urlpatterns = router.urls

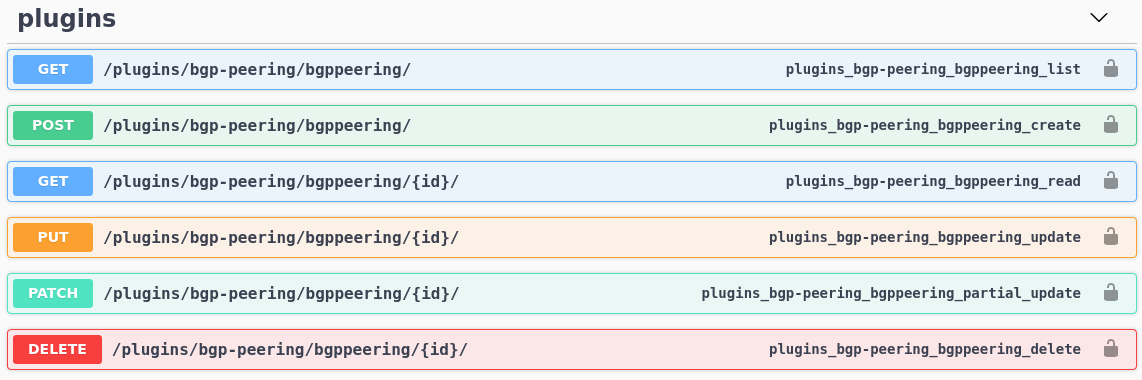

This is slightly different to urls.py for Web GUI views. Here we use the router class DefaultRouter [6] which comes from Django REST Framework. This will automatically handle requests to API URLs exposed by API using different HTTP methods.

What this means is that we don't have to manually create multiple URL rules. Thanks to DefaultRouter we need only one:

router.register(r"bgppeering", BgpPeeringView)

That's it. This one URL rule will handle GET, POST, etc. requests automatically.

Finally, we assign urls auto-generated by router class to urlpatterns variable. This variable is what Django uses for path mappings.

urlpatterns = router.urls

And we're done! With URLs in place we can take our API for a spin!

API usage examples

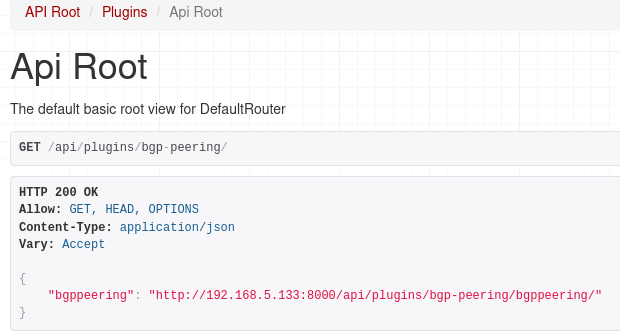

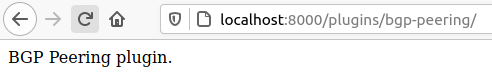

If you now navigate to the main API URL for our plugin, http://localhost:8000/api/plugins/bgp-peering/, you should see the available endpoint.

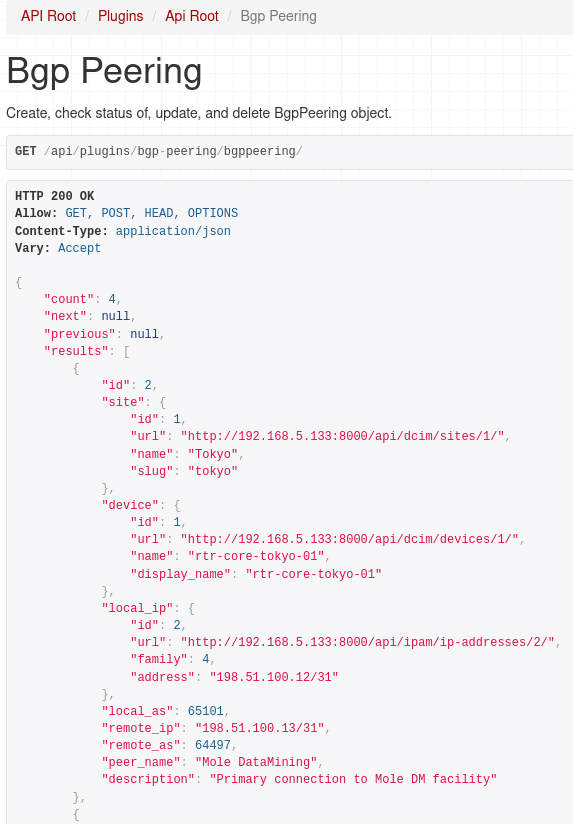

If we followed the URL for our endpoint we should get json reply with list of Bgp Peerings:

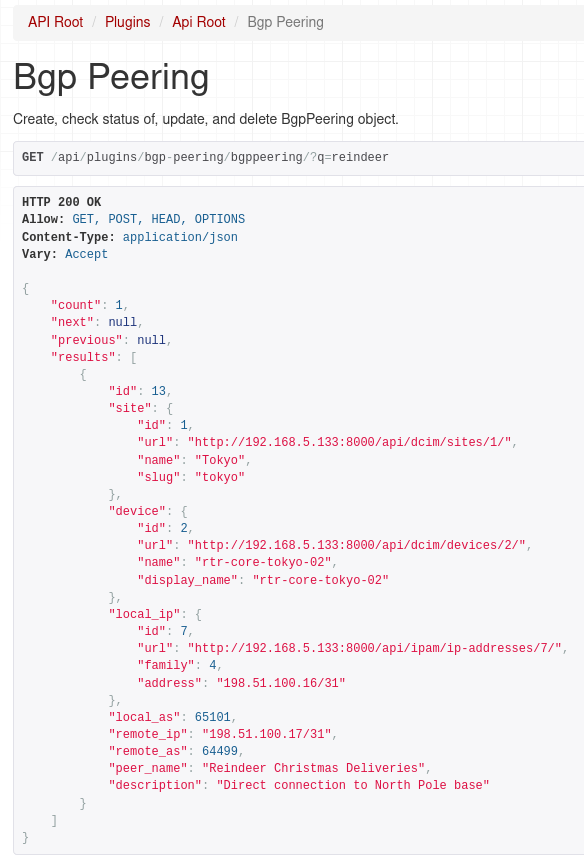

And as I mentioned earlier we can use filtering as well, here we ask for peerings that have 'reindeer' string in the peer name or description.

What's nice is that documentation for our API now shows up in Swagger docs:

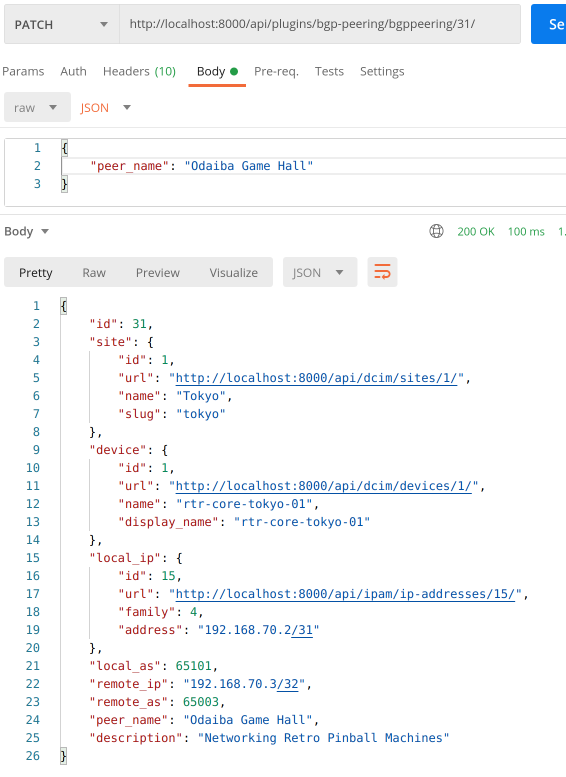

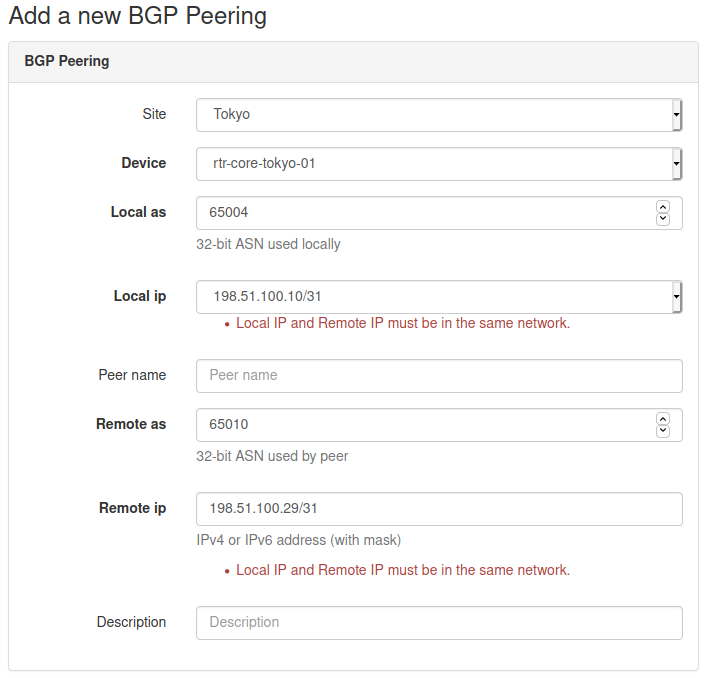

So we can view objects, but can we add or modify them?

Let's try out other HTTP methods using Postman.

POST:

Here we're adding a new object, notice nested payload values for site, device and IP address. If we didn't define nested serializers for these models we'd have to provide id of the objects we're linking to. This will make it much easier to consume our API for Bgp Peering plugin.

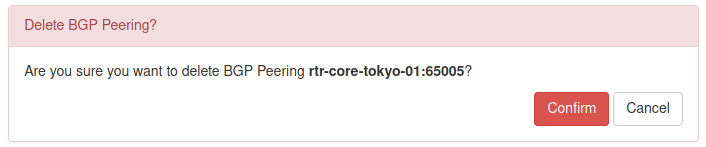

For completeness we'll try out PATCH and DELETE methods as well:

PATCH:

DELETE:

Awesome, everything is working as expected!

In case you were wondering, permissions we implemented in the first part of this post are automatically applied to API views.

This is what happens when user that has read-only access to Bgp Peering objects tries to create a new one:

Conclusion

And with that we came to the end of the tutorial walking you through the development of an example NetBox plugin. During our journey we built a functional plugin that implements a custom model representing Bgp Peering record. We built Web GUI views allowing users to view, add, edit and delete objects. Next we implemented object filtering before adding permissions and API.

There's much more we could do here. We could add export/import functionality. Perhaps improving data validation and search would be a welcome addition. But I believe that what you learned here should give you a solid base that you can build on top of. Everything else is up to your imagination.Happy coding!

Resources

- NetBox docs: Plugin Development: https://netbox.readthedocs.io/en/stable/plugins/development/

- NetBox source code on GitHub: https://github.com/netbox-community/netbox

- NetBox Extensibility Overview, NetBox Day 2020: https://www.youtube.com/watch?v=FSoCzuWOAE0

- NetBox Plugins Development, NetBox Day 2020: https://www.youtube.com/watch?v=LUCUBPrTtJ4

Django authentication system: https://docs.djangoproject.com/en/3.1/topics/auth/default/ ↩︎

Django REST framework: https://www.django-rest-framework.org/ ↩︎

Django REST nested relationships: https://www.django-rest-framework.org/api-guide/relations/#nested-relationships ↩︎

Django REST serializer arguments: https://www.django-rest-framework.org/api-guide/fields/#core-arguments ↩︎

Django REST ViewSets: https://www.django-rest-framework.org/api-guide/viewsets/ ↩︎

Django REST DefaultRouter: https://www.django-rest-framework.org/api-guide/routers/#defaultrouter ↩︎

Welcome to part 4 of the tutorial on developing NetBox plugin. By now BgpPeering plugin is functional but there are few things here and there that could make it better. In this post, we'll go through many improvements that will make the plugin look better and increase its functionality.

Developing NetBox Plugin tutorial series

- Developing NetBox Plugin - Part 1 - Setup and initial build

- Developing NetBox Plugin - Part 2 - Adding web UI pages

- Developing NetBox Plugin - Part 3 - Adding search panel

- Developing NetBox Plugin - Part 4 - Small improvements

- Developing NetBox Plugin - Part 5 - Permissions and API

Contents

- Updating display name of BgpPeering objects

- Enforce the same network for local and remote IPs

- Allowing objects to be deleted

- Allowing objects to be edited

- Prettifying field labels

- Conclusion

- Resources

- BGP Peering Plugin GitHub repository

Updating display name of BgpPeering objects

We'll start improvements by changing default display name of BgpPeering objects.

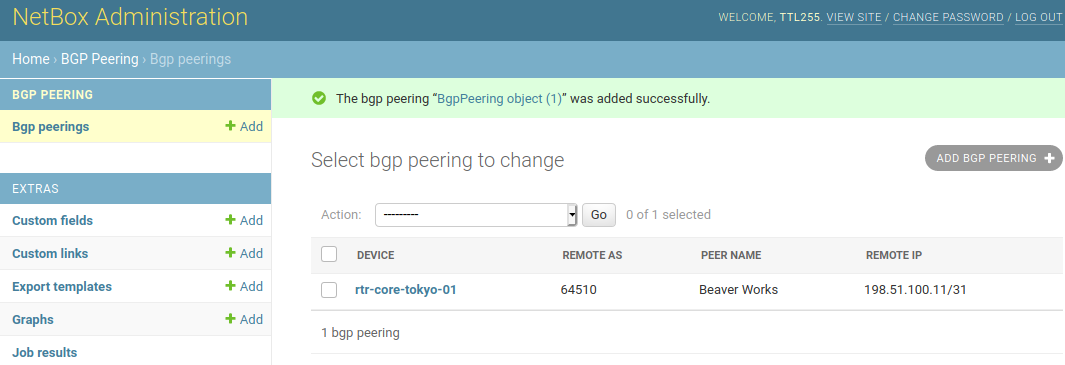

Currently, when we create new objects we get a name that is not very descriptive:

Instead of BgpPeering object (28) we'd like to have something more meaningful.

We could perhaps show here device name, peer name, or even remote BGP AS number.

Unfortunately, we decided to make peer name optional so this might not be a good candidate for the display name of an object. It's possible to include it conditionally. But that would result in inconsistent naming for objects that don't have peer name defined. For the sake of consistency, I will only include the device name and the remote BGP AS number.

Essentially, I want our display name to look like so:

rtr-core-tokyo-02:3131

To do that we need to override __str__ method in BgpPeering model class contained in models.py.

# models.py

class BgpPeering(ChangeLoggedModel):

...

def __str__(self):

return f"{self.peer_name}:{self.remote_as}"

If we now create a new object we'll see the below in changelog:

Much better, now the BGP Peering object names mean something. We can see the local device the peering is on and the AS number of remote peer.

Enforce the same network for local and remote IPs

Local IP address is selected from the list of IP addresses available in NetBox when creating new BGP Peering. But when we add remote IP address we have to type it in manually. If you try entering remote IP that is in a network different than the one local IP is in our form will happily accept it.

It perhaps is not that much of an issue but I thought this is a good place to show you how we can add custom validation to forms.

Add validation to form class

We'll update class BgpPeeringForm in forms.py with code that performs the required validation.

To validate multiple form fields we can use method clean()[1] which comes from Django Form class.

Let's implement our check:

# forms.py

import ipaddress

class BgpPeeringForm(BootstrapMixin, forms.ModelForm):

...

def clean(self):

cleaned_data = super().clean()

local_ip = cleaned_data.get("local_ip")

remote_ip = cleaned_data.get("remote_ip")

if local_ip and remote_ip:

# We can trust these are valid IP addresses. Address format validation done in .super()

if (

ipaddress.ip_interface(str(local_ip)).network

!= ipaddress.ip_interface(str(remote_ip)).network

):

msg = "Local IP and Remote IP must be in the same network."

self.add_error("local_ip", msg)

self.add_error("remote_ip", msg)

First, we import ipaddress library which we'll use to check if IPs belong to the same network.

Next, we go inside of clean() method.

To trigger field validation of individual fields and retrieve the results we call clean() method from the parent class.

cleaned_data = super().clean()

Now that we have validated field values in cleaned_data we can retrieve strings coming from local IP and remote IP form fields.

local_ip = cleaned_data.get("local_ip")

remote_ip = cleaned_data.get("remote_ip")

With values retrieved we write conditional to check if any of them are empty. This should never happen but we might want more processing here in the future so it's prudent to do so.

if local_ip and remote_ip:

Next, we have conditional implementing network checking logic. We use ip_interface [2] function from ipaddress module to create IPv4Interface or IPv4Interface objects from each of our IP addresses.

The interface objects have a handy network attribute that returns IP network the interface belongs to. This allows us to directly compare networks for peering IPs.

If networks are the same we take no action, i.e. form returns with no errors. However, if the networks differ we generate an error message and assign it to msg variable. We then append this error to validation results for local_ip and remote_ip form fields.

if (

ipaddress.ip_interface(str(local_ip)).network

!= ipaddress.ip_interface(str(remote_ip)).network

):

msg = "Local IP and Remote IP must be in the same network."

self.add_error("local_ip", msg)

self.add_error("remote_ip", msg)

Show errors on the form in web UI

We now have code handling validation and generating errors. We now have code handling validation and generating errors. The next step is to update the form template so that the errors show up in the web UI.

I added {% if field.errors %} block to div rendering fields in bgppeering_edit.html:

<div class="col-md-9">

{{ field }}

{% if field.help_text %}

<span class="help-block">{{ field.help_text|safe }}</span>

{% endif %}

{% if field.errors %}

<ul>

{% for error in field.errors %}

<li class="text-danger">{{ error }}</li>

{% endfor %}

</ul>

{% endif %}

</div>

When form fails validation, errors for each form field will be returned in field.errors attribute [^field_erros]. We can then loop over errors and display them under a given field.

Below is an example where I entered IP addresses belonging to different networks. You can see that the form failed validation and errors are displayed under each of the fields.

Allowing objects to be deleted

We have been happily adding BGP Peerings objects but at some point, we might have to delete some of them. Unfortunately, the only way to do it right now is from the admin panel. This is less than ideal and thus we decide to implement delete functionality.

Creating delete view

There are few places we need to modify, let's start with views.py.

from django.urls import reverse_lazy

...

from django.views.generic.edit import CreateView, DeleteView

...

class BgpPeeringDeleteView(DeleteView):

"""View for deleting a BgpPeering instance"""

model = BgpPeering

success_url = reverse_lazy("plugins:netbox_bgppeering:bgppeering_list")

template_name = "netbox_bgppeering/bgppeering_delete.html"

We import reverse_lazy function which will be used to return the page for the given namespace URL.

We also borrow another of Django's generic edit views, DeleteView[3], which will offload a lot of required work. This class class will handle both GET and POST methods.

- If

GETis used view will return the confirmation page. That page should contain a form that willPOSTto the URL pointing to this view. - If

POSTis used then the provided object will be deleted.

For our plugin, we will go down the route of GET followed by POST from the confirmation page form.

The actual view is relatively short:

- We define

BgpPeeringDeleteViewclass inheriting fromDeleteView. modelvariable takes model class for this view, hereBgpPeering.success_urldefines URL to which view will redirect after an object has been deleted. Here I provide a namespaced URL pointing to list view of our records.template_namepoints to the template that will be rendered when we're asked to confirm the deletion.

Next, we look at the deletion confirmation template.

Delete confirmation template

Deleting objects is a serious business so I thought that we should customize the deletion confirmation page. It will also make it look like other prompts in NetBox.

Here is our template:

# bgppeering_delete.html

{% extends 'base.html' %}

{% load form_helpers %}

{% block content %}

<div class="row">

<div class="col-md-6 col-md-offset-3">

<form action="" method="post" class="form">

{% csrf_token %}

<div class="panel panel-{{ panel_class|default:"danger" }}">

<div class="panel-heading">Delete BGP Peering?</div>

<div class="panel-body">

<p>Are you sure you want to delete BGP Peering <strong>{{ object }}</strong>?</p>

<div class="text-right">

<button type="submit" name="_confirm" class="btn btn-{{ button_class|default:"danger" }}">Confirm</button>

<a href="{% url 'plugins:netbox_bgppeering:bgppeering' pk=bgppeering.pk %}" class="btn btn-default">Cancel</a>

</div>

</div>

</div>

</form>

</div>

</div>

{% endblock %}

This is a pretty standard form. We have some styling to make users aware of the seriousness of the operation.

Of interest are two buttons, Confirm and Cancel.

Confirmwill submit the form to the URL pointing to our delete view. This will result in thePOSTaction triggering deletion of the object.Cancelwill take us back to the detailed view of the object instead of deleting it.

Add url to delete view

We created a delete view but there's no way to reach it currently. We need to add URL pointing to it.

Let's add the missing URL:

# urls.py

from .views import (

BgpPeeringCreateView,

BgpPeeringDeleteView,

BgpPeeringListView,

BgpPeeringView,

)

urlpatterns = [

...

path("<int:pk>/delete/", BgpPeeringDeleteView.as_view(), name="bgppeering_delete"),

]

We added BgpPeeringDeleteView class to the import list. Then we created new entry in urlpatterns:

path("<int:pk>/delete/", BgpPeeringDeleteView.as_view(), name="bgppeering_delete")

This is like URL for a detailed view. We use pk attribute again, which is whatBgpPeeringDeleteView will need to locate the object for deletion. We name this URL bgppeering_delete to make referring to it easier.

Adding object view header and delete button

The last thing we're missing is some kind of button in UI that would allow us to delete objects. This is what we're going to do next.

I'm going to edit the detailed object view template and add a few things:

- Breadcrumb with link to the list of objects followed by name of the current object.

- Date showing when this object was created.

- Time elapsed since this object was last updated.

- Delete object button leading to delete confirmation page.

# bgppeering.html

{% block header %}

<div class="row noprint">

<div class="col-sm-8">

<ol class="breadcrumb">

<li><a href="{% url 'plugins:netbox_bgppeering:bgppeering_list' %}">BGP Peerings</a></li>

<li>{{ bgppeering }}</li>

</ol>

</div>

</div>

<div class="col-sm-8">

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_delete' pk=bgppeering.pk %}" class="btn btn-danger pull-right">

<span class="{{ icon_classes.trash }}" aria-hidden="true"></span> Delete

</a>

</div>

<div class="col-sm-8">

<h1>{% block title %}{{ bgppeering }}{% endblock %}</h1>

<p>

<small class="text-muted">Created {{ bgppeering.created }} · Updated <span

title="{{ bgppeering.last_updated }}">{{ bgppeering.last_updated|timesince }}</span> ago</small>

</p>

</div>

{% endblock %}

As you can see I'm overriding block header which comes from base.html. This block is used for elements displayed below the main menu but above the rest of the content.

Then we have 3 divs:

- Div with breadcrumb where we have URL for the page with list objects, followed by the name of the current object.

- Div with button pointing to URL responsible for deleting the object. We pass this URL the

pkvalue identifying the current object. - Div containing the name of the object, as a title. This is followed by the object creation date and time since this object was last updated.

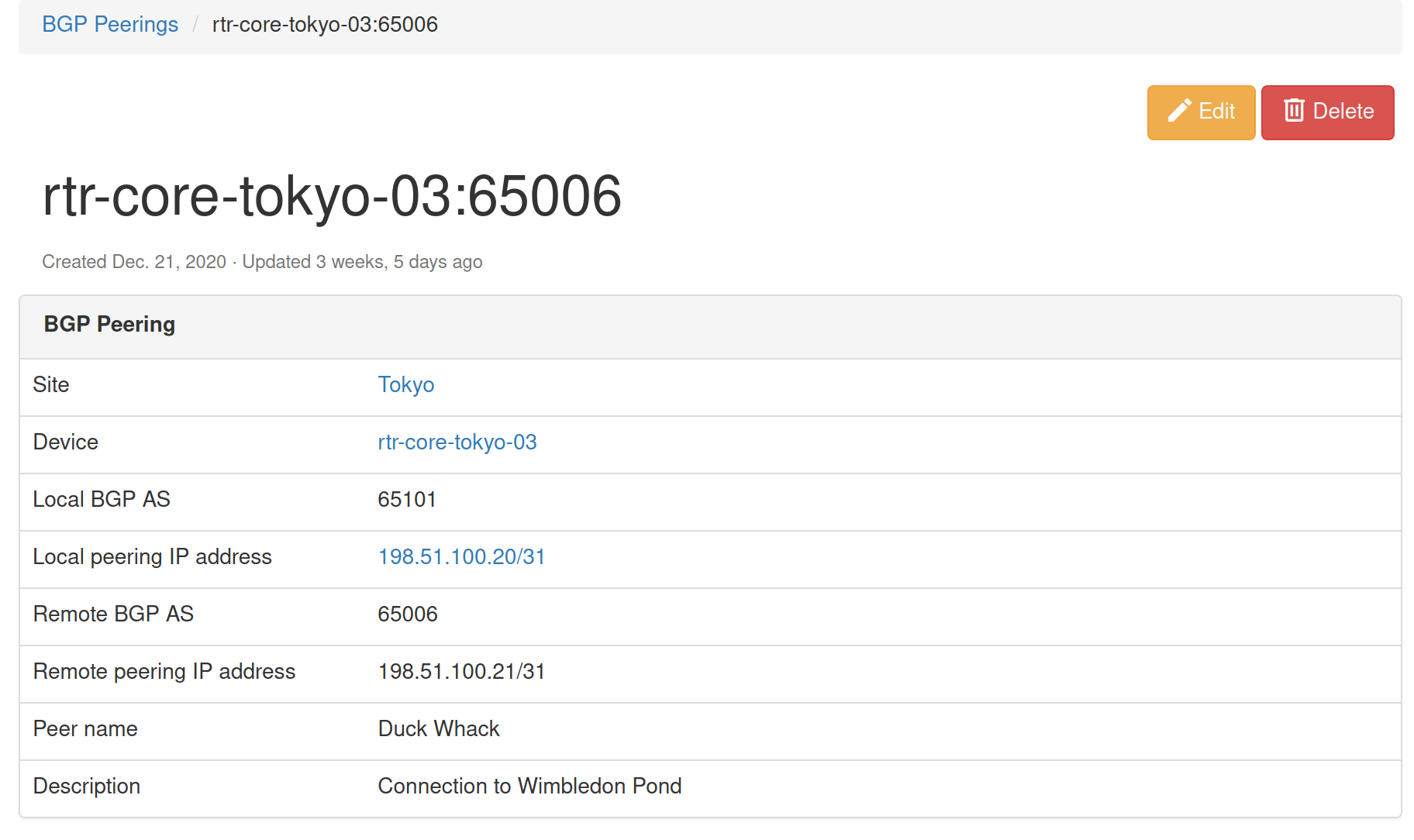

If you were to run the code we added up to this point you should see the object details page looking like the below one:

And when we click Delete button we should be presented with the confirmation prompt:

That's pretty cool, we can now add, and delete objects. This thing is starting to look better and better.

But we're not done here. Why not add edit functionality so that we can modify existing objects?

Allowing objects to be edited

You might be happy to know that to enable editing we can reuse most of the template code used for object creation. We will need to modify it and create an edit view but it takes less work than adding object deletion.

Creating edit view

To create the edit view class I added the below code to views.py:

# views.py

from django.views.generic.edit import CreateView, DeleteView, UpdateView

...

class BgpPeeringEditView(UpdateView):

"""View for editing a BgpPeering instance."""

model = BgpPeering

form_class = BgpPeeringForm

template_name = "netbox_bgppeering/bgppeering_edit.html"

We complete our collection of generic views by importing UpdateView [4]. As usual, this will do a lot of hard work for us.

Then comes our class, BgpPeeringEditView. In this class we define:

model- Model class used for this class, hereBgpPeering.form_class- Form used by the view and template. We reuse the form we built for the object creation view,BgpPeeringForm.template_name- Template used by this view, again, we reuse existing templatebgppeering_edit.html.

That was nice, we reused existing components and plugged them into edit view.

Next, we need to modify the template so it can support both creating and editing objects.

Modify object creation template

As I mentioned, we can mostly reuse the existing template, bgppeering_edit.html, with some mall modifications.

# bgppeering_edit.html

...

{% block title %}

{% if object.pk %}

Editing BGP Peering - {{ object }}

{% else %}

Add a new BGP Peering

{% endif %}

{% endblock %}

...

<div class="row">

<div class="col-md-6 col-md-offset-3 text-right">

{% block buttons %}

<button type="submit" name="_create" class="btn btn-primary">Create</button>

{% endblock %}

{% if object.pk %}

<button type="submit" name="_update" class="btn btn-primary">Update</button>

<a href="{% url 'plugins:netbox_bgppeering:bgppeering' pk=bgppeering.pk %}" class="btn btn-default">Cancel</a>

{% else %}

<button type="submit" name="_create" class="btn btn-primary">Create</button>

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_list' %}" class="btn btn-default">Cancel</a>

{% endif %}

</div>

The first thing we're doing here is checking if object.pk has value. Edit view will automatically pass pk to the template. So if pk has value then we're dealing with object edit and display appropriate title. Otherwise, we display the original title for adding a new object.

Next, we modify the section showing buttons below the form. Here we also add conditional, checking if object.pk has value.

- If conditional evaluates to

Trueit means we're dealing with object edit action. We present two buttons,Updatewill save changes made to the object.Cancelwill take us back to the object details view. - If conditional evaluates to

Falsewe follow the logic we built originally, an object is either created or we're sent to the list view.

And that's it, our template now supports both object creation and editing.

Adding editing button and URL

Most of the work is done here. We should now add URL pointing to the edit view and edit button that will allow users to edit the object.

# urls.py

from .views import (

BgpPeeringCreateView,

BgpPeeringDeleteView,

BgpPeeringEditView,

BgpPeeringListView,

BgpPeeringView,

)

urlpatterns = [

...

path("<int:pk>/edit/", BgpPeeringEditView.as_view(), name="bgppeering_edit"),

]

This URL is similar to the one for deleting an object. We again have pk argument which we pass to class view. We use a friendly name for URL, bgppeering_edit.

Finally we will add edit button next to the delete button on object details view page:

# bgppeering_edit.html

<div class="pull-right noprint">

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_edit' pk=bgppeering.pk %}" class="btn btn-warning">

<span class="{{ icon_classes.pencil }}" aria-hidden="true"></span> Edit

</a>

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_delete' pk=bgppeering.pk %}" class="btn btn-danger">

<span class="{{ icon_classes.trash }}" aria-hidden="true"></span> Delete

</a>

</div>

And that's it, we should now be able to edit given object. Let's give it a try.

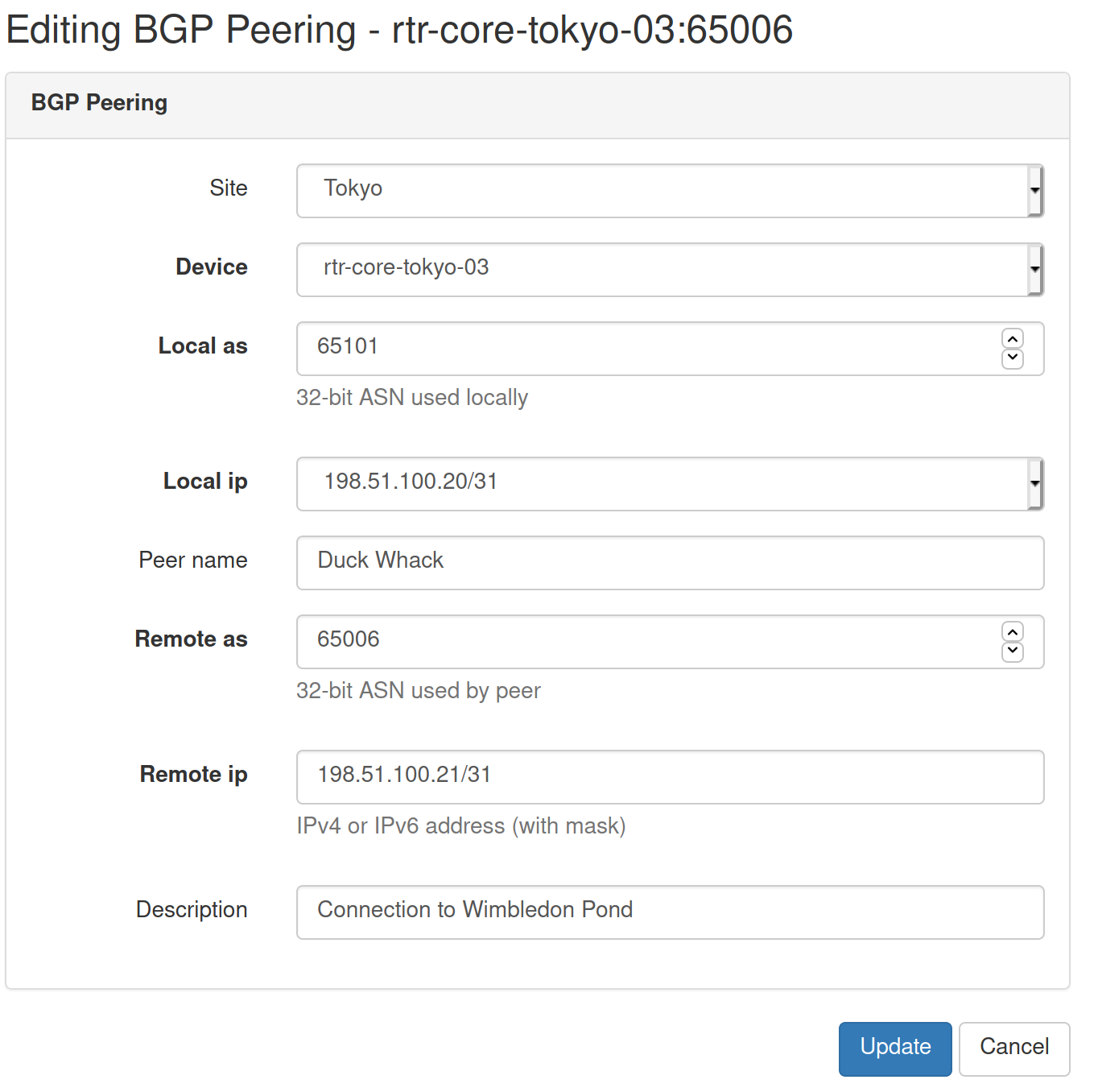

Here's the page with object details, notice Edit button.

And here's the edit view that we'll get after the edit button is clicked.

Another useful addition to our plugin is complete.

Prettifying field labels

Current field labels displayed in the list view and few other places are derived from model fields. Not all of them look pretty so we should work on making them look better.

Let's override default field names in model class in models.py.

# models.py

class BgpPeering(ChangeLoggedModel):

...

local_ip = models.ForeignKey(

to="ipam.IPAddress", on_delete=models.PROTECT, verbose_name="Local IP"

)

local_as = ASNField(help_text="32-bit ASN used locally", verbose_name="Local ASN")

remote_ip = IPAddressField(

help_text="IPv4 or IPv6 address (with mask)", verbose_name="Remote IP"

)

remote_as = ASNField(help_text="32-bit ASN used by peer", verbose_name="Remote ASN")

peer_name = models.CharField(max_length=64, blank=True, verbose_name="Peer Name")

To customize the text displayed for the given field we pass the argument verbose_name [5] when creating field objects. The value of each of the arguments will be the text we want to display as the field name.

We will also do the same for the search filter. In this case, we edit form class in forms.py.

# forms.py

class BgpPeeringFilterForm(BootstrapMixin, forms.ModelForm):

...

local_as = forms.IntegerField(

required=False,

label="Local ASN",

)

remote_as = forms.IntegerField(

required=False,

)

remote_as = forms.IntegerField(required=False, label="Remote ASN")

peer_name = forms.CharField(

required=False,

label="Peer Name",

)

We add label [6] argument to each form field we want to customize.

That's it, let's see how the fields are looking like now.

Perfect, names and labels are looking better now.

We'll finish our small improvements by adding Add button to the list view in bgppeering_list.html.

# bgppeering_list.html

...

{% block content %}

<div class="pull-right noprint">

<a href="{% url 'plugins:netbox_bgppeering:bgppeering_add' %}" class="btn btn-primary">

<span class="{{ icon_classes.plus }}" aria-hidden="true"></span> Add

</a>

</div>

And with this last modification we've come to an end of this post.

Source code with all the modifications we made up to this point is in branch small-improvements if you want to check it out: https://github.com/progala/ttl255-netbox-plugin-bgppeering/tree/small-improvements

Conclusion

In the previous posts of this series, we were focusing on getting the plugin off the ground. We implemented models, and several views and forms. Here we had a closer look and added improvements that make the plugin easier to use. It might not seem like much but these kinds of small additions can make our lives much easier.

The next post will be the last in the series. We'll complete our plugin by adding user permissions as well as API calls. See you next time!

Resources

- NetBox docs: Plugin Development: https://netbox.readthedocs.io/en/stable/plugins/development/

- NetBox source code on GitHub: https://github.com/netbox-community/netbox

- NetBox Extensibility Overview, NetBox Day 2020: https://www.youtube.com/watch?v=FSoCzuWOAE0

- NetBox Plugins Development, NetBox Day 2020: https://www.youtube.com/watch?v=LUCUBPrTtJ4

https://docs.djangoproject.com/en/3.1/ref/forms/api/#django.forms.Form.clean ↩︎

https://docs.python.org/3/library/ipaddress.html#ipaddress.ip_interface ↩︎

https://docs.djangoproject.com/en/3.1/ref/class-based-views/generic-editing/#django.views.generic.edit.DeleteView ↩︎

https://docs.djangoproject.com/en/3.1/ref/class-based-views/generic-editing/#django.views.generic.edit.UpdateView ↩︎

https://docs.djangoproject.com/en/3.1/topics/db/models/#verbose-field-names ↩︎

https://docs.djangoproject.com/en/3.1/ref/forms/fields/#label ↩︎

Welcome to part 3 of my tutorial walking you through process of developing NetBox plugin. In part 2 we added basic web UI views to our BgpPeering plugin. In this post we'll add search panel to list view to allow us to search/filter Bgp Peering objects.

Developing NetBox Plugin tutorial series

- Developing NetBox Plugin - Part 1 - Setup and initial build

- Developing NetBox Plugin - Part 2 - Adding web UI pages

- Developing NetBox Plugin - Part 3 - Adding search panel

- Developing NetBox Plugin - Part 4 - Small improvements

- Developing NetBox Plugin - Part 5 - Permissions and API

Contents

- Introduction

- Filter class

- Search form class

- Adding form to list view template

- Adding filtering to list view class

- Conclusion

- Resources

- BGP Peering Plugin GitHub repository

Introduction

List view we created for displaying all Bgp Peering objects in one place is very useful. However it will become difficult to find items of interest once we have more than 30-50 objects. For that purpose we should add means of filtering objects to the ones that meet certain criteria.

Other objects in NetBox already have filtering functionality and use search panel located to the right of object tables. We'll try and replicate the NetBox look and feel when upgrading our view.

In our case, I want to be able to filter Bgp Peering objects by:

site- This should be a drop-down list with NetBox defined sites.device- Again, drop-down list but with NetBox defined devices.local_as- BGP ASN used locally, it has to be an exact match.remote_as- BGP ASN used by 3rd party, it has to be an exact match.peer_name- Name of the peer, we want to allow partial matches.q- Generic query field that looks at peer name and descriptions. We want to allow partial matches here.

To make all this work we need a few components:

- filter class

- search form class

- updated list view template

- updated list view class

Filter class

First component we're going to work on is filter class. To help us with this we're using Django app called django_filters[1]. This app makes it easier to build model based filtering that will serve as an abstraction used by our list view.

Filtering class is going to be recorded in filters.py file.

# filters.py

import django_filters

from django.db.models import Q

from dcim.models import Device, Site

from .models import BgpPeering

class BgpPeeringFilter(django_filters.FilterSet):

"""Filter capabilities for BgpPeering instances."""

q = django_filters.CharFilter(

method="search",

label="Search",

)

site = django_filters.ModelMultipleChoiceFilter(

field_name="site__slug",

queryset=Site.objects.all(),

to_field_name="slug",

)

device = django_filters.ModelMultipleChoiceFilter(

field_name="device__name",

queryset=Device.objects.all(),

to_field_name="name",

)

peer_name = django_filters.CharFilter(

lookup_expr="icontains",

)

class Meta:

model = BgpPeering

fields = [

"local_as",

"remote_as",

"peer_name",

]

def search(self, queryset, name, value):

"""Perform the filtered search."""

if not value.strip():

return queryset

qs_filter = Q(peer_name__icontains=value) | Q(description__icontains=value)

return queryset.filter(qs_filter)

Since all this is new, I'm going to go through the code in detail.

We start building filter by creating BgpPeeringFilter class that inherits from django_filters.FilterSet.

Next, in class Meta, we define model this filter is based on.

In fields we specify list of fields for which filters will be auto-generated. This is based on the model definitions. Any fields that don't need special treatment can go in here.

class Meta:

model = BgpPeering

fields = [

"local_as",

"remote_as",

"peer_name",

]

Fields that need customization, like linking to other models, or partial matching, we will define as class attributes.

Let's have a look at those fields now.

-

site- We want this to be a drop down-menu with multiple choices. To do that we make this an instance ofdjango_filters.ModelMultipleChoiceFilterclass.site = django_filters.ModelMultipleChoiceFilterWe initialize the object with three arguments

field_name,querysetandto_field_name.-

field_name- Specifies attribute on the model field that we will filterBgpPeeringobjects on. Here we usesitefield from our model and its attributeslug. Attribute follows__(double underscore) after name of the field:field_name="site__slug" -

queryset- This defines collection ofSiteobjects filter will present as filtering choices. We importedSitemodel fromdcim.modelsand return all of the available objects. -

to_field_name- Here we specify name of the attribute,slug, that filter will take fromSiteobject and apply tofield_namewe specified earlier.

-

-

device- Similar tosite, it's a drop-down menu with multiple choices. Here we link toDeviceNetBox model from which filter will takenameattribute. We filter BgpPeering objects using field/attribute combination ofdevice__name. -

peer_name- Peer name is a character field, so we usedjango_filters.CharFilterclass to define it.

We want to allow case insensitive partial matches here. To do that we pass argumentlookup_expr="icontains"when creating object.

Default lookup method isexactwhich forces exact matches. See docs for available lookup methods [2]. -

q- This is general, string, query field. We want it to be of character typedjango_filters.CharFilter.

Inmethodargument we specify Python method, heresearch, that should be called when this field is used [3].

Andlabelfield is the text that will appear in the field as a hint.q = django_filters.CharFilter( method="search", label="Search", )Next we define method

search, inside of the class, whichqfield filter will use. You can define these methods outside of the class scope but in most cases it makes sense to keep it close to the field definition.def search(self, queryset, name, value): """Perform the filtered search.""" if not value.strip(): return queryset qs_filter = Q(peer_name__icontains=value) | Q(description__icontains=value) return queryset.filter(qs_filter)Methods we define for filter fields will be passed

queryset,nameandvaluearguments. Hence why our method has(self, queryset, name, value)signature.queryset- This is essentially list of objects currently meeting filter criteria, beforeqfilter is applied.name- Name of the filter field, hereq.value- Value entered into the filter field in web GUI.

Logic in our method is relatively simple. We return unchanged queryset if no value was provided:

if not value.strip(): return querysetIf there is a value we take advantage of Django

Q[4] object to build a query based on two fields.Q(peer_name__icontains=value)- We check, ignoring case, ifpeer_namefield containsvalue.Q(description__icontains=value)- We check, ignoring case, ifdescriptionfield containsvalue.

Then we combine these objects with

|- logical OR - operator and assign the result toqs_filtervariable.qs_filter = Q(peer_name__icontains=value) | Q(description__icontains=value)Finally, we apply this filter to queryset and return the result.

return queryset.filter(qs_filter)End effect is that if

valueis contained in eitherpeer_nameor peeringdescriptionfor given object then the object will be included in final queryset.

With that we finish filter class and move on to the form.

Search form class

Next step in our quest for filtering is creating class that will represent the search form.

We will add the below to forms.py:

# forms.py

from dcim.models import Device, Site

class BgpPeeringFilterForm(BootstrapMixin, forms.ModelForm):

"""Form for filtering BgpPeering instances."""

q = forms.CharField(required=False, label="Search")

site = forms.ModelChoiceField(

queryset=Site.objects.all(), required=False, to_field_name="slug"

)

device = forms.ModelChoiceField(

queryset=Device.objects.all(),

to_field_name="name",

required=False,

)

local_as = forms.IntegerField(

required=False,

)

remote_as = forms.IntegerField(

required=False,

)

peer_name = forms.CharField(

required=False,

)

class Meta:

model = BgpPeering

fields = []

Let's break this code down.

We create BgpPeeringFilterForm that inherits from BootstrapMixin and forms.ModelForm. This is just like the form we defined in part 2 of this tutorial.

Next we define model we're building this form from, BgpPeering, and fields that will be auto-generated. I want to define all fields explicitly so fields attribute will be an empty list. You need to include this attribute even if you don't list anything here or an exception will be raised.

class Meta:

model = BgpPeering

fields = []

Following that we will define types and attributes of the fields used by search form.

-

q- General query field should be a character field so we use typeforms.CharField. This field is optional and we manually set label of this field toSearch. -

site- Site selection field, this field is optional.- To link it to NetBox

Siteobjects we make it of typeforms.ModelChoiceField. - We ask for all

Siteobjects to be available in drop-down withqueryset=Site.objects.all(). - When given item is selected we want

slugattribute to be returned. This is whatto_field_name="slug"does.

- To link it to NetBox

-

device- Similar tositebut here we link toDevicemodel and ask fornameattribute to be returned. -

local_asandremote_as- Optional integer fields, we use typeforms.IntegerFieldfor those. -

peer_name- Simple, optional, character field. We use typeforms.CharFieldfor it.

And that's our form class done.

Adding form to list view template

To allow users to search through BgpPeering objects we need to update our web UI page. We'll add search panel on the right side of the object list view template.

We add new <div> in bgppeering_list.html right after the one we created in part 2:

<div class="col-md-9">

{% render_table table %}

</div>

<!-- search panel div start -->

<div class="col-md-3 noprint">

<div class="panel panel-default">

<div class="panel-heading">

<span class="{{ icon_classes.search }}" aria-hidden="true"></span>

<strong>Search</strong>

</div>

<div class="panel-body">

<form action="." method="get" class="form">

{% for field in filter_form.visible_fields %}

<div class="form-group">

{% if field.name == "q" %}

<div class="input-group">

<input type="text" name="q" class="form-control" placeholder="{{ field.label }}"

{% if request.GET.q %}value="{{ request.GET.q }}" {% endif %} />

<span class="input-group-btn">

<button type="submit" class="btn btn-primary">

<span class="{{ icon_classes.search }}" aria-hidden="true"></span>

</button>

</span>

</div>

{% else %}

{{ field.label_tag }}

{{ field }}

{% endif %}

</div>

{% endfor %}

<div class="text-right noprint">

<button type="submit" class="btn btn-primary">

<span class="{{ icon_classes.search }}" aria-hidden="true"></span> Apply

</button>

<a href="." class="btn btn-default">

<span class="{{ icon_classes.remove }}" aria-hidden="true"></span> Clear

</a>

</div>

</form>

</div>

</div>

</div>

We make this div 3 columns wide. Inside we place panel header and search form divs.

Form fields are rendered in for loop:

{% for field in filter_form.visible_fields %}

...

{% endfor %}

These fields are taken from form class we defined earlier. We leave all fields, with exception of q field, to their defaults by displaying field label followed by rendering actual field.

{{ field.label_tag }}

{{ field }}

Because q field does not belong to underlying model we handle it differently. We make it a text input field with label as a placeholder.

The interesting bit here is that the value of the field is carried over from previous search. This is done for us for auto-generated form fields but here we have to do it manually with the below expression:

{% if request.GET.q %}value="{{ request.GET.q }}" {% endif %} />

Remaining html/css code is for layout and visual elements.

Icon classes

You might have noticed a few references to icon_classes variable here like in class="{{ icon_classes.search }}". These are strings specifying CSS classes used for rendering icons. I pass these classes to the form in icon_classes variable from form view.

NetBox v2.10+ uses Material Design icons. Previous versions used Font Awesome. To make my plugin compatible with both versions I created icon_classes.py file with dictionary dynamically mapping class names to underlying MD or FA CSS classes.

# icon_classes.py

from .release import NETBOX_RELEASE_CURRENT, NETBOX_RELEASE_210

if NETBOX_RELEASE_CURRENT >= NETBOX_RELEASE_210:

icon_classes = {

"plus": "mdi mdi-plus-thick",

"search": "mdi mdi-magnify",

"remove": "mdi mdi-close-thick",

}

else:

icon_classes = {

"plus": "fa fa-plus",

"search": "fa fa-search",

"remove": "fa fa-remove",

}

I also use release.py borrowed from [5] to detect version of NetBox plugin is running under.

from packaging import version

from django.conf import settings

NETBOX_RELEASE_CURRENT = version.parse(settings.VERSION)

NETBOX_RELEASE_28 = version.parse("2.8")

NETBOX_RELEASE_29 = version.parse("2.9")

NETBOX_RELEASE_210 = version.parse("2.10")

The above additions allow me to easily add CSS classes for any other icons I might want to use in the future.

With that digression out of the way, let's put it all together by modifying list view class.

Adding filtering to list view class

All of the components we created up to this point need to be tied together in the class view. We're modifying the BgpPeeringListView class created in part 2, in order to support search/filtering functionality.

Changed BgpPeeringListView class:

# views.py

from .icon_classes import icon_classes

from .filters import BgpPeeringFilter

from .forms import BgpPeeringForm, BgpPeeringFilterForm

class BgpPeeringListView(View):

"""View for listing all existing BGP Peerings."""

queryset = BgpPeering.objects.all()

filterset = BgpPeeringFilter

filterset_form = BgpPeeringFilterForm

def get(self, request):

"""Get request."""

self.queryset = self.filterset(request.GET, self.queryset).qs

table = BgpPeeringTable(self.queryset)

RequestConfig(request, paginate={"per_page": 25}).configure(table)

return render(

request,

"netbox_bgppeering/bgppeering_list.html",

{

"table": table,

"filter_form": self.filterset_form(request.GET),

"icon_classes": icon_classes,

},

)

Few interesting things happen here, let's break them down.

-

filterset = BgpPeeringFilter- We addfiltersetattribute and set it toBgpPeeringFilterclass we created earlier. -

filterset_form = BgpPeeringFilterForm- This is the form that will be rendered in list view template. -

self.queryset = self.filterset(request.GET, self.queryset).qs- Here is where the filtering happens.

We feedfiltersetform values contained inrequest.GETandquerysetwith BgpPeering objects.

Method.qsreturnsQuerySetlike object that we assign back toself.queryset. This will be then fed to table constructor. Except now the resulting table will only contain objects matching filter values.

Finally, we provide two new arguments to render:

-

"filter_form": self.filterset_form(request.GET)- This is used to render form in UI.request.GETpreserves values used in previous searches. -

"icon_classes": icon_classes- This passes dictionary with CSS classes I defined for UI icons.

And that's it. We can now re-build plugin and take search panel for a spin.

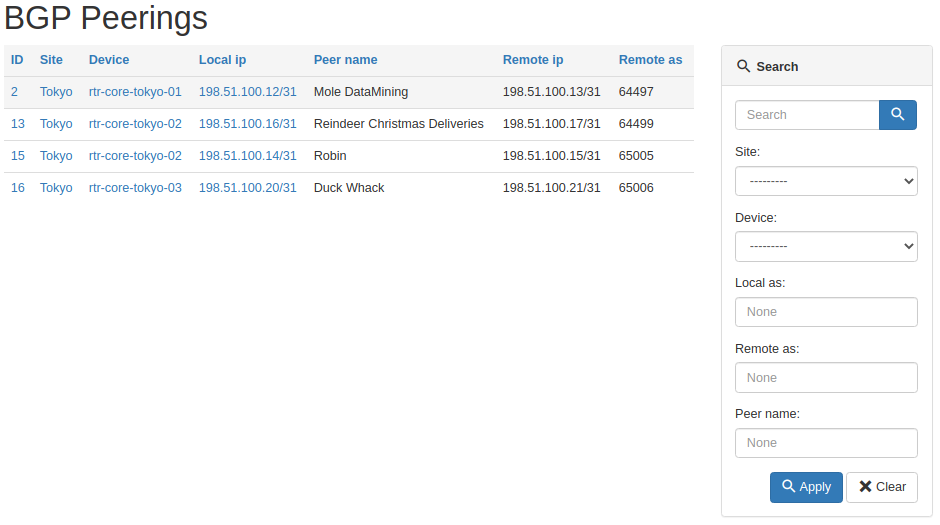

If you now navigate to /plugins/bgp-peering/ you should see search panel on the right hand side, next to table with the list of objects.

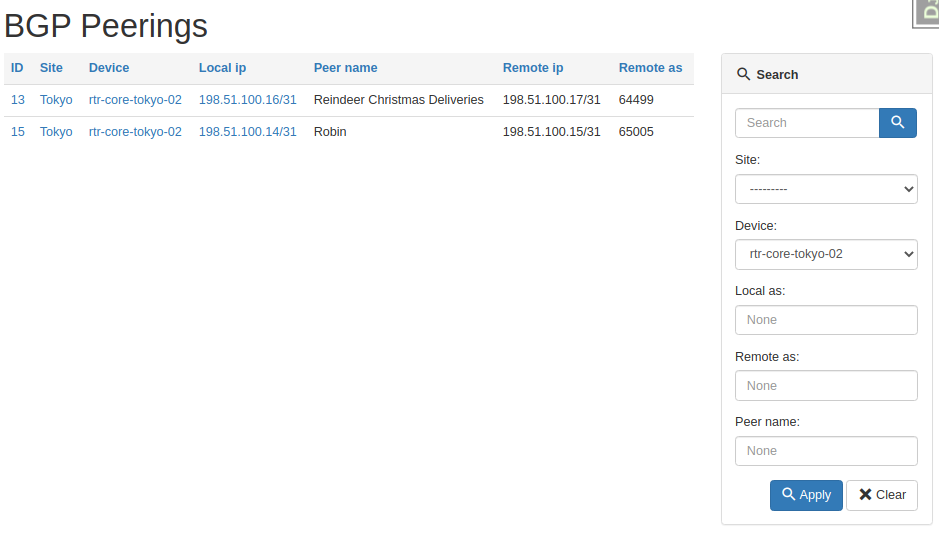

And here's the table after we asked for peerings on one device only.

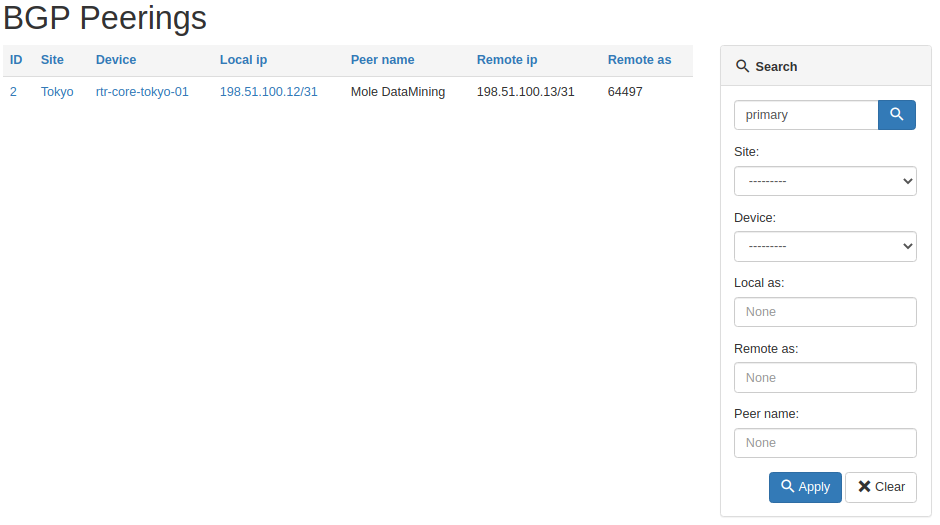

And here's a result of the general query for primary string.

All working as intended, pretty cool right?

Source code with all the modifications we made up to this point is in branch bgppeering-list-search if you want to check it out: https://github.com/progala/ttl255-netbox-plugin-bgppeering/tree/bgppeering-list-search

Conclusion

This concludes post 3 in Developing NetBox Plugin series. Search functionality we implemented here should greatly improve usability of our plugin. It's going to be much easier now to find peering objects of interest.

In the next post we'll continue with making improvements to the plugin. There a few small things here and there that will make this all even better. Stay tuned!

Resources

- NetBox docs: Plugin Development: https://netbox.readthedocs.io/en/stable/plugins/development/

- NetBox source code on GitHub: https://github.com/netbox-community/netbox

- NetBox Extensibility Overview, NetBox Day 2020: https://www.youtube.com/watch?v=FSoCzuWOAE0

- NetBox Plugins Development, NetBox Day 2020: https://www.youtube.com/watch?v=LUCUBPrTtJ4

Django Filter app - https://django-filter.readthedocs.io/en/stable/ ↩︎

Django field lookups - https://docs.djangoproject.com/en/3.1/ref/models/querysets/#field-lookups ↩︎

Django filter method - https://django-filter.readthedocs.io/en/stable/ref/filters.html#method ↩︎

Django Q object - https://docs.djangoproject.com/en/3.1/topics/db/queries/#complex-lookups-with-q ↩︎

NTC NetBox Onboarding plugin - https://github.com/networktocode/ntc-netbox-plugin-onboarding/blob/develop/netbox_onboarding/release.py ↩︎

Welcome to part 2 of my tutorial walking you through process of developing NetBox plugin. In part 1 we set up our development environment and built base version of Bgp Peering plugin. Here we will continue work on the plugin by adding UI pages allowing us to list, view and add, Bgp Peering objects.

Developing NetBox Plugin tutorial series

- Developing NetBox Plugin - Part 1 - Setup and initial build

- Developing NetBox Plugin - Part 2 - Adding web UI pages

- Developing NetBox Plugin - Part 3 - Adding search panel

- Developing NetBox Plugin - Part 4 - Small improvements

- Developing NetBox Plugin - Part 5 - Permissions and API

Contents

- Adding web UI pages

- Enable model for permissions framework

- Single object view -

BgpPeeringView - Object list view -

BgpPeeringListView - Object creation view -

BgpPeeringCreateView - Conclusion

- Resources

- BGP Peering Plugin repository

Disclaimer

Original version of this post contained views that relied on views used internally by NetBox. This practice is not recommended by NetBox maintainers as these are likely to change in the future and your plugin might stop working.

To add to it, bugs with plugins are often logged against the core NetBox adding to the mainteners' workload even though this has nothing to do with them. I've now refactored these views to decouple this plugin from NetBox's implementation details.

Adding web UI pages

We previously created BgpPeering model which we can even work with from admin panel. This was all for quick testing and we should now expose the model to the end users.

The best way to do that is by adding set of pages to web UI. We're also going to re-use some of the NetBox's components so that our pages match look and feel of core offering.

Here are some of the things we need to work on to make this happen.

- Create template for pages, these go into in

templatesdirectory. - Write code responsible for rendering web response, we store views in

views.pyfile. - Add URLs pointing to our new pages in

urls.py - Add menu items in

navigation.pywhere appropriate. - Create table class in

tables.pyfor list view.

You will see me talking about views in few places. If you've never heard the term before, know that view[1] in Django is Python code that receives web request, executes some logic and returns web response.

In this post I want to create three views:

- view displaying details of single object

- view displaying list of objects

- view with form for adding new object

But before we get to views there is one more thing that we need to do. We need to make our model compatible with NetBox's permissions framework.

Enable model for permissions framework

NetBox has support for granular query permissions. Even though we currently don't use any permissions we want our components to support them. This will make future additions easier to make.

Below is the code I'm adding to models.py.

from utilities.querysets import RestrictedQuerySet

class BgpPeering(ChangeLoggedModel):

...

objects = RestrictedQuerySet.as_manager()

We create class attribute called objects and assign RestrictedQuerySet to it. This will be used for retrieving and filtering BgpPeering records.

RestrictedQuerySet is a class we import from NetBox's utilities. This class provides support for permissions.

By using RestrictedQuerySet NetBox will be able to filter out objects for which user does not have specific rights.

objects is a default name Django uses for db query interface, Manager. Follow hyperlink to footnotes if you want to learn more [2].

Finally as_manager() method is used here to return instance of Manager class. This is a bit low level but know that Django expects to see Manager type here. We used custom QuerySet which as_manager() allows to use as Manager.

With that out of the way we can move to our first view.

Single object view - BgpPeeringView

First view we're going to create has name BgpPeeringView. Choice of names is completely arbitrary but I'm trying to follow naming convention used by NetBox.

This and all other views have to go into views.py file in your plugin's directory.

Also, for our views we'll be using Django class based views. These allow more flexiblity and reuse of code via inheritance compared to function based views[3].

Below is the initial code, which we're going to breakdown in a second.

# views.py

from django.shortcuts import get_object_or_404, render

from django.views import View

from .models import BgpPeering

class BgpPeeringView(View):

"""Display BGP Peering details"""

queryset = BgpPeering.objects.all()

def get(self, request, pk):

"""Get request."""

bgppeering_obj = get_object_or_404(self.queryset, pk=pk)

return render(

request,

"netbox_bgppeering/bgppeering.html",

{

"bgppeering": bgppeering_obj,

},

)

Let's have a closer look at this code.

-

We subclass

Viewwhich comes from core Django. This is one of the most basic type of class-based views. -

Next we have

querysetwhich we use to retrieve and filter interesting objects. We do this by calling methodall()onobjectsattribute we just defined inBgpPeeringmodel. No database calls are made at this stage so don't be alarmed by use ofall(). -

Method

get()is used to service incoming GET HTTP requests. -

Function

get_object_or_404()returns404HTML code instead of raising internal exception. This is more meaningful to end users. -

Then we're feeding defined queryset to

get_object_or_404asking for single object matchingpk.pkmeans primary key and each of objects will have one.

In our casepkmatches automatically genereatedidfield in our model. The value ofpkis passed toget()via URL defined inurls.pywhich we'll look at shortly. -

Finally

render()renders provided template and uses it to create a well-formed web response. We provide it name of the template to render, which we're going to build next.

Template for single object view

Most of our views will rely on templates stored in templates directory. Django templates use language[4] similar to Jinja2 so if you know Jinja you should be able to pick it up pretty quickly.

Best practice is to place templates used by our plugin in templates subdirectory named after our plugin. In our case that will be:

templates\bgppeering\

We refer to these templates later in few places. This makes it clear that template came from plugin namespace.

With that said, let's have a look at body of our template.

bgppeering.html

{% extends 'base.html' %}

{% load helpers %}

{% block content %}

<div class="row">

<div class="col-md-6 col-md-offset-3">

<div class="panel panel-default">

<div class="panel-heading">

<strong>BGP Peering</strong>

</div>

<table class="table table-hover panel-body attr-table">

<tr>

<td>Site</td>

<td>

{% if bgppeering.site %}

<a href="{% url 'dcim:site' slug=bgppeering.site.slug %}">{{ bgppeering.site }}</a>

{% else %}

<span class="text-muted">None</span>

{% endif %}

</td>

</tr>

<tr>

<td>Device</td>

<td>

<a href="{% url 'dcim:device' pk=bgppeering.device.pk %}">{{ bgppeering.device }}</a>

</td>

</tr>

<tr>

<td>Local BGP AS</td>

<td>{{ bgppeering.local_as }}</td>

</tr>

<tr>

<td>Local peering IP address</td>

<td>

<a href="{% url 'ipam:ipaddress' pk=bgppeering.local_ip.pk %}">{{ bgppeering.local_ip }}</a>

</td>

</tr>

<tr>

<td>Remote BGP AS</td>

<td>{{ bgppeering.remote_as }}</td>

</tr>

<tr>

<td>Remote peering IP address</td>

<td>{{ bgppeering.remote_ip }}</td>

</tr>

<tr>

<td>Peer name</td>

<td>{{ bgppeering.peer_name|placeholder }}</td>

</tr>

<tr>

<td>Description</td>

<td>{{ bgppeering.description|placeholder }}</td>

</tr>

</table>

</div>

</div>

</div>

{% endblock %}

This looks like a lot but most of it is code generating table cells displaying attributes of object.

There are some interesting bits here though, let's look at them now:

-

{% extends 'base.html' %}-base.htmlcomes from NetBox and takes care of NetBox's look and feel for our page. It'll give us menus, footer, etc., we just need to take care of the main content. -

{% load helpers %}- loads custom tags and filters defined inhelpers. Again, we borrowhelpersfrom core NetBox. We need this because ofplaceholderfilter used in our template. -

Below links pointing to NetBox objects use Django's

urlfilter[5]. With that filter we don't have to hardcode links, instead we reference paths inurls.py.<a href="{% url 'dcim:site' slug=bgppeering.site.slug %}">{{ bgppeering.site }}</a> <a href="{% url 'dcim:device' pk=bgppeering.device.pk %}">{{ bgppeering.device }}</a <a href="{% url 'ipam:ipaddress' pk=bgppeering.local_ip.pk %}">{{ bgppeering.local_ip }}</a>For instance:

<a href="{% url 'dcim:site' slug=bgppeering.site.slug %}">{{ bgppeering.site }}</a>Points to below path in

netbox/netbox/dcim/views.py:path('sites/<slug:slug>/', views.SiteView.as_view(), name='site')Because URL is in

dcimapp and hasnameequal tositewe feeddcim:sitetourlfilter. This path also expectsslugargument.BgpPeeringobject keeps site info insiteattribute, so the siteslugcan be retrieved withbgppeering.site.slug.If you want to link to any other NetBox objects you can look at the paths recorded in

urls.pyfor given app. Then you need to identify expected argument. With those two you can construct links usingurlfilter, just like we did above. -

Lastly we display attribute values for given

BgpPeeringinstance by using dot.notation.

In view we created earlier, template receives variable namedbgppeeringcontainingBgpPeeringobject retrieved from database. Inside of our template we use that name to retrieve each of the model attributes by placing.afterbgppeering, followed by name of the attribute. E.g.bgppeering.site bgppeering.remote_ip

URL for single object view

We have single object view and template in place. Now we need to add URL path for it so the view can be accessed.

# urls.py

from .views import BgpPeeringView

urlpatterns = [

...

path("<int:pk>/", BgpPeeringView.as_view(), name="bgppeering"),

]

First we import our view BgpPeeringView. Then we add another path entry to urlpatterns.

-

<int:pk>/equals toplugins/bgp-peering/<pk>, wherepkis the primary key of our record, and it's an integer, henceint. Our object use auto-incremented integeridfield as primary-key. Below is example of URL for object withidequal to 1.plugins/bgp-peering/1/ -

BgpPeering.as_view()-as_view()is needed here so that our view class can process requests. Technically speaking this creates callable that takes request and returns well- formed response. This is how we're going to use all our class based views.

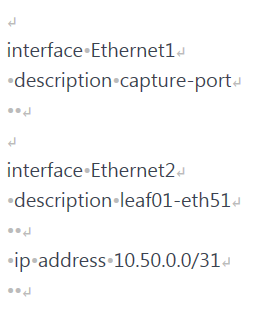

The end result of rendering this template is basic but clean looking table presenting details of BgpPeering object:

There are some improvements that we could make to this view but we'll leave that for later.

Source code up to this point is in branch bgppeering-view-init if you want to check it out: https://github.com/progala/ttl255-netbox-plugin-bgppeering/tree/bgppeering-view-init .

Object list view - BgpPeeringListView

List view is usually the default view for objects in NetBox. This provides overview of records for object of given type and includes links to detailed views.

We're now going to create list view for records using BgpPeering model. This view will be the one we link to from navigational menu we created in post 1.

Create table class

To create list page we'll start with building table class.

What's a table class you ask? NetBox uses Django app called django_tables2 [6] to make working with tables easier. We'll save ourselves some work by following NetBox's example and leveraging that package.

To do that we need to create file called tables.py in our plugin package and add code defining our table.

# tables.py

import django_tables2 as tables

from utilities.tables import BaseTable

from .models import BgpPeering

class BgpPeeringTable(BaseTable):

"""Table for displaying BGP Peering objects."""

id = tables.LinkColumn()

site = tables.LinkColumn()

device = tables.LinkColumn()

local_ip = tables.LinkColumn()

class Meta(BaseTable.Meta):

model = BgpPeering

fields = (

"id",

"site",

"device",

"local_ip",

"peer_name",

"remote_ip",

"remote_as",

)

Let's break this code down.

-

Our table is a class, named

BgpPeeringTable. We subclassBaseTablefrom NetBox utilities which adds some NetBox specific stuff. -

In class

Metawe define model used in the table, followed by names of the fields we want displayed in the table. This class needs to subclassBaseTable.Meta. Few things of note:- You don't have to list all of the fields used by your model. You should select whatever you feel makes sense to show as one line summary for each object.

- Order in which fields are displayed on the page matches order in which you listed them.

-

Finally we define class attributes for fields that need special treatment. We list several fields here:

id,site,deviceandlocal_ipusetables.LinkColumn()object. This will give us auto-generated links pointing to corresponding NetBox objects.

With that in place we're moving to the template.

Template for object list view

Compared to template for single view, this one is much shorter because we're offloading a lot of work. Let's have a look at the body of the template and then we'll break it down.

bgppeering_list.html

{% extends 'base.html' %}

{% load render_table from django_tables2 %}

{% block content %}

<h1>{% block title %}BGP Peerings{% endblock %}</h1>

<div class="row">

<div class="col-md-9">

{% render_table table %}

</div>

</div>

{% endblock %}

We are again extending from base.html. Then we have content block which contains suspiciously little amount of code.

There is title block, in which we override block in base.html with the same name. Then we just have render_table table statement inside of div.

That render_table[7] statement is where a lot of heavy lifting happens. It's template tag that comes from django_tables2 and it renders HTML table for us among other things. All we have to do is provide this template our previously defined table class in variable called table.

Object list view class

Finally we have view class. Our class will this time inherit from ObjectListView class coming from NetBox. That class provides a lot of goodness for building views for series of objects, and it will use table class we built earlier.

We're updating our views.py with the following additions.

# views.py

from django_tables2 import RequestConfig

...

class BgpPeeringListView(View):

"""View for listing all existing BGP Peerings."""

queryset = BgpPeering.objects.all()

def get(self, request):

"""Get request."""

table = BgpPeeringTable(self.queryset)

RequestConfig(request, paginate={"per_page": 25}).configure(table)

return render(

request, "netbox_bgppeering/bgppeering_list.html", {"table": table}

)

We named this view class BgpPeeringListView. Inside the class we have queryset where we specify that we want all of the objects to be given to the view.

Then we have our table class in table var and RequestConfig object. We use request to configure pagination of 25 object per page with:

RequestConfig(request, paginate={"per_page": 25}).configure(table)

Finally we call render to return well formed web response. We give it request object, name of the template, and table object used in our template.

We're almost done here, but there's one more thing we need to do before we try this view out.

Adding get_absolute_url to model

For list view to work we have to implement method get_absolute_url[8] in BgpPeering model. This is required by list view to automatically create links to details of BgpPeering objects.

If you remember, in the table class we made id a LinkedColumn with id = tables.LinkColumn(). Now we need to add some code to BgpPeering model for this to actually work.

Add below to models.py.

# models.py

from django.urls import reverse

class BgpPeering(ChangeLoggedModel):

...

def get_absolute_url(self):

"""Provide absolute URL to a Bgp Peering object."""

return reverse("plugins:netbox_bgppeering:bgppeering", kwargs={"pk": self.pk})

We defined get_absolute_url method that has single line of code.

In that line reverse function will generate correct URL for given BgpPeering record based on the provided pk. We use the name defined in urls to point to correct path mapped to single object view.

And that's it, we're ready to try out our list view.

If you now click on BGP Peerings in Plugins menu, you should get list view.

Notice auto-generated URLs to linked objects. We also get pagination for free!

Source code up to this point is in branch bgppeering-list-view-init if you want to check it out: https://github.com/progala/ttl255-netbox-plugin-bgppeering/tree/bgppeering-list-view-init .

Object creation view - BgpPeeringCreateView

We could already create objects from admin panel but that is not available to regular users.

Time to rectify this oversight and create view for adding BgpPeering objects.

Form for object creation view

For creation view to work we need to build class representing creation form. This is the form that end user will have to fill out when adding new object.

Forms go into forms.py file in the plugin's directory.

This is what I've added to that file.

# forms.py

from django import forms

from utilities.forms import BootstrapMixin

from .models import BgpPeering

class BgpPeeringForm(BootstrapMixin, forms.ModelForm):

"""Form for creating a new BgpPeering object."""

class Meta:

model = BgpPeering

fields = [

"site",

"device",

"local_as",

"local_ip",

"peer_name",

"remote_as",

"remote_ip",

"description",

]

-

We create class

BgpPeeringFormsubclassingBootstrapMixinandforms.ModelForm. -

forms.ModelForm[9] is a Django helper class that allows building forms from models.BootstrapMixincomes from NetBox and adds Bootstrap CSS classes. This makes our form match the look and feel of other forms used in NetBox. -

In form class itself we define

Metaclass where we:- specify model used to generate the form with

model = BgpPeering - list fields that will show up on the form in list assigned to

fieldsvariable.

- specify model used to generate the form with

And that's it, we're ready to create the view class.