This project aims to support children recovering from hand and wrist injuries by making the physiotherapy process fun and engaging. It minimises the need for frequent in-person appointments, reducing cost and effort for families without compromising the effectiveness of treatment.

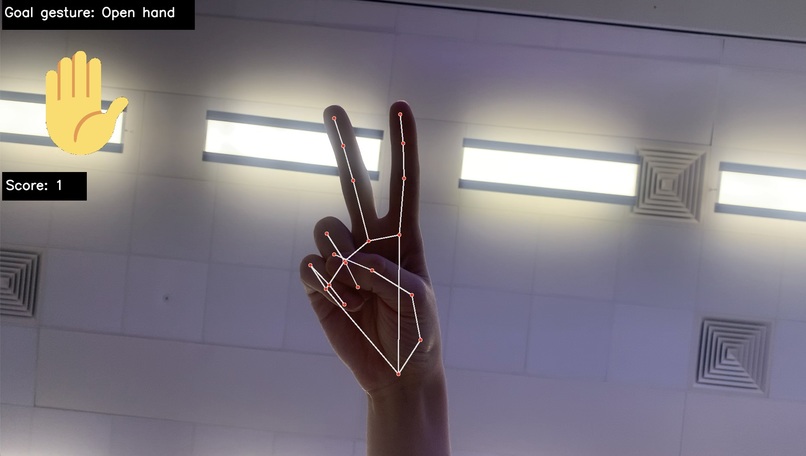

By recognising hand gestures, the program becomes an interactive game in which the user earns points and builds confidence; it can even provide a fun minigame for siblings and parents!

This solution offers creative escapism and helps build confidence, making physiotherapy exciting and accessible for a range of young patients.

Inspiration

I (Emily) have hypermobility and frequently experience joint pain and injuries. We considered the physiotherapy required to treat fractures and muscle strains, and how dull it can seem from a child’s perspective. Inspired by Leap Motion hand-tracking sensors, we decided to start small with hand and wrist exercises and design a gamified alternative to expensive, time-consuming in-person appointments: one that increases motivation without reducing the effectiveness of the exercises.

What it does

There are two game modes: beginner and random. Beginner mode is aimed at players starting their recovery journey with only a small range of motion. The player is prompted to open and close their palm and earns a point for each correct movement. No point is awarded until the exercise is completed correctly. The next exercise is shown on screen.
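The write-up doesn't include the scoring code, so the following is only a minimal sketch of how beginner mode could count repetitions. It assumes the recogniser emits one gesture label per webcam frame, and it borrows the `Open_Palm` and `Closed_Fist` category names from MediaPipe's pre-trained gesture model. The `BeginnerMode` class and its `prompt` attribute are hypothetical. A point is awarded only when a closed fist follows an open palm, so holding a pose across many frames, or closing the hand without opening it first, earns nothing.

```python
# Assumed labels, taken from MediaPipe's pre-trained gesture recogniser;
# the project's own label names may differ.
OPEN = "Open_Palm"
CLOSED = "Closed_Fist"


class BeginnerMode:
    """Hypothetical scorer: one point per open-then-close palm exercise."""

    def __init__(self) -> None:
        self.score = 0
        self.prompt = OPEN  # movement currently shown on screen

    def update(self, label: str) -> bool:
        """Feed one per-frame gesture label; return True if a point is awarded."""
        if label != self.prompt:
            # Other gestures, "None" (no hand) or a held pose are ignored.
            return False
        if self.prompt == OPEN:
            # First half of the exercise done; now ask the player to close.
            self.prompt = CLOSED
            return False
        # Closed fist after an open palm: the exercise is complete.
        self.score += 1
        self.prompt = OPEN
        return True


if __name__ == "__main__":
    game = BeginnerMode()
    frames = ["None", "Open_Palm", "Open_Palm", "Closed_Fist",
              "Closed_Fist", "Open_Palm", "Thumb_Up", "Closed_Fist"]
    for frame in frames:
        game.update(frame)
    print(game.score)  # 2
```

The scorer reacts only to the label it is currently prompting for. Stray frames, such as `None` when no hand is in view, are therefore ignored rather than resetting the player's progress.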

Random mode shows the player one of seven set hand positions, chosen at random. This mode is designed for players with a greater range of movement, and we envision expanding the gameplay so that this mode could be played competitively, engaging the whole family in the recovery process.
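Random mode can be sketched in the same hedged way. This sketch assumes the seven positions are the seven named gesture categories of MediaPipe's default pre-trained recogniser, although the project's actual set may differ. A hypothetical `RandomMode` class picks a target at random and awards a point when the detected label matches it. Never repeating the previous target, so the player always has to change position, is our own assumption.

```python
import random
from typing import Optional

# Assumption: the seven positions are the seven named gestures of MediaPipe's
# default pre-trained gesture recogniser; the project's set may differ.
GESTURES = (
    "Closed_Fist", "Open_Palm", "Pointing_Up", "Thumb_Down",
    "Thumb_Up", "Victory", "ILoveYou",
)


class RandomMode:
    """Hypothetical scorer: show a random target pose, score on a match."""

    def __init__(self, seed: Optional[int] = None) -> None:
        self._rng = random.Random(seed)  # seedable for reproducible games/tests
        self.score = 0
        self.target = self._pick()

    def _pick(self, exclude: Optional[str] = None) -> str:
        # Never repeat the previous target, so the player must change pose.
        choices = [g for g in GESTURES if g != exclude]
        return self._rng.choice(choices)

    def update(self, label: str) -> bool:
        """Feed one per-frame gesture label; return True if a point is awarded."""
        if label != self.target:
            return False
        self.score += 1
        self.target = self._pick(exclude=self.target)
        return True
```

For the competitive variant, each family member could have their own `RandomMode` instance and compare scores at the end of a round.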

Oh, and there is music!

Challenges we ran into

We visited the hardware lab at the start of the event and picked out a Leap Motion sensor. Unfortunately, its supporting software would not run on either of our laptops (both crashed immediately…). We therefore switched to using the computer or phone’s built-in camera for gesture recognition, with MediaPipe and a pre-trained model.

At the start of the project, we began building the UI in Streamlit. While working on the first page, we tried to embed an OpenCV webcam feed showing the user and an early version of the game, but we could not get the feed to connect. After speaking to Sashrika from MLH, we discovered a long-standing bug in the Streamlit source code. We therefore implemented as many of our planned UI features as possible directly in OpenCV and Python, which our gesture recognition code was already built on.

To make the game as fun as possible for our target audience, we added some space-themed elements, including background music played with PlaySound and an astronaut graphic.
