Inspiration

Over the summer during my internship, I was tasked with making an application that could randomly generate art of any form. I decided to do something with music since I really enjoy playing the piano. My attempt did not yield the results I would have liked, so for this hack I wanted to take another shot at creating a music AI.

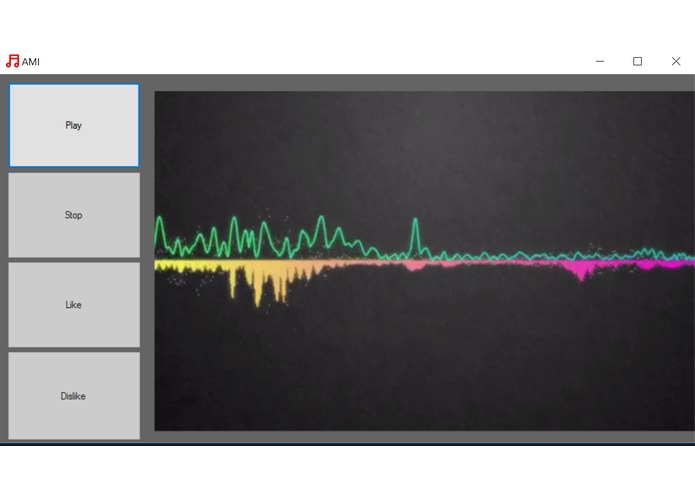

What it does

AMI takes in user-submitted sheet music and chord sheets, entered through our handy conversion tool. Using the average relative intervals of the songs in its database, AMI generates a musical composition and plays it using MIDI. When a user likes a song, AMI stores the settings generated by the composition seed and makes them more favorable in future compositions. Likewise, when the user dislikes a song, those settings are made less favorable. This lets AMI learn to compose and play just for you over time.
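To make the idea of "relative intervals" concrete, here is a minimal sketch in Python (AMI itself was written in C#). It assumes melodies are stored as lists of MIDI note numbers and treats the "average" as a frequency distribution over semitone steps. The function names and the storage format are hypothetical and not taken from AMI's code.

```python
from collections import Counter


def relative_intervals(notes):
    """Return the semitone steps between consecutive MIDI note numbers."""
    return [b - a for a, b in zip(notes, notes[1:])]


def interval_distribution(songs):
    """Return how often each semitone step occurs across all songs, as fractions.

    Assumption: each song is a list of MIDI note numbers. This is an
    illustrative format, not AMI's actual musical language.
    """
    counts = Counter()
    for notes in songs:
        counts.update(relative_intervals(notes))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {interval: n / total for interval, n in counts.items()}


if __name__ == "__main__":
    songs = [[60, 62, 64, 60], [67, 69, 71]]
    print(interval_distribution(songs))
```

A composer could then sample the next step from this distribution, so that new melodies move in ways that resemble the submitted songs.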

AMI also utilizes cloud computing technology. As stated before, users submit sheet music via our translation tool. This lets AMI grow from user support. The information given by the user is translated into AMI’s musical language and stored in an SQL database. When the AMI client creates a composition request, the server sends a data packet containing the interval information needed to compose a new song.

Lastly, AMI implements a rudimentary neural network to tailor songs for each user. Whenever a user likes or dislikes a song, a node is added to its decision tree. These nodes affect the probability that the given settings will be used together in later compositions.
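The like/dislike feedback can be pictured as a set of weights that shift the odds of each setting being picked. The sketch below is a simplified stand-in written in Python, not AMI's actual C# implementation: it uses a flat weight table rather than a tree of nodes, and the class name, `step`, and `floor` values are all assumptions made for illustration.

```python
import random


class SettingPreferences:
    """Track per-user weights for one setting (for example, key or tempo)."""

    def __init__(self, options, step=0.25, floor=0.05):
        # Every option starts equally likely. step and floor are assumed values.
        self.weights = {option: 1.0 for option in options}
        self.step = step
        self.floor = floor

    def like(self, option):
        """Make an option more likely in future compositions."""
        self.weights[option] += self.step

    def dislike(self, option):
        """Make an option less likely, but never impossible."""
        self.weights[option] = max(self.floor, self.weights[option] - self.step)

    def probability(self, option):
        """Return the chance that this option is picked next."""
        return self.weights[option] / sum(self.weights.values())

    def choose(self, rng=random):
        """Pick an option at random, biased by the current weights."""
        options = list(self.weights)
        weights = [self.weights[o] for o in options]
        return rng.choices(options, weights=weights, k=1)[0]


if __name__ == "__main__":
    keys = SettingPreferences(["C major", "A minor", "G major"])
    keys.like("A minor")
    keys.dislike("G major")
    print(keys.choose(), keys.probability("A minor"))
```

Keeping a small floor on each weight means a disliked setting can still show up occasionally, which leaves room for the user's taste to change.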

How we built it

This project was built with C#/.NET. It also took a lot of time, effort, and passion to get it done within a weekend.

Challenges we ran into

The biggest challenge in creating AMI was getting it to play the music in a way that sounded natural and had a nice flow. The composition is not restricted to specific tonal values at specific times like actual sheet music; rather, it just adds chords and assigns time values to them. In a way, this is more like a chord sheet. I really enjoy improvisational piano, and since Elton John is my favorite pianist, I wanted AMI to emulate his playing style. Getting AMI to sound competent riffing on the piano was hard, but I think we succeeded.

Accomplishments that we're proud of

We had to create a data structure that stored all of the music theory AMI needs to pull from in real time. The solution that we came up with was to store everything as semitones relative to a given tone. For example, a major chord would be (0, 4, 7): the root, a major third 4 semitones above it, and a perfect fifth 7 semitones above it.
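Here is a minimal sketch of that idea in Python (the project itself used C#). Each chord quality is stored as semitone offsets from a root, and a concrete chord is built by adding those offsets to a root MIDI note number. The table and function names are hypothetical, and the entries beyond the major chord are standard music theory rather than something taken from AMI's database.

```python
# Chord qualities as semitone offsets from the root.
# Only "major" comes from the write-up; the rest are standard chord shapes
# added for illustration.
CHORD_INTERVALS = {
    "major": (0, 4, 7),
    "minor": (0, 3, 7),
    "diminished": (0, 3, 6),
    "augmented": (0, 4, 8),
    "dominant7": (0, 4, 7, 10),
}


def build_chord(root, quality):
    """Return the MIDI note numbers of a chord built on the given root."""
    return [root + offset for offset in CHORD_INTERVALS[quality]]


if __name__ == "__main__":
    middle_c = 60  # MIDI note number for middle C
    print(build_chord(middle_c, "major"))  # C major: [60, 64, 67]
    print(build_chord(middle_c + 9, "minor"))  # A minor: [69, 72, 76]
```

Because the offsets are relative, the same table works in any key: transposing a chord only means changing the root.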

What we learned

Before this project, I did not know a ton about music theory. I never had any formal music education, so to create AMI, I needed to do some research to understand the fundamentals of music. Also, I learned how to do voice recognition, which was something I was not expecting going into this weekend.

What's next for AMI (Artificial Music Intelligence)

Right now, AMI generates all the settings randomly when creating a song (duration, key, tempo, etc.), but in the future, user-provided settings would allow AMI to compose music that more accurately fits the style of the user. Also, since this was a one-man coding operation, the graphical user interface is not complete, so that would need to get finished too.
