Machine learning (ML) is the field of study that gives computers the ability to learn without being explicitly programmed. It is one of the most exciting areas of modern technology.

Machine Learning : The ability to learn.

This repository collects several machine learning projects that follow common industry practices:

- Fast Food Restaurants data analysis

- Avocado price prediction

- Store item sales forecast

- Letter recognition

Problem Statement

We analyze the Fast Food Restaurants dataset and rank the cities in the United States of America by their number of fast food restaurants.

Introduction

The exploratory data analysis applies Python to a structured data set: loading, inspecting, wrangling, exploring, and drawing conclusions from the data. The notebook records observations at each step to explain how to approach the data set. Some questions raised by these observations are answered in the notebook for reference, though not all of them are explored in the analysis.

| COLUMN | DATA TYPES |
|---|---|
| ADDRESS | OBJECT |
| CITY | OBJECT |
| COUNTRY | OBJECT |
| KEYS | OBJECT |
| LATITUDE | FLOAT64 |
| LONGITUDE | FLOAT64 |
| NAME | OBJECT |
| POSTALCODE | OBJECT |
| PROVINCE | OBJECT |
| WEBSITES | OBJECT |

Observations

- Name : The name column contains inconsistent spellings (differences in upper and lower case and in punctuation), so similar names can be grouped together.

- Keys : Each key is built from the country, province, city and address, and all keys are unique.

- Websites : 465 rows have no website. No other column has missing data.

- Standardized all column headers to lower case (to prevent typos).

- Divided the data into 4 zones with respect to province:

East_zone = ["CT", "MA", "ME", "NH", "NJ", "NY", "PA", "RI", "VT", "Co Spgs"]

West_zone = ["AK", "AZ", "CA", "CO", "HI", "ID", "MT", "NM", "NV", "OR", "UT", "WA", "WY"]

South_zone = ["AL", "AR", "DC", "DE", "FL", "GA", "KY", "LA", "MD", "MS", "NC", "OK", "SC", "TN", "TX", "VA", "WV"]

Central_zone = ["IA", "IL", "IN", "KS", "MI", "MN", "MO", "ND", "NE", "OH", "SD", "WI"]
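The zone split above can be sketched as a province-to-zone lookup. This is a minimal illustration using only the standard library, not the notebook's actual code: the zone lists are copied from this README, while `PROVINCE_TO_ZONE`, `zone_shares` and the sample rows are hypothetical names and data introduced for the example.

```python
from collections import Counter

# Zone lists as given in this README.
EAST_ZONE = ["CT", "MA", "ME", "NH", "NJ", "NY", "PA", "RI", "VT", "Co Spgs"]
WEST_ZONE = ["AK", "AZ", "CA", "CO", "HI", "ID", "MT", "NM", "NV", "OR", "UT", "WA", "WY"]
SOUTH_ZONE = ["AL", "AR", "DC", "DE", "FL", "GA", "KY", "LA", "MD", "MS", "NC", "OK", "SC", "TN", "TX", "VA", "WV"]
CENTRAL_ZONE = ["IA", "IL", "IN", "KS", "MI", "MN", "MO", "ND", "NE", "OH", "SD", "WI"]

ZONES = {"East": EAST_ZONE, "West": WEST_ZONE, "South": SOUTH_ZONE, "Central": CENTRAL_ZONE}

# Invert the lists into a single province -> zone lookup (hypothetical helper).
PROVINCE_TO_ZONE = {
    province: zone for zone, provinces in ZONES.items() for province in provinces
}


def zone_shares(provinces):
    """Return the percentage of rows that fall in each zone."""
    counts = Counter(PROVINCE_TO_ZONE.get(p, "Unknown") for p in provinces)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {zone: round(100 * n / total, 1) for zone, n in counts.items()}


# Hypothetical province values, one per restaurant row.
sample = ["CA", "TX", "OH", "NY", "TX", "FL", "CA", "GA"]
shares = zone_shares(sample)
print(shares)
```

Provinces that appear in none of the four lists are reported under "Unknown" rather than dropped, so unexpected values stay visible.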

Conclusion

- This analysis of fast food restaurants in the US helps to understand where fast food is most heavily consumed. After removing the punctuation from the name column, we found that McDonald's has the highest count.

- Cincinnati, Ohio is the top-ranked city in the US by number of restaurants.

- CA (California) is the top-ranked state in the US by number of restaurants and TX (Texas) is second. Both states have between 600 and 700 restaurants.

- McDonald's is the top-ranked chain in the US with 2105 fast food restaurants. Burger King is second with 1154.

- Comparing the 4 zones, the South zone ranks first with 41.7% of the fast food restaurants in the US. The East zone has 10.8%, which suggests that people there eat less fast food than people in the South zone.
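The name clean-up behind the chain counts above could look like the following sketch. It assumes that lowercasing and stripping everything except letters and digits is enough to merge the spelling variants. Removing spaces as well as punctuation is an assumption of this example, and `normalize_name` and the sample names are hypothetical.

```python
import re
from collections import Counter


def normalize_name(name):
    """Lowercase a name and drop every character that is not a letter or digit."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


# Hypothetical spelling variants of the kind described in the observations.
names = [
    "McDonald's", "Mc Donald's", "McDonalds", "MCDONALD'S",
    "Burger King", "burger king", "Burger-King",
]

# Group the variants by their normalized form and count each group.
counts = Counter(normalize_name(n) for n in names)
print(counts.most_common())
```

Aggressive normalization like this can also merge names that are genuinely different, so the resulting groups are worth a quick manual check.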

Problem Statement

Using the Avocado dataset, we classify avocados as Organic or Conventional and predict the average price with regression models, based on data from 2015, 2016, 2017 and 2018.

Introduction

The Avocado dataset records the consumption of the fruit in different regions of the USA from 2015 to 2018.

Two types of avocado are available:

- Organic (Healthy)

- Conventional

| COLUMN | DATA TYPES |
|---|---|
| DATE | OBJECT |
| AVERAGEPRICE | FLOAT64 |
| TOTALVOLUME | FLOAT64 |
| SMALL | FLOAT64 |
| LARGE | FLOAT64 |
| XLARGE | FLOAT64 |
| TOTALBAGS | FLOAT64 |
| SMALLBAGS | FLOAT64 |
| LARGEBAGS | FLOAT64 |
| XLARGEBAGS | FLOAT64 |
| TYPE | OBJECT |
| YEAR | INT64 |
| REGION | OBJECT |

Observations

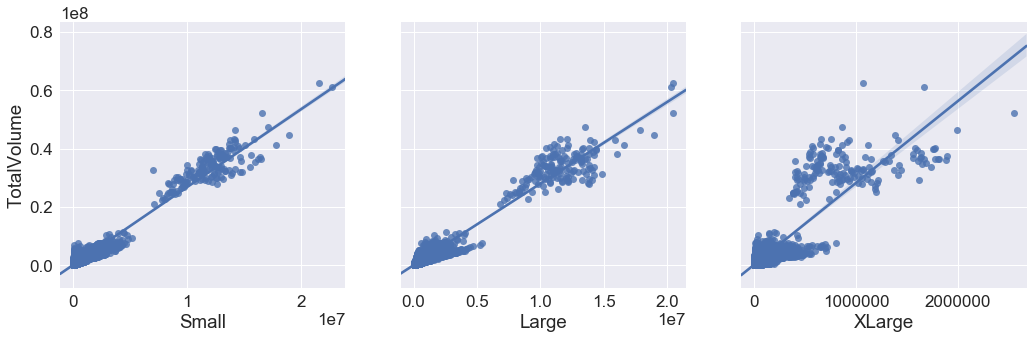

- There is a strong correlation between TotalVolume and Small, and between TotalBags and SmallBags.

- There is a weak correlation between TotalVolume and XLarge, and between TotalBags and XLargeBags.

- Large and LargeBags fall in the middle.
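The strength of these relationships can be quantified with the Pearson correlation coefficient. The sketch below implements it with the standard library only. The numbers are made-up toy values chosen to show one strong and one weak relationship, not figures from the Avocado dataset, and `pearson` is a hypothetical helper.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Toy values (hypothetical, not taken from the dataset).
total_volume = [100, 200, 300, 400, 500]
small = [52, 98, 151, 203, 249]   # rises almost in step with total_volume
xlarge = [3, 1, 4, 1, 5]          # only loosely related to total_volume

strong = pearson(total_volume, small)
weak = pearson(total_volume, xlarge)
print(f"TotalVolume vs Small:  {strong:.3f}")
print(f"TotalVolume vs XLarge: {weak:.3f}")
```

A value close to 1 or -1 indicates a strong linear relationship, and a value close to 0 a weak one.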

Conclusion

- Columns such as the type of avocado, size and bags have an impact on the average price. The lower the RMSE, the more accurate the model, as seen when we consider Small Hass in Small Bags.

- The random forest classifier is more accurate than the logistic regression model on this dataset. Its accuracy is 0.99, which may also indicate overfitting, since it classifies even the outliers perfectly.

- The random forest classifier therefore predicts the type of avocado more accurately than the logistic regression model.

- The random forest regressor predicts the average price more accurately than the linear regression model.
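The regression models above are compared by RMSE (root mean squared error), where a lower value means predictions closer to the actual prices. The following sketch shows the calculation. The prices and the two sets of predictions are hypothetical and do not come from the notebook's models.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical average prices and predictions from two imaginary models.
actual_prices = [1.0, 1.5, 2.0, 1.25]
model_a = [1.1, 1.4, 2.1, 1.15]   # each prediction is off by 0.1
model_b = [1.5, 1.0, 2.5, 0.75]   # each prediction is off by 0.5

rmse_a = rmse(actual_prices, model_a)
rmse_b = rmse(actual_prices, model_b)
print(f"model A RMSE: {rmse_a:.3f}")
print(f"model B RMSE: {rmse_b:.3f}")
```

Because the errors are squared before averaging, RMSE penalizes a few large misses more heavily than many small ones.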

Objective

- Build a model to forecast store sales.

- Each record consists of a date, a store, an item and the sales.

| COLUMN | DATA TYPES |
|---|---|
| DATE | OBJECT |
| STORE | INT64 |
| ITEM | INT64 |
| SALES | INT64 |

Conclusion

- We used the Sales 1 : Items 1 data for forecasting.

- Used an ARIMA model and searched for the best (p, d, q) order, i.e. ARIMA(6, 0, 1) with AIC = 601.196.

- The ACF and PACF plots (the autocorrelation and partial autocorrelation graphs) show a recurring pattern at every 7th point.
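The recurring pattern at every 7th point can be illustrated with a hand-written sample autocorrelation function. This is a sketch on synthetic data, not the notebook's ARIMA fit or its ACF plot: the `sales` series is an artificial sequence that repeats every 7 steps, and `autocorrelation` is a hypothetical helper.

```python
def autocorrelation(series, lag):
    """Sample autocorrelation of a series at the given lag."""
    n = len(series)
    if not 0 <= lag < n:
        raise ValueError("lag must satisfy 0 <= lag < len(series)")
    mean = sum(series) / n
    denominator = sum((x - mean) ** 2 for x in series)
    if denominator == 0:
        raise ValueError("autocorrelation is undefined for a constant series")
    numerator = sum(
        (series[t] - mean) * (series[t + lag] - mean) for t in range(n - lag)
    )
    return numerator / denominator


# Synthetic series: the same 7-step pattern repeated 8 times (hypothetical data).
weekly_pattern = [10, 12, 13, 14, 18, 25, 27]
sales = weekly_pattern * 8

# Autocorrelation for lags 1..14; the peaks mark the repeating period.
acf = {lag: autocorrelation(sales, lag) for lag in range(1, 15)}
best_lag = max(acf, key=acf.get)
for lag, value in acf.items():
    print(f"lag {lag:2d}: {value:+.3f}")
```

On a series like this the autocorrelation peaks at lag 7 and again, slightly lower, at lag 14, which is the kind of spike pattern described above.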

Objective

The objective is to identify each of a large number of black-and-white rectangular pixel displays as one of the 26 capital letters in the English alphabet.

| Columns | Description |
|---|---|
| letter | capital letter (26 values from A to Z) |
| x-box | horizontal position of box |
| y-box | vertical position of box |
| width | width of box |
| high | height of box |
| onpix | total # on pixels |
| x-bar | mean x of on pixels in box |
| y-bar | mean y of on pixels in box |
| x2bar | mean x variance |
| y2bar | mean y variance |
| xybar | mean x y correlation |
| x2ybr | mean of x * x * y |
| xy2br | mean of x * y * y |
| x-ege | mean edge count left to right |
| xegvy | correlation of x-ege with y |
| y-ege | mean edge count bottom to top |
| yegvx | correlation of y-ege with x |

Conclusion

- Trained the models and predicted the letters on the test dataset.

- SVC gives the highest accuracy, with Random Forest second.

- We verified the two output files, output_svc and output_rfc.

- Comparing the two output files with the SVC predictions as the reference, 3825 of 3999 records match (about 95.6%).
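The comparison of the two output files can be sketched as a row-by-row agreement count. The short prediction lists below are hypothetical stand-ins for the contents of output_svc and output_rfc, whose exact file format is not described here. Only the final ratio uses the figures reported above.

```python
def agreement(reference, other):
    """Count the positions where two prediction lists contain the same label."""
    if len(reference) != len(other):
        raise ValueError("both prediction lists must have the same length")
    matches = sum(1 for r, o in zip(reference, other) if r == o)
    return matches, len(reference)


# Hypothetical predictions standing in for the two output files.
svc_predictions = list("ABCDEFGHIJ")
rfc_predictions = list("ABCDEFGHIX")   # differs from SVC in the last row

matches, total = agreement(svc_predictions, rfc_predictions)
print(f"{matches} of {total} rows agree ({100 * matches / total:.1f}%)")

# The same ratio for the figures reported in this README.
reported_rate = 3825 / 3999
print(f"reported agreement: {100 * reported_rate:.2f}%")
```

Note that this measures how often the two models agree with each other, which is not the same as accuracy against the true labels.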