VCL (Verbal Command Language) is a structured, extensible query language designed to describe cognitive operations a human performs on documents. Think of it as a semantics-first abstraction layer between natural language and the concrete operations performed on legal/technical documents: search, summarize, extract, compare, integrate, verify, and so on.

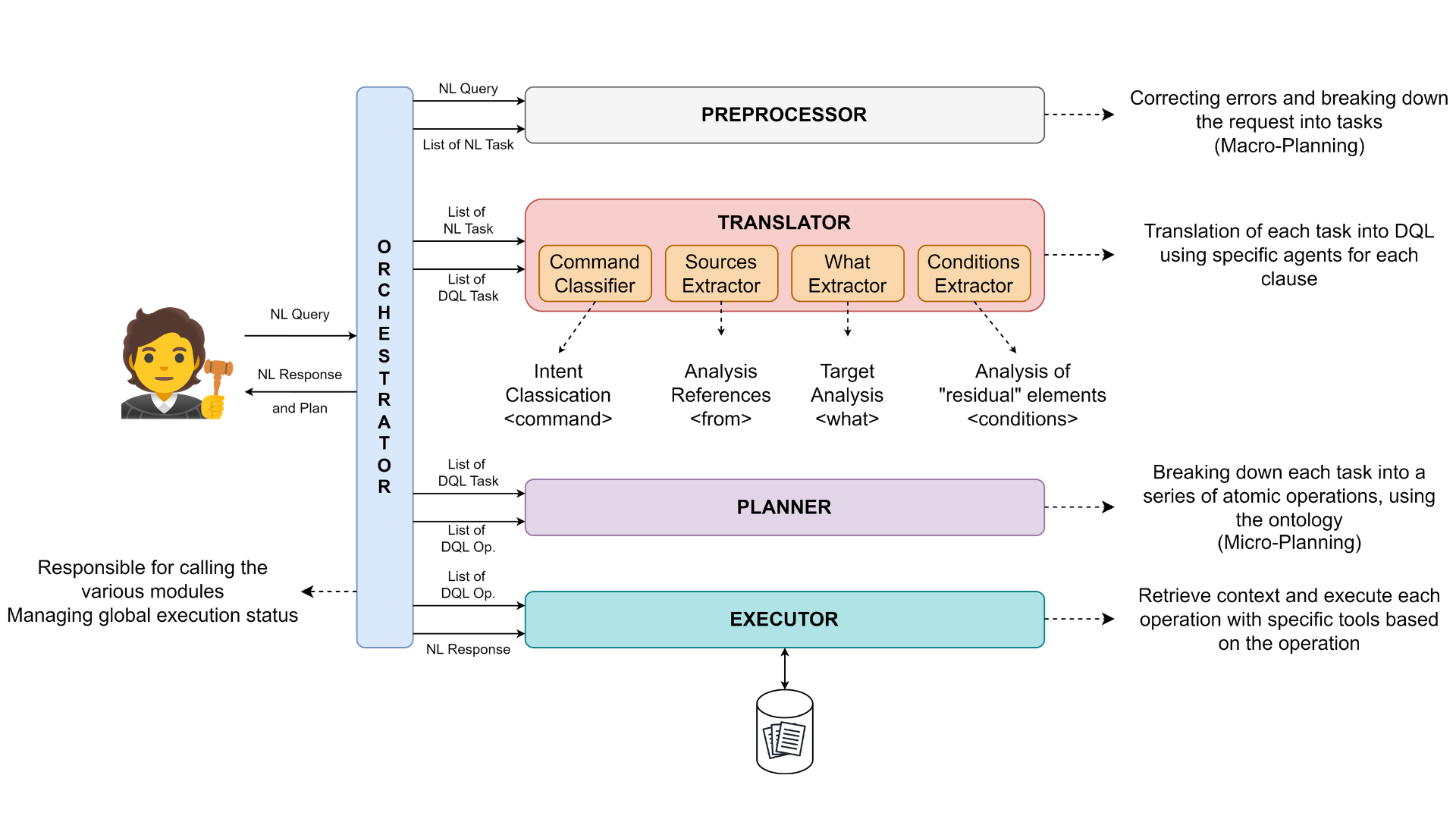

The project implements the following core modules:

- Preprocessor: Converts Natural Language (NL) input into a Directed Acyclic Graph (DAG), subsequently transforming it into a list of tasks in NL to guide the execution.

- Translator: Translates natural language into VCL/JSON.

- Planner: Turns structured VCL into an ordered execution plan, managing dependencies between operations.

- Executor: Runs atomic operations against a document corpus using LLMs and deterministic tools where appropriate.

- GUI: A Streamlit-based interface for interactive usage.

- Storage & LLM Layer: Storage integrations (MongoDB/Elasticsearch) and a configurable multi-provider LLM layer.

- Evaluation Script: A dedicated script that assesses system performance, accuracy, and reliability across the workflow.

The system is intentionally modular so components can be swapped (different LLM providers, plug-in deterministic tools, alternative UIs).

This section explains, in depth, what VCL provides now (V0.2) and what it intends to provide.

- Structured cognitive primitives: A catalog of atomic operations (e.g., `search`, `summarize`, `extract semantic`, `extract logical`, `compare`, `integrate`, `verify`, `classify`, `reorganize`) with deterministic semantics and operational guidelines. Commands are configured in a single JSON language file so the semantics are explicit and editable.

- Natural Language → VCL translation: The translator subsystem converts user NL queries into a structured VCL representation (JSON). This representation captures `command`, `what`, `source`, `conditions`, and optional `parameters`.

- Operation planner: A planner maps a VCL representation into an ordered list of atomic operations (an execution plan). The planner resolves dependencies and can break some commands into multiple sub-operations when beneficial.

- LLM-guided execution: Execution currently relies primarily on an LLM. In the future, deterministic tools or algorithmic routines (e.g., exact reference extraction, regex-based monetary extraction) will be used where possible.

- Command-specific behavior & constraints: Each VCL command has a specific set of operational guidelines (for example, `search` must return verbatim text snippets only, with no paraphrase; `summarize` must be a conceptual rewrite). These guidelines are enforced by the executor.

- Streamlit GUI: Lightweight web UI for interactive queries, document uploads, and result visualization.

- Document DB integrations: Built to work with MongoDB and Elasticsearch. Note: document upload and indexing have not been implemented yet.

- Logging & monitoring: Full logging of the translation, planning, and execution phases for auditability and debugging.

- Conversation memory: Keeps a history of interactions (V.1: stored but not used to influence understanding by default).

- Editable configuration: The available VCL sources and the `what` types are defined in a JSON config file that can be edited from the GUI.

Below is a compact reference of the supported commands. Each command entry summarizes the intent and the key operational guidelines the executor follows.

- `search` (`cerca`): Conceptual search for entities, legal concepts, or snippets.
  - Output: verbatim excerpts only (no paraphrase), limited to snippets that directly answer the query.
  - Use: find references, parties, locations, monetary amounts, precedent citations.

- `summarize` (`riassumi`): Concise, self-contained summary preserving key facts, arguments, and the dispositive part.
  - Output: new (rewritten) text capturing the facts, relevant arguments, and dispositive part; no verbatim copy of entire sections.

- `extract semantic` (`estrai semantico`): Extract semantically coherent sections (facts, reasons, dispositive parts).
  - Output: focused sections, reformulated for clarity but preserving conceptual content.

- `extract logical` (`estrai logico`): Reconstruct the argumentation chain (syllogisms, premises → conclusion).
  - Output: stepwise mapping of premises, intermediate inferences, and conclusion(s). Reformulation is allowed to clarify the logical structure.

- `compare` (`confronta`): Comparative analysis across documents, highlighting agreements and divergences.
  - Output: grouped points of agreement and disagreement, optionally with short textual citations as evidence.

- `integrate` (`integra`): Merge multiple documents into a single consolidated text.
  - Output: consolidated text where duplicates are removed and conflicts are flagged or resolved under the chosen policy.

- `verify` (`verifica`): Consistency and reference checking (legal citations, internal contradictions).
  - Output: report of anomalies (incorrect citations, logical inconsistencies). No opinions on the merits.

- `analyze` (`analizza`): Structural decomposition and evaluation of completeness and argumentative robustness.
  - Output: an analytic report mapping sections and evaluating completeness and logical support.

- `reorganize` (`riorganizza`): Re-order sections according to a chosen criterion (chronological, topical).
  - Output: restructured text in which the content of each unit is preserved.

- `classify` (`classifica`): Tag portions of text with legal function labels (e.g., petition, counterclaim, dispositive).
  - Output: mapping of text spans → labels.

- `other` (`altro`): Fallback for intentions not covered by the predefined commands.
  - Output: best-effort response that may combine behaviors from other commands.
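The command names and their Italian keywords listed above can be sketched as a small lookup table. This is only an illustration: the dictionary layout and the `resolve_command` helper are hypothetical and do not reflect the actual structure of the JSON language file.

```python
# Hypothetical alias table: Italian keyword -> canonical VCL command.
# The names come from the command reference; the layout is an assumption.
COMMAND_ALIASES = {
    "cerca": "search",
    "riassumi": "summarize",
    "estrai semantico": "extract semantic",
    "estrai logico": "extract logical",
    "confronta": "compare",
    "integra": "integrate",
    "verifica": "verify",
    "analizza": "analyze",
    "riorganizza": "reorganize",
    "classifica": "classify",
    "altro": "other",
}

CANONICAL_COMMANDS = set(COMMAND_ALIASES.values())


def resolve_command(name: str) -> str:
    """Return the canonical command for an English or Italian name."""
    key = " ".join(name.lower().split())  # normalize case and whitespace
    if key in CANONICAL_COMMANDS:
        return key
    # Unknown names fall back to "other", mirroring the fallback command.
    return COMMAND_ALIASES.get(key, "other")


if __name__ == "__main__":
    print(resolve_command("Riassumi"))
    print(resolve_command("extract  logical"))
    print(resolve_command("translate"))
```

In the real system the command is chosen by an LLM-based classifier, so a table like this would at most serve as a validation step on the classifier's output.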

The system was designed as a modular pipeline with clear separation of concerns.

This section provides a compact but detailed description of each major component involved in execution.

- Role: User entry point for entering queries, uploading documents, and viewing results.

- Implementation: Streamlit + minimal front-end logic; calls orchestrator API or runs in-process.

- LLM-based: No (UI only; however, it can display LLM results).

- Role: Central coordinator. Receives user requests and routes them through preprocessing, translation, planning and execution steps. Responsible for format conversions (NL → JSON/DQL, JSON → plan).

- Implementation: Python service with modular plugs for each pipeline stage.

- LLM-based: No.

- Role: Normalize text (lowercasing where needed), perform spellchecking/normalization, sanitize input.
- Implementation: Two modes:
  - LLM-based spelling correction (default): uses an LLM to correct typos and preserve intent.
  - Rule-based or dictionary-based fallback when `-parsers` is set.
- LLM-based: Optional (default yes, configurable).
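As a rough illustration of the rule-based side of this stage, the sketch below normalizes Unicode, drops invisible control characters, and collapses whitespace. The function name `normalize_query` and its `lowercase` option are assumptions made for this example; the project's actual fallback may behave differently, and spelling correction is not covered here.

```python
import re
import unicodedata


def normalize_query(text: str, lowercase: bool = False) -> str:
    """Hypothetical rule-based cleanup of a user query."""
    # Use one canonical form for accented characters.
    text = unicodedata.normalize("NFC", text)
    # Drop control/format characters, keeping tabs and newlines for now.
    text = "".join(
        ch for ch in text
        if unicodedata.category(ch)[0] != "C" or ch in "\n\t"
    )
    # Collapse every run of whitespace into a single space.
    text = re.sub(r"\s+", " ", text).strip()
    return text.lower() if lowercase else text


if __name__ == "__main__":
    print(normalize_query("  Riassumi\tla   sentenza\u200b "))
```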

- Role: Convert an NL query to a VCL JSON structure with fields such as `command`, `what`, `source`, `conditions`, `params`.
- Submodules (all LLM-based):
  - Command Classifier: chooses the best-matching VCL command.
  - Source Extractor: identifies which document sources are relevant (e.g., sentenza 1°, memoria, ricorso).
  - What Extractor: determines the object of the operation (e.g., `fact`, `decision`, `precedent`, `sillogism`).
  - Condition Extractor: pulls filters (dates, parties, jurisdiction).
- LLM-based: Yes. Each submodule takes the NL query as input, possibly together with the results of the previous steps.
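To make the target structure concrete, here is a minimal sketch of a VCL request as a Python dataclass serialized to JSON. Only the field names (`command`, `what`, `source`, `conditions`, `params`) come from the description above; the field types, the `VCLRequest` class, and the sample values (including the `date_from` condition key) are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class VCLRequest:
    """Hypothetical container for one translated query."""
    command: str                      # e.g. "summarize"
    what: str                         # object of the operation, e.g. "fact"
    source: list[str]                 # relevant document sources
    conditions: dict[str, Any] = field(default_factory=dict)  # filters
    params: dict[str, Any] = field(default_factory=dict)      # optional extras

    def to_json(self) -> str:
        return json.dumps(asdict(self), ensure_ascii=False, indent=2)


# Assumed translation of: "Summarize the facts in the appeal filed after 2020".
request = VCLRequest(
    command="summarize",
    what="fact",
    source=["ricorso"],
    conditions={"date_from": "2020-01-01"},  # illustrative key name
)

if __name__ == "__main__":
    print(request.to_json())
```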

- Role: Convert VCL JSON into an ordered plan of atomic operations. Resolve dependencies and split complex requests into sub-operations when needed.

- Implementation: Rule engine plus a planner algorithm that maps VCL -> sequence of tasks (JSON list).

- LLM-based: No (deterministic planning logic).
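Dependency resolution of this kind can be sketched with a topological sort. The example below uses Python's standard `graphlib` module; the operation names and the dictionary-of-prerequisites format are hypothetical and are not taken from the project's planner.

```python
from graphlib import TopologicalSorter


def order_operations(dependencies: dict[str, set[str]]) -> list[str]:
    """Order operations so that each one follows all of its prerequisites.

    `dependencies` maps an operation id to the ids it depends on.
    A cyclic plan raises graphlib.CycleError.
    """
    return list(TopologicalSorter(dependencies).static_order())


# Illustrative plan: two operations depend on a search, one on both of them.
plan = {
    "search_facts": set(),
    "summarize_facts": {"search_facts"},
    "verify_citations": {"search_facts"},
    "compare_results": {"summarize_facts", "verify_citations"},
}

ordered = order_operations(plan)

if __name__ == "__main__":
    print(ordered)
```

The relative order of independent operations (here `summarize_facts` and `verify_citations`) is not guaranteed, which is acceptable as long as neither consumes the other's output.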

- Role: Executes the plan. For each atomic operation, invokes the appropriate tool:
  - Deterministic functions: regex extraction, citation parsing, DB queries.
  - LLM invocations for summarization, logical reconstruction, paraphrasing, synthesis.
- Behavior: The executor enforces the operational guidelines for each command (e.g., `search` returns verbatim snippets).
- LLM-based: Yes. It takes as input the information available from the language configuration and the user's VCL request.
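One way to check the verbatim guideline for `search` after an LLM call is a plain substring test against the source text. The sketch below is an assumption about how such a check could look, not the executor's actual enforcement mechanism; `non_verbatim_snippets` is a hypothetical helper.

```python
def non_verbatim_snippets(snippets: list[str], document_text: str) -> list[str]:
    """Return the snippets that do not occur verbatim in the document."""
    return [s for s in snippets if s not in document_text]


document = "The court rejects the appeal. Costs are awarded to the defendant."
candidates = [
    "The court rejects the appeal.",         # copied verbatim
    "The appeal was rejected by the court.", # paraphrase
]

violations = non_verbatim_snippets(candidates, document)

if __name__ == "__main__":
    print(violations)
```

The check is deliberately strict: a snippet that differs only in whitespace or line breaks is also reported, so a real implementation might normalize both sides first.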

- Role: Store and serve documents and chat history to the executor and GUI.
- Implementation: MongoDB/Elasticsearch.

    git clone https://github.com/unimib-datAI/VCL.git
    cd VCL

    python -m venv .venv
    source .venv/bin/activate   # Linux/macOS
    .\.venv\Scripts\activate    # Windows

    pip install -r requirements.txt

Create a `.env` file in the repo root with the variables you need:

    # Example .env
    OPENAI_API_KEY=sk-...
    GOOGLE_API_KEY=...
    HUGGINGFACEHUB_API_TOKEN=...
    MONGO_URI=...
    MONGO_INITDB_ROOT_USERNAME=...
    MONGO_INITDB_ROOT_PASSWORD=...

Store your documents in the `documents` directory.

Each document is a JSON file containing four keys:

- name: the name of the file

- text: the text content to be processed

- type_doc: the label describing the document type

- owner: the username of the file owner
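A document file with these four keys could be produced as sketched below. The key names come from the list above; the `write_document` helper, the file-naming rule, and the sample values are assumptions for illustration only.

```python
import json
import tempfile
from pathlib import Path


def write_document(directory: Path, name: str, text: str,
                   type_doc: str, owner: str) -> Path:
    """Write one document as a JSON file with the four expected keys."""
    directory.mkdir(parents=True, exist_ok=True)
    # Assumed naming rule: reuse the original file's stem with a .json suffix.
    path = directory / f"{Path(name).stem}.json"
    payload = {"name": name, "text": text, "type_doc": type_doc, "owner": owner}
    path.write_text(json.dumps(payload, ensure_ascii=False, indent=2),
                    encoding="utf-8")
    return path


if __name__ == "__main__":
    # A temporary directory stands in for the repo's documents directory.
    with tempfile.TemporaryDirectory() as tmp:
        created = write_document(Path(tmp) / "documents", "ricorso_rossi.txt",
                                 "Text of the appeal ...", "ricorso", "alice")
        print(created.read_text(encoding="utf-8"))
```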

A `docker-compose.yml` is included for MongoDB.

    docker compose up --build -d

If you run with Docker, set env vars in `docker-compose.yml` or mount an `.env` into the container.

    python main.py

Then open: http://localhost:8501

The script accepts several optional flags (arguments) to customize its behavior.

- `-api <KEY>`
  - Description: Provides the API key for the LLM.
- `-uri_db <URI>`
  - Description: Provides the connection URL for the database (e.g., MongoDB).
- `-provider <PROVIDER_NAME>`
  - Description: Specifies which LLM provider to use.
  - Default: `google_genai`
  - Choices: `google_genai`, `openai`, `copilot`, `huggingface`.
- `-model_name <MODEL_NAME>`
  - Description: Specifies the exact LLM model name to use.
  - Default: `gemini-2.5-flash`
  - Examples: `gpt-4o-mini`, `claude-3-5-sonnet`, `mistralai/Mistral-7B-Instruct-v0.2`.
- `-wait_seconds <NUMBER>`
  - Description: Sets the number of seconds to wait after each LLM call.
  - Default: `0`
- `-evaluation_mode`
  - Description: If present, the evaluation script is started instead of the Streamlit application.
  - Usage: Just add the flag; it does not require a value.

Example:

    python main.py -api sk-XXXX -provider openai -model_name gpt-4o-mini -uri_db mongodb://localhost:27017/dql
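For readers who want to see how flags of this shape can be parsed, the following is a minimal `argparse` sketch that mirrors the documented names, defaults, and choices. It is not the project's `main.py`; in particular, parsing `-wait_seconds` as a float is an assumption.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Parser mirroring the documented flags (illustrative only)."""
    parser = argparse.ArgumentParser(prog="main.py")
    parser.add_argument("-api", metavar="KEY", help="API key for the LLM")
    parser.add_argument("-uri_db", metavar="URI", help="database connection URL")
    parser.add_argument(
        "-provider",
        default="google_genai",
        choices=["google_genai", "openai", "copilot", "huggingface"],
        help="LLM provider to use",
    )
    parser.add_argument("-model_name", default="gemini-2.5-flash",
                        help="exact LLM model name")
    # Assumption: fractional waits are allowed, hence float.
    parser.add_argument("-wait_seconds", type=float, default=0,
                        help="seconds to wait after each LLM call")
    parser.add_argument("-evaluation_mode", action="store_true",
                        help="run the evaluation script instead of the GUI")
    return parser


# Same arguments as the command-line example.
args = build_parser().parse_args([
    "-api", "sk-XXXX",
    "-provider", "openai",
    "-model_name", "gpt-4o-mini",
    "-uri_db", "mongodb://localhost:27017/dql",
])

if __name__ == "__main__":
    print(args)
```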